Abstract

Since ChatGPT's emergence, extensive research has explored the role of generative artificial intelligence (GenAI) in delivering written feedback (WF), encompassing diverse aims, methodologies and findings. This includes studies examining second language (L2) contexts in which such feedback is used, although, to date, no attempt has been made to synthesize these studies for cumulative knowledge building. To clarify the state of the art in GenAI-influenced L2 WF research, this Preferred Reporting Items for Systematic Reviews and Meta-Analyses-informed scoping review article explores a dataset of 51 such studies taken from Social Sciences Citation Index/Emerging Sources Citation Index-indexed publications since 2022. Two researchers manually coded these studies for data regarding publication outlets, country/region and L2 focus, research aims, research methods, findings and identified (or author-disclosed) limitations. Findings reveal that most studies address improvements to writing quality arising from GenAI-produced feedback, and/or students’ and teachers’ perceptions of such feedback. Methods are diverse and include revision analysis, pre-tests/post-tests of writing quality and quantitative surveys. Results cover improvements in writing quality or skills, mixed perceptions, and varied feedback uptake and revision behaviours, particularly when comparing artificial intelligence and human feedback. We close by identifying gaps in cumulative knowledge and suggesting directions for future research.

Keywords

Introduction

The field of second language (L2) writing has already seen fundamental changes in the wake of generative artificial intelligence (GenAI), as researchers, practitioners and students struggle to tackle the technological, ethical, and pedagogical challenges that GenAI has raised. Nowhere is this more apparent than in studies on written feedback (WF), which, despite being no stranger to artificial intelligence (AI)-focused research prior to GenAI, for example, studies on written corrective feedback (WCF) tools such as

Although syntheses are available covering WF for L2 writing prior to GenAI (Shi and Aryadoust, 2024) as well as GenAI-based feedback for first language (L1) writing (Lee and Moore, 2024), to the best of our knowledge there is, as yet, no systematic review focusing specifically on GenAI WF for L2 writing. By WF, we refer to studies covering: (a) use of GenAI for general feedback on L2 writing (including feedback on grammar, lexis, content and organization); (b) GenAI WCF (specifically covering feedback on errors and their resolution); (c) GenAI automated writing evaluation (AWE) (covering evaluations of writing quality, typically against an assessment rubric); or (d) any combination of these.

While recent L2 GenAI reviews have begun to touch on L2 WF, they do so only in passing. M. Li's (2024) focused literature review examines various aspects of GenAI for L2 writing including development, collaborative processing, teacher feedback and assessment, with WF discussed only as one strand within that wider landscape. Similarly, S. Li's (2025) review explores topics such as prompt engineering, assessment, and discourse comparison. Feedback again forms only one component of this broader synthesis, which finds that GenAI feedback focuses more on organization while instructor feedback focuses more on substance, and which calls for further research on criteria-based GenAI feedback practices and on the evaluation of GenAI feedback quality. However, neither review provides a systematic, dedicated analysis of GenAI-generated WF for L2 writing. Therefore, as a contribution to collective knowledge building in this space, the present study offers the first Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA)-informed scoping review that concentrates exclusively on this topic. By systematically mapping 51 empirical studies and coding their publication venues, countries/regions, languages, research objectives, methodologies, findings and limitations, this review extends prior syntheses by offering a comprehensive evidence base to guide both future research and pedagogical practice for GenAI-focused L2 WF.

Method

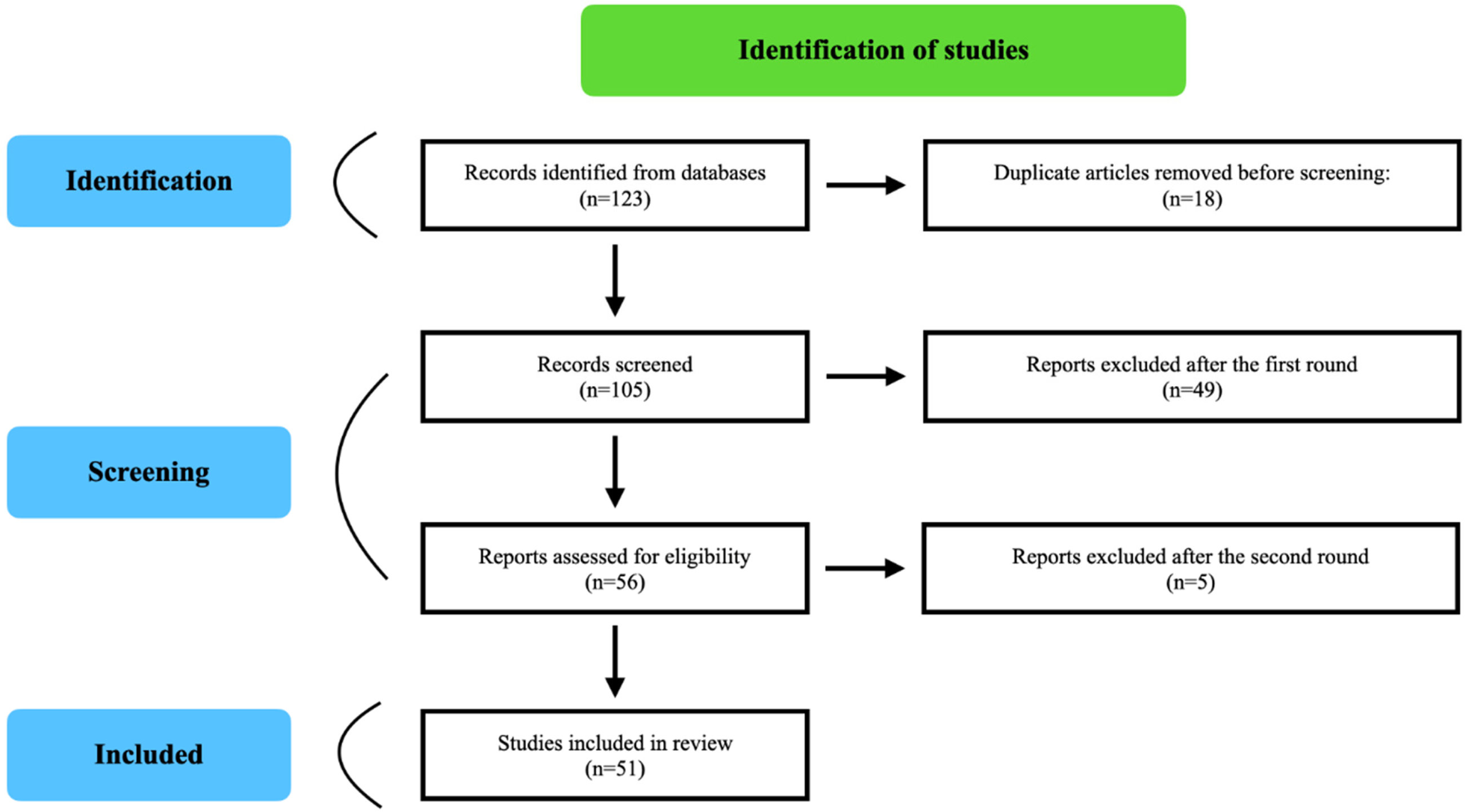

Typically, scoping reviews follow a PRISMA approach (Page et al., 2021). PRISMA is an evidence-based set of reporting standards designed to improve the transparency and completeness of reporting in systematic reviews and meta-analyses. It is not a methodological guideline for conducting reviews but a framework for transparent reporting, often including a flow diagram to document the study selection process. Figure 1 presents this flow for our study, showing the sequence of identification, screening and eligibility statistics in line with PRISMA 2020 guidelines.

Figure 1. Identification, Screening, and Eligibility Statistics.

Identification

We first searched Web of Science and Scopus for relevant Social Sciences Citation Index/Emerging Sources Citation Index (SSCI/ESCI)-indexed studies published up to 23 April 2025. Major keywords included ‘Generative Artificial Intelligence,’ ‘Generative AI,’ ‘GenAI,’ ‘GAI,’ ‘Large Language Model,’ ‘LLM,’ or ‘ChatGPT,’ paired with ‘feedback,’ ‘writing/written feedback,’ ‘corrective feedback,’ ‘error correction,’ ‘error feedback,’ ‘grammar correction,’ ‘direct feedback,’ ‘indirect feedback,’ ‘metalinguistic feedback,’ ‘unfocused feedback,’ ‘comprehensive feedback,’ ‘focused feedback,’ ‘reformulations,’ ‘response,’ ‘comment,’ ‘editing,’ or ‘revision.’ We also used the snowballing technique to ensure comprehensiveness (Biernacki and Waldorf, 1981). The initial search yielded 123 pertinent SSCI/ESCI-indexed articles, of which 105 remained for screening after 18 duplicates were removed.
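To make the pairing of keywords concrete, the sketch below assembles the two keyword groups listed above into a single Boolean string. It is only an illustration: the grouping into two OR-blocks joined by AND is our reading of "paired with", the split of ‘writing/written feedback’ into two terms is an assumption, and the output is generic Boolean syntax rather than the exact field-tagged query submitted to Web of Science or Scopus.

```python
# Keyword groups copied from the search description; splitting
# "writing/written feedback" into two terms is an assumption.
GENAI_TERMS = [
    "Generative Artificial Intelligence", "Generative AI", "GenAI", "GAI",
    "Large Language Model", "LLM", "ChatGPT",
]
FEEDBACK_TERMS = [
    "feedback", "writing feedback", "written feedback", "corrective feedback",
    "error correction", "error feedback", "grammar correction",
    "direct feedback", "indirect feedback", "metalinguistic feedback",
    "unfocused feedback", "comprehensive feedback", "focused feedback",
    "reformulations", "response", "comment", "editing", "revision",
]


def or_block(terms):
    """Quote each term and join the terms with OR inside parentheses."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"


def build_query(group_a, group_b):
    """Pair two keyword groups: any term from A AND any term from B."""
    return f"{or_block(group_a)} AND {or_block(group_b)}"


query = build_query(GENAI_TERMS, FEEDBACK_TERMS)
print(query)

# Identification arithmetic as reported in the text.
initial_hits = 123
duplicates_removed = 18
records_for_screening = initial_hits - duplicates_removed  # 105
```

Keeping the term lists as data rather than a hand-typed string makes it easier to report the search verbatim and to rerun it at a later cut-off date.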

Screening

For inclusion in the dataset at the screening stage, studies had to focus specifically on: (a) L2 writing; (b) L2 written (corrective) feedback, or at least AWE containing WF rather than scores alone; and (c) the use of generative AI in the provision of said feedback, whether pre-writing, during-writing or post-writing. GenAI in this study is defined as the use of large language models that can produce human-like text to provide automated, contextually relevant WF for L2 writing. This separates GenAI from other forms of AI such as AWE scoring, automated speech recognition, or rule-based (non-predictive) AI feedback systems such as grammar checkers or template-based feedback tools.
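The three inclusion criteria are conjunctive: a study must satisfy all of (a), (b) and (c). A minimal sketch of that decision rule follows. The `Candidate` record, its field names and the three example entries are hypothetical and serve only to illustrate the logic; in the review itself, the judgements were made by two human readers.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One screened record; field names are illustrative, not from the study."""
    label: str
    l2_writing: bool        # criterion (a): focuses on L2 writing
    written_feedback: bool  # criterion (b): WF/WCF, or AWE with WF, not scores alone
    uses_genai: bool        # criterion (c): feedback produced by an LLM-based tool


def meets_inclusion(c: Candidate) -> bool:
    """A study is retained only if all three criteria hold."""
    return c.l2_writing and c.written_feedback and c.uses_genai


# Hypothetical records, invented for illustration only.
examples = [
    Candidate("LLM-generated WCF on L2 essays", True, True, True),
    Candidate("Rule-based grammar checker on L2 essays", True, True, False),
    Candidate("LLM essay scoring, scores only", True, False, True),
]
decisions = {c.label: meets_inclusion(c) for c in examples}
```

Here only the first record is retained; the second fails criterion (c) and the third fails criterion (b).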

Papers were excluded for the following reasons: no specific focus on WF (

Both researchers read each paper individually to determine its inclusion in or exclusion from the dataset, separately colour-coding article entries in a first round of review and then meeting to discuss all agreed exclusions and any disagreements. The first round led to 49 agreed exclusions, with six disagreements proceeding to a second round of coding. A remaining six articles were excluded in the second round, for a final dataset of 51 articles. Given the small number of articles involved, we did not consider it necessary to conduct quantitative inter-coder reliability checks, both because such statistics are prone to fluctuation with small samples and because each article was read and discussed by both coders, with disagreements resolved in person following collaborative reading and re-reading of articles.
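The first-round procedure amounts to comparing two independent sets of include/exclude decisions and sorting articles into agreed inclusions, agreed exclusions and disagreements. The sketch below shows one way this comparison could be done. The function name, article identifiers and decisions are hypothetical, and the study itself used manual colour-coding and discussion rather than a script.

```python
def reconcile(coder_a: dict[str, bool], coder_b: dict[str, bool]):
    """Split articles into agreed inclusions, agreed exclusions and disagreements.

    Each dict maps an article ID to True (include) or False (exclude).
    """
    if coder_a.keys() != coder_b.keys():
        raise ValueError("Both coders must rate the same set of articles")
    agreed_in, agreed_out, disputed = [], [], []
    for article_id in sorted(coder_a):
        a, b = coder_a[article_id], coder_b[article_id]
        if a != b:
            disputed.append(article_id)    # goes to a second round of discussion
        elif a:
            agreed_in.append(article_id)
        else:
            agreed_out.append(article_id)
    return agreed_in, agreed_out, disputed


# Toy decisions with hypothetical IDs (True = include).
coder_a = {"A01": True, "A02": False, "A03": True, "A04": False}
coder_b = {"A01": True, "A02": False, "A03": False, "A04": False}
agreed_in, agreed_out, disputed = reconcile(coder_a, coder_b)
```

In this toy example, one article is an agreed inclusion, two are agreed exclusions, and one is carried forward for discussion.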

Each article was then manually re-read in its entirety (including the abstract, introduction, research questions, methods, results, etc.) to determine: (a) country/region and language focus (where not clear from the metadata); (b) stated research aims; (c) research methods and procedures, including feedback type(s) (WF, WCF and AWE) and feedback timing (pre-writing, during-writing, post-writing); and (d) reported study findings. The researchers also made notes on identified (or author-disclosed) methodological limitations, for example, small participant samples, lack of control group in experimental designs, or limited information about GenAI prompts used in each study.
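The coding categories described above can be thought of as one record per study, from which frequency counts are then tallied. The following sketch illustrates such a record and a simple tally. The `CodedStudy` class, its field names and the two example entries are hypothetical; they are not drawn from our dataset or coding sheet.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class CodedStudy:
    """One coded article; fields mirror categories (a) to (d) plus limitations."""
    citation: str
    region: str
    l2: str
    aims: list[str]
    methods: list[str]
    feedback_types: list[str]  # any of "WF", "WCF", "AWE"
    timing: list[str]          # any of "pre-writing", "during-writing", "post-writing"
    findings: str = ""
    limitations: list[str] = field(default_factory=list)


def tally(studies, attribute):
    """Count how many studies carry each value of a coded attribute."""
    counts = Counter()
    for study in studies:
        value = getattr(study, attribute)
        counts.update(value if isinstance(value, list) else [value])
    return counts


# Hypothetical entries, invented for illustration only.
studies = [
    CodedStudy("Hypothetical A (2024)", "Region X", "English",
               aims=["writing quality"],
               methods=["pre-test/post-test", "survey"],
               feedback_types=["WF"], timing=["post-writing"]),
    CodedStudy("Hypothetical B (2025)", "Region Y", "English",
               aims=["perceptions"],
               methods=["interview", "survey"],
               feedback_types=["WCF"],
               timing=["during-writing", "post-writing"],
               limitations=["prompts not reported"]),
]
method_counts = tally(studies, "methods")
timing_counts = tally(studies, "timing")
l2_counts = tally(studies, "l2")
```

Because a study can use several methods or feedback timings, multi-valued fields are stored as lists, so category counts may sum to more than the number of studies.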

Results

Publication Outlets

The dataset includes 35 distinct publication outlets. Journals with multiple GenAI and L2 WF studies include

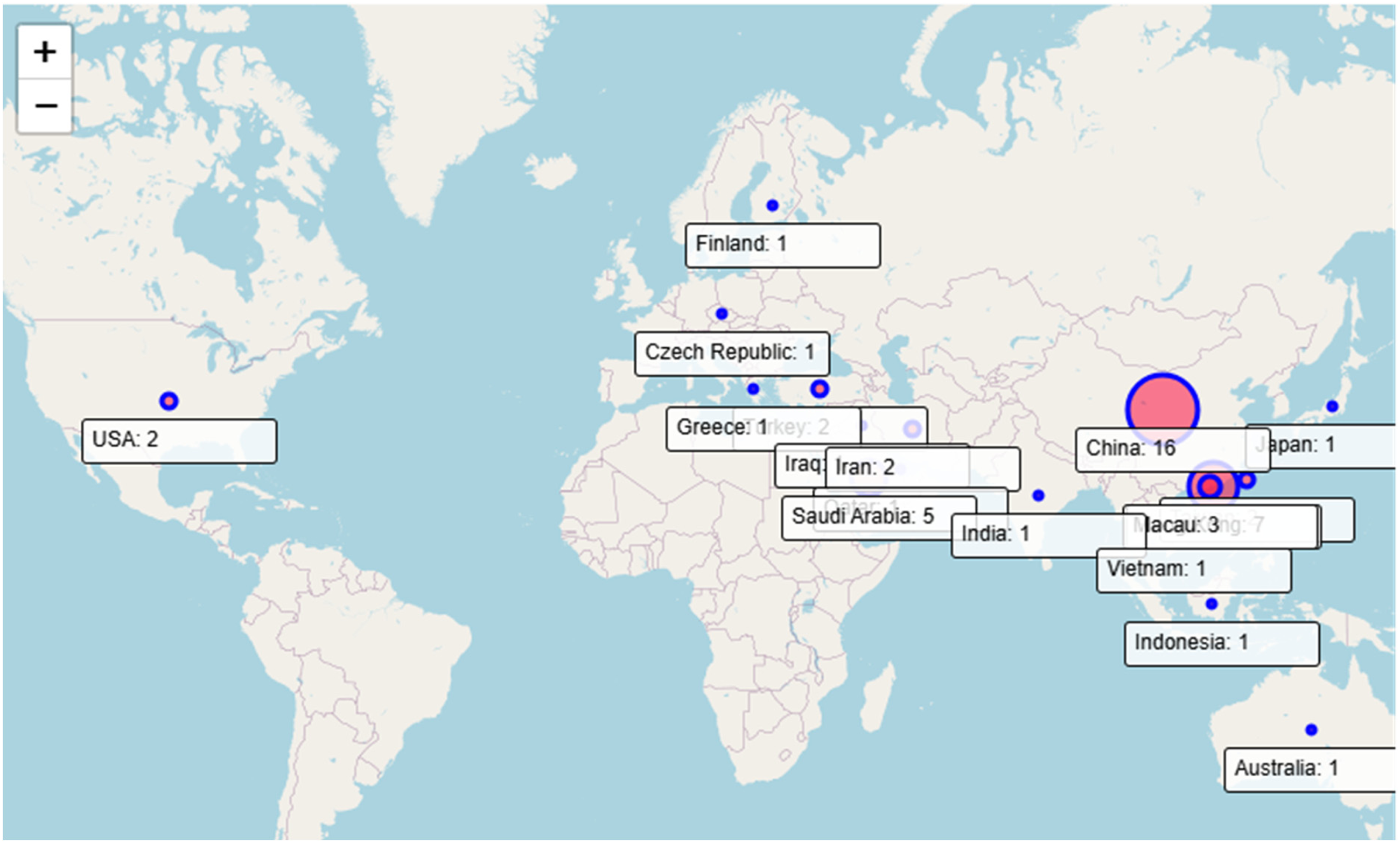

Country/Region and Language Focus

Most studies targeted L2 English (

The research was mostly conducted in Mainland China (

Geographical Location of Featured Generative Artificial Intelligence Second Language Written Feedback Studies.

Research Aims

A diverse set of research aims and questions were present across the studies in our dataset, which can be grouped into several overarching themes.

Most studies (

A significant number of studies (

Eleven studies compared the effectiveness, quality, or uptake of AI-generated WF versus teacher or peer feedback (e.g., Asadi et al., 2025; Guo and Wang, 2024; Lin and Crosthwaite, 2024; Zou et al., 2025). Aims included evaluating differences in feedback types (e.g., direct, indirect and metalinguistic) and their impact on revision practices and writing outcomes.

Relatedly, several studies (

A smaller number of studies (

Finally, a few studies explored specific or novel use cases of AI feedback. These included its role in brainstorming or enhancing coherence and grammar (e.g., Arifin et al., 2024; Su et al., 2023), the effects of prompting strategies on feedback quality (e.g., Tam, 2024) and comparisons between collaborative and individual engagement with AI feedback (Yan, 2024). A small subset (

Research Methods and Procedures

Feedback Type and Timing

Thirty-two studies were classified as involving GenAI-produced WF, with 11 studies identified as featuring exclusively WCF. Four studies explicitly combined WF and WCF, with three explicitly combining AWE grades with WF. With relation to feedback timing, most studies provided feedback post-writing (

Research Methods

Many studies (

Surveys were also used in 16 studies to collect data on student or teacher perceptions, attitudes, motivation, or feedback literacy (e.g., Abduljawad, 2024; Guo and Wang, 2024; Teng, 2024). Interviews, often semi-structured, were also employed in 16 studies to explore perceptions, experiences, or challenges with AI feedback (e.g., Arifin et al., 2024; Kurt and Kurt, 2024; Zou et al., 2025), providing in-depth qualitative data on engagement or feedback uptake.

Observation was used to study student interactions with AI feedback or classroom dynamics (e.g., Abduljawad, 2024; Arifin et al., 2024; Yan, 2024). Often, this complemented other methods such as interviews or surveys. Stimulated recall sessions, where participants reflected on their writing or feedback processes (often while reviewing drafts or screencasts), were used to explore cognitive and behavioural engagement (e.g., Koltovskaia et al., 2024; Long, 2024; Yeung, 2025).

Several studies (

Study Findings

Many studies (

A subset of studies compared the effectiveness of AI feedback with teacher feedback. Several studies found no significant difference between AI and teacher feedback in improving writing (e.g., Alsofyani and Barzanji, 2025; Escalante et al., 2023), while others reported teacher and AI feedback as complementary, particularly for organization or specific error types (e.g., Luo et al., 2025; Zou et al., 2025). Several studies noted that GenAI provided more reformulation or metalinguistic feedback than teachers, although this feedback was often redundant or less relevant for content (e.g., Li et al., 2024; Lin and Crosthwaite, 2024). Notably, combining AI and teacher feedback was reported to be more effective than either alone for improving writing quality or addressing diverse error types (e.g., Asadi et al., 2025; Han and Li, 2024; Luo et al., 2025).

Students and teachers generally reported positive perceptions of AI feedback, citing benefits such as motivation, practicality, interactivity, or independence (e.g., Abduljawad, 2024; Kurt and Kurt, 2024; Naz and Robertson, 2024). Some studies, however, noted mixed or negative perceptions, including confusion, mistrust and concerns about over-reliance on AI (e.g., Chen et al., 2025; Escalante et al., 2023; Lo et al., 2025). AI feedback was seen to enhance engagement (behavioural, cognitive and affective) and feedback literacy, with students showing increased motivation, self-efficacy, or collaborative tendencies (e.g., Koltovskaia et al., 2024; Rad et al., 2024; Teng, 2024). However, some studies noted superficial engagement with or reliance on AI, which reduced creativity or agency (e.g., Shi et al., 2025; Zhan and Yan, 2025). Students preferred AI feedback over peer feedback for editing/proofreading (e.g., Allen and Mizumoto, 2024) or used AI for brainstorming, lexis and coherence (e.g., Arifin et al., 2024). In such cases, AI was perceived as functioning like a personal tutor, although the effectiveness of this role often depended on how the feedback was prompted (e.g., Tam, 2024).

Regarding revisions, studies found that students often accepted and incorporated AI feedback, particularly for form-related corrections (e.g., grammar and lexis), but were less likely to revise content-related feedback (e.g., Chen et al., 2024; Long, 2024). Selective uptake was noted when feedback was excessive or unclear, and uptake varied by proficiency or technological competence (e.g., Tran, 2025; Yan and Zhang, 2024).

Finally, studies assessing the accuracy and reliability of AI feedback reported generally strong performance in detecting and correcting errors in English, but less so in other languages such as Greek or Chinese (e.g., Fokides and Peristeraki, 2024; Yang and Chen, 2025). Other limitations included inaccuracies, misinterpretation of author intent, or errors/hallucinations (e.g., Alsaweed and Aljebreen, 2024; Naz and Robertson, 2024).

Identified Issues

Recurring methodological limitations indicated challenges in ensuring robust study designs in our dataset. Particularly, assessments of ‘writing quality’ often did not relate to the feedback sought from GenAI (e.g., Escalante et al., 2023; Mahapatra, 2024; Lo et al., 2025), with a reliance on self-reported proficiency or skills in some studies (Abduljawad, 2024). Small sample sizes or limited participants were explicitly noted in a few studies (e.g., Tran, 2025,

Discussion and Conclusion

This short review article has outlined the aims, methods, findings, and limitations of 51 published studies on GenAI-assisted written (corrective) feedback for L2 learning and teaching. Overall, the data reveal a rapidly growing and geographically diverse body of research into GenAI-generated feedback on L2 writing (at least for English L2 writing) that employs varied research designs to examine writing improvement, engagement and perceptions. Findings suggest that AI-generated (corrective) feedback supports improvements in linguistic accuracy and learner engagement, though issues remain around methodological consistency, feedback relevance, prompt transparency and the balance between AI and human input. Our synthesis confirms some of the broad trends also noted by M. Li (2024) and S. Li (2025), such as differences between GenAI and teacher feedback and the mixed perceptions of students and teachers. However, unlike these broader reviews, our study contributes a dedicated and systematic analysis of GenAI-generated WF for L2 writing. By focusing exclusively on feedback, we were able to categorize studies by feedback type (WF, WCF and AWE), timing (pre-writing, during-writing and post-writing), research aims, methods and outcomes, providing a level of granularity not available in earlier syntheses. Furthermore, our scoping review highlights issues that have not been systematically charted before, including the lack of prompt transparency, the predominance of English over other L2s, and the under-exploration of pre-writing and during-writing feedback. In doing so, this study moves beyond the descriptive overviews of previous reviews to establish a more comprehensive evidence base that can inform both future empirical research and the practical integration of GenAI feedback in L2 writing pedagogy.

Regarding pedagogical implications, the findings highlight the potential of AI-generated feedback, particularly from tools such as ChatGPT, to enhance L2 writing instruction by supporting individualized, scalable and targeted feedback across diverse learner needs. These insights can inform language education policies that integrate AI responsibly into curricula, promote teacher training in AI literacy and guide best practices for balancing human and machine feedback.

We identify six priorities for future research. First, a meta-analysis is needed of GenAI L2 feedback studies dealing with ‘writing quality’ or improvements to other writing skills. Such an analysis could help disentangle evidence-based critiques from uncritical enthusiasm by identifying where and how GenAI feedback genuinely contributes to writing development, and where its limitations remain. Second, more attention needs to be paid to the links between revisions arising from GenAI feedback and said ‘writing quality,’ as many studies have not yet taken this into account. Third, far more studies are needed on L2s other than English. Fourth, we strongly recommend that all GenAI-related studies on L2 WF (and more generally) include, as a matter of course, information on the prompts used, given the crucial importance of prompts for all aspects of GenAI output. Fifth, more studies investigating GenAI feedback use at pre-writing and during-writing stages are needed. Finally, a move from self-reported or rater-led data sources to online methods including screencasts, etc., can help capture more authentic, process-oriented insights into how learners engage with GenAI feedback in real time, revealing patterns that may be overlooked in retrospective accounts.

In terms of the study's limitations, due to space requirements for

Footnotes

Ethical Approval and Informed Consent Statements

This study received an ethics waiver from the University of Queensland as it does not involve human subjects and all data were drawn from publicly available sources.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interest

The authors declare no conflicts of interest in the submission or publication of this scoping review.

Data Availability Statement

Data is available upon request from the authors.