Abstract

This paper explores the literature related to literacy assessment in the early years of schooling in an era of neoliberalism and reports on a key aspect of a study that focused on the literacy assessment practices of early years teachers and literacy leaders in Australian Catholic schools within the Melbourne archdiocese. Background: The study was undertaken in the period following the Melbourne Catholic Education system’s devolution of literacy assessment responsibility to schools, which came after a long period of mandated literacy assessment (1998–2012). However, it also occurred within a neoliberal high-stakes assessment environment characterised by heightened levels of teacher accountability. Research Objectives: The research aimed to explore the literacy assessment beliefs and practices of teachers in early years classrooms (F–2), the impacts of assessment devolution, and the implications of this devolution for literacy assessment practices in the early years in Australian Catholic primary schools in the Melbourne archdiocese. Methods: A predominantly qualitative, two-phase case study approach was used to explore literacy assessment in the early years of schooling. Phase 1 consisted of an online questionnaire comprising both open and closed questions, which was completed by 76 literacy leaders from 76 Catholic schools in the Melbourne archdiocese. The questionnaire was designed to elicit information on schools’ literacy assessment practices in the early years and whether these had changed since a 2012 Catholic Education Melbourne (CEM) policy change that gave schools greater autonomy over literacy assessment in the early years. Phase 2 involved semistructured interviews with 23 early years teachers and seven literacy leaders from eight diverse schools to investigate their literacy assessment practices in greater detail and to identify how they responded to the opportunities that came with devolved decision-making.

The literature: Neoliberalism, literacy assessment and the early years of schooling

Currently, there is a focus in education on measuring literacy numerically through a range of international, national, and local mechanisms. Schools face increased accountability, characterised by the centrality of high-stakes testing such as the Organisation for Economic Co-operation and Development (OECD) Programme for International Student Assessment (PISA), the Progress in International Reading Literacy Study (PIRLS), and the Australian National Assessment Program—Literacy and Numeracy (NAPLAN).

In neoliberal terms, literacy has become a valued commodity, one that needs to be measured to demonstrate learning and to ensure children can eventually contribute economically to society. Savage (2017) stated, “Education, therefore, is now framed and justified in policy as primarily a site for building human capital and contributing to economic productivity, from the early childhood years, right through to the tertiary level” (p. 150). Additionally, in a historical overview of literacy policy, Fehring and Nyland (2012) posited, “The neo-liberal discourse of literacy as human capital is very evident as the dominant ideology framing major curriculum initiatives being promoted” (p. 8). Neoliberalism has shifted the focus of education from “personal transformation as well as being part of a social justice ideology” (Fehring and Nyland, 2012, p. 9) to economic productivity. In the current climate, neoliberal policies are shaping curriculum, pedagogy, and assessment, and the early years of schooling are not immune.

Neoliberalism is described as a political ideology that focuses on competition and free markets and is associated with globalisation. In recent decades, policies based on neoliberalism have become dominant in education (Broom, 2012). In a neoliberal model, free market economic principles are applied to education in ways that encourage parents’ and students’ choice of schools, assess students using standardised assessments that call mostly for factual recall, promote competition through the public rating and ranking of schools, and ensure compliance through such means as accountability contracts and standards (Broom, 2012, p. 17). Hursh (2007) summarised the neoliberal rationale:

Proponents of neoliberalism see it as necessary to increase educational efficiency within a world in which goods, services, and jobs easily cross borders. Increased efficiency can only be attained argue neo-liberals, if individuals are able to make choices within a market system in which schools compete. (p. 498)

Neoliberal ideology is found in the education policies of many countries, including the United States, the United Kingdom, and Australia. Supporters of neoliberalism believe that competition improves education and that quantifiable information from standardised tests needs to be made available to inform decision-making (Hursh, 2007; Reid, 2010, 2019). This includes giving parents access to schools’ assessment data to assist them in choosing a school for their child. However, the benefits of competition in education have been called into question. Reid (2010) noted that competition creates winners and losers, with some schools always at the top and others always at the bottom. He stated, “Far from promoting transparency by encouraging openness, collaboration and rigour across an education system, the high-stakes test approach closes this down, by fostering a competitive jockeying for position between individual schools” (p. 18).

Further, Matters (2005) contended that the demand to improve decision-making through data arises because we are living in an age of accountability facilitated by new technology. In a critique of neoliberalism’s role in education, Matters (2005) also noted the government’s approach to monitoring teachers and schools to ensure students are educated to the highest possible standards so that they have a competitive edge when they enter the workforce; this requires schools to be accountable and to ensure standards are met. If individuals have this competitive edge, they are less likely to be out of work for extended periods, and the chances of them relying on the government for welfare are therefore decreased. Collecting data from schools is regarded as essential proof of learning, as is making data available to parents to assist in school selection (Pratt, 2016). However, data are being “used by” and even “used on” teachers to facilitate changes in school outcomes (Pratt, 2016, p. 897). In these neoliberal times, data have become a valued commodity.

Additionally, the “system of testing and accountability permits the state to determine the goals and output without directly intervening in the process itself, thereby reducing resistance” (Hursh, 2007, pp. 28–29). Or, as Ball (1990) stated, governments control from a distance: there is a perception of school autonomy, but the process of testing and reporting assessment data to the public means teachers have no real choice but to engage with the data game.

The standardisation of literacy teaching and assessment in the early years

Between 1998 and 2012, Australian Catholic primary schools in Melbourne, Victoria, implemented an approach to literacy designed to improve outcomes by providing consistency of practice and assessment in early years classrooms. The Children’s Literacy Success Strategy (CLaSS) was an initiative of the Catholic Education Commission of Victoria (CECV) and the Centre of Applied Educational Research, Faculty of Education, at the University of Melbourne, and was based on research by Crévola and Hill (2005). The approach was modelled on the Victorian Department of Education’s Early Years Literacy Program, which was implemented in Victorian state schools (Department of Education, Victoria, 1997). CLaSS and the Early Years Literacy Program were grounded in the principle that schools have a “narrow window of opportunity” (Crévola and Hill, 2005, p. 4) to improve student literacy learning outcomes, and that early intervention is essential because children who fail to make progress in the first 2 years of schooling rarely catch up with their peers.

As part of the CLaSS project, each school was required to appoint a literacy coordinator to ensure successful implementation. The Catholic system provided schools with additional funding to support the role of the literacy coordinator, as well as targeted ongoing professional learning for literacy coordinators, early years teachers, and principals. The literacy coordinator role was seen as pivotal for improving literacy teaching, learning, and assessment, with the coordinator acting as a coach and mentor to early years teachers. The title of coordinator was subsequently changed to literacy leader in recognition of the importance of leading literacy change in schools (Fullan et al., 2006). During the CLaSS period (1998–2012), all early years teachers, literacy leaders, and school principals participated in professional learning facilitated by Hill and Crévola and literacy staff from the Catholic Education Office Melbourne. The professional learning was designed to build principals’ and literacy leaders’ capacity to lead literacy improvement, and early years teachers’ capacity to teach and assess literacy to ensure early literacy success.

Internationally, in the late 1990s, an approach similar to CLaSS and the Early Years Literacy Program was adopted in the United Kingdom. The National Literacy Strategy (NLS) required schools in the United Kingdom to implement a structured daily 1-hour reading session. All three approaches (CLaSS, the Early Years Literacy Program, and the NLS) shared a standardised approach to literacy teaching and the use of mandated literacy assessments as evidence of student learning. Additionally, all three approaches saw an increase in students’ reading scores in the initial years of implementation; however, these results subsequently plateaued, and the approaches were eventually dropped. In the Victorian context, CLaSS and the Early Years Literacy Program were not replaced by anything specific, but the changes to the National Literacy Strategy in the United Kingdom were more significant.

According to Moss (2017), the dismantling of the NLS was politically motivated. The National Literacy Hour had received widespread condemnation and criticism after results plateaued. A government review, led by a proponent of phonics, resulted in the NLS being dropped in favour of a phonics-first approach to the teaching and assessing of reading in the early years. Moreover, Moss (2017) argued that the dismantling in 2010 of the National Literacy Hour in the United Kingdom, an approach very similar to CLaSS, was problematic. She stated that teachers had a familiar way of working and assessing stripped from them, virtually overnight, with very little guidance provided to support them through the change:

The department had stepped back from its role as the authoritative and reliable source of support for schools. Schools were on their own now, acting as purchasers in a market for system improvement resources, choosing their own path, and following their own ideas, making their own fixes, and thus, of course, free to make their own mistakes too. (Moss, 2017, p. 61)

In 2012, Catholic Education Melbourne (CEM) moved from the long period of prescriptive, mandated literacy assessment that was part of the CLaSS approach (1998–2012) to allowing schools much greater autonomy in terms of literacy assessment in the early years. However, the literacy assessment autonomy occurred in a climate characterised by high levels of accountability in relation to mandated government testing, reporting against mandated curriculum standards, and reporting of data to the wider community.

Teacher assessment autonomy in an era of accountability

Butland (2008) called for Australian policymakers to learn from the mistakes of other countries and to support teachers to feel confident in contextualising student learning, providing a broad curriculum, and ensuring assessment is linked with learning (p. 26). Moreover, Hargreaves (2010) called for teachers’ professional judgement to be respected, like that of a doctor, noting that data matter but so, too, does teacher judgement (p. 58). In the current climate, with its focus on standardised, quantitative data, the importance of teacher judgement is often overlooked. Reid (2019) argued that we need to change the current discourse, which lacks support for teacher judgement. He stated, “It is the professional judgement of teachers made in the context of their teaching that not only provides the best way to support student learning, but also the best evidence for system-wide accountability” (pp. 298–299).

Enabling teachers to use their professional judgement in the process of assessing students’ literacy requires a level of autonomy. The importance of teacher autonomy is well documented in the literature (Butland, 2008; Caldwell, 2016; Hargreaves, 2010; Phelan, 1996; Pitt and Phelan, 2008). If the aim is to create autonomous students, autonomous teachers are required (Larson, 2011; Pitt and Phelan, 2008). Autonomy is defined by Pitt and Phelan (2008) as the ability to “think for oneself in uncertain or complex situations in which judgement is more important than routine … Teaching involves placing one’s autonomy at the service of the best interests of children” (pp. 189–190). The belief that high-performing teachers are required to produce high-performing students (Appel, 2019) has resulted in key performativity measures (Ball, 2003) and teacher surveillance mechanisms, which include teacher standards, prescriptive curriculum, and standardised testing, all of which curtail teachers’ autonomy. Strong and Yoshida (2014) described autonomy as the aspect of their teaching that teachers desire most. However, autonomy is difficult to achieve in an era of high accountability. A large-scale quantitative study in the United Kingdom, in which 1,100 teachers completed an online questionnaire about their perceived autonomy, found that teachers had lower professional autonomy than other professions, with teachers reporting particularly low autonomy over assessment and data collection. The researchers reported that school policies were restraining teachers’ assessment autonomy (Worth and Van Den Brande, 2020).

Performative measures affect teachers’ autonomy in developing and carrying out meaningful assessment in their day-to-day teaching. Moreover, according to Popham (2006), a staunch advocate of assessment for learning (AfL), a focus on summative assessment using mandated assessments is causing an erosion of AfL in the classroom. AfL occurs throughout the teaching and learning cycle and results in statistically significant gains in student learning (Black and Wiliam, 1998; Organisation for Economic Co-operation and Development [OECD], 2005). Popham (2006) argued that in the current high-stakes testing climate, with its focus on accountability and scores, teachers find it difficult to maintain fidelity to AfL and abandon it in order to teach to the test and improve scores (p. 2). He is not alone in this argument: Darling-Hammond and Adamson (2010) stated that high-stakes testing can limit teachers’ instruction, resulting in teachers emulating the content and format of the test. They discussed the limitations of multiple-choice-style summative assessments and called for a much broader view of assessment. Similarly, Stiggins (2008) noted that although high-stakes testing serves accountability purposes, the obsession with this form of assessment has done education no favours in narrowing the so-called gap it was designed to narrow, and he called for a refocus on the assessment that matters, AfL, to truly narrow this gap. Adoniou (2018) also criticised the focus on assessment at the expense of teaching, calling for a renewed focus on teaching, which she argued has been lacking for the past 10 years. She acknowledged a need for teachers to be accountable, but not at the expense of teaching:

Of course teachers should be accountable, they have a hugely important job—the education of Australia’s future. However, it is unfair to be held accountable to a regime you have had little to no say in. And for the last decade all the talk has been about testing, and nobody has been talking about teaching. (para. 5)

Assessment knowledge and beliefs

The importance of teachers’ knowledge of assessment is well documented by theorists and researchers (DeLuca and Klinger, 2010; Mertler, 2004; Popham, 2006; Stiggins, 1999). However, according to Meyers et al. (2009), there is a recognised tension between the knowledge that teachers produce from inside the classroom and the knowledge produced by researchers and policymakers. In the Australian context, the Australian Institute for Teaching and School Leadership (AITSL, 2018) has set out professional standards for teachers to ensure high-quality learning and teaching for all students. The seven standards describe practice that teachers demonstrate at increasing levels of proficiency. Standard 5 relates directly to assessment and states that teachers need knowledge of assessment so that they can effectively assess student learning, provide feedback to students on their learning, and make consistent and comparable judgements. The continuum recognises that these skills develop over time as teachers progress from graduate teachers to lead teachers.

The terms “assessment-capable teachers,” “assessment knowledge,” and “assessment literacy” are used interchangeably throughout the literature, without great differentiation, to discuss the knowledge and dispositions educators possess in relation to assessment and how these are used in their practice (Mertler, 2004). Willis et al. (2013) acknowledged the lack of clarity in the field and provided the following definition of assessment literacy:

Assessment literacy is a dynamic context dependent social practice that involves teachers articulating and negotiating classroom and cultural knowledges with one another and with learners, in the initiation, development and practice of assessment to achieve the learning goals of students. (p. 2)

There is a consistent expectation that teachers have an excellent knowledge of assessment practices and tools and of how these can be used to improve student learning (Claveric, 2010). However, teachers’ ability to assess effectively has often been called into question. Some lament the lack of teacher knowledge around assessment: “There is a wealth of research evidence that the everyday practice of assessment in the classroom is beset with problems and shortcomings” (Black and Wiliam, 1998, p. 5). Likewise, Mertler (2006) posited that teachers lack the fundamentals of assessment and that this affects their ability to use assessment to improve learning and motivate students. This deficit view of teachers’ ability to assess effectively is widely held by experts in the area (Masters, 2013; Popham, 2006; Stiggins, 1991). Griffin (2014) went as far as saying that curriculum specialists do not have the requisite knowledge to be assessment experts. He noted the success of specialist programs facilitated by the Assessment Research Centre at the University of Melbourne in building teachers’ assessment knowledge, citing demonstrated improvements in student levels across 400 schools (p. 1).

Research over a 30-year period has identified that teachers themselves doubt their assessment knowledge and express a desire for further assistance in the area: “In short, across multiple studies conducted over decades, a significant proportion of teachers report uncertainty or a desire for improvement in their assessment practices” (Kim and Young, 2010, p. 6). A large-scale empirical study undertaken by Lysaght and O’Leary (2017) involved 594 primary school teachers from 42 schools in the Republic of Ireland, who completed a self-assessment questionnaire to audit their own assessment capacity. The data revealed an urgent need to develop teachers’ assessment literacy (p. 271). The researchers proposed that this capacity building should take place on site in schools rather than through large-scale external professional learning.

Others hold a more positive view of teachers’ assessment knowledge (e.g., Goertz et al., 2009; Howley, 2013; Leighton et al., 2010), positing that teachers possess a sophisticated, grounded knowledge of assessment. According to Howley (2013), this knowledge base consists of theoretical ideas about assessment as well as practical logistics for linking assessment to teaching and learning, and it should be taken seriously when researching and discussing assessment literacy.

Many studies have illustrated that teachers’ knowledge of assessment can be built by working with an “expert” (Black et al., 2003; Goe and Mardy, 2008), and some recognise that teachers can build their knowledge of assessment through rigorous dialogue around evidence in professional learning team (PLT) meetings (Griffin, 2010, 2014; Meyers et al., 2009). Assessment literacy is built when teachers reflect on their practice (Howley, 2013). Teachers who possess assessment literacy do not blindly implement what they are “told” by experts; they adjust their assessment practices to suit the context (Howley, 2013). The system in which teachers work also plays a role in supporting teachers to build their assessment knowledge. Wylie et al. (2009) noted that teacher professional development is insufficient without considering the larger system in which teachers work; administrators need to support their teachers’ growth within that larger systemic context.

Bradbury and Roberts-Holmes (2018) conducted four qualitative research projects between 2008 and 2017 that focused on classroom assessment practices in the first year of schooling in the United Kingdom. Their findings indicated that a focus on assessment and quantifiable data had filtered down and was having a negative impact in the early years. They discussed the “datafication” of the early years, a term used to describe the dominant production and use of data with young children and its impact on both children and teachers. Their research also indicated that datafication resulted in a narrow approach to teaching and assessing literacy. Bousfield and Ragusa (2014) also discussed datafication as leading to an “adultification” of childhood, describing it in the Australian context as children being subjected to developmentally inappropriate expectations, pressure, stress, and precocious knowledge in response to NAPLAN testing and reporting:

Adultification, we argue, is a side-effect of individualisation, managerialism and neo-liberal government policy played out in Australian schools and exposing children to the harsh realities of political, economic and social life. (p. 170)

The following section of this paper reports on research that investigated how early years teachers responded to the system’s devolution of the literacy assessment that had been part of the CLaSS approach. Interestingly, theorists have contended that a devolved approach is difficult to achieve in an era characterised by a high level of accountability (Ball, 2003; Hursh, 2007; Reid, 2010). Ball (2003), drawing on the work of Du Gay (1996), raised the question of whether there can ever be true control in education, describing a “controlled de-control” (Ball, 2003, p. 217) and further noting that there is only an appearance of freedom in a devolved environment. Deregulation in an era characterised by neoliberal policy would be better described as reregulation, a mere shifting of sands (Ball, 2003). Additionally, Farris-Berg (2014) reiterated Ball’s (2003) and Du Gay’s (1996) argument, stating, “Many teachers say they control what happens in their classroom within the boundaries of policies that have already been determined by other parties” (para. 9). The devolution of assessment responsibility by the Catholic system to teachers afforded greater opportunity for autonomy in the early years, but this autonomy occurred in an era characterised by high accountability due to a range of key neoliberal policies.

Children’s Literacy Success Strategy

At the commencement of CLaSS, standards and targets were set in relation to students’ reading achievement in the first 2 years of schooling. Teachers were required to assess students at both the beginning and end of the school year using standardised literacy assessment tools. A system requirement was for assessment results to be reported to CEM via an online portal. The online portal also allowed schools to compare their literacy assessment results to schools with similar enrolment demographics (“like schools”) and was used as a mechanism for the system to monitor the literacy performance of schools.

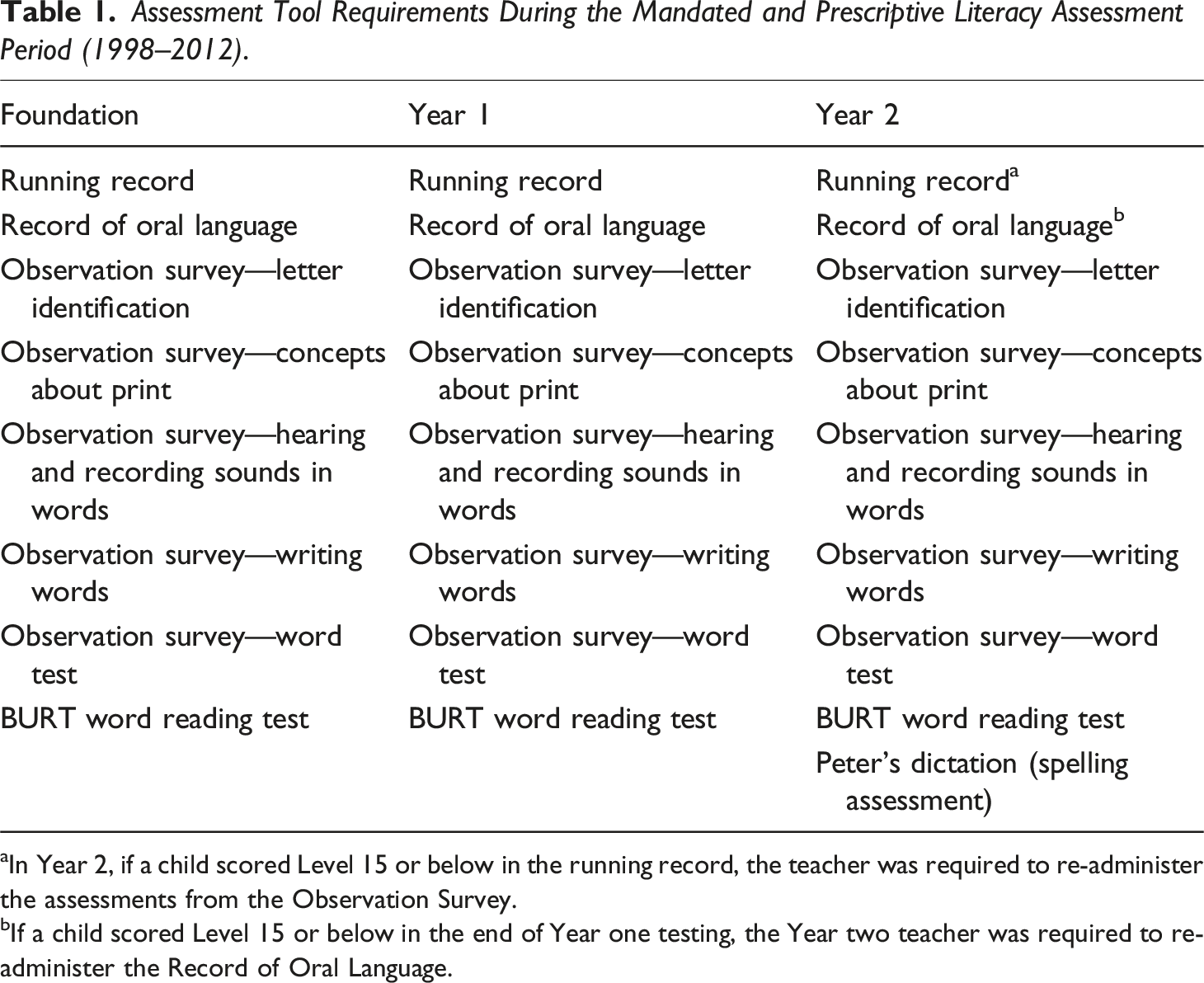

Assessment Tool Requirements During the Mandated and Prescriptive Literacy Assessment Period (1998–2012).

In Year 2, if a child scored Level 15 or below in the running record, the teacher was required to re-administer the assessments from the Observation Survey.

If a child scored Level 15 or below in the end of Year 1 testing, the Year 2 teacher was required to re-administer the Record of Oral Language.

The Observation Survey was designed to be completed in its entirety, enabling the teacher to gain a more holistic picture of a student’s literacy strengths and needs (Clay, 2005). It was originally designed by Clay (1993) to be used with children who had completed 12 months at school, but from 1998 to 2012, CEM prescribed its use for children in Years 1 and 2 as well as in the first year of school (Foundation level). In addition to the Observation Survey, teachers were required to administer the Record of Oral Language (Clay et al., 2007), and CEM also mandated the BURT Word Reading Test (Gilmore et al., 1981) prior to 2012. Once children were in Year 2 and could decode text at Level 15, teachers were no longer required to administer the Observation Survey but continued to administer a word reading test (Gilmore et al., 1981) and a dictation task to assess spelling (Peters, 1975).

In 2008, after 10 years of CLaSS, CEM established a focus group to consult with principals and literacy leaders from 10 schools to review the literacy assessment practices of Catholic primary schools in the Melbourne archdiocese. I worked with the focus group during 2008, and the leadership teams from the 10 schools articulated that the CLaSS approach to assessment was overly complex, time-consuming to administer, insufficiently attentive to differing school contexts, and did not inform teaching. Participants in the focus group reported that they were not using the data collected from the mandated CLaSS literacy assessments to inform teaching; rather, they largely saw the data collection as a requirement for the system. This tension, in which teachers use data for dual purposes (to inform teaching and improve learning, and for accountability), is well documented (e.g., Parr and Timperley, 2016). Because of this tension, it was decided that further research was required, and the Assessment Research Centre (ARC) at the University of Melbourne was engaged in 2011 to investigate all aspects of the CLaSS-mandated literacy assessment in the early years (F–2).

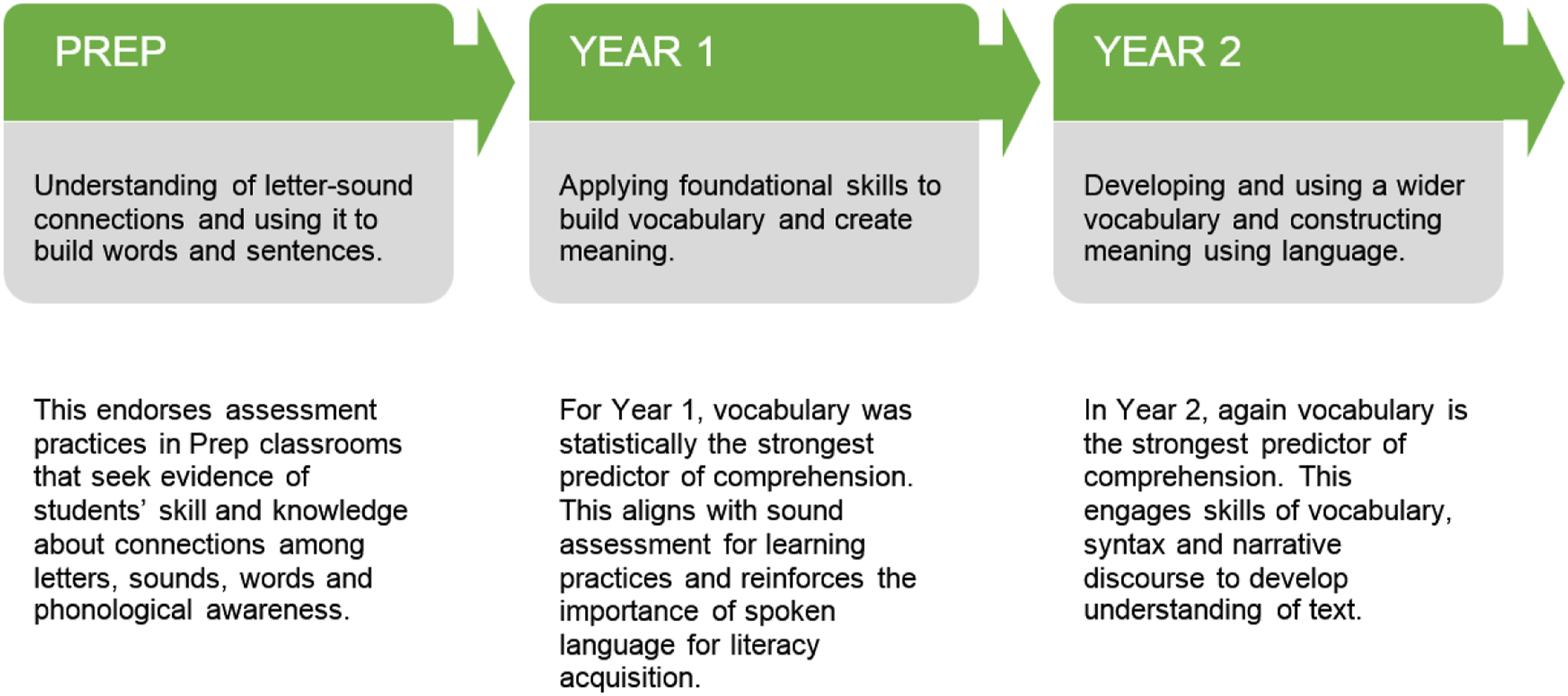

The ARC research involved 28 Catholic primary schools from across Victoria trialling a range of literacy assessment tools. The ARC recommended a focus on the skill development of students from Foundation to Year 2 and identified the component skills that contribute to reading comprehension in the early years, as depicted in Figure 1.

Figure 1 ARC component skills that contribute to reading comprehension. Note. Adapted from Report to Principals P–2 Literacy Assessment (p. 2) by Catholic Education Melbourne, East Melbourne: Catholic Education Melbourne. Copyright 2013. Adapted with permission.

The ARC recommended the teaching and assessment of both constrained and unconstrained literacy skills. The literacy testing during the mandated period was dominated by the assessment of constrained skills. Constrained skills have been identified as finite; that is, they can be assessed and have a ceiling. They are print and sound related and include knowledge of letters and sounds and an awareness of how print and books work (Dougherty Stahl, 2011). When the teaching and assessing of constrained skills are prioritised in the early years, less time is left to focus on unconstrained skills. Unconstrained skills, which include vocabulary knowledge, oral language (such as narrative telling and describing), and comprehension, have been identified as important in determining long-term literacy success (Snow and Matthews, 2016). They therefore also need to be prioritised in teaching and assessment in the early years, although they are much more difficult to measure because they are not finite.

The ARC report resulted in a seismic change in CEM policy around school-level literacy assessment and reporting. After 2012, instead of being compelled to implement a plethora of literacy assessments focused on constrained skills and to report the results to CEM, schools would only be required to collect two pieces of evidence to enable the system to track student achievement. Schools would still be required to administer the Record of Oral Language (Clay et al., 1993) at the beginning of the year and a running record (Clay, 2013) at the end of the year using the prescribed texts; all other literacy assessment decisions were at the school’s discretion. This marked a very strong pendulum swing from highly prescribed, centralised decision-making to decision-making that involved far greater choice and was more localised. It offered schools and teachers increased agency and empowerment in decision-making around literacy assessment.

Little was known about how schools responded to this devolution, or about the literacy assessment decisions they were making in the early years following the change in official system requirements. My research aimed to investigate the impact of this change on the literacy assessment practices of early years teachers.

Research design

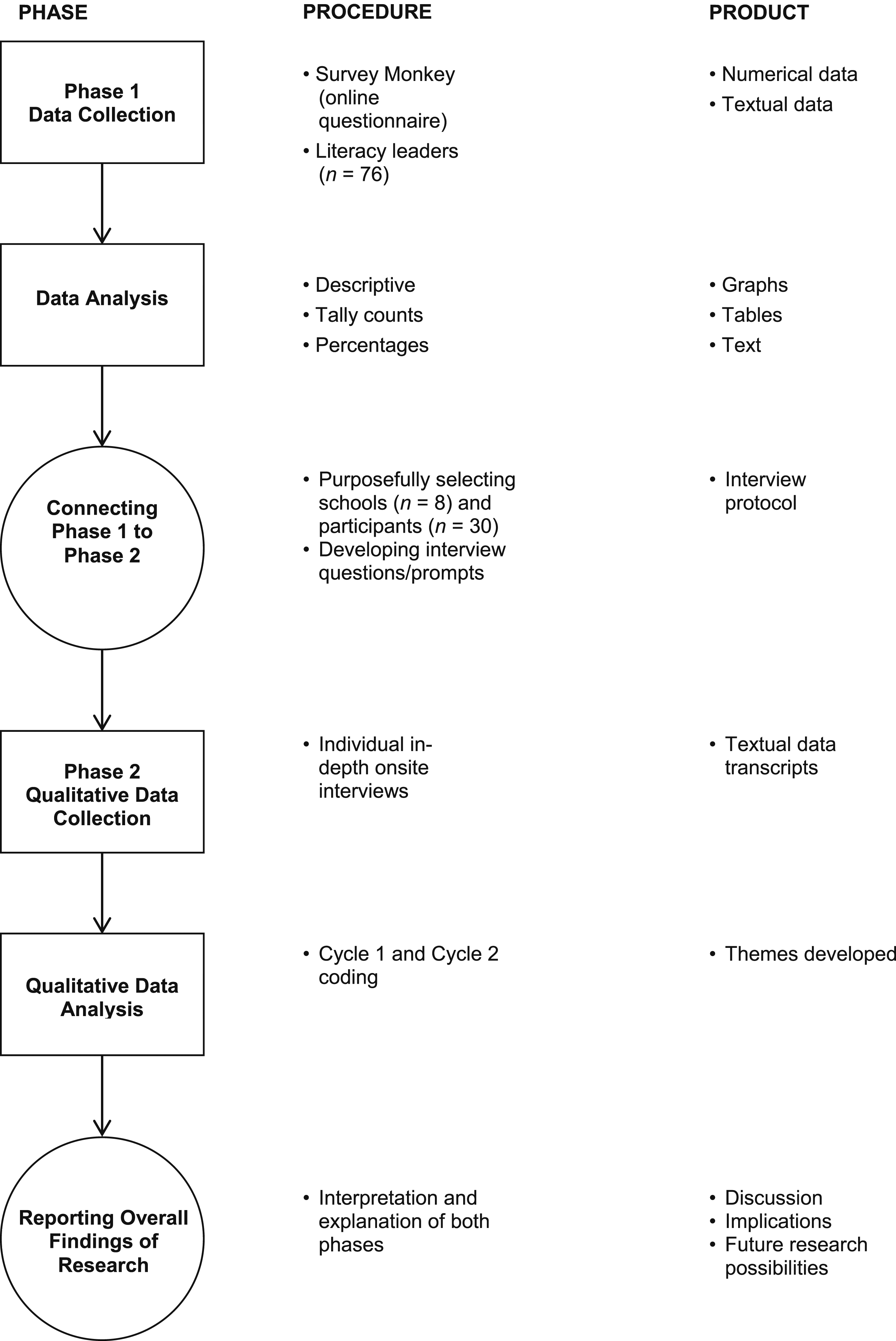

My research used a two-phase, predominantly qualitative mixed-methods sequential explanatory design (Ivankova, Creswell and Stick, 2006). A benefit of this design is that the two data collection phases build upon each other (Creswell, 2015). The sequential explanatory design has been identified as popular, but it is also recognised as challenging to conduct because it takes time to implement the two phases in sequence. A further challenge is identifying the results from the initial phase that require further explanation (Creswell, 2015, p. 38). Other considerations for researchers using a sequential explanatory design include how they prioritise the different data collection and analysis methods, how the two phases are connected during the research process, and how the results of both phases are integrated (Ivankova et al., 2006, p. 4).

Advocates of a sequential explanatory design recommend using a visual representation:

Such graphical modelling of the study design might lead to better understanding of the characteristics of the design, including the sequence of the data collection, priority of method and the connecting and mixing points of the two forms of data within a study. (Ivankova et al., 2006, p. 4)

Figure 2 Visual model for the two phases of the sequential explanatory design model.

Participants for both data collection phases were drawn from Australian Catholic schools in the Melbourne archdiocese. The aim of Phase 1 was to gather system-wide information, via an online questionnaire, about the impacts of assessment devolution and what this meant for literacy assessment practices in the early years in Australian Catholic primary schools in the Melbourne archdiocese. Phase 2 involved qualitative data collection in the form of semistructured interviews with literacy leaders and early years teachers in eight schools to enable a more refined explanation (Ivankova et al., 2006, p. 5) of the Phase 1 questionnaire results by exploring participants’ views on literacy assessment in the early years in greater detail.

Data collection phase 1 questionnaire

To gain a systemic picture of the devolution’s impact, I sent an outline of the research to the principals of the 110 Catholic primary schools in the Melbourne archdiocese, together with a consent form seeking each school’s involvement in both phases of the research. I also sought support from the Catholic Education regional managers, who promoted my research through their contact with schools.

Ninety-three principals gave permission for me to contact their schools’ literacy leaders and seek their engagement with the research. I contacted these literacy leaders, outlined the research to them, and invited them to complete the online questionnaire. Literacy leaders were chosen in the first instance to complete the Phase 1 questionnaire because their role in leading early years literacy learning had been prioritised during the mandated assessment period. A link to the questionnaire was emailed to the literacy leaders in the 93 schools. Of the 93 literacy leaders who expressed interest, 76 completed the questionnaire.

An online questionnaire was developed to collect as much information as possible on the impact of the devolution of assessment for schools. The questionnaire consisted of open and closed questions. Questions were designed to gain demographic information as well as more detailed textual and numerical information about each school’s early years literacy assessment practices.

The Phase 1 questionnaire was administered through the SurveyMonkey online survey tool and consisted of 19 questions. It elicited information from the literacy leaders about their schools’ assessment practices and determined what, if any, changes they had made to their assessment since the 2012 literacy assessment devolution.

The initial questions on the questionnaire were designed to gather demographic information on the schools and literacy leaders. This demographic information was important because it enabled me to explore whether school demographics such as size, location and the literacy leader’s experience were factors in how the schools recontextualised and reproduced official assessment policy.

The literacy leaders were asked to identify the literacy assessments they had retained from the mandated assessment period and those they no longer used. They were also asked to identify any additional assessments they had introduced since the devolution; this provided insight into how innovative schools had been as a result of the devolution.

I asked literacy leaders to identify the CEM literacy assessment professional learning activities they had engaged with as part of the devolution, to gain insight into how official messages from the system were recontextualised and reproduced at the school level. I also asked for their opinions on the support the system had provided to schools during the transition from the highly mandated assessment period to a period allowing schools much greater literacy assessment autonomy.

The questionnaire also asked literacy leaders how often they discussed literacy assessment and how they managed these discussions. They were also given the opportunity to provide additional discursive comments on literacy assessment in the early years; these responses provided key insights into the literacy leaders’ assessment beliefs and practices.

Data collection phase 2 semistructured interviews

To identify participants for Phase 2 of the research, the questionnaire asked the literacy leaders whether they were willing to participate in a face-to-face interview, which would also involve interviewing three early years teachers in their schools. Fifty-eight respondents expressed interest in participating in Phase 2. A second question, used to select the Phase 2 schools, asked literacy leaders to identify the extent to which their early years literacy assessment practices had changed since the 2012 policy change that gave schools greater autonomy over literacy assessment. Respondents described their assessment practices as having changed significantly, moderately, or not at all. The aim was first to identify schools in each of these three categories and then to reduce the pool to a manageable number of schools for the research.

The purposive sampling used in this research could be described as heterogeneous or maximum variation sampling, because Phase 2 participants were selected for the diversity they could offer to the research. Schools were selected on the basis of both their questionnaire responses and their diversity in terms of students’ socioeconomic background, school size, and school location. Eight diverse schools were selected from the 58 Phase 1 schools that had indicated an interest in participating in Phase 2. Among these eight schools:
• four literacy leaders identified their schools as having made significant changes to their literacy assessment since the 2012 devolution;
• three literacy leaders identified their schools as having made moderate adjustments to their literacy assessment since the 2012 devolution; and
• one literacy leader identified that the literacy assessment practices of their school had not changed since the 2012 devolution.

In total, seven literacy leaders and 23 early years teachers were interviewed for the Phase 2 data collection.

I designed the Phase 2 interview questions to build on the results of the Phase 1 questionnaire and to address both research questions. The questions were also framed by Bernstein’s theoretical framework and by the literature that informed the study. A copy of the interview protocol can be found in the appendix.

Bernstein (1971) described three interrelated message systems of schooling: (a) curriculum, that is, what is to be taught; (b) pedagogy, that is, how it is taught; and (c) evaluation, that is, how the learning is assessed. My questions addressed each of these areas: I asked about the aspects of literacy participants thought were important to teach and assess and how they used the data gathered from their literacy assessments. I also asked questions to ascertain who and what influenced the early years teachers’ assessment beliefs and practices.

The literature described how a range of neoliberal policies, including high-stakes testing, a curriculum based on standardised expected achievement, and school data, have constrained teachers’ autonomy. I therefore asked questions exploring teachers’ interrogation, innovation, resistance, or acceptance of these neoliberal policies, to determine how the early years teachers reproduced these policies in their classroom literacy assessment practices.

Additionally, as my research explored the CEM policy change affording early years teachers greater literacy assessment autonomy, I asked how this policy change had affected the participants’ literacy assessment beliefs and practices.

The use of Bernstein’s pedagogic device as a theorising tool

Following the initial coding of the data to determine themes, Bernstein’s pedagogic device (1990, 1996, 2000) was used as a theoretical lens to examine the data further. Bernstein’s framework provided a mechanism for exploring the connections, complexities, and tensions in the field of early years literacy assessment. Application of this theoretical lens assisted with the identification and analysis of teachers’ literacy assessment beliefs and practices. Additionally, it provided a language for discussing how these beliefs and practices were shaped and influenced by different agents. In earlier research, Chen and Derewianka (2009) likewise applied a Bernsteinian lens to a literacy policy shift, arguing that it provides a mechanism for exploring the “forces at work in this period of change” (p. 235).

Bernstein’s pedagogic device is a symbolic framework that illustrates the relay of knowledge from the Official Recontextualising Field (ORF) to the Pedagogic Recontextualising Field (PRF). The ORF is dominated by the state and education systems, which have official power to make decisions about pedagogy, curriculum, and assessment; this knowledge is then made accessible to educators operating in the PRF through policies and programs that are relayed to the school and classroom level. Bernstein posited that many forces in the ORF jostle for control over what happens in the PRF, stating, “Today the state is attempting to weaken the PRF through its ORF, and thus attempting to reduce relative autonomy over the construction of pedagogic discourse and over its social contexts” (Bernstein, 2000, p. 33). Therefore, whoever has the greatest control in the ORF has considerable power and influence over what happens in schools and classrooms.

Results and discussion of phase 1

A key finding from Phase 1 was that 75 of the 76 schools had changed their literacy assessment practices as a result of the devolution; only one school maintained the same literacy assessment tools and practices as in the compulsory and mandated assessment period (1998–2012). Although schools were given the opportunity to be innovative, they did not seize it; instead, they opted to maintain many of the previously mandated tools, albeit in more flexible ways, such as choosing which tools from The Observation Survey to use and which children to administer them to. The strong influence of the system was still apparent. After more than 12 years of following system mandates, schools may have found it difficult to fully embrace assessment autonomy. Bernstein (2000), discussing autonomy and change, contrasted being in the “prison of the past” with a boundary understood as a “tension point which condenses the past yet opens the possibility of futures” (p. 206). It appears that schools were at this boundary point: they held onto many literacy assessment practices of the past, yet the Phase 1 participants also reported adding a range of new literacy assessment tools, demonstrating a freeing from the past and an embrace of future possibilities.

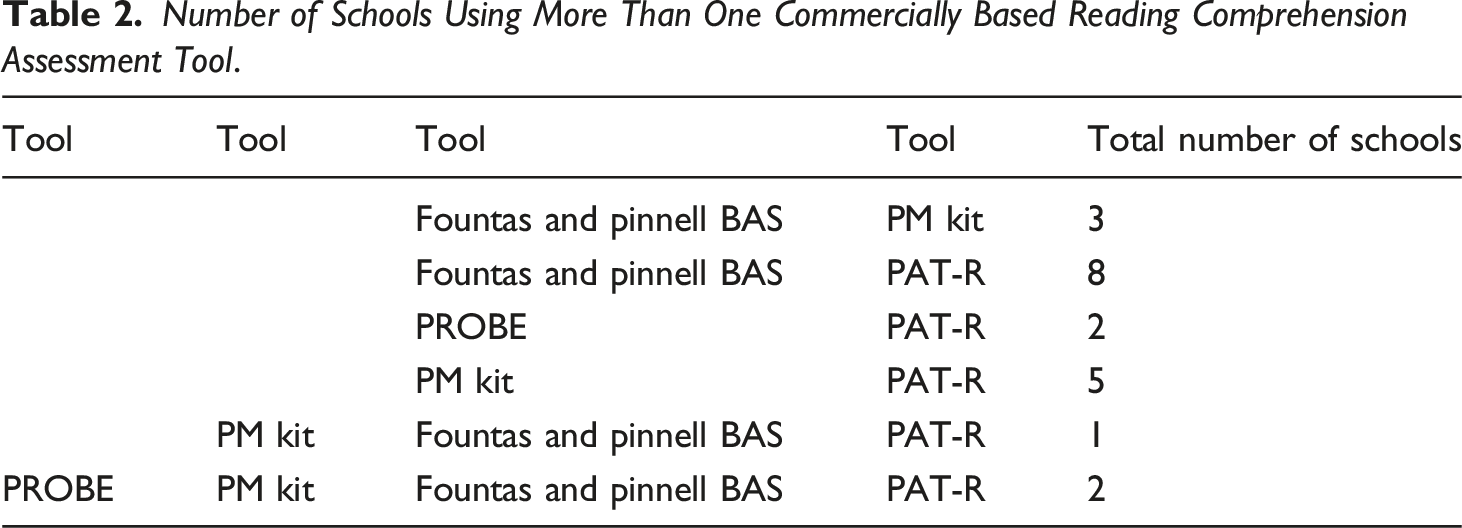

Number of Schools Using More Than One Commercially Based Reading Comprehension Assessment Tool.

Researchers have identified this overreliance on commercially produced assessment packages as an area of concern. Paris et al. (2001) argued that effective literacy assessment involves making judicious choices about which tools are used, for which purposes and with which students. In their research, they found that teachers frequently added too many new assessments to existing ones and identified this as a burden for both teachers and students (p. 14). This was the case for many of the schools in Phase 1 of my research, which reported a heavy reliance on commercially produced literacy assessment tools. The implications for teacher workload, particularly in schools using multiple formal tools to assess reading and comprehension, need to be considered. One literacy leader from a school using multiple commercial literacy assessment tools echoed this dilemma in a reflective comment on the questionnaire: “Are we collecting too much ‘broad’ data that is difficult to analyse? Should our focus be on collecting minimal but concise data that will assist in setting a learning and teaching plan?” (Literacy Leader, School 17).

Although commercially produced, standardised tools are popular with schools, as shown in the Phase 1 data, they have drawn criticism from some theorists. Jones (2003), building on the work of Shepard et al. (1998), explicitly denounced the use of formal standardised assessments with children in the early years of school, instead encouraging the use of teacher judgement and a more informal approach to assessment for this age group. David Berliner, an eminent educational psychologist, took this further in an online article for the Washington Post (see Strauss, 2011), criticising the use of formal assessments with young children: Often [the assessment] provides unreliable scores and therefore invalid inferences about the abilities of children are made too often. Potentially more valid information, at least as reliable as the tests themselves, and unlikely to elicit anxiety on the part of teachers or students can be obtained from professional educators much quicker and for drastically less money. (para. 23)

While many researchers have criticised commercially based teaching programs (e.g., Allington, 2005; Duncan-Owens, 2010; Lingard et al., 2017), similar attention needs to be paid to teachers’ use of commercially based assessment tools. The focus on quantifiable data as evidence of learning has given rise to commercially produced literacy assessment packages that generate this type of numerical data. The commercially produced tools identified by the literacy leaders provide numerical data to measure growth over time. Students completed many of these comprehension assessments through an online portal, and teachers were not required to mark them because marking was done by the computer program. The increased use of computer-based assessment tools has been directly attributed to the datafication of education (Lingard et al., 2017), and Mayer-Schönberger and Cukier (2013) asserted that this trend will continue to grow in an era that highly values quantifiable data. These programs often include software that generates quantitative data in the form of graphs and tables; such at-a-glance numerical data often appeal to schools, especially when presenting reports to the community.

The Progressive Achievement Tests in Reading (PAT-R), an assessment of reading comprehension and word knowledge, was one of the main commercially produced tools used to assess reading comprehension, employed by just under half of the respondents. Masters (cited in Earp, 2018) reported that more than 7,000 of 9,444 schools across Australia were using PAT-R. The use of PAT-R has drawn criticism because of the alliance between its publisher, the Australian Council for Educational Research (ACER), and the Australian Curriculum, Assessment and Reporting Authority (ACARA), the creator of the National Assessment Program – Literacy and Numeracy (NAPLAN). Spina (2017) noted, “ACER is an organisation with substantial involvement in the production, delivery and analysis of NAPLAN through contractual arrangements with ACARA” (p. 200). Spina further stated that many government policies and initiatives mandated the use of commercially produced literacy assessment programs, including PAT-R; one example is the National School Improvement Project, a cross-sector project commencing in 2012 that focused on improving literacy outcomes in schools where NAPLAN data were identified as low (ACARA, n.d.). Spina described this as “deeply enmeshed intertextuality of edu-business, government and departmental institutional texts that form ruling relations” (p. 200).

Teachers’ adoption of commercially produced reading comprehension assessments, one of which has received government endorsement, illustrates how government works with publishers to strongly influence teachers’ practices, in this case the reproduction of reading assessments in schools. Au (2008) raised a similar concern in the U.S. context, describing how official education policy creates increased opportunities for publishers who create and market testing materials to teachers. Bernstein (1996) discussed this type of collusion, which can occur between those responsible for official policy and those who recontextualise materials, in this case “evidence-based” literacy assessment tools for teachers. Bernstein proffered that this alliance creates an autonomy over pedagogic discourse. In the current climate, Bernstein’s ideas are realised through the prevalence of neoliberal discourse around the importance of quantifiable data, which influences the types of literacy assessment that schools adopt. Ultimately, teachers are influenced both by the official messages from government and by the less official messages from publishers. Ball (2012) identified how strongly the private sector is influencing policy: In effect, to different extents in different countries, the private sector now occupies a range of roles and relationships within the state and educational state in particular, as sponsors and benefactors, as well as working as contractors, consultants, advisers, researchers, service providers and so on and both sponsoring innovations (by philanthropic actions) and selling policy solutions and services to the state, sometimes in related ways. (p. 112)

Ball (2012) highlighted the “murky” relationship between government and those in the private sector who gain financially from their involvement in policy. The government engages in neoliberal discourse about failing schools, and publishers produce materials that enable schools to provide evidence of learning. As a result, teachers may regard the government-endorsed PAT-R assessment as more valid than assessments without government endorsement.

One literacy leader discussed the limitations of commercially based assessment tools, stating in a discursive comment, “I would love that we invest in teacher knowledge about assessment and what it indicates instead of reaching for programs for assessment” (School 33). Hardy (2018) similarly noted that even though teachers may interrogate commercially produced assessment processes, they still engage with them, and cautioned, “They potentially narrow teachers’ attention to more standardized measures of students’ learning” (p. 1). Moss (2017) stated that in the current climate, a focus on assessment also results in assessment driving curriculum: “Taken to its logical extreme, instead of building the curriculum and then deciding how it can be best assessed, the assessment tools themselves become the curriculum” (p. 62). The prevalence of published literacy assessment tools marked a significant difference from the mandated and prescriptive assessment period (1998–2012).

The Phase 1 questionnaire, completed by 76 Melbourne Catholic school literacy leaders, provided a broad range of information on early years literacy assessment practices through its open-ended and closed questions. It showed that after the system devolved decision-making about assessment to schools, the schools continued to use the assessment tools mandated before 2012 but changed which aspects of them they used or when they used them. The responses also made it evident that all schools engaged in more literacy assessment than during the mandated period before 2012, including the use of a range of commercially produced literacy assessment tools.

The devolution of assessment to schools enabled greater autonomy, although it appeared that the same types of constrained literacy skills and understandings were being assessed as during the mandated and prescriptive assessment period, such as letter knowledge, word knowledge, and decoding. Seventy-five schools reported assessing children’s letter knowledge when they entered school, and 54 reported assessing students’ phonemic awareness in their first year of school. These skills also appeared to be assessed using multiple tools. Schools had additionally adopted several formal, evidence-based tools in the form of commercially produced literacy assessment packages; these varied from school to school, with schools reporting that they were using multiple formal tools to assess literacy skills. Just over a quarter of the schools reported adopting multiple commercial tools to assess comprehension, which had not been required during the mandated period.

The assessment autonomy afforded schools appeared to be highly influenced by current neoliberal discourse around quantifying literacy learning. The role of publishers in recontextualising this neoliberal discourse was evident with schools using formal, evidence-based literacy assessment tools produced by publishers as part of their assessment routine.

The Phase 1 data indicated that schools, when offered literacy assessment autonomy, did not engage in an assessment revolution; what occurred could instead be described as a shifting of the sands. Bernstein (2000), discussing the politics of recontextualisation, asserted, “The positions remain but the players change” (p. 68). The Phase 1 data indicated that the system still had an official role, but the new “players” could be identified as the publishers and the commercially produced literacy assessment tools that teachers used to assess literacy in the early years.

Results and discussion of phase 2

Phase 2 involved 30 semistructured interviews with 23 early years teachers and seven literacy leaders from eight purposively selected schools that had taken part in the first phase of data collection, to find out how these educators responded to the opportunities that came with devolved decision-making. This phase sought to examine the extent to which participants took the opportunity to interrogate, innovate on, resist, or accept both previously mandated and current literacy assessment priorities and practices. Phase 2 also sought to find out what this strategy of literacy assessment devolution and autonomy ultimately meant for the early years educators’ assessment beliefs and practices.

A systematic analysis of the data established four themes that can be described as “grounded” in the data (Namey et al., 2008). The four themes are (1) Engaging with Assessment, (2) Assessment Expertise, (3) Assessment Conceptions and (4) Sociocultural Politics. The first three themes related closely to the teachers’ literacy assessment beliefs and practices, and the fourth related to factors both within and outside the school that were influencing the literacy assessment practices of the early years teachers. In this paper I report on the first three themes.

In line with Butler-Kisber’s (2010) ideas about interpreting data, the following section provides a rich written discussion of key elements of the first three themes from the Phase 2 data analysis: (1) Engaging with Assessment, (2) Assessment Expertise and (3) Assessment Conceptions. I discuss each theme and illuminate it with participant quotations (using pseudonyms) to honour the voices of the participants. I also make links to relevant literature to support the findings and provide further validity.

Engaging with assessment: frustration

Teachers’ frustration was evident in terms of embracing autonomy while being constrained by working in an era characterised by high levels of accountability. A teacher who had been teaching for over 20 years and had experienced a great deal of professional autonomy early in her career stated, Because I’ve been in the education for over 20 years, and I’ve seen things come and go, I sometimes wish that we didn’t have to do quite as much assessment and we could concentrate on the teaching. So, I get a little frustrated. I know we need to be accountable and I think we are, but sometimes I think there is too much assessment and not enough teaching. (Kelly, Years 1/2, School 8)

In Australia, teachers had greater autonomy throughout the 1960s, 1970s and 1980s. Teachers, not an external organisation, created the curriculum and decided what was taught and how, and they were responsible for monitoring and reporting on children’s progress (Chen & Derewianka, 2009). As a neoliberal agenda arose, teachers’ autonomy decreased, and governments and education systems gained considerable influence over what was taught, how it was taught, and how it was assessed. Some teachers in this research were cynical about the current educational climate and its emphasis on assessment, particularly its focus on numerical data: I’ve got some children on [level] 66 for BURT which is amazing but I know that’s good but I then want to find out what does that correlate with? What does that mean? Where should they go? So, I think the formal assessment, I think we need to make sure that we’re using it properly and analysing it because it’s there for a purpose. It’s telling us something but I think at the moment, it’s just looking at the scores unfortunately. (Isabella, Foundation, School 7)

The emphasis on numerical data has been widely criticised. Netolicky et al. (2019), for example, asserted that this focus oversimplifies assessment: “We value spreadsheets, numbers, box-ticking, percentages, test scores and quantitative data over the complexities of the individual, of teaching, of learning, and of schools” (p. 3).

The early years teachers in Phase 2 described striking the right balance between assessment and teaching as important, and there was a general feeling of being overwhelmed by the data. The teachers recognised that, as professionals, having autonomy was important, and they felt they were best placed to make decisions about assessment, as this comment illustrates: “I think that we are the professionals; we know our cohort of kids better than anybody else, so why not allow us to make those decisions on what would best suit the needs of our kids and what I think works?” (Janine, Foundation, School 3).

The early years teachers indicated a relinquishing of responsibility and stated that they relied on the school’s literacy leader to tell them what to assess and how to assess it, as illustrated in the following excerpts: But for me, like as the classroom teacher, I just get told. The literacy leader will tell me and I’ll be like, “Okay. Well, thanks”. Now I know that’s what I have to do. (Lyly, Years 1/2, School 4) The English leaders might say, “Okay, we all need to upload our level of text”, and they might say, “Okay, well for our professional learning team meeting, can everyone please bring along the students’ text level”. (Isabella, Foundation, School 7)

The data indicated that devolving responsibility to schools had not decreased data requirements; rather, schools were setting their own mandates for literacy assessment, and many teachers said they felt frustrated by the pressure to administer and collect the school’s mandated data, particularly the more formal data. True autonomy appeared elusive; as Ball (2003) posited, there is only an appearance of freedom in a devolved environment (p. 217).

Teachers spoke about the higher levels of expertise that came with increased accountability and how this sometimes led to frustration as assessment became all-consuming. Janine, a Foundation teacher from School 3, felt the school-based mandated literacy assessment requirements were excessive, describing them as “death by assessment”. This comment is noteworthy and indicative of the views of the Phase 2 early years teachers who felt assessment was hindering literacy teaching rather than enhancing it.

The Phase 2 participants expressed a need for greater professional development support to ensure they could use the data effectively, as the following comment illustrates: I think we’re just becoming so much more accountable every year. I notice it myself from year to year. We’re just so much more accountable to the parents. They want more information. I think they’re entitled to that information. And I think it’s just putting more pressure on us too. So, I believe in it. I think that we need to have that pressure, but at the same time, we need the professional support. We need professional development to support us. And we need time. (Jen, Years 1/2, School 2)

Many of the Phase 2 early years teachers did not feel they had any real autonomy. With responsibility devolved from the system to the school, literacy leaders and leadership teams had set up school-based literacy assessment mandates that seemed to be motivated by the neoliberal accountability agenda of having quantifiable data to demonstrate success. The datafication of literacy is a term used to describe this process of using quantifiable data to demonstrate success; it is “a complex process where data has increased, and this affects the practices, values and subjectivities in a setting” (Bradbury, 2019, p. 8). Many of the formal, commercially produced literacy assessment tools that schools were using provided visible and quantifiable literacy data that could be monitored and tracked over time.

It appeared that teachers had a certain level of autonomy over their day-to-day informal literacy assessments, but school leadership teams had introduced their own mandated literacy assessments that teachers were required to follow. Many of the experienced teachers interviewed, such as Janine in School 3, Nat in School 7, Jen in School 2, and Kelly in School 8, were outspoken and questioned the demands that school-mandated assessment placed upon them, seeing these demands as undermining their professionalism and autonomy.

Teachers engaged in what could be identified as a range of both formal and informal assessment practices but felt most comfortable with collecting data based on their own teaching and observations.

Assessment Expertise: Teacher autonomy

Teachers interviewed in Phase 2 of this research engaged in a range of literacy assessment practices and used a range of tools. However, it was clear that they also believed teacher judgement was essential in literacy assessment. Teachers stated that they found teacher judgement obtained through less formal literacy assessment practices, such as observations recorded anecdotally, more beneficial than data from formal literacy assessment tools. One participant illustrated this, noting that data from formal assessment could result in a narrow view of students: I think the anecdotal stuff, just the day-to-day things that you pick up in the classroom is probably the most important, and of course, when you don’t know the children as well at the start of the year, getting all that information from testing can put them in a pigeon hole. But it’s the anecdotal stuff that is important. (Simone, Years 1/2, School 1)

Many theorists support teacher judgement as a valid assessment method. Allal (2013) described teacher judgement as being comparable to clinical judgement in the medical profession, stating that this type of judgement requires two types of knowledge: 1. Singular—this is the knowledge the assessor has on everything about the individual; and 2. General—the more formal professional knowledge that includes the norms and rules for the profession. (p. 22)

Allal’s (2013) depiction of these two types of knowledge came through strongly in the data. Teachers spoke passionately about needing to know the child but were also aware of the requirements of the profession in terms of assessment. As professionals, they could engage in a discourse around literacy assessment and had a metalanguage to describe their literacy assessment practices.

As part of using teacher judgement for assessment, all teachers discussed the importance of engaging in observational assessment. Goodman (1978) coined the term “kid watching” in relation to assessing students. She asserted that teachers, drawing on detailed knowledge of language development, observe children as a more authentic form of assessment. Teachers’ prioritising of observation as a valid assessment practice has been identified in other research (e.g., DeLuca & Hughes, 2014; Wragg, 2011). The teachers in my research commented: In the early years, it’s a lot of observation and writing your notes down because it’s happening there and then. (Sue, Year 1, School 3) It is about “noticing”. (Simone, Years 1/2, School 1)

Twombly (2014) called for teachers to spend time getting to know a child’s strengths and needs through interacting with and observing the child. She stated, “Information gathered from personal contact and close observation of students will help teachers to recognise strengths in children who do not test well or struggle with some skills” (p. 46). She posited that this way of gathering data enables teachers to build a more complete picture of the child. Many teachers highlighted how important observational assessments were and discussed recording these observations through anecdotal notes, as illustrated in the following excerpts:

When I’m doing guided reading, it just has their name, whatever their focus is, and then there’s a spot for a running record—a spot to write any words that they’ve found difficult, there’s a spot for comprehension, and there’s a spot to write the next step. (Emma, Years 1/2, School 5)

We have an anecdotal recording book for each student. So, we keep it there. And we have their individual files, where anything—anything that we record, it goes into their file. (Jen, Years 1/2, School 2)

All teachers identified that they used anecdotal notes to record the assessment information they gathered while interacting with students and found this form of assessment highly valuable. Seely Fullan et al. (2006) stated, “Teachers value more authentic assessment gathered through teaching interactions because they are less public, and less visible to parents and other stakeholders…because authentic assessments more closely identify the individual student’s strengths and weaknesses” (p. 325). Teachers identified with this point and described teaching opportunities as the best way to find out about a student’s strengths and needs.

It was evident from the Phase 2 data that the teachers had a great deal of expertise in assessing literacy in the early years of schooling; however, their literacy assessment practices were often constrained by external structures or controls. These took the form of official curriculum mandates from not only the government and Catholic systems but also those who engage in pedagogising assessment discourse for teachers through publishers’ assessment programs. School-based leadership decisions also constrained teachers’ literacy assessment autonomy.

Assessment conceptions: Beliefs and understandings

Researchers have found that teachers’ beliefs are not static and are influenced by myriad factors, including the situation a teacher is faced with, the context the teacher is working in, and prior experiences (Borg, 2006; Brown et al., 2015; Fives and Buehl, 2012). In terms of this research, teachers were faced with a situation whereby the Catholic system had provided schools with the opportunity for greater autonomy over decisions relating to literacy assessment tools and procedures, following a long period of mandated and prescriptive literacy assessment. This autonomy, however, occurred in a high-stakes context where teachers’ work and students’ results were constantly scrutinised. The school context, including personnel and demographics, also played a role in assessment beliefs and practices.

There can be conflict between what teachers claim to do, want to do, and actually do (Szőcs, 2017, p. 142). According to Mockler (2011), it is essential for teachers to be able to articulate their beliefs; in addition, teachers need opportunities “to systematically reflect on their practice, and to develop strategies for reflection and learning located within an understanding of who they are and why they do what they do” (p. 526).

Teachers described the importance of not just being a technician but having a clear understanding of why specific assessments were chosen: “I am a better teacher because it’s now not just, ‘Oh, this is what we’re going to do,’ but ‘This is why we’re going to do it’ and how it’s going to improve outcomes” (Kelly, Years 1/2, School 8).

Taggart and Wilson (2005) described the importance of teachers operating beyond the technical level and moving to contextual decision-making. When teachers engage in contextual decision-making, they demonstrate an understanding of making decisions based on knowledge, values, and students’ needs, as opposed to merely following mandates. Kelly, in the above excerpt, demonstrates making decisions beyond the technical level. Other teachers spoke about asking questions, a clear indication that they were prepared to question mandates:

We have to prioritise and we have to ask, “Why are we using it and what do we want to get out of it?” (Mel, Literacy Leader, School 6)

If you can’t give me a purpose for implementing something or if I can’t see the immediate impact of how this data is going to drive my future teaching then I suppose my question back to the person telling me that I have [to] do it is well, “Why? Where’s the research telling me that this is going to actually have an impact and help me improve the learning for this child?” (Nat, Year 1, School 7)

Although participants critiqued both their own assessment practices and current mandates, this critique appeared more prevalent among teachers who had been teaching for longer. It also seemed that this critique did not lead to resistance: teachers were prepared to question mandates verbally but did not go further in resisting them. Overall, the participants appeared very compliant even when they did not agree with mandates. I infer that in the current climate, where teachers’ work is under “surveillance” through a range of performativity measures, teachers felt compelled to comply with mandates and had little opportunity to resist them. It was also evident that teachers believed strongly that assessment was not just about a score: “If you just collect it and have a number, it’s useless” (Nick, Year 2, School 7).

Teachers reiterated the criticism of using numerical data and rejected the datafication of literacy. Bradbury (2019) described the effect of datafication: “What matters is what can be measured; and in early years if there is nothing that can be measured, practice must change so that scales, statements and norms can be applied” (p. 17). There was, however, a real sense from the teachers in this research that they were aware of the limitations of using numbers in measuring and reporting on literacy. Although they were mandated to produce some numerical data, this was in conjunction with the detailed knowledge they had of their students, which was obtained through a range of assessment processes based on both qualitative and quantitative data.

Barnes et al. (2015) noted that teachers’ beliefs differ according to the contexts in which they operate: teachers working in a high-stakes accountability context view assessment differently from those working in a low-stakes accountability context.

The Phase 2 teachers identified that scores were not enough in terms of using the data for informing their teaching and discussed the need for assessment to be purposeful:

If it’s going to give me immediate information that I can then use to drive my future teaching, then it’s a good piece of assessment. If I’m going to sit there and think, “What is this actually telling me?” Then, I mean, I can’t see the purpose behind it. (Nat, Year 1, School 7)

Part of assessment being purposeful was being able to use it to inform teaching, as discussed by Elly and Kelly:

I find the ongoing conferencing the most useful because I think when you’re sitting down and meeting with students at least once a fortnight for both reading and writing, you can get an idea of where they’re at, but also, they’re setting the goals with you and you’re having the conversation with them. (Elly, Years 1/2, School 1)

But as you go along and you spend more time in a classroom, you realise that you’ve got to know where those kids are to give them the best chance of moving forward. (Kelly, Years 1/2, School 8)

The teachers believed in the importance of using the data to inform the next steps in teaching and learning. This required having a good understanding of the developmental nature of learning, as illustrated in the following two excerpts:

I know exactly what the students are doing. I think I also have a pretty good understanding of the development of reading and the development of writing. (Janine, Foundation, School 3)

I always find it’s pinpointing exactly where that child’s needs are and knowing exactly what it is that is either inhibiting them from learning or what extension they need. (Jen, Years 1/2, School 2)

Griffin (2009) discussed the importance of teachers having not only content knowledge but also an understanding of the developmental progression of learning. He regarded this as more important than reporting against curriculum standards, as the focus is on the learner’s needs and what they are ready to learn next; in other words, on what they can do rather than what they cannot do. The teachers shared Griffin’s belief, discussing how important assessment was in determining what students were ready to learn next.

It was evident from the Phase 2 data that participants had much to say about their current literacy assessment practices. They held clearly articulated beliefs, although these beliefs were not always congruent with their practices. All participants acknowledged the importance of assessment for teaching and for knowing students’ needs.

A key finding from this research is that teachers felt confident sharing their beliefs when discussing literacy assessment. They shared a language for talking about assessment and articulated the importance not only of knowing what to assess but also of having clear beliefs about why they assessed the way they did.

Conclusion

Issues central to teachers’ work today, including professionalism, teacher agency, and empowerment, were identified through the research process. Importantly, the research identified how contemporary early years teachers are grappling with assessing literacy amidst a range of local, system, state, and national policies that appear to constrain teacher agency, and yet these teachers demonstrated a commitment to assessing literacy in authentic and meaningful ways to support students’ literacy learning.

This research demonstrates that teachers in the early years have clearly articulated beliefs in relation to literacy assessment. They value literacy assessment and engage in wide-ranging assessment practices, using a range of tools to support children’s learning, but prefer qualitative assessment based on observation and requiring teacher judgement. Additionally, all teachers in this study discussed their literacy assessment practices confidently. Their ideas about what constitutes good literacy assessment were grounded in current theory: good literacy assessment should impact both learning and teaching, be fair, and reflect contextual factors, including cultural and linguistic diversity. However, articulating these assessment values did not stop them from engaging in practices that were contrary to their beliefs. Although teachers did not always agree with measuring and reporting literacy numerically or with engaging young children in “test-like” assessments, they felt compelled by their school leadership to do so.

The influence of policy and publishers was evident in how teachers reproduced literacy assessment in the early years. Many teachers lamented having to use school-mandated, commercially produced literacy assessment tools, but in practice they complied with their use and did not take an active role in resisting them. The overt influence of edu-business on schools’ assessment practices was apparent across all schools involved in the research, and teachers’ capacity to resist these tools appeared limited.

Teachers in this research showed they were knowledgeable individuals whose assessment knowledge and beliefs were based on both experience and theory, but their literacy assessment practices were being constrained by a range of policy factors outside of their control. Theorists such as Comber and Nixon (2009) and Dinham (2015) have argued that teachers today are excluded from policy debate and formation. Further, Ellison et al. (2018) stated, “Practicing teachers may not hold a privileged epistemic position in policy debate, but we believe that their experience, knowledge, and skills have much to contribute to educational change” (p. 168).