Abstract

Based on 40 interviews with fact-checkers in editorial and reporting roles and a content analysis of 3154 debunking articles published between January and December 2022 across eight countries and over 20 organizations, this article systematically compares and analyzes news factors in fact-checking as a journalistic subgenre. Which specific pieces of inaccurate information are considered worthy of verification? We first inductively identify the key factors emphasized by professionals and then measure their frequency in verification articles. Three factors serve as crucial prerequisites for verification decisions: checkability (Is the claim inherently verifiable?), verifiability (Are sufficient data and resources available for verification?), and virality (Is the claim widespread enough to warrant verification without amplifying the falsehood?). Once these thresholds are met, we evaluate additional news factors in fact-checking products, including reach, prominence of targets and sources, timeliness, and relevance across organization types (independent or affiliated with legacy media), misinformation topics, geographical scope (national, international, transnational), and fact-checking targets (online rumors or statements from public figures). Moderate yet significant associations were identified among the variables. Among the results, independent organizations and legacy media desks emphasize source prominence more than global news agencies, conducting slightly more verifications targeting public figures instead of online rumors. User requests, considered a form of participatory transparency, are particularly valued by independent units, while legacy media and global news agencies place a higher priority on timeliness.

Introduction

While research on newsworthiness is well-established in journalism studies (Galtung and Ruge, 1965; Harcup & O’Neill, 2001, 2017; Schulz, 1976; Staab, 1990), the specific selection processes in fact-checking remain underexplored. Although fact-checking shares similarities with traditional journalism and draws its epistemology from journalistic practices (Suomalainen et al., 2025), it is considered an “adjacent” field (Lauer and Graves, 2024) operating under distinct principles. Most notably, fact-checkers do not set the news agenda. Their primary objective is to verify statements by public figures, online rumors, and other publicly disseminated materials (Graves, 2022). Fact-checking has emerged in response to challenges posed by networked and hybrid media ecosystems (Micallef et al., 2022). In his pioneering work on the emergence and development of the fact-checking movement in the U.S., Graves (2016) examines how professionals select “facts” to verify, drawing on interviews and newsroom observations. He highlights the influence of news values such as timeliness, relevance, and balance in this process. Further studies, also based on interviews, have expanded research to examine how fact-checkers in 19 countries prioritize claims for verification, highlighting the crucial role of perceived harm and its magnitude (Sehat et al., 2024).

This paper builds on previous theoretical and empirical studies of fact-checking issue prioritization (Mahl et al., 2024; Micallef et al., 2022; Sehat et al., 2024; Suomalainen et al., 2025) by explicitly linking fact-checkers’ selection criteria to established news value frameworks in journalism (Caple and Bednarek, 2016; Harcup & O’Neill, 2017)—a connection underexplored in the literature. Drawing on 40 interviews across eight countries and triangulating these qualitative insights with a large-scale quantitative content analysis of over 3000 fact-checking articles, the study provides robust empirical evidence of selection practices. It also examines how these factors vary across (a) organization types (independent, legacy media-affiliated, or global news agencies), (b) verified topics, (c) scope of verification (national, international, transnational), and (d) fact-checking targets (statements by public figures or online rumours). By capturing this variation, the study sheds light on nuanced decision-making often overlooked in previous field mappings. Importantly, all organizations in the content analysis were also interviewed, and the study focuses exclusively on the selection process—rather than broader epistemological premises or verification phases—offering a comprehensive and systematic examination that addresses key gaps in qualitative and quantitative fact-checking research.

To broaden the analysis beyond the U.S. case study, this research examines four European countries with distinct media system models—the UK, Germany, Spain, and Portugal—alongside four Latin American nations with varying levels of disinformation resilience (Humprecht et al., 2020)—Argentina, Brazil, Chile, and Venezuela—expanding the scope beyond the Western context. We analyze 40 interviews with fact-checkers in reporting or editorial roles to examine how they explain their selection process for verifying misinformation. Through an inductive approach, we identify three preconditioning factors—checkability, verifiability, and virality—alongside five additional criteria: target and source prominence, user requests, timeliness, and relevance. Based on this framework, we conduct a quantitative content analysis of 3154 verification articles to measure the frequency of the most prevalent factors across organizational types, topics, verification targets, and geographical scope. Before outlining the methodology, we provide a brief overview of the emergence of the fact-checking movement, its unique characteristics, existing literature on journalistic newsworthiness, and the under-discussed criteria for fact-checking verification.

Current developments in fact-checking journalism

Fact-checking is a structured global initiative supporting external verification by traditional journalists and civil society actors, including NGOs and academics. Anchored in evidence, it involves evaluating the correctness, accuracy, and authenticity of political claims, online rumours, media reports, and other third-party content (for an epistemological discussion, see Mahl et al., 2024; Suomalainen et al., 2025). It differs from conventional journalistic fact-checking, in which newsrooms verify their own material before publication (Graves, 2022). The first such organizations emerged in the U.S. in the early 2000s, responding to a passive journalistic neutrality that led journalists to report claims without verifying their accuracy. Fact-checking was seen as a “reform movement” or “democracy-building tool” (Amazeen, 2020; Graves, 2016). The initiative has since received support from journalism, media, philanthropic organizations, tech firms, and public administration (Graves, 2022). From 2015 onward, the field became institutionalized with the International Fact-Checking Network (IFCN), followed by the European Fact-Checking Standards Network (EFCSN) in 2020 (Lauer and Graves, 2024). Within these, fact-checkers organize the annual “Global Fact” conference, foster regional partnerships, and consolidate memberships based on a code of principles ensuring non-partisanship, fairness, transparency of sources, funding, methods, and an honest correction policy (IFCN, 2024).

Since 2016, there has been a significant increase in organizations worldwide, driven by post-truth politics and concerns about the erosion of fact-based discourse. This was evident during events such as the elections of Donald Trump in the U.S., Rodrigo Duterte in the Philippines, the Brexit referendum, and the peace agreement referendum in Colombia. Fact-checkers have collaborated with tech companies to combat misinformation. In 2016, Google enhanced fact-check visibility in search results using the Claim Review system—a tagging tool allowing fact-checkers to label articles for better visibility on search engines and social media. That year, Meta launched its third-party fact-checking program, funding IFCN member organizations committed to a code of good practice to verify information on Facebook, Instagram, and Threads. The project expanded significantly, with Meta’s support now covering about 120 countries (Lauer and Graves, 2024), shifting the field toward verifying online rumors rather than claims by public figures (Bélair-Gagnon et al., 2023; Cazzamatta, 2025b, 2025e; Graves et al., 2023; Vinhas and Bastos, 2023). However, in early 2025, Zuckerberg announced plans to dismantle the program in the U.S. and replace it with community notes similar to those on X. The effects of this remain uncertain. These contexts highlight extrinsic factors influencing verification practices. However, we focus mainly on intrinsic information factors that make content attractive for verification, as discussed below.

News factors in journalism and fact-checking

News factors form a well-established research tradition in journalism studies, alongside bias and gatekeeping theories, analyzing journalistic decision-making. The concept links news selection to event characteristics that influence an event’s news value and shape journalists’ assessments of newsworthiness (Staab, 1990). Early discussions trace back to Walter Lippmann (1998[1922]). Key milestones include Galtung and Ruge’s (1965) catalogue of 12 factors. Rosengren (1970) criticized this earlier approach for focusing on media coverage rather than events, urging comparisons between intra- and extra-media data. Schulz (1976) advanced the field by empirically operationalizing factors’ intensity, affecting news prominence and placement. Staab (1990) classified this ‘causal model’ as apolitical, excluding intentionality in journalistic decisions. Scholars note that journalists can strategically use news factors to construct newsworthiness. Selection is shaped not only by event attributes but also by newsroom agendas, ideological perspectives, and political and media systems (Golan, 2010; Harcup & O’Neill, 2017; Staab, 1990). The most recent catalogue, adapted for the digital era, was proposed by Harcup and O’Neill (2017), adding shareability, audiovisual elements, and entertainment alongside traditional criteria.

Nonetheless, the question of external fact-checking presents a distinct line of inquiry and, in contrast to the extensive research developments outlined above, remains relatively underexplored, with few theoretical frameworks or empirical analyses available to date (Mahl et al., 2024; Micallef et al., 2022; Sehat et al., 2024; Suomalainen et al., 2025). Here, the focus is not on how an event becomes news, but rather on how a specific piece of inaccurate information, amid a vast array of falsehoods, is deemed worthy of verification. Mahl and colleagues (2024), for instance, examine issue selection alongside organizational structures and verification practices, framing it as a key component of what they term ‘fact-checking cultures’. Graves (2016) shows that fact-checkers orient themselves towards journalism’s well-known news perceptions. Fact-checkers primarily concentrate on assertions related to significant policy issues (relevance), false accusations between political candidates or claims made by political figures (prominence), and statements that have sparked debate, garnered media coverage, or are trending topics in the news (timeliness). Sehat and colleagues (2024) identify several external factors influencing claim prioritization, including the urgency of a statement, resource availability, and the interests of various stakeholders. A central aspect of their analysis is the assessment of potential harm caused by misinformation, whether physical, economic, or psychological.

Fact-checkers refine this news sense by intentionally considering the perspectives and concerns of their readers (user request). Further studies with a more global scope also highlight that claim selection often involves close monitoring of social media and the consideration of reader suggestions (Micallef et al., 2022). Several organizations accept readers’ suggestions for verification and call attention to cases where a verification was published due to popular request (Graves, 2016: pp. 65–73). Originality is not a priority in fact-checking, unlike traditional journalism, where reporters pursue new angles and aim to avoid being scooped by competing outlets. In fact-checking, an increased number of correction efforts is viewed as beneficial, with similar verifications appearing across different sites (ibid.). Organizations, particularly independent ones, offer multiple hyperlinks to promote the verifications of their peers (Cazzamatta, 2025a).

Another critical factor influencing fact-checking decisions is balance. Although fact-checkers evaluate claim accuracy beyond the typical ‘he said/she said’ style, challenging traditional neutrality and (orthodox) objectivity (Cazzamatta, 2025d), they still aim to address the entire political spectrum. This commitment is especially strong in the U.S. (Graves, 2016), given its polarized landscape. This raises concerns about false balance, as candidates vary in their relationship with fact-based discourse. Earlier critiques by Uscinski and Butler (2013) highlighted this issue when discussing selection effects: Do Republicans lie more, or are they just targeted more often? However, this took place before post-truth politics, when fact-checking focused mainly on political claims without policing social media (Graves et al., 2023; Suomalainen et al., 2025; Vinhas and Bastos, 2023). After 2016, studies linked the rise of disinformation to far-right parties (Hameleers, 2020).

However, before considering these factors, an important threshold regarding the ‘checkability’ of information must be addressed (Suomalainen et al., 2025). There is consensus that verifiable claims consist of “facts, not opinions” (Graves, 2016: p. 63), though distinguishing them can be challenging. In an earlier critique, Uscinski and Butler (2013) argued that fact-checkers often verify statements that cannot be verified—not because they are opinions, but because they involve future predictions (which cannot be verified as the future is unknown) or causality, requiring social scientific methods media practitioners typically lack. However, these observations refer to early fact-checking stages and have been rebutted by leading scholars (Amazeen, 2015). Notably, Uscinski and Butler studied organizations that were not yet fully institutionalized and operated irregularly, and they based much of their criticism on anecdotal evidence. As the interviews show, fact-checkers are aware of checkability’s limitations.

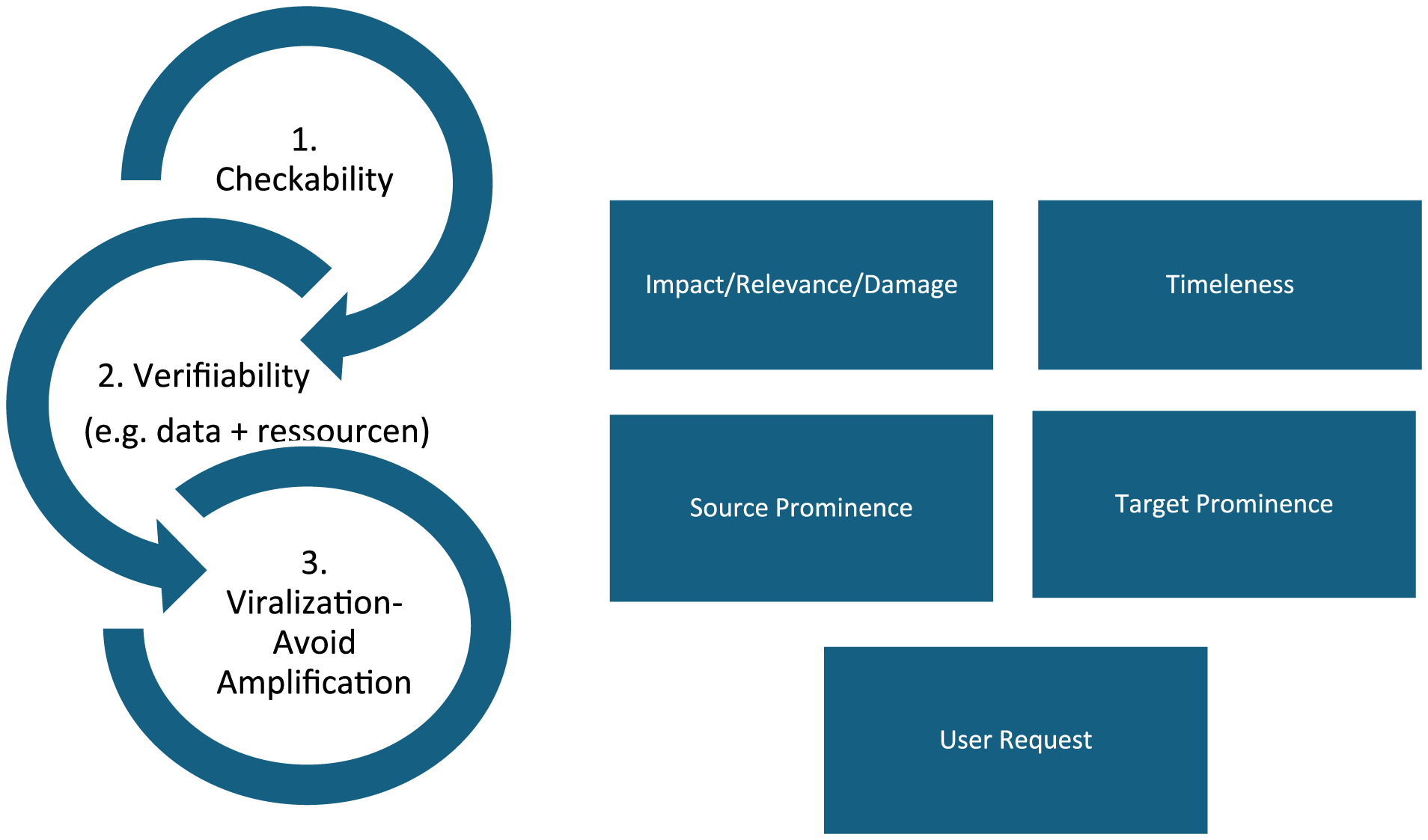

Nonetheless, even checkable claims can be difficult to verify if public and reliable data or independent experts are unavailable, which significantly impacts organizations operating in environments with restricted press freedom and political accountability (Cazzamatta, 2025c; Lauer, 2024). We differentiate between these two factors: ‘checkability’ refers to whether a claim is inherently verifiable, while ‘verifiability’ pertains to practical checkability—whether rumors and statements can be substantiated with available evidence and resources (see methods and Figure 1 below). Some fact-checking guides also provide recommendations for verifying information without amplifying falsehoods (Cook and Lewandowsky, 2012; Lewandowsky et al., 2020; Mantas and Benkelman, 2020). In the case of online falsehoods, numerous considerations arise regarding a rumor’s virality and whether intervention is warranted.

Research questions

Because the field still lacks concrete operationalizations for measuring fact-checking selection criteria, we refrain from developing hypotheses and instead maintain open research questions. We begin by inquiring about the criteria used to select information for verification, asking: RQ1: How do fact-checkers discursively explain their selection process for verifying misinformation?

After identifying the most frequently discussed factors by fact-checkers operating in eight countries, we aim to measure their prevalence in verification articles by asking: RQ2: Which factors were mostly identified within sampled verification articles?

Finally, we seek to observe variations in the verification factors by asking: RQ3: What are the most recurrent news factors identified across (a) types of organizations, (b) verified topic, (c) scope of verification and (d) target of fact-checking?

Methods

Sampling of countries and organizations

The choice of countries was constrained by language, contextual expertise, and funding. We initially selected four European nations based on media systems theory (Hallin and Mancini, 2004): the UK representing the Liberal model, Germany representing the Democratic Corporatist model, and Portugal and Spain representing the Polarized Pluralist model. Given the challenges of applying this typology outside the Western world, our selection of Latin American countries—Argentina, Brazil, Chile, and Venezuela—was informed by alternative indicators, including resilience to disinformation (Humprecht et al., 2020). This selection included nations with varying levels of deliberative democracy, journalistic professionalism, political polarization, and social media use for news. In each country, where possible, we selected three types of organizations with broader reach—independent fact-checkers, legacy media units, and global news agencies—to capture variability in verification criteria for the content analysis (for details, see Supplemental Material). For the interviews, we engaged more fact-checking organizations than those in the content analysis.

Qualitative interviews

Given the limited literature on verification factors, mostly focused on the U.S., we analyzed 40 interviews with fact-checkers, examining their explanations of the criteria used to select claims or rumors for verification. We initially contacted the founder or editor-in-chief of the 23 organizations included in the content analysis (see Supplemental Material Table 1) via email, explaining our research aim. Using snowball sampling, these contacts recommended additional interviewees. The interview guidelines are part of a broader project investigating micro, meso, and macro-level factors influencing fact-checkers’ work. For this article, we specifically analyzed responses related to selection criteria. Interviews were conducted online between August 2024 and February 2025 in the interviewees’ preferred language—English, German, Spanish, or Portuguese. We aimed to speak with founders in director or editorial roles, as well as fact-checkers involved in daily verification. In small organizations, these roles are often combined. All interviews were recorded with prior authorization and anonymized, as even those who consented to name disclosure shared substantial off-the-record information. We did not upload transcripts to the Open Science Framework (OSF) or include organizational details, as this would not comply with the European General Data Protection Regulation (GDPR). All conversations were transcribed and inductively coded by the project PI using NVivo. See the Supplemental Material for example sentences for each identified category from the qualitative analysis—checkability, verifiability, viralization/avoid amplification, impact, target and source prominence, timeliness, and user request (Table 1). Based on the criteria mentioned by interviewees (see Supplemental Material Table 3), a codebook for the quantitative content analysis was developed.

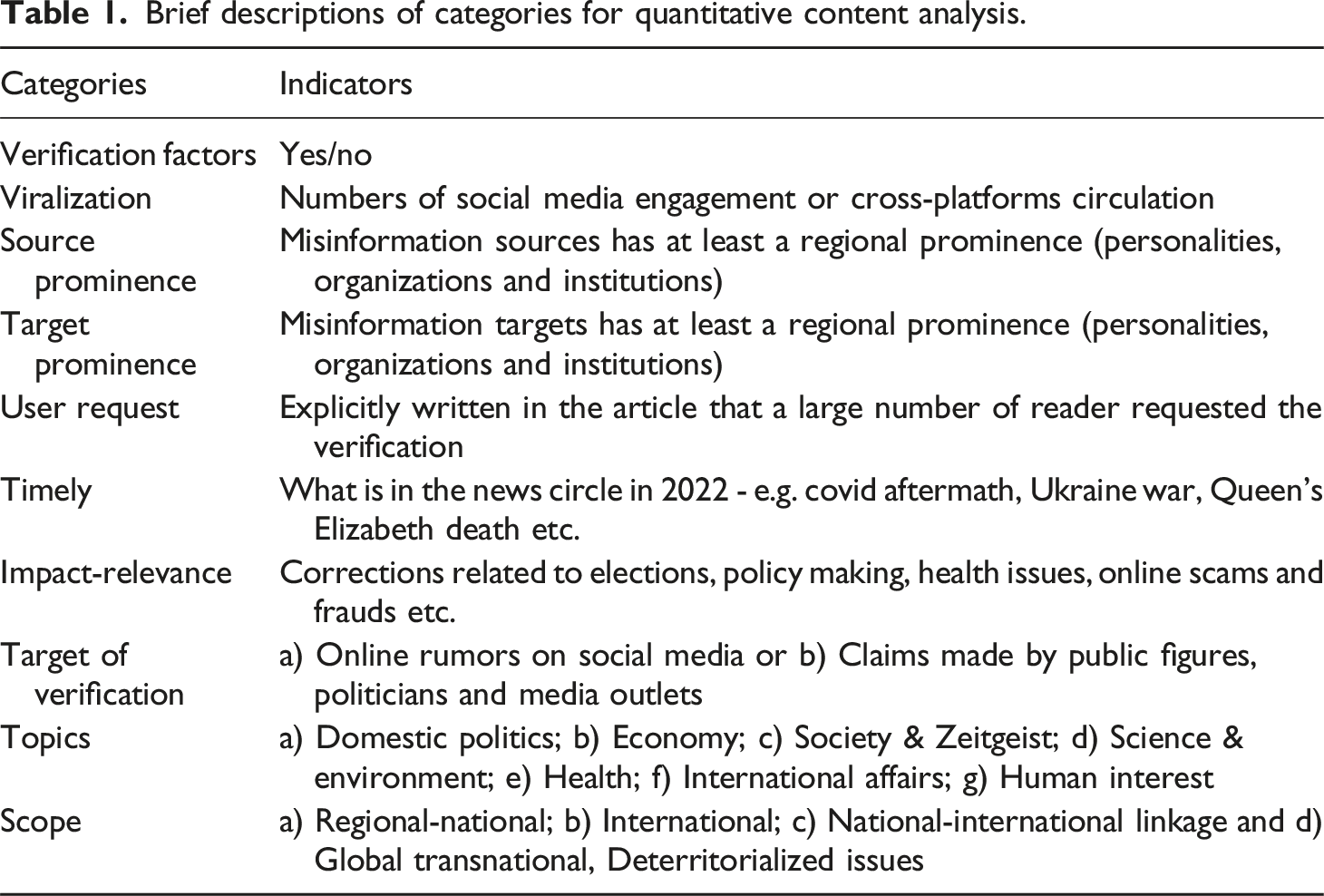

Quantitative content analysis

After identifying the main verification factors mentioned in interviews, we searched for corresponding indicators in verification articles published by 23 fact-checking organizations between January and December 2022. These articles had already passed the initial thresholds of checkability and verifiability, referred to as pre-conditioning factors (see below). For category definitions, see the codebook in the Supplemental Material. The codebook categories (Table 1) were developed deductively and inductively, following a pre-test with 400 articles from the 23 organizations. We collected all published links from these organizations using the Feeder extension, totaling about 13,100 links. To ensure representativeness, we drew a stratified 25% sample within each country and organization, selecting every fourth published link. Stratified sampling improves representativeness by accounting for uneven publication patterns across organizations, which vary widely in output. This approach avoids bias and ensures proportional inclusion (Riffe et al., 2014), making it more suitable than traditional methods like the “representative week” in online contexts. Articles without verdicts, such as investigative or explanatory reports, were excluded. After removing duplicates, we finalized 3,154 articles for manual coding. Eight research assistants with relevant language skills and contextual knowledge coded the articles over 6 months, following 40 hours of training to achieve acceptable reliability. Krippendorff’s alpha was calculated within language groups to ensure that any misunderstandings stemmed from flaws in category definitions, not language proficiency. In addition to the verification factors (dichotomously coded as presence/absence; Krippendorff’s α: 0.88–0.97), we also coded verified topics (α: 0.93–0.97), scope of verifications (α: 0.77–0.95), and fact-checking targets (α: 0.80–1.0), as described below:

Table 1. Brief descriptions of categories for quantitative content analysis.
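The sampling and reliability steps described in the quantitative content analysis can be illustrated with a minimal Python sketch. It is not the authors' tooling: the function names `systematic_sample` and `krippendorff_alpha_nominal` are hypothetical, the choice to start at the fourth link of each stratum is an assumption (the text only states that every fourth link was selected), and the alpha computation assumes nominal codes and uses the standard coincidence-matrix formulation.

```python
from collections import Counter
from itertools import permutations


def systematic_sample(links_by_stratum, step=4):
    """Keep every `step`-th link within each stratum (e.g. country/organization).

    Assumption: selection starts at the `step`-th link; the source does not
    state the starting offset.
    """
    return {
        stratum: links[step - 1::step]
        for stratum, links in links_by_stratum.items()
    }


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal codes.

    `units` is a list of lists; each inner list holds the codes that the
    coders assigned to one article (e.g. 1 = factor present, 0 = absent).
    """
    coincidences = Counter()
    for values in units:
        m = len(values)
        if m < 2:
            continue  # an article coded by a single coder cannot be paired
        # Every ordered pair of codes from different coders counts 1/(m - 1).
        for a, b in permutations(values, 2):
            coincidences[(a, b)] += 1 / (m - 1)

    totals = Counter()
    for (a, _b), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())

    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if expected == 0:
        return float("nan")  # only one category was ever used; alpha is undefined
    return 1 - observed / expected
```

Under these assumptions, a 25% systematic sample of roughly 13,100 links yields about 3,275 candidates before exclusions and duplicate removal, which is in line with the 3,154 articles retained for coding. Calling `krippendorff_alpha_nominal` separately on the articles coded within each language group mirrors the within-group reliability check described above.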

Findings

Based on interviews with fact-checkers from organizations across eight countries, three key factors were identified as prerequisites for verification before considering traditional journalistic news factors, as shown in Figure 1 (RQ1). The illustration is divided into two dimensions. On the left, factors considered prerequisites for verification—checkability, verifiability, and viralization—are presented, while traditional journalistic factors—timeliness, prominence, impact, potential to cause harm (relevance), and user request—are shown on the right.

Figure 1. News factors influencing verification practices identified in interviews.

Pre-conditioning verification factors (RQ1)

Given the distinct epistemology of fact-checking, the primary pre-conditioning factor is “checkability”—the extent to which a claim or rumor is fact-checkable or inherently verifiable. As one interviewee explained, “We would never target opinions. We would never target things about the future, like when, for example, a politician makes a promise.” In this sense, fact-checking requires a factual claim; without it, verification is impossible. Since fact-checking does not assess beliefs, fact-checkers often struggle to verify fact-less statements by certain politicians. As another interviewee noted, “[I]n most TV shows, politicians, especially populist politicians, make far more claims than we can possibly fact-check.”

Although some fact-checkers initially assert that their selection process follows the same criteria as traditional news production—such as “journalistic relevance” and “public interest”—they emphasize the differences when discussing the first dimension of Figure 1, based on pre-conditioning factors. As one interviewee stated, “we embrace the complexity, (…) the difficulty of simplifying something. In conventional journalism, you can generally find a way to publish something quickly by phrasing it right; by taking out bits you can’t stand up.” Another fact-checker observed that in traditional journalism, headlines like “a politician says vaccines don’t work” or “vaccines don’t work according to this politician” may be published, driven by the politician’s prominence, even though vaccine efficacy is scientifically established. As one interviewee pointed out, “the criteria that they [traditional journalists] use aren’t the same as those we use for fact-checking.” Fact-checkers emphasize that they do not seek to support specific viewpoints or shape narratives around opinions, as “it’s essential that data or scientific evidence support the claim.” As another put it, “We are much more concerned not only with ensuring the accuracy of the information we publish, but also with making it verifiable—meaning anyone can retrace our steps and reach the same conclusion” [interviewee].

After a claim or rumor is deemed fact-checkable, another critical layer may prevent verification: verifiability, or the practical feasibility of fact-checking the information (Figure 1). This depends on whether misinformation can be verified using available evidence and resources within a specific timeframe. As one interviewee explained, “it depends on our capabilities. Sometimes, we can only do it when it’s possible because we have hundreds of pieces of content to check.” Fact-checkers emphasize the importance of these criteria, given that “there is way more disinformation (…) spread out there than [they] can debunk during a day” [interviewee]. While this constraint applies universally, limited press freedom and weaker deliberative democracies exacerbate challenges related to data availability in some countries. “Ultimately, our decision to fact-check a claim depends on whether we have the means to verify it—whether we can identify the element that proves it false. Often, we encounter important topics but cannot debunk them due to a lack of available data. In Venezuela, this limitation frequently prevents us from addressing issues for which we have no practical means of providing evidence” [interviewee].

A third conditioning factor is how widespread or viral a piece of content is, as fact-checkers face the dilemma of not amplifying falsehoods or increasing visibility for bad actors: “we don’t want to give fakes a platform or more audience if they don’t have it in the first place” [interviewee]. Another interviewee emphasized, “We risk enlarging or maximizing the visibility or exposure of information that wasn’t widespread in society.” This concern is especially relevant for legacy media with larger audiences. Some partners of the Meta Third-Party Fact-Checking project can verify information, prompting the platform to label it false and reduce its visibility. In this context, “fact-checking reaches those affected by disinformation and can eventually prevent people from falling for more disinformation. However, it’s important not to amplify it, especially when it appears on [national legacy media] homepage.” Verifying information internally for platforms differs from publicly disseminating it on national news websites, particularly when the content has not yet gained significant traction. Moreover, fact-checkers can themselves become targets of disinformation campaigns: “There is a specific Russian disinformation campaign that sends fakes directly to fact-checkers, asking us to debunk them to garner more reach. We regularly get these emails” [interviewee].

Viralization is identified as the most critical prerequisite for information verification among interviewees. Fact-checkers outline several metrics to assess a piece of content’s potential virality, including cross-platform spread, frequency of shares, likes, and comments, Google Trends indicators, recurring audience inquiries, the identity of those disseminating the content, and the temporal relevance or potential harm of the information.
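One way to read the three prerequisites discussed in this section is as a sequential gate that a claim must pass before any traditional news criteria are weighed. The following Python sketch is only an illustration of that reading, not a procedure reported by the interviewees: the `Claim` class, its boolean fields, and `first_failed_threshold` are hypothetical names, and in practice each judgment is a matter of editorial assessment rather than a simple yes/no flag.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    # Checkability: a factual claim rather than an opinion, promise, or prediction.
    is_factual: bool
    # Verifiability: data, experts, and resources are available in time.
    evidence_available: bool
    # Virality: already widespread enough that a debunk will not amplify it.
    is_viral: bool


def first_failed_threshold(claim: Claim) -> Optional[str]:
    """Return the first prerequisite the claim fails, or None if it passes all three."""
    if not claim.is_factual:
        return "checkability"
    if not claim.evidence_available:
        return "verifiability"
    if not claim.is_viral:
        return "virality"
    return None
```

For example, a factual and verifiable rumor that has barely circulated would return `"virality"`, matching the interviewees' reluctance to give a falsehood more audience than it already has.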

Factors echoing traditional news criteria (RQ1)

After surpassing these three thresholds—namely, a claim or rumor must be inherently ‘fact-checkable,’ data and resources must be available for practical verification (verifiability), and the information must have gone viral—more traditional news criteria come into play. The most discussed criterion is the relevance or impact of the misinformation to be verified.

Discursively, some professionals use “impact” to refer to virality: “If we notice a piece of disinformation circulating on social media but hasn’t had high impact, generated much attention, or gone viral enough, we set it aside and don’t necessarily verify it.” Certainly, higher exposure means greater impact, but here we treat impact as potential harm (Sehat et al., 2024) or social relevance. Examples include dangerous health-related claims or statements interfering with elections. According to fact-checkers, impact is “a matter of public danger, (…) it could be on health, environment, climate change, danger, the impact (…) on the social agenda.” This potential to cause harm correlates with negativity factors, reflecting the detrimental impact on society. “For instance, during COVID-19, there were calls to burn 5G towers because people believed they spread the virus, or claims that injecting seawater could prevent it. Similarly, harmful recommendations related to cancer treatment were widespread. These falsehoods, which could cause significant harm to health, are top priorities for verification.”

A frequently discussed factor is timeliness, referring to misinformation related to current events, such as a hurricane in the U.S. or the war in Ukraine. A fact-checker at a global news agency explains: “I always start my day looking for material, posts, potentially false or misleading content (…) with potential disinformation about the big topics of the week, day, or moment.” Fact-checkers at legacy media prioritize misinformation that is part of the ongoing news cycle. The prominence of misinformation sources, as creators or disseminators, influences both the visibility of misleading information and its social impact. Public figures, such as politicians, lawmakers, and institutions, play a significant role in shaping public opinion. This raises the issue of balance. Fact-checkers strive to maintain balance by verifying content from all political sides, even if certain spreaders dominate one side. Some argue that parties in government generate more discourse, make more announcements, and are more frequently interviewed by media. Thus, “the party of government is probably likely to be overrepresented.” Here, balance does not imply a strict 50/50 distribution but ensures comprehensive verification of relevant perspectives.

Conversely, the prominence of the target of misinformation is less frequently emphasized by fact-checkers. When addressed, it is typically linked to potential harm. As one interviewee noted, “[i]s this about certain individuals, perhaps politicians or marginalized groups, or situations where the misinformation could have significant consequences, such as impacting elections?” Lastly, audience suggestions also influence the selection process. Fact-checkers receive verification requests via social media, reflecting efforts to enhance participatory transparency and involve the audience in the news process (Karlsson, 2010). Similar user requests can also indicate spreadability.

Factors most identified within verification articles (RQ2)

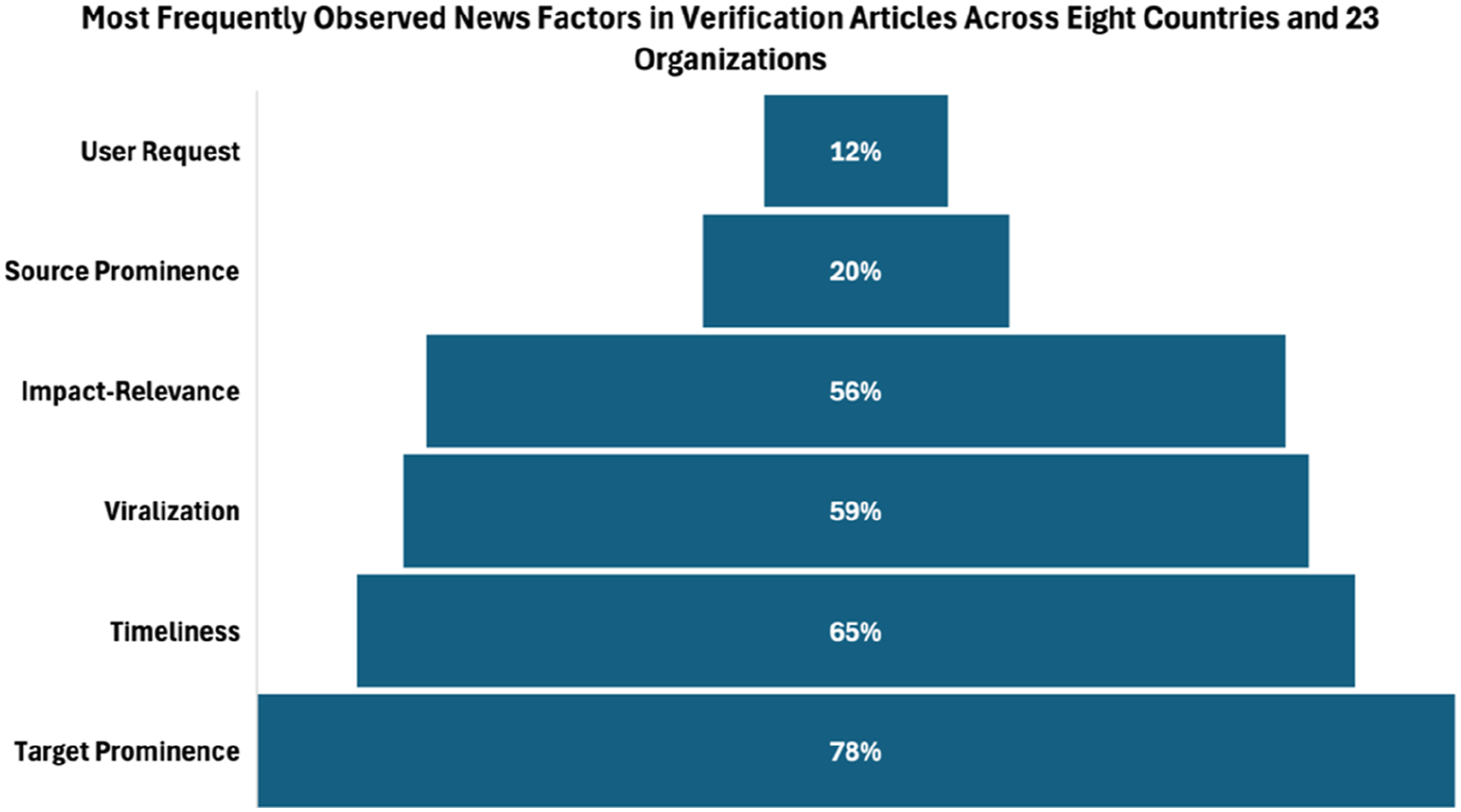

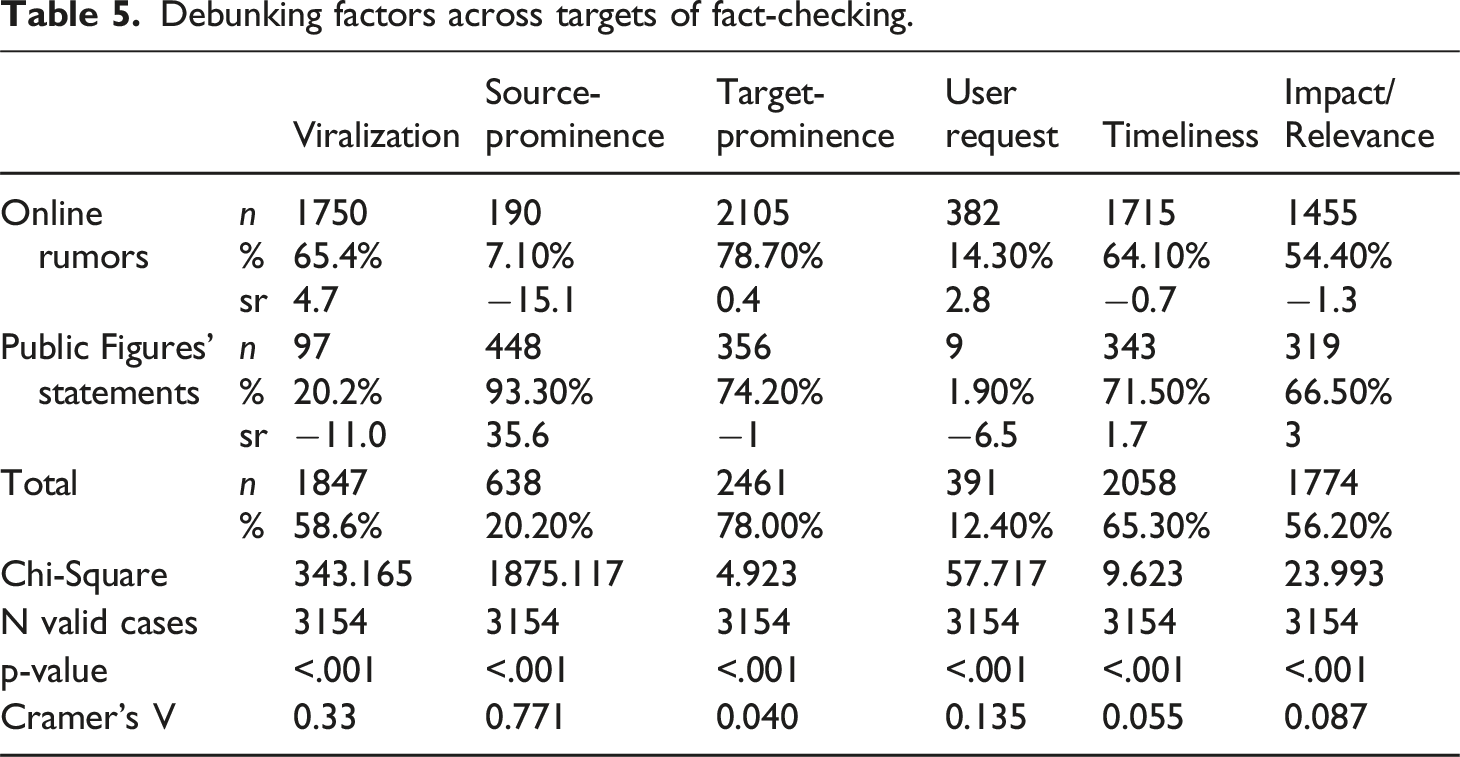

Based on the interviews, we evaluated how frequently specific factors appeared in verification articles produced by fact-checkers to address RQ2. Since these articles were published with a verdict, we assumed they met the first threshold of being fact-checkable, with sufficient resources and data for verification. We then measured indicators of viralization, source and target prominence, user requests, timeliness, and impact within the articles. We note a disparity between the factors emphasized by fact-checkers and those identified in the content analysis. For example, target prominence (78%) appeared far more frequently than source prominence (20%), as shown in Figure 2. Two factors help explain this. The prominence of disinformation targets is closely linked to relevance, specifically the potential to cause harm. Here, “target” refers not to the intended audience but to the actor falsely accused of a problem or actions misleadingly attributed to them. To gain credibility, misinformation often attributes false messages to renowned institutions, politicians, or media organizations.

Figure 2. News factors present in verification articles.

The second factor explaining this disparity between discourse and practice is the high number of articles verifying online rumors (84%; n = 2674) compared to public figure statements (15%; n = 480). This new “debunking turn” (Graves et al., 2023) is widely discussed in the literature and linked to increasing verification demands from tech platforms like Meta. Smaller organizations tend to rely heavily on this funding and may therefore be more susceptible to content homogenization (Bélair-Gagnon et al., 2023; Cazzamatta, 2025e). Consequently, source prominence is less detectable. Notably, the Meta Third-Party Fact-Checking Program prohibits fact-checkers from verifying and labeling politicians. To circumvent this constraint, which is widely criticized in the fact-checking community, fact-checkers often focus on unknown social media users reproducing the same distorted information. That said, these results must be interpreted cautiously, as tracing source prominence is challenging.

Timeliness (65%) appears as the second most frequently identified factor, encompassing claims related to political debates in regional media or misinformation about global events such as the pandemic, the war in Ukraine, the death of Queen Elizabeth, and the Qatar World Cup. Nonetheless, timeliness should not necessarily be equated with social relevance or impact (56%) as strictly operationalized here. Impact, or potential harm, pertains to falsehoods about elections, new constitutions, legislative debates, police actions, health issues, and efforts to protect citizens from online scams and fraud. Viralization appeared in 59% of verification articles. Indicators included social media interactions (e.g., shared over 5,000 times) or cross-platform circulation, especially when articles noted the falsehood appeared across multiple media. User requests were mentioned less frequently (12%) but can also indicate viralization. However, not all organizations inform readers that a verification was prompted by audience suggestions, which makes this factor difficult to trace. These are general figures; we expect some variation in the presence of news factors depending on the type of organization, the debunked topic, the target of the fact-check, and the scope of the verified information, as explored below.
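The viralization indicators described above can be read as a simple binary coding rule. The following is a minimal sketch of that reading, not the study's coding instrument: the `ArticleCoding` structure, the field names, and the use of 5,000 shares as a cut-off are illustrative assumptions (the text gives 5,000 only as an example), and the coding in the study was applied by coders to article text rather than computed automatically.

```python
from dataclasses import dataclass, field


@dataclass
class ArticleCoding:
    """Hypothetical record of what a verification article reports about a claim."""
    shares: int = 0                               # interactions mentioned in the article
    platforms: set = field(default_factory=set)   # platforms the article says the claim appeared on


def shows_viralization(article: ArticleCoding, share_threshold: int = 5000) -> bool:
    """Code viralization as present if either indicator is met.

    Assumption: the threshold is illustrative, taken from the example in the text.
    """
    many_interactions = article.shares > share_threshold
    cross_platform = len(article.platforms) > 1
    return many_interactions or cross_platform


# Hypothetical examples, not drawn from the study's sample.
widely_shared = ArticleCoding(shares=6200, platforms={"facebook"})
cross_posted = ArticleCoding(shares=120, platforms={"whatsapp", "x"})
low_reach = ArticleCoding(shares=300, platforms={"facebook"})

coded = [shows_viralization(a) for a in (widely_shared, cross_posted, low_reach)]
```

Treating the two indicators as alternatives mirrors the "or" in the description; a stricter scheme requiring both would code fewer articles as viral.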

Strengths of news factors according to types of organizations, topics, scope, and targets of verifications (RQ3)

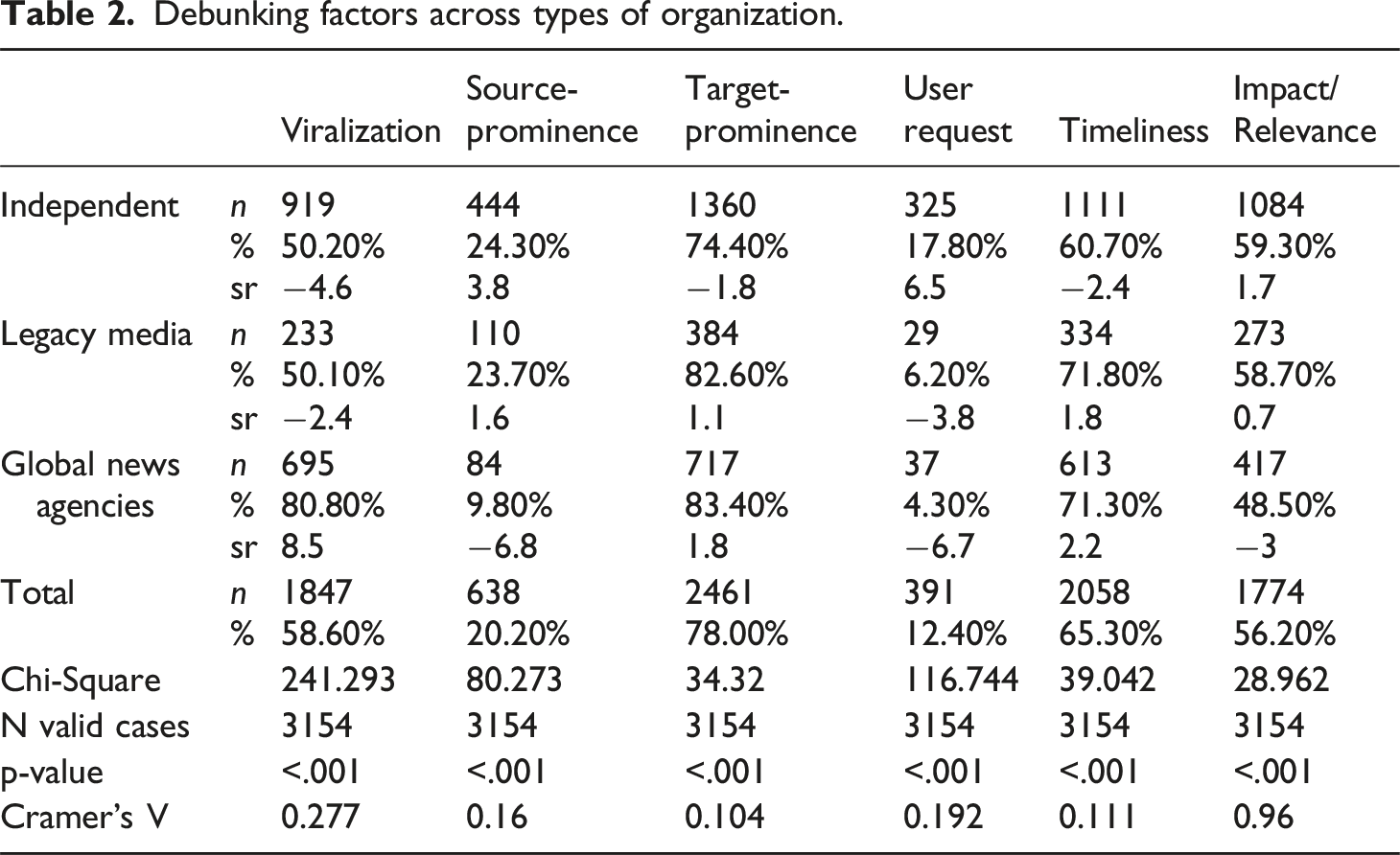

Debunking factors across types of organization.

Global news agencies target viral misinformation more than expected (standardized residual [sr] = 8.5), as they primarily focus on verifying online rumors rather than public figure statements (Cazzamatta, 2025b). This affects the presence of source prominence within global news agencies (sr = −6.8), which appears less than expected, since online rumors are mostly spread by anonymous users and bots. Standardized residuals for source prominence are higher for independent organizations and legacy media desks (3.8 and 1.6, respectively), since they generate slightly more verifications targeting public figures.

User requests, understood as a form of participatory transparency, are more valued by independent units (sr = 6.5), aligning with their stronger emphasis on transparency compared to media counterparts (Humprecht, 2019; Seet and Tandoc, 2024). Timeliness is prioritized more by legacy media and global news agencies (sr = 1.8 and 2.2, respectively), but this does not translate into impact or potential harm, which is higher among independent units (sr = 1.7). Despite the high value placed on timeliness in global events, global news agencies are less likely to verify content with significant damage potential (sr = −3).
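For readers less familiar with standardized residuals, the sketch below shows how such values are typically derived from a cross-tabulation. It assumes the Pearson form, (observed − expected) / √expected, which is an assumption on our part since the exact variant is not specified here. The counts are hypothetical and are not the study's data.

```python
from math import sqrt


def standardized_residuals(table):
    """Return Pearson standardized residuals (O - E) / sqrt(E) for each cell.

    Expected counts assume independence: E = row_total * col_total / n.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    residuals = []
    for i, row in enumerate(table):
        res_row = []
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            res_row.append((observed - expected) / sqrt(expected))
        residuals.append(res_row)
    return residuals


# Hypothetical counts, NOT the study's data.
# Rows: organization type; columns: factor present / factor absent.
counts = [
    [30, 70],  # independent organizations
    [20, 80],  # legacy media desks
    [10, 90],  # global news agencies
]
sr = standardized_residuals(counts)
```

As a rule of thumb, an absolute value above roughly 2 marks a cell that departs noticeably from what independence would predict; positive values mean the factor appears more often than expected, negative values less often.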

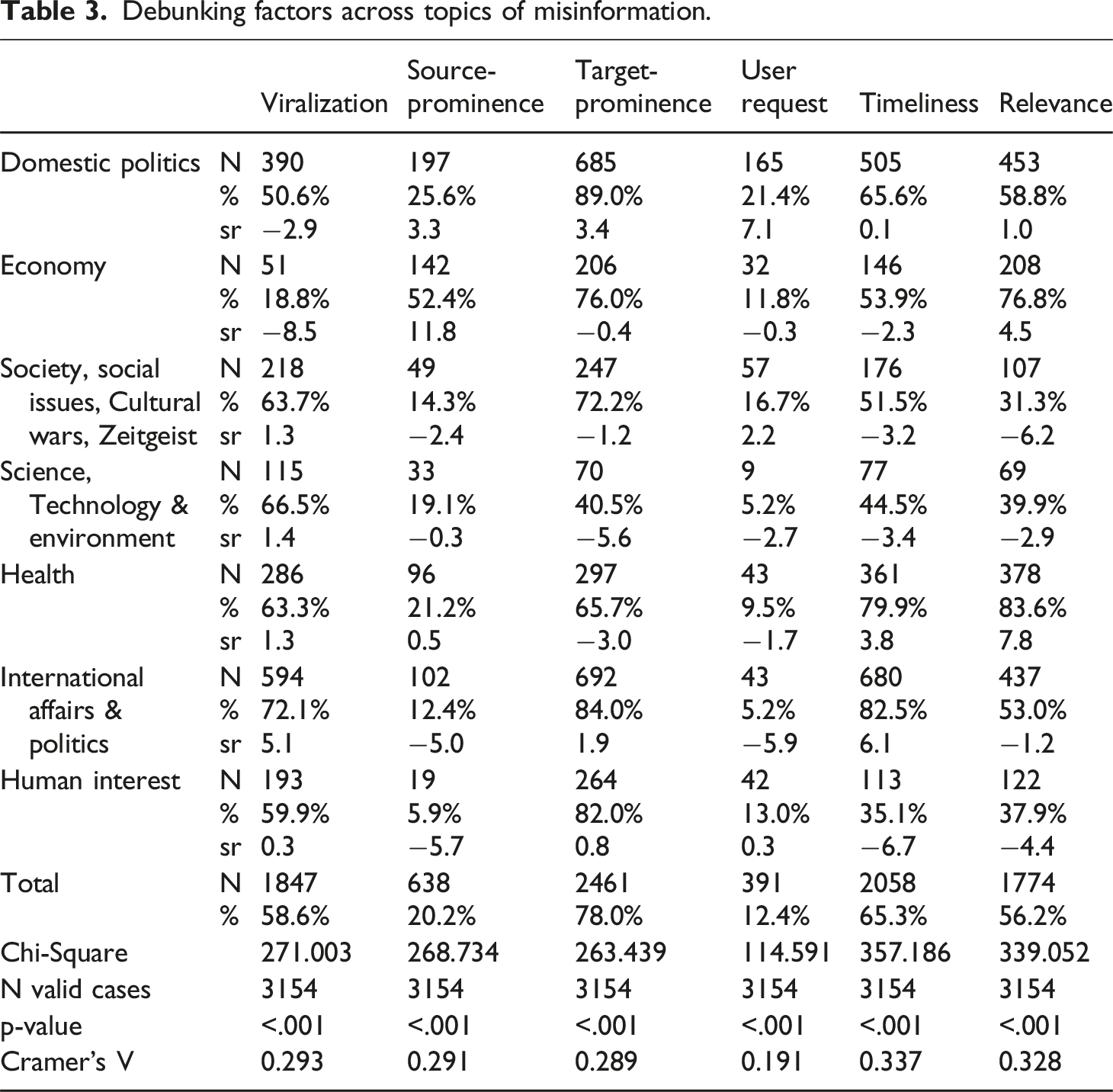

Debunking factors across topics of misinformation.

Source prominence is especially notable in topics like “Domestic Politics” (sr = 3.3) and “Economy” (sr = 11.8), likely due to the verification of public figure statements in parliamentary speeches or media interviews. “Domestic Politics” is also the only topic where user requests play a significant role, appearing more frequently than expected (sr = 7.1), possibly due to its direct impact on daily life. Target prominence is notable in “Domestic Politics” and “International Affairs,” particularly when politicians and public figures are victims of slander or defamation. Timeliness is present in “Domestic Politics” (sr = 0.1) but more pronounced in “Health” (sr = 3.8) and “International Affairs” (sr = 6.1). It is important to note that data was collected in 2022, during the aftermath of the COVID-19 pandemic and the start of the war in Ukraine, which shaped the urgency of fact-checking. Finally, impact was most evident in “Domestic Politics” (sr = 1.0), “Economy” (sr = 4.5), and especially “Health” (sr = 7.8), given the potential harm caused by misinformation (Sehat et al., 2024) in these areas (Table 3).

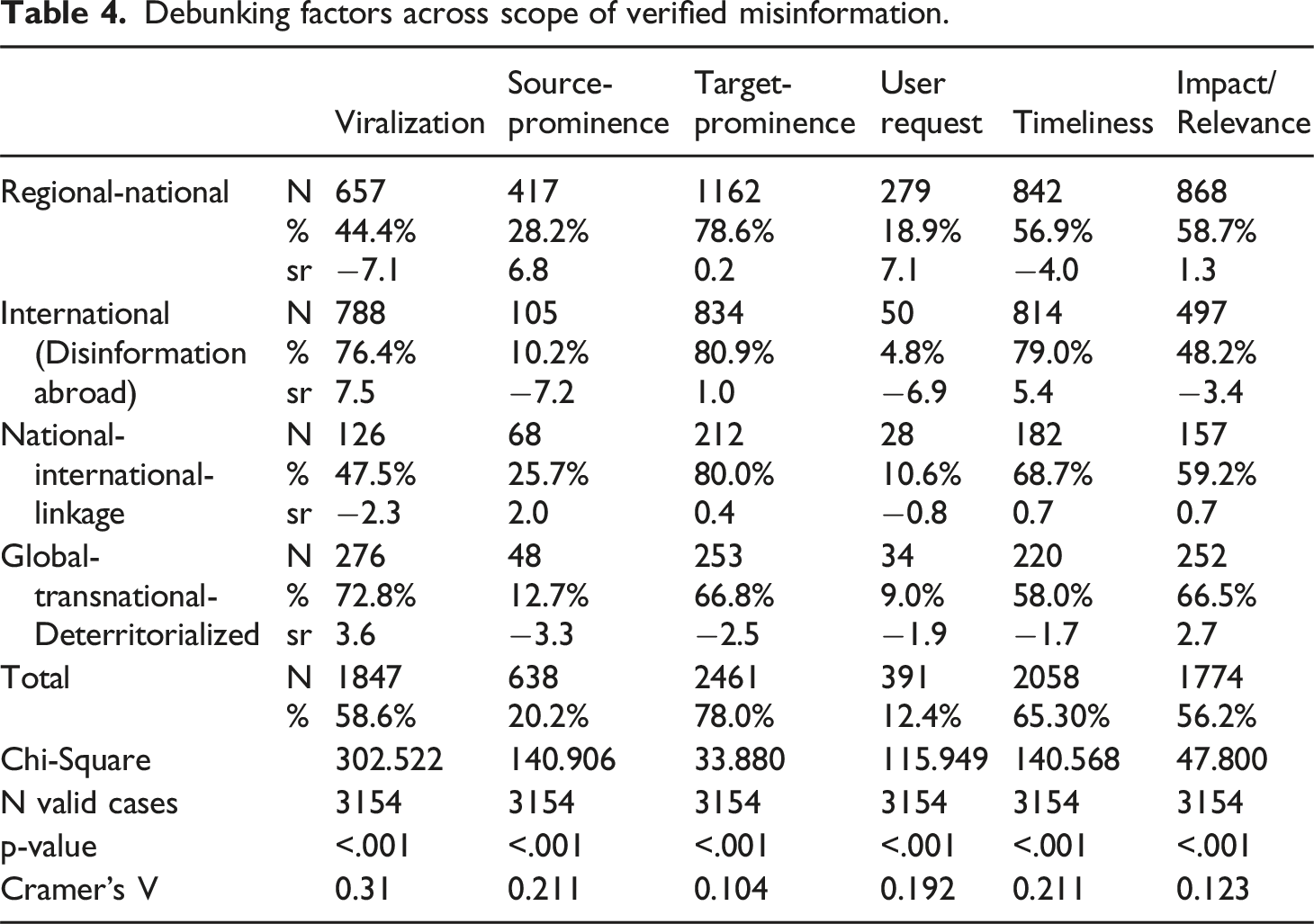

Debunking factors across scope of verified misinformation.

Debunking factors across targets of fact-checking.

Discussion and conclusion

This article advances fact-checking research by examining verification selection criteria during a period focused more on debunking online rumors than assessing public figures’ statements, extending analysis beyond the U.S. Interviews with fact-checkers from eight countries identified three key prerequisites for verification: checkability, verifiability, and viralization. “Checkability” refers to whether a claim is inherently verifiable, while “verifiability” concerns its practical feasibility—whether sufficient evidence and resources exist to substantiate it. Both are essential, as fact-checkers cannot rely solely on claims but must corroborate them with evidence to ensure transparency and credibility. Viralization is also critical, as fact-checkers seek to avoid amplifying falsehoods. It is assessed through cross-platform spread, engagement metrics (shares, likes, comments), Google Trends data, and recurring audience inquiries. After surpassing this threshold, traditional journalistic factors such as timeliness, prominence, impact, potential harm, and user requests remain influential in fact-checking (RQ1).

Fact-checking remains deeply rooted in journalism (Suomalainen et al., 2025) yet is considered an adjacent practice (Lauer and Graves, 2024). Fact-checkers did not explicitly reference recent journalistic newsworthiness elements like shareability, audiovisuals, and entertainment, as defined by Harcup and O’Neill (2017). Nonetheless, some overlap exists. Virality, which prompts verification, closely relates to the shareability of (false) content. Visuals also influence virality, as social media posts typically include images and videos that are integral to verification. Entertainment is rarely mentioned, likely because its potential for harm (Sehat et al., 2024) is comparatively lower.

In the second step, we conducted a content analysis of debunking articles that met these thresholds to measure the frequency of various factors. We observe, for instance, that although entertaining falsehoods—primarily coded as human-interest stories—are also verified, the factors driving these verifications are user requests and virality, rather than social relevance. While source prominence was frequently discussed in interviews, it appears less often in articles due to the higher number verifying online rumors (84%; n = 2674) versus statements from public figures (15%; n = 480). Timeliness (65%) is a key factor, often linked to political debates and major global events. However, it differs from impact (56%), which concerns the potential harm of misinformation (Sehat et al., 2024), particularly in elections, legislation, policing, health, and online fraud prevention (RQ2).

We identified a moderate but significant association between verification factors and organization types, topics, fact-checking targets, and scope (RQ3). However, the most notable variations arise between articles focused on debunking online rumors and those verifying statements made by public figures. Viralization is especially relevant for online rumors, while source prominence is stronger in articles centered on public figures. User requests play a critical role in debunking online rumors, particularly on encrypted platforms, where direct monitoring is limited but usage is high, especially in Latin America. The overrepresentation of relevance (social harm) in articles focused on public figures’ statements (sr = 3.0) suggests a normative tension: should fact-checkers prioritize high-profile political discourse with greater institutional weight or focus more on viral misinformation on social media? This raises important questions about the scope, purpose, and public responsibility of verification in increasingly fragmented, hybrid media environments (Graves et al., 2023; Vinhas and Bastos, 2023).
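The strength of a relationship between two categorical variables, such as fact-checking target and the presence of a factor, is commonly summarized with a chi-square statistic and Cramér's V. The sketch below illustrates that calculation under the assumption that Cramér's V is an appropriate measure here; this section does not name the statistic used, and the counts are hypothetical rather than the study's data.

```python
from math import sqrt


def chi_square_and_cramers_v(table):
    """Return (chi-square statistic, Cramér's V) for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    # Cramér's V rescales chi-square to the 0..1 range.
    k = min(len(table), len(table[0])) - 1
    v = sqrt(chi2 / (n * k))
    return chi2, v


# Hypothetical counts, NOT the study's data.
# Rows: target (online rumor, public figure statement);
# columns: source prominence present / absent.
counts = [
    [20, 80],
    [60, 40],
]
chi2, v = chi_square_and_cramers_v(counts)
```

With one degree of freedom, as in this 2 × 2 example, a chi-square value above 3.841 is significant at the 5% level. Conventions for labelling V as weak, moderate, or strong vary between fields, so any verbal label should be read alongside the value itself.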

Our article has several limitations. First, it focuses solely on factors mentioned by fact-checkers during interviews, rather than applying an extensive catalogue of traditional news factors, which can be challenging to operationalize in fact-checking. Further research should operationalize not only the frequency but also the intensity of these factors. This would enable correlating the strength of verification factors with the length of debunking articles or the online engagement generated by misinformation. Although Meta’s discontinuation of CrowdTangle makes measuring social media engagement more difficult, alternative computational methods could be valuable. Nevertheless, we provide a starting point for operationalizing comparative studies of fact-checking practices. It would also be interesting to observe whether these news values change with the potential discontinuation of Meta’s third-party fact-checking project in Europe and Latin America.

Supplemental Material

Supplemental Material - The truth game: Verification factors behind fact-checkers’ selection decisions

Supplemental Material for The truth game: Verification factors behind fact-checkers’ selection decisions by Regina Cazzamatta in Journalism.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study is supported by the German Research Council/ DFG: Deutsche Forschungsgemeinschaft (CA2840/1-1; Project Number 8212383).

Supplemental Material

Supplemental material for this article is available online.
