Abstract

This study explored the presence of digital echo chambers in the comment sections of partisan media in South Korea. We analyzed the political slant of 152K user comments written by 76K unique contributors on NAVER, the country’s most popular news aggregator. We found that the political slant of the average user comment aligned with the political leaning of conservative news outlets; however, this was not true of progressive outlets. A considerable number of comment contributors crossed over from like-minded to cross-cutting partisan media and argued with their political opponents. Most of these crossover commenters were “headstrong ideologues,” followed by “flip-floppers” and “opponents.” We discuss the implications of these findings in light of the potential for news comment sections to serve as the digital cafés of Public Sphere 2.0 rather than as echo chambers.

The news comment section manifests the interactive and participatory nature of digital journalism. It allows readers to express their thoughts and feelings in response to the news stories they read, and the comments themselves generate further user reactions. The vast majority of digital news consumers read comments: 83% of South Koreans (Korea Press Foundation, 2016), 78% of Americans (Stroud et al., 2016), 69% of Germans (Springer et al., 2015), and 79% of Australians (Barnes, 2015). Importantly, people treat news comments as valid cues to public opinion (Barnes, 2015; Diakopoulos and Naaman, 2011; Jang and Lee, 2010; Lee, 2012; Springer et al., 2015). When inferring public opinion, people sometimes rely more on user comments than on the news stories the comments accompany (Hsueh et al., 2015). The influence of news comments on social cognition goes further still: the perceived congeniality of user comments can lead readers to perceive the tone of the news itself as more supportive of their issue positions (Lee et al., 2021).

The growing importance of user comments in digital news diets suggests that the political landscape of partisan media’s comment sections may play a role in shaping public opinion comparable to that of the outlets’ own political leanings. When a one-sided partisan news story is supported by hundreds of user comments, readers are more likely to take the amplified voice as public opinion without learning much about opposing perspectives. We therefore introduce online news comment sections as an alternative venue for testing the presence of online echo chambers, in which congenial ideas are insulated against counter-attitudinal information (Sunstein, 2001).

By analyzing the political alignment between partisan news stories and their user comments, this research aims to advance the current understanding of the relationship between digital news consumption and growing political polarization. We begin by reviewing the extant literature on echo chambers. We then argue for extending echo chamber research to online news comment sections, given the growing empirical evidence challenging the echo chamber hypothesis. To analyze the content of millions of news comments, we employ a semi-unsupervised deep learning method and investigate whether partisan media’s political biases resonate with their audiences in the comment sections. We find that the political slant of the average user comment aligns with the political leaning of conservative news outlets, but this tendency does not appear for progressive media. Relatedly, conservative commenters, compared to liberal ones, are less inclined to cross ideological lines to argue with their political opponents. We conclude by discussing how our findings shed new light on academic discussions of online echo chambers in the era of digitized news media.

Concerns about online echo chambers and growing counter-evidence

The echo chamber refers to a situation in which people are continuously exposed to like-minded information (Sunstein, 2001). Extant research has identified two ways in which a digitized media environment leads people to live in echo chambers. The first is selective exposure. People tend to choose like-minded information over challenging information, a tendency facilitated by the rise of partisan media in conjunction with increasing media selectivity (Garrett, 2009; Iyengar and Hahn, 2009; Knobloch-Westerwick and Meng, 2011; Stroud, 2010). In this way, people surround themselves with similar voices by their own choice. Second, the algorithms that manage personal news feeds are thought to customize content based on users’ prior browsing history. This supports insular news consumption that shields users from opposing ideas (Flaxman et al., 2016). This phenomenon has been conceptualized as the filter bubble (Pariser, 2011).

However, burgeoning evidence casts doubt on whether selective exposure and filter bubbles lead to online echo chambers. First, studies employing web tracking technologies have found a considerable amount of traffic crossing ideological lines (Dubois and Blank, 2018; Flaxman et al., 2016). In Gentzkow and Shapiro’s (2011) study, 55% of the web traffic to the progressive New York Times (i.e., www.nytimes.com) came from political conservatives and moderates. For the conservative Fox News (www.foxnews.com), the corresponding figure was 22%. Second, a Facebook research team found no overwhelming effect of algorithmic curation on like-minded news exposure (Bakshy et al., 2015; see Eady et al., 2019 for similar findings with Twitter data). Furthermore, news exposure via search engines such as Google actually increased cross-cutting exposure (Cardenal et al., 2019; see Flaxman et al., 2016 for counter-evidence). Lastly, by showing a weak correlation between digital media use and political extremism, Boxell et al. (2017) argued that digital media play a limited role in growing ideological segregation online (see Guess et al., 2018 for a more detailed review).

In addition, the Reuters Institute’s 2019 Digital News Report shed light on an emerging trend in digital news habits (Newman et al., 2019). People used to access news mainly through direct visits to news outlets’ web pages and through social media. Increasingly, however, people worldwide obtain news through news aggregators and search engines. This “deeply aggregated” type of news access is most prevalent in South Korea. About 75% of South Koreans get news online via news aggregators, whereas only 4% do so via direct visits to mainstream news outlets’ websites and 9% via social media. Strikingly, over 94% of news aggregator users in South Korea named Naver as their preferred gateway to the news (Kim et al., 2018). Together, these numbers indicate that when Naver features a handful of news stories on its main page, those stories could instantly reach about seven in ten South Koreans.
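As a rough check, the “seven in ten” estimate is approximately the product of the two shares above. This rests on our simplifying assumption that the 94% Naver preference among aggregator users (Kim et al., 2018) applies to the 75% of South Koreans who get news through aggregators (Newman et al., 2019), even though the two figures come from different surveys:

```latex
\[
\underbrace{0.75}_{\text{use aggregators}} \times \underbrace{0.94}_{\text{prefer Naver}} = 0.705 \approx \tfrac{7}{10}
\]
```

This is in line with the 71.4% figure for Naver as the preferred news gateway reported by the Korea Press Foundation (2018).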

Centralized news consumption of this sort further challenges traditional ideas of how people end up in echo chambers. First, on Naver, news brands are not the primary factor in news selection. People habitually read the news headlines presented on Naver’s main page (Korea Press Foundation, 2018, p. 63). By design, Naver displays no information about news sources on the main page. The source appears only when users click a headline and open a news story.¹ Second, although Naver deploys algorithms to select and rank news stories, its algorithmic curation is far from a personalized news feed based on users’ prior interactions with the platform. South Koreans express strong preferences for news stories that are widely viewed by others (58.3%) over news tailored to their browsing history (12.8%; Korea Press Foundation, 2018). Naver’s news curation therefore relies on popularity metrics (e.g., the most viewed or most commented articles and trending topics) more than on personal data (Korea Press Foundation, 2016).

This counter-evidence offers media scholars an important opportunity to expand the current discussion of online echo chambers beyond two mechanisms: users’ self-selection of like-minded partisan news content and customized algorithms catering to their existing preferences.

Comment sections of partisan media: another echo chamber?

We thus propose the news comment sections of partisan media as an alternative venue for testing the echo chamber hypothesis. Only a small fraction of the digital audience contributes news comments. Examples include 12.1% of South Koreans (Korea Press Foundation, 2018), 25% of Americans (Purcell et al., 2010), 12% of Germans (Springer et al., 2015), and 17.1% of Australians (Barnes, 2015). However, as noted earlier, research has established that comment reading is a prevalent part of digital news diets. It has also shown that comment readers use user-generated comments as a barometer of what others think about the issue at hand. Accordingly, we postulate that exposure to news comment sections filled with politically homogeneous voices could leave people in online echo chambers.

Extant research tells two different stories in this regard. On one side, focusing on message boards and chatrooms, Wojcieszak and Mutz (2009) found that online discussion forums did not offer as much exposure to cross-cutting political ideas as had been expected. Hsueh et al. (2015) likewise found that participants tended to leave news comments consistent with the existing comments from other users. These findings lead us to hypothesize that the comment sections of partisan media function as online echo chambers filled with homogeneous voices. On this view, news comments would magnify the biased political perspectives of partisan media.

Studies on people’s motivations for posting news comments tell the other side of the story. Specifically, the top three motives of German newsreaders who write comments were (1) to share their opinion, (2) to introduce novel aspects of the topic at hand, and (3) to express disagreement with the journalists’ opinion (Springer et al., 2015). South Korean newsreaders likewise reported being more likely to comment when expressing anger at, and disagreement with, news stories (Kim and Kim, 2018). Moreover, Chung and colleagues’ (2015) experimental study showed that participants left more comments on news stories biased “against” their existing attitudes, in order to balance them out. In contrast, news stories biased “in favor” of participants’ existing attitudes were more widely shared or liked. These findings argue against viewing news comment sections as online echo chambers. They suggest that user comments on partisan media tend to be discordant with, and argumentative toward, the political leanings of the corresponding news stories.

These competing findings lead us to test for echo chambers in news comment sections by analyzing the ideological alignment between partisan news stories and their user comments. Our first research question is as follows:

RQ1: Do the political slants of user comments align with partisan news media’s political leanings?

We acknowledge that comment posting and comment reading are two distinct behaviors in the discussion of online news comments (Diakopoulos and Naaman, 2011; Springer et al., 2015). However, they are interlocked, in that comment readers are exposed to what comment contributors have posted. Suppose that comment contributors tend to submit comments only to news stories congenial to their political beliefs and values. In that case, comment readers are bound to be exposed to comment sections where the same opinions bounce back and forth between news reporters and their audiences. Along this line, an important question arises: do comment contributors post only on ideologically congruent media, or also on ideologically incongruent media? If echo chambers exist within partisan media’s comment sections, ideological homophily will emerge among comment contributors. That is, most comment contributors would not cross the ideological spectrum. Instead, they would share their feelings and opinions with fellow like-minded partisans. These commenting behaviors are an essential source of information for better estimating the ideological landscape of partisan media’s comment sections. To test this possibility, we pose our second research question:

RQ2: Do comment contributors leave their opinions only on like-minded partisan media, or do they also comment across the political spectrum?

Method

To address these research questions, we collected news stories and their associated user comments on Naver. Naver is the leading news portal and the preferred gateway to the news for seven in ten South Koreans (71.4%; Korea Press Foundation, 2018). Its comment sections can be viewed by anyone, but only logged-in users can post comments. Commenters’ user IDs are displayed partially masked (e.g., abcd****). Even so, the raw data accessible on Naver included a full numeric user identifier. This identifier was unique to each user and hashed, so we could not match it to the profiles of Naver users. We could nonetheless use this anonymized hash to cluster all comments written by the same user. This enabled an analysis that, to our knowledge, had not previously been possible. We counted the number of comments each user contributed to Naver and examined whether each user commented on both progressive and conservative media or mostly on like-minded partisan media. We then developed a deep learning-based text classifier to infer the political orientations of user comments. The data collection period spanned six months, from January 1 to July 6, 2019.
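Clustering comments by the hashed identifier amounts to a group-by over (user, outlet) records. The sketch below assumes that each comment has already been reduced to a hypothetical `(user_hash, outlet_leaning)` pair, with the leaning coded as `"progressive"` or `"conservative"`. These field names and codes are ours and are not Naver’s data format.

```python
from collections import Counter, defaultdict

def group_by_user(comments):
    """Group (user_hash, outlet_leaning) records into per-user counts by outlet leaning."""
    per_user = defaultdict(Counter)
    for user_hash, leaning in comments:
        per_user[user_hash][leaning] += 1
    return per_user

def crossover_users(per_user):
    """Return the hashes of users who commented on both progressive and conservative outlets."""
    # Counter returns 0 for missing keys, so users active on one side only are excluded.
    return {u for u, c in per_user.items() if c["progressive"] > 0 and c["conservative"] > 0}

records = [("a1f3", "progressive"), ("a1f3", "conservative"), ("9bc0", "conservative")]
per_user = group_by_user(records)
print(sum(per_user["a1f3"].values()))  # 2
print(crossover_users(per_user))       # {'a1f3'}
```

Each user’s total comment count and the set of crossover users both come from this single grouping pass.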

Data collection

Using “minimum wage” as a keyword, we collected 1567 news stories from this period.² We targeted three well-known conservative outlets (the Chosun Ilbo, Joongang Ilbo, and Donga Ilbo) and gathered 1094 news articles and 291,780 user comments. From three notable progressive outlets (the Hankyoreh, Kyunghyang Shinmun, and OhmyNews), we gathered 473 news articles and 99,912 user comments. We intentionally limited our analyses to the topic of minimum wage because it is one of the most controversial issues along ideological lines. The progressive ruling party supported a minimum wage hike, while the conservative opposition party called for putting the brakes on it (Garikipati, 2019).

We also crawled user comments on the top-30 “most commented” news stories. Naver News presents this list for each of seven major news categories, including national, politics, economy, culture, world, and lifestyle. Together, the lists amount to about 210 news stories with the highest number of user comments per day. We collected around 38 million user comments by 1.8 million unique users from a total of 42,896 “most commented” news stories over the same six-month period. This additional dataset (hereafter, the 38M-Dataset) was used to train and validate our deep learning-based bias classifier.

Analyzing the Political Slants of Comments: Neural Network for Text Classification

User-contributed news comments are, by nature, informal, irregular, and erratic. Rule-based computational approaches fail to capture the complexity of such noisy data. To achieve both accuracy and scalability, we employed a neural network classifier to identify political bias in user comments. BERT (Bidirectional Encoder Representations from Transformers; Devlin et al., 2018) is a large-scale language representation model. It extracts machine-computable features from natural language by learning which elements of a sentence are essential. BERT has achieved state-of-the-art performance on various text classification tasks. It has outperformed earlier rule-based methods, word embeddings such as word2vec, and deep learning models such as TextCNN (Han et al., 2019). We therefore used BERT to build our bias classifier.

BERT learns to classify text in two stages: pre-training and fine-tuning. The purpose of pre-training is to enable the model to understand the target language through massive exposure to it. Without labels, BERT learns by predicting whether one sentence follows another (next-sentence prediction) and by filling in randomly masked words in sentences (masked language modeling). To perform these tasks, the model learns the features of the language from large-scale resources such as Wikipedia. This step yields dynamic (contextual) embeddings, which represent each word in light of the sentence around it. Through pre-training, BERT learns to represent natural language as machine-readable vectors. In the fine-tuning stage, BERT is then trained for a specific downstream task such as bias classification. Pre-training draws on massive unlabeled corpora. Fine-tuning, by contrast, is light and typically requires orders of magnitude less data, all of it labeled. To this end, we added an extra output layer to the pre-trained model. This layer performs the actual classification task. In this second stage, BERT learns to answer the classification question using labeled examples (answer keys). Through repeated practice of this sort, the model makes small adjustments and ultimately achieves high accuracy on the given task.
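To make the masked-word objective concrete, the sketch below applies the masking recipe described by Devlin et al. (2018) to a list of tokens. About 15% of positions are selected. Each selected token is replaced with `[MASK]` 80% of the time, swapped for a random vocabulary token 10% of the time, and left unchanged 10% of the time. The sketch has three limitations. It covers masked language modeling only, not next-sentence prediction. It uses whole words rather than KorBERT’s actual tokenizer. And `mask_tokens` is a hypothetical helper that is not part of any BERT release.

```python
import random

def mask_tokens(tokens, vocab, mask_prob=0.15, seed=None):
    """Return (masked_tokens, targets) following BERT's 80/10/10 masking recipe.

    targets maps each selected position to the original token the model must predict.
    """
    if not tokens:
        return [], {}
    rng = random.Random(seed)
    n_select = max(1, round(len(tokens) * mask_prob))
    positions = rng.sample(range(len(tokens)), n_select)
    masked = list(tokens)
    targets = {}
    for i in positions:
        targets[i] = tokens[i]
        r = rng.random()
        if r < 0.8:
            masked[i] = "[MASK]"           # 80%: replace with the mask token
        elif r < 0.9:
            pass                           # 10%: keep the original token
        else:
            masked[i] = rng.choice(vocab)  # 10%: replace with a random vocabulary token
    return masked, targets

sentence = "the minimum wage hike was debated again today".split()
masked, targets = mask_tokens(sentence, vocab=sentence, seed=7)
print(masked)
print(targets)
```

During pre-training, the model is scored only on the positions in `targets`. Keeping some selected tokens unchanged or randomized prevents it from learning that only `[MASK]` positions matter.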

Multiple pre-trained BERT models exist, each trained on datasets from different languages and topic domains. We first opted for the multilingual pre-trained BERT model released by Google (Devlin et al., 2018). However, this general model did not perform adequately on our data. We therefore switched to KorBERT, a Korean pre-trained BERT model released by the Electronics and Telecommunications Research Institute of Korea (ETRI, 2019). Having been trained on Korean sentences extracted from news articles and encyclopedias, this Korean-specialized model performed better on our task than Google’s general-purpose multilingual model (Han et al., 2019).

Human labels

To build a KorBERT-based bias classifier, we compiled ground-truth data (i.e., answer keys) consisting of several thousand human-labeled comments. Three coders were trained to classify user comments on minimum wage-related news stories as liberal, conservative, or other/neutral. We randomly sampled 4872 user comments from the 38M-Dataset, which contains over 38 million user comments on Naver’s most commented news stories over the six-month period. After a week of training sessions, the three coders analyzed 250 comments from the sample and achieved acceptable inter-coder reliability (Krippendorff’s alpha = 0.702). The coders then labeled the remaining comments independently. The resulting human-labeled dataset consisted of 1345 liberal (27.6%), 1597 conservative (32.8%), and 1930 other (39.6%) comments.
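Krippendorff’s alpha compares the disagreement observed among coders with the disagreement expected by chance. The following sketch computes alpha for nominal labels, such as liberal, conservative, and other/neutral, from a coincidence matrix. It is a minimal illustration, not the software the authors used. Each unit is assumed to be the list of labels the coders assigned to one comment, with `None` marking a missing label.

```python
import math
from collections import Counter
from itertools import permutations

def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    units: one list per coded comment, holding each coder's label (None = missing).
    """
    coincidence = Counter()
    for labels in units:
        values = [v for v in labels if v is not None]
        m = len(values)
        if m < 2:
            continue  # a unit coded by fewer than two coders has no pairable values
        # Every ordered pair of values from different coders, weighted by 1 / (m - 1).
        for a, b in permutations(values, 2):
            coincidence[(a, b)] += 1 / (m - 1)
    n_c = Counter()
    for (a, _), weight in coincidence.items():
        n_c[a] += weight
    n = sum(n_c.values())
    observed = sum(w for (a, b), w in coincidence.items() if a != b)
    expected = sum(n_c[a] * n_c[b] for a in n_c for b in n_c if a != b)
    if expected == 0:
        return math.nan  # undefined when only a single category is ever used
    return 1 - (n - 1) * observed / expected

coded = [
    ["liberal", "liberal", "liberal"],
    ["conservative", "conservative", "other"],
    ["other", "other", "other"],
]
print(round(krippendorff_alpha_nominal(coded), 3))  # 0.692
```

Alpha equals 1 under perfect agreement and 0 when agreement is no better than chance. The 0.702 reported above lies between the two.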

Amplifying human-labeled comment dataset

To ensure a sufficient amount of training data, we expanded the comment labels by matching user IDs. First, we identified users who had authored at least two comments in the 4872-comment human-labeled dataset. The political orientation of each of these users (N = 1176) was computed as the mean of their comment labels, scored −1 for a liberal comment and +1 for a conservative comment. We retained users whose mean bias scores were either below −0.80 (i.e., consistently liberal) or above +0.80 (i.e., consistently conservative). This identified the political leanings of 250 unique users, of whom 65 were liberals and 175 were conservatives. These users had authored 93,565 comments in the 38M-Dataset. We labeled the 17,535 comments contributed by the identified liberal users as liberal (18.7%) and the 76,030 comments written by the identified conservative users as conservative (81.3%). This label-expansion step is critical for training the KorBERT-based bias classifier. The expanded dataset was, however, highly skewed toward conservative samples, and an imbalanced dataset can compromise a classifier’s accuracy. Following Ganganwar’s (2012) suggestion, we therefore downsampled the conservative comments to balance the two sides. This yielded a final sample of 35,070 comments (17,535 per side).
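The label-expansion and balancing steps can be sketched as follows. The sketch assumes that labeled comments arrive as `(user_hash, label)` pairs and unlabeled ones as `(user_hash, text)` pairs. It also assumes that “other/neutral” labels count as 0 in a user’s mean, which the text does not specify. All function names are hypothetical.

```python
import random
from collections import defaultdict
from statistics import mean

# Assumption: "other" comments score 0; the text only defines -1 (liberal) and +1 (conservative).
SCORES = {"liberal": -1, "conservative": 1, "other": 0}

def user_leanings(labeled, min_comments=2, cutoff=0.80):
    """Infer consistent user leanings from (user_hash, label) pairs."""
    scores = defaultdict(list)
    for user, label in labeled:
        scores[user].append(SCORES[label])
    leanings = {}
    for user, s in scores.items():
        if len(s) < min_comments:
            continue  # need at least two labeled comments per user
        m = mean(s)
        if m < -cutoff:
            leanings[user] = "liberal"
        elif m > cutoff:
            leanings[user] = "conservative"
    return leanings

def expand_labels(unlabeled, leanings):
    """Give every comment by a consistent user that user's leaning as its label."""
    return [(text, leanings[user]) for user, text in unlabeled if user in leanings]

def downsample(examples, seed=0):
    """Randomly drop majority-class examples until every class is equally large."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for example in examples:
        by_label[example[1]].append(example)
    k = min(len(group) for group in by_label.values())
    balanced = [ex for group in by_label.values() for ex in rng.sample(group, k)]
    rng.shuffle(balanced)
    return balanced
```

Downsampling keeps every comment from the minority (liberal) side. Balancing the 17,535 liberal comments therefore yields 2 × 17,535 = 35,070 comments, matching the final sample size above.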

KorBERT-based bias classifier

Before training the KorBERT-based bias classifier, which outputs the political stance of each news comment, we set aside 10% of the comment samples as a test set. We then split the remainder into a training set (90%) and a validation set (10%). The classifier was trained on the labeled training set and evaluated on the validation set. Repeating this fine-tuning over multiple passes improved its accuracy on the validation set.
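The nested split can be sketched as below. First, 10% of the shuffled comments are held out for testing. The remaining 90% are then divided 90/10 into training and validation sets. The seed, the absence of stratification, and the rounding rule are our assumptions.

```python
import random

def three_way_split(examples, test_frac=0.10, val_frac=0.10, seed=42):
    """Hold out a test set, then split the remainder into training and validation sets."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_frac)
    test, rest = shuffled[:n_test], shuffled[n_test:]
    n_val = round(len(rest) * val_frac)  # validation share is taken from the remainder
    val, train = rest[:n_val], rest[n_val:]
    return train, val, test

train, val, test = three_way_split(range(35_070))
print(len(train), len(val), len(test))  # 28407 3156 3507
```

Applied to 35,070 comments, this particular rounding rule produces a test set of 3,507 comments. That is close to the 3,506-comment test set reported below; exact counts depend on implementation details.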

As a result of this training, the classifier scores each comment by the likelihood that it belongs to each category. We used the likelihood of a comment being conservative as its inference score. A score of 1 thus indicates a conservative comment, 0 a liberal comment, and 0.50 a comment with no dominant feature of either category. We accepted the conservative label for scores above 0.80 and the liberal label for scores below 0.20. Comments scoring between 0.20 and 0.80 were deemed too uncertain to label as either liberal or conservative and were relabeled “unclassified” (see the Supplementary Material for how the cutoff points were determined).
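The cutoff rule amounts to a three-way mapping from the inference score. In this sketch, scores of exactly 0.20 or 0.80 fall into “unclassified.” That is our assumption, since the text specifies only scores “higher than 0.80” and “lower than 0.20.”

```python
def label_from_score(score, lower=0.20, upper=0.80):
    """Map the classifier's P(conservative) to a label, leaving uncertain cases unclassified."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability between 0 and 1")
    if score > upper:
        return "conservative"
    if score < lower:
        return "liberal"
    return "unclassified"  # includes the boundary values 0.20 and 0.80

print([label_from_score(s) for s in (0.97, 0.55, 0.08)])  # ['conservative', 'unclassified', 'liberal']
```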

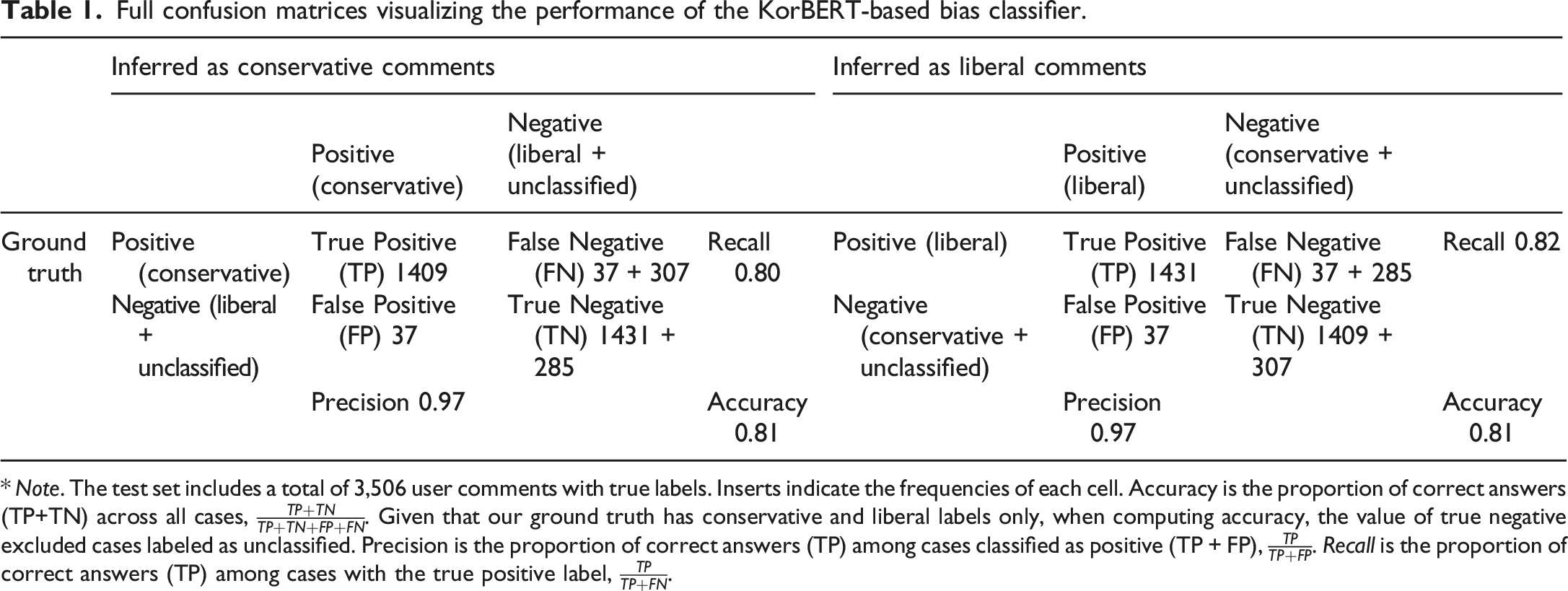

Full confusion matrices visualizing the performance of the KorBERT-based bias classifier.

Note. The test set includes a total of 3,506 user comments with true labels. Numbers in the cells indicate frequencies. Accuracy is the proportion of correct answers (TP + TN) across all cases.

The results demonstrate the high performance of our classifier, with an accuracy of 0.81. Precision and recall were 0.97 and 0.80 for conservative comments and 0.97 and 0.82 for liberal comments. An accuracy of 0.81 means that 81% of the test comments were correctly labeled as conservative or liberal. A precision of 0.97 means that only 3% of the comments the classifier labeled conservative (or liberal) actually belonged to another category. A recall of 0.80 (or 0.82) means that the classifier missed 20% (or 18%) of the comments that should have been classified as conservative (or liberal).
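Precision and recall can diverge this much because the classifier may abstain. The sketch below is a hypothetical helper that operates on a confusion matrix keyed by true and predicted labels, where a prediction may also be “unclassified.” In this setup, abstentions count against recall and accuracy but never against precision, because an unclassified comment is not a wrong conservative or liberal prediction.

```python
def classification_metrics(confusion, classes=("liberal", "conservative")):
    """confusion[true_label][predicted_label] -> count; predictions may include "unclassified"."""
    total = sum(sum(row.values()) for row in confusion.values())
    correct = sum(confusion[c].get(c, 0) for c in classes)
    results = {"accuracy": correct / total}
    for c in classes:
        hits = confusion[c].get(c, 0)
        predicted_as_c = sum(row.get(c, 0) for row in confusion.values())
        actually_c = sum(confusion[c].values())
        results[c] = {
            # Abstentions ("unclassified") never enter this denominator...
            "precision": hits / predicted_as_c if predicted_as_c else float("nan"),
            # ...but they do count as misses here.
            "recall": hits / actually_c if actually_c else float("nan"),
        }
    return results
```

Passing a matrix with many “unclassified” predictions but few cross-side errors returns high precision alongside noticeably lower recall, the same pattern as the reported 0.97 versus roughly 0.81.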

Results

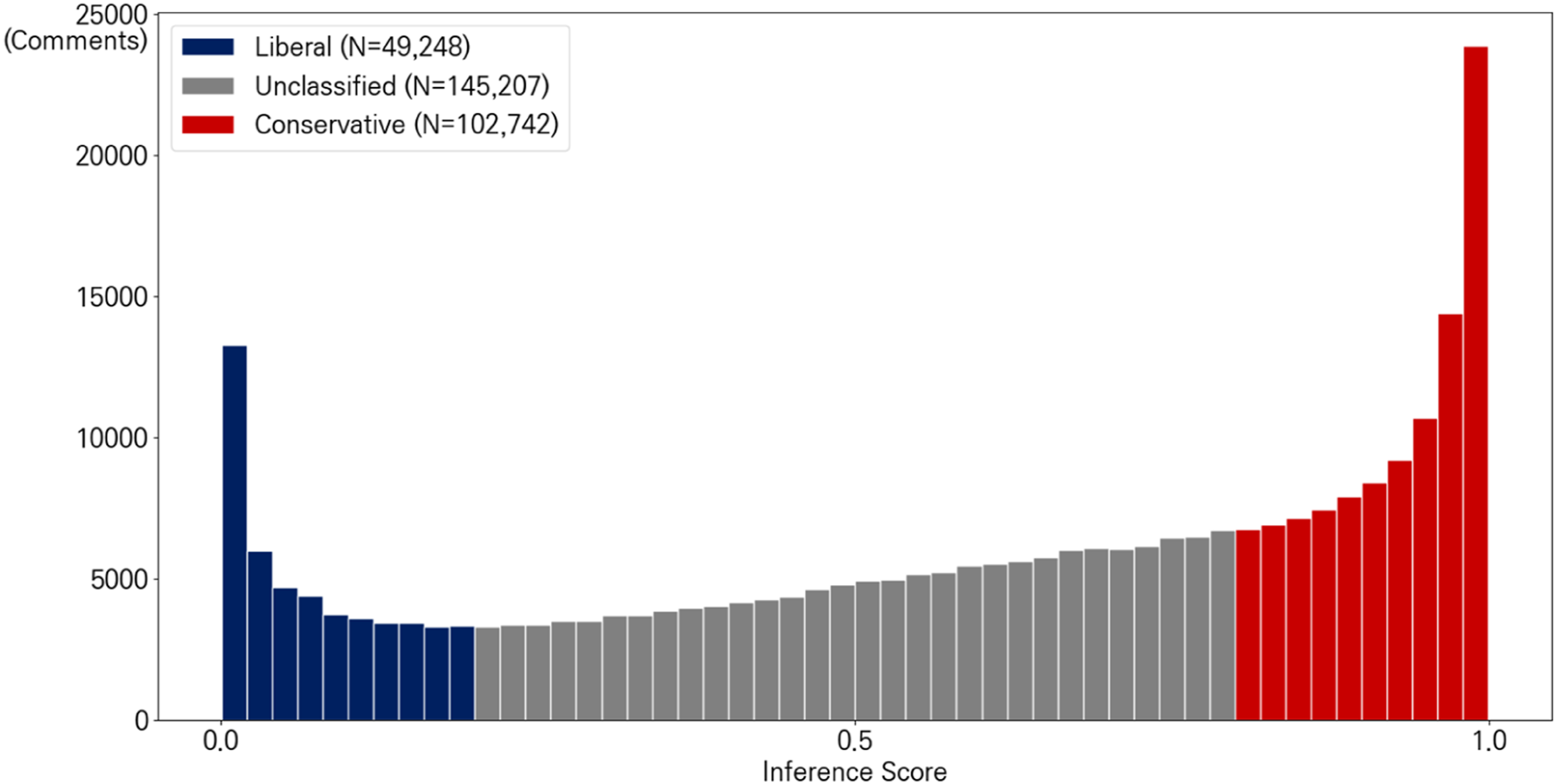

Out of 391,692 user comments from 1567 news stories on minimum wage, 24.1% had been deleted, either by the authors themselves or by Naver due to the violation of the platform’s ethical guidelines. This statistic corresponds to an earlier finding that, in general, 26.9% of news stories on Naver’s economy news section had been removed (Kim and Oh, 2018). Our binary bias classifier (0 = liberal; 1= conservative) inferred the political slants of the remaining 297,197 user-generated news comments. The results are in Figure 1. Distribution of the inferred political slants of online news comments.

In Figure 1, an inference score of 0 or 1 means that the bias classifier identified a given comment as liberal or conservative with near certainty. A score of 0.5, by contrast, means that the classifier found no dominant features of liberal or conservative bias in the comment. Under our threshold rule, 48.86% of the comments remained “unclassified,” with no significant difference across the six partisan media outlets studied. In total, 151,990 politically charged user comments were identified. Of these, 32.4% were liberal (N = 49,248) and 67.6% were conservative (N = 102,742).
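The shares reported above follow directly from the raw counts. The short calculation below reproduces them using only the figures given in the text.

```python
# Counts reported in the Data collection and Results sections.
conservative_outlets, progressive_outlets = 291_780, 99_912
collected = conservative_outlets + progressive_outlets  # all comments collected
remaining = 297_197                                     # comments not deleted
liberal, conservative = 49_248, 102_742                 # comments beyond the 0.20/0.80 cutoffs
classified = liberal + conservative

deleted_share = 1 - remaining / collected
unclassified_share = 1 - classified / remaining
liberal_share = liberal / classified

print(f"collected={collected:,} deleted={deleted_share:.1%} "
      f"unclassified={unclassified_share:.2%} liberal={liberal_share:.1%}")
# collected=391,692 deleted=24.1% unclassified=48.86% liberal=32.4%
```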

Political alignment between partisan news stories and their user comments

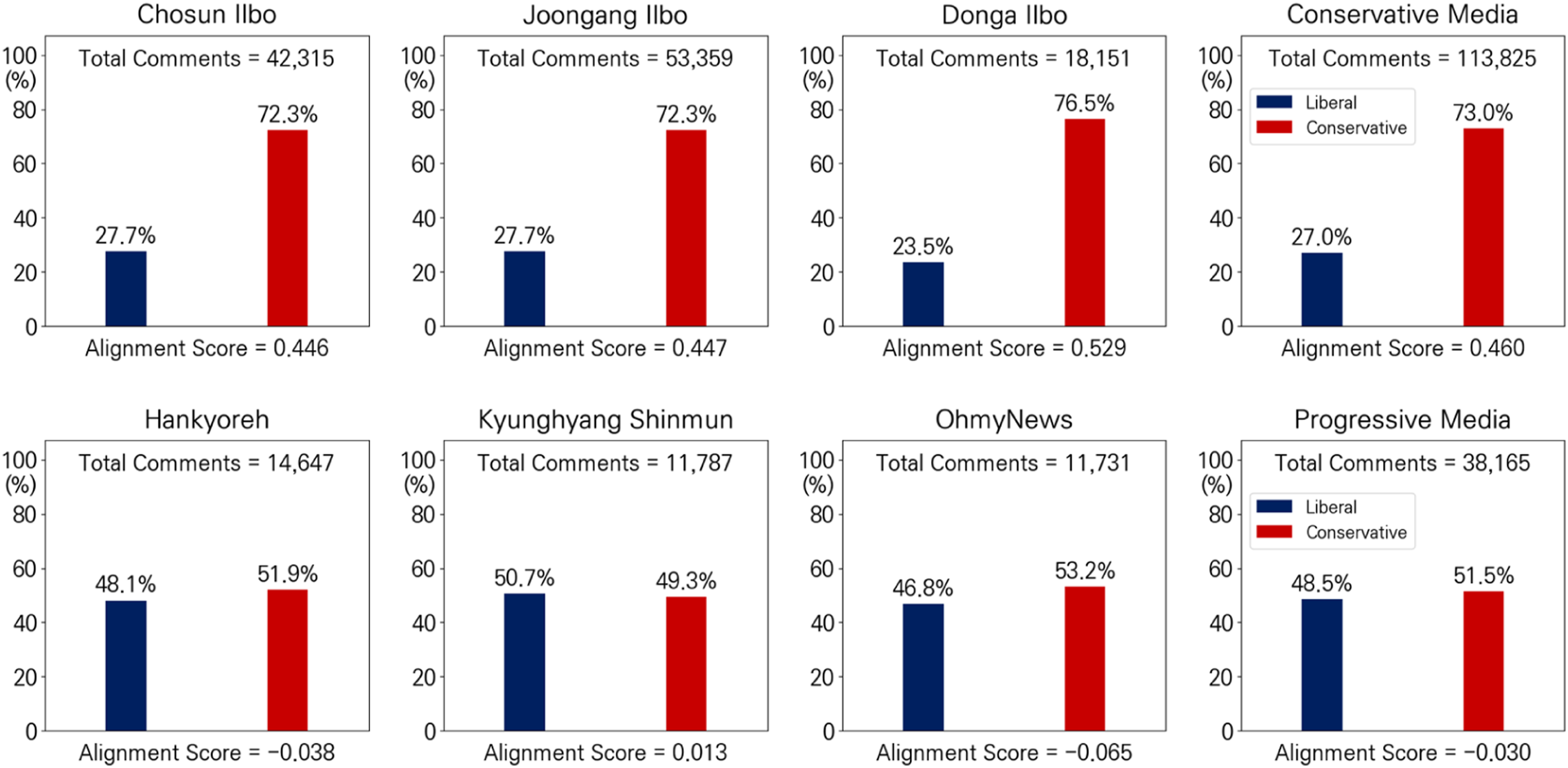

To investigate whether the user comment section of partisan media functions as digital echo chambers (RQ1), we plot the proportion of liberal and conservative comments within each of the six partisan media outlets. Figure 2 shows the results. The proportion of liberal and conservative news comments within the progressive and conservative media’s comment sections.

The figure demonstrates that the comment sections of the three conservative outlets (i.e., Chosun Ilbo, Joongang Ilbo, and Donga Ilbo) were pro-conservative. On average, 73.0% of the comments echoed the outlets’ evident political bias, versus 27.0% that opposed it. This tendency, however, did not appear in the three progressive outlets (i.e., the Hankyoreh, Kyunghyang Shinmun, and OhmyNews). There, a marginally larger fraction of comments disagreed with the liberal bias of the outlets’ partisan coverage (51.5%) than agreed with it (48.5%).

To examine this asymmetry in detail, we computed an alignment score, a measure of bias alignment between partisan news stories and their user comments (cf. the isolation index of Gentzkow and Shapiro, 2011). The score ranges from −1 to +1. It is the proportion of comments whose slant matches the outlet’s political leaning minus the proportion whose slant opposes it. A positive score indicates the degree to which the comments overall resonate with the political leaning of the partisan media. A negative score indicates the degree to which they challenge the outlets’ political bias. Alignment scores differed markedly between the progressive media (−0.030) and their conservative counterparts (+0.460; see Figure 2). This difference suggests that online echo chambers may be at work in the comment sections of the conservative media but not in those of the progressive media.³
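Following the definition above, an outlet’s alignment score can be computed from its counts (or shares) of liberal and conservative comments. The function name and the `"progressive"`/`"conservative"` coding below are our own.

```python
def alignment_score(n_liberal, n_conservative, outlet_leaning):
    """(comments matching the outlet's leaning - comments opposing it) / all slanted comments."""
    total = n_liberal + n_conservative
    if outlet_leaning == "conservative":
        aligned, opposed = n_conservative, n_liberal
    elif outlet_leaning == "progressive":
        aligned, opposed = n_liberal, n_conservative
    else:
        raise ValueError("outlet_leaning must be 'progressive' or 'conservative'")
    return (aligned - opposed) / total

# Shares (in %) of liberal and conservative comments reported for each side.
print(alignment_score(27.0, 73.0, "conservative"))  # 0.46
print(alignment_score(48.5, 51.5, "progressive"))   # -0.03
```

Plugging in the reported shares reproduces the +0.460 and −0.030 scores. Because the score is a normalized difference, it gives the same result whether raw counts or percentages are supplied.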

Commenting behaviors crossing ideological spectrum

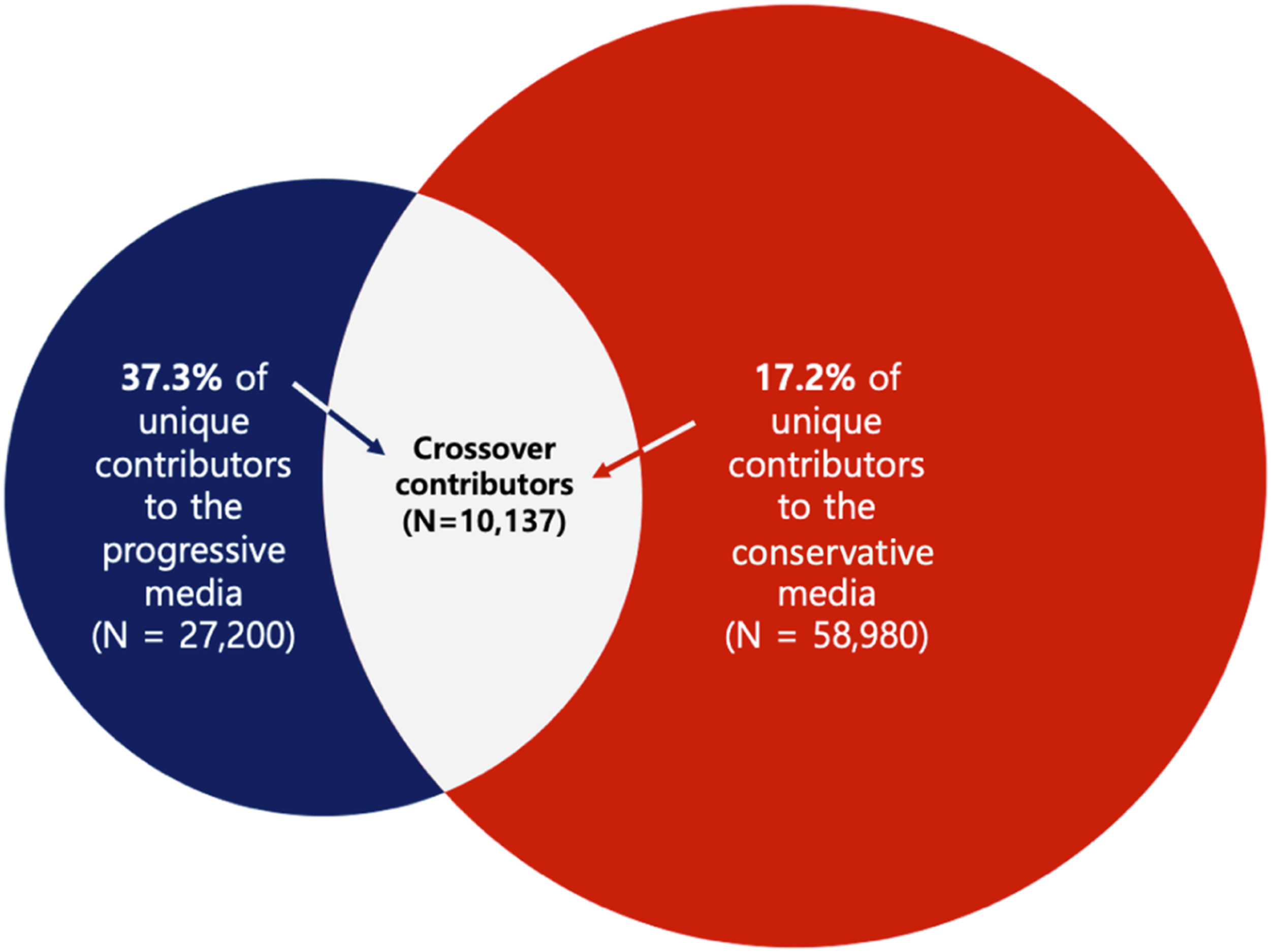

Next, to explore cross-cutting commenting behaviors along ideological lines, we analyzed the overlap of comment contributors between the progressive and conservative media (RQ2). Figure 3 presents the findings. Here, the numbers of unique commenters of the progressive and conservative news outlets were 27,200 and 58,980, respectively. A total of 10,137 commenters submitted their opinions across the ideological spectrum. Precisely, about two in five commenters who posted on the progressive media (37.3%) also visited and contributed to the conservative media. The corresponding number was less than half (17.2%) in the conservative media (see Figure 3). This finding suggests that contributors are not ideologically segregated. Nonetheless, a tendency of political homophily is much stronger among the conservative commenters than liberal commenters. The overlap of unique comment contributors to the progressive and conservative media.
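The overlap shares follow from the three counts above. Inclusion-exclusion then gives the number of unique contributors across both sides. The set-based helper is a hypothetical illustration of how such counts could be derived from per-side sets of user hashes.

```python
def overlap_stats(progressive_ids, conservative_ids):
    """Overlap between the sets of users commenting on each side's outlets."""
    prog, cons = set(progressive_ids), set(conservative_ids)
    both = prog & cons
    return {
        "crossover": len(both),
        "share_of_progressive": len(both) / len(prog),
        "share_of_conservative": len(both) / len(cons),
        "unique_total": len(prog | cons),
    }

# Reported counts: unique commenters per side and commenters on both sides.
prog_n, cons_n, both_n = 27_200, 58_980, 10_137
print(round(both_n / prog_n, 3), round(both_n / cons_n, 3), prog_n + cons_n - both_n)
# 0.373 0.172 76043
```

The unique total, 76,043, equals the number of contributors cited in the Discussion.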

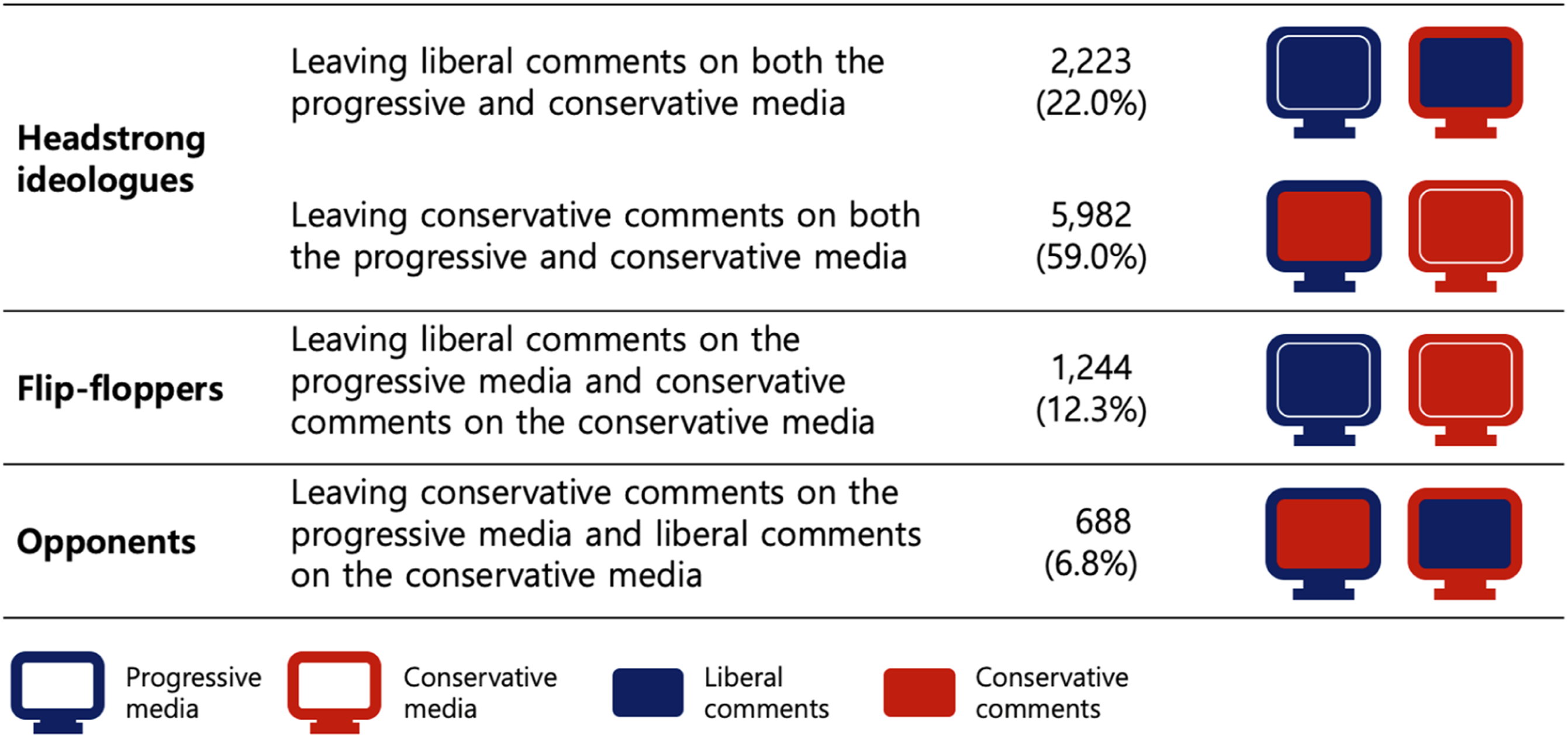

The unique set of 10,137 comment contributors, who made a crossover between the ideologically like-minded and cross-cutting partisan media, provides an excellent opportunity to examine how individuals break out of echo chambers. On average, these individuals posted 7.19 comments, a rate that is much higher than the average number of comments posted by non-crossover contributors (i.e., 1.66 submissions). We further looked at a typology for the crossover commenters. Based on the inferred political bias of their comments and the political leaning of the news outlet where the comments were posted, we developed three types of commenters crossing ideological lines: (1) the headstrong ideologues, (2) the flip-floppers, and (3) the opponents. First, the headstrong ideologue type refers to those commenters whose political orientations persist regardless of whether they are posting on like-minded or cross-cutting partisan media. Second, the flip-flopper type refers to commenters who leave user responses congenial to the news outlets’ political bias. Lastly, commenters classified as opponents challenge the news outlet’s political worldviews across ideological lines—that is, their comments are always against the political bias of the news stories. Figure 4 depicts this typology further. A typology for crossover comment contributors.
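One way to operationalize the typology is the 2 × 2 mapping below. It assumes that each crossover user is characterized by the dominant slant of their comments on each side’s outlets. That is our reading and not a procedure stated in the text. Ties or missing comments are labeled “undetermined,” another assumption of this sketch.

```python
from collections import Counter

def dominant_slant(slants):
    """Majority slant among a user's comments on one side; None if tied or empty."""
    counts = Counter(slants)
    lib, con = counts["liberal"], counts["conservative"]
    if lib == con:
        return None
    return "liberal" if lib > con else "conservative"

def crossover_type(on_progressive, on_conservative):
    """Classify a crossover user from the slants of their comments on each side's outlets."""
    p, c = dominant_slant(on_progressive), dominant_slant(on_conservative)
    if p is None or c is None:
        return "undetermined"
    if p == c:
        return "headstrong ideologue"  # same slant wherever they post
    if (p, c) == ("liberal", "conservative"):
        return "flip-flopper"          # agrees with whichever outlet they are on
    return "opponent"                  # opposes the outlet on both sides

print(crossover_type(["conservative", "conservative"], ["conservative"]))  # headstrong ideologue
```

Under this reading, the three types cover all four combinations of dominant slants. Headstrong ideologues occupy the two “same slant” cells.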

The vast majority of crossover commenters were headstrong ideologues (81.0%; see Figure 4). Headstrong ideologues offer compelling evidence against the echo chamber hypothesis, because their activity on ideologically discordant partisan media enhances political diversity in the comment sections and spurs argument. Although small in number, opponents (6.79%) add further evidence against ideological segregation resulting from digital echo chambers. Overall, our analyses reveal a substantial overlap of comment contributors between the progressive and conservative media. These crossover commenters play a pivotal role in keeping partisan media’s comment sections from being monolithic, one-colored, and single-minded.

Discussion

By building a semi-unsupervised deep learning-based bias classifier, we analyzed the political slants of 151,990 online news comments written by 76,043 unique contributors. This approach to content analysis allowed us to explore online echo chambers in the realm of user comments posted under ideologically biased partisan news stories. Our study is among the first to infer the political orientations of user comments from a massive real-world dataset (see Park et al., 2011; Toepfl and Piwoni, 2015 for rare exceptions).

All in all, we found mixed evidence that user comment sections are digital echo chambers. This partially reflects earlier findings that the primary motive for leaving comments is to express political disagreement rather than agreement (Chung et al., 2015; Diakopoulos and Naaman, 2011; Kim and Kim, 2018; Springer et al., 2015). However, our findings also revealed an interesting ideological asymmetry. The political slant of the user comments on the conservative media aligned with the outlets’ political leaning. No similar pattern was observed in the progressive media’s comment sections. Moreover, conservative commenters were far more inclined than liberal commenters to share their opinions only on like-minded news outlets. About two in five unique contributors commenting on the progressive media also left opinions on the conservative media. Among unique contributors to the conservative media, the corresponding share was less than half that, at fewer than one in five.

These findings on ideological asymmetries add empirical evidence to the growing literature on liberal-conservative differences in motivation, social cognition, and values. Garrett and Stroud (2014) found that conservatives are significantly more likely than liberals to avoid counter-attitudinal information. Neuroscientific studies further suggest that conservatives are more reactive to aversive stimuli than liberals (for a review, see Jost, 2017; Jost and Amodio, 2012). Being more ideologically cohesive than liberals (Grossmann and Hopkins, 2015; Lelkes and Sniderman, 2016), conservatives have also been found to be more actively engaged in defending their party’s issue stances (Bullock, 2011; Jost, 2017). Consistent with these findings, our alignment scores showed that user comments resonated far more with the political bias of the conservative partisan media than with that of the progressive media. Our user-level analyses further showed that conservatives were disproportionately active on politically congenial rather than uncongenial news outlets.

Before discussing the implications of our findings, it is noteworthy that in our data, the conservative media published more news stories than the progressive media (996 versus 382), attracted more user comments (113,825 versus 38,165), and drew more than twice as many unique comment contributors (58,980 versus 27,200). This imbalance may reflect South Korea’s media landscape, which is heavily tilted in favor of conservatives (Hahn et al., 2013; Han, 2018; Lee, 2008; Rhee and Choi, 2005; Shin and Lee, 2014). As a stark demonstration, the top three conservative newspapers (i.e., the Chosun Ilbo, Joongang Ilbo, and Donga Ilbo) together account for 72.5% of the readership of the 10 national dailies (Korea Audit Bureau of Certification, 2018). Also, combined web traffic to these three dailies’ websites accounts for 79.1% of the total web traffic to news media online, including the individual homepages of broadcasters, news agencies, Internet opinion blogs, and magazines (Choi et al., 2016). One might suspect that Korean liberals and conservatives use Naver at different rates. However, this is unlikely given that 94% of news aggregator users in South Korea use Naver (Kim et al., 2018) and 71% of South Koreans access news online through Naver (Korea Press Foundation, 2018). South Koreans also perceive Naver as politically neutral, much as Americans perceive Yahoo! News (Newman et al., 2019).

Our findings make substantial contributions to the current understanding of political polarization in relation to digital news consumption. There is burgeoning evidence of asymmetric attitude polarization, with conservatives moving further to the right than liberals have moved to the left (Grossmann and Hopkins, 2015; see Han and Kim, 2020 for similar patterns emerging in South Korea). This greater polarization among conservatives may be partly explained by their digital media habits. To elaborate, conservatives’ stronger ideological homophily may make the comment sections of right-wing partisan media more homogeneous and congenial. Conservative comment readers may thus perceive public opinion as being on their side and reinforce their political worldviews accordingly. By contrast, given the relatively high presence of challenging ideas in the comment sections of left-wing partisan media, liberal comment readers may be more aware of arguments from the other side, and their attitudes may therefore tend to be less extreme (but see Bail et al., 2018 for counterevidence that cross-cutting political interactions can increase political polarization). If the high visibility of challenging arguments in news comment sections can alleviate political extremism, news organizations are strongly encouraged to adjust their algorithm-led comment curation, which generally places the latest or most popular user comments at the top, so that the top-listed comments are more politically diverse. To better inform this sort of algorithm development, we invite future research to test how the political biases of news stories and of their associated user comments interact to shape attitude polarization via the perception of public opinion.
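The curation change proposed above can be illustrated with a simple re-ranking rule. The sketch below is hypothetical and is not drawn from Naver’s or any news organization’s system: it assumes each comment already carries a popularity count (`likes`) and a stance label (`slant`, e.g., from a classifier such as the deep-learning model used in this study), and the names `Comment`, `popularity_rank`, and `diversified_rank` are illustrative. It interleaves the stance groups round-robin, each ordered by popularity, so that the top slots alternate between viewpoints instead of filling up with whichever side is most liked.

```python
from dataclasses import dataclass
from itertools import zip_longest


@dataclass
class Comment:
    text: str
    likes: int   # hypothetical popularity signal (e.g., upvotes)
    slant: str   # hypothetical stance label, e.g., "pro" or "con"


def popularity_rank(comments):
    """Conventional curation: most-liked comments first."""
    return sorted(comments, key=lambda c: c.likes, reverse=True)


def diversified_rank(comments):
    """Round-robin interleaving of stance groups, each ordered by popularity.

    The group containing the single most-liked comment leads, because dicts
    keep insertion order and groups are created in popularity order.
    """
    groups = {}
    for c in popularity_rank(comments):
        groups.setdefault(c.slant, []).append(c)
    ordered = []
    for tier in zip_longest(*groups.values()):
        ordered.extend(c for c in tier if c is not None)
    return ordered


sample = [
    Comment("c1", likes=90, slant="pro"),
    Comment("c2", likes=80, slant="pro"),
    Comment("c3", likes=70, slant="pro"),
    Comment("c4", likes=10, slant="con"),
]
print([c.text for c in popularity_rank(sample)[:3]])   # ['c1', 'c2', 'c3']
print([c.text for c in diversified_rank(sample)[:3]])  # ['c1', 'c4', 'c2']
```

Strict alternation is only one design choice; a deployed system would more plausibly weigh viewpoint diversity against popularity, recency, and civility rather than alternating mechanically.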

On a related note, our findings speak to the potential for online news comment sections to serve as the so-called Public Sphere 2.0 rather than as echo chambers. First, we underscore that a significant proportion of the comments in both the progressive and conservative media’s comment sections reflect voices from the other side (48.5% and 27.0%, respectively). Second, both the progressive (37.3%) and conservative (17.2%) media had crossover commenters who indeed crossed ideological lines to submit their opinions. A closer look at our typology of crossover comment contributors reveals two notable patterns. To begin, although “headstrong ideologues” were presumed to be the most dedicated users of partisan media (Iyengar and Hahn, 2009), they made up the majority of crossover commenters, actively sharing their perspectives in politically discordant online discussion forums. Headstrong ideologues may have commented on uncongenial news stories to introduce alternative views, persuade the other side, express their disagreement, or derogate their political opponents. Whatever the reasons, participation in the online discussion forums of cross-cutting partisan media is a positive sign of people breaking out of their own echo chambers.

Moreover, other types of crossover commenters, such as “flip-floppers” and “opponents,” were inconsistent in their political standpoints when they made submissions to the conservative or progressive media. This suggests that both groups are ideologically mixed or moderate. Such individuals are conventionally seen as less politically interested or engaged and have thus often been neglected in analyses. However, we found that these ideological moderates share their thoughts on politically contentious issues more willingly than conventionally expected. This underscores the need for future research to further theorize the development of news comment sections into the digital cafés of Public Sphere 2.0, as suggested by Ruiz et al. (2011).
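The crossover shares reported above (37.3% and 17.2%) can be cross-checked against the contributor counts given earlier, since both shares should describe the same set of users who commented on both sides. The sketch below assumes each share is a fraction of that media group’s unique contributors; the variable names are illustrative. Multiplying each group’s contributors by its share gives roughly 10,145 users in both cases (about 10,144.6 and 10,145.6), and inclusion-exclusion then recovers roughly 76K unique contributors and about 152K comments overall, matching the totals stated in the abstract.

```python
# Counts reported in this article (Naver comments on minimum-wage news).
contributors = {"conservative": 58_980, "progressive": 27_200}
comments = {"conservative": 113_825, "progressive": 38_165}
# Assumption: each crossover share is a fraction of that group's unique contributors.
crossover_share = {"conservative": 0.172, "progressive": 0.373}

# Crossover commenters implied by each group's share; both should count the same users.
implied_overlap = {k: contributors[k] * crossover_share[k] for k in contributors}
overlap = round(sum(implied_overlap.values()) / len(implied_overlap))

# Inclusion-exclusion: users active on either side, each counted once.
total_unique = sum(contributors.values()) - overlap
total_comments = sum(comments.values())
contributor_ratio = contributors["conservative"] / contributors["progressive"]

for group, n in implied_overlap.items():
    print(f"{group}: {n:,.1f} crossover commenters implied")
print(f"overlap ~ {overlap:,}; unique contributors ~ {total_unique:,}")
print(f"total comments = {total_comments:,}; contributor ratio ~ {contributor_ratio:.2f}")
```

Because the published percentages are rounded, the two implied overlaps differ by about one user, which is within rounding error.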

This rosy possibility of news comment sections should not overshadow the downsides of expressing political discord online. User comments, especially on partisan news, are often characterized by incivility toward political opponents, divergent political worldviews, and other contributors (Coe et al., 2014; Muddiman and Stroud, 2017). Incivility in user comments is known to foster distrust in journalism (Lee and Tandoc, 2017), hatred toward political parties (Hwang et al., 2014), and avoidance of online discussions in general (Springer et al., 2015). To address these concerns, some high-profile news organizations, including NPR, CNN, and Reuters, have shut down their comment sections. However, the majority still run comment sections and invest considerable effort in moderating them so that they do more good than harm to participatory journalism (World Association of Newspapers and News Publishers, 2016). Our findings on the state of partisan media’s news comment sections add critical insights to these efforts and suggest that greater political diversity in user comment sections may enhance their potential to support deliberative democracy.

In closing, our study has limitations that future work can address. First, while we drew a comprehensive map of political slants in user comment sections, our analyses focused on a single news portal: Naver. Although Naver is the top news source in South Korea, the media landscape is continually changing. Most notably, YouTube has emerged as a popular online platform. Using YouTube for news consumption is a double-edged sword. On the one hand, it offers an unprecedentedly wide array of channel choices. On the other hand, it comes at the price of radicalizing users by exposing them to content increasingly more extreme than mainstream political news (Ribeiro et al., 2020), which strongly suggests that online ideological segregation can lead toward political extremism. With this emerging trend in mind, future research is invited to investigate digital echo chambers across multiple media platforms. Second, the present study concerns a single hot-button partisan issue, the minimum wage. For a more generalizable discussion, it is important to extend the current analysis to multiple social issues. Lastly, in terms of method, the comments dataset, which was expanded from human-labeled news comments, could be validated further. Although we assumed that individuals’ political worldviews on the minimum wage would be dichotomous and remain consistent over the observed period (i.e., half a year), commenters’ political positions may call for a more complex model and may have evolved during that time. Despite these limitations, our methodological advancement through the adoption of deep learning technologies opens many doors for communication scholars to take full advantage of the ample data available online to gauge, in near real time, the formation of public opinion in the era of digital news media.

Supplemental Material

sj-pdf-1-jou-10.1177_14648849211069241 – Supplemental Material for News comment sections and online echo chambers: The ideological alignment between partisan news stories and their user comments

Supplemental Material, sj-pdf-1-jou-10.1177_14648849211069241 for News comment sections and online echo chambers: The ideological alignment between partisan news stories and their user comments by Jiyoung Han, Youngin Lee, Junbum Lee and Meeyoung Cha in Journalism

Footnotes

Acknowledgment

We thank Jiwan Jeong for allowing us to access the Naver News Archive and his helpful comments on our data analysis.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Institute for Basic Science (IBS-R029-C2), Ministry of Science and ICT in Korea via IITP (2021-0-01696), and the National Research Foundation of Korea (NRF-2017R1E1A1A01076400, NRF-2019S1A5B5A01040041).
