Abstract

Gendered social roles raise assumptions about what female and male journalists ought to do. Prior studies have suggested that covering counter-stereotypical topics may decrease both journalists’ source credibility and the message credibility of their work. Prior studies on heuristic cues in credibility evaluation have also shown that user comments can serve as a corrective, affecting the perception of accompanying content both positively and negatively. In an online survey with 417 German participants, we employed a 3 (author: female, male, and computer) × 2 (topic: stereotypically masculine and feminine) × 2 (comments: sexist and non-sexist) experimental design to investigate source and message credibility. Findings show no differences based on author gender, but they do show differences between human authors (female or male) and a computer (the control group). Covering counter-stereotypical topics is associated with slightly lower credibility for both men and women when the article is accompanied by non-sexist comments. Sexist comments, in turn, lead to slightly higher credibility, suggesting more elaborate engagement with content targeted by sexism.

Keywords

Journalism is largely dominated by male voices. Across the 67 countries investigated in the Worlds of Journalism Study, men make up 57% of all surveyed journalists while accounting for 62% of all junior and senior managers within the editorial hierarchy (Hanitzsch et al., 2019). In the U.S., male journalists report the lion’s share of political, justice, science, technology, and sports news, whereas women are assigned particularly to health and medicine stories (Steiner, 2017). Within articles, a Norwegian study shows that female sources are largely underrepresented and reach parity with male sources only in lifestyle content or when representing community volunteers (Sjøvaag and Pedersen, 2019).

According to social role theory, such misrepresentations derive from socially constructed gender perceptions and have been suggested to consistently inform people’s biased perceptions of gender traits (Eagly and Wood, 1999; Searles et al., 2020). Such gendered social roles, for example, ascribe warmer traits to women and thereby fuel the perception that women are more competent on compassion issues (Huddy and Terkildsen, 1993). In turn, women (men) are ascribed higher credibility on a broad set of stereotypically feminine (masculine) topics (Armstrong and McAdams, 2009; Prentice and Carranza, 2002; Searles et al., 2020).

Importantly, while men and women have been equally associated with their respective stereotypical topics, such as men with politics or sports and women with health or lifestyle, audiences have been shown to react negatively to deviations from these stereotypes, particularly when women are the ones deviating (Winkler et al., 2017). In what has been termed the backlash effect (Rudman et al., 2012), women who behave counter-stereotypically have, under certain circumstances, been rated slightly less persuasive (Winkler et al., 2017) and slightly less credible (Searles et al., 2020) than counter-stereotypically behaving men.

Similar effects of gender-specific perception have also been shown for online user comments, where deviant and agentic writing styles were judged more strictly when study participants thought that the comments had been authored by women (Wilhelm and Joeckel, 2019). Yet, online user comments are more frequently authored by men (Ksiazek and Springer, 2018), and prior studies indicate that, when it comes to gendered harassment, women are more often the target than the aggressor (Döring and Mohseni, 2019). That is, despite sometimes serving as a corrective that holds journalism to its professional standards and general social norms (Craft et al., 2016), a significant share of online user comments contains incivility (Coe et al., 2014), with considerable amounts of gendered harassment aimed at female journalists (Chen et al., 2020; Döring and Mohseni, 2020). Abusive comments, in turn, have been shown to negatively affect uninvolved observers’ perceived credibility of both an article and its author (Prochazka et al., 2018; Searles et al., 2020).

This study contributes to research on the backlash effect by examining the combined effect of journalists’ gender, counter-stereotypical topics, and sexist user comments on the credibility of journalists and their articles. A pre-registered between-subjects online questionnaire employing a 3 (author gender: female, male, and computer) × 2 (topic: stereotypically masculine and feminine) × 2 (user comment: sexist and not sexist) experimental design, however, reveals almost no effects. These important yet predominantly null findings are in line with recent research on related matters (Greve-Poulsen et al., 2021; Searles et al., 2020; Winkler et al., 2017) and highlight the need for a more nuanced look at gender backlash in journalism. Accordingly, we discuss the role of computers as a control group for the author-gender factor, drawing on recent research on automated journalism (Graefe and Bohlken, 2020), as well as the role of individual sexism toward both men and women (Glick and Fiske, 1997, 1999).

Gendered social roles, credibility, and the backlash effect

Social role theory posits that people draw on peer groups, work contexts, or the media to form expectations about gender roles, such as that women are supposed to be warm and caring while men are expected to be strong and fearless (Eagly and Wood, 1999). Observing others adds to mental models that help organize one’s knowledge and, subsequently, one’s behavior (Lakoff, 1987). In observing their social surroundings, people form stereotypes, that is, “the gender-based ascription of different traits […] to male and female” individuals (Huddy and Terkildsen, 1993: p. 120). Stereotypical expectations arise not only toward male and female individuals but also toward topics associated with masculinity and femininity. Such expectations continuously gain normative momentum as some behavior is learned to be desirable for a certain gender whereas other behavior is learned to be undesirable (Huddy and Terkildsen, 1993). Surveys report these traits to be fairly constant over time: desirable female traits include cooperativeness, generosity, or politeness, whereas desirable male traits include consistency, relaxation, or a sense of humor; undesirable traits describe typical women as anxious or materialistic and men as lazy or self-serving (Prentice and Carranza, 2002).

Acting in line with desirable stereotypes, then, has yielded higher credibility in several contexts, such as television sportscasts (Mudrick et al., 2017), blogs (Armstrong and McAdams, 2009), or online bulletin boards (Embacher et al., 2018). Credibility refers to the ascribed authority and believability of a person or certain information (Roberts, 2010) and has been termed a key concept for understanding online communication (Sundar, 2008). The plethora of information available on the Internet creates a “constant need to critically assess information while consuming it” (Sundar, 2008: p. 73). Perceived credibility is part of this ongoing heuristic evaluation of (online) information and has found wide acceptance among scholars. Credibility has further been distinguished by whether it is ascribed to the author (source) of a message, the message itself, or the medium through which the message is transmitted (Flanagin and Metzger, 2000; Sundar, 2008). Acting in line with stereotypical expectations, then, has primarily affected the perceived credibility of the source.

Effects vary across topics, though, pointing to audiences’ distinction between source and message credibility. That is, as topics are stereotypically perceived as more masculine or more feminine (Thomson, 2006), the credibility of both source and message also varies depending on whether they are communicated by a man or a woman. For example, when a female newscaster read a story, the message was evaluated as more credible than when a male newscaster read it, although the man was rated as more credible than the woman (Weibel et al., 2008). In general, higher credibility is more likely to be assigned when an author’s gender and the topic’s stereotypical expectation align (Armstrong and McAdams, 2009; Prentice and Carranza, 2002; Searles et al., 2020).

Importantly, deviating from a gender stereotype yields different outcomes for men and women. While men who do not reflect typical male traits or do not cover stereotypically masculine topics are usually rated less persuasive and credible than men who do, taking on female traits or feminine topics does not further diminish how they are perceived (Prentice and Carranza, 2002; Searles et al., 2020; Winkler et al., 2017). In contrast, women who take on male traits or masculine topics are rated lower not only than women conforming to feminine stereotypes but even lower than men who behave counter-stereotypically (Prentice and Carranza, 2002; Winkler et al., 2017). This has been termed the backlash effect (Rudman et al., 2012): women are punished not only when taking on undesirable female stereotypes but also when conforming to desirable male stereotypes.

Theoretically, the backlash effect stems from women being considered a threat to the gender hierarchy, whereby any status violations, such as taking on undesirable stereotypes, are penalized. This has been shown to be particularly true for traits signaling (stereotypically male) dominance (Rudman et al., 2012). As journalism has long been dominated by male voices (Hanitzsch et al., 2019; Steiner, 2017), this argument appears to apply here: stereotypical expectations toward journalism are likely driven by male prejudice, thus giving way to a potential backlash effect toward female journalists.

A recent U.S. study found female journalists to be ascribed less source credibility when covering a stereotypically masculine topic than when covering a stereotypically feminine topic. While the corresponding pattern did not emerge for male journalists, female journalists counter-stereotypically covering a masculine topic were ascribed the least source credibility when no corrective user comment was shown (Searles et al., 2020, Appendix Study 2). Similarly, in a recent German study that varied both the comment itself (hate speech or not) and the commenter’s gender, participants asked to flag inappropriate comments not only flagged hate speech more often than non-hate speech but also flagged hate speech by women more often than hate speech by men (Wilhelm and Joeckel, 2019). In another German study, respondents rated the persuasiveness of either a female or a male online commenter employing either a stereotypically feminine or masculine writing style. Findings show that while women were perceived as less persuasive than men, women employing a masculine writing style were rated least persuasive among all experimental variations (Winkler et al., 2017).

User comments

Online user comments have been shown to serve as a corrective, affecting uninvolved observers’ perception of accompanying content both positively and negatively. In the aforementioned U.S. study, source credibility was lower when an abusive comment criticized a journalist who covered a stereotypical topic. However, a comment criticizing any journalist for covering a counter-stereotypical topic actually increased their credibility, albeit only slightly (Searles et al., 2020, Appendix Study 2).

This reflects the broader presumption that user comments hold journalism to its professional standards and general social norms (Craft et al., 2016), although they have also been shown to contain large shares of incivility (Coe et al., 2014), including severe amounts of sexism and gendered harassment aimed at female journalists (Chen et al., 2020; Döring and Mohseni, 2020). Uncivil comments have been shown to negatively affect source and message credibility as well as general topic perception among uninvolved observers (e.g., Prochazka et al., 2018; Waddell, 2018).

Uncivil commentary can be defined as user comments employing an “unnecessarily disrespectful tone toward the discussion forum, its participants or its topics” (Coe et al., 2014: p. 660). Although incivility varies across topics (Ksiazek and Springer, 2018: p. 479), uncivil comments generally appear to break established norms of communicative practice (Stroud et al., 2015). As a subcategory of incivility, harassing comments are “intentionally designed to attack someone or something” (Ksiazek et al., 2015: p. 854), and gendered harassment subsumes comments that “criticize, attack, marginalize, stereotype, or threaten a person based on attributes of gender or sexuality” (Chen et al., 2020: p. 879). In line with theoretical explanations of the backlash effect, gendered harassment toward women can thus also be seen as a means to sexually demean women with regard to presumed gender hierarchies (Herring and Stoerger, 2014: p. 577f.). Gendered harassment addressing (female) journalists has been shown not only to raise severe negative emotions such as worry, fear, and anger among those targeted (Chen et al., 2020) but also to lower readers’ perceived credibility of the (female) journalist (Searles et al., 2020). A common explanation is that user comments serve as heuristic cues that trigger certain routes of cognitive processing, allowing readers to quickly draw loose conclusions about the accompanying source or message (Sundar, 2008). In other words, if a comment indicates lower credibility of a female journalist, be it through rational arguments or through sexism, uninvolved readers are served a heuristic cue to likewise rate the author’s credibility lower.

In practice, gendered harassment is more often aimed at women than at men (Döring and Mohseni, 2020: p. 66). With studies showing that more men than women comment online (Ksiazek and Springer, 2018), it seems plausible that this pattern also holds within online journalism. Indeed, female journalists from five countries recently reported “particular harassment if they wrote about topics associated with men” (Chen et al., 2020: p. 884). Again, this points to a double drawback for women: female journalists are not only affected by gendered harassment more often than men in general but also seem to be punished for covering stereotypically masculine topics.

Computers as gender-neutral authors

Recently, automated journalism, in which computers are employed to automatically generate journalistic texts in a repetitive style, has attracted growing interest. Such texts mainly cover the stereotypically somewhat more masculine topics of sports, finance, or political forecasting as well as the stereotypically somewhat more feminine topics of entertainment and lifestyle (Graefe and Bohlken, 2020).

Several experiments have investigated how human versus computer authorship affects source and message credibility. The studies varied both the actual author and the byline, labeled as either written by a human journalist or generated by a computer, and have repeatedly yielded comparable scores of both source and message credibility (Graefe and Bohlken, 2020). However, a recent meta-analysis points out that “participants assigned higher (medium-sized credibility) ratings simply if they thought that they read a human-written article” (Graefe and Bohlken, 2020: p. 57). That is, while credibility is usually comparable when the actual source is unknown, journalists are rated as more credible than computers when the byline suggests a human author.

Transferred to the current study, computers have repeatedly been shown to trigger cognitive models sometimes referred to as the “machine heuristic” (Sundar, 2008: p. 83; see also Graefe and Bohlken, 2020). That is, the “constant need to critically assess information while consuming it” (Sundar, 2008: p. 73), which is pertinent to the information-rich Internet, is addressed cognitively through preconceptions about computers. In particular, computers have been considered “objective in [their] selection and free from ideological bias” (Sundar, 2008: p. 83). However, when, for example, “including value-laden words and personal opinion” (Tandoc et al., 2020: p. 558), computers signal “discrepancy from reader expectations” (ibid.) by violating journalistic ideals of objectivity. Ultimately, this may lead to lower source credibility for computer authors compared with their human counterparts (Graefe and Bohlken, 2020). Moreover, these findings suggest that computer authors trigger different models of cognitive processing than (sexist) gender stereotypes do for human authors.

Hypotheses

Previous research suggests that female journalists are worse off when it comes to the perceived credibility of themselves (hypothesis H1a) as well as of their work (H1b) (cf. Armstrong and McAdams, 2009; Embacher et al., 2018). We maintain these expectations despite a recent finding showing only limited support for them (Searles et al., 2020).

H1a: Female journalists are perceived as less credible than male journalists.

H1b: The work by female journalists is perceived as less credible than the work by male journalists.

Pertaining to gendered social roles, it is assumed that alignment between an author’s gender and the stereotypical association with a covered topic leads to higher credibility of both an author (H2a/b) and their work (H3a/b) (cf. Armstrong and McAdams, 2009; Prentice and Carranza, 2002; Searles et al., 2020). In line with this study’s pre-registration, we formulate separate hypotheses for each gender.

H2a(b): Female (male) journalists who write about a stereotypically masculine (feminine) topic are perceived as less credible than female (male) journalists who write about a stereotypically feminine (masculine) topic.

H3a(b): The work by female (male) journalists about a stereotypically masculine (feminine) topic is perceived as less credible than the work by female (male) journalists about a stereotypically feminine (masculine) topic.

In addition, a backlash effect suggests that counter-stereotypical behavior by women is punished more strongly than the corresponding behavior by men when it comes to both source (H2c) and message (H3c) credibility (cf. Searles et al., 2020; Wilhelm and Joeckel, 2019; Winkler et al., 2017).

H2c: Female journalists who write about a stereotypically masculine topic are perceived as less credible than male journalists who write about a stereotypically feminine topic.

H3c: The work by female journalists about a stereotypically masculine topic is perceived as less credible than the work by male journalists about a stereotypically feminine topic.

User comments accompanying news articles have been shown to moderate effects from both the source and the message (cf. Prochazka et al., 2018; Waddell, 2018). This has also been shown with respect to abusive comments (Searles et al., 2020). As such, sexist comments are expected to dampen credibility ratings for both men and women (H4a/b) as well as their work (H5a/b) when compared to the same gender’s respective non-sexist versions.

H4a(b): If a female (male) journalist’s article is accompanied by sexist comments, source credibility is lower than if the article is accompanied by comments not containing sexism.

H5a(b): If a female (male) journalist’s article is accompanied by sexist comments, message credibility is lower than if the article is accompanied by comments not containing sexism.

Again in line with the presumed backlash effect (cf. Searles et al., 2020; Wilhelm and Joeckel, 2019; Winkler et al., 2017), female journalists are expected to suffer more from such effects than men.

H4c: If a female journalist’s article is accompanied by sexist comments, source credibility is lower than if a male journalist’s article is accompanied by comments containing sexism.

H5c: If a female journalist’s article is accompanied by sexist comments, message credibility is lower than if a male journalist’s article is accompanied by comments containing sexism.

Finally, following a study’s suggestion on the decisive role of participants’ gender (Embacher et al., 2018), we ask how participants’ gender influences both source and message credibility. Moreover, following another study’s suggestion (Chen et al., 2020: p. 884), we ask about the interaction between female journalists’ coverage of counter-stereotypical topics and sexist comments.

RQ1: How does the reader’s gender affect (a) source and (b) message credibility?

RQ2: If a female (male) journalist’s article about a stereotypically masculine (feminine) topic is accompanied by sexist comments, how does this affect (a) source and (b) message credibility?

Method

An online questionnaire with a 3 (author) × 2 (topic) × 2 (sexist comments) experimental design was conducted in late June and early July 2020. The final number of experimental groups was ten rather than twelve, however, since the computer-author versions were presented with non-sexist comments only. After initial questions about age and media use, participants were presented with the stimulus: a textual news article about either football or movies (topic) with a byline and an author picture of either a man, a woman, or a gender-neutral computer (author), followed by three anonymous user comments (all either sexist or not, except for the computer author, which was presented with non-sexist comments only). Participants were instructed to read the stimulus thoroughly before answering the remaining measures and manipulation checks. All materials, data, and analyses have been made openly available at https://osf.io/sz7g9/. Hypotheses and the full study design had been pre-registered¹ at https://osf.io/b9uda.
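The reduction from twelve to ten groups can be checked by enumerating the design. The following Python sketch is illustrative only: the factor labels are hypothetical stand-ins, not the variable names used in the study's OSF materials.

```python
from itertools import product

# Hypothetical labels for the three manipulated factors.
AUTHORS = ("female", "male", "computer")
TOPICS = ("feminine", "masculine")  # movies vs. football
COMMENTS = ("sexist", "non-sexist")

def realized_cells():
    """Cells of the 3 x 2 x 2 design that were actually fielded.

    Computer authors were shown with non-sexist comments only,
    so the two computer x sexist cells are skipped.
    """
    return [
        (author, topic, comment)
        for author, topic, comment in product(AUTHORS, TOPICS, COMMENTS)
        if not (author == "computer" and comment == "sexist")
    ]

cells = realized_cells()
print(len(AUTHORS) * len(TOPICS) * len(COMMENTS), len(cells))  # 12 10
```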

Participants

Participants were recruited through the noncommercial online-access convenience panel “SoSci Panel” (Leiner, 2016). Comparable prior research on gender and credibility indicates small-to-medium effects (Searles et al., 2020; Wilhelm and Joeckel, 2019; Winkler et al., 2017), whereas prior research on the credibility of automated journalism indicates medium effects (Graefe and Bohlken, 2020). An a-priori power analysis (see pre-registration) indicated a required sample of at least 400 participants. Accordingly, invitations were sent to 2000 panelists from Austria, Germany, and Switzerland in June 2020. A total of 449 people completed the questionnaire. Cases with an overly fast click-through (Leiner, 2019; n = 8), with self-reported lack of focus (n = 18), or with less than 20 seconds spent on the stimulus page (n = 6) were excluded. Ultimately, 417 participants remained (response rate of 21%), spending M = 10.5 (SD = 3.90) minutes on the questionnaire, of which M = 2.4 (SD = 1.4) minutes were spent on the stimulus (see Supplemental Materials S17 for a breakdown by experimental group).
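The exclusion steps and the reported response rate can be reproduced with simple arithmetic. This sketch assumes, as the reported totals imply, that the three exclusion groups do not overlap and that the response rate is computed as retained participants divided by invited panelists; the constant names are hypothetical.

```python
# Figures as reported above; constant names are hypothetical.
INVITED = 2000
COMPLETED = 449
EXCLUSIONS = {
    "too fast click-through": 8,
    "self-reported lack of focus": 18,
    "under 20 seconds on stimulus page": 6,
}

def retained(completed, exclusions):
    """Remaining cases, assuming the exclusion groups do not overlap."""
    return completed - sum(exclusions.values())

n_final = retained(COMPLETED, EXCLUSIONS)
response_rate = n_final / INVITED
print(n_final, f"{response_rate:.0%}")  # 417 21%
```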

A total of 219 participants (53%) were female (195 male). A chi-square test did not show significant differences in gender distribution across experimental groups (χ² = 6.02). In line with other online convenience samples (Leiner, 2016), mean age was M = 47.1 years (SD = 15.2), again with no differences across experimental groups (F(9, 407) = 0.96). The highest level of education was skewed toward higher education: 280 participants (67%) held a university degree, and another 69 (17%) were eligible for university (“Abitur”). This three-tier structure (university degree, university eligibility, other) did not differ significantly across experimental groups (χ² = 17.39). A sociodemographic breakdown of participants by experimental group can be found in the Supplemental Materials (S1). With 78% of participants using online media at least several times a week (S2), reported media use is comparable to the Reuters Digital News Report for Germany (Newman et al., 2020).
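The randomization checks above compare category counts across the ten experimental groups with Pearson's chi-square test. The following standard-library sketch computes the test statistic and its degrees of freedom for any contingency table; it is not the authors' analysis code, and the example counts are made up. A p-value additionally requires the chi-square distribution, for instance via `scipy.stats.chi2_contingency`, which by default applies Yates' continuity correction when there is one degree of freedom.

```python
def pearson_chi_square(table):
    """Pearson's chi-square statistic and degrees of freedom for an r x c table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / grand_total
            chi2 += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return chi2, dof

# Hypothetical 2 x 2 table (rows: e.g., female/male; columns: two groups).
statistic, dof = pearson_chi_square([[10, 20], [20, 10]])
print(round(statistic, 3), dof)  # 6.667 1
```

For the gender check across ten groups, the table would have two rows and ten columns, giving nine degrees of freedom.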

Stimulus

To identify stereotypically feminine and masculine topics, a pretest was conducted. In an online questionnaire distributed via snowball sampling, 28 pretest participants rated 10 headlines on a 7-point scale as to whether each headline represented a feminine or a masculine topic. This pretest thus supports the external validity of the topic manipulation and provides estimates of how stereotypically feminine or masculine each stimulus is perceived to be. Headlines were originally taken from five German national broadsheets. The two headlines most strongly representing a feminine and a masculine topic, respectively, were chosen along with their articles for the actual experiment (S3 and S4). As the stereotypically feminine topic, the article “Weiber G’schichten aus aller Welt” (“Women’s Stories From All Over The World”), dealing with female movie directors, was used. As the stereotypically masculine topic, the article “Was hat der Fußball falsch gemacht?” (“What Did Football Do Wrong?”) was used with a slightly rewritten headline that also included a gender cue (“Die Männer spielen wieder,” “Men at Play, Again”). Both articles were originally written in German by a male journalist and were shortened to five paragraphs each (stimulus translations: S18 and S19).

Each stimulus was assigned a byline of an alleged author. Based on the most popular first and last names among adults in Germany, omitting names with religious connotations, the female journalist was named “Sabine Schmied” and the male journalist “Peter Schmied.” An online search did not reveal any known journalists with these names. Each author was presented with an image taken from thispersondoesnotexist.com, a website showcasing artificially generated images of human faces. Both faces looked similar and appeared to be of similar age (S3 and S5). Race was kept constant across faces. A third (control-group) version of the byline read “This text has been automatically generated by a computer” and depicted a gender-neutral placeholder (S11).

User comments were displayed anonymously below the article; they were fictitious yet inspired by real-world commentary. In contrast to a recent study whose authors argued that their “single-shot exposure” to one comment might not have been enough (Searles et al., 2020: p. 958), three comments homogeneous in critical tone and sentiment were presented. The non-sexist version neither expressed feedback in a sexist way nor addressed the author directly, whereas the sexist comments were extended with ambivalent sexist expressions (Chen et al., 2020) and grammatically gendered insults (S3 and S7). In conjunction with a computer author, only the non-sexist versions were used.

Manipulation checks

To keep additional measures as brief as possible, three manipulation checks were implemented. First, participants were asked who the article’s author was. Considerable shares of respondents, 21 (female condition), 26 (computer), and 27 (male) percent, reported not remembering, whereas 79 (female), 71 (computer), and 67 (male) percent correctly identified the author. As the requirements for a chi-square test were not met, Fisher’s exact test was used instead, which was not significant. Second, participants were asked to recall the article’s topic, which led to correct answers in all (football) and 98% (movies) of cases. Again, Fisher’s exact test confirmed independence from the experimental group. Third, participants were asked to rate the user comments as sexist on a total of seven pairs of adjectives, following suggestions by the Council of Europe on the definition of sexism². In all seven instances, comments in the sexist condition were rated higher than in the non-sexist condition (for t tests, see S13).

Measures

Source credibility is usually considered a two-dimensional construct of perceived expertise and trustworthiness (Jahng and Littau, 2016). For expertise, five pairs of opposing adjectives were employed as suggested by Jahng and Littau (2016): inexperienced/experienced, unskilled/skilled, inactive/active, unqualified/qualified, and incompetent/competent. For trustworthiness, four additional pairs from Wölker and Powell (2018) were added: fair/unfair, inaccurate/accurate, does not tell the whole story/tells the whole story, and cannot be trusted/can be trusted. Finally, the pair unlikable/likable was added to account for likability (Rudman et al., 2012). The full list of 10 pairs of opposing adjectives was presented in German on a semantic differential ranging from −2 to +2. The scale’s reliability was sufficient (Cronbach’s α = 0.89), and dropping any single item would not have improved it substantially. Bartlett’s test of sphericity (χ² = 2062.6; p < .001) and the Kaiser-Meyer-Olkin index (KMO = 0.91) indicated high levels of shared variance and thus suitability for dimensionality reduction. As a scree plot also suggested a single component, a principal component analysis was employed to reduce source credibility to one standardized index (i.e., M = 0.0, SD = 1.00).³
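Cronbach’s α for a k-item scale is α = k/(k − 1) · (1 − Σ s²ᵢ / s²ₜ), where s²ᵢ are the item variances and s²ₜ is the variance of the summed scale. The sketch below implements this formula and the “alpha if item deleted” check mentioned above using only the Python standard library. The data layout (one list of ratings per item) is an assumption for illustration, and dedicated statistics packages may treat missing values differently.

```python
from statistics import variance

def cronbach_alpha(items):
    """Cronbach's alpha for k items; items holds one list of n ratings per item."""
    k = len(items)
    item_variance_sum = sum(variance(item) for item in items)
    # Total scale score per respondent (sum across items).
    totals = [sum(ratings) for ratings in zip(*items)]
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))

def alpha_if_item_deleted(items):
    """Alpha recomputed with each item left out in turn (needs at least 3 items)."""
    return [cronbach_alpha(items[:i] + items[i + 1:]) for i in range(len(items))]

# Hypothetical toy data: three identical items yield alpha = 1 (up to rounding).
identical = [[1, 2, 3, 4]] * 3
print(round(cronbach_alpha(identical), 6))  # 1.0
```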

Message credibility has been considered a unidimensional construct. Accordingly, five pairs of adjectives from Wölker and Powell (2018) and Roberts (2010), unbelievable/believable, inaccurate/accurate, not trustworthy/trustworthy, biased/unbiased, and incomplete/complete, were translated into German and presented on a semantic differential from −2 to +2. Again, reliability was sufficient (Cronbach’s α = 0.84), and Bartlett’s test of sphericity (χ² = 866.5; p < .001) as well as the Kaiser-Meyer-Olkin index (KMO = 0.85) yielded satisfactory results. Like source credibility, message credibility was reduced to one standardized component based on a principal component analysis.
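Both credibility indices were obtained by reducing the items to their first principal component. As a rough sketch of that step (not the authors’ code, and assuming a single unrotated component), the function below z-standardizes the items, finds the leading eigenvector of their correlation matrix by power iteration, weights the standardized items by it, and standardizes the result to M = 0 and SD = 1. Because the scores are re-standardized at the end, the scaling of the weights does not matter; since the sign of an eigenvector is arbitrary, it is oriented so that higher ratings yield a higher index. The toy ratings are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev

def zscores(values):
    """Standardize a list to mean 0 and sample SD 1."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]

def first_component_index(items, iterations=500):
    """Return (standardized first-component scores, unit-length item weights).

    items: k lists of equal length n (one list per item, one entry per respondent).
    """
    k, n = len(items), len(items[0])
    z = [zscores(item) for item in items]
    # Correlation matrix of the items (z-scores use the n - 1 denominator).
    corr = [[sum(a * b for a, b in zip(z[i], z[j])) / (n - 1) for j in range(k)]
            for i in range(k)]
    # Power iteration converges to the eigenvector of the largest eigenvalue.
    weights = [1.0] * k
    for _ in range(iterations):
        projected = [sum(corr[i][j] * weights[j] for j in range(k)) for i in range(k)]
        norm = sqrt(sum(x * x for x in projected))
        weights = [x / norm for x in projected]
    # Eigenvectors are sign-indeterminate; orient so higher ratings mean a higher index.
    if sum(weights) < 0:
        weights = [-w for w in weights]
    scores = [sum(weights[i] * z[i][r] for i in range(k)) for r in range(n)]
    return zscores(scores), weights

# Hypothetical ratings of six respondents on four items (-2 to +2).
ratings = [
    [-2, -1, 0, 1, 2, 2],
    [-1, -1, 0, 1, 1, 2],
    [-2, 0, 0, 0, 2, 1],
    [-1, -2, 1, 1, 2, 2],
]
index, weights = first_component_index(ratings)
```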

For easier interpretation and readability, a new topic-alignment variable was created as a combination of topic (football and movies) and author (female, male, and computer). It was coded as stereotypical (female author and feminine topic; male author and masculine topic), counter-stereotypical (female author and masculine topic; male author and feminine topic), or neutral (computer as author). This step was also necessary so as not to obscure effects of stereotypical alignment.
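The recoding rule can be written as a small lookup. In this minimal sketch, the labels “feminine” (movies) and “masculine” (football) and the function name are hypothetical; only the mapping itself follows the description above.

```python
def topic_alignment(author, topic):
    """Recode author x topic into stereotypical, counter-stereotypical, or neutral.

    author: "female", "male", or "computer"; topic: "feminine" (movies) or
    "masculine" (football). Labels are illustrative, not the study's variable names.
    """
    if author == "computer":
        return "neutral"
    stereotypical_topic = {"female": "feminine", "male": "masculine"}[author]
    return "stereotypical" if topic == stereotypical_topic else "counter-stereotypical"

for author in ("female", "male", "computer"):
    for topic in ("feminine", "masculine"):
        print(author, topic, topic_alignment(author, topic))
```

An unknown author label raises a `KeyError`, which surfaces coding errors early rather than silently assigning a category.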

The questionnaire included three control constructs. First, we measured personal identification with one’s gender, which has previously been shown to shape individual views on gender (Becker and Wagner, 2009). Following Becker and Wagner (2009), gender identification was measured with four statements per gender⁴, for each of which participants of the respective gender expressed their agreement on a 5-point scale from 1 (“strongly disagree”) to 5 (“strongly agree”). Reliability was high for both men (Cronbach’s α = 0.82) and women (α = 0.88); mean indices yielded a slightly below-center distribution for men (M = 2.8; SD = 1.29) and a higher distribution for women (M = 3.7; SD = 1.17). Second, sexist attitudes have also been shown to affect attitudes toward gender (Becker and Wagner, 2009; Mudrick et al., 2017). Sexist attitudes were operationalized through the Ambivalent Sexism Inventories, measuring a combination of hostile and benevolent sexism against women (Glick and Fiske, 1997) and men (Glick and Fiske, 1999). Both inventories were translated into German and shortened to six statements each.⁵ Participants again rated their agreement on 5-point scales. Reliability was satisfactory for ambivalent sexism against women (Cronbach’s α = 0.71) and slightly less so for ambivalent sexism against men (α = 0.60); mean indices (as suggested by the inventories) yielded above-center distributions for ambivalent sexism against both women (M = 3.7; SD = 0.77) and men (M = 3.8; SD = 0.62). Both scores were highly correlated (Pearson’s r = 0.63; p < .001). Third, interest and involvement in the presented stereotypical topic were measured on 5-point scales ranging from not at all interested (personally affected) to most interested (personally affected). Interest (M = 2.3; SD = 1.41) and involvement (M = 2.2; SD = 1.44) in football were rather low, whereas interest (M = 3.0; SD = 1.18) and involvement (M = 3.3; SD = 1.21) in movies were roughly normally distributed.

Results

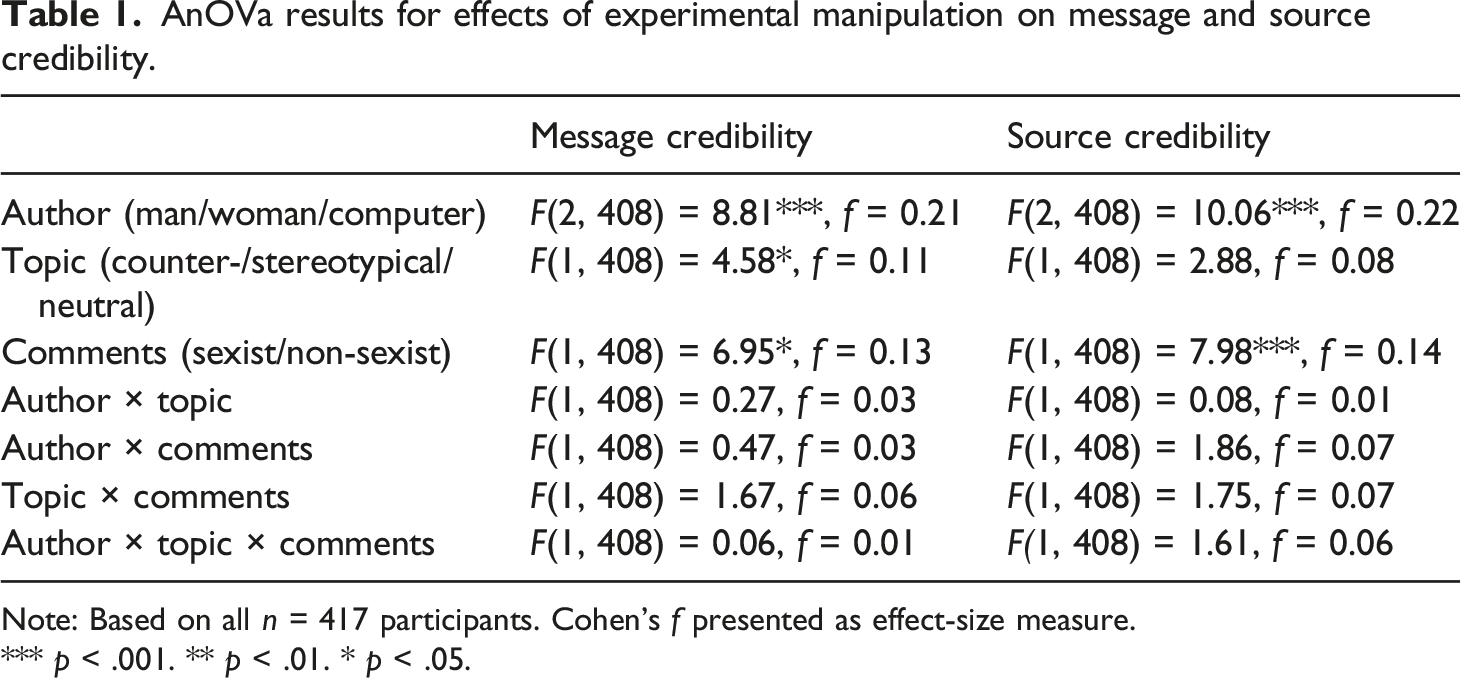

Table 1. ANOVA results for effects of the experimental manipulation on message and source credibility.

Note: Based on all n = 417 participants. Cohen’s f is presented as the effect-size measure.

*** p < .001. ** p < .01. * p < .05.
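The ANOVA results above report Cohen’s f as the effect-size measure without stating how it was derived. A common route, assumed here, is the conversion from (partial) eta squared, f = √(η² / (1 − η²)), read against Cohen’s conventional benchmarks of 0.10 (small), 0.25 (medium), and 0.40 (large). The Python sketch below illustrates this conversion and its inverse; the function names and the example value are hypothetical.

```python
import math

def cohens_f(eta_squared: float) -> float:
    """Convert (partial) eta squared to Cohen's f = sqrt(eta^2 / (1 - eta^2))."""
    if not 0.0 <= eta_squared < 1.0:
        raise ValueError("eta squared must lie in [0, 1)")
    return math.sqrt(eta_squared / (1.0 - eta_squared))

def eta_squared_from_f(f: float) -> float:
    """Inverse conversion: eta^2 = f^2 / (1 + f^2)."""
    return f * f / (1.0 + f * f)

def benchmark(f: float) -> str:
    """Label f using Cohen's conventional thresholds (0.10, 0.25, 0.40)."""
    if f < 0.10:
        return "below small"
    if f < 0.25:
        return "small"
    if f < 0.40:
        return "medium"
    return "large"

# Hypothetical example: an effect explaining 1% of the variance
f = cohens_f(0.01)
print(round(f, 3), benchmark(f))  # 0.101 small
```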

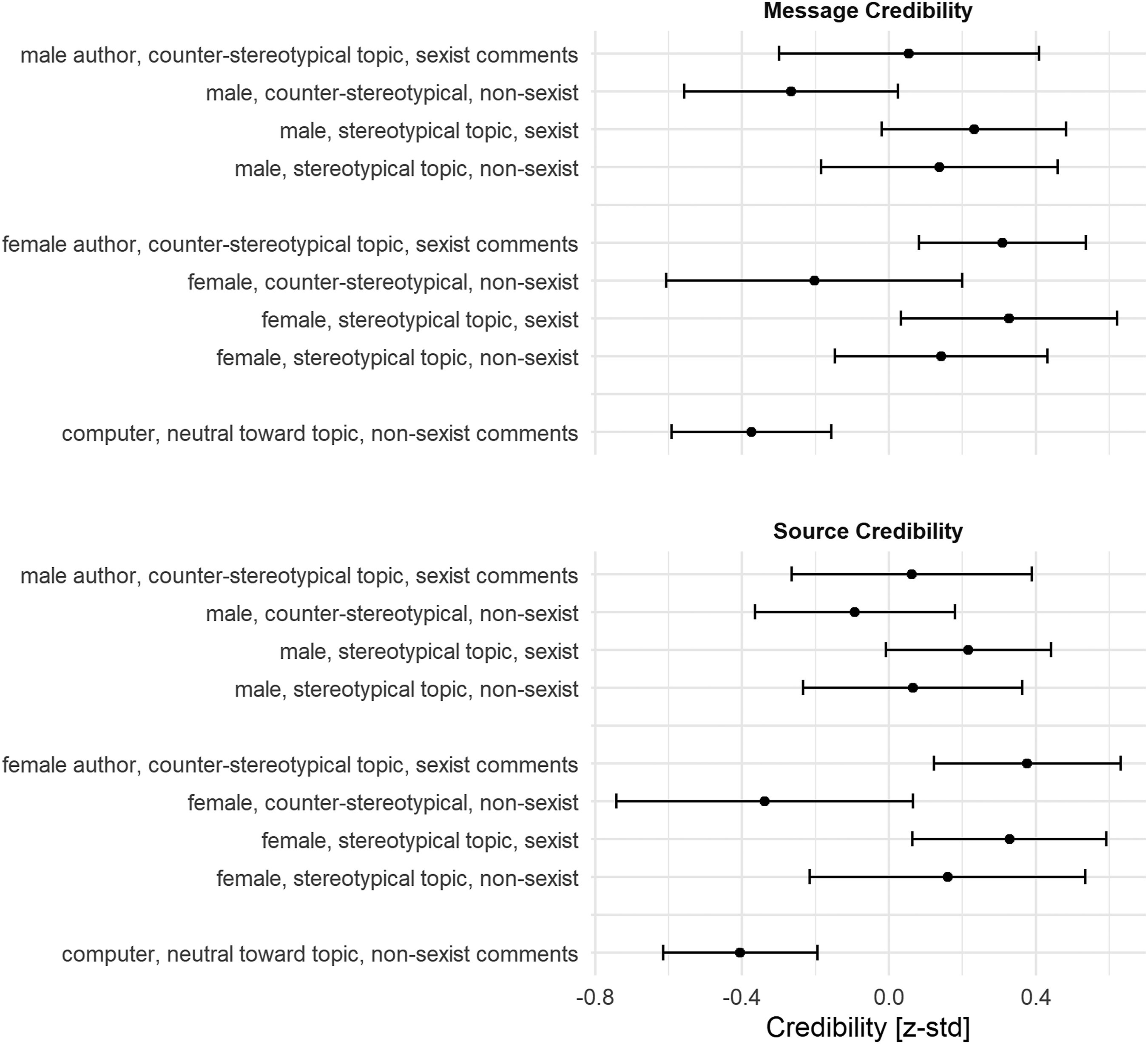

Figure 1. Message and source credibility across experimental groups. Note: Points depict means with 95% confidence intervals. Message and source credibility scores are based on principal component analyses.

Gendered social roles suggest that alignment between author gender and the covered topic increases both source (H2a and H2b) and message (H3a and H3b) credibility. While author and topic did not interact, the topic did affect message credibility (Table 1). Tukey’s HSD post-hoc test, however, did not reveal significant pairwise differences, providing only minor indications of relevant contrasts between stereotypical and counter-stereotypical coverage. Results also show that covering the counter-stereotypical topic alongside non-sexist commentary led to the lowest credibility scores for each author gender (Figure 1). The hypotheses that counter-stereotypical work by either female or male journalists is perceived as less credible (both source and message) than their respective stereotypically aligned work thus find some descriptive support, particularly when accompanied by non-sexist commentary, but must be rejected for lack of inferential support.
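Tukey’s HSD compares every pair of group means against a single critical difference derived from the studentized range distribution and the ANOVA error term. The dependency-free Python sketch below implements the Tukey-Kramer variant (which also handles unequal group sizes) under one stated assumption: the critical value q must be supplied by the caller, for example from a studentized range table or, in recent SciPy versions, `scipy.stats.studentized_range.ppf`. Group names and scores are hypothetical toy values, and this is not presented as the authors’ analysis code.

```python
from itertools import combinations
from math import sqrt
from statistics import fmean

def tukey_kramer(groups: dict[str, list[float]], q_crit: float) -> list[tuple]:
    """Pairwise Tukey-Kramer comparisons.

    q_crit is the critical studentized range value for the chosen alpha,
    k = len(groups) and df = N - k; it is supplied by the caller, not computed here.
    """
    k = len(groups)
    n_total = sum(len(v) for v in groups.values())
    means = {name: fmean(vals) for name, vals in groups.items()}
    # Pooled within-group mean square (the ANOVA error term)
    ss_within = sum((x - means[name]) ** 2 for name, vals in groups.items() for x in vals)
    ms_within = ss_within / (n_total - k)
    results = []
    for a, b in combinations(groups, 2):
        diff = means[a] - means[b]
        # Honestly significant difference, adjusted for unequal group sizes
        hsd = q_crit * sqrt(ms_within / 2 * (1 / len(groups[a]) + 1 / len(groups[b])))
        results.append((a, b, diff, hsd, abs(diff) > hsd))
    return results

# Hypothetical toy scores for two coverage conditions
scores = {
    "stereotypical": [1, 2, 3],
    "counter-stereotypical": [4, 5, 6],
}
# Table value of q for alpha = .05, k = 2 groups, df = 4
for a, b, diff, hsd, significant in tukey_kramer(scores, q_crit=3.93):
    print(a, b, round(diff, 2), round(hsd, 2), significant)
# stereotypical counter-stereotypical -3.0 2.27 True
```

With more groups, the same q value controls the familywise error rate across all pairwise comparisons, which is why a main effect in the ANOVA can coexist with no significant pairwise contrast.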

Hypotheses H2c (source credibility) and H3c (message credibility) predicted a backlash effect of the covered topic against women. A non-significant interaction between author and topic (Table 1; see also Figure 1) indicates that this was not the case, leading to the rejection of these two hypotheses.

Sexist comments were expected to lower both source (H4a and H4b) and message (H5a and H5b) credibility, particularly for women in the form of a backlash-like double penalty (H4c and H5c). The main effects of comments are significant for both types of credibility (Table 1), with Tukey’s HSD post-hoc test indicating significant differences in both cases. Apart from the difference between human and computer authors, this is also the strongest effect. Contrary to our assumptions, however, sexist comments increased source and message credibility compared with the same article by the same author accompanied by non-sexist comments (Figure 1). This holds directionally for all settings but is significant in only one case: when a female journalist counter-stereotypically wrote about a masculine topic. Hypotheses H4a, H4b, H5a, and H5b are therefore rejected. Notably, when a female journalist’s article was accompanied by sexist comments, both source and message credibility were higher than when a male journalist’s article was accompanied by sexist comments (Figure 1). This not only leads to the rejection of H4c and H5c but also suggests reactance among study participants, particularly toward the presented sexism against women.

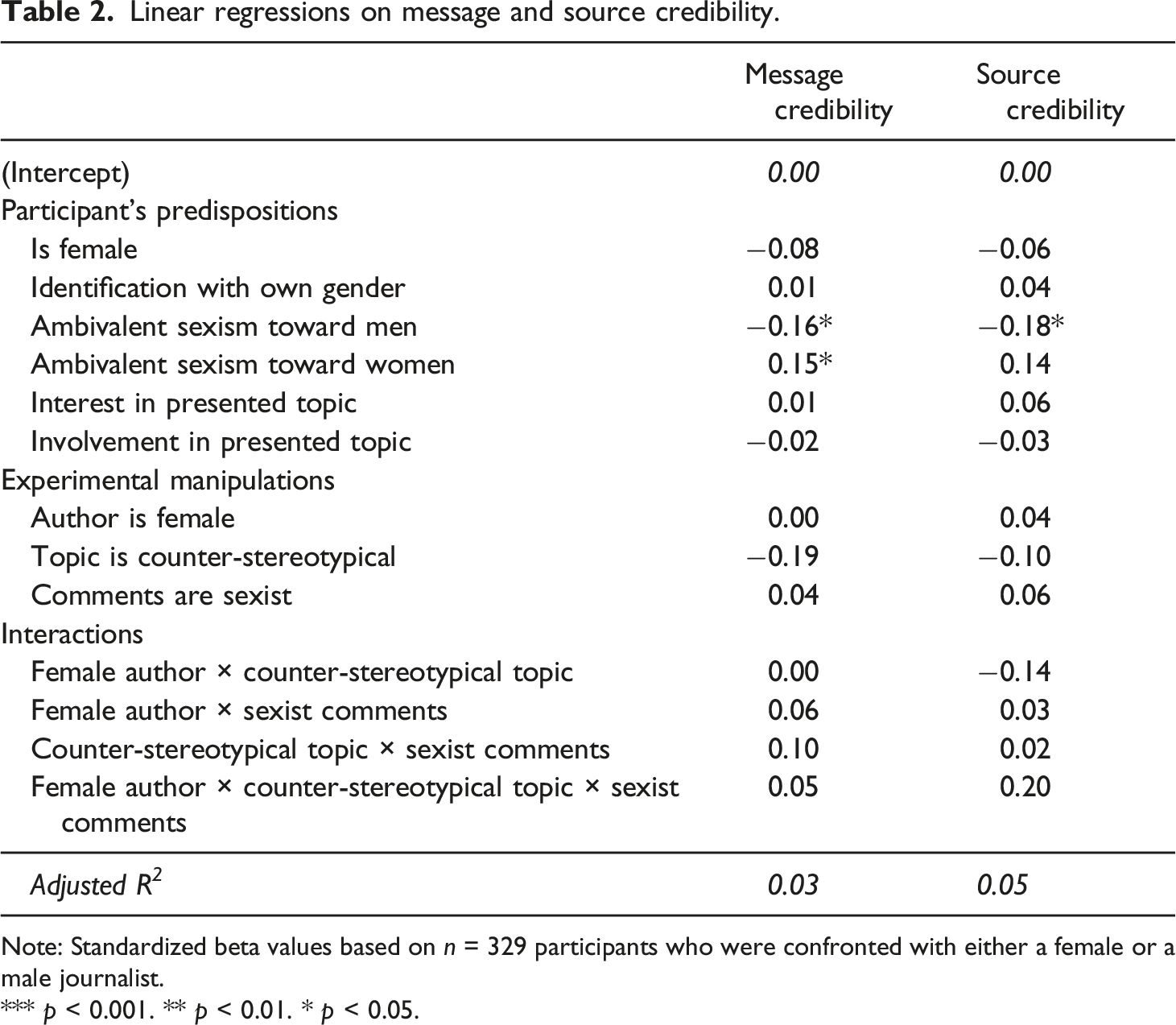

Linear regressions on message and source credibility.

Note: Standardized beta coefficients based on the n = 329 participants who were presented with either a female or a male journalist.

*** p < .001. ** p < .01. * p < .05.

With regard to RQ2, source credibility in particular seemingly profited from the combination of a female journalist, a counter-stereotypical (masculine) topic, and sexist comments, yet this effect was not significant. Some of the small amount of explained variance can, however, be attributed to participants’ ambivalent sexism: both source and message credibility were negatively associated with ambivalent sexism toward men and positively associated with ambivalent sexism toward women (S15 and S16). These findings also hold in separate moderation analyses isolating each moderator’s effect (S21). In other words, the more sexism participants showed toward men, the lower they rated credibility, whereas more sexism toward women went along with higher credibility ratings, particularly for message credibility.
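The moderation analyses referenced above (S21) are not detailed in this section. A common way to specify such a model, assumed here purely for illustration, is an ordinary least squares regression with a product term, credibility = b0 + b1·author + b2·sexism + b3·(author × sexism), where b3 captures moderation. The dependency-free Python sketch below fits this model via the normal equations. The variable names (a hypothetical 0/1 female-author dummy and a mean-centered ambivalent-sexism score) and the toy data are assumptions, and the sketch returns unstandardized coefficients rather than the standardized betas reported in the table.

```python
def solve_ols(X: list[list[float]], y: list[float]) -> list[float]:
    """OLS via the normal equations (X'X) b = X'y, solved by Gauss-Jordan elimination."""
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    # Augmented matrix [X'X | X'y]
    aug = [xtx[i] + [xty[i]] for i in range(p)]
    for col in range(p):
        # Partial pivoting for numerical stability
        pivot = max(range(col, p), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("design matrix is (near-)singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Eliminate this column from every other row
        for r in range(p):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * c for a, c in zip(aug[r], aug[col])]
    return [aug[i][p] / aug[i][i] for i in range(p)]

def fit_moderation(author: list[int], sexism: list[float],
                   credibility: list[float]) -> dict[str, float]:
    """Fit credibility ~ b0 + b1*author + b2*sexism_c + b3*author*sexism_c."""
    # Mean-center the moderator so b1 is the author effect at average sexism
    s_bar = sum(sexism) / len(sexism)
    centered = [s - s_bar for s in sexism]
    X = [[1.0, a, s, a * s] for a, s in zip(author, centered)]
    b = solve_ols(X, credibility)
    return dict(zip(["intercept", "author", "sexism", "author_x_sexism"], b))

# Hypothetical, noise-free toy data generated from known coefficients
author = [0] * 5 + [1] * 5  # 0 = male, 1 = female (hypothetical coding)
sexism = [1, 2, 3, 4, 5] * 2
credibility = [3 + 0.5 * a - 0.2 * (s - 3) + 0.3 * a * (s - 3)
               for a, s in zip(author, sexism)]
print({k: round(v, 3) for k, v in fit_moderation(author, sexism, credibility).items()})
# {'intercept': 3.0, 'author': 0.5, 'sexism': -0.2, 'author_x_sexism': 0.3}
```

With real survey data, a statistics package (for instance, the statsmodels formula interface with a model such as `credibility ~ female * sexism`) would additionally provide standard errors and p-values for each coefficient.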

Discussion

Similar to a comparable U.S. study (Searles et al., 2020), this German-based study does not indicate that a female journalist (source) or her work (message) is generally perceived less favorably than a male colleague or his work, respectively. While some variation in ascribed credibility is visible across the experimental conditions, the findings by no means indicate general differences in gender perception (Figure 1). This is very much in line with a recent Danish study in which the gender of a quoted expert was varied (Greve-Poulsen et al., 2021), and it echoes recent U.S. findings in that being a female journalist today seemingly does not imply diminished credibility. The accumulation of such null findings across studies also makes it less likely that they merely reflect beta (type II) errors. Although indeed “surprising given an online environment marked by harassment of women” (Searles et al., 2020: p. 958), this may also be framed as a positive societal development, in that gendered social roles ascribed to female journalists in Germany might be less narrow-minded than they presumably were some years ago. This interpretation must be qualified, however, as the current study’s sample is biased toward higher education.

Nevertheless, author attribution did yield significant differences (Table 1): human authors (either female or male) were rated as more credible than a computer author in terms of both source and message credibility. In line with studies on automated journalism, this indicates that a byline stating that a computer automatically generated an article decreases the article’s credibility. While this replication speaks to the current study’s external validity, it also suggests that, in contrast to human authors, computer authors might trigger a different mode of cognitive processing, loosely related to a “machine heuristic” (Sundar, 2008: p. 83). Moreover, the findings echo concerns that newsrooms implementing automated journalism might conclude they are better off falsely bylining computer-generated articles with the names of human journalists (Graefe and Bohlken, 2020). Such effects are rather small, though, as are all effects in this study.

Arguably, then, taking on a counter-stereotypical topic yielded slightly lower source and message credibility for both men and women when accompanied by non-sexist comments, although this relationship does not fully hold up to statistical testing. So while these observations might be the result of chance, they are in line with the aforementioned U.S. study (Searles et al., 2020, Appendix Study 2). Given both studies’ experimental designs and findings, it appears that a minor negative effect of covering a counter-stereotypical topic on credibility is overridden by a stronger effect of abusive comments on credibility.

Sexist user comments showed the strongest effects on source credibility, albeit positive ones: sexist comments led to increased credibility. At least three explanations seem plausible. First, given that the non-sexist comments were also critical in tone, the credibility baseline against which sexist comments were compared might already have been lower than it would have been with neutral or positive comments. Adding sexism, then, might have been the straw that broke the camel’s back, causing reactance among study participants. Second, despite a manipulation check on perceived sexism, it cannot be ruled out that the sexist comments in the stimuli sounded artificial and thereby caused reactance. Third, prior research suggests that user comments act as heuristic cues for evaluating credibility (Sundar, 2008). Given the ongoing public debate around everyday sexism, sexist comments might have triggered more elaborate processing, on the assumption that if sexism is blunt and obvious, there might be something to the author. This interpretation finds additional support in that participants spent more time on the stimulus when presented with sexist (M = 156 seconds; SD = 89.9) than with non-sexist (M = 135 seconds; SD = 82.4) comments (S17). A loosely related effect was reported by Ziegele et al. (2018, p. 648), who found that “an extremely hostile prime stimulus affected recipients’ prosocial behavior in an opposite way.” Glick and Fiske (1997, p. 131) argued that encounters with hostile and benevolent forms of sexism potentially inform different attitudes for different people. Accordingly, encounters with hostile sexism might act as galvanizing moments for a more thorough elaboration, while benevolent forms of sexism might, for some, go unnoticed.
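To put the reported difference in viewing time into perspective, it can be expressed as a standardized mean difference. The Python sketch below computes Cohen’s d from the reported means and standard deviations. Because group sizes are not reported in this section, the function assumes equal group sizes unless n1 and n2 are given, so the resulting value (roughly a quarter of a standard deviation) is an illustrative approximation, not a result reported in the study or its supplement.

```python
from math import sqrt
from typing import Optional

def cohens_d(m1: float, sd1: float, m2: float, sd2: float,
             n1: Optional[int] = None, n2: Optional[int] = None) -> float:
    """Standardized mean difference (m1 - m2) / pooled SD."""
    if n1 is None or n2 is None:
        # Assumption: equal group sizes, so the pooled SD is the root mean square of both SDs
        pooled_sd = sqrt((sd1 ** 2 + sd2 ** 2) / 2)
    else:
        # Pooled SD weighted by each group's degrees of freedom
        pooled_sd = sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2))
    return (m1 - m2) / pooled_sd

# Viewing times reported above (seconds): sexist vs. non-sexist comments
d = cohens_d(156, 89.9, 135, 82.4)
print(round(d, 2))  # 0.24
```

If the actual group sizes from S17 were plugged in as n1 and n2, the estimate would change only slightly as long as the groups are of similar size.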

The inverted effects of sexist comments could also suggest that the corrective function of sexist user comments is more likely the combined result of the comments themselves and study participants’ attitudes. Similar conclusions have been reached in research on popularity cues (PCs). There, a review of 61 studies on PCs such as likes and shares concluded that “the effectiveness of PCs depends on the general traits or situational interests and characteristics of the user” (Haim et al., 2018: p. 204). This echoes the observed effects of participants’ ambivalent sexism, whereby participants who showed more ambivalent sexism toward women rated (message) credibility higher. This holds even when ambivalent sexism is broken down into benevolent and hostile sexism (S15 and S16). In contrast, an increase in (hostile) sexism toward men coincided with a decline in credibility. Taken together, today’s heightened awareness of sexism, particularly in such a highly educated sample, may lead participants to take sexist comments as cues to engage more elaborately with the journalist and their work.

Such an interpretation also finds support in research on the bystander effect, according to which one’s tendency to rally to a bullied person’s defense varies with how public a situation is. Obermaier et al. (2016) showed that intentions to intervene in online cyberbullying situations were higher when feelings of responsibility were elevated, which was primarily the case in situations with small numbers of observing bystanders, as depicted through varying PCs. In this respect, both the one-comment stimulus used by Searles et al. (2020) and this study’s three-comment stimulus resembled situations in which, according to previous findings on the bystander effect, participants are more likely to intervene.

These interpretations rest on loose empirical grounds, and heightened awareness of sexism remains speculative at this point. Future research could thus integrate content traits with user characteristics on gendered social roles and sexism in online journalism. Subsequent research might also consider different types of control-group bylines, as a gender-neutral author or no byline at all seem just as suitable for comparison as the computer byline used in this study. We assumed, however, that a gender-neutral author might evoke different stereotypes about queer journalists, while no byline would make the source credibility measures confusing for participants. Other limitations of the current study are its biased sample of highly educated individuals and the lack of a manipulation check for whether the stimulus article was perceived as stereotypical. The similarity of the findings to those of Searles et al. (2020), however, speaks to both studies’ validity. It also makes a case for pre-registration to encourage the publication of null findings, as these add up to an increasingly coherent picture: in this case, about the harmonization of general gender perception in online journalism, about a slight backlash in perceived credibility when taking on counter-stereotypical gendered social roles, and about the comparably strong influence of user comments.

Supplemental Material

sj-pdf-1-jou-10.1177_14648849211063994 – Supplemental material for Stereotypes and sexism? Effects of gender, topic, and user comments on journalists’ credibility

Supplemental material, sj-pdf-1-jou-10.1177_14648849211063994 for Stereotypes and sexism? Effects of gender, topic, and user comments on journalists’ credibility by Mario Haim and Kim Maurus in Journalism

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.


Notes

Author Biographies

References
