Abstract

Online comments and contributions from users are not always constructive or rational. This also applies to content that is directed at journalists or published on journalistic platforms. So-called ‘dark participation’ in online communication is a challenge that journalists have to face because it lowers users’ perceived credibility of media brands and hinders a deliberative discourse in comment sections. This study examines how journalists perceive themselves in relation to dark participation, what measures they take against it, and how they assess the efficacy of these measures. Based on in-depth interviews (N = 25), we find that journalists overall considered themselves to be effective in handling dark participation. The perceived efficacy differed according to the degree of engagement with users. Journalists who interacted very much or very little with users perceived the efficacy of their interventions to be highest, whilst those with medium levels of interaction rated their efficacy lower. Furthermore, the perceived amount of dark participation also affected the perceived efficacy.

The possibilities to contribute to the public sphere are dramatically increased by the interactive potential of the Internet. This also applies to user participation in journalism. Comment sections allow users to actively discuss news articles or complement discourses (Ziegele, 2016) and are intended to be channels for constructive audience feedback (Singer et al., 2011) as well as a forum for deliberative discussions (Braun and Gillespie, 2011). However, they also enable ‘dark participation’ (Quandt, 2018), such as attacks on persons or groups, ‘trolling’, strategic ‘piggy-backing’ on journalistic reputation, and attempts to manipulate the audience. For journalistic organizations, these developments have severe consequences, as dark participation impairs the discussion quality and thereby potentially leads to reduced user participation (Stroud et al., 2016; Ziegele, 2016) and negative perceptions of the media brand (e.g. Prochazka et al., 2018).

On a broader level, the active involvement of users in the news making processes and journalistic routines, known as participatory journalism, affects fundamental aspects of journalism (Graham and Wright, 2015; Singer, 2015). For journalism as well as journalism research, the rise of dark participation and the increasing relevance of user participation more generally pose new questions with regard to journalistic roles and practices.

Journalistic roles describe the way journalists do their job. In the empirically recorded self-perception of their role, interaction with users and readers was not of high priority for journalists for a long time (Weaver et al., 2018). Accordingly, the sharp increase in the volume of user comments, some of which are malicious in intent, clashes with journalists’ traditional role perceptions, which do not provide any guidelines for this. It challenges and thus fosters a transformation of traditional ideas about professional journalists’ role and accountability (Carlson and Lewis, 2015).

Without established practices for dealing with such a problem, the first step is to ask what journalists consider to be their task in the context of user participation in the first place. What do journalists feel responsible for? This question has been addressed in the context of user participation in general through research on participatory journalism. However, the increasing prevalence of dark participation compared to the first years of user participation has added a new facet to the phenomenon. Attacks against journalists and the spread of disinformation threaten the core of journalistic work and require journalists to rethink their role regarding user comments. We therefore want to investigate the perceived accountability of journalists in the context of dark participation.

We especially focus on three kinds of dark participation, defined as norm-transgressing behaviour violating norms of honesty and emotionality (Frischlich et al., 2019): attacks on journalists and journalistic organizations, incivility and disinformation. Journalists frequently experience personal attacks (Obermaier et al., 2018) and are accused of reporting inaccurately or producing ‘fake news’ (Tong et al., 2020). Comment sections are also frequently a place for hate and offensive speech (Ksiazek and Springer, 2020) as well as disinformation, which is spread by coordinated actors (Bradshaw and Howard, 2017) but also by users themselves (Osmundsen et al., 2020).

In the face of these kinds of dark participation, journalists not only have to reconsider their role but also have to develop new practices to cope with the development. In journalism research, such practices have been researched under the label of moderation. In bigger news outlets, moderation is sometimes done by community managers who are employed to deal with user-generated content. Other outlets integrate the task into positions such as social media editors or online journalists. In Germany, community management has not been widely established as a specific journalistic role in newsrooms (Koliska and Chadha, 2018).

In the literature, moderation is mainly thought of as a mechanism to improve the quality of discussions and to prevent incivility and hate speech. Empirically, studies differentiated moderation styles, which refer to the willingness of the journalists to interact at eye-level with the users (Frischlich et al., 2019; Ziegele et al., 2018). The means journalists use to interact with the audience and the content of the actual answers they post in their comments are less examined. What information do they post to react to disinformation or attacks against the news outlet? In other areas within journalism, the concepts of fact-checking (Graves and Cherubini, 2016) and transparency (Hellmueller et al., 2013) gained prominence in recent years as means to cope with disinformation and a perceived loss of trust in journalism. We want to investigate whether journalists also use these practices in their dealing with user comments.

However, we are not only interested in whether journalists use transparency and fact-checking when interacting with users, but also in how they perceive the efficacy of these measures. Do journalists perceive their measures to be effective in coping with incivility, disinformation and attacks on news media? The perceived efficacy of the measures could be crucial since journalists’ perceived efficacy regarding their new accountabilities could also have an impact on the future journalistic handling of participatory journalism.

Taken together, we want to examine (1) what journalists see as their task concerning dark participation, (2) how journalists assess the efficacy of their measures and (3) how this perception is associated with different moderation strategies such as transparency. We used qualitative in-depth interviews with journalists from German news outlets and found that journalists felt responsible for hate speech on their platform but not so much for checking facts in user comments. We also found that the degree of interactivity influenced the efficacy perception.

Journalistic role perceptions and accountability in the face of dark participation

Journalistic role perceptions are closely related to the tasks journalists feel responsible for. In most Western democracies, the context of the current study, a majority of journalists perceive themselves as providers of neutral and precise information (Weaver et al., 2018; Weischenberg et al., 2006). With the rise of participatory journalism, this role has been complemented by responsibilities for user interaction, which involve monitoring and curating user-generated content (Gallagher, 2018), stimulating an active discussion and trying to maintain a constructive discussion climate (see Frischlich et al., 2019).

However, it is not entirely clear whether the accountability perception of journalists is in line with theoretical claims that highlight the importance of user interaction (Lewis et al., 2014). Empirical evidence on the journalistic perception of user comments in general and the perceived accountability regarding the moderation of (negative) comments, in particular, is ambivalent.

On the one hand, studies demonstrated that journalists are increasingly aware of the potential of user comments and interact at least sometimes with their audience in comment sections (Chen and Pain, 2017; Graham and Wright, 2015; Nielsen, 2014; Santana, 2011). For example, Singer and Ashman (2009) showed that the majority of the interviewed journalists felt a ‘duty to care’ (p. 17) in order to uphold the reputation of their journalistic organization as well as to offer users preferably civil discussions.

On the other hand, journalists often struggle with the implementation of the theoretical ideals of user interaction. In part, they try to defend their role and influence in the news production rather than accepting participatory elements as an inherent part of online journalism (Heinonen, 2011; Hermida, 2011; Lewis et al., 2014). Thus, journalistic organizations often only provide comment sections as a confined space for participatory journalism (Graham and Wright, 2015; Hanitzsch and Quandt, 2012), in which up to 80% of journalists rarely or never interact with users (Santana, 2011). A third (33.3%) even strongly oppose responding (Chen and Pain, 2017). Chen and Pain (2017) and Gallagher (2018) noted that journalists perceive uncivil comments but deliberately decide not to respond.

Looking closer at perceived responsibilities, Chen and Pain (2017) identified two distinct types. Two-thirds of the journalists rated the interaction with users as necessary for the discussion quality as well as for a closer bond of users with the news site. In contrast, the other third opposed interacting with users and saw their responsibility only in the dissemination of information. These two types are mirrored in related work by Robinson (2010). So-called ‘convergers’, journalists who wanted a broader scope of action for users of news sites, viewed user interactivity as a journalistic responsibility. Thus, they were willing to interact with their audience. In contrast, journalists who held a traditional, hierarchical conception of the journalist-audience relationship, so-called ‘traditionalists’, often ignored users.

Taken together, the studies suggest that perceived responsibilities differ according to how journalists understand their roles. Whether journalists engage in user interaction depends on how they perceive their role and which accountabilities they derive from that role. For example, a journalist with a traditional role understanding as information provider might not consider any interaction even in the face of dark participation. In contrast, journalists perceiving themselves as community builders (Lewis et al., 2014) would feel the obligation to intervene interactively if they perceive their rules to be broken. Therefore, the investigation of journalists’ specific accountability perception is crucial because it is a prerequisite for their applied moderation strategy. This is especially true for dark participation, which affects journalistic core values such as the distribution of accurate and unbiased information. Previous research mainly asked for the measures taken to cope with dark participation and did not ask for journalists’ perceived accountability, even though this is the prerequisite for taking action. Taking one step back, we therefore asked:

RQ1: What accountabilities do journalists perceive with regards to dark participation?

Moderation strategies against dark participation

Dark participation poses a particular challenge to journalists because, by attacking journalists or journalistic organizations and spreading distorted information, it affects areas that are part of journalism’s core business. Therefore, journalism developed practices that aim to preserve control over its comment sections. These practices are usually captured under the umbrella term moderation, describing ‘governance mechanisms that structure participation in a community to facilitate cooperation and to prevent abuse’ (Grimmelmann, 2015: 47). The literature further distinguishes different moderation styles, which apply to post-moderation of already published comments and describe how journalists in general cope with user-generated content in their comment sections. Authoritative moderation refers to establishing a hierarchy between moderators and commenters without much interaction, for example by deleting comments or admonishing users (Frischlich et al., 2018). An interactive moderation style is characterized by moderators relating to posts of users and engaging in bi-directional communication (Frischlich et al., 2018) to facilitate a discussion (Suau et al., 2019), for example by giving additional information. Applied to dark participation, we want to look at moderation strategies journalists use against (1) attacks on journalists and journalistic organizations, (2) incivility and (3) disinformation.

Moderation strategies against incivility: For incivility such as hate speech among users, research has shown that journalists mainly use authoritative measures to limit illegal content (Pöyhtäri et al., 2014), or to manage norm-transgressing behaviour such as hate speech (Frischlich et al., 2019). Usually, authoritative moderation is used in the form of shutting down comment sections or excluding users (Thurman et al., 2016). Qualitative results of Loke (2012) and quantitative findings of Bergström and Wadbring (2015) revealed that a majority of journalists were in favour of filtering and deleting in the case of uncivil comments.

Moderation strategies against attacks on journalism: Previous studies showed that the term ‘fake news’ is frequently used in comment sections (Boberg et al., 2018), and journalists themselves often report that users attack journalistic organizations (Obermaier et al., 2018). In these cases, simply deleting content could fail to have the desired effect. Instead, an interactive strategy that engages more substantively with the comments may be more promising. Beyond research on moderation, a concept that could also apply to attacks on journalists has received increased attention in journalism research in recent years: transparency. Hellmueller et al. (2013) argued that over time, journalism is shifting from objectivity to transparency as the central norm of the profession. Other authors emphasize that transparency gains importance as it fosters public accountability (Singer, 2007) and serves as a means to maintain professional autonomy (Allen, 2008; Curry and Stroud, 2019). Some studies also found that citizens expect journalism to be transparent on a general level (van der Wurff and Schönbach, 2014) and that transparency increases trust in journalism (Meier and Reimer, 2011; Wintterlin, Engelke et al., 2020). These findings suggest that journalists could adequately react to the challenge of accusations by being transparent about their working practices. This could be achieved by setting up dedicated spaces where newspapers explain how they do sourcing, why they included a story in the news, or why specific comments are moderated. Additionally, moderators can explain to users, in replies to their comments, why the news outlet acted as it did. However, whether transparency as part of an interactive moderation strategy is regularly used to cope with dark participation is largely unexplored in previous studies.

Moderation strategies against disinformation: A third challenge journalists are confronted with is an online environment characterized by information from unreliable sources (e.g. manipulative agents spreading distorted information and trying to influence public opinion). Such problematic information violates the norm of honesty (Frischlich et al., 2019) and also affects comment sections of newspapers. The establishment of pro-active fact-checking is the most visible means journalism has implemented. Newsrooms increasingly feel the need to engage in fact-checking editorially and reach a broad audience (Graves and Cherubini, 2016). Following Amazeen (2020), fact-checking can be understood historically as a democracy-building tool that emerges in an environment where democratic institutions are under attack. Pingree et al. (2018) noted that journalism could benefit from fact-check stories through an increase in media trust, epistemic political efficacy and future news intent. Fact-checkers are often perceived as journalistic activists (Haigh et al., 2018) who go beyond what traditional newsrooms are able to accomplish. However, some news organizations also implement fact-checking departments in their newsroom (Humprecht, 2019). Whether moderators also use fact-checking and communicate the results to users has not been examined before.

The perceived efficacy of interventions against dark participation

Going beyond descriptive approaches to capture moderation styles used by journalists, research has shown effects of the moderation style on user participation. Interactive moderation increased the level of deliberation in comment sections (Stroud et al., 2015), strengthened the perceived social presence of news organizations (Marchionni, 2015) and decreased the level of incivility (Ziegele et al., 2018).

Taking the journalists’ perspective, some studies revealed that journalists are discouraged by a growing culture of harassment and abuse (Wright et al., 2020) as well as the number of comments (Meyer and Carey, 2014). Further, Bergström and Wadbring (2015) found that journalists who frequently use social media are more critical towards the quality of comments. Nonetheless, they feel the obligation to keep control of the comment sections to preserve the authority and quality of the news organizations’ brand (Hermida and Thurman, 2008; Singer, 2010). Whether this is successful from the journalists’ point of view was not in the focus of previous research. Do journalists perceive themselves as an impactful actor in the context of dark participation, or do they have the impression that they cannot make much of a difference?

In our study, we refer to the concept of efficacy to capture the journalists’ point of view on moderation against dark participation. According to Bandura (1977), self-efficacy, as the perceived ability to successfully perform a task, is an essential prerequisite for actual action. Following this definition, efficacy is separated into two aspects: ability and success. These aspects mirror the differentiation of internal and external efficacy in the political context (Craig and Maggiotto, 1982). Ability or internal efficacy refers to the perception that one is qualified to act in a field and possesses the basic knowledge to participate. External efficacy refers to the feeling that one’s actions and attitudes resonate in the field and are taken into account by relevant actors. We want to apply the concept of efficacy to journalists and ask about their perceived efficacy in the context of dark participation. Do they feel qualified to cope with dark participation? And do they feel that their participation in discourses resonates and influences future actions of spreaders of dark participation?

As a third aspect, Bandura mentions perceived self-efficacy as an essential prerequisite for future action. Especially for journalists, who are used to preserving professional control over the process (Singh and Dillon, 2012), the perceived efficacy of the measures taken might be crucial (as shown with regard to citizens’ future participation intent by Halpern et al., 2017). Low levels of internal and external efficacy in dealing with aspects of participatory journalism could therefore be an explanation for why journalists stop interacting and lose control over comment sections. A low level of internal as well as external efficacy is also relevant because of a second aspect: career commitment. Low levels of internal and external efficacy may push young journalists out of their jobs (Reinardy, 2011) and were linked to job satisfaction and burnout (MacDonald et al., 2016).

Taken together, the challenges of incivility, disinformation and accusations, as well as the possible interventions following from these challenges, have been studied in some detail by journalism research. However, there is still a notable lack of studies focusing on how journalists perceive the efficacy of their interventions against dark participation. As efficacy is central to the willingness to engage in future actions, it is crucial to understand how journalists perceive the efficacy of their measures in the context of dark participation. Therefore, we derive the following research questions:

RQ2: How do journalists assess the efficacy of their moderation as intervention against dark participation?

RQ3: How is the perceived efficacy associated with the use of different intervention strategies?

Method

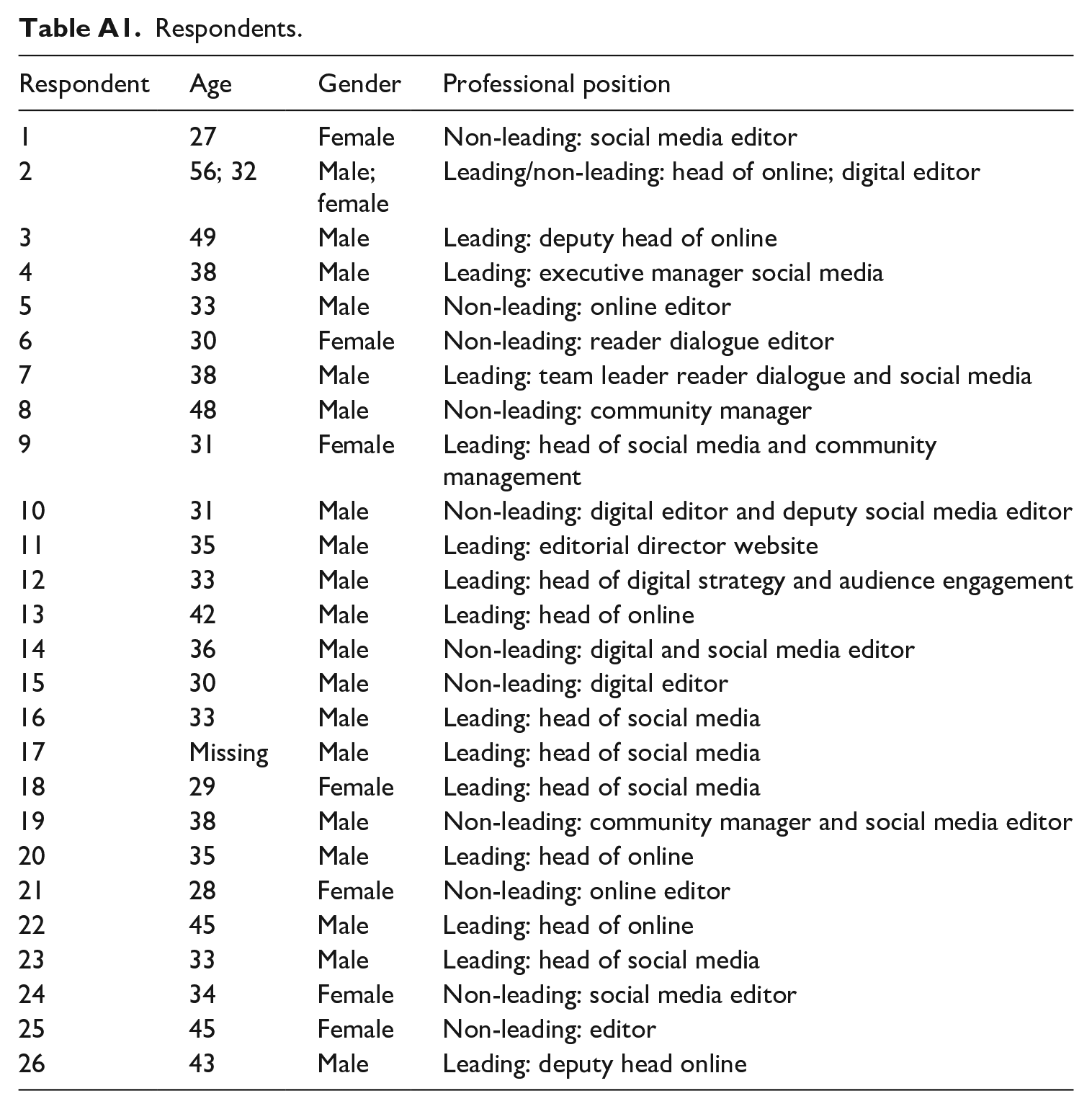

To answer our research questions, which aspects of dark participation journalists feel responsible for and how they perceive the efficacy of their measures, we conducted a series of semi-structured, in-depth interviews (N = 25) with journalists responsible for user interaction in German newspapers. The selection of interview partners followed a multi-level procedure that samples journalists from a wide range of newspapers and groups them in various ‘clusters’, combining features like political leaning, influence on the public opinion and trustworthiness as perceived by the general public (for details, see Frischlich et al., 2018). Within each selected newspaper, we interviewed the journalist responsible for user participation. These were only in some cases community managers. Depending on the structure of the newspaper, the journalists had different job descriptions such as digital editor, head of social media, or community manager. Especially in smaller news outlets, there is often no dedicated community manager role, and moderation is distributed among the online journalists. The interviews were conducted by three team members from April to May 2019 via telephone. Table A1 (see Appendix) provides an overview of the participants and their characteristics. The average length of the interviews was 38 minutes and 57 seconds (range 1810–5700 seconds). They were transcribed for analysis. The pilot-tested, semi-structured topic guide included questions on interactions with users in general, dark participation, its consequences for the work process, and the efficacy of various measures, including participative moderation, transparency about journalistic working practices and fact-checking. To measure internal efficacy, journalists were asked if they feel personally qualified to cope with dark participation. To measure external efficacy, the journalists were asked if they perceive the individual measures to make a difference for user participation in their comment sections.

The data analysis followed an abductive approach (Timmermans and Tavory, 2012), which starts with deductively determined categories from the topic guide and combines them with inductive categories that emerged during the coding of an initial subsample. Subsequently, quotes were sorted into first-order concepts and more granular second-order themes following the principles of a qualitative content analysis (Mayring, 2010). This procedure allowed the researchers to identify general trends in the data with regard to the main categories. In particular, we identified similarities in several main categories: level of being affected by dark participation, accountability, interventions performed, the external efficacy of the interventions and the perceived internal efficacy. To provide the reader with an impression of the numerical distribution of the responses, we decided to include the counts in the description of the results. These numbers do not allow statements about journalists interacting with audiences in general, but the sample of 25 experts for user participation from a wide range of German newspapers nonetheless allows us to identify trends worth investigating in future studies.

In a second step, we tried to identify similarities of journalists with regard to their accountability and efficacy perception. This analysis does not identify similarities within categories but between respondents. Using this two-level approach, we were able to identify both trends in categories and types of journalists who react similarly to the challenge of dark participation.

Results

The perceived accountability for interventions against dark participation

Exploring RQ1, we asked what kind of dark participation journalists feel responsible for. We found the degree of perceived responsibility to vary strongly according to the respective type of dark participation. Most journalists stated high accountability for distorted information (n = 15) and fake news-accusations addressed to them personally or their news organization (n = 14). These high levels of perceived responsibility stemmed from an inner conviction, that is, an internal motivation and necessity to take action. It was justified with the norm of fact-checking, as a respondent explained, ‘This is an editorial duty for us and it is done regularly’ (male, 38, leading). For incivility, the group of journalists who felt a high responsibility is very small (n = 6). On the other hand, only three journalists stated that they do not feel responsible at all. If one further asks what perceived accountability depends on, one can use the distinction between external and internal motivation as a basis (Plant and Devine, 1998). It distinguishes motivations by whether they arise from an internal conviction or external pressure. Based on this distinction, the journalists were rather externally motivated by the amount of hate speech and not because of a professional, inner conviction. ‘I also find it difficult to say that it’s a pure editorial task to take action against it and somehow contain it for the own community’ (female, 29). Thus, the perceived accountability was more a reactive one as a journalist stated: ‘delete insults, but you don’t have to invest more’ (male, 31).

The perceived efficacy of moderation strategies

RQ2 concerns the question of how the journalists assess the efficacy of their interventions. Overall, the majority of journalists (n = 13) perceived themselves in general as highly effective concerning their interventions against dark participation.

‘I think that all this fake news, opinion making is a phenomenon that the internet simply brings with it and that you have to live with and learn to deal with. And I believe that if you deal with it, you can learn to deal with it and to a certain extent control something like that for your platform.’ (male, 40).

Some perceived their interventions to be moderately effective (n = 8) and only five journalists mentioned a feeling of powerlessness because of unreasonable users or aspects of technical feasibility. One journalist explained, ‘Well, I definitely don’t have the power. Sometimes there are situations where you just have to keep your feet still and then people have to keep on discussing among themselves.’ (female, 27)

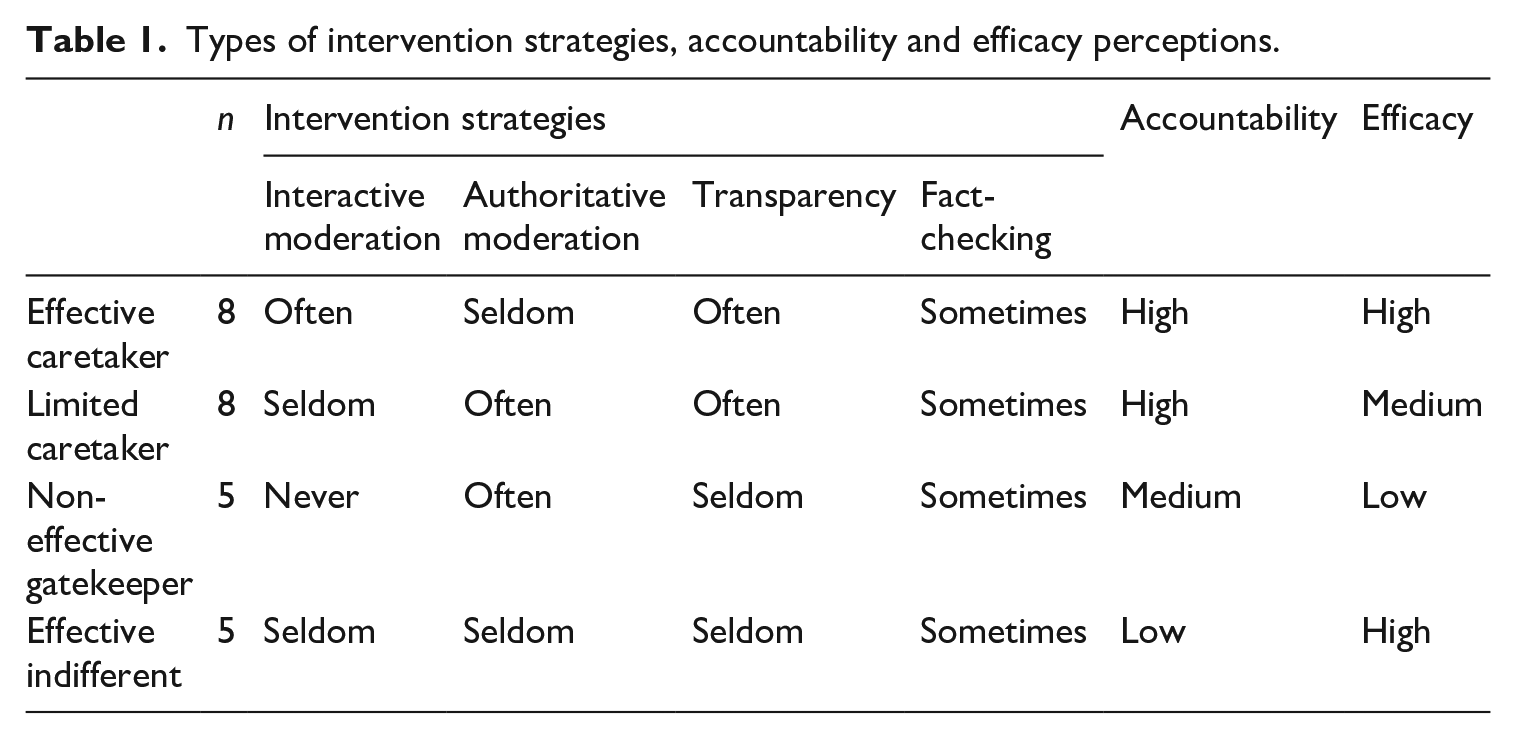

However, as the perceived efficacy varies depending on the specific intervention type, a typology approach was used to evaluate how the intervention strategies are linked to the perceived efficacy and thus, which interventions journalists perceive to be most effective.

We sorted the respondents according to the interventions they performed and afterwards interpreted the categories of efficacy and accountability.

We found four types of combinations (Table 1) and labelled them: (1) effective caretaker, (2) limited caretaker, (3) non-effective gatekeeper and (4) effective indifferent. As we did not find systematic differences with regard to fact-checking, we focused the description of our analysis on moderation and transparency. In terms of fact-checking, almost all respondents across all types reported that counter-checking comments occurs at least sometimes. There seems to be a consensus that checking facts in user comments is sometimes necessary for moderation. ‘Claims are often written in comments. I then look at it and, if it’s obvious to me that it’s a fake claim, I try to argue against it and, in individual cases, I also do research and then debunk the comment.’ (male, 48)

Table 1. Types of intervention strategies, accountability and efficacy perceptions.

Effective caretaker: Participants of the first type ‘effective caretaker’ reported high levels of accountability as well as efficacy. A typical representative of this type was 35 years old, worked mainly for a highly influential conservative newspaper, and was in a leading position. The respondents of this type were mainly motivated intrinsically to counter dark participation because they felt the need to protect deliberation in comment sections. ‘I think that the readers want to exchange views on this, and that they also see our platform as a platform where this can be done, is very, very important to me.’ (female, 31). Thus, they reported the highest accountability to intervene: ‘After all, as journalists we are also responsible for a lot of education, aren’t we? [. . .] Especially about fake news and so on. And then to clear up and report the truth or the state of facts. That is actually and has always been the task of journalists and I think that’s even more the case today.’ (female, 28)

These high levels of accountability were reflected in a variety of measures against dark participation. All the respondents of this type interacted with their users in their moderation.

‘A very important and decisive point to make the comment section constructive is that the readers know that they are getting feedback and that they are not speaking into an empty space, but that something is coming back.’ (female, 31)

However, all of them at least occasionally used authoritative moderation when they blocked content that violated their netiquette. The respondents also reported many transparency efforts to cope with allegations. Five of eight even established separate formats to provide the readers with information about the journalistic work. These measures ranged from offering explanations for deleting a comment to livestreams from editorial meetings. Seven of eight perceived themselves to be highly affected by the amount of negative comments. Nonetheless, they rated their own measures to be highly effective: ‘The moment I believe that we would no longer be able to control the situation, we would either have to think about deleting the account, because we can no longer control it, because that would damage the brand.’ (male, 33)

Not only their external efficacy perception was high, but they also reported the highest internal efficacy regarding their qualifications to cope with dark participation.

Limited caretaker: In contrast, participants of the second type, ‘limited caretaker’ (n = 8), reported mixed external and internal efficacy, using primarily authoritative and only partly interactive moderation. ‘Of course, we delete quite vehemently if it gets really bad or goes below the belt and moderate and try to answer if we see any sense in it.’ (male, 43) Although they reported high levels of accountability, they lacked either the staffing or the belief in the extensive use of interactivity. Additionally, in contrast to the first type, accountability was driven by external rather than internal factors. ‘I also find it difficult to say that it is a purely editorial task to take action against it and somehow contain it for your own community.’ (female, 29). They seemed to be in a position of justification, which led them to use authoritative moderation and, remarkably, transparency. Six out of eight established separate formats for explaining journalistic work to users, and all of them implemented some kind of transparency in their moderation. Journalists of this type were on average 36 years old and worked in a leading position for conservative and liberal media, which were only moderately influential.

Taken together, both types felt the need to intervene against dark participation. However, the grade of interactivity they used in their moderation differed. The results showed that those who interacted more with users were also the ones who perceived their actions to be more effective.

Non-effective gatekeeper: The third type, ‘non-effective gatekeeper’ (n = 5), reported medium levels of accountability. In contrast to the first two types, the perceived accountability did not result in any interactive moderation. ‘Starting to comment does not help, so at least not to the extent that we can do this here.’ (male, 34). Transparency efforts to explain journalistic practices to the users were also less common compared to the first two types. When transparency was used occasionally, it was mostly with regard to the sources of the coverage. The respondents of this type regularly moderated authoritatively by deleting content. However, the strategy did not work from their perspective. The respondents reported a low level of efficacy perception. ‘You don’t usually have control, yes. We have already deleted an article, simply because we could not get a handle on it at all.’ (female, 45). Their internal efficacy perception was also only medium. A typical representative of this type was 38 years old and worked mainly for conservative and liberal newspapers of low influence.

Effective indifferent: The fourth type, ‘effective indifferent’ (n = 5), did not feel accountable for taking action against the different forms of dark participation. Consequently, they used neither interactive nor authoritative elements extensively. Instead, they mainly relied on pre-moderation of content before publication. A typical representative of this type was 40 years old and worked for a conservative newspaper. They were mainly motivated externally. ‘Our feeling is that now it’s no longer just that you’re doing research to get a story around, but that you’re also doing research because you’re worried that someone might be out to get you.’ (male, na). Nevertheless, efficacy was perceived to be high, which can be explained by the low amounts of dark participation the respondents of this type perceived. ‘I think because we have a very well-structured comment system, that we don’t have to deal with a lot of comments every day, we have a relatively good overview.’ (male, 30). Asked about their internal efficacy, they reported mixed levels regarding their ability to cope with dark participation.

Taken together, two intervention types reported high efficacy perceptions: (1) participants of the first type, who combined interactive and authoritative moderation with many transparency efforts, and (2) participants of the fourth type, who were characterized by the lowest engagement in all intervention strategies.

Discussion

Research on the understanding of journalistic roles suggests that some journalists have not yet accepted interaction with users as part of their role as a journalist (Hermida, 2011). As a result, these journalists are extrinsically motivated to interact with users, and accordingly, their accountability perceptions are low (Santana, 2011). It can be assumed that they do not perceive their interventions to have great effect. In the present study, however, we focused on those for whom an intrinsic motivation and accountability perception may be most beneficial, as it is part of their job description: the ones responsible for user interaction.

Previous studies (e.g. Singer, 2010) demonstrated that journalists see the flood of negative comments as a challenge because these comments damage the media brand and contribute to a poisoned opinion climate. As a result, such comments make constructive discourse more difficult, and naturally, journalists call their meaningfulness into question. We therefore paid special attention to this type of user comment and asked journalists how they perceive their responsibility to deal with them, and how they assess their measures. This adds to the previous literature, which mainly focused on the types of moderation strategies journalists employ (e.g. Wintterlin, Schatto-Eckrodt et al., 2020) and how users perceive them (e.g. Curry and Stroud, 2019).

Confirming the findings of previous studies on accountability with regard to user participation (Chen and Pain, 2017; Robinson, 2010), our study reveals the perception of a responsibility to take care of dark participation to be located on a continuum. There is notable variation according to the type of dark participation. Most respondents stated that they perceive a high necessity to take action against the spread of distorted information and accusations against the newspaper. For other forms of dark participation, that is, hate speech and social bots, respondents in our sample stated only low or medium levels of accountability perceptions. The need to take care of hate speech and bots is mostly extrinsically triggered and reactive. Compared to studies on journalistic handling of user comments in general (Chen and Pain, 2017; Santana, 2011), the interviewed journalists felt more responsible for engaging with user comments if they contain dark participation.

In terms of measures that derive from this responsibility, we see little variation: They mainly apply moderation of their own comment section and make journalistic work processes transparent. In doing so, they hope to curb attacks against their own newspaper and distorted information in particular.

However, the perceived effectiveness of interventions differs: The types of journalists who interact with the users either very much or very little are the ones who consider their measures to be most effective. Journalists who use many interactive features in their moderation and try to explain journalistic work with the help of transparency strategies perceive their measures as being well received. Similarly, journalists who act very restrictively and interact little with users also regard their measures as being successful. This can be explained by the fact that journalists in the latter group have less exposure to (and experience with) dark participation. A very restrictive way of dealing with user comments seems to work from their perspective. Nevertheless, the finding that those who engage in little to no measures are among those who perceive themselves to be most effective gives rise to further questions. For example, the question arises as to whether the efficacy of their moderation is equally well assessed from the perspective of the audience, or whether they are actually confronted with so little dark participation that there is little need for them to apply the measures.

In contrast, journalists who interact with the audience but mainly use authoritative intervention styles are those who perceive their measures to be least effective. This finding corresponds with the results of studies on the users’ perspective (Ziegele et al., 2018), which also found that an authoritative way of moderation lowers the discursive quality and increases incivility. Future research could examine why journalists nonetheless apply an authoritative moderation style when interacting with users. Structural factors on the side of media organizations as well as individual role perceptions could play a role here (Wintterlin, Engelke et al., 2020).

The findings of our study contribute to the discussion about the relationship between users and journalists in two ways: First, the results showed that journalists often do not feel responsible for dark participation happening in their own comment sections. This is particularly thought-provoking when one realizes that journalistic comment sections are places that are intended to enable deliberative discourse and facilitate social understanding. They can hardly fulfil this purpose if media companies simply do not strive for an appropriate culture of discussion.

Second, the interviews revealed possible reasons why this is the case. In particular, the frequency of interaction with users was shown to be a decisive factor. With the exception of journalists who delete comments in advance without any interaction, a higher frequency of interaction also seems to lead to higher efficacy perceptions. If journalists engage a lot with user comments, for example, by making journalistic work transparent to users and communicating interactively with them, they perceive their own measures as particularly effective. The crucial point, however, is that interaction at eye level with users requires willingness on the part of journalists and both time and financial resources that many media companies apparently do not have. What remains are journalists who have internalized interaction with users as part of their role. However, these journalists are still outnumbered in German newsrooms by journalists who only reluctantly take part in user interaction, even in positions responsible for precisely this interaction. Future research could examine the role of context factors such as the amount of user comments and the temporal and financial capacities (Ihlebæk and Krumsvik, 2015) as well as factors on the side of individual journalists and journalistic organizations, such as age and editorial line, that may predict the level of interaction with users and the resulting perceived efficacy.

For future development in the area of user comments on news websites, the results indicate that capacities for user interaction are crucial. More interaction with users is associated with higher efficacy perception on the part of journalists, which in turn is necessary for the future willingness to engage with user comments. Alternatively, from the journalists’ point of view, systematic pre-moderation seems to work, although it is not realizable for high comment volumes without algorithmic support.

Our study has several limitations that should be considered when interpreting the findings. The qualitative nature of the study made it possible to investigate the interplay of accountability, efficacy, and interaction with users and to derive hypotheses for future research. However, the data did not allow for statistical testing of these connections. Additionally, we only interviewed German journalists from larger newspapers, and the results might not be transferable to other countries with different journalistic cultures (Hanitzsch, 2007) or to local newspapers, which experience less dark participation (Preuß et al., 2017).

Nonetheless, our study offers first insights into the perceived efficacy of journalists who are confronted with dark participation. The results suggest that journalists do not feel ‘lost in the stream’, but that the interaction with users actually improves their efficacy perceptions.

Appendix

Respondents.

| Respondent | Age | Gender | Professional position |
|---|---|---|---|
| 1 | 27 | Female | Non-leading: social media editor |
| 2 | 56; 32 | Male; female | Leading/non-leading: head of online; digital editor |
| 3 | 49 | Male | Leading: deputy head of online |
| 4 | 38 | Male | Leading: executive manager social media |
| 5 | 33 | Male | Non-leading: online editor |
| 6 | 30 | Female | Non-leading: reader dialogue editor |
| 7 | 38 | Male | Leading: team leader reader dialogue and social media |
| 8 | 48 | Male | Non-leading: community manager |
| 9 | 31 | Female | Leading: head of social media and community management |
| 10 | 31 | Male | Non-leading: digital editor and deputy social media editor |
| 11 | 35 | Male | Leading: editorial director website |
| 12 | 33 | Male | Leading: head of digital strategy and audience engagement |
| 13 | 42 | Male | Leading: head of online |
| 14 | 36 | Male | Non-leading: digital and social media editor |
| 15 | 30 | Male | Non-leading: digital editor |
| 16 | 33 | Male | Leading: head of social media |
| 17 | Missing | Male | Leading: head of social media |
| 18 | 29 | Female | Leading: head of social media |
| 19 | 38 | Male | Non-leading: community manager and social media editor |
| 20 | 35 | Male | Leading: head of online |
| 21 | 28 | Female | Non-leading: online editor |
| 22 | 45 | Male | Leading: head of online |
| 23 | 33 | Male | Leading: head of social media |
| 24 | 34 | Female | Non-leading: social media editor |
| 25 | 45 | Female | Non-leading: editor |
| 26 | 43 | Male | Leading: deputy head online |

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Federal Ministry of Education and Research (BMBF) via the grant number 16KIS0496, ‘PropStop: Detection, Proof and Combating of Hidden Propaganda Attacks via Online Media’.