Abstract

Drawing on scholarship in journalism studies and the sociology of expectations, this article demonstrates how news media shape, mediate, and amplify expectations surrounding artificial intelligence in ways that influence their potential to intervene in the world. Through a critical discourse analysis of news content, this article describes and interrogates the persistent expectation concerning the widescale social integration of AI-related approaches and technologies. In doing so, it identifies two techniques through which news outlets mediate future-oriented expectations surrounding AI: choosing sources and offering comparisons. Finally, it demonstrates how in employing these techniques, outlets construct the expectation of a pseudo-artificial general intelligence: a collective of technologies capable of solving nearly any problem.

Introduction

Over the past 10 years, artificial intelligence (AI) has become a major concern across industry, government, and academia. Rather than a single technology, AI is a loosely defined set of algorithms, techniques, and technologies that offer a powerful ‘mathematical method for prediction’ (Broussard, 2018: 32). In the United Kingdom, amid the success of companies like DeepMind, policymakers have identified AI as a major area for state investment and oversight. In April 2018, the Government published a national strategy for AI, calling for a wide range of policy interventions to facilitate AI development (Clark et al., 2018). Some went as far as to suggest that AI research and development can help mitigate the damaging impacts of Brexit (Marlow, 2018).

Discussion of AI continues to infuse public conversation. Scholars, politicians, and public commentators are debating everything from the geostrategic imperatives of AI, to the costs and impacts of automation, to the potent dangers of algorithmic discrimination. While this conversation has become quite diverse, much of the public debate hinges on expectations of future developments of AI. Whether debating the effects of the integration of driverless cars that have not yet been fully engineered and integrated, or the concern over superintelligent AI systems that may or may not ever exist, the future looms heavily over present consideration of AI. Indeed, scholars have recognized that expectations of future possibilities have always been central to how AI is theorized and developed (Graubard, 1988; Guzman, 2018).

This prevalence of expectations matters because expectations matter. Expectations concerning new and emerging technologies can have profound influence on public understanding and on public meaning (Scheufele and Lewenstein, 2005). Beyond the recognition that ‘dreams’ of AI’s future have shaped research direction and priorities (Ekbia, 2008: 2), scholarship in the sociology of expectations has demonstrated that expectations can shape the innovation, development, and design of new technologies (Brown et al., 2000) by mobilizing professional networks, helping experts choose between alternatives, and legitimizing certain technological solutions (van Lente, 2012).

Journalism is a frequent site for expectations of the future – especially those involving emerging technologies (Kitzinger and Williams, 2005). Indeed, Neiger has suggested that ‘it might be claimed that one of the main roles of journalism is to refer to future events’ (2007: 313). While some have considered how future-oriented discussion in news might influence public understanding of new technologies, little is known about the wider influence of journalistic expectations (Nerlich and Halliday, 2007). Similarly, we know little about either the expectations surrounding artificial intelligence in news, or how news outlets mediate, amplify, and influence those expectations in ways that can shape their impact in the world. Given the extraordinary promise seen in AI, it presents a useful case for investigating how news media can shape impactful expectations around new and emerging technologies.

This article undertakes a critical discourse analysis of a set of strategically selected news articles from the first 8 months of 2018 to better understand the role news outlets play in mediating expectations of emerging technologies. While analysis identified several recurring expectations in the corpus, this article highlights one: the persistent expectation that ‘AI will be everywhere and in every object, as ubiquitous as oxygen’ (Williams, 2018). In addition to offering AI as a solution to a diverse set of problems – from the profound to the mundane – outlets also mitigate objections or challenges arising along with the widescale integration of AI. In doing so, these outlets help legitimize AI as a good solution to myriad problems – thereby potentially influencing not only public meaning of AI, but also the landscape of technical development. Specifically, we observe outlets mitigating these diverse challenges using two reporting techniques or practices: choosing sources and offering comparisons. Below, we investigate each of these techniques through cases drawn from the corpus of news texts. In doing so, this article demonstrates some of the specific techniques through which news outlets can mediate expectations of new and emerging technologies in ways that matter in the world.

In helping to construct an expectation that AI will be a diverse catch-all solution to a range of public problems, news outlets help produce what we call a pseudo-artificial general intelligence. Artificial general intelligence (AGI) is a hypothesized system that could replicate any task now requiring human intelligence. AGI does not exist. However, we describe how news helps construct an expectation not of a single system that can do everything, but of a set of systems that can. In this way, news offers a discursive backdoor into the fantastic expectation of a perfectly generalizable system. In addition to broadly overestimating the capacity of AI, this expectation elides the true costs, risks, and dangers of integrating AI across sectors.

Literature review

Coverage of emerging technologies

For several decades, scholars have investigated news coverage of emerging technologies. Notably, much of this research has focused on biotechnology (Priest, 2008), nanotechnology (Cacciatore et al., 2012), or communication platforms or technologies (Arceneaux and Weiss, 2010). Although this literature is varied, a few key findings stand out in their relevance here.

First, early research in this area suggested that, at least for topics like nanotechnology, lay people often hold ideas about new technologies even when they do not have extensive technical or first-hand knowledge. These opinions and ideas appear to be heavily influenced by media descriptions and media frames (Nisbet and Lewenstein, 2002; Scheufele and Lewenstein, 2005).

Second, although there are notable exceptions, coverage is often positive. It frequently promotes or highlights the benefits of new technologies (Arceneaux and Weiss, 2010; Metag and Marcinkowski, 2014; Scheufele and Lewenstein, 2005) or employs frames of scientific progress or economic prospects (Nisbet and Lewenstein, 2002).

Third, the coverage of emerging technologies can serve as a useful case to understand broader dynamics within journalism studies. For example, Cacciatore et al. (2012) compare coverage of emerging technologies in print and online – finding important differences in the amount of coverage and the predominant themes. Others study emerging technologies to better understand the relations animating public trust in science and scientists (Anderson et al., 2011) or online incivility (Anderson et al., 2014).

Finally, Kitzinger and Williams (2005) find that media discussion of stem cell technologies mostly concerns their future potential. They observe, ‘the real battleground is about the plausibility of diverse visions of utopia and dystopia and about who can claim the authority (in terms of both morality and expertise) to produce a credible version of the future’ (p. 739).

Coverage of artificial intelligence

Despite the established literature on coverage of emerging technologies, seven decades of scholarship on AI, and strong evidence that news coverage of artificial intelligence has increased recently (Fast and Horvitz, 2017), there remain few systematic or empirical studies of media coverage of AI. Confirming findings about coverage of emerging technologies more generally, Fast and Horvitz (2017) estimate that between 2 and 3 times more articles have been optimistic than pessimistic in the New York Times since 1986. That being said, Chuan et al. (2019) observe that the proportion of negative stories has increased rapidly in the last few years. More generally, both Chuan et al.’s (2019) study of US coverage between 2009 and 2018 and Brennen et al.’s (2018) analysis of UK coverage in 2018 find a persistent business orientation to coverage in terms of article topics, news pegs, and sources.

Several studies consider the framing of AI articles. Charting key words in news articles about AI, Fast and Horvitz note a significant change over the past 30 years. For example, they observe a shift from ‘space’ in the late 1980s, to ‘chess’ in the late 1990s, to ‘driverless vehicles’ now. Chuan et al. (2019) find that more than twice as many stories had episodic framing than thematic framing. In a small content analysis looking for Nisbet’s (2009) eight frames, Obozintsev (2018) observes that the ‘social progress’ and ‘Pandora’s box/Frankenstein’s monster/runaway science’ frames are the most common across a small sample from four US outlets.

In perhaps the most in-depth qualitative analysis, Natale and Ballatore (2017) undertake a thematic analysis of articles on AI from Scientific American and New Scientist between 1956 and 1975. They find three dominant recurring themes: elements and concepts from other fields brought into consideration of AI; discussion of controversies about AI; and a ‘rhetorical use of the future, imagining that present shortcomings and limitations will shortly be overcome’ (p. 1). Situating this persistent invocation of the future, they observe that ‘Predictions and visions of the future are one of the main ways in which mythical ideas about technologies substantiate into particular cultural and social imaginaries’ (p. 7).

Notably, this finding of a persistent future orientation in news reporting on AI aligns with decades of commentary on the discourses surrounding AI more broadly. When the first scholars chose to call their new research program ‘artificial intelligence,’ they were relying on ‘an idea of the future potential of computers’ (Guzman, 2018) rather than on current capabilities. But also, in choosing such an evocative term, they offered an ‘incentive to create a research enterprise of truly mythic proportions’ (Graubard, 1988: V). Ekbia calls AI a ‘dream of the creation of things in the human image, a dream that reveals the human quest for immortality and the human desire for self-reflection’ but one that, more pragmatically, ‘stimulates inquiry, drives action, and invites commitment’ (2008: 2). Guzman observes that these expectations have meant that the daily work of AI and the technologies developed were interpreted and evaluated not only within the context of how they furthered understanding of the mind and technology in the present but also based on how they contributed to the ultimate goal of AI that was yet to be realized (2018: 9).

Looking at contemporary discourses surrounding AI, Elish and boyd note a reliance on ‘potentials of such technologies as much, if not more, than current functionalities,’ reinforcing ‘a blurring of the line between fantasy and reality’ (2018: 62).

Sociology of expectations

Echoing Ekbia’s suggestion that the ‘dream’ of AI has been instrumental in spurring on technological research and development (2008: 2), over the past 20 years, scholars within the sociology of expectations have broadly considered how ‘expectations mobilize the future into the present’ (Brown, 2003). This approach asks how expectations, as ‘real-time representations of future technological situations and capabilities’, can shape scientific and technological innovation and change (Borup et al., 2006: 285, 286).

The recognition that representations of the future play a key role in social action (Flichy, 2007) can be traced back to the beginning of modern social theory (Borup et al., 2006: fn 19). What distinguishes the sociology of expectations is the recognition that expectations intervene in the world – that expectations ‘do something. . . An expectation is not just a description of a (future) reality, but rather a change or creation of a new reality’ (van Lente, 2012: 772). In other words, expectations are performative (MacKenzie, 2006).

Consolidating existing research, van Lente identifies three ways in which expectations can performatively intervene in the world. First, they can provide legitimation to a project or technology, helping to justify it before it has proven successful. Second, expectations can provide heuristic guidance, helping designers of technologies choose directions when there are many available paths. Third, expectations can provide coordination, mobilizing people and resources to build, design, or extend technologies.

Given its interest in investigating how expectations help shape innovation, scholarship in the sociology of expectations has focused on how expert communities produce and respond to expectations (Hedgecoe and Martin, 2003; Messeri and Vertesi, 2015). There are, however, a small number of studies that have investigated the role that news outlets or other communication intermediaries, such as press officers (Samuel et al., 2017), can play in the production of expectations. Nerlich and Halliday (2007) show how metaphors within news coverage of the avian flu helped produce expectations around the disease. They draw on the sociology of metaphors to argue that metaphors ‘can be not just representative, but performative’ and can ‘be used by experts and the media to shape visions of the past and/or the future to try and affect our social and political actions in the present’ (p. 50).

Journalists, however, do more than produce metaphors. In covering stories, they have many ways of influencing and shaping content. Yet, the sociology of expectations lacks a full accounting of how journalists construct and mediate expectations in ways that constrain and enable their performative capacities. Given this and given the lack of a rich and detailed accounting of the coverage of AI in UK mainstream media, this article asks three questions. First, what common expectations of the future can be identified across coverage of artificial intelligence? Second, how do news outlets mediate, shape, and/or amplify discursive expectations of artificial intelligence? Finally, how does news mediation shape the potential performativity of expectations of artificial intelligence?

Methods

Addressing these research questions, this article undertakes a critical discourse analysis of a corpus of news articles produced between January and August 2018 that concern artificial intelligence. In covering the 2018 Consumer Electronics Show, the New York Times announced that 2018 was the ‘Year of A.I.’ (Richardson and Chen, 2018). As noted above, in April 2018, the UK published and promoted a national strategic plan to encourage AI investment and success. The 2019 AI Index Report shows a significant increase from 2017 to 2018 in the number of journal publications on AI, across topics and countries (Perrault et al., 2019). Rather than provide a longitudinal look at how coverage has changed over time, we wanted to capture a snapshot in time at a moment when AI was attracting huge amounts of attention in government, academia, and public discussion. We chose 8 months as a compromise that would provide a diverse set of events and cases yet would also generate a manageable corpus of texts.

First, six UK outlets were selected, representing a variety of outlet types and political orientations. The six include two left-wing outlets, the Guardian and HuffPost; two right-wing outlets, the Telegraph and the Daily Mail (as well as its separate online iteration the MailOnline); one tech-specific outlet, Wired UK; and one public outlet, the BBC. Using Lexis Nexis and/or native search features, all articles containing the phrases ‘artificial intelligence,’ ‘machine learning,’ ‘deep learning,’ or ‘neural network’ were collected. This corpus of 760 articles informed a previous study looking at sources, news pegs, and key themes in UK news coverage (Brennen et al., 2018). Most notably, this previous study found that articles had little variety in types of news pegs, which included industry products or research, industry promotional events, academic research, state reports, or technology conferences.

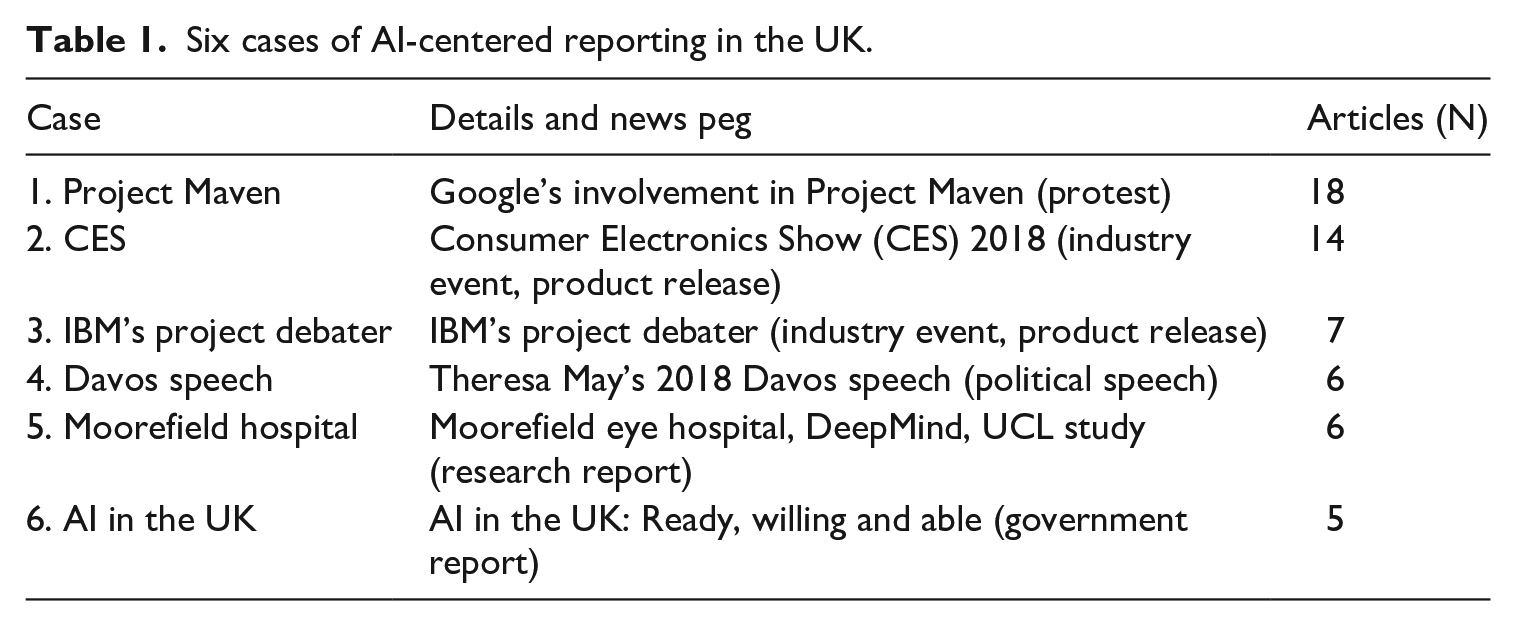

Following Natale and Ballatore (2017), we selected a small number of cases over the time period for further analysis. These cases were strategically selected to represent a variety of the notable events covered by many of the studied news outlets over the 8 months. That is to say, cases include stories with a variety of news pegs (see Table 1 for a list of cases and their news pegs). By selecting cases by news pegs, we hoped to generate a diverse group of news stories that included both the major events over the time period and also more routine stories. The six selected cases involve articles from all of the outlets, and total 56 news stories – a mix of news, analysis, and commentary.

Table 1. Six cases of AI-centered reporting in the UK.

For each case, we also collected texts referenced in news articles in the existing corpus. This includes antecedent texts, including political speeches, reports, press releases, and other news articles. Including these documents allowed us to look at how news translated, adopted, or rejected content and expectations from antecedent texts.

The variety of critical discourse analysis employed here begins from an assumption that texts are themselves ‘elements of social events’ that are constrained and enabled by social relations (Fairclough, 2003: 9, 22). Analysis oscillates between ‘specific texts’ and ‘the “order of discourse”, the relatively durable social structuring of language which is itself one element of the relatively durable structuring and networking of social practices’ (p. 3). Here, this approach provides a means of moving between individual news articles, cases, and the expectations of artificial intelligence on which they draw. At the same time, critical discourse analysis disentangles ‘the role of discourse in the (re)production and challenge of dominance,’ power, and social inequality (van Dijk, 1993: 249, emphasis in original). We adopt this method to investigate how discourses of the future construct and support AI’s increasingly dominant role across public life. More and more, public and commercial institutions look to AI to solve myriad problems, from diagnosing and treating medical patients, to solving crimes, to teaching students. Our analysis is particularly interested in the specific techniques that journalists employ in contributing to and engaging with AI discourse, especially in ways that support AI’s ascendant role as a one-size-fits-all solution to public problems.

After selecting the cases, we undertook an interpretive reading of each relevant text. Each was iteratively coded multiple times using the qualitative analysis software NVivo to mark topics, expectations, and journalistic techniques. Codes were synthesized for each case and then across cases to help us recognize trends and commonalities across the corpus and identify which topics, expectations, and techniques were the most common.

Analysis

While several distinct expectations emerged across cases, the most notable and persistent involved the expectation that artificial intelligence will be seamlessly integrated across our lives, serving as a solution to a wide variety of problems.

Looking across cases, we identify two common reporting techniques or practices that outlets employ to counter the challenges posed by AI integration: choosing sources and making comparisons between humans and AI systems. These techniques help outlets bolster expectations that AI can solve a range of problems, from the profound to the frivolous, from identifying and curing cancer, to resetting the British economy, to automatically reading clothing labels ‘so you don’t have to painstakingly examine the tiny tags’ (Macdonald, 2018). Through these techniques, outlets also address the many challenges and issues that arise along with the integration of AI across problems and sectors. In addressing these diverse challenges, outlets ultimately help legitimize (van Lente, 2012) AI as both a possible and as a good solution to myriad issues.

Sourcing

Google Maven

By choosing who is quoted in articles, outlets can wield significant power to shape the discursive expectations of AI. On March 6, 2018, Gizmodo first reported that Google had a contract with the US Department of Defense (DoD) to ‘build AI for drones’ as part of the larger DoD Project Maven (Conger and Cameron, 2018). Project Maven, which was overseen by the DoD’s ‘Algorithmic Warfare Cross-Functional Team,’ involved using existing Google AI products for image recognition of drone images.

Covering the project, Gizmodo observed that news of the partnership ‘set off a firestorm among employees’ (Conger and Cameron, 2018). However, the outlet did not include statements from any current employees upset by the project. Gizmodo was not alone in this: none of the articles in the corpus first reporting the partnership cited a Google employee. In their news articles covering the project, the Guardian, Wired and the MailOnline all cite the same official Google statement that observed the project ‘is for non-offensive uses only.’

Even though the Gizmodo article did not directly quote any of the employees upset by Project Maven, it quotes a speech given by Google and Alphabet’s former executive chairman Eric Schmidt in 2017 that the outlet claims, ‘summed up the tech industry’s concerns.’ It quotes Schmidt observing, ‘there’s a general concern in the tech community of somehow the military-industrial complex using their stuff to kill people incorrectly’ (Conger and Cameron, 2018). In the original interview, Schmidt’s use of ‘incorrectly’ is somewhat unclear; he appears to be referring in general to ethical concerns about military applications of Google products. In the context of the article, however, Schmidt’s quote appears to imply that employees are concerned that the system will make a technical error. Much of the article is spent providing detailed description of the technology used in the project. It also quotes a Google spokesperson framing Maven as part of a long-standing technical relationship between Google and the DoD. ‘We have long worked with government agencies to provide technology solutions. This specific project is a pilot with the Department of Defense, to provide open source TensorFlow APIs that can assist in object recognition on unclassified data.’ And while the spokesperson observes vaguely that there are ‘valid concerns’ with this sort of collaboration, the only other discussion in the article specifying those concerns notes that current uses of AI by law enforcement and the military have motivated some to ‘warn that these systems may be significantly biased in ways that aren’t easily detectible.’ That is to say, it identifies an ethical concern arising from a technical failure. Notably, many outlets adopted a similar technical frame in first reporting this story. One Guardian article cites a DoD official observing that in building these AI systems, Google can increase the efficiency of visual recognition, allowing analysts to ‘do twice as much work, potentially three times as much, as they’re doing now’ (2018).

Almost a month later, the New York Times reported that more than 3000 Google employees had signed a letter addressed to Google CEO Sundar Pichai protesting the company’s involvement in Project Maven (Shane and Wakabayashi, 2018). In releasing a copy of the letter, the Times provided the protesters a platform to express their ethical concerns with the project. The protesters argue that ‘we cannot

That the New York Times eventually provided protesters space to explain their concerns in their own words highlights how initial reporting on Maven by Gizmodo and other outlets failed to capture those concerns. By choosing and then contextualizing quotes by Schmidt and a Google spokesperson, the Gizmodo article reroutes the ethical concerns over participating in warfare to a technical concern with the ability of the project to be accurate. In doing so, the outlet implies that ethical concerns could be assuaged through ensuring the technical capability of the system. That is to say, while the employees articulate a fundamental objection to the integration of AI into defense work, by choosing the quotations it did within the context of the article, Gizmodo reframes their concerns into a form that can be addressed merely with more technological development. As a result, the implication is that the concern raised about Maven can be addressed simply with further research and development of AI across sectors.

Comparison

Comparing humans and machines has long been a trope in public discourse, from John Henry, to the Turing Test, to computer chess (Ensmenger, 2012). We observe that comparing AI with humans serves as a second technique through which news outlets address ongoing challenges to AI integration and mediate discursive expectations of AI relevance. We offer two examples from the cases studied here.

Project Debater

In June 2018, IBM organized a debate between an AI system and two human debate champions. IBM designed the system, called Project Debater, to generate semantic arguments from a wide range of collected documents. The debate was held at IBM’s San Francisco office, where a crowd of IBM staff served as judges. IBM invited a number of reporters to cover the event.

In setting up this event as a competitive debate, IBM invited outlets to compare the AI system to the human debaters – an approach that outlets embraced. Many headlines of the event framed it explicitly as a competition; the Guardian wrote ‘Man 1, machine 1: landmark debate between AI and humans ends in draw’ (Solon, 2018).

Beyond framing the event as a competition, outlets deployed the human debaters as a benchmark to assess the AI system itself. Outlets more or less describe a sort of détente between humans and AI. Reporters gave humans an advantage in delivery and in the use of ‘more expressive language, more original language’ (NA, 2018, citing Dario Gil of IBM). Yet, ‘while the humans had better delivery, the group agreed, the machine offered greater substance in its arguments’ (Lee, 2018). That is, many suggested that the AI had an advantage in marshaling facts and information. While perhaps a testament to the fact that the audience consisted of IBM employees, the audience nonetheless voted the AI system ‘more persuasive (in terms of changing the audience’s position).’ They made their determination given ‘the amount of information it conveyed’ (Solon, 2018) in the debate.

In covering the debate for the Guardian, Olivia Solon not only adopted this competition frame but also leveraged it to explore the future potential of this system. Rather than moonlighting on the debate circuit, the system, she noted, could be extended to aid corporate boardroom decisions, where there are lots of conflicting points of view. The AI system could, without emotion, listen to the conversation, take all of the evidence and arguments into account and challenge the reasoning of humans where necessary (Solon, 2018).

Here Solon does the work of extending this narrow AI system, designed to produce semantic arguments within structured debates, to a far wider set of potential uses within the realm of decision making. But what is especially notable here is how, through comparison with humans, she converts the system’s peculiarities into strengths. In noting the system’s expressive deficiencies, the judges observed Project Debater’s strangeness, its non-humanness. The inability to use ‘expressive language’ is indicative of Project Debater’s inability both to be rhetorically persuasive and to have any true sense of what it is saying. For this system, as for all other AIs, outputs hold no meaning.

Yet, Solon converts this non-humanness into a benefit. She imagines that unburdened by human emotion, the system can serve corporate boardrooms – or any other small group setting – as an objective mediator. This, of course, has long been the dream for new technologies: that in one way or another they can be more objective than humans. Whether it is film cameras (Kittler, 1999), PET scan machines (Dumit, 2004), or the X-ray (Brennen, 2017), commentators have long imagined that new technologies might serve as objective mediators of human experience.

Putting aside the well-established fact that AI systems often have built-in biases (boyd et al., 2014), Solon’s expectation hinges on a model prescribing that decision making can and should be removed from the messiness of human emotion and complexity. This model of decision making seems to rest entirely on the marshaling of informationally pure facts. It imagines that given appropriate information, the right solution should present itself. Only by drawing on this sort of model of decision making can Solon work to convert Project Debater’s limitations into strengths. Some might regard the inability of the AI system to be rhetorically persuasive or to understand what it says as a weakness that disqualifies it from making decisions. Yet, Solon – and IBM behind her – through this comparison of the AI and the human debaters, ultimately converts that limitation into a benefit that can underwrite the wider extension of this technology to other sectors. While there is more going on here than a simple comparison between Project Debater and humans, contrasting the two provides Solon a platform for a more complicated effort to legitimize the extension of AI into the realm of human problem solving.

Moorfields Eye Hospital AI study

In August 2018, an academic research partnership between Moorfields Eye Hospital, DeepMind, and University College London announced it had trained an AI system to detect more than 50 different forms of eye disease from retinal scans. Halfway through the press release announcing the result, the collaboration wrote that: ‘To establish whether the AI system was making correct referrals, clinicians also viewed the same OCT scans and made their own referral decisions. The study concluded that AI was able to make the right referral recommendation more than 94% of the time, matching the performance of expert clinicians’ (2018).

In reporting on the collaboration, several British outlets expanded this to frame the experiment as a competition in which the ‘artificial intelligence was pitted against humans’ (Walsh, 2018). The MailOnline chose a headline beginning ‘Google “robomedics” spot disease faster than doctors’ (Pickles, 2018).

That outlets adopted a competition frame is not surprising. Highlighting competition and conflict is a frequent narrative strategy across many types of journalism (Iyengar et al., 2004). Even so, drawing out the direct comparison between humans and AI supports what many recognize as the ‘future potential’ (Walsh, 2018) not only of this research, but of artificial intelligence in medical diagnoses and treatment more broadly. The Guardian reprinted a quote from Moorfields’ chief, observing, ‘artificial intelligence is showing the potential to transform the speed at which diseases can be diagnosed and treatments suggested, making the best use of the limited time of clinicians’ (Gibbs, 2018).

The comparison between AI and eye specialists also directly supports expectations of the system’s potential as a solution to the lack of capacity in the NHS. The Telegraph wrote that AI can ‘speed up treatment for people who currently wait up to 16 weeks for eye tests, because of shortages in the NHS’ (Knapton, 2018). The AI’s applicability to the NHS rests on its ability to replace trained specialists. Here, however, the comparison hinges on a single set of tasks rather than a broader contrast of the AI and human experts in terms of actual deployment in medical practice. In a way, this extension of a single point of comparison falls into what Ekbia calls the attribution fallacy: ‘the propensity of people to uncritically accept implicit suggestions that some AI program or other is dealing with real-world situations’ (2008: 8–9). That is, the single point of comparison within a controlled experiment becomes a stand-in for the much broader set of tasks and roles that eye specialists perform. Leveraging this comparison, none of the news outlets question whether other types of solutions, such as hiring more medical staff, might also address this problem at the NHS. Neither do they question what might be lost in replacing human experts with AI systems, or the cost of doing so.

Discussion

Recognizing that outlets shape, mediate, and amplify certain expectations should not necessarily be taken as an indictment of journalists. Journalists likely do not set out to intervene in the discursive futures of AI; rather, they are likely far more focused on meeting deadlines and telling interesting stories. While there remains little empirical insight into the current practices of technology journalists in the UK (Brennen et al., 2018), existing scholarship on science and technology journalism more broadly suggests journalists face a host of constraints, pressures, and challenges. These include precarious employment, high story quotas, limited resources, and lack of subject expertise (Schäfer, 2017). Amid these challenges, orienting a story to future development of AI can serve as an important way for journalists to add complexity and layers of meaning to articles (Brennen, 2018).

This article has identified the expectation of the wide relevance of AI in just a handful of cases. Future research is needed to better assess its prevalence. That being said, existing research indicates these cases are not unique. The expectation that AI will be singular, autonomous, and widely relevant recalls what Graubard recognizes as a persistent ‘hubris’ predicated on the central ‘myth’ of machines that ‘might soon be able to replicate the intelligence of a human brain’ (1988: V). Indeed, this dream of AI is written into a common definition of AI: systems able to ‘perform tasks that would otherwise require human intelligence’ in part through ‘the capacity to learn or adapt to new experiences or stimuli’ (House of Lords, 2018: 14). But the cases outlined above show that this expectation of wide relevance can manifest as what Broussard has called ‘technochauvinism,’ the ‘belief that tech is always the solution’ (2018: 20). For Broussard, technochauvinism obscures that many problems resist technological solutions, that ‘there has never been, nor will there ever be, a technological innovation that moves us away from the essential problems of human nature’ (p. 21). Indeed, in the context of AI, many commentators and theorists do the opposite, leveraging AI to reframe and understand human nature itself (Boden, 2006).

Scholars and commentators often distinguish between two forms of AI. Narrow or weak AI are systems capable of completing one or two tasks requiring human intelligence. General or strong AI are systems that would be capable of doing any task involving human intelligence. Although artificial general intelligence does not yet exist and many believe we are very far from ever building it (Ford, 2018), it remains the stated goal of many researchers and research organizations (Boden, 2016: 21). DeepMind, for example, has made it very clear that they aim to ‘build advanced AI – sometimes known as Artificial General Intelligence (AGI) – to expand our knowledge and find new answers. By solving this, we believe we could help people solve thousands of problems’ (DeepMind, 2020).

The promise of AGI is of a single technical system that is perfectly generalizable across cases: a single system that could ‘solve thousands of problems.’

The expectation that AI is widely relevant and can be a solution to nearly any problem shares much with the hope of an AGI. Both extend the ‘dream’ (Ekbia, 2008) of the wide generalizability of AI systems. Instead of a single system capable of solving any problem, this expectation offers a collective of systems capable of doing so. In this way, the expectation can be seen as discursively supporting a pseudo-artificial general intelligence. In part, the construction of this pseudo-AGI hinges on a lack of specificity in how outlets speak of AI: treating it more as a singular technology than as a wide array of techniques, technologies, and approaches. Pseudo-AGI also draws on a fundamental optimism about AI’s promise and potential, one that infuses both scholarly discourse (Guzman, 2018) and media reporting on AI, and that appears in much of the reporting on new and emerging technologies (e.g., Metag and Marcinkowski, 2014).

The implication of a pseudo-AGI constructed through news outlets’ persistent suggestion that AI is relevant across sectors raises several potential concerns. First, it may elide the resources and effort required to build AI-containing systems. Elish and boyd observe this in how media treat IBM’s Watson as being capable of ‘moving seamlessly between contexts’ (2018: 66) – a description that they note obscures the work required to make Watson applicable to any particular problem. Falling into an ‘attribution fallacy’ (Ekbia, 2008: 8–9), pseudo-AGI suggests AI can be cheaply and seamlessly integrated into existing systems or easily extended into new sectors. Yet, building new systems and infrastructures always requires human labor, money, data, coordination, and even energy (Strubell et al., 2019). Imagining AI as unproblematically relevant to any problem risks news outlets failing to account for these costs and underestimating what is actually required for AI integration. As shown above, amid the excitement of reporting DeepMind’s success in matching eye specialists in diagnosing disease, there was little discussion of the cost of scaling up, the challenges of integrating AI into existing systems, or what we may lose if human doctors are less involved in diagnosing patients.

Similarly, this expectation may also result in news outlets underestimating the risks of AI integration. On one hand, many scholars and commentators have recognized that AI systems always bring built-in biases and flaws due both to issues in training data and in algorithmic design. On the other, as a recent report recognized, AI is a ‘dual use technology’ (Brundage et al., 2018) which, in addition to many beneficial applications, can also be put toward ‘malicious uses.’ While Project Maven may provide useful tools for intelligence gathering, it raises both ethical and practical concerns about introducing new vulnerabilities, risks, and limitations. Yet, drawing instead on long-running discourses of the unlimited future potential of AI, much of the discussion focuses attention on the capacity of AI, rather than the unintended consequences of its adoption (Tenner, 1997).

Finally, emphasizing the wide relevance of AI may encourage us to miss other, potentially easier solutions to the problems we face. When AI is seen as a skeleton-key solution, it is easy to forget that there are myriad other possibilities – some of which might not involve new technologies.

Grand expectations for artificial intelligence abound. In announcing the AI and Data Grand Challenge, the UK Government made clear its ambitions to ‘put the UK at the forefront of the AI and data revolution’ (Department for Business, Energy and Industrial Strategy, 2020). To these policy makers, as it has been to many computer scientists for decades (Boden, 2006), AI is not only an engine of innovation amid uncertain times; it is our inevitable future. This article has shown how, for better or worse, news media play a role in shaping the discursive futures of AI. In revealing how news media participate in constructing those futures, it also demonstrates that despite the sense of inevitability around artificial intelligence, the futures of AI, just like the technologies themselves, are still in the process of being built.

Footnotes

Acknowledgements

The authors would like to thank each member of the research team at the Reuters Institute for the Study of Journalism for their help and feedback on this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was completed as part of the Oxford Martin Program on Misinformation, Science, and Media, a 3-year project funded by the Oxford Martin School.