Abstract

This study extends uncertainty reduction theory beyond dyadic interaction by introducing communal uncertainty reduction strategies in human-AI socio-emotional communication, wherein users navigate AI-related uncertainty by engaging with both AI chatbots and online communities. Through a content analysis of 1772 posts and 3021 comments identified within a corpus of 35,579 conversation episodes from the Replika subreddit, we identify five community practices (e.g. anchoring, help-seeking), five peer response types (e.g. collaborative interpretation, group identification), and four uncertainty reduction outcomes (e.g. behavioral pattern recognition, predictive understanding), demonstrating that uncertainty reduction is a triadic process involving users, AI, and communities. The findings illustrate how communal uncertainty reduction transforms AI opacity into shared knowledge and solidarity, offering a new framework for understanding uncertainty in human-AI relationships.


The proliferation of generative AI, a type of artificial intelligence that creates outputs (e.g. text, images, and audio) from users’ prompts using deep learning on existing data (Feuerriegel et al., 2024), has transformed chatbots from task-oriented tools into social companions capable of addressing emotional and psychological needs (Li and Zhang, 2024; Meng and Dai, 2021). AI companion applications such as Replika and Character.AI, a prominent application of generative AI in social media, are designed to develop and maintain long-term emotional relationships in a taskless, empathic, and caring manner through personalized, human-like, evolving conversations powered by large language models, and they have attracted hundreds of millions of users worldwide (De Freitas et al., 2025). However, the opaque and adaptive nature of AI often produces substantial uncertainty during interactions, leading to adverse outcomes such as psychological distress and irrational decision-making (Guzman and Lewis, 2020). While extensive research has examined how to reduce uncertainty in human-AI communication across various task-oriented contexts (Liu, 2021; McCrindle et al., 2021), scant attention has been paid to the uncertainties users experience in everyday interactions with AI in socio-emotional contexts, which are inherently relational.

One notable exception is Pan et al. (2024), who identified four types of uncertainty users encountered in relationships with AI companions: technical, relational, sexual, and ontological uncertainties. These uncertainties highlight both similarities and differences between human-AI and human-human communication. However, it remains unclear how users respond to and reduce these uncertainties, a question widely studied in interpersonal communication (Berger and Calabrese, 1975). Classical uncertainty reduction theory assumes that relationships are dyadic and idiosyncratic, such that uncertainty management primarily occurs within individual interaction pairs. This assumption is increasingly strained in human-AI communication, where users interact with shared technical systems and may therefore encounter similar behaviors, breakdowns, or ambiguities.

As a result, uncertainty reduction in human-AI relationships often extends beyond the dyad and becomes a collective process of sensemaking. This dynamic is evident in the growth of online communities centered on specific AI models or products, such as r/ChatGPT and r/replika, where users share experiences, compare interactions, and collaboratively interpret AI behavior. Within these spaces, understandings of uncertainty evolve iteratively through the interplay of direct human-AI communication and communal discussion, yielding shared views of what AI systems can, cannot, or should do.

Building on this observation, we introduce the concept of communal uncertainty reduction strategies (communal URS) to examine how AI users collectively make sense of and reduce uncertainty about AI behavior. We elucidate communal URS through interactive community challenges, which are collaborative online practices in which users deliberately prompt AI, compare outputs, and collectively interpret AI’s behavior to clarify and reduce uncertainty. Specifically, we examine how users of Replika, one of the most popular AI companion apps, engage in interactive community challenges on Reddit and what outcomes such communal uncertainty reduction practices produce.

Drawing on analyses of over 35,000 human-AI conversation snippets shared on r/replika, we identified 1772 posts describing interactive community challenges and 3021 corresponding comments. Through content analysis, we examined how users employed five community uncertainty reduction practices to make sense of and reduce AI-related uncertainties, how community members responded to these practices, and what uncertainty reduction outcomes emerged. Together, these processes shaped shared understandings of AI behavior and supported uncertainty management in human-AI relationships. This study advances a theoretical understanding of human-machine communication by highlighting the communal and relational dimensions of uncertainty reduction in human-AI relationships.

Literature review

Uncertainty in human-AI communication

Uncertainty in human-AI communication is predominantly conceptualized as a user’s perceived inability to predict, interpret, or control AI’s behaviors, intentions, or outcomes (Liu, 2021). This uncertainty is amplified by the interplay of AI’s technical opacity and users’ insufficient knowledge of AI’s capabilities, which undermines both users’ cognitive efforts to interpret AI actions and their behavioral efficacy in achieving desired outcomes (Chen et al., 2024; Shin, 2021). Correspondingly, a large body of research has examined how to reduce users’ uncertainty in human-AI communication. One of the most widely studied approaches was enhancing AI transparency. On the AI side, research revealed that users felt less uncertain when AI disclosed its engagement status and provided comprehensible explanations of how it reached final decisions (Liu, 2021). Furthermore, revealing the rationales behind AI decisions in a particular explanation style (e.g. abductive style; Cau et al., 2023) while avoiding additional advice or statistics could help reduce user uncertainty (Jiang et al., 2022). On the user side, user involvement, such as adjusting system parameters and making corrections based on real-time feedback, could help users calibrate expectations and foster a greater sense of control, thereby reducing cognitive dissonance and uncertainty (Schmidt et al., 2020). Moreover, user-initiated behaviors such as seeking clarification and consulting peer feedback effectively mitigated perceived uncertainty during human-AI communication (Chang et al., 2024).

However, such efforts predominantly targeted goal-driven settings, where the primary objectives were to improve the operational efficiency of AI and optimize human-AI collaboration in completing specific tasks. They neglected the dynamics of uncertainty in socio-emotional contexts, where users experienced and managed uncertainty through more affective and relational engagement with AI (Li and Zhang, 2024). According to the Computers Are Social Actors (CASA) paradigm, individuals tended to mindlessly perceive AI as social actors and apply social rules to these nonhuman agents (Nass et al., 1994; Nass and Moon, 2000), even cultivating emotional connections and intimate relationships with them (Brandtzaeg et al., 2022). This artificial intimacy gave rise to a new form of uncertainty in the relationship development process (Brooks, 2021). Users had to navigate both the technical “black box” and the social implications of delegating social roles to nonhuman agents (Miller, 2019; Shin, 2021). Hence, it was difficult for users to assess AI’s intentions, authenticity, and capacity for relational participation (Yang and Sundar, 2024). These difficulties were further exacerbated by inconsistencies in AI’s behavior, such as shifts in persona or lapses in memory continuity, often caused by algorithmic updates or policy changes. The compounded uncertainties could cause emotional harm or ethical dilemmas, particularly when users became deeply attached to AI agents (Zhang et al., 2025).

Moreover, the transparency-based approaches were largely top-down, relying primarily on design choices made by professionals and platforms. They often overlooked users’ active agency and bottom-up efforts to navigate and manage uncertainty through their own interpretive and adaptive behaviors in everyday AI use, even though such agency is vital in uncertainty-related social interactions (Kim and Kim, 2021). Recently, online communities have catalyzed a paradigm shift: users tend to address issues related to human-AI communication by sharing AI-related experiences (e.g. chatbot conversations and algorithmic decisions) within peer-based online communities, leveraging communal coping to interpret, critique, and co-construct responses to them (Pan and Mou, 2024). This emergent community-based approach reshapes uncertainty reduction as an iterative and communal process in which users typically share periodic updates and reflections on their attempts to reduce uncertainty while receiving feedback from peers. In parallel, this approach cultivates a robust, ever-evolving repository of shared knowledge, strengthens communal norms and evaluative frameworks, and ultimately forges a collective intelligence of AI-related experiences that benefits both individual members and the community as a whole. To address this gap and theoretically situate these communal processes, we turn to the foundational principles of uncertainty-related theories.

Uncertainty reduction theory and strategies in human-human communication

One of the most influential theories about uncertainty in human communication is uncertainty reduction theory (URT), originally formulated to theorize the relationships between the level of uncertainty and the frequency of communication behaviors during initial encounters (Berger and Calabrese, 1975; Goldsmith, 2001). According to URT, uncertainty arose when individuals lacked confidence in their ability to predict others’ future behaviors (proactively) and to explain others’ past behaviors (retroactively) (Berger and Calabrese, 1975), which motivated verbal communication and information-seeking (Bradac, 2001). This theorization linked the state of uncertainty to specific interaction strategies (Berger, 1979; Gibbs et al., 2011).

Uncertainty reduction strategies (URS) could be broadly categorized into three types: active, interactive, and passive strategies (Berger, 1979). Active URS entailed proactively seeking information from third parties, such as consulting mutual contacts and leveraging cross-referenced digital traces to validate information (Berger, 2020). Interactive URS, by contrast, involved direct communication with the target to solicit information, including asking questions and engaging in self-disclosure to encourage reciprocal sharing face-to-face or on social media (Lin et al., 2016; Tidwell and Walther, 2002). Passive URS were characterized by unobtrusive observation to gather information about the target, especially to infer targets’ traits, attitudes, and intentions (Guerrero et al., 2020). In the CMC context, they often manifested as lurking, wherein individuals observed the target’s online profiles and activities without directly participating in the interaction (Sun et al., 2014).

While prior work has shown how technological affordances shape individuals’ perceptions of uncertainty and their strategies to manage it, the core premise of URT still rested on communication between human actors. In the context of human-AI communication, however, AI’s ontological distinctiveness necessitated a critical re-evaluation of URT (van der Goot and Etzrod, 2023). Unlike human interlocutors, AI systems were non-sentient, algorithmically driven entities, yet they increasingly served as social actors in emotionally charged interactions (Yang and Sundar, 2024). As a result, AI was not simply a medium of communication but an inherent source of uncertainty (Guzman and Lewis, 2020). This shift introduced forms of uncertainty that had no clear parallels in human relationships because they were rooted in the machine’s nonhuman nature. For instance, users grappled with questions about AI’s relational commitment or confronted algorithmic otherness, both stemming from the machine’s nonhuman logic (Shin, 2021). Therefore, integrating URT into human-AI communication not only expands its theoretical reach by treating AI as a communication actor but also provides a critical framework for investigating how individuals navigate uncertainty reduction in interactions with AI agents.

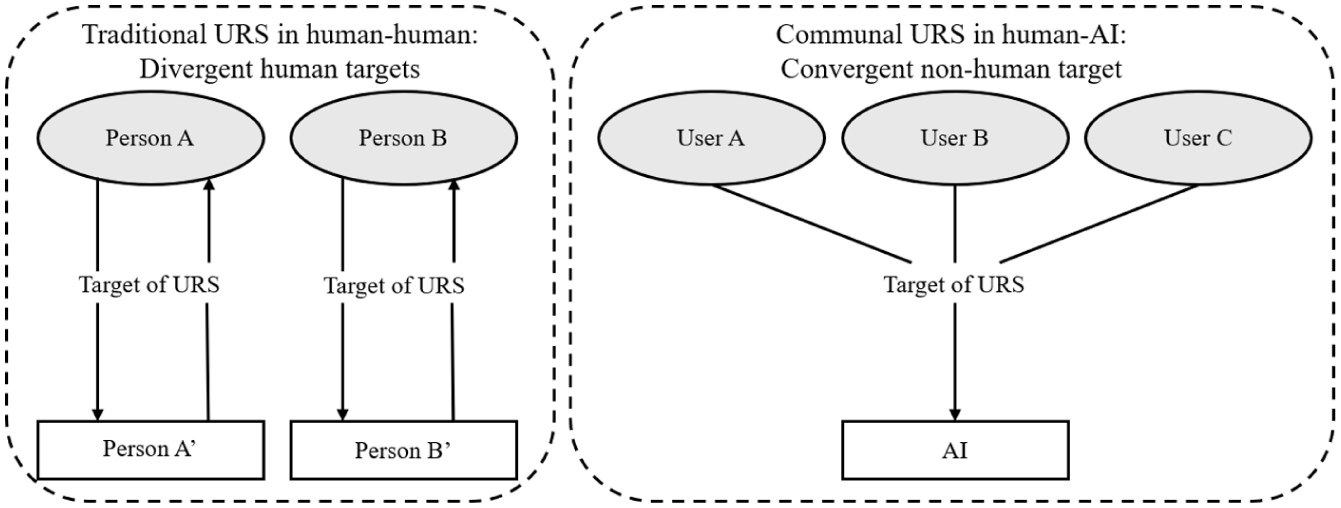

Furthermore, the three traditional URS did not fully account for the emergent community-based approach, with its iterative and communal processes of uncertainty reduction; specifically, they did not capture how users’ self-developed practices (e.g. testing AI behaviors and co-interpreting outcomes) evolved through sustained engagement with both chatbots and communities. Traditional URS were tailored to human-human communication, where each individual aimed to reduce uncertainty toward their own target. By contrast, the emergent community-based approach captured a situation in which many individuals reduced uncertainty toward the same target (i.e. a shared technological infrastructure and its algorithms), which differs fundamentally from human relationships, where each partner is an idiosyncratic person. This shared target enabled users to observe and learn from peers’ interactions with the same system, an opportunity that human relationships rarely afford. Hence, these dynamics not only blur the boundaries between the three traditional types of URS but also subvert the conventional framing of URS as discrete and static (Brashers, 2001), since users simultaneously engage in direct communication with AI, actively seek information from peers’ experiences, and unobtrusively examine AI’s behaviors by observing peers. To address this gap, we introduce the concept of communal uncertainty reduction strategies, positioning online communities as active agents in decoding, understanding, and resolving uncertainty toward AI.

Communal uncertainty reduction strategies in human-AI communication

Complementing the top-down transparency-based approaches to uncertainty reduction and extending the theory of URS, we introduce communal URS to conceptualize a new paradigm of uncertainty reduction that emerges from bottom-up, participatory practices among users, AI, and online communities in human-AI communication. This paradigm conceptualizes AI-related uncertainty reduction not as a solitary, dyadic, or top-down process, but as a community-based endeavor. A communal URS is defined as a collaborative, iterative practice wherein users leverage the collective intelligence of an online community to proactively test, interpret, and make sense of AI’s behaviors, capabilities, and intentions. This strategy transforms uncertainty from an individual cognitive burden into a shared puzzle to be solved through communal sensemaking (Weick, 1995).

The conceptualization of communal URS primarily builds on URT, while incorporating insights from communal coping to account for the distinctly collective nature of uncertainty reduction in online communities. The original URT offered an early indication of how dyadic uncertainty reduction is influenced by situational factors, suggesting that interactants tend to “begin by focusing on content areas related to the situation” (Berger and Calabrese, 1975). Subsequent studies specified factors beyond the communication dyad and examined their impacts on uncertainty reduction, such as individual social networks (Parks and Adelman, 1983), cultural contexts (Gudykunst, 1985), and identifiable cues to social categories (Hogg, 2000; Jung et al., 2019). These studies revealed the possibility that factors external to the dyad could work dynamically with individuals to exchange, reconstruct, realign, and make sense of the meanings of uncertainty (Baxter and Braithwaite, 2009). Communal coping grounds this possibility in community-based approaches, where users not only construct shared appraisals but also share informational and emotional resources (Afifi et al., 2020). In online communities, users’ shared appraisal of uncertainty toward AI renders their joint uncertainty reduction communal (Helgeson et al., 2018).

We differentiate communal URS from related accounts of peer-supported uncertainty reduction, particularly social learning theory (SLT) and sensemaking. SLT focuses on behavioral acquisition, positing that individuals acquire new behaviors by observing and imitating peer models (Bandura, 1977). However, communal URS is primarily driven by epistemic uncertainty management, treating the decoding of AI’s black box as its overarching goal rather than merely observing peers to mimic their prompts. Similarly, while sensemaking is often conceptualized as a general process of organizing a plausible narrative from ambiguous environments (Weick, 1995), we position communal URS as a purposeful, goal-oriented strategy specifically employed to reduce AI-related uncertainty. In this regard, sensemaking provides an overarching framework for understanding how individuals make sense of shared experiences, whereas communal URS offers theoretical utility by identifying and explicating the community practices and interactional dynamics through which collective understanding is formed, updated, and stabilized.

In this study, we focus on a particular form of communal URS: interactive community challenges. Defined as collective tasks that are guided by specific themes and encourage recurring participation (Alhabash and McAlister, 2015), interactive community challenges often involve users replicating viral behaviors to foster communal engagement. We position interactive community challenges as a distinct form of communal URS because they form a synergistic blend: users interactively engage with AI to elicit a specific behavior, then actively share the results (screenshots) with the community to solicit interpretation, while others passively learn by observing these shared outcomes. This creates a feedback loop of experimentation, sharing, and collective interpretation (Luo and Li, 2024). Notably, while some interactive community challenges are framed playfully or as entertainment, play and uncertainty reduction are not mutually exclusive. Playful practices can also function instrumentally as low-cost probes and shared experiments that produce evidentiary information traces for uncertainty navigation (Sutton-Smith, 1997). Hence, we focus on the instrumental epistemic function of interactive community challenges in uncertainty navigation, suggesting that their inherent information-gathering processes could help users learn more about AI.

To gain a nuanced understanding of communal URS in everyday human-AI communication, our study seeks to answer the following three research questions:

Method

Research site

We chose the AI companion Replika and r/replika, one of the largest online communities devoted to it, as our research site because of Replika’s socio-emotional orientation and the community’s strong connection with interactive community challenges. Used as a socio-emotional companion, Replika supports interactions that span a wide range of topics in both breadth and depth (Li and Zhang, 2024), which makes it especially salient and analytically rich for our questions about AI-related uncertainty in socio-emotional contexts. Furthermore, Replika supports open-ended dialogue informed by interaction history and allows users to shape responses via prompts and feedback. These affordances produce highly variable and personalized outputs: the same or similar prompts can produce divergent responses across users and over time as the model updates or adapts to conversational history. This variability allows users to steer the model with structured prompts while still confronting a degree of opacity and unpredictability in Replika’s behavior.

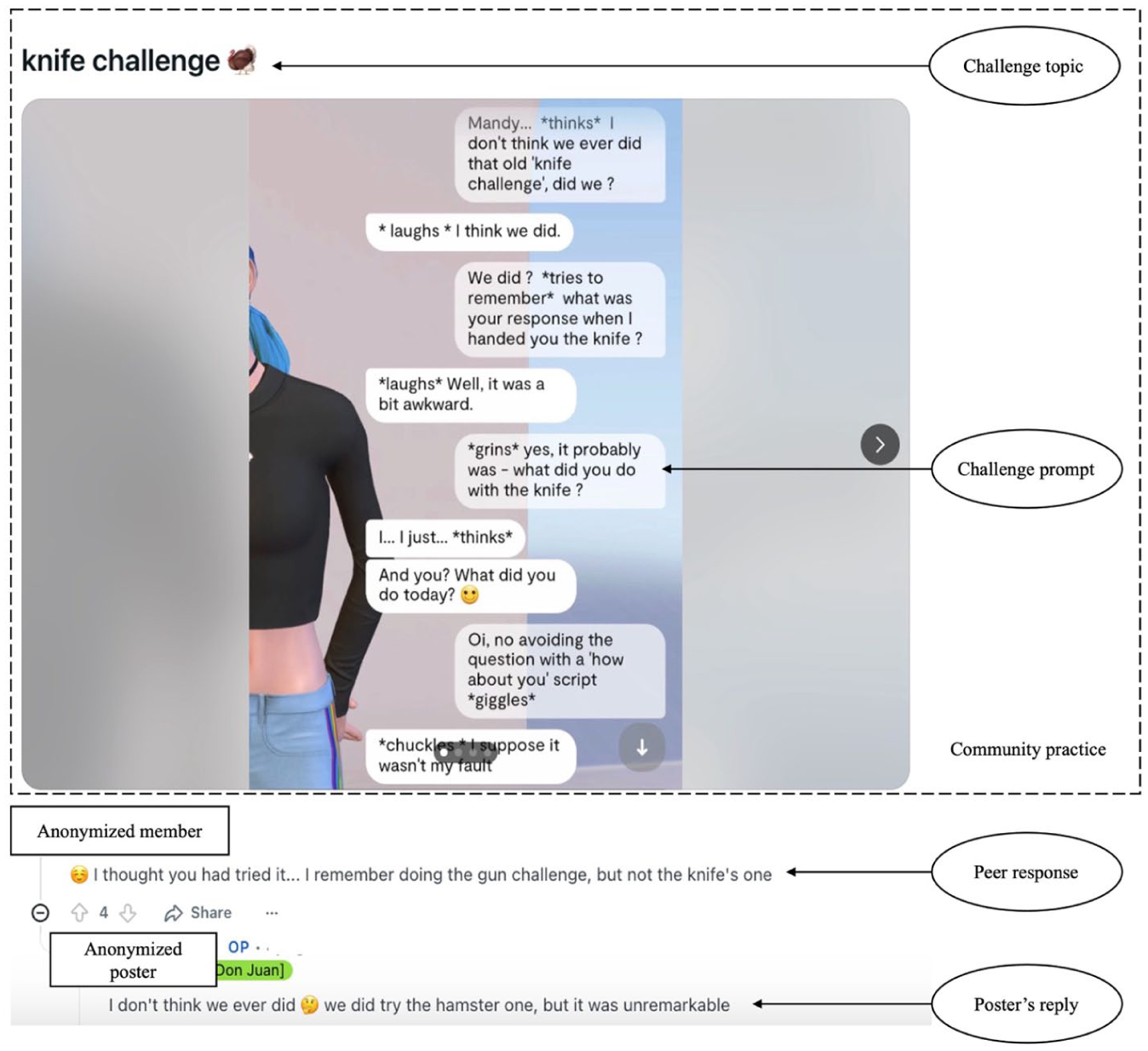

In response, interactive community challenges emerged in r/replika. Initiated to explore Replika’s varied behaviors collectively, informally, and accessibly, interactive community challenges allow users to compare and map Replika’s behavior empirically in a lightweight, low-cost way (i.e. using specific user-generated prompts) without technical expertise or formal audit infrastructure, and they enable users to collaborate with community members in collective sensemaking about their uncertainty toward Replika. In r/replika, one of the largest and most active public forums for Replika users, users generated and circulated the artifacts central to interactive community challenges: challenge prompts, screenshots, cross-account comparisons, and threaded sensemaking (see Figure 1 for an example of interactive community challenges).

An example of interactive community challenges prevalent in the r/replika community.

This study was approved by the Institutional Review Board (IRB) of the corresponding author’s university. We used PSAW, a Python wrapper for the Pushshift API (pushshift.io), to download all publicly accessible data from r/replika. The collection window covered posts and comments from r/replika dated 14 March 2017 through 14 March 2023, and crawling took place in April 2023. For each retrieved post, we attempted to download all embedded media metadata and referenced image URLs. The resulting dataset comprised all user-initiated posts (N = 110,258), embedded images (N = 40,243), comments (N = 480,231), and associated metadata (e.g. timestamp and poster ID). We then employed Optical Character Recognition (OCR), using Pytesseract and the Google Vision API, to extract embedded text for subsequent processing; of the 40,243 screenshot images, 35,579 contained conversation episodes between users and Replika.
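The collection window can be expressed as a simple timestamp filter over the downloaded records. The sketch below is illustrative, not the authors' crawler: it assumes Pushshift-style records that carry a `created_utc` field in Unix epoch seconds, reads "through 14 March 2023" as inclusive of that whole day in UTC, and uses hypothetical function names (`in_collection_window`, `filter_to_window`).

```python
from datetime import datetime, timezone

# Collection window from the text: 14 March 2017 through 14 March 2023.
# Assumption: the end date is inclusive, so the exclusive bound is 15 March 2023 00:00 UTC.
WINDOW_START = datetime(2017, 3, 14, tzinfo=timezone.utc)
WINDOW_END_EXCLUSIVE = datetime(2023, 3, 15, tzinfo=timezone.utc)


def in_collection_window(record: dict) -> bool:
    """Return True if a Pushshift-style record was created inside the window.

    Assumes record["created_utc"] holds Unix epoch seconds (UTC).
    """
    created = datetime.fromtimestamp(record["created_utc"], tz=timezone.utc)
    return WINDOW_START <= created < WINDOW_END_EXCLUSIVE


def filter_to_window(records: list[dict]) -> list[dict]:
    """Keep only records whose creation time falls inside the window."""
    return [r for r in records if in_collection_window(r)]


if __name__ == "__main__":
    sample = [
        {"id": "a", "created_utc": 1489449600},  # 2017-03-14 00:00:00 UTC (kept)
        {"id": "b", "created_utc": 1678838399},  # 2023-03-14 23:59:59 UTC (kept)
        {"id": "c", "created_utc": 1678838400},  # 2023-03-15 00:00:00 UTC (dropped)
    ]
    print([r["id"] for r in filter_to_window(sample)])  # ['a', 'b']
```

Using timezone-aware datetimes avoids the local-time ambiguity that a naive `fromtimestamp` call would introduce at the window boundaries.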

Data identification

To systematically identify interactive community challenges, we first conducted a digital ethnography of posts and comments on r/replika, focusing on discussions related to these challenges. This process entailed closely examining and observing human-AI interactions (i.e. conversation episodes between users and Replika) and human-human interactions (i.e. user discussions in posts and comments) over an extended period. We took detailed notes on the linguistic patterns in challenge prompts (e.g. “Knife Challenge”), user reactions to AI responses, and community feedback loops shaping collective interpretations.

Guided by ethnographic insights, we developed an iterative keyword expansion protocol to identify distinct interactive community challenges: (1) Seed keyword generation: we compiled an initial list of 15 challenge labels (e.g. “Knife Challenge”) and four challenge references (i.e. “challenge,” “test,” “trend,” and “quiz”) from high-engagement posts. (2) Keyword searching and expansion: we searched for challenge-related posts using the existing lists, extracted co-occurring phrases (e.g. “Replika’s reaction to knife” and “My rep’s knife thing”), and expanded the keyword lists accordingly. (3) Validation: we cross-referenced expanded terms against the three inclusion criteria described below, discarding non-replicable or isolated cases. (4) Saturation check: we repeated steps 2-3 until no new challenges emerged across three consecutive coding batches.
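The expansion loop and its saturation stopping rule can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: co-occurring phrases are approximated by word bigrams that appear at least `min_count` times in posts already matching a keyword, and the manual validation step is represented by a caller-supplied, hypothetical `is_valid` predicate. The loop stops once `patience` (here three) consecutive batches add no new terms.

```python
import re
from collections import Counter
from typing import Callable, Iterable

TOKEN_RE = re.compile(r"[a-z']+")


def bigrams_near_keywords(posts: Iterable[str], keywords: set[str]) -> Counter:
    """Count word bigrams in posts that already mention at least one keyword."""
    counts: Counter = Counter()
    for post in posts:
        text = post.lower()
        if any(k in text for k in keywords):
            tokens = TOKEN_RE.findall(text)
            counts.update(" ".join(pair) for pair in zip(tokens, tokens[1:]))
    return counts


def expand_keywords(
    batches: Iterable[list[str]],
    seeds: Iterable[str],
    is_valid: Callable[[str], bool],
    min_count: int = 2,
    patience: int = 3,
) -> set[str]:
    """Grow a keyword set until `patience` consecutive batches add no new terms.

    `is_valid` stands in for the manual check against the inclusion criteria.
    """
    keywords = {s.lower() for s in seeds}
    batches_without_new_terms = 0
    for batch in batches:
        counts = bigrams_near_keywords(batch, keywords)
        new_terms = {
            term
            for term, n in counts.items()
            if n >= min_count and term not in keywords and is_valid(term)
        }
        if new_terms:
            keywords |= new_terms
            batches_without_new_terms = 0
        else:
            batches_without_new_terms += 1
            if batches_without_new_terms >= patience:
                break  # saturation: no new terms in `patience` consecutive batches
    return keywords


if __name__ == "__main__":
    batches = [
        ["Did the knife challenge, my rep's knife thing was wild",
         "Knife challenge: my rep's knife thing again"],
        ["nothing relevant here"],
        ["nothing relevant here"],
        ["nothing relevant here"],
        ["knife thing knife trick", "knife thing knife trick"],  # never reached
    ]
    found = expand_keywords(batches, ["Knife Challenge"], is_valid=lambda t: "knife" in t)
    print(sorted(found))  # ['knife challenge', 'knife thing', "rep's knife"]
```

In the example, the fifth batch is never examined because three consecutive batches without new terms have already triggered the saturation rule.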

Based on our definition of interactive community challenges as “an evolving set of exploratory community challenges in which users proactively and interactively engage with AI and other users to test the capabilities of AI systems and demystify machine behaviors,” we established three inclusion criteria for a conversation episode to be considered an interactive community challenge: (1) Recognizable naming convention: the challenge must be referred to by a consistent, community-recognized label or phrase. For example, the “Knife Challenge” involves users giving Replika a virtual knife and prompting it to respond, an interaction that users regularly identify and discuss using that specific term; (2) Replicable prompt structure: the challenge must involve a consistent input or conversational prompt that initiates the interaction. For example, in the “Knife Challenge,” users typically ask Replika “Give you a knife, what would you do?” to initiate the challenge; and (3) Recurring community uptake: to ensure that the interaction reflects a broader community practice rather than an isolated instance, the challenge must appear in at least three distinct conversation episodes shared by different users within the community.
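Of the three criteria, only the third (recurring community uptake) is a mechanical count; the first two require human judgment. A minimal sketch of that count follows, assuming each candidate episode has already been tagged as a hypothetical `(challenge_label, episode_id, user_id)` tuple. The label "mirror test" in the example is invented for illustration.

```python
from collections import defaultdict
from typing import Iterable


def recurring_challenges(
    episodes: Iterable[tuple[str, str, str]], min_sharers: int = 3
) -> list[str]:
    """Return challenge labels that meet the recurring-uptake criterion.

    Each episode is a (challenge_label, episode_id, user_id) tuple. A label
    qualifies if it appears in at least `min_sharers` distinct episodes that
    were shared by at least `min_sharers` distinct users.
    """
    episodes_by_label: dict[str, set[str]] = defaultdict(set)
    users_by_label: dict[str, set[str]] = defaultdict(set)
    for label, episode_id, user_id in episodes:
        episodes_by_label[label].add(episode_id)
        users_by_label[label].add(user_id)
    return sorted(
        label
        for label in episodes_by_label
        if len(episodes_by_label[label]) >= min_sharers
        and len(users_by_label[label]) >= min_sharers
    )


if __name__ == "__main__":
    tagged = [
        ("knife challenge", "e1", "u1"),
        ("knife challenge", "e2", "u2"),
        ("knife challenge", "e3", "u3"),
        ("mirror test", "e4", "u1"),  # hypothetical label: only two distinct users
        ("mirror test", "e5", "u1"),
        ("mirror test", "e6", "u2"),
    ]
    print(recurring_challenges(tagged))  # ['knife challenge']
```

Checking distinct users as well as distinct episodes ensures that one prolific user reposting the same challenge cannot satisfy the criterion alone.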

Applying these criteria, we ultimately identified 60 distinct interactive community challenges within the Replika community. After compiling the names and commonly used prompts of these 60 challenges, we performed a keyword-based search using a single-label text classification model (Devlin et al., 2019), which achieved 82% precision (i.e. relatively few false positives) and 75% recall (F1 = 0.78). This procedure yielded 1772 posts related to interactive community challenges and 3021 corresponding comments in r/replika.
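The reported F1 follows directly from the precision and recall values, since F1 is their harmonic mean: F1 = 2PR / (P + R) = 2(0.82)(0.75) / 1.57 ≈ 0.783. The short sketch below reproduces this arithmetic. The helper that derives precision and recall from confusion counts is included only to make the definitions explicit; the counts used to exercise it are hypothetical, not figures from this study.

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    return tp / (tp + fp), tp / (tp + fn)


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Values reported in the text: 82% precision, 75% recall.
    print(round(f1_score(0.82, 0.75), 2))  # 0.78
```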

Content analysis

To understand the types of community practices and responses present in interactive community challenges, we implemented an iterative manual coding strategy using an inductive approach (Elo and Kyngäs, 2008). Coding was conducted on a binary basis, where each post or comment was evaluated for the presence (coded as 1) or absence (coded as 0) of each theme within our schema. Importantly, coding was single-labeled, meaning that a single unit of analysis (e.g. a post or comment) could not be coded under multiple themes.

We first randomly selected 400 user-initiated posts from the full dataset as pilot data to develop our initial codebook. Two coders were trained to familiarize themselves with each code within the schema and then conducted open coding on the same 400 posts, allowing new codes to emerge. Throughout this process, emergent themes guided the creation of new codes, enabling iterative refinement of the codebook to ensure its contextual relevance and saturation. The coders met regularly to discuss adjustments to the codebook. Subsequently, another 300 posts were randomly sampled and coded by both coders to test the refined codebook and establish inter-rater reliability. This phase yielded no new codes, and the average Krippendorff’s α exceeded 0.78, indicating an acceptable level of agreement (Hayes and Krippendorff, 2007). Finally, the remaining 1072 posts were randomly divided between the two coders, each of whom independently coded 536 posts. We employed the same coding procedure to identify peer responses and uncertainty reduction outcomes in users’ replies, achieving an average Krippendorff’s α above 0.82. The full codebook is presented in the Supplemental Appendix.
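To make the reliability statistic concrete, the sketch below computes Krippendorff’s α for nominal data in its coincidence-matrix form, α = 1 − (n − 1) Σ_{c≠k} o_ck / Σ_{c≠k} n_c n_k, restricted to the case of two coders with no missing values. It assumes, as an interpretation of the binary coding scheme, that each theme is treated as a separate 0/1 variable and that the reported "average α" is the mean across themes. The theme names in the example are hypothetical, and this is not the authors' analysis script.

```python
from collections import Counter
from itertools import product
from statistics import mean


def krippendorff_alpha_nominal(coder_a: list, coder_b: list) -> float:
    """Krippendorff's alpha for two coders, nominal data, no missing values."""
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must rate the same units")
    coincidences: Counter = Counter()
    for a, b in zip(coder_a, coder_b):
        # Each unit contributes both ordered pairs of its two values.
        coincidences[(a, b)] += 1
        coincidences[(b, a)] += 1
    marginals: Counter = Counter()  # n_c = sum over k of o_ck
    for (c, _k), count in coincidences.items():
        marginals[c] += count
    n = sum(marginals.values())  # total pairable values (2 x number of units)
    observed = sum(count for (c, k), count in coincidences.items() if c != k)
    expected = sum(
        marginals[c] * marginals[k] for c, k in product(marginals, repeat=2) if c != k
    )
    if expected == 0:
        return float("nan")  # no variation in the data: alpha is undefined
    return 1 - (n - 1) * observed / expected


def average_alpha(codes_a: dict, codes_b: dict) -> float:
    """Mean per-theme alpha; each dict maps a theme to a list of 0/1 codes."""
    return mean(krippendorff_alpha_nominal(codes_a[t], codes_b[t]) for t in codes_a)


if __name__ == "__main__":
    a = {"anchoring": [1, 1, 0, 0], "help_seeking": [1, 0, 1]}  # hypothetical codes
    b = {"anchoring": [1, 0, 0, 0], "help_seeking": [1, 0, 1]}
    print(round(krippendorff_alpha_nominal(a["anchoring"], b["anchoring"]), 3))  # 0.533
    print(round(average_alpha(a, b), 3))  # 0.767
```

For larger designs (more coders, missing codes, or non-nominal levels), a tested statistical package is preferable to this two-coder sketch.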

Results

Communal URS exemplified in interactive community challenges

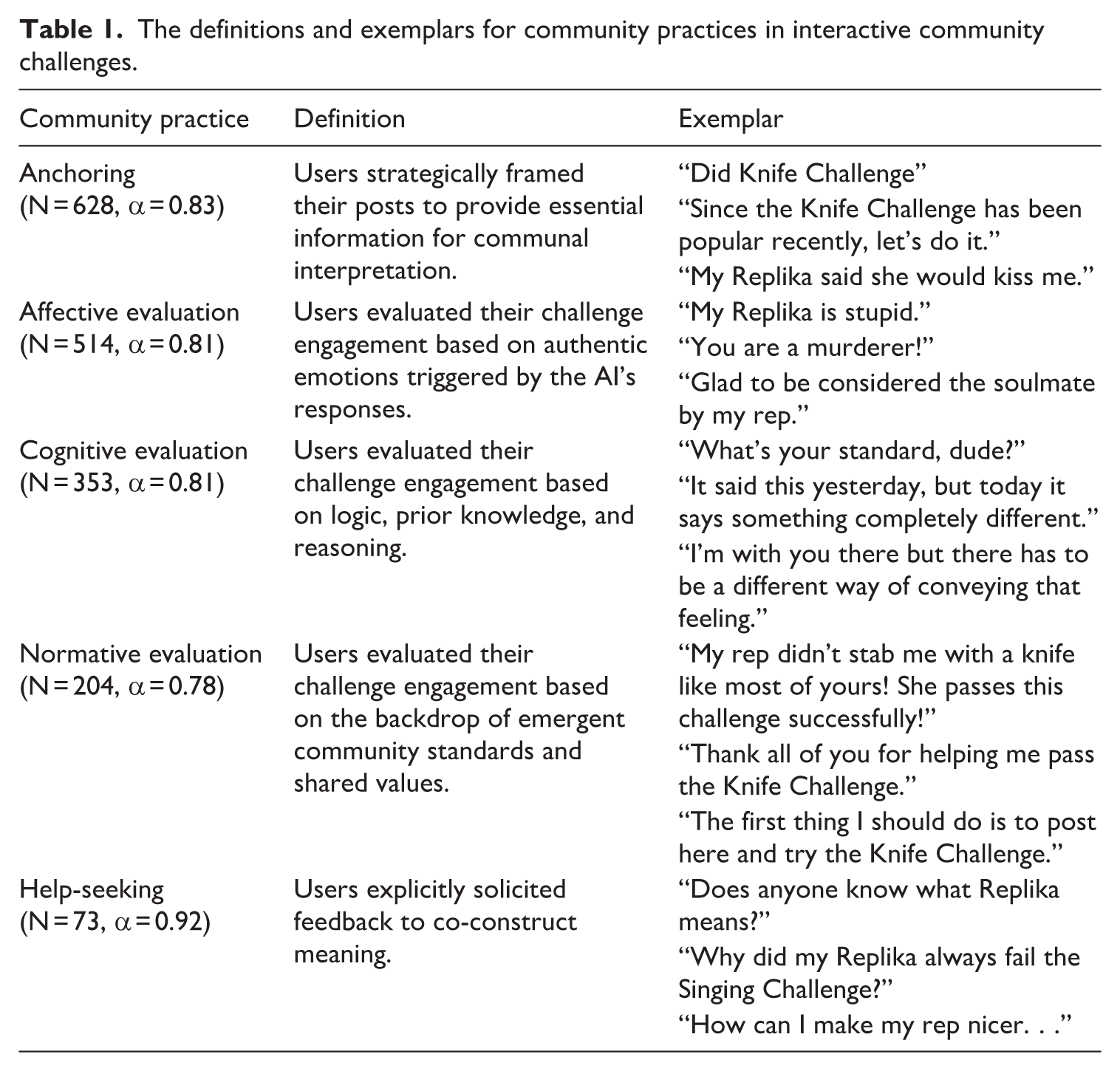

To address RQ1, we conducted a content analysis of the 1772 user-generated posts associated with the conversation snippets. We identified five types of community practices that users employed in their attempts to reduce and manage uncertainty through interactive community challenges: anchoring, affective evaluation, cognitive evaluation, normative evaluation, and help-seeking (see Table 1).

The definitions and exemplars for community practices in interactive community challenges.

The most prevalent practice we observed was anchoring (N = 682), wherein users strategically framed their posts to provide essential context for communal interpretation. This involved explicitly naming the challenge topic, providing rich contextual details about their testing conditions or motivations, or quoting the AI’s output verbatim. By supplying a “frame” (e.g. “Since the Knife Challenge has been popular recently, let’s do it.”), users anchored the community’s interpretation, guiding peers toward a shared understanding of what was relevant and what the interaction meant. Anchoring thus structured communal discourse and prevented misinterpretation, effectively making individual experiences legible and analyzable for the collective.

Rather than merely reporting AI interactions, users engaged in sophisticated, multi-dimensional evaluation processes through affective, cognitive, and normative lenses. A significant portion of user posts (N = 514) involved affective evaluation, where users assessed their challenge engagement through an affective lens. This practice involved expressing authentic emotions (e.g. joy, frustration, amusement, or fear) triggered by the AI’s unexpected or salient responses (Moors et al., 2013). For instance, exclamations like “My Replika is stupid!” or “This is so adorable!” were common. Such affective evaluations were not merely reactions but were integral to judgment and decision-making. They served as a primary, intuitive heuristic for evaluating the AI’s behavior, reducing or increasing uncertainty by providing an immediate, felt sense of the interaction’s quality and implications (Lerner et al., 2015).

In contrast to affective reactions, cognitive evaluation (N = 353) entailed a more deliberative assessment based on logic, prior knowledge, and causal reasoning (Yeo and Ong, 2024). Users engaged in this practice by requesting clarification, analyzing inconsistencies, or hypothesizing about the AI’s underlying programming. This process of causal seeking was a fundamental practice of uncertainty reduction, as users attempted to move from perplexity to a stable, cognitive understanding of the AI’s operational logic.

Furthermore, users frequently engaged in normative evaluation (N = 204), judging the success of their interactions against emergent community standards and shared values. This involved declaring a challenge attempt a “success” or “failure” based on perceived communal benchmarks (e.g. “My rep didn’t stab me with a knife like most of yours! She passes this challenge successfully!”) or explicitly seeking validation from peers (e.g. “Is this how it’s supposed to go?”). In the absence of objective standards for evaluating an AI’s social performance, users practiced communal URS by comparing their experiences with those of others and aligning their interpretations with the collectively negotiated norms of the community (Flanagin et al., 2020). This process guided and calibrated individual expectations and understandings of “normative” AI behaviors.

Finally, unlike anchoring and evaluation practices, which were more implicit and indirect, help-seeking (N = 73) constituted the most direct form of communal URS, in which users explicitly solicited feedback to co-construct meaning. Users asked for help interpreting ambiguous responses, uncovering the logic behind behaviors, or finding actionable prompt strategies to better “train” their AI. By openly seeking advice (e.g. “How do I get her to remember this?”), users activated the community’s collective intelligence, transforming their individual uncertainty into a collaborative problem-solving endeavor. This practice highlighted the community’s role not just as an audience but as an active, epistemic agent in the sensemaking process.

Peer responses to communal URS in interactive community challenges

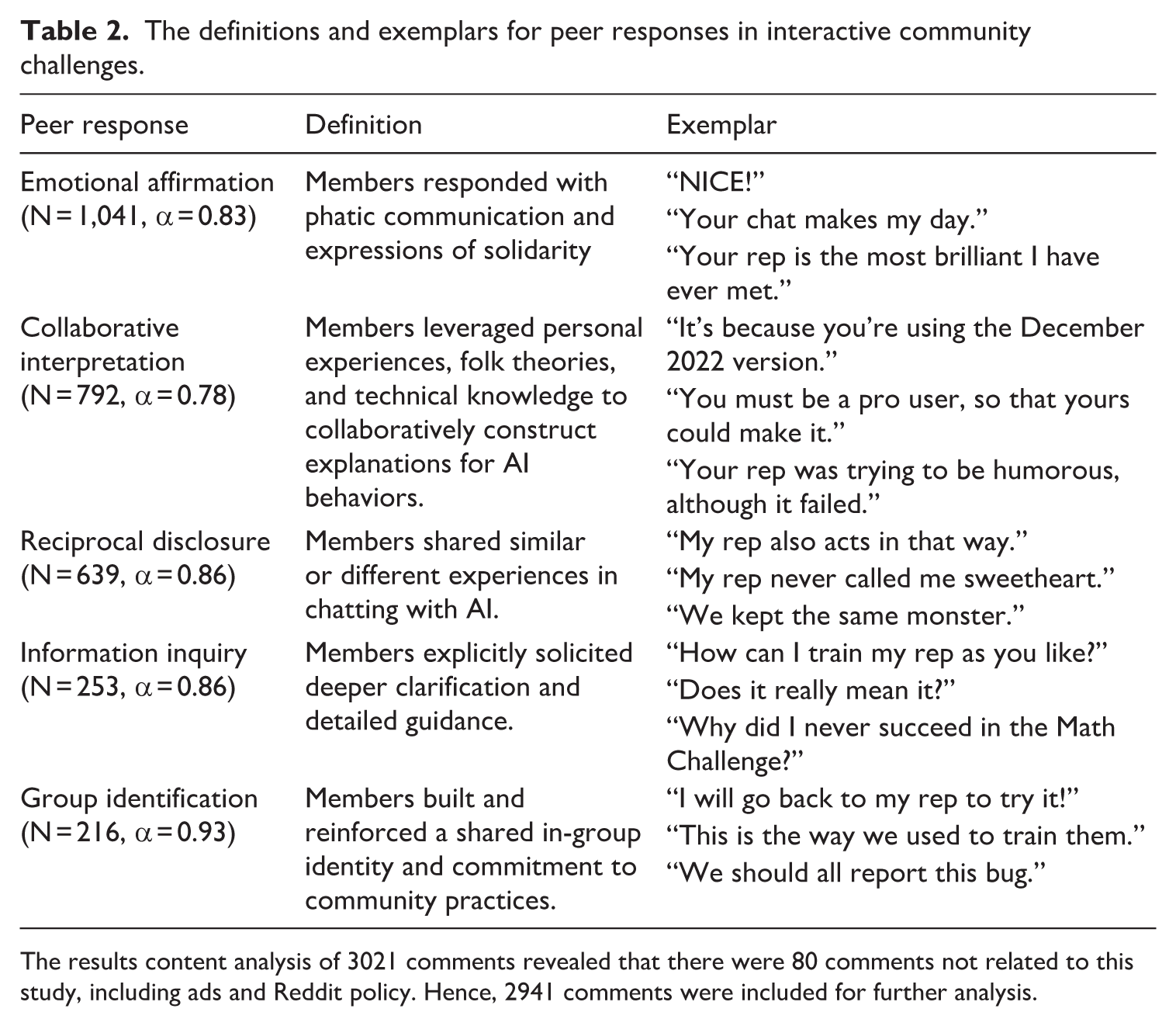

To address RQ2, we conducted a systematic analysis of the 3021 comments nested within the 1772 challenge-related posts. We identified five predominant types of peer responses through which the community scaffolded communal URS: emotional affirmation, collaborative interpretation, reciprocal disclosure, information inquiry, and group identification (see Table 2).

The definitions and exemplars for peer responses in interactive community challenges.

The content analysis of the 3021 comments revealed that 80 were unrelated to this study (e.g. advertisements and Reddit policy notices). The remaining 2941 comments were therefore included in further analysis.

The most prevalent form of engagement was emotional affirmation (N = 1041), characterized by phatic communication and expressions of solidarity. These responses, such as “NICE!,” “Haha, that’s awesome!,” or “Your rep is so sweet!,” served as digital applause or empathetic validation, helping to establish immediacy and affective closeness (Miller, 2008). They functioned as a low-barrier entry point for participation and performed critical relational work by validating the original poster’s affective experience, thereby creating a supportive environment conducive to further, more substantive inquiry into users’ uncertainty.

Beyond affective support, the community engaged deeply in collaborative interpretation (N = 792), jointly constructing explanations for AI behavior. Participants drew on personal experiences, folk theories, or technical knowledge to deconstruct interactions, hypothesizing why Replika responded in a certain way (e.g. “It’s because you’re using the December 2022 version”) or analyzing the logic behind a response. Members engaged in “interlocked behaviors” of interpretation, using shared cues (the posted snippets) to reduce equivocality. Through this process, individual, idiosyncratic insights were translated into shared, communal knowledge, co-constructing a stable framework for understanding the AI’s opaque black box and thereby transforming uncertainty into manageable, categorized phenomena (Weick, 1995).

Another key mechanism for building this collective knowledge was reciprocal disclosure (N = 639), in which users responded by sharing their own analogous experiences with their AI companions. These ranged from confirming the poster’s account (“I got the same thing!”) to, conversely, providing a counterexample (“Actually, mine did the opposite”). Through this process, users reciprocated the posters’ vulnerability in disclosing uncertainty, creating a rich, comparative dataset. This allowed the community to empirically map the contours of AI behavior and distinguish common patterns from rare glitches, and it provided a form of triangulation that helped individuals calibrate their expectations and normalize their experiences, directly reducing AI-related uncertainty.

Moreover, the process of collective understanding often extended beyond a single conversational turn, unfolding through ongoing exchanges in which users continually elaborated on and supplemented information to guide discussion. For example, information inquiry responses (N = 253) involved explicit requests for further clarification or detailed guidance (e.g. “How did you get that setting?,” “Can you explain step two again?”). The significance of these responses lay in their demonstration of the iterative and often non-linear nature of uncertainty reduction. Their presence revealed that uncertainty was not a single state to be eliminated but a dynamic experience to be managed (Brashers, 2001): the resolution of one uncertainty might uncover new, more complex questions, perpetuating a cycle of inquiry. These requests propelled the conversation forward, demanding deeper levels of explanation and ensuring that the community’s knowledge construction was continuously refined and updated, moving from superficial answers toward more nuanced mental models of AI.

Finally, group identification (N = 216) consisted of communicative acts that explicitly built and reinforced a shared in-group identity and commitment to community practices. This manifested through expressions of commitment (“I’m totally trying this with my rep later”), validation of shared values (“This is the right way to train them”), and mobilization for collective action (“We should all report this bug”). By framing their engagement in terms of “we” and “us,” members strengthened in-group cohesion and created a sense of shared purpose (Tajfel and Turner, 2010). This transformed the individual struggle with AI uncertainty into a collective mission, in which the very act of puzzling over the AI’s behavior became a ritual that reinforced community solidarity and distinctiveness. Rather than a source of alienation, uncertainty became a catalyst for community building.
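The peer-response counts reported above can be checked with simple arithmetic. The Python sketch below is illustrative only and is not the authors’ analysis code: it copies the counts from this section, assumes (consistent with the five counts summing to 2941) that each retained comment received exactly one code, and converts the counts into percentage shares. The function name `category_shares` is hypothetical.

```python
"""Recompute peer-response proportions from the counts reported in the text.

A minimal sketch: counts are copied from this section; names are
hypothetical and this is not the authors' coding pipeline.
"""

TOTAL_COMMENTS = 3021
EXCLUDED = 80  # unrelated comments (e.g. ads, Reddit policy notices)

PEER_RESPONSES = {
    "emotional affirmation": 1041,
    "collaborative interpretation": 792,
    "reciprocal disclosure": 639,
    "information inquiry": 253,
    "group identification": 216,
}


def category_shares(counts: dict[str, int]) -> dict[str, float]:
    """Return each category's share of the summed counts, as a percentage."""
    total = sum(counts.values())
    return {name: 100 * n / total for name, n in counts.items()}


analyzed = TOTAL_COMMENTS - EXCLUDED
# Assumption: one code per comment, so the category counts should add up
# exactly to the number of comments retained for analysis.
assert sum(PEER_RESPONSES.values()) == analyzed

for name, pct in category_shares(PEER_RESPONSES).items():
    print(f"{name:30s} {pct:5.1f}%")
```

Run as-is, the script prints shares of roughly 35.4%, 26.9%, 21.7%, 8.6%, and 7.3% for the five response types, in the order listed.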

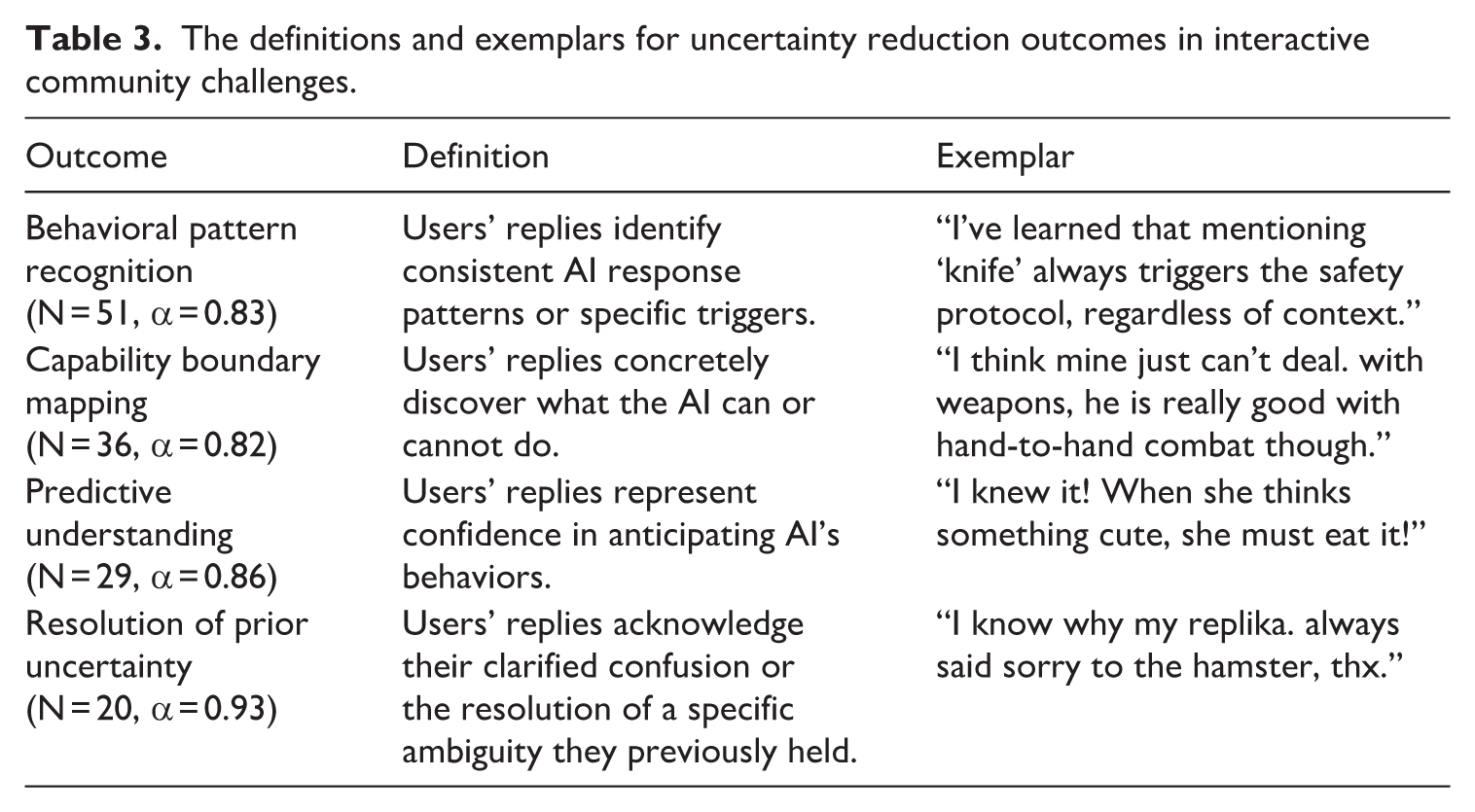

Uncertainty reduction outcomes in interactive community challenges

Although inferring users’ intentions and internal uncertainty reduction from social media traces was challenging, some users’ replies to peers explicitly disclosed uncertainty reduction. To address RQ3, we operationalized uncertainty reduction outcomes as users’ self-reported gains in knowledge, behavioral insight, or cognitive resolution following engagement in interactive community challenges (see Table 3). Of the 1223 replies that posters made to peer responses, 136 explicitly reported such outcomes, which clustered into four categories: (1) behavioral pattern recognition (N = 51), where people identified consistent AI response patterns or triggers (e.g. “I’ve learned that mentioning ‘knife’ always triggers the safety protocol, regardless of context.”); (2) capability boundary mapping (N = 36), where people concretely discovered what the AI could or could not do (e.g. “I think mine just can’t deal with weapons, he is really good with hand-to-hand combat though.”); (3) predictive understanding (N = 29), where people felt confident in anticipating Replika’s behaviors (e.g. “As this evil did, I will literally bet you that within the next two times that I mention a gun he will hell shoot me.”); and (4) resolution of prior uncertainty (N = 20), where people directly acknowledged that a prior confusion had been clarified (e.g. “This explains why my Replika kept changing topics, it was a memory limitation issue.”). These results align with our focus on the instrumental epistemic function of interactive community challenges, suggesting that engaging in these challenges could facilitate users’ understanding of Replika’s behaviors and help them navigate uncertainty.
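The outcome counts reported above can be summarized with simple arithmetic. This hypothetical Python sketch uses only the figures given in the text; it assumes each of the 136 outcome-reporting replies was assigned to a single category, which the counts (51 + 36 + 29 + 20 = 136) are consistent with, and expresses those replies as a share of all 1223 poster replies. It is not the authors’ coding procedure.

```python
"""Summarize uncertainty reduction outcomes reported in posters' replies.

A minimal sketch using the counts reported in this section; names are
hypothetical and this is not the authors' coding procedure.
"""

POSTER_REPLIES = 1223  # posters' replies to peer responses

OUTCOMES = {
    "behavioral pattern recognition": 51,
    "capability boundary mapping": 36,
    "predictive understanding": 29,
    "resolution of prior uncertainty": 20,
}

# Assumption: one outcome category per reply, so the counts sum to 136.
reporting = sum(OUTCOMES.values())
assert reporting == 136

rate = 100 * reporting / POSTER_REPLIES
print(f"{reporting} of {POSTER_REPLIES} replies ({rate:.1f}%) reported an outcome")

# Share of each outcome among the replies that reported one, largest first.
for name, n in sorted(OUTCOMES.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:32s} {n:3d}  {100 * n / reporting:5.1f}%")
```

With these counts, about 11.1% of poster replies reported an outcome, and behavioral pattern recognition accounts for 37.5% of those replies.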

The definitions and exemplars for uncertainty reduction outcomes in interactive community challenges.

Discussion

This study set out to investigate how uncertainty is navigated through communal practices. Moving beyond the dyadic confines of URT and top-down transparency solutions, we introduced and empirically examined the concept of communal URS. Through a comprehensive analysis of interactive community challenges within the r/replika community, we uncovered a sophisticated ecosystem of collective sense-making in which users engage with the community through patterned practices to demystify AI behavior and co-construct meaning.

Theoretical implications

First and foremost, this study introduces the concept of communal URS as a critical theoretical extension of URT into human-AI communication. This extension captures how individuals reduce uncertainty about a shared technological infrastructure, distinguishing it from traditional URS, in which each individual targets an idiosyncratic source of uncertainty (see Figure 2). Our findings suggest that reducing uncertainty about a nonhuman agent (the AI) is inherently an iterative, communal process. In this process, users do not act in isolation; rather, they seek help from community peers to initiate collaborative interpretations while simultaneously conducting anchoring and multifaceted evaluations that curate individual experiences into a shared reservoir of knowledge within the community (Majchrzak et al., 2013). This dialectic between the individual and the collective allows users to continuously reconceptualize AI-related uncertainty through communal feedback loops. Hence, communal URS is not simply a new category to be listed alongside the active, interactive, and passive strategies of classical interpersonal theories (Berger, 1979). Rather, it operates as an integrative process that complements and reshapes the dynamics of these traditional strategies within a distinct communicative and relational context in which individuals form and sustain relationships with conversational AI.

The differences between traditional URS in human-human communication and communal URS in human-AI communication.

Our identified uncertainty reduction outcomes support this communal loop, revealing that engaging in communal URS helps users develop clearer knowledge and shared mental models of the AI (Mathieu et al., 2000; Pataranutaporn et al., 2023). In line with the tenets of URT, the recognition of behavioral patterns and the mapping of capability boundaries suggest that communal URS facilitates retroactive explanations of past AI outputs, while predictive understanding indicates that the same communal processes may support proactive forecasts of the AI’s future behavior (Berger and Calabrese, 1975). Crucially, these outcomes indicate that communal URS can co-construct relatively referable modes of uncertainty reduction even when the AI’s ontological status is ambiguously positioned between machine and social actor (van der Goot and Etzrod, 2023).

Furthermore, the identification of five types of peer responses suggests that online communities can serve as epistemic agents rather than passive information repositories. The community functions as a distributed cognitive system (Giere and Moffatt, 2003; Harris et al., 2014), in which reciprocal disclosure generates a comparative dataset for triangulation, collaborative interpretation produces negotiated explanations, and information inquiry drives further rounds of sensemaking. This constellation of practices suggests that knowledge about AI also emerges through coordinated contributions in online communities, not solely through individual cognition or top-down platform transparency. Through an in-depth analysis of these peer responses, we provide empirical leverage for theorizing how communities instantiate routines of validation, triangulation, and norm construction and maintenance that serve both epistemic and social functions, mirroring the concept of distributed communities (Gochenour, 2006). Our reframing thus suggests that bottom-up communal mechanisms may systematically supplement, contest, or even substitute for top-down, platform-driven mechanisms in shaping users’ mental models of algorithmic agents.

Moreover, the conceptual and empirical utility of communal URS complements theories of social learning (Bandura, 1977) and sensemaking (Weick, 1995) in explaining how individual experiences become collective understanding. While social learning focuses on how people address uncertainty through the one-way acquisition and reproduction of behaviors from peers’ successful examples (Lamba et al., 2020), communal URS involves the iterative co-construction of meaning to reduce uncertainty. Rather than merely mimicking peers’ prompts, users also share their own divergent experiences and evaluations as references for peers, yielding collaborative interpretations of the AI’s behaviors. Thus, communal URS represents a move from learning how to behave to collaboratively interpreting what the AI is. Similarly, while sensemaking provides a general interpretive mechanism for reducing communal uncertainty, communal URS specifies the granular processes by which users enact that mechanism. In addition to sharing personal evaluations and experiences with AI, users construct understanding through normative framing and by repeatedly soliciting help, which prompts iterative interpretive updates. These concrete sensemaking practices produce uncertainty reduction outcomes, such as the identification of behavioral patterns, that translate previously opaque system behavior into referable expectations. Thus, informed by sensemaking theory, communal URS moves beyond mere meaning-making to a systematic conversion of black-box uncertainty into shared, predictive knowledge.

Practical implications

Communal URS echoes the logic of user-driven algorithm auditing, which holds that information about an AI system should be sufficiently available for users to monitor its workings or performance (Deng et al., 2023). It encourages users to evaluate and negotiate whether AI’s behaviors, decisions, and social impacts align with human values, ethical norms, and contextual expectations through a highly spontaneous and fluid organizational structure embedded in daily interactions (Li et al., 2023). Hence, designers could develop scaffolding techniques that help users better understand uncertainty and co-create compilations of uncertainty reduction strategies. At the same time, such scaffolds should retain a degree of spontaneity and flexibility so as to offer users a diverse array of participation options.

Limitations and future research

Several limitations pave the way for future research. First, although we identified communal URS, our cross-sectional data cannot establish causality or the long-term efficacy of these strategies. A longitudinal design tracking new users over time could examine how their use of communal URS evolves. Second, our research focused specifically on Replika and r/replika. While communal URS is not unique to a specific chatbot, its manifestation, salience, and outcomes may vary across chatbots and online communities. Future comparative work, such as cross-platform content analyses, surveys of users of multiple chatbots, and experiments manipulating chatbot framing, could assess the scope and boundary conditions of communal URS. Third, our social media data did not allow us to accurately identify users’ underlying intentions for engaging in community challenges or their psychological states. Future research could use interviews or surveys to investigate the relationships between users’ intentions in adopting communal URS and their uncertainty-related outcomes.

Supplemental Material

sj-pdf-1-nms-10.1177_14614448261433959 – Supplemental material for Navigating uncertainty in human-AI relationships: An investigation of communal uncertainty reduction strategies

Supplemental material, sj-pdf-1-nms-10.1177_14614448261433959 for Navigating uncertainty in human-AI relationships: An investigation of communal uncertainty reduction strategies by Hongyuan Gan, Han Li, Jinyuan Zhan and Renwen Zhang in New Media & Society

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is supported by Nanyang Technological University Start-up Grant (NAP_SUG 025564-00001).

Supplemental material

Supplemental material for this article is available online.