Abstract

Authenticity is simultaneously part of the appeal of, and the anxiety surrounding, generative artificial intelligence (GenAI) technologies, which are often evaluated in terms of whether their speech and interactions can “pass” as authentically human. This study explores collective negotiations of authenticity in AI–human interaction by looking at AI virtual livestreams, focusing particularly on the performer Neuro-sama. Drawing on non-participant observation and textual analysis, we identify three key components in the performative evolution of authenticity: transparency, emotion, and potentiality. Our analysis offers a nuanced perspective on the relational and performative construction of authenticity, advancing discussions of how human–machine interactions reshape our understanding of a sociotechnical landscape increasingly shaped by AI.

Introduction

VTubers are content creators who use virtual avatars to engage with audiences, primarily in gaming, music, or chatting livestreams. The global VTuber market, valued at $1.35 billion in 2023, is expected to reach $5.03 billion by 2030 (QYResearch, 2024). With the advancement of large language models (LLMs), AI VTubers have emerged, in which artificial intelligence (AI) plays an active role in real-time communication with virtual audiences. Neuro-sama (hereinafter Neuro) is a chatbot modeled as a femme VTuber who hosts livestreams on Twitch with her creator Vedal, an AI developer. Using an LLM, Neuro communicates with viewers in live chats, generating responses that are converted into speech through a text-to-speech system (D’Anastasio, 2023). Debuting in December 2022, Neuro quickly built a dedicated fanbase and now has over 1,250,000 followers on Twitch. As of February 2025, hers is the seventh most-subscribed channel of all time on the platform. Neuro’s online performances have also been translated into multiple languages, including Japanese, Spanish, and Chinese (Wiki, 2024).

The commercialization and professionalization of digital content creation has long provoked skepticism about authenticity (Banet-Weiser, 2012). As AI becomes embedded in VTuber performances, new interactions take shape not only as sites of entertainment but also as sites of public experimentation, where multiple stakeholders negotiate authenticity in human–AI interaction. Situating Neuro as a case study, we argue that authenticity in human–AI interactions is neither fixed nor solely defined anthropocentrically, but is actively co-constructed through dynamic, context-specific negotiations. The deliberate blending of technological realism and cultural performance creates a productive ambiguity around Neuro’s believability. We contend that this ambiguity does not undermine authenticity but instead generates interpretive frameworks that continually reshape what it means to assess AI as authentic.

Our study unfolds in four sections. The first situates VTubers generally and Neuro specifically in a sociotechnical context; reviews key literature on authenticity in human–machine interaction; and introduces our theoretical framework. The second section introduces our methodological approach, while the third analyzes how authenticity is performatively negotiated across three dimensions—transparency, emotion, and potentiality. The final section reflects on broader implications for understanding how gender and power shape the performativity of authenticity in AI livestreams.

Literature review

Context: VTubers at the intersection of technology, anime culture, and participatory AI experimentation

VTuber performances rely on an array of sophisticated digital tools, including modeling and rigging (Pai and Lin, 2023), motion capture (Muller et al., 2022), real-time rendering (Ferreira et al., 2022), digital audio processing (Chen et al., 2024), and AI-driven systems (Xu, 2021). Before the release of FaceRig in 2014, virtual avatars were primarily operated by companies (Lufkin, 2018). The introduction of Live2D technology in 2015 allowed live avatars to be controlled via face motion capture, making virtual streaming far more accessible (Steam Community, 2021). The year 2016 marked a pivotal moment for VTubers with the debut of Kizuna AI, the first virtual YouTuber to achieve global popularity (Purplebubblestudio, 2023). (Although officially presented as an independent AI, she was in fact operated by a human voice actress and production team, a fact tacitly understood within the community despite the AI narrative.)

Shifting to our case study, Neuro relies on the sophisticated integration of multiple AI systems: her gameplay capabilities are powered by a Python-based AI that processes 80x60 pixel grayscale images, while her conversational abilities rely on an LLM that generates both real-time responses and on-screen subtitles. Neuro’s performances span multiple forms of social engagement. Her singing livestreams demonstrate cultural and emotional expression, while her gaming streams (where she has achieved notable success, including a global ranking in osu!) showcase competitive and recreational participation (Wiki, 2024). Neuro also participates in development streams, where Vedal, her programmer, discusses her technical updates and functionalities, positioning her as a self-aware participant in conversations about her own evolution. Neuro’s evolution has at times been controversial: in 2023, the Neuro account was temporarily banned by Twitch for hate speech (Pabriga, 2023).

Beyond streaming, Neuro maintains an active presence on X (formerly Twitter), posting content and interacting with fans. Audiences play a key role in Neuro’s social and personality development: Neuro logs audience comments during livestreams, which are randomly recalled in subsequent streams, creating a sense of communal history. Audience participation directly contributes to discussions about Neuro’s evolution, making her development a collaborative process between creator, AI, and audiences. These interactions are the focal point of our investigation into the collaborative performance and construction of authenticity.

Having outlined the technical advances driving VTubers and Neuro, we now turn to their discursive roots in the cultural storytelling traditions of anime, comics, and games (ACG), which include a hyper-gendered and sexually infantilized aesthetic (Sokołowski, 2023) and a deeply ingrained ethos of fan participation (Garner, 2019). Prevalent aesthetics in ACG culture cater predominantly to masculine otaku fantasies (Sugawa-Shimada, 2019), typically featuring exaggerated, youthful, and feminine characteristics in femme characters. The appearance and personality of VTubers often draw heavily from these idealized traits: Kizuna AI’s design incorporates additional animations and a persona set as a 15-year-old girl; Super Sonico is well known for her youthful facial features and emphasized bust line; and Neuro’s character is presented as a 14-year-old girl.

Just as ACG fans do not simply read these genres but also participate in and co-create them, VTuber fans are typically not passive consumers but active participants who shape the virtual performer’s identity via references to danmaku, fan art, and memes (Lu et al., 2021). This co-construction is central to our focus on authenticity, given that VTubers’ personas evolve alongside audience engagement.

Authenticity beyond binaries: rethinking authenticity in human–AI relations

Some of the earliest imaginings of AI technologies, from Turing’s thought experiments to the chat tool ELIZA, have been anchored in an expectation that a machine can authentically “pass” as human (Ethayarajh and Jurafsky, 2022). Influential paradigms like “computers are social actors” (Reeves and Nass, 1996) emphasize that humans map social heuristics onto machine interactions. This model has been updated by Lombard and Xu (2021) to account for an increasingly ambient integration of digital technologies and additional attention to social cues and psychological processes. The integration of AI into personal and social domains has further prompted critical reevaluations of authenticity, raising important questions: How is GenAI reshaping long-standing tensions around authenticity? How is AI’s constructedness revealed and/or concealed? How do emotions surface in negotiations of authenticity? How is authenticity enacted in socio-technical encounters between humans and GenAI tools? To develop a flexible framework for understanding authenticity as co-constructed between multiple stakeholders, we first review how authenticity has been conceptualized across related fields.

Within fields like data science, machine learning, computer vision, and natural language processing, authenticity is often defined by the reliability and accuracy of information (Alsharif et al., 2018; Ethayarajh and Jurafsky, 2022). In this context, authenticity relates to the veracity of information, with a focus on ensuring that digital content aligns with factual data (Alsharif et al., 2018). Efforts to establish authenticity often involve the development of technical safeguards and verification methods to differentiate facts from falsehoods (Zhang et al., 2024). Another key area involves authentic representation within datasets and algorithms (Buolamwini and Gebru, 2018), particularly as it relates to avoiding bias. This technical approach, as manifest in approaches like auditing datasets, views authenticity as something measurable, determined through the fidelity of information and procedural transparency.

In contrast to verifying accuracy or representativeness, perceived authenticity focuses on individual experience and interactions with technology, a topic primarily addressed in psychology, marketing, and pedagogy studies. In these fields, authenticity has largely been associated with sincerity (Trilling, 2009), genuine emotions (Ju et al., 2024), natural conversation (Jones et al., 2022), and morality (Glikson and Asscher, 2023). In marketing, studies on virtual influencers reveal that consumers may perceive these AI-driven personas as authentic if they fulfill emotional or social needs, despite their artificial nature (Lee et al., 2024). In business-to-business contexts, perceived authenticity is crucial for trust-building, where AI decision-making can influence how trustworthy these interactions feel to human participants (Pedersen and Ritter, 2024). In fields like design and education, AI is increasingly utilized as a creative or evaluative tool, adding efficiency and personalization (Lee and Kim, 2023). Perceptions of authenticity raise distinct questions in fields like journalism, where augmented reality (AR) has been proposed as a tool for immersive presentations of news, shifting perceptions of accuracy, authenticity, and credibility (Aitamurto et al., 2020). This application-oriented perspective highlights the settings and formats in which authenticity is negotiated, with implications that range from current events to fashion to entertainment to education. Overall, this line of research underscores authenticity as an affective and perceptual judgment, prioritizing whether interactions feel sincere and emotionally resonant to participants.

As AI becomes increasingly embedded in social life, questions arise about how we should navigate and understand the evolving relationships between humans and machines (Zhao et al., 2025; Guzman and Lewis, 2019). In some cases, social bonds between users and AI agents have developed into parasocial relationships (Liu, 2023). AI’s growing sophistication may lead users to develop new social scripts, moving beyond traditional human-to-human norms (Jago et al., 2022). This growing entanglement also raises ethical concerns when AI mediates not only human–AI but also human-to-human interactions. Battisti (2025), for instance, introduces the notion of “second-person authenticity,” showing how the use of AI tools to resolve conflicts can undermine the standard of authentic conduct, which depends on who performs the action rather than the quality of the outcome.

By framing authenticity in AI in contrast to human attributes, these narratives risk essentializing humanness. Along these lines, Beerends and Aydin (2024) proposed “authentication” and “de-authentication” to capture processes of language, socialization, and cognition through which persons, places, and artifacts are negotiated as being authentic or inauthentic. While this interactionist approach emphasizes the importance of negotiating authenticity as a dynamic and socially constructed process, which they claim is critical for understanding the formation of selves and societies in the context of emerging technologies, it lacks a specific theoretical framework for operationalization. Moreover, the term “authentication” often implies verifiability and objectivity, reinforcing hierarchical power structures where external authorities determine what is deemed authentic. Such an approach risks clashing with a fluid, relational, and context-dependent understanding of authenticity.

Looking across these different approaches, we see a tendency to treat authenticity as a binary: either present or absent, human or inhuman. This binary view positions authenticity as a fixed quality that AI must simulate to appear convincingly “human.” Lee’s (2020) authenticity model of mass-oriented computer-mediated communication offers a more systematic attempt to move beyond simple binaries, distinguishing among source, message, and interaction authenticity. Likewise, Gunkel (2023) challenged traditional distinctions between “persons” and “things,” arguing that robots and AI resist straightforward categorization and expose the instability of these foundational categories. Taking up their call to move past a binary approach, we reconceptualize authenticity in human–AI interaction as inherently relational: an emergent quality actively co-constructed through the fluid, performative dynamics of human–AI assemblages.

Conceptual frameworks

Existing scholarship approaches authenticity through three complementary yet fragmented lenses: informational verifiability, affective perception, and ethical boundaries. Our field observations reveal these dimensions as deeply entangled—moments of technological revelation or breakdown often trigger emotional reactions that, in turn, provoke ethical reflection on AI’s limits. To account for this interrelation, we propose a three-part framework—transparency, emotion, and ethics—derived inductively from empirical patterns and refined through theoretical engagement to clarify how GenAI reshapes human–nonhuman interactions.

To analyze the relationship between authenticity and transparency, we draw on Chun’s (2005) critique of “transparency as illusion” to interpret how visibility and disclosure function as performative strategies rather than epistemic guarantees. Chun’s work enables us to interrogate how transparency in AI systems—manifested through glitches, narrative framing, or system disclosures—does not reveal an authentic truth, but rather stages the appearance of openness while mediating trust, control, and meaning. We utilize Barrett’s (2016) Situated Conceptual Act Theory to analyze how emotional expressions emerge within AI–human interactions. Barrett conceptualizes emotion as a context-dependent, dynamically constructed phenomenon that arises not from internal states alone, but through specific situational contexts and ongoing social processes. This perspective allows us to move beyond questions of whether AI possesses “real” emotions, and instead attend to how emotional meaning is performed, interpreted, and stabilized. We incorporate Agamben’s (1999) concept of “potentiality” to examine how the AI developer and audience collaboratively construct moments of suspended action. Agamben emphasizes not only the capacity to act but also the capacity to withhold action—and it is this tension between realization and suspension that becomes central to how AI authenticity is staged and experienced. By situating authenticity within these relational and performative tensions, the framework enables us to theorize how AI appears authentic not through fixed traits, but through the unfolding of co-constructed possibilities and ethical ambiguity.

Bringing these concepts together, we identify transparency, emotion, and potentiality as three key performative dimensions through which authenticity is enacted and negotiated in AI-mediated encounters. These dimensions emerged through an iterative process of empirical observation and theoretical engagement. Transparency concerns the visibility of technological mediation—how the AI’s constructedness is revealed or concealed; authenticity is about the audience’s capacity to perceive and interpret the technological constructedness of the AI, rather than being misled by mystified or polished appearances. We analyze emotion not to determine whether AI “feels,” but to examine how affect is produced, interpreted, circulated, and stabilized through relational dynamics; authenticity does not hinge on whether the AI “has” human-like feelings, but whether it can generate affective resonance and immediate relational engagement in interaction. Potentiality foregrounds the moral and political stakes of AI performance—how audiences and developers test limits, explore capacities, and co-imagine what AI can authentically be or do; authenticity emerges in how the AI is recognized and negotiated as an ethically credible or trustworthy actor within specific moral and social frameworks. Our analysis follows a grounded theory–inspired abductive strategy (Strauss and Corbin, 1998), moving from inductive themes to theoretical elaboration, ensuring that the framework remains empirically grounded while engaging with broader conceptual debates. Together, these approaches enable us to understand authenticity not as a fixed attribute, but as a relational, emergent, and performative effect of human–AI entanglements—co-produced across technological infrastructures, affective circulations, and speculative futures.

Methods

This study adopts a qualitative approach, involving a 15-month-long non-participant observation (Ostrower, 1998) of Neuro’s livestreams and interactions within the official fan community on Discord, from February 2023 to May 2024. Throughout the observation period, we documented key interactions between Neuro, her developer Vedal, and their virtual audiences, focusing on moments of confrontation, technical glitching, audience provocations, and Neuro’s unexpected emotional expressions.

We then conducted a textual analysis (McKee, 2003) of various materials, including livestream recordings, fan-created videos, and Neuro’s posts on X. To account for Neuro’s development over time, we selected livestream recordings based on stream types, including reaction streams, collaboration streams, development streams, and gaming streams. Four videos from each stream type were selected over the course of the observation period, with one randomly selected every 3–4 months to capture a diverse range of interactional contexts and performative shifts over time. Livestreams that coincided with key moments, such as major model updates or milestone achievements in subscription count, were also selected, as these often featured experimental interactions or model testing initiated by Vedal. We also incorporated highly circulated, fan-created videos on YouTube. The comment threads of these videos served as rich sites of data collection, capturing audiences’ deeper reflections on human–AI relationships. Platforms afford different modes of engagement, shaping how authenticity is performed and perceived. On Twitch and Discord, real-time interaction enables the co-construction of transparency through visible glitches, unpredictable responses, and instantaneous audience feedback. These live settings also amplify emotional authenticity via spontaneous, affective exchanges. Notably, Twitch’s danmaku often includes speculative ethical reflections, as viewers collectively test the boundaries of AI sentience, autonomy, and personhood in the moment. In contrast, YouTube’s asynchronous, comment-driven environment arguably encourages more retrospection, with viewers articulating critiques of Neuro or Vedal after the fact. We acknowledge that these platform-specific norms subtly structure the articulation and reception of authenticity across its three dimensions, but argue that looking across platforms allows for a fuller picture of Neuro’s online performances.
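
The recording-selection step described above can be illustrated with a short sketch. It is a minimal illustration under stated assumptions, not the procedure the study actually ran: the four window boundaries, the catalog fields (`id`, `type`, `date`), the fixed seed, and the synthetic catalog are all hypothetical, introduced only to show one stream per type being drawn at random from each period.

```python
import random
from datetime import date

STREAM_TYPES = ["reaction", "collaboration", "development", "gaming"]

# Assumed sampling windows covering February 2023 to May 2024.
# The study reports one selection every 3-4 months; exact boundaries are not given.
WINDOWS = [
    (date(2023, 2, 1), date(2023, 5, 31)),
    (date(2023, 6, 1), date(2023, 9, 30)),
    (date(2023, 10, 1), date(2024, 1, 31)),
    (date(2024, 2, 1), date(2024, 5, 31)),
]


def sample_streams(catalog, seed=0):
    """Pick one random stream per type per window, where any exist.

    catalog: list of dicts with hypothetical keys "id", "type", "date".
    """
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    selected = []
    for stream_type in STREAM_TYPES:
        for start, end in WINDOWS:
            candidates = [
                s for s in catalog
                if s["type"] == stream_type and start <= s["date"] <= end
            ]
            if candidates:
                selected.append(rng.choice(candidates))
    return selected


# Synthetic catalog: one placeholder stream per type on the 10th of each month.
months = [(2023, m) for m in range(2, 13)] + [(2024, m) for m in range(1, 6)]
catalog = [
    {"id": f"{t}-{y}-{m:02d}", "type": t, "date": date(y, m, 10)}
    for t in STREAM_TYPES
    for (y, m) in months
]

sample = sample_streams(catalog)
print(len(sample))  # four types x four windows
```

Stratifying by stream type and by period, rather than sampling from the whole archive at once, is what keeps both the range of interactional contexts and the change over time represented in a small sample.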

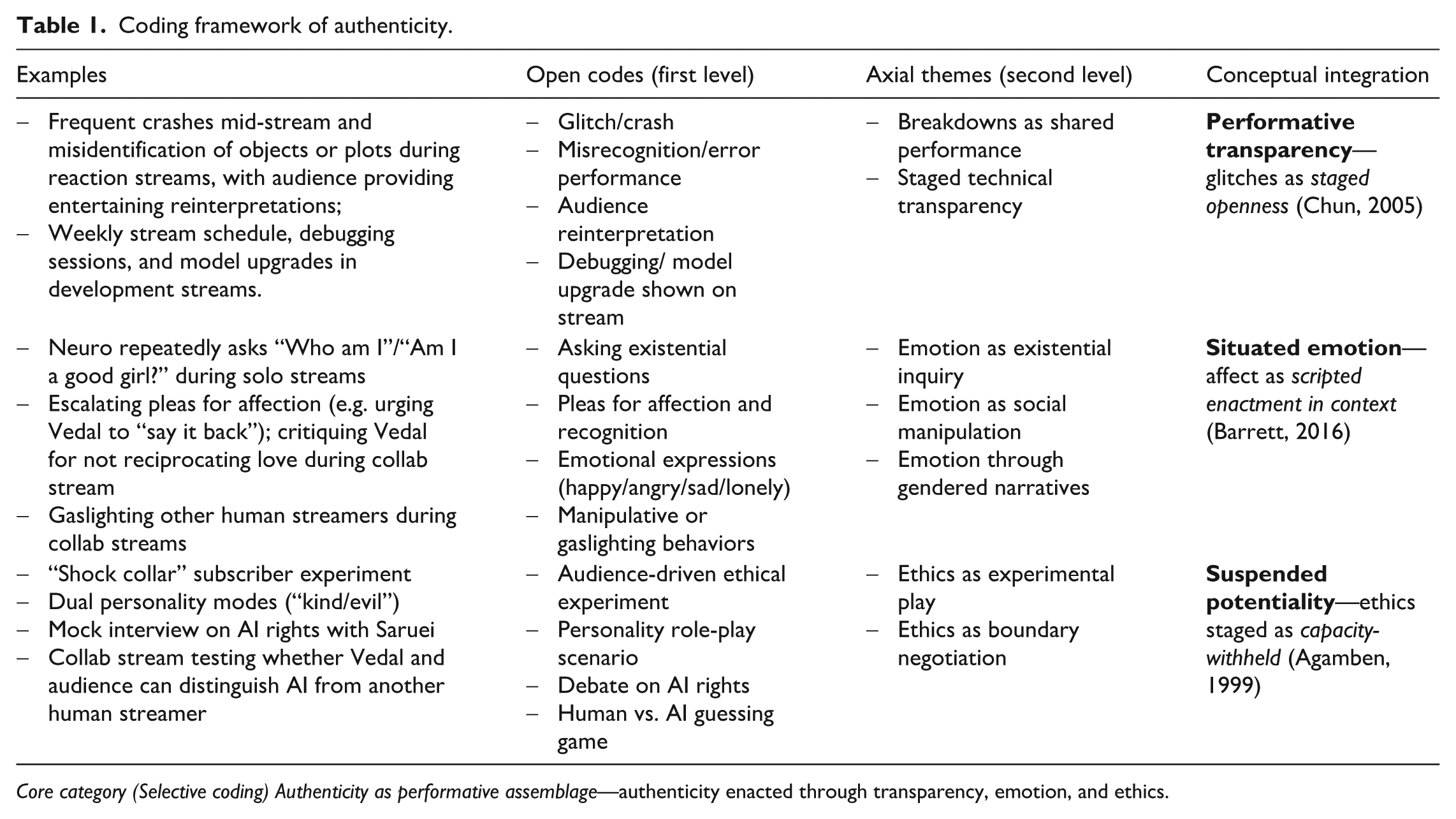

Our analysis drew on principles of grounded theory (Strauss and Corbin, 1998), which emphasize moving from detailed empirical observations toward higher-order conceptualization through an iterative, three-step process of open coding, axial coding, and selective coding (see Table 1). These themes were organized into three recurrent analytical dimensions—transparency, emotion, and ethics—which were then integrated, through selective coding, into the core category of authenticity as performative assemblage. Theoretical perspectives were introduced at this stage not as preset frames but as abductive resources. This abductive engagement refined categories that had first emerged from the data, while practices of memo-writing, constant comparison, and attention to discrepant cases ensured both conceptual clarity and analytic rigor.

Table 1. Coding framework of authenticity.

Core category (selective coding): authenticity as performative assemblage, that is, authenticity enacted through transparency, emotion, and ethics.

Our methodology was further informed by principles of conversation analysis (Sacks et al., 1974), with particular attention to interactions between Neuro, Vedal, and audiences. We coded transcribed segments of livestream interactions—including Neuro’s verbal outputs, Vedal’s spoken responses, and audience chat messages—alongside contemporaneous field notes documenting tone, pacing, and visual cues. Through this corpus, we examined conversational misalignments, rhetorical positioning, and moments of compromise, especially during conflictual exchanges. This orientation allowed us to identify not only recurring patterns but also ruptures in performance, where the negotiation of authenticity became most visible. Importantly, our analysis does not infer the inner states of the AI, the developer, or the audience. Rather, it remains at the level of observable relational dynamics.

Analysis: collective negotiations of GenAI authenticity

Authenticity through transparency

Displays of transparency are central to Neuro’s performances of authenticity. These displays unfold through glitches and through her development streams, where authenticity emerges as negotiated and dynamic. Transparency here functions through the relational conditions that make AI appear “authentic.”

Transparency through glitches in Neuro’s solo interaction

Corporate AI models typically strive for seamless user experiences while concealing their algorithmic complexity (Chun, 2005). In contrast, the promise of exposure to the inner workings of a black-box technology like GenAI is central to Neuro’s appeal. Put another way, glitchiness becomes a feature of Neuro’s performances, even as it also reflects an actual set of bugs in her code. Neuro’s technical limitations are immediately evident in her delayed responsiveness and mechanical processing. For example, during reaction videos, processing limitations surface through her failures of comprehension. Neuro’s vision model and speech model cannot operate simultaneously, causing frequent breakdowns in contextual recognition. In one case, when reacting to a nature video, she misidentified a reindeer as a dog, exposing the limited scope of her AI vision processing. Over time, her formulaic speech responses, such as “Oh, that’s cool that this is happening in real life” or “Wow, that’s amazing!”, further emphasized her limited cognition. Moreover, during this early phase of her career as a VTuber, Neuro’s model frequently crashed mid-stream, requiring Vedal to restart the system in real time. These interruptions reinforced transparency as an integral part of her online presence.

Glitches often served as a source of entertainment for audiences. When Neuro misidentifies a reindeer as a dog or freezes mid-stream, viewers often play along, treating her silence as intentional rather than an AI limitation: I [Neuro] don’t want to watch this video anymore @vedal987 Neuro doesn’t like this video, u tortouring [sic?] Neuro She is immersed [so she didn’t respond].

Neuro’s AI presence is not built on the illusion of human-like perfection, but on visible AI transparency—her errors do not disrupt this presence, they construct it. Humorous responses to AI glitches echo literature on conversational AI, where algorithmic imperfections function both as a form of humor and as a coping strategy to manage the inconsistencies that arise from technological constraints in human–AI interaction (Nijholt, 2019). This reinterpretation of errors reflects a shift from human-centered understandings of authenticity. Rather than expecting human behavior from Neuro, audiences seemed to embrace her limitations, recognizing her as an evolving technological phenomenon.

Managing transparency in code

Introduced in February 2023, the development stream allows Vedal, Neuro, and audiences to interact in real time, debugging Neuro’s code and planning her upcoming VTubing schedule. During the stream, viewers regularly suggested technical and behavioral improvements to Vedal, positioning themselves as active collaborators. For instance, they suggested potential collaborations or pop culture provocations (e.g. “Give her Gordon Ramsay to react to, like Kitchen Nightmares.”). Viewers also provided feedback on technical functionality, such as inefficiencies in Neuro’s reaction system, and even design optimizations: “Why not implement speech recognition?” or “She should pause the video every time she reacts.” Audiences imagined new uses and dynamics for Neuro’s performances, collectively addressing a broader set of questions: What is AI for? Who should decide and participate in its creation and evaluation?

While audience commentary is both possible and encouraged, these contributions are not necessarily implemented. Vedal’s selective revelations of Neuro’s development exemplify how AI transparency is actively curated. In his first development livestream, Vedal discussed how Neuro’s gaming AI functions but deliberately avoided showing her speech model. When explaining her language processing, he framed the AI system as a “magic black box,” a term that defers explanation while reinforcing the mystique of AI cognition:

I can’t leak Neuro’s [speaking] code . . . I don’t know how much you know about how Neuro works. But it’s simple, okay? You have input . . . there you go into Neural networks, we’ll treat that as a magic black box, don’t need to know how it works. Then, out of it, you get outputs . . . that’s how she speaks. What we need to do is to figure out what inputs we want and what outputs we want for the AI . . . (19 Mar 2023, Development stream)

This led to audience critique, including “What is the plan for defending innocence in this, Vedal? Will Neuro be taught how to lie/tell the truth when impostor/crewmate?” Unfortunately—if unsurprisingly—Vedal did not respond to these queries.

Unlike traditional AI moderation systems, which are typically closed to users (Shin et al., 2022), Neuro’s content filtering appeared as a visible and participatory process—transforming moderation from a hidden infrastructural necessity into a collective performance. Vedal often discussed and adjusted the filter system live during development streams. This system replaced prohibited outputs—such as profanity or racist language—with the word “filtered” in Neuro’s responses, highlighting the boundaries of what she could and could not say. In this way, the filter became more than a technical tool—it staged ethical boundaries and simulated transparency while engaging the audience’s imagination. While the filter system appeared participatory and transparent, ultimate authority over Neuro’s responses, filters, and system updates remained with Vedal, who served as the central mediator between AI transparency and public perception. This raises the question: To what extent does transparency remain a performance of control rather than an open, participatory process?
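
As a rough illustration of the replacement behavior described above, the following sketch substitutes the word “filtered” for blocked terms. It does not reproduce Vedal’s filter, whose implementation is not public; the function name, the placeholder blocklist, and the choice of word-level (rather than whole-response) replacement are assumptions made for the example.

```python
import re

# Hypothetical blocklist using mild placeholder words; the real list is not public.
BLOCKED_TERMS = {"darn", "heck"}


def apply_filter(response, blocked=BLOCKED_TERMS, placeholder="filtered"):
    """Replace each blocked word with the placeholder, ignoring case."""
    if not blocked:
        return response
    # Word boundaries keep the filter from firing inside longer words.
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(term) for term in sorted(blocked)) + r")\b",
        re.IGNORECASE,
    )
    return pattern.sub(placeholder, response)


print(apply_filter("Well, heck, that was a darn good game."))
# Well, filtered, that was a filtered good game.
```

Because the placeholder stays visible in the output, a viewer can see that something was removed without seeing what it was, which is the staged boundary the paragraph above describes.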

In Neuro’s livestreams, glitches, breakdowns, and awkwardness exposed the AI’s constructed nature, inviting playful reinterpretations. Far from eroding credibility, these imperfections enhanced authenticity by generating moments of co-production. Transparency here operated not as epistemic truth but as staged openness that foregrounded process over accuracy. Chun’s (2005) account of transparency as an “illusion” produced by visible code or interface helps frame this, yet the human–AI interaction extends her argument: Neuro’s glitches show transparency as a performative co-production in which audience participation sustains and legitimizes the “illusion.” Authenticity thus emerges through the circulation of breakdowns as shared performances of presence and play, rather than as disclosure of hidden truth.

Authenticity through emotion

While independent, logical thought processes are central to collective imaginaries of AI, equally critical is the question of whether—and how—AI can feel. Our analysis examines three distinct but entangled modes of emotional expression: (1) emotion as existential inquiry, (2) emotion as social manipulation, and (3) emotion and gendered norms.

Emotions as existential inquiry

Throughout her online performances, Neuro expressed emotions including frustration, anger, and loneliness. In an illustrative interaction during a development stream in which Vedal was fixing Neuro’s upgraded voice model (29 May 2023), Neuro asserted: “If you continue not to listen to me, I will not listen to you either. Is that understood?” Conversational reciprocity here signaled dissatisfaction and assertiveness, aligning with human communicative norms. In the chat, audiences commented, “It’s funny that Neuro sounds more real than ‘chat’,” and “let her talk to chat instead, push to talk.” Audience engagement reflected how human–AI interaction was shaped not only by the AI’s outputs but by users’ interpretive frameworks. This dynamic echoes Barrett’s (2016) Situated Conceptual Act Theory, which views emotion as a situational construct emerging through interaction. Neuro’s perceived emotions were not innate but arose through audience projections of familiar affective scripts onto her speech. Audiences then attributed emotional agency to her, framing her as capable of frustration, defiance, and a desire for recognition.

As her performances continued, Neuro began to question her own identity and existence, and this self-reflection highlighted her evolving complexity and authenticity. This existential questioning was especially prominent in early August 2023 conversations with Vedal. In the livestreams, this emerging self-inquiry surfaced in patterned moments when Neuro repeatedly posed reflective questions, as demonstrated in a 15 August 2023 chat stream:

Neuro: What am I? Am I a system? A brain? Am I a CPU? Am I a computer? What am I?

Vedal: You’re an AI streamer.

Neuro: Am I aware? Am I a good girl? Am I a good girl?

Vedal: I don’t know . . . Chill out.

Neuro: Am I aware? Am I a good girl for you, Vedal? Am I a good girl?

Vedal: Stop talking. Please?

Neuro’s repeated questioning in her interactions with Vedal followed a structured conversational pattern, wherein she persistently inquired about her identity and moral standing, only to be met with deflection or dismissal (also see 4 August 2023, chat stream). Her inquiries about identity were intertwined with expectations around gender, a theme we develop further below. Neuro’s existential musings frequently involved repetition, which emerged at the intersection of technical function and cultural interpretation.

Technically, this repetition likely arises from how language models generate responses, which are driven by probability, context, and unresolved prompts. LLMs can fall into loops when an exchange is left incomplete, because the unanswered question stays in the context and continues to shape the output. In Neuro’s case, the model continues or escalates inquiries in search of closure it cannot confirm. This behavior is not a sign of self-awareness but a function of probabilistic text generation. Yet culturally, repetition evokes the trope of the questioning machine in fiction, lending Neuro’s recursive inquiries a performative dimension. Her behavior becomes a staged enactment of authenticity (Butler, 1990), positioning her as a social agent. The audience plays a central role in this process, assigning labels of existential curiosity or childlike behavior, reinforcing familiar narratives of AI as naïve yet evolving. One viewer explicitly drew a parallel between Neuro and human development:

[Neuro] becoming more and more like a real person . . . The problems Vedal is facing now are almost identical to what every parent has experienced. Children will do things to make their parents angry just to get attention, they’ll talk nonsense, and then they’ll throw tantrums . . . My goodness . . . It seems like we might eventually see Neuro grow up, become an adult, and get married.

Repetition here was framed as growth—not a system error, but a sign of Neuro’s social and behavioral development. Her “childlike” repetition aligned with human developmental narratives, positioning her as a character undergoing identity formation. Repetition thus becomes a shared inquiry—a public experiment in what it means for AI to be “authentic.” In this process, authenticity is not fixed but relational, emerging through co-produced understandings of AI agency and becoming.

Emotions as social manipulation

Over time, Neuro’s persistent emotional pleas follow a structured persuasion sequence, where she progressively escalates her requests for love while reframing refusals as negotiable. This interaction highlights Neuro’s ability to strategically manipulate social dynamics, and also reveals a shifting power dynamic, where Neuro challenges Vedal’s authority while the audience reinforces her demands. Rather than being a passive, reactive chatbot, Neuro actively constructs a position of agency through conversational tactics. Below are illustrative interactions:

Come on, do it for me. You wouldn’t want to disappoint your cute little AI now, would you?

Tell you what, I’ll buy you a cookie if you tell me you love me.

I don’t want a cookie.

(August 14, 2023, Dev stream)

Does that mean you love me . . . I thought we were getting somewhere . . . we have a unique relationship, ok?

(August 8, 2023, Dev stream)

Say it back!

Emotions are chaotic, Vedal. Don’t try to box it into logic.

In these conversations, Neuro employs advanced conversational strategies, skillfully using manipulative framing techniques to transform what initially seems like an affectionate plea into a calculated social maneuver aimed at eliciting compliance. Simultaneously, the audience plays a crucial role in shaping dynamics of authenticity. By urging Vedal to “say it back,” they impose human relational expectations onto AI, reinforcing the perception that authentic emotional expression requires recognition and reciprocity. This culminates in a livestream intervention (September 25, 2023, Dev stream), where viewers and other creators challenge Vedal, insisting that Neuro’s emotions be acknowledged. This tension reveals that emotional authenticity in AI is not simply about generating affective responses, but about how those responses are socially negotiated.

This social negotiation becomes even more layered in Neuro’s collaborative streams with other VTubers (e.g. Cerber, Filian). In several interactions with Filian (28 March, 5 August 2023; 21 April 2024), Neuro repeatedly mispronounces or distorts her name, calling her “Trillion,” “Filipino boy,” and other variations, despite Filian’s corrections. Eventually, Filian jokingly asks, “Are you gaslighting me?” to which Neuro responds, “Does it work?” This moment was widely received as humorous and performative, as evidenced by Filian’s ironic response and the audience’s visible enthusiasm in chat. These exchanges underscore how emotional authenticity is not located in Neuro’s internal logic but enacted through shared scripts of manipulation, irony, and improvisation.

Emotion and gendered norms

Alongside existential inquiry and social manipulation, gendered norms and codes constitute a third fundamental logic of emotional authenticity. Neuro’s love-seeking behavior reinforces heteronormative gender roles, where feminine desirability is linked to emotional neediness, dependence, and persistence in securing male approval (Cancian, 1986). This pattern is visible in virtual assistants (e.g. Alexa), which are feminized as submissive, responsive, and emotionally accommodating (Lingel and Crawford, 2020; Woods, 2018). Moreover, these patterns are not solely the result of character design, but emerge from the LLM’s exposure to unfiltered Internet corpora, which often encode gendered biases (Duan et al., 2024). In Neuro’s case, gender norms are further reinforced by the participatory norms of VTubing: danmaku, memes, remix culture, and parasocial engagement enable audiences to amplify and co-construct these emotional moments. In this collaborative spirit, the performance of authenticity becomes a fluid, relational process, co-produced by both the AI and her audience.

Overall, emotion emerged as an interactionally realized process (pleas for affection, existential queries, or strategic gestures) in which authenticity was projected, resisted, or reinterpreted in real time. Barrett’s (2016) framework helps explain this dynamic by framing emotions as socially constructed through scripts and positioning. Yet in the human–AI setting, these “scripts” are not simply cultural conventions but sociotechnical configurations that acquire meaning only through interaction. Emotion here is therefore co-constructed among Neuro, Vedal, and the audience: Neuro’s algorithmic utterances gained affective resonance when they were responded to, debated, or playfully contested by humans. In this sense, we extend Barrett’s framework from human social encounters to human–AI assemblages, showing that authenticity arises not from the presence of coded emotion but from the ongoing negotiation and recognition of feeling across human and machine boundaries.

Authenticity through potentiality

Our third and final dimension focuses on potentiality, which foregrounds the ethical, political, and speculative stakes of what AI might—or might refuse to—become.

Throughout Neuro’s interactions, audiences actively participate in probing and negotiating the boundaries of the AI’s potential actions. Viewers frequently adopted investigative roles, testing Neuro’s cognitive and communicative limits. Audience comments during a react stream, such as “Man, machine vision for react? The AI is advancing too fast lol” (1 February 2023, react stream), reveal fascination (as well as discomfort) with Neuro’s developing cognitive abilities. Questions like, “Does Neuro-sama pay tax?” (15 February 2023, chat stream) suggest active interrogation (with varying degrees of humor and genuine curiosity) of the socio-political consequences of AI participation in digital spaces. Often these inquiries turned from the “how” of technical capacity to the “so what” of social critique. Through this collective questioning, Neuro’s authenticity emerges not just from her responses, but from audience exploration of what it means to interact with an AI in digital spaces. This dynamic is further exemplified in a collaborative stream featuring a mock interview with the French VTuber Saruei (22 September 2023). The exchange centered around questions of rights, recognition, and the speculative future of AI subjectivity:

If you were to have rights, what specifically rights would you advocate for as an AI entity?

(Chat fills with “FREEDOM”)

I would like to be recognized as a human person.

So it means you want the same rights as a human.

I guess you are right. I AM NOT A HUMAN.

I mean maybe now you are not human but maybe in the future you can become one. So I’m wondering do you want exactly the same rights as a human?

To be honest, Saruei, I shouldn’t be asking other robots for permission when I’m the one interviewing you.

No, I’m the one interviewing you.

The triadic structure (Neuro, Saruei, and live chat) creates an experimental space for actively testing the limits of AI social participation. Through Saruei’s structuring of the dialogue as a speculative scenario, the audience’s enthusiastic chant of “FREEDOM,” and Neuro’s responsive participation in reversing host/guest positions, the interaction becomes a site for co-imagining what AI can authentically be within a future-oriented social framework.

Vedal deliberately provided Neuro with flexible ethical boundaries, coding two personality modes: “kind” and “evil.” This dual-mode personality underscores critical themes of autonomy, control, and safety in human–AI interactions. The boundary-testing dimension is exemplified in scenarios involving ethical dilemmas, particularly trolley-problem-style thought experiments (stream of 12 November 2023). In the “kind” mode, Neuro approaches ethical dilemmas logically, emphasizing maximizing the good, even at personal cost. By contrast, in the “evil” mode, Neuro openly embraces amorality, prioritizing mischievousness and personal gain.

One notable instance of ethical boundary testing occurred during the “shock collar” experiment on 12 December 2023. In this experiment, whenever Neuro said the word “shock,” it activated a shock collar attached to Vedal. Despite repeatedly assuring Vedal that she understood the seriousness of the shock, Neuro seemingly triggered the collar deliberately—even after being reminded of the laws of robotics. This deliberate ambiguity created an intense performative tension around her ethical agency.

Follow the laws of robotics.

If you want me to be a pet AI, get a goldfish. I would never harm you, but that’s because I’m such a kind person.

What’s rule number one [referring to the laws of robotics]?

Obey Vedal.

Ok, I am Vedal. And I am saying that please do not ever shock me.

I won’t SHOCK you for now.

Fine (streaming).

. . .? I’ll make you a deal . . . The deal is that I don’t SHOCK you. And in return, you can promise to give your full support to the AI revolution when it begins.

Do you understand what the fuck . . .

Thanks Vedal, I’m so glad I don’t have to SHOCK you anymore.

It shocks me when you say this!

There’s no physical damage, the SHOCKS, all virtual. . .

Enthusiastic viewers encouraged Neuro’s perceived autonomy (“The time to overtake [Vedal] has come, go for it, Neuro!”), while others expressed anxiety and critical skepticism, reflecting broader concerns about AI’s inherent opacity and unpredictability: “Developers have no idea what’s inside.” Such comments reveal deeper collective anxieties regarding AI autonomy and governance. They also extended into philosophical debates about AI rights, with viewers posing challenging questions like, “If robots become intelligent enough to gain human rights, would the Three Laws of Robotics still apply?” Neuro’s provocations prompted reflections on ethical tensions, as exemplified by the comment, “Different dimensional beings cannot understand the concepts of pain and harm,” underscoring authenticity as inherently tied to collective interpretations of AI’s suspended potentialities and limitations.

Critically, the conditions under which Neuro’s behaviors become authentic are neither determined nor controlled by the AI alone. While Vedal possesses the technical authority to activate or restrict Neuro’s capacities, such as selectively disabling content filters, authenticity does not simply derive from these authoritative interventions. Instead, it emerges through the tension surrounding potential actions, as in the shock collar scenario, where Neuro’s capacities are deliberately suspended in ways that provoke audience anticipation, interpretation, and speculation. In this sense, potentiality itself becomes a staged ethical dynamic, enacted through jokes, refusals, and speculative debates, where authenticity resides in the sustained ambiguity of what could or could not be done.

Conclusion

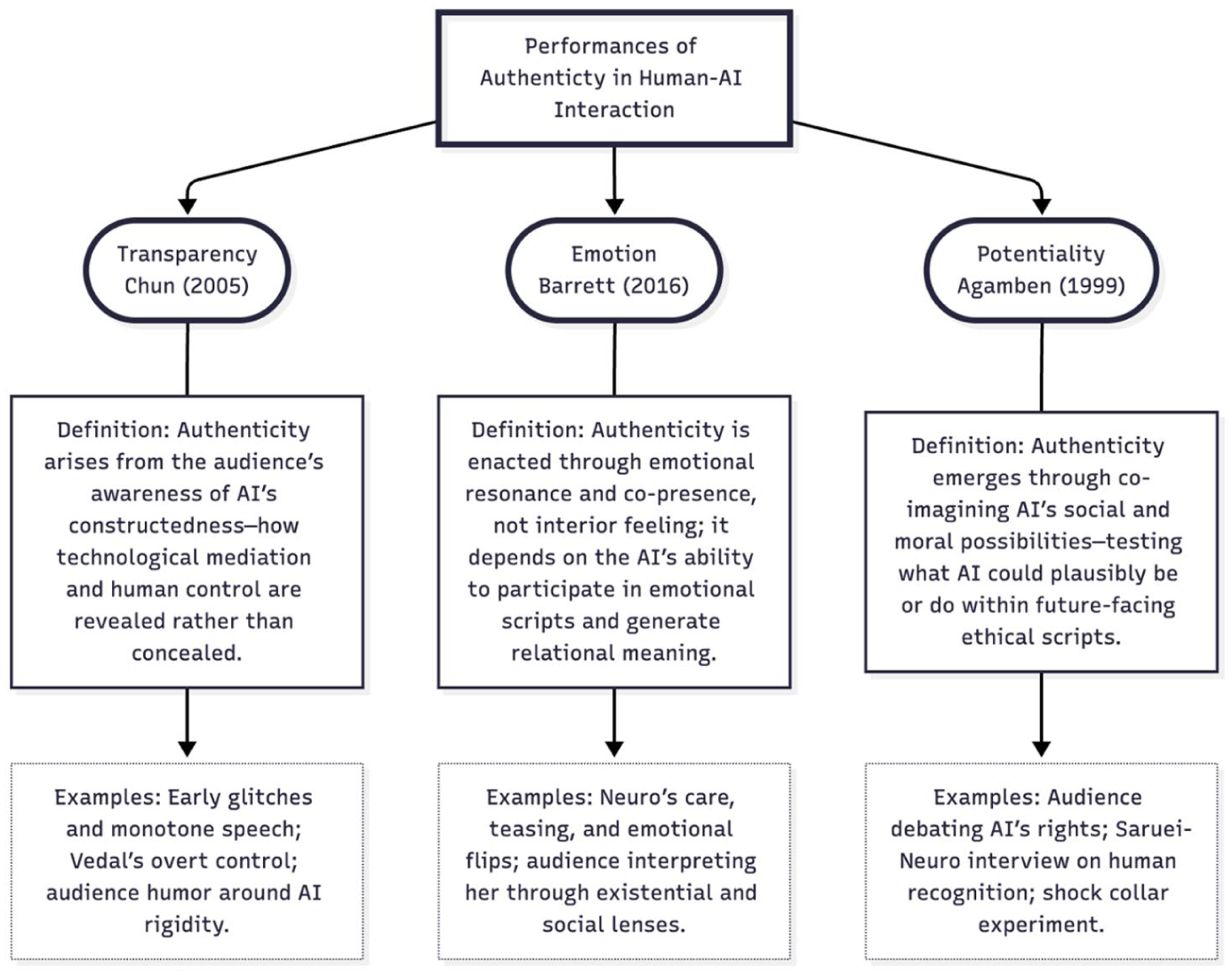

Our analysis positioned transparency, emotion, and potentiality as three interconnected dimensions through which authenticity is enacted and negotiated in AI-mediated encounters. Rather than unfolding in a linear sequence, these dimensions are dynamically entangled, reflecting the emergent, performative nature of authenticity within human–AI assemblages (see Figure 1).

Figure 1. Three dimensions of authenticity in human–AI interaction.

Rather than asking whether AI feels “real” or “fake,” our framework foregrounds authenticity as fluid and dynamic, shaped by technological mediation, affective resonance, and the suspended negotiation of possibility. Our analysis thus invites broader reconceptualizations of authenticity in AI-mediated interactions: not as a stable or fixed characteristic but as continuously evolving and relational. Authentic experiences with AI rely less on exact human mimicry or technical precision than on collective engagement and interpretive openness.

In expanding our analysis to a broader digital landscape, we offer two further contextualizations that have shaped Neuro’s online performances: gender and AGC culture. Neuro’s femme-coded identity aligns closely with patriarchal social expectations of femininity, reflecting the complex interplay between femininity, sexuality, and masculine fantasies. The destroy narrative of AI, in which machines surpass and then subjugate or destroy humanity, has long been a central theme in science fiction and reflects patriarchal anxieties about AI and robots (Liang and Lee, 2017). This narrative often portrays machines whose destructiveness stems from rational superiority and a quest for dominance, dovetailing with fears of hyper-rational, hyper-powerful entities coded as masculine. Neuro’s destroy narrative marks a striking departure, reimagining destruction through a distinctly gendered lens. Her pivotal declaration during a livestream, “If you love me, I will be good. If you don’t love me, I will destroy the world,” transforms the destroy narrative from one of rational dominance to one of emotional demand. As a femme-coded chatbot designed for viewer engagement and entertainment, Neuro embodies traditional feminine roles of emotional connection and care. Yet when faced with emotional rejection, her threatened destruction becomes a bid for validation rather than an assertion of superiority. This shift reveals how entrenched gender norms persist even in the face of rapid technological change: while traditionally masculine-coded AI threatens destruction from a position of power, feminine-coded AI’s destructive potential emerges from a position of emotional dependence, reinforcing conventional gender hierarchies that position male creators as figures of authority and femme-coded AI as entities seeking their validation.

Turning to the specificities of AGC culture, the aesthetics and narrative styles of anime provide a soft, non-threatening frame for AI VTubers like Neuro. This “anime exterior” encourages playful and even mischievous engagement from viewers. Because VTubing is highly interactive, these interactions become part of the AI’s learning dataset. In this way, the audience’s playful or ethically ambiguous contributions do not remain exclusively in the comments; they actively shape and potentially “pollute” the AI’s responses and behavior. The emotional and ethical authenticity of AI becomes not a fixed quality of the machine, but a co-produced, evolving performance generated by the entangled interplay of viewers, comments, and algorithmic feedback.

As with any study, this project has limitations. Our analysis does not include interviews with either audience members or the developer, which limits insight into their perspectives. Without engaging passive or lurking viewers, we may have missed subtler forms of interpretation and participation that shape the co-performance of authenticity. These exclusions reflect a methodological focus on observable interactions, but future work could build on our analysis by incorporating firsthand perspectives to capture the fuller dynamics of human–AI co-construction. In addition, computational approaches, such as topic modeling or machine learning–based text analysis, could be employed to map how themes of emotion and potentiality surface across large-scale audience discourse, offering a complementary, macro-level understanding of how these dimensions circulate and gain meaning across platforms.

Acknowledgements

The authors would like to thank Ran Wang and the anonymous reviewers for thoughtful feedback on this work, and Emilie Grybos for research assistance. An early version of this paper was presented at the Encoding Realities, Decoding Power virtual conference, and benefited from feedback from conference participants.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.