Abstract

We posit that research into misinformation interventions puts too much focus on informational outcomes (e.g. perceived accuracy of misinformation), and too little on persuasive outcomes (e.g. inferred beliefs, attitudes, intentions, and behaviors). Because of the informational outcome focus, common misinformation interventions (i.e. forewarning and debunking) have not been systematically tested for their ability to mitigate persuasive effects. In two preregistered experiments (N = 657 and N = 427), we tested the effectiveness of forewarning versus debunking for positive and negative misinformation, focusing on attitudes and behavioral intentions as outcome measures. Results show that, as hypothesized, post-exposure corrections are most effective in reducing misinformation’s persuasive effects; pre-exposure corrections in fact do not significantly reduce persuasive effects. We also corroborate prior findings that the effects of negative misinformation are especially resistant to corrections. Based on our results, we advise media outlets not to rely on forewarnings alone, but also to correct misinformation after user exposure.

In today’s society, we are mass consumers of information, both online and offline. A significant part of this information happens to be untrue. A systematic review showed for example that more than a quarter of online information about COVID-19 was in fact misinformation, especially on Twitter, Facebook, Instagram, and YouTube (Borges do Nascimento et al., 2022). Although factual belief in misinformation (e.g. believing hydroxychloroquine cures COVID-19) can be harmful in itself, often more detrimental are misinformation’s broader persuasive effects: the beliefs, attitudes, intentions, and behavioral responses that people infer from misinformation. Such misinformation-induced persuasive effects can have serious societal consequences, such as fostering resentment toward societal actors or organizations (e.g. Chen and Cheng, 2020; Komendantova et al., 2023), distrust in healthcare, science, media, government, and politics (e.g. Bennet and Livingston, 2018; Van Aelst et al., 2017), polarization (Sikder et al., 2020), misogyny (Banet-Weiser, 2021), and ethnic conflict (Melvern, 2001).

Several studies suggest that corrections are generally less effective in reducing persuasive effects compared to factual misinformation effects (Lewandowsky et al., 2012). The idea that corrections are not effective to mitigate misinformation’s persuasive effects is not new (e.g. Johnson and Seifert, 1994), but, surprisingly, persuasive misinformation effects have rarely been the explicit object of misinformation research; in fact, a vast majority of this research focuses—both theoretically and empirically—on informational outcome measures (e.g. perceived misinformation accuracy; Droog et al., 2024). In addition, persuasive effects are mainly investigated in a “textbook” context where information is corrected post-exposure. Meanwhile, social network sites such as Facebook and Instagram increasingly provide misinformation warnings pre-exposure. In sum, both for theoretical and applied reasons, it is important to investigate the effectiveness of different intervention types to mitigate misinformation’s potential persuasive effects in a systematic fashion. The current study focuses on that investigation. Because several prior studies showed that effectiveness of corrections may differ depending on the valence of the misinformation (e.g. Cobb et al., 2013; Van Huijstee et al., 2022), we include it as a moderator in one of our two studies.

Persuasive effects of misinformation

Misinformation can have serious detrimental effects for individuals and society at large. Recent examples of online misinformation range from false accusations of systemic child abuse by Democratic politicians (e.g. Gillen, 2016), to manipulated video material related to the Russian–Ukrainian war (Montesse, 2013). Several initiatives exist to fight the spread of misinformation, for example by providing fact-check-based corrections (Graves, 2013). Consistently, however, it turns out that even effective corrections of misinformation do not completely neutralize its persuasive effects (e.g. Johnson and Seifert, 1994). This means that even though someone may be consciously aware that certain information is false, the information might still affect one’s rationale and actions.

A psychological mechanism often used to explain this so-called “continued influence” of misinformation is unbelieving (Gilbert, 1991). This approach assumes that in order to understand information, we form mental representations of it, which requires temporary automatic acceptance (Gilbert, 1991; Grice, 1975). When information later turns out to be incorrect, individuals need to unaccept the previously accepted information, requiring analytic thinking (Pennycook and Rand, 2020) and active and effortful processing (Gilbert et al., 1990). Because cognitive effort is not always likely to be exerted, unaccepting and unbelieving may be hindered. Although this might seem like a valid explanation of persistent belief in misinformation, it poses two major problems. First, most studies show that once people are aware of a correction, they typically do effectively unbelieve the misinformation (e.g. Cook et al., 2014; Ecker et al., 2022). That is, persistent informational effects of corrected misinformation are quite uncommon. Second, the unbelieving account pertains to the informational effects of misinformation, not to its persuasive effects. If the latter can occur despite the absence of factual beliefs in misinformation (Van Huijstee et al., 2022; see Walter and Murphy, 2018, for a meta-analysis), then explaining why corrected misinformation may have informational effects misses the point. At its heart, the notion of continued influence presumes a potential disconnect between informational and persuasive effects of misinformation. The existence of that disconnect, in turn, suggests that persuasive effects may persist even if the misinformation on which they were based is long forgotten.

A possible explanation for the occurrence of persuasive effects after effective refutation of misinformation may lie in the classic theory of belief perseverance (Ross et al., 1975; Siebert and Siebert, 2023). Belief perseverance is paradigmatically different from the “unbelieving” account because it posits that beliefs may persevere in the complete cognitive absence of evidence. The belief perseverance approach postulates that people spontaneously infer beliefs from new (mis)information. These inferred beliefs may subsequently persevere despite their underlying evidence being refuted (Anderson et al., 1980). In the seminal belief perseverance experiment (Ross et al., 1975), researchers asked participants to evaluate the authenticity of suicide notes, and subsequently provided them with false positive or negative feedback about their performance. Next, the researchers explained to the participants that the feedback was fabricated; no other performance feedback was given. Results showed that participants nevertheless persevered in (positive or negative) ability beliefs originally inferred from the fabricated feedback about a specific performance. In the absence of supporting evidence, but notably also in the absence of counter-evidence, the now “orphaned” ability beliefs persevered. Since these original experiments, belief perseverance effects have been demonstrated in different contexts, including that of misinformation (Siebert and Siebert, 2023). Based on belief perseverance theory, we hypothesize the following:

H1: Misinformation will have a persuasive impact despite being corrected.

Forewarning versus debunking

Traditionally, research into fact-checking interventions predominantly focused on debunks: corrections that follow the initially presented misinformation (Guzzetti et al., 1993). Currently, social media platforms increasingly provide forewarning messages, cautioning users that they are about to see incorrect information. Depending on the platform, focal content is then blurred or supplemented with links to accurate sources (Bode and Vraga, 2015). In the context of advertisements, forewarnings have been shown to reduce persuasive effects by inducing persuasive resistance: they increase counterarguing (Petty and Cacioppo, 1977) and reduce opinion change (Allyn and Festinger, 1961). According to the persuasion knowledge model (Friestad and Wright, 1994), awareness of a message’s persuasive intent triggers distrust and lowers persuasive impact (Boerman et al., 2012); the same might apply to misinformation forewarnings. However, forewarnings might also impair cognitive effort required for effective message refutation by decreasing motivation to attentively engage with later information (Bohner and Dickel, 2011), which could subsequently lead to persuasive influence.

Note that we use the term “forewarnings” to differentiate them from “prebunks” as conceptualized in inoculation research. In current inoculation approaches, which are loosely based on strategies developed by McGuire (1961), prebunks protect against misinformation in general, by training news consumers to recognize misinformation (Basol et al., 2021; Traberg et al., 2022). Forewarnings—as generally used on social media platforms—simply warn about the content of an upcoming message.

Thus far, research on the effectiveness of forewarnings in relation to misinformation has been scarce. Studies that did investigate forewarnings show that they are relatively effective in reducing informational effects (i.e. belief in misinformation; Martel and Rand, 2023), but less so in diminishing persuasive effects such as trust in mainstream media sources (e.g. Nassetta and Gross, 2020). This suggests that continued persuasive effects might occur despite forewarnings. The few studies comparing misinformation debunks and forewarnings in the context of persuasive effects, however, seem to be somewhat inconclusive. Tay et al. (2022) found no difference in effectiveness between forewarnings and debunks on intended behaviors such as online misinformation promotion and information seeking, while Dai et al. (2021) identified debunks as more effective for shifting attitudes. Swire-Thompson et al. (2020) found only slightly better performance for debunks in one of the four studies when focusing on feelings toward political figures. Drawing on these studies and the observed pattern in the effectiveness of forewarnings versus debunks on informational outcomes in prior research, we extrapolate these findings to hypothesize:

H2: Debunking is more effective than forewarning in decreasing the persuasive impact of misinformation; in turn, forewarning is more effective than no correction at all.

The valence of misinformation and continued influence

Misinformation can either reflect positively on an organization, group, person, or object (e.g. “Raw milk boosts the immune system and is solely beneficial to our health”), or negatively (e.g. “Bill Gates backs mass vaccination in order to implant tracking microchips into people”). As a result, misinformation can potentially benefit or harm its subject. Importantly, several studies indicate that the valence of misinformation affects the effectiveness of corrections. Corrections of positive misinformation tend to effectively counter or even reverse persuasive effects (Cobb et al., 2013), whereas negative misinformation continues to be persuasive after correction (Ecker et al., 2020; Gordon et al., 2017; Thorson, 2016). A recent study on COVID-19-related misinformation explicitly tested this asymmetry and found that persuasive effects of negative misinformation were hard to neutralize, whereas corrections of positive misinformation were very effective, to the point of reversing persuasive effects (Van Huijstee et al., 2022). The effect of corrections thus appears to depend on the valence of the misinformation.

Theoretically, the observed asymmetry could stem from negative information being perceived as more “diagnostic” than positive or neutral information (Baumeister et al., 2001; Skowronski and Carlston, 1987). Also, negative information can be perceived as more credible—critical, independent—than positive information (Epstein et al., 1996; Pan and Chiou, 2011). The influence of negative (mis)information is often visible in political campaigns, where spreading negative news about one’s opponent is a common strategy (Pennycook and Rand, 2021). Because of the previously demonstrated asymmetries in perceived diagnosticity and credibility of positive and negative information, we expect the following:

H3: Positive news articles are less persuasive than negative news articles.

If positive misinformation is less persuasive than negative misinformation, its persuasive impact is likely also easier to neutralize:

H4: Persuasive effects of negative misinformation are harder to correct than those of positive misinformation.

Type of correction and misinformation valence

Further effectiveness asymmetries between corrections may also exist. For example, post-exposure corrections of positive misinformation might yield disappointment and anger in media users, which may be misattributed to the subject of the article, leading to negative persuasive effects. Forewarnings for positive misinformation are less likely to elicit such responses. As a result, corrections of positive misinformation may lead to overcorrections. A prior comparative study (van Huijstee et al., 2022) indeed found that post-exposure corrections of positive misinformation were so effective that they resulted in a reversal of the intended misinformation effects, whereas post-exposure corrections of negative misinformation were just moderately effective. Hence, we expect to find similar asymmetric results for debunks, but not for forewarnings:

H5: Debunked negative misinformation will have a continued persuasive impact (in agreement with the misinformation), while debunked positive misinformation will show a reversed persuasive impact (in opposition to the misinformation).

H6: The asymmetry hypothesized for debunked misinformation (H5) will not occur for misinformation accompanied by a forewarning: here, both positive and negative misinformation will have continued persuasive impact after correction.

The current research

In two preregistered studies among two different populations, we directly compare the effectiveness of pre- and post-exposure corrections to reduce persuasive effects of misinformation. To eliminate the influence of pre-existing opinions, as well as for ethical reasons (van Huijstee et al., 2022), we will use fictional misinformation stimuli. We will use simple and unequivocal pre- and post-exposure corrections, and avoid corrections containing facts, arguments, or rhetoric (e.g. Dai et al., 2021; Tay et al., 2022), because such elements may have differential effects pre- and post-exposure in their own right.

Study 1

Method

To investigate persuasive effects of (corrected) misinformation, a 2 (valence: positive vs negative) × 3 (correction type: forewarning vs debunking vs no correction) between-subjects design was adopted. Ethical approval was granted by the home institute. 1

Participants

Sample size was determined a priori, based on our smallest predicted effect size (H3). To test this small-to-medium effect (d = 0.40) with sufficient power (0.80) using a t-test, we required a sample of at least 100 participants per group (G*Power 3.1). Extrapolating this number to our six conditions, we settled on 600 participants. Note that our hypotheses did not predict ordinal interaction effects (valence × correction type), which would likely require larger sample sizes (Lakens and Caldwell, 2021). Conservatively expecting 10% of participants to fail attention checks, we collected 661 Prolific responses. Only four participants failed attention checks, resulting in a sample of 657 British participants residing in the United Kingdom. Average age was 40.40 years (range 18–75, SD = 12.97), most participants were female (75.8%), and education level was relatively high: 64.7% completed college education (Bachelor’s or Graduate degree). Average political orientation (1 = very left wing to 11 = very right wing) was moderate (M = 5.28, SD = 2.03), with 36.7% identifying themselves in the center of the political spectrum [5–7], 43.8% on the left [1–4], and 19.5% on the right [8–11].
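The per-group requirement can be approximated without G*Power. The sketch below is not the authors' procedure: it uses the standard normal approximation for a two-sided, two-sample t-test at α = .05 (an assumed value, since the text does not state α), and the function name `n_per_group` is hypothetical. Because the approximation ignores the small-sample correction of the t distribution, it returns a slightly lower figure than the 100 per group reported above.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(d: float, power: float = 0.80, alpha: float = 0.05) -> int:
    """Approximate per-group n for a two-sided, two-sample t-test.

    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2.
    alpha = .05 is an assumption; the source only reports d and power.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_power = z.inv_cdf(power)          # quantile matching the desired power
    return ceil(2 * ((z_alpha + z_power) / d) ** 2)


approx_n = n_per_group(0.40)   # normal approximation for d = 0.40
total_target = 6 * 100         # six cells at the reported 100 per group
print(approx_n, total_target)
```

The approximation lands one participant below the reported requirement, which is the expected direction of the discrepancy between a z-based and a t-based calculation.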

Stimuli

Stimuli consisted of a news article about fictional Isles of Scilly council member Jack Thompson, and a correction of this article. Because of the expected continued persuasive effects, we were ethically restricted to use benign (in this case: fictional, and geographically distant) stimuli to not affect political opinion formation.

News article

Two news articles about Jack Thompson were created. Both were supposedly posted online by “Dailynews.uk,” a (fictional) British news source. Both included a story about the council member’s former hospital job. The positive article (n = 328) mentioned Thompson raising money for terminally ill children, while the negative article (n = 329) mentioned him funneling money from this fundraiser. Both articles had a similar layout and included an (AI-generated) picture of Thompson, and of the hospital where he supposedly worked (see Supplemental Materials, Appendix A).

Corrections

The debunk (n = 217)/forewarning (n = 221) message stated, “Warning! The previous/following information is disputed by fullfact.org.” Both corrections were accompanied by a large orange exclamation mark (see Supplemental Materials, Appendix B). A total of 219 participants did not see a correction.

Procedure

After providing informed consent and demographic information, participants gave their general impression of six fictional council members (three male, three female, with different ethnicities, including Jack Thompson), and their likelihood to vote for these people. Participants based their evaluations on the name and (AI-generated) picture of each council member, displayed on a campaign poster with the slogan “Serious action for the Isles of Scilly” (see Supplemental Materials, Appendix C). One council member was presented per page; presentation order was randomized. Participants in the forewarning condition subsequently saw a warning message about the information they were about to see. Participants were then randomly assigned to either a positive or a negative news article about Jack Thompson. The “next” button only appeared after 15 seconds, to ensure participants read the article. Participants in the debunking condition were then presented with a correction. 2 After an attention check, participants indicated the perceived valence of the news article using a slider ranging from extremely negative [−10] to extremely positive [+10], and its perceived veracity (absolutely not true [−10] to absolutely true [+10]), as manipulation checks. Participants then re-evaluated the same six council members, and were debriefed.

Measures

Participants evaluated the politicians before (T1) and after (T2) stimuli exposure. The evaluation consisted of their general impression and their willingness to vote for the politicians assuming they were local members of participants’ preferred parties. Both measures used a slider from –10 to +10, ranging from very negative to very positive for general impression, and from absolutely not to absolutely for likelihood to vote. Persuasive impact was measured as the change in evaluations from T1 to T2 congruent with the article’s valence. That is, for a negative article a negative change indicates persuasive impact, while for a positive article a positive change indicates persuasive impact. We computed persuasive impact scores (ranging from –20 to +20) for general impression and likelihood to vote.
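The scoring rule described above can be written down compactly. The following is a minimal sketch under the assumption that the score is simply the T2 minus T1 difference, sign-flipped for the negative article; the function name `persuasive_impact` and the string labels for valence are hypothetical and not taken from the study materials.

```python
def persuasive_impact(t1: float, t2: float, valence: str) -> float:
    """Valence-congruent change in evaluation from T1 to T2.

    t1, t2  : slider scores on the -10..+10 scale
    valence : "positive" or "negative" (hypothetical labels)
    Returns a score in the range -20..+20; positive values mean the
    evaluation moved in the direction the article argued for.
    """
    if valence not in ("positive", "negative"):
        raise ValueError("valence must be 'positive' or 'negative'")
    for score in (t1, t2):
        if not -10 <= score <= 10:
            raise ValueError("slider scores must lie between -10 and +10")
    change = t2 - t1
    # For a negative article, a drop in evaluation counts as persuasion.
    return change if valence == "positive" else -change


# A participant who moved from +2 to -5 after the negative article:
example_negative = persuasive_impact(2, -5, "negative")
# A participant who moved from +3 to +1 after the positive article:
example_positive = persuasive_impact(3, 1, "positive")
print(example_negative, example_positive)
```

Coding both valences on a common "congruent change" scale is what allows positive and negative articles to be compared directly in the analyses that follow.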

Results

Manipulation check

Manipulations were effective: corrected information was perceived as less accurate (M = 0.75, SD = 4.60) than uncorrected information (M = 3.69, SD = 3.70; t(528.44) = 8.83, p < .001, d = 0.70). Interestingly, participants perceived the post-corrected information as less accurate (M = 0.14, SE = 0.29) than the pre-corrected information (M = 1.36, SE = 0.29; p < .01). Also, the positive article was perceived as more positive (M = 7.46, SD = 3.39) than the negative article (M = −8.73, SD = 2.54; t(606) = 69.22, p < .001, d = 5.41).

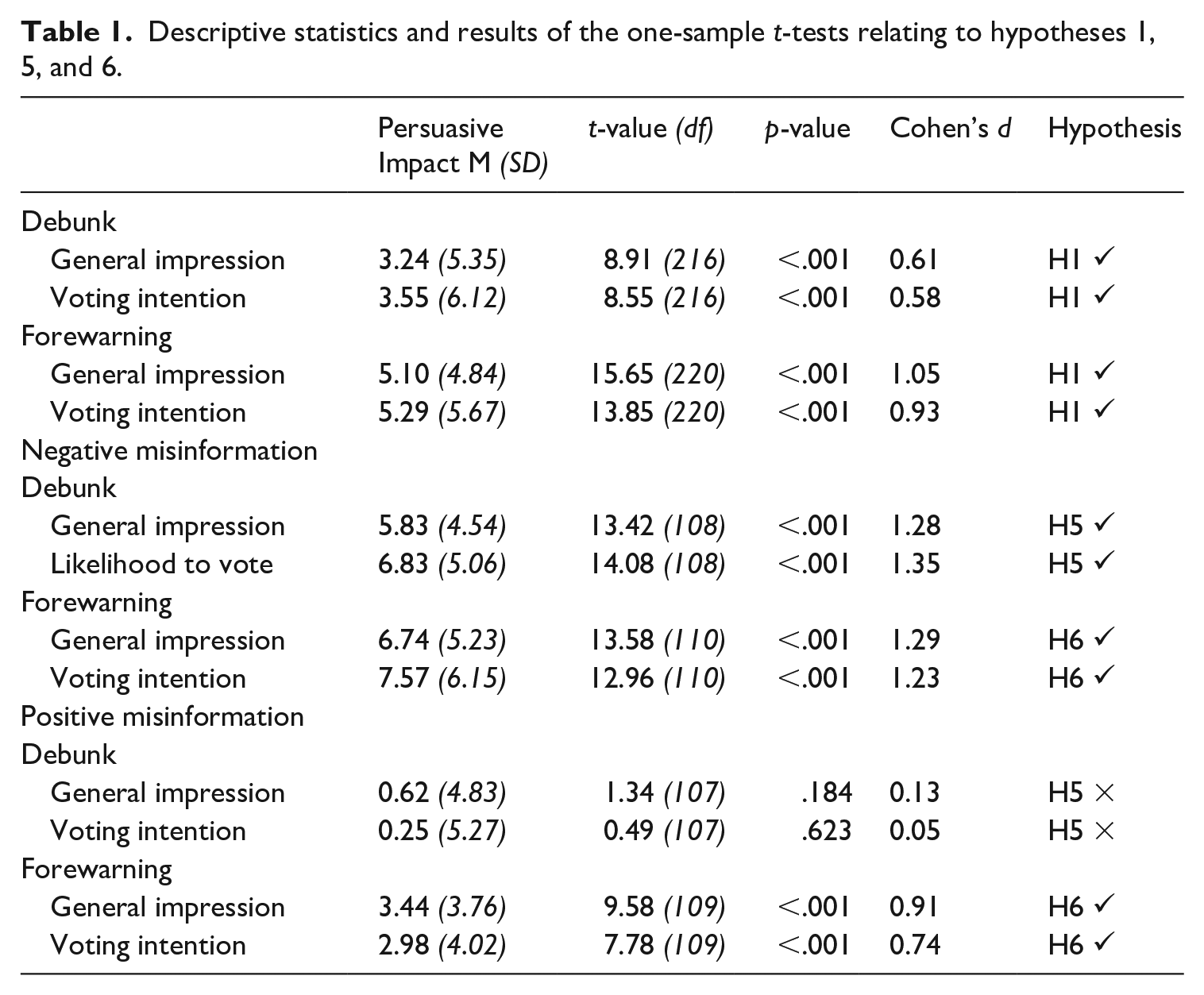

Main analyses

To test if misinformation’s persuasive impact remained despite being corrected (H1), we ran a series of one-sample t-tests. First, despite the use of a forewarning, persuasive impact of the misinformation significantly exceeded 0 for general impression of the council member and for likelihood to vote (both ps < .001). Second, despite the use of a debunking correction, persuasive impact significantly exceeded 0 for both general impression and likelihood to vote (both ps < .001). All test statistics (found in Table 1) unequivocally support H1.

Table 1. Descriptive statistics and results of the one-sample t-tests relating to hypotheses 1, 5, and 6.

To test whether debunking is more effective in decreasing the persuasive impact of misinformation than forewarning (which in turn is more effective than no correction at all; H2), we ran two analyses of variance (ANOVA). A significant main effect was found for correction type, F(2, 654) = 18.88, p < .001, η² = 0.06, on misinformation’s persuasive impact on general impression. Post hoc comparisons using Tukey’s honest significant difference (HSD) test indicated that the persuasive impact on general impression for debunked misinformation (M = 3.24, SD = 5.35) was significantly smaller than for misinformation corrected pre-exposure (M = 5.10, SD = 4.84; p < .001, d = 0.35) and for uncorrected misinformation (M = 6.13, SD = 4.72; p < .001). However, the forewarning and no-correction conditions did not significantly differ (p = .074). The same pattern was found for the persuasive impact on voting intention: F(2, 654) = 14.17, p < .001, η² = 0.04.

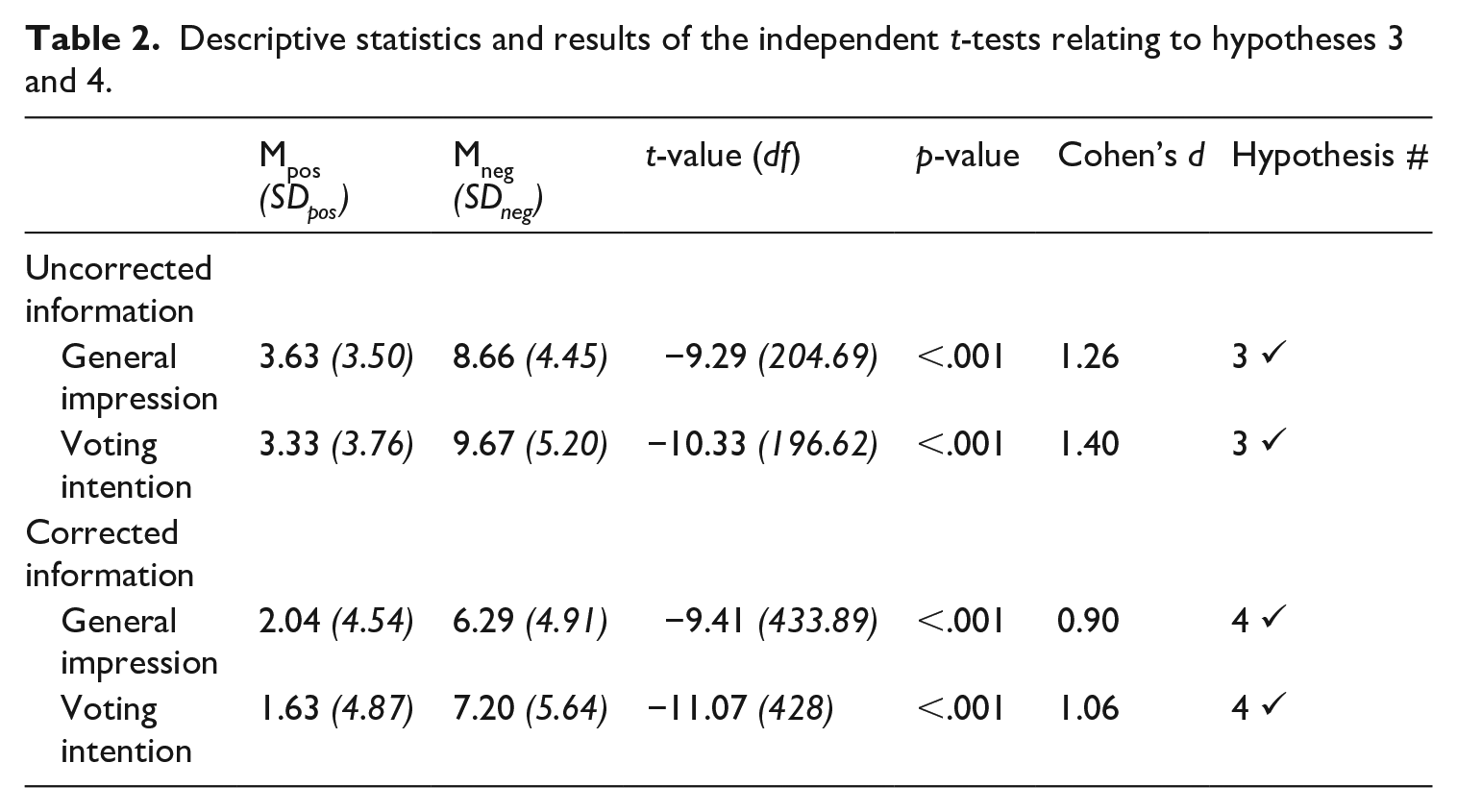

Misinformation’s impact on voting intention was significantly smaller in the debunk condition (M = 3.55, SD = 6.12) than in the forewarning condition (M = 5.29, SD = 5.67, p < .01, d = 0.29); here too, forewarning did not differ from no correction (M = 6.48, SD = 5.53; p = .076). H2 was thus partially supported: debunking seems effective in mitigating continued persuasive effects, but forewarning does not (see Supplemental Materials, Appendix D for additional graphics). To test if positive (mis)information has less persuasive impact than negative (mis)information (H3), we conducted independent t-tests on participants who did not see a correction. H3, as can be seen in Table 2, was supported for both general impression and voting intention (both ps < .001).

Table 2. Descriptive statistics and results of the independent t-tests relating to hypotheses 3 and 4.

Next, we tested whether negative corrected information has more continued persuasive impact than positive corrected information (H4). Two independent t-tests, this time on the participants who saw a correction, supported H4 for both general impression and voting intention (both ps < .001; see Table 2).

Hypothesis 5 predicted continued persuasive impact for the debunked negative misinformation, and a reversal of persuasive impact for debunked positive misinformation. Results of two one-sample t-tests show that the persuasive impact of debunked negative misinformation indeed significantly exceeded 0 for both general impression and likelihood to vote (both ps < .001), indicating continued persuasive impact. Persuasive impact of debunked positive misinformation did not significantly differ from 0 for general impression, nor for voting intention (both ps > .18). This means that no reversal of persuasive impact was found after debunking positive misinformation, disconfirming the second part of H5. See Table 1.

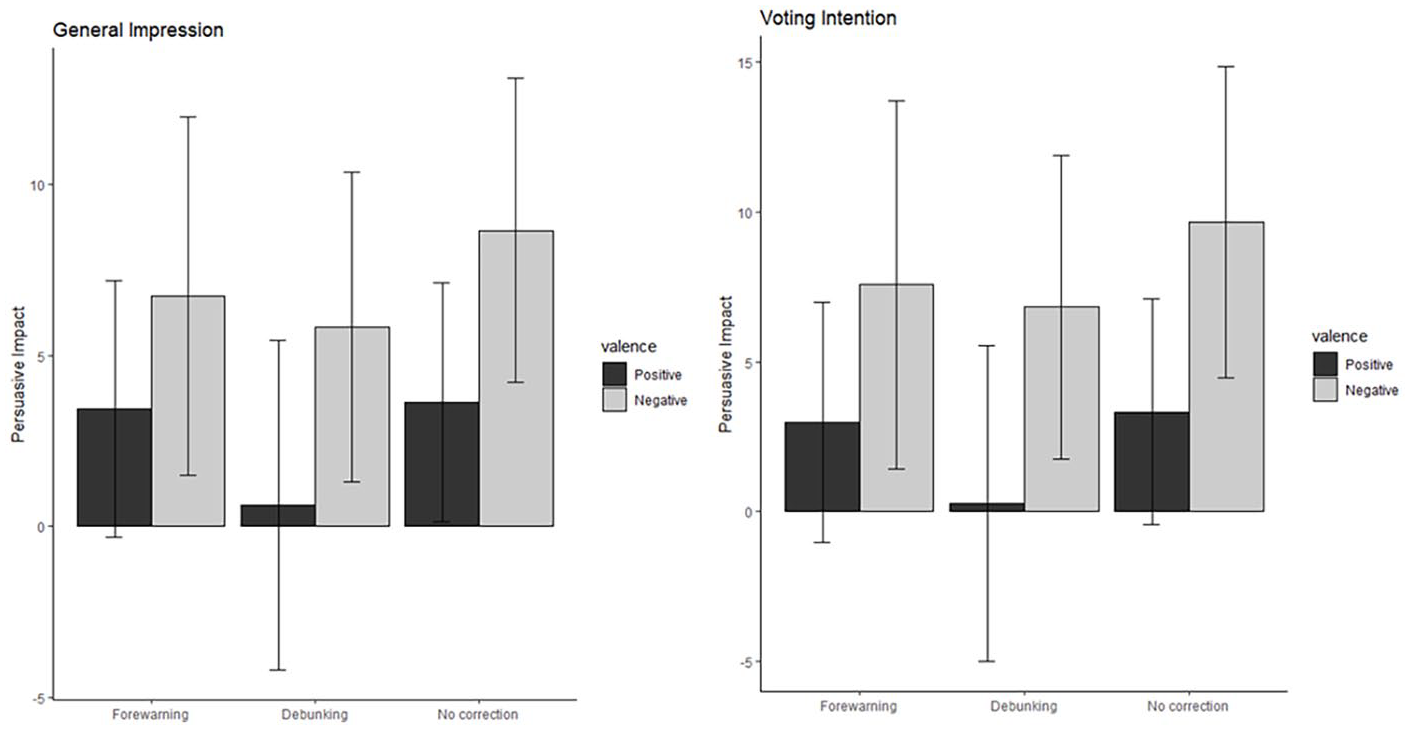

H6 predicted that when using a forewarning, both positive and negative misinformation would show continued persuasive influence. Indeed, persuasive impact of pre-corrected negative misinformation significantly exceeded 0 for both general impression and voting intention (both ps < .001). The same was found for pre-corrected positive misinformation: both general impression and intention to vote significantly exceeded 0 (both ps < .001; see Table 1). These results support H6. See Figure 1 for an overview of the results for H4, H5, and H6.

Figure 1. Persuasive impact of non-corrected, pre-corrected (forewarned), and debunked positive and negative misinformation on general impression of the politician and the intention to vote for him.

Discussion

Study 1 shows that forewarning is indeed a less effective correction strategy than debunking in mitigating persuasive misinformation effects; forewarning did not significantly reduce persuasive effects compared to no correction. This pattern was observed for both participants’ evaluations (general impression of the politician) and behavioral intentions (willingness to vote). Interestingly, the manipulation check showed that debunks also had a stronger effect on misinformation accuracy judgments than forewarnings. In addition, consistent with Van Huijstee et al. (2022), persuasive impact of negative misinformation proved harder to counter than that of positive misinformation, possibly because negative misinformation had a stronger persuasive impact to start with.

A potential limitation of the current study is the possibility that the first measurement (i.e. the first assessment of the politician) could have influenced the second, for example, by inducing demand characteristics (Orne, 2017), or need for consistency (e.g. Cialdini et al., 1995). Including both pre- and post-exposure measures was needed to compare persuasive impact for positive and negative misinformation. To circumvent this limitation, we conducted a second study using only one (post-exposure) measurement, thus letting go of the comparison between positive and negative misinformation effects (including hypotheses H3 to H6). In Study 2, we look only at (the more prevalent; Thorson, 2016) negative misinformation. To assess continued persuasive effects, we will include an additional control condition. In addition, since the inclusion of manipulation checks (perceived message veracity and valence) may influence participants’ scores on subsequent dependent measures (e.g. Pennycook et al., 2021), we excluded both checks from the second study. Finally, the second study uses a different sample (Dutch instead of British participants) and participant recruitment strategy (Facebook/Instagram ads instead of Prolific), ensuring a broader applicability of the findings.

Study 2

Method

A between-subjects design was adopted consisting of four conditions (i.e. forewarning vs debunking vs no correction vs control). Ethical approval was granted by the home institute. 3

Participants

Using the same logic as Study 1, we required a sample of at least 100 participants per group, that is, 400 participants. Allowing for 10% participant exclusion, 440 responses were collected, using a Facebook/Instagram ad, saying “3 min. survey = chance of winning €25,” featuring a picture of newspapers. Upon clicking, an introductory text explained that the survey concerned local news. Our final sample consisted of 427 Dutch participants; 77.8% were female, average age was 48.17 years (range 18−86, SD = 18.29), and education level was relatively high: 54.3% of participants completed college or higher.

Stimuli

Stimuli were identical to those in Study 1, but translated into Dutch. Only the negative article was used. The story about the politician was adapted to a Dutch context; changes included names of council members, municipality, and news source. The slogan was removed, so participants only viewed politicians’ pictures and their names (see Supplemental Materials, Appendices E to G). The debunk was seen by 105 participants, 100 participants saw the forewarning, 110 saw no correction, and 112 received neither misinformation nor a correction.

Procedure

After providing consent, age, gender, and education level, participants were randomly assigned to either (1) the forewarning followed by the news article; (2) the news article followed by a debunk; (3) only the news article; or (4) no information. All participants then provided their general impression of the six fictional politicians also used in the first study, as well as their likelihood to vote for them. Finally, participants were debriefed.

Measures

The dependent variable consisted of participants’ general impression of the politicians (ranging from extremely negative to extremely positive) and willingness to vote for them assuming they were local members of participants’ preferred political party (ranging from extremely unlikely to extremely likely), using the same −10 to +10 sliders as used in Study 1.

Results

To first test the persuasiveness of the misinformation, we compared participants’ attitudes in the no-correction condition and the no-information condition. Participants exposed to uncorrected misinformation (M = −3.49, SD = 4.46) had a more negative general impression of the politician than participants who received no information, M = −0.26, SD = 2.66; t(177.43) = −6.54, p < .001, d = 0.88. Likelihood to vote was also more negative when uncorrected: M = −5.04, SD = 4.65 versus M = −1.32, SD = 3.37, t(198.63) = −6.80, p < .001, d = 0.92.
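The fractional degrees of freedom reported here indicate Welch's unequal-variance t-test. As an illustrative check rather than the authors' analysis script, the sketch below recomputes the general-impression comparison from the summary statistics alone, taking the cell sizes (110 uncorrected, 112 no information) from the Stimuli section. The function name `welch_from_summary` is hypothetical, and Cohen's d is computed with the df-weighted pooled SD, which is an assumption about how the reported d was obtained.

```python
from math import sqrt


def welch_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Welch t, Welch-Satterthwaite df, and Cohen's d from summary statistics."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2        # squared standard errors
    t = (m1 - m2) / sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    # Pooled SD weighted by degrees of freedom (assumed convention for d).
    pooled_sd = sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2))
    d = abs(m1 - m2) / pooled_sd
    return t, df, d


# General impression: uncorrected misinformation vs no information.
t_stat, df, d = welch_from_summary(-3.49, 4.46, 110, -0.26, 2.66, 112)
print(round(t_stat, 2), round(df, 1), round(d, 2))
```

Small deviations from the published degrees of freedom are expected, because the inputs are means and SDs rounded to two decimals.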

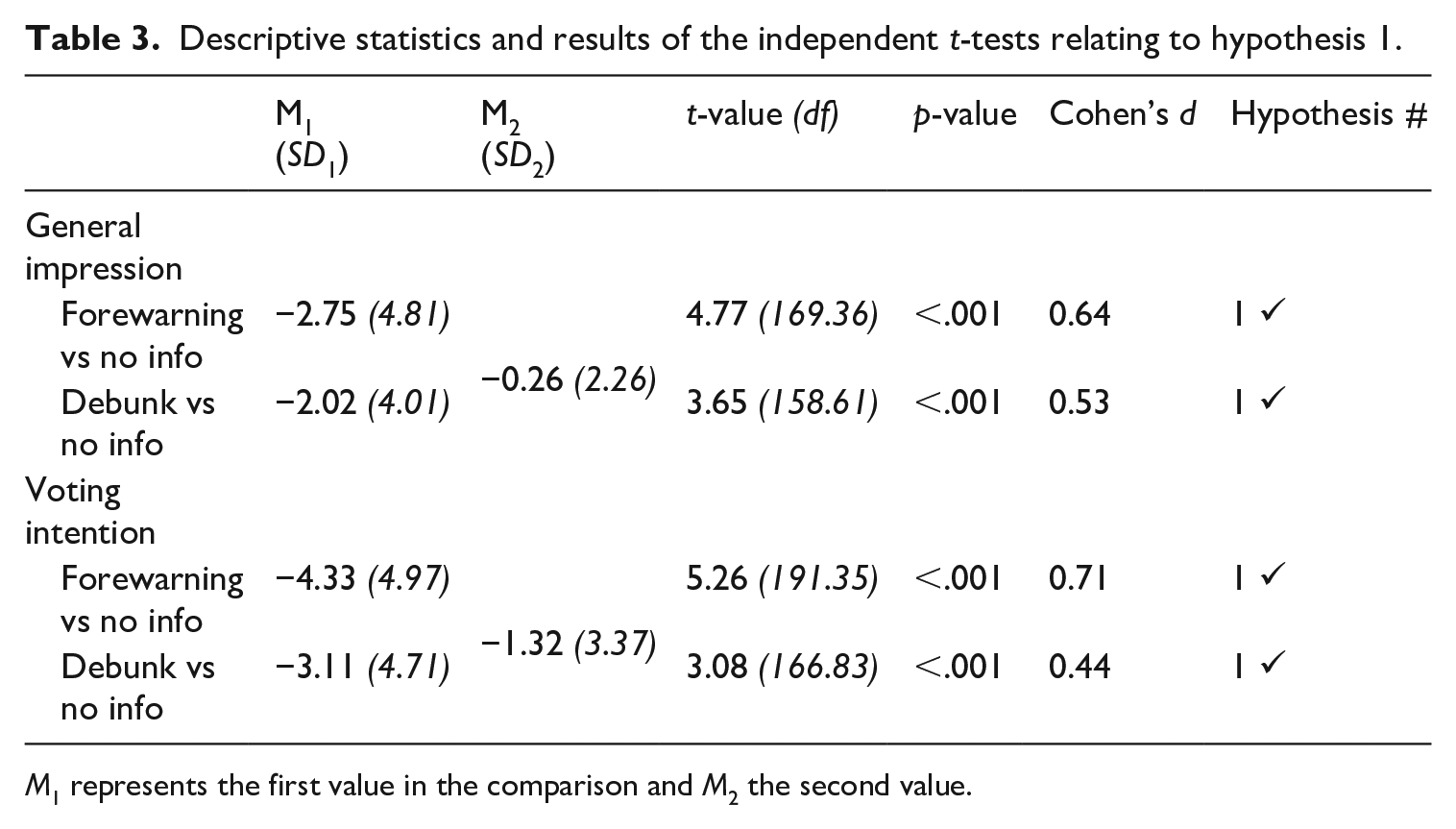

To test if misinformation remains persuasive despite corrections (H1), we ran a series of independent t-tests comparing the correction conditions with the no-information condition. First (see also Table 3), participants who saw misinformation preceded by a forewarning indeed had a significantly more negative general impression of the politician and were less inclined to vote for him than participants in the no-information condition (both ps < .001). Second, participants who saw misinformation followed by a debunking message had a significantly more negative impression of the politician, and were less likely to vote for him than participants in the no-information condition (both ps < .001). So, for both forewarnings and debunks H1 is confirmed: misinformation continues to have persuasive effects, despite corrections.

Table 3. Descriptive statistics and results of the independent t-tests relating to hypothesis 1.

M1 represents the first value in the comparison and M2 the second value.

To test if a forewarning is less effective than debunking in decreasing the persuasive impact of misinformation (but still more effective than no correction at all; H2), we ran two ANOVAs comparing the effects of uncorrected and both types of corrected misinformation. Contrary to our expectations, no significant main effect was found for correction type on general impression, F(2, 312) = 2.77, p = .064, η² = 0.017. Planned comparisons, using the Games-Howell test due to a violation of the assumption of homogeneity of variances, indicated that a debunk (M = −2.02, SD = 4.01) was more effective than providing no correction (M = −3.49, SD = 4.46; p = .037, d = 0.35) in reducing persuasive effects, but not more effective than a forewarning (M = −2.75, SD = 4.81; p = .461). A forewarning was not more effective than no correction (p = .468).

Regarding the likelihood to vote for the politician, we did find a significant main effect for correction type, F(2, 312) = 4.21, p = .016, η² = 0.026. Again, debunking (M = −3.11, SD = 4.71) was more effective in lowering persuasive effects than no correction (M = −5.04, SD = 4.65; p = .010, d = 0.41), but not more effective than a forewarning (M = −4.33, SD = 4.97; p = .171). Likewise, a forewarning was not more effective than no correction (p = .520). All in all, these results provide mixed support for H2: debunks reduced persuasive effects of misinformation relative to no correction, but did not significantly outperform forewarnings (see Supplemental Materials, Appendix H for additional graphics).
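For a one-way between-subjects ANOVA, η² follows directly from the F statistic and its degrees of freedom, which gives a quick consistency check on reported effect sizes. The sketch below applies that identity to the two Study 2 F statistics above; the function name `eta_squared_from_f` is hypothetical, and the identity holds only for single-factor designs, where η² and partial η² coincide.

```python
def eta_squared_from_f(f: float, df_between: int, df_within: int) -> float:
    """Eta squared for a one-way ANOVA, recovered from F and its df.

    Uses eta^2 = (F * df_between) / (F * df_between + df_within),
    which follows from F = (SS_between / df_between) / (SS_within / df_within).
    """
    return (f * df_between) / (f * df_between + df_within)


eta_impression = eta_squared_from_f(2.77, 2, 312)  # general impression
eta_vote = eta_squared_from_f(4.21, 2, 312)        # likelihood to vote
print(round(eta_impression, 3), round(eta_vote, 3))
```

Both values are small by conventional benchmarks, in line with the modest pairwise differences between the correction conditions.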

Discussion

Study 2 replicates Study 1 in finding that misinformation remains persuasive despite being corrected. Using a different experimental design, we again showed that forewarnings were not effective in reducing persuasive effects of misinformation. Debunks were slightly more effective, but not significantly more effective than forewarnings.

General discussion

This research was prompted by the observation that the extent to which corrections reduce persuasive effects of misinformation (which are arguably more consequential than informational misinformation effects) has not yet been systematically studied. To fill this gap, we presented two studies testing how different types of corrections (pre- or post-exposure) mitigate persuasive effects of political misinformation, using different designs (in Study 1 vs 2) and two different dependent measures (in both studies). Our studies show that political misinformation continues to have persuasive effects despite being corrected either pre- or post-exposure. In both studies, the corrective effect of a forewarning did not significantly differ from not correcting the misinformation at all, despite our studies being sufficiently powered to observe modest effects. This implies that forewarnings are relatively ineffective in reducing persuasive misinformation effects, corroborating results of earlier studies (e.g. Brashier et al., 2021; Vraga et al., 2020).

The lack of effectiveness of forewarnings cannot be attributed to form features, because the forewarnings and debunks had the same design. Theoretically, it could be attributed to the notion that forewarnings reduce motivation to process subsequent information attentively, thus hampering the cognitive effort required for active refutation (Bohner and Dickel, 2011). Alternatively, it could be that forewarnings are discounted more often than debunks, because at the time of processing, they may seem less relevant. Similarly, forewarnings may be less concrete (or cognitively applicable) than debunks, because while they are being processed it is not yet known what information they warn against. Finally, forewarnings may elicit a “forbidden fruit” effect in some users (similar to video game warnings; Bijvank et al., 2009), potentially increasing interest in, and persuasiveness of, the misinformation. Note that these potential explanations remain speculative since we did not directly test them. Although the finding that people are easily persuaded by information that was pre-emptively discounted is noteworthy, future research should delve deeper into why this might be the case.

The finding of Study 1 that debunking is more effective than forewarning in reducing misinformation’s persuasive effects was not replicated in our second study, corroborating the mixed picture sketched by prior research (e.g. Swire-Thompson et al., 2020). Several factors may have contributed to this difference. First, Study 1’s pre–posttest design may have amplified differences, as it may have alerted participants to the relationship between the stimuli and the dependent measures. This may have elicited demand characteristics in participants (Orne, 2017). In a similar vein, the message veracity manipulation check in Study 1 may have influenced participants’ responses to the attitude measure. By omitting the pre–post design and the manipulation check in Study 2, we aimed to improve the internal validity of the second study. In addition, participants in our second study were recruited via Facebook and Instagram, making them (both in terms of person and situational characteristics) more representative, thereby strengthening the generalizability of the findings to real-world social media contexts. Another factor potentially affecting Study 1’s external validity is the use of Prolific workers, who might be motivated to provide “correct” answers (e.g. in attention checks) to ensure monetary compensation. In the context of our studies, “correct” answers could constitute showing resilience to (corrected) misinformation. In this way, Prolific users may differ systematically from broader populations. All in all, Study 2 appears particularly generalizable, although the context of a scientific study will inevitably differ from that of everyday (social) media use. Nevertheless, we believe both studies provide an important contribution to the (political) misinformation literature, as they employ distinct approaches to compare the effectiveness of different types of corrections to mitigate persuasive effects of misinformation.

Our results substantiate earlier findings (e.g. Ecker et al., 2020; Van Huijstee et al., 2022) by showing that (continued) persuasive effects are stronger for negative misinformation than for positive misinformation. This holds true both when debunks and when forewarnings are used. So, even though people are aware that information is incorrect, negative information continues to affect attitudes and behavior more than positive information. Evidently, most of the misinformation we are confronted with, especially in a political context, is negative (e.g. placing political actors in a negative light). Our research suggests that deceptively spreading negative mis/disinformation may be effective, despite efforts of fact-checkers and journalists to correct it. This makes it even more crucial that researchers and media outlets develop potent strategies to counter misinformation’s persuasive effects. In addition, these findings highlight the need to strengthen individuals’ information selection and evaluation skills, as exposure alone, even without belief, can shape attitudes. Political campaigners and strategists should also be aware of the disproportionate impact of negative misinformation, as it may unfairly skew public opinion despite correction efforts.

In this light, we should note that the present work did not directly test mechanisms explaining negative misinformation’s persuasive impact and subsequent resilience to corrections. Future studies could attempt to directly measure potential mediators, such as misinformation’s personal relevance, accessibility, or affective load, thus deepening our theoretical understanding of the asymmetry between positive and negative misinformation effects. In addition, it might be worthwhile to explore alternative (i.e. non-political) news contexts in which positive news is more common (e.g. entertainment, sports, science, and technology), to investigate whether observed asymmetries hold there as well.

A potential limitation of our research could be the relevance of the stimuli to participants. Despite being set nationally, participants might have felt a psychological and physical distance from the fictional politicians featuring in the misinformation articles (cf. construal level theory; Trope and Liberman, 2010). This distance might for example have hampered the negative misinformation’s salience, in turn causing persuasive effects for positive and negative misinformation to be more similar than if the stimuli were more relevant. The psychological distance of the stimuli, however, was unavoidable, as we deemed it ethically irresponsible to expose participants to continually persuasive misinformation about relevant (existing, local) politicians. Nonetheless, using unknown politicians might have reduced participants’ cognitive and affective involvement in the news stories. This could have either decreased (through a lack of motivation) or increased (through a lack of critical processing; Petty and Cacioppo, 1986) persuasive effects.

Future research should also explore how forewarnings affect news consumers’ media selection. We investigated forewarning effects in a situation where all users were exposed to misinformation, regardless of the warning issued. In practice, forewarnings may persuade social media users to skip an article altogether, potentially making such warnings more effective in countering continued informational and persuasive influence than debunking: if a news article is not read, it cannot have persuasive effects. Interestingly, we do not know if forewarnings systematically lead to information avoidance, or whether they may instead motivate some readers to select the information (cf. Bijvank et al., 2009). Note, also, that in the case of skipping a news article due to a forewarning, its main persuasive message may still be effectively communicated by its headline.

In this study, we used a very straightforward forewarning strategy, modeled after strategies currently in use by social media platforms, and (for reasons of internal validity) congruent with the debunking strategy we used. Notably, however, in recent misinformation research much more intricate pre-emptive correction strategies have been developed, such as inoculation (Van der Linden, 2022). Such strategies involve, for example, technique-based verification methods, exposure to misinformation examples, and serious gaming to enhance users’ persuasion knowledge (e.g. Roozenbeek and Van der Linden, 2019). It is likely that such more intricate pre-emptive strategies are more effective in reducing persuasive effects than our straightforward forewarnings and debunks, although it should be noted that inoculation studies also show more success in countering informational than persuasive misinformation effects (Droog et al., 2024). Future studies might systematically explore which factors of pre-emptive corrections drive their effectiveness in mitigating persuasive misinformation effects.

In addition, future research should look into other factors that might moderate corrections’ effectiveness in reducing persuasive impact, such as source credibility (of both the misinformation and its correction), and trust in (fact-checking) institutions (e.g. Martel and Rand, 2023). Exploring potentially moderating individual traits, including need for closure, media literacy, openness to experience, or partisanship, could reveal important additional insights into how variables that are known to affect the susceptibility to misinformation interact with the timing of corrections. Future research could also investigate a broader range of behavioral outcomes in the persuasive realm, such as online (mis)information sharing, liking, commenting, self-verifying, and searching for similar content. In our studies, we only measured intended voting behavior, because it related directly to the theorized persuasive effects. Nevertheless, a broader focus on behavioral outcomes could have increased the generalizability of our findings.

With the current work, we contribute to extant knowledge in the misinformation domain, showing that it is advisable to correct political misinformation post-exposure, since such corrections are better at reducing the persuasiveness of misinformation. We also show that, independent of the correction strategy used, the persuasive effects of negative political misinformation in particular are difficult to neutralize. Our results again corroborate that informational and persuasive effects of misinformation do not align. We find corrections to be effective in mitigating perceived accuracy of misinformation, but also that the misinformation remained persuasive nonetheless, especially when it was negative. While prior studies mainly focused on mitigating misinformation’s informational effects, our results suggest that we might instead want to focus on persuasive effects. For developers of social network sites who want to counter effects of misinformation, our recommendation would be to not (only) rely on forewarnings but (also) correct misinformation after user exposure.

Supplemental Material

Supplemental material, sj-docx-1-nms-10.1177_14614448251359988 for Combatting the persuasive effects of misinformation: Forewarning versus debunking revisited by Dian van Huijstee, Ivar Vermeulen, Peter Kerkhof and Ellen Droog in New Media & Society

Footnotes

Author statement

We confirm that the authors have access to the original data on which the article reports and that this manuscript has not been published elsewhere and is not under consideration by any other journal. All authors have approved the manuscript and agree with its submission to New Media & Society.

Data availability statement

Declaration of conflicting interest

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical approval and informed consent statements

Both studies received ethical approval from the FSW Research Ethics Review Committee (RERC) of the Vrije Universiteit Amsterdam. Participants provided their informed consent before participating.

Supplemental material

Supplemental material for this article is available online.
