Abstract

Platform governance is simultaneously a matter of public concern and a professional calling for Trust and Safety, the nascent field tasked with setting and enforcing standards of acceptable behavior on digital platforms. Yet we know little about how the growing professionalization of platform governance shapes content moderation practices. Using the schema of content abuse from the Trust & Safety Professional Association, we analyzed the Community Guidelines of 12 diverse livestreaming platforms. Our findings reveal significant alignment between professional guidelines and industry practices, which is especially pronounced for Twitch and YouTube. However, industry standards only partially address the policies of adult camming platforms and AfreecaTV, a Korean-based livestreaming service, revealing notable absences in how Trust and Safety imagines the boundaries of the industry. We reflect on the priorities and exclusions of emerging industry standards and conclude with a call for academics and practitioners to broaden the conversation around content moderation.

Platform governance is simultaneously a matter of public concern, bringing together states, corporations, and civil society organizations, and a professional calling for Trust and Safety, the nascent field tasked with setting and enforcing standards of acceptable behavior on digital platforms (Caplan, 2018; Shulruff, 2024; Zuckerman and Rajendra-Nicolucci, 2023). The increasing professionalization of platform governance is reflected in the rise of industry associations, 1 third-party vendors, 2 educational initiatives, 3 and the entry of the term “Trust and Safety” into public discourse, 4 coming a long way from its debut in a 1999 eBay press release announcing the launch of a dedicated customer service program (Cryst et al., 2021). As with any industry, professional associations “offer means and venues through which members can interact, provide valuable training and professional development to association members, and can play a role in establishing and enforcing collective standards for appropriate behavior” (Carter and Mahallati, 2019: 52). However, in the context of Trust and Safety, standard setting serves a meta function, setting standards for the people who set standards online. Given its potential public impact, the professionalization of Trust and Safety has become increasingly polarized, simultaneously promising greater capacity to address complex issues and threatening to reduce public accountability and consumer choice (Douek, 2020; Keller, 2024; Moran et al., 2025).

Yet the existence of Alt Tech platforms, which rhetorically “position themselves as bastions of unrestricted free speech and free flow of content” (Viejo Otero and Scharlach, 2025: 2), motivates skepticism about claims of industry consensus. Unsurprisingly, these platforms do not publicly participate in professional organizations, conferences, or research collaborations, even as they share features and monetization strategies with mainstream social media. A rare comparison of mainstream (YouTube, Twitter) and Alt Tech platforms (Bitchute, Parler) found that the latter lacked hate speech policies and were overall shorter and vaguer around enforcement (Buckley and Schafer, 2022). This suggests that Alt Tech platforms adopt a different approach to platform governance, although it remains unclear if this approach endures over time or extends to other platforms. Indeed, despite their oppositional branding, Alt Tech platforms are meaningfully constrained in their approach to content moderation by the policies and preferences of institutional actors such as advertisers, app stores, and payment processors (Cowls et al., 2023; Wade et al., 2024). Accordingly, recent work concludes that “little is known about Alt Tech platforms, their governance mechanisms and how these interact with their contents” (Siapera, 2023: 447).

The academic literature suggests two other fissures within the “imagined industry” of social platforms (Maris, 2021) related to the absence of adult content and disproportionate presence of the United States and Europe as regulatory contexts. Although adult content is a prominent topic of concern for content moderation (Gillespie, 2018), platforms that permit explicit material are often excluded from conversations on Trust and Safety. For example, there are no adult content platforms listed among the public sponsors of the Trust & Safety Professional Association, even as PornHub joined the organization in 2023. 5 Similarly, the Journal of Online Trust and Safety, which launched in 2021, has not published any research on adult content platforms, despite overlapping technological features and policy practices (Franco and Webber, 2024; Petit, 2025; Ruberg, 2022; Stegeman, 2024). As with Alt Tech platforms, the moderation of adult content is significantly constrained by industry actors like payment processors and faces regulatory pressure from US anti-trafficking legislation FOSTA/SESTA (Franco and Webber, 2024; Hill, 2024; Stardust et al., 2023). Finally, although Trust and Safety issues are “of global importance and often disproportionately impactful in other markets” (Eissfeldt and Mukherjee, 2023), Western companies and legislation dominate the conversation. 6

To investigate the policy priorities of the Trust and Safety profession and their alignment with industry practices, especially outside of Western, mainstream platforms, we focus on the case of livestreaming. The technical challenges of moderating live content (Cai et al., 2021) have led platforms to delegate significant responsibility to performers to moderate the behavior of their interacting audiences (Hernández, 2019; Jones, 2020; Taylor, 2018). This strongly incentivizes companies to publish detailed policy guidelines, making them ideal for comparison. In what follows, we discuss the role of Trust and Safety within the growing professionalization of platform governance and the state of the art in comparative platform policy research. We then introduce our method of data collection and analysis, using the schema of content abuse types from the Trust & Safety Professional Association (TSPA) to analyze the Community Guidelines of 12 diverse platforms. Our findings reveal significant alignment between industry standards and the policy documents of all platforms, which is especially pronounced for Twitch and YouTube. However, industry standards only partially address the policies of adult camming platforms and AfreecaTV, a Korean-based livestreaming service, revealing notable absences in how the field of Trust and Safety imagines the boundaries of the industry. We reflect on the priorities and exclusions of these emerging industry standards and conclude with a call for academics and practitioners to broaden the conversation around content moderation.

Trust and Safety as the professionalization of platform governance

While the language of Trust and Safety only appeared at the end of the 20th century (Cryst et al., 2021), the practices it refers to – setting and enforcing standards for acceptable behavior online – are much older. Such concerns predate the rise of the Web, playing an important role in networked proto-social media environments like Bulletin Board Systems, Usenet, and Multi-User Dungeons (Driscoll, 2022). These Internet communities, and the early Web-based versions that followed, were typically managed by volunteers and system administrators (Zuckerman and Rajendra-Nicolucci, 2023). As websites, portals, and eventually platforms expanded, the volunteer approach to moderation struggled to keep up, leading to the rise of “commercial content moderation” with contracted workers and complex automated systems responsible for enforcing the rules (Gorwa, 2024; Roberts, 2019). Recent legislation, including FOSTA-SESTA in the United States (Stardust et al., 2023) and the Digital Services Act and Digital Markets Act in the European Union (Keller, 2024), has placed greater regulatory pressure on social media corporations, bound up with greater public scrutiny (Marchal et al., 2024). In response, major social media platforms have turned to “network forms of organization” for the “development and enforcement of content policy making” (Caplan, 2023: 3456), collaborating with experts, civil society organizations, government agencies, and other industry actors, albeit in highly brokered arrangements.

Professionalization facilitates networked coordination, bringing people together to exchange information and develop shared standards (Carter and Mahallati, 2019). 7 No event better exemplifies the professional turn in platform governance than the launch of the TSPA in 2020 to support “the global community of professionals who develop and enforce principles, policies, and practices that define acceptable behavior and content online and/or facilitated by digital technologies.” 8 In other words, the association serves the people who create the paradigms that commercial content moderators operate under (Roberts, 2019). From the beginning, TSPA has received support from big tech companies, including social media (e.g., Meta, Twitch), dating (e.g., Match Group, Bumble), e-commerce (e.g., Airbnb, Depop), and, perhaps unsurprisingly, vendors that provide Trust and Safety services (e.g., ActiveFence, WebPurify). Membership is open to professionals from a wide range of backgrounds, reflected in the organization’s inclusion of the volunteer-oriented Independent Federated Trust and Safety Association. Although not all mainstream social platforms are formal sponsors – YouTube is notably absent – the majority are based in the United States, Europe, or Israel. The association brings professionals together through networking opportunities, a shared Slack space, annual events like TrustCon, and an extensive suite of online resources, including a curriculum that “defines core concepts, terms, and standard practices that make up the body of knowledge we call ‘Trust and Safety’” (Trust & Safety Professional Association, n.d.; see also, Moran et al., 2025). Because the association’s membership is not public, it is difficult to determine how many people working at Alt Tech, adult content, or non-Western platforms are included among its ranks.

Professionalization plays an important role in self-governance. From the voluntary ratings for film and television to the credentialing boards for medical professions to the extensive licensing, registration, and arbitration services provided by financial organizations, professional associations serve two goals: the “protection of the profession and protection of the public” (Akers, 1968: 477). While TSPA lacks formal credentialing or licensing functions, an early analysis of the organization suggests that it offers a “form of softer governance” that shapes industry standards beyond the approach of any individual company (Metwally, 2022: 9). Indeed, the online curriculum’s definition of the field offers a clear example of soft governance, directing how people should conceptualize and approach the work of platform governance, in line with platforms’ increasing reliance on “forms of soft governance tactics to address social and political expectations” (Scharlach, 2024: 3). Yet, it is

difficult to assess the extent of collaboration or level of influence one set of experts has over the other, however, since companies traditionally do not disclose details, choosing instead to refer to ‘external engagement’ or ‘consultation’ in the policy development process. (Metwally, 2022: 15; see also, Caplan, 2023)

To investigate the association’s “soft” policy priorities and their alignment with actual industry guidelines, we turn to comparative policy research.

Comparative platform policy research

Given growing restrictions on access to platform data and industry insiders (Gorwa, 2024), policy documents offer an essential resource for platform governance research. However, these documents are challenging objects to work with due to the gap between promise and practice. Indeed, policy is only one task among many for Trust and Safety professionals, who are also involved in incident response, research, product advice, and collaboration (Shulruff, 2024). Despite these structural ambiguities, policy documents define permissible conduct and provide evidence of how platforms navigate tradeoffs “in the types of abuse they choose to focus on” (Jiang et al., 2020: 88). As public-facing documents, policies must address the interests of diverse stakeholders, including users, developers, advertisers, regulators, investors, and competitors (Gillespie, 2018; Scharlach et al., 2024; Taylor, 2018). Consequently, such policies are “typically where we see the institutional principles come into sharpest relief” (Taylor, 2018: 250). Regardless of whether they are noticed, read, or contested, policy documents offer a foundation to compare expressions of policy priorities across platforms, analyze strategic corporate positioning, and trace the development of Trust and Safety as a professional field.

Comparative policy research has identified substantial commonalities across mainstream social media platforms. As Gillespie (2018) writes,

. . . the guidelines at the prominent, general purpose platforms are strikingly similar. This makes sense: these platforms encounter many of the same kinds of problematic content and behavior, they look to one another for guidance on how to address them, and they are situated together in a longer history of speech regulation that offers well-worn signposts on how and why to intervene. (p. 52)

At the discursive level, these policies rhetorically employ “strategic vagueness” in defining key issues such as harm (DeCook et al., 2022), harassment (Pater et al., 2016), and advertiser-friendly content (Kopf, 2024), allowing platforms substantial latitude in enforcement. Researchers have also highlighted the gendered dynamics of policies on nudity and sexual content (Ruberg, 2021; Taylor, 2018; Zolides, 2021), the stigmatized construction of sex work (Bhalerao and McCoy, 2022), and the guiding principles that justify platform rules (Chan et al., 2023; Scharlach et al., 2024). Other work addresses temporal dynamics, often making use of the Platform Governance Archive (Katzenbach et al., 2023). For example, a longitudinal analysis of Facebook, Twitter, and Reddit found that the policies of all three platforms substantially increased in complexity over time (Dubois and Reepschlager, 2024). A study comparing policy changes and news coverage found that negative press attention positively predicts policy changes, shedding light on one of the mechanisms driving policy development (Marchal et al., 2024). Connecting moderation policies to practice, de Keulenaar et al. (2023) document the plasticity in how Twitter has defined and responded to objectionable content, flexibly adapting to public scrutiny and consumer preferences.

Despite commonalities in form, discursive address, and temporal dynamics, another line of research highlights variations in the substantive content of platform policies. For example, Jiang et al. (2020) compared the Community Guidelines of 11 major social media platforms and found substantial variation in the types of rules they contained, ranging from 66 on Facebook to only 18 on Discord. Subsequent research has further substantiated variation in the complexity and comprehensiveness of Community Guidelines, especially when accounting for more diverse types of platforms in terms of politics, geography, and commercial purpose (Nahrgang et al., 2025; Schaffner et al., 2024; Singhal et al., 2023). However, the high-level analysis of these studies leaves little space to reflect on the structural dynamics driving these differences. Furthermore, the granularity of the schema of rules (66 in Jiang et al., 2020 and 80 in Nahrgang et al., 2025) makes it difficult to directly compare policy priorities across platforms. And more importantly, the schema’s origins within a specific company’s guidelines (Meta) make the finding that Facebook has the greatest number of categories unsurprising.

Moving beyond the mainstream reveals further variance depending on the type of alternative platform involved. On the progressive end of the spectrum, Codes of Conduct on Mastodon offer a modern interpretation of federalist political theory (Gehl and Zulli, 2023). However, most research is concerned with the greater permissiveness of Alt Tech platforms and their relationship to “censorship” (Siapera, 2023). In addition to single-platform case studies on Bitchute (Siapera, 2023) and Gab (Van Dijck et al., 2023), there have been a few cross-platform policy investigations, including the comparison of mainstream and Alt Tech platforms discussed in the introduction (Buckley and Schafer, 2022). More recently, Viejo Otero and Scharlach (2025) compared how nine Alt Tech platforms rhetorically frame safety, finding that these platforms “critique or implicitly reject the legal and normative frameworks tied to safety by reframing moderation as censorship, and by championing the idea of free space for digitally mature individuals who self-regulate and self-defend against perceived digital threats” (p. 2). Together, these studies suggest that Alt Tech platforms express their ideological opposition to emerging content moderation standards through policy, even as other work argues that payment processors and app stores meaningfully constrain this opposition (Cowls et al., 2023; Wade et al., 2024). As the high-profile case of Parler’s removal and eventual reinstatement to Apple and Google’s app stores illustrates, “app store governance is an instance of platform governance of platforms” (Cowls et al., 2023: 3).

Many of these institutional pressures cross-apply to adult content platforms. As Webber and Franco (2024) observe, “Adult labour platforms do not develop their policies arbitrarily or in a vacuum; they do so in order to comply with the stringent rules developed and enforced by payment intermediaries, that is, credit card networks and payment processors” (p. 2; see also Hill, 2024; Stardust et al., 2023). Adding further context, a large-scale analysis of Privacy Policies and trackers embedded within porn websites supports the claim that adult platforms operate within a “parallel ecosystem” of advertising (Maris et al., 2020). Similar to mainstream social media, adult content platforms employ “vague terms” to police sexual content (Hill, 2024: 176) and struggle to address the specificity of fabricated media formats like animation and Generative AI (Petit, 2025). A comparison of BongaCams, LiveJasmin and Chaturbate found that the camming platforms’ policies consistently “minimize the connection between their platforms and work, sex, and sex work” (Stegeman, 2024: 2), and have significant prohibitions on non-normative sexual behavior (see also Stardust, 2024; Webber and Franco, 2024). Although important research on the governance of adult content platforms is being published in interdisciplinary venues like New Media & Society and topical journals like Porn Studies, these platforms remain underrepresented in journals focused on Internet policy and content moderation.

While all the platforms we’ve discussed so far are transnational, only this final domain of research foregrounds the importance of geographic and regulatory context. These factors shape how individual companies adjust their policies for different markets, as well as the dynamics between companies operating in different parts of the world. Exemplifying the former, Ahn et al. (2023) document how Facebook “splinters” its moderation efforts via policy exceptions and distinct reporting tools to maintain compliance within different regulatory environments. There is also a growing body of work comparing the regulatory approaches of the United States and China, identifying a “dual-track” of platform governance that has “different mechanisms with different characteristics” (Liu and Yang, 2022) which prompts companies to engage in “parallel platformization,” creating products with similar features but different policies for mainland versus transnational markets (Kaye et al., 2021: 245). Although the particular relationship between the United States and China has begun to receive more international attention with platform studies’ geopolitical turn (Gray, 2021), other parts of Asia continue to be overlooked, even as the continent ‘contains the world’s largest population of active social media users, the most vibrant and diverse digital economies and Influencer cultures, as well as among the most dynamic and complicated Internet regulations and policies’ (Abidin et al., 2025: 1).

Despite the lively body of research on platform policies, the minimal comparison across industry sectors or regulatory contexts makes it difficult to identify patterns. Furthermore, researchers tend to focus on the use of language, examining the rhetorical construction of key policy issues like harm, harassment, or sex work. While these approaches contribute to our understanding of policy issues, they do not offer a holistic account of the structure, rules, and general orientations of platform policies (Braman and Roberts, 2003). To facilitate such a comparison, we turn to livestreaming as a particular type of social platform that crosses industrial and regulatory environments. Livestreaming, also called camming in the adult context, is defined by the live broadcast of video, audience interactions through chat windows, the integration of direct monetization features, and the delegation of moderation to broadcasters (Hernández, 2019; Jones, 2020; Ruberg, 2022; Taylor, 2018). Live content poses distinct moderation challenges due to the unpredictability and ephemerality of real-time interactions. Although automation offers some help, broadcasters must ensure their audiences act appropriately. This delegation of responsibility strongly incentivizes platforms to publish detailed and direct policies about acceptable use. However, effectively comparing such policies requires a new methodological approach that moves beyond the focus on single issues or rhetorical justifications.

Methods for mapping policy priorities

We developed a novel protocol for mapping policy priorities, defined as overarching areas of regulatory concern. Based on previous longitudinal research, we anticipate that policy priorities reflect more enduring commitments than individual rules (e.g., Marchal et al., 2024). Our protocol consists of five steps: platform selection, policy collection, rule identification, rule classification, and priority analysis.

Step 1: platform selection

To facilitate comparison, we ensured that each platform: (1) offered livestreaming as a primary service, (2) addressed a transnational audience, and (3) had English-language policies. We sought out diverse platforms that reflected the potential industry fissures addressed above, selecting two mainstream platforms (YouTube, Twitch), three Alt Tech platforms (Kick, DLive, Rumble), one non-Western platform (AfreecaTV 9 ), and six adult content platforms. Within the latter group, we included platforms focused on both private shows (LiveJasmin, Streamate) and public shows (Chaturbate, Myfreecams, BongaCams, Stripchat), following the recommendations of previous research (Jokubauskaitė et al., 2023).

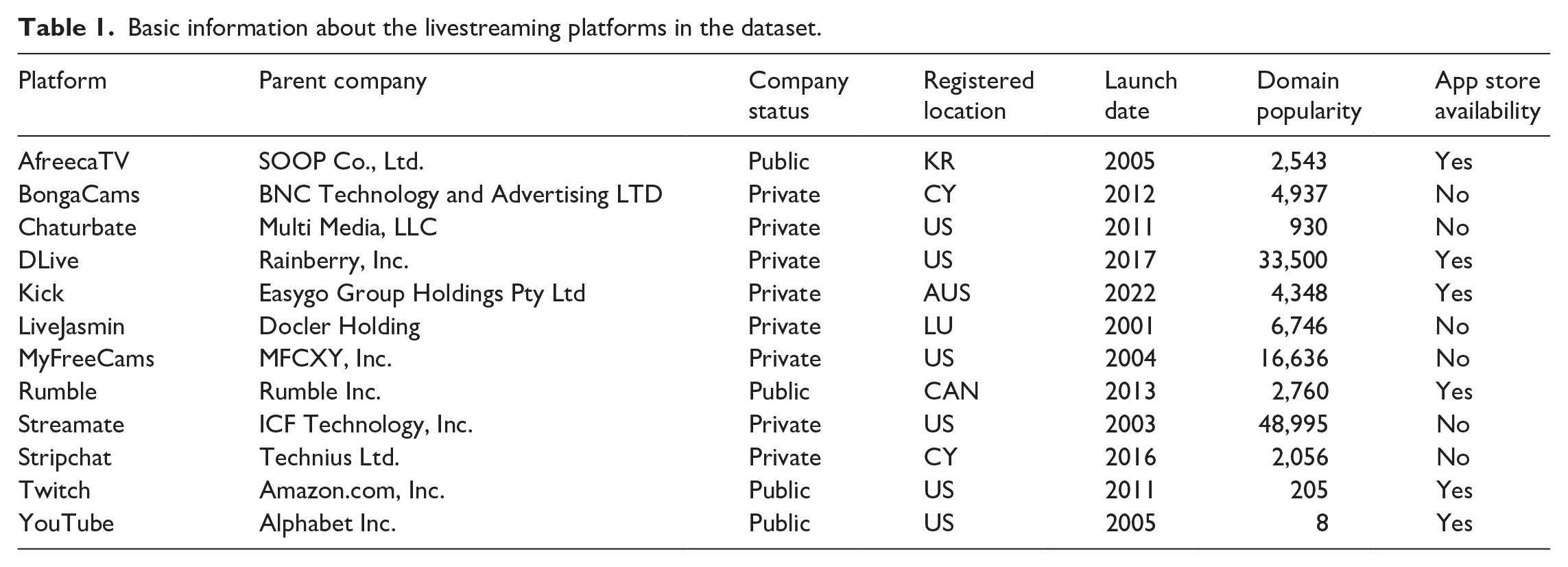

Table 1 contains an overview of our sample compiled from policies, platform websites, industry news, business registries, and investor relations reports. We used the Tranco list generated on 31 July 2024 10 for domain popularity (Le Pochat et al., 2019). Most companies are registered in the United States. LiveJasmin is the oldest, launched in 2001, and Kick is the newest, launched in 2022 with financial support from an online crypto casino company (Craig, 2023). While comparable user metrics are not available, 9 of the 12 platforms’ domains fall within the top 10,000 most popular sites worldwide. In addition, since adult platforms typically operate a network of “white label” websites that feature the same content (Jokubauskaitė et al., 2023), our table may under-represent their popularity (see Supplemental Appendix 1).

Table 1. Basic information about the livestreaming platforms in the dataset.

Step 2: policy collection

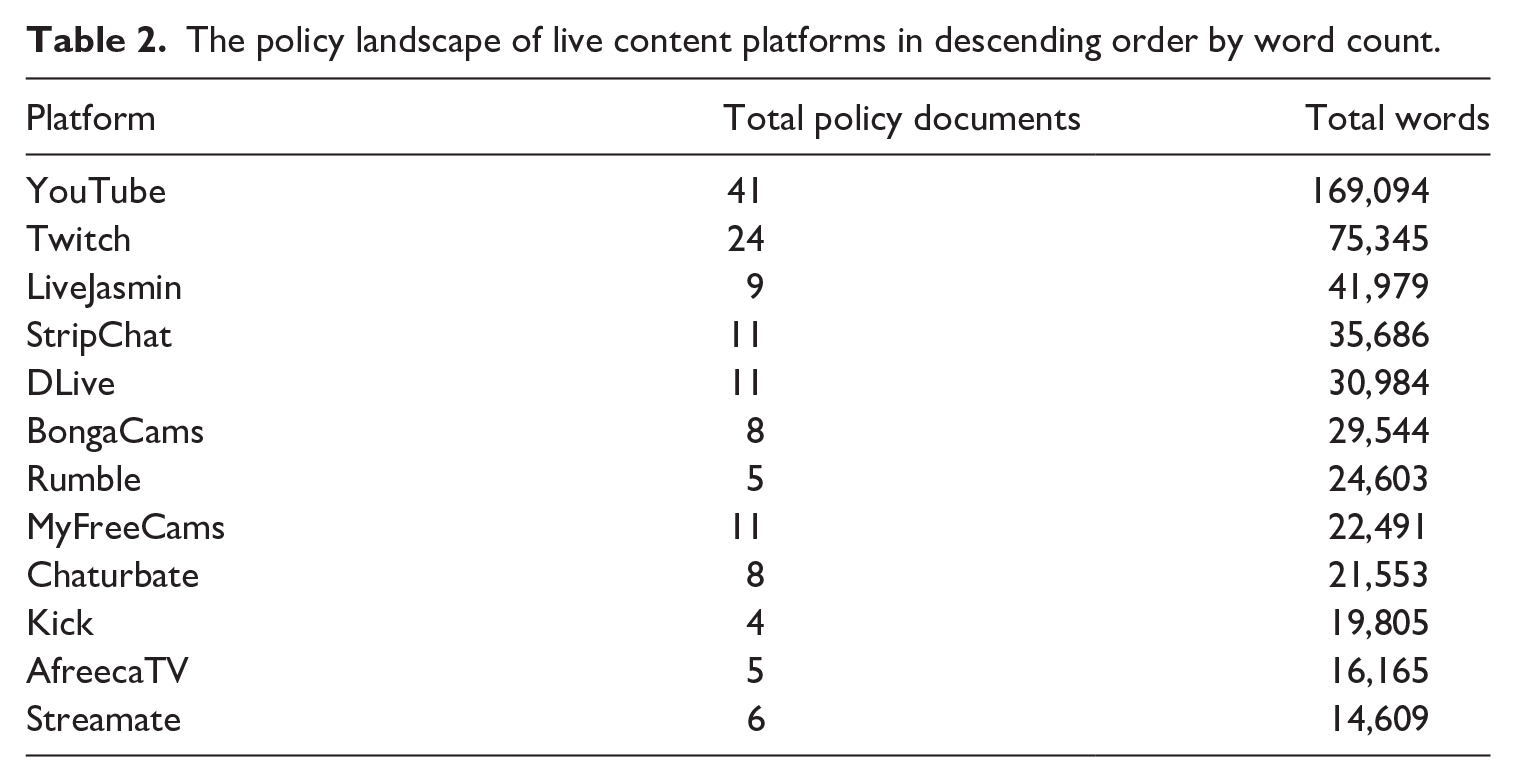

Next, we manually collected policies, employing an iterative process where we (1) visited the homepage of the platform and looked for policy links, (2) clicked the links to additional policies contained within each document, (3) conducted keyword searches for platforms and policy names identified in previous research, 11 and (4) conducted keyword searches for any new policy types identified. We copied the text of each policy into separate files, available upon request, resulting in a set of 143 documents totaling 501,858 words (see Table 2). If platform policies are “like a constitution, documenting the principles as they have been forged over routine encounters with users and occasional skirmishes with the public” (Gillespie, 2018: 45–46), the commercial regulatory documents of platforms are lengthy even by constitutional standards. According to research from the Comparative Constitutions Project, the average national constitution is 22,291 words, 12 only slightly more than half the length of the average word count of the livestreaming policies included in our dataset. There was considerable variation in the number and naming of policies, but on average, platforms had nearly 12 policy documents, totaling over 40,000 words.

Table 2. The policy landscape of live content platforms in descending order by word count.
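The per-platform averages and the constitutional comparison reported in Step 2 follow from simple arithmetic on the corpus totals. The sketch below reproduces that arithmetic using only figures stated in the text; the variable names are our own illustrative choices, not part of the original protocol.

```python
# Corpus totals as reported in Step 2; no new data is introduced here.
TOTAL_DOCUMENTS = 143
TOTAL_WORDS = 501_858
N_PLATFORMS = 12
# Average national constitution length cited from the Comparative Constitutions Project.
AVG_CONSTITUTION_WORDS = 22_291

# Mean number of policy documents and words per platform.
docs_per_platform = TOTAL_DOCUMENTS / N_PLATFORMS
words_per_platform = TOTAL_WORDS / N_PLATFORMS

# How long the average constitution is relative to the average platform's policies.
constitution_ratio = AVG_CONSTITUTION_WORDS / words_per_platform

print(f"Documents per platform: {docs_per_platform:.1f}")
print(f"Words per platform: {words_per_platform:,.0f}")
print(f"Constitution length as a share of platform policies: {constitution_ratio:.2f}")
```

The ratio lands slightly above one half, which is the basis for the comparison with national constitutions.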

Step 3: rule identification

Given our interest in content moderation, we narrowed our focus to documents that directly addressed user behavior. These policies are typically referred to as Community Guidelines (Gillespie, 2018), although only four platforms in our dataset had documents named as such (YouTube, Twitch, Kick, Stripchat). For the rest, we manually identified policies related to user conduct (see Supplemental Appendix 2). We entered rules from each policy into a spreadsheet that preserved the document’s organizational structure. Twitch’s Community Guidelines, for example, are organized into five main sections of Safety, Civility & Respect, Illegal Activity, Sensitive Content, and Authenticity. Our spreadsheet converted the section names into “1st order categories.” Within each category, there are further divisions (e.g., Civility & Respect contains three “2nd order” categories of Hateful Conduct, Harassment, and Sexual Harassment). This process continued until we reached a “terminal rule,” or a guideline for behavior with no further specifications (e.g. “Repeatedly negatively targeting another person with sexually-focused terms, such as ‘whore’ or ‘virgin’”). YouTube had the most rules (283) and the most tiers of categorization (6), while Rumble had the fewest rules (24), and both Chaturbate and LiveJasmin only used a single tier to distinguish between rules for broadcasters and audience members (see Supplemental Appendix 3).
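The tiered structure described in Step 3 can be thought of as a tree in which categories nest until they reach terminal rules. The following is a minimal sketch of that idea, assuming a nested-dictionary representation rather than the spreadsheet we actually used. The section names and the one quoted rule come from the Twitch example above; the placeholder strings and both function names are hypothetical.

```python
from typing import Union

# A dict maps category names to sub-nodes; a list holds terminal rules.
RuleTree = Union[dict, list]

# Hypothetical fragment modelled on the Twitch example in the text.
# Placeholder strings stand in for rules not quoted in the paper.
twitch_fragment: RuleTree = {
    "Civility & Respect": {  # 1st order category
        "Hateful Conduct": ["<placeholder terminal rule>"],  # 2nd order category
        "Harassment": ["<placeholder terminal rule>"],
        "Sexual Harassment": [
            "Repeatedly negatively targeting another person with sexually-focused terms",
        ],
    },
}


def count_terminal_rules(node: RuleTree) -> int:
    """Count guidelines that have no further specifications beneath them."""
    if isinstance(node, list):
        return len(node)
    return sum(count_terminal_rules(child) for child in node.values())


def category_tiers(node: RuleTree) -> int:
    """Return the number of category tiers above the deepest terminal rule."""
    if isinstance(node, list):
        return 0
    return 1 + max((category_tiers(child) for child in node.values()), default=0)


print(count_terminal_rules(twitch_fragment), category_tiers(twitch_fragment))
```

Under this representation, a platform that uses a single tier to separate broadcaster and audience rules would be a dictionary with two keys, each mapping directly to a list of terminal rules.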

Step 4: rule classification

To assess the alignment between emerging industry standards and platform practices, we developed a codebook based on TSPA’s schema of online abuse types, which organizes 24 rules into seven categories of (1) Violent & Criminal Behavior, (2) Regulated Goods & Services, (3) Offensive & Objectionable Content, (4) User Safety, (5) Scaled Abuse, (6) Deceptive & Fraudulent Behavior, and (7) Community-specific Rules. 13

For clarity, we italicize types of abuse and

Step 5: priority analysis

We tabulated the results for each platform, converting sums to a percentage of total rules to give a sense of relative attention to particular topics. We then created ranked lists of abuse types for each platform. Finally, the first two authors hosted a structured workshop with all members of the research team to discuss the results and collaboratively interpret their significance, reflecting on patterns, absences, and claims from previous research.
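The tabulation in Step 5 can be sketched as follows: count the coded rules for a platform, convert the counts to percentages of that platform's total, and rank the abuse types. This is an illustrative sketch, not the spreadsheet workflow we used; the function name `priority_profile` and the example counts are hypothetical, and only the abuse type labels come from the TSPA schema.

```python
from collections import Counter


def priority_profile(coded_rules):
    """Rank abuse types by their share of a platform's coded rules.

    `coded_rules` holds one abuse-type label per terminal rule.
    Returns (abuse_type, percentage) pairs, largest share first;
    ties are broken alphabetically so the ranking is deterministic.
    """
    counts = Counter(coded_rules)
    total = sum(counts.values())
    if total == 0:
        return []
    shares = {abuse_type: 100 * n / total for abuse_type, n in counts.items()}
    return sorted(shares.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical codes for one platform, invented purely for illustration.
example_codes = (
    ["User Safety"] * 5
    + ["Scaled Abuse"] * 3
    + ["Regulated Goods & Services"] * 2
)

for abuse_type, share in priority_profile(example_codes):
    print(f"{abuse_type}: {share:.1f}%")
```

Expressing priorities as percentages rather than raw counts keeps platforms with very different numbers of rules comparable.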

Industry standards and their discontents

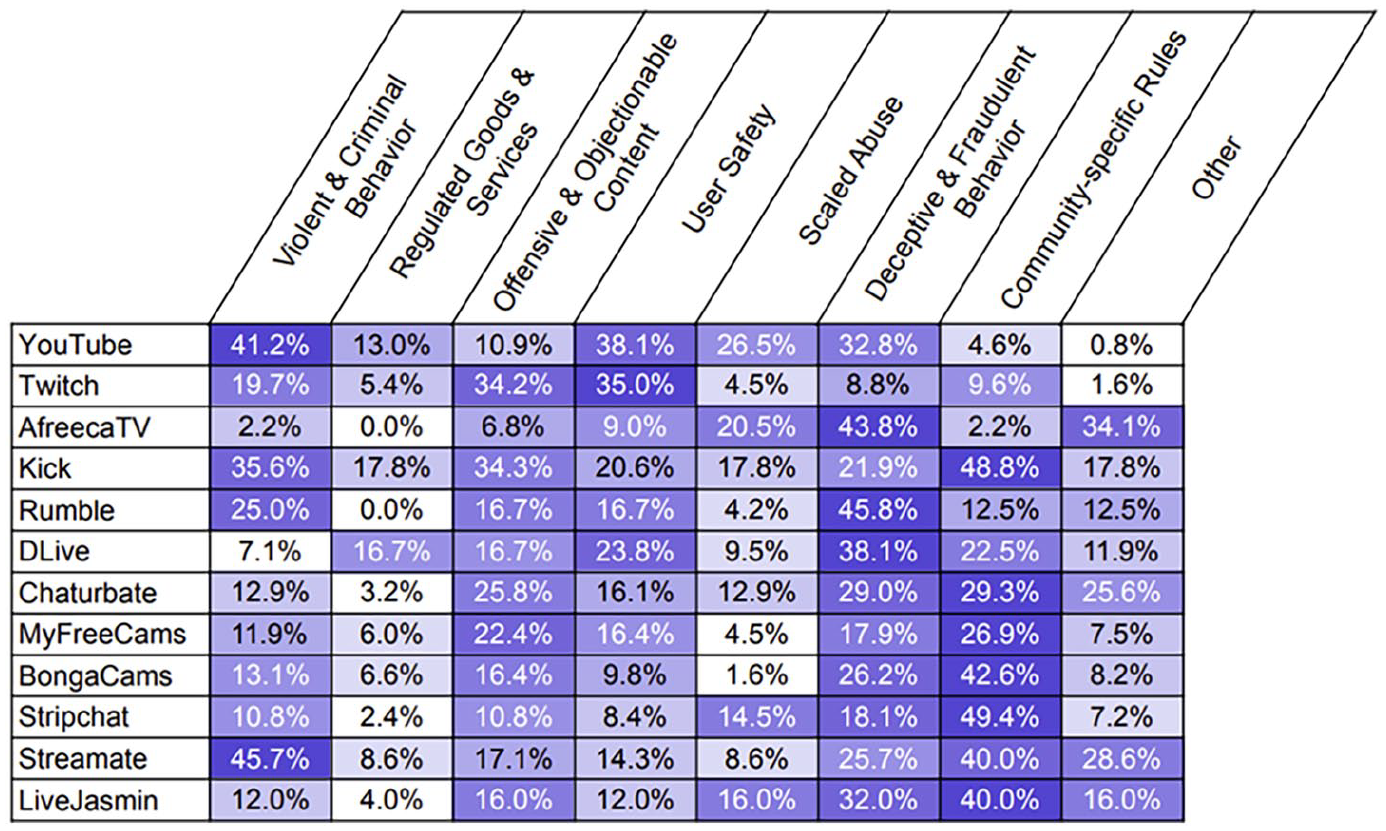

Websites, applications, and platforms have long published guidelines for acceptable behavior under an assortment of names, including Acceptable Use Policy, Code of Conduct, and the more recent Community Guidelines. The Trust & Safety Professional Association’s online curriculum for policy development outlines emerging industry standards for the types of abuse that policies should address. Our analysis of the user policies of 12 diverse livestreaming platforms reveals substantial alignment between these standards and actual policy documents. Although only four platforms had policies explicitly labeled “Community Guidelines,” all platforms contained policies that addressed six out of seven of the abuse types outlined in the TSPA schema: Violent & Criminal Behavior; Offensive & Objectionable Content; User Safety; Scaled Abuse; Deceptive & Fraudulent Behavior; and Community-specific Rules. The seventh type of abuse, Regulated Goods & Services, was absent from Rumble and AfreecaTV’s policies (Figure 1), meaning that they lacked explicit rules about

Figure 1. A heatmap of policy priorities in Community Guidelines, where darker colors indicate stronger priorities.

If we move from general types of abuse to specific rules, we find a little more variation. While no platform had policies that contained all 24 of the rules elaborated in the TSPA schema, 18 of the rules appeared in at least half of the platforms (see Supplemental Appendix 5). Furthermore, three platforms contained nearly all of the rules: Stripchat only lacked a rule prohibiting

While all livestreaming platforms generally align with industry standards, two platforms most closely approximate the industry ideal: YouTube and Twitch. Both have extensive policies that cover all types of abuse and nearly all rules from the TSPA curriculum (see Table 2, Supplemental Appendix 5). Further evidence of alignment can be found in the low percentage of rules coded as Community-Specific or Other. Compared to other platforms, YouTube and Twitch’s policies place greater priority on User Safety, due, in large part, to elaborate discussions of what counts as

If the policies of YouTube and Twitch operate squarely within the “imagined industry” of Trust and Safety (Maris, 2021), a closer look is needed to assess the potential fissures we identified in the paper’s introduction: Alt Tech platforms, adult content platforms, and non-Western platforms. In what follows, we show how these fissures play out in terms of policy priorities and situate our findings within claims from previous research.

Alt Tech platforms: all rhetorical bark, no policy bite

By definition, Alt Tech platforms position themselves in opposition to industry standards around content moderation, typically characterizing it as a form of “censorship” or “bias” (Siapera, 2023). This oppositional stance extends to the livestreaming context. All three of the Alt Tech platforms we analyzed – Kick, DLive, and Rumble – rhetorically position themselves against Big Tech norms. Kick emphasizes free expression, beginning their Community Guidelines by announcing that they “value the importance of constructive dialogue over knee-jerk reactions often associated with ‘cancel culture.’” DLive leans toward a transactional community appeal: “the service provides creators with an opportunity to earn rewards from their viewers and the TRON blockchain.” Rumble avoids a preamble altogether by opting against providing separate Community Guidelines. Each choice expresses an alternative ethos: the rebellious style of Kick fits with the branding of the platform (Craig, 2023), the techno-utopianism of DLive aligns with the strategies of other blockchain-affiliated social media (Nagappa, 2023), and Rumble’s minimization of rules allows it to signal regulatory compliance as a publicly traded company (Keller, 2024) while avoiding the appearance of a heavy-handed approach (Buckley and Schafer, 2022; Siapera, 2023). Rumble’s rules further signal their allegiance to “alternative” policies through the selection of Antifa as an example of a group that promotes “violence or unlawful acts.”

Yet the oppositional positioning of Alt Tech quickly fades away when it comes to policy priorities. The policies of all three platforms address each of TSPA’s major topics of abuse (except for Rumble’s lack of rules about Regulated Goods & Services, discussed above). Their rules broadly focus on Deceptive & Fraudulent Behavior, Offensive & Objectionable Content, and User Safety, although Kick and Rumble also prioritize Violent & Criminal Behavior. Here we both align with and diverge from Buckley and Schafer’s (2022) earlier analysis of Parler and Bitchute. The alignment comes from a shared focus on fraud and inauthentic behavior, as well as a partially shared focus on violent content. The divergence comes from our findings about the prioritization of user safety and offensive content, concerns that were relatively absent in their analysis. Furthermore, these differences may be shrinking; while not included in our coded set, the most recent Community Guidelines for Parler 14 open with an explicit acknowledgment of “the harmful impact of hate speech,” even as they “reject censorship in favor of counter-speech” as a solution, and the very first section of Bitchute’s Community Guidelines is now ‘Respect & Decency.’ 15 Similarly, while previous research on Alt Tech platforms has emphasized their relative brevity and lack of explanation (Buckley and Schafer, 2022; Siapera, 2023), our analysis challenges this tendency. Kick is near the top of our sample in terms of the number of rules, DLive is in the middle, and Rumble is at the very bottom, highlighting diversity in the structure of Alt Tech policies (see Supplemental Appendix 3).

Another area of alignment between Alt Tech livestreaming platforms and industry standards concerns their consistent restrictions on

Alt Tech platforms must walk a fine line between crafting policies necessary to secure their continued operation and obscuring the existence of policies that could draw ire from their target user base. As Viejo Otero and Scharlach (2025) argue, while “Alt Tech platforms rhetorically perform compliance with mainstream references to safety by adding rules about what is harmful and what they are legally obligated to enforce,” they also “position themselves as anti-performance performers” (p. 8). This rebellious ethos generates further tension when, for example, a platform is publicly traded, as the case of Rumble illustrates. What Rumble users want does not necessarily align with what Rumble investors want, leaving the platform to perform a balancing act of signaling regulatory compliance and risk management to investors without alienating their users. Thus, where Alt Tech platforms most often differ from their mainstream compatriots is not in the existence of policies on particular topics, but in their rhetoric. While it may be an overstatement to claim that the ideological opposition of Alt Tech platforms is all rhetorical bark, especially without analyzing enforcement, there is certainly less policy bite than promised.

Speech without bodies, bodies without speech

The relationship between adult content platforms and the field of Trust and Safety is lopsided, with the former paying significantly more attention to the latter. All of the adult content platforms in our dataset had rules addressing all seven types of abuse outlined by the TSPA. Their policies prioritized Deceptive & Fraudulent Behavior, Offensive & Objectionable Content, and Violent & Criminal Behavior, similar to the Alt Tech platforms, but without much attention to User Safety, discussed below. Stripchat, the newest adult content platform in our dataset, most closely aligns with the mainstream TSPA industry ideal, reflected in the platform’s inclusion of a policy explicitly named Community Guidelines and coverage of every type of rule except for malware. While many rules were standard, we found some unique interpretations, including the prohibition against “misleading” the audience about the performer’s gender on BongaCams and LiveJasmin. Similarly, the articulation of Offensive & Objectionable Content rarely invoked

While the standard concerns of Trust and Safety are well represented in adult content platforms, our analysis also suggests that these platforms have additional policy priorities. Adult content platforms stand out for their strong prioritization of Community-Specific Rules, featured in up to half of all rules. These include format restrictions on pre-recorded content or links to other websites, as well as extensive content-based restrictions, including bans on advertisements, sleeping, having an animal in the room, showing men, or not showing one’s face – activities that do not raise concerns on mainstream platforms but become fraught when brought into the proximity of sex. Other speech restrictions seem more tied to business interests, including BongaCams’ prohibition on expressing a “Frivolous and/or disrespectful attitude toward the website,” Chaturbate’s ban of “service uniforms including, by way of example only, military uniforms or religious attire,” and Stripchat’s policy against “Asking users to cover your personal expenses like utility bills, medical treatment, loans, or any other expense that would lead users to believe that you are beseeching.” Although the restrictions against advertising and external links can be a way to ensure compliance with US legislation FOSTA-SESTA, they may also “obstruct webcammers’ other (sex) work, to limit competition” (Stegeman, 2024: 338). Adult livestreaming platforms are thus more permissive in terms of sexual content and nudity, but more restrictive of other forms of speech.

As the discussion of speech restrictions suggests, adult content platforms must also try to appease diverse audiences with competing interests; indeed, this is a trademark of platform policies and an inherent challenge of content moderation as “an exhausting and unwinnable game” (Gillespie, 2018: 73). Yet adult content platforms reveal a distinct formulation of these tensions in the trade-off between the idea of “free” expression (often conceptualized as the American idea of free speech, especially by Alt Tech platforms) and sexual expression. All of the general-audience platforms in our sample have strong restrictions on sexual expression, accompanied by more liberties for other forms of expression, even serving as the main branding of Alt Tech platforms like Rumble and Kick. Conversely, adult content platforms are inherently more permissive when it comes to sexual expression but have substantially more restrictions on broadcaster speech. Of course, adult content platforms still have significant constraints on sexual expression and its context, including much stronger regulations around who is allowed to express themselves, with performers needing to sign up, verify their identity, and have their faces appear on camera whenever they broadcast (see also Franco and Webber, 2024). 17 Livestreaming performers thus face a choice between platforms that permit greater speech expression or greater bodily expression, although in neither case are permissions unlimited.

The tradeoff between speech and sexual expression is not related to any inherent quality of expression itself. Free speech activists and organizations often champion sexual expression under the umbrella of free speech, just as sex work and pro-pornography activists appeal to the importance of free speech (Strossen, 2024). Instead, the enduring distinction suggests the relevance of institutionalized factors. For adult content platforms, the inclusion of sexual expression introduces pressing risk management challenges, making it important for these platforms to demonstrate compliance with state regulations and the concerns of payment processors like Visa and Mastercard (Franco and Webber, 2024). For general-audience platforms, concern with the stigma of adult content animates attempts to draw boundaries around legitimate work (Taylor, 2018). In the face of livestreaming’s dubious legitimacy, researchers have documented the long history of Twitch’s attempts to regulate, downplay, and distance itself from sexual expression (Ruberg, 2021; Taylor, 2018; Zolides, 2021). Other industry actors also shape the decisions of general-audience platforms, including app stores’ prohibition on pornography (Tiidenberg, 2021) and advertisers’ concerns with brand safety (Caplan and Gillespie, 2020). In other words, the siloing of sexual expression and speech expression onto separate platforms is primarily a question of regulatory convenience, allowing platforms on both sides of the divide to limit their overall risk exposure and target particular audiences. But this convenience is, itself, the result of a long legacy of policy choices on the part of lawmakers, corporate leaders, and professional organizations (Faucette, 1995; Strossen, 2024). Some of these decisions have become so embedded in technical systems and policy norms that it is essential to remember that they were, and continue to be, choices.

Lost in translation: the Korean context

In Korea, AfreecaTV has long “been one of the most popular platforms for the live streaming of playing games, cooking, eating, and putting on make-up since 2006,” especially among the younger generation (Song, 2018: 1). While the platform also courts transnational creators and audiences with overseas operations in Hong Kong, Thailand, and the United States (Hwang, 2024), its policies reflect the company’s position within the Korean regulatory context. Indeed, AfreecaTV’s Community Guidelines fit the least well with the TSPA schema, containing only 15 of the 24 standard types of rules. Furthermore, over 34% of their policies did not correspond with any established categories. This means that AfreecaTV does not, for example, have any rules on Regulated Goods & Services and very few dealing with Violent & Criminal Behavior – two areas that have historically motivated international, cross-platform cooperation (Douek, 2020). Rules dealing with

Many of the rules classified as Other refer to legal compliance, in line with Korea’s “pervasive system of restricting political speech online and offline” (Ahn et al., 2023: 2852). Sometimes the policies specify the relevant law, as in the case of the Juvenile Protection Act or the Telecommunication Act, and sometimes the law is unspecified, as in the more ambiguous imperative to avoid the “Use of names that are considered slang, antisocial and contrary to relevant statutes.” In the context of Korea’s speech restrictions designed to maintain the “ethical standards” of online communities (Eggleston and Lloyd Wilson, 2023: 275), absences may, counterintuitively, indicate the significance of a policy priority. Some concerns may not be elaborated within private forms of governance because they are covered by public regulations, making it superfluous for companies to outline independent visions. Thus, AfreecaTV’s lack of rules around

Conclusion

If platform policies represent a “monopolization of judgment” (Hallinan, 2021: 716), our comparison of the Community Guidelines of twelve diverse livestreaming platforms indicates a standardization of judgment as well. The policy curriculum published by the Trust & Safety Professional Association broadly maps onto actually-existing policies, suggesting a shared understanding of the problems facing online communities and the purpose of platform policies. That mainstream platforms like YouTube and Twitch align with industry standards is unsurprising. Indeed, both companies engage in “networked governance” (Caplan, 2023) and have supported efforts to professionalize the field of Trust and Safety. While the remaining platforms are further removed from industry ideals, lacking established rule types or containing rules that do not fit the TSPA schema, their substantial overlap remains surprising. While mainstream social media, Alt Tech platforms, adult content platforms, and non-Western platforms differ in their formulation and enforcement of rules, they publicly address the same problems, lending credence to the claims that livestreaming platforms, regardless of branding, geography, or market sector, face shared structural pressures involving the behavior of their users and the need to appease various regulators and industry actors.

Despite the broad uptake of industry standards for content moderation, our analysis also shows how those same standards exclude particular actors from how the industry is imagined and, more importantly, addressed. The first exclusion concerns adult content platforms, which minimally feature in the growing crop of professional associations, although individual platforms and employees may participate (Luscombe, 2024). Indeed, the policy documents of camming platforms demonstrate clear engagement with industry conventions. This alignment was most pronounced in the case of Stripchat, which follows the same document naming conventions, rhetorical styles, and rule types of mainstream social media. Adult content platforms also have extensive community-specific restrictions on the form and content of broadcasts, amounting to much more stringent control over speech and identity verification that has substantial parallels to the Chinese livestreaming context (Qiu and Dwyer, 2023). This leads to the second exclusion, which concerns non-Western platforms. While we only examined a single platform in this category, AfreecaTV’s policies had the highest share of rules that did not fit with the TSPA schema, most of which were related to national legislation. Furthermore, some of the absences in AfreecaTV’s policies, especially the lack of rules related to

Debates over the future of platform governance offer competing visions of a divided ecosystem with contrasting visions of speech regulation (Van Dijck et al., 2023) and an increasingly homogeneous approach to platform governance dominated by the big tech companies (Douek, 2020; Keller, 2024). We argue that the debate is too narrow; although there is significant policy consolidation, we should not restrict our analysis to the usual subjects. Mainstream, Alt Tech, adult, and non-Western platforms offer similar services, facilitated by similar technologies, within a similar “stack” of regulation (Cowls et al., 2023). Imagined or not, they inhabit the same industry, and both practitioners and academics would benefit from expanding their understanding of where Trust and Safety happens and what forms it takes. While there may be reasons to focus on particular sectors, these should be active choices subject to justification rather than taken for granted. The tradeoff between “free” expression and sexual expression that plays out across livestreaming platforms is institutional, not inherent, and a broader examination of different aspects of the industry can foreground relevant institutional differences. Parochialism, whether in terms of place or pornography, holds back our understanding of how to create social communities online.

We conclude with directions for future research. A major limitation of our study is its focus on transnational platforms with English-language policies. The inclusion of AfreecaTV demonstrated the salience of different regulatory contexts, and future research could test the broader applicability of emerging industry standards for Trust and Safety, particularly within China, which has the most developed livestreaming market and a distinct relationship between commercial and state modes of regulation (Liu and Yang, 2022; Qiu and Dwyer, 2023). In addition, policy formation is only one part of Trust and Safety and does not directly track with enforcement practices (de Keulenaar et al., 2023; Dergacheva and Katzenbach, 2024); future research would thus benefit from comparing different modes of content enforcement across platforms and types of abuse. Finally, to better understand the mechanisms influencing policy development, researchers could investigate personal motivations and organizational dynamics through ethnographic observation (Taylor, 2018) and practitioner reflections (Maxim et al., 2022). Policy priorities emerge from past practices and anticipated futures, and our cross-sector analysis attests to the value of comparative approaches for surfacing otherwise overlooked factors shaping the governance of our complex platform ecosystem – including, increasingly, the soft governance exerted by professional associations.

Supplemental Material

Supplemental material, sj-docx-1-nms-10.1177_14614448251357225 for Priorities and exclusions within Trust and Safety industry standards by Blake Hallinan, CJ Reynolds, Rebecca Scharlach, Dana Theiler, Noa Niv, Omer Rothenstein, Isabell Knief and Yehonatan Kuperberg in New Media & Society

Footnotes

Acknowledgements

Blake Hallinan would like to thank the real conversations that took place with colleagues at Global Digital Intimacies and the imagined conversations that took place with policy-related podcasts (Moderated Content, The Sunday Show, Rational Security, Ctrl-Alt-Speech, Views on First, and Tech Policy Podcast) for the intellectual inspiration. All authors would like to thank the reviewers for pushing us to bring the argument forward.

Data availability statement

The dataset from this study is available from the corresponding author on reasonable request.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

