Abstract

How is the cultural made computational? CLIP models are a recent artificial intelligence (AI) innovation which train on massive amounts of Internet data in order to align language and image, deploying this ‘grasp’ of cultural concepts to understand prompts, classify images and carry out tasks. To critically investigate this cultural codification, we explore MetaCLIP, a recent variation developed by Meta. We analyse the model’s metadata, a single file of 500,000 terms that aims to achieve a ‘balanced distribution’ or sufficiently broad understanding of concepts. We show how this model assembles histories, languages, ideologies and media artefacts into a kind of cultural knowledge. We argue this codification fuses the ancient technique of the list with a more recent technique of latent space. We conclude by framing these technologies as cultural machines that exert power in defining and operationalising a particular understanding of ‘culture’ invisibly and at scale.

Introduction

When humans are confronted with an image or hear a phrase, they draw on their cultural understanding to make sense of it. This understanding is immensely varied: the morass of people, places, events, ideologies and practices that constitute ‘culture’ is both wide-ranging and highly disparate. This makes culture notoriously fuzzy or nebulous, a concept Williams (1976) counted among the two or three most complicated in the English language. And yet codifying the cultural into an ‘understanding’ is needed if computational models are to adequately grasp human-made cultural artefacts and respond in appropriate ways.

This article thus asks how the cultural, seemingly limitless and indefinable, is rendered computational. The technology industry’s answer is CLIP, a recent artificial intelligence (AI) innovation that trains on massive amounts of Internet data in order to align language and image, deploying this ‘grasp’ of cultural concepts to understand prompts, classify images and carry out tasks (Radford et al., 2021). While machine vision has received some scholarly investigation (Dobson, 2023; Parikka, 2023), there is almost (excepting Amoore et al., 2024) no critical humanities work on this new and highly influential generation of zero-shot multimodal models.

These models are powerful but often inscrutable, black boxes rendered opaque through proprietary techniques, technical expertise and unknown training data. To unpack these systems, we focus on a recent high-profile model: MetaCLIP (Xu et al., 2024). Developed by Meta, Facebook’s parent company, MetaCLIP seeks to improve on the original CLIP model, particularly by making its development transparent and replicable. The key innovation is the model’s ‘metadata’ file, a single file of 500,000 terms that aims to achieve a ‘balanced distribution’ of data, supporting a known and sufficiently broad understanding of concepts (Xu et al., 2024). This file is publicly accessible, providing a rare access point into this process of cultural codification and operationalisation.

Our analysis focuses on process rather than bias: how cultural knowledge is constructed computationally. Bias, while certainly important, has become a dominant and de facto mode of cultural critique in relation to AI (Karpouzis, 2024; Peters, 2022; Rozado, 2023). Instead, we take inspiration from Benjamin (1935), who recognised that mechanisation was not just a technical but a cultural transformation. He carefully analysed these technologies and their implications, demonstrating how the new ability to copy and circulate images disconnected them from their original context and the very notion of ‘originality’. As he and others (Crary, 1990) have argued, each new wave of technical innovation needs to be accompanied by a societal adjustment that critically grasps how these technologies reshape everyday life.

Our key contribution is to demonstrate how knowledge is codified, formalised and operationalised. While content is certainly key to this process, we aim to identify a general procedure or overall approach to culture, a logic. We show how this model blends the list – a highly effective ancient knowledge technology – with latent space, a far newer and flexible technology. These two technologies augment each other, contributing to a powerful (but highly particular) kind of ‘cultural understanding’.

These models matter. As daily life becomes increasingly digitally mediated, the form of ‘culture’ registered and reproduced by technologies also grows in importance. Everyday cultural practices on platforms are shaped by the models and algorithms that underpin them (Bucher, 2018; Striphas, 2015). For instance, AI recommendation systems not only predict but influence people’s preferences and choices (Erdoğan, 2023; Gaw, 2022), shaping the distinct way in which they see, understand and know culture (Seaver, 2019, 2022). These technical processes construct a kind of cultural feedback loop, with users, communities and tastemakers adjusting their practices to maximise legibility and exposure (Gillespie, 2014; Munn, 2018). In this sense (as our conclusion elaborates), models like MetaCLIP function as cultural machines, defining and operationalising a certain understanding of ‘culture’ in real time and at scale. Such machines exert political power, elevating some people, practices, ideas and events while marginalising others.

Literature review: from technical systems to cultural machines

Models like CLIP are multifaceted objects: technical systems, composed of algorithms and shaped by training data, that codify knowledge and exert cultural force in the world. This section thus draws together disciplinary perspectives – infrastructure studies, critical AI and algorithm studies, and cultural studies – to frame this object and situate our intervention in relation to it.

As technical systems, AI models are often framed as black boxes, opaque or inaccessible objects that are intimidating to non-specialists. This opacity can be deliberate, trade secrets that shroud products from the public and make deconstruction difficult (Pasquale, 2015). Software studies (Fuller, 2008; Marino, 2009) have suggested source code readings and reverse engineering could advance our understanding of how technical systems operate. Similarly, infrastructure studies (Munn, 2023; Parks and Starosielski, 2017) and media archaeology (Kirschenbaum, 2008) have attempted to make the invisible visible, stressing these ubiquitous and banal systems actually shape our everyday life in powerful ways.

Responding to this opacity, researchers have proposed interventions to cultivate transparency. Model cards provide insights into a model’s training data and social, cultural and intersectional context (Mitchell et al., 2019). Explainable AI seeks to provide clarity to users about how these systems make decisions (Holzinger et al., 2022; Minh et al., 2022). Frameworks and toolkits to better understand AI technologies – particularly their democratic potential (Swist et al., 2023) and ethical usage (Adams and Groten, 2023) – have been advanced. While not our primary focus, our work mirrors such studies in using publicly accessible information to unpack the data and decision-making inside these often inscrutable objects.

A dominant strand of AI scholarship has concentrated on AI bias or algorithmic bias. Training data, code rules and statistical inferences can all create forms of prejudice in cultural, political or racial forms (Devillers et al., 2021; Fazil et al., 2023; Karpouzis, 2024; Leavy et al., 2020; Manasi et al., 2022; Peters, 2022; Rozado, 2023). While biases certainly matter, the de facto move of analysing and identifying bias can short-circuit the more extended project of understanding a model’s logic and its implications.

In this article, we focus on these larger questions: what does ‘culture’ look like from a machinic perspective, how does a model approach and internalise this knowledge and what are the implications for deploying these cultural machines in the wild? Rather than a top-down approach – measuring a model against some normative yardstick of culture – we take a bottom-up approach that aims to grasp how ‘culture’ is computationally understood and operationalised. In this sense, we are interested not in predefined notions of culture (which has been extensively theorised), but in how influential new technologies like AI models approach it and render it digital and performable.

Culture is notoriously nebulous, one ‘of the two or three most complicated words in the English language’ (Williams, 1976: 76) and sprawls across ‘every society and every mind’ (Williams, 2011: 55). Like Foucault (2005: 172), we are fascinated by how one might take a ‘whole domain’ of culture and render it ‘describable and orderable’. Or, following Goody (1977: 94), we ask how these models resolve this problem in a way that produces ‘increments of knowledge’ and the ‘organisation of experience’.

Certainly others have pursued similar inquiries, most notably in scholarship on algorithmic culture (Dourish, 2016; Hallinan and Striphas, 2016; Hristova et al., 2020; Hutchinson, 2017). As Striphas (2015: 395) notes, over the last few decades, ‘human beings have been delegating the work of culture – the sorting, classifying, hierarchising of people, places, objects, and ideas – increasingly to computational processes’. Seyfert and Roberge (2016: 3) stress that algorithms have ‘agency and performativity’; in sorting and classifying this material in particular ways they bring to life certain visions while leaving behind others. In short, algorithms carry out work in the world, and this work has downstream impacts.

Of course, algorithms are not entirely deterministic. As Andersen (2020) reminds us, we treat these technologies like other forms of communication, making them fit into our everyday lives. Algorithmic cultures may be accepted or overwritten, as different definitional frameworks or understandings of culture jockey with each other (Hallinan and Striphas, 2016). Indeed, in the last decade, we have seen how some algorithms become ‘culturally meaningful’ objects in their own right: ‘“data” to be debated or tracked, legible signifiers of shifting public taste or a culture gone mad, depending on the observer’ (Gillespie, 2016: 64).

We build on these critical insights while also striving to tease out the novel aspects of recent AI models like CLIP. Certainly at one level, AI models are inherently algorithmic, with algorithms like backpropagation a key part of machine learning. However, our work with MetaCLIP (discussed below) highlights at least two points of departure that are at once technical and epistemological. First, rather than imposing rules on cultural content, AI models begin from this messy material, aiming to extract patterns and discover unexpected correlations from them. In this sense, they reverse the flow stipulated by algorithmic culture, starting from repertoires of thoughts and structures of feeling (Williams, 1958) and using these to construct computational models.

Second, AI logics move beyond a strictly algorithmic model, conventionally understood as a set of rules or a repeatable recipe (Munn, 2018). In these models, as we later discuss, harder classificatory schemes are augmented by softer ‘contrastive’ schemes which organise and operationalise information in flexible and open-ended ways. These points suggest that while such AI models undoubtedly build on existing technologies, they cannot be conceptualised merely by relying on earlier work. In this sense, we see AI models (and our work here) as extrapolating from excellent scholarship on algorithmic cultures while attending to some novel dynamics.

Methodology: unmaking Meta’s cultural machine

CLIP represents the most recent and significant development in this drive to codify culture. Released in 2021 by OpenAI, CLIP stands for contrastive language-image pretraining. In the words of its developers, CLIP aims to ‘test the ability of models to generalise to arbitrary image classification tasks in a zero-shot manner’ (OpenAI, 2021). Rather than manually annotating images, which is expensive and time-consuming, or classifying them into a limited set of known categories (‘airplane’, ‘boat’, ‘person’), CLIP seeks to be a general-purpose model that can automatically grasp new images and dynamically associate them with similar images.

To develop this understanding, CLIP is trained on 400 million image-text pairs scraped from the Internet. In essence, it is trained on ‘culture’ – a hugely varied set of everyday imagery which captures sports, hobbies, outdoor scenes, tourist landmarks, film stills and a myriad of other objects, scenes and settings. Crucially, this foundational ‘knowledge’ allows the model to then grasp the contents of new images, data that were never encountered during training. The ‘cultural training’ embedded in the model allows each image to be broken down into components and linked to the textual description most similar to it.

However, what exactly is in this training data is unknown. Is it really representative, capturing the full gamut of people, events, objects and ideas that constitute ‘culture’? To answer this crucial question, one would need to develop a ‘map’ of culture with diverse and important landmarks, and then map these 400 million image-texts to these key points. This is effectively what MetaCLIP aims to do. In the words of the developers, it ‘takes a raw data pool … and yields a balanced subset over the metadata distribution’ (Xu et al., 2024). The goal is not only to better grasp the training data, but to ensure that it is spread across the cultural space, providing good-enough ‘coverage’ of any person, object or event that should be understood. Whatever the cultural element, the model should be able to locate it within its map or distribution; this ‘task-agnostic data’ is key for any foundation model (Xu et al., 2024: 1).
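The idea of a ‘balanced subset’ can be illustrated with a minimal sketch. It assumes, purely for illustration, that each text in the raw pool has already been matched to the metadata entries it mentions, and that over-represented entries are down-sampled towards a per-entry threshold. The function name `balance_pool`, the threshold `cap` and the toy pool are hypothetical and are not taken from Meta’s released code.

```python
import random
from collections import Counter

def balance_pool(matches, cap, seed=0):
    """Return indices of a subset in which no metadata entry dominates.

    matches: list where matches[i] holds the metadata entries found in
             text i (a hypothetical, pre-computed matching step).
    cap:     hypothetical per-entry threshold; entries matched more often
             than this are down-sampled.
    """
    rng = random.Random(seed)
    counts = Counter(entry for entries in matches for entry in entries)
    kept = []
    for i, entries in enumerate(matches):
        # A text survives if any of its entries passes a coin flip with
        # probability cap / count (always 1 for rare entries).
        # Texts that match no entry at all are dropped.
        if any(rng.random() < min(1.0, cap / counts[e]) for e in entries):
            kept.append(i)
    return kept

# Toy pool: one very common entry and one rare entry.
pool = [["cat"]] * 1000 + [["axolotl"]] * 5
subset = balance_pool(pool, cap=20)
kept_counts = Counter(pool[i][0] for i in subset)
```

In this toy run every ‘axolotl’ text is retained while the ‘cat’ texts are thinned out, which is the flattening effect the developers describe as balancing over the metadata distribution.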

To explore MetaCLIP, we analyse the metadata that both represents its innovation and gives it its name. This metadata consists of 500,000 terms, separated by commas, contained in a single file. To aid in understanding, we convert the file to a spreadsheet and arrange it alphabetically. Inspired by exploratory research, which focuses on discovery within new domains (Swedberg, 2020), we undertake a series of quick and light analyses of the file, from counting cultural artefacts to determining languages. The aim is not to exhaustively analyse each term (an impossible task given the volume of information), nor to identify bias or limits in the content, but rather to grasp how data are taken up and worked on in certain ways to form a particular kind of ‘cultural knowledge’.
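The conversion step described above can be sketched as follows. This is a minimal illustration that assumes a plain comma-separated layout in which entries containing commas are quoted; the helper name `load_terms` and the sample string are invented for the example and do not reproduce the actual file.

```python
import csv
import io

def load_terms(text):
    """Parse comma-separated terms and return them sorted alphabetically."""
    reader = csv.reader(io.StringIO(text), skipinitialspace=True)
    terms = [cell.strip() for row in reader for cell in row if cell.strip()]
    # casefold() gives a case-insensitive alphabetical order.
    return sorted(terms, key=str.casefold)

# Invented sample, including one quoted entry that itself contains a comma.
sample = 'History of pizza, democracy, "Paris, Texas (film)", Bhojpuri'
terms = load_terms(sample)
```

Each element of `terms` would then correspond to one spreadsheet row.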

Analysis: constructing cultural knowledge

In this section, we survey key elements of MetaCLIP’s metadata file, showing how content is brought together to form a particular kind of cultural understanding (Meta, 2024). This file functions as a kind of cultural map (Meyer, 2014), providing insight into what constitutes culture: how societies value different things, what their beliefs and preferences are and how they engage with each other (Anonymous, 2016).

In approaching this file, we employed a version of exploratory data analysis, a more ad hoc approach that aims to get a grasp of the content and scope of a data set (Leinhardt and Wasserman, 1979). Our process of scanning, reading and reflecting on the file was deliberately manual rather than automated, an approach which we felt better employed our expertise as media studies and humanities scholars. In particular, we focused on elements that re-occurred in the file with high frequency and could be clustered into overarching categories. From this approach, history, language, media and political ideologies emerged as distinct ‘ingredients’ or elements. In the following sections, we briefly discuss each of these elements and how they contribute to the ‘balanced’ and comprehensive cultural understanding the model aims for.

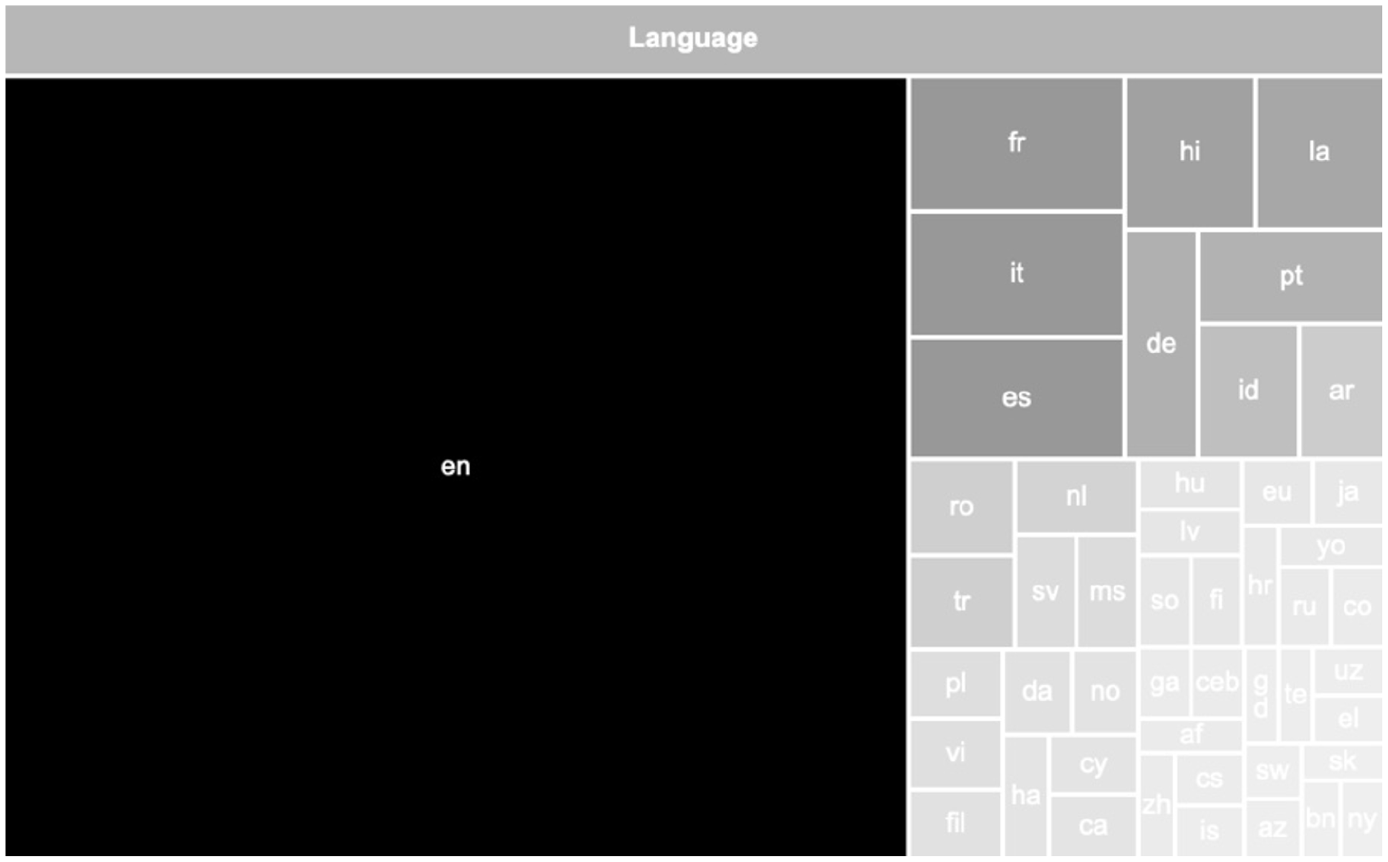

Language

To explore what languages are included in MetaCLIP, we carried out a light analysis using the DetectLanguage function on the first 100,000 entries. There are over 110 languages in the metadata file, from Hmong through to Javanese and Bhojpuri. In one sense, then, there is an incredible variety of languages feeding into this cultural knowledge. This resonates with research suggesting loan-words from other languages are one concrete way in which cultural learning takes place (Higa, 1979). Yet, this diversity is rather superficial, with most languages having fewer than 1000 entries and many only around a dozen. As Figure 1 demonstrates, English dominates all others, with nearly two-thirds of the total entries. The hegemony of English (Macedo et al., 2015) remains unchallenged.

Figure 1. Make-up of languages in the first 100,000 entries (only top 50 shown).
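A tally of this kind can be sketched in a few lines. The sketch below takes the language detector as a parameter, because the spreadsheet function used in the analysis is not available as a Python call; `toy_detect` is a deliberately crude, hypothetical stand-in included only so the example runs.

```python
from collections import Counter

def language_shares(terms, detect):
    """Tally detected languages and return (language, share) pairs.

    detect: any callable mapping a term to a language code. A stand-in
            is supplied below purely so the sketch is runnable.
    """
    counts = Counter(detect(term) for term in terms)
    total = sum(counts.values())
    # most_common() orders languages from most to least frequent.
    return [(lang, n / total) for lang, n in counts.most_common()]

def toy_detect(term):
    # Hypothetical stand-in: treats pure-ASCII terms as English.
    return "en" if term.isascii() else "other"

shares = language_shares(["history", "beer", "фильм", "pizza"], toy_detect)
```

Swapping `toy_detect` for a real detector would yield the kind of distribution summarised in the figure above, with one dominant language and a long tail of small shares.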

While this dominance certainly matters, the key point here is that language – more basically, semantic constructions of letters and symbols – becomes a critical component in codifying culture. Culture and language do not exist in isolation, but mutually constitute and reinforce each other (Witherspoon, 1980). Language is a key vehicle for cultural semiotics: cultural meaning is encoded in linguistic signs (Kramsch, 2014). Language provides people with a toolkit of symbols and words to interact and engage with culture. In this way, it structures thought and provides a cognitive frame to reflect on the world, enabling us to understand what others value and respect. At a fundamental level, MetaCLIP is predicated on this tight language–culture connection, on the assumption that culture can be understood as a vast array of people, places, events and ideologies, written out and ingested as information.

History

History is essential to grasping culture, providing context to how various practices, beliefs and value systems emerged in a society. History and culture are intertwined (Nunn, 2012). Our understanding of culture follows historical antecedents that logically explain patterns of behaviour and practices within a culture (Histen, 2024). Therefore, history shapes culture and is, in turn, shaped by it. History appears in MetaCLIP’s metadata in two key ways (Tables 1, 2). First, the file contains over 300 terms with the word ‘history’, documenting histories of nation-states (e.g. Australia, Afghanistan, and India), multinational conglomerates (e.g. Apple, Facebook, and Amazon), food and beverages (e.g. pizza, beer, and wine), and technologies (e.g. cryptocurrency, Python, and Microsoft Office). As such, this history tends towards the popular. Sports, cuisines and tech are well documented, whereas the more conventional history taught at school (empires, revolutions, religious practices) barely finds any mention. For instance, the terms ‘war’ and ‘history’ appear only once together in the entire file with an entry on the History of the Peloponnesian War.

Table 1. ‘History of’ entries (excerpt).

Table 2. Historical events (excerpt).

History also appears in the file in the form of historical events prefixed by year. These 4000 terms begin with the 1900 Galveston Hurricane and end in the present with current events like the 2024 Summer Olympics and future entries such as the 2026 FIFA World Cup. Sports matches and violent events (terrorism, coups, riots) tended to dominate this list. Both categories can be classed as a version of spectacle, a concept Debord (2021) links with the apparatus of media and the stream of images representing daily life. In this sense, this ‘history’ replicates the viral logic of the Internet, where football goals and violent mobs achieve virality (Wasik, 2009). In both instances – an emphasis on popular rather than ‘academic’ history and the dominance of sport and violence – we see how MetaCLIP internalises and perpetuates what societies value as history.

While the merits of this limited and spectacle-focused history could certainly be debated, the broader point is that history is a key ingredient in this AI model’s construction of cultural knowledge. Since history seeks to maintain a record of culture, the knowledge of culture is (partially) knowledge of key events in the past, including (as here) ‘pop events’ or ‘mundane’ events. History does more than supply such events: it compels AI to synthesise what counts as acceptable knowledge and values in a given society at a specific time and place, a synthesis presumed to help AI systems reduce inherent information bias and become sensible machines. History as a cultural category therefore helps make sense of how AI understands culture as a whole.

Politics

Politics and culture are intertwined, with culture playing a critical role in shaping politics and political choices (Pye and Verba, 2015). Political cultures encapsulate the set of shared ethical values, beliefs and normative knowledge a society holds about political processes (Berezin, 1997). Similarly, political ideologies provide a set of ideas about how politics should work in societies. All this suggests that any adequate grasp of culture requires a basic understanding of politics or ‘isms’.

As a basic mapping of political material, we counted which top-level terms from the ‘list of political ideologies’ (Wikipedia, 2024) appeared in the metadata file (Figure 2). Approximate tallies were democracy (156), colonialism (92), communism (90), environmentalism (89), socialism (75), liberalism (66), feminism (38), anarchism (38), fascism (36), conservatism (36), capitalism (28), nationalism (27), libertarianism (17), syndicalism (8), authoritarianism (7), populism (7), postcolonialism (6), transhumanism (4), and communitarianism (2). The appearance of the terms ‘recolonize’, ‘recolonization’ and ‘recolonized’ was surprising, given the stigma associated with colonisation (Césaire, 2000).

Figure 2. Word cloud of political ideologies, size indicates frequency (Meta, 2024).
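The counting procedure behind these tallies can be sketched as follows. The sketch assumes case-insensitive substring matching, so that an entry such as ‘Social democracy’ counts towards ‘democracy’; whether the original tallies used substring or exact matching is not stated, and the function name and sample entries are illustrative only.

```python
from collections import Counter

def count_ideologies(entries, ideologies):
    """Count metadata entries that mention each ideology term."""
    counts = Counter()
    for entry in entries:
        lowered = entry.lower()
        for term in ideologies:
            # Assumed rule: a term counts if it appears anywhere in the entry.
            if term in lowered:
                counts[term] += 1
    return counts

# Invented sample entries, not drawn from the metadata file.
entries = ["Democracy", "Social democracy", "History of communism", "pizza"]
counts = count_ideologies(entries, ["democracy", "communism", "populism"])
```

Under a stricter exact-match rule the same entries would give lower counts, which is one reason the tallies above are best read as approximate.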

Based on the model’s overall logic, these political ideologies become waypoints or hubs, like the other metadata entries, around which the ‘raw’ data are distributed. In a practical sense, this would allow the model to link the concept of ‘democracy’ with texts or images of protests or parliamentary proceedings, while ‘authoritarianism’ could be associated with military parades. In this way, political ideologies provide this AI model with a basic way to map particular politics to various peoples, places, and times, as well as certain aesthetics and symbols. Such interlinked data mirror scholarly insights that culture is inherently political, bound up with institutional power, political orders, and ideological assumptions (Wright, 1998). In this way, politics becomes one of many ingredients that contribute to the ‘balanced’ or comprehensive cultural knowledge aimed at by the AI model.

Media

Culture is deeply intertwined with expressions of art and entertainment. By displaying music, literature, visual art, films and TV series, societies tend to express their cultural experiences and aspirations. These cultural objects are the products of a cultural industry, a globally distributed sector, producing a vast array of commodities, where old dichotomies of high/low or elite/popular no longer apply (Rodríguez-Ferrándiz, 2014).

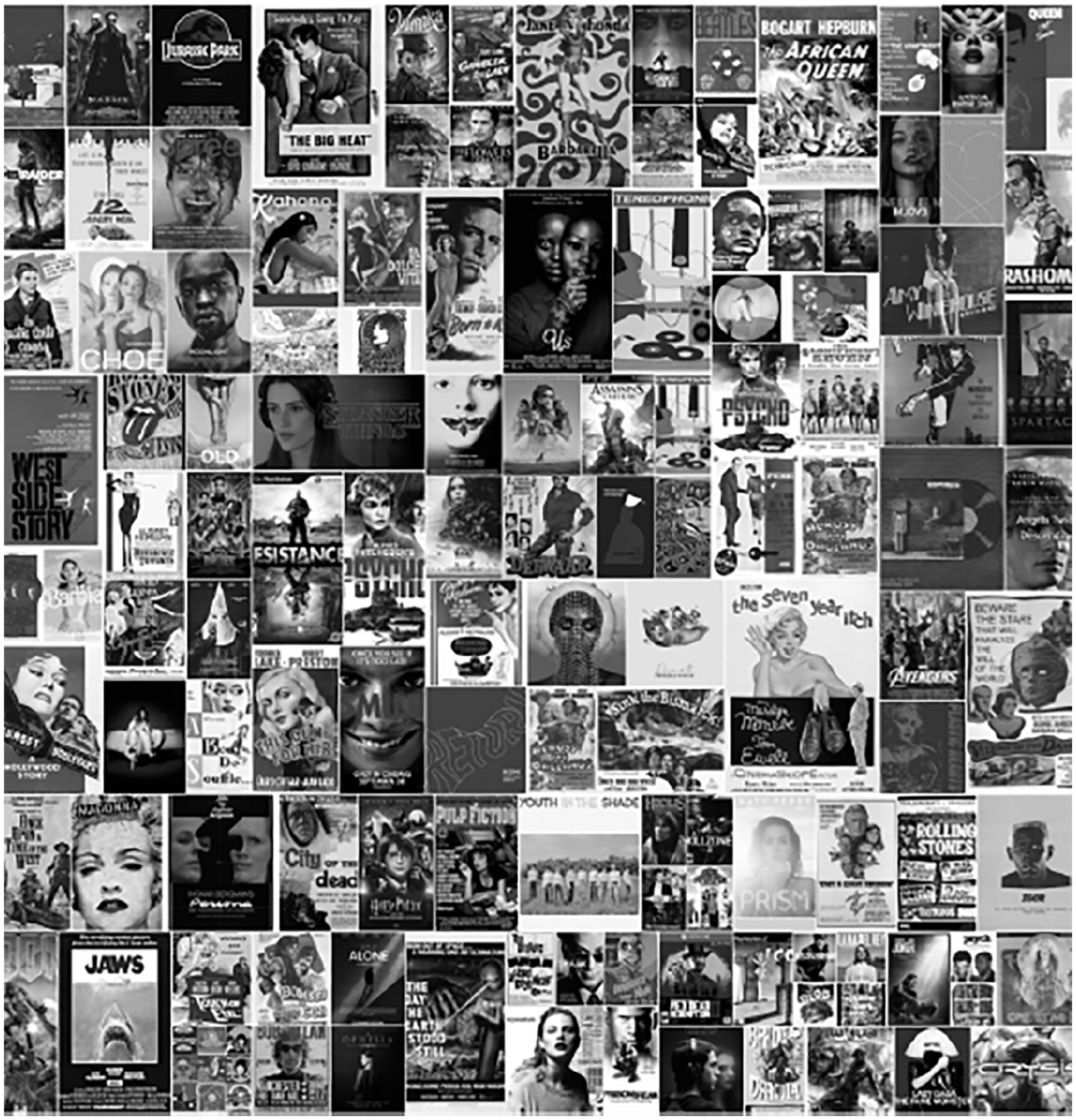

MetaCLIP’s metadata contains entries on thousands of specific cultural artefacts in arts and entertainment (e.g. Red Dead Redemption). Because many are Wiki entries, they list their media type in brackets, such as ‘(videogame)’ or ‘(TV series)’, allowing us to obtain a basic tally of each type. Within the metadata, there are 1585 film entries, 1320 TV series entries, 232 bands, 207 graphic novels, 203 video games and 161 albums. Figure 3 visualises a selection of these artefacts, gesturing to this extensive media landscape.

Figure 3. Indicative image cloud of media artefacts in the metadata.
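The bracket-based tally described above can be approximated with a regular expression. This is a minimal sketch: the pattern, the function name `tally_media_types` and the toy entries are assumptions made for illustration, and qualifiers that carry extra detail (a year, for instance) would be counted under their own label unless normalised first.

```python
import re
from collections import Counter

# Matches a trailing bracketed qualifier such as "(TV series)".
SUFFIX = re.compile(r"\(([^()]*)\)\s*$")

def tally_media_types(entries):
    """Count entries by the media type named in their trailing brackets."""
    counts = Counter()
    for entry in entries:
        match = SUFFIX.search(entry)
        if match:
            counts[match.group(1).strip().lower()] += 1
    return counts

# Toy entries for illustration; entries without brackets are ignored.
sample = ["Fargo (TV series)", "Fargo (film)", "Alien (film)", "beer"]
media_counts = tally_media_types(sample)
```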

While we note the Westernised slant to this media, our key point is that these entries suggest artefacts provide a window into a culture. Indeed, the lopsidedness of this material itself reflects a media terrain of American exports and global consumption practices. Objects embody a ‘syntax of cultural practices’, presenting ‘modes of meaning in the world, dispositions of thought and comportment’ in a highly concrete way (Lippit, 2024). MetaCLIP reflects this insight: a key vector to understanding culture is to identify, order and ‘decode’ many of its key cultural artefacts. At a basic level, speech, humour and texts can often only be understood if one knows the ‘cultural reference’ they refer to or riff off. By including a massive array of films, TV series, music albums, novels and video games, the AI model seeks to build cultural understanding via media.

Discussion: from the list to latent space

To codify knowledge, MetaCLIP uses two key techniques: the list and latent space.

The list

MetaCLIP’s metadata can firstly be understood as a giant list, an index of 500,000 entries ranging from places to events, ideas and artefacts. Within this list, there are references to other lists. ‘List of American Films’, for instance, documents American movies according to the year of release, functioning as a digital placeholder for cultural objects. Indeed, the file contains thousands of list entries, from TV series to Academy Awards and NFL Championships. By classifying and developing knowledge domains (Werbin, 2017), these Lists construct cultural knowledge.

The List is both ancient and contemporary. Humans have used lists to document and structure their lives since antiquity, and in this sense lists can be viewed as the ‘origin of culture’ (Eco, 2009). The List has been critical to managing population, territory and security and has served to administer, organise, manage, police and circulate people and things (Werbin, 2017; Young, 2017). Lists are highly flexible knowledge systems with distinct aims: ‘past, present or future-oriented; retroactive, administrative, or prescriptive’ (Young, 2017: 16). The List has risen to become a key contemporary technology, with email lists, wish lists, no-fly lists, and kill lists becoming a ubiquitous feature of everyday life (de Goede and Sullivan, 2016; Johns, 2016; Staeheli, 2012; Weber, 2016). Today’s lists are increasingly taking the shape of databases and digital files, which have enhanced their ability to structure the world (de Goede et al., 2016). Lists are increasingly ‘giving form to protocols and algorithms’ that reshape how we think about the world around us (Young, 2017: 14). MetaCLIP as List thus continues its role as a technology for codifying and formalising knowledge.

In the model metadata, the List functions as a ‘conceptual prison’ (Goody, 1977), a defined space that formalises what culture is. ‘List of 2020 albums’, for instance, creates a container that is then populated. This container has ‘a clear-cut beginning and a precise end, that is, a boundary, an edge, like a piece of cloth’ (Goody, 1977: 81). In this sense, lists take something open-ended and give it a form; ‘making a “cut” in the continuous flow of the world’ (de Goede et al., 2016: 6). ‘Lists teach us about systems of order that surround and enframe us’, notes Young (2017: 15), providing insights into how information is scaffolded to form systems of knowledge production and circulation. Because culture cannot be captured as a whole in data points, the model uses lists to classify and encode different artefacts into rigid categories. The List produces a meaningful grouping, where each object is placed within a fixed class that is tightly defined.

This boundary-making ability makes the List a ‘privileged tool’ for rendering culture intelligible (Staeheli, 2012). Once published and circulated, the list disciplines the items and ideas judged list-worthy and those which are not. In the Foucauldian sense, the list generates a ‘regime of truth’, a claim about things deemed to be a ‘fact’ and, therefore, irrefutable (Lorenzini, 2015). In this sense, the List no longer remains a tool for classifying and creating new semantic fields (Goody, 1977) but functions as an ‘epistemic practice and regulatory technique’ (de Goede et al., 2016: 4). In other words, the List is not merely technical but cultural and political. The List is the definitive record of what counts and does not count in culture. In essence, MetaCLIP’s use of the List as a technology develops a cultural map, allowing it to make sense of that complex phenomenon we call culture.

Latent space

In MetaCLIP, the List is joined with a far newer technology: Latent Space. Latent Space or embedding space simply denotes an abstract, multi-dimensional space that captures essential features of input data. CLIP’s key task and its greatest innovation, as discussed earlier, is to align ‘language’ and ‘image’ representations in this space. A photograph of a mountain landscape and the phrase ‘mountain landscape’ are each distilled into a set of numerical values (a vector) and will lie close to each other in space, indicating their similarity. The utility of latent space is ‘at the core of deep learning’, writes one computer scientist (Tiu, 2020), ‘learning the features of data and simplifying data representations for the purpose of finding patterns’. Despite this importance, there is almost no (excepting Amoore et al., 2024) scholarship in media or cultural studies on latent space.
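The notion of ‘lying close’ in latent space can be made concrete with a small sketch. It uses cosine similarity, a common way of comparing embeddings, on hand-written three-dimensional vectors; the vectors and captions are hypothetical, and real CLIP embeddings have hundreds of dimensions produced by trained encoders rather than typed in by hand.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

# Hypothetical embeddings, chosen by hand for illustration.
image_vec = [0.9, 0.1, 0.2]  # stands in for a photo of a mountain landscape
captions = {
    "mountain landscape": [0.8, 0.2, 0.1],
    "city street at night": [0.1, 0.9, 0.3],
}

# Choose the caption whose vector points in the most similar direction.
best = max(captions, key=lambda text: cosine(image_vec, captions[text]))
```

Here `best` resolves to ‘mountain landscape’ because its vector is the nearest in direction to the image vector; no rule or category label is consulted at any point.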

In one sense, latent space is about compression. A heavy or dense piece of data, such as a high-resolution image, can be distilled down to an ‘essential feature’ that occupies a particular point in space. Yet, even this technical efficacy has clear epistemic implications. In drastically downsizing original data to an embedded feature, aspects of the original are inevitably lost, but far more data can be processed and internalised. Latent space attempts not to exhaustively encode a handful of cultural artefacts or concepts, but rather to more crudely capture an immense variety of objects and events, places and people.

This strategy has clear implications, suggesting it is the relationships between items that define and indeed supersede their ‘essence’. Concepts like ‘70s pop’ or ‘the Cold War’ are not captured definitionally but spatially – their essence is not housed in a list of precepts but can be found hovering around a particular point in space. This is a very flexible way to internalise concepts and relationships. There is no hard boundary or border between concepts, but instead, more or less distance. An event like the ‘Cuban missile crisis’ may occupy a cluster with other ‘Cold War’-related entries, but also lies within some proximity to ‘Cuban jazz’. Indeed, as users of ChatGPT or Stable Diffusion know, it is possible to explicitly explore these grey zones or overlapping areas that are the spatial midpoint of two concepts.
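The ‘spatial midpoint’ of two concepts can be sketched with simple vector arithmetic. The two-dimensional coordinates below are invented for illustration; actual models work in far higher dimensions and may interpolate in more elaborate ways.

```python
def midpoint(a, b):
    """Point halfway between two embedding vectors."""
    return [(x + y) / 2 for x, y in zip(a, b)]

def distance(a, b):
    """Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

# Hypothetical 2-dimensional positions chosen for illustration only.
crisis = [1.0, 0.0]  # stands in for 'Cuban missile crisis'
jazz = [0.0, 1.0]    # stands in for 'Cuban jazz'
blend = midpoint(crisis, jazz)
```

The blended point sits at equal distance from both concepts, belonging fully to neither, which is the kind of grey zone the paragraph above describes.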

This spatial logic is about contrast rather than classification as computer science typically understands it; it is not about sorting data into predetermined and hard-edged categories (Krishnaiah et al., 2014). Entities do not have some innate essence, nor any kind of bright line test required to belong or not belong. Rather, entities derive their identity through contrast: by being more or less like other things. A ‘cat’ cluster is in closer proximity to ‘dog’ than ‘people’. This spatial logic is what allows techniques like negative prompting to function. In positively prompting ‘beautiful’ and negatively prompting ‘ugly or deformed’ – an ableist but all-too-common strategy – users are explicitly pushing towards some points and away from others.

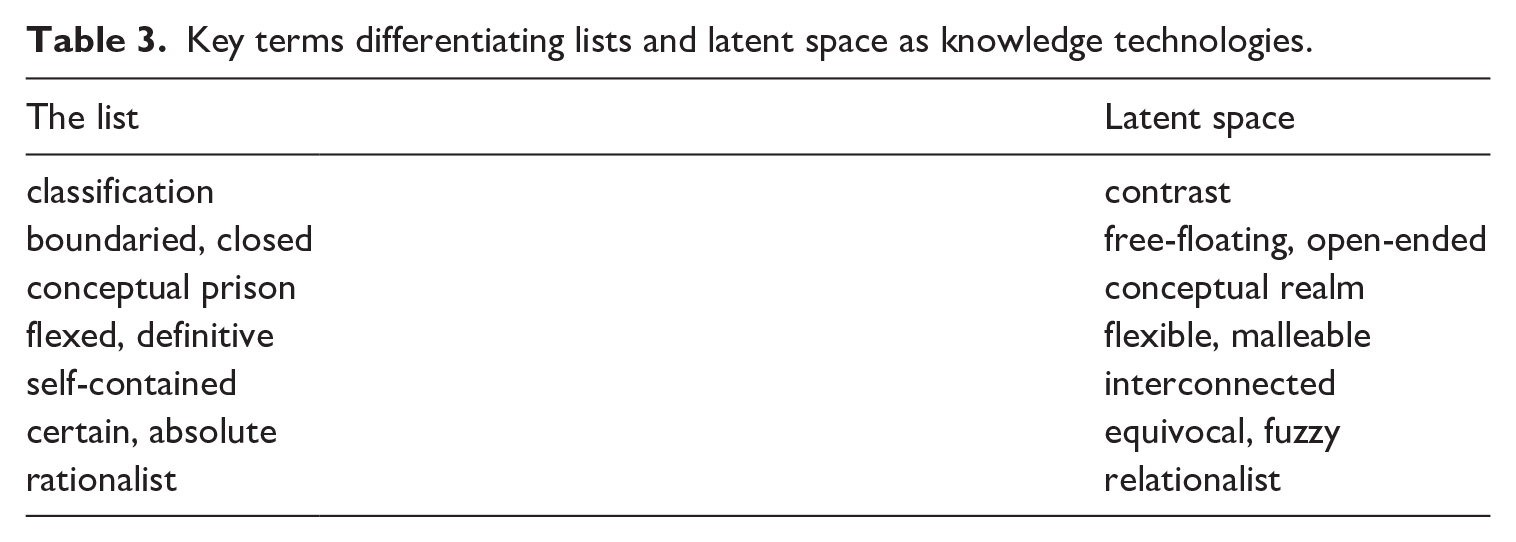

The List and Latent Space thus operate according to fundamentally different logics, a distinction we have gestured to with the keywords above (Table 3). For example, while the List employs a rationalist approach where things exist independently, Latent Space takes a more relational approach to knowing, a critique of Enlightenment-based empiricism (Kuhn, 1962) that asserts that individuals and societies are co-constitutive (Donati, 2011; Prandini, 2015). Mirroring these insights, the knowledge codified in Latent Space emerges from relations between things. Close proximity creates a fuzzy coherence. In this sense, the Latent Space emphasises constitution, interaction and connection between things. If the List asserts absolute certainty about cultural categories, Latent Space offers a more open-ended and equivocal way-of-knowing.

Key terms differentiating lists and latent space as knowledge technologies.

Conclusion: the political power of cultural machines

In this article, we have explored how MetaCLIP constructs an ‘understanding’ of culture. In some respects, this use of classifying, relating and ordering continues long-standing patterns of knowledge construction. However, in other ways – the millions of training data points, aligned to hundreds of thousands of metadata entries, the distinct combination of lists and latent space which impose order while allowing for flexibility and relationality – we see how this understanding is computational and (partially) novel. In amassing and training on this cultural material and deploying this particular understanding of culture in various applications at scale, we suggest MetaCLIP and models like it function as cultural machines.

Such machines can exert force precisely because culture is fluid, not fixed. As Benjamin (1940) stressed, culture is not defined by rules – an idealised and static entity from the past – but is our ever-changing understanding of humans’ place in the world. Culture is not ‘an essential set of relations between a people, place and way of life’ but a ‘conjunctural and pliable articulation’ based on our creative form-giving capabilities (Bennett, 2015: 555). Cultures are dynamic and interactive (Chemla and Keller, 2017): they are not simply discovered but are actively constructed.

If culture is up for grabs, computational systems increasingly shape it. Algorithmic regimes, big data and machine learning models internalise and instrumentalise massive amounts of user data or harvest it en masse from that sprawling cultural artefact known as the Internet. By codifying knowledge at scale, these cultural machines grasp (albeit in a limited way) information about people, places, things and events and some of the relationships between them. Increasingly embedded into many aspects of human existence, these computational cultures operate in a dynamic, agile and interactive way (Giannini and Bowen, 2022).

Driven by data, cultural machines make choices about what counts. ‘Reasoning about X requires representations of X’, Agre (2003: 287) observed; these representations ‘need to encode all the salient details’ of culture so that ‘computational processes can efficiently recover and manipulate them’. While this statement appears logical, the question of salience is deeply political. What matters, what is significant? MetaCLIP’s metadata provides one answer: these are the people, places, artefacts, events and ideologies considered key for constructing a ‘sufficient’ cultural understanding. Certainly this approach is deeply impoverished: affect, interpersonal relationships, oral tradition – all these aspects of culture are left out of this information-centric approach. Yet, salience consciously sheds such detail to produce something actionable. By amassing, ordering and aligning data, models like MetaCLIP transform cultural artefacts into a kind of cultural knowledge.

In defining culture, cultural machines exert political power, a ‘distribution of the sensible’ (Ranciere, 2013) that deeply shapes what is visible and what is invisible, what is spoken and what is silenced. These models instantiate a model of the world, a particular way-of-knowing that is inherently ethical and political (Amoore et al., 2024). Such infrastructures enact a kind of politics of parameters (Magee and Rossiter, 2015). Baked into neural networks and carried out automatically, they are decisions that never register as decisions. Images are tagged, associations made and posts categorised silently and invisibly. Indeed, we suggest the political power of cultural machines resides precisely in this combination of the banal and the procedural – backend operations that form the epistemic infrastructure (Munn, 2022) of everyday digital operations. Drawing on insights from across the humanities and social sciences, our work here presents a first step in understanding the logic and limitations of the cultural machines that increasingly mediate our everyday lives.

Footnotes

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.