Abstract

This article examines emerging online safety issues for Australian teenagers (12–17 years) in their use of social media, apps and online games, drawing on findings from a multi-phase, mixed-methods research project carried out from January 2022 to July 2023. Based on the research, we develop a new understanding of ‘social digital dilemmas’, situating our analysis within the rapidly changing social media environment that uses algorithmic technologies and recommendation systems to automatically feed and personalise content to users. These social digital dilemmas are negotiated within relational social networks taking into account digital skills and literacies, household rules and realms of responsibility for children’s online safety that cut across differences in gender, family structure, cultural background and children’s disability status. Based on our findings, we draw conclusions about the regulatory context for children’s online safety and suggest how to develop more effective online safety policies and approaches.

Introduction

The negotiations over children’s online safety by young people and their parents or carers can best be explained in terms of ‘social digital dilemmas’. The concept of ‘digital dilemma’ was first proposed by Livingstone and Blum-Ross (2020) to explain the way that parenting is enacted at the forefront of society’s ideas and values of childhood. Dilemmas over children’s digital media use manifest as tricky and troublesome points of tension and disagreement in everyday family life. Because of this, young people’s digital media use is oftentimes a focus of intense parental, institutional and social regulation, with parents applying a range of household rules and mediation strategies. For young people aged 12 to 17, their digital media patterns and practices and how they experience and manage online risks and harms draw on complex repertoires or ‘cultural toolkits’ of practices, literacies, skills and techniques that traverse the categories of childhood and adolescence (Fine, 2004). These are performed within ‘webs of relationships’ (Mason, 2004) not limited to parents and family units (of which there is diversity in structure and composition). They extend to institutions, friendships, interest groups and kinship networks as well as to materials and media – the ‘contextual fabric’ of people’s lives (Twamley et al., 2021). Importantly, for many young people this includes social media, apps and online games.

Young people’s engagement with digital media is also influenced by the design and operations of social media platforms, including the data collection and algorithmic processing that drives platform business models and profits. Data from users’ social interactions online are used to develop sophisticated targeted commercial advertising products and strategies, resulting in a particular kind of ‘social dilemma’ dealt with in the 2020 Netflix documentary drama ‘The Social Dilemma’ directed by Jeff Orlowski. In the ‘platform paradigm’ with its extensive ecosystem of companies, apps and advertisers (Burgess, 2021), social relations are no longer something that can be separated from data relations but rather form the foundation of what Couldry and Mejias (2019) have referred to as a new social order of ‘data colonialism’. Building on this understanding, we propose ‘social digital dilemmas’ to refer to two contemporary processes: the everyday negotiations over young people’s online safety within relational networks that intersect with the larger social stage on which ideas and practices of childhood and youth are performed, and the algorithmic and automated commercial digital media platform environment in which young people’s online activities and social life are a source of data commoditisation.

We develop and apply this idea with the support of research findings from the ‘Emerging Online Safety Issues: Co-designing social media education with young people’ project, which took place in Australia from January 2022 to July 2023 (Humphry et al., 2023). The aim of the project was to understand the key and emerging issues for Australian teenagers using social media, apps and online games, with the objective of co-designing evidence-based online safety social media education with young people. The project used a mixed-methods methodology involving focus groups (n = 7), co-design workshops (n = 3) and a nationally representative survey (n = 1228) with young people aged 12–17 years old and parents or carers (referred to as just parents below) of children in the same age group. In the following, we examine the key survey and focus group results synthesised as a complete set from the perspectives of the young people and parents, with the aim of generating new understandings of the complexities and ever-shifting challenges surrounding platform media and its regulation.

Traditions and approaches to children’s online safety

There is a rich tradition of research on children’s online safety that has evolved with the rapid changes in digital media and the online environment. Many of the early concerns about online safety focused on negative effects of media on children and issues of online abuse by adult strangers and peers such as sexual solicitation and cyberbullying (Livingstone et al., 2015; Sergi et al., 2017). As the Internet evolved and the range and type of online activities, sites and household digital media diversified, research developed to tackle a larger array of safety issues and experiences encountered by children, including inequality, mobile online safety, so-called Internet addiction and family conflict (Barbovschi et al., 2014; Carter et al., 2020; Kutrovátz, 2022; Livingstone and Blum-Ross, 2020).

Parental mediation

Parental management of children’s media use has been a focus of research to understand the different ways that parents ‘mediate’ the perceived negative effects of media on their children (Clark, 2011). Much of this scholarship has focused on younger children, starting with examinations of television use in the 1990s (see, for example, Austin, 1993; Van der Voort et al., 1992) but as the focus turned to the Internet, this expanded to encompass older age groups including teenagers (Livingstone and Helsper, 2008) and tweens (Shin et al., 2012) as well as mobile technologies, video games and other digital media in and beyond the home (Kalmus et al., 2022; Kristiansen and Jensen, 2023; Nagy et al., 2023). Livingstone and Helsper (2008) explained that parental mediation strategies arise within a particular context in which much of the responsibility for online safety lies within the private arena of household rules and practices. These are also negotiated in the context of societal discourses of children that, as Adorjan et al. (2022) observed, represent young people in a paradoxical relationship to digital technologies ‘as both agentic creators and technologically savvy digital citizens, and vulnerable to a plethora of risks entailed through accessing ICTs’ (p. 113). Commonly cited parental mediation strategies include an active role in children’s media use, which focuses on learning through talk; a restrictive role, whereby strict rules and limitations are placed on access and use; and co-viewing, which involves parents sharing the media experience and being present during use but with minimal intervention (Livingstone and Helsper, 2008).

A strength of the research on parental mediation of children’s online safety is the emphasis on family systems within which media is accessed and used, allowing for deeper analysis and contextualisation of digital media use and experiences of children’s online safety. Research on parental mediation of teenagers’ online safety has tried to account for the evolving capacities, interests, maturities and socialities of children by age, gender, birth order, ethnicity and family income, as well as by teenagers’ perceptions of parental mediation approaches (Adorjan et al., 2022; Cabello-Hutt et al., 2018; Griffiths et al., 2016; Livingstone and Helsper, 2008; Sanders et al., 2016). Within this scholarship, research has found that parental intervention recedes as children get older, attributed to teenagers’ need for more independence (Sanders et al., 2016) as well as less acceptance of imposed parental controls (Cabello-Hutt et al., 2018). There are varying accounts of the effectiveness of parental mediation strategies (Griffiths et al., 2016; Livingstone and Helsper, 2008; Lwin et al., 2008), and of whether the gender of the child plays a significant role in influencing mediation styles (Lau and Yuen, 2016; Lee, 2013). However, the gender of the parent has been found to make a difference, with mothers carrying out more supervision of their children’s online activities than fathers, reflecting gendered cultural norms (Duek and Moguillansky, 2020). Differing psycho-social developmental paradigms also shape parental mediation of digital media use, reinforcing a risk discourse that parents are expected to negotiate (Jeffery, 2021). There has been less attention, however, given to emerging online safety issues that arise from the integration of algorithmic tools in online sites and services used regularly by teenagers. Such changes not only introduce new kinds of online issues and experiences but also shape how young people negotiate these issues with their parents, and other important social figures in their lives, set within a rapidly changing social media environment.

Automated and algorithmic social media environment

The emergence of new apps and platforms is a regular occurrence in the fast-moving and global social media landscape. BeReal and TikTok are examples of the new apps that gained popularity among Australian teenagers from the time our research commenced in early 2022. The impact of algorithmic content curating practices on children’s online safety is related broadly to what Striphas (2015) observed as an era of algorithmic culture, characterised by calculated, automated and curatorial practices that have replaced moderating roles once held by humans such as critics, curators and editors. This systemic shift towards an automated and algorithmic culture not only affects what content users see and interact with but also how content is produced in these digital spaces (Napoli, 2014). It is here that users, audiences and online content creators interact with algorithmic and automated media, with young people, who are creating, sharing and experiencing their lives digitally, especially at the whim of this transformation.

The scholarly enquiry surrounding this moment of flux in the digital media environment has ranged from positive perspectives of algorithms as sensemaking technologies for media-saturated spaces (Ruckenstein, 2023; Wilson, 2017) to the impacts of algorithmic power on politics (Bucher, 2018), and bias within algorithmic design, especially against people of colour (Noble, 2018). Audiences are acted on and sorted by algorithms in new ways based on their data activities and traces, ‘now increasingly being selected, calculated, interpreted and anticipated by media’ (Mathieu and Jorge, 2020: 1). This shift simultaneously impacts how content is created as everyday users and Internet celebrities especially begin to game the algorithm for increased visibility (Cotter, 2018; Hutchinson, 2023). As online content creators recognise ‘the game’ associated with the process of cultural production, so too do audiences engage with that material.

Those same audience members are predominantly young social media users and online gamers who are very much a part of ‘the visibility game’, subject to the platform affordances of content exposure and to those who create that content. This same moment has prompted scholars to reposition user agency within the cycle of cultural production. Lingel (2021) made a compelling argument that while social media users are at the end of these algorithmic and automation mechanics, this is also a moment to reignite their agency to steer and direct how these media technologies understand who they are and the sort of engagement they desire in these spaces. Stepnik (2023) develops this observation through what she terms ‘active curation’ – the process of employing user agency with the tools provided to curate one’s algorithmic social media space. With this as a backdrop, the automation and algorithmic processes of social media are central to concerns related to the social digital dilemmas young people face in their everyday digital media lives.

Methodology

The project used a multi-phase mixed-methods research methodology involving focus groups (n = 7), co-design workshops (n = 3) and a national survey (n = 1228) with participants aged 12–17 years (n = 628) and parents (n = 600) of children in the same age group.

Focus groups

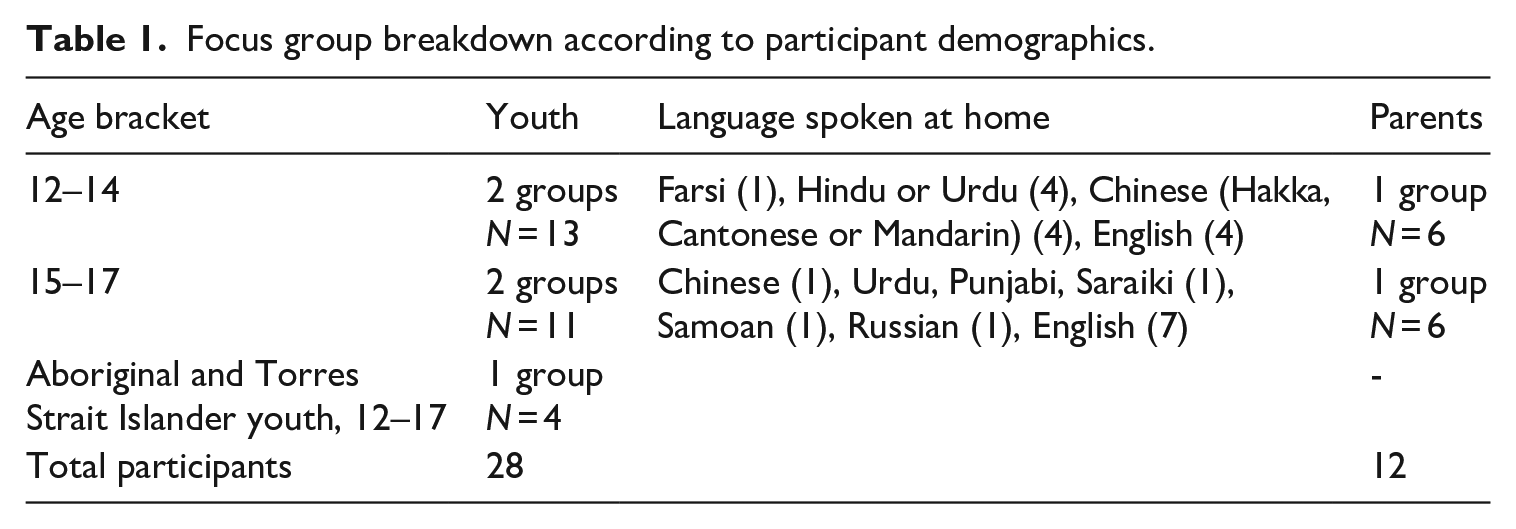

The initial qualitative research involved seven peer-facilitated focus groups with young people aged 12–17 years and parents of children in the same age group. To capture differences in experience and skill between younger and older teenagers as well as parental mediation strategies, we divided the focus groups by age. We held the youth focus groups in two age groups – 12–14 and 15–17 years – and parent focus groups were similarly organised according to the age of their children. Participating parents were recruited through a separate panel to that of the young people (see Table 1 – Focus group breakdown according to participant demographics).

Table 1. Focus group breakdown according to participant demographics.

In Australia, online safety laws and regulations have been changing alongside international reform in this space, including a review of Australia’s privacy laws with the release of the exposure draft of the Privacy Legislation Amendment (Enhancing Online Privacy and Other Measures) Bill 2021 (referred to as the ‘Online Privacy Bill’) for public consultation (Australian Government, 2021). The proposed changes included age verification to limit the collection of children’s personal information by social media companies and stricter parental consent for those under 16. In the focus groups, we asked about young people’s uses of social media, apps and online games, what was regarded as harmful online and responses to harm, including household rules and parents’ approaches to managing online safety. We also explored young people’s experiences with algorithmic feeds and recommenders and found out what they knew and thought about the proposed legal changes to children’s online privacy. We recruited participants with the aim of ensuring inclusion and representation of Culturally and Linguistically Diverse young people and Aboriginal and Torres Strait Islander young people in rural and metropolitan areas, a goal that we achieved in the focus groups and national survey. For both the focus groups and the survey, two questions were used to identify Culturally and Linguistically Diverse participants: whether they were born overseas (not in Australia), and whether they speak a language other than English at home. A positive answer to one or both questions warranted their inclusion in the category.
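The two-question rule for identifying Culturally and Linguistically Diverse participants can be expressed as a simple logical OR. The sketch below is illustrative only: the `Respondent` type, its field names and the `is_cald` function are hypothetical and are not taken from the project's survey instrument or analysis code.

```python
from dataclasses import dataclass


@dataclass
class Respondent:
    # Hypothetical field names standing in for the two screening questions.
    born_overseas: bool                  # born outside Australia
    speaks_other_language_at_home: bool  # language other than English at home


def is_cald(respondent: Respondent) -> bool:
    """Return True if either (or both) screening questions is answered 'yes'."""
    return respondent.born_overseas or respondent.speaks_other_language_at_home


# Example: born in Australia but speaks another language at home.
example = Respondent(born_overseas=False, speaks_other_language_at_home=True)
print(is_cald(example))
```

Under this rule, a participant is excluded from the category only when both answers are negative.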

The group activities, moderation guides, setup and timings were the same for all youth groups and varied slightly for the parent groups to account for their parental role and relationship to their children. For the focus group data, we transcribed all the audio files into text, which were then compiled, coded and analysed using the qualitative data analysis software NVivo. We applied a multi-stage thematic analysis that involved collaboratively developing coding categories and meta-themes, which were then synthesised and consolidated into a shared codebook. Participant quotations used in this analysis were extracted from NVivo using searches on meta-level codes and specific categories. The inductive and iterative process aligned with a grounded analysis approach that allowed for the codes and themes to emerge and for new themes to be incorporated through focusing, refining and sampling (Charmaz, 2006).

Co-design workshops

Following the focus groups, we delivered two survey co-design workshops, one with young people aged 12–17 years and another with parents of children aged 12–17 years, using the online focus group format via Zoom. In the workshops, our participants carried out collaborative activities to design the questions that formed the basis of the survey. During this process, we highlighted themes that had emerged from the focus groups and asked participants to engage in an Appreciative Inquiry process to recast themes into probing questions for the next phase of research. Appreciative Inquiry is a co-design method that emphasises the strengths, potentials and/or opportunities about a community or group (Cooperrider and Whitney, 2016). In the specific context of this study, Appreciative Inquiry was an effective underpinning framework for facilitating the involvement of participants in different components of the project, first in the survey design and later in the creative development and production of online safety stories, scripts and video prototypes (Humphry et al., 2023).

Surveys

Following the completion of the workshops, in which we co-designed the survey with our participants, we ran a nationally representative survey with a total of 1228 Australians across every state and territory, including 628 young people aged 12–17 years and 600 parents of 12- to 17-year-olds. Our sample targets for young people were a 50/50 split by gender and, by age, 30% aged 12–13 years, 30% aged 14–15 years and 40% aged 16–17 years. The sample was sourced through survey panels and a generic link sent out via our partners using the Alchemer software. The surveys were conducted online, consisted of 43 questions and took 15 minutes on average to complete; all respondents were compensated for their time. Quotas were set by gender of child/parent and state to ensure the sample demographics matched the Australian Bureau of Statistics demographics. However, quotas could not be placed on age of child in the youth survey due to the smaller sample of 12- to 13-year-olds, so this was conducted on a best-efforts basis and data were weighted to as close to representative as possible.
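To make the age targets concrete, the following sketch converts the stated target shares (30%, 30% and 40%) into approximate respondent counts for the youth sample of 628. The `quota_targets` helper and the variable names are hypothetical, introduced here for illustration; they do not describe how the survey panels actually allocated respondents, and, as noted above, the age targets were pursued on a best-efforts basis rather than enforced as quotas.

```python
def quota_targets(sample_size: int, shares_pct: dict[str, int]) -> dict[str, int]:
    """Convert percentage targets into rounded respondent counts (hypothetical helper)."""
    if sum(shares_pct.values()) != 100:
        raise ValueError("target shares must sum to 100%")
    return {band: round(sample_size * pct / 100) for band, pct in shares_pct.items()}


YOUTH_SAMPLE = 628  # young people aged 12-17 in the survey
AGE_SHARES_PCT = {"12-13": 30, "14-15": 30, "16-17": 40}  # stated targets

age_targets = quota_targets(YOUTH_SAMPLE, AGE_SHARES_PCT)
print(age_targets)  # {'12-13': 188, '14-15': 188, '16-17': 251}
```

Because each count is rounded independently, the three targets sum to 627 rather than 628; a real allocation would need to assign the remaining respondent to one of the bands.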

The co-designed survey first asked youth participants demographic questions, such as their gender, age, state, area where they live (urban/regional), cultural background, disability status and number of siblings. Subsequent questions measured social media use patterns, age at which sites were first used, frequency and diversity of online activities and the motivations of use, perceptions of online risks and harms, as well as the types and frequency of negative experiences online and strategies used by young people to mitigate harms, the role of parental mediation and household rules pertaining to social media use and understandings of online privacy and attitudes to the proposed new privacy laws. The survey data were analysed using descriptive and bivariate statistics, as well as measures of statistical significance in group comparisons, presented in the next section.

The project was carried out in compliance with the University’s Working with Children and Vulnerable Adults Policy. All investigators had valid Working with Children Checks for the duration of the project and our research processes complied with the Human Research Ethics Committee-approved protocols (protocol id: 2022/167).

Findings

Online practices and patterns

Australian teenagers between the ages of 12 and 17 years are active across a range of digital media including content platforms (e.g. YouTube, Instagram, Facebook), games (e.g. Roblox, Fortnite, Minecraft), messaging apps (e.g. Snapchat, WhatsApp, Discord) and video hosting and streaming apps such as TikTok and Twitch. The strong attraction of young people to social media platforms and mobile apps is driven by activities such as connection with peer groups, the ability to learn new skills, to build their own sense of identity and the need for understanding the world around them.

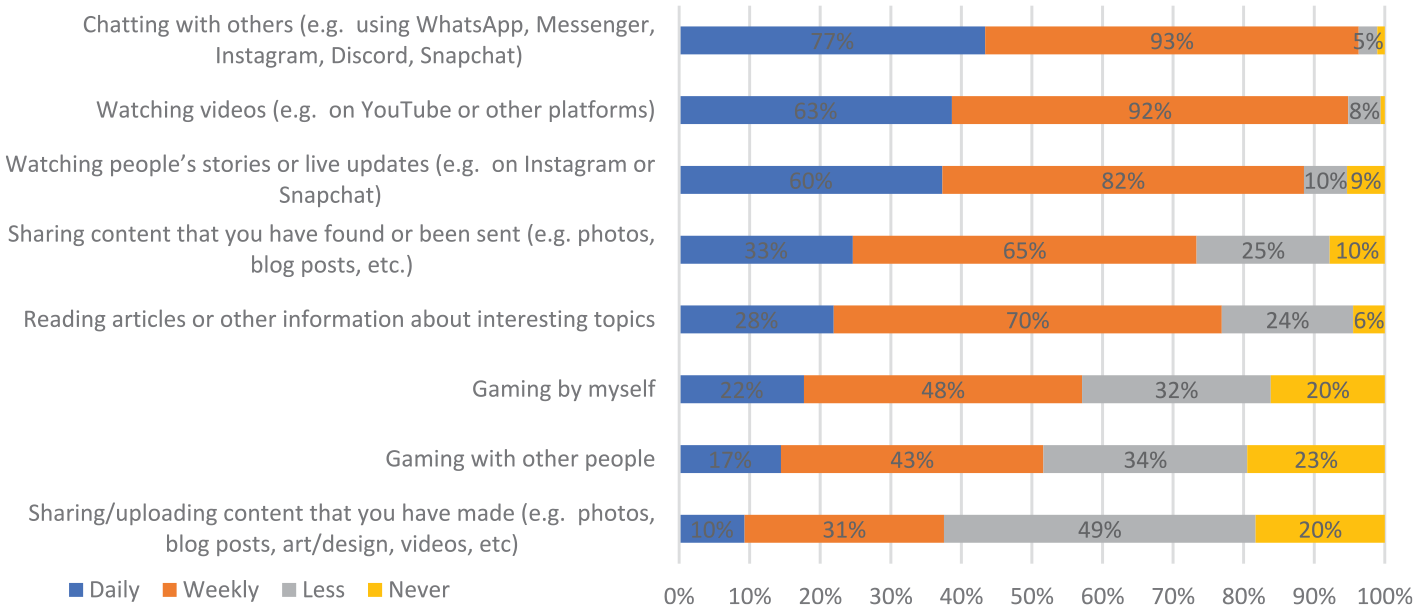

The survey found that YouTube was the most frequently used social media platform (used by 75% daily or weekly) followed by Instagram, Snapchat and TikTok. The top online games used were Minecraft (58%), Roblox (47%) and Fortnite (36%). Common daily activities (see Figure 1) included chatting (77%), watching videos (63%) and watching people’s stories or live updates (60%). A large majority of young people (70%) read articles and other information of interest at least weekly, which included sharing the content they found. Most played games weekly, whether alone (70%) or with others (60%), while fewer than a quarter did this every day. Finally, 80% of young people said they had uploaded and shared content they made, whether in the form of photos, blog posts, art or videos, with over half of them doing so on a weekly basis and 10% sharing content daily. These digital media platforms and uses are integrated into daily routines and activities of socialising, information seeking, schoolwork, gaming and other recreational activities.

Figure 1. Online activities by young people.

Australian teenagers have an extensive repertoire of practices and techniques for managing different social contexts and audiences. This young person in one of the focus groups of older teens explained how they split friendship groups using sophisticated segmentation strategies within and across platforms:

Young people in the focus groups noted the influence of algorithms on the content they consume. Some mentioned ways they shape algorithmic recommendations to exert more active curation over what they see in their feed, while others linked algorithms to a more passive reception of content through scrolling:

The survey found statistically significant gender differences in the use of Instagram (82%* young women vs 72% young men),¹ with teenage girls especially using Instagram for chatting and sharing photos and videos, creatively utilising the group-based communication features for socialising:

Teenage boys in general were more likely to play games and chat through Discord and in-game chat services, affirming the important role of interest-based groups for mediating friendships (Itō, 2013). These gender differences observed in the focus groups were substantiated in the survey, in which girls were found to prefer using platforms with messaging and visual affordances, such as Instagram (82%**), Snapchat (79%**), TikTok (78%**), Facebook (59%*) and BeReal (48%**), while boys constituted the primary user base for Discord (72%**), Reddit (54%**), Fortnite (51%**) and Twitch (47%**). Young people who identify as nonbinary predominantly used Discord (58%** vs national average of 41%) and Reddit (26%* vs national average of 14%). Weekly usage patterns were largely consistent with those mentioned above, further revealing a slight, yet significant preference for Fortnite (29%* vs 23%) and Minecraft (25%** vs 17%) among boys compared to girls.

There were some differences found in the online platforms used and practices of Culturally and Linguistically Diverse young people.² Based on the survey results, these young people were more likely to join social media to socialise (74%* compared to national average of 68%) and learn about things that are going on in the world (42%* compared to national average of 35%). From the focus groups, we found that many adopted a digital mentor role in the family, with several sharing stories of teaching their parents or younger siblings how to use features of an app or service, including how to stay safe online. This group of young people spoke of staying in touch with their transnational family networks as a key driver for signing up to social media and messaging apps. Our findings indicated a wide range and variety of social media use patterns among Aboriginal and Torres Strait Islander young people. The survey found that this group of young people were active content creators: one in five created and shared their own content on social media, compared to a national average of one in ten of those surveyed. However, the group reported significantly lower daily use of messaging apps (35%** vs 77%) and following their friends’ and family’s live updates (26%** vs 62%).

Young people who reported having a disability were found to be engaging with a wide range of platforms and online services and were sharing online content found or received more frequently than their peers (38% shared daily compared to 33% in the general population). We also found that parents of children with a disability were more risk averse about their children’s digital use and took more steps to keep their child safe online such as giving advice or restricting use. This was differentiated by the gender of the parents, with mothers significantly more likely to have suggested safety strategies than fathers. This indicates that the online practices of young people with a disability were negotiated according to different risk assessments and parental mediation styles with parents, particularly mothers, taking greater responsibility to support their children’s online safety.

Overall, young people’s online practices and patterns surfaced in the survey and focus groups related to the broad use of social media, messaging apps and online games in their lives, with algorithmic recommendations playing an influential role in content curation and consumption. Differences in use of platforms by gender were apparent, and variations in digital media literacy among family members led to some groups of young people becoming the digital experts in their household. In general, there was a divergence between parents’ assessment of their children’s skills and safety and those of their children, and a higher assessment of risk by parents of young people living with a disability.

Online harms and safety responses

Young people are in general resilient in their online media use and have built capacities to respond to the harms they face in their digital experiences, while their parents are more concerned about their children’s experiences online. In the survey we found that 55% of young people feel safe, while only 33% of their parents consider their children safe online. Young people felt most unsafe on Twitter (now X), Facebook and TikTok, while Minecraft and YouTube were seen as the safest platforms. Parents were broadly less likely than their children to regard platforms as safe places. Young people were, nevertheless, exposed to a range of harms in their daily lives, presenting them and their carers with significant social digital dilemmas that required a spectrum of network and relational negotiations.

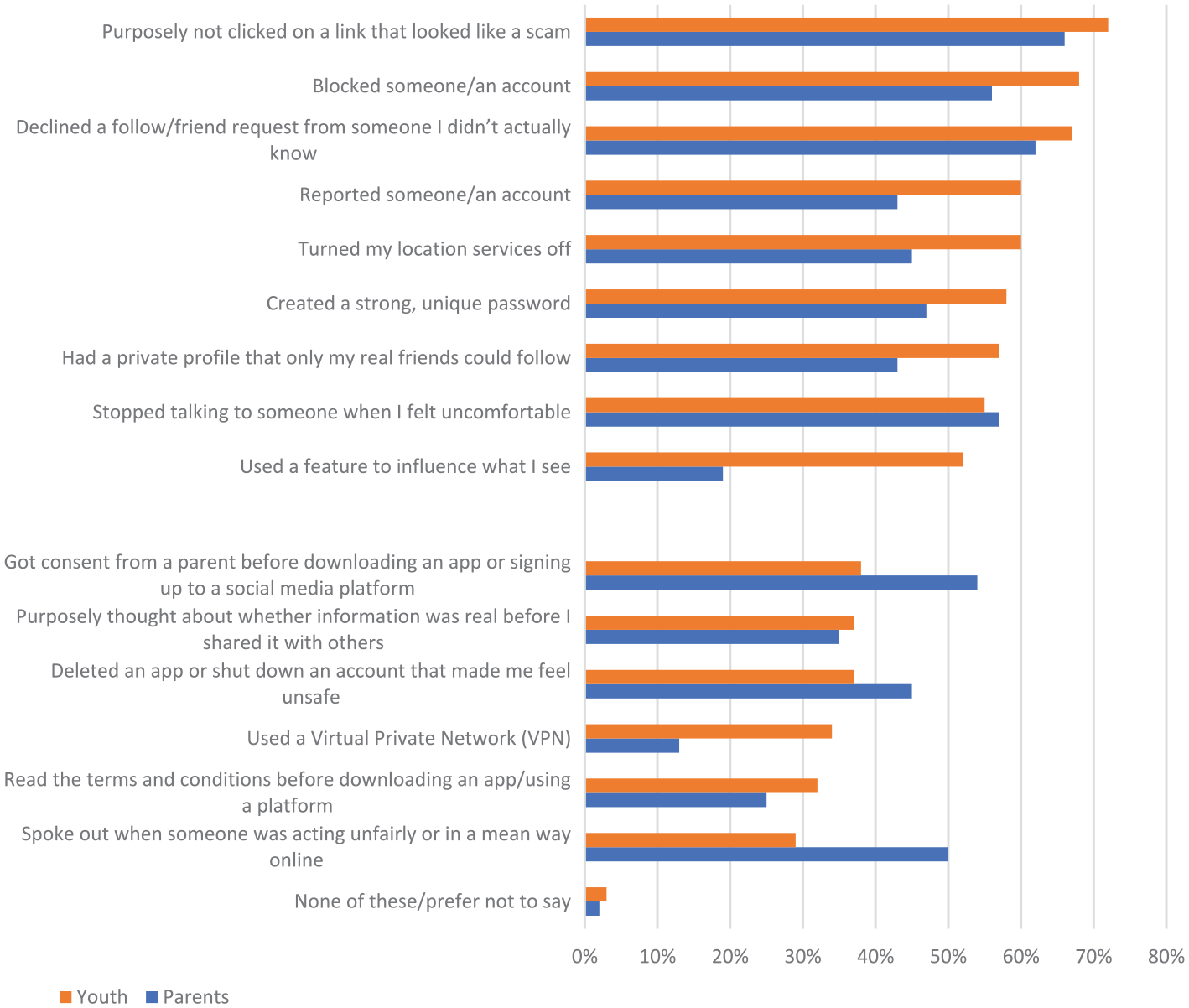

Young people often do not tell their parents about the negative experiences they have online and sometimes find it difficult to distinguish information from misinformation. Notably, parents underestimated the negative experiences that young people identified: for example, 54% of young people reported feeling that they are wasting their time scrolling compared to 26% of parents reporting this of their children, and 51% of young people said that they see unwanted advertisements compared to 35% of parents. Young people reported various skills they have to protect themselves from harms including avoiding scams, blocking accounts, not sharing their location settings, keeping their accounts secure and dealing with unwanted content and individuals in their networks (see Figure 2).

Figure 2. Steps taken to stay safe – youth practices/parent advice given.

Platform recommenders were another technology that young people frequently spoke about, noting how the content they are exposed to through them was often incorrect or was not considered relevant because the age-restriction measures in place fail to align with their own level of maturity. Young people have sophisticated ways of recognising the limitations of recommendation systems and engaging their own agency to seek the content they wish to see:

They are skilled at avoiding age limit features to be able to access the content they want to see:

Young people’s resilience and the range of self-regulation tactics they employed also point to the coping mechanisms that are required to create safe and productive environments. Peer-to-peer knowledge sharing is one way that young people collectively build resilience in dealing with risks to keep themselves safe:

Further to this, many young people were acutely aware they spend a large amount of time on devices and have designed self-regulation strategies to manage this scenario:

Some of the areas where young people were doing less to protect themselves and others online were speaking out when they witnessed someone else behaving meanly, detecting and not sharing misinformation, and reading the terms and conditions before signing up to a new app or platform. Beyond these kinds of safety issues, young people also felt at times a tremendous need to be part of the social media ecosystem to ensure they are not missing out with their friends:

The ways in which young people experienced their digital media spaces presented them with opportunities to develop a range of safety and helpful coping mechanisms that they often shared among peers, forming part of their broader repertoire of digital skills and literacies. While this is a positive approach, a key challenge is that safety features on platforms to limit online harms are for the most part insufficient or missing for these emerging issues, such as algorithmically recommended violent or abhorrent unsolicited content, the circulation of false information, lack of ways to quickly and effectively remove content and exploitative uses of personal data. In the process, the responsibility falls to young people and their parents to develop other online safety strategies to combat potential harms such as household rules and practices.

Family and household rules and negotiations

Our research found that parents in general felt overwhelmed with the demands of having to monitor and keep their children safe online and ill-equipped to educate and develop their children’s digital safety skills. For many, daily struggles over their teenage children’s digital media use were marked by disagreements and tension, creating a heightened atmosphere in the home. Parents in the focus groups used phrases such as ‘ongoing battle’, ‘learning curve’ and ‘give them an inch and they take a mile’. Nevertheless, we found a spectrum of responses with agreed household rules and negotiation techniques that parents used to assist them in managing the online time and experiences of their children. These reflect a range of parental mediation styles that mostly aligned with the model of active, restrictive and co-viewing roles in children’s media use (Livingstone and Helsper, 2008).

These household rules include an all-or-nothing approach to young people’s phones (confiscation or removal for a period), implementation of parental controls and screen time apps for managing time limits, increased levels of involvement in young people’s digital worlds and leveraging lived experiences into teachable moments. We also found that many Aboriginal and Torres Strait Islander young people reported having strict rules in place to govern their social media use (31% compared to 13% nationwide), while Culturally and Linguistically Diverse young people were more likely to say they have no rules in place compared to the national average (51% compared to 44% nationwide).

We found that parental mediation strategies were highly influenced by sociocultural factors and family circumstances – sometimes exposing stark power asymmetries. One single mother with three boys explained that her involvement in the regulation of her children’s digital use and safety was curtailed by her former partner:

In another example discussed earlier of a mother whose daughter has special needs, rules around digital use and online safety were conducted according to a different risk assessment and more proactive supervision to keep her safe:

Similarly, the stricter rules found to be in place in Aboriginal and Torres Strait Islander family households confirmed findings of other research that has shown Indigenous parents and caregivers in Australia are both more aware of the online harms their children are exposed to and more engaged in parental mediation practices such as monitoring, blocking and explicit instruction (eSafety Commissioner, 2023). As Carlson and Frazer (2018, 2020, 2021) explained, Indigenous parents’ different parental mediation styles and safety assessments need to be considered within the context of settler colonialism and the importance of cultural protocols and connections with community and kin. Overall, all parents acknowledged the benefits of social media and online games but this was tempered by a sense of resignation towards the contemporary ‘digital reality’ that Australian young people are part of and parents cannot escape or resist. This narrative, which oftentimes ran alongside one of risk, was apparent in many parent responses:

At the same time, there were stories shared of the creative ways that their children’s digital use augmented family life such as in this parent’s recollection of their holiday:

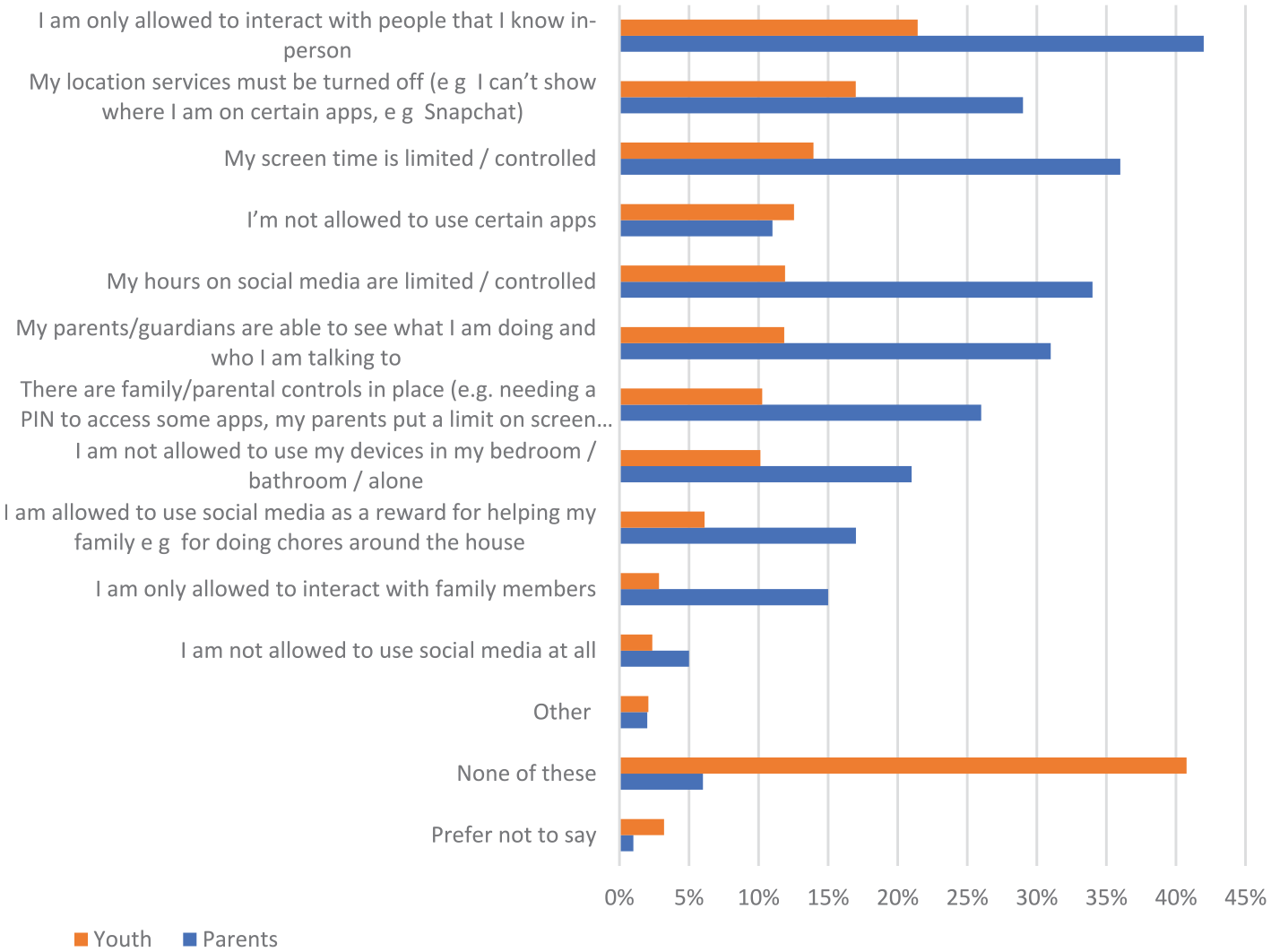

Young people held a variety of attitudes about the need for household rules to protect their online safety. Many young people recognised that online safety was a shared responsibility even though they felt strongly that they should have the main charge of this, as reflected in their active engagement in self-regulation tactics to combat the negative aspects of social media. However, a likely source of tension was the difference in the perception of household rules in place that governed young people’s online use and safety (Figure 3). Parents were much more likely than young people to say there are rules governing online uses in place (with an average difference of 14.9 percentage points), and almost half of young people (44%) and over a third of parents (34%) admitted that young people do what they want online and they have little control. The age of the young person was a key factor in the enforcement of rules relating to online safety, and these were often relaxed as children matured: 72% of households with children aged 12–13 years had rules in place, compared to 62% of those aged 14–15 years and 53% of those older than 16 years. This observation suggests that broad regulation of adolescence by a fixed age fails to acknowledge the variety of maturity and parental mediation approaches across this group of users.

Figure 3. Difference in the proportion of parents and youth reporting rules governing online uses.

Both young people and their carers strongly advocated for more transparent and accountable social media platforms and for governments to have a greater role in providing more fit-for-purpose data privacy laws and online safety education for young people. However, when it came to support for age verification there were mixed results: 72% of young people and 86% of parents were in favour of more effective age limits, while 86% of young people and 83% of parents said young people would get around them. There was an essential mistrust that companies, whose business models depend on algorithmically driven data commoditisation, could be relied on to serve the interests of young people or that there were sufficient protections in place to ensure their legal compliance. The idea of an increased role of government and stronger parental consent was qualified by anxieties about whether this would result in state overreach and more work for parents as well as less privacy, independence and social connection for young people.

Discussion

In this article, we shared research that examined the emerging online safety issues for Australian teenagers in the context of automated and algorithmic platform media, from the perspectives of both young people and parents. The aim was to highlight the key social digital dilemmas that arise in the online practices and safety issues of young people and the parental negotiations of their children’s online access and use. We also situated young people’s online safety issues within the context of new and proposed laws such as the exposure draft of the Online Privacy Bill (2021). These social digital dilemmas refer to two interconnected processes: first, the struggles over young people’s digital media use and safety within their relational networks, with parents expected to play the principal role in supporting and managing their children’s safety, and second, the dramatic rise of powerful commercial digital media platforms that exploit and appropriate young people’s social lives for data, without sufficient regulatory controls in place to mitigate and offset the harms that they produce.

We found that these online environments establish dynamics of visibility, content sharing and creation, and algorithmic curation that have new implications for young people’s safety and the skills needed to maintain and maximise the beneficial uses of social media. Young people have developed digital literacies to navigate these social digital dilemmas that include a wide range of new skills and knowledges, such as how to influence the algorithmically controlled content recommended to them in their feeds. Nevertheless, there is a lack of transparency around how platforms prioritise content and the relationship of personalisation of users’ feeds to young people’s data privacy. Young people engineer their algorithmic literacies through feel and by trial and error, which Ruckenstein (2023) refers to as ‘friction’ to explain the tacit and affective reactions by users to the operation of algorithms in their everyday lives as more than a passive resignation to their powers. These frictions point to a key social digital dilemma highlighted by the research findings: that despite the active engagement of young people in their own online safety and their evolving algorithmic literacies, there is a limit to the agency that they can exercise over the content they see and interact with, and how their personal information is used. There is a constellation of agents involved in setting this limit – parents, peers, schools, governments and, especially, platform companies, who restrict information about how users’ data are handled and curtail user access and control over their proprietary algorithms.

Household rules are one of the means through which parents manage their children’s online safety, and we found a variety of parental mediation styles that aligned with those previously identified. These mediation styles were notably differentiated by family circumstances and by gender, with mothers taking a greater responsibility overall for their children’s online safety. Common parental concerns about their children’s digital use and online safety were oftentimes expressed in terms of a loss of control or nostalgia for an unmediated childhood past, pointing to the shifting boundaries of family life and of ‘doing family’ (Morgan, 1996) that accompany young people’s increasing and evolving digital media engagement. As children get older and their social lives become more intertwined with their digital use, digital activities become less visible to parents and less well comprehended. This is also an outcome of the highly personalised nature of online use, which is further enhanced by algorithmic operations and mobile media access. As this happens, more restrictive approaches become not only less applicable but also less effective, driving a shift by parents to a more active and negotiation-oriented approach.

There was a strong desire among young people and parents to distribute the responsibility for children’s online safety more effectively, with an onus on platform companies and governments to do more. Nevertheless, young people and parents expressed a high degree of ambivalence about the ability of technology companies and governments to do this in a way that protects young people’s data privacy and their agency without creating extra work for parents, particularly if they are required to provide consent and verify age on a regular basis. Simultaneously, parents want to exert control over their own household rules and regulations yet feel generally under-equipped and under-skilled to respond confidently to the complex challenges of their young people’s safety needs and issues. This is exacerbated by the different digital worlds that young people inhabit and by policies and solutions that do not adequately account for family diversity and sociocultural differences.

Globally, there is a push for regulatory reform as a response to the lack of action by technology companies to improve the experience and safety of young people on their social media platforms. These legal changes introduce a range of ways to regulate technology companies, including expanding government powers for takedowns, garnering information and applying penalties. In Australia, these changes include the Online Safety Act 2021, which enhanced the powers of the eSafety Commissioner for dealing with issues such as cyberbullying, image-based abuse and illegal or restricted online content. They also include a review of Australia’s data privacy laws and a government-supported trial of age assurance systems for limiting access to online pornography and social media (Lowrey, 2024). In November 2024, the Australian parliament passed the Online Safety Amendment (Social Media Minimum Age) Bill, which legislates an obligation on social media companies to take reasonable steps to prevent children under the age of 16 from having accounts on ‘age-restricted social media’. The new term broadly covers any service that enables social interaction and the sharing of content between two or more users, with the Minister for Communications granted special provisions to specify what is included in this definition (Australian Government, 2024). However, as Nash (2021) notes, ‘age-gating is rarely a wholly beneficial policy solution [and can] potentially infringe on privacy rights’ (p. 277). Moreover, the technologies and procedures used to check and verify a user’s age vary considerably, from self-declaration by the user and parents vouching for their children’s age through to estimating age with AI pattern recognition tools (Margetts et al., 2021).
Our research underscored these points and demonstrated that while there is general support for more effective limits by age, there are significant perceived drawbacks: young people hold concerns about an erosion of privacy, autonomy and connection in their digital lives, and parents worry about the handling of their children’s personal information and the increased burden of managing their children’s digital media use and safety.

This points to a society-wide social digital dilemma that accompanies the sharing of responsibility for children’s online safety across families, platforms, institutions, and governments. Laws and regulations as well as all types of digital media platforms form part of the ‘contextual fabric’ (Twamley et al., 2021) of young people’s lives and shape parental mediation of their children’s online safety. The focus on preventing harm through blocking access by age runs the risk of negating variations in children’s maturity and capacity as well as cutting off young people from the benefits they obtain from social media participation. It may exacerbate rather than solve the daily social digital dilemmas that arise by adding to the complexities of family digital life without equipping young people or parents with the resources and skills they need to effectively negotiate these. Furthermore, it ignores the fundamental problems of data commoditisation inherent to platform media, which affect every user but especially young people. As governments around the world assert legal and regulatory powers over commercial digital platforms, a process underway but highly volatile and fluctuating, the challenge is to maintain focus on the more complex task of making online environments safer and less exploitative rather than fall back on simplistic and ultimately harmful discourses about children. These shifts involve realignments of governance practices and power relations that are at the very core of social forms of organisation and ideas of childhood, involving institutional as well as cultural processes of change (Newman, 2005).

Conclusion

In conclusion, we suggest that social digital dilemmas arise in the relational negotiation of key and emerging issues in young people’s online safety. Parents have developed a variety of strategies or styles of parental mediation to support and manage children’s digital lives. Young people are themselves confident in managing their own online safety but still encounter negative experiences and have difficulties with the lack of transparency and accountability of platforms that use heavily automated and algorithmic systems for curating content and controlling visibility. However, there is a disconnect between young people and their parents in relation to perceptions of family and household rules, sense of safety, confidence levels and adherence to rules, which is a source of significant family tension. These social digital dilemmas are differentiated by parental mediation styles and a range of sociocultural factors, including gender of child and parent, family circumstances, cultural identity and disability status of children, and are further complicated by the rapidly changing legal and regulatory context. When new online safety laws and measures are introduced, these create their own set of demands and trade-offs, requiring significant cultural and familial negotiation. The implications of the findings for policy suggest the need for a multi-layered approach that is sensitive to the complex interplay of responsibility for online safety in a shifting regulatory landscape, grounded in evidence of young people’s own practices and skills and the complexities of digital family life. Our research demonstrates the need to include young people’s voices and perspectives to recognise their right to digital engagement (Livingstone and Third, 2017), to better equip diverse groups of parents with digital skills and literacies, and to ensure the continual change of technology and its cultural appropriation remain in focus for policymaking.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The author(s) received financial support for the research from the Online Safety Grants Program of the Office of the eSafety Commissioner (Australian Government).