Abstract

TikTok has emerged as a powerful platform for the dissemination of mis- and disinformation about the war in Ukraine. During the initial three months after the Russian invasion in February 2022, videos under the hashtag #Ukraine garnered 36.9 billion views, with individual videos scaling up to 88 million views. Beyond the traditional methods of spreading misleading information through images and text, the medium of sound has emerged as a novel, platform-specific audiovisual technique. Our analysis distinguishes various war-related sounds utilized by both Ukraine and Russia and classifies them into a mis- and disinformation typology. We use computational propaganda features—automation, scalability, and anonymity—to explore how TikTok’s auditory practices are exploited to exacerbate information disorders in the context of ongoing war events. These practices include reusing sounds for coordinated campaigns, creating audio meme templates for rapid amplification and distribution, and deleting the original sounds to conceal the orchestrators’ identities. We conclude that TikTok’s recommendation system (the “for you” page) acts as a sound space where exposure is strategically navigated through users’ intervention, enabling semi-automated “soft” propaganda to thrive by leveraging its audio features.

Introduction

Wars have always been mediated, perceived, experienced, and remembered through a medium whose features shaped wartime realities (Boichak and Hoskins, 2022; Merrin, 2018). The widespread adoption of social media platforms has revolutionized the way in which information is shared during times of conflict, enabling users to rapidly and easily reshape and disseminate news to global audiences at an unprecedented speed and scale (Vilmer et al., 2018). Since the outset of the war in Ukraine in February 2022, TikTok has emerged as an unexpected focal point for spreading audiovisual content about the conflict (Divon and Eriksson Krutrök, 2023; Geboers and Pilipets, 2024). Videos with the hashtag #Ukraine were viewed 36.9 billion times in the first three months after the Russian invasion (TikTok, 2022), and the short-form video platform, owned by the Chinese company ByteDance, has been found to serve as the harbinger of war while communicating high volumes of videos containing mis- and disinformation (Kuźmiński, 2022).

The unique infrastructural characteristics of TikTok, including the platform’s algorithmic distribution, emphasis on content over interpersonal connections, and culture of remixing and meme creation, make it vulnerable to the spread of mis- and disinformation. Accordingly, TikTok has proven to be a fertile ground for the propagation of mis- and disinformation, with instances of misleading information appearing in nearly 20% of videos discovered by the platform’s search engine. Additionally, TikTok’s recommendation system (the “for you” page) has been found to display disinformation about the war in Ukraine to new users within 40 minutes of signing up, as reported by Newsguard’s Misinformation Monitor (2022a, 2022b). The spread of mis- and disinformation on TikTok during the Ukrainian conflict involves a diverse range of actors, including state actors, individuals, and computational elements such as algorithms and features (O’Connor, 2022). This multifaceted phenomenon spans from shallow misinformation to sophisticated disinformation campaigns, highlighting the need for continued investigation.

This paper looks at the trajectory of mis- and disinformation being algorithmically and automatically curated, amplified, distributed, and consumed on the platform’s recommendation system via the popular sound feature, considered TikTok’s backbone (Richards, 2022). We argue that TikTok’s aural paradigm shift (Abidin and Kaye, 2021) has refocused attention on the power of sound as a tool for disseminating mis- and disinformation in innovative and affective ways that exploit the platform’s vernacular (Kaye et al., 2022). Gibbs et al. (2015) describe platform vernacular as the “genres of communication [that] emerge from the affordances of particular social media platforms and the ways they are appropriated and performed in practice” (p. 257). We suggest that TikTok’s vernacular is particularly impactful in disseminating semi-automated propaganda messages in what we term sound spaces. These are automation-based environments that facilitate various forms of affective attunement, where emotional expression and social bonding are encouraged by auditory practices, enabling persuasion and influence to thrive.

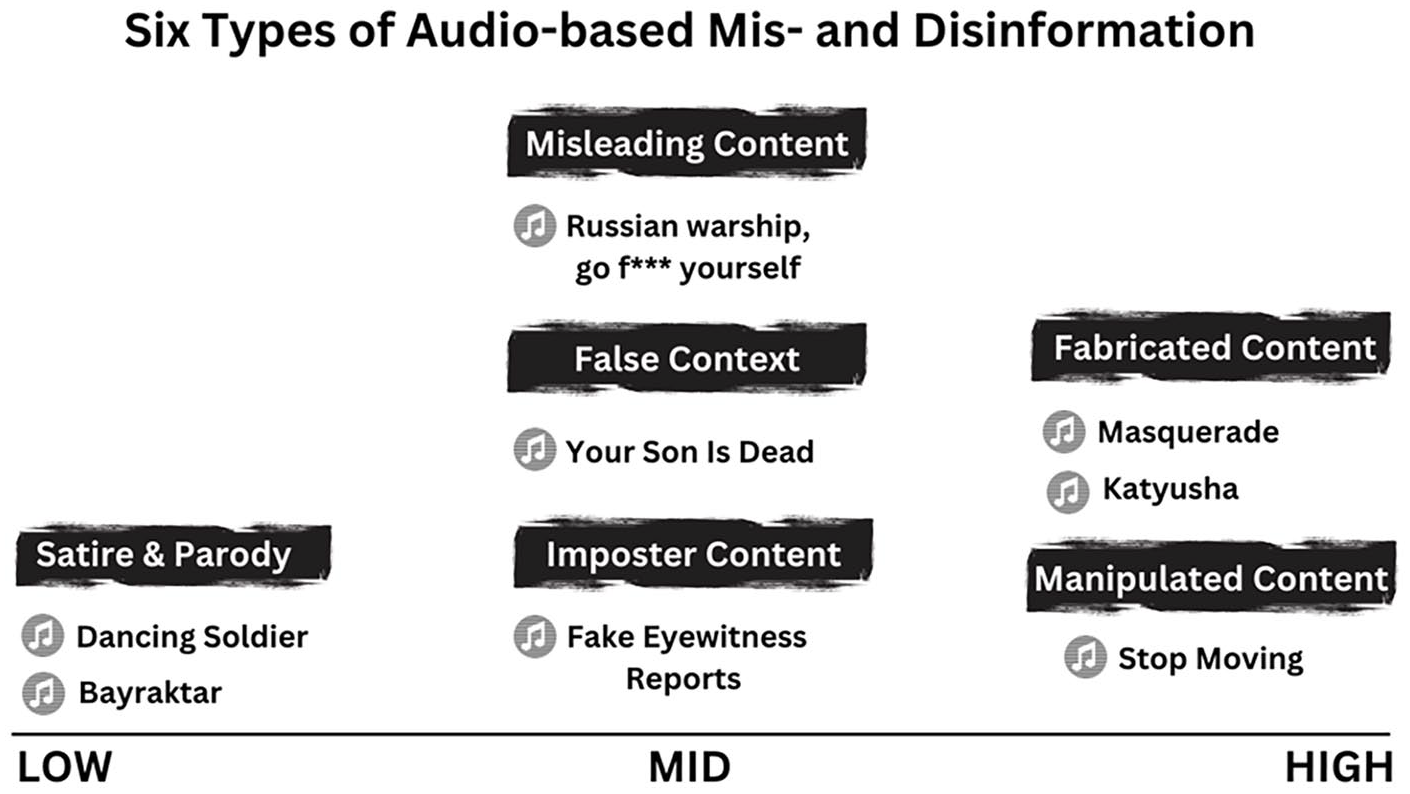

Therefore, we first establish the notion of computational propaganda as our theoretical lens, understood as the “use of algorithms, automation, and human curation to purposefully manage and distribute misleading information” (Woolley and Howard, 2019: 3). Second, we define misleading information on TikTok, encompassing both mis- and disinformation, with the latter serving as the core of propaganda designed to deceive and harm (Wardle, 2020), while the former often functions as an amplifying agent. Third, we examine sound as a potent instrument for computational propaganda, analyzing war-related sounds used in over 25,000 videos. We categorize these sounds into a typology of six types of audio-based mis- and disinformation (Wardle, 2020), spanning from low (satire and parody content) to mid-level (false context and imposter content) and up to high volume (manipulated and fabricated content). By connecting these findings to the computational propaganda features: automation, scalability, and anonymity (Woolley and Howard, 2019), we conclude that TikTok’s sound feature serves as the core element of multimodal campaigns utilizing video, images, audio, and text to facilitate extensive semi-automated “soft” propaganda campaigns, resulting in up to 80 billion individual views per campaign.

From false information to computational propaganda

While false information has been used for centuries to influence public opinion, the proliferation of digital communication technologies, social media, and other online platforms during the 2010s has created novel opportunities for spreading such information at an unprecedented scale and speed. Accordingly, the exploration of false information within propaganda studies (Auerbach and Castronovo, 2013) has led to a nuanced understanding, distinguishing between “misinformation” and “disinformation”—distinct terms that categorize the various forms and intentions behind the dissemination of false information and its political sway (Marwick and Lewis, 2017). The former is defined as inaccurate or incorrect information disseminated without the expressed intention of deceiving (Jack, 2017), while the latter involves the deliberate dissemination of falsifiable statements intended to deceive and amplify deception (Wardle and Derakhshan, 2017).

Various scholars have been exploring the textual and visual traces of mis- and disinformation online in recent years. Current work by Peng et al. (2023) provides a framework for understanding how visual artifacts influence the credibility perceptions of misinformation, while Thomson et al. (2022) explored strategies to mitigate the impact of mis- and disinformation on journalistic work. Meanwhile, Hameleers et al. (2020) analyzed the credibility of textual versus visual disinformation, uncovering that the latter is often deemed more credible due to the ease with which visual information can be taken out of context to serve as ostensibly reliable “proof.” Emerging techniques for creating synthetic videos that are difficult to distinguish from genuine footage, commonly known as “deepfakes,” have spurred scrutiny into novel avenues for video-based disinformation, leading to the widespread belief that much of online content cannot be trusted (Vaccari and Chadwick, 2020).

With misleading content spanning technologies, platforms, and tools, Wardle (2020) argued that mis- and disinformation can be understood as a form of “pollution” in the digitally connected world, where irrelevant, redundant, inferior, and unwanted information is disseminated with a certain level of intent to deceive audiences. To account for the proliferation of technically advanced strategies, non-human factors, and platform infrastructures used to spread mis- and disinformation, Woolley and Howard (2019) introduced the term “Computational Propaganda.” They define computational propaganda as the “use of algorithms, automation, and human curation to purposefully manage and distribute misleading information” (Woolley and Howard, 2019: 3), where misleading information encompasses both mis- and disinformation.

The features of computational propaganda are (1) automation: enabling propaganda campaigns to be scaled up efficiently, (2) scalability: permitting instant and colossal reach within content distribution, and (3) anonymity: enabling the perpetrators of such campaigns to evade detection and remain unknown (Woolley and Howard, 2019). Computational propaganda has the capacity to be used for a multitude of purposes, including advertising and commercial marketing. However, its most profound impact is observed in the sphere of politics, particularly in the context of foreign information manipulation and interference (Bolsover and Howard, 2017). Computational propaganda leverages automated infrastructures to disseminate misleading information, amplify messages or viewpoints, and manipulate public opinion. Such tactics can ultimately create patterns of behavior that pose a formidable threat to the core values, procedures, and political processes of democratic societies (Borrell, 2022).

In alignment with current scholarly trends in mis- and disinformation research, the scrutiny of computational propaganda has predominantly focused on visual and textual elements. Scholars have examined the significant influence of social bots on Facebook and WhatsApp during election times in Brazil in 2014 (Arnaudo, 2017) and in the U.S. during the 2016 elections (Woolley and Guilbeault, 2017), where they achieved measurable impact through verbatim tweets on Twitter. Sanovich (2017) focused on Russia’s sophisticated use of online propaganda and counter-propaganda tools on Facebook, including trolls masquerading as organic users and elaborately substantiating even their most patently false claims. Thus far, this scrutiny has overlooked the multimodal nature of audiovisual platforms such as TikTok, including its auditory components.

TikTok and the spread of mis- and disinformation

Since its international launch in 2018, TikTok has emerged as one of the most influential video-sharing platforms globally, amassing one billion unique users in 2021 (Silberling, 2021). Despite its massive popularity, TikTok’s Chinese ownership and government connections, coupled with concerns over privacy and content moderation, have subjected the platform to heightened scrutiny (Zeng and Kaye, 2022). Recent research indicates that TikTok has become a fertile and complex ground for both the dissemination and combating of mis- and disinformation (Basch et al., 2021). Even users’ creative efforts to debunk such falsehoods inadvertently contribute to a proliferative cycle, where they propagate misleading content further (Southerton and Clark, 2023). The proliferation of mis- and disinformation narratives on TikTok can be attributed to the platform’s content-centric approach, which emphasizes video consumption over following specific users, potentially diminishing users’ attentiveness to source evaluation and credibility. Content is prominently featured on the “for you” page, the platform’s default interface, delivering a personalized stream of videos that are algorithmically recommended based on users’ interests.

TikTok has enhanced its mechanisms to combat mis- and disinformation narratives, especially amid events like the war in Gaza since October 7, 2023, by intensifying content moderation and engaging external fact-checkers to preserve the accuracy of shared information during crises (TikTok Newsroom, 2023). Nonetheless, as evidence mounts of TikTok’s failure to protect users from misleading information about the Gaza war (Dwoskin, 2023), the platform is seen within long-standing political contexts in which the dissemination and impact of mis- and disinformation have been explored. Bösch and Ricks (2021), in their study of right-wing propaganda efforts, investigated the use of fake accounts that visually mimicked official institutions on TikTok ahead of the German federal election in 2021. During the COVID-19 crisis, Shang et al. (2021) found that the most common types of mis- and disinformation were conspiracy theories, false cures, and false information about COVID-19 testing. Those types were amplified with the help of the platform’s core techniques for sampling, reusing, and remixing content: “duetting” (posting a video side-by-side with a video from another creator) and “stitching” (clipping scenes from another user’s video into one’s own) (Ebbrecht-Hartmann and Divon, 2022; Quick and Maddox, 2024).

In the context of the Russian invasion of Ukraine in 2022, propaganda efforts were identified by Kuźmiński (2022), who observed techniques such as the utilization of deep-faked videos on TikTok, where audiovisual propaganda content manifested in meme form. With a viral potential higher than YouTube (Guinaudeau et al., 2022), TikTok’s memes are powerful vehicles for the intentional production and dissemination of mis- and disinformation content, often driven by what Zulli and Zulli (2022) refer to as “imitation publics.” Those are the collection of people whose “digital connectivity is constituted through the shared ritual of content imitation” (p. 7). As TikTok’s algorithm privileges meme-based videos with accelerated exposure (Divon, 2022; Ling et al., 2022), users who engage in mimesis effectively become part of the human force propagating mis- and disinformation content to wider audiences.

TikTok’s popularity for news consumption and media criticism (Literat et al., 2023) has led to a shift in how users disseminate information, relying more on relational and emotional resonance rather than traditional markers of authoritative endorsement. This trend is further reinforced by the platform’s unique blend of playfulness, performance, affective appeal, and intimacy, which greatly influences how users circulate and amplify information within its ecosystem (Cervi and Divon, 2023; Grandinetti and Bruinsma, 2022). Central to this scrutiny, TikTok’s platform-specific feature of sound, often identified as its backbone (Richards, 2022), has been notably overlooked.

“Use this sound” for computational propaganda on TikTok

The utilization of sound as an influential tool for propaganda is a long-standing practice. Lasswell (1934) emphasizes that propaganda can take “spoken, written, pictorial or musical form” (p. 521). The use of aural persuasion as propaganda gained traction in the era of mass media, particularly with the development of radio broadcasting in the late 1920s, as totalitarian regimes were harnessing audio to gain a monopoly (David, 2018). However, the academic exploration of sound as a persuasive tool was then sidelined by the global dominance of video via the widespread distribution of television, computers, and smartphones (Ramos Méndez and Ortega-Mohedano, 2017).

TikTok has spurred an aural paradigm shift in research, driven by the popularity of audio memes (Abidin and Kaye, 2021). An audio meme is a short sound clip that includes snippets from songs, movies, or other audio sources, gaining popularity online through its repeated use and variations, encapsulating trends, humor, emotions, and platform dialects in a shareable format (Pilipets et al., 2023). When engaged in audio meme creation, users have access to extensive repositories of organized audio clips, which can be reused through the “use this sound” feature available in each video. Thus, audio memes have evolved into an interactive and collaborative experience on the platform (Kaye, 2023), enabling users to enhance their videos with music, voiceovers, sound effects, or multiple audio layers, with each sound automatically linked to a “sound page,” aggregating all videos utilizing that specific audio. Furthermore, because audio memes boost engagement, creators use them as a visibility strategy, incorporating trending audio snippets and thus engineering their videos into popular audio meme streams (Bhandari and Bimo, 2022).

In this sound-centric ecosystem, TikTok’s automated indexing and amplification of videos, guided by auditory practices, enhances users’ discovery and engagement with shared human experiences on the platform. As a result, audio memes have transformed into a communal and cultural storytelling medium (Ramati and Abeliovich, 2022), empowering users to express and amplify their voices, including narratives of diasporic identity (Divon and Ebbrecht-Hartmann, 2022; Jaramillo-Dent et al., 2022), and cultivate a sense of belonging within their in-groups (Vizcaíno-Verdú and Abidin, 2022). Thus, sound use on TikTok exemplifies secondary orality (Venturini, 2022), as dispersed individuals engage with the ephemeral and participatory nature of memetic content, continuously recreating and sharing anew to resonate their collective sentiments. This networked infrastructure forms what we call sound spaces, which are automation-based environments in which persuasion and influence can thrive with the help of auditory practices, allowing users to echo their message with various forms of emotional expressions and social bonding.

TikTok fosters bottom-up content creation by making the core components of music (such as hooks, riffs, and beats) easily accessible and discoverable. This enables users to effortlessly pick up, combine, and piece these elements into new, personalized assemblages. Thus, TikTok’s infrastructural setting and user culture make the platform susceptible to hosting and spreading recontextualized media like stolen YouTube videos, computer game footage, and other forms of viral mis- and disinformation (Nilsen et al., 2022; Weikmann and Lecheler, 2022) and propaganda by state actors (Rahmawati and Hadinata, 2022). However, the issue of automation around sound-based computational propaganda has remained largely unresearched, with only O’Connor (2022) highlighting the role of automated sound mechanisms in spreading misleading COVID-19 content on TikTok, where videos containing vaccine misinformation generated 20,019,798 views due to the use of viral sounds.

By foregrounding the memetic function of TikTok in a complex ecology of users’ imitation, affective attunement, and attention hijacking, we observe a polluted information ecosystem consisting of conspiracies, hoaxes, falsehoods, or manipulated media, as described by Wardle (2020) under “information disorder.” With the focus on TikTok’s audio functionalities as users’ “attention-grabbing techniques” (Marwick, 2015: 334), we apply a typology of mis- and disinformation (Wardle and Derakhshan, 2017) ranging from low (satire and parody content) to high volume (fabricated content), creating a map of diverse memetic sound-based videos. By connecting these findings to the computational propaganda features and applying them to sound, we attempt to offer a unique analysis, asking: (Q1) Automation: what role does automation play in spreading mis- and disinformation? (Q2) Scalability: how effective is the use of sound in terms of its impact and reach, as indicated by metrics such as views? (Q3) Anonymity: how can we identify sound-based disinformation and trace it back to its perpetrators?

Method

Data collection, sample, and analysis

To gain a comprehensive understanding of the computational elements involved in war-related content on TikTok, we applied an exploratory user-centric approach (Grandinetti and Bruinsma, 2022) based on the walkthrough method as the main data-gathering procedure (Light et al., 2018) while adopting the perspective of a TikTok user with an “analytical eye” (p. 791). The walkthrough method is “a way of engaging directly with an app’s interface to examine its technological mechanisms and embedded cultural references to understand how it guides users and shapes their experience” (Light et al., 2018: 882). Despite the limitations of this method when applied to highly personalized and algorithmically driven apps, we echo the call made by Duguay and Gold-Apel (2023), asserting that this method should serve as a springboard for more comprehensive and in-depth content analysis.

To mitigate the bias inherent to our TikTok feeds, we created a research-focused account using SIM cards different from those on our personal phones while maintaining the exact geolocation of our original location (outside of Ukraine) to ensure exposure to international content. As we embarked on a daily engagement with war-related content on our “for you” page, we actively searched for such videos in the first few weeks following the invasion of Ukraine on February 24, 2022, using keywords like “Ukraine,” “Invasion,” “Putin,” and more. In doing so, we effectively trained the algorithm to prioritize war-related content on our “for you” page, known for its “centripetal force” as described by Duguay and Gold-Apel (2023), making it a challenging screen to disengage from and the focal point for user consumption and attention. With abundant Ukrainian and Russian war-related videos flooding in, we utilized the in-app bookmark system and our platform literacy to save videos featuring recurring multimodal patterns and sound-based memes.

After a month of daily scrolling, we had assembled a diverse collection of approximately 300 videos that were featured on our war-trained “for you” page. All videos included primary auditory components, using either (1) songs, (2) audio scraps, (3) human speech, (4) sound effects, or (5) mashups of audio. The classification of these components was undertaken as an integral part of our walkthrough approach, aimed at achieving a nuanced comprehension of potential auditory functions on TikTok. Individual videos accumulated up to 88 million views, and unique sounds have been used in more than 36,000 different videos.

We then adopted a purposive sampling technique (Sandelowski, 1995) to “deliberately look for information-rich cases” (p. 81) that feature war-related content. To ensure the chosen videos from each sound were relevant, we included videos showcasing hashtags like #ukraine, #putin, or #invasion and drew on visual cues such as the Ukrainian flag or images of Vladimir Putin or Volodymyr Zelensky. We analyzed the accounts of users who shared original sounds while trying to trace the sounds back to their origin, and checked these accounts for follower count, views, and reach. We relied on the guidelines of the Ethics Working Committee of the Association of Internet Researchers (AoIR) and took great care in our research decisions, such as removing personal information from saved videos. We identified six types of audio-based examples that fit Wardle and Derakhshan’s (2017) theoretical framework of mis- and disinformation, supplemented by the intensity of information disorders found in our data, ranging from (1) low volume with satire and parody content and false connection, through (2) mid volume with misleading content, false context, and imposter content, to (3) high volume with manipulated content and fabricated content (see Figure 1).

Six types of audio-based mis- and disinformation, each associated with distinct sounds.

Finally, we applied a multimodal content analysis per sound “to examine textual, aural, linguistic, spatial, and visual resources” (Pearce et al., 2020: 6) embedded within the videos, intending to identify how distinct auditory layers were used in a memetic fashion as the main driver and design element of multimodal videos on TikTok. This type of analysis helped us to observe aspects of computational propaganda, focusing on the level of richness in audiovisual modality and sophistication. By that, we gained a deeper understanding of the ways in which computational propaganda and its elements of automation, scalability, and anonymity (Woolley and Howard, 2019) manifest on TikTok.

Findings

A typology of sound-based mis- and disinformation

Low-volume information disorder

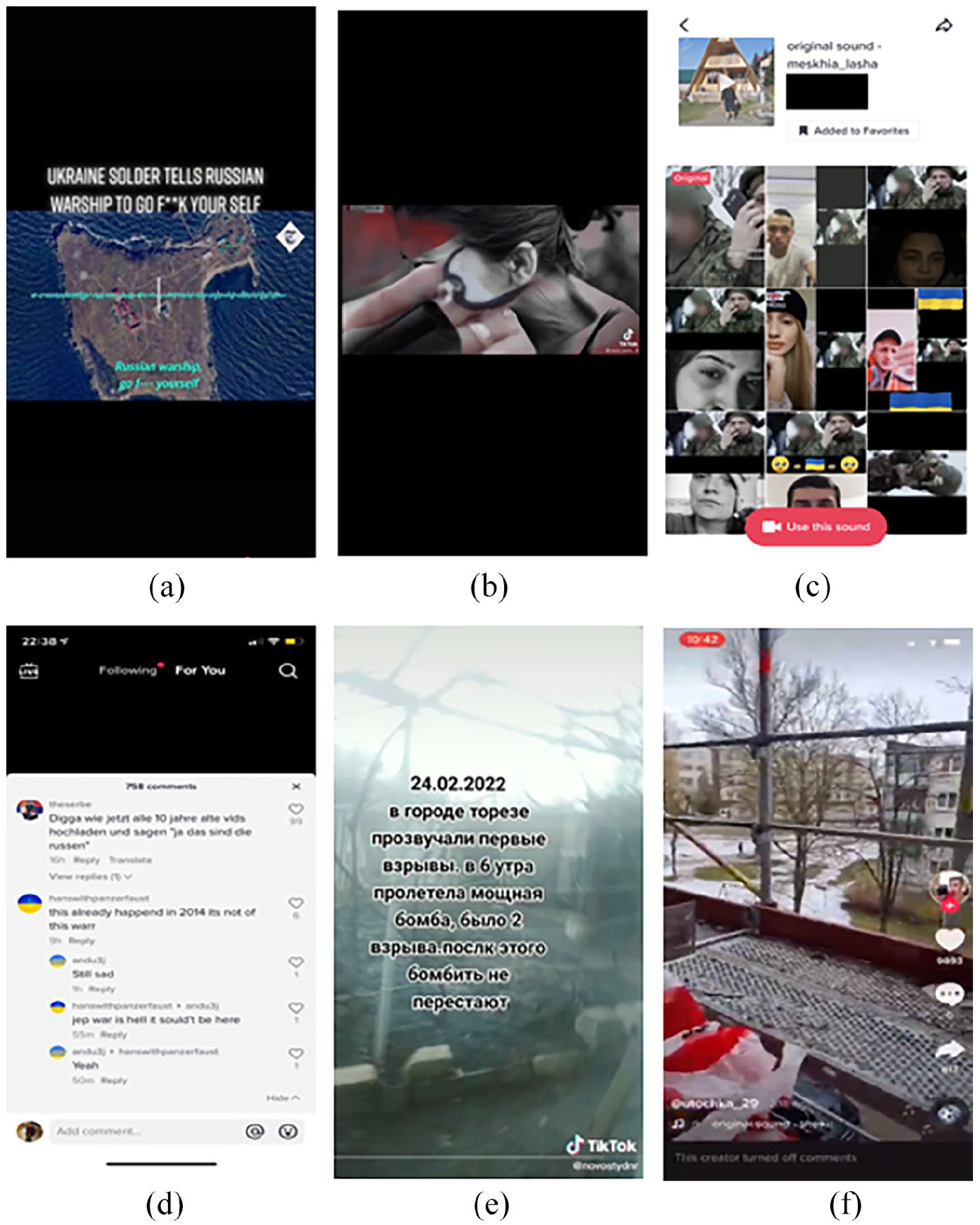

According to Wardle (2020), low-volume information disorder encompasses a wide range of practices, including the use of satire and parody as a deliberate strategy to evade fact-checking and spread rumors and conspiracy theories. We have identified two distinct examples of low-volume information disorder: the first is the dancing soldier by the Ukrainian soldier Alex Hook (

Screenshots from TikTok videos illustrating examples of low-volume information disorder.

By taking a well-known pop song and infusing it with fresh and unexpected meaning, Hook strategically integrated his video into a popular audio meme landscape (Bhandari and Bimo, 2022), thereby ensuring broader discoverability and reach by automatically weaving his video into the ongoing stream of videos using the Michael Jackson sound. This dissonant performance, which blends common internet dialects of sound and play in wartime (Divon and Eriksson Krutrök, forthcoming) and features a dancing soldier on the battlefield, garnered global attention, with media coverage portraying it as a touching scene of a “courageous soldier” dancing to reassure his daughter of his safety (Neog, 2022).

Hook proceeded to create 126 more videos featuring him dancing to other songs (e.g., Nirvana’s “Smells Like Teen Spirit”) while dressed in combat gear in various settings and situations that evoked the ambiance of a warzone. As his video series progressed, Hook began to reveal his face more often and danced in front of tangible objects associated with war, such as a tank (see Figure 2[b]). In addition, he enlisted the participation of his soldier comrades in several videos, where they co-danced in different scenarios (see Figure 2[c]). Hook’s profile garnered approximately 1 billion views by March 24, and his distinctive dancing style sparked a dance challenge on TikTok under the #AlexHook hashtag (476 million views). Dance challenges on TikTok are meme-based performances in which users imitate a set of choreographed moves and invite others to join in by using a designated hashtag (Klug, 2020). As “attention-grabbing techniques” (Marwick, 2015: 334), challenges enhance the algorithmic visibility of users and amplify their agenda and social capital (Cervi and Divon, 2023; Boffone, 2021). In addition to their scalability, TikTok dance challenges exhibit automation by using pre-existing sounds and choreography, enabling content creation through imitation. However, these challenges are a hybrid form of automated amplification, as their quick, copy-and-paste method combines with significant human agency, making them a “semi-automated” feature of computational propaganda.

Hook’s videos, which incorporate popular songs and a distinctive dancing style, align seamlessly with TikTok’s playful ethos, where each user functions as a performer who “externalizes personal political opinion via an audiovisual act” (Medina-Serrano et al., 2020: 264). This dynamic fosters a more interactive form of political communication performance compared to other video-centric platforms, urging users to assert agency in times of conflict. As they become widespread internet memes (Shifman, 2013), Hook’s videos on TikTok extend their influence to platforms like Instagram and Facebook, sparking extensive streams of imitation. This cross-platform memeification not only automatically broadens the reach and impact of the original content but also harnesses the interconnectedness of imitation-based communities, allowing individuals to collaboratively shape the personalization and presentation of warfare information in users’ feeds.

Hook’s videos, however, also have the potential to mislead viewers as they convey, via whimsical songs, expressive body performance, and captions, “a misleading narrative for the users, who will only see a certain part of the story” (Woolley and Howard, 2019: 54) in which the strength ratio of military forces at the outset of the conflict implies a future Ukrainian victory. These videos act as conduits for a carefully constructed pro-Ukrainian propaganda narrative, intentionally excluding any scenes of fighting, killing, or death and instead emphasizing the valor of soldiers confidently positioned on the battlefield, aiming to project an aura of control and assurance. By presenting such content, both platform users and the global audience are exposed to messages crafted to sway public opinion in favor of Ukraine.

Furthermore, the utilization of diverse audiovisual elements to create an individualized and humanized depiction of Ukrainian soldiers was found to be a recurrent strategy. The virality of profiles inspired by Hook, such as @jefbix (see Figure 2[d]), illustrates how constructed ambiguity, uncertainty, and vagueness (Larina et al., 2019) are used to engage viewers with minimal information about the identities of the profiles’ agents. Through the accessibility of anonymity on TikTok, these agents channel their digital labor into influencing and mobilizing audiences, all the while defying conventional expectations of soldiers in wartime and consciously omitting personal aspects of their lives beyond the context of war, crafting a deliberately sterilized online presence.

Moreover, specific segments of Hook’s videos were later incorporated into a sound-based TikTok campaign initiated by “Visit Ukraine,” a Ukrainian State organization (see Figure 2[e]). This promotional video appropriated Hook’s virality by creating a musical mashup of his various song choices and featuring armed soldiers dancing with high morale. In doing so, the Ukrainian State co-opted a memetic computational momentum in which “dancing soldiers” synchronized with international pop songs scaled up into an aesthetic. This aesthetic is a set of audiovisual and affective codes performed by users who seek to cultivate a persuasive mind-set and elicit an emotional resonance with their audience (Paasonen, 2015). This type of communicative aesthetic is “entangled with the technical structures of the platforms that are used” (Schreiber, 2017: 145) and has epistemological consequences for how users are informed and educated about the war on TikTok.

The second example of low-volume information disorder with the potential to mislead is the Bayraktar audio meme. Bayraktar is a recent Ukrainian patriotic propaganda song that pays tribute to the Turkish Bayraktar TB2 combat drone, which was deployed by the Ukrainian forces in March 2022. The song was written by Ukrainian soldier Taras Borovok, mocking both the Russian Armed Forces and the invasion itself (Weichert, 2022). Marked by its straightforward yet enthralling rhythm, the song garnered extensive airplay on Ukrainian radio stations, became a rallying cry at protests, underwent various remixes, and eventually found its way to TikTok. On TikTok, a multitude of remixes have emerged featuring an array of musical styles, including drum and bass (Bayraktar, Lostlojic), ska (BARAKTA, Big City Germs), heavy metal (Ukraine War Song, Metal Versio), and even a house remix (Slap House Remix, Adi Foxx). These creative adaptations underscore the power of anonymity on TikTok by effectively detaching the sound and its core message from their original source.

This example underscores the influence of sound-based platforms such as TikTok, which thrive on user-generated content remixes, turning the Bayraktar song into an effective earworm that further cements this audio into listeners’ memory (Abidin and Kaye, 2021) while carrying pro-Ukrainian propaganda messages. The explicit goal of the song, as described by the artist, was to “influence people, keep morale high and reduce Russian influence” (Weichert, 2022), and users harnessed the song as a template for mocking Russian myths and symbols, such as the cabbage soup Shchi, and for demonizing Russian soldiers as humanoid monsters and bandits (see Figure 2[f]). The demonization of the enemy, aimed at mobilizing allies (Conserva, 2003), is effectively implemented here through a “soft” form of propaganda. According to Mattingly and Yao (2022), this form is achieved by repackaging messages in various pop culture forms that appeal to mass audience preferences. TikTok’s sound-trigger system, which rewards the use of trending meme-based templates with virality (Divon, 2022), enables the automatic amplification of these messages and thus facilitates their widespread reach. The rapid scalability of memetic sound-based content becomes even more apparent given the ability to share TikTok videos on other platforms such as Telegram or WhatsApp.

Ultimately, the sound feature serves as the foundation for these low-volume information disorder instances, showcasing computational propaganda elements like “semi-automation” in content production via the “reuse sound” feature, pre-planned choreography, swift scalability through sound challenges, and the utilization of anonymity, enabling propaganda songs to circulate through remixes independently of their original creators.

Mid-volume information disorder

Mid-volume information disorder includes three types: (1) the use of misleading content to manipulate how an issue or individual is perceived, (2) the sharing of genuine content with false contextual information, and (3) the impersonation of genuine sources through imposter content (Wardle, 2020). We found sound-based examples of all three, garnering over 100 billion views across their variations and demonstrating computational propaganda features like scalability. The first example is the Russian warship go f meme (80 billion views). During a Russian attack on Snake Island in Ukraine’s territorial waters on February 24, 2022, border guard Roman Hrybov communicated the final message to the Russian missile cruiser Moskva, saying, “Russian warship, go f*** yourself.” This phrase, widely adopted and transformed into an audio meme, is an example of “misleading content” (Wardle, 2020), as it was disseminated with the intention of distorting how the incidents on Snake Island were perceived.

The audio meme phrase falsely claimed that 13 Ukrainian border guards had died as heroes in the battle for the island while fighting against the Russian flagship of the Black Sea Fleet and the most powerful warship in the region. Initially, Ukrainian government sources used the meme to spread false information, with the Ukrainian President announcing the death of the border guards (Thompson and Alba, 2022). However, a few days later, Ukrainian officials clarified that the soldiers were actually alive and had been captured by Russian forces. Despite this correction, the original misleading version continued to circulate, propagated through various sounds like “Russian Warship Go Fuck Yourself” and “Russian Warship.” Related searches such as “Russian warship go f meme,” “Russian warship meme,” or “snake island Ukraine video” lead to pages with views ranging between 150 and 200 million, showcasing the widespread dissemination of this misinformation.

This audio meme embodied the David and Goliath narrative, invoking a profound sense of asymmetric conflict (Fischerkeller, 1998) by contrasting a high-tech warship with a handful of soldiers on a deserted island, resonating with Western and pro-Ukrainian sentiment while bypassing logical reasoning of the events. The narrative was utilized as state propaganda to boost morale (Woolley and Howard, 2019) while simultaneously concealing its origins in government sources that had spread misleading information via the meme, thus illustrating the role of anonymity in computational propaganda. The “reuse sound” feature facilitated the dissemination of this audio, offering users a straightforward method to create videos with the military radio sound as a template, enhancing its extensive reach through a “semi-automated” process. These variations, including sound, image, and text, punctured the myth of the invaders’ superiority while the distinction between the falseness of the content and its genuine circumstances became increasingly obscured (see Figure 3[a]).

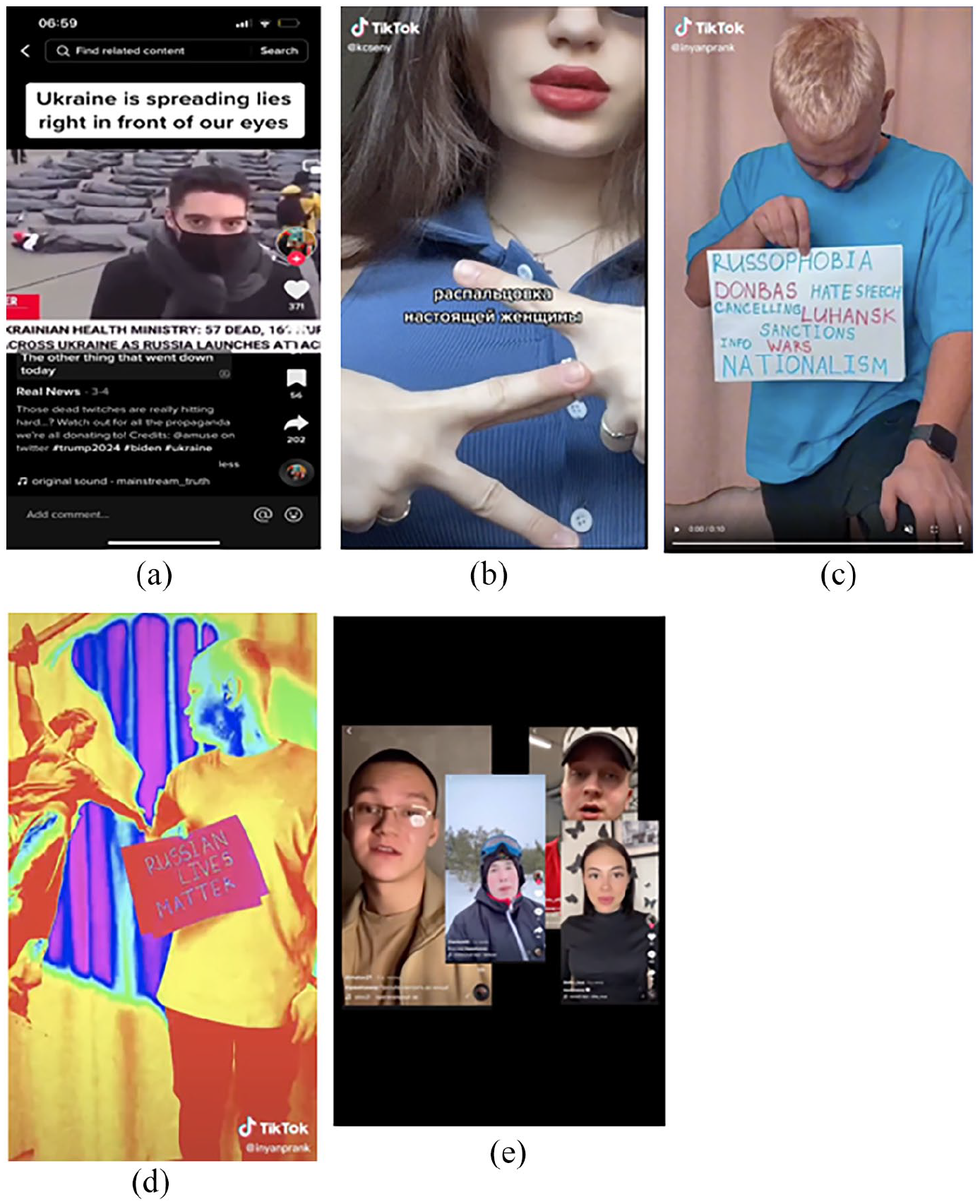

Screenshots from TikTok videos illustrating examples of mid-volume information disorder.

The second example is False Context—Your son is dead meme (10 million views). The meme portrays a Ukrainian-speaking soldier breaking the news of a Ukrainian fighter’s death over the phone to what appears to be his mother. Clearly indicating that genuine content was shared with false contextual information (Wardle, 2020), the videos were watermarked with the sign “NewsFront”—a website based in Russia-occupied Crimea, founded in 2014 by the head of the Crimean branch of the pro-Putin political party Rodina and a Russian businessman who was formerly a Kremlin official. In 2020, the U.S. Department of State (2022) described NewsFront as part of a “disinformation and propaganda ecosystem” involving Russian state actors to disseminate their ideologies widely. Thus, this video can be perceived as a pro-Russian strategy designed to instill fear and propagate a narrative that demoralizes Ukrainian soldiers and citizens.

The emotional resonance is amplified during the tragic moment through the incorporation of a melancholic instrumental snippet from the song Veridis Quo (a wordplay on the Latin expression “Quo Vadis,” meaning “where are you going?”) by Daft Punk. Orchestrated with an affective intent known to resonate in conflict-related online content (Papacharissi, 2015), the music in the video intensifies as the soldier relays the heartbreaking news, complemented by the sound of the mother’s sobs and the visual display of the soldier’s ID badge, enhancing the emotional gravity of the scene (see Figure 3[b]). The different emotional manipulations employed resulted in the formation of what can be referred to as an “affective audio network” (Primig et al., 2022: 7), in which users leveraged the platform’s functionalities of remixing trending sounds, such as the Duet feature, to unite around a shared experience and enhance its reverberation throughout the platform (see Figure 3[c]). Each new video iteration became embedded in what Phillips (2013) calls cycles of amplification, in which user engagement creates self-reinforcing feedback loops that boost content visibility and reach. This phenomenon extends beyond what is commonly termed “content without context” (Bhandari and Bimo, 2022: 7) on TikTok, involving the misleading contextualization and appropriation of content for propaganda purposes, all while the original creators remained anonymous, as they could not be traced. However, commenters attempted to bring the video back into context and combat its falsehoods by noting it was originally posted in 2014, before the existence of TikTok (see Figure 3[d]).

The third example is Imposter Content—Fake eyewitness reports meme (50 billion views). Within this meme format, users crafted point-of-view (POV) 15-second-long videos in which they filmed in various locations perceived as places of conflict or adversity, utilizing sound effects to simulate the experience of accidentally capturing war-related incidents. For example, a video by user @novostydnr posted on the day of the invasion featured footage of an indistinct garden obscured by fog. The video included overlaid text informing viewers of an explosion in the city of Thorez at 6:00 a.m., accompanied by the sound of sirens (see Figure 3[e]). Similar to other videos in the eyewitness report genre we have found, this video garnered high engagement rates as it was watched 1.2 million times and shared over 1,000 times, demonstrating the appeal of this type of content.

The automated capacity to impersonate authentic sources through deceptive content was ultimately revealed upon clicking on the video’s sound feature, which was labeled as “оригинальный звук—Марія Антонова” (original sound—Maria Antonova). Upon tapping, the user is directed to the sound overview page with an “original” labeled video on top, which leads to the account of user @mariia_antonova. The user had posted a training alert video in the city of Chernihiv, Ukraine, on January 28, 2020, more than 2 years before the invasion, and her sound had been used in 2,040 videos, many of which were posted on February 24, 2022. An additional instance of imposter content is exemplified in a sound used in a video created by @utochka_29 on February 24, gaining more than 1.2 million views within 21 hours. The POV video captures the creator opening a window and gazing out onto a street in Ukraine. Suddenly, the sound of gunshots overtakes the video, and the creator shakes the phone to create a blurry glimpse into the moment (see Figure 3[f]). Upon tapping the applied sound, the user is directed to an overview page of a sound labeled as “original sound” accompanied by two emojis (a football and a fire symbol). The “original”-labeled video on top dates to 2020 and includes amateur video footage from a soccer game and a synthetically added sound of gunshots.

This proliferation of imposter content involving the impersonation of authentic sources represents a significant driver of information disorder on the platform. While TikTok’s reusable audio functionality was originally designed to facilitate lip-syncing playback (Rettberg, 2017), the platform’s vernacular of templatability (Rintel, 2013) enables swift and effortless leverage of a vast library of original sounds. This has enabled audio manipulation, such as sirens and gunshots, to create a sense of presence in active combat zones and imminent danger. The issue of anonymity can be partly addressed, as we have found that tapping on the sound and examining its overview page reveals the actual source of many of these misleading videos, allowing for easy debunking. It becomes apparent that these videos often use sounds taken from various sources on the internet. Nonetheless, a concerning trend on TikTok is that perpetrators can easily disguise their rapidly scaling sound-based campaigns by deleting the original source video, while the sound can still be used and spread across the platform.

Ultimately, in the mid-volume information disorder category, all elements of computational propaganda, as outlined by Woolley and Howard (2019), become evident. Sound-based propaganda messages, enabled by applied audio templates, have scaled up to 100 billion views through “semi-automated” content production. Meanwhile, perpetrators have successfully maintained their anonymity through various techniques, such as deleting original sound files.

High-volume information disorder

High-volume information disorder can take two forms: (1) manipulated content, where genuine information, especially including sound, is not only combined with false contextual information (as in mid-volume) but actively altered to deceive and (2) fabricated content, which refers to entirely false information created to deceive and cause harm (Wardle, 2020).

We have identified pro-Russian propaganda attempts to spread disinformation via manipulated content manifested in the example Stop Moving—You’re Supposed to be Dead! The manipulated TikTok video shows 57 body bags of alleged Ukrainian casualties on the first day of the invasion, with one person shown moving and struggling to get out. However, the original video material shows Austrian climate protesters demonstrating in Vienna in early 2022, as documented by fact-checkers on Twitter (

Screenshots from TikTok videos illustrating examples of high-volume information disorder.

Fabricated content intending to deceive and do harm (Wardle, 2020) appears in different variations under the umbrella term #RussianLivesMatter. We identified 414 videos that included the abbreviation #RLM (for #RussianLivesMatter) in their captions. Videos with this hashtag carried three pro-Russian sound-based campaigns that combined different sounds, trends, filters, and hijacked challenges to deceive different target groups. The first sound-based trend is Masquerade by Chicago-based trap artist Siouxxie from 2020, which went viral in different variations with over 500,000 videos. This was made possible with the help of famous US-American influencer Charli D’Amelio, whose video garnered more than 90 million views and helped to turn the sound into a dance challenge with a fixed set of specific dance moves.

The popular dance challenge was reappropriated by Russian creators at the end of February 2022 in order to spread a pro-Russian narrative and help popularize the “Z”-symbol, which developed “from a military marking to the main symbol of public support for Russia’s invasion of Ukraine” (Sauer, 2022). Female Russian TikTok users like @kcseny, with 2.5 million followers, performed a modified version of the dance. The videos are broken into two parts. In the beginning, @kseny and others show traditional selfie poses like the V-sign or the devil’s horn hands with a Russian textual overlay translating as “How basic girls pose with their fingers.” In the second part, users perform a “Z” shape with their fingers, accompanied by a text overlay suggesting that this might be “How real women pose their fingers” (see Figure 4[b]) to emphasize Russian users’ self-empowerment. The use of an insider gesture to resonate with and signal inclusion to a specific group represents a nuanced and sophisticated method of message propagation, tailored in a platform-specific manner. This computational propaganda tactic involves leveraging a popular sound as a memetic medium (Zulli and Zulli, 2022), modified into a dance challenge that enables semi-automated content production and amplification via a multimodal template.

A second fabricated pro-Russian sound-based trend used the Soviet folk-based song and military march Katyusha, composed in 1938. During World War II, Katyusha gained fame as a patriotic song, inspiring the population to defend their land (Von Geldern and Stites, 1995). We identified more than 100 ten-second videos utilizing this sound, each depicting a person kneeling while holding an English-language sign condemning “Russophobia.” In the second part of the video, the person stands up and turns the sign, revealing the words “Russian Lives Matter” while TikTok’s rainbow flash effect is applied, turning the video into a platform-specific mesmerizing audiovisual experience (see Figure 4[c] and [d]). The orchestration of these coordinated propaganda attempts was exposed by the captions: some users’ captions shared similar typographical errors and included Russian-language instructions, such as “You can publish, description: Russian Lives Matter #RLM.”

In another coordinated Russian campaign, Russian influencers read out a manuscript of pro-Kremlin narratives using hashtags like “Come on World,” promoting the falsehood that Ukraine perpetrated a genocide against Russian speakers in the Donbas region over the last eight years. According to Vice News (Gilbert, 2022), the Telegram group that spread the manuscript amassed over 500 members, including Russian TikTok influencers with 1.2–1.8 million followers. In the videos, users recited a script, turning themselves into audiovisual human bots that amplified a message while charging it with the familiarity of influencers (Arriagada and Bishop, 2021) who are well-known faces on the Russian part of the platform (see Figure 4[e]). These seemingly coordinated campaigns appear to have sought to influence opinion about the war among English- and Russian-speaking audiences by applying international pop songs, Russian folk songs, or Russian-language messages. These examples demonstrate TikTok users’ considerable proficiency in utilizing multimodal production techniques, ranging from sounds and effects to challenges.

Ultimately, in the high-volume information disorder examples, all elements of computational propaganda were exhibited. The strategic use of performative videos—integrating imitative sound, text, and dance, operationalized by human agency—enabled semi-automated content production, achieving extensive reach. Additionally, anonymity was a notable feature, as many videos containing manipulated content could not be traced back to their creators, who effectively maintained their elusive identities.

Discussion

In today’s era of global connectivity, various actors exploit digital platforms through computational propaganda techniques to spread misleading information within a polluted information ecosystem. Our study underscores the pivotal role of computational propaganda mechanisms by pinpointing and dissecting their three fundamental components: (a) automation, (b) scalability, and (c) anonymity (Woolley and Howard, 2019), which were actively employed on TikTok following the 2022 Russian invasion of Ukraine.

Our findings build upon prior research, primarily focused on visual mediums for disseminating misleading information on social media (Hameleers et al., 2020; Peng et al., 2023), including TikTok (Shang et al., 2021). Instead, we highlight the auditive practices as a critical factor in creating, curating, amplifying, and disseminating misleading information on TikTok. While the weaponization of information is not new (Forest, 2021), disinformation campaigns have undergone significant transformation due to the influence of algorithmic recommendation systems, language model-based chatbots capable of generating text and images, and software applications designed to execute automated scripts imitating human actions. Nevertheless, these tactics still necessitate human involvement, encompassing campaign setup, scriptwriting, and tool operation. Buchanan et al. (2021) introduce the term “human-machine team” to capture this phenomenon, highlighting that “at the core of every output of GPT-3 is an interaction between human and machine: the machine continues writing where the human prompt stops” (p. 1).

Our findings reveal the utilization of both algorithmic and human factors as a means to “purposefully manage and distribute misleading information” (Woolley and Howard, 2019: 3). Therefore, we categorize these tactics as “semi-automated.” Prominent is the reuse sound feature, identified as a key mechanism within the platform that effectively amplifies and spreads misleading information. The reuse sound feature semi-automates content production by providing users with a sound layer and, in the case of the dancing soldier, an additional choreography (a dance challenge) that reduces the hurdles of creative efforts and affords the shared ritual of content imitation on TikTok (Zulli and Zulli, 2022). Our study has also highlighted the capacity for semi-automation and scalability afforded by the duet feature on TikTok (Your son is dead). This feature allows users to effortlessly record their emotional reactions to what they perceive as war-related moments while watching the original video, with both videos displayed side by side. The duet function intensifies the affective intent (Papacharissi, 2015) of a video and fosters potential duet chains with an increasing number of users reacting to the reactions. Therefore, the cycle of misleading narratives on the platform is perpetuated, fueled by users’ emotional expression and sustained through techno-social bonds, all amplified by auditory practices within a networked infrastructure we refer to as the sound space.

The primary goal of propaganda is to prompt specific actions or inactions from the audience (Walton, 1997), with semi-automated strategies currently emerging as the most effective method for disseminating audiovisual messages. While text-based messages spread by social bots have played a significant role in mobilizing a labor force for propagating fake news on platforms like Twitter (Shao et al., 2017), TikTok’s algorithm-driven recommendation system is better at engaging users in becoming contributors to the propagation of mis- and disinformation content. In particular, as a form of content creation labor, sound enables malicious actors to outsource propaganda, either intentionally (Come on World) or covertly (Fake eyewitness reports). In addition, this approach provides a cloak of anonymity, as propaganda songs are disseminated through remixes (Katyusha) detached from their original creators. This is a manifestation of the remix culture (Primig et al., 2022: 2), in which “publication does not mark the end of content but the beginning of an ever-new reinterpretation of content fostered by a participatory paradigm.”

In both Russia and Ukraine, computational propaganda techniques have rapidly produced content with remarkable scalability, effectively expanding its reach to broader audiences and garnering up to 88 million views for videos like the Dancing Soldier and Bayraktar. Unpacking this further using Wardle and Derakhshan’s (2017) framework of low-, mid-, and high-volume information disorder, our study reveals that both Russia and Ukraine employ a wide range of propaganda tactics, displaying almost “full-spectrum propaganda capabilities” (Forest, 2021: 25). While Ukraine predominantly utilizes low- and mid-volume strategies, such as satire, parody, and misleading content, Russia employs more advanced high-volume information disorder tactics, including fabricated content and clearly manipulated content. This contrast may be attributed to Russia’s role as the aggressor, justifying its invasion by invoking narratives related to the Great Patriotic War (Katyusha), national pride and strength (Masquerade), and accusing Ukraine of deceit (Stop Moving). In contrast, Ukraine employs techniques like satire and parody to portray itself as an unjustly attacked, playful (Dancing Soldier), witty (Russian warship go f*** yourself), and formidable adversary (Bayraktar).

On a larger scale, our findings highlight substantial tensions baked into platform recommendation systems engineered to showcase diverse content across cultures and geographies (Burgess et al., 2024). When regions like Gaza during recent wars, or Ukraine as we analyzed in our paper, become worldwide media hotspots, recommendation systems employ geographical diversification to amplify and distribute content from these areas across international feeds. This automated visibility of disputed regions capitalizes on users’ attention and reciprocates their desire to transcend traditional media silos (Yan et al., 2023). We argue that while recommendation systems indeed democratize and reshape epistemological approaches to how we learn about wars, their automated engineering of content diversity renders them vulnerable to exploitation by users who spread mis- and disinformation. Our findings show that users equipped with high digital literacies manipulate TikTok’s recommendation system (the “for you” page) by leveraging audio features and platform-specific vernacular (e.g., hashtags and challenges) to trigger automated exposure. Against this, in line with Burgess et al. (2024), we claim that responsibly enhancing and circulating content diversity on platforms necessitates implementing safeguards that uphold informational integrity. This crucial action ensures that user engagement does not compromise the accuracy of content, maintaining a delicate yet essential balance for sustaining a non-polluted digital information ecosystem during disruptive events such as wars and beyond.

While the Wardle and Derakhshan framework provides an initial classification of empirical examples, its informativeness has limitations. Future research should strive to incorporate this framework into a more comprehensive model that considers contextual information, drawing insights, for instance, from Quandt’s (2018) work on dark participation, for a nuanced understanding of actors, motivations, subjects, audiences, and processes. Due to our research’s qualitative approach, we could not provide a comprehensive illustration that quantifies the extent of misleading information networks on TikTok. However, revisiting TikTok in February 2023, one year after our initial study, we found that propaganda efforts persist. Ukrainian military personnel engaged in new dance challenges (e.g., Wednesday Addams Dance), while pro-Russian accounts redistributed manipulated videos (e.g., Stop Moving). Future research should prioritize platform literacy, as TikTok users excel in crafting engaging content for social influence. However, some users may lack the skills to identify and combat misinformation, leading to the unwitting spread of war-related audio memes.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.