Abstract

Despite opaque automated systems and few formal channels for participation, YouTubers navigate algorithmic governance on the platform through a strategy we call user-generated accountability: the generation of publicity via content creation to reveal failures, oversights, or harmful policies. Through an analysis of 250 videos, we identify common strategies, concerns, and targets of accountability. Creators primarily upload vlogs that acknowledge the platform’s positive aspects, even as they express concern with YouTube’s policies, automated enforcement systems, poor communication practices, and discrimination against certain creators or content. In publicizing critiques of platform operations, videos enroll creators and audiences as active stakeholders in platform governance who can coordinate actions to draw the company’s attention to matters of concern. We argue that user-generated accountability practices offer a productive starting point for understanding how platform governance disputes come to be and how systems might be shaped or rebuilt to better serve the needs of competing stakeholders.

Keywords

Introduction

From the personalized home page to the selection of videos up next, algorithms are inescapable on YouTube. Beyond the more obvious aspects of the interface, algorithms also power decisions around the placement of ads, the eligibility of videos for monetization, the enforcement of copyright, and the moderation of content. In other words, automated techniques mediate what audiences see, which creators are seen, and the possibilities for extracting social and economic value from visibility (Rieder et al., 2018). Such techniques both respond to and enable the immense scale of YouTube, a platform with more than 2.6 billion monthly users and 500 hours of content uploaded every minute (YouTube, n.d.). However, details about the back-end operations of the platform are scarce, constrained by technical challenges and economic incentives (Gillespie, 2018), and compounded by the power differential between a platform and its users (Savolainen, 2022). Unsatisfied with the black-boxing of algorithmic governance (Katzenbach and Ulbricht, 2019) and platform governance on YouTube more generally (Tarvin and Stanfill, 2022), creators have begun to seek accountability through other means, deploying their skills, audiences, and situated knowledge to investigate the platform’s operations (Kingsley et al., 2022).

Such initiatives refract public concerns around the politics of algorithms through the prism of platform culture. For example, YouTubers Andrew Platt, Sealow, and Nerd City collaborated on a “demonetization detector” to test how the title of a video impacts its eligibility for monetization, analyzing over 15,000 words. 1 In a trilogy of videos that racked up more than 3.5 million views, they revealed the “secret codes” and “secret rating systems” assigned to demonetized videos, and words likely to trigger automatic demonetization, including references to demonetization, mental health, and the LGBTQ community. 2 Although the talk of “top secret” information flirts with conspiratorial logics common to popular discussions of platform governance (Lewis and Christin, 2022; Savolainen, 2022), something that the recurring appearance of mad scientist character “Dr. Downvote” and the parody song “I Want Transparency” explicitly play with, the videos are quite careful in their discussion of evidence including the aforementioned audit, public statements from the company, leaked documents from an employee whistleblower, emails from a YouTube partner manager, and the advertising categories within the AdWords program. And, despite their playful delivery, the videos express deep-seated frustration that YouTubers cannot rely on the platform for meaningful information about governance decisions.

These videos illustrate a growing phenomenon we term user-generated accountability, or the generation of publicity via content creation to reveal failures, oversights, or harmful policies on a platform. User-generated accountability simultaneously derives support from creators and audiences and pressures the platform to acknowledge and remedy issues. Creators transform “algorithm audits” (Sandvig et al., 2014) and “gossip” (Bishop, 2019) about automated systems into user-generated content to explain how platform governance works and why the public should care. Prior research demonstrates that YouTubers actively engage with platform governance issues through content production, although it tends to focus on specific issues such as copyright enforcement (Kaye and Gray, 2021) and demonetization (Caplan and Gillespie, 2020), or specific communities such as beauty vloggers (Bishop, 2019) and BreadTube (Cotter, 2022). Building on and broadening this work, we investigate YouTubers’ calls for accountability on the platform through a grounded analysis of platform callout videos, identifying the forms calls for accountability take, the actors YouTubers target, and the underlying concerns they address.

In what follows, we review the challenges of accounting for algorithmic governance, introduce the rise of user-driven accountability initiatives, and argue that YouTube is an industry bellwether for such practices. Next, we introduce the methodology of our study, outlining the collection and analysis of 250 videos that provide an account of governance on the platform and hold the platform to account. We then present our findings regarding YouTubers’ stances toward YouTube, the format of the videos, targets of accountability, and topics of concern. In the discussion section, we build on the primary theme presented in these videos—YouTube creators’ lament that they must make public noise to get responses from the platform—to understand creators’ feelings about the failure of current accountability systems and the strategies they use to bypass these failings. Finally, we conclude with the theoretical, methodological, and empirical contributions the study makes to YouTube research and our understanding of how users intervene in platform governance. As research into platform governance seeks to understand the layered and overlapping perspectives of platform stakeholders, our descriptive account of these practices offers a productive starting point for understanding how platform governance disputes come to be and how systems might be shaped or rebuilt to better serve the needs of competing stakeholders.

Literature review

Accounting for algorithmic governance

The power of platforms is, to no small degree, attributable to automation. As Rieder and Hofmann (2020) succinctly state, “The combination of infrastructural capture and algorithmic matching results in forms of socio-technical ordering that make platforms particularly powerful” (p. 2). While algorithmic matching can take many forms depending on the type of platform, for social media, moderation is an “essential, constitutional, definitional” function (Gillespie, 2018: 21). Yet, moderation is more than the mere removal of content. It involves the full array of decisions and decision-making systems around the removal, reduction, qualification, and promotion of people and content—in other words, who or what gets seen. To manage massive flows of content, platforms employ an array of algorithms, resulting in a system where there is “a form of social ordering that relies on coordination between actors, is based on rules and incorporates particular complex computer-based epistemic procedures” (Katzenbach and Ulbricht, 2019). Such management is successful, or at the very least impactful, with recommendation systems delivering most of the content that users encounter on social media platforms, especially video platforms like YouTube (Solsman, 2018).

Despite the ubiquity and centrality of these systems, technical complexity and commercial interests combine to make accountability difficult. Accountability refers to the extent to which decision-makers are expected to justify their choices to those affected by these choices, to be held answerable for their actions, and to be held responsible for their failures and wrongdoings (Perel and Elkin-Koren, 2016: 481). We disambiguate the definition into two parts, distinguishing between weaker and stronger forms of accountability. Weak accountability entails providing an account of decision-making, while strong accountability also assigns responsibility for said decisions, moving from the descriptive task of providing an account to the political task of holding accountable. The former is primarily epistemic, concerned with the conditions of knowledge, while the latter requires action and more explicitly grapples with power relationships. Proponents of accountability typically refer to the stronger sense of the term while targets of accountability often angle toward the weaker sense as a way to delimit responsibility, a common theme in platform governance (Scharlach et al., 2023).

The complex relationships between human and machinic actors surrounding automated systems make it difficult to know who or what is responsible for any particular decision. From the user perspective, it is unclear when automation is at work, if humans are involved, and what reasons guide decision-making (Perel and Elkin-Koren, 2016). These challenges are compounded by choices platforms make to obfuscate particular decisions (Gorwa et al., 2020), as well as the technical complexity of these systems, which are dynamic and respond to how users interact with them (Rieder and Hofmann, 2020). Platform automation also represents a fundamental shift in what governance means, collapsing the distinction between adjudication and enforcement (Perel and Elkin-Koren, 2016). This undermines due process because there are few opportunities to understand and contest decisions. Even when platforms offer some form of appeal, the combination of unintuitive reporting systems and a lack of trust in their efficacy results in low rates of uptake (Vaccaro et al., 2020). Furthermore, the absence of information about content removal downplays the value tradeoffs involved (Elkin-Koren, 2020) and depoliticizes the process of moderation (Gorwa et al., 2020).

In the face of these challenges, there have been many proposals to promote accountability. These include calls for transparency (Suzor et al., 2019), algorithm audits (Sandvig et al., 2014: 16), and more dramatic regulatory solutions such as adversarial procedures for evaluating and contesting content moderation decisions (Elkin-Koren, 2020). Yet, transparency initiatives may not produce accountability without a critical audience capable of understanding such reports (Kemper and Kolkman, 2019). Platform observability, proposed by Rieder and Hofmann (2020) and predicated on continuous monitoring, responds to the information asymmetry between platforms and users and offers a promising way forward. However, Rieder and Hofmann largely overlook the potential role of creators and other users in this process, focusing on more institutionalized actors like researchers, regulators, and civil society organizations. This is an area of significant untapped potential, as emergent literature on user-driven engagements with platform governance makes clear.

User-driven accountability initiatives

User experiences of platform governance offer valuable sources of insight into the operation of such systems. As those on “the receiving end of governance,” users can help reveal power dynamics and inequalities (Zeng and Kaye, 2022: 84). Focusing on how users orient themselves to and talk about the algorithm can help us understand how these systems work and, even more, “how users believe it should work” (Gillespie, 2017: 75). Given the challenge of speaking about universal experiences, researchers have tended to focus on theoretically interesting subsets of users who, for various reasons, are disproportionately affected by platform governance decisions. This includes people engaged in overtly political speech at both ends of the political spectrum (Haimson et al., 2021; Riedl et al., 2023). Demographic factors are also associated with disproportionate exposure to harm, with women, non-White people, and members of the LGBTQ community receiving particular attention in the academic literature (e.g. Haimson et al., 2021; Southerton et al., 2021). Users whose livelihoods rely on social media are also more likely to be concerned with platform governance decisions, especially creators more vulnerable to moderation for identity-based factors, including the proximity to sex work (Leybold and Nadegger, 2023; Southerton et al., 2021).

Yet, creators are not passive subjects, simple canaries in the coal mine highlighting the stakes of platform governance for those who bother to pay attention, although they are also that (cf. Pinchevski, 2022). They actively respond to platform governance with strategies of optimization, which focus on identifying individual or collective strategies of success on a platform, and activist strategies, which are concerned with changing the conditions of operation on a platform. “Cultural optimization” provides an overarching category for thinking about how creators of cultural goods orient their work for distribution on digital platforms (Morris, 2020). There is also a professionalized para-industry that helps creators navigate the precarity of platforms, composed of intermediaries like self-styled algorithmic experts (MacDonald, 2021), multichannel networks (Siciliano, 2022), and creator talent agencies (Bishop, 2019).

While optimization relies on accounts of how platforms work, activist strategies are concerned with the stronger notion of accountability. Some activist strategies draw on tactics from labor organizing (e.g. Bucher et al., 2021), and there are promising directions for research that have not yet coalesced around a shared vocabulary, including work on algorithmic counter-publics (DeVos et al., 2022), user-driven or everyday algorithmic auditing (Eslami et al., 2019), and algorithmic resistance (Velkova and Kaun, 2021). What this work has in common is concern with how users understand and respond to algorithmic systems, including mobilizing for more diverse recommendations (Bishop, 2019; DeVos et al., 2022; Peterson-Salahuddin, 2022) and search results (Velkova and Kaun, 2021), as well as resisting stigma (Leybold and Nadegger, 2023) and challenging the individualism promoted by competitive platform environments (Cotter, 2022; Hallinan and Brubaker, 2021). This work makes clear that users are relevant stakeholders and actors in platform governance (Reynolds and Hallinan, 2021). Opening up this approach, seeing how human and machine overlap in the experience of platform accountability, requires contextualizing the work in specific platform environments. This brings us to our specific focus on YouTube.

YouTube as bellwether

Although promising, many user-driven accountability initiatives are small scale, more theoretical than empirical. For example, Velkova and Kaun (2021) analyze artist Johanna Burai’s project “World White Web,” which employs SEO practices and publicity to intervene in racialized Google image search results. The case highlights the linkage between algorithmic power and media power, with journalists at mainstream publications boosting the visibility of the project and enabling the linking practices that changed Google Image search results. Existing platforms, in the sense of “a place from which to speak and be heard” (Gillespie, 2010: 352), play an essential role in the ability of user-driven accountability initiatives to intervene in the operations of digital platforms. Given the importance of leveraging visibility, YouTube is an essential platform to study due to the established media power held by its creators. The scale and professionalization of creators promise greater ability for such actors to draw attention to “power struggles over accountability, morality, and authority taking place on social media platforms” (Lewis and Christin, 2022: 1634).

Beyond the size of the platform, there are a number of other factors that place it at the forefront of user-driven accountability initiatives. First and foremost is the YouTube Partner Program. Launched in 2007, this revenue-sharing agreement allows eligible creators to access a portion of the ad revenue generated by their content (Caplan and Gillespie, 2020), becoming a major driver of professionalization among creators (Siciliano, 2022). Another factor is the Partner Manager program, through which some creators have access to a designated YouTube employee who supports the creator and can help them understand and address platform governance decisions, making the human element of governance more obvious than on comparable platforms. Relatedly, YouTube was one of the first platforms to implement an appeals process, allowing “users to appeal strikes for community guidelines violations as early as 2010” (Díaz and Hecht-Felella, 2021: 18). Finally, the long-format video emphasis of YouTube makes discourse an essential element of what creators already do and can facilitate more in-depth public-facing accountability initiatives.

Other creator-driven video platforms like Twitch and TikTok have taken up accountability initiatives in modified ways. For example, TikTok allows users to appeal content removals, account bans, and demonetization decisions, and also uses a “strikes” system for violations of content and community guidelines. Twitch operates a partner system that, much like YouTube, only grants creators of a certain size access to perks like having a dedicated human Partner Manager to contact in case of grievances or disputes. These similarities in company operations and policy choices emerge in spite of the fact that neither platform focuses on long-format video, with TikTok instead emphasizing short-form content and Twitch operating almost exclusively as a livestreaming video site. That other video-sharing platforms have modeled YouTube’s innovations despite these differences in content format and market focus demonstrates YouTube’s role as an industry leader in creating communicative avenues between platform and creators, however limited (Díaz and Hecht-Felella, 2021).

We see the significance of these structural factors evinced in research addressing controversies on YouTube like copyright enforcement, where creators “post videos sharing their experiences” (Kaye and Gray, 2021: 1). Other studies have looked at creator responses to the changing conditions of the YouTube Partner Program, especially around the “adpocalypse” and the shift to stricter eligibility requirements for monetization (Caplan and Gillespie, 2020). YouTubers also engage in many of the strategies of user accountability discussed in the previous section, including a user-driven audit of demonetization keywords that revealed several terms related to LGBTQ identities (Kingsley et al., 2022; see also Southerton et al., 2021), algorithmic gossip about racial discrimination (Bishop, 2019), work on the platform’s permissiveness of predatory behavior (Berge, 2023; Tarvin and Stanfill, 2022), celebrity scandals (Lewis and Christin, 2022), and community responses to perceived political radicalization through algorithmic recommendations (Cotter, 2022).

While this work speaks to a vibrant culture of engagement with platform governance, studies tend to focus on specific issues such as copyright enforcement (Kaye and Gray, 2021) or specific communities such as beauty vloggers (Bishop, 2019) or BreadTube (Cotter, 2022). This makes it difficult to determine continuities between issues and communities. There has not yet been any broad investigation into user-driven accountability practices on the platform. Taking this work as a springboard, we thus ask,

RQ1: What forms do calls for accountability on YouTube take?

RQ2: What topics or concerns do these calls address?

RQ3: What actors do YouTubers target when calling for accountability?

Methods

To answer these questions, we sought out videos featuring discussions of platform accountability and employed multiple sampling strategies to ensure that our data set featured diverse topics, concerns, and creators. We started by creating video networks through YouTube Data Tools (Rieder, 2015), using three videos auditing the demonetization algorithm from Nerd City, Andrew Platt, and Sealow as seeds. From these results, we selected three additional videos at random and created another round of video networks, resulting in a total of 94 videos. Next, we conducted 21 keyword searches using the “video list” function of YouTube Data Tools, drawing upon terminology featured in the titles of the previous videos about breaking, hacking, or gaming the YouTube algorithm, as well as concerns from the literature including censorship, racism, sexism, and anti-LGBTQ discrimination. For each search, we gathered 50 recommended videos, sorted by YouTube’s perception of relevance to the query, resulting in the addition of 1050 videos (see Supplemental Appendix 1 for a full list of search queries). Finally, we manually added 185 videos from a combination of recommendations on videos from our searches, algorithmic recommendations pushed to our YouTube home pages, references made to other videos in our sample, and additional videos from creators already in our sample.
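The size of the initial collection follows directly from the three sampling stages described above. A minimal tally, with variable names that are illustrative and not part of YouTube Data Tools, might look like this:

```python
# Tally of the three sampling stages described in the text.
# Variable names are illustrative; the counts come from the paragraph above.
network_videos = 94        # videos from the two rounds of video networks
keyword_searches = 21      # queries run through the "video list" function
videos_per_search = 50     # results gathered for each query
manual_videos = 185        # videos added manually

keyword_videos = keyword_searches * videos_per_search
initial_collection = network_videos + keyword_videos + manual_videos

print(keyword_videos)      # 1050
print(initial_collection)  # 1329
```

The total counts videos before any cleaning, so duplicates across the three stages are still included at this point.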

From this collection of 1329 videos, we eliminated duplicates and any videos that were not primarily in English. We then removed videos that were not about the YouTube algorithm or platform operations, 3 videos from major media outlets, videos from academics not made specifically for YouTube, and videos from channels whose primary purpose was to sell professional social media branding and content optimization courses or services. 4 It was quickly evident that certain queries were less successful than others (see Supplemental Appendix 1). “Algorithm audit,” for example, primarily returned talks from academic conferences, indicating that despite the term’s popularity among academics and policymakers, it has not been taken up by creators themselves. Likewise, “channel audit” produced a mix of financial audits and First Amendment audits, 5 while “youtube SEO audit” resulted in exclusively professional SEO advice.

After cleaning the data, we had a set of 429 videos for coding, including 46 videos from the video networks sample, 198 videos from the keyword searches, and 185 manually added videos. At this point, we began to develop the codebook following the principles of abductive analysis, where researchers treat existing theory “as sensitizing notions that inform research but do not determine the scope of perceivable findings” (Timmermans and Tavory, 2012). We independently analyzed 10 videos, noting themes and concerns, and then met to compare notes. We also compared our observations with the coding schemas of related research about attribution of responsibility (Haimson et al., 2021), stance toward the platform (Eslami et al., 2019; Kaye and Gray, 2021), and themes in demonetization discourse (Kingsley et al., 2022). Synthesizing this information, we developed a codebook analyzing the format of the video, stance toward YouTube, targets of accountability, and topics of concern (see Supplemental Appendix 2 for full codebook). To capture a more detailed picture of accountability practices, we coded the targets of accountability and topics of concern non-exclusively. Employing this codebook, we randomized our list of selected videos and one author began coding from the top of the list while the other worked from the bottom. Engaging in constant comparison, we coded until both coders agreed that theoretical saturation had been reached at 250 videos, checking through titles in the remaining sample to verify no unique topics had been missed.
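The division of coding labor described above can be sketched in a few lines. This is an illustration under stated assumptions, not the procedure as actually run: the function name `split_coding_order` is hypothetical, the two coders are assumed to work at the same pace, and theoretical saturation (a qualitative judgment) is stood in for by a fixed stopping count.

```python
import random

def split_coding_order(video_ids, stop_at, seed=0):
    """Shuffle the list, then hand videos to coder A from the top and
    coder B from the bottom until `stop_at` videos have been assigned.

    Hypothetical helper: equal pacing and a fixed stopping point are
    simplifying assumptions, not details reported in the text.
    """
    order = list(video_ids)
    random.Random(seed).shuffle(order)
    top, bottom = 0, len(order) - 1
    coder_a, coder_b = [], []
    while len(coder_a) + len(coder_b) < stop_at and top <= bottom:
        coder_a.append(order[top])
        top += 1
        if len(coder_a) + len(coder_b) < stop_at and top <= bottom:
            coder_b.append(order[bottom])
            bottom -= 1
    uncoded = order[top:bottom + 1]  # middle of the list, checked by title only
    return coder_a, coder_b, uncoded

cleaned_sample = 46 + 198 + 185      # video networks + keyword searches + manual
coder_a, coder_b, uncoded = split_coding_order(range(cleaned_sample), stop_at=250)
print(cleaned_sample, len(coder_a), len(coder_b), len(uncoded))  # 429 125 125 179
```

Working inward from both ends of a randomized list means neither coder's subset is tied to a sampling stage, and the uncoded middle remains a random slice of the cleaned sample.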

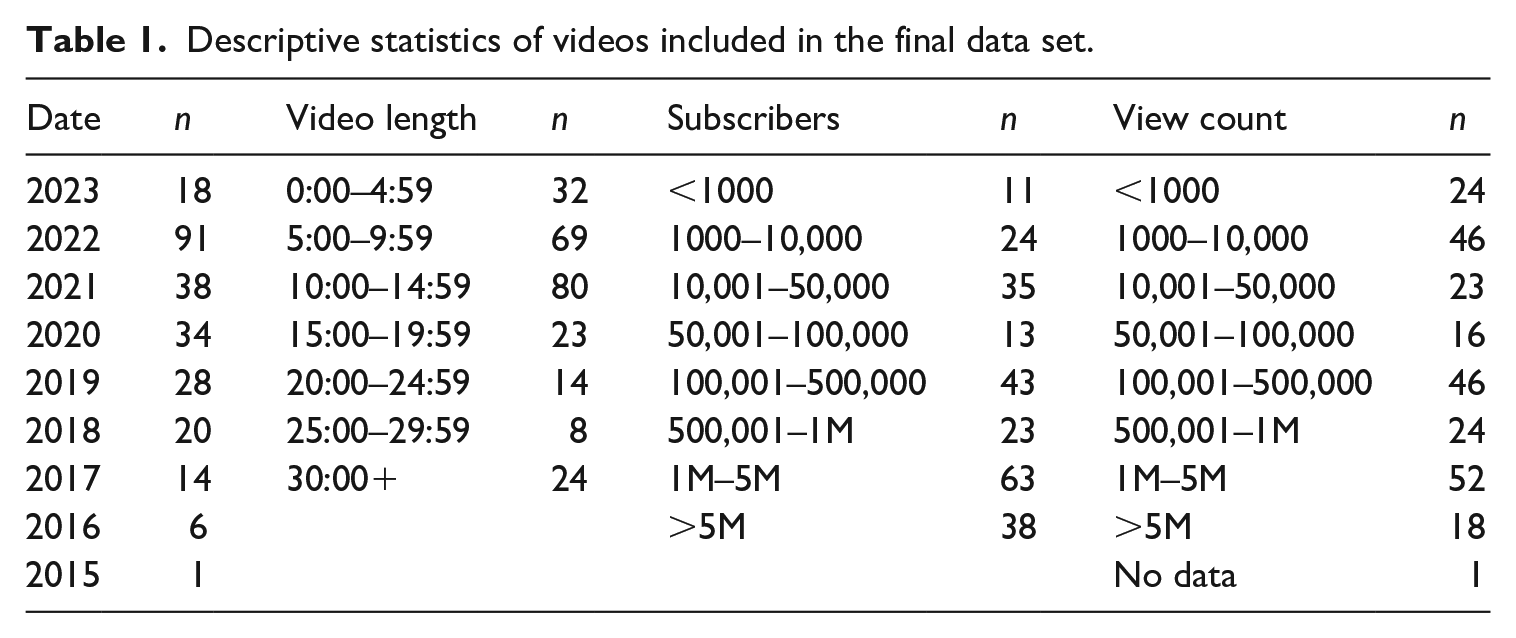

An overview of our final data set is available in Table 1, which includes 172 unique channels and videos from 2015 to 2023. In terms of channel size, we have a diverse spread ranging from fewer than 100 up to tens of millions of subscribers. However, the sample is top-heavy, with nearly half of the videos coming from channels with more than 500,000 subscribers, 6 speaking to the importance of platform accountability concerns even, and especially, among highly successful YouTube creators. While we did not formally code the different types of channels, our analysis of the videos revealed that our sample includes major content areas like gaming, animation, politics, and lifestyle, as well as more niche areas such as books, guns, swords, and model boats. The diversity of topics provides a rich source of data to begin to map platform accountability practices on YouTube, but it is certainly partial. Beyond the exclusive focus on English-language videos, there are surprising topical absences. For example, beauty creators are not represented in our sample, despite our inclusion of keyword searches related to sexism and racism as relevant issues established in the literature (Bishop, 2019), 7 a limitation we return to later in the article.

Table 1. Descriptive statistics of videos included in the final data set.

Findings

What does accountability on YouTube look like? Like a lot of content on YouTube, it often looks like a person, sitting at a desk, directly talking to the camera. Vlogs were the most popular format, representing 66% of the videos in our sample (see Figure 1). Scripted content, including video essays and animation, was a distant second at 20%, followed by conversational videos such as podcasts and talk shows (7.2%). Fourteen videos in our sample (5.6%) were experiments meant to test the YouTube algorithm. The most popular experimental format from our data set exploited a (seemingly now patched) bug in YouTube’s watch time metric. Creators made videos that could only be understood by lowering the playback speed to 0.25x so that the platform would regard a 1-minute video as receiving 4 minutes of watch time, inflating the watch time credited for that video to as much as 400% of its runtime.
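The arithmetic behind the watch time experiments is simple to reproduce. The sketch below assumes, as the creators' experiments presumed, that the platform credited wall-clock viewing time and not video runtime; the function name is illustrative.

```python
def credited_watch_minutes(video_minutes, playback_speed):
    """Wall-clock minutes needed to play a video at a given speed.

    Assumes watch time is credited in wall-clock time, which is what
    the experiments described in the text relied on.
    """
    return video_minutes / playback_speed

runtime = 1.0                                   # a 1-minute video
credited = credited_watch_minutes(runtime, 0.25)  # played at quarter speed
print(credited)                                 # 4.0
print(credited / runtime * 100)                 # 400.0
```

At quarter speed each view is credited with four times the runtime, which is where the 400% figure comes from.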

Figure 1. Format and stance of accountability videos.

As the watch time experiments attest, accountability videos need not be antagonistic toward the platform. Indeed, creators in our data set adopted notably varied stances toward the platform (see Figure 1). While a negative stance was most prominent, we were surprised that only 33.2% of the videos expressed an overtly negative stance given our inclusion of strong search terms like “racist,” “homophobic,” and “censorship.” Instead, we found that many critical videos balanced objections to the platform with acknowledgements of the difficulty of content moderation, positive steps that the platform had taken, or an expression of personal investment in the YouTube community, resulting in what we coded as a mixed stance (29.2%). For example, YouTuber JiDionPremium offered the following explanation amid a serious discussion of racism on the platform:

I love YouTube from the bottom of my heart bro, like this platform changed my life, this platform changed my life and I owe everything to it, but I’ll be damned if I just sit back here and I don’t address what I see. 8

We also found videos that took a neutral or undetermined stance toward YouTube, reflecting a weaker notion of accountability, including many experiments and videos where creators discussed financial compensation (23.2%). Finally, some creators actively championed the platform (14.4%), defending its policies and characterizing success as meritocratic, similar to the messaging of the “algorithmic experts” studied by MacDonald (2021).
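The four stance categories are mutually exclusive, so their shares should account for the whole sample. A quick check that converts the reported percentages back into video counts (the dictionary keys are shorthand labels, not the codebook's exact wording):

```python
SAMPLE_SIZE = 250

# Reported share of videos per stance, in percent.
stance_share = {"negative": 33.2, "mixed": 29.2, "neutral": 23.2, "positive": 14.4}

# Convert each share to a whole number of videos.
stance_count = {
    stance: round(share * SAMPLE_SIZE / 100)
    for stance, share in stance_share.items()
}

print(stance_count)                 # {'negative': 83, 'mixed': 73, 'neutral': 58, 'positive': 36}
print(sum(stance_count.values()))   # 250
```

The counts sum to the full sample of 250, confirming that every coded video received exactly one stance.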

While algorithmic governance is notoriously “black boxed” (Bishop, 2019), creators make sense of the platformed environment through diverse sources of evidence including platform metrics (45.6%), discussed in greater detail below, as well as news reports (8.8%), external research like academic articles and creator-driven audits (16%), and personal narratives (58.8%). Few videos rivaled the elaborate audit of demonetization on the platform discussed in the introduction (Kingsley et al., 2022), although its findings were amplified through citations from other creators. Instead, most videos engaged with more mundane practices of sensemaking, supporting the broader relevance of “algorithmic gossip” beyond the beauty community (Bishop, 2019). The prevalence of personal narratives may be due, in part, to the fact that being a YouTuber is a fairly esoteric job where creators are the primary experts. It is also likely connected to the popularity of vlogs in our sample, which lend themselves to personal narratives, and a creator-centric model of community (Meisner, 2023), where audiences often tune in because they are interested in the persona of specific creators. Providing personal experiences is thus an easy and sensible way to communicate with this group.

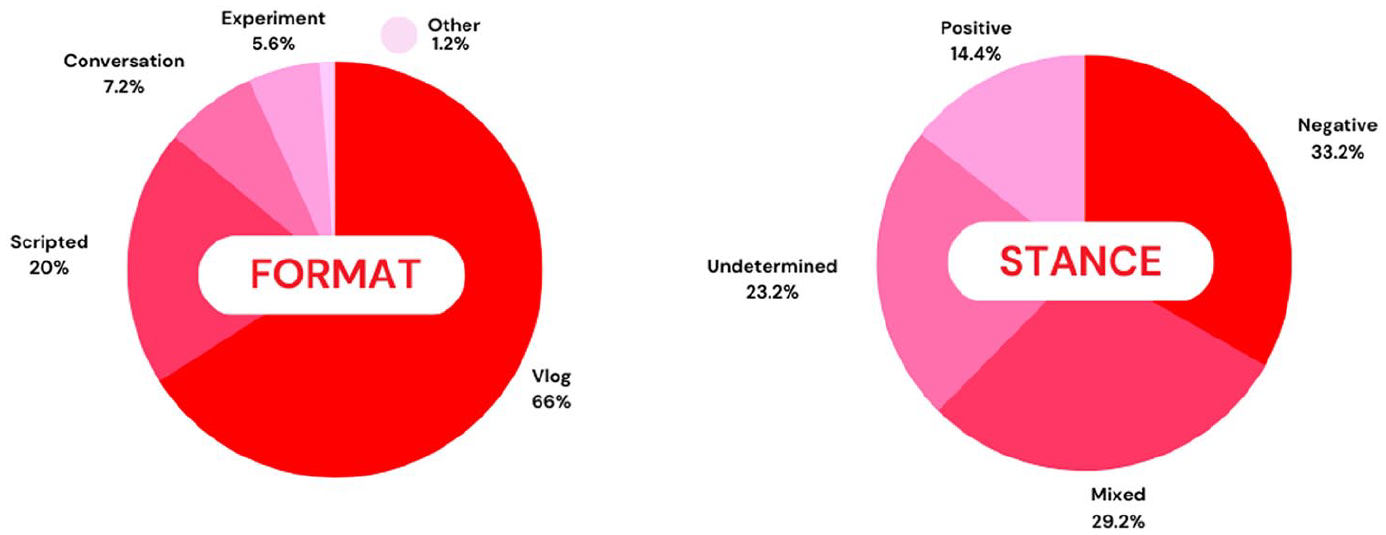

Creators marshaled this evidence toward both weak and strong notions of accountability. As previously discussed, providing an account does not necessarily imply holding someone (or something) accountable and just over 20% of the videos in our sample lacked any targets of accountability. These videos primarily consisted of experiments to “game” YouTube’s algorithms or YouTubers reporting back-end metrics like the advertising rates for different types of content (see Figure 2). Although these videos did not explicitly frame the platform as problematic, they recognized that audiences lack information about the working realities of creators. By providing accounts of their metrics, workflows, and attempts to optimize their content for YouTube, these creators provide a level of transparency that helps viewers (and aspiring creators) understand the experiences and creative decisions of YouTubers.

Figure 2. Screenshot from a video by Shelby Church showing behind-the-scenes advertising rate metrics on the YouTube creator dashboard. 9

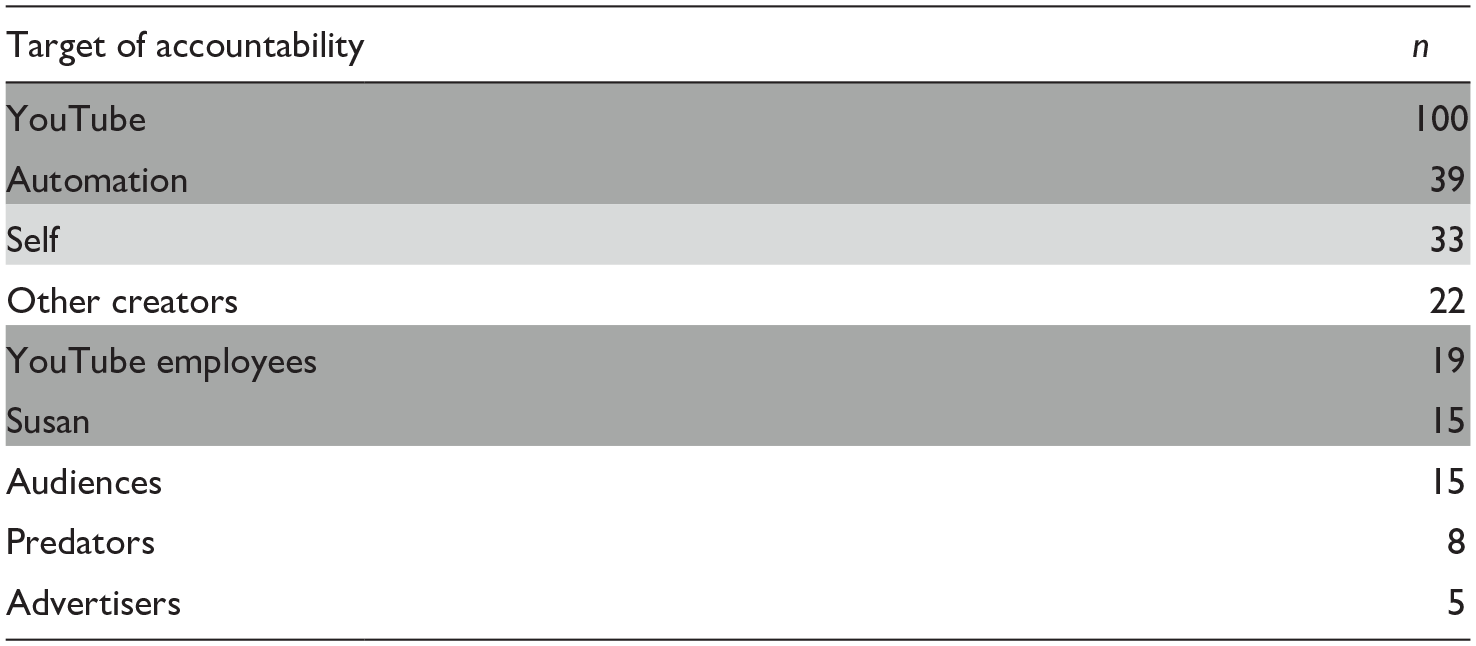

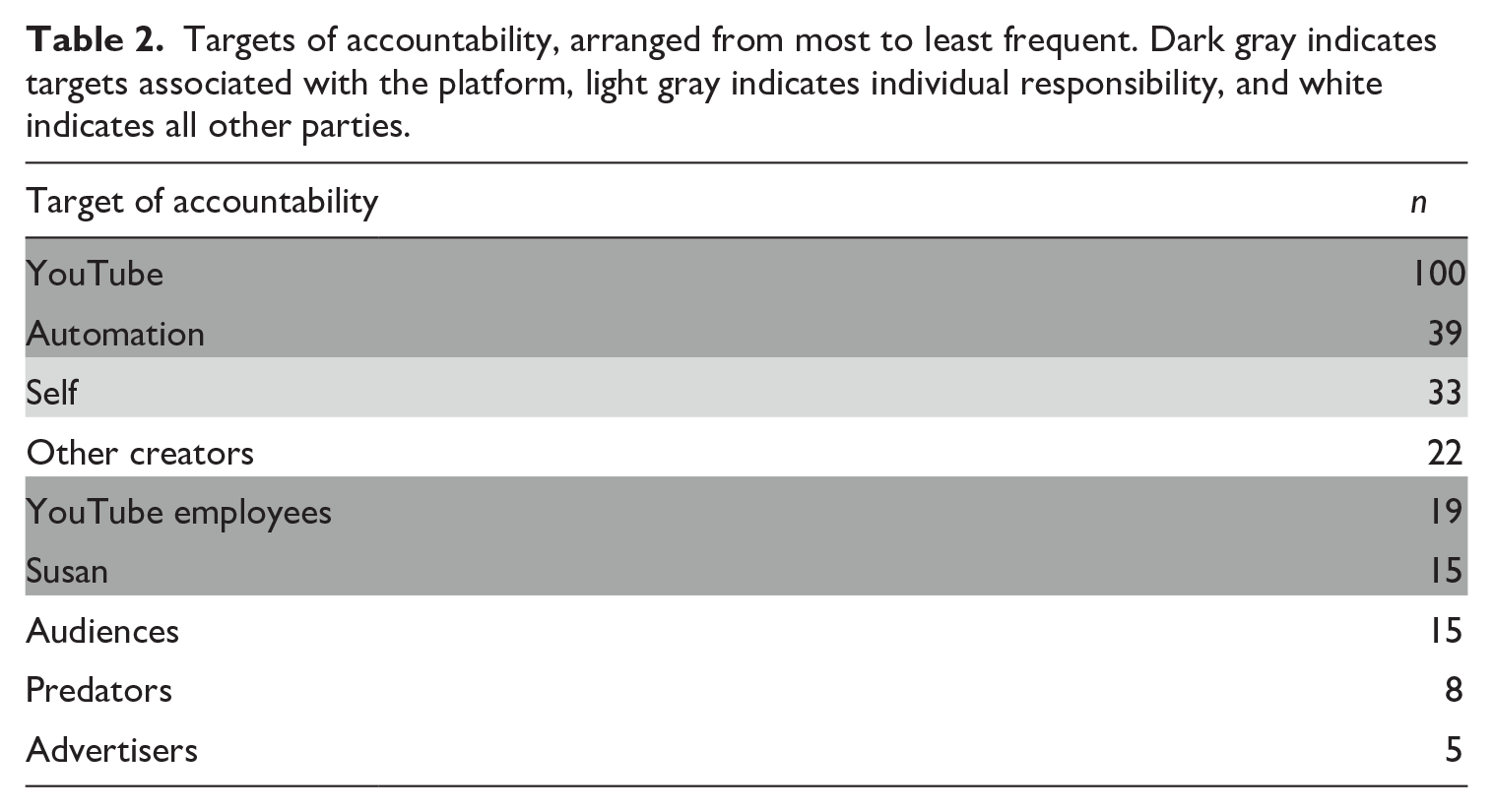

Among the remaining videos (n = 141), the platform was the most popular target of accountability (see Table 2). Most of the time, creators talked about the platform generally, speaking about “YouTube” as a collective entity (100 videos) or “Automation” as a stand-in for all the systems that govern visibility (39). However, some creators singled out specific actors associated with the platform, including “YouTube Employees” involved in content moderation and policy teams (19) and former YouTube CEO Susan Wojcicki, often invoked as simply “Susan” (15). 10 While Wojcicki and other employees of YouTube operate as agents of the platform, we distinguish them in our coding to highlight how YouTubers themselves address them as distinct actors. In many cases, creators singled out Susan Wojcicki for particular ire. Likewise, many YouTubers note that they have spoken directly to a YouTube employee, which seems to produce a qualitatively different experience than calling out generically to “YouTube.” Echoing social media platforms’ top-down emphasis on individual responsibility (Scharlach et al., 2023), the “Self” was the next most frequent target, appearing in videos where YouTubers discussed their own need to create more optimized content or adhere to platform policies (33 videos). While less prominent and coherent as a category, creators also addressed “Other Creators” (22), “Audiences” (15), “Predators” (8), 11 and “Advertisers” (5).

Table 2. Targets of accountability, arranged from most to least frequent. Dark gray indicates targets associated with the platform, light gray indicates individual responsibility, and white indicates all other parties.

What issues prompted YouTubers to make videos about the platform? A common theme, often articulated alongside a weaker notion of accountability, addresses how creators should understand and respond to algorithmic governance on the platform. At the heart of this process is “Explaining metrics” (45.6%), where YouTubers share and explain the meaning of the facts and figures that populate their creator dashboards. Such interpretation often transitioned into a discussion of “Optimization” practices, appearing nearly as often in the data set (44.8%) and filled with strategies for playing the visibility game. “Experimentation” appeared in 28.4% of videos, including videos which themselves were just experiments and videos which reported the results of prior experiments. More critical versions of this theme focused on the stress that tracking these measures places on YouTubers, as well as the psychological effects of poor performance. To this end, “Creator well-being” appears in 6% of our sample.

Algorithmic governance, especially on social media platforms, is not all about the algorithm. As Rieder and Hofmann (2020) note, algorithms are only one component of “a much larger system that includes other various instances of ordering, ranging from data modeling to user-facing interfaces and functions that inform and define what users can see and do” (p. 8). Creators similarly situate discussions of platform governance within a broader suite of policies and practices. Complaints about YouTube’s “Policies,” including the Terms of Service, Community Guidelines, and requirements for monetization, were the most frequent code, appearing in 50.4% of videos. Alongside policy complaints, issues with “Corporate communications,” or rather, the lack of communication from YouTube about policy changes and the application of specific policies, appeared in 29.6% of videos. YouTubers big and small noted the need to turn to Twitter to get YouTube to respond to their concerns (Berge, 2023), a strategy common to creators from other video platforms like Twitch (Meisner, 2023). To make Twitter appeals more effective, some creators asked their fans and fellow creators to “Signal boost” their messages (14.4%). These requests were often paired with frustrations about YouTube’s “Appeal process” (10.8%), including doubts about the involvement of humans. Videos also claimed that YouTube has a “Cultural disconnect” with the norms and values of the platform’s users (12.8%) and discussed specific YouTube “Features” such as the dislike button or community post (18%).

These broader considerations exist alongside focused attention on automated recommendation and moderation systems, reflected in the discussions of bias. YouTubers put forth the idea that the platform discriminates against certain creators (“Creator bias”) based either on demographic characteristics (e.g. Black, LGBTQ) or their popularity (e.g. special treatment for bigger creators). While this theme appeared in only 28.8% of videos, it was the subject of some of the most watched videos in our sample, a few of which also achieved mainstream news coverage. Accusations of “Content bias” also appeared (26.8%), attributing discrimination based on the content of a video. Prominent in this set of videos were creators of “dark” content like true crime channels and pedophile hunters, and channels that demonstrate or discuss weapons (including in a historical or creative context). It also included a smaller number of videos that claimed that YouTube artificially boosts content it favors and has double standards for the subject matter of advertisements. Notably, one video in this set provided evidence that anti-LGBTQ advertisements were being served before videos made by LGBTQ creators.

While we did not code for political stance, it became obvious that left-leaning channels critiqued algorithmic recommendations through the language of “bias,” while right-wing content highlighted similar concerns through the terminology of “censorship” (Riedl et al., 2023). “Censorship” was invoked in 22% of our sample and reflects the idea that YouTube explicitly bans certain types of content or topics. Despite its prominence in academic and journalistic discourse, the concept of rabbit holes, or the idea that YouTube’s recommendation algorithms create “Radicalization,” was only mentioned 5 times (2%), with one video regrettably noting that getting the algorithm to direct new viewers to conspiracy theory content was significantly more difficult than it had been in prior years.
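For readers who want to compare the prevalence of codes in absolute terms, the reported percentages can be converted back into video counts. The following is a minimal sketch, not part of our analysis pipeline: it assumes that every percentage above was computed over the full sample of 250 videos, an assumption consistent with the one count the text states directly (“Radicalization,” 5 videos, 2%). The names `REPORTED_SHARES` and `implied_count` are illustrative only.

```python
# Sample size reported in the article.
SAMPLE_SIZE = 250

# Percentages as reported in the findings, keyed by code label.
# Assumption: each percentage is a share of all 250 videos.
REPORTED_SHARES = {
    "Policies": 50.4,
    "Explaining metrics": 45.6,
    "Optimization": 44.8,
    "Corporate communications": 29.6,
    "Creator bias": 28.8,
    "Experimentation": 28.4,
    "Content bias": 26.8,
    "Censorship": 22.0,
    "Features": 18.0,
    "Signal boost": 14.4,
    "Cultural disconnect": 12.8,
    "Appeal process": 10.8,
    "Creator well-being": 6.0,
    "Radicalization": 2.0,
}


def implied_count(percent, sample_size=SAMPLE_SIZE):
    """Return the number of videos implied by a reported percentage."""
    return round(percent * sample_size / 100)


IMPLIED_COUNTS = {code: implied_count(p) for code, p in REPORTED_SHARES.items()}

if __name__ == "__main__":
    # List codes from most to least frequent.
    for code, n in sorted(IMPLIED_COUNTS.items(), key=lambda kv: -kv[1]):
        print(f"{code:<26}{n:>4} of {SAMPLE_SIZE}")
```

Because a single video can receive several codes, the implied counts are not expected to sum to 250.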

Discussion

User-generated accountability videos set the agenda for how the platform community understands and responds to YouTube’s algorithms. By testing, auditing, and challenging practices of recommendation and moderation, creators simultaneously intervene in the production of particular algorithmic imaginaries (Bucher, 2017) and push YouTube to accept responsibility for its governance decisions. Through publicizing critiques of platform operations, user-generated accountability videos enroll creators and audiences as active stakeholders in platform governance. In addition, they draw the platform’s attention to matters of concern, a necessary function given that even former YouTube CEO Susan Wojcicki has admitted to not fully understanding how the platform’s algorithms work (Gutelle, 2022). YouTubers thus combine strategies from labor activism (Bucher et al., 2021), academic research (Rieder et al., 2018), and investigative journalism (e.g. Larson et al., 2016) to identify discriminatory practices, call for their redress, and respond to the limitations of official, top-down mechanisms of accountability. Although this article is concerned with videos on YouTube about YouTube, the uptake of public-facing accountability initiatives reflects the broader challenges of achieving accountability in an age of automation and platformization. As Michael Power (1997) argues, truth-seeking tests like audits “are needed when accountability can no longer be sustained by informal relations of trust alone but must be formalized, made visible and subject to independent validation” (p. 11).

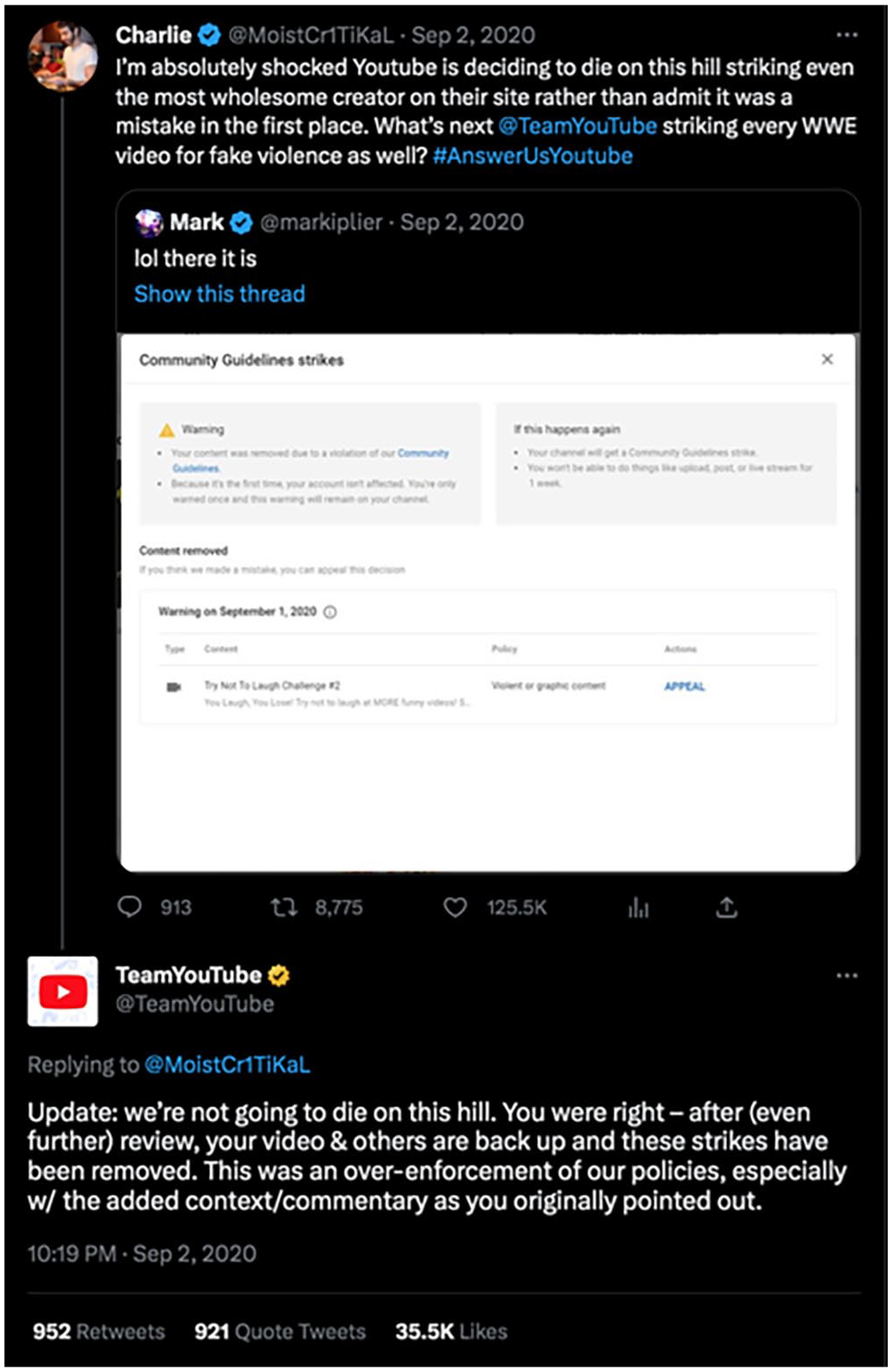

However, the success of user-generated accountability hinges on making sure that the right actors hear the message. Creators find it difficult to get YouTube’s attention, especially, ironically, through its in-built appeals process. For many creators, appeals are a necessary but insufficient first step toward accountability and they expressed serious frustrations with the process (Vaccaro et al., 2020), from the fact that they have only 600 words to make their case to YouTube’s failure to specify what part of a video violates its policies (except for copyright strikes) to the limitation that YouTubers can only appeal a decision once. Obviously, given the nature of our data set, these appeals were often unsuccessful, driving creators to go off-platform for assistance (Berge, 2023). Tweeting at YouTube, especially the @TeamYouTube account, was the primary strategy cited, alongside making YouTube-related hashtags (e.g. #PickASideYouTube, #AnswerUsYouTube). The @TeamYouTube account serves as one of YouTube’s primary support channels and has posted over 2.3 million tweets in one of 10 languages during its 7 years on Twitter. Most questions are answered by links to YouTube Help pages, but prominent disputes generate more extraordinary responses, such as the reply to MoistCr1TiKaL and Markiplier’s fight with the platform over a Community Guidelines strike they received for making reaction videos to a fake road rage video featuring cartoon mascots (see Figure 3).

Figure 3. @TeamYouTube’s response to MoistCr1TiKaL admitting they wrongly enforced their policies. Several years later, YouTube’s automated systems would strike these same videos again.

Creating videos rarely seems to be the first recourse for content creators, in part because diverting from their normal content carries a potential algorithmic penalty for their channels. Larger YouTubers can first try methods like working through their partner manager and resolving issues behind the scenes. Thus, posting accountability videos to YouTube seems to be a kind of last resort for creators for whom no other accountability strategy has worked. When these videos succeed, however, the reach they achieve can be significant enough to push them onto YouTube’s Trending page or into the columns of industry journalists, magnifying their effects (Velkova and Kaun, 2021). Momentum can also be created by the sheer volume of creators speaking on the same topic, reflected in YouTube’s recent changes to their swearing policy, which the platform admitted created a “stricter approach than we intended” (Clark, 2023).

These results demonstrate that the ability to “make some noise,” or what Velkova and Kaun (2021) refer to as “media power,” is unevenly distributed among big and small creators who work to generate publicity at very different scales. Small creators recognize this disparity and, rather than appealing to YouTube directly, often begin their push for accountability by asking larger creators to intercede on their behalf, whether by speaking to partner managers or signal boosting their content. MamaMax epitomized this strategy by tagging big creators on Twitter and asking them to retweet #YouTubePickASide, as well as mentioning them directly in his video. “I want the people I call out in the video to do something because they’re the ones YouTube is going to listen to,” he explained. Anti-vaccine journalist Alison Morrow echoed this point in regard to the need to go to Twitter as a smaller creator, saying, “Then you have to go to Twitter and cause a ruckus if you’re a little person like I am and don’t have the ear of the big cheese.” There is a sense among smaller creators that 20,000 likes on a YouTube video do not move YouTube’s needle the way 20,000 likes on a tweet seem to, as Twitter is a much smaller platform and requires less engagement to make an issue trend.

The importance of signal boosting means that user-generated accountability strategies rely on generating solidarity between creators and audiences on YouTube. Accordingly, in discussing “users” and our analytic of “user-generated accountability,” we intend a broad understanding of platform users that encompasses creators (and their content creation teams, including editors and social media managers); active audience members who engage with content through liking, sharing, commenting, and boosting calls for attention on and off YouTube; and even passive audience members who merely watch or scroll through content. While calls for accountability are often initiated and fronted by content creators, as we focus on in this article, they are not exclusively so. In addition, even when led by creators, public-facing calls for accountability rely on the participation of many different platform users. While calls for accountability can also be initiated by external bodies, including researchers, regulators, legislators, non-governmental organizations (NGOs), and media watchdogs, in focusing on creator-led accountability initiatives, our data set surfaces concerns that are both individual and societal. That is, while many of the videos in our set focus on singular, often atomized situations with discrete resolutions, others focus on ongoing issues that affect the whole of YouTube or the interconnected ecosystem of social media platforms. Thus, the question of who is seeking accountability is multitudinous, ranging from solo creators pursuing resolutions for issues regarding single videos, to large populations of users seeking to rectify platform-wide concerns, to discussions of external regulators attempting to support ways to hold platforms accountable at a societal level.

Yet, societal concerns expressed through user-generated accountability also remain inescapably tied to individual experiences on the platform, resulting in an interpersonalization of politics (Lewis and Christin, 2022). In one video, 25 YouTubers appeared on camera to back the grievances of MamaMax, whose video criticizing child predators had been removed by YouTube. This is community building by both choice and necessity, as most creators expressed that they felt they could not leave YouTube; no other platform offered them the same reach, nor could they easily move their audiences elsewhere. Feeling trapped significantly increased frustrations regarding the platform’s perceived lack of communication with its creators. As MoistCr1TiKaL explained, “It’s very hard to play by their rules when we don’t have a clear understanding of what they are or how they apply to the platform. It is so fucking frustrating that I continue to have to get splintered information from a ton of creators, as well as my own research, as well as my own YouTube contact who also doesn’t have all of the information at hand because internally YouTube doesn’t allow him access to all the information. . .” Many creators echoed these sentiments regarding the lack of transparency about YouTube’s systems, algorithmic and human, and explained that having to repeatedly advocate for themselves contributed to burnout and even led some to consider quitting (Eslami et al., 2019).

The problem with feeling unheard is not limited to creators’ attempts to lobby the platform. Many YouTubers feel similar frustrations toward regulatory and legislative bodies. For example, reflecting on his attempt to testify in front of the Canadian parliament against Bill C-11, an amendment to Canada’s Broadcasting Act meant to force online platforms to join their older media counterparts in being required to feature a certain amount of Canadian-produced content, YouTuber J.J. McCullough said, “We tried to speak out but were ignored” (Mandel, 2023). Speaking to Maclean’s reporter Nicholas Seles, McCullough explained:

“The thing that really struck me from the parliamentary hearings . . . was that when witnesses are testifying, you would think they’re the center of attention. But when you’re there in-person, almost none of the politicians seem to be listening at all. Everybody is just on their phone. It was incredibly upsetting and disrespectful. It felt like whistling in the wind.” (Seles, 2022)

McCullough’s remarks, echoing much of the rhetoric in our sample about the difficulties of getting YouTube to listen to concerns, express a familiar sentiment among content creators that although their livelihoods revolve around making noise that other people want to hear, people in power rarely seem to listen.

Whether speaking to the platform, to external regulators, or to other creators and audiences, the strategy of making noise leverages publicity to respond to feelings of powerlessness. In their analysis of platform drama on YouTube, Lewis and Christin observed that conflict “frequently takes place between a range of individuals operating without institutional backing. For content creators, this leads to a sense of precarity and powerlessness, even as they build large audiences” (Lewis and Christin, 2022: 1649). Going public with grievances can force a response from YouTube in return, countering the lack of institutional backing. As a platform, YouTube is uniquely suited to strategies of user-generated accountability, as the entire structure of the site revolves around generating mass publicity and visibility. At the same time, YouTube’s problems are problems at scale due to its 2.6 billion monthly users. An issue that affects just 1% of the platform may not draw major corporate attention even as it plagues 26 million people. YouTube’s failure to respond to problems is, accordingly, rarely a question of incompetence from platform leadership and far more often a question of prioritizing governance needs in terms of problem scale. Attention to a problem is not just about notifying the platform that it exists, but incentivizing the dedication of resources toward rectifying it. Thus, publicizing problems with the platform in a way that draws attention from audiences, news media, and other creators represents one of the most important ways YouTube’s creators participate in platform governance.
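The arithmetic of scale behind this argument can be made concrete with a short sketch. The user figure and the 1% case come from the text above; the smaller shares are hypothetical values added purely for illustration, and the function name `affected_users` is our own.

```python
# Monthly user figure cited in the article (YouTube, n.d.).
MONTHLY_USERS = 2_600_000_000


def affected_users(share_in_basis_points, total_users=MONTHLY_USERS):
    """Return how many users an issue affects; 100 basis points equal 1%."""
    # Integer arithmetic avoids floating-point rounding on large counts.
    return total_users * share_in_basis_points // 10_000


if __name__ == "__main__":
    # The 1% case is from the text; 0.1% and 0.01% are hypothetical.
    for basis_points in (100, 10, 1):
        share = basis_points / 100
        print(f"{share:.2f}% of users: {affected_users(basis_points):,}")
```

Even a problem touching a hundredth of a percent of users would, under these assumptions, still reach hundreds of thousands of people, which helps explain why notification alone rarely secures the platform’s attention.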

Finally, the fact that most YouTube systems and processes require interaction with automation before encountering a human—if, indeed, creators can ever reach a human representative—escalates feelings of powerlessness. Situations that may feel easily explainable to another person must instead be formatted and channeled into machine-driven systems, rendered in ways that make them legible to algorithms at the cost of time, labor, and clarity. YouTube instructs creators to rely on their creativity, personality, and the development of human connection with their audiences in order to succeed on the platform, but forces them to craft grievances, appeals, and suggestions for automated systems that diminish the role of these skills. It is then unsurprising that creators turn to methods of public appeal that instead rely on the same competencies that make them successful on YouTube. YouTube’s platform governance turns into a system where the company attempts to triage the massive number of complaints, appeals, and content moderation actions using automation while creators, frustrated by these same systems, try to find ways to bypass them and reinsert humans into governance processes.

Conclusion

Through an analysis of 250 YouTube videos, we identified typical accountability strategies on the platform. Creators primarily upload vlogs that draw upon their personal experience as evidence, alongside comparisons to the experiences of other creators. Most YouTubers were critical of the platform, even as they often acknowledged positive aspects, and YouTube itself was the actor most often targeted for accountability. Finally, we found that creators were primarily concerned with YouTube’s policies, automated enforcement systems, poor communication practices, and feelings of discrimination against certain creators or content types. Overall, YouTubers sought to address these problems by making noise, or generating publicity to draw YouTube’s attention to particular problems. The need to make noise is especially impacted by the massive scale of YouTube’s governance operations. This explains, in part, why creators turn to a much smaller platform, Twitter, to get YouTube’s attention. Scale also makes it easier for big creators to drum up trends, hashtags, and fan support than smaller creators. Regardless of creator size, explicit requests for “signal boosting” from viewers and other creators were common. The combination of these actions creates a sense of community between YouTubers, and between creators and their audiences, in a joint effort to achieve accountability. Many attempts to hold YouTube accountable take on a kind of platform versus platform format, where creators maintain that you need a big audience of your own or an impromptu audience gathered on Twitter to generate enough attention to get in touch with YouTube, let alone influence the platform’s decisions.

Our study has several limitations. First, we examined only English-language videos, but the YouTube communities in many languages, including Spanish, Portuguese, Arabic, and Hindi, are large, very active, and face additional challenges generating publicity and drawing YouTube’s attention. Thus, a multilingual study of user-generated accountability videos promises to add rich texture to how different language communities on YouTube strategize publicity and accountability. Second, as mentioned in our findings, some notably popular and well-studied interest communities on YouTube are not represented in our sample, such as beauty influencers (Bishop, 2019). It is not clear if this is due to our keyword search choices, the timing of our sampling, the limitations of our sample size, or something unique about how these communities negotiate accountability with YouTube. Third, while we did not explicitly code for gender, it was evident that, at least among the biggest creators in our sample, self-identified men were dominant. This is unsurprising, as gender gaps on YouTube across national contexts and content verticals are well-documented (e.g. Wegener et al., 2020). Future research could focus on user-generated accountability strategies by gender, as we suspect there may be meaningful communicative differences. Finally, our study focuses only on the calls for accountability that creators make directly to YouTube and thus does not reflect the full landscape of accountability practices aimed at YouTube (see Cunningham and Craig, 2019). Research focusing on the roles of policymakers, regulators, and NGOs in holding YouTube to account, and on how creators relate to these institutions, would provide a fuller picture of the accountability pressures on YouTube and similar platforms.

This project makes several contributions. First, on a theoretical level, we develop the concept of user-generated accountability, which draws attention to how the public can engage with the politics of platforms beyond individualized adjustments or the pursuit of commercial optimization (Morris, 2020; Siciliano, 2022). As automated decision-making continues to expand into new areas of life, our theoretical outlining of user-generated accountability offers a tool for participating in the inevitable algorithmic disputes of the future. As YouTube has long served as an industry bellwether in establishing accountability mechanisms and creator programs, especially for video-driven platforms, and as creators increasingly expand their footprint across multiple platforms, the tactics they use to engage with platform failings also need to become increasingly mobile. Second, on a methodological level, we demonstrate the importance of considering content creators as political actors that promote both particular algorithmic imaginaries and techniques of algorithmic literacy (Reynolds and Hallinan, 2021). We elevate the organic knowledge base of the platform and examine how creators audit and publicize the secretive and proprietary systems that set the conditions of digital culture. Third, on an empirical level, we identify popular concerns around algorithmic governance on YouTube, including the demonetization of LGBTQ-related content, the lack of human involvement in policy enforcement and appeals, and the difficulty of holding automated systems to account. We demonstrate the bottom-up ways for testing algorithms and setting platform agendas through publicizing problems on and off YouTube. 
YouTubers have been on the leading edge of forcing changes to, or drawing media attention to, platform algorithms and their discriminatory effects, as well as the subsequent need, as YouTube conceded, for “human judgment” to make “contextualized decisions on content” (Wojcicki, 2017) alongside the operations of algorithms. Our study thus affirms the need for human–machine collaboration in the automation of platform governance, and sheds light on the strategies that creators use to assert human accountability while living and working amid automated systems.

Supplemental Material

Supplemental material, sj-pdf-1-nms-10.1177_14614448241251791 for User-generated accountability: Public participation in algorithmic governance on YouTube by CJ Reynolds and Blake Hallinan in New Media & Society

Footnotes

Acknowledgements

The authors would like to thank special issue editors Christian Pentzold and Christian Katzenbach for their expertise and guidance on curating this special issue, and our co-panelists on the “Algorithmic Imaginations” panel at the Association for Internet Researchers, Ted Striphas, Mikayla Brown, and Hector Postigo, for thinking with us on an early version of this work. Our thanks as well to anonymous reviewers of AoIR and New Media & Society for their helpful comments and advice.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
