Abstract

Australia’s News Media Bargaining Code requires Google and Facebook to negotiate payments with news publishers for news content appearing on the platforms. Facebook and Google lobbied against the code through a highly visible public-facing campaign which included a series of blogs, videos and pop-up communications across their interfaces including News Feeds, Google Search and Home Page, and YouTube, and culminated in Facebook banning Australian users from accessing Australian news and related content. This article presents the findings of a detailed study of platform discourse in response to the News Media Bargaining Code, using critical discourse analysis, and drawing on theoretical frameworks from Althusser, Foucault and Chun. It also investigates the role of the user interface in platform power, particularly how platform users are interpellated by digital platforms. The findings suggest Facebook and Google’s discursive strategies were deployed to protect, strengthen and enforce platform sovereignty. The case study offers lessons for platform regulation globally in understanding how platforms respond to legislation.

Introduction

Australia’s Treasury Laws Amendment (News Media and Digital Platforms Mandatory Bargaining Code) Act 2021 (NMBC) requires digital platforms to negotiate payments with news publishers for content appearing on the platforms. It is part of what has been called the regulatory turn of governments globally towards digital platforms (Bossio et al., 2022; Flew et al., 2021). The NMBC was introduced as a key recommendation of the Digital Platforms Inquiry (DPI) conducted by the Australian Competition and Consumer Commission (ACCC) with the stated goal of addressing the power imbalance between digital platforms and news publishers. Facebook and Google lobbied against the code, both behind closed doors and through a highly visible public-facing campaign which included blogs, videos and pop-up communications across their interfaces including News Feeds, Google Search and Home Page, and YouTube. The campaign culminated in Facebook banning Australian users from accessing Australian news and related content on the platform on 17 February 2021.

This article offers an in-depth analysis of the platforms’ public responses to the code at a textual level. I use critical discourse analysis (CDA) to examine the language, design, placement and use of proprietary user interfaces in platforms’ campaign efforts. Drawing on Althusser (2020 [1972]), Chun (2011, 2016) and Foucault (1972, 1980), this research also investigates the role of the user interface in platform power, in particular, how platform users are interpellated by digital platforms. The findings suggest discursive strategies and proprietary interfaces were deployed as a means to protect, strengthen and enforce ‘platform sovereignty’ (Bratton, 2015). Platform sovereignty sees platforms seek to operate outside state-imposed regulatory frameworks, create their own rules and laws, and indeed, encroach into state sovereignty. Facebook’s incursion into government health communications during the Covid-19 pandemic, as discussed below, is one example among many of how platforms used NMBC negotiations to demonstrate their sovereignty.

The News Media Bargaining Code

In July 2019, the ACCC released findings of its DPI into the power of digital platforms, with a focus on Facebook and Google. It made 23 recommendations in relation to competition and market share, consumer protection, data and privacy, misinformation, news consumption habits and the impact of declining advertising revenue for traditional media. Significantly, the ACCC found that Google was an unavoidable trading partner for news media outlets, and that it was necessary for news media outlets to be on Facebook in order to reach audiences (Australian Competition and Consumer Commission, 2019). A key recommendation was that each platform establish its own code of conduct governing its commercial relationship with news media businesses. However, unsatisfied with Facebook and Google’s progress on their self-regulated codes, in April 2020 the Government directed the ACCC to develop a mandatory code of conduct, which would require Facebook and Google to pay news media businesses for the news content shared on the platforms, notify media companies of upcoming algorithm changes that are relevant to the ranking or distribution of news content and report on what data they collect about users’ engagement with news content (Frydenberg and Fletcher, 2020).

In July 2020, the ACCC released the draft NMBC, which passed into law in February 2021 after extensive public and private lobbying from Facebook and Google resulted in a watered-down code. Several analyses of NMBC have described it as a catalyst for the global regulatory turn, wherein governments are seeking to rein in digital platforms (Bossio et al., 2022; Flew, 2021; Flew et al., 2021). Picard and Park (2021) note new inquiries and/or regulations regarding digital platform power and monopoly have arisen in 47 US states, the European Union, South Korea and Japan following the NMBC. Similarly, the literature notes the learnings other jurisdictions can draw from the implementation of the NMBC legislation, such as the prospect of creating ‘accidental policy’ as an unintended consequence of other legislative agendas (Picard and Park, 2021); the difficulties of negotiating with major platforms that may lead to compromised legislation (Lee and Molitorisz, 2021); and the need for regulators to have enforcement powers (Wilding, 2021). With global attention on platform power, academics and journalists have argued that Facebook and Google’s primary objective in their responses to the NMBC was to avoid an international precedent for how platforms could be regulated (Barnet, 2020, 2021; Bossio, 2021; Bruns and Angus, 2021; Leaver, 2021; Margetts et al., 2021; Meade, 2021; Meese, 2020, 2021). This article contributes to the ongoing discussion through a detailed textual analysis of the public relations campaigns waged by Google and Facebook on their respective platforms.

Platform politics, regulation and sovereignty

In the early days of digital platforms, governments viewed their role as one of facilitating growth rather than regulation. However, as the potential harms of platforms become more broadly known, we are now seeing a global regulatory turn (Flew et al., 2021). Where governments were once content to let platforms self-regulate, they are now moving towards direct intervention (Gorwa, 2019). Definitional ambiguity about what a digital platform is or does has proven to be an obstacle to regulation. Platforms make use of discursive strategies, including the word ‘platform’ itself, to suggest that they are a ‘neutral’ technology, a mere mediator of content and services (Gillespie, 2010). Similarly, Napoli and Caplan (2017) argue that digital platforms strategically deploy language to avoid regulation, by insisting, for example, that they are tech companies and not publishers. The UK’s Department of Digital, Culture, Media and Sport directly challenges platforms’ self-definition, recommending the scope of technology companies’ liabilities be tightened and that tech companies be defined as somewhere between a publisher and a platform (Flew et al., 2021: 134). The Australian approach, however, has been less critical of existing definitions. Rather, the ACCC in the DPI and NMBC adopts a definition of platforms as ‘online search engines, social media and digital content aggregators’ (Australian Competition and Consumer Commission, 2019: 4). This recalls the techno-centric framing of platforms themselves (Gillespie, 2010; Napoli and Caplan, 2017) and suggests the Australian Government has no immediate interest in regulating platforms as publishers.

Several studies have evaluated digital platforms’ discursive strategies and the ways they are used to pursue platform companies’ ideological and economic priorities. There are, however, vastly more analyses of user-generated discourse within platforms, than analyses of platform-produced discourses, which both creates a limitation to understanding platform discourse and provides an opportunity for my paper to contribute to the field. Of the studies that do exist, themes of the platforms’ discourse include intercultural connection (Elkins, 2019), gig worker autonomy and freedom (Shibata, 2020), and sustainability and the sharing economy (Ranchordás and Goanta, 2020). Popiel (2018) argues platforms have established themselves as powerful political actors through lobbying and public relations campaigns. Popiel and Sang (2021) conducted a content analysis of platforms’ publicly available policy blog posts and found that platforms sought to minimize regulation, while also seeing a role for governments, particularly in coordinating global standards, through minimal, ‘frictionless’ regulation. Their analysis also highlights an adherence to the ideology of tech solutionism (Morozov, 2013) and the Silicon Valley ethos (Levina and Hasinoff, 2017) that focuses on disruption, privatization and individualism. These discourses serve platforms’ interests by reinforcing the neoliberal hegemony of small government and disguise platform power behind an appearance of benevolence and competence.

There is an established field of study examining the economic and political power of platforms, including platform politics (Gillespie, 2010), platformization (Helmond, 2015), platform capitalism (Srnicek, 2017), surveillance capitalism (Zuboff, 2019) and technopolitics (Sadowski, 2020). Van Dijck et al. (2018) have conceptualized the ‘platform society’ to demonstrate how platforms are increasingly acting as state institutions, and a similar theme is explored by Couldry (2012) investigating the link between platforms and social order. Bratton (2015), examining platform sovereignty, notes that sovereignty is the right to make laws: ‘authority over its own internal mechanisms and institutions; and . . . the right to separately determine their own domestic structures of authority’ (p. 20). For digital platforms, this sovereignty is uniquely derived from its infrastructure, over which it has complete control (Bratton, 2015). Pasquale (2018) has described platforms as possessing functional sovereignty through their extra-judicial decision-making, a theme also analysed by Lehdonvirta (2022) and Suzor (2019), among others. This article aims to contribute to these discussions by locating the digital platforms’ public communications in the NMBC case study as key sites of enforcement of platform sovereignty.

Research framework

This article uses CDA to analyse the texts and the infrastructure used by Facebook and Google in the NMBC case study. CDA emerged from the field of structural linguistics (McHoul and Grace, 1995) and is now broadly used across media and social science disciplines. CDA seeks to analyse discursive practices and their relationships to power through both descriptive and normative approaches (Fairclough, 2010). As discussed above, there is an evolving field of research using CDA to analyse platform discourse and its relationship to power, regulation and sovereignty (Elkins, 2019; Popiel and Sang, 2021; Ranchordás and Goanta, 2020; Shibata, 2020).

My discourse analysis is supported by theoretical frameworks from Chun, Althusser and Foucault. For Chun (2011), the creation of the graphical user interface (GUI) in the late 1970s was critical to the transformation of users into subjects. Users moved from text-based command interfaces to a user-friendly, graphics-based interface, which gave users a new feeling of control and empowerment. Chun relates the ease of the GUI to Althusser’s (2020 [1972]) concept of ideological interpellation, wherein individuals are recruited into the dominant paradigm through discursive tactics such as appeals to obviousness, deflection, repetition and the hailing of subjects. For Althusser, ideology functions to reproduce the forces and relations of production. In the digital age and on the platformized web, however, the value extracted from workers is no longer their labour power, but the time they spend on the platform, the data they produce and their very reliance on a given technology or service.

The use of user data by platforms is only now beginning to be regulated, after many years of operating largely free from government oversight. Users choose to become subjects of the platform, and thus be governed by it, in exchange for access to the platform. Whereas a citizen can disobey a law, users cannot operate outside the platform’s rules, which reinforces their subjectivity and entrenches users’ service of platform political and economic aims. Viewing platform technology through Chun’s interface paradigm allows us to ask how communication between platform and users is affected by platform infrastructure as well as language, and how these underpin user subjectivity. In a similar way, Foucault (1972: 49) articulated the need for discourse analysis to include not only language but ‘the objects of which they speak’, which in this case is the user interface. Foucault’s (1980, 1988) work places emphasis on the production of truth and knowledge as an exercise of power, as well as on the production of subjectivities through discourse. These approaches are appropriate for seeking to understand how platforms articulate and leverage power through discourse and infrastructure.

Research questions

RQ1. What discourses are invoked in Google and Facebook’s public campaign against the NMBC and how are they used to pursue and enforce platform sovereignty?

RQ2. How do these platform companies’ GUIs interpellate users into ideological positions that support platforms’ political aims?

RQ3. What lessons can be learnt from the NMBC case study about the campaign tactics of digital platforms?

Method

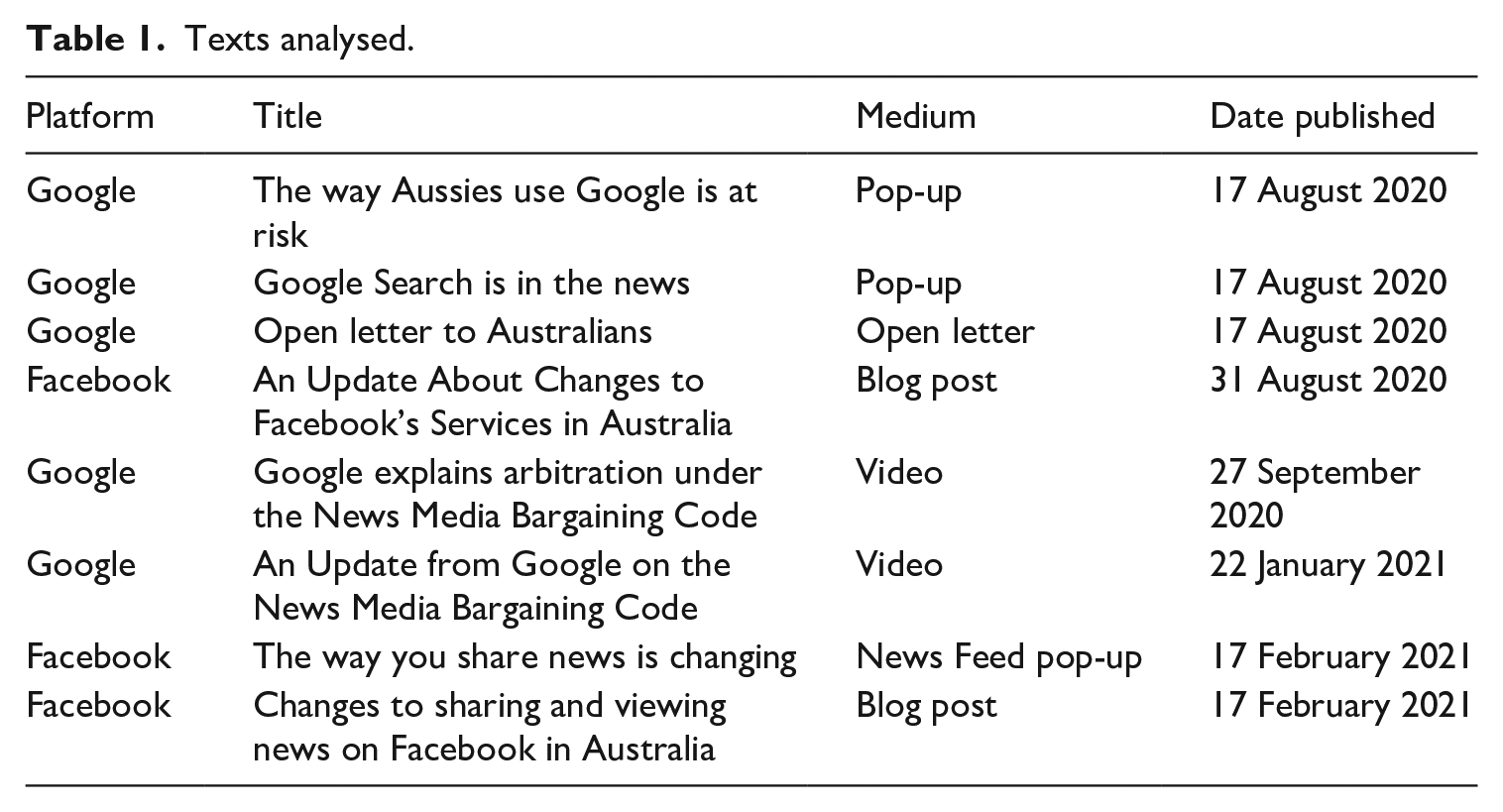

The ACCC released the draft NMBC on 31 July 2020, with the bill being passed into law on 25 February 2021. This span of nearly 7 months offers a natural window for data collection. In this time, there were 1501 press articles mentioning the NMBC (according to Australia and New Zealand Newsstream) and the Australian government and regulator produced a combined 10 press releases. In the same time, Facebook produced four public statements, including three posts published to its internationally focused Newsroom and one pop-up placed in users’ News Feeds. Google published 29 blog posts, open letters and videos, together with pop-up and banner ads across its home page, Search results and YouTube. The first stage of my research involved a schematic review of some of the news and government texts, before focusing on a set of key documents. I selected key texts published by Google and Facebook for deeper analysis, based on their relevance, genre, uniqueness of message and language, and the timing of the text’s distribution relative to the overall timeline. Significantly, platforms’ key messages were repeated across texts, and thus, I have chosen not to re-analyse the same arguments across different mediums. The key texts were all published on the platforms’ own websites, which allowed me to investigate the relationship between the texts and their infrastructural context (see Table 1). I used an inductive approach to analysing each text, moving from a close reading of the texts to larger observable patterns, before linking these to the broader questions of platform power, regulation and sovereignty. As is common in qualitative research, my textual analysis is focused on elements that are relevant to answering my specific research questions. However, I suggest that the broad themes derived from these texts are also relevant beyond the specific scope of this case study.

Table 1. Texts analysed.

Findings and discussion

Platform versus state sovereignty

One month after the release of the draft NMBC, the Managing Director of Facebook Australia and New Zealand, Will Easton, published a post to Facebook’s global Newsroom titled ‘An Update About Changes to Facebook’s Services in Australia’. This statement is the first time Facebook publicly issued its threat to block Australian news content. It states,

Assuming this draft Code becomes law, we will reluctantly stop allowing publishers and people in Australia from sharing local and international news on Facebook and Instagram. This is not our first choice – it is our last. (Easton, 2020)

In naming ‘publishers’ and suggesting that they are active on Facebook and Instagram, the statement positions publishers and Facebook as different types of actors. Althusser (2020) noted that appeals to obviousness are a key tactic of ideological interpellation, and in Easton’s statement we see how Facebook is obviously not a publisher. The statement acts to reinforce Facebook’s own ‘techno-centric framing’ (Napoli and Caplan, 2017), used to emphasize the technical aspects of their business model and downplay the significant social, cultural and economic role they play. The statement also frames the clash between Facebook and the Australian Government in a way that both foregrounds and conceals the structural power of the platform. On one hand, Facebook presents itself as ‘reluctantly’ reacting to the measures proposed by the government. On the other hand, it reveals Facebook’s sovereign power over news outlets and users. That is, its ability to unilaterally determine who can operate within its infrastructure, and what they can do.

It is worth considering to whom this threat is directed. Facebook’s Newsroom can be freely viewed by anyone and, as with most corporate news pages, a typical audience would include journalists and policymakers. It does not invoke the reader as ‘you’, the user, and as a result it does not appear to be targeted at Australian users. Easton is the highest-ranking Facebook employee in Australia and his status conveys the legitimacy and seriousness of the message. It is a message from one powerful political actor intended to reach other powerful actors. As Meese (2020) notes, policy makers in countries such as the United Kingdom and Canada were watching the Australian case carefully at the time, as those countries prepared their own platform regulation. Facebook’s threat in Australia appears to foreshadow how it plans to approach regulation globally.

Facebook’s threat was realized nearly 6 months later when it banned news content for Australian users, issued a public statement and placed a pop-up in users’ News Feeds. The statement in the Newsroom accompanied briefings Facebook offered international journalists, with the Australian media unaware until after the fact (Meade, 2021). This once again suggests Facebook was more concerned with sending a signal to other jurisdictions considering regulating the platform than with its Australian audience. In addition to news media content, many government health and emergency services Pages were also banned, including the Bureau of Meteorology, various health departments, and fire and rescue services (Moon, 2021; Taylor, 2021). In the middle of the Covid-19 pandemic, users in Australia could no longer find official information about the virus on Facebook, including information about Australia’s impending vaccination rollout. A key message in Facebook’s statement on the role of health information is instructive for how it positions the company and the Australian government:

We recognise it’s important to connect people to authoritative information and we will continue to promote dedicated information hubs like the COVID-19 Information Centre, that connects Australians with relevant health information. Our commitment to remove harmful misinformation and provide access to credible and timely information will not change. (Easton, 2021)

With Facebook recognizing the importance of ‘authoritative information’, and committing to connect users to ‘relevant health information’ and ‘remove harmful misinformation’, it is positioning itself as the provider of official health information to the exclusion of the Australian Government. Not only does this statement not mention the government’s role as a provider of health information and the administrator of the Australian health system, but the statement is accompanied by the blocking of government health Pages to Australian Facebook users. As Barnet (2021b) notes, amid growing Covid misinformation, Facebook’s act of shutting down the most reliable source of health information was farcical. The absurdity of the statement is made clear when one recalls it was delivered via Facebook’s news and policy blog, and not communicated directly to users – those most vulnerable to misinformation. Facebook appears to have positioned itself as the sole arbiter of legitimate information. This hierarchy of knowledge (Foucault, 1980) is a discursive tactic through which power relations are revealed, as Facebook appears to enforce its sovereignty through its incursion into a clear government function.

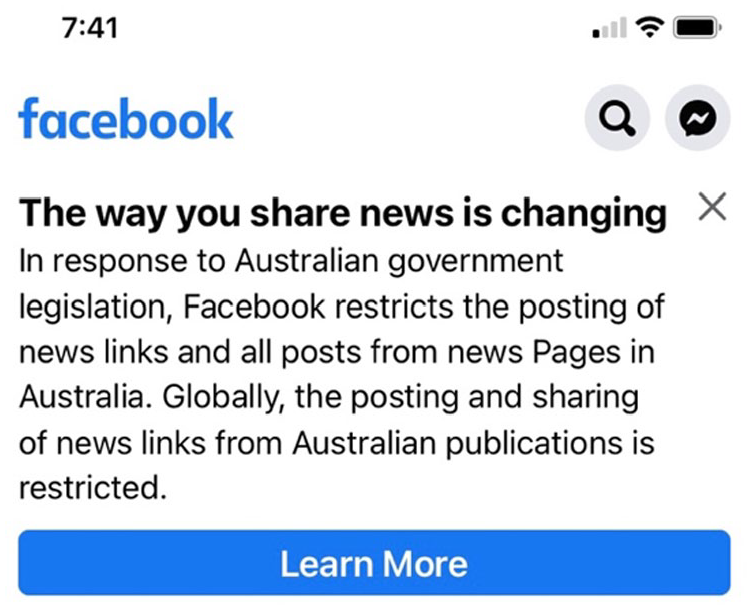

Users found out about the news ban through a pop-up notification in their individual News Feeds (shown in Figure 1). This was the first and only time Facebook communicated directly with its users through its interface about the NMBC. Unlike its Newsroom statements, the pop-up had no authorial attribution. Rather than a representative addressing users on behalf of the company, the pop-up appeared as a unidirectional and unavoidable communication directly from the platform. The text notably does not address users by name, which we know Facebook is capable of doing. For example, Facebook addresses users by name in the prompt, ‘what’s on your mind, [user name]’. These actions taken together suggest little regard for users. The pop-up was phrased as a statement of the new restrictions, which the user had no ability to contest. Instead, as others have noted (Meade, 2021; Meese, 2021), the platform’s more nuanced communications were addressed to other powerful political actors. The company appeared more concerned with avoiding an international regulatory precedent. As we now know, this tactic proved successful, with the government amending the NMBC bill so that Facebook and Google would not be designated as platforms – and thus, directly regulated – provided they entered self-administered commercial arrangements with publishers (Barnet, 2021a).

Figure 1. Facebook (2021) screenshot by author.

The pop-up also notably does not mention the health information communicated in Facebook’s statement. At the time of the ban, Facebook claimed that due to the lack of definition in the draft NMBC, they had to take a broad definition of the term ‘news content’ and, as a result, inadvertently blocked health department Pages (Bossio, 2021). However, as internal Facebook whistleblowers have subsequently claimed, the breadth of the Page takedown process was deliberate, in order to knowingly take down health pages as a bargaining tactic to force the government’s hand (Hagey et al., 2022). This display of platform power, combined with the clear incursion into a government function of public health information, reveals the extent of platform sovereignty: a situation wherein platforms hold as much, if not more, power than states. In its response to the NMBC, Facebook demonstrated not only an unwillingness to be regulated but also the power to displace governments in an area as important as health communication during a pandemic.

Google’s responses display a similar ideology, which is most prevalent in their video ‘An Update from Google on the News Media Bargaining Code’ on 22 January 2021, spoken by Google Managing Director Mel Silva. The video was posted on the Google blog and on YouTube and was ostensibly aimed at Australian users. In the closing section of the video, Silva states,

We’re not against a new law, but we need it to be a fair one. Google has an alternative solution that supports journalism. It’s called Google News Showcase. It would operate under this new law and would support Australian journalism without breaking how Search works. (Google, 2021: 1:26)

The statement ‘we’re not against a new law, but we need it to be a fair one’ directly challenges the government’s attempt at regulation. Throughout Google’s public statements in response to the draft NMBC, it has sought to position the government as incompetent and untrustworthy, while offering itself up as the technocratic alternative. Silva’s ‘alternative solution’ appears to suggest platforms are better placed to create legislation than the state. As both Bratton (2015) and Lehdonvirta (2022) note, proposing alternative, watered-down regulation is a key tactic employed by platforms attempting to avoid the state. Indeed, creating technological solutions that are intended to eradicate the need for regulation is an attempt to displace the state and any potential policy responses.

Power relations and the construction of truth

Between 7 August 2020 and 21 January 2021, Google published nine videos via their blog, YouTube and social media channels. With the exception of the Mel Silva video discussed above, which had 2.2 million views, Google’s videos received little attention, with viewership (as of 10 October 2021) ranging between 1231 and 30,442 per video. This suggests that, rather than being a key communication tool, the videos were produced to prevent a data void, which Golebiewski and boyd (2019) define as occurring when ‘obscure search queries have few results associated with them, making them ripe for exploitation by media manipulators with ideological, economic, or political agendas’ (p. 2). Google’s prolific content made its own perspective dominate any Search or YouTube results about the NMBC, thus preventing malevolent or opposing content from occupying that space.

The videos are also instructive for what they reveal about power relations. The video ‘Google explains arbitration under the News Media Bargaining Code’ features comedian Greta Lee Jackson on a bus:

Proposed laws can be confusing, so I’ll use an analogy to break it down. Take a seat. So, this bus we’re on picks up people at their homes and drops them off at restaurants all across town. Sure, people could have walked or driven themselves, but the bus is convenient. Under a new law being drafted, the bus driver would need to pay the restaurants for delivering those customers to their doorstep. Sounds weird, huh? (Google, 2020a: 0:01)

Jackson’s words, sweet tone of voice and pace when delivering her lines are reminiscent of a primary school teacher talking to young children. This is reinforced with the command to ‘take a seat’, as a teacher might instruct a student, suggesting both authority and trust. These, combined with the overly simplified explanation of the bus and restaurants, construct a power relation between Jackson and the audience wherein Jackson, as a stand-in for Google, is both superior and benevolent.

The video also implies that Google is superior to the government in matters of law and technical expertise – in the analogy, the proposed law is ‘weird’. Notably, the analogy is never explained to the audience; Jackson does not say that Google is the bus, and publishers are the restaurants. Without these points of reference, or the broader context of the NMBC, the analogy as described does indeed sound unfair. This appears to be a deliberate discursive strategy designed to confuse the real issue and prompt the audience to agree that the proposed law is unfair – thus remaining sympathetic and continuing to use the platform. These tactics are replicated by Silva, who, like Jackson, uses a teacher-like tone and pace, and is dressed authoritatively in a blazer. Silva states,

I’m Mel Silva, and I lead Google here in Australia. If you’re like most Australians, you use Google Search to find and learn things online. Whether it’s help with homework, an easy dinner recipe, or directions to the local take-away shop. But a proposed new law, the News Media Bargaining Code, would break how Google Search works in Australia. Now I know that sounds pretty full on, but it’s true. (Google, 2021: 00:01)

Phrases such as ‘whether it’s help with homework, an easy dinner recipe, or directions to the local take-away shop’ are reminiscent of Jackson’s bus-and-restaurant analogy, where information is presented in a simplified way to appeal to a presumably unsophisticated audience. Similarly, the phrase ‘pretty full on’, along with Jackson’s ‘sounds weird, huh?’, employs Australian colloquialisms in an attempt to appeal to the audience, creating intimacy and implying the speakers can be trusted because they are just like the viewers. These videos appear to be constructing a position of trust and authority, while positioning the government as incompetent.

While the rhetorical strategies adopted by Google and Facebook were different, the message about government was the same: the government cannot be trusted on the issue of platform regulation. In Easton’s Facebook statement this is evident in the following extract:

The proposed law fundamentally misunderstands the relationship between our platform and publishers who use it to share news content. It has left us facing a stark choice: attempt to comply with a law that ignores the realities of this relationship, or stop allowing news content on our services in Australia. With a heavy heart, we are choosing the latter. (Easton, 2021)

Here, language such as ‘fundamentally misunderstands’ and ‘ignores the realities’ constructs a position of state incompetence, and the need for platform autonomy free from government interference. It appeals to Easton’s authority, by virtue of his high-level position, to emphasize Facebook’s role as the technical expert, as compared to the government’s technological illiteracy. As with the health information example, Facebook again positions itself as the holder of official knowledge, a function traditionally carried out by the state and its institutions. This power relation, in the positioning of Facebook as superior to the government, supports the broader platform sovereignty perspective. Threats and bans played a key role in Facebook and Google’s efforts to avoid being regulated under the NMBC. However, it is clear these efforts were supported by the discursive construction of power relations, wherein the platforms were positioned as trustworthy and authoritative, in contrast to an incompetent government and a dependent – and dependable – user base.

Platforms’ infrastructural power

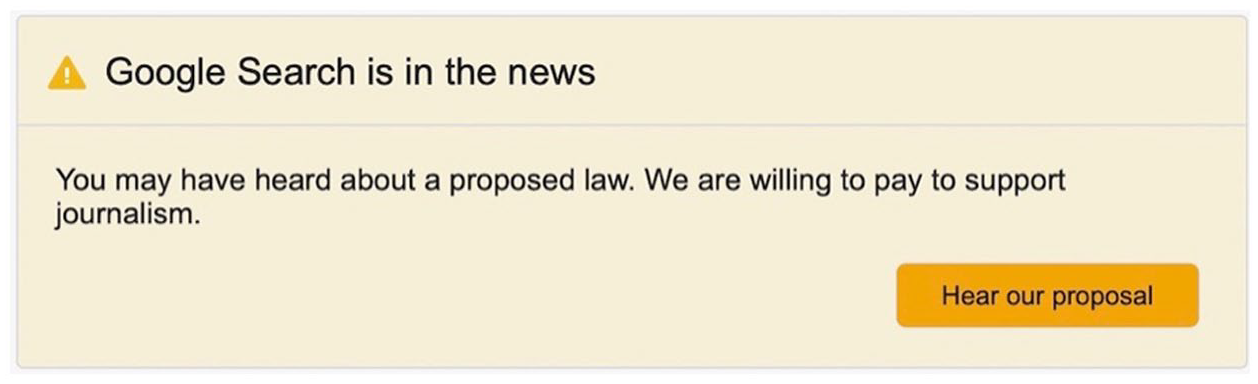

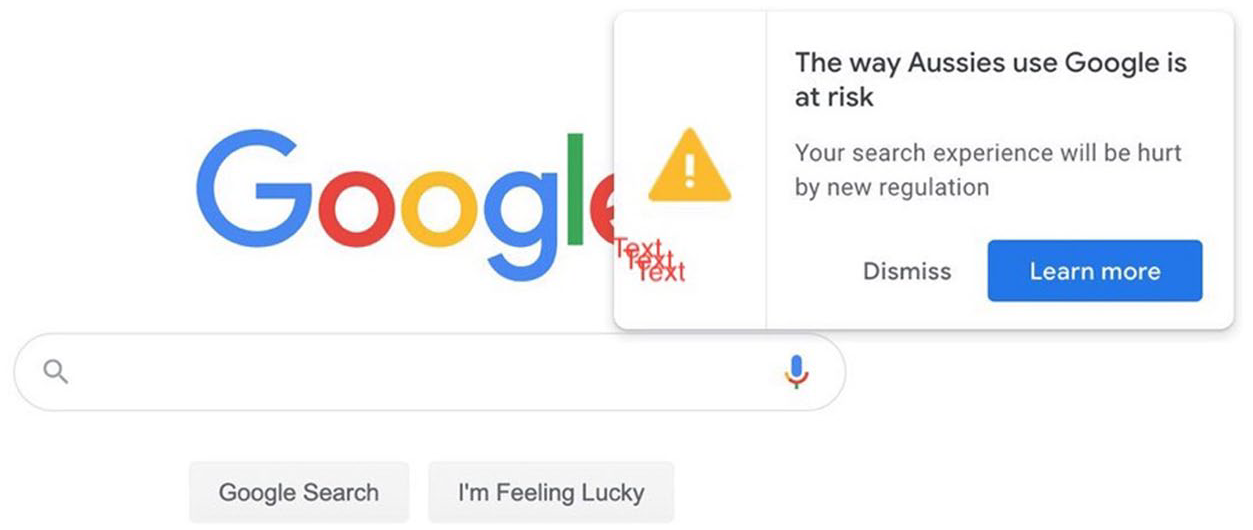

On the same day that Google released its Open Letter, it also began a campaign of pop-up ads targeting users, which appeared in various positions on the Google homepage and at the top of Search results. These pop-ups gave Google unprecedented access to users, and indeed, according to Google’s own commissioned research, more than half of all adults in Australia saw the pop-ups and Open Letter. Fifty-five percent viewed them via the Google homepage and 48% via YouTube. In total, 72% of people surveyed were aware of the NMBC (Cheik-Hussein, 2020).

The GUI has been key to facilitating this access. As discussed above, the GUI acts as a gateway between users and the internal workings of computers, visually representing the computing options available to users. For Bratton (2015), the power of the GUI is that to users the image is the technology and, thus, the image takes on the power to dictate user action. The concept of icon as technological object can be seen in Google’s exclamation point, which appeared in all the platform’s public communications and can be seen in Figures 2 and 3. The yellow triangle suggests it is intended as a direction to the user, just as a traffic sign directs road users. The exclamation point is a symbol signifying importance and emphasis. Together they serve as a warning. With users having been conditioned through their use of interfaces to recognize icons as technological objects, which in turn direct user action, the repetitive exclamation point and triangle together create a new object that signals platform communication and demands user attention. Recalling Althusser and Chun, repetition is a key tactic of interpellation.

Google (2020b) screenshot by author.

Google (2020c) screenshot by author.

This is not the first time a platform has communicated directly with users over policy and regulatory issues. Following the scandal around how Google Search results for the term ‘Jew’ preferenced anti-Semitic results, Google used a pop-up at the bottom of the Search Home Page to direct users to its public statement (Noble, 2018). Similarly, Wikipedia uses a banner at the top of the page to solicit donations during its annual fundraising campaign (Linek and Traxler, 2021). Likewise, a range of websites famously held a black-out to protest the Stop Online Piracy Act and the Protect IP Act (SOPA/PIPA) censorship legislation in the United States (Kozak, 2018). What was notably different about the Google pop-ups, however, was their longevity. For nearly 7 months, it was unavoidable for Australian users of Search, Gmail, YouTube and other Google applications to see one or another pop-up. The messages of the pop-ups, which were reconstructions of the text in Google’s videos and blog posts, were perhaps not as important as the delivery medium, which demonstrated Google’s sheer infrastructural might and power.

With the pop-ups, there was limited action a user could take: they could click on a button and be taken to the Open Letter, or they could dismiss the pop-up and continue with their search. As noted earlier, interfaces are designed to give the impression of individual user control and direct manipulation of the screen (Chun, 2011). Each icon on the interface represents a choice for the user. However, actual user choice is limited by design, in order to reduce complexity for the user. As Bratton (2015) notes, designers of systems and interfaces always select which actions they want users to be able to take. While users may continue to feel in control and autonomous via their interfaces, their choices and scope of actions are limited by what the platform chooses to offer them.

If users chose in this case to click through to ‘learn more’ they would land on Google’s Open Letter, which states: ‘You’ve always relied on Google Search and YouTube to show you what’s most relevant and helpful to you. We could no longer guarantee that under this law’ (Silva, 2020). Here, language such as ‘you’ve always relied’ and ‘most relevant and helpful to you’ acts to position users as dependent upon a benevolent Google. What is not said, but implied through an appeal to obviousness, is that Google has made its services relevant and helpful purely for beneficent reasons, and that these would not be under threat if not for government interference. This is a deflection from the fact that Google makes its services useful and relevant in order to keep users on its platform, thus allowing Google to monetize users’ data and attention through advertising. Deflection, according to Althusser (2020), is another key discursive strategy in the ideological interpellation of subjects. Zuboff (2019) makes a similar observation, highlighting former Google CEO Eric Schmidt’s deflective move when he stated: ‘The reality is that search engines including Google do retain this information for some time’. As Zuboff (2019) notes, it is not the imperative of search engines but rather that of surveillance capitalism – and thus the drive for profit – that requires this data (p. 15). The Open Letter replicates the rhetorical strategy Zuboff points out, for its focus is on how Google helps users, but not why.

The significance of this framing is highlighted by the next sentence of the letter: ‘We could no longer guarantee that under this law’. The sentence acts as a threat by virtue of the power relations between the speaker, Mel Silva, in her role of authority at Google, and the audience, individual Google users. The threat is only possible because of the access Google has to its users, and through the preceding work done to position Google as being on users’ side.

The address of the audience as ‘you’ is common across the Google pop-ups and Open Letter, as well as in Facebook’s pop-up, as noted earlier. In the classic Althusserian example, the police officer calls out ‘hey, you there!’ to a person on the street, and the person turns around, understanding they were the subject being addressed (Althusser, 2020: 48). The person recognizes the hail as for them because they understand the power relation between themselves and the authoritative voice that hailed them, thus already existing within ideology. Likewise, Google addressing users as ‘you’ is not merely a hail, but an ideological act, for, as Althusser describes, they are the same thing. Chun (2016), in applying Althusser’s hail to the digital age, highlights how networks create the ‘you’ rather than a ‘we’ in order to serve ideological aims. In the pop-up, the ‘you’ creates distance between Google and the user, acting as an assertion of power, much like the police officer’s hail. Whereas the officer’s authority is conferred by the state, Google’s authority is granted via the terms and conditions that bind users, and Google’s infrastructural power. This distance acts to suggest it is the computer system itself sending the message to millions of Australian users, and not any of the individuals that work at Google. The unregulated nature of platforms and their economic imperatives are rendered invisible to users, who come to see themselves as part of the system. When the platform speaks to users, it does so from a constructed position of power that is distant and authoritative, yet trustworthy.

Conclusion

In this article, I set out to conduct a discourse analysis of platforms’ public responses to Australia’s News Media Bargaining Code. Google and Facebook used different strategies for similar aims. Google led the debate via the interface it provides to users in order to command public opinion and prevent a data void. Facebook, on the other hand, communicated less frequently and aimed its addresses to global political elites. Notably, the platforms’ efforts were successful enough that they avoided being designated under the legislation, and although the platforms must still enter financial arrangements with publishers to maintain this status, Facebook and Google have succeeded in preventing a regulatory precedent being set.

My analysis has shown how platforms employ techno-centric framing to position themselves as neutral intermediaries or tech companies to avoid regulation (Gillespie, 2010; Napoli and Caplan, 2017), and that the Australian government has adopted the same framing, unlike other jurisdictions such as the United Kingdom. Platforms position themselves as superior to a technologically illiterate government, as well as benevolent and trustworthy to users, both of which are used to suggest platforms are best placed to create alternatives to government legislation. Importantly, Facebook was willing to block access to verified government public health information during a pandemic as a demonstration of platform power and an incursion into state sovereignty. Platforms also employed the strategies of ideological interpellation (Althusser, 2020), such as appealing to obviousness, deflection, repetition and hailing subjects as ‘you’, which helps maintain a user base locked into a largely unregulated system of attention and data capture and monetization. These discursive strategies all suggest the question of platform versus state sovereignty remains ongoing.

Similarly, my analysis shows that the sustained use of the GUI as a site of campaign communication, particularly for Google over a period of nearly 7 months, helps to reinforce the ideology of platform sovereignty. Tactics such as repetition, illusion of choice and positioning through the relations of power help situate the GUI as a site of ideological interpellation (Chun, 2011, 2016). Finally, this analysis suggests regulators should be mindful of how they define terms such as platform to avoid adopting platforms’ own political framings. Regulators should also consider implementing or utilizing their own proprietary communication channels rather than relying on platform apps, as platforms have shown their willingness to block access as a bargaining tactic.

This article has employed a deep reading of platform responses to the NMBC to highlight how platforms use communications in their battle to avoid being regulated. The analysis supports earlier research that shows Australia is at the forefront of the digital platform regulatory turn, and that platforms will take extreme measures, such as blocking access to entire countries, in order to achieve their aims. Finally, this article contributes to a growing body of research examining the intersections of platform power, regulation and sovereignty.

Footnotes

Acknowledgements

I would like to thank and acknowledge the invaluable contribution from Christopher John Müller and Tai Neilson, whose feedback helped inform my thinking on this topic and shape the structure of this article. I appreciate your collective guidance, patience, generosity and wisdom.

Author’s Note

The author agrees to this submission and asserts that the article is not currently being considered for publication by any other print or electronic journal.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.