Abstract

This article analyses the novel form of live political fact-checking, as performed by the Norwegian fact-checking organisation Faktisk.no during the Norwegian parliamentary election campaign in 2021. The aim of the study was to investigate the epistemological consequences of introducing a breaking news logic to political fact-checking. Through methods of participatory observation, interviews and textual analysis, the study finds that Faktisk.no used several strategies to bridge the ‘epistemic gap’ between the logics of breaking news and political fact-checking. Combined, these strategies pushed the live fact-checking towards a

Introduction

Over the past decade, political fact-checking has risen to become a global phenomenon (Cheruiyot and Ferrer-Conill, 2018; Graves, 2016; Graves and Cherubini, 2016). The practice goes beyond the traditional ideal of balance in political journalism as it involves evidence-based assessments of the truthfulness of a political claim (Graves and Amazeen, 2019). Political fact-checking thereby involves a much higher degree of what Ekström et al. (2021) label ‘epistemic effort’, meaning the degree to which journalists invest time and effort to produce knowledge claims. Genres of journalism involve varying degrees of such epistemic effort. Producing breaking news from the newsroom desk typically involves a low degree of epistemic effort, because the immediacy of breaking news prevents journalists from investing time and resources in extensive critical assessments of sources and information. Political fact-checking, on the other hand, emerged as an organised response to long-standing criticism of conventional objective reporting, in which journalists are reluctant to challenge questionable claims and default instead to ‘he said/she said’ reporting that gives equal weight to competing views (Graves, 2016). Political fact-checking and breaking news journalism therefore occupy opposite ends of a scale from low to high degrees of epistemic labour across forms and genres of news work. The question is therefore what happens when fact-checkers decide to apply a breaking news logic and perform live fact-checks of political debates. How do they then seek to bridge the gap between the high degree of epistemic effort required by traditional political fact-checking and the low degree of epistemic effort normally involved in breaking news production? To what degree can they produce both critical and credible fact-checks when time is of the essence?

This article analyses such an attempt at bridging the ‘epistemic gap’ between breaking news reporting and political fact-checking, namely the Norwegian fact-checker Faktisk.no’s live fact-checking of political debates during the 2021 parliamentary election campaign in Norway. This novel form of political fact-checking emerged in the United States and later in the United Kingdom, for example, when the British fact-checking service Fullfact started fact-checking televised political debates in 2015 by tweeting fact-checks of claims while the debate was still on air (Hawkins, 2016). A similar initiative took place when US fact-checkers at PolitiFact and FactCheck.org teamed up with the Washington Post and Duke Reporters’ Lab in conjunction with the US State of the Union address in 2018. Together, they created the FactStream app as a common digital space to disseminate the live fact-checks from all three newsrooms (Adair, 2018). These fact-checkers’ solution to the epistemological tension between the logics of breaking news production and political fact-checking was not to do original checking but simply to flag when debaters repeated claims that had already been checked (How PolitiFact live fact-checks debates, 2020). Inspired by the British fact-checkers at Fullfact, Faktisk.no first started experimenting with live fact-checks of political debates in 2017. In conjunction with the 2021 parliamentary election in Norway, they decided to live-check three major debates. They wanted not only to fact-check claims based on previous fact-checks but also to do original live fact-checks of claims not previously checked. This unique case thereby represents a more epistemologically ambitious attempt at merging the logics of breaking news production with political fact-checking.

This article presents an analysis of Faktisk.no’s live fact-checking in 2021, using mixed methods involving participatory observation, interviews and textual analysis. The aim of the analysis is twofold: first, we wish to find out which strategies Faktisk used to bridge the epistemic gap between breaking news and political fact-checking. Second, we wish to investigate the epistemological consequences of those strategies. The article starts with a review of research on fact-checking and epistemology, ending with the formulation of two research questions. The research methods are then outlined, before findings are presented and discussed. Ultimately, the article concludes that live fact-checking, in the case of Faktisk, involves strategies to minimise complexities in claims to fact-check, a reliance on predefined credibility of sources, and a push towards what we label

Journalism, fact-checking and epistemology

Journalists are ‘epistemic workers’ whose professional role involves ‘assessing the knowledge claims made by others, and then making knowledge claims of their own’ (Örnebring, 2016: 75). They prepare well for scheduled events such as elections, political meetings and sports events and have established routines for both the assessment and the production of knowledge claims, for instance, interviewing sources that are pre-justified as reliable (Ekström and Westlund, 2019; Ettema and Glasser, 1998). Journalism studies scholars have approached epistemology as the study of how news publishers and journalists know what they know and how they articulate and justify their knowledge claims. Research into epistemologies has advanced in recent years amid digitalisation and changing conditions for journalism, as well as developments of various epistemologies of digital journalism (e.g. Ekström et al., 2020). This includes emerging forms of participatory journalism (Kligler-Vilenchik and Tenenboim, 2020), structured journalism (Graves and Anderson, 2020), as well as data-driven epistemologies of journalism (Carlson, 2018; Ekström et al., 2022).

The epistemology of breaking news production

The news industry has seemingly accelerated its production cycles and developed epistemologies of digital journalism focusing on breaking news (Usher, 2018). Studies have focused on the epistemologies of live blogging (Thurman and Walters, 2013) and online live broadcasting that puts emphasis on liveness and out-there-ness, as well as rapid newsroom desk production of breaking news articles using pre-justified source materials (Ekström et al., 2021; Ilan, 2021). Importantly, in breaking news and live reporting, the knowledge claims gravitate towards quickly updating information about unfolding public events. Journalists have developed routines and standards for approaching a network of pre-justified sources, but also language that allows them to publish news quickly without making strong knowledge claims. In online live broadcasts, journalists visualise out-there-ness and produce the live moment from the event site, and they seek out interviews with pre-justified sources such as public officials, similarly to (online) sourcing practices more generally (Thorsen and Jackson, 2018).

The logic of breaking news production pushes journalists to calculate their epistemic efforts, that is, ‘what is required to fulfil epistemic claims in a particular news story and what is doable within a restricted time frame and with the resources available’ (Ekström et al., 2021: 188). Breaking news journalists rely on several strategies to balance epistemic efforts with time pressure. One such strategy is adding disclaimers, which allow journalists to publish unverified claims (Rom and Reich, 2020). Ekström et al. (2021) identify three other strategies (which they call ‘resources’): (1) Modality choices, implying the use of descriptive rather than evaluative language; (2) Attributions, implying that knowledge claims are ‘outsourced’ to sources; and (3) Reduction of knowledge claims, implying for instance that breaking news journalists alert about something possibly happening instead of claiming that something is happening.

Political fact-checking involves a very different kind of epistemic labour than breaking news production. A question at the heart of this article is therefore whether political fact-checkers apply any of the strategies used to balance epistemic effort with time pressure in breaking news production when they introduce a breaking news logic to their practice.

Political fact-checking and epistemology

The rise of political fact-checking as a distinct practice both within journalism and increasingly as a profession external to traditional journalistic institutions is well documented in the United States (Graves, 2016), in Europe (Graves and Cherubini, 2016) and to a certain extent in Africa (Cheruiyot and Ferrer-Conill, 2018) and other parts of the world (Kajimoto, 2021). In essence, political fact-checking epitomises what Hanitzsch et al. (2019) label the ‘monitorial role’ of journalism, in which holding those in power accountable, being a critical watchdog and otherwise adhering to the ideal of the press as a ‘fourth estate’ are defining characteristics. In the words of Graves (2016: 8), the fact-checking movement asks political reporters to ‘challenge public figures by publicising their mistakes, exaggerations, and deceptions. It asks them to intervene in heated political debates and decide who has the facts on their side’.

In recent years, the immense growth of such practices world-wide has inspired much research, mostly related to the development of fact-checking as a profession, its corrective potential and the effectiveness of fact-checking (see Nieminen and Rapeli, 2019, for a review). A smaller body of research is concerned with the epistemology of fact-checking (i.e. how, with what standards and methods, and with which degrees of certainty fact-checkers produce knowledge claims). While journalists are interested in both sources’ opinions and their claims, fact-checkers mainly focus on claims that can be fact-checked. Unlike most practices of journalism, fact-checking involves drawing conclusions, and it therefore cannot rely to the same extent on strategies to moderate and ‘soften’ knowledge claims. As argued by Graves (2016), ‘Fact-checking as a genre forecloses the ability of journalists to shift into a strategic register in order to finesse evidence and manage risk’ (p. 68). Such work relies on certain views, standards and practices related to what knowledge and certainty are, and to how to identify clearly formulated knowledge claims and then either verify or debunk them.

Uscinski (2015) criticised political fact-checking for being based on a positivist-empiricist epistemology implying that facts are viewed as unambiguous and not subject to interpretation. He argued that ‘what passes for fact checking is actually just a veiled continuation of politics by means of journalism rather than being an independent, objective counterweight to political untruths’ (p. 243). Graves (2017) offers an alternative view as he finds that fact-checkers tend to have a more nuanced understanding of the objectivity norm than a positivist-empiricist epistemology would suggest. He argues that fact-checkers’ verification relies on what he labels ‘factual coherence’ rather than straightforward correspondence, and that the challenges fact-checkers face in making knowledge claims are in principle no different from those of, for instance, scientific inquiries.

When the ‘slow’ practice of fact-checking merges with the immediacy of breaking news production, this epistemological debate over the neutrality of facts and the reliability of the ways in which fact-checkers choose, assess and draw conclusions on the truthfulness of political claims becomes even more pertinent. Previous examples indicate that live political fact-checking has avoided the added epistemic pressure created by immediacy by basing the live fact-checks on previous fact-checks only. This is how PolitiFact performs its live fact-checks (How PolitiFact live fact-checks debates, 2020), and it means that the fact-checking is not done live, during the debate. Instead, relevant fact-checks are re-published when previously fact-checked claims are repeated during the debates.

Based on this discussion of the ‘epistemic gap’ between political fact-checking and breaking news production, and the various strategies fact-checkers might use to bridge this gap, these two research questions will guide our analysis of live fact-checking at Faktisk.no:

RQ2 is analytical in nature and builds on the findings of RQ1. It will therefore predominantly be addressed in the ‘Discussion’ section.

About Faktisk

Faktisk (which translates to both ‘Factually’ and ‘Actually’) is Norway’s only independent, non-profit fact-checking organisation. It was established in 2017 and is owned by two commercial news companies (VG and Dagbladet), two public service broadcasters (NRK and TV 2) and two media companies (Polaris Media and Amedia). Faktisk is a member of the International Fact-Checking Network (IFCN) and is a signatory of this network’s code of principles. Originally, Faktisk used a ‘truth-o-meter’ to conclude their fact-checks. This ‘truth-o-meter’ had a 5-point scale from ‘Factually/Actually completely true’ to ‘Factually/Actually completely false’. However, Faktisk abandoned this scale in 2021, not long before the election, because – according to the editor – they found it increasingly difficult to use, both when identifying claims to fact-check and when writing up conclusions. This signals a willingness to take epistemological questions in fact-checking seriously. This, in combination with the ambition to do original fact-checks during the debates and not only rely on previously performed fact-checks, makes Faktisk.no a particularly interesting and unique case to analyse in an international context.

Materials and methods

This study is based on participatory observation, interviews, and textual analysis of claims and fact-checks conducted in conjunction with Faktisk’s live fact-checking of political debates during the parliamentary election campaign in Norway in August and September 2021. In this section, we will present the various methods utilised and the data they generated.

Participatory observation and interviews

The election was held on 13 September 2021, and Faktisk performed live fact-checks of three major televised debates during the weeks leading up to the election. We were granted access to Faktisk’s newsroom for two of these live fact-checks. The first and second authors were present during the first debate between party leaders, organised and broadcast by the commercial broadcaster TV 2. For the second debate, organised and broadcast by the public service broadcaster NRK, the first author was present in the newsroom. We were granted full access to the Faktisk newsroom, meaning that we could take part in preparation meetings and observe everything the fact-checkers did in the newsroom before, during and after the debates. The Faktisk newsroom is a small, open-plan office space, where seven to nine fact-checkers worked with live fact-checking during the debates. All fact-checkers present gave their informed consent to being observed, and the observations were participatory in the sense that we asked many questions about what they were doing and why they were doing it during the 2 days. All fact-checkers were very open, and we did not experience any attempts to withhold information from us. We used a shared Google document to take and coordinate notes during the 2 days.

After the election, we conducted semi-structured interviews with four fact-checkers central to the live fact-checking. The interviews lasted between 40 minutes and 2 hours and were conducted either on site at Faktisk or via Zoom (due to corona restrictions). The interviews focused on their experience with live fact-checking, the rationale for doing it, the preparations it required and how to identify claims.

Analysis of claims and fact-checks

To get a deeper sense of the epistemological consequences (RQ2) of Faktisk’s live fact-checking practice, we performed textual analysis of (1) the claims the fact-checkers identified during all three debates and (2) the fact-checks they produced. For comparative purposes, we also analysed (3) a set of ‘ordinary’ fact-checks produced by Faktisk. The fact-checkers at Faktisk used Google Suite as their main workflow tool, and the whole workflow, from identifying claims and finding relevant sources and links to writing the actual fact-checks, was organised in Google spreadsheet documents, which we were granted access to and could analyse in hindsight. We based the analysis of claims (1) on a ‘typology of statements’ common in argumentation analysis and developed by Sproule (1980) and later discussed and refined by, among others, Freeman (2000). This typology includes four types of statements – descriptions, interpretations, evaluations and analytical statements – out of which the first three are relevant to our analysis:

This analysis allowed us to identify what types of claims were fact-checked (2). To further identify the epistemic characteristics of the fact-checks, we categorised them as

Finally, we did a similar analysis of ordinary fact-checks produced by Faktisk. We downloaded all fact-checks produced by Faktisk and made available through the Google Fact-Check Explorer and Claim Review. Even though only a handful (

Findings

To best identify the strategies Faktisk used (RQ1) and the epistemological consequences of those strategies (RQ2), we will present the empirical findings in line with the chronological order of the fact-checking practice. This means that we will present the findings of the observations, and partly also interviews and textual analysis data, according to the two different phases of the live fact-checking work: (1)

First, however, we will address a question related to the purpose of live fact-checking: why did Faktisk.no decide to pursue such a practice? In our interviews, the fact-checkers perceived this almost like an existential question, linking their answers to the reasons why Faktisk originally was established in 2017. The following quote captures this sense of existential significance:

At the time, we felt an immense pressure to deliver, considering the experiences with the election campaign in the USA and with Brexit [in 2016]. We visited Fullfact in England to see how they worked with elections, including live fact-checking of debates, which was very exciting, and when we came back, we were fired-up, like, wow, maybe it’s possible for us to do something similar. We launched Faktisk 5. July [in 2017], when Norway was in the middle of a campaign period before a very important parliamentary election. There were strong expectations that one side would attack the other and that there might be a political shift, so it was natural for us to . . . this was something we could prepare before we launched. Because fact-checking is fresh produce. (Interview_D)

Since the launch of Faktisk in 2017 coincided with an upcoming parliamentary election, how to fact-check this election was pivotal for their preparations, and live fact-checking of political debates thereby became an integral part of their practice.

The preparation phase

Live fact-checking during televised election debates required several preparatory steps. From our interviews with four of the fact-checkers, we learnt that they held several external meetings before the election debates with (1) producers of the televised debates, to be briefed about the main topics of the debates, (2) political commentators, to discuss what to expect in the televised debates, and finally, (3) political parties, to explain more about their work methods. ‘We were rather well prepared, actually’, said one of the fact-checkers (Interview_A). So, when the fact-checkers turned up for work on the evenings of the debates, they had already prepared a lot of material for potential fact-checks.

The structure of the work performed at Faktisk was the same during the two evenings we were present in the newsroom. The fact-checkers met up for work at around 5 pm and gathered in a meeting room at 5.30 pm. The deputy editor connected her laptop to the meeting room monitor and pulled up a Google spreadsheet file. This file was their shared work area during the preparation phase and the actual debate. It organised everything, from research to final fact-checks, including who would work on what. The file had two sheets: one for preparations, labelled ‘Prepared material’, and one for live fact-checking, labelled ‘Claims’. The ‘Prepared material’ sheet had the following column headings: main topic, sub-topic, responsible, description, previous fact-checks, and links. Based on the external meetings and the research the fact-checkers subsequently had done, the sheet already contained a lot of information when the 5.30 pm meetings started.

During the meeting, the fact-checkers briefly discussed all sub-topics, added a few more, and discussed potential sources to base fact-checks on and what kind of additional material they could produce before the debate started. Parts of the discussion centred on the reliability of sources. At one point, when they discussed a newly published report on the effects of reducing Norwegian oil production on global climate change – a report they believed would be discussed during the debate – they agreed to just link to the report in a tweet if it came up and not address any potential claims. The reason for this was that the report was controversial, and the conclusions had been contested by other experts, so doing fact-checks related to this report would be complicated. This strategy of not fact-checking claims based on controversial sources, and instead linking to the actual sources, would come up several times during our observations, both in the preparation stage and during the actual debates.

Even though much of the 5.30 pm meetings was devoted to such discussions about where to draw borders between what to fact-check and not, other parts of the meeting were not marked by such discussions. Certain topics and potential claims seemed to be less problematic, and the reason seemed to be linked to the types of sources potential fact-checks could be based on. For instance, if a fact-check could be based on statistics from Statistics Norway (SSB – the national statistical bureau) or similar statistics-producing bodies, like the Ministry of Finance, then no further discussion was deemed necessary. One typical exchange illustrates this:

This exchange illustrates that the fact-checkers had preconceived notions of the credibility of certain sources and certain types of source material. It also illustrates that much of the material the fact-checkers prepared in advance of the actual debates was suited to providing factual context to the topics the politicians would discuss and the claims they might make, and not necessarily explicit corrections or confirmations of claims. This was also brought up in our interviews: ‘We are not only fact-checking things, we also try to contribute with a factual basis to the debate’ (Interview_B).

After the 5.30 pm meetings, the fact-checkers would return to the newsroom and start working on the sub-topics they had been assigned in the spreadsheet, and on fact-checks of potential claims related to those sub-topics. Two things seemed important in this work: simplicity in communication and avoiding ambiguity. To achieve this, they would rely on the production of plain and easy-to-interpret charts with visualisations of statistical data. If the visualisation contained any form of ambiguity, it would not be used, as the following example illustrates: Minutes before the debate on 31 August started, a fact-checker was making a chart on car usage in various parts of the country (a sub-topic that potentially would come up under the ‘Climate’ main topic). He stated out loud that he didn’t trust the presentation of the data and summoned some of the others. They discussed it briefly, before concluding that they should just drop it.

The live phase

When the debates started, the fact-checkers switched to the other of the two sheets in the Google spreadsheet file, the one labelled ‘Claims’. Two fact-checkers would work on a column labelled ‘Claim’. They listened very closely to the debate and wrote down claims in real time, as they identified them, in addition to noting the exact time the claim was stated in a column labelled ‘Time’. Two other fact-checkers would then review the claims as they were entered in the sheet and mark in bold those they thought could be fact-checked. They would then rewind the debate (in the TV player) based on the time entered in the ‘Time’ column to secure an accurate clean copy of the claim, which they wrote in a column labelled ‘Clean copy’. In our interviews, the fact-checkers reflected on how to identify and select claims. One said, ‘it should be a claim where it is possible to add some background’ (Interview_A). Another said:

It is often something concrete, something we know there are statistics for, which makes it easy ‘to graph’. [. . .] Either something we have information or material on or something we can provide material on rather quickly. (Interview_C)

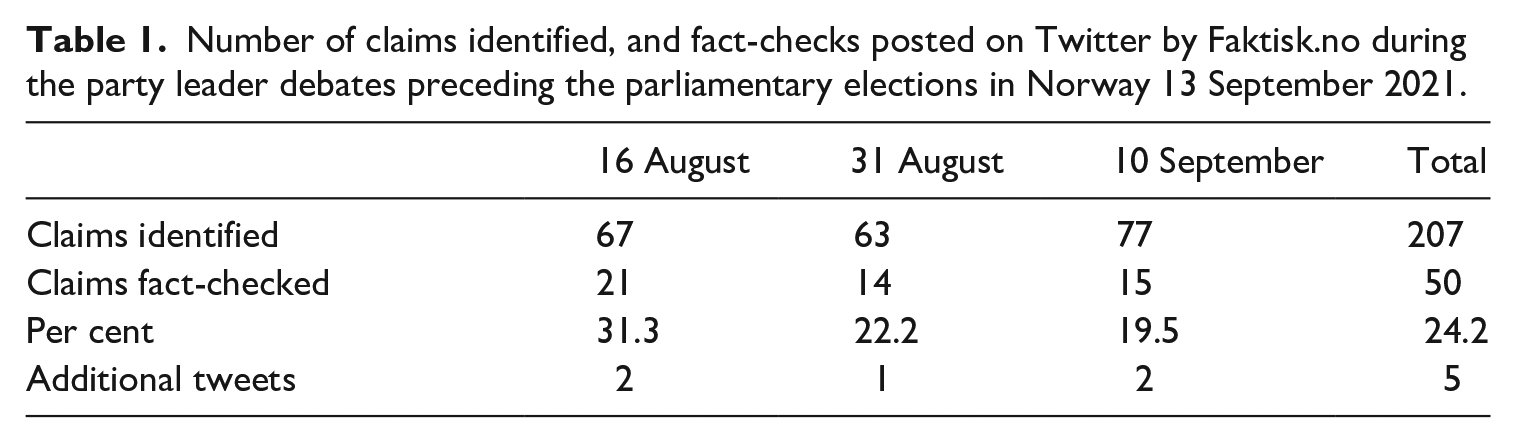

When a claim was bolded for further processing, the fact-checkers responsible for the relevant sub-topic would enter links to prepared source material in a ‘Links’ column and formulate texts to be used in tweets in a ‘Text’ column. The deputy editor would copy that text to the ‘Tweet’ column and review it before publishing a tweet with the same text and the link, if there was one. Table 1 displays how many claims the fact-checkers identified during each of the three debates, and how many of those claims resulted in actual fact-checks being tweeted. About one in five of the claims identified was fact-checked, meaning most claims were never processed beyond the stage of identification. In addition, they produced some contextual tweets not based on fact-checks of claims (five in total), and several of the fact-checks were published as multiple tweets in threads, thereby increasing the rapidity of publishing.
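The ‘Claims’ sheet workflow described above can be sketched as a small data model. This is an illustration under our own assumptions, not a reconstruction of Faktisk’s actual spreadsheet: the class and function names (`ClaimRow`, `select_for_checking`, `draft_fact_check`, `publish`, `share_published`) are hypothetical, and the `bolded` flag merely stands in for the bold formatting the fact-checkers used to mark checkable claims. Only the column names (‘Time’, ‘Claim’, ‘Clean copy’, ‘Links’, ‘Text’, ‘Tweet’) come from the observations.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ClaimRow:
    """One row of the 'Claims' sheet (field names are hypothetical labels)."""
    time: str                          # 'Time' column: when the claim was stated
    claim: str                         # 'Claim' column: claim as noted in real time
    bolded: bool = False               # stand-in for bold marking (judged checkable)
    clean_copy: Optional[str] = None   # 'Clean copy' column: verbatim claim after rewinding
    links: List[str] = field(default_factory=list)  # 'Links' column
    text: Optional[str] = None         # 'Text' column: drafted tweet text
    tweet: Optional[str] = None        # 'Tweet' column: text copied by the deputy editor


def select_for_checking(row: ClaimRow, clean_copy: str) -> None:
    """Reviewers mark a claim as checkable and record its clean copy."""
    row.bolded = True
    row.clean_copy = clean_copy


def draft_fact_check(row: ClaimRow, text: str, links=()) -> None:
    """The responsible fact-checker adds tweet text and any source links."""
    if not row.bolded:
        raise ValueError("only bolded claims are processed further")
    row.text = text
    row.links = list(links)


def publish(row: ClaimRow) -> str:
    """The deputy editor copies the text to 'Tweet' and posts it with the links."""
    if row.text is None:
        raise ValueError("no drafted text to publish")
    row.tweet = row.text
    return " ".join([row.tweet, *row.links])


def share_published(rows: List[ClaimRow]) -> float:
    """Share of identified claims that ended up as tweeted fact-checks."""
    return sum(r.tweet is not None for r in rows) / len(rows)
```

With five placeholder rows of which one passes through all three steps, `share_published` returns 0.2, which mirrors the roughly one-in-five ratio reported above. The sketch also makes visible that each stage acts as a filter: a claim that is never bolded cannot be drafted, and a claim without drafted text cannot be published.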

Table 1. Number of claims identified and fact-checks posted on Twitter by Faktisk.no during the party leader debates preceding the parliamentary election in Norway on 13 September 2021.

During the debates, the small newsroom was filled with a tense atmosphere coupled with an explicit awareness that the risk of making mistakes was high. Finding the right balance between immediacy and accuracy was at times determined by decisions made within a few seconds. The following example illustrates this: At 10.03 pm during the debate on 31 August, a fact-checker shouted that a bar chart to be used in a fact-check on oil production was ready. The deputy editor immediately copied the link from the spreadsheet into Twitter, but a few seconds later the fact-checker shouted ‘Stop! I must change something!’. She had mistaken oil production per day for production per year in the chart. Fifteen seconds later, the fact-checker said she was done with the update, but the damage had already been done. Several Twitter users had already pointed out the mistake, and the deputy editor deleted the whole tweet while moaning loudly. We observed a few other such mistakes, and the deputy editor stressed several times the importance of avoiding mistakes, not only to avoid losing credibility but also to avoid retribution.

The characteristics of the live fact-checks

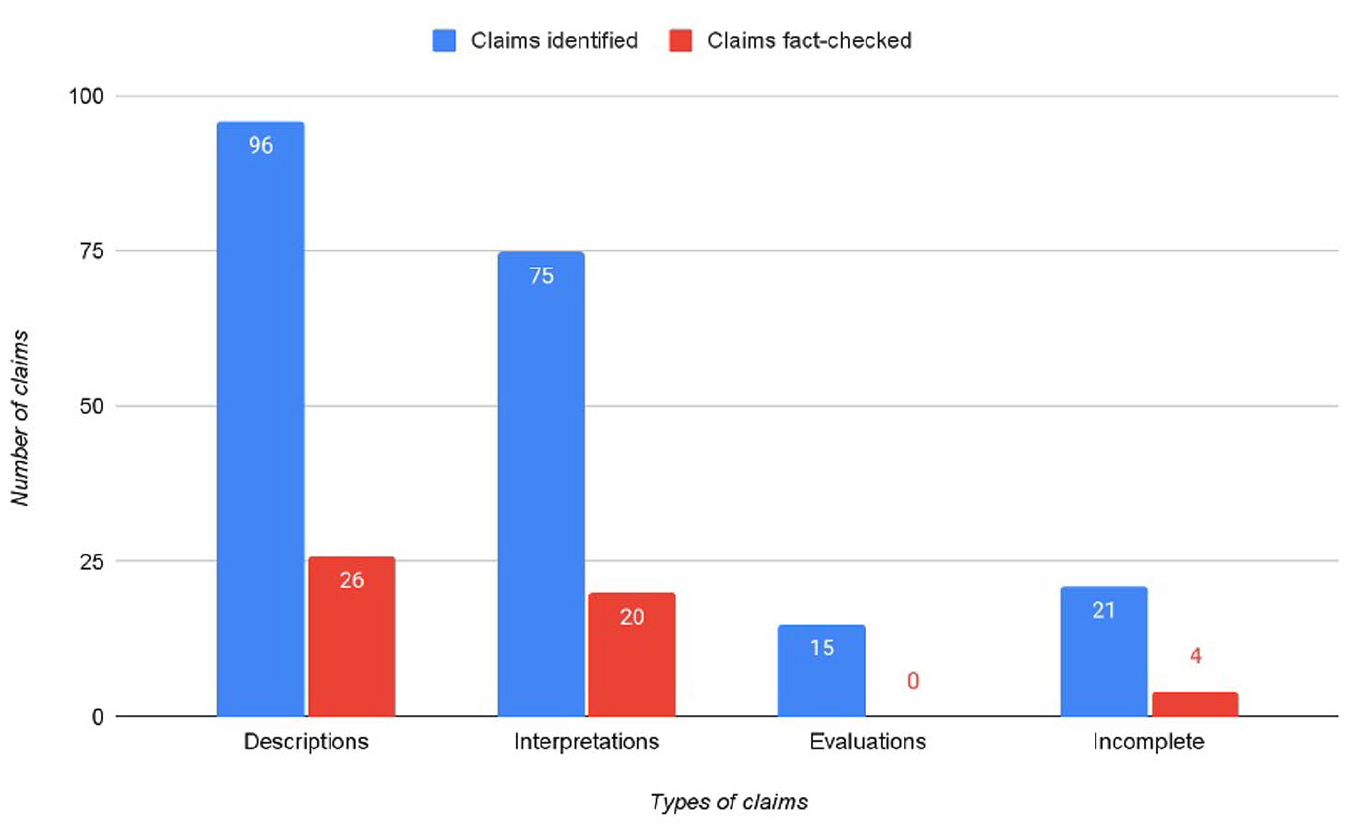

In this section, we will present the findings of the analysis of identified and fact-checked claims according to the typology of statements presented earlier. Most of the claims identified during the three live fact-checks were either descriptions (46%) or interpretations (36%). Seven per cent were evaluations. A few claims were categorised as incomplete because they weren’t written in full in the Google spreadsheet, even though some of them were fact-checked (see Figure 1).

Types of claims identified and fact-checks produced by Faktisk.no during the party leader debates preceding the parliamentary elections in Norway, 13 September 2021 (

As seen in Figure 1, more than half (52%) of the claims that were fact-checked were descriptive claims, meaning claims that were fact-based, uncontroversial, and without definitions, causal mechanisms or perspectivations. Typical examples of such claims were as follows:

‘8 out of 10 fear we are headed towards a divided national health service’ (claim by the leader of the Labour Party during the debate 16 August 2021);

‘During the last years, fewer people are in need of social security benefits’ (claim by the leader of the Conservative Party during the debate 31 August 2021).

Forty per cent of all fact-checked claims were interpretations. This is a higher share than what we found in the 31 ordinary fact-checks produced by Faktisk that we analysed from the Google Fact-Check Explorer data, of which one-third were based on interpretive claims. This suggests that Faktisk did not shy away from live fact-checking more complex claims. However, the interpretive part of the claims was not considered in the fact-checks, which either focused on the descriptive part of the claims only or did not problematise the definitions the claims were based on. A typical example of such interpretive claims and the fact-checks was as follows:

‘3000 more teachers are now working in our schools, thanks to KrF’s teacher norm’ (claim by the leader of the Christian Democratic Party (KrF) during the debate on 10 September). The fact-check of this claim focused only on whether there are 3000 more teachers and did not discuss the claimed causal relationship with the politics of KrF.

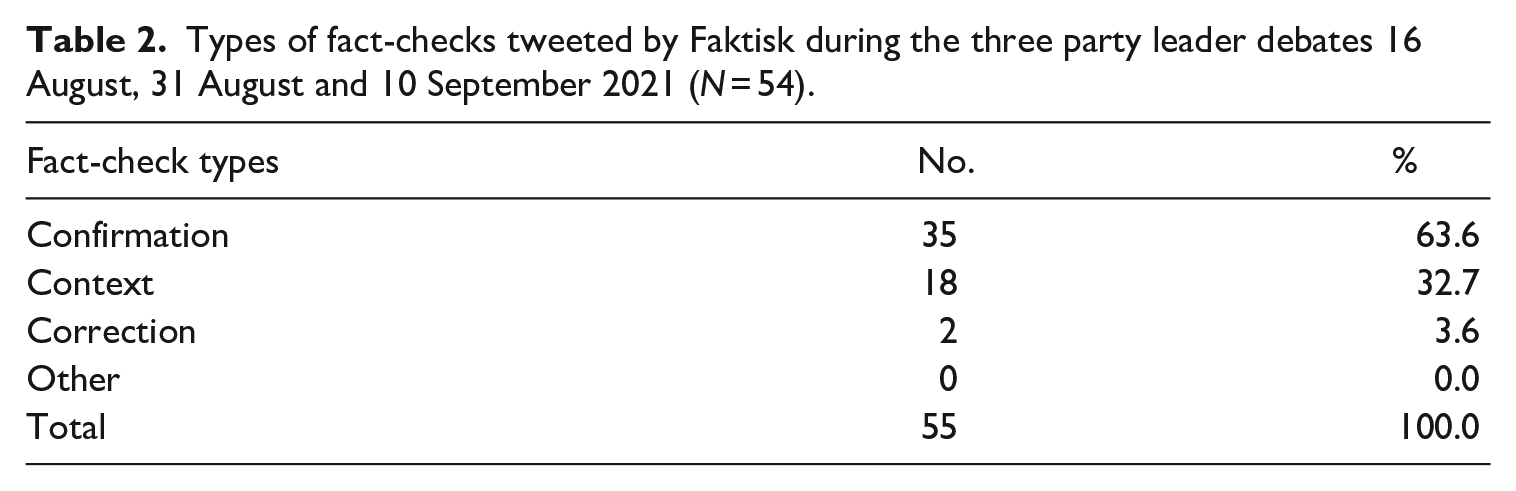

This way of reducing interpretive claims to descriptive claims made the fact-checks less complex. We find three additional characteristics of the fact-checks which also reduced the complexity: first, and as seen in Table 2, most of the tweeted fact-checks confirmed claims, while only two of them corrected claims. This is very different from the fact-checks Faktisk normally produces, according to our analysis of the Google Fact-Check Explorer data. Twenty-five of these 31 ordinary fact-checks were corrections, while 4 were confirmations.

Types of fact-checks tweeted by Faktisk during the three party leader debates 16 August, 31 August and 10 September 2021 (

Correcting a claim is, one could argue, more controversial and thereby a more complex process than confirming a claim. This would suggest that when time is of the essence, it is easier to produce a fact-check that confirms a claim than one that corrects it.

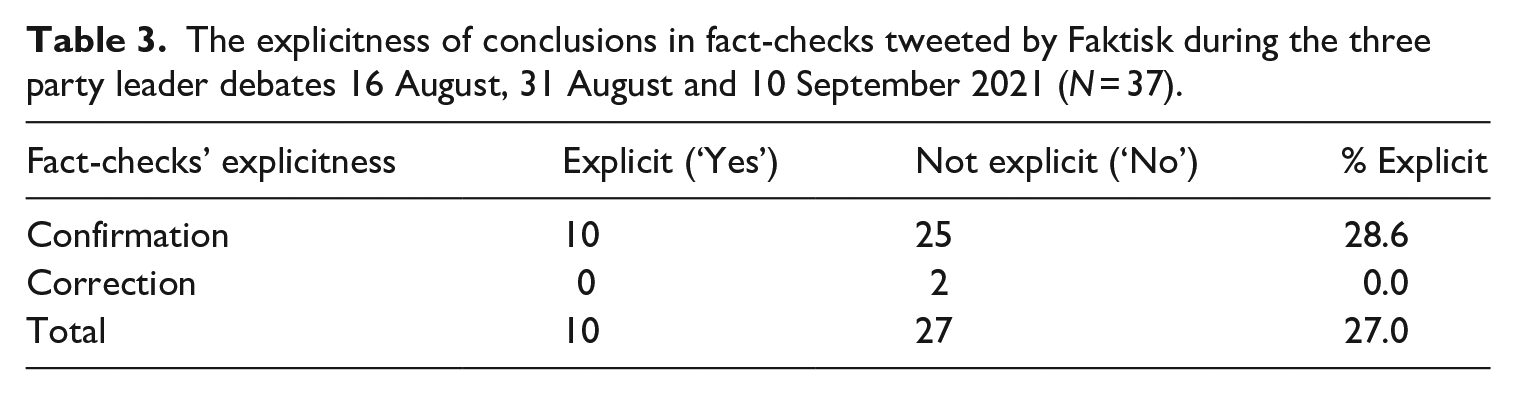

The second complexity-reducing characteristic is related to the fact that about one-third of the fact-checks neither confirmed nor corrected a claim but instead provided contextual information to the claim or to a topic discussed. This represents a move from fact-checking to providing relevant information, a move made easier by the fact that the fact-checks at Faktisk were no longer accompanied by the truth scale in which conclusions had to be stated. This, in turn, is related to the third complexity-reducing characteristic, namely, that most of the fact-checks which confirmed or corrected a claim did not do so explicitly. As seen in Table 3, the two fact-checks that corrected claims did not do so explicitly, and only seven of the confirming fact-checks stated explicitly that the claim was confirmed or correct. Again, this finding is very different from what Faktisk does in ordinary fact-checks. In the Google Fact-Check Explorer data, we found that 29 of the 31 fact-checks concluded explicitly with statements such as ‘this is wrong’ or ‘this is correct’.

Table 3. The explicitness of conclusions in fact-checks tweeted by Faktisk during the three party leader debates 16 August, 31 August and 10 September 2021 (

Sourcing live fact-checks

The 55 fact-checks referenced 59 sources. Almost one-third of these sources were statistical bureaus, predominantly Statistics Norway, SSB (12 occurrences). Governmental sources were used 7 times, while data from government directorates were used as sources 10 times, thereby making government bodies the most dominant source type. Three of the live fact-checks were based on previous fact-checks produced by Faktisk, while other Norwegian news media were used as sources 10 times. Three fact-checks did not disclose any sources.

If we consider the types of data material the sources contained, we find that statistical data (including data from surveys and polls) was most common. If we include economic data (mostly from the national budget), we find that 83% of the source material was numeric data.

Discussion

This section first discusses the findings in relation to the strategies Faktisk used in their live fact-checking (RQ1) and second in relation to the epistemological consequences of those strategies (RQ2).

Epistemic gap-reducing strategies in live fact-checking

The practice of live fact-checking political debates bridges what can be characterised as two different epistemic logics: a breaking news epistemic logic, especially connected with desk reporting of breaking news, in which a norm of immediacy in reporting pushes journalists to lower their epistemic efforts and adapt knowledge claims (Ekström et al., 2021), and a fact-checking logic, in which norms of accuracy and thorough investigation push fact-checkers to raise their epistemic efforts and produce credible knowledge claims. In order to bridge this epistemic gap between the two logics, our study identifies three key strategies utilised by Faktisk.

First, the newsroom used a

Second, the newsroom deployed a

Third, the fact-checkers used a

These strategies align well with findings from previous research regarding the epistemology of breaking news. The emphasis on descriptive claims and thereby descriptive rather than interpretive or evaluative language is the same kind of

Pre-epistemic certainty and confirmative epistemology

RQ2 asked what the epistemological consequences of introducing a breaking news logic to political fact-checking were. One significant consequence was that most of the epistemic work was moved to the preparation phase, before claims to fact-check were identified. This is quite different from ordinary fact-checking, where much epistemic work is performed after a claim is identified and a decision to fact-check it is made. The immediacy of live fact-checking left little time to engage in epistemic work during the actual debates. Moreover, the affordances of Twitter left little room for nuances and possibilities for balancing various sources against each other.

This created a reliance on sources and source material with as little potential for creating controversy as possible. Sources with predefined credibility (like Statistics Norway (SSB), governmental bodies and other news media organisations) and source material seemingly deprived of interpretive potential (numbers) were prioritised. We therefore argue that the practice of live fact-checking resembled what Pleasants (2009: 670), based on Wittgenstein’s discussions in

The fact that most of the fact-checks produced during the live debates were confirming claims rather than debunking them – a characteristic that strongly differs from the fact-checks Faktisk normally produces – is also indicative of this reliance on pre-epistemic certainty. Combined, we find that these two characteristics constituted the live fact-checking as a practice based on what we label

A consequence of this confirmative epistemology was that the live fact-checking diverged from traditional political fact-checking in several respects. First, the live fact-checking became, at least to a certain degree, detached from political claims and instead geared towards providing general, uncontroversial information regarding the topics the politicians debated. Second, the live fact-checking served more as a certifier of hegemonic perspectives than as a corrective of such perspectives. The practice thereby adhered to ideals of enlightenment and information provision – and thereby to what Hanitzsch et al. (2019) label the ‘accommodative role’ of journalists – rather than ideals associated with holding those in power accountable, that is, the ‘monitorial role’. As such, the practice of live fact-checking analysed in this article seems to align with Uscinski’s (2015: 243) argument about political fact-checking being a ‘veiled continuation of politics’. Moreover, the practice mirrors the practice of crisis reporting, in which a symbiotic relationship between journalism and political authority often occurs in what Hallin (1984: 116–118) labelled a ‘sphere of consensus’. This might seem like a paradox, since political fact-checking in many respects epitomises the ‘fourth estate’ ideal and is strongly associated with a critical distance to political elites (Graves, 2016). An interesting question is, therefore, whether the immediacy of breaking news is incompatible with the critical perspectives normally embedded in political fact-checking.

Conclusion

In this article, we have investigated the novel practice of live fact-checking of political debates, as performed by the Norwegian fact-checking organisation Faktisk. The article has specifically addressed the practical and epistemological consequences of merging the logic of breaking news production with that of political fact-checking, which are very different logics when it comes to the epistemic efforts involved. Our findings indicate that the three strategies of

These epistemological consequences made enlightenment and information provision important ideals guiding the practice, which thereby adhered more to an accommodative journalistic role than to the more critical ‘fourth estate’ monitorial role normally associated with political fact-checking. There is, therefore, a risk that live political fact-checking, with its reduction of epistemological complexity and reliance on what we have discussed as pre-epistemic certainty, might cater to the political elite more so than to the critical public. A potential consequence of this is that live political fact-checking, as performed by Faktisk, might add fuel to the growing criticism of mainstream media lacking diversity of perspectives and critical distance to elites (Zelizer et al., 2022). This is, therefore, an important area for future research, as it relates to questions concerning who and what political fact-checking, and journalism in general, are for.

Based on these conclusions, one might ask why fact-checkers perform live fact-checking of political debates. Even though the immediacy of live fact-checking clearly constrains what such a practice can achieve, questioning it would be like questioning why journalists produce news. Immediacy is, and has always been, at the heart of news production. It is a defining characteristic of what journalism is (Deuze, 2005), and fact-checking is no different. As our findings demonstrate, the sense of urgency that shaped the work performed by the fact-checkers of Faktisk – particularly during election campaigns – was existential in nature. It was part of the very reason for the existence of the organisation. However, this existential significance of immediacy might be explained by the fact that Faktisk was established and financed by legacy journalistic media organisations (and fact-checkers were recruited from the same organisations). The tremendous growth in fact-checking organisations worldwide in recent years also means a diversification of the field in terms of institutional belonging. An interesting question for future research is, therefore, whether fact-checkers coming from other institutional settings, for instance, nongovernmental organisations (NGOs), have different understandings of the significance of immediacy and thereby of what political fact-checking is or should be.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Research Council of Norway under grant number 302303.