Abstract

This article approaches aerial images from a media geographical perspective, tracing the development of three modes of seeing from above: historical aerial reconnaissance before and after the introduction of aerial photography; the advent of satellite-based remote sensing during the space age; and finally the development of drone-based imaging practices in the second half of the 20th century. Starting from the assumption that the aerial image is not a geomedium per se, but rather one that emerges as such through various co-operative processes of establishing geographical references with and within it, we draw on Goodwin’s notion of “co-operative action” and Farocki’s concept of “operative imagery” to theorize useful aerial images as co-operative images. Consequently, our historical analysis looks beyond the aerial image itself, taking into account the wider practices, infrastructures, and intended use cases involved in its operationalization.

Co-operative aerial images

Whether as satellite images in Google Maps or as real-time drone footage, aerial images have become ubiquitous elements of the media landscape we encounter in our everyday lives. We use them to navigate, find specific locations, or measure distances, usually with a simple click or swipe. Accordingly, aerial images nowadays appear to their users as readily available interfaces, georeferential “ready-mades,” so to speak. The preceding transformations, which generate the referentiality and computability of these “sedimented architectures for perception” (Goodwin, 2018: 245), usually remain opaque. This article will shed light on these transformations by asking how the view from above is rendered operational (Farocki, 2004; Hoel, 2018) through co-operative action (Goodwin, 2018), turning aerial observations and photographs into geomedia in the process.

Historically, the notion of geomedia (Lapenta, 2011; McQuire, 2016; Thielmann, 2010) and the geomedial practices of aerial images (Abend, 2013; Hildebrand, 2017) have been tied to the digital qualities of locative media and the socio-technical changes brought about by their ubiquitous availability. To engage with the pre-digital aerial image, we follow a less technology-centered approach, as proposed by Fast et al. (2018), who define geomedia “[. . .] as a relational concept that captures the fundamental role of media in organizing and giving meaning to processes and activities in space” (p. 4). Starting from the assumption that aerial photographs are not geomedia per se, we shift the focus toward their production, asking how they attain the ability to organize and give meaning to activities in space, or, to be more specific, which practices and processes historically allowed aerial images to act as useful geographical references in subsequent operations.

“Useful images” (Nohr, 2014) that become “part of an operation” (Farocki, 2004: 17) in this fashion have been variously theorized as “operative images” (Hoel, 2018; Krämer, 2009), sometimes with a specific focus on (semi-)autonomous forms of vision and processing carried out by machines (Andrejevic, 2022; Farocki, 2004). As we will show, the chains of operations in whose context the aerial image can be considered operative become increasingly intricate over the course of its—predominantly military—history, enlisting more and more human and nonhuman actors as time goes on. To account for the various—and not always straightforward—ways in which the efforts of these actors relate to each other, we draw on Charles Goodwin’s (2018) notion of “co-operative action” based on “substrates,” that is, materials left behind by earlier actors within the chain:

Both in the immediate presence of others, and when working with materials created by a predecessor, action is built by performing operations on a substrate created by someone else. The new action cumulatively incorporates the materials and resources provided by the substrate, and is thus co-operative, something built through the separate contributions of different actors. (Goodwin, 2018: 258)

Bringing Goodwin into dialogue with the notion of operative images, we arrive at the concept of the co-operative aerial image—an image whose usefulness in organizing activities in space derives from the various layers of co-operative action inscribed into it. This conflation of the image’s operative and geomedial qualities is supported by Aud Sissel Hoel’s (2018: 12) observation that if “[. . .] images are instruments, interfaces, measuring media, manipulable diagrams—the boundaries between image and medium start to become porous, leaving both terms transformed.”

Our analysis will trace the operationalization of the view from above through three major developments in the field of aerial reconnaissance: the introduction of aerial photography in the late 19th century; the advent of satellite imagery during the space age; and finally the development of drones in the second half of the 20th century. This entails engaging with the employed mobility platforms, the practices and infrastructures used for capturing, transmitting, and computing the images, as well as with the further social and political applications they are being produced for. In this media historical approach, we situate the view from above in relation to two dominant perspectival modes—the vertical and the non-orthogonal, oblique view—which have long been deemed to be “useful” in the context of various practices. In doing so, we will show that the aerial images’ capacity for organizing processes and activities in space both depends on and becomes part of specific spatiotemporal regimes that can be traced through history alongside the images themselves.

In addition to the review of scientific literature on the three areas, we methodologically rely on the analysis of historical documents, such as manuals, protocols, and reports.

The emphasis on military practices of seeing from above stems from the fact that for most of the time span in question, the military held a quasi-monopoly on advanced aeronautics and aerial imaging.

Early aerial reconnaissance

Operationalizing the view from above

As many of the practices, rules, and infrastructures which will later enable the operationalization of the aerophotograph are already established in the context of balloon observation, it is there—well before the popularization of photography—that we will start our history of the aerial image as a geomedium.

The development of ballooning at the end of the 18th century made it possible to verify the constructed bird’s-eye views found in Renaissance artworks such as Da Vinci’s “Imola Plan” and Jacopo De Barbari’s “Plan of Venice.” The balloon transported the human gaze to previously impossible heights, bringing about a veritable “balloonomania” (Kaplan, 2018), which produced numerous drawings, reports, and newspaper articles about this “new way to see.” On the one hand, these early records convey the immediate, sensory experiences of the early aeronauts; on the other hand, they testify to the fact that the bird’s-eye view first had to be learned (Kaplan, 2018). This learning process consisted in understanding the four transformations Caren Kaplan (2013) deems characteristic of the aerostatic view: “changes in scale, access to the previously inaccessible, sharp delineation and equalization or flattening” (p. 31). As Kaplan (2018) notes, one of the early balloonists, Thomas Baldwin, already speculated on harnessing the transformations provided by the balloon view for geographic purposes, even anticipating the practice of projection-based overlays:

A new system, that of Balloon-Geography here suggested itself: in which the essentials of proportion and bearings would be far more accurate, than by the present method, both for maps and charts, viz. to make drawing by sight, from the car of a balloon, with a camera obscura, aided by a micrometer applied to the underside of the transparent glass. (Baldwin, 1786: 133)

In the context of military operations, aerostatic viewing platforms provided a strategic advantage in much the same fashion as earlier forms of lookouts—like watchtowers—had done by allowing the observation of troop movements from an elevated perspective. Accordingly, the Field Service Manual for Balloon Companies (1917), first published by the French forces and later translated for the American Signal Corps, begins with the simple statement:

The balloon is an observation station from which by reason of its height and relatively fixed position in space continuous observation and immediate direct transmission of data are possible. (p. 1)

This short statement reveals two themes that remain central to the various forms of aerial imagery that would soon emerge. On the one hand, the fixed and, more importantly, known position of the observation platform plays a crucial role in the operationalization of the image, as it provides the most basic point of reference to which the observed objects can be related. On the other hand, the Field Service Manual already frames the surveying of the surrounding situation as a data-generating practice, a form of rendering observations quantifiable, which does not end with the supposedly simple act of seeing. Rather, the visual recording of the environment is understood as part of an operational chain, which also includes the transmission and further processing of the information.

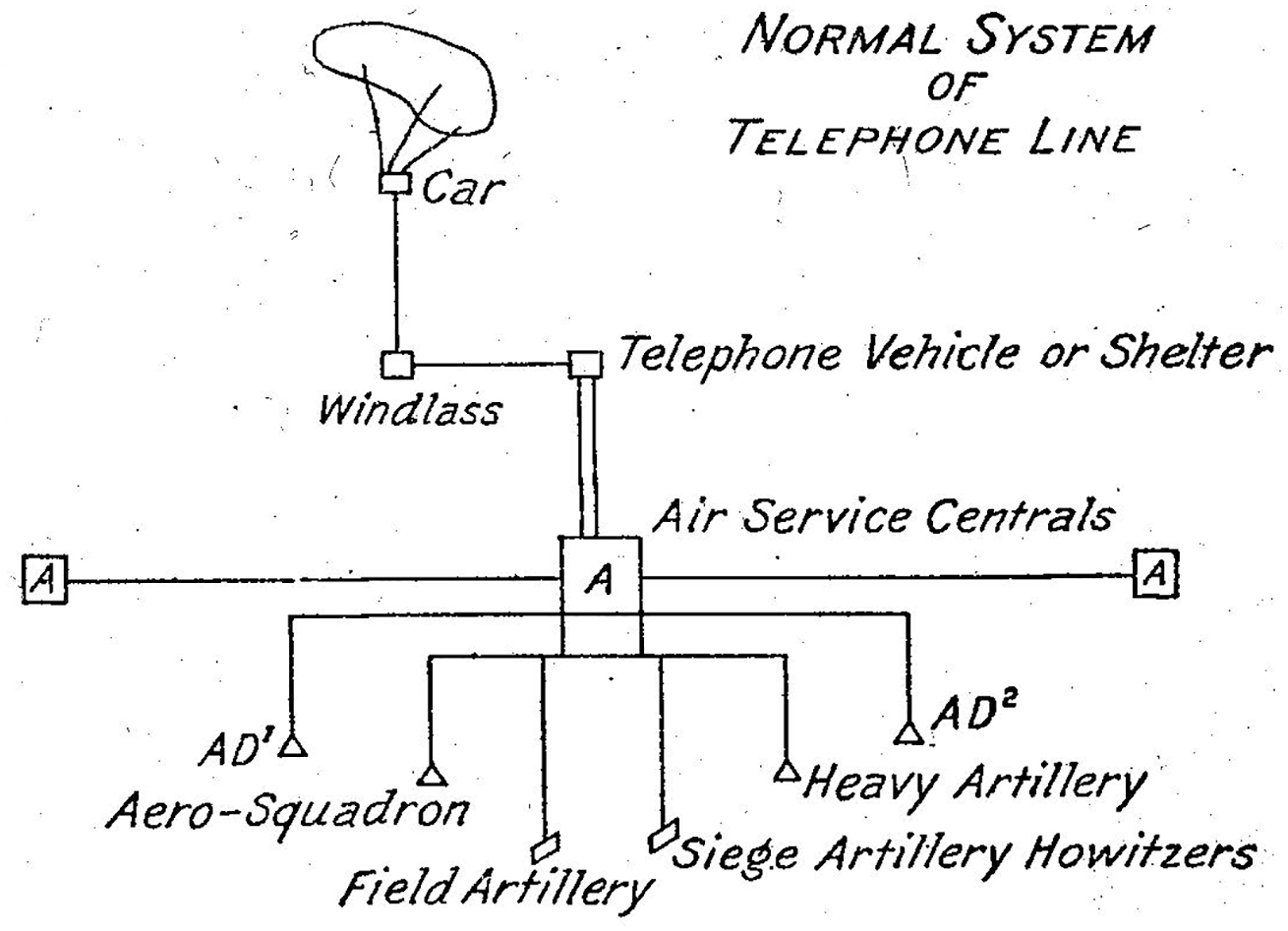

While the air-to-ground communication initially took the form of handwritten notes or hastily prepared sketch maps that had to be dropped by the balloon pilots and picked up by the ground crew, the slowness of the process eventually led to the use of modern communication technology: the winch cable was supplemented by a telegraph or telephone line, through which the balloonist or aérostier was in constant contact with the ground crew and the artillery batteries he directed. This infrastructural embedding in a precisely planned communication network calculated to minimize latency (Figure 1) was intended to guarantee the rapid and accurate transmission of data. At the same time, disciplining the balloonist’s gaze seemed to promise a reduction in subjective interpretations and thus an increased congruence between the reports and the situation on the battlefield: “As a general rule reports rendered should be unvarnished, that is, only what is actually seen should be mentioned to the exclusion of all personal interpretation of the observer” (Field Service Manual for Balloon Companies, 1917: 22).

Figure 1. Communication chain of an observation balloon (Field Service Manual for Balloon Companies, 1917: 19).

The aérostier was supposed to satisfy a claim to objectivity based on the mechanistic notion of an “unvarnished” perception preceding further subjective assessments, an idea presumably influenced by the recent development of photography and its understanding as a technology able to capture reality “as it is.” In the context of our argument, this disciplining can be understood as an attempt at operationalizing the aérostier’s gaze, producing a formalized substrate ready to serve as the basis for further co-operative action. The idealized observer position assumed in this way, however, can hardly be reconciled with the actual reports of balloonists, who frequently complained about unstable flight conditions and views restricted by weather and smoke (Holmes, 2013). The flattening effect caused by the elevated viewpoint meant that even familiar structures on the ground (now reduced to their upper surface) necessarily became the objects of guesswork and interpretation. Accordingly, there could be no question at all of a “human camera” feeding objective, that is, unfiltered data about the situation into the connected communications networks. The situated practice of surveying the battlefield was always contingent on individual interpretation and inference and thus ran counter to the ideal of an impersonal “view from nowhere” (Haraway, 1988), or rather from “no one,” as it was phrased in the Field Service Manual for Balloon Companies.

From observing to imaging

At the beginning of World War I, the need to extend reconnaissance to areas behind enemy lines led to an increase in the use of airplanes, which had the advantage of higher mobility when compared to balloons. Due to the expansion and complexity of the war effort, as well as the improvement of air defenses and the resulting necessity of higher flight altitudes, plane operators quickly found themselves overburdened with the task of observing their surroundings while piloting their machines (Siegert, 1992). In order to relieve the pilots, the use of two-seater aircraft with dedicated onboard observers was soon established. While the mobility platform had changed, the observers’ method of recording their sightings still followed the aérostier’s earlier example of linking the physical landscape to the geometrical account of the map via common landmarks. This coordination of geographic knowledge gathered from above with already existing data sets or maps obtained from ground surveys represents the archetype of the practice of “ground truthing”—a practice that would go on to shape the cartographic instrumentalization of all future forms of vertical aerial and satellite imagery (Cosgrove and Fox, 2010: 24).

The map—being the achievement of previous co-operative cartographic efforts—not only enabled observers to orient themselves spatially, but also provided a framework for georeferencing their observations, which now could be condensed into symbols placed within a coordinate grid before transmission. Maps and coding books thus became what Goodwin calls “environmental laminations” helping to create “[. . .] a relational package for constituting meaning that links operations on a consequential environment, including perception, to an actor carrying out particular forms of action” (Goodwin, 2018: 262). To ensure rapid communication of the collected information, the notes were dropped over specific locations on the ground. Further optimizing the flow of these informational substrates meant moving the infrastructures for evaluating aerial reconnaissance reports closer to airfields and landing sites, with the centers of command following suit (Finnegan, 2006).

At the same time, aircrews started taking aerial photographs with their own 35-mm cameras. However, there were no organized efforts to make use of these photographs, since the process of rendering them co-operative—their development and evaluation—was still too time-consuming. Thus, the aerial photograph was first used as a souvenir, which the flight crews sent to their families (Collier, 1994).

The ongoing positional warfare and the ever-growing labyrinthine trench courses of World War I foregrounded the shortcomings of ground-based survey practices which turned out to be cumbersome and sometimes outright impossible, when it came to charting contested territory with a survey table and fragmented geodetic networks (Collier, 1994). These developments also affected the accuracy of observations made from the air, as the geographical reference system became less reliable. As only vertical aerial photography could meet the informational demands of the military, cartographic material was soon regularly supplemented and updated by pictures taken from above, prompting a sharp increase in the number of images taken. The industrial scale of production and the linear structure in which the processes of image-taking, retrieval, development, and evaluation were arranged led Allan Sekula to note that “[t]he making of these reconnaissance prints was one of the first instances of virtual assembly-line image production. [Henry Ford’s first automobile assembly-line became operative only in 1914]” (Sekula, 1975: 28).

Whereas aérostiers had already been under strict orders to keep observation and interpretation separate, the introduction of airplane photography further disjoined these processes in both a personal and a spatiotemporal sense. While the individual aeronaut had tried to understand the landscape above which he was hovering in situ, a team of trained interpreters now retrospectively viewed and evaluated the aerial photographs from the safety of the ground. Consequently, the emergence of the co-operative aerial image has to be understood through this transition from individual, situated practices of seeing and sense-making toward distributed practices of imaging and interpretation involving a growing number of human and nonhuman actors.

Operationalizing the aerophotograph

Whereas operationalizing the aérostier’s gaze had meant “reading” the landscape itself and transmitting codified bits of geographical information to the ground, operationalizing the aerophotograph shifted the interpretative effort toward “determining the three-dimensional identity of an ambiguous two-dimensional image, whose placement could then be fixed by a sign on a drawn map” (Sekula, 1975: 28). While the interpreter now was removed from the situation itself, engaging with an image of the terrain, the general logic of deflation (Latour, 1986), that is, the reduction of a terrain “[. . .] to a set of coded topographic features, ‘grounded’ by the digital logic of the grid” (Sekula, 1975: 28) largely remained the same.

Relating aerial photographs to other kinds of cartographic material was no easy task. Again, a frame of reference had to be established by finding common landmarks within both the map and the photograph—which could prove difficult, if the interpreter was unsure of what he saw in the image in the first place.

A common method of interpretation involved identifying flattened objects by proxy, usually by their shadows, from which the object’s shape, height, and tilt could be inferred. In preparation, the interpreter first placed the photograph within the context of a reference map and then oriented both the photograph and the map according to the light source within the image (Porter, 1921). The practice of turning the map and photograph until the shadows fell toward the interpreter not only highlights the role of the interpreter’s body within the specific spatial arrangement needed for translating one inscription of geographic information into another; it also enabled the interpreter to carry over insights from their everyday sense-making to the realm of “professional vision” (Goodwin, 1994), as it guaranteed that the shadows within the image behaved as one would expect from day-to-day experience. Consequently, this interpretational method led to a shift in the rhythm of image-taking practices, placing emphasis on series of multiple photographs taken at different times of the day to facilitate the cross-examination of multiple shadows.
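The geometric core of this shadow-based inference can be illustrated with a short sketch. It is a present-day simplification rather than a procedure documented in the historical manuals: it assumes flat ground, a vertical object, and a known sun elevation angle, and the function name `height_from_shadow` and its parameters are hypothetical.

```python
import math


def height_from_shadow(shadow_length_m: float, sun_elevation_deg: float) -> float:
    """Estimate the height of a vertical object from the length of its shadow.

    Assumes flat ground and a known sun elevation (the angle of the sun
    above the horizon). Both are simplifying assumptions for illustration.
    """
    if not 0 < sun_elevation_deg < 90:
        raise ValueError("sun elevation must lie strictly between 0 and 90 degrees")
    if shadow_length_m < 0:
        raise ValueError("shadow length cannot be negative")
    # The object, its shadow, and the sun ray form a right triangle:
    # height / shadow length = tan(sun elevation).
    return shadow_length_m * math.tan(math.radians(sun_elevation_deg))


# Hypothetical example: a 12 m shadow with the sun 30 degrees above the horizon.
estimated_height = height_from_shadow(12.0, 30.0)
print(f"Estimated object height: {estimated_height:.1f} m")
```

Because the result depends on the sun's elevation, the same object casts shadows of different lengths over the course of a day, which is consistent with the cross-examination of multiple shadows described above.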

Once the objects within the image had been identified, their exact positions were determined with the aid of a so-called “compilation diagram,” a simplified map of prominent river and road courses produced from the available survey table measurements and cadastral records. A staff of topographers and technical draftsmen then searched for common features between photograph and diagram in an effort to situate the image within its geographical context. Matching two or more features also meant that the photograph’s scale could be inferred from their distance within the diagram, allowing further measurements to be carried out directly within the photograph. The geographic metadata produced in this way was then inscribed on the glass negative for archival purposes.
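The scale inference described here can be sketched in a few lines. The following is an illustrative reconstruction under simplifying assumptions (a truly vertical photograph, flat terrain, and therefore one uniform scale across the image); the function names, units, and coordinates are hypothetical and not taken from the historical sources.

```python
import math

Point = tuple[float, float]


def photo_scale(photo_a: Point, photo_b: Point, ground_a: Point, ground_b: Point) -> float:
    """Return ground metres per photo millimetre from two matched features.

    photo_a, photo_b: positions of the two features in the photograph (mm).
    ground_a, ground_b: positions of the same features in the diagram (m).
    """
    photo_distance = math.dist(photo_a, photo_b)
    if photo_distance == 0:
        raise ValueError("the two features must be distinct in the photograph")
    return math.dist(ground_a, ground_b) / photo_distance


def ground_distance(photo_p: Point, photo_q: Point, scale: float) -> float:
    """Convert a distance measured in the photograph (mm) to metres on the ground."""
    return math.dist(photo_p, photo_q) * scale


# Hypothetical matched features: a road junction and a bridge.
scale = photo_scale((10.0, 20.0), (40.0, 60.0), (0.0, 0.0), (300.0, 400.0))

# Once the scale is known, any other distance can be measured in the photograph itself.
trench_length = ground_distance((0.0, 0.0), (6.0, 8.0), scale)
print(f"scale: {scale} m per mm, trench length: {trench_length} m")
```

With more than two matched features, the individual scale estimates could be compared with one another; large disagreements would hint at tilt or relief distortion, which the uniform-scale assumption of this sketch ignores.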

As these practices illustrate, the aerial image has to be understood as a sedimentary architecture consisting of the accumulated substrates of co-operative actions carried out by various predecessors. These involve the practices of image production, retrieval, and interpretation outlined above, but also prior efforts like the manufacturing of the cameras and airplanes, earlier mapping endeavors, the production of supplementary materials, and so on.

Besides these more obvious layers of co-operative action, yet another kind of accumulation has to be considered in the production of aerial photographs: The physical traces of warfare are not only inscribed into the landscape-turned-battlefield but also into the image of the terrain, forming another kind of substrate in the process. Here, Goodwin’s hyphenated use of the term “co-operation” allows us to describe the practice of wartime photography—which usually resulted in violence against the co-operating adversaries—without invoking associations of aligned interests and consent tied to the common usage of “co-operation”:

Instead, the organization of co-operative action is agnostic about mutual benefit and solidarity. Benefit to the other, and cost for the party performing the action, are possible outcomes of the process of building action co-operatively, but not among its defining characteristics. (Goodwin, 2018: 6)

Consequently, the knowledge of one’s participation in co-operative but adversarial practices of image-taking and interpretation led to attempts at purposefully hiding or creating misleading marks in the terrain. Allan Sekula (1975) implicitly acknowledges this kind of co-operative action between opposing factions when he describes the practice of camouflage induced by aerial reconnaissance as “[. . .] a low-level language game [. . .] in which the indexical status of the sign was thrown into question, thereby inflating the suspicions of the photo-interpreter” (p. 28).

When Bernhard Siegert (1992) suggests that the aerial photograph facilitates “geography” in a very literal sense, that is, the violent practice of “writing” and “re-writing” the terrain itself via constant bombardments, he likewise understands the battlefield as a sedimented architecture of co-operative action. Siegert’s “writing” is complemented by the interpreter’s “reading” of the photographs, creating a cycle in which the aerial mapping of a territory enables its further deformation, which in turn necessitates renewed and ever-faster photographic efforts to account for the changes (Figure 2).

Figure 2. Aerial photograph of the St. Eloi craters in Belgium in 1916 and their inscription on a map a year later (http://digitalarchive.mcmaster.ca).

Optically consistent vertical images

By 1915, the now firmly established practice of aerial reconnaissance had sparked the development of specialized equipment. The new cameras photographed vertically downward from the fuselage of the aircraft and employed various techniques to detect or compensate for possible skew. In some cases, the cameras were equipped with indicators for the angle of inclination whose readings showed up in the image, making it possible to extrapolate the scale of the photograph at a later time (MacLeod, 1919). These measures became necessary, as the usually oblique orientation of the camera apparatus and the resulting distorted geometry made it difficult to directly superimpose map and photograph—a practice that promised great improvements in the speed and accuracy of the interpretation (MacLeod, 1919).

The flattening effect resulting from the vertical orientation of the camera—the same flattening that had bewildered the early balloonists’ perception—here emerges as the basic condition for the further operationalization of the image, opening it up to a whole range of new practices of measurement and calculation (Andreas, 2015; Krämer, 2009). By providing a common perspective and therefore a homogeneous language of geometrical laws, the vertical view generated “optically consistent” (Latour, 1986, 2006) images that could be scaled and combined at will (Latour, 1986: 7). Pictures optically flattened through the orthographic perspective not only were consistent with other images taken in the same way, but they were also consistent with a wide array of substrates produced by traditional means of surveying and mapping in the form of flat inscriptions of topographic features. As a result, the vertical image could now tap into the operational qualities of the map, its “referentiality” and “connection to an ‘outside’” (Krämer, 2009: 103) more effectively than before.

Minor discrepancies in optical consistency could be retroactively corrected for via the use of so-called projectographs. These apparatuses projected the negatives directly onto a cartographic drawing, which could then be aligned with the projection by means of tilting and repositioning the drawing board. Re-enacting the geometrical relationship between the airplane and the ground with the help of projector and drawing board allowed the image technicians to correct for angular inaccuracies introduced during the flight itself—a process that would be greatly expanded and automated by later forms of algorithmic correction.

By converting the photographic central projection into an orthogonal projection, the corrective practice—called rectification—also shifted the assumed observer position within the geometric construction to infinity. Due to its position at the intersection of aeronautics and photography—at the time both cutting-edge scientific disciplines—the aerial photograph had already acquired a nimbus of objectivity that was now further reinforced by this dislodgement of the subjective observer position. Consequently, the balloonist’s “unvarnished” perception was seen as inferior to the camera mechanism’s ability to quickly generate “factual material” (Wecker, 1916: 3) of wide swathes of surveyed terrain. This upscaling was supported by the development of magazine cameras and intervalometers, ushering in new practices of continuous image-taking, which meant that multiple photographs taken on the same flight could be combined into one large photomosaic that could depict up to 60 km of surveyed territory (Andreas, 2015). Here, the process of operationalization shifts from singular images to series of photographs, utilizing the spatiotemporal relations between the images as the source for further co-operative action: photographs could be taken at either slightly different points in time, making use of the difference between the images to track changes in the landscape, or at slightly different points in space, making use of the congruence between overlapping parts of the photographs to create complex composite images. While the map would go on to enjoy the role of a unifying reference system, photographs were now also increasingly related to one another.

Although the vertical aerial photograph enabled a geometric and metrological recording of space, it made it difficult to interpret the structures and objects represented in the picture, making its primary purpose one of locating, not of identifying. Inversely, the oblique image facilitated the identification of objects and structures while eluding photogrammetric calculations and definite localization, leading Allan Sekula (1975) to note that “[e]ach of the two types gravitates toward a different kind of estheticized reading; one tends to deny the other to acknowledge the referential properties of the image” (p. 29).

As the inherent ambiguity of the vertical image is caused by the geometric relation between observer and observed objects, its indeterminacy cannot be “fixed” by simply raising the image quality. The resulting need to enlist the help of supplementary images and reports, forming an ad hoc network of relational geographical knowledge, was already reflected by the practitioners, as a report on aerial observation written in the 1920s evidences:

In the military sense, nothing is of independent value. Nothing exists except in its relation to something else [. . .]. Even the clearest and most detailed of aerial photographs may require other data—such as other photographs, or balloon reports, or the testimony of sour prisoners—to make it truly illuminating. (Porter, 1921: 182f)

These related data points could then be consolidated into a “plan directeur,” a “printed, contoured, co-ordinated map” (Porter, 1921: 122) to be distributed to one’s own troops in preparation for an attack, foregrounding the fact that the map still frequently acted as an “obligatory passage point” (Callon, 2006) through which all geographical information had to pass before being rendered actionable.

As we have shown, the aerial photograph’s rapid transformation from postcard motif to co-operatively achieved tool for co-operative action—from mere representation to geomedium—is underpinned by the rapid development of distributed practices of image-taking, combination, and interpretation. Within this process, the orthogonal perspective of the vertical aerial image plays a vital role, as it guarantees the image’s interoperability by means of optical consistency. While the integration of aerophotographs into the operational chains of military reconnaissance can initially be understood as a detour, reluctantly undertaken due to the impossibility of keeping battlefield maps up to date via ground-based methods of topographic surveying, aerial images that could be produced at high speeds and en masse would go on to become an indispensable substrate for co-operative mapping efforts—a trend that would only intensify with the advent of post-war remote sensing.

Satellite imagery

Remote sensing

The term “remote sensing” was first coined by US naval researcher Evelyn Pruitt in the 1950s. While it certainly seemed necessary to differentiate between photographs taken from airplanes and those taken from satellites at the dawn of the space age, “remote sensing” was soon used in a more general sense “[. . .] to describe the science—and art—of identifying, observing, and measuring an object without coming into direct contact with it” (Graham, 1999).

Accordingly, all aerial photography—starting with Julius Neubronner’s famous pigeon photography and similar experiments with kites and balloons—could be considered to be remote sensing avant la lettre. Through this conceptual lens, the history of the production of co-operative aerial images up to this point can be told as one of remotion, of increasingly detaching the observer from the observed situation in both a spatial and psychological sense: from the early balloonists’ attempts at forced objectivity, to the division of labor between photographers and interpreters in the context of airplane photography up to the high-altitude remote sensing employed by two political blocs eyeing each other suspiciously from afar.

In the context of satellite imagery, the term provides two important focal points for the discussion: on one hand, satellites’ greater operating altitude—or remoteness from earth’s surface—created new challenges for the operationalization of the image, necessitating new practices of camera calibration, image retrieval, storage and combination. On the other hand, the change from “observation” to the more general “sensing,” signals the increasing importance of machinic forms of perception able to capture and process information beyond the visible spectrum of the human eye.

Co-operative (geo)political action

In the 1950s, the trend toward increasing flight altitudes in hopes of avoiding countermeasures culminated in the development of the US-American U-2 spy plane. With the U-2, photographs taken from higher altitudes became widely used as a means of justification and persuasion in matters of foreign affairs.

In this context, the co-operative history inscribed into reconnaissance images becomes a point of contention in the political arena: in order to exert its power to organize actions in space, the image’s interpretation has to be defensible—at least until the proposed course of action has been taken. Consequently, the practices of interpretation leading up to the public presentation of the image are usually omitted in the argument (Kurgan, 2013), obfuscating the images’ status as “highly encoded, non-literal, non-transparent, and opaque documents” (Amad, 2012: 83) and creating an impression of geomedial ready-mades providing self-evident and transparent information instead.

If camouflage was aimed at casting doubt on the indexical status of individual signs within the image, the use of aerial imagery in foreign affairs saw the whole process of analyzing aerial photographs being called into question, as various stakeholders, including (geo)political adversaries, could not only put forth their own interpretations, but challenge the images’ veracity by foregrounding the interpretative nature of the practice itself.

These persuasive practices can be traced from the successful use of aerial photographs as evidence for the deployment of Soviet launchpads during the Cuban Missile Crisis, which consequently lent credibility to both the political actors and the practice of plane-based surveillance, up to the Bush administration’s forced concession that aerial images are indeed subject to cumbersome interpretative practices in the wake of Colin Powell’s attempt at justifying the Iraq War via a presentation of photo-slides (Cosgrove and Fox, 2010).

Where World War I aerophotography had primarily been employed within the circular order of mapping and shooting outlined above, spy plane and satellite imagery added several nuanced forms of (non-)action to the repertoire of possible further co-operative efforts. On one hand, satellite photography’s capabilities for surveilling vast swathes of territory in an almost panoptic fashion allowed states to discipline each other using the possibility of presenting accusatory photographs. On the other hand, mutual knowledge of each other’s capabilities for satellite reconnaissance produced strategies whereby one’s military assets could be purposefully exposed as a demonstration of strength. These strategies presupposed adversarial imaging practices in much the same way as the game of camouflage did, but with an inverse logic of willfully leaving substrates for the surveilling party in an attempt to influence their understanding of the situation. 3 Finally, as the case of the United States’ fear of a “Missile Gap” illustrates, satellite reconnaissance could also lead to a chain of operations ending in non-action or even a reduction in activity: the United States’ perceived inferiority in the number of ballistic missiles when compared with the USSR was proven to be unfounded by satellite imagery, leading to a cutback on the costly efforts to “catch up” (May, 1998).

Spatiotemporal regimes of image-taking and retrieval

Observation satellites are constantly moving across the earth’s surface at fixed altitudes, imposing a strict spatiotemporal regime on the process of operationalization: the target area can only be photographed at fixed intervals dictated by the time the satellite needs to complete its revolution around earth. In addition, the photographic material produced by early reconnaissance satellites could only be retrieved when the satellite flew over friendly or neutral territory—a limitation that remained even after radio transmission of image data became available with the NOAA polar orbiting spacecraft, a weather satellite (Cracknell, 2018). Complex logistical schemes employed to retrieve the images, like the use of “film-return buckets” in which undeveloped film material was sent back to earth (Day et al., 1998), foreground once more Bruno Latour’s (1986) observation that bringing back homogeneous pictures of the earth is a vital step in exerting control over a territory that has been mobilized in this way. While the satellite’s elliptical movement around the earth posed logistical difficulties for rendering the substrates of the image-taking process accessible to further co-operative action, it proved to be a useful resource in localizing the images. Reconnaissance satellites’ highly predictable orbits meant that knowledge of when an image had been taken could be directly translated into an assessment of where the image had been taken from, making it easier to connect the image to other sources of geographical information.
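The translation of "when" into "where" can be illustrated with a rough sketch. It assumes an idealized circular orbit over a spherical earth (an elliptical orbit would additionally require solving Kepler's equation), and the function names, altitude, inclination, and node longitude are illustrative placeholders, not historical mission parameters.

```python
import math

MU_EARTH = 398600.4418      # km^3/s^2, earth's gravitational parameter
R_EARTH = 6371.0            # km, mean radius (spherical-earth assumption)
OMEGA_EARTH = 7.2921159e-5  # rad/s, earth's rotation rate


def orbital_period(altitude_km):
    """Period in seconds of a circular orbit (Kepler's third law)."""
    a = R_EARTH + altitude_km
    return 2 * math.pi * math.sqrt(a ** 3 / MU_EARTH)


def ground_position(t_seconds, altitude_km, inclination_deg, node_lon_deg):
    """Latitude/longitude (degrees) below the satellite, t seconds after it
    crossed the equator northbound at longitude node_lon_deg (hypothetical input)."""
    period = orbital_period(altitude_km)
    u = 2 * math.pi * t_seconds / period          # angle travelled along the orbit
    inc = math.radians(inclination_deg)
    lat = math.asin(math.sin(inc) * math.sin(u))
    lon_inertial = math.atan2(math.cos(inc) * math.sin(u), math.cos(u))
    # The earth rotates eastward underneath the orbit while the satellite moves.
    lon = math.radians(node_lon_deg) + lon_inertial - OMEGA_EARTH * t_seconds
    lon = (lon + math.pi) % (2 * math.pi) - math.pi  # wrap to [-180, 180)
    return math.degrees(lat), math.degrees(lon)
```

Given a timestamp recorded with each exposure, a call such as `ground_position(t, 185.0, 80.0, 30.0)` would yield an approximate point on the ground track; in practice this estimate was only a starting point for connecting the image to other geographical sources.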

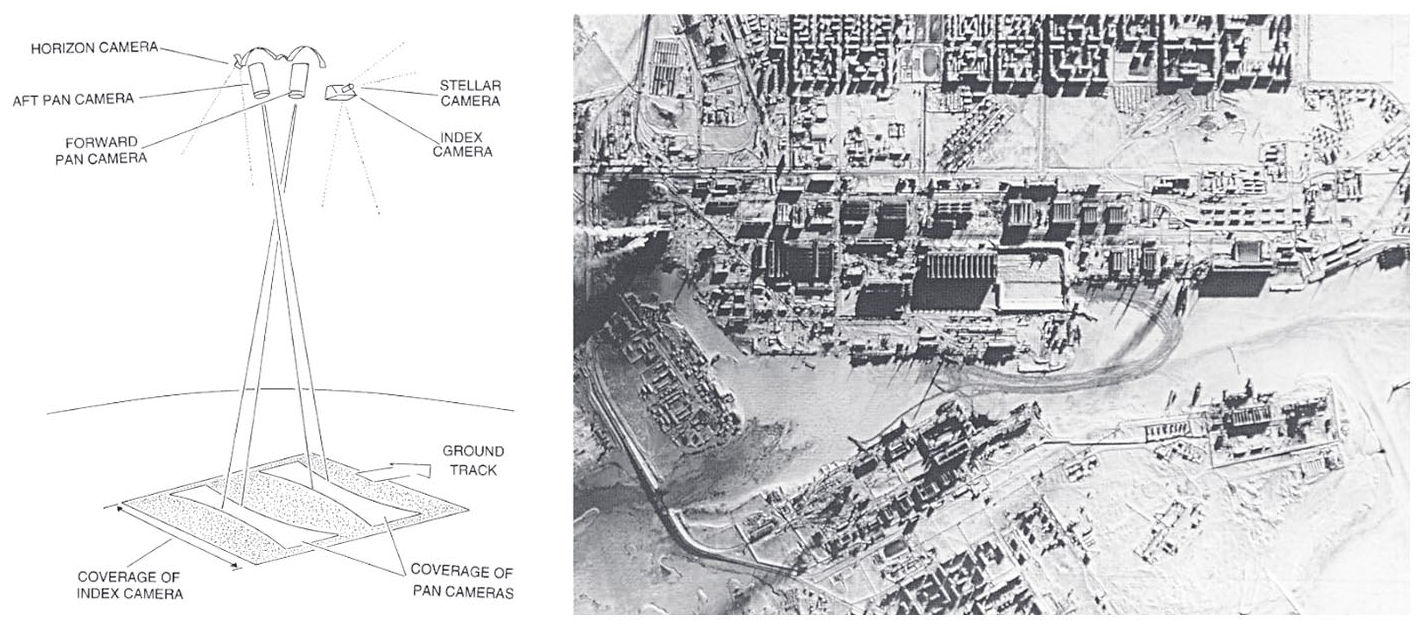

Later CORONA satellites carried multiple cameras, complicating the supplementary relation between images employing different perspectival modes. Two oblique—or panoramic—cameras provided the high image detail needed for object identification and allowed the stereoscopic measurement of heights within the image (Figures 3 and 4). Using these images, objects could be positioned to within a few miles, based on knowledge of the orbital track and altitude, the time that the photograph was taken, and the attitude of the spacecraft (Day et al., 1998). The latter could be determined in relation to the stars, which were captured by two additional cameras: an even more oblique “horizon camera” and an outward-looking “stellar camera.” While the ground—and its cartographic representation—no longer served as the only positional references but were now complemented by celestial reference points harking back to the traditional localization practices of astronavigation, the practice of locating the panoramic images precisely within a geographic framework still relied on a vertically aligned “index camera,” whose pictures also underwent a rectification process.

Coverage of a KH-4B camera system (Campbell, 1996).

Besides the domains of image retrieval and localization, the practices of camera calibration and “ground truthing” were also presented with technical challenges caused by the high altitudes of spy planes and satellites. Atmospheric and optical distortions, which were of little consequence at shorter distances, now resulted in significant discrepancies between the actual and imaged position and size relations between the objects being imaged. While the first response to these complications was further infrastructurization in the form of mobile and static target arrays that could be used as physical calibration marks—the most prominent being an array of concrete crosses in the desert of Arizona—the further development of onboard sensors for precise measurements of an air- or spacecraft’s altitude and attitude soon rendered these infrastructures obsolete.

Operationalizing image databases

For a long time, the output frequency of satellite photography outpaced the ensuing practices of processing and interpretation. Even after the infrastructures—which were initially aligned to the much slower pace of spy plane reconnaissance—had been upscaled, large numbers of images went straight to the archives (Wheelon, 1998). This continuous archiving led Lisa Parks to characterize the satellite image’s “tense [a]s one of latency” (Parks, 2005: 91), in which “[t]he satellite image is not really produced, then, until it is sorted, rendered, and put into circulation” (Parks, 2005: 91), allowing the images to be retrieved at any point in time deemed opportune. As Parks notes, this shift toward practices of “vision through time” (Parks, 2005: 91) enables views of the past to be rendered operational in the present moment, lending themselves not only to the persuasive practices outlined above, but also to a multitude of subsequent uses that could not be conceived at the time of image production. Whereas the operationalization of observations and images obtained during the infancy of aviation was characterized by a sense of urgency fueled by the desire to shorten the intervals between reconnaissance and military action, the satellite image has all the time in the world, so to speak. Image archives, in which the products of preceding co-operative actions are stored for possible future use, follow a sedimentary logic epitomized by Google Earth’s “Time Lapse” feature: a visualization of the historic development of different geographic regions relying on satellite images dating back as far as 1984.

The case of Google Earth exemplifies how the conditions of digital image production, distribution and processing reconfigure the way in which images are rendered co-operative. Large databases drastically widen the scale at which these images can be (re-)combined and overlaid; algorithms for automatically scaling, rectifying and overlapping aerial photographs not only automate processes previously carried out by human actors, but permit the combination of pictures taken under vastly different conditions (e.g. altitude, time of the day), ultimately resulting in global-scale photomosaics. While the practices outlined in the previous section made use of the difference or congruence between comparatively small image series, the satellite image’s usefulness now rests on aggregated data sets of satellite imagery being readily searchable. These databases-turned-maps can now, at least for the most part, be accessed by the public, opening the aerial view to a diverse field of non-military practices ranging from zoological research to spatial planning and art (Abend, 2013).

The sheer amount of image material, often supplemented by additional layers of sensor readings provided by instruments like the Landsat satellite’s Multispectral Scanner System, also led to the interpretative practices being increasingly reconfigured and redistributed between human and technical agents. Image analysis software simplifying measurements and promising better readability via adjustments to attributes like color and contrast, and GIS software providing cartographic frameworks and overlays, have become integral tools for the interpretation of aerial photographs. Furthermore, algorithms and—most recently—machine learning solutions are increasingly employed to automate practices formerly carried out by human interpreters, for example, deriving the structure of objects from their shadows (Liasis and Stavrou, 2016) or detecting change between images taken at different times (Lu et al., 2004). 4
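To give a sense of what automated change detection involves at its simplest, the following sketch applies pixel-wise image differencing with a threshold. It is a deliberately minimal stand-in and not necessarily the method used in the cited studies; it assumes two co-registered grayscale images of identical size, represented as nested lists, and the function name and threshold value are our own illustrative choices.

```python
def detect_change(before, after, threshold):
    """Return a mask marking pixels whose brightness changed by more than threshold.

    before, after: lists of rows of numeric pixel values (same dimensions).
    """
    if len(before) != len(after) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(before, after)
    ):
        raise ValueError("images must have identical dimensions")
    return [
        [abs(pa - pb) > threshold for pa, pb in zip(row_a, row_b)]
        for row_a, row_b in zip(before, after)
    ]


# Two tiny 2x3 "images": only the top-right pixel changes substantially.
image_1984 = [[10, 12, 11], [40, 41, 39]]
image_2004 = [[11, 12, 90], [40, 43, 39]]
change_mask = detect_change(image_1984, image_2004, threshold=20)
```

Even this toy version presupposes the preceding co-operative work described above: the two images must already have been scaled, rectified and aligned before their difference becomes meaningful.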

Drone imagery

Operationalizing the oblique image

While high-altitude reconnaissance provided extensive photographic coverage of the earth’s surface, the US military desired new methods of aerial imaging independent of the spatiotemporal regimes imposed by satellites to aid their operations in the Vietnam War. From the mid-1950s, the US relied on small radio-controlled aircraft to act as a vantage point closer to the ground—a development that would usher in the further operationalization of the oblique aerial image.

The early history of the drone is marked by a change of perspective from “an object that is seen as a target from below” to “an object that sees targets from above”: Between 1930 and 1950, the drone was initially developed as a realistic target display body designed to train ground-to-air defenses (Maxwell, 1992; O’Malley, 2013). Called “target drones,” these display bodies were guided by sight from the ground using simple remote controls. Efforts to equip these target drones with cameras did not take place until the mid-1950s, when developments in radar technology and air defense weapons made reconnaissance by manned aircraft considerably more dangerous. The attempts at removing human actors from aerial reconnaissance were also politically motivated: the tense situation of the Cold War evoked fears over the loss of manned aircraft which could lead to secret information being revealed by captured pilots or—in the worst case—to an escalation into open conflict (Newcome, 2004). While developments in satellite reconnaissance gradually allowed the mapping of large areas, the military still lacked information to supplement the satellite imagery and aid in identifying the imaged structures on the ground.

Consequently, smaller drone models, such as the MQM-57 Falconer, were outfitted with a photo camera that could be triggered by remote control. These were equipped with a radar enhancement device, which increased the radar signature of the drone, allowing the operator to pilot it without visual contact. Tracking the drone’s route in this fashion meant that radar detection also became a key element in the localization and subsequent operationalization of the images taken along the way.

A significant advantage over other established reconnaissance systems was that these small drones did not require their own major infrastructure. They could be deployed in a matter of minutes, and it took less than an hour to develop the images (Blom, 2010). The shortened chain of operations made these drones cost-effective and time-efficient, despite their rather makeshift qualities as aerial platforms. Similar to early balloon reconnaissance, the focus rested on obtaining and processing geographical information as quickly as possible. However, “seeing” in this case came at the price of being seen, as rendering the drone trackable not only aided in the production of co-operative images but exposed the drone to enemy radar—and subsequent targeting—at the same time.

This issue highlighted the need for automating navigational practices. Building on the development of the cruise missile, larger, mostly jet-propelled target drones, like the Ryan Aeronautical Model “Firefly,” were outfitted with a camera, mechanical timer, gyrocompass and altimeter. Equipped with a mechanical navigation system, they could be programmed to fly in a predetermined direction for a set time while remaining at a set altitude. When the timer expired, the drones returned in the opposite direction. This simple form of programming required precise planning of the flight to account for possible environmental and weather effects (Blom, 2010). The programming that preceded these images was thus essential for their operationalization: locating the images on a map meant placing them along the path described by the antecedent navigational data in the same fashion in which the knowledge of a satellite’s orbit underpinned the localization of the images taken by its cameras.
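The logic of locating images along a pre-programmed flight path can be sketched as a simple dead-reckoning calculation. The sketch assumes constant speed, a flat local map, no wind, and an instantaneous turn-around when the timer expires; all names and values are hypothetical and serve only to illustrate the principle.

```python
import math


def drone_position(t, heading_deg, speed, outbound_time):
    """Estimated (east, north) offset from the launch point at elapsed time t.

    heading_deg: programmed compass heading of the outbound leg (0 = north).
    speed: assumed constant ground speed (distance units per second).
    outbound_time: timer setting in seconds, after which the drone flies back.
    """
    if not 0 <= t <= 2 * outbound_time:
        raise ValueError("t lies outside the programmed flight")
    if t <= outbound_time:
        distance = speed * t                        # still flying outbound
    else:
        distance = speed * (2 * outbound_time - t)  # returning along the same line
    heading = math.radians(heading_deg)
    return distance * math.sin(heading), distance * math.cos(heading)


# A photograph exposed 300 s into a flight heading due east at 100 m/s
# with a 600 s timer would be placed roughly 30 km east of the launch site.
east, north = drone_position(300, 90.0, 100.0, 600)
```

Any wind drift or deviation in speed shifts the real position away from this estimate, which is why the planning that preceded the flight had to anticipate environmental effects as precisely as possible.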

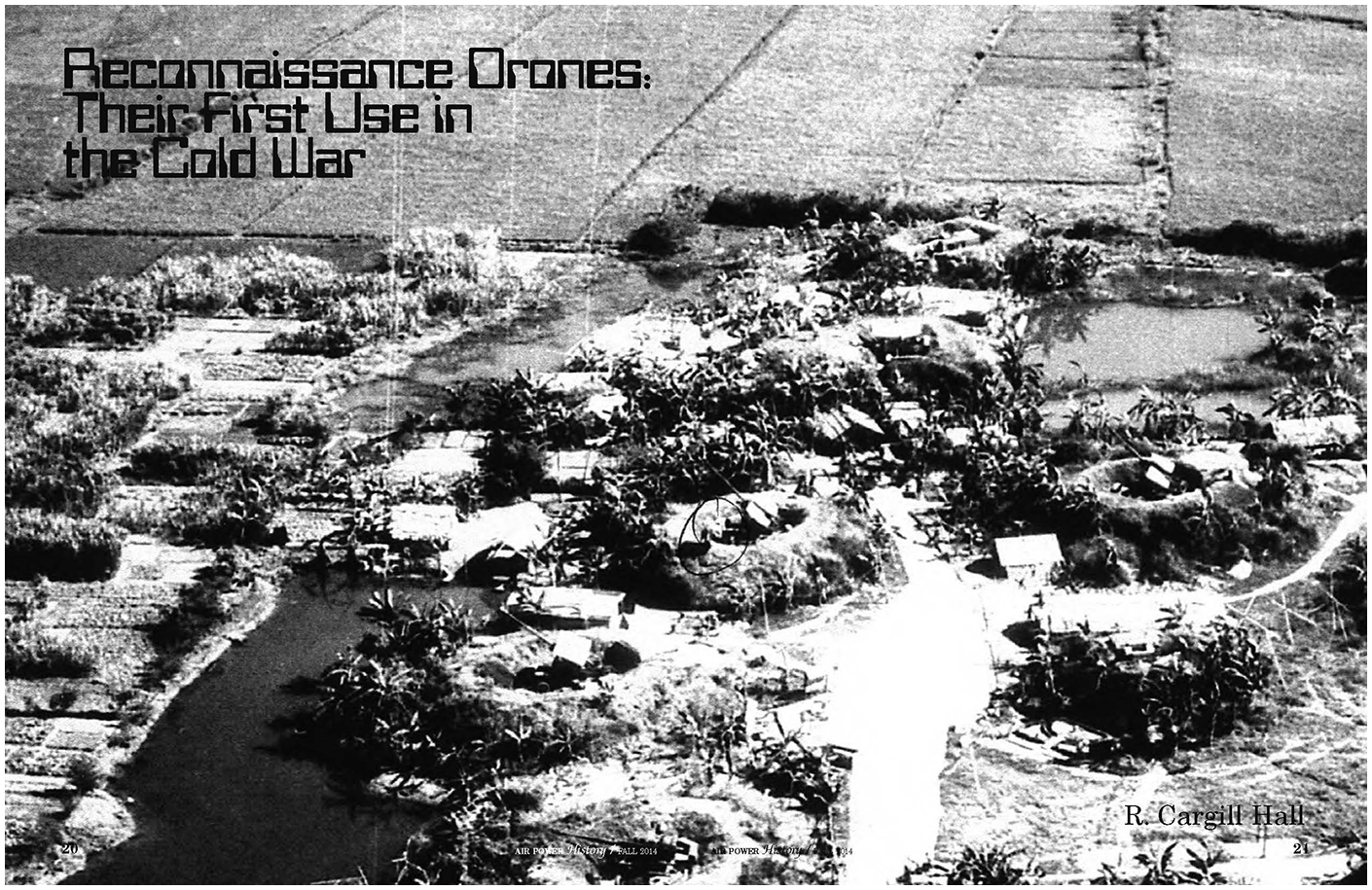

The Firefly model exemplified the drone’s much more lenient spatiotemporal regime by allowing for both low- and high-altitude photography, as well as oblique and vertical images that could be produced at short notice. Due to the often distorted and slanted perspectives, these images offered only a small degree of optical consistency, preventing the actual superimposition of image and map (Figure 5). Accordingly, they could only be operationalized within narrow co-operational contexts, acting as useful supplements to maps and satellite images by facilitating the identification of objects just like the early oblique aerophotographs did.

Aerial photograph of anti-aircraft artillery site during the Vietnam War taken by a Ryan 147SC drone (Cargill Hall, 2014: Cover).

From co-operative image to co-operative footage

The coupling of drone and TV camera, pioneered in Lockheed’s prototype MQM-105 Aquila, enabled a live-video feed to be sent directly to ground stations, where it could be operated on in real time. This change from individual aerial shots to a continuous video transmission fundamentally reconfigured the co-operational character of aerial images within the military chain of operations.

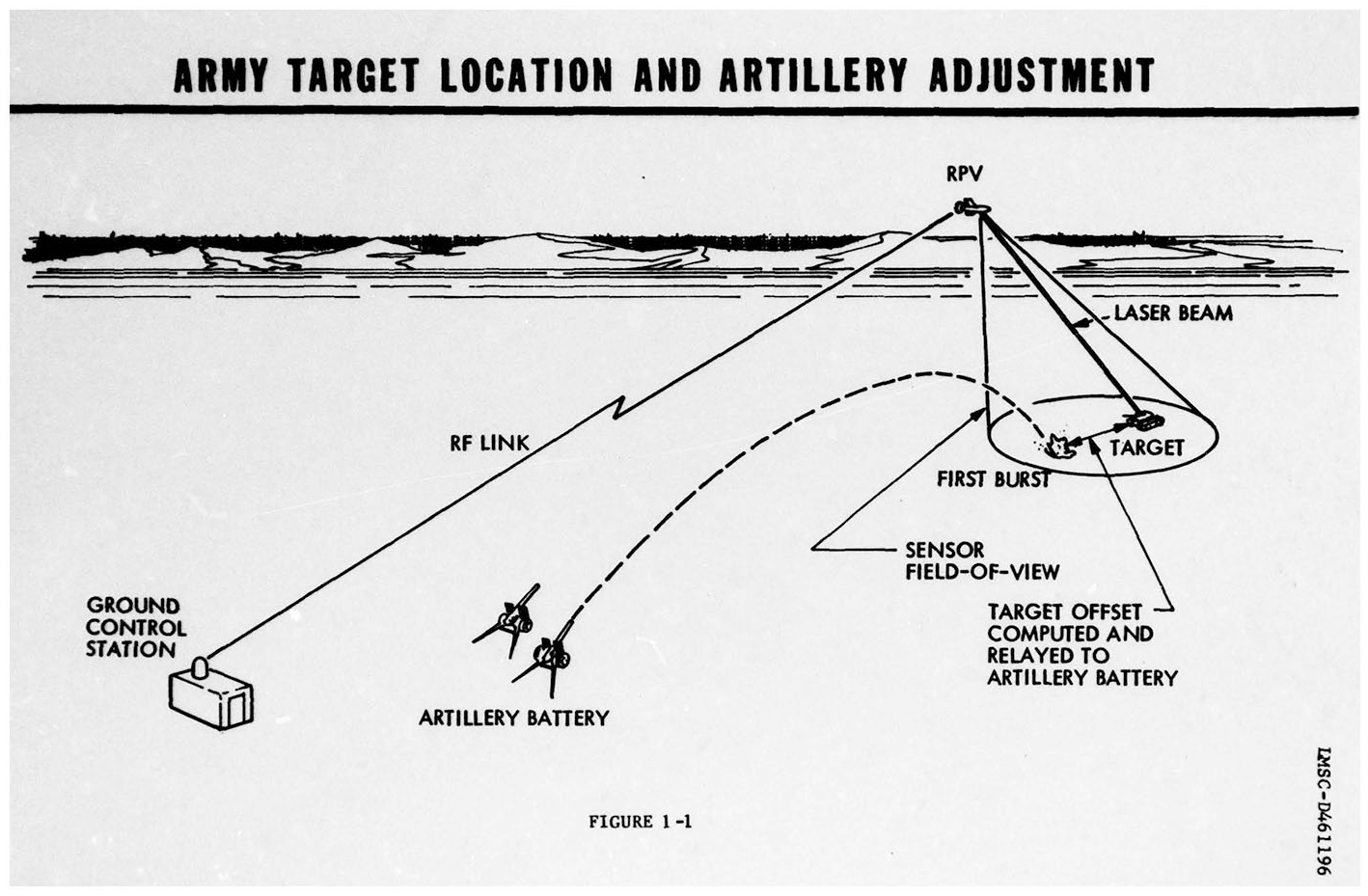

A final report on the pilot project Aquila (1972–1987) explains that the drone was supposed to simultaneously handle a host of different practices which had formerly been carried out separately: “Experience with the system in field tests points toward a greatly improved capability to observe, identify, locate, and strike enemy targets using conventional or guided artillery projectiles or missiles” (Grover, 1979: 6). In this context, the camera’s footage no longer provided optical consistency, but was used for identifying and tracking objects which could also be marked for laser targeting via the image (Figure 6). The almost real-time nature of the images made it possible to act directly on and with them without having to transfer or translate them to another frame of reference like a map. This kind of acting at a distance, or “teleaction” as Lev Manovich (2001) calls it, “makes instantaneous not only the process by which objects are turned into signs but also the reverse process—the manipulation of objects through these signs” (p. 170). Here, the oblique perspective becomes the main source of co-operative action, as it produces easily readable images that can be readily interpreted by human observers without having to pass through a chain of different translations. Recalling the division of labor brought about by the two-seater aircraft, a sensor operator marked the targets via the live-video feed, while the drone flew over pilot-defined waypoints. At the same time, the operator observed whether the artillery hit the target and marked the impact via a second layer on the screen. From the data of the laser and the marking on the screen, the Ground Control Station—the central computing unit of the system—calculated the offset between target and impact site. The displayed image no longer provided the basis for manual practices of calculation carried out along its surface, but an interface with which the input values for automated processes of positioning and georeferencing could be set. In this context, the drone image must be understood as an element of a scopic system—a part of “an arrangement of hardware, software, and human feeds that together function like a scope” (Knorr Cetina, 2009: 64), which collects, augments, and transmits the reality of the observed area.
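The kind of calculation delegated to the Ground Control Station can be illustrated with a minimal sketch. It assumes that target and impact have already been converted from screen markings into local east/north ground coordinates in meters, a step the sources do not detail; the function and its outputs are hypothetical and do not reconstruct the Aquila system's actual software.

```python
import math


def fire_correction(target, impact):
    """Offset needed to move the point of impact onto the target.

    target, impact: (east, north) ground coordinates in meters.
    Returns (d_east, d_north, distance, bearing_deg), with the bearing
    measured clockwise from north, pointing from impact toward target.
    """
    d_east = target[0] - impact[0]
    d_north = target[1] - impact[1]
    distance = math.hypot(d_east, d_north)
    bearing = math.degrees(math.atan2(d_east, d_north)) % 360
    return d_east, d_north, distance, bearing


# A shell landing 30 m west and 40 m north of the marked target
# misses by 50 m; the correction points roughly southeast.
d_east, d_north, miss_distance, bearing = fire_correction((1000.0, 2000.0), (970.0, 2040.0))
```

The arithmetic itself is trivial; what matters for the argument is that its inputs are set by marking the screen image rather than by measuring on a map.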

Chain of operation of an Aquila drone (Tactical Systems Research & Development Division, 1977: 3).

In contrast to prior practices of aerial photography and satellite imagery, the processes of image production, interpretation, and calculation are now increasingly redistributed between human and nonhuman actors, exhibiting a shift from serial structures to a more parallel architecture. The continuous flow of information, already apparent here through the scopic arrangement of the live-video feed, can be understood as a central quality of the drone image. Accordingly, footage produced by drones can be tied back to the observational practices of “continuous observation and immediate direct transmission of data” (Field Service Manual for Balloon Companies, 1917: 1) as well as the idea that “nothing is of independent value” (Porter, 1921: 182f) already found in the context of early aerostatic platforms. In the case of the drone, the operationalization of the image is established through a direct visual connection with the observed location, in which the oblique image no longer acts as a supplement, but becomes the centerpiece of co-operative action.

From projection to processing

With the introduction and improvement of the Global Positioning System (GPS) and satellite-based live-video feeds in the 1990s, it became possible to control drones over long distances. Consequently, positional and navigational calculations were not just increasingly redistributed between human actors and technical systems but also conducted without involving the image at all.

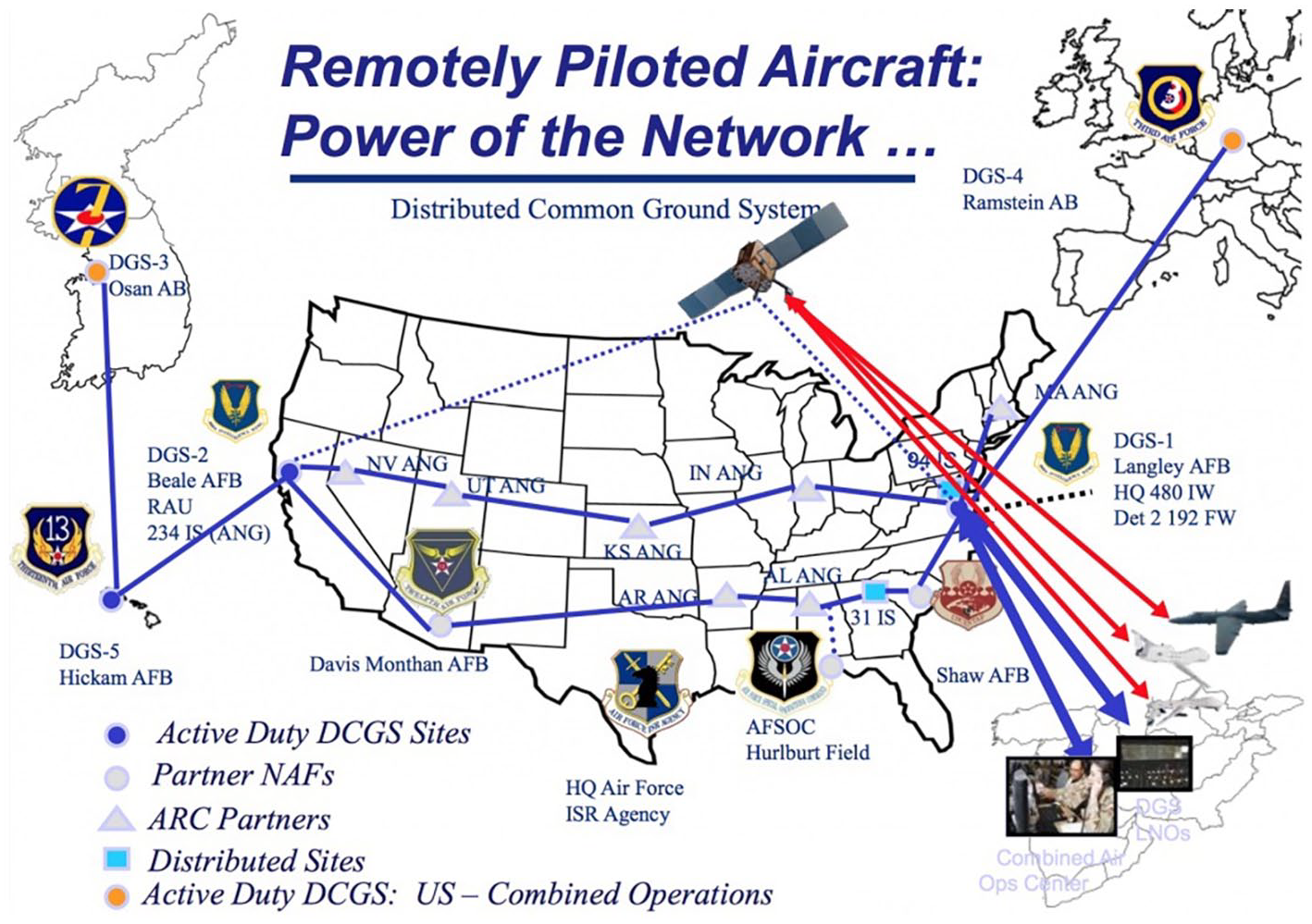

The US’s declaration of the “war on terror” in 2001 marked another turning point in the history of aerial imagery, shifting the focus from the photographic coverage of geographical formations to the localization, identification and killing of individuals. While the co-operative nature of the aerial image at least partly depended on the substrates left behind by adversary predecessors, that is, infrastructures, military equipment and other physical traces, the changes in scale outlined above meant that these traces could no longer be counted on for an understanding of the situation depicted in the image—if they were present at all. To counter this lack of visible information, newer drone models, like General Atomics’ infamous MQ-1 Predator, are integrated into a tightly meshed global intelligence network: “As targets have contracted to individuals, so intelligence gathering has swollen to incorporate data-mining and interception on a global scale” (Gregory, 2014: 12). Similar to the early Aquila drones, the Predator drones are controlled by a pilot and a sensor operator. In this case, however, the images taken are not only sent to a single control station, but are distributed via the so-called Distributed Common Ground System (DCGS), a global network of 27 connected nodes (Figure 7).

Representation of the Distributed Common Ground System (https://dronecenter.bard.edu/drone-geography/).

Within the DCGS, the images are viewed simultaneously by full-motion video analysts, who transmit their results to other “screeners.” Together with an intelligence tactical coordinator, they review the images and forward the information via a system known as Internet Relay Chat to the mission intelligence coordinator. The intelligence coordinator combines the information with that from ground forces and other units and passes it on to the pilot and sensor operator. Meanwhile, a geospatial analyst provides information about the geographic features of the area being viewed (Cockburn, 2015). This exchange of information continues until a decision is reached at the end of the chain—to shoot or not to shoot. The practices of targeting and firing are—similarly to previous systems—carried out by marking the target on the screen, with the major difference that the missiles are now launched from the drone itself.

While the pilot and sensor operator are subjected to the flow of near real-time video transmission, image analysts take and examine individual still images, revisit certain scenes or enlarge image areas. Consequently, the computational connections of oblique aerial images eschew optical consistency based on vertical alignment for other forms of consistency provided by the digital qualities of the image:

We are facing a paradigm shift [from geometry to algorithm] with the old [projection] being incrusted into the new [processing]. If the progressive convergence of vision and representation since the fifteenth century has made possible the instrumentalization of the gaze, its algorithmization has rendered the image itself operative, and this operativity supposes the understanding of the world as a database. (Hoelzl and Marie, 2015: 3)

The central attributes of the drone image—which is simultaneously produced for the human and machinic gaze—are its distributedness, modularity and interoperability. It informs human decision-making processes while at the same time playing a “vital role in synchronous data-to-data relationships” due to its digital nature (Hoelzl and Marie, 2015: 3). Accordingly, the drone image can be characterized as an operational image in several respects: (1) to human operators, the drone’s near real-time images grant the ability to “teleact” (Manovich, 2001) and thus exercise power at a distance; (2) read by machines, they “do not represent an object, but rather are part of an operation” (Farocki, 2004: 17); and (3) as the description of the common ground system has shown, the video footage is continuously processed into and accompanied by other forms of operative imagery like symbols, diagrams, graphs and maps “and thus open[s] up spaces to handle, observe and explore what is depicted” (Krämer, 2009: 104, transl. by author).

While Mark Andrejevic (2021) draws on the artistic work of Trevor Paglen (2014) and Harun Farocki (2004) to theorize the drone image as an image made by machines for machines, we propose that the drone image is the prototypical co-operative image, made into a useful tool by operations carried out by human and nonhuman actors at the same time.

Following Hutchins (1995), the co-operations performed within the common ground system can be understood as a form of distributed cognition whose centerpiece is the co-operative drone footage, which informs the actors’ decision-making process while being simultaneously refined—made easier to use—by their continuous interpretation. While the navigational processes described by Hutchins boiled down to the question of “Where am I,” the common ground system centers on the question of “Who is that?,” which often equals “What information about that person can we connect to the drone image?”

As political, cultural, economic, demographic, and military background information can hardly be captured within the image but is deemed relevant to the decision-making process nonetheless, it is brought in from the outside in the form of additional data streams—a method that often relies on intercepting and tracking digital communications and devices. While earlier forms of aerial reconnaissance saw the reading of physical traces within the terrain as signifiers of adversarial co-operative action, digital traces now also fulfill that role:

Digital data collection might be understood as enabling a new form of reverse flow, that is, the ability to capture the rhythms of the activities of our daily lives via the distributed, mobile, interactive probes carried around by the populace. (Andrejevic, 2015: 195)

The scopic structure of these data-to-data relations recontextualizes the actual situation, transforming it into a “synthetic situation,” a “composite of information bits that may arise from many areas around the world and feature the most diverse and fragmented content” (Knorr Cetina, 2009: 69). This informational composite, focused on the co-operative drone image, can be understood as an attempt to resolve the apparent contradiction between removing oneself from a situation while retaining situational awareness (Suchman, 2016), extending established military imaginaries of situational awareness into a form of data-based “response presence” (Knorr Cetina, 2009) that demands intensified monitoring practices and a permanent preparedness to act. This anticipatory logic brought about by the continuous informational flow of drone imagery signifies a shift from the reactive mode of action connected to earlier imaging practices toward one of pre-emptive action (Andrejevic, 2021).

As the military term “kill-chain” implies, the co-operative actions creating these kinds of synthetic situations lead to a binary decision informed by the view from above. Here, the inherent ambiguity of the aerial image plays a janiform role: on one hand, it is framed as a problem to be overcome by infrastructurization and distributed interpretational practices. On the other hand, it provides a resource of vagueness ready to be exploited for retrospective practices of justification should the need arise. In its function as a medium of justification/conviction, the oblique image connects to everyday visual habits much more readily than the top-down vertical view, giving an impression of being “easy to read.” Yet the data streams involved in its operationalization and the ensuing decision-making process are rendered opaque, just like the interpretative practices producing the persuasive satellite image were before.

Conclusion

Up to the present day, a wide range of practices and infrastructures have been employed to render the view from above operational—first in the form of the balloonist’s gaze, later in the form of aerophotos, satellite imagery, and drone footage. By following these historical trajectories, we showed that for the longest time, the geomedial qualities of aerial images depended on their orthogonal perspective for providing the optical consistency needed for superimposition with the geographic operative image par excellence—the map. Accordingly, the oblique view was relegated to a mere supplement, feeding singular bits of information into the reference system.

With the paradigmatic shift from the geometric to the algorithmic brought about by digital drone footage, this supplementary relationship between the vertical and the oblique gets called into question. Machine vision approaches allow for the localization of objects within oblique images as well as for the identification of objects within vertical images. At the same time, the ability to teleact on the terrain via the oblique image emancipates the aerial image from the map on whose ability to organize space it depended for most of its lifetime. Gaining independence from the obligatory passage point of cartographic representations also meant that the oblique aerial image could be distributed across the whole chain of operations almost simultaneously, rearranging the practices of identification, localization, and decision-making formerly carried out in a serial fashion into a more parallel configuration.

Across the different periods in time, aerial images are not only to be understood as co-operative because they presuppose joint efforts of human and nonhuman actors, but also because they derive meaning from physical and digital substrates produced by various actors whose interests do not necessarily have to align, for example, adversaries in military conflicts.

The three types of aerial imagery analyzed in this article each exhibited a particular spatiotemporal character pertaining to both the conditions under which they were rendered co-operative and their eventual use: the practices of early aerial reconnaissance mainly fueled short cycles of writing and rewriting both the map and the terrain; the satellite’s constant processes of orbital movement, image-taking and archiving not only drastically widened the volume of produced images but also the temporal horizon of their use; finally, the near-real-time drone video complemented by other data streams produces a synthetic situation which allows for another form of “vision through time” (Parks, 2005) not concerned with the past but with the immediate future, establishing a logic of pre-emptive action.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation)—SFB 1187 “Media of Cooperation.”