Abstract

The growing ubiquity of algorithms in everyday life has prompted cross-disciplinary interest in what people know about algorithms. The purpose of this article is to build on this growing literature by highlighting a particular way of knowing algorithms evident in past work, but, as yet, not clearly explicated. Specifically, I conceptualize practical knowledge of algorithms to capture knowledge located at the intersection of practice and discourse. Rather than knowing that an algorithm is/does X, Y, or Z, practical knowledge entails knowing how to accomplish X, Y, or Z within algorithmically mediated spaces as guided by the discursive features of one’s social world. I conceptualize practical knowledge in conversation with past work on algorithmic knowledge and theories of knowing, and as empirically grounded in a case study of a leftist online community known as “BreadTube.”

The growing ubiquity of algorithms in everyday life has prompted cross-disciplinary interest in what people know about algorithms. The resultant literature is diffuse and rapidly growing. It collates a variety of concepts (folk theories, algorithmic skills, algorithmic competencies, algorithmic literacy, and the algorithmic imaginary, among many others) and spans a variety of domains: human–computer interaction (HCI), digital literacy, media studies, and labor studies, to name a few. Moreover, this literature has established the significance of algorithmic knowledge for a diverse array of inquiries, including those related to user behavior; user experience; agency and autonomy; fairness, accountability, and trust in systems; digital, media, and information literacies; and algorithmic power, control, and management.

The purpose of this article is to build on this growing literature by highlighting a particular way of knowing algorithms evident in past work, but, as yet, not clearly explicated. Specifically, I conceptualize practical knowledge of algorithms to capture knowledge located at the intersection of practice and discourse. This way of knowing algorithms involves sensemaking refracted through the discursive landscape of one’s social world (Clarke and Star, 2008), but locates knowledge in the situated practices around algorithms that this sensemaking inspires.

I conceptualize practical knowledge in conversation with past work on algorithmic knowledge and theories of knowing, and as empirically grounded in a case study of a leftist online community known as “BreadTube” (Figure 1). Through a description of this community’s discourses and practices around YouTube’s algorithms, I exemplify both what practical knowledge looks like (one version of it) and how it functions. “BreadTube” refers to a loosely connected network of leftist YouTubers and their audiences. The community coalesced around a shared interest in spreading leftist ideology and opposing the propagation of far-right ideology online (Kuznetsov and Ismangil, 2020; Maddox and Creech, 2020), which necessarily invited discussion and consideration of algorithms. As the BreadTube.tv (n.d.) website states: The goal of the community is to challenge the far-right content creators who have taken advantage of the profit-driven algorithms used by services like YouTube for the purpose of spreading hate. We wish [to] educate people on how their world operates, the alternative possible visions for our future, and how we organize ourselves to get there.

Figure 1. r/BreadTube homepage (as of February 2022).

As this “mission statement” suggests, algorithms regulate the visibility of people and ideas online, as platforms deploy them for sorting, filtering, and moderating content (Bucher, 2018; Gillespie, 2014; Noble, 2018). Algorithms’ gatekeeping role has been of particular interest in relation to YouTube, where many, including BreadTubers, fear the platform’s algorithms contribute to far-right radicalization (Hosseinmardi et al., 2021; Munn, 2019; O’Callaghan et al., 2015; Ribeiro et al., 2020). BreadTube’s preoccupation with algorithms’ role in the relative visibility of far-right and leftist content on YouTube made (algorithmic) knowledge building more salient and, so, fruitful for my inquiries. Given this context, throughout this article, I focus specifically on Internet algorithms, which bounds my conceptualization of practical knowledge, though I hope the insights that follow may prove useful for other contexts.

In what follows, I begin by describing broad themes in the body of work on algorithmic knowledge. As part of this overview, I explain how this literature creates a space for a kind of knowledge-in-practice guided by the discursive landscape of a social world. Then, following discussion of my methodology, I describe some prominent discourses in the BreadTube community, how the community reads these discourses into their understanding of YouTube’s algorithms, and how they have cultivated practices reflective of these readings. To conclude, I offer a summative explication of practical knowledge, as exemplified in the case study.

Existing work on algorithmic knowledge

Through a thematic tour of the body of work on algorithmic knowledge (one which highlights some, but by no means all, research in this area), I describe in this section how this literature lays a foundation for practical knowledge. Throughout, I draw on theories of knowing to further scaffold this foundation. The tour is divided into three parts: work locating knowledge in thought, by way of operational and propositional “facts”; work locating knowledge in practices; and work acknowledging the context-contingent nature of knowledge.

Knowledge located in thought

In general, the literature exploring algorithmic knowledge has predominantly focused on operational and propositional “facts” people know about algorithms (“the algorithm is/does X”). Moreover, there has been a particular emphasis on the former, namely, knowledge about what algorithms do, how, and sometimes why. Here, the HCI tradition of investigating users’ “folk theories” has been foundational in its focus on intuitive causal frameworks people use to explain algorithmic behavior and impacts (DeVito, 2021; DeVito et al., 2017). Seminal works in this area by Rader and Gray (2015), Eslami et al. (2015, 2016), and DeVito et al. (2017, 2018) have all contributed to our understanding of how social media users know algorithms from a technical standpoint. For example, both Rader and Gray (2015) and Eslami et al. (2016) investigated whether or not users knew that Facebook did not display all available posts in their news feeds. While Rader and Gray (2015) focused on “users’ beliefs about what the Facebook News Feed chooses to display, and why” (p. 173), Eslami et al. (2016) focused on “how users reason about the operation of [online platform] algorithms” (p. 2371). DeVito et al. (2017) identified two different classes of folk theories about a rumored transition to an algorithmic timeline on Twitter: operational theories (knowing some criteria that shape algorithmic curation) and abstract theories (a nonspecific sense that an algorithmic timeline would change Twitter). Building on this work, DeVito (2021) recently introduced a typology of folk theories according to complexity: “basic awareness” (knowing algorithms are present), “causal powers” (knowing algorithms “play[] a causal role in a distinct outcome/outcomes” (p. 13)), “mechanistic fragments” (knowing some factors or datapoints algorithms take into account), and “mechanistic ordering” (knowing something about the pathways or sequence of steps algorithms take). Following earlier folk theories research, Dogruel (2021) also recently demonstrated the prevalence of three folk theories about how algorithms work (personal interaction theory, popularity theory, and categorization theory) among Internet users, as well as a theory that platforms’ economic orientation shapes functionality.

The focus on knowledge about algorithmic operations has also extended beyond folk theories work. In their article about “algorithmic knowledge gaps,” Cotter and Reisdorf (2020) investigated “what Internet users do or do not know about how algorithms work in the context of search engines” (p. 747). Studying “algorithm skills,” Hargittai et al. (2020) defined “understanding algorithms” as “Having some sense of how systems process information about users, and how they can and may use information they have about the user when they present content to said user” (p. 8). In the context of voice assistants, Gruber et al. (2021) focused on “people’s awareness and understanding of how algorithms influence what people see” (p. 1774). Developing and validating an instrument measuring the cognitive dimensions of algorithmic literacy, Dogruel et al. (2021) focused on “being aware of the use of algorithms in online applications, platforms, and services and knowing how algorithms work” (p. 2). Alvarado et al. (2020) examined beliefs about algorithmic recommendation on YouTube among middle-aged users, focusing on the factors and actors shaping algorithmic recommendation.

Beyond knowledge of algorithmic operations, other work has considered propositional understanding of the qualities, significance, and impact of algorithms. For example, French and Hancock (2017) identified four folk theories about Facebook’s and Twitter’s algorithms: rational assistant (algorithms serve users’ interests), the unwanted observer (algorithms serve platforms’ interests via data collection), the transparent platform (feeds are unfiltered) and the corporate black box (algorithms are opaque and not easily controlled). Festic (2020) investigated users’ awareness of risks associated with “algorithmic selection applications,” which included privacy and surveillance concerns, diminishing variety of content online, and manipulation. Ytre-Arne and Moe (2021) investigated perceptions of the positive and negative consequences of algorithms, synthesizing five folk theories: “algorithms are confining, practical, reductive, intangible, and exploitative” (p. 807). Karizat et al. (2021) looked at TikTok users’ folk theories about the platform’s For You Page algorithm and identity, finding that participants viewed the algorithm as appraising accounts based on social identities, which led to amplification and suppression that mirrored patterns of marginalization. Studying Costa Rican Spotify users, Siles et al. (2020) identified two prominent folk theories: useful recommendations require surveillance and algorithmic recommendation requires appropriate training to increase precision. Through her concept of “algorithmic gossip,” Bishop (2019) demonstrated how beauty vloggers learn about changes to YouTube’s algorithm in the absence of platform-provided information, while also highlighting beliefs about the discriminatory nature of YouTube’s algorithm.

Knowledge located in practices

The examples of work that locates algorithmic knowledge in operational and propositional facts in the previous section are undeniably important. Yet, this conceptualization of algorithmic knowledge only represents one way of knowing algorithms. Specifically, it privileges forms of knowledge that Ryle (1945) calls “know that,” in contrast to “know how.” While the former constitutes “knowing that something is the case”—familiarity with facts, rules, or heuristics—the latter constitutes “knowing how to do things” (Ryle, 1945: 4). The former locates knowledge in thought, while the latter locates knowledge in action. Moreover, know how rests on the notion of tacit knowledge, that “we can know more than we can tell” (Polanyi, 2009: 4).

Recognizing such “know how,” many recent studies have begun to demonstrate that knowing algorithms entails not only knowing that an algorithm is/does X, Y, or Z, but also knowing how to accomplish X, Y, or Z within algorithmically mediated spaces. For example, DeVito et al. (2018) demonstrated that folk theories of how social media algorithms function inform self-presentation practices. Jarrahi and Sutherland (2019) conceptualized “algorithmic competencies” to describe how Upwork workers formulate practices to “work around or manipulate [algorithms]” (p. 578). Klawitter and Hargittai (2018) conceptualized algorithmic optimization skills among creative entrepreneurs, which pertained to augmenting the visibility of goods for sale on online markets. Similarly, Bishop (2018) and Cotter (2019) highlighted, respectively, how YouTube beauty vloggers and Instagram influencers express knowledge about algorithms through their visibility practices. Evans (2021) showed how Hip-Hop artists in Chicago implement their knowledge of platform algorithms through “clout-chasing” practices. Other studies have focused on practices of resistance to algorithms underwritten by knowledge of them. For example, van der Nagel (2018) described two tactics, “Voldemorting” (avoiding mentioning certain names or keywords) and screenshotting, which users mobilize to resist platform algorithms based on their explanatory theories about them. Likewise, Festic (2020) highlighted Internet users’ awareness of risks associated with “algorithmic selection applications” and the practices they subsequently develop for managing these.

Closely related to practices, some work has drawn attention to the embodied dimension of algorithmic knowledge through discussion of affective experiences. Central here is Bucher’s (2018) foundational argument for an epistemology of algorithms that positions “experience and affective encounters as valid forms of knowledge of algorithms” (p. 94). Through her concept of the “algorithmic imaginary” (more on this soon), Bucher (2018) emphasizes knowing through doing, suggesting that we make sense of algorithms via “habitual practices of being in algorithmically mediated spaces” (p. 114). Informed by this concept, Swart’s (2021) study of young people’s news practices documented how feelings of surprise (both negative and positive) resulted in reflection on algorithms and resultant “norms and attitudes about how social media algorithms ought to function” (p. 6). Likewise, in her aforementioned study, Bishop (2019) also invoked the algorithmic imaginary in describing how some beauty vloggers make sense of their relative successes with YouTube’s algorithms through an “affective lens.”

Toward practical knowledge of algorithms

Beyond “know that” and “know how,” some work has productively drawn attention to the context-contingent nature of algorithmic knowledge, which allows for interpretive flexibility. This perspective rests on what Bucher (2018) refers to as algorithms’ “manyfoldedness,” that “algorithms exist on multiple levels as ‘things done’ in practice” (p. 19). Such a manyfoldedness suggests that different people know algorithms in different ways at different times under different contexts as they are “incorporated within specific sociomaterial practices” (Bucher, 2018: 31). As mentioned, through her concept of the algorithmic imaginary, Bucher suggests that moods, affects, and sensations that result from encounters with algorithms grant special embodied insight about them. Through situated encounters, Bucher (2018) suggests, “people learn what they need to know in order to engage meaningfully with and find their way around an algorithmically mediated world” (p. 98). The concept of the algorithmic imaginary, thus, brings together “know that” and “know how,” but further specifies the importance of the specific conditions under which people experience algorithms.

While the algorithmic imaginary and its application in later studies have generatively highlighted the situated and embodied nature of algorithmic knowledge, more could be said about how discourse and social practices intersect in such knowledge. In her original study, Bucher focused on “personal algorithm stories” that capture individual experiences, but less so the social dimensions of knowledge, which seems to have shaped subsequent uses of the concept. Alternately, while some work has brought forward social practices, these studies do not always relate them to discursive understandings of algorithms and/or recognize this as knowledge.

Broader theories of knowledge, particularly the social worlds’ framework, help explain how discourse and social practice intersect in knowledge building. This framework suggests that knowledge building unfolds as objects gain meaning for people via their participation in “shared discursive spaces” (Clarke and Star, 2008: 113), or social worlds (Bowker and Star, 1999; Lave and Wenger, 1991). Social worlds are understood to be “groups with shared commitments to certain activities, sharing resources of many kinds to achieve their goals and building shared ideologies about how to go about their business” (Clarke and Star, 2008: 113). The concept captures the organization of life as groups do things together and develop a shared language and ethos for their “doing.” From a social worlds’ perspective, our values, beliefs, and attitudes about knowledge objects are constructed via social practices and relations (Clarke and Star, 2008).

This perspective further suggests that knowledge building entails becoming, as meaning comes into focus as people discern the systems of social relations in which they find themselves. As Lave and Wenger (1991) put it: “Learning thus implies becoming a different person with respect to the possibilities enabled by [. . .] systems of relations” (p. 53). Alternately, as Warschauer (2003) explained it: “learning how is intimately tied up with learning to be, in other words developing the disposition, demeanor, outlook, and identity of the practitioners” (p. 122). This “learning to be” not only entails identity work, but involves developing an intuitive sense for what to do in a situation. In the language of Bourdieu’s (1998) practice theory, knowing involves conjuring interpretive schemes via the habitus, or cultivating a kind of practical sense for what is to be done in a given situation—what is called in sport a “feel” for the game, that is, the art of anticipating the future of the game which is inscribed in the present state of play. (p. 25)

In this case, knowledge may not necessarily be that which can be verbalized, but rather understanding tacitly expressed via action.

Returning to the literature on algorithmic knowledge, some past work has gestured toward the importance of discourse and social practices. Previously, I demonstrated how discursive ideals of authenticity and entrepreneurship circulating among Instagram influencers shape how they understand algorithms and subsequently pursue visibility (Cotter, 2019). Likewise, in her study of how news organizations grapple with algorithms, Bucher (2018) highlighted that her subjects “rely on well-known journalistic discourses of professionalism when talking about the role of algorithms in journalism” (p. 138) and discussed how they developed a sense of how to respond. Christin (2017) explained how web journalists and legal professionals “interpret and make sense of algorithms in different ways” (p. 10), according to institutional context, which gives rise to “strategies for minimizing the impact of algorithmic techniques in their daily work” (p. 9). Siles et al. (2020) illustrated the role of “cultural repertoires” in folk theories of algorithmic recommendations on Spotify among Costa Rican users. The authors showed how national identity, profession, academic major, trade, and group membership offer resources for users to draw on in making sense of algorithms.

These studies help position algorithmic knowledge as a practice that leverages meaning making within local assemblages of people, algorithms, practices, and settings. In the rest of the article, I build on this work to single out and more fully explicate the concept of practical knowledge, as well as bring it to life with empirical data. For now, I offer a brief definition, on which I elaborate later. Practical knowledge entails knowing how to accomplish X, Y, or Z within algorithmically mediated spaces (practice) as guided by the discursive features of one’s social world (discourse). In this way, practical knowledge represents, as Bourdieu (1998) put it, a “practical sense” or feel for how to work with and around algorithms, as filtered through the lens of social worlds.

Method

In this research, I chose a case study approach in order to foreground the social context and relations shaping encounters with algorithms. As mentioned, I deliberately targeted the BreadTube community as a social world with a manifest interest in algorithms. Recall that BreadTubers share a collective interest in amplifying the visibility of leftist content and counteracting the so-called alt-right pipeline on YouTube. I collected data from online discourse materials (social media discussions, videos, images, articles, etc.) and semi-structured interviews with members of the BreadTube community (n = 20). Using these dual sources of data provided a degree of triangulation, allowing me to both observe BreadTube “in the wild” as an online community and solicit in-depth insights into BreadTubers’ beliefs and experiences. Data collection primarily took place between January and May 2020. 1 I collected online data mainly from the BreadTube subreddit (r/BreadTube), a BreadTube Facebook group, YouTube, and Twitter. Interviews lasted on average an hour and 15 minutes, and focused on the practices by which participants learned about and interacted with algorithms, and how they understood themselves and the social world of BreadTube, particularly in relation to algorithms. 2 I recruited most interviewees by reaching out to users who posted comments or threads in r/BreadTube about algorithms. These reflections offered a starting point for unpacking what participants knew about YouTube’s algorithms and how they knew what they knew. 3

I employed an inductive qualitative approach informed by constructivist grounded theory (Charmaz, 2014). That is, while I did not conduct a grounded theory study, I adopted many of its techniques and tenets. This approach served my aim of investigating and giving depth to an underdeveloped domain of empirical inquiry: knowledge of algorithms at the intersection of social practices and discourse. I followed an iterative approach. I began by collecting and coding online materials, particularly attending to prominent discourses I discerned in these. I then explored these discourses in interviews and refined and expanded coding as I learned more. What I learned in interviews further fed back into online data collection. Once I completed initial coding, I conducted focused coding by condensing and refining frequent or significant codes I had identified (Charmaz, 2014). I also employed mapping techniques to organize and clarify insights. 4

Importantly, the findings that follow should be considered an orderly account of a far messier reality (Star, 1983). While necessarily reductive, this account allows for greater clarity in the conceptualization of practical knowledge. Moreover, my findings and the data from which I drew them should also be understood as partial, historical, and situated by nature of my own position (see Supplementary Appendix D).

How BreadTube knows algorithms

In this section, I begin by explaining high-level discourses among BreadTubers about the community’s disunity, which implicate an existential anxiety about the community’s commitment to collectivist values. Then, I describe how this anxiety shapes more specific discursive readings of YouTube’s algorithms as part of the community’s struggle to actualize collectivist values. Finally, I describe how BreadTubers respond to these readings through practices that preserve the community through a reaffirmation of their collectivist values. In doing so, I illustrate the intersection of discourse and practice in algorithmic knowledge within this community.

BreadTube discourses: disunity and the struggle to actualize collectivist values

The BreadTube community notoriously exists in a constant state of existential crisis, which is evident in discourses about the community’s disunity. First, the community hosts a spectrum of beliefs, ranging from Social Democratic to Maoist, which has engendered considerable infighting. There is regular commentary within BreadTube about the community “cannibalizing” itself. Moreover, many BreadTubers recognize that the community’s infighting interferes with its ability to come together for tactical unity in promoting leftist ideas or action. As BreadTube creator Secular Talk (2020) noted in a video, infighting renders the community “politically impotent and ineffectual.”

Alongside sectarian rifts, a second discourse within the community concerns BreadTube’s “unbearable Whiteness” (Watanabe, 2019). Although BreadTube advocates for social, political, and economic equity, various members of the community have raised red flags about the marginalization of content creators of color in BreadTube spaces, which reveals additional divides. For example, in her video “Why is ‘LeftTube’ so White?,” Black BreadTube creator Kat Blaque described how interest in her work depends on valuations of it by White audiences (Wilkins, 2019). Acknowledging these points, a user commented on a r/BreadTube post titled “Where are the Black Breadtubers?”: “it’s hard for me to go on YouTube and hear from all these intersectional people—and yet the Tubers of color have maybe 10,000 subs, at most. It’s a huge disparity between the two. . .”

These discourses about BreadTube disunity co-exist with the community’s aversion to Liberalism and corresponding alignment with collectivist modes of governance, particularly as manifested in values of solidarity and equity. As I will now explain, the community’s anxiety over the contradiction between the community’s fractures and these values materializes in their discursive readings of algorithms as encouraging individualism through competition and conflict. These readings of YouTube’s algorithms, described, respectively, below under the headings “Survival Under Capitalism” and “The Algorithm Demands a Sacrifice,” highlight the tension YouTube’s algorithms inspire in relation to the community’s collectivist commitments.

Survival under capitalism

Many in the BreadTube community read YouTube’s algorithms as enforcing a neoliberal capitalist order, which encourages competition. This reading engenders inner turmoil among the community as a result of the incongruity of using YouTube while also speaking out against capitalism. Yet, with no viable alternatives to YouTube, many in the community, as one BreadTuber told me in an interview, see themselves as coping with “survival under capitalism.” Although BreadTubers advocate for social and economic equity, this position conflicts with their simultaneous awareness that YouTube’s recommendation algorithm revolves around engagement metrics (e.g. subscribers, likes) that differentiate between creators by assigning value to them. As BreadTube creator Coffin (2019) explained in a video, engagement metrics “tell you how popular something is. They tell you how credible the person saying something is and they provide an incentive to try to create content that will get those metrics.”

By emphasizing engagement metrics, some BreadTubers believe YouTube’s recommendation algorithm creates different “classes” of BreadTube creators. In a Medium article about BreadTube, Black (2019) explained: There are people who have an incredible amount of social capital like Contrapoints, Hbomberguy, [Philosophy Tube], and the like, who control the fluctuations of the market through what they decide to Tweet or talk about. There’s a “middle class” of content creators who are associated with them who have enough social capital to sway an audience, but not enough to be immune from pressure at the top. And then there’s everyone else. No sway whatsoever. No sizeable control over what gets brought in the spotlight or how conversations unfold.

This statement encapsulates a view that BreadTube creators with many subscribers and views have been chosen as “elites” by algorithms that reward visibility with more visibility and, so, allow them to accumulate financial, social, and cultural capital.

Importantly, various BreadTube creators have noted the racial dimensions of this hierarchization. As Angie Speaks (2020) explained in a video: the type of people who make up the top tier of BreadTube [. . .] are usually White men who are academics or have extensive degrees in certain things, and they are oftentimes from not working class backgrounds. [. . .] That class controls the narrative of the left.

Professor Flowers (2020) illustrated one way this happens, arguing that Black BreadTube creators who present “more nuanced and to the point discussions about race” frequently do not resonate with BreadTube’s predominantly White audience and, thus, are not considered part of BreadTube.

Many BreadTubers further see the enactment of a hierarchical order as essential to the design of YouTube, as BreadTube creator Non-Compete explained in a video: YouTube will never give us tools to work together as creators, because they want us all to have this capitalist mindset. [. . .] They very much want you to be in the mindset that you were a capitalist, you are out here to compete, you were out here to fight with everyone else elbow to elbow to push yourself up through the algorithm. They are not going to give us communal tools to operate . . . (Johnson, 2019)

Feeling obliged to adopt this “capitalist mindset” provokes unease, as BreadTube creators feel forced into competition with one another. Thus, as Peter Coffin put it in a video, BreadTubers see the attention economy structured by algorithms as “a pure expression of neoliberal capitalism” (Angie Speaks, 2019).

The algorithm demands a sacrifice

Many BreadTubers also believe that algorithms impede unity within the community by rewarding participation in BreadTube’s “drama” (see also Christin and Lewis, 2021). They believe that creating or participating in conflict in the community through YouTube content draws attention, which leads to increased engagement, which leads to increased visibility. Thus, BreadTubers commonly refer to some variation on “algorithmically-driven bouts of conflict” (Khaled, 2019). BreadTube creator Re-Education (2019) addressed this point in a video: Drama always gets the most views. Drama always gets the most attention. [. . .] but none of it really matters, because at the end of the day, the capitalists are the ones that make all of the money. Every single tweet, every single repost, every single like that you make online is all a transaction. It’s all a way for them to gain more eyes, gain more attention, and thusly more ad revenue money. But every single time, it chips away a little part of our movement. We can still criticize each other. Of course we can. But that doesn’t mean that we have to cannibalize ourselves every time the algorithm demands another sacrifice.

Similarly, speaking of “Twitter pile-ons,” Tyler 5 told me in an interview: You’ll have literally thousands or tens of thousands of people calling you a terrible person. And sometimes it may even be justified, but sometimes it can be overblown. And then people who receive that think that’s authentic, but really, the algorithm encouraged it.

As these comments suggest, many BreadTubers believe YouTube’s algorithms give those who participate in “drama” a leg up in terms of visibility, thus encouraging others to join in the fray. In this way, some BreadTubers read YouTube’s algorithms as inflaming existing rifts within the community and obstructing attempts to achieve unity.

Some BreadTubers additionally read algorithms as deepening existing rifts in the community by siloing audiences on YouTube. For example, one user commented on a BreadTube video: I reckon “lines in the digital sand” [about who comprises BreadTube] are getting drawn partly just because of human tribalism, but this is massively amplified by the recommendation algorithms. They’re clustering people based on their viewing habits, and this is accelerating and fragmenting ideological polarisation.

In a video, BreadTube creator T1J took up this reading and linked it to BreadTube’s racial divides, arguing “videos made by people of color, aren’t really made for White people. And so they’re thought of as a completely different genre. And YouTube’s algorithm no doubt considers them a different genre as well” (Peterson, 2019). Making a similar point, a BreadTuber tweeted: . . . it does seem that YouTube’s algorithm is partly to blame for the whiteness of LeftTube’s canon. It never showed me Kat Blaque until the LeftTube is white video, and I had to find out about TJ1 from a link Jack Saint put up.

In short, the BreadTube community recognizes itself as a community divided by conflicting ideologies and racial biases. Believing that YouTube’s algorithms encourage competition and conflict, many BreadTubers view them as exacerbating the community’s already fractured state by urging individualism. Essentially, they see algorithms as a counterforce to their commitment to collectivism.

From discourse to practice: BreadTube’s greater-good tactics

In response to the above readings of YouTube’s algorithms, BreadTubers have cultivated practices that seem to reflect an attempt to actualize their commitment to collectivism. Specifically, they have developed tactics for amplifying leftist content, which, from a strictly instrumental perspective, may seem illogical. Yet, these tactics serve a greater good of (at least ostensibly) upholding the sustainability and integrity of the community, particularly by reaffirming shared collectivist values of solidarity and equity. Such “greater-good tactics” require individual BreadTubers to make sacrifices and/or assume risks, but, as BreadTube creator Non-Compete explained in a video: “‘The rising tide will lift all the boats’ and we have to mindfully, thoughtfully apply ourselves to these algorithms” (Johnson, 2019). In their quest to make leftist content more visible on YouTube, the BreadTube community works to realize an ideological commitment to collectivism and resist the perceived disciplinary force of YouTube’s algorithms. The community accomplishes this particularly by building an infrastructure of communal support for making lesser-known creators visible and helping them succeed in spite of algorithmic prescriptions for individualistic pursuits of visibility.

In a system perceived as prioritizing competition and conflict, supporting lesser-known creators would seem to offer few benefits for individuals and, indeed, incur significant costs. Yet, calls for “bigger” BreadTube creators to platform or “signal boost” lesser-known creators have resounded in the community. Mainly, the costs revolve around BreadTube’s tendency to be self-critical and turn against anyone who expresses a “bad take.” Creators worry that giving a lesser-known creator a “shout out,” or otherwise allying with them, could hurt their reputation if the lesser-known creator turns out to be controversial in some way. Moreover, vetting and working with lesser-known creators takes time and energy and often interferes with creators’ ability to maintain the momentum of their own channel. Many BreadTube creators recognize that YouTube’s recommendation algorithm rewards regular and frequent publishing and when they reduce their productivity, the algorithm penalizes the reach of their subsequent videos. Non-Compete discussed this in a video: if we miss a week or two on our YouTube channels, the algorithm hits us so hard, and then the monetization drops. [. . .] We’re living paycheck to paycheck and the pressure to continually pump out content for ourselves, for our own individual channels is extremely high. (Johnson, 2019)

For these reasons, if BreadTubers only cared about the visibility of individual creators, it would make little sense to devote time, energy, and resources to supporting lesser-known creators. And yet, the BreadTube community widely champions this. In a continuation of his above comments, Non-Compete exemplified this in pledging his support to smaller creators, particularly marginalized creators, in spite of acknowledged risks: We’re not going to stop platforming people who suffer from oppression and have righteous anger. We’re going to continue to try to find small leftist creators to support. [. . .] However, I want you to understand that that’s a big risk that we’re taking onto our shoulders when we do that. There are always effects and you can see on the algorithm, you’ll see the videos where we lose the most subscribers, a lot of those videos are videos where I’m interviewing smaller content creators or people who, for whatever reason, you know, the audience doesn’t respond to well, and I’ll lose subscribers . . . (Johnson, 2019)

As these comments suggest, BreadTubers’ commitment to supporting other creators is a matter of principle, of prioritizing solidarity with the rest of the community, particularly those facing structural barriers.

Notably, this commitment is also a pragmatic attempt to increase the overall visibility of leftist content. In interviews and online, BreadTubers routinely acknowledged that YouTube’s algorithm recommends content based on common viewing patterns across users (i.e. collaborative filtering). Many BreadTubers seek to create a “leftist pipeline” as a counterforce to the alt-right pipeline. For example, one user wrote in r/BreadTube: we (as viewers) need to streamline the nascent left-wing pipeline. We have the greatest power over the algorithm—sure Google can set the test parameters—but we are the source data. We need to create a stratified alliance between progressive forces. If you are a hard line “scary” leftist and don’t feel like hiding your viewpoint or getting involved in political games, I would suggest building a community between channels espousing your vanguard views and the more popular issue based left channels. If you are more moderate or undecided, try to do the same for the more issue based left channels and the moderate edutainment channels.

Many BreadTubers believe that for individual creators to increase their visibility, they must establish links to other creators, particularly those with a substantial following. Many prominent creators’ decision to share the “wealth” of their visibility with others hinges on this understanding. They believe doing so strengthens the connection of lesser-known creators with the rest of the BreadTube network, and, thus, increases the visibility of BreadTube as a whole.

Creators share their “visibility wealth” mainly via linking to other creators in video descriptions; referencing them in videos; and liking, commenting on, and sharing their videos. In an interview, BreadTube creator Thought Slime offered an example of one creative way they platform lesser-known creators: I think one thing that does [help BreadTube’s visibility] [. . .] is we need to shout out one another and show each other smaller channels and help them grow. And so that’s something . . . In every video, I shout out a smaller channel. I made it a whole bit. [. . .] I have a new, unique intro every video where I . . . Something spooky happens. It’s a whole thing that I do. I do that because I know that if I didn’t, people would be like, “Oh, this isn’t what I came here for.” They would press that little 30-second, skip forward button until I was done talking about it.

Thought Slime and many other BreadTube creators particularly seek to platform BreadTube creators of color. As another example, in her video, “Algorithms & skin tone bias (colorism), to be dark on the internet/‘breadtube,’” Mbowe (2021) paired a curated list of 45 Black YouTubers with the argument that individuals can counter the colorist tendencies of YouTube’s algorithms “by simply going out of your way to engage with content creators that have a bit more melanin.” Of such efforts, a user mused in r/BreadTube: “A conscious choice to exploit the algorithm while platforming those with a worthy message who haven’t got that reach, is the opposite of marginalizing them.”

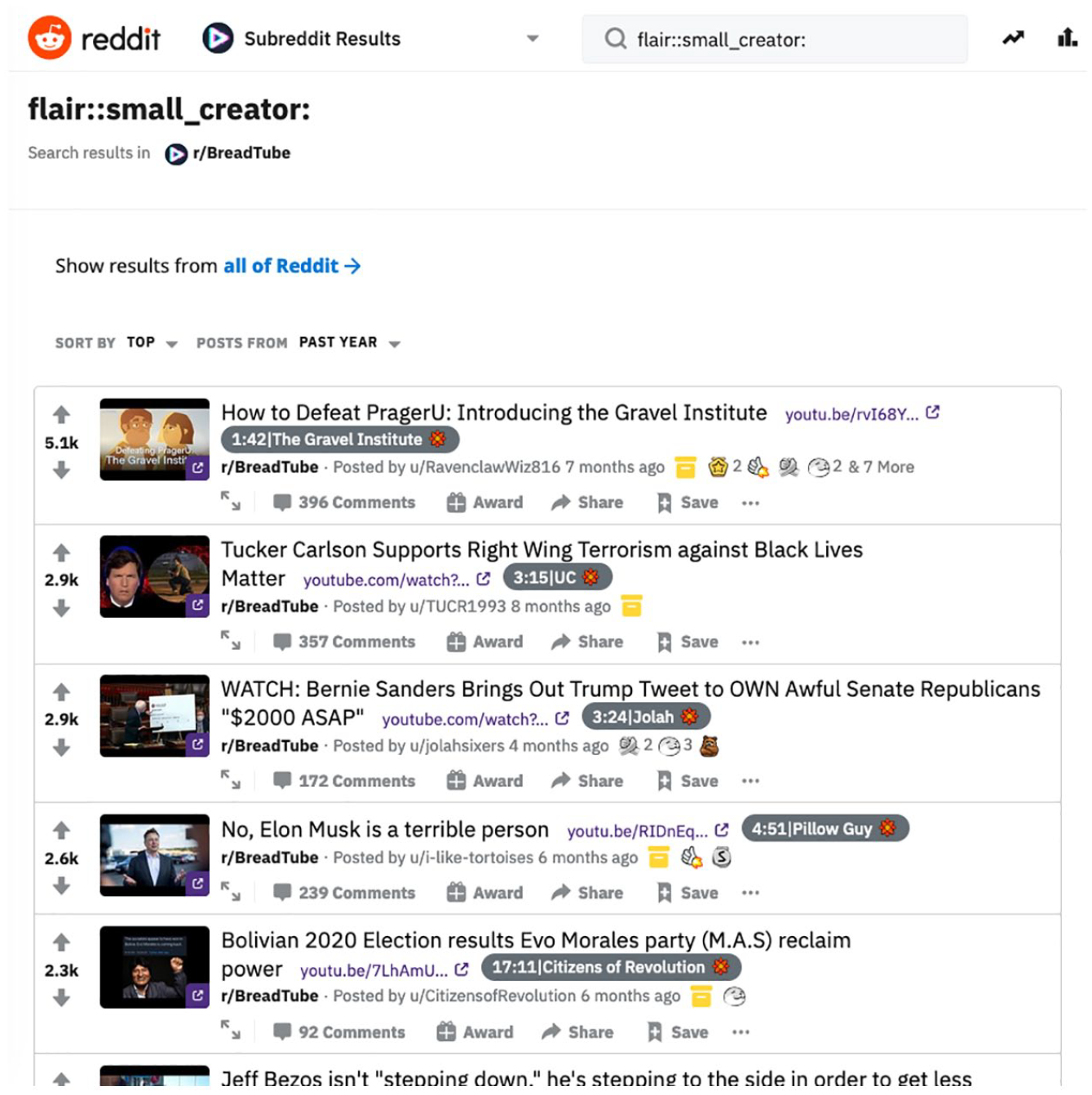

Similarly, the BreadTube subreddit has spent time strategizing how to make lesser-known creators more visible on YouTube. One tactic the subreddit has implemented is to use “flair” to highlight lesser-known creators (Figure 2). Flair is an identifier, or tag, attached to reddit usernames. This tactic grew from a suggestion by a user in r/BreadTube: In the current state of the subreddit, posts of new creators are often difficult to find among videos of more well known creators. The more well known a creator is, the more likely the audience is to comment and engage with the post linking to the video. This leads to reddit’s algorithm making the content of already known creators more visible, since it strives to maximise engagement. However, I think the subreddit can and should support smaller creators. The first step in that is making those creators more visible. Let’s hack Reddit’s algorithm with one simple (and legal) trick. This can simply be circumvented by enabling a flair for new creators. This would enable interested members of the subreddit to search for videos specifically of creators that are just starting out, while not hindering the normal enjoyment of the subreddit. Once new channels are more visible, promoting them through other methods is also easier.

Figure 2. r/BreadTube small creator flair (screenshot from February 2022).

One additional example of a greater-good tactic is BreadTube.tv, an open source “distribution platform” built by members of the community to share BreadTube content. As the BreadTube.tv (n.d.) website explains, Services such as YouTube use hidden algorithms to serve content to users, the preferences of these algorithms are determined by YouTube’s values, which [. . .] is the generation of capital through Advertising. Replacing a service like YouTube is unfeasible for any community, let alone one as small as ours, however utilizing its features and circumnavigating the algorithm using our own listings is possible.

On a GitHub page seeking input on how to build BreadTube.tv “anarchistically,” Dirk Kelly (2019), one of the creators, lists the values that guide the project: “Decision making relative to the amount we’re impacted,” “Solidarity in our purpose,” and “Equity in the value created by this platform.” Kelly then articulates the project’s goal as “design[ing] a platform for our cause that challenges the existing power structures and their A L G O R I T H M S.” While it does not seek to supplant YouTube, BreadTube.tv represents a technical mechanism for subverting the platform’s capitalist structure in order to encourage greater equity than the community believes the platforms’ algorithms naturally permit.

In sum, while supporting lesser-known creators can be risky, time-consuming, and resource-intensive, the BreadTube community sees it as a sort of redistribution of visibility wealth, uplifting those facing material constraints, and generally increasing the collective visibility of the community. The above-described tactics demonstrate BreadTubers’ awareness of the community’s belief that supporting other BreadTube creators, namely those with fewer subscribers, is something they “should” do. In the formulation of these tactics, we can see a kind of knowledge in action. BreadTubers’ response to algorithms defies a strictly instrumental understanding of what tactics effectively increase visibility, as based on operational or propositional facts. Instead, BreadTubers seek to square their collective pursuit of visibility with the community’s shared values of solidarity and equity. Notably, earnest acceptance of these shared values is not necessarily what motivates the adoption of greater-good tactics. Mere awareness of the shared values and a desire to remain in alignment with the community, in some cases, may be the prevailing motivation. Whether earnestly felt or performative, supporting lesser-known creators invokes an ideological commitment to dismantling systems of power and promoting tactical unity among leftists.

Practical knowledge

BreadTube has cultivated an understanding of what YouTube’s algorithms mean in relation to prominent discourses circulating within the community and has subsequently formulated a sense of how they should respond. Against this background, I now formally introduce practical knowledge. Here, “practical” emphasizes knowledge located in practice, as shaped by local discourses. BreadTube demonstrates practical knowledge in the co-constitution of discursive readings of and practices around YouTube’s algorithms. BreadTubers read the algorithms as requiring individualistic pursuits of visibility, as animated by overarching discourses about the community’s disunity. In response, BreadTubers have developed tactics to boost the visibility of leftist content on YouTube that prioritize the community’s collectivist values.

As the BreadTube case study illustrates, practical knowledge captures how people locate and configure algorithms within their social worlds and orient themselves to them accordingly. It grows from collective self-conceptualization: a sense of who and what a social world is and what it values. Practical knowledge begins with a shared understanding of what algorithms “want” (which ways of being/doing/thinking are deemed valuable and rewarded) and a decision about whether and how to appease them. This decision inheres in a social world’s practices, with sensemaking structured by the system of relations therein. Thus, practical knowledge is knowing-as-becoming: it builds a social world and preserves it, as members dynamically account for algorithms in the shared interests, activities, discourses, spaces, and objects that comprise their system of relations. Beyond algorithmic know-that and know-how, which entail a sense of viable responses to algorithms, practical knowledge entails a sense of resonant responses.

Practical knowledge also stands in stark contrast to ways of knowing algorithms that focus on interactions between an individual and an algorithm, as abstracted from situations. While such knowledge is essential, it does not fully determine how users will behave or orient themselves to algorithms. When viewed as following from an understanding of what the algorithms do and how, BreadTube’s responses to YouTube’s algorithms might seem curious, irrational even. The concept of practical knowledge helps us look beyond a “planning model of human action” (Suchman, 2007: 31) rooted in cognitivism, which conceives of humans as fundamentally rational beings, disconnected from the context, locatedness, and embodiment entailed by people’s experiences with algorithms. Indeed, practical knowledge is the action. It is knowledge expressed intuitively in action. Moreover, it is more about sociality than cognition.

Conclusion

In this article, I described and empirically illustrated practical knowledge, a social practice-oriented way of knowing algorithms. In both scholarly and mainstream discussions of algorithmic knowledge, I urge efforts to account for practical knowledge as a legitimate form of local expertise that does not necessarily demand technical expertise. The foregoing analysis contributes a starting point for future work that can further flesh out the boundaries, dynamics, and implications of practical knowledge. I hope practical knowledge offers useful vocabulary in this work, but claiming its unconditional applicability to all possible contexts would be short-sighted. I do not mean to suggest that practical knowledge looks exactly the same for, say, algorithms deployed in criminal sentencing or assessing creditworthiness. Indeed, future work will need to attend to the local conditions under which people interact with algorithms, since practical knowledge is highly contextual in nature. Algorithms are boundary objects (Clarke and Star, 2008): they cut across myriad domains, perform different functions in different contexts, and affect people in different ways and to different degrees (Bucher, 2018). Methodologies that can accommodate context, contingency, and historicity lend themselves to the study of practical knowledge.

To close, alongside other ways of knowing algorithms, practical knowledge offers an additional reserve of insight about the co-constitution of people and algorithms, particularly relevant to questions of autonomy, agency, and power, as it comes into being through action. Those of us concerned with human–algorithm interaction should be mindful of the wisdom realized in people’s maneuvering around algorithms, which sometimes is only visible when cast against the backdrop of their social worlds.

Supplemental Material

sj-pdf-1-nms-10.1177_14614448221081802 – Supplemental material for Practical knowledge of algorithms: The case of BreadTube

Supplemental material, sj-pdf-1-nms-10.1177_14614448221081802 for Practical knowledge of algorithms: The case of BreadTube by Kelley Cotter in New Media & Society

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Science Foundation [grant number SES-1946678].

Supplemental material

Supplemental material for this article is available online.
