Abstract

This article explores relocated algorithmic governance through a qualitative study of the Ubenwa health app. The Ubenwa, which was developed in Canada using a dataset of infant cries from Mexico, is currently being implemented in Nigeria to detect birth asphyxia. The app serves as an ideal case for examining the socio-cultural negotiations involved in re-contextualising algorithmic technology. We conducted in-depth interviews with parents, medical practitioners and data experts in Nigeria; the interviews reveal individuals’ perceptions about algorithmic governance and self-determination. In particular, our study presents people’s insights about (1) relocated algorithms as socially dynamic ‘contextual settings’, (2) the (non)negotiable spaces that these algorithmic solutions potentially create and (3) the general implications of re-contextualising algorithmic governance. This article illustrates that relocated algorithmic solutions are perceived as ‘cosmopolitan data localisms’ that extend the spatial scales and multiply localities rather than as ‘data glocalisation’ or the indigenisation of globally distributed technology.

Introduction

The health sector has witnessed an increase in algorithmic solutions over the last decade. Although research has focused on evaluating the efficacy of these solutions from the perspective of governance, researchers have not investigated adequately the situated experiences of those in the (non)negotiable spaces that can be constructed by these algorithmic solutions.

Researchers have extensively studied the technical interoperability of algorithmic systems in social contexts and as a means for effective governance (Bengio et al., 2019; Hoque et al., 2020; Wimmer et al., 2018). Algorithmic governance, as a form of management by and based on technology, still tends to overlook social context-specificity because the idea of ‘data on the move’ instead of ‘people on the move’ clashes with the current, formal understanding of diversity. Our study contributes to the emerging paradigm of contextual research (Kasapoğlu et al., 2021; Loukissas, 2019; Masso and Kasapoğlu, 2020; Petrović et al., 2020) by assessing individuals’ perceptions about algorithmic governance and the tensions that arise in a local context. Because algorithms do not determine in absolute, non-negotiable ways how one space is converted into another (Kitchin and Dodge, 2014), this study assesses the mutual dependencies in algorithmic contexts, as expressed by individual end users in the process of algorithmic governance. Our goal is to advance the research on the situatedness of algorithmic governance by examining the on-the-ground understandings and potential tensions in implementing algorithmic solutions negotiated by and between parties in a local, socio-cultural context.

We conducted qualitative in-depth interviews with data experts, medical practitioners and parents to explore their perceptions of Ubenwa, an algorithmic mobile health (mHealth) application (app; hereinafter referred to as Ubenwa). Ubenwa detects birth asphyxia – a leading cause of neonatal mortality worldwide – by decoding the cries of infants through a machine learning algorithm that detects potential abnormalities in infant cry patterns. The algorithmic solution of Ubenwa is an ideal case study because the app was developed in Canada, the datasets were based on the cry patterns of babies born in Mexico and the solution is now being tested in Nigeria. Ubenwa is an exemplary case for investigating the potential socio-cultural tensions that arise from the delocalisation and re-contextualisation of algorithmic solutions.

We do not know how (dis)empowerment through data governance and self-determination is perceived or, in other words, the extent to which the implementation of the Ubenwa in Nigeria is accepted and re-appropriated at an individual level. Indeed, despite Nigeria’s adoption of Ubenwa according to the principle of ‘algorithmic good’, unintended issues may still emerge from the diversity of and differences in the socio-cultural contexts in the development, testing and implementation phases of the app. At the current stage, one in which the app is still not in use, we can at best reveal the prevalent understandings of the reallocation of power through cross-border algorithmic solutions and map the discourse of such potential reallocations of power.

The aim of our article is to reveal people’s perceptions about dislocated algorithmic solutions and the (non)negotiable spaces that these algorithmic solutions potentially construct. More specifically, we answer three questions: (1) How do individuals understand the Ubenwa as a negotiated algorithmic solution? (2) How do the Nigerian parties understand the multiple and potentially competing social contexts in which the data underlying the Ubenwa have been collected, developed and tested? (3) How do the Nigerian parties approach the re-contextualisation of the algorithmic solution in their local social context? In answering these questions, we explore how individuals perceive, based on their local socio-cultural perspectives and the (non)negotiable spaces that these algorithmic solutions potentially construct, the efficacy and legitimacy of algorithms as tools for global health governance.

Theoretical background

Algorithmic governance in context

We start from the theoretical assumption that algorithms are technologies situated in social contexts (Couldry and Mejias, 2019). Here ‘social context’ means the practical settings in which actions occur and desires have meaning, and the social interactions that inform and are informed by data. Algorithms are not simply neutral sets of rules (O’Neil, 2016) but are mathematical formalisations embedded in precise worldviews and localised socio-cultural values. However, such contextual views of algorithms are often neglected (Kitchin and Dodge, 2014).

Disagreements arise over how to understand the social fabric that underpins the design of algorithms and how algorithms, in turn, construct the context(s) in which they are implemented. Some studies treat context as a formal or technical category (Prince, 2020) in which algorithmic governance occurs rather than as something that algorithmic governance actively shapes. Others have warned against falling into a ‘contextual trap’ (Usher, 2020) that ignores the power geography (Prince, 2020) or the values and meanings that individuals ascribe to algorithmic solutions in specific socio-cultural contexts (Aizenberg and van den Hoven, 2020). Scholars have discussed in detail governance through algorithms (Latzer and Just, 2020) and its potential unintended consequences, such as automated inequalities and discrimination (Eubanks, 2018; Noble, 2018). However, aside from exploring the contexts in which the solutions are developed, researchers have not explained sufficiently how individuals in the contexts in which the solutions are implemented understand the relocated algorithmic solutions.

The concepts of data colonialism (Couldry and Mejias, 2019) and techno-colonialism (Madianou, 2019) have been proposed to explore the appropriation of territories through data. However, Calzati (2021) has called for theoretical elaborations to situate data colonialism in context and reveal its networked ecology. The interest in algorithmic governance, the locality of data and the situated use of algorithmic solutions are also often overlooked because of international institutions that focus on economic growth and effectiveness (Held, 2016; Mayer and Acuto, 2015). While Mayer and Acuto (2015) have suggested that new conceptual frameworks are required for considering the socio-technical geographies of today’s global governance, research is also needed to identify individuals’ socio-cultural perspectives about algorithmic governance in multiple and diverse contexts.

Recent approaches have brought social contexts into the discourse on algorithmic governance. Loukissas (2019) emphasises the shift from ‘data sets’ to ‘data settings’ and claims that data are always local and localised. In other words, data are created in context and by the context; data also shape that context. Data are transferred repeatedly across borders and across different spatial scales and contexts (Mühlhoff, 2020) to be used and re-used for different purposes in various settings. Although algorithms are produced through individual performances and social interactions that are (un)consciously mediated in relation to the mutual constitution of the code and space (Kitchin and Dodge, 2014), we still know little about how individuals perceive and understand the multiplicity of data contexts and the use of relocated algorithmic systems.

Our study relies on a relational approach to social contexts (Couldry and Mejias, 2019; Kitchin and Dodge, 2014), which suggests re-examining the human–technology relationship as a multi-layered process. According to this approach, we assume that the value and meaning that individuals confer to algorithms depend on the contexts in which people access them and on their perceptions about where the technology belongs and who owns it. The relational approach is based on the ways that individuals perceive themselves in mutual relation to the social context(s) in which algorithmic governance evolves, is designed and is implemented (Archer, 2017; Donati, 2021; Masso et al., 2020a). The relational approach provides us with a framework to explore whether and how individuals attribute qualities to global algorithmic systems through their perceived and localised social relationships.

In summary, in this article, we propose the concept of (non)negotiable spaces of algorithmic governance. The concept involves a set of dynamic structural, cultural and social practices through which algorithmic applications are mutually created, accessed and interacted with. We assume that the intersections of contexts and algorithms are most visible in cases in which data technologies are relocated. We also assume that we can study these intersections by exploring individuals’ perceptions about them. Our article explores individuals’ understandings of the multiple social contexts of health governance and thereby contributes to a better understanding of contextual thinking in algorithmic governance.

Multiple contexts of health governance

Algorithmic governance in health care has a relatively long tradition (Maglogiannis et al., 2007). Increased data availability and cross-border data sharing have brought health algorithms to global attention. At present, the increase in both data and interest has not gone hand-in-hand with a critical understanding, especially from a socio-cultural perspective, of the tensions inherent in health algorithms.

By examining individuals’ perceptions about the Ubenwa mHealth app, we unravel these issues and explore the ways in which the related parties perceive the application of an algorithm developed in another context. The app records a child’s crying and analyses the cry’s amplitude and frequency patterns to provide an instant diagnosis. The underlying algorithm was developed, trained through machine learning and tested in Canada using an initial dataset of 1389 asphyxiated and non-asphyxiated samples of infant crying from the ‘Baby Chillanto’ database in Mexico (Onu et al., 2017; Rosales-Pérez et al., 2012), which has been tested extensively and used by research institutions (Jeyaraman et al., 2018). Ubenwa is now in clinical trials in both Canada and Nigeria and is tested on real-life patients; the app continues to collect and annotate infant cries (Onu et al., 2020). Ultimately, the adoption of Ubenwa in Nigeria is intended to provide an accessible diagnostic tool for detecting birth asphyxia in a resource-poor country.

Compared with existing diagnostic mechanisms for detecting birth asphyxia, such as the Apgar method, Ubenwa is 95% cheaper, offers an immediate diagnosis and requires little or no expertise (Onu et al., 2020). However, the socio-cultural understandings and meanings that individuals ascribe to this negotiated algorithmic solution remain unknown. To overcome this gap in knowledge, we examine the perceived benefits and challenges of the Ubenwa as articulated by data experts, medical practitioners and parents in Nigeria. We assume that how the application has been developed and is currently implemented represents a contextual, if not contested, instance of algorithmic governance.

Prior research has explored thoroughly the potential of mHealth apps in developing countries where there are shortages in health care resources (Chib, 2013; Hoque et al., 2020; Steinhubl et al., 2015). Innovation in mHealth apps is expected to bridge the gap in resources and opportunities between developed and developing countries (Chib, 2013) and between urban and rural areas (Shukla and Sharma, 2016), thereby providing better health care solutions for diagnosis, consultation and counselling. The existing literature has also demonstrated the usefulness of interventions through mHealth tools (Early et al., 2019) and the potential of mHealth tools to transform how health care is delivered and to improve its quality (Steinhubl et al., 2015).

Researchers have examined in detail the technical barriers to the implementation of mHealth solutions in multiple contexts, such as a moderate readiness for interoperable health care systems and integration with existing health information systems (Hoque et al., 2020; Ojo, 2018; Wimmer et al., 2018). Other studies have addressed ethical concerns about data privacy, security and trust that arise from relocating algorithms across contexts (Jokinen et al., 2021; Steinhubl et al., 2015); others have addressed the social contextual and individual variations in applications of mHealth solutions (Ibrahim et al., 2017; Smahel et al., 2019). International evaluation criteria have been introduced (Hoque et al., 2020) to tackle barriers to the implementation of algorithmic solutions across contexts.

In summary, algorithmic solutions that cross borders provide valuable resources for developing countries. Research has explored the technical barriers but has largely ignored the local, socio-cultural understandings that individuals ascribe to these algorithmic solutions. Kitchin and Dodge (2014) suggest that algorithms do not convert space in a universal, non-negotiable way, so that not all assumed determinations occur similarly in all social contexts. Nevertheless, researchers rarely examine people’s understanding, situated in a socio-cultural perspective, of the contextual implications of the dislocation and relocation of negotiated algorithmic solutions. To avoid future failures, it is essential to examine the perceptions of those directly involved in the use and implementation of algorithmic solutions and how they understand the (non)negotiable spaces constructed by global algorithmic governance.

Methodology

This study used in-depth interviews as the main method for exploring how people understood and negotiated the Ubenwa as a relocated algorithmic solution. We first conducted an online survey among Nigerians (n = 171) to map the general understanding about, expectations of and perceived challenges for health governance through algorithms; snowball sampling was combined with posting a link to the survey in thematic Facebook groups for parents of new-born babies in Nigeria. We then used the survey results to compose the plan for the interviews, the main method of this article, focusing on the understandings, expectations and perceived problems highlighted in the survey.

Purposeful sampling

We applied a purposeful sampling technique (Suri, 2011) to collect interview data. As indicated in previous theoretical (Kitchin and Dodge, 2014) and empirical studies (Masso and Kasapoğlu, 2020), the application of the relocated algorithmic solutions assumes mutual negotiations of algorithms and space through multiple, individual agencies.

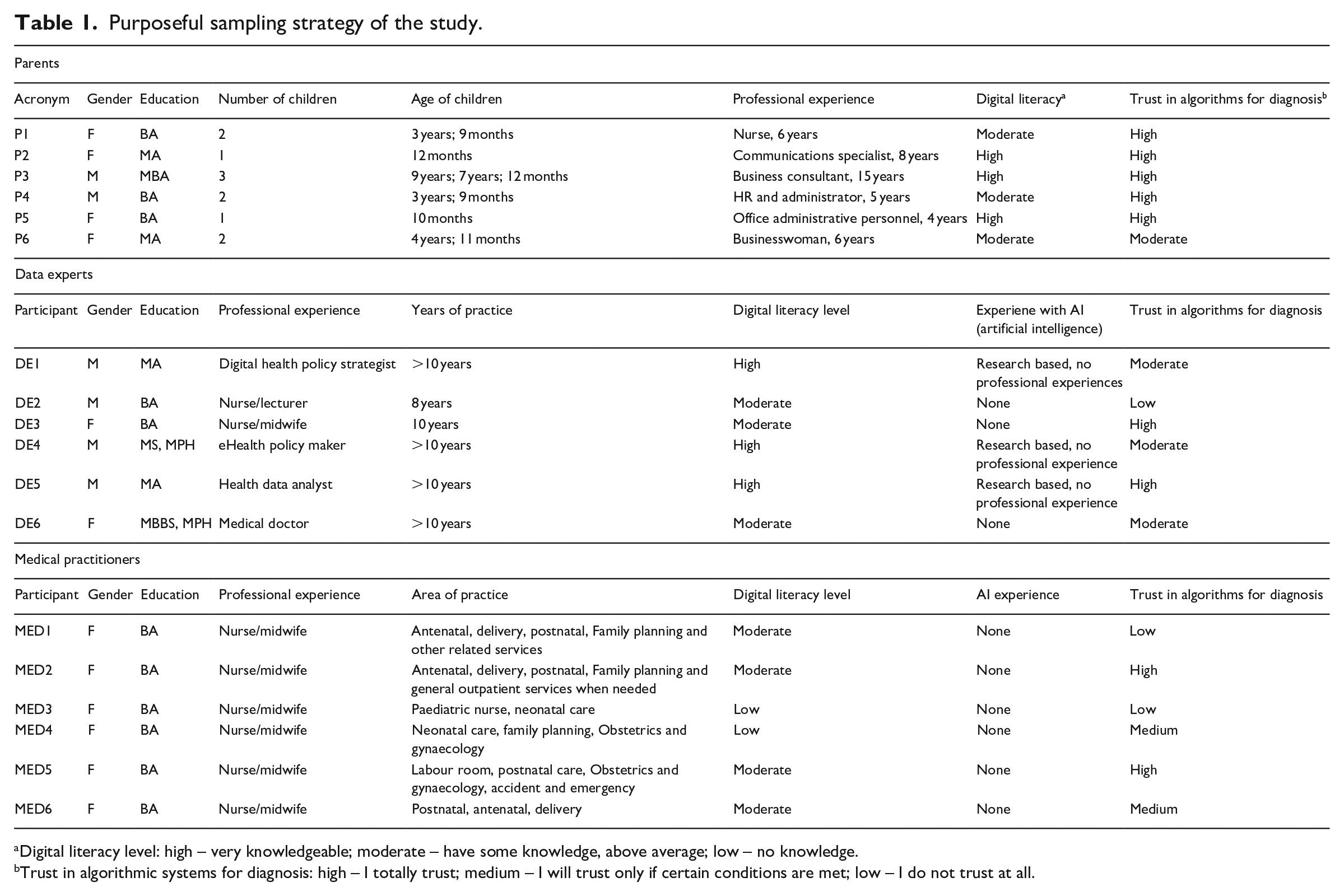

The sample for this study included three main groups of interviewees in Nigeria (N = 18): (1) parents, the main target users of the Ubenwa (n = 6); (2) data experts involved in the development and implementation of algorithmic solutions (n = 6); and (3) medical practitioners, who provide support to parents using the Ubenwa (n = 6). The data experts are key stakeholders of digital health in Nigeria and consist of health officials and individual researchers who potentially contribute to mHealth solutions. The medical practitioners, including experienced nurses and midwives, mediate the experience of disadvantaged groups (e.g. people who are illiterate and people from rural areas) and represent the decision makers who interpret the outcomes of the Ubenwa algorithm. In this study, parents represent the end users of the app and were interviewed to evaluate the user experience, which is crucial for algorithmic solutions.

All participants possessed fairly good digital literacy insofar as they were relatively well educated; thus, they could voice their experiences and opinions about data, algorithms and global algorithmic governance, which are all abstract topics (see the explanation of study limitations in the ‘Discussion’ section). These subsamples were varied purposefully by gender, age, field of expertise, years of practice and so forth to ensure a variety of perspectives about the Ubenwa as a negotiated algorithmic solution. Table 1 provides a detailed description of the interviewees according to relevant contextual information.

Table 1. Purposeful sampling strategy of the study.

Digital literacy level: high – very knowledgeable; moderate – have some knowledge, above average; low – no knowledge.

Trust in algorithmic systems for diagnosis: high – I totally trust; medium – I will trust only if certain conditions are met; low – I do not trust at all.

Design and procedure

To facilitate interviews and capture participants’ perspectives on the Ubenwa, we developed semi-structured questions. The interviews combined open-ended questions with projective techniques (Soley and Smith, 2008) and the walk-through method (Light et al., 2018).

The interview plan covered the following key topics: (1) general understanding of and personal experience with algorithmic health management and (2) perceptions about the Ubenwa regarding the contextual multiplicity in which the solution was developed and implemented. We adopted the walk-through method to analyse participants’ perceptions about the Ubenwa. We designed and used pictorial posters (please see Appendix 1) about the app’s various functional interfaces to help participants express their understanding of the app during interviews (posters were based on Leuthner and Das (2004), Onu et al. (2017) and Ubenwa Health (2021)). The posters contained images of the step-by-step documentation of Ubenwa’s screen functions and process flows. We asked descriptive (e.g. What do you generally think about the Ubenwa app?) and analytical questions (e.g. How do you understand the Ubenwa as a relocated solution? How would you compare it with the more traditional Apgar method? Why would you use/resist the Ubenwa as a relocated solution?) to encourage discussion and elicit prevalent perspectives about the Ubenwa as a negotiated algorithmic solution.

In most cases (except for two interviews with data experts), interviews were conducted face to face to allow direct contact with the participants and to gather non-verbal cues in addition to what they said. The face-to-face interviews were conducted in Abuja, Nigeria, in the places where interviewees felt most comfortable (i.e. home, workplace). The remaining interviews were conducted online on Skype to mimic face-to-face scenarios. Interviews lasted around 1 hour. With the interviewees’ consent, interviews were recorded and transcribed verbatim; when interviewees did not consent to recording, a detailed written report was drafted. Thematic analysis was applied to the transcripts to identify general thematic patterns (Braun and Clarke, 2006), and we combined manual and computer-aided analysis techniques (Woolf and Silver, 2017). Transcriptions were imported into MAXQDA to sort and organise the collected interview data. Codes were applied to identify, categorise and compare data on key topics, patterns and relationships (Kuckartz and Rädiker, 2019).

We used primary background information about the interviewees (Table 1) to contextualise and interpret the results. Moreover, to further frame our findings with additional background information (Charrington, 2018; Laffan, 2020; Latremouille, 2020) and to explore the interviewees’ understandings about how Ubenwa interacts with the contexts in which the solution was designed and developed, we relied on three video interviews conducted with the Ubenwa development team in 2018, 2019 and 2020.

The current study complies with policies on ethical research and protects the anonymity of the interviewees. To maintain confidentiality, we coded the interviewees using abbreviations, P (parents), DE (data experts) and MED (medical practitioners), followed by Arabic numerals 1–6. The results are presented below in three categories: (1) health governance through the lens of the Ubenwa developed in Canada, (2) understanding of the ‘data settings’ from Mexico and (3) perceptions about the potential indigenisation of the Ubenwa in Nigeria.

Findings

Overall, the results of the initial survey show that, although only 33% of the respondents expressed acceptance of testing the Ubenwa on their babies, about half of the total sample surveyed (52%) were amenable to the use of algorithmic solutions to diagnose their children’s health generally. This suggests that further in-depth exploration of individuals’ understandings about the contexts in which the Ubenwa is implemented is needed to uncover the potential ‘negotiated nature’ of the solution and to avoid failures in global algorithmic governance.

Health governance through the Ubenwa

Generally, the interviewees perceived the Ubenwa, which was developed in Canada, as a positive solution and tool to compensate for the limitations of Nigeria’s health care infrastructure. The solution was perceived to be valuable, especially in remote areas where clinics are poorly equipped, for reducing infant mortality drastically.

Positive responses from both data experts and medical practitioners were based on their expectations that the Ubenwa would diagnose asphyxia quickly and provide support to end users with little or no clinical experience and, more generally, to the Nigerian health care system. Respondents described Ubenwa’s advantages as ‘fast’, ‘quick diagnosis’, ‘effective’, ‘immediate’, ‘timely’, ‘innovative’ and ‘easy’. Given how informants described the advantages, they tended to perceive Ubenwa as an efficient – often an ideal – form of algorithmic governance. The efficiency ideal has been noted not only by the Ubenwa developers (Laffan, 2020; Latremouille, 2020) but also in contexts in which the solutions were implemented, as witnessed by interviewees’ perceptions and the presentation of the Ubenwa app during the interviews. As two interviewees noted:

I am quite impressed with the developers’ quest to meet a critical need in the Nigerian healthcare industry. Birth asphyxia is no joke, it’s a matter of life and death. (DE6)

The advantage is technological advancement in our health sector . . . Technology has the capacity to improve quality of care. (MED2)

However, other interviewees expressed concerns about algorithmic governance solutions for global health. Experts perceived that data collected outside of those contexts in which algorithmic solutions would be implemented could be biased. Some cited potential issues for diagnosing birth asphyxia on the basis of cry data alone. A data expert believed the app might lead to misdiagnoses because of contextual variations in crying patterns as well as instances when infants with asphyxia do not cry at all (DE4). A medical practitioner expressed reservations about changing their work practices as a result of introducing and using algorithmic solutions, especially when their use would occur in place of more traditional methods (please see Appendix 1). These traditional methods, such as a physical examination and the Apgar method (MED1), were perceived as more reliable because they involved more human intervention during physical examinations and the clinical decision-making process.

There are cases of severely asphyxiated babies who do not cry. In such cases Ubenwa would not be useful and such babies stand a high risk of death. (DE4)

I believe more in using physical examination – skin colour, activity; I prefer using those than crying. Crying is one . . . but can’t diagnose perfectly. (MED1)

The data experts also expressed their fears insofar as they are at the intersection of global data governance and local self-determination. For example, one data expert expressed their fear that the Ubenwa could possibly harm users in implementation contexts or when medical experts rely solely on the algorithm to treat new-borns. If a child were misdiagnosed with birth asphyxia via Ubenwa, the child would be given unnecessary treatment, which may have unintended consequences.

It may not diagnose early, or it may misdiagnose and result in false-positive; and if these biases are there, if the diagnosis is not right, then a lot of things can go wrong. (DE1)

Interestingly, some parents understood the potential harms of algorithmic governance as only including physical harms, so they did not consider the potential risks of non-invasive diagnostic tools. One parent, however, argued that Ubenwa could cause harm if it were made available to the public: people would lack the expertise to interpret the results and might mishandle cases of asphyxia (P1). A data expert, in turn, expressed concern about the potential harm to individuals if their data were accessed (DE2).

Anybody even a layman [can use the app] that doesn’t know about care of a child. . . . So that is why I think that is a harm, because the layman would take the judgement of the machine . . . there needs to be someone that learns when the app is being used. (P1)

There should be certain measures to make sure that information put into the app is not accessible to everybody. Some parents will not want their personal information out there. (DE2)

In general, our findings reveal that in algorithmic governance, the multiplication of contexts not only involves global solutionist (Madianou, 2019) and developmentalist (Horner, 2020) ideals but also attracts concerns about potential technical or physical harms, as expressed by data experts and parents, respectively. Thus, dislocated algorithmic governance may not always be subject to algorithmic abstraction and may occur neither deterministically nor universally. Respondents perceived the relocated algorithmic governance of the Ubenwa as enhancing the effectiveness of the health care infrastructure in Nigeria. However, while respondents expressed both acceptance of and resistance to algorithm-based diagnostic methods, they expressed acceptance of traditional human-led approaches.

The ‘data settings’ from Mexico

Despite the significant benefits of global algorithmic solutions, the interviewees expressed several concerns about the data source (i.e. Mexico) because it was not the same as the implementation context (i.e. Nigeria).

The developers of Ubenwa used the Chillanto database and, as noted in interviews with the development team, the database is unique (Charrington, 2018). Interviewees from each group thought that the data from Mexico were a valuable resource for improving the health sector and promoting social well-being overall in Nigeria. The data from Mexico were perceived to be ‘good’, ‘effective’ and ‘useful’ for the ultimate goal of preventing death through asphyxia in Nigeria. As two experts noted:

What matters is the Ubenwa app is working effectively irrespective of the country data. (MED2)

Countries that have data should use their data, but others that did not can use data from other countries. Half bread is better than none. (DE5)

We provided our interviewees with information that the development team disclosed in their interviews (Charrington, 2018) regarding their reasons for using data from Mexico. In response, our interviewees were also cautious about using non-local data. Interviewees expressed concerns not only about the validity of data underlying the algorithm, as discussed in the previous section, but also about how their localised meanings were not utilised in the development of the app. In other words, respondents understood data as having socio-cultural ‘settings’ (Loukissas, 2019) that frame and give meanings to actions rather than as being formal data sets.

Because most of the interviewees had little direct knowledge of Mexico as a country and only knew of the country from TV, they perceived the data from Mexico to be unfamiliar and unsafe. According to the interviewees, these ‘data settings’ from Mexico include local lifestyles and perceptions about emotional expression, parental care and health habits, which differ from typical Nigerian practices. One parent emphasised:

Everything is not the same everywhere; norms, customs, traditions, upbringing, environment determine a lot of things. (P3)

And a data expert said:

Because there would be environmental differences, social differences, terrain difference, cultural difference, those things would definitely come into play. (DE3)

Beyond that, data experts, unlike parents and medical practitioners, highlighted more technical and ethical issues involved in deploying the app in a context other than Mexico. These issues include lack of accountability and transparency, both of which were studied previously (Jokinen et al., 2021; Steinhubl et al., 2015). Interviewees questioned the ‘goodness’ of the data from Mexico from a socio-cultural (DE2) and a physiological (DE3) point of view such that they ascribed cultural meanings to the data, including variations in behaviour. As two experts noted:

Data sources are unique to people and locations. (DE2)

I don’t know if Mexican babies cry with their mouths closed, so long as they cry with their mouths open and their vocal cords are intact, I think it’s the same sound they would give out. (DE3)

Unlike the experts, parents mentioned that certain socio-economic connotations may have influenced the data from Mexico and, consequently, may have affected how the algorithm interprets data from a Nigerian context. While the interviewees emphasised the importance of the Ubenwa for supporting diagnoses in countries with higher risks of asphyxia due to limited economic and health resources, some parents questioned the applicability of cross-border solutions because the developers did not consider differences in local socio-economic conditions.

I don’t think it turns out well. It’s just like going to a well-formed economy, collecting data of standard of living, and then using that to form a policy in another country where the standard of living is low. (P5)

In summary, the interviewees expressed worries about whether and how global algorithmic governance could be extended across social contexts, especially when the mutual relationships between algorithms and social contexts are constantly (re)produced through individual performances and social interactions. Therefore, data sources perceived as ‘external’ are understood both as empowering the social contexts in which the algorithmic solutions are implemented and as potentially loaded with cultural meanings, norms and customs that are more complex than the dyadic conversion from one place to another. The interviewees understood data as ‘settings’ (Loukissas, 2019) with clear socio-cultural and socio-economic boundaries, including localised lifestyles, norms and identities, as well as different emotional significances attached to these boundaries.

Localising Ubenwa in Nigeria

The interviewees discussed extensively the notion of indigenising the Ubenwa algorithmic solution with local data from Nigeria. Interviewees from each group believed that the use of local data would promote the acceptability of algorithmic governance of health in the local community.

The data experts were aware of, and emphasised, their crucial role in mediating trust between themselves and the parents and medical practitioners as end users of the app (DE1). Moreover, they believed that trust could be established more effectively if local data were used, which could be perceived more readily as their ‘own’ and ‘safe’. One interviewee also thought that local data would improve diagnosis (MED5); another thought it would reduce errors and promote trust through data ownership (P4): Local data would help in its adoption and convince people, especially experts who will be the advocates of this tool. (DE1) Using Nigerian data would ensure accuracy in diagnosis because the app is trained with the right sample. (MED5) It would encourage ownership. . . . It will kind of build faith and belief that, yes, this works because having the data is one thing but agreeing that yes, this works is another thing. (P4)

At the same time, some interviewees had worries about using data from Nigeria as the dataset of the Ubenwa algorithm. Some data experts and parents perceived that the data from Nigeria would be of low quality because of a lack of standards for and trust in the collection and management of such data. The interviewees expressed fears that data from Nigeria might be falsified for specific interests (DE5) and therefore could be considered a potential source of power and manipulation. One interviewee thought that data falsification would occur in order to provide a positive image of the health system (P2).

I do not trust data from Nigeria because they are not accurate and full of errors. (DE5) In Nigeria, people want to be seen a certain way, so they’ll always falsify data. People are always lying about data. (P2)

Other interviewees thought that using data from Nigeria as the dataset for the Ubenwa algorithm would reflect local socio-cultural diversity. However, they also thought that it would be challenging to achieve a sufficient representativeness of contextual diversities.

A medical practitioner emphasised: Something’s happening in the North cannot be compared to the one in the South . . . we are very diverse, our culture, environment and so many things. (MED1)

A parent expressed their concern: Also ensuring the collected samples covers the diverse regions in Nigeria, because as I said earlier, we are very different even amongst ourselves as Nigerians. (P6)

The interviewees advanced several suggestions for further developing the Ubenwa and for considering the social contexts in which it is implemented. A medical practitioner (MED3) proposed that data could be collected from areas across the country to ensure higher accuracy and a better performance of the algorithm across various socio-cultural contexts. Another medical practitioner emphasised their key role in mediating the use of algorithmic solutions in areas with fewer health care or digital resources (MED2).

Instead of testing in just one clinic in Nigeria, they should have expanded the scope of testing. That way, they can gather more data from the different regions to improve the system. (MED3) To provide other options for accessing the service, especially for practitioners in the villages. For example, they might not have electricity to charge their devices and other problems might arise, so it’s important to devise ways to make Ubenwa easily available for such cases. (MED2)

Data experts, by contrast, claimed that the Ubenwa used non-local datasets because of the lack of a comprehensive framework to regulate the development, testing and use of global algorithmic solutions in local contexts. The experts recommended the adoption in Nigeria of guidelines for ethical artificial intelligence (DE1) or the involvement of stakeholders, in particular clinicians and technology experts, to ensure data collection in different hospitals (DE5).

We do not have such guidelines, but we can adopt one of those guidelines from any country that have issued guidelines like that . . . (DE1) I will encourage clinicians and technology experts to work together. . . . This will make the system more robust as different perspectives and experience would come into play. (DE5)

Parents suggested that a larger portion of the population, and not just local community members, government and health professionals, needed to know about and be involved in the local implementation of global algorithmic solutions. For example, one parent said, Create awareness . . . because when you create awareness that would determine how it’s going to be used. Because if you don’t know much about it, it won’t be used. (P1)

In summary, the interviewees suggest that negotiations are required to enable active contextualisation and localisation of global algorithmic solutions. The context–algorithm negotiations, based on the example of the Ubenwa, tend to blur and extend spatial scales from the global–local axis to a multiplicity of localities. The medical practitioners perceive themselves as intermediaries in the relocated algorithmic governance, on the one hand, between parents and data experts and, on the other hand, between Canada, Mexico and Nigeria. This ‘triadic closure’ ensures that local socio-cultural differences, the technical interoperability of global algorithmic solutions and trust in those solutions are all considered.

Discussion

Our article aims to reveal the perceptions about dislocated algorithmic governance and the (non)negotiable spaces that these algorithmic solutions potentially construct. We looked at the Ubenwa mHealth app. The app was developed in Canada, its database consists of cry patterns of infants born in Mexico, and the app was tested and is now being implemented in Nigeria. We conducted in-depth interviews to explore how those (i.e. data experts, medical practitioners and parents) who may be involved in the implementation of this relocated algorithmic solution understand the potential (dis)empowerment of infants who may be marginalised as data subjects.

Throughout the article, we have understood algorithmic governance as a set of dynamic contextual practices in which algorithmic applications are created, accessed and interacted with. We presented an empirically grounded approach to algorithmic governance-in-context to explore people’s insights about (1) relocated algorithms as socially dynamic ‘contextual settings’, (2) the (non)negotiable spaces that these algorithmic solutions possibly construct and (3) the general implications of re-contextualising algorithmic governance.

First, our research deepens the understanding of algorithms as relationally developed in contextual settings (Kitchin and Dodge, 2014; Loukissas, 2019). Our study highlights that the design of algorithmic solutions in diverse social contexts requires data that are treated as ‘social shapers’, which must be both trusted and socially identified with, so that designers of algorithms can prioritise all reliable practices. Our study provides support to prior studies (Kitchin and Dodge, 2014; Loukissas, 2019) and corroborates the view that algorithmic governance does not have a prevailingly global format. Instead, because algorithmic governance depends on context, data subjects, who are often marginalised, go unheard in an attempt for universalism in algorithmic governance. Therefore, while the discipline of algorithmic governance has developed a high level of theory, the discipline needs to catch up with individuals’ practices, knowledge and understanding.

Our study also illustrates how relocated algorithmic governance does not follow the logic of localisation, or ‘data glocalisation’, in global data solutions (Kitchin and Dodge, 2014). In that process, global data technology is indigenised as soon as it is applied to contexts other than those in which it was developed. Instead, we suggest that relocated algorithmic governance follows a relational logic in which the ability of algorithms to move across state borders depends on the multi-layered assemblages of social relationships within and between the multiple social contexts. Such datafied cosmopolitan localism (Sachs, 1992) is based on not only the valuing of contextual diversities as a universal right but also the sovereignty of data and lives. This is a process in which global algorithmic governance translates contexts into nodes in a global network across which people can access distributed health care.

Second, our study contributes to the understanding of the re-localisation of algorithmic solutions in multiple contexts. Our research shows how the multiplication of contexts carries, in addition to solutionist (Madianou, 2019) and developmentalist ideals (Horner, 2020), socio-cultural issues tied to the diversity, thickness and specificity of each context. These socio-cultural issues inform and are informed by the use of these same ‘relocated’ solutions. Accordingly, our study confirms other research, particularly research that advocates a paradigm shift in how academics understand learning machines (Bengio et al., 2019; Masso and Kasapoğlu, 2020). In other words, we ought to think of machines as capable of learning from social contexts and individual experiences, rather than as autonomous subjects.

Third, our study highlights that, while relocated algorithmic solutions are increasingly used to respond to local problems they are designed to solve, they must also negotiate potential cross-contextual tensions. This calls for ‘negotiating algorithms’ that are able to learn from contextualised usage and account for and accommodate the tensions that arise when multiple contexts (and their respective governance) coalesce.

Our study has some limitations. In exploring the multiplicity of contexts in which algorithmic systems are developed and implemented, we inevitably face the challenge of embracing a wide range of individual perspectives. Unfortunately, we were unable to gather data on the perspectives of experts from Mexico and Canada. Instead, we chose to explore how people in Nigeria perceived the local and non-local attributes and powers as they connect to global algorithmic systems. Moreover, because of the COVID-19 pandemic, we were prevented from investigating the views of people from rural areas of Nigeria. However, we were able to interview medical practitioners who had constant contact with people in rural communities as part of their daily work.

Future research might implement a longitudinal design that follows algorithmic solutions from their conceptualisation and design through subsequent development and testing to potential relocations. Future research could also take a latitudinal approach to assess the extent to which an algorithmic solution has been relocated in different contexts and with what ‘adaptive’ results. Our study was based on the views of people from Nigeria, where the app is being implemented; therefore we hope that the empirical results provide valuable information for further adaptations of the Ubenwa.

Acknowledgements

The authors thank all the interviewees who participated in this study.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work has been supported by the development programme ASTRA of Tallinn University of Technology for years 2016–2022 (2014–2020.4.01.16-0032) and European Commission through the H2020 project Finest Twins (Grant No. 856602).