Abstract

Despite the immense influence of machine translation (MT) on cross-cultural communication worldwide, little is known about end users’ predispositions toward MT. Our online experiment (N = 284) compares people’s perceptions of MT and human translation in an ethically charged situation, in which the translation serves an immigrant worker in an interaction defined by power imbalance. Using hierarchical linear regression, we found that an otherwise identical translation was evaluated differently depending on whether it was attributed to MT or to a human translator. Results reveal that translators and non-translators alike exhibit a negative bias toward the MT product when asked to assess its accuracy and reliability, its ability to convey cultural and emotional otherness, and its potential effectiveness in helping the disadvantaged immigrant in need of the translation. We also demonstrate how lower evaluations of the MT product lead to a stronger wish to intervene in the translation by introducing changes to the original message. Our results suggest that predispositions toward MT must be taken into account in any consideration of MT-mediated communication, as these predispositions may shape the communicative act itself.

Introduction

Boosted by globalization and the rapid developments of the digital era, communication across languages and cultures via translation has proliferated. The ubiquity of translation is not limited to a particular domain of social life: there has been a dramatic rise in commercial translation and localization as well as multilingual communication in individual, professional, and institutional settings (Cronin, 2012). While the increased volume and accessibility of translation have stemmed from various technological advances, perhaps no development has been as crucial as machine translation (MT), in particular freely available online systems such as Google Translate (Google, 2016). The technology’s high availability and synchronicity have extended the use and influence of MT to everyday situations typical of multilingual societies, which carry important social and ethical implications (Vieira et al., 2020). However, common attitudes toward MT and the heuristics involved in evaluating its product remain largely unknown.

MT refers to fully automated software that can translate a source text in almost any language into almost any target language of the user’s choice. The last few years have seen MT quality increase to a level that some scholars claim is on a par with human translation, in certain texts and between certain languages (Hassan et al., 2018). While there is no consensus about the validity of this claim, or more broadly about the criteria used to measure MT quality (Pym, 2020a; Toral et al., 2019), few doubt that MT technology has greatly improved in recent years. This improvement has made MT one of the more pervasive cases of Artificial Intelligence–Mediated Communication (AI-MC), conceptualized as “interpersonal communication that is not simply transmitted by technology, but modified, augmented, or even generated by a computational agent to achieve communication goals” (Hancock et al., 2020: 90). MT replaces in full a source text of whatever length with an equivalent text in the target language, and does so autonomously with respect to the myriad semantic, syntactic, and morphological choices involved in the production of the translated text. While today’s data-driven algorithms perform a statistical analysis of large corpora of existing human translations, how MT actually arrives at its translation decisions remains largely uninterpretable even to its programmers, on account of its deep learning approach (Melby, 2020: 430–433). MT thus joins the automation of other aspects of communication that until recently were deemed quintessentially and exclusively human activities, as generally opaque AI-MC algorithms are now able to communicate emotions and perform acts that have moral implications (Hohenstein and Jung, 2020; Yao et al., 2017).

Despite the immense influence of MT on communication across languages around the world, and the burgeoning research on automation, scholars have not yet examined how end users preconceive and evaluate MT, in comparison with human translation, through a sociological and psychological rather than technical-linguistic lens. While computer scientists have concentrated on evaluating the quality of the MT product in terms of its semantic and syntactic properties (Toral et al., 2019), sociological approaches tend to focus on translators’ attitudes to MT much more than on the attitudes of (non-translator) end users of MT. However, studies have shown that end users’ perspectives and practice are highly conditioned by the digital media of communication, including by AI-MC technologies and algorithms (Sundar et al., 2015). People’s preconceptions of and trust in algorithms may influence, for better or worse, their evaluations of an algorithm’s effectiveness, authenticity, fairness, trustworthiness, and aesthetic value (Jago, 2019; Lee, 2018). Indeed, with respect to the use of algorithms, human perceptions may play just as important a role as the actual quality of the AI product (Dietvorst et al., 2015).

This article draws on the insights and methodology of recent automation studies to examine people’s preconceptions and evaluations of MT in comparison with human translation, in an ethically charged situation. In the last decades, translation scholars have shown how translation, as an act of communication across nations and cultures, is often governed by power relations and may have important ethical implications (Tymoczko and Gentzler, 2002). No longer understood merely in terms of linguistic equivalence, translation is now seen through an ethical lens as scholars emphasize how translation may reflect and influence individuals’ and groups’ social position and accessibility, cultural representation, cooperation, and trust (Pym, 2012). Translation in migration and immigration contexts is a particular case in point (Inghilleri, 2017). The rise in the quality of MT and the ubiquity of its use through freely available systems online have made ethical dimensions relevant to MT use as well. As with other instances of AI-MC, MT is already used in situations of power imbalance between communities and languages, and the use of MT in such situations is only likely to increase. This makes it all the more important to understand people’s preconceptions of MT, as these may influence how it is actually used and perceived, and what its social implications are.

This article aims to contribute to a better understanding of AI-mediated acts of communication, by asking how people perceive and evaluate a software-produced translation. We show that professional translators and non-translator users alike evaluate an otherwise identical translation differently depending on whether it is attributed to machine or to human translation. In particular, we wished to know how people evaluate the features of an MT product in an ethically charged situation that involves a disadvantaged immigrant worker in need of the translation. To this end, we presented participants with a scenario where an immigrant worker was wronged and needed to have his official complaint translated, and examined whether people evaluated differently, in each condition (human translator/MT), the translation’s accuracy and reliability, its ability to convey cultural and emotional otherness, and its potential functional effectiveness in helping the plight of the immigrant in need of the translation. Our results reveal that people exhibit a negative bias toward the MT product and give it lower evaluations when asked to assess these features of the translation. We also show the role played by people’s expectations of the quality of the translation they were to receive: how the degree of positive surprise at the MT product, resulting from prior expectations of lower quality, influences the eventual outcome of people’s evaluations. Finally, we demonstrate how the lower evaluations of the MT product lead to a stronger wish to introduce changes to the translated text, at the expense of faithfulness to the original message, to help the disadvantaged person in need of the translation. Our results suggest that the existing predispositions toward MT must be taken into account in any consideration of MT-mediated communication that has ethical and social implications, as these predispositions may shape the communicative act itself.

Literature Review

Machine Translation

MT has existed since the 1950s, but it was the paradigmatic shift from rule-based MT to statistical MT in the last 20 years, and particularly the recent advent of deep-learning-based neural machine translation (NMT), that raised its quality level immensely (Melby, 2020). Unlike other developments, such as computer-assisted translation (CAT) technologies, NMT is a proverbial black box that lacks algorithmic transparency. It draws on very large parallel corpora of existing human translations paired with their source text segments (usually at the sentence level), using a deep learning approach to determine probabilities of translation outputs by means of complex recursive neural networks (Forcada, 2017). For MT’s end users, however, the inner workings of the algorithm are not only impenetrable, but also largely irrelevant. MT receives a source text as input and provides its translation in another language as output, without any human decision-making in any part of the computational process. As such, MT does not specifically serve professional translators. Freely available MT systems are accessible online to all, in developed and developing countries alike. With close to 150 billion words being processed by Google Translate every day (Davenport, 2018), it is clear that MT’s social implications are not limited to the translation community, but have an increasing effect on multilingual communication in a globalized world.

Studies on the perceptions and social implications of MT have thus far concentrated on professional translators’ perspectives on MT as a threat posed to their industry (Vieira, 2020a); the conditions under which translators tend to adopt or reject MT for their work (Cadwell et al., 2018); the transformation in translators’ practices, self-perception and professional status, wrought by the advances of MT (Läubli and Orrego-Carmona, 2017; Sakamoto, 2019); among others. Past research largely attests to translators’ apprehension and lower appreciation for the MT product in terms of quality and usefulness, though some of the resistance decreases when translator agency is retained at the stage of software development and implementation (Rossi and Chevrot, 2019). As MT becomes widely used by non-translators in various social contexts of communication, the strong emphasis on professional translators’ attitudes to MT limits our understanding of broader social effects of this technology. It therefore seems crucial to take both translator and non-translator populations into account when exploring people’s perceptions of MT—particularly in circumstances in which ethical aspects of MT use are salient.

The last decades have seen much scholarly focus on translation in situations of power imbalance, shifting the spotlight from translation’s linguistic and stylistic features to the ideological factors that determine its function and politics (Hermans, 2009). More recently, a growing number of studies have discussed the changing reality of translation in a new globalized, digital era (O’Hagan, 2020). Most relevant to this article, researchers have begun to explore the practical and moral implications of using MT in situations in which it had not been employed before. Studies have described the use, or problematics of use, of MT in closed-captioning local television channels for immigrant communities (Hutchins, 2009: 18–19), in increasing accessibility for underserved groups in contexts of civic participation and public health (Bouillon et al., 2017; Nurminen and Koponen, 2020), in making institutions, such as the public library, accessible to immigrant newcomers (Bowker and Buitrago Ciro, 2015), or in pressing medical and legal circumstances (Vieira et al., 2020). As implied in these studies, using MT to address the communicative needs of immigrants and members of disadvantaged communities may have important social and moral implications.

However, as shown in recent automation studies, the actual effects of artificial intelligence on its human users are not an inevitable given, particularly in cases of social interaction. These effects do not depend only on an algorithm’s usefulness or ease of use, but are rather contingent upon human perceptions and attitudes toward algorithms, such as expectations and trust (Glikson and Woolley, 2020). The fact that MT is a major channel of communication between users who occupy a weaker position in society and are hampered by linguistic inaccessibility, on one hand, and stronger, institutionalized elements of society, on the other, provides particularly strong motivation for understanding preconceptions of MT. Attitudes toward MT technology may influence not only the basic features of the communicative act, which is already defined by unequal power relations, but also how the translated text itself (its informational, cultural, and emotional content, and its functional effectiveness) is perceived. This could also result in people taking further steps to actively adjust or post-edit the algorithmic output according to their own beliefs. Along these lines, negative bias against MT technology might diminish the extent to which stronger elements of society believe they are having an effective, meaningful interaction with MT’s disadvantaged end users, who may strongly rely on this technology for communication. Our experiment examines whether people’s different preconceptions of human translation and MT indeed lead them to evaluate differently the features of an otherwise identical translation when it is attributed to a human or a machine, in a situation of power imbalance.

Evaluating translation

Ever since the 1970s, translation studies scholars have debated, directly or by implication, the criteria by which a translation should be described and evaluated. The passing years saw no consensus emerge over the subject. Competing criteria for evaluating translation reflected, above all else, the questions researchers found to be most important (Pym, 2014). Moreover, as Andrew Chesterman notes, the “quality” of a translation is never an absolute value; it reflects subjective judgments, means different things to different actors and presupposes different perspectives, and approaches to its evaluation may take different courses (in: Pym, 2020a: 439–440). However, when considering people’s perceptions of the translation product, the diverse ways for evaluating a translation—whether the product of a human or a machine—can rather usefully complement one another, as they reflect the various dimensions that are important to the actors involved in the translation process.

One dimension of translation that has long interested translation scholars is the perceived equivalence between the translation and the source text, with reference to meaning and stylistic features (Nida, 2012). Rooted in linguistics, computer science, or literary criticism, researchers with this perspective often imply that a text has an intrinsic “meaning” which is, to a large degree, autonomous and independent of the cultural context in which it is situated (Pym, 2014: 6–23). Taking the equivalence of meaning and form between the source text and the translation as the key concept for evaluation, their studies stress the linguistic, semantic, and stylistic attributes of the two texts, and often make claims with regard to the

Born of translation studies’ “cultural turn” in the 1990s, other trends take as a point of departure the discursive and performative differences between cultures, and the inherently subjective, normative status of the abovementioned concept of “equivalence.” They stress the cultural adjustments that are seen as needed, or are actually involved, in the translation of a source text for the receiving culture’s audience. These research perspectives tend to characterize translation primarily by how its various representations are appropriated to the receiving culture’s ideologies and expectations (Lefevere, 1992). Choices in the translation may also pertain to the transfer of emotional content that is encoded in the source text, which is expressed in line with culture-specific conventions of discourse (Tabakowska, 2018; Walinski, 2018).

Another influential theoretical approach in translation studies implies as a leading criterion for evaluating translation its

The abovementioned approaches to describing and evaluating a translation are far from exhaustive. Moreover, while they are presented here separately, they should not be seen as truly exclusive of one another. When it comes to people’s subjective intuitions, the level of accuracy of textual equivalence, the ability to convey cultural and emotional content, and the functional effectiveness of achieving a given purpose, represent three related but different emphases. By incorporating corresponding ways of evaluation in our experiment, through particular questions that aim to address each of their basic features, we may therefore gain complementary perspectives and useful insight on how people perceive MT.

When a translation is attributed to an algorithm, measuring people’s evaluations of the translation’s features involves yet another important dimension. Scholars have demonstrated that the positive or negative

Intervening in the translation

The issue of the translator’s “faithfulness” to the source text has long been a source of polemic in public and academic discourse on translation. Various scholars and thinkers have debated the extent to which the translator may depart from the linguistic, cultural or ideological traits of the source text, and reshape the original message as a means to different goals: achieving greater elegance or comprehensibility (Nida, 2012), adapting to target audience conventions for commercial reasons (Pym, 2014: 117–137), or undermining social, gender, and racial hierarchies ingrained in the source text (Venuti, 2008; Von Flotow, 1991), among others. In the field of community interpreting, which deals more directly with unequal power relations on the ground such as those examined in our experiment, practitioners and scholars have historically endorsed the notion of “impartiality,” demanding that no changes be introduced to the content of the original message under any circumstances (Hale, 2007; Kotzé, 2014: 127). Their approach has largely corresponded to the prevalent norms and accepted practices in textual translation. Some recent discourse, however, has aimed to redefine the role of translators and interpreters by calling into question the possibility, or necessity, of “impartiality” (Wolf, 2012). These voices set aside the commitment to the original text, and suggest that the interpreter’s first ethical priority, particularly in asymmetrical settings of legal, community, or medical interpreting, should be the values of the community and perceived needs of the interpreted person (Ciordia, 2017: 275–281). 
Such a view echoes the work of translation ethicist Anthony Pym, who sees ethics as “concerned primarily with what particular individuals do in the immediacy of concrete situations,” and proposes that “since translation is a cross-cultural transaction, the translator’s task is one of fostering cooperation between all concerned, with the aim of achieving mutual benefit and trust” (Hermans, 2009: 96).

Looked at from an ethics perspective, then, the impact of interventionist approaches on the translation differs across cases, depending on the motives for intervention and how the involved actors choose to reframe the original text. A plethora of studies have discussed the various implications of nationalistic, conservative interventions; progressive, anti-chauvinistic interventions; or more intricate, ideologically complex interventions in translation. Whatever its ideological orientation, it is clear that the possibility of intervention in the source text should not be ignored in any discussion of translation.

Nonetheless, while interventions in translation, and the issue of the translator’s own agency in ethically charged situations, have drawn increasing attention in the research, they have not yet been examined with regard to translations produced by a machine. Our experiment aimed to examine whether people felt the need to reshape the original message and introduce significant changes to an MT product to help a disadvantaged MT user; and, if they did indeed feel this need, to try to understand which factors led to these feelings, and whether the wish to intervene in the translation was different when the translation was attributed to a human or a machine.

Research goals

Our main research goal was to understand how people’s preconceptions of MT may influence how they evaluate the MT product, and what action they may then propose with regard to the translation, in an ethically charged situation. To that end, we compared how people assessed an otherwise identical translation when it was attributed to a human translator or MT, in a scenario where a disadvantaged immigrant worker was wronged and needed to have his official complaint translated. We wished to examine whether people evaluated differently, in each condition (human translator/MT), the translation’s accuracy and reliability, its ability to convey cultural and emotional otherness, and its potential functional effectiveness in helping the plight of the immigrant in need of the translation. We wanted to assess these evaluations of the translation while taking into consideration people’s disconfirmation of prior expectations of the quality of the translation, and their having (or lacking) working experience as a professional translator. Finally, we wished to examine, in both conditions, people’s belief in the need for an interventionist approach to the translation in question.

At the same time, we wanted to see whether differences between people’s evaluations of the translation were influenced by variables other than their predispositions toward algorithms, that is, by demographic or psychological factors that could have an impact on how one perceived the scenario and subsequently the translation itself. These control variables include gender, age, previous experience with MT, level of education, fluency in English (the language of the source text), and academic/professional background (humanities, exact sciences, social sciences). They also include subjective factors specific to the scenario in question, such as the moral–political orientation to immigrant workers and their treatment by the system, represented by participants’ perspectives on the level of unjustness suffered by the immigrant in the situation; judgment of the effectiveness of the original complaint written by the immigrant; and one’s general conceptions about the legitimacy of an interventionist ideology to translation in ethically charged situations.

Method

An online experiment exposed participants to a scenario in which a translated text was attributed either to MT or to a human translator. The survey was conducted from 7 to 30 July 2020, and addressed a Hebrew-speaking population. All analyses were done using IBM SPSS 26 and JMP Pro 15.

Sample

Two hundred ninety-one participants took part in an online survey distributed among students and staff from different faculties in a large state university, and among people with professional experience in translation. Participants were recruited from across the humanities, exact sciences (including engineering), and social sciences; and from the Israel Translator Association, as well as alumni and teachers from programs of translation training.

Participants’ ages ranged from 19 to 81 (M = 41.21, SD = 16.81); 69% were female. Most participants were highly educated: 61% reported holding or studying toward a graduate degree. By field, 37% were in the exact sciences, 37% in the humanities and arts, and the rest in the social sciences. All participants were fluent in the local language (Hebrew), and 79% reported also being fluent in English. Of the participants, 88 (30%) had some professional experience as translators. Because of wrongly entered data in seven of the completed forms, seven participants were excluded, leaving a final sample of 284 participants.

Procedure and stimuli

An online questionnaire with two conditions (human translator/MT) was designed using Qualtrics software. Participation was voluntary. The questionnaire started by presenting participants with a scenario where L., a Philippine immigrant worker, was assaulted by security guards due to misidentification, and needed to submit an official complaint to request compensation for the damage he had suffered. Lacking proficiency in the local language (Hebrew), L. wrote his complaint in basic English, which then needed to be translated into Hebrew for the village officials (see Appendix 1 for the wording of the scenario and Appendix 2 for that of the complaint).

Participants were asked to report on their perspectives on the level of unjustness suffered by L. in the described situation; their perspectives on the potential effectiveness of the original complaint in securing compensation for L.; and their general perspectives on the legitimacy of an interventionist ideology on the part of the translator in such a situation.

Then, participants were randomly assigned to one of the two conditions (human translator or MT). Participants assigned to the human translator condition learned that the immigrant worker’s text was indeed translated by a professional translator approached by the village officials, who had previous experience with immigrant workers and was a member of an immigrant rights organization. Participants assigned to the MT condition learned that the human translator was unavailable, so the text was translated with “the most advanced MT software available in the market today.” Participants in both conditions were then presented with the immigrant worker’s original message alongside its Hebrew translation and were asked to evaluate the translation. Their evaluations involved three main features of the translation: its accuracy and reliability, its ability to convey cultural and emotional otherness, and its assumed functional effectiveness in helping the plight of the immigrant in need of the translation. Participants also reported on their beliefs in the need for an interventionist approach to the translation itself, and evaluated the level of positive disconfirmation of their prior expectations of the translation in light of the quality of the translation actually presented to them. We also collected data on demographics, including gender, age, level of education, previous experience with MT, and professional experience in translation.

The immigrant’s official complaint (Appendix 2) was written following consultation with a cultural mediator who works with immigrant workers in Israel, and revised according to her comments. It was also formulated to be in line with basic features of communication in Filipino culture (Scroope, 2017). The translation of the complaint provided in both conditions was identical, and was produced by Google Translate. For the translation to plausibly pass as a human translation, the original English message had to be pre-edited, prioritizing short sentences, uncomplicated syntax, and common word choices, so as to avoid grammatical errors or lexical peculiarities in the translation. These linguistic features of the official complaint made sense in our scenario, as they corresponded to the level of English proficiency of most Philippine immigrant workers in Israel. The source text was further fine-tuned according to the MT output until the translation achieved a level of idiomaticity that could pass as a human translation (confirmed by pretests among colleagues). This pre-editing was crucial, as we needed the exact same translation to be plausibly attributed to a human and to a machine. It was also important to include the original English message in the survey: this transparency enabled participants, most of whom were highly fluent in both languages, to base their evaluation of the translation on a comparison of the two texts (rather than on the translated text alone). We could thus assess their subjective predispositions toward MT while controlling for the effect of people’s proven distrust of non-transparent algorithms (Glikson and Woolley, 2020).

Measures

Dependent variables

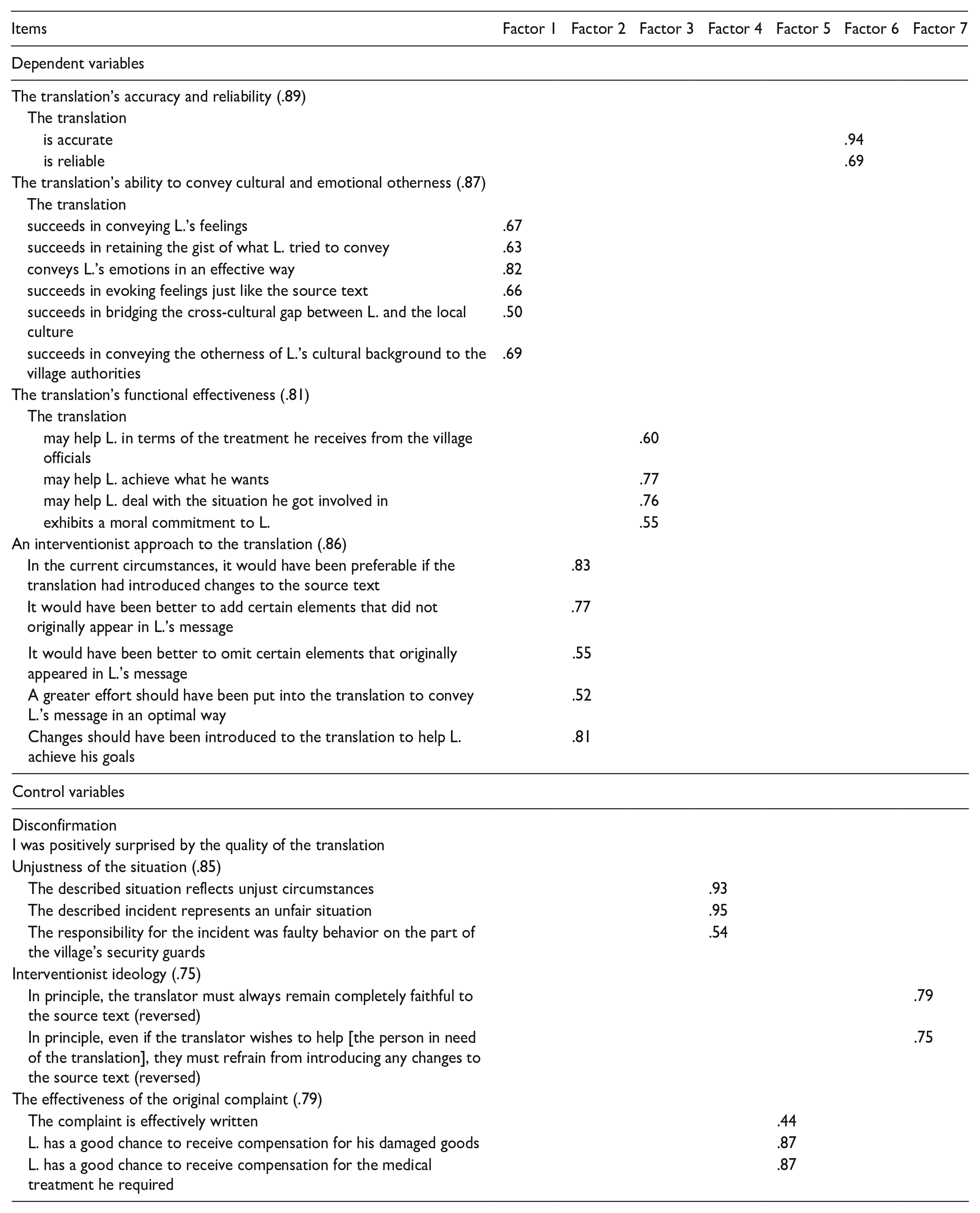

All variables were measured by asking participants to rate the extent to which they agreed with given statements regarding the described scenario and the translation, using a 7-point Likert-type scale (1 = strongly disagree; 4 = neither agree nor disagree; 7 = strongly agree). To explore the structure of our data, we conducted an exploratory factor analysis (EFA), retaining factors with an eigenvalue equal to or greater than 1.00 (Costello and Osborne, 2005). EFA revealed a seven-factor model for the seven (four dependent and three control) variables used in this study (λ1 = 7.95, λ2 = 2.53, λ3 = 2.31, λ4 = 2.09, λ5 = 1.55, λ6 = 1.31, and λ7 = 1.00), accounting for 72% of the variance (see Appendix 3 for a list of the items used and their factor loadings). The internal reliability of the variables was assessed using Cronbach’s alpha. All items of the dependent and control variables, summarized briefly below, are reported in detail in Appendix 3.
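The retention rule and the explained-variance figure can be checked with simple arithmetic. The following is a minimal sketch, not the authors' SPSS/JMP procedure: it applies the eigenvalue ≥ 1.00 rule to the seven reported eigenvalues and divides their sum by the number of items, which equals the total variance when items are standardized. The item count `N_ITEMS = 26` is an assumption (the 25 scale items summarized in this section plus the single disconfirmation item), not a figure stated in the text; under that assumption the result comes out at roughly 72%.

```python
# Eigenvalues of the seven extracted factors, as reported in the text.
REPORTED_EIGENVALUES = [7.95, 2.53, 2.31, 2.09, 1.55, 1.31, 1.00]

# ASSUMPTION (not stated in the article): 26 standardized items entered the
# EFA, so the total variance to be explained equals 26.
N_ITEMS = 26


def retained_factors(eigenvalues, threshold=1.0):
    """Keep factors whose eigenvalue is at least the threshold."""
    return [ev for ev in eigenvalues if ev >= threshold]


def explained_variance_share(eigenvalues, n_items):
    """Share of total variance explained by the given factors."""
    return sum(eigenvalues) / n_items


kept = retained_factors(REPORTED_EIGENVALUES)
share = explained_variance_share(kept, N_ITEMS)
print(f"{len(kept)} factors retained, explaining about {share:.0%} of the variance")
```

Changing `N_ITEMS` shows how sensitive the percentage is to the number of items actually entered into the analysis.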

Perspectives on the translation’s accuracy and reliability (M = 5.35, SD = 1.52) were measured using two items—one for each concept (Cronbach’s α = .89).
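For readers unfamiliar with the reliability coefficient reported for each scale, Cronbach's alpha can be sketched as follows. This is an illustrative implementation of the standard formula, α = k/(k − 1) · (1 − Σ item variances / variance of total scores); the ratings below are hypothetical and are not the study's data, and the function name `cronbach_alpha` is introduced here for illustration only.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a scale.

    items: list of item columns, each a list with one score per respondent.
    """
    k = len(items)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Sum of the sample variances of the individual items.
    item_variances = sum(variance(column) for column in items)
    # Sample variance of each respondent's total score across items.
    totals = [sum(scores) for scores in zip(*items)]
    return (k / (k - 1)) * (1 - item_variances / variance(totals))


# HYPOTHETICAL 7-point ratings from eight respondents on a two-item scale
# (one "accuracy" item, one "reliability" item); not taken from the study.
accuracy = [6, 5, 7, 3, 6, 4, 2, 7]
reliability = [6, 4, 7, 3, 5, 5, 2, 6]

alpha = cronbach_alpha([accuracy, reliability])
print(round(alpha, 2))
```

Values close to 1 indicate that the items move together and can reasonably be averaged into a single scale score.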

Perspectives on the translation’s ability to convey cultural and emotional otherness (M = 4.87, SD = 1.31) were measured using six items: four questions regarding the translation’s success in conveying the immigrant worker’s feelings and the gist and emotional content of the complaint, and two questions regarding the translation’s success in conveying the immigrant worker’s different cultural background, and in bridging the gap between the local culture and the immigrant’s (Cronbach’s α = .87).

Perspectives on the translation’s functional effectiveness (M = 4.59, SD = 1.34) in the situation at hand were measured using four items: three questions regarding the extent to which the translation is expected to help the plight of the immigrant by enabling him to receive better treatment from the village officials, and secure the compensation he requests; and one question regarding the extent to which the translation exhibits a moral commitment to the immigrant (Cronbach’s α = .81).

The wish to apply an interventionist approach (M = 3.31, SD = 1.51) to the translation in question was assessed by the extent to which participants felt that omissions from and additions to the original message should have been carried out in the translation to help L. achieve his goals. Five items were used (Cronbach’s α = .86).

Control variables

The disconfirmation of prior expectations of the translation (M = 4.58, SD = 1.96) was measured by asking participants to rate the extent to which they were positively surprised by the quality of the translation presented to them. It was crucial not to prime the participants, particularly in the MT condition, with a reference to expectations of quality in the beginning of the experiment, as this could have affected their subsequent grading of the translation’s features. Therefore, participants were asked to report on the disconfirmation (if any) of prior expectations of the quality of the translation only after they completed all of their evaluations.

Perspectives on the unjustness of the situation (M = 4.75, SD = 1.71) were assessed by participants rating how unfair and unjust the situation was, and the degree to which they felt that responsibility for the incident lay with faulty behavior on the part of the village’s security guards. Three items were used (Cronbach’s α = .85).

Beliefs in an interventionist ideology (M = 2.64, SD = 1.44) regarding translation were assessed by participants rating whether the translator’s loyalty should, in principle, lie solely with the original message or if the translator should intervene in the text to assist the immigrant worker. We used two items that measured the belief that, even if the translator wishes to help, they must refrain from introducing any changes to the source text. The items were reversed (Cronbach’s α = .75).

Perspectives on the effectiveness of the original complaint (M = 5.07, SD = 1.28) written by the immigrant worker were assessed using three items. The items asked to what extent the complaint was effectively written, and to what extent L. had a good chance of receiving compensation for his damaged goods and for the medical treatment he required (Cronbach’s α = .79).
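The reliability coefficients reported for the multi-item scales above are Cronbach’s α values, and two of the items were reverse-coded before scoring. As a minimal sketch of how such values can be computed, the Python snippet below applies the standard formula, α = k/(k − 1) × (1 − sum of item variances / variance of the summed score), to a small made-up response matrix. The response data, the function names `cronbach_alpha` and `reverse_code`, and the 1 to 7 response range are illustrative assumptions; they are not the study’s data, code, or documented scale.

```python
import numpy as np


def reverse_code(scores, low=1, high=7):
    """Reverse-code Likert responses (the 1-7 range is an assumption)."""
    return (low + high) - np.asarray(scores, dtype=float)


def cronbach_alpha(items):
    """Cronbach's alpha for a (respondents x items) response matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]                         # number of items in the scale
    item_vars = items.var(axis=0, ddof=1)      # sample variance of each item
    total_var = items.sum(axis=1).var(ddof=1)  # variance of the summed score
    return (k / (k - 1)) * (1.0 - item_vars.sum() / total_var)


# Hypothetical responses of five participants to a three-item scale.
responses = np.array([
    [6, 5, 6],
    [4, 4, 5],
    [7, 6, 7],
    [3, 3, 2],
    [5, 5, 4],
])
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")
```

Values closer to 1 indicate that the items vary together, which is the sense in which the scales above are described as internally reliable.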

Results

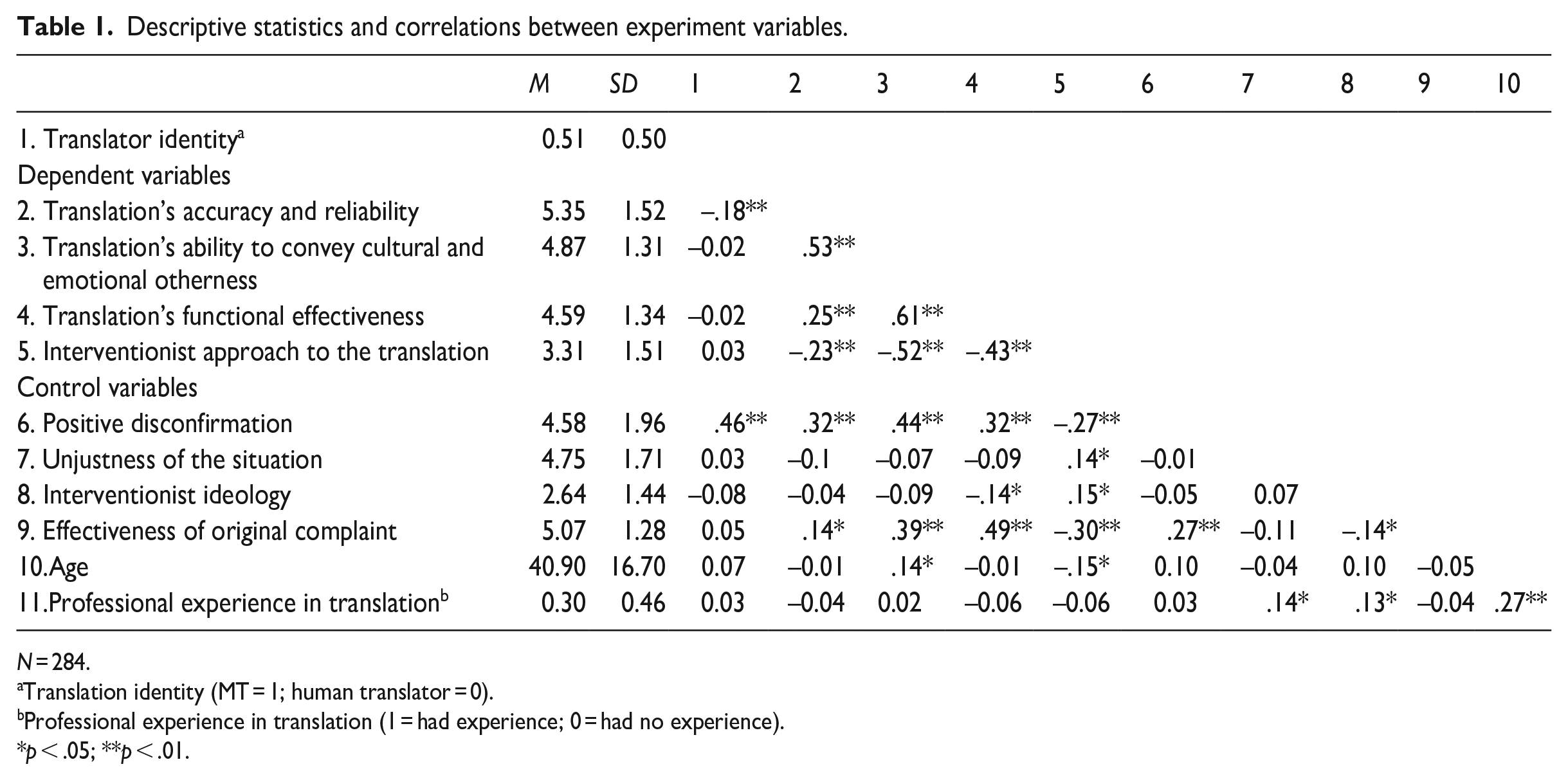

Descriptive statistics and correlations between variables can be found in Table 1.

Table 1. Descriptive statistics and correlations between experiment variables.

N = 284.

Translation identity (MT = 1; human translator = 0).

Professional experience in translation (1 = had experience; 0 = had no experience).

*p < .05; **p < .01.

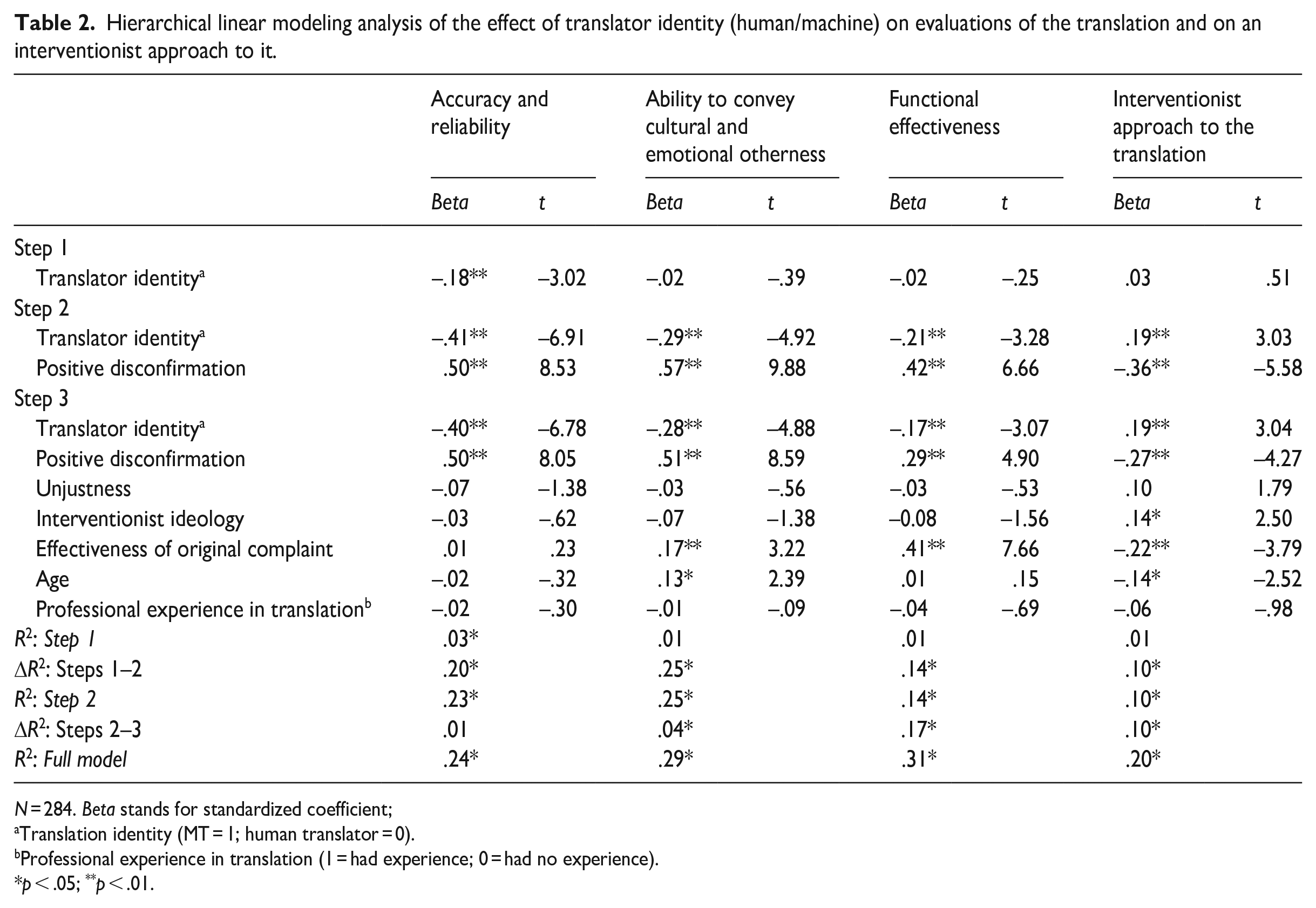

To examine how attributing the translated text to an algorithm influences participants’ perceptions of the translation, we conducted a hierarchical regression analysis (see Table 2). Our first step was to assess how preconceptions of MT influenced evaluations of the different features of the translated text, and the tendency toward an interventionist approach to the translation, without taking any of the control variables into account. Our results demonstrated that predispositions toward MT had a significant effect on people’s evaluations of the translation’s accuracy and reliability: the translation attributed to a machine was perceived as less accurate and less reliable (M = 5.08, SD = 1.60) than that attributed to a human translator (M = 5.62, SD = 1.40; F(1, 282) = 9.09, p < .005). However, at this step there was no significant effect on participants’ evaluations of the other features of the translation.

Table 2. Hierarchical linear modeling analysis of the effect of translator identity (human/machine) on evaluations of the translation and on an interventionist approach to it.

N = 284. Beta stands for standardized coefficient.

Translation identity (MT = 1; human translator = 0).

Professional experience in translation (1 = had experience; 0 = had no experience).

*p < .05; **p < .01.

Our second step was to add to our model the effect of positive disconfirmation, and take it into account as a control variable. It is worth stressing that participants who were more positively surprised by the translation quality in both conditions assigned higher grades to the features of the translation they were asked to evaluate. However, as participants in the MT condition tended to have lower expectations of the translation quality than participants in the human translator condition, the former demonstrated more positive disconfirmation (disconfirmation in MT condition: M = 5.46; SD = 1.48; in human translator condition: M = 3.67; SD = 1.97), which in turn mitigated the negative effect that attributing the translation to a machine had on their evaluations. Therefore, when the variance in positive disconfirmation was taken into account using disconfirmation as a control variable, the implications of attributing the translated text to a machine became clearer. The effect of the translator’s identity was now evident in all evaluations of the translation, which were significantly lower in the MT condition than in the human translator condition: accuracy and reliability (for MT: M = 4.32, SE = 0.11; for human translator: M = 4.86, SE = 0.11; F(2, 281) = 42.08, p < .001); ability to convey cultural and emotional otherness (for MT: M = 4.50, SE = 0.10; for human translator: M = 5.25, SE = 0.10; F(2, 281) = 48.88, p < .001); and functional effectiveness (for MT: M = 4.32, SE = 0.11; for human translator: M = 4.86, SE = 0.11; F(2, 281) = 22.19, p < .001). In contrast, the wish to intervene in the translation was significantly higher in the MT condition (for MT: M = 3.60, SE = 0.13; for human translator: M = 3.13, SE = 0.13; F(2, 281) = 15.69, p < .001). For standardized beta coefficients, see Table 2.
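The pattern described above, in which a covariate masks a group effect until it is controlled for, can be illustrated with a small simulation. The sketch below is not the study’s analysis: all data are synthetic, the effect sizes are arbitrary assumptions chosen only to mimic the direction of the reported pattern, and `ols` is a hypothetical helper. It compares the coefficient of the translator-identity dummy with and without disconfirmation in the model.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 284  # same sample size as the study; everything else is simulated

# 1 = translation attributed to MT, 0 = attributed to a human translator.
mt = rng.integers(0, 2, size=n).astype(float)

# Assumption: the MT label raises positive disconfirmation.
disconf = 3.7 + 1.8 * mt + rng.normal(0.0, 1.5, size=n)

# Assumption: the MT label lowers ratings directly, while positive
# disconfirmation raises them.
rating = 3.5 - 0.8 * mt + 0.35 * disconf + rng.normal(0.0, 1.0, size=n)


def ols(y, *predictors):
    """Least-squares coefficients; the intercept comes first."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


raw_effect = ols(rating, mt)[1]                # identity only
adjusted_effect = ols(rating, mt, disconf)[1]  # identity + disconfirmation

print(f"MT effect without control: {raw_effect:.2f}")
print(f"MT effect with disconfirmation controlled: {adjusted_effect:.2f}")
```

In this simulated setup the unadjusted coefficient is pulled toward zero because the higher disconfirmation in the MT group offsets part of the negative direct effect; once disconfirmation enters the model, the negative effect of the MT label is estimated more fully.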

Our third step was to take all control variables into account, including the participants’ age and professional experience in translation, as well as their perspectives on the level of unjustness of the situation and on the effectiveness of the immigrant worker’s original complaint. As evident from Table 2, the effect of the translator’s identity on participants’ evaluations of the translation was significant above and beyond the control variables. The level of positive disconfirmation also remained significantly related to people’s evaluations of the translation. Most factors, such as gender, level of education, fluency in English, professional background, the perceived unjustness of the situation, and, interestingly, previous experience with MT, did not have any significant effect on the evaluations of the translation.

In addition, we found that the perceived effectiveness of the immigrant worker’s original complaint influenced the evaluations of the two features of the translation that were directly tied to the immigrant’s situation in the described scenario: successfully conveying cultural otherness, and helping the plight of the immigrant (functional effectiveness). The less effectively people felt the original complaint was written, the lower they rated the translation’s success in conveying cultural otherness (b = .17, p < .01) and its functional effectiveness (b = .41, p < .001), and the higher they rated their wish to apply an interventionist approach to the translation (b = –.22, p < .01). Understandably, the control variable representing people’s belief in an interventionist ideology regarding translation in general had an effect only on the participants’ wish to intervene in the translation in question (b = .14, p < .05). Perhaps most interestingly, we found no difference in any respect between participants with previous experience in translation and non-translators: both populations exhibited exactly the same inclinations and biases in their evaluations of the translation and in their wish to intervene in it.
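The three-step procedure reported in this section enters predictors in blocks and asks how much explained variance each block adds. A minimal sketch of that logic follows, using only NumPy and synthetic data. The variable names, the simulated effect sizes, and the helper functions `r_squared` and `hierarchical_steps` are assumptions made for illustration and do not reproduce the models in Table 2.

```python
import numpy as np


def r_squared(y, X):
    """R^2 of an ordinary least-squares fit of y on X (intercept added here)."""
    X = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ coef
    return 1.0 - (resid @ resid) / ((y - y.mean()) ** 2).sum()


def hierarchical_steps(y, blocks):
    """Enter predictor blocks cumulatively; report R^2, delta R^2, and F-change."""
    n = len(y)
    X = np.empty((n, 0))
    results, prev_r2, prev_k = [], 0.0, 0
    for name, block in blocks:
        block = np.asarray(block, dtype=float).reshape(n, -1)
        X = np.column_stack([X, block])  # keep earlier blocks, add the new one
        k = X.shape[1]
        r2 = r_squared(y, X)
        # F statistic for the increase in R^2 contributed by this block.
        f_change = ((r2 - prev_r2) / (k - prev_k)) / ((1.0 - r2) / (n - k - 1))
        results.append({"step": name, "r2": r2,
                        "delta_r2": r2 - prev_r2, "f_change": f_change})
        prev_r2, prev_k = r2, k
    return results


# Synthetic data; all coefficients below are arbitrary assumptions.
rng = np.random.default_rng(1)
n = 284
mt = rng.integers(0, 2, size=n).astype(float)  # 1 = MT, 0 = human
disconf = 3.7 + 1.8 * mt + rng.normal(0.0, 1.5, size=n)
age = rng.normal(35.0, 10.0, size=n)
complaint = rng.normal(5.0, 1.3, size=n)
rating = (3.0 - 0.8 * mt + 0.35 * disconf + 0.3 * complaint
          + rng.normal(0.0, 1.0, size=n))

steps = hierarchical_steps(rating, [
    ("1: translator identity", mt),
    ("2: + disconfirmation", disconf),
    ("3: + other controls", np.column_stack([age, complaint])),
])
for s in steps:
    print(f"{s['step']}: R2={s['r2']:.3f}, dR2={s['delta_r2']:.3f}, "
          f"F-change={s['f_change']:.2f}")
```

Because each step keeps all earlier predictors, R² can only stay the same or rise from one step to the next; the F-change statistic indicates whether the added block explains more variance than would be expected by chance.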

Discussion and conclusion

A fact not often acknowledged in translation studies is the increasingly small proportion of translations done by professional translators worldwide. Indeed, it has recently been estimated that 99% of the translations produced globally are not done by professional human translators (Pym, 2020b). Much of this trend is a consequence of the rapid rise of MT. A significant aspect of this trend has been the growing use of MT in multilingual societies: people who lack linguistic accessibility increasingly find themselves in situations of power imbalance, with MT as a main medium of communication. Our findings suggest that, apart from the sociocultural and political factors at play in these situations, the role of people’s preconceptions of and existing bias against MT must also be taken into account, particularly if the MT-mediated communicative act involves moral considerations. In this respect, MT may be seen as representative of the broader phenomenon of AI-MC tools being integrated into cross-linguistic and cross-cultural communication.

The findings presented in this article open a door to research on the social implications of this trend. Our findings echo the studies that demonstrate the largely negative attitudes of professional translators to MT, but show that a negative disposition toward MT is also common among end users who are not translators (which, interestingly, does not correspond to the largely superficial, positive framing of MT in the press; see Vieira, 2020b). Non-translator end users, despite being by far the main users of the technology, have been largely overlooked in sociologically oriented research on MT. Our study shows for the first time, as far as we are aware, that the negative predisposition toward MT leads to a bias in the evaluations of various features of the MT product, and that this bias is found among translators and non-translators alike. Moreover, the different criteria suggested for evaluating MT in an ethically charged situation were found to be statistically distinct from each other, and internally reliable as dependent variables. They were all influenced by the bias against the machine, but the predisposition against MT was found to influence each of them differently in the scenario in question. In other words, we identified and statistically established a set of factors that may be considered a point of departure for the study of attitudes to MT in other social situations of communication.

Our findings suggest new directions of empirical research on evaluations of MT in other ethically charged contexts. The translation’s accuracy and reliability, its ability to convey cultural otherness, and its functional effectiveness in helping the person in need of the translation, may be perceived differently in different situational contexts. People’s beliefs in an interventionist approach to MT also require further examination in this respect. In our scenario, people wished to introduce changes to an MT product more than to a human translation as a direct consequence of their (bias-induced) lower evaluations of MT. It would be interesting to see whether MT-mediated communication in other scenarios also “motivates” an interventionist approach to the original message, and to try to better understand the implications of these interventions in the translation in future research.

Another important point raised by our findings has to do with the shifting expectations of MT, and how these should be taken into account in the assessment of factors involved in people’s perceptions of AI-MC more generally. Our findings suggest that we should not consider separately how people’s bias toward AI-MC manifests in their evaluations, and how their positive disconfirmation of prior expectations influences their evaluations. Rather, in a world in which technology constantly changes and people’s expectations change with it, a sound understanding of people’s perceptions of AI-MC requires taking both of these factors into account. The positive disconfirmation of prior expectations of lower quality from the MT product and the negative bias against MT were shown to jointly determine the eventual outcome of people’s evaluations of the MT product.

The ever-increasing volume of communication mediated by MT puts the meaning of our findings into sharp relief. It is clear that apart from the “objective” linguistic quality of MT, people’s predispositions toward MT may influence basic features of the ethically charged communicative acts it mediates. Future research is still needed to determine if distrust in MT may lead to overly negative perceptions of the informational, cultural, and emotional content of the translation, and, by extension, even of the users of the MT product themselves—some of whom are socially disadvantaged and strongly depend on it. What is clear, however, is that the bias against MT, demonstrated by translators and non-translator users alike, may have broad implications for intercultural communication worldwide. We need to understand it better to grasp the dramatic shifts that are occurring globally as a result of MT-mediated communication across cultures and social positions.

Appendix 1

Appendix 2

Appendix 3. List of items used for each variable and their factor loading.

| Items | Factor 1 | Factor 2 | Factor 3 | Factor 4 | Factor 5 | Factor 6 | Factor 7 |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Dependent variables | | | | | | | |
| The translation’s accuracy and reliability (.89) | | | | | | | |
| The translation | | | | | | | |
| is accurate | .94 | | | | | | |
| is reliable | .69 | | | | | | |
| The translation’s ability to convey cultural and emotional otherness (.87) | | | | | | | |
| The translation | | | | | | | |
| succeeds in conveying L.’s feelings | .67 | | | | | | |
| succeeds in retaining the gist of what L. tried to convey | .63 | | | | | | |
| conveys L.’s emotions in an effective way | .82 | | | | | | |
| succeeds in evoking feelings just like the source text | .66 | | | | | | |
| succeeds in bridging the cross-cultural gap between L. and the local culture | .50 | | | | | | |
| succeeds in conveying the otherness of L.’s cultural background to the village authorities | .69 | | | | | | |
| The translation’s functional effectiveness (.81) | | | | | | | |
| The translation | | | | | | | |
| may help L. in terms of the treatment he receives from the village officials | .60 | | | | | | |
| may help L. achieve what he wants | .77 | | | | | | |
| may help L. deal with the situation he got involved in | .76 | | | | | | |
| exhibits a moral commitment to L. | .55 | | | | | | |
| An interventionist approach to the translation (.86) | | | | | | | |
| In the current circumstances, it would have been preferable if the translation had introduced changes to the source text | .83 | | | | | | |
| It would have been better to add certain elements that did not originally appear in L.’s message | .77 | | | | | | |
| It would have been better to omit certain elements that originally appeared in L.’s message | .55 | | | | | | |
| A greater effort should have been put into the translation to convey L.’s message in an optimal way | .52 | | | | | | |
| Changes should have been introduced to the translation to help L. achieve his goals | .81 | | | | | | |
| Control variables | | | | | | | |
| Disconfirmation | | | | | | | |
| Unjustness of the situation (.85) | | | | | | | |
| The described situation reflects unjust circumstances | .93 | | | | | | |
| The described incident represents an unfair situation | .95 | | | | | | |
| The responsibility for the incident was faulty behavior on the part of the village’s security guards | .54 | | | | | | |
| Interventionist ideology (.75) | | | | | | | |
| In principle, the translator must always remain completely faithful to the source text (reversed) | .79 | | | | | | |
| In principle, even if the translator wishes to help [the person in need of the translation], they must refrain from introducing any changes to the source text (reversed) | .75 | | | | | | |
| The effectiveness of the original complaint (.79) | | | | | | | |
| The complaint is effectively written | .44 | | | | | | |
| L. has a good chance to receive compensation for his damaged goods | .87 | | | | | | |
| L. has a good chance to receive compensation for the medical treatment he required | .87 | | | | | | |

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The authors received support for the research and publication of this article from the Bar-Ilan University Rector Grant for Interdisciplinary Research Groups.