Abstract

This article sheds light on mechanisms by which online social interactions contribute to instigating far-right political violence. It presents an analysis of how violence against ethnic and religious minorities is motivated and legitimized in social media, as well as the situational conditions for such violent rhetoric. Online violent rhetoric in a Swedish public far-right social media discussion group was studied using a combination of machine-learning tools and qualitative analysis. The analysis shows that violent rhetoric primarily occurs in the context of narratives about criminals and crimes with (imagined) immigrant perpetrators and often particularly vulnerable victims, linked to a social problem definition of a corrupt and failing state as well as the alleged need to deport immigrants. The use of dehumanizing and infrahumanizing expressions both legitimizes political violence and spurs negative emotions that may increase motivation for violent action.

Keywords

Introduction

The far right is on the rise. This trend is visible in electoral successes of parliamentary parties, changes in popular discourse and emerging protest mobilizations. It also finds a tangible expression in right-wing violent hate crimes, defined as ‘physical assault or killing of individuals on the basis of a bias towards the victim’s identity’ (Koehler, 2016: 62), henceforth termed far-right violence for short. Contemporary examples of such violence range from arson attacks against refugee housing facilities to high-profile mass shootings – such as Utøya in Norway in 2011, Christchurch in New Zealand in 2019 and El Paso in Texas in 2019. Such violence is also present as a latent threat through the emergence of vigilante groups with a far-right outlook in several countries (Bjørgo and Mareš, 2019).

Previous research has established that the discursive environment provided by mass media is an important factor for explaining variation in levels of far-right violence. Recent studies have also highlighted the importance of online social media in this regard (Müller and Schwarz, 2020; Wahlström and Törnberg, 2019). However, extant studies tend not to be very precise about the media content and online interactions that lead to far-right violence. Arguably, not all activities on this type of forum have equal potential to contribute to violent actions. A better understanding of the impact of social media therefore requires identifying and analysing statements that motivate violence, legitimize/support violence and/or express the intention of using violence for political purposes, as well as the situational context in which they tend to occur. By studying such micro-dynamics, we aim to unpack mechanisms behind correlations between the social media activity and violent actions demonstrated by authors such as Müller and Schwarz (2020).

The overall purpose of this article is therefore to study how far-right political violence is promoted and instigated in social media, through an analysis of violent and dehumanizing rhetoric in an online far-right milieu. The study is guided by the following two research questions: (1) How is violence motivated and legitimized in social media discussion threads? (2) In what discursive and interactional contexts in these threads does violent rhetoric occur? For our case study, we use the Swedish public far-right Facebook group Stand up for Sweden. With its approximately 170,000 members in 2018, it was possibly the largest political Facebook group in Sweden around the time data were collected for this study. 1 As one of the main arenas of the Swedish far-right counter-public (Törnberg and Wahlström, 2018), it may provide a support context for potentially violent perpetrators beyond the dedicated militant organizations. We argue that it is of particular interest to study the prevalence and dynamic of violent rhetoric in large and public online groups and noticeboards, as opposed to smaller, closed and more explicitly radical communities, as the former tend to aspire to broader acceptance and impact beyond a limited hard-core activist milieu.

In the next section, we outline our case and its context, followed by a contextualization of this study in relation to previous research. We then present the theoretical framework of our article, arguing that right-wing violence is promoted through motivational frames and legitimizing accounts expressed and negotiated online. After outlining our mixed-methods approach – combining machine-learning and qualitative analysis – we present our results, identifying the typical characteristics of the discussion threads where violent rhetoric occurs, as well as the motivation for and legitimization of violence in this rhetoric.

Background – the far right and right-wing violent hate crimes in Sweden

We follow Mudde (2019) in using the term far right to speak about both the reformist radical-right – which is against aspects of liberal democracy, such as minority rights and free speech – and the extreme right – which is fundamentally antidemocratic even in its most basic sense. Far-right parties and extra-parliamentary far-right organizations and activists are interlinked parts of a broader far-right milieu dominated by various forms of nativist nationalism (Castelli Gattinara and Pirro, 2019). They argue that people belong to different distinct ethnic cultures that must be kept separate, and share negative sentiments concerning perceived threats to the nation and its traditions, including immigration, Islam and feminism (Caiani and Císař, 2018; Elgenius and Rydgren, 2019).

In recent decades, Sweden has had relatively high levels of far-right violence (Ravndal, 2018) and two waves of radical-right political representation on the national level – in the early 1990s and in the 2010s onwards. In the 1991 parliamentary election, the right-wing populist party Ny Demokrati (in English, ‘New Democracy’), received 7% of the votes, only to drop out for good in 1994. However, around that time, militant activism peaked (Bjørgo, 1997; Lööw, 1995); refugee centres were attacked and set on fire, and individuals perceived to belong to ethnic and sexual minorities, or the political opposition were physically assaulted, sometimes killed.

The Swedish far-right movement of the 1980s and 1990s never became a mass movement. However, one of its campaign groups Bevara Sverige Svenskt (in English, ‘Keep Sweden Swedish’), with links to neo-Nazi groups and individuals, came to spawn the party Sverigedemokraterna (SD; in English, ‘Sweden Democrats’). During the early 2000s, it grew in support until it entered the Swedish Parliament in 2010 with 6% of the votes (Elgenius and Rydgren, 2019). In the next parliamentary election, in 2014, it received over 13% of votes, and in the most recent election in September 2018, it became the third largest party with over 17%. 2 In the 2010s, Sweden also witnessed increased militant activities by a small but dedicated and violent group, the Nordiska Motståndsrörelsen (NMR; in English, ‘Nordic Resistance Movement’). During a short period, several towns and cities also faced local manifestations of the international Soldiers of Odin vigilante movement.

While the far right in Sweden has so far been unable to mobilize mass protests, it has been successful in mobilizing online. Several far-right and right-wing populist alternative media sites have appeared, and discussion groups with anti-Muslim, anti-immigrant and outright racist content attract many followers. The largest Swedish social media group of this kind – which provides the material for our analysis – is the Facebook group Stand up for Sweden (in Swedish, ‘Stå upp för Sverige’; see Ekman, 2019; Merrill and Åkerlund, 2018; Törnberg and Wahlström, 2018). It started in February 2017 in support of a police officer who had written a blog post depicting street crime in Sweden as essentially an immigrant problem. The police officer publicly distanced himself from the group because of its Islamophobic and xenophobic content. On 25 June 2019, the main moderator of the group received a suspended prison sentence for not removing posts expressing incitement to racial hatred (Eskilstuna District Court, 2019).

Online hate and far-right violence

According to an influential study by Koopmans and Olzak (2004), variations in the frequency of violent political actions can partly be explained by different discursive opportunities provided by mass media. They argue that the incidence of political violence is dependent on the extent to which it is likely to gain visibility, reactions (resonance) and legitimacy in the public sphere. In a broader sense, discursive opportunities capture the extent to which the discursive climate provides support for a movement’s activities. Indirectly, entrenched ideas about citizenship in different countries may also provide varying degrees of resonance for far-right claims making (Giugni et al., 2005).

Since the theory about discursive opportunities was originally presented, alternative online media (and in particular, social media) have reshaped the media landscape in fundamental ways that have brought about novel means of direct interaction among people scattered over large geographical areas. This development includes the far right (Caiani and Kröll, 2015). Recent empirical studies (Müller and Schwarz, 2020) also support the view that in general terms, activities in far-right social media explain variations in levels of far-right violence. They demonstrate geographic and temporal correlations between violent far-right incidents and anti-immigrant comments on the social media pages of the radical-right party Alternative für Deutschland (AfD; in English, ‘Alternative for Germany’). Therefore, research must acknowledge the potentially crucial role played by social media and alternative online media as a discursive and social context for radical-right violence (Wahlström and Törnberg, 2019).

On an individual level, Pauwels and Schils (2016) demonstrate that exposure to extremist content in online new social media increased the propensity for participating in violent political actions. The mass media, and arguably social media, also appear to support various forms of contagion effects in relation to political violence (e.g. Chorev, 2019; Koch, 2018). Similarly, because reactions from bystanders also appear to affect the likelihood of further actions (Braun and Koopmans, 2014), reactions in online social media could also be expected to have such an effect.

Several studies have documented and analysed the prevalence of far-right discourse on forums and noticeboards, including Facebook (see for example, Burke and Goodman, 2012; Ekman, 2019; Malmqvist, 2015). Other studies have shown how social media provide means for participants in the far-right milieu to network (Veilleux-Lepage and Archambault, 2019), spread propaganda (Farkas et al., 2018), and radicalize (Scrivens et al., 2020). However, this study aims to address a lacuna by bridging this field of studies with studies on political violence by exploring how and in what context support for violence is articulated.

Motivation and legitimization of political violence

To capture theoretically how online discourse and interactions contribute to acts of far-right violence, we have chosen to draw on two separate strands of theory: social movement theory, with its long cumulative tradition of explaining political action; and criminology, with its prominence in explanations of violence. Generally speaking, these two fields of research address the following two conundrums: why are people active in politics even though it is often more convenient not to be so, and why do people commit criminal acts even though they are widely regarded as immoral and/or illegal? In other words, a major concern for explanations of political mobilization concerns motivation, whereas a major concern for explanations of violent actions concerns legitimization. If violent far-right activists need both to be motivated and to perceive violence as legitimate, online discourse may promote political violence in relation to either or both of these aspects.

Regarding the political and often collective aspects of political violence, potential activists need to become convinced that a social problem is important, decide what needs to be done about it, and believe that individual participation in collective mobilization is important and urgent. The established theoretical term for this is collective action framing: an ongoing process in which movement actors negotiate and propagate definitions of a social problem, such as its character, causes and solutions (e.g. Benford and Snow, 2000).

Crucially, frames are not just abstract ideas. They convince and mobilize people by fuelling emotions and moral sentiments (Jasper, 2011). Mobilization has also been shown to occur in interactions with peers, such as family, friends or work colleagues. People are rarely mobilized on their own, solely by exposure to a powerful mobilizing frame. This is especially true in relation to high-risk activism (McAdam, 1986), where support from friends is fundamental to overcoming fear. However, people may be more prone to being mobilized on their own, or at least without prior connections to a movement, when they are exposed to what Jasper and Poulsen (1995) call moral shocks: events or states of affairs that are depicted in such a way as to give rise to strong emotions and moral condemnation. Jacobsson and Lindblom (2016) argue that moral shocks are not only a mechanism for recruiting new movement participants, but are also deliberately induced in movements to sustain commitment and motivation. In their study, animal rights activists are involved in moral micro-shocking by exposing themselves to material depicting the ill-treatment of animals.

However, potential participants in violent political actions need to convince themselves and their peers that the action is not only politically important but also morally legitimate. A criminological starting point for understanding how individuals – depending on their interactions with others – learn techniques and legitimizations (as well as motives) for norm-breaking actions is social learning theory (Akers, 2017). According to this framework, predispositions to commit certain types of crimes are affected by learning processes whereby people acquire definitions – various learnt attitudes, motivations, rationalizations and justifications – conducive to criminal actions. The social aspect of learning is captured by the concept of differential association, meaning direct or indirect interactions with people who participate in or promote certain kinds of norms and behaviour, including family and close friends as well as ‘virtual peer groups’ (Warr, 2002).

Social learning theory arguably has wide applicability to a broad range of criminal behaviours, including political violence (Akers and Silverman, 2004; Pauwels and Schils, 2016). Participation in a far-right online counter-public can in itself be regarded as an example of differential association, through which definitions supporting political violence are disseminated. Such definitions include collective action frames that identify a problem and motivate action. However, as noted earlier, to legitimize violent actions, participants in the online interactions must collectively establish either sub-cultural norms that frame anti-immigrant violence as morally right or techniques of neutralization that help potential perpetrators situationally circumvent established norms against using violence (Colvin and Pisoiu, 2020). According to Sykes and Matza (1957), there are five generic types of techniques of neutralization to make norm-breaking acts possible: denial of responsibility (why one is not fully – or at all – responsible for the act), denial of injury (why the act does not imply serious harm), denial of the victim (framing the victim of an act as irrelevant or even deserving), condemnation of the condemners (criticizing actors who express moral concerns over one’s actions) and appeal to higher loyalties (higher principles or affiliations that override ordinary moral/normative considerations).

While all the above-mentioned techniques of neutralization may contribute to legitimizing political violence, ‘denial of the victim’ deserves some special attention in this context. An extensive body of research demonstrates the importance of one particular form of such denial for both accepting and perpetrating violent acts: dehumanization (e.g. Bandura, 1999; Smith, 2011). This involves denying or downplaying aspects of an individual’s or group’s humanity (Haslam and Loughnan, 2014). (In the case of ‘mere’ downplaying of humanity, some researchers speak of infrahumanization.) A typical manifestation of dehumanization is the use of animal metaphors in reference to a specific group, such as ‘vermin’, ‘pigs’ or ‘apes’. According to an influential perspective on dehumanization, it refers to a failure to see another person as having a mind (Harris and Fiske, 2006) and/or to perceiving that person as lacking features typically distinguishing humans from animals (e.g. rationality, intelligence) or non-living objects (e.g. emotions, individuality; Haslam, 2006). Rai et al. (2017) argue that more strict expressions of dehumanization – involving denial of another’s agency or capacity for experiencing, and moral emotions – are actually only connected with support for instrumental violence (violence as a means towards an end) and not with moral violence (acts where inflicting harm is a goal in itself, for example, for retribution). However, Smith (2016) argues that dehumanization is best conceived as regarding others as (outwardly) human and non-human (inside) at the same time, thereby perceiving them as unclean or as monsters (see Douglas, 2002 [1966]). We regard the above different senses of dehumanization as complementary for explaining far-right violence. Whereas dehumanization as conceived by authors such as Rai et al. (2017) has the effect of making violence against the dehumanized people at least permissible, dehumanization in Smith’s (2016) sense also makes violence against those perceived as unclean seem desirable.

Methodology and research design

Having established how political violence may be instigated by certain forms of online discourse, we move on to our empirical investigation of this form of rhetoric. We applied a mixed-methods approach to data gathered from the Stand up for Sweden Facebook group, described in the background section, combining various large-scale machine-learning techniques with in-depth qualitative analysis. This research design represents a type of inductive triangulation that combines an overview of the structure and content of a large corpus with qualitative close reading to identify and explore discursive patterns. Rather than employing these methods as separate and independent steps, the research process was iterative. Continuously moving back and forth between the quantitative and qualitative methods, in each step we validated prior results and interpretations while informing subsequent analytical choices.

The research data were accessed by scraping the group using custom-made web crawlers, then anonymized and stored in a local database to facilitate further analysis. 3 The corpus consists of a total of 86,108 posts, 2,989,299 top-level comments and 11,371,421 likes of posts, and covers the period from the creation of the group on 5 February 2017 until 31 March 2018. During the data collection phase, Facebook changed its policy, restricting application programming interface (API) access to postings in public groups to group administrators only. This occurred after we had retrieved the posts, top-level comments and likes of top posts, but before we could collect lower level comments and reactions to comments. However, lower level comments – which are rather uncommon in this group – were included in the comment threads manually downloaded for qualitative analysis.

Following the theoretical discussion earlier, we explicitly distinguish between dehumanizing and violent rhetoric. This enabled us to study the role of dehumanizing rhetoric in relation to more explicit calls for violent acts. To capture the usage of violent rhetoric in the group, a list of keywords was used to search for especially violent comment threads, which we analysed in order to revise the original list. We only included words that were exclusively found in explicit calls for violence. Our strategy was to include as many relevant words as possible, while at the same time excluding words that tend to produce ‘false positives’: for example, comments that do not prescribe violence but merely describe violent acts performed by others. Such excluded words include some common violent words such as ‘kill’ and ‘murder’. There is still a risk that our keywords captured some discussions that criticize or merely describe violent acts, but no such cases were detected in the qualitative analysis. The dehumanizing/infrahumanizing words were similarly selected with a clear priority of minimizing false positives, while acknowledging that many instances of dehumanizing and infrahumanizing expressions were not included in the count. In the quantitative analysis, the impact of occasional non-systematic errors and false positives is reduced by the large size of the corpus.

The selected violent words: flogging| flog| beating| burn down| burn them| burn it| set fire to| bash| tie up| castrate| shoot in the head| ball tap| scrag| swing| execute. The list of dehumanizing words is: rat(s)| parasite| pig(s)| swine(s)| carrion| wretch| scum| dregs| refuse| beast| vermin| rabble| monkey| barbarian| bastard| amoeba.

These keywords were used to create sub-corpora consisting of threads that contain different levels of violent and dehumanizing rhetoric (henceforth, VD rhetoric). Initially, we employed quantitative analysis of a large sample to investigate the discursive context in which VD rhetoric is used in the group. In order to do this, a large sub-corpus was created, consisting of threads with a relatively high frequency of such rhetoric: ⩾ 3 violent words and/or ⩾ 10 dehumanizing words per thread. The ‘violent’ corpus consisted of 407 threads with an average length of 521 comments (in total, 212,613 posts and comments). The number of posts may not look very high, but because of our restrictive operationalization, we expect the identified posts to be just the tip of the iceberg of the actual numbers of posts expressing some form of violent or dehumanizing rhetoric. In addition, a comparable ‘non-violent’ sub-corpus was constructed consisting of all threads with no identified violent comments, each comprising between 410 and 610 comments, which resulted in 481 threads with an average length of 488 comments (in total, 235,216 posts and comments).
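The thresholding step described above can be illustrated with a minimal sketch. It assumes that each thread is available as a list of comment strings and uses only small subsets of the English glosses listed earlier; the study itself matched Swedish terms, and the function names here are hypothetical rather than the authors' implementation.

```python
import re

# Illustrative subsets of the English glosses listed above. The study matched
# Swedish terms, so these lists are assumptions made for this sketch only.
VIOLENT = ["flog", "burn down", "set fire to", "castrate", "execute"]
DEHUMANIZING = ["rat", "rats", "parasite", "vermin", "scum"]


def compile_keywords(keywords):
    """Build one case-insensitive pattern matching whole words or phrases."""
    # Longest alternatives first, so multi-word phrases are tried before
    # shorter entries.
    ordered = sorted(keywords, key=len, reverse=True)
    alternation = "|".join(re.escape(k) for k in ordered)
    return re.compile(r"\b(?:" + alternation + r")\b", re.IGNORECASE)


VIOLENT_RE = compile_keywords(VIOLENT)
DEHUMANIZING_RE = compile_keywords(DEHUMANIZING)


def count_hits(pattern, comments):
    """Count keyword occurrences across all comments in one thread."""
    return sum(len(pattern.findall(text)) for text in comments)


def is_vd_thread(comments, min_violent=3, min_dehumanizing=10):
    """Apply the thresholds from the text: >= 3 violent and/or >= 10 dehumanizing."""
    violent = count_hits(VIOLENT_RE, comments)
    dehumanizing = count_hits(DEHUMANIZING_RE, comments)
    return violent >= min_violent or dehumanizing >= min_dehumanizing
```

Whole-word matching is one way of limiting false positives (for example, ‘rate’ does not count as ‘rat’), in line with the restrictive operationalization described above. How inflected forms were handled in the original analysis is not specified here.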

Unsupervised topic modelling was then employed on both sub-corpora combined. 4 Topic modelling is a catch-all term for a collection of algorithms that uncover the hidden thematic structure in document collections by revealing recurring clusters of co-occurring words. They have the advantages of producing systematic overviews of large datasets, increasing replicability, and enabling detection of small but systematic tendencies that may not be discoverable in smaller corpora (Jacobs and Tschötschel, 2019). We used the currently most common algorithm: Latent Dirichlet allocation (LDA; Blei et al., 2003). The dataset was lemmatized 5 to reduce inflectional forms to a common base form, and commonly occurring stop words (both Swedish and English) were removed (including numbers, punctuation and separators). 6 Moreover, pronouns (such as ‘he’, ‘she’, ‘her’, ‘we’ and ‘them’) were classified as stop words because these words otherwise tend to dominate many topics. However, because these words may still carry significant meaning, they were included in a separate step of the analysis.

The number of topics was set to 15 in the model. The appropriate number of topics depends on several factors, such as the size of the corpus and the purpose of the analysis. Typically, a lower number of topics tends to produce more general topics, while high numbers provide more specific – but potentially fragmented – topics. Following standard procedure, the number of topics was decided using a manual trial-and-error approach to evaluate the coherency and interpretability of the topics. This was also supported by statistical metrics, according to which 15–16 topics resulted in the best performance. 7 Besides varying the number of topics, we applied various additional measures to test the robustness of the model, such as varying the strictness of the stop words list and using different types of tools for lemmatization and stemming. These models identified similar topics, which supports the reliability of our analysis. The name and description of each topic were decided through surface reading of the 50 most representative words, together with a close reading of the 10–20 threads with the highest percentage of that topic.

To make sure that the quantitative findings were correctly interpreted and to better capture how violence is motivated and legitimized, we conducted an in-depth qualitative analysis of a smaller sample of the threads that contained the highest frequency of violent rhetoric (⩾ 4 of the violent keywords), resulting in 15 threads containing 7,028 comments in addition to their top posts. 8 Our assumption was that this sample represents the kind of top posts most likely to evoke violent rhetoric, and comments among which one would find typical examples of the latter, as well as articulations of frames and legitimizations that support violent actions. This sub-corpus was analysed using the software ATLAS.ti, relying on theory-guided coding (looking specifically for, for example, techniques of neutralization) combined with inductive open coding (Charmaz, 2006) to maintain sensitivity to unexpected themes.

The research project has been assessed and approved by the Gothenburg Regional Ethical Review Board. Because of the considerable size of the group, and the fact that all content is published publicly and is frequently discussed in mass media, we regard the group as a public domain that does not require individual consent for (anonymous) inclusion in research, based on the ethical guidelines for Internet research provided by the Association of Internet Researchers (franzke et al., 2020) and by the British Sociological Association (BSA, 2017). To ensure both anonymity for the users and safety for the researchers, user names and other highly identifiable information were deleted when collecting the corpus. Individual quotes are translations from Swedish to English and were modified to make it difficult to trace them back to any individual user.

Patterns in violent and dehumanizing rhetoric

The use of VD rhetoric in the group remained relatively constant over the studied period, but appeared to be affected by certain external events. For instance, a peak in violent rhetoric in August 2017 coincided with a protest by Afghan youths in Stockholm against deportations to Afghanistan. This event evoked indignant and often violent discussions within the Facebook group, which became a platform for organizing a counter-demonstration on 19 August (see Wahlström and Törnberg, 2019).

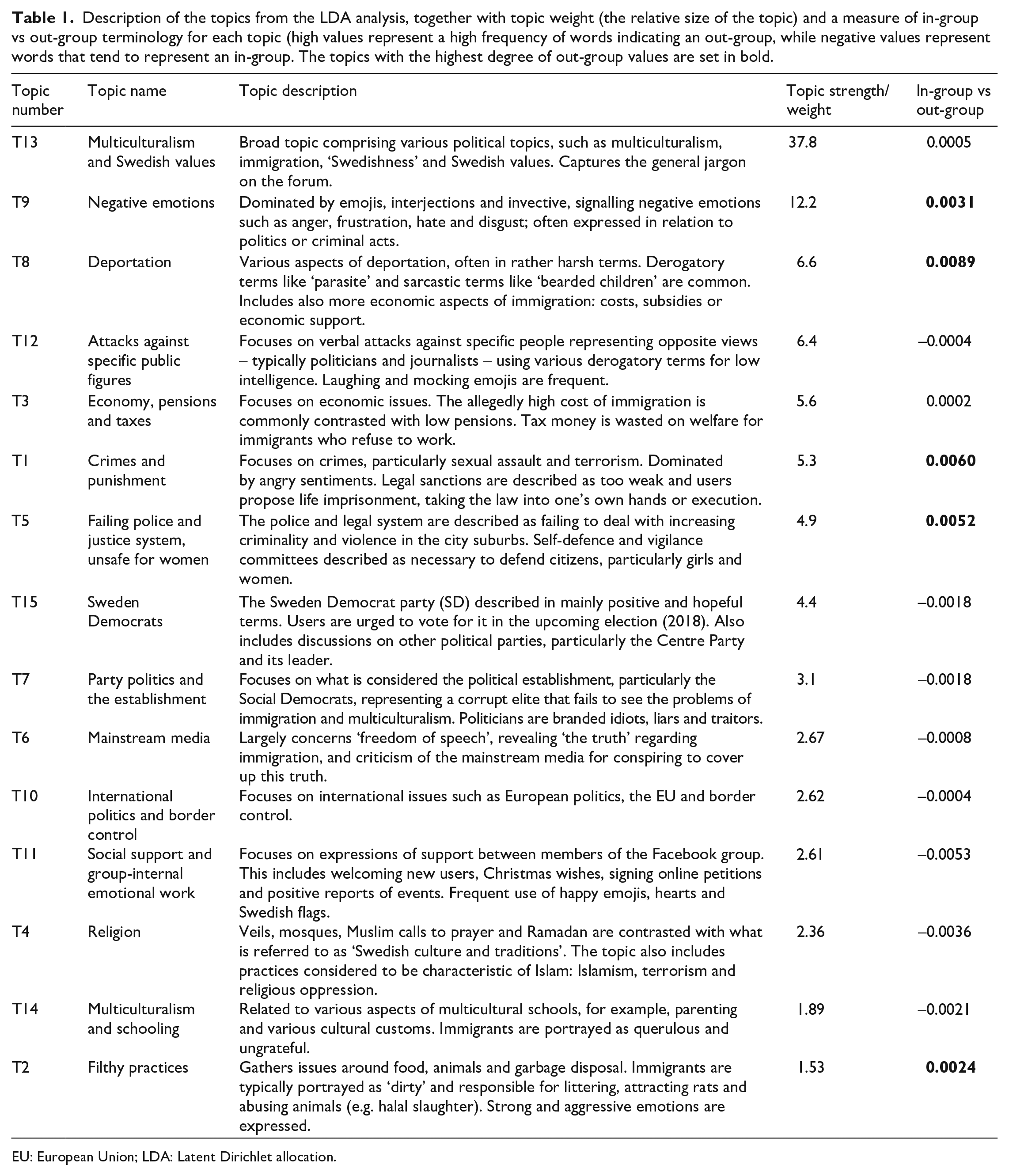

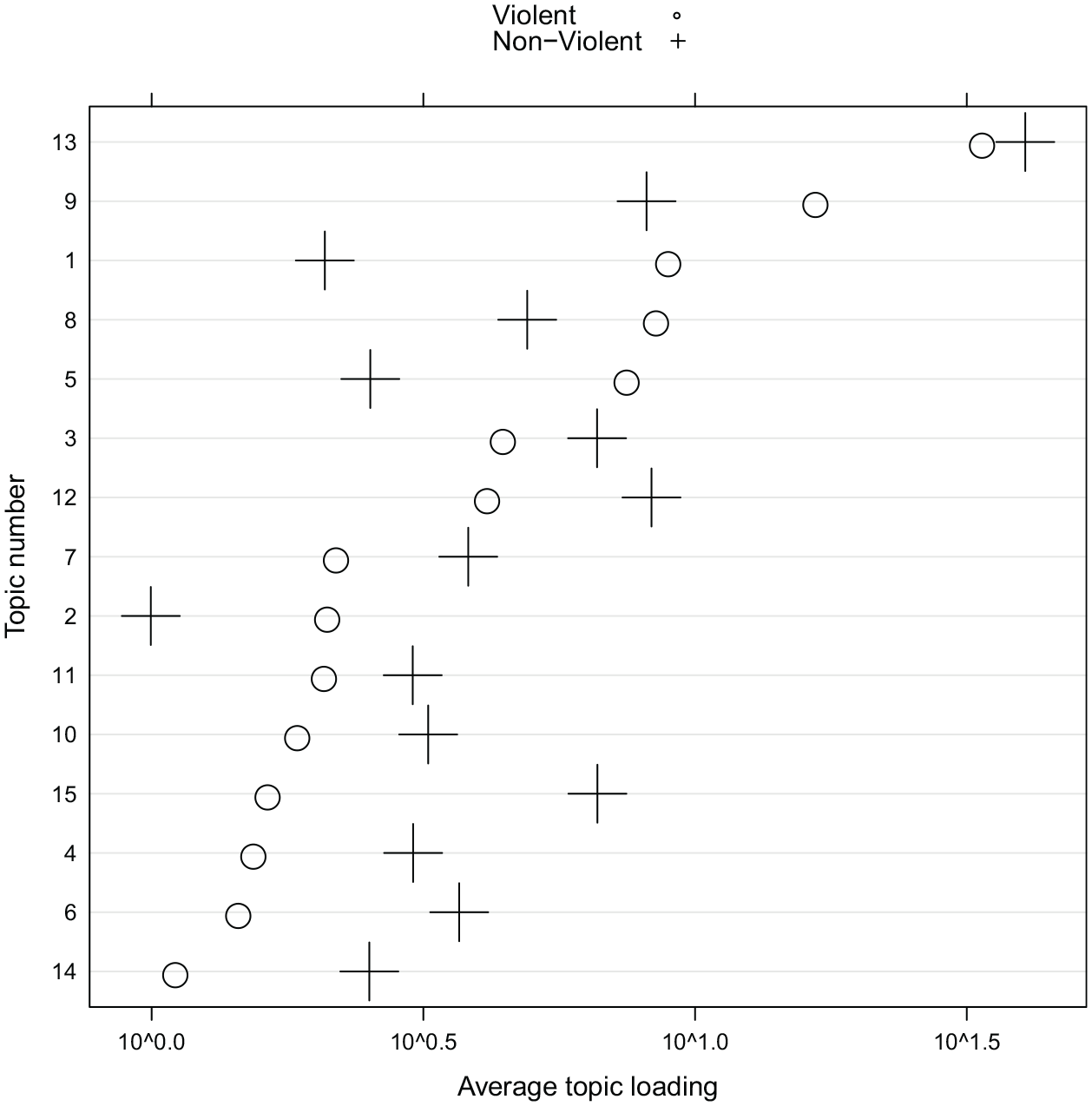

Table 1 below shows an overview of all 15 topics from the LDA analysis, sorted according to their overall weight in the corpus. The topic weight indicates each topic’s distribution over the entire dataset, and the in-group-versus-out-group measure indicates the extent to which the topic is dominated by words that represent in-group (e.g. we, us) versus out-group words (e.g. they, them, these). Figure 1 shows the extent to which each topic is dominated by VD terminology. We represent this by creating two scores for each topic based on the average loadings of the topics in documents in each corpus (represented by the X-axis).

Table 1. Description of the topics from the LDA analysis, together with topic weight (the relative size of the topic) and a measure of in-group vs out-group terminology for each topic (high values represent a high frequency of words indicating an out-group, while negative values represent words that tend to represent an in-group). The topics with the highest degree of out-group values are set in bold.

EU: European Union; LDA: Latent Dirichlet allocation.

Figure 1. Topic connection to documents respectively classified as violent or non-violent. Each document is a mixture of topics, and the figure shows the average proportion of each topic in the two corpora. The Y-axis shows topic number and the X-axis shows the average loadings of the topics to documents in each corpus. The heavy dominance of topic 13 motivated a logarithmic transformation of the X-axis.
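The two scores per topic can be sketched as simple averages of document-topic proportions, computed separately for each sub-corpus. The toy proportions below are hypothetical and the function name is an assumption; the sketch only illustrates the averaging, not the authors' actual pipeline.

```python
def mean_topic_proportions(doc_topic_rows):
    """Average each topic's proportion over the documents of one corpus."""
    if not doc_topic_rows:
        raise ValueError("corpus has no documents")
    n_topics = len(doc_topic_rows[0])
    totals = [0.0] * n_topics
    for row in doc_topic_rows:
        for k, share in enumerate(row):
            totals[k] += share
    return [total / len(doc_topic_rows) for total in totals]


# Hypothetical document-topic proportions (each row sums to 1), three topics.
violent_docs = [[0.6, 0.3, 0.1], [0.4, 0.5, 0.1]]
non_violent_docs = [[0.2, 0.2, 0.6], [0.2, 0.4, 0.4]]

violent_means = mean_topic_proportions(violent_docs)
non_violent_means = mean_topic_proportions(non_violent_docs)
```

Comparing the two averages topic by topic indicates which topics are relatively more prominent in the violent sub-corpus. When one topic dominates both corpora, as topic 13 does here, plotting the averages on a logarithmic axis keeps the smaller topics readable.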

The results of the topic modelling provide an overview of salient themes and discussions in the group. The largest topic in both corpora is topic 13, which corresponds to the jargon dominating the forum as a whole, concerning issues such as multiculturalism and Swedish values. The most distinctive words in this topic include ‘Sweden’, ‘country’, ‘Swedish’, ‘people’ and ‘government’. This topic is not dominated by either violent or non-violent documents and has neutral in-group/out-group values.

The topics most strongly associated with VD rhetoric focus on violent and sexual crimes (T1), and a police force and judicial system that allegedly fail to deal with increasing violence in disadvantaged urban neighbourhoods (T5). Threads dominated by these topics frequently include stories about victims – often elderly, children, young women or animals – of violent perpetrators, typically assumed to be immigrants. These evoke strong sentiments and calls for violent revenge. Victimization themes – represented by words such as ‘child’, ‘girl’ and ‘woman’ – can also be found in the topic centring on negative emotions (T9), which is characterized by multiple interjections and emojis indicating emotions such as anger, disgust, frustration and malicious pleasure, combined with invectives with derogatory and sometimes dehumanizing connotations. Another topic that frequently evokes VD rhetoric is T8 on deportation, in which immigrants are described using various derogatory terms such as ‘parasites’ and ‘vermin’. Finally, T2 is associated with discussions about immigrants allegedly responsible for various ‘filthy’ practices, evoking disgust and aggressive emotions among the users.

The topics that are the least dominated by VD rhetoric tend to focus on broader and more general political discussions, such as international politics (T10), party politics (e.g. T7, T15), various cultural issues/conflicts (T2, T14) and the economy (T3). Some of these discussions undeniably evoke strong and relatively aggressive sentiments, for example, linked to the portrayal of specific journalists and politicians as idiots and fools (T12) or claims that the ‘corrupt’ establishment and media ‘cover up the truth’ (T6). However, these discussions seldom escalate to violent and/or dehumanizing rhetoric. Overall, the violent topics have a consistently higher out-group value, which indicates that they are dominated by words signifying an out-group.

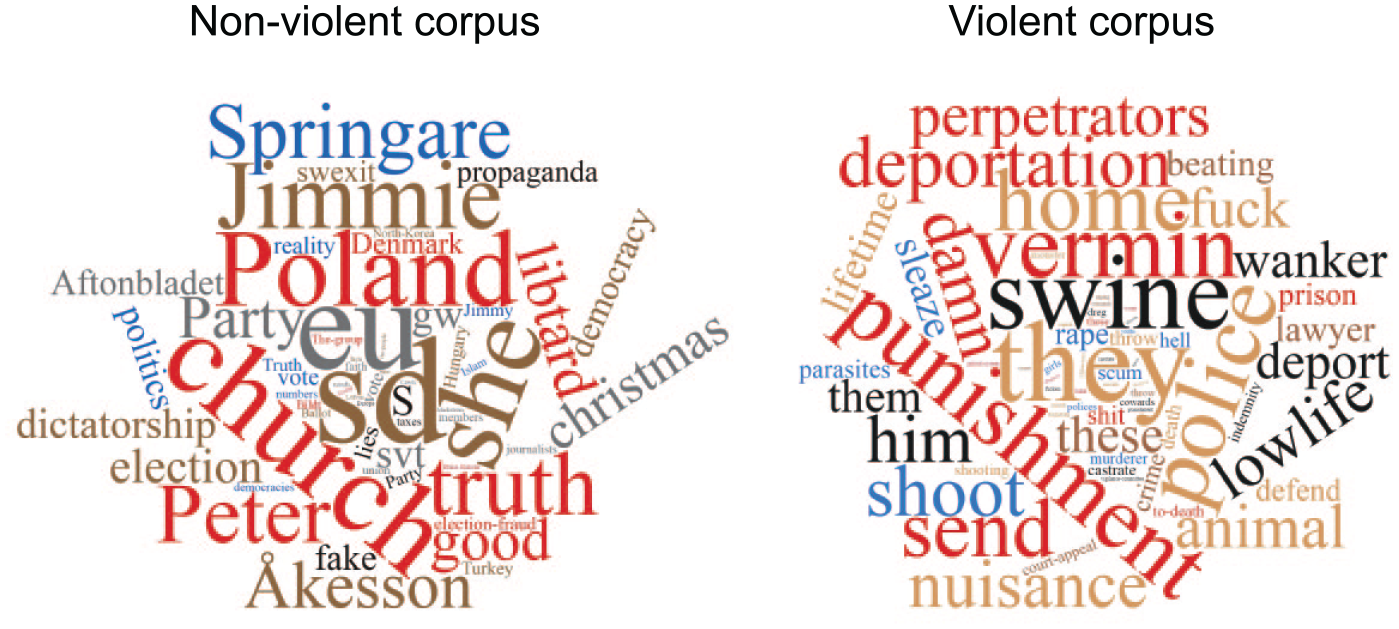

To further validate these results, we calculated the difference in word distribution between the two corpora using a statistical z-test (performed in WORDij 3.0). This shows words that occur relatively more frequently in one corpus than in the other. The result, illustrated as word clouds in Figure 2, confirms that while the non-violent corpus relates to broader political themes, the violent corpus is primarily distinguished by words relating to crime and punishment, sexual crimes and strong emotional expressions.

Figure 2. The word clouds illustrate the most distinguishing words for each sub-corpus. The left-hand cloud illustrates the non-violent corpus, while the one on the right shows the violent corpus. (Jimmie Åkesson is the leader of the Sweden Democrat Party and Peter Springare is the police officer in support of whom the group was originally created.)
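As a rough illustration of this kind of corpus comparison, the following minimal Python sketch computes a z-score per word from its relative frequency in two corpora. It assumes a standard pooled two-proportion z-test, which may differ from the exact procedure implemented in WORDij 3.0. The function name `word_z_scores` and the English toy tokens are hypothetical and are not drawn from the study's data.

```python
import math
from collections import Counter


def word_z_scores(tokens_a, tokens_b):
    """Pooled two-proportion z-score per word.

    Positive values mean the word is relatively more frequent in corpus A,
    negative values that it is relatively more frequent in corpus B.
    """
    count_a, count_b = Counter(tokens_a), Counter(tokens_b)
    n_a, n_b = len(tokens_a), len(tokens_b)
    scores = {}
    for word in set(count_a) | set(count_b):
        c_a, c_b = count_a[word], count_b[word]
        p_a, p_b = c_a / n_a, c_b / n_b
        # Pooled proportion under the null hypothesis of equal frequency.
        pooled = (c_a + c_b) / (n_a + n_b)
        se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
        if se == 0:
            continue  # degenerate case: the word makes up the whole vocabulary
        scores[word] = (p_a - p_b) / se
    return scores


# Toy corpora (assumption: already tokenized, invented for illustration only).
violent = "punish crime punish rape crime punish".split()
non_violent = "election party economy election crime party".split()

scores = word_z_scores(violent, non_violent)
for word, z in sorted(scores.items(), key=lambda item: item[1], reverse=True):
    print(f"{word:10s} {z:+.2f}")
```

Words with large positive scores would dominate one word cloud and words with large negative scores the other. With real corpora, a minimum frequency threshold would normally be applied before ranking.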

Discursive connections between the topics

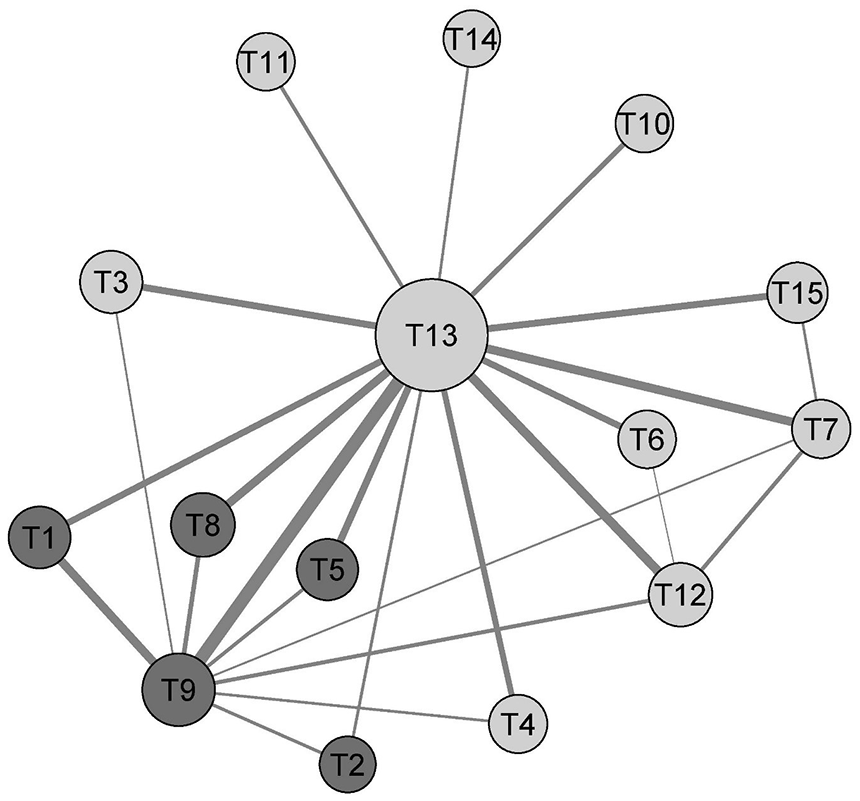

The ‘topics’ identified in the LDA analysis are not necessarily what would commonly be understood as distinct discussion topics. They are arguably best interpreted as themes that can be variously interlinked. Hence, to identify the discursive intersections where VD rhetoric tends to occur, we created a network illustrating co-occurrences of topics (see Figure 3). This graph maps the topics in space according to how they tend to coincide within the same thread (calculated using cosine similarity). The graph shows how the topics that contain relatively high proportions of VD rhetoric (darker shading) together form a cluster with T9 (negative emotions and derogatory invectives) as the central node. This arguably shows that expressions of negative emotions are an important component, setting the tone in the violent discussions. A particularly strong connection can be found between T9 and T1 (sexual assault and punishment), which are also the topics with the highest proportion of VD rhetoric. Interestingly, T9 also connects to T3, T4, T7 and T12 (which are ‘non-violent’), which confirms our qualitative impression that commenters frequently appear to display frustration, hate and disgust in relation to subjects such as religion, perceived economic injustices and mass media personalities they dislike. However, such expressions translate into explicitly violent and dehumanizing rhetoric primarily in relation to the topics about crime, law and victimization.

Figure 3. Social network analysis of topics. The nodes represent topics, and the ties indicate that the topics tend to coincide within the same documents. The software package Gephi was used to construct the network.
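To make the co-occurrence measure concrete, the sketch below derives network ties from cosine similarity between topics, where each topic is represented by its weight in every thread. It is an illustration under stated assumptions only: the thread-by-topic weights are invented, the cut-off of 0.5 is arbitrary, and the helper names `cosine` and `topic_edges` are hypothetical. The network in the study itself was constructed with Gephi.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length weight vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def topic_edges(thread_topic, topic_names, threshold=0.5):
    """Return (topic, topic, similarity) ties for topic pairs that co-occur.

    thread_topic: one row per thread, one topic weight per column.
    threshold: assumed cut-off below which no tie is drawn.
    """
    columns = list(zip(*thread_topic))  # one vector per topic, across threads
    edges = []
    for i in range(len(columns)):
        for j in range(i + 1, len(columns)):
            sim = cosine(columns[i], columns[j])
            if sim >= threshold:
                edges.append((topic_names[i], topic_names[j], round(sim, 3)))
    return edges


# Invented topic weights for three threads (rows) and three topics (columns).
topic_names = ["T1", "T9", "T10"]
thread_topic = [
    [0.6, 0.4, 0.0],
    [0.5, 0.5, 0.0],
    [0.0, 0.1, 0.9],
]

edges = topic_edges(thread_topic, topic_names)
print(edges)
```

In this toy example only T1 and T9 receive a tie, because they carry weight in the same threads, while T10 dominates a separate thread. An edge list of this form could be exported to a network tool for layout and visualization.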

Motivating and legitimizing violent rhetoric

We now explore the patterns identified in the quantitative analysis through a qualitative analysis of a smaller sample of the most violent threads. We thereby further validate and expand the analysis, looking more closely at the social problem framing that posits violent action as morally or politically motivated and at the techniques of neutralization used to legitimize violence against the target groups.

The structural features of Facebook strongly shape the interactions in the group, as is also the case on other social media platforms such as Reddit and Tumblr (Massanari, 2015; Renninger, 2015). Merrill and Åkerlund (2018) argue that affordances such as the ‘reaction’ buttons and the ease of sharing posts facilitate the amplification and diffusion of racist discourse on Facebook. Important for our analysis is that Facebook’s commenting feature primarily lets users see the initial post and only the most recent comments. Therefore, users often post comments that respond to the initial post rather than to other comments earlier in the thread (Farina, 2018). This limits the number of extended interactions among commenters, and in our sample violent rhetoric rarely seemed to be the product of a longer chain of escalating interactions.

Thus, while Ekman (2019) found racist and dehumanizing comments escalating in comment threads sampled from this group, we primarily found the more basic pattern that framing in the top post conditioned the occurrence of violent and dehumanizing rhetoric in the discussion threads. While the posts rarely contain explicit calls for violence, some include themes that seem to evoke VD rhetoric in the subsequent discussions. Not surprisingly, when we studied the characteristics of the top posts of the smaller sub-corpus of threads containing a particularly high degree of violent rhetoric, we found themes that corresponded to the topics identified in the quantitative analysis as particularly connected to VD rhetoric.

Top posts

Posts that prompted much violent rhetoric in their subsequent comments were typically related to personal stories of victimization or witnessing criminal activities, links to news about crimes or criminals or movie clips depicting morally condemnable actions. In posts concerning victimization, the images of the victims had most or all the characteristics of what Christie (1986) has termed the ‘ideal victim’: weak and respectable, with a strong and unknown offender. Examples included a news story about a youth group assaulting a seal cub, a personal story about a victimized teenage daughter and a movie clip showing a group of young men verbally harassing an old lady. The following top post was shared over 1000 times and generated 810 comments, many of which were explicitly violent:

Things are shaping up well. The Somalis that moved into my block in 2013 have now been reported by me 52 times for extreme noise. [. . .] Apparently my neighbours had also called the police when the Somalis were noisiest. However, the police never came. The police did not come when I called either. You are without legal protection nowadays. Thank you Prime Minister. [. . .] It costs Sweden 50 million [Swedish Kronor] to admit these five Somalis, who have been bothering me for four years. They have been using my window for football practice. Damn how ungrateful they are. And destructive. Concerning the immigrant kids who have been torturing cats in [the neighbourhood], some immigrant kids had even been to my neighbour’s flat and tried to take her cat. Lord! They have manhandled my neighbour’s wife’s cat. Plus other cats in [the neighbourhood]. I have asked the landlord three times what he thinks about the cat torturing. No reply. How long is this farce going to continue?

The quoted post includes several of the elements typical of the posts in the sample: the establishment of a moral relationship between respectable Swedes and criminal – even evil – immigrants; the framing of a failing state and legal system (here exemplified by the failure of the police to intervene); and a reminder of the alleged economic costs of immigration. This type of post is not just about establishing abstract frames about the state of society, but is an instance of continuous moral work that raises negative emotions and motivates action. The large number of responses to such posts – as well as their aggressive and indignant character – indicates that many forum participants use the stories for moral micro-shocking (Jacobsson and Lindblom, 2016) to fuel their engagement in the political issue.

Another prominent theme of posts that prompted violent comments was news stories about criminals in either of two scenarios: criminals getting off ‘too easily’ or criminals being informally physically punished by others (by inmates in the same prison or by intended victims striking back). Apart from contributing to a stereotype of the criminal immigrant and a failing criminal justice system, these narratives arguably contribute to a motivational frame based on retribution, as we shall also see in our closer analysis of the comments.

Comment threads

It is among the comments that one finds the most outspoken racist, violent and dehumanizing rhetoric. This may be an effect of stricter moderation at the post level. However, it could also indicate a higher threshold for using coarse language when one initiates an interaction, compared to when responding to someone else. Framing activities are thus structured in a simple interaction sequence: the initial poster establishes a diagnostic frame by exemplifying a social problem; subsequently, the commenters either elaborate on this diagnosis (who is responsible and how is the example part of a broader pattern) or move on to prognostic framing (what to do) and legitimizations (why it is morally permissible to do it). Notably, far from all comments on the posts are clear-cut framing activities. As reflected in the sizable topic on negative emotions that emerged in the quantitative analysis, many comments are also primarily expressions of emotions such as anger and disgust.

In the most violent threads, a prominent diagnostic frame was that immigration had caused increased criminality and a successive Islamization of society. Some claimed that politicians and Muslim organizations conspire to this end:

It is time that we all wake up and realize what is happening . . . Sweden is going to be Islamized. Muslims shall be given preferential treatment . . . mosques and minarets will be built, often with money from Saudi Arabia. [. . .] Incidentally, this agenda is called ‘New Global Order’, a world without borders, and today we know what it means.

The Swedish police were characterized as too meek, or too restrained by excessively restrictive regulations, to contain the rising criminality. The police monopoly on violence in Swedish society was broadly depicted as failing or as having already failed. While the threat was indeed frequently framed in the comments as coming from Islam and Muslims, other categories were also mentioned, such as Arabs and Roma or more vaguely ‘immigrants’. Perpetrators of crimes were always assumed to be immigrants, even when there was no information about this in a news item. In the material, we found a handful of critical comments pointing out that immigrants were not obviously to blame in a particular case. Such comments were most often simply ignored and buried in the stream of new comments. In the rare cases where such critical comments were picked up, they tended to be misinterpreted as defending the perpetrators or palliating the deed.

Dominant general solutions – prognostic frames – included voting for the Sweden Democrats in the next election and proper ‘Swedes’ creating vigilante groups to maintain order:

Armed watch committees are the only solution. And strike mercilessly, because the politicians don’t do a damn thing about these criminal individuals. There will be change if the Sweden Democrats get power.

Both of these solutions implicitly or explicitly included deporting immigrants (at least those committing crimes in Sweden) to their countries of origin. Enforcing deportation also provided an instrumental motive for anti-immigrant and anti-Muslim violence. However, retribution appeared to be the predominant way to legitimize violence. While some calls for violence were presented in less vindictive terms such as ‘maintaining the law’, many commenters displayed a strong vindictive perspective on justice and contempt for more supportive and rehabilitative aspects of the criminal justice system. Perpetrators were constructed as irreparably bad and, at the same time, as in need of ‘education’ by various forms of corporal punishment. For example, an alternative media news item about a gang of allegedly Moroccan street children receiving a beating from members of the public received numerous variations of the following reaction: ‘Smash them to pieces. It’s the only language they understand’.

In terms of the techniques of neutralization used to legitimize violence (Sykes and Matza, 1957), the Islamization and failing police frames both appealed to a higher loyalty (to an imagined ethnically homogeneous nation state) to neutralize proposed violent actions, but this was also sometimes elaborated as a form of denial of responsibility:

The politicians have FORCED us into vigilante groups. If the politicians use the ostrich method, the next phase is armed vigilantes that defend us. The third phase, which is unpleasant, is that the citizens employ paramilitary groups who liquidate leaders of the criminal gangs and bury them in the woods. THAT is not how I want it, but there is a risk of Phase 3 in 10 years.

The ‘educational’ rationale for violent action against minors can be interpreted as a denial of injury, in Sykes and Matza’s (1957) terms, and was typically not connected with strongly dehumanizing terms. In contrast, the retribution theme is rather a denial of the victim: those targeted by retaliatory anti-immigrant violence are described as ‘deserving’. While some labels for those allegedly deserving of retributive violent treatment had mainly moral connotations, such as ‘cowards’ and ‘murderers’, others were dehumanizing invectives such as ‘parasites’, ‘dregs’ and ‘pigs’.

Some dehumanizing characterizations went even further, implying monstrosity: ‘they are not humans, they are monsters without the right to breathe oxygen’. Echoing Smith’s (2016) argument that dehumanization is a designation of something unclean in Douglas’s (2002 [1966]) sense, dehumanizing posts also frequently expressed disgust: ‘Disgusting unnatural primates. Execute the bastards!’ Another apparently unclean category, constructed by merging contradictory characteristics, was ‘bearded children’ – men who had allegedly tricked authorities into believing that they were children (i.e. below 18 years) to obtain a residence permit:

Damn how I hope the bearded children get slaughtered, bloody fucking scum, now it is enough.

While violent rhetoric occurred also without explicitly dehumanizing discourse, calls for retributive violence in particular were often based on a framing of those deserving such violence as less than human and, in this capacity, also dirty and disgusting. Such comments typically occurred in threads following particularly morally shocking top posts. As indicated in the quantitative analysis, dehumanizing terms were also frequently used in comments that did not explicitly call for violence, or in which the possible use of violence was only implied, such as in calls for deportation. Another way of putting this is that dehumanization and infrahumanization were used to legitimize both instrumental and moral violence (cf. Rai et al., 2017).

Conclusion

We have argued that online media – and in particular, social media – provide a central context for far-right violence. Whereas other contemporary studies have demonstrated correlations between social media activity and political violence, this study contributes to understanding why these correlations appear. Broadly speaking, much rhetoric in the far-right discussion group reinforces general mobilizing frames, thereby at least indirectly contributing to violent actions. However, we argue that the character, distribution and reach of explicitly violent and dehumanizing rhetoric are key to understanding the more direct causal role of social media activity for political violence. This can be understood theoretically as online differential associations furthering social learning of definitions supporting political violence.

Whereas various anti-immigrant and right-wing populist themes were present in most discussion threads analysed in the study, the quantitative analysis demonstrated that dehumanization and calls for violent action were primarily linked to expressions of negative emotions and themes such as crime, victimization and a failing state. Corroborating these results, the qualitative analysis also indicated that top posts provided opportunities for moral micro-shocking among the participants, serving to maintain commitment and to legitimize violence. The mobilizing frames articulated in (and perpetuated by) these comment threads converged around depictions of inherently criminal and immoral immigrants and a failing state. This gave rise to emotions such as vindictiveness, disgust and hate, and calls for retribution and deportation. Legitimation of violent actions by appealing to higher loyalties was complemented by a denial of injury by framing violence as ‘educational’ and denial of the victim through dehumanization or by framing violence as ‘just retribution’. We argue that this adds up to a discursive context within which far-right violence comes to be regarded as not only acceptable but even desirable for participants.

This study opens up several routes for further inquiry. On the quantitative side, we believe that there is potential in using our approach to compare different groups and forums, to track changes over time and to develop machine-learning approaches that better distinguish between descriptions of violence and prescriptions for violence. Future qualitative analyses should include forums with structures that facilitate more commenter interaction, to further explore the online interactional dynamics around calls for political violence.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the Swedish Research Council (Vetenskapsrådet) under Grant number 2016-03515.