Abstract

The current study evaluated a new training programme developed for generalist police who interview adults. It was designed to enhance officers’ use of open-ended questions with adult witnesses. The programme included all key training activities that had been used in successful earlier training evaluation studies related to the interviewing of child complainants of abuse. A total of 90 officers (recruits, n = 26; frontline, n = 37; investigators, n = 27) completed the course over 6–12 weeks, and their standardised mock interviews were analysed for open-ended question usage as well as auxiliary behaviours designed to support a narrative. Interviewer performance increased over time from baselines of 6–18% open-ended questions to 70–76% following the programme. Although performance dropped at least 6 months after the training ceased (M = 9.02 months, SD = 3.07; no intervening follow-up sessions were provided), it remained significantly better than baseline, and the improvements in open-ended question usage were associated with improvement in broader interviewer behaviours known to enhance narrative detail, such as the use of minimal encouragers. The practical implications of these findings and directions for future research are discussed.

Keywords

Police briefs of evidence about alleged crimes typically contain a range of substantiation points. Briefs are prepared by police and provide an overview of the evidence available. Physical evidence such as CCTV footage, phone records or DNA samples may be included. Witness statements also play a crucial role in the preparation of police briefs by providing first-hand accounts of the alleged offences or events (Moston, 2009; Powell et al., 2019). Irrespective of the type of event (e.g. traffic accident, robbery, assault, fraud, domestic violence), interviews with witnesses may be the only immediate way of determining how an incident or crime happened, and whether further investigation is necessary, or charges should be laid (Bull, 2018; Milne and Bull, 1999).

Eliciting reliable and useful accounts from witnesses is a complex task requiring specialised training. Of all the skills that police need to learn in investigative interview training, using non-leading open-ended questions is one of the most important (Powell et al., 2019). Open-ended questions are the technical building blocks of good interviewing practice. Decades of research on human memory have converged on the conclusion that non-leading open-ended questions elicit the most accurate, detailed and coherent narrative accounts of what has happened (Brubacher et al., 2020; Fisher and Geiselman, 2010). Although definitions vary somewhat, we define open-ended questions as those that elicit elaborate story (narrative) detail about what happened, and do not dictate what precise information needs to be reported (Powell and Snow, 2007). Unlike focused or directive questions (specific cued recall and closed questions), which tend to elicit short-answer, non-narrative responses focused on precise detail, open-ended questions cast the net wide, allow respondents to report the information they remember and report it in their own words (Powell and Snow, 2007; Snook et al., 2012). When open-ended questions are used well, they not only enhance the accuracy and detail of verbal evidence – they also make witnesses feel heard, elicit contextual detail, enhance witness credibility, reduce defensiveness and anxiety, and counteract witnesses’ natural inclination to withhold information (Brubacher et al., 2019; Pichler et al., 2019; Powell and Cauchi, 2013).

Consensus around the importance of open-ended questions is so great that maximising these questions forms the basis of all police and investigator interviewing guidelines around the globe, irrespective of the respondent type; for example, the National Institute of Child Health and Human Development protocol (Lamb et al., 2018); the Standard Interview Method (SIM; Powell and Brubacher, 2020); the Guidance for Achieving Best Evidence in Criminal Proceedings (Ministry of Justice, 2022); Conversation Management (Shepherd, 2007); the Principles on Effective Interviewing for Investigations and Information Gathering (Mendez et al., 2021); and the Cognitive Interview (Fisher and Geiselman, 2010). The presence of police interview guides, however, does not mean the guides are followed. To be most beneficial, interview guides should be used in conjunction with good quality training. Despite the wealth of knowledge around how witnesses should be interviewed, there has been a persistent gap between recommended technique and actual practice. Most post-training evaluations of police interview performance have revealed predominantly specific and closed questions, as opposed to open-ended questions, and as a result, witness statements typically include little narrative detail (Akca et al., 2021; Clarke and Milne, 2001; MacDonald et al., 2017; Snook et al., 2012). The lack of transfer from the training curriculum to the field is the most widely discussed issue in the investigative interviewing literature across the globe, with many academics focusing their attention on establishing how training programmes can be improved (Clarke and Milne, 2001; Kleygrewe et al., 2022; Lamb, 2016; MacDonald et al., 2017; Powell et al., 2010c; Snook et al., 2012; Snook and Keating, 2011; Westera et al., 2020).

One of the main conclusions arising from the existing police training evaluation literature is that sustained skill in using open-ended questions does not arise from brief instruction alone. Learning to use open-ended questions requires an incremental approach (Brubacher et al., 2022) and involves a variety of tasks, including the ability to identify different questions and their effects (Powell et al., 2016a; Yii et al., 2014), learning different question stems (Powell and Wright, 2008), spaced practice in the use of open-ended questions during simulated interviews where interviewers are exposed to common challenges (Hughes-Scholes and Powell, 2013), and expert feedback focused on correcting behaviour and generating alternative strategies (Powell et al., 2022). Although researchers are still refining the precise elements and how they should be delivered, there is broad acceptance of the importance of these training elements across witness groups and across other disciplines such as health communication (Gilligan et al., 2021).

A second conclusion arising from the police training literature relates to the need for formal evaluation studies to better understand how to deliver training and maximise quality improvement. Analogous to the notion that having a good interview protocol does not mean that police can use it, having guidance around the elements that are important to include in training does not mean that training will be effective. Many pitfalls can occur at the point of delivering training in how to use open-ended questions. For training in open-ended questions to be effective, individual police need to see the relevance and value of these questions within the context of their work (Blume et al., 2009). Activities need to harness learners’ intrinsic motivation through good design, provision of clear expectations and dynamic (interactive) learning exercises (Diep et al., 2019). Standardised measures need to be developed that track progress in an objective and reliable way and allow wide variability in responses (Griffiths and Milne, 2006; Powell et al., 2010a, 2010b). Accessibility of learning materials also needs to be considered, because time constraints and high workload are common complaints of trainees (Anderson, 2014; Benson and Powell, 2015; Leeds, 2014).

So which training programmes have proved effective to date? Most evaluation research in the police training field that has revealed sustained improvement in the use of open-ended questions has targeted interviewers of child witnesses (Benson and Powell, 2015; Brubacher et al., 2022; Cederborg et al., 2021; Hershkowitz et al., 2017; Lawrie et al., 2021). Further, these programmes have included incremental skill-building activities around the use of open-ended questions, starting with establishing an understanding of the effect of different questions (including open-ended subcategories) and then teaching interviewers how to choose appropriate questions and behaviours to achieve an immediate goal. Immediate goals include eliciting certain evidential detail, minimising defensiveness and anxiety, overcoming a witness's natural tendency to suppress information, avoiding raising information that has not yet been established, and encouraging accuracy and coherence.

For example, training programmes evaluated by Benson and Powell (2015), Powell et al. (2016a) and Brubacher et al. (2022) included core activities such as establishing what constitutes best-practice interviewing, defining various questions, choosing the most effective open-ended questions, putting good questions into practice during simulated interviews, and eliciting a disclosure. Although the precise methods of delivery varied (e.g. online, face-to-face), the courses were interactive in nature, they focused largely on open-ended question usage, and considerable time was spent providing practice activities (with feedback) on the use of subcategories of open-ended questions during simulated interviews in which a researcher played the role of the child complainant. Training on the use of open-ended questions was also supplemented with broader interviewer behaviours such as knowing how to launch a narrative with an appropriate initial invitation, using a variety of minimal encouragers, allowing the child to talk without interruption, asking a range of open-ended question subtypes, using simple language, avoiding ambiguous pronouns, and using developmentally appropriate language. The effect of the training on interviewers’ performance was demonstrated using standardised mock interviews in which scenarios and trainer response styles were controlled (see Powell et al., 2022). Overall, these evaluations revealed that adherence to open-ended questioning and the broader behaviours increased dramatically from pre- to post-training, and that post-training performance was sustained for months after the training ceased.

The evaluation literature involving generalist (e.g. frontline) police who interview adult witnesses across a range of events such as traffic accidents, robbery, fraud and domestic violence is devoid of studies such as those described above. Few formal research evaluations have included open-ended questions as a measure, and the only conclusion drawn from those that did is that success is greater when there are multiple training sessions and that there may be individual differences in how well people acquire certain skills (see Akca et al., 2021 for a systematic review). In addition, no training evaluation study involving generalist police has included all the core training activities used in evaluations of training for police who interview child witnesses. For example, Westera led a review of police training manuals across all Australian jurisdictions (Powell et al., 2016b, p. 311). This review showed that all jurisdictions promoted a narrative-based approach, yet none of the training activities focused on identifying subtypes of open-ended questions, there was no incremental approach to learning, and there was no expert feedback on trainee performance.

The trial and investigation of training strategies needed to improve generalist police officers’ use of open-ended questions is clearly in its infancy. It would seem prudent, therefore, to test the outcome of a training programme implemented for generalist police cohorts incorporating the sorts of training exercises that have produced sustained adherence to open-ended questions in courses on child witness interviewing. If the training was successful across all generalist groups (e.g. frontline, recruits), the results would attest to the importance and applicability of these training elements for police who interview adult witnesses. If the training was not successful, it would suggest that there may be a mismatch in perceptions between academics and generalist police about the degree of narrative detail needed for frontline police work, or it may indicate practical difficulties in the development of meaningful learning activities and standardised assessment measures that apply across different contexts in which police work.

To maximise the chance of success of the current new training programme, we developed a course on investigative interviewing that included all the key training activities used in successful prior evaluation studies. Further, all phases of development including course writing, implementation of the course and course evaluation were done in close partnership with staff at a police training academy. The co-production was intended to enhance trainee motivation and commitment, the relevance of activities and the likelihood of learning being transferred to police procedure and practice (Holloway et al., 2022).

Method

Participants

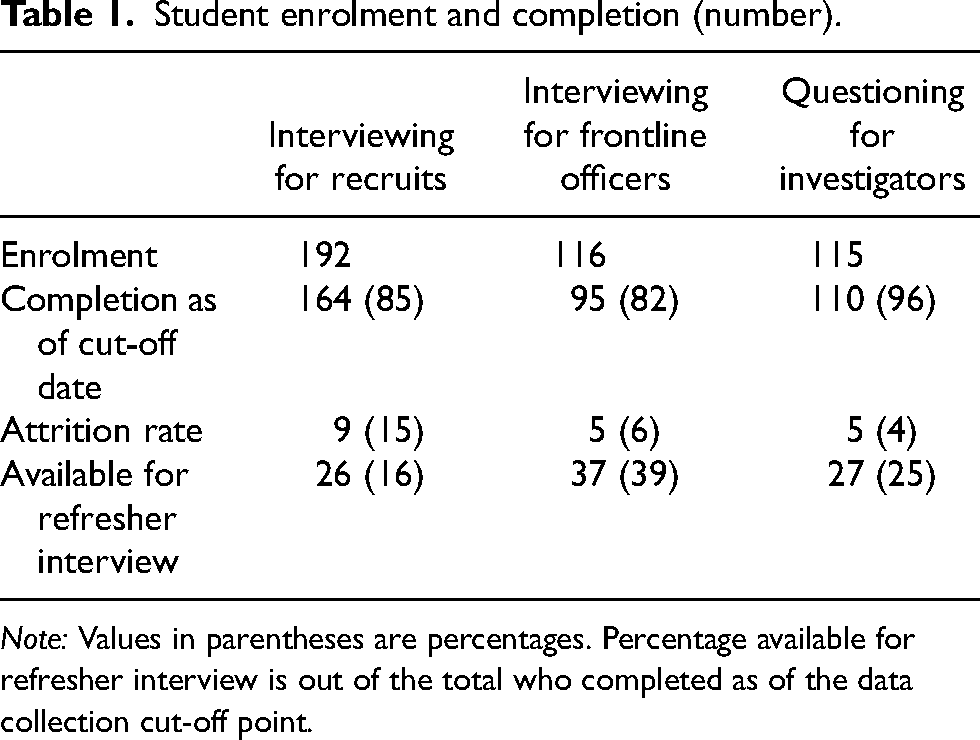

The study was approved by the Human Research Ethics Committee at Griffith University, as well as the executives of the police organisation. There were 423 enrolments in an adult interviewing course from 2018 to 2021 (the period between when the course went live and when data collection ceased). Table 1 provides a breakdown of the student enrolment, current completion and attrition rate for each of the courses, as of 6 December 2021. Twenty-two students did not complete a course for various reasons (e.g. personal circumstances or inability to complete the course within the time constraints); nine of these students did not complete the Interviewing for Recruits course because they left the police academy.

Table 1. Student enrolment and completion (number).

Note: Values in parentheses are percentages. The percentage available for the refresher interview is calculated out of the total number who had completed the course as of the data collection cut-off point.

The trainee constables (hereafter Recruits) completed the Interviewing for Recruits course and were recruited from the police academy. Those entering the police organisation completed this course as part of the mandatory recruit training curriculum. Generalist frontline officers of various constable levels completed the Interviewing for Frontline Officers course (hereafter Frontline). Participants in this course were officers who wished to develop investigative skills or were interested in becoming investigators in the future. Officers who completed the Questioning for Investigators course were detectives or were undertaking study to become detectives (hereafter Investigators). Officers in this course were already serving in investigative areas or wished to be considered for those positions. Participants for the Frontline and Investigators courses submitted applications to the police organisation, and acceptance was based on merit as judged by the police organisation. From the pool of 423 students, 90 officers were available for a refresher mock interview (at least 6 months post-completion) at the time data collection ceased; this interview served as the last timepoint for the current study. Because our intention was to measure long-term retention of skills, this timepoint was deemed necessary. The final sample therefore comprised 90 officers: Recruits (n = 26), Frontline (n = 37) and Investigators (n = 27). Low participation at long-term follow-up is not uncommon in evaluation studies because of organisational demands and relocations (Benson and Powell, 2015; Lawrie et al., 2021).

Materials and procedure

Course structure and content

The course structure was identical for all participants, except that Recruit participants were allocated blocks of time at the academy to complete the content, in addition to self-study. The training took approximately 6–12 weeks to complete and consisted of 11 modules covering a variety of topics, with the main focus (the first seven modules) on open-ended questions. Additional topics specifically related to the police organisation's legislation and goals were included in the later modules (see Figure 1 for the list of learning modules and mock interview placement within the course, and Appendix A (available online) for a detailed overview of the training programme content and structure). Officers progressed through the course at their own pace, with trainers tracking learner progress. Before commencement of training, trainees were provided with a ‘welcome package’ via email, which contained information about the online Learning Management System and clear instructions on how to log in and navigate the system. They were also provided with a brief overview of the training programme and activities, and the contact details of the trainers who were facilitating the course (e.g. assisting with questions about content and the mock interviews).

Figure 1. List of learning modules and mock interview placement within the training course.

Mock interviews

All officers in the current study participated in seven mock interviews, which were conducted over the telephone with actors trained to play the interviewees. Conducting the mock interviews over the phone provided flexibility in the delivery of, and participation in, the training (e.g. scheduling a phone call is easier than scheduling an in-person practice session). The role-players used a variety of prescribed scenarios such as domestic violence, robbery and assault. The mock interviews provided an opportunity for the officers to demonstrate skill in eliciting a detailed and accurate account of an offence for evidential purposes. Four of the mock interviews were purely for practice; these were spaced throughout the course and immediate feedback was provided. The remaining three mock interviews were purely for assessment. These were scheduled immediately before commencement of the course, immediately after completion of the course, and at least 6 months after completion (M = 9.02 months, SD = 3.07).

A team of trained role-players played the role of the adult interviewee in the mock interviews. These individuals were not police personnel, were external to the police organisation and were blind to the research design and hypotheses. All role-players were trained to ensure consistency in their responses. For instance, when faced with an open-ended question, the role-players were instructed to provide two or three relevant details in their response. Conversely, if a question requiring recall of specific information (such as who, why or how) was asked, the role-players were trained to provide only one piece of information. Similarly, when asked a yes/no question, the role-players were instructed to respond with a simple yes or no, without offering any additional information. This approach, as outlined by Powell et al. (2022), was implemented to ensure fairness, reward ideal question types and control the amount of information provided across different types of scenarios. Consequently, regardless of the scenario, the quantity of information available to participants was standardised. Statistical analyses of role-players trained in this approach have shown negligible variability between role-players and no evidence of individual role-player effects on interviewer performance (Brubacher et al., 2022).
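
The role-player response rules above can be expressed as a small lookup. The following Python sketch is illustrative only: the helper names (`release_details`, `simulate`) are hypothetical, and it assumes, as a simplification, that a scenario's details are disclosed in a fixed order, whereas real role-players select the details relevant to each question. It shows why the rules reward open-ended questioning: the same number of questions elicits more detail when they are open-ended.

```python
from enum import Enum


class QuestionType(Enum):
    OPEN_ENDED = "open-ended"
    SPECIFIC = "specific"  # who, what, where, when, why, how
    YES_NO = "yes/no"


def release_details(qtype, remaining, open_quota=2):
    """Return (details disclosed, details still held back) for one question.

    Hypothetical helper: assumes details are disclosed in a fixed order,
    which simplifies how role-players actually respond.
    """
    if qtype is QuestionType.OPEN_ENDED:
        if open_quota not in (2, 3):
            raise ValueError("open-ended answers carry two or three details")
        n = open_quota
    elif qtype is QuestionType.SPECIFIC:
        n = 1
    else:
        n = 0  # a plain "yes" or "no", with no additional information
    return remaining[:n], remaining[n:]


def simulate(questions, scenario_details, open_quota=2):
    """Collect the scenario details elicited by a sequence of questions."""
    remaining = list(scenario_details)
    elicited = []
    for q in questions:
        disclosed, remaining = release_details(q, remaining, open_quota)
        elicited.extend(disclosed)
    return elicited


details = [f"detail {i}" for i in range(1, 11)]
open_style = [QuestionType.OPEN_ENDED] * 3
closed_style = [QuestionType.SPECIFIC] * 2 + [QuestionType.YES_NO]
print(len(simulate(open_style, details)))    # 6
print(len(simulate(closed_style, details)))  # 2
```

Three open-ended questions yield six details here, whereas three closed questions yield two, which is the incentive structure the standardised role-play is designed to create.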

All mock interviews were completed as the final activity for the corresponding module as shown in Figure 1. The officers were randomly assigned a different scenario type for each mock interview. Before the mock interview session, the officers received an email containing a brief description of the scenario. For example: You are called to a private address in [Street Name] Street, [Suburb] by the radio room as the owner has reported a break in. Upon arrival you see that a panel of glass in the front door has been smashed. The resident, Ash, answers the door when you knock.

Coding

Question types

The interviews were audio-recorded and transcribed by a professional transcriber for coding purposes. A standardised protocol was used to code the transcripts. The questions were categorised into three types: open-ended, specific and leading/suggestive. Open-ended questions aimed to elicit comprehensive responses without specifying the required information (Powell and Snow, 2007). These included several types of open-ended question: initial invitations that encouraged a free narrative of events (Tell me everything that happened. Start from the beginning.), open-ended breadth questions that asked the interviewee to provide additional details (Then what happened?), open-ended depth questions that prompted elaboration on event details already reported (Tell me everything about the part where …), questions seeking clarification of the interviewee's meaning (What do you mean by ‘prang’?) and questions enquiring whether the interviewee had any further details to report about the alleged event (Is there anything else you can remember?). Specific questions included interrogatives (WH questions), yes/no questions and forced-choice questions. These were coded but not analysed separately because they were the counterpart of open-ended questions. Both open-ended and specific questions could additionally be coded as leading. Leading questions were defined as those assuming or suggesting a detail not previously mentioned by the interviewee, without providing an opportunity for the interviewee to dispute it (e.g. What happened after you laid down?, when the interviewee had mentioned only being asked to lie down, not actually lying down).
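
The coding scheme can be represented as one record per question, from which the dependent variable is computed. This Python sketch is a hypothetical illustration, not the authors' coding software. It assumes that the proportion of open-ended questions is calculated over all coded questions (the denominator is not stated above), that the subtype labels below are shorthand for the categories just described, and that leading questions are flagged separately rather than removed from either category.

```python
from dataclasses import dataclass

# Shorthand labels (assumed) for the categories described in the coding protocol.
SUBTYPES = {
    "open-ended": {"invitation", "breadth", "depth", "clarification", "anything else"},
    "specific": {"wh", "yes/no", "forced-choice"},
}


@dataclass(frozen=True)
class CodedQuestion:
    text: str
    category: str          # "open-ended" or "specific"
    subtype: str
    leading: bool = False  # either category may also be coded as leading

    def __post_init__(self):
        if self.subtype not in SUBTYPES.get(self.category, set()):
            raise ValueError(f"unknown code: {self.category}/{self.subtype}")


def proportion_open(questions):
    """Share of all coded questions that are open-ended (assumed denominator)."""
    if not questions:
        raise ValueError("transcript contains no coded questions")
    n_open = sum(q.category == "open-ended" for q in questions)
    return n_open / len(questions)


transcript = [
    CodedQuestion("Tell me everything that happened.", "open-ended", "invitation"),
    CodedQuestion("Then what happened?", "open-ended", "breadth"),
    CodedQuestion("What colour was the car?", "specific", "wh"),
    CodedQuestion("Did he push you?", "specific", "yes/no"),
    CodedQuestion("What happened after you laid down?", "open-ended", "breadth", leading=True),
]
print(proportion_open(transcript))          # 0.6
print(sum(q.leading for q in transcript))   # 1
```

Keeping the leading flag orthogonal to the open-ended/specific category mirrors the rule that both kinds of question can additionally be coded as leading.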

Interviewer behaviours

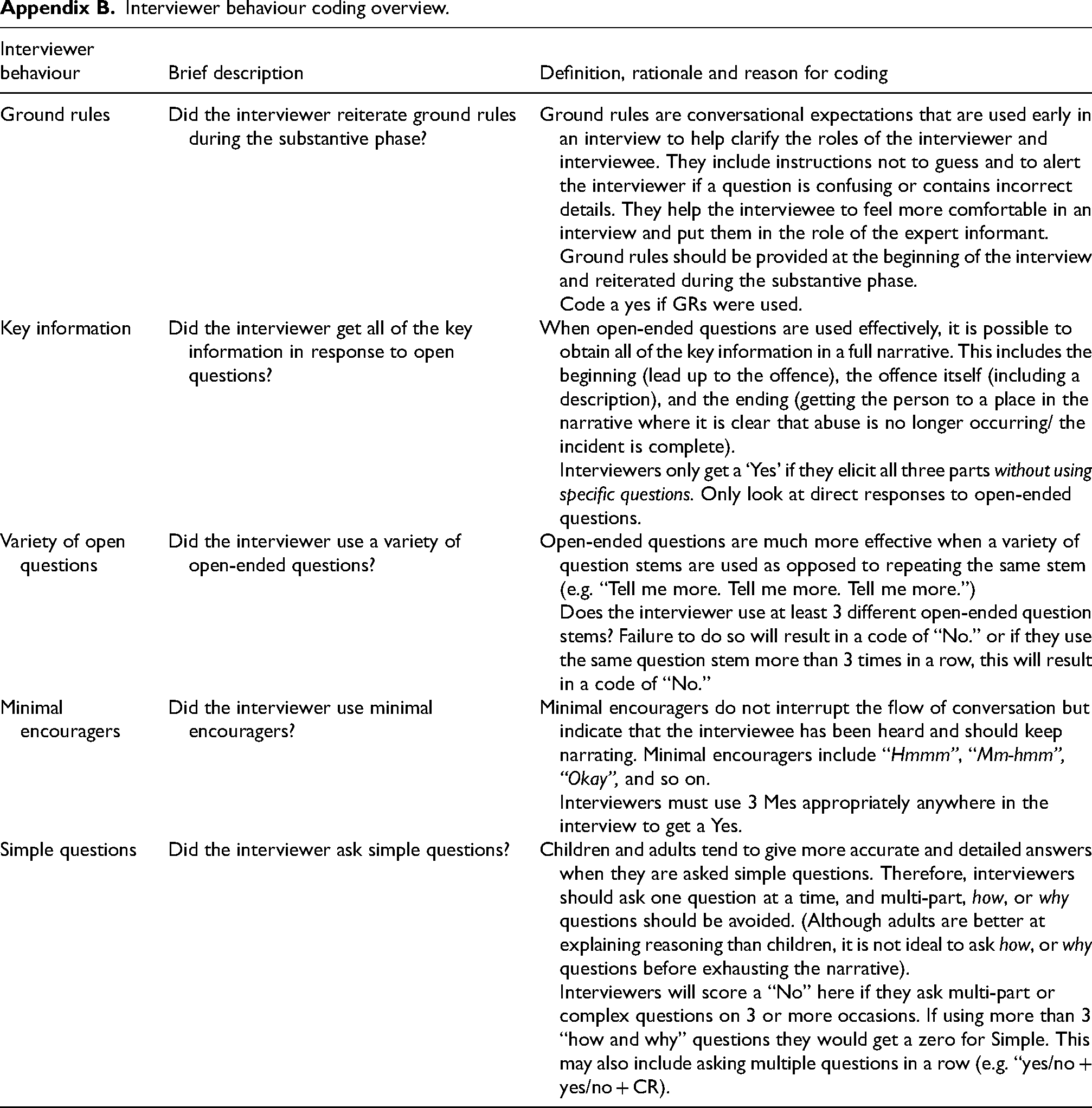

A standardised protocol was used to code the same transcripts for five positive interviewer behaviours: ground rules, key information, variety of open-ended questions, minimal encouragers and simple questions (Appendix B, available online).

Reliability

All transcripts were coded for question type and the five interviewer behaviours. A postdoctoral researcher who was blind to the study aims and hypotheses double-coded 20% of the transcripts for reliability purposes. Cohen's kappa (κ) was calculated to determine interrater reliability for question types and the five interviewer behaviours, and almost perfect agreement was found for all variables: question types κ = .97, ground rules κ = 1.00, key information κ = .89, variety of open-ended questions κ = .92, minimal encouragers κ = 1.00 and simple questions κ = .85.
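
Cohen's kappa corrects observed agreement for the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e), where p_o is the proportion of items the two coders coded identically and p_e is the sum, over codes, of the product of the two coders' marginal proportions. A minimal Python sketch follows; the codes for ten questions are hypothetical, and the function name is illustrative.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who coded the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty code lists of equal length")
    n = len(rater_a)
    # Observed agreement: share of items given the same code by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal counts, summed over codes.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n ** 2
    if p_e == 1:
        raise ValueError("kappa is undefined when both raters use one identical code")
    return (p_o - p_e) / (1 - p_e)


coder_1 = ["open", "open", "specific", "specific", "open",
           "specific", "open", "open", "specific", "open"]
coder_2 = coder_1[:-1] + ["specific"]  # one disagreement on the last item
print(round(cohens_kappa(coder_1, coder_2), 2))  # 0.8
```

Here the coders agree on 90% of items but would agree on 50% by chance alone, so κ = (.90 − .50) / (1 − .50) = .80, noticeably lower than raw agreement.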

Results

Data-checking and preliminary investigation

The data were checked for missing values and statistical assumptions. There were no missing values, but the proportions of open-ended questions used at pre-training and at the final interview were not normally distributed, as assessed by inspection of the histograms and Shapiro–Wilk tests (p ≤ .007). As would be expected, open-ended question use was near floor at pre-training (M = .12, SD = .12) and near ceiling immediately post-training (M = .78, SD = .18). Refresher data (collected at least 6 months post-training) approximated a normal distribution and were descriptively lower, on average, than at the final interview (M = .67, SD = .18). Error variances were also not homogeneous at pre-training and at the refresher interview (Levene's tests, p ≤ .027). Because of these violations of assumptions, we analysed the data with both parametric [analysis of variance (ANOVA)] and non-parametric (Friedman's two-way analysis of variance by ranks) tests. The results were identical; for simplicity, we present the results of the parametric tests.
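
Friedman's test ranks each officer's three scores against one another and asks whether the rank sums differ across timepoints. The sketch below is a minimal illustration with hypothetical data, not the study's analysis: it ignores the between-subjects cohort factor (the text does not say whether the test was run on the whole sample or within each cohort), omits the tie correction, and uses the closed-form chi-square tail p = exp(−χ²/2), which holds only for 2 degrees of freedom (three timepoints). With a sample as small as this example, an exact table would be preferable to the chi-square approximation.

```python
import math


def average_ranks(values):
    """Rank values 1..k within one officer, giving ties the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next value ties with the first in the run.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # convert 0-based positions to 1-based ranks
        for idx in order[start:end + 1]:
            ranks[idx] = mean_rank
        start = end + 1
    return ranks


def friedman(rows):
    """Friedman chi-square (no tie correction) for n officers x k timepoints."""
    n, k = len(rows), len(rows[0])
    rank_sums = [0.0] * k
    for row in rows:
        for j, r in enumerate(average_ranks(row)):
            rank_sums[j] += r
    stat = 12 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3 * n * (k + 1)
    return stat, k - 1


# Hypothetical proportions of open-ended questions: (pre, final, refresher).
rows = [
    [0.10, 0.80, 0.70],
    [0.05, 0.75, 0.60],
    [0.20, 0.90, 0.65],
    [0.15, 0.70, 0.55],
]
stat, df = friedman(rows)
p = math.exp(-stat / 2)  # chi-square upper tail; valid only because df == 2
print(stat, df)     # 8.0 2
print(round(p, 4))  # 0.0183
```

Because every hypothetical officer ranks pre < refresher < final, the rank sums are 4, 12 and 8, giving the maximum possible statistic for four officers.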

Proportion of open-ended questions

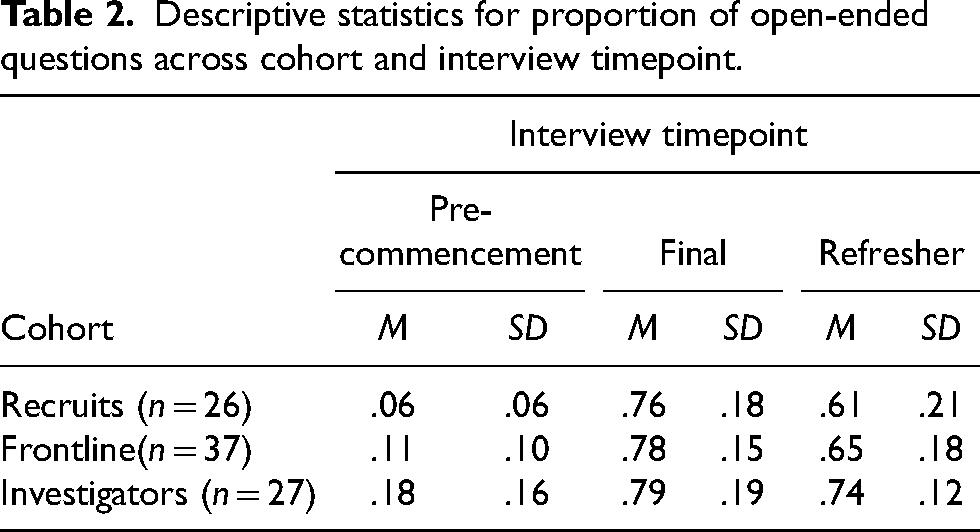

A 3 (Cohort) × 3 (Timepoint) mixed measures ANOVA was conducted with proportion of open-ended questions as the dependent variable. Cohort was entered as a between-subjects factor and timepoint was entered as a within-subjects factor. Descriptive statistics are given in Table 2. The main effect of cohort was significant (F(2, 87) = 5.560, p = .005,

Table 2. Descriptive statistics for proportion of open-ended questions across cohort and interview timepoint.

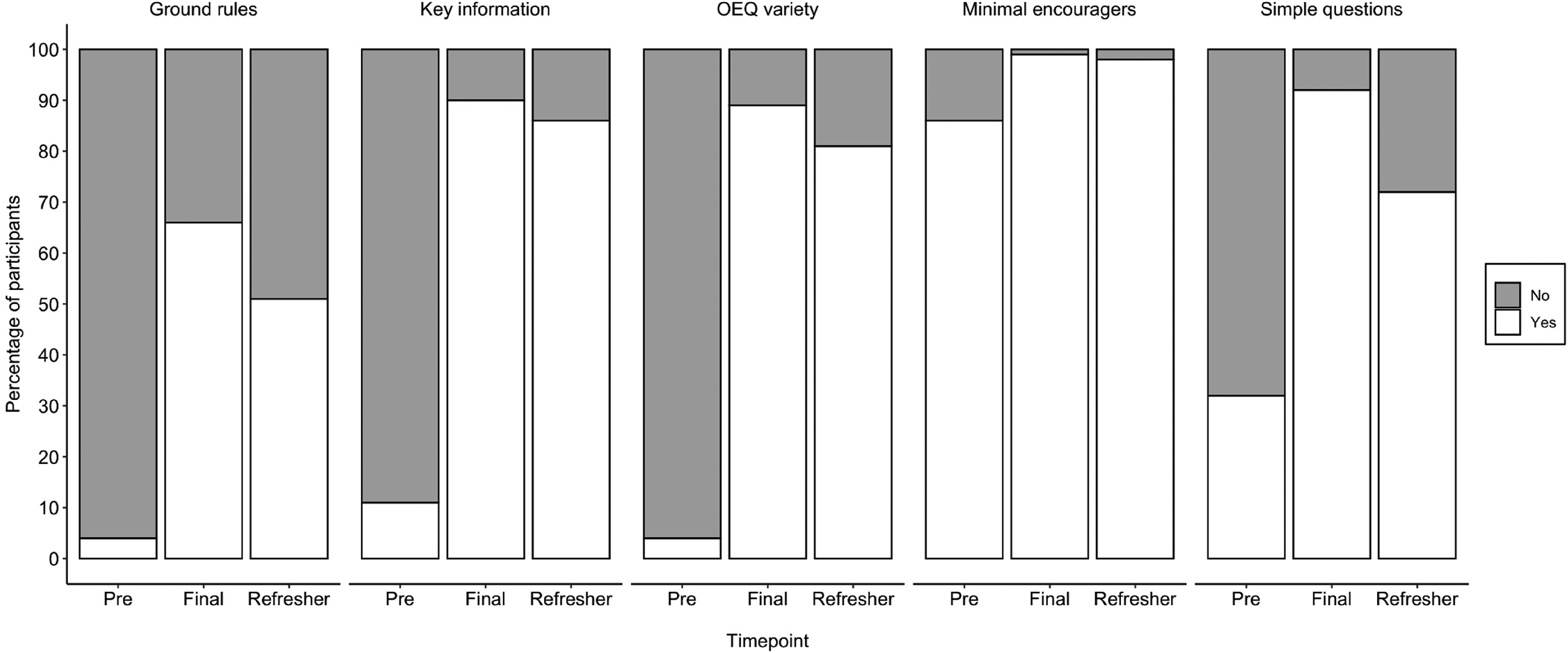

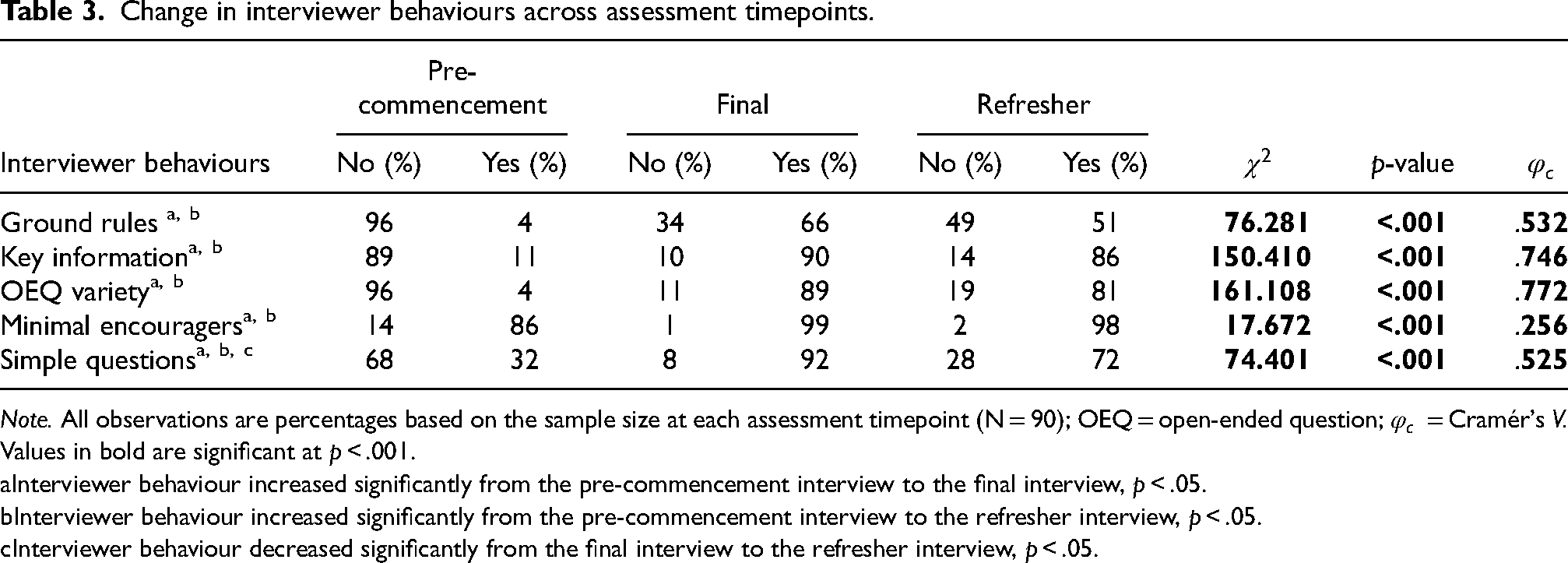

Interviewer behaviours

The prevalence of the five interviewer behaviours (ground rules, key information, open-ended question variety, minimal encouragers and simple questions) across the three assessment timepoints is depicted in Figure 2. To map this prevalence, five 2 (Interviewer Behaviour: Yes, No) × 3 (Timepoint: Pre-commencement, Final, Refresher) χ² tests of independence were conducted, one for each interviewer behaviour. Bonferroni-corrected z tests were used to compare proportions across timepoints. Table 3 displays the observation rates and inferential statistics for each interviewer behaviour.
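
For a single behaviour, each test of independence compares ‘Yes’ counts across the three timepoints. The Python sketch below is illustrative, uses hypothetical counts, and assumes the Bonferroni correction multiplies each pairwise p-value by the number of comparisons (three), which is equivalent to testing each comparison at α = .05/3. Like any χ² test of independence, it treats each timepoint's 90 observations as a separate group; the closed-form p-value applies only because a 2 × 3 table has 2 degrees of freedom. The function names are hypothetical.

```python
import math
from itertools import combinations
from statistics import NormalDist


def chi2_independence(yes_counts, totals):
    """Pearson chi-square for a 2 x k table given 'yes' counts and column sizes."""
    grand = sum(totals)
    yes_total = sum(yes_counts)
    no_total = grand - yes_total
    if yes_total in (0, grand):
        raise ValueError("behaviour never (or always) observed; test is undefined")
    stat = 0.0
    for yes, n in zip(yes_counts, totals):
        exp_yes = yes_total * n / grand  # expected 'yes' count under independence
        exp_no = no_total * n / grand
        stat += (yes - exp_yes) ** 2 / exp_yes + ((n - yes) - exp_no) ** 2 / exp_no
    return stat, len(totals) - 1


def chi2_p_df2(stat):
    """Upper-tail p-value; this closed form is exact only for df = 2."""
    return math.exp(-stat / 2)


def bonferroni_z_tests(yes_counts, totals, labels):
    """Pairwise two-proportion z tests with Bonferroni-adjusted p-values."""
    pairs = list(combinations(range(len(totals)), 2))
    results = {}
    for i, j in pairs:
        pooled = (yes_counts[i] + yes_counts[j]) / (totals[i] + totals[j])
        se = math.sqrt(pooled * (1 - pooled) * (1 / totals[i] + 1 / totals[j]))
        if se == 0:  # both proportions are 0 or both are 1: no difference
            results[(labels[i], labels[j])] = (0.0, 1.0)
            continue
        z = (yes_counts[j] / totals[j] - yes_counts[i] / totals[i]) / se
        p = 2 * (1 - NormalDist().cdf(abs(z)))
        results[(labels[i], labels[j])] = (z, min(1.0, p * len(pairs)))
    return results


labels = ("pre-commencement", "final", "refresher")
yes = [10, 60, 55]  # hypothetical counts out of 90, not the study's data
n = [90, 90, 90]
stat, df = chi2_independence(yes, n)
print(f"chi2({df}) = {stat:.2f}, p = {chi2_p_df2(stat):.1e}")
for pair, (z, p_adj) in bonferroni_z_tests(yes, n, labels).items():
    print(pair, f"z = {z:.2f}", f"adjusted p = {p_adj:.3f}")
```

With these made-up counts, the pre-commencement versus final comparison is significant after correction, whereas the small final-to-refresher decline is not, which is the kind of pattern the follow-up comparisons are designed to distinguish.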

Table 3. Observed frequencies of interviewer behaviours across assessment timepoints.

Figure 2. Change in interviewer behaviours across assessment timepoints.

Note. All observations are percentages based on the sample size at each assessment timepoint (N = 90). OEQ = open-ended question.

Values in bold are significant at p < .001.

Interviewer behaviour increased significantly from the pre-commencement interview to the final interview, p < .05.

Interviewer behaviour increased significantly from the pre-commencement interview to the refresher interview, p < .05.

Interviewer behaviour decreased significantly from the final interview to the refresher interview, p < .05.

For all five interviewer behaviours, the analyses revealed a significant association between interviewer behaviour and assessment timepoint (Table 3). Follow-up comparisons showed that the prevalence of interviewer behaviours increased significantly from the pre-commencement interview to both the final interview and the refresher interview. Except for simple questions, there was no significant difference in the prevalence of interviewer behaviours between the final interview and the refresher interview, suggesting that learning was maintained over time. The use of simple questions, however, decreased significantly from the final interview to the refresher interview.

Discussion

Previous training evaluations that focused on generalist police cohorts have shown few successes in producing sustained improvement in the use of open-ended questions (Akca et al., 2021; cf. MacDonald et al., 2017). By contrast, this study, which incorporated several intensive core training activities focused on open-ended question usage, was a major success. Specifically, interviewer performance increased over time from baselines of 6–18% open-ended questions to 76–79% after the training ceased. Performance dropped many months after the training ceased (with no intervening follow-up sessions); however, performance in the refresher mock interviews remained significantly better than baseline. The improvements in open-ended question usage were associated with improvement in broader behaviours known to enhance narrative detail, such as the use of minimal encouragers. These results are consistent with those of other studies that incorporated a similar training structure and range of activities. For example, Benson and Powell (2015) found baseline rates of around 10% open-ended questions, followed by post-training rates of around 70%, with very little drop 12 months after training had concluded.

Overall, there are three implications arising from these findings. First, the findings provide direct support for the need for police organisations that train generalist police to focus more intently on incremental skill-building around interviewing, particularly open-ended question usage (Milne et al., 2019). The complexity involved in using open-ended questions would appear to be underestimated by training designers who omit activities such as the identification of subtypes of open-ended questions, rote learning of question stems and spaced simulated practice opportunities. According to Westera and colleagues (Powell et al., 2016b), most training manuals in Australia are more than 150 pages long, yet an average of five lines is devoted to applying open-ended questions. This implies that many activities are being prioritised at the expense of foundational skills.

Our conclusion about the need for an overhaul in the design of generalist police training is strengthened by the fact that two of the police cohorts in the current study – Frontline and Investigator police who produced 11% and 18% open-ended questions at baseline, respectively – had already received PEACE training on narrative-based interview protocols (Fisher and Geiselman, 2010; Shepherd, 2007), but without the core training elements. Irrespective of whether these previous courses promoted open-ended questions immediately post-training, it is clear from our results that open-ended question usage was not sustained over time. Although we have no measure of how police were actually interviewing in the field, Benson and Powell (2015) and Powell et al. (2010b) have shown that the results of the mock interviews are generalisable to field interviews.

The second implication from these results is that generalist police (as a group) see the value of open-ended questions in their work. Our measure of performance invited police to elicit detailed and accurate accounts and, in doing so, they chose to use a majority of open-ended questions at the post-training assessments. Think-aloud studies have shown that police who do not see the value in open-ended questions tend to discard these in simulated interviews (Wright and Powell, 2006). The mere fact that the Frontline and Investigator officers who had more experience in the field than Recruits used a similar proportion of open-ended questions post-training is a testament to the importance of narrative-based protocols.

Nonetheless, we acknowledge that the need for narrative detail may not be equal across all contexts. Anecdotally, we did find some fluctuation in the use of open-ended questions across scenarios (e.g. trainees seemed to struggle more with traffic accident scenarios than with domestic violence scenarios, which is understandable given that, in a high-traffic area, safety takes priority over obtaining a narrative). Scenarios have been shown to affect open-ended question usage: Zekiroski et al. (2023) found that although open-ended question usage increased across training timepoints, it varied depending on the scenario. This may explain why performance in the refresher mock interviews dropped more than in other evaluation studies, in which the scenarios are usually homogeneous (e.g. in Benson and Powell, 2015, all scenarios involved child abuse and were more conducive to narrative-style questioning than a traffic incident). Although open-ended questions are needed in all police officers’ ‘toolkit of questions’ (Bull, 2018), further work is needed to generate instructions about when open-ended questions should be used, and which types of scenario provide the best gauge of how well officers can generate open-ended questions (Launay et al., 2022). Further research on police officers’ perceptions of the utility of open-ended questions across various types of police work would also be beneficial.

The third and final implication of the current results relates to the debate about the need for ongoing refresher training for interviewers. Notwithstanding the fact that any ongoing practice and feedback could benefit skill development, this study, along with previous evaluations (Benson and Powell, 2015; Brubacher et al., 2022; Powell et al., 2016a), challenges suggestions that ongoing refresher training is a necessary component for sustaining open-ended question usage. Although the need for refresher training appears to be a logical conclusion from studies that did not include the sorts of intensive training opportunities that the current trainees received (see Akca et al., 2021 for a review), our data suggest that providing intensive, multicomponent training initially is a good investment because it promotes deep learning of the skill, which is sustained over time.

Conclusion

The current research adds to growing evidence that quality training in the use of open-ended questions can improve interviewing skill at an immediate follow-up assessment, and in a manner that is sustained over time. Although open-ended questions may not need to be the focus of every witness interview conducted by generalist police, a clear consensus is emerging about how these questions are best learned. That consensus is illustrated by the consistency of results across studies that include a similar type and range of incremental learning activities to those used in the current study.

Acknowledgements

The authors are grateful to the police organisation that participated in the research.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Appendices

Interviewer behaviour coding overview.

| Interviewer behaviour | Brief description | Definition, rationale and coding rule |
|---|---|---|
| Ground rules | Did the interviewer reiterate ground rules during the substantive phase? | Ground rules are conversational expectations used early in an interview to clarify the roles of the interviewer and interviewee. They include instructions not to guess and to alert the interviewer if a question is confusing or contains incorrect details. They help the interviewee feel more comfortable in the interview and place them in the role of expert informant. Ground rules should be provided at the beginning of the interview and reiterated during the substantive phase. Code ‘Yes’ if ground rules were reiterated during the substantive phase. |
| Key information | Did the interviewer obtain all of the key information in response to open-ended questions? | When open-ended questions are used effectively, it is possible to obtain all of the key information in a full narrative. This includes the beginning (the lead-up to the offence), the offence itself (including a description), and the ending (bringing the person to a point in the narrative where it is clear that the abuse is no longer occurring or the incident is complete). Interviewers receive a ‘Yes’ only if they elicit all three parts without using specific questions. Code only direct responses to open-ended questions. |
| Variety of open questions | Did the interviewer use a variety of open-ended questions? | Open-ended questions are much more effective when a variety of question stems is used rather than the same stem repeated (e.g. “Tell me more. Tell me more. Tell me more.”). Code ‘No’ if the interviewer uses fewer than three different open-ended question stems, or if they use the same stem more than three times in a row. |
| Minimal encouragers | Did the interviewer use minimal encouragers? | Minimal encouragers do not interrupt the flow of conversation but indicate that the interviewee has been heard and should keep narrating. They include “Hmmm”, “Mm-hmm”, “Okay” and similar utterances. Interviewers must use at least three minimal encouragers appropriately anywhere in the interview to receive a ‘Yes’. |
| Simple questions | Did the interviewer ask simple questions? | Children and adults tend to give more accurate and detailed answers when asked simple questions. Interviewers should therefore ask one question at a time and avoid multi-part, ‘how’ and ‘why’ questions. (Although adults are better than children at explaining reasoning, it is not ideal to ask ‘how’ or ‘why’ questions before the narrative has been exhausted.) Code ‘No’ if the interviewer asks multi-part or complex questions on three or more occasions. Interviewers who ask more than three ‘how’ or ‘why’ questions also score zero on this item. This may also include asking multiple questions in a row (e.g. yes/no + yes/no + CR). |