Abstract

An adaptive sampling approach for parametric model order reduction by matrix interpolation is developed. This approach is based on an efficient exploration of the candidate parameter sets and identification of the points with maximum errors. An error indicator is defined and used for fast evaluation of the parameter points in the configuration space. Furthermore, the exact error of the model with maximum error indicator is calculated to determine whether the adaptive sampling procedure reaches a desired error tolerance. To improve the accuracy, the orthogonal eigenvectors are utilized as the reduced-order basis. The proposed adaptive sampling procedure is then illustrated by application in the moving coil of electrical-dynamic shaker. It is shown that the new method can sample the parameter space adaptively and efficiently with the assurance of the resulting reduced-order models’ accuracy.

Keywords

Introduction

Finite element method (FEM) has become a common approach to simulate the behavior of complex physical systems. However, large-scale complex systems usually result in high-dimensional computational models, leading to prohibitively intensive computations. To overcome this problem, model order reduction (MOR) methods have been employed to obtain a low-dimensional but accurate approximation of the large-scale dynamic system by projecting the high-dimensional model (HDM) onto a low-dimensional subspace described by an ROB. The commonly used MOR methods include proper orthogonal decomposition, Krylov-subspace based methods, and truncated balanced realization. 1 And these techniques have already applied in some areas like optimization and control,2,3 uncertainty quantification, 4 and parameter identification. 5

However, structural models are usually parameter-dependent. It is inefficient and impractical to reduce the model order repeatedly at different parameter values. This has promoted the study of parametric model order reduction (PMOR) technique, which aims to generate a parametric ROM approximating the original full-order dynamical system with high fidelity over the range of parameters. As for the PMOR strategies, Benner et al. 6 conducted an overall survey on the existing PMOR methods, including the global and local approaches. Geuss et al. 7 researched various matrix interpolatory methods and proposed a general method to unite them.

It is the fundamental issue how the parameter dependence is imported. For the systems explicitly dependent on parameters, 8 the standard MOR strategies have been extended and applied, like multi-dimensional moment matching algorithms. 9 While for the systems with affine parametric dependency, the global basis and interpolation among local bases approaches 10 are both effective in reducing the system order.

As for the implicit and non-affine parametric dependency, the matrix interpolatory PMOR framework is the most appropriate and general approach. Panzer et al. 11 interpolated the low-dimensional local system matrices via weighting functions to achieve the parametric ROMs. Amsallem et al. 12 interpolated the order-reduced matrices on a tangent space to eliminate the nonlinearity of precomputed ROMs.

The most fundamental and critical step in the existing PMOR methods is the sampling strategy of parameter space. The distribution of the parameter sample points should enable the surrogate model to capture the underlying parametric dependence to a maximum extent. There have been two major approaches aimed at finding the optimal sampling points with largest error indicator in the parameter space. The first one simply calculates the posteriori error indicator of a large discrete sets of candidate parameter values and finds the optimal point with max errors.13–15 It is very simple to compute but may suffer from the curse of dimensionality for multi-dimensional parameter space. The other one solves the model-constrained optimization problem using gradient-based approaches and selects the solution as the sampling point. 16 This kind of approach may not find the global optimal solution, but only the local ones. Besides, this approach can only be exploited in models with affine parametric dependency because of the need to express full-order system matrices explicitly.

Most of the existing adaptive sampling approaches are based on reduced basis PMOR methods. Few literatures applied the adaptive sampling strategy to matrix interpolatory PMOR framework. Varona and Lohmann 17 utilized the distance between subspaces as a measure and proposed an adaptive sampling procedure combined with PMOR by matrix interpolation. This approach can sample the parameter space adaptively and automatically, but it cannot evaluate the precision of the algorithm due to the absence of error estimation.

This article proposes an automatic adaptive parameter space sampling procedure for matrix interpolatory PMOR framework. It is applicable to the general case, in which the arbitrary parameter dependence is considered. To sample the parameter space adaptively, an error indicator for rapid error evaluation is introduced. The location of an optimal sample point is thereby identified by a direct exhaustive exploration of a large discrete candidate parameter sets. The maximum of the residual between the HDM and the ROM at this parameter value is subsequently calculated to monitor the convergence rate and used as the termination criteria. In addition, the orthogonal eigenvectors are applied as the ROB to improve the accuracy. This method combines the benefits of matrix interpolatory PMOR and those of adaptive sampling procedure. And it can serve as a substitute for the classical offline ROMs generation algorithm in PMOR.

It is organized as follows: The next section describes the classical matrix interpolatory PMOR method in detail. “The proposed adaptive sampling procedure” section outlines the automatic adaptive PMOR framework and summarizes the algorithm of generating the offline ROMs database. In “Numerical example” section, the proposed strategy is exploited to adaptively generate the parametric ROM for the moving coil of electrical-dynamic shaker. Results of the ROM and HDM are compared to illustrate the advantage and validity of the proposed approach.

The classical matrix interpolatory PMOR framework

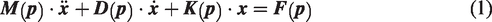

Based on the classical MOR method, the matrix interpolatory PMOR approach has become an essential technique for reducing the order of large FE models with varying parameter configurations. For parameter-dependent linear system, the structural–dynamical equation can be described as

The uniform or random sampling method can be applied to sample the continuous parameter field

Assume now that the uniform sampling method is utilized to obtain a discrete set of parameter samples

Then, each local large-scale model is reduced individually by the use of MOR methods. The general practice of this approach is to construct a low-dimensional subspace

Substituting equation (3) into equation (2) and multiplying the equation from the left by the transpose of the matrix

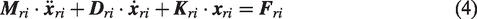

According to Antoulas, 1 Krylov-subspace reduction methods extract low-dimensional projection basis and project the HDM onto the subspace. The resulting ROM maintains the dynamic characteristics of original systems and has many advantages like high calculating stability, precision, and efficiency. Hence this kind of method is widely utilized in the classical PMOR framework.

With respect to the second-order dynamic systems, Bai 18 proposed a modified version of the Arnoldi process to construct internally orthogonalized basis vectors spanning the Krylov subspace. The modified Arnoldi process is utilized in this paper. One can refer to Bai18 for the detailed algorithm.

The subspace induced by matrices

According to equation (4), we can obtain m ROMs. However, since these reduced-order system matrices are defined in different coordinate systems, the direct interpolation of these matrices may be meaningless. Therefore, congruence transformations should be performed to adjust the local system matrices and basis vectors. As mentioned above, Panzer et al. 11 built a total matrix of all the basis vectors and conducted singular value decomposition (SVD) of this matrix to achieve the rth-order most important directions as the reference basis vector. All the precomputed local ROMs are then transformed into a consistent set of generalized coordinates.

Although the results are relatively accurate, the SVD procedure has to be repeated when a new parameter sample added, which could be time-consuming for multi-dimensional parametric large models. Therefore, an improved method presented by Amsallem and Farhat [19] is exploited in this research to adjust the local reduced models.

First of all, the following minimization problems is considered

From equation (6), the following maximization problem can be derived as

In equation (7), multiplication of the basis vectors is defined as follows

Then, we can simplify equation (7) as

According to Amsallem and Farhat,

19

the analytical solution to equation (9) is

The relation between different coordinate systems can thereby be expressed as

Inserting the coordinate transformation relation equation (12) in equation. (2), multiplying from the left by

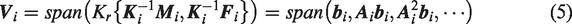

After the compatible reduced-order matrices obtained, the new matrices at the given parameter value can be constructed by direct interpolation or interpolation on the tangent matrix manifold. In comparison, interpolation on tangent manifold can weaken the influence of nonlinearity, and the result is more accurate. Thus, it is applied in this paper to construct the parametric model.

First of all, a reference matrix is selected, and the interpolation will be performed in the space tangent to the manifold at that reference. For example, we set

After all the reduced-order matrices projected, we can obtain the reduced-order model matrices at a new parameter point by matrix interpolation. As for the interpolation method, Lagrange interpolation could provide both enough accuracy and efficiency. Therefore, it is used in the online stage

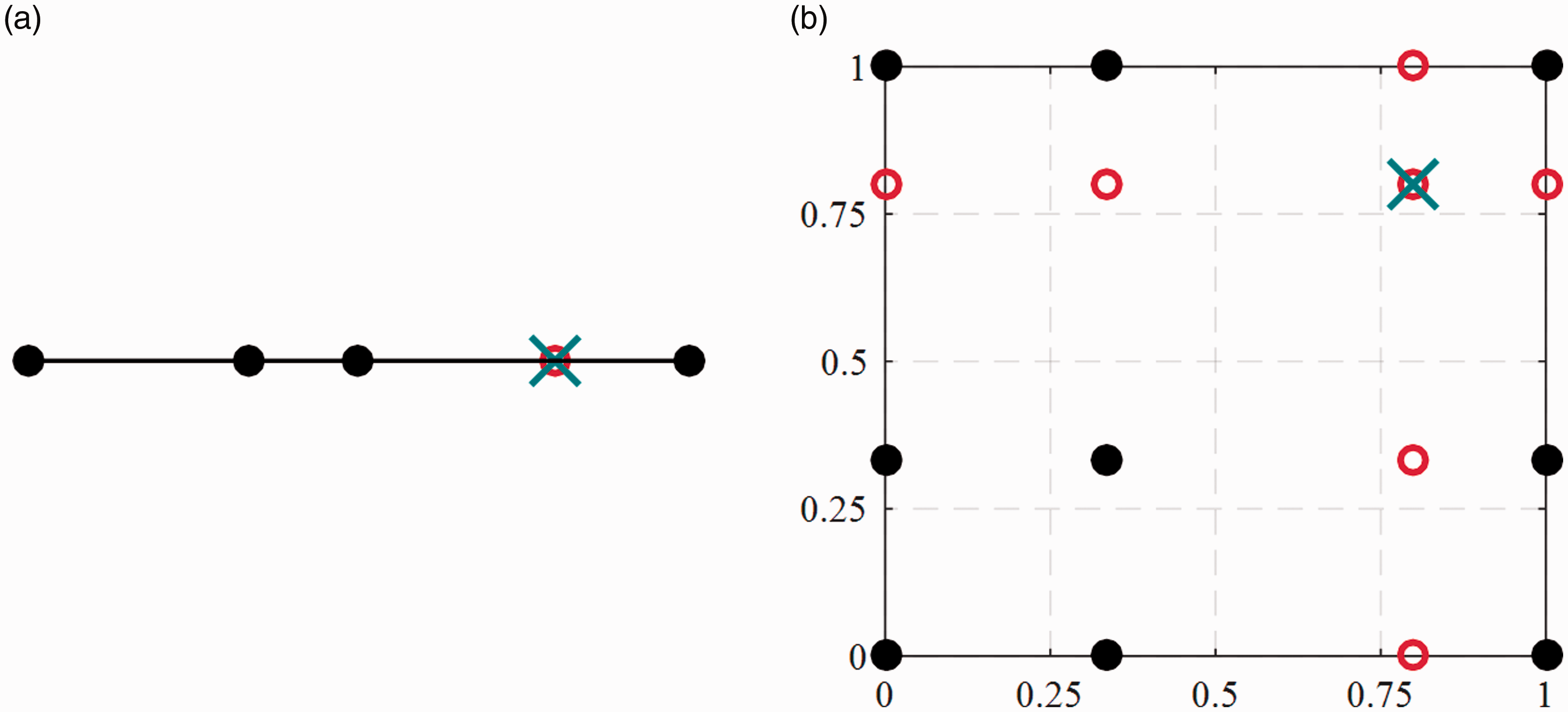

Then,

In this way, we can obtain the reduced matrices and basis vectors and then calculate the order-reduced response

The complete calculation procedure of PMOR method can be decomposed into offline and online steps. The calculation in the online stage includes only equations (14) to (16). But the focus of PMOR method is on the offline ROMs building steps. Thus, we summarize the offline ROMs generation algorithm of classical PMOR as follows: Uniformly select a set of Generate the corresponding matrices Calculate ROBs Construct the ROMs (

Choose a ROB Calculate the transformation matrix Adjust the local ROMs and obtain

Choose matrices Project the matrices onto the tangent space and obtain

Conclusively, the entire calculation flow of classical PMOR method can be summarized as: generate the uniformly distributed compatible ROMs in the offline stage, calculate the new ROM and the reduced-order results for the given parameter value in the online stage, and project them back to the original physical coordinate.

The proposed adaptive sampling procedure

Although the classical PMOR method can achieve satisfactory accuracy, it cannot provide the error estimation, convergence rate, and termination criteria. Thus, the minimum number of sample points to satisfy the predefined error cannot be determined. Besides, with respect to the uniform sampling approach, one has to regenerate all the precomputed ROMs when adding a new sample point. Another drawback is that the orthonormal basis of the Krylov subspace achieved by Arnoldi process can match only limited moments. To guarantee the accuracy, the order of the ROB has to be high enough, which is relatively time-consuming. Therefore, we propose an adaptive PMOR procedure to sample the parameter space and generate the ROMs efficiently and accurately.

First, the ROB

By selecting r modes vectors as the ascending order of the magnitude of the eigenvalues, we can obtain the first r columns eigenvectors

Assume the orthogonal eigenvectors after a Gram–Schmidt procedure as

Then, the order-reduced response and eigenvector defined in equations (17) and (18) can be replaced by the formula

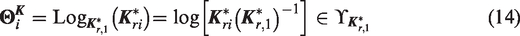

The goal of the adaptive parameter sampling approach is to estimate, at each iteration Niter, the parameter

This approach proceeds in two phases. In the first phase, a candidate parameter set is chosen as

Then, we compute the error estimator over the set ℂ. To define the error indicator for fast estimation, we substitute the eigenvector

To eliminate the accumulated error, we multiply the residual vector from the left by

After this error indicator calculated for the parameter values

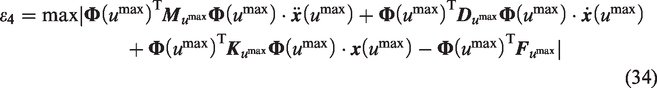

At each iteration Niter of the adaptive sampling process, the parameter value

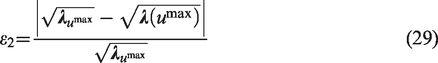

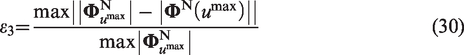

The parameter values are then used in the next phase to generate corresponding full-order matrices. After these matrices are reduced, the overall exact errors between the HDM and the ROM are evaluated and applied to monitor the convergence of the algorithm and terminate the procedure automatically. The exact errors of frequencies and modal shapes are defined as

It should be noted that the subscripts

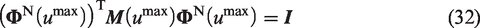

In order to obtain the regularization form of the modal shape vectors, we should regularize them columnwise. For the kth column of

And

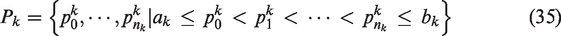

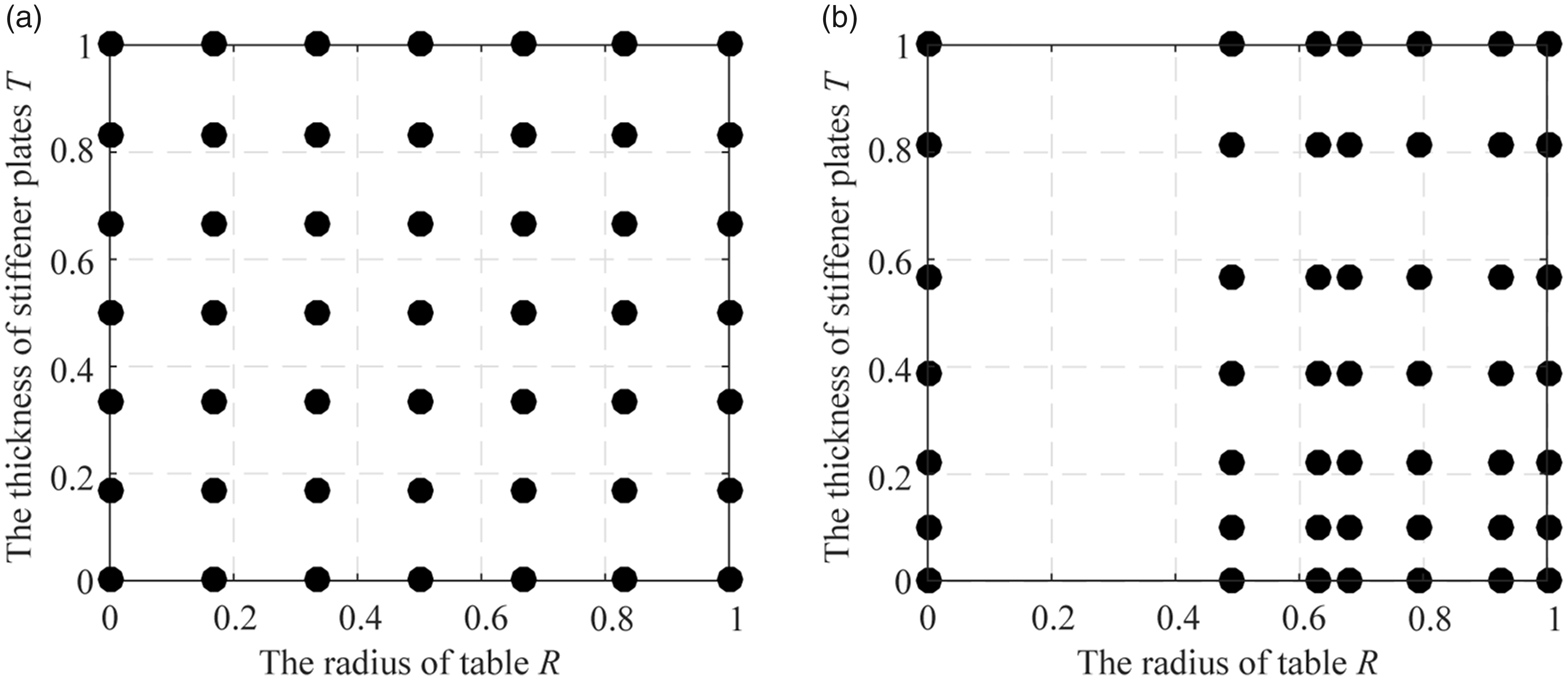

After exploring the parameter space and identifying the sampling points with maximum error indicators, we subsequently insert new parameter samples at these locations. In this paper, the regular parameter grid is used. The corresponding parameter points of a regular grid with dimension d have a certain structure which must be preserved during the inserting procedure. For one-dimensional parameter space as shown in Figure 1(a), we directly place a new sample in the space and utilize the interpolation method as equation (15). While for multi-dimensional case, it is a little more complicated. In addition to the point with maximum errors, we have to insert more points to preserve the structure of regular grids. Consider a two-dimensional grid as shown in Figure 1(b), the new inserted points are denoted by red circles, and the point with the maximum error indicator is indicated with a blue cross.

Points insertion for regular grids: (a) one-dimensional grid and (b) two-dimensional grid.

To construct the interpolants for the multi-dimensional parameter grid, we have to extend the one-dimensional interpolation formula. Assume that the nodes are given by the regular grid

By introducing the regular parameter grid and the d-variate lagrange interpolation formula, we can apply the proposed adaptive sampling procedure to the multi-dimensional case. The adaptive offline ROMs generation approach algorithm is summarized as follows: Choose the start and end points of the parameter domain Generate the full-order matrices Calculate the corresponding eigenvectors and obtain the orthogonal basis vectors through the Gram–Schmidt procedure. Build the offline ROMs database. Choose the ROB Calculate the transformation matrix Choose matrices Randomly select a set of Compute Compute the reduced-order eigenvector Compute the associated posteriori error indicator Construct a surrogate model Compute Generate the full-order matrices at Compute the exact eigenvector Compute the exact error of modal shape vectors Orthogonalize the modal shape vector Compute the corresponding ROM and transformation matrix. Adjust the local ROM, project the matrices onto the tangent space and update the offline ROMs database.

To sum up, the proposed adaptive PMOR method can sample the parameter space according to the error indicator and terminate the procedure automatically when the maximum actual error becomes smaller than a specified tolerance. This approach can provide a better performance with smaller relative errors and cheaper offline computational cost. However, as the dimension increases, the number of needed parameter samples quickly grows. Therefore, the method in this research is confined to cases with relatively low parameter dimensions.

Numerical example

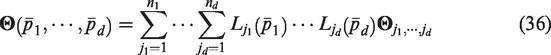

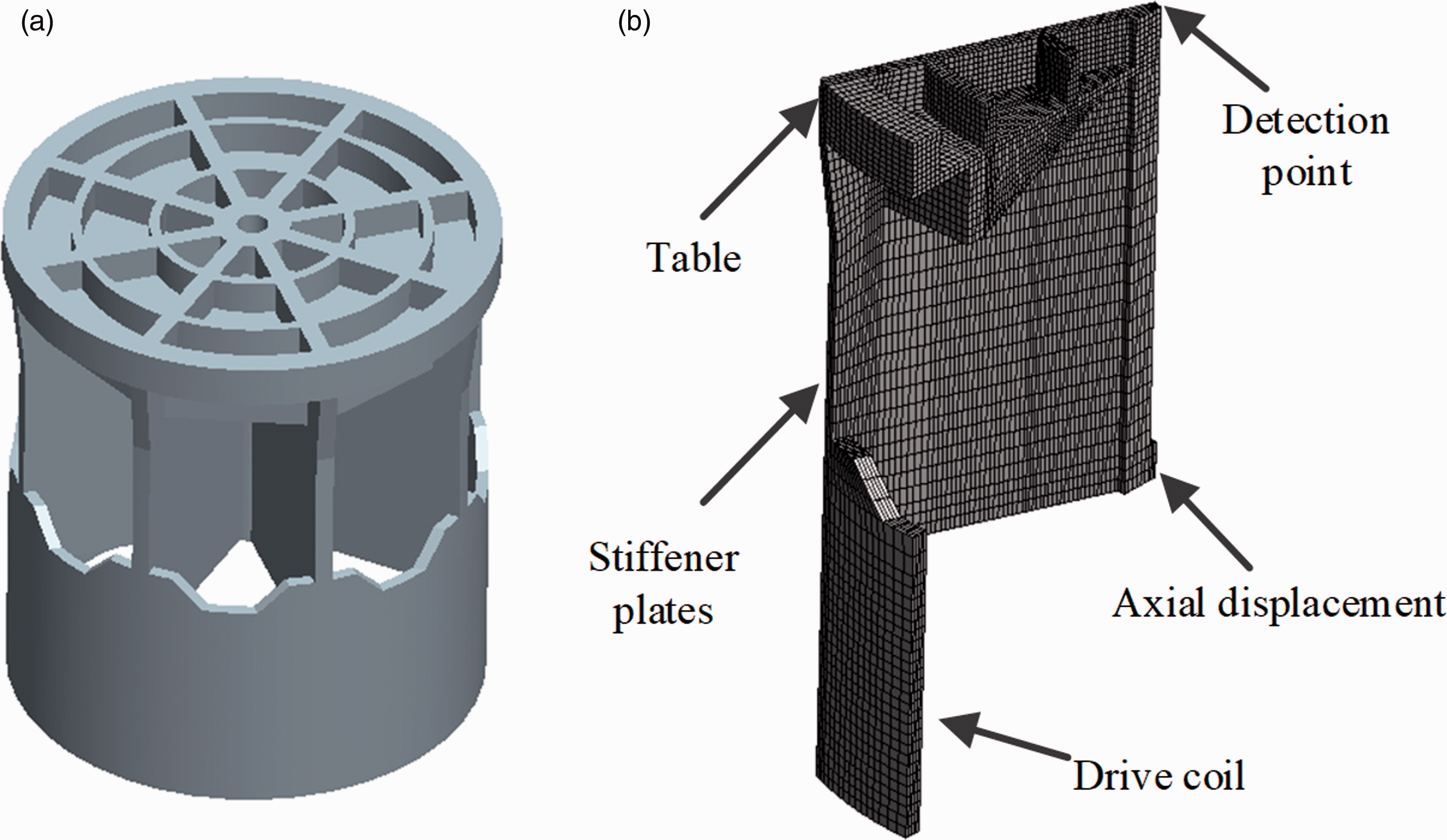

The adaptive PMOR approach proposed in previous sections is subsequently illustrated with its application to the moving coil of electrical-dynamic shakers. Because the geometric model has perfect cyclic symmetry property, it can be simplified to one over 16 of the entire model as shown in Figure 2. The finite element model consists of 15,608 SOLID elements and 21,246 nodes. The number of the full DOFs is 59,487.

The moving coil of electrical-dynamic shakers: (a) the entire geometric model; (b) the simplified FE model.

First, we import the simplified geometry model into HYPERMESH and generate the original regular mesh. Then we utilize the HYPERMORPH module to parameterize the mesh without changing the underlying topology. Several FE models corresponding to the initial parameter sets are generated, respectively. Their nodes coordinates are exported for the use of generation of new FE model in ANSYS. The boundary condition and excitation condition are also initialized in HYPERMESH. At the position shown in Figure 2(b), only axial displacement is permitted, and the normal displacement at the symmetric boundary is zero. The model is excited by 1 g base acceleration imposed on the drive coil.

The sampling scheme is automatically implemented in MATLAB including calling ANSYS to generate FE model and corresponding matrices, reading the matrices files, and conducting the classical and proposed adaptive PMOR methods.

This research is applicable to multi-dimensional cases. In this application, we consider a two-dimensional parameter space, which is spanned by the thickness of stiffener plates T, changing from 10 to 32 mm, and the radius of the table R, changing from 460 to 510 mm. The original parameter configuration is T = 18 mm and R = 485 mm.

Three different offline sampling procedures and ROMs generation methods are compared. The first one is the classical procedure outlined in Algorithm 1 in which the candidate set is uniformly selected (referred to as “Uniform + Krylov”). A modified Arnoldi algorithm 18 is applied to build the 30-order basis spanning the Krylov subspace. The second one is a variation of the classical algorithm in which the ROBs are built by 20-order orthogonal eigenvectors after a Gram–Schmidt process (referred to as “Uniform + Modal”). The third approach is the proposed adaptive procedure summarized in Algorithm 2 (referred to as “Adaptive + Modal”). The random candidates are selected by Latin hypercube sampling. The maximum number of random candidates is set to Nc = 30 for the adaptive procedure.

The performance of each approach is assessed by monitoring the maximum errors of frequency, modal shape, and response between the HDM and interpolated ROM. As the iteration increases, the number of corresponding parameter sampling points grows exponentially. With four initial points, a total of 49 parameter samples are added after six iterations.

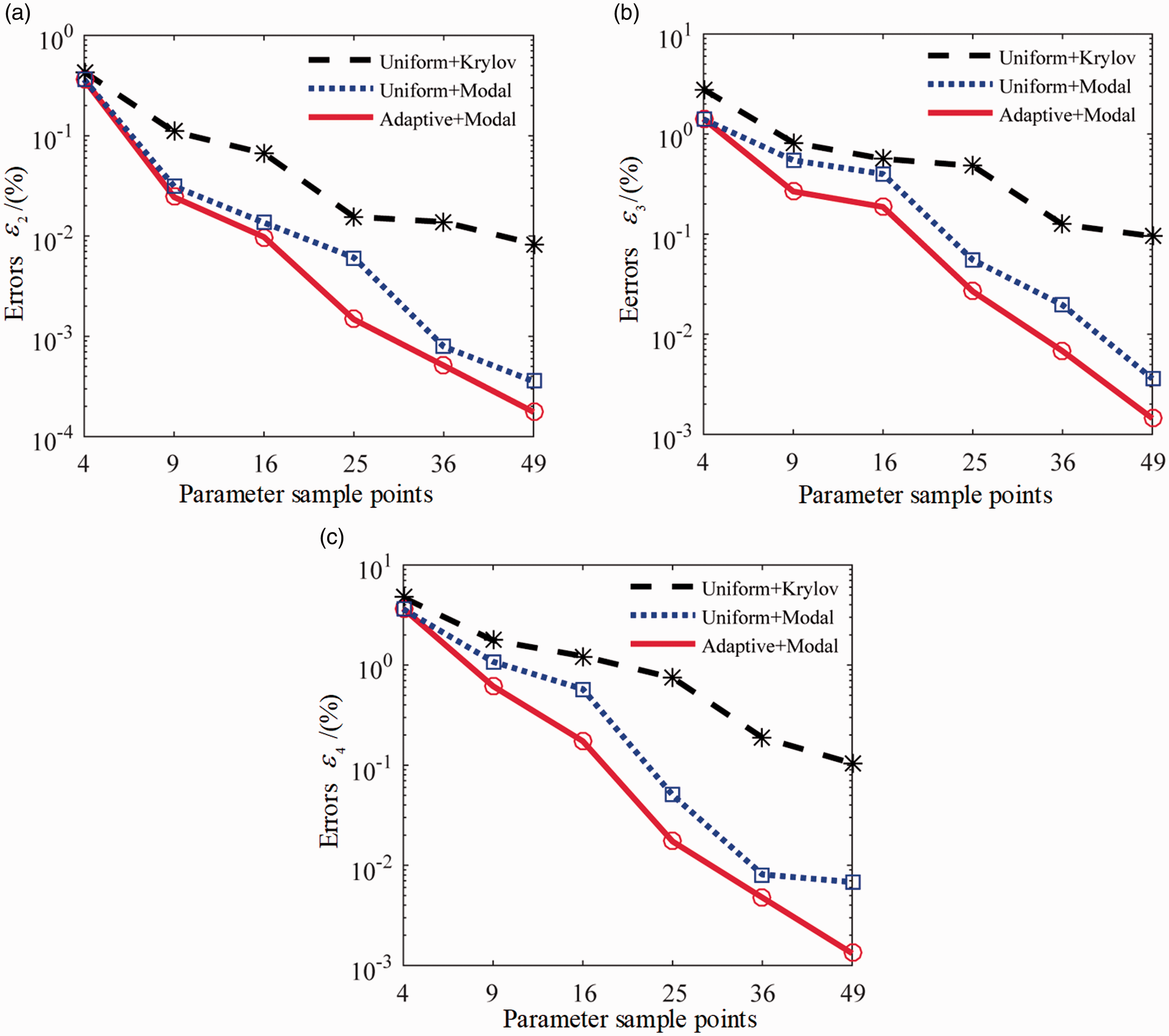

Figure 3 reports the evolution of the maximum errors. It can be observed that the maximum errors decrease a lot as the parameter sample points increase. Compared with frequency, the modal shape and response have larger errors and consequently can be used as the most rigorous criteria. In other words, once the error of modal shape or response match the specified tolerance, the underlying model should be accurate enough.

Error evolutions for different PMOR methods. (a) Frequency, (b) Modal shape and (c) Response.

One can also observe the superiority of the modified ROB over the regular Krylov-subspace based ROB. Take the errors of response for example, with the same 49 evenly distributed sample parameters, the PMOR approach with classical Krylov ROBs can only decrease the maximum relative error from 4.72 to 0.11%. While the one with modified modal ROBs achieves an asymptotic error of 0.007%. It means that the sampling approach can reach the same level of maximum error with fewer sample points by use of modified ROBs.

In contrast, the adaptive approach achieves a smaller error of 0.0013%. As a matter of fact, the adaptive approach outperforms the modified classical method in all the three errors, with the frequency error decreasing from 3.56 × 10−4 to 1.76 × 10−4%, the modal shape error from 0.0036 to 0.00144%, and the response error from 0.0068 to 0.0013%, after 49 points sampled. Compared with the uniform sampling procedure, the adaptive approach is more suitable for the models with relatively strong parameter dependency at a certain area of the parameter space. In this application, the proposed sampling procedure captures the nonlinear parameter dependence in the dimension of table radius R and refines the corresponding area adaptively, thus leading to more accurate PROMs. The final uniform and adaptive sample grids are shown in Figure 4. The parameter domain is normalized to [0, 1] × [0, 1] for the sake of an easier presentation.

The final parameter samples of uniform and adaptive methods: (a) uniform method; (b) adaptive method.

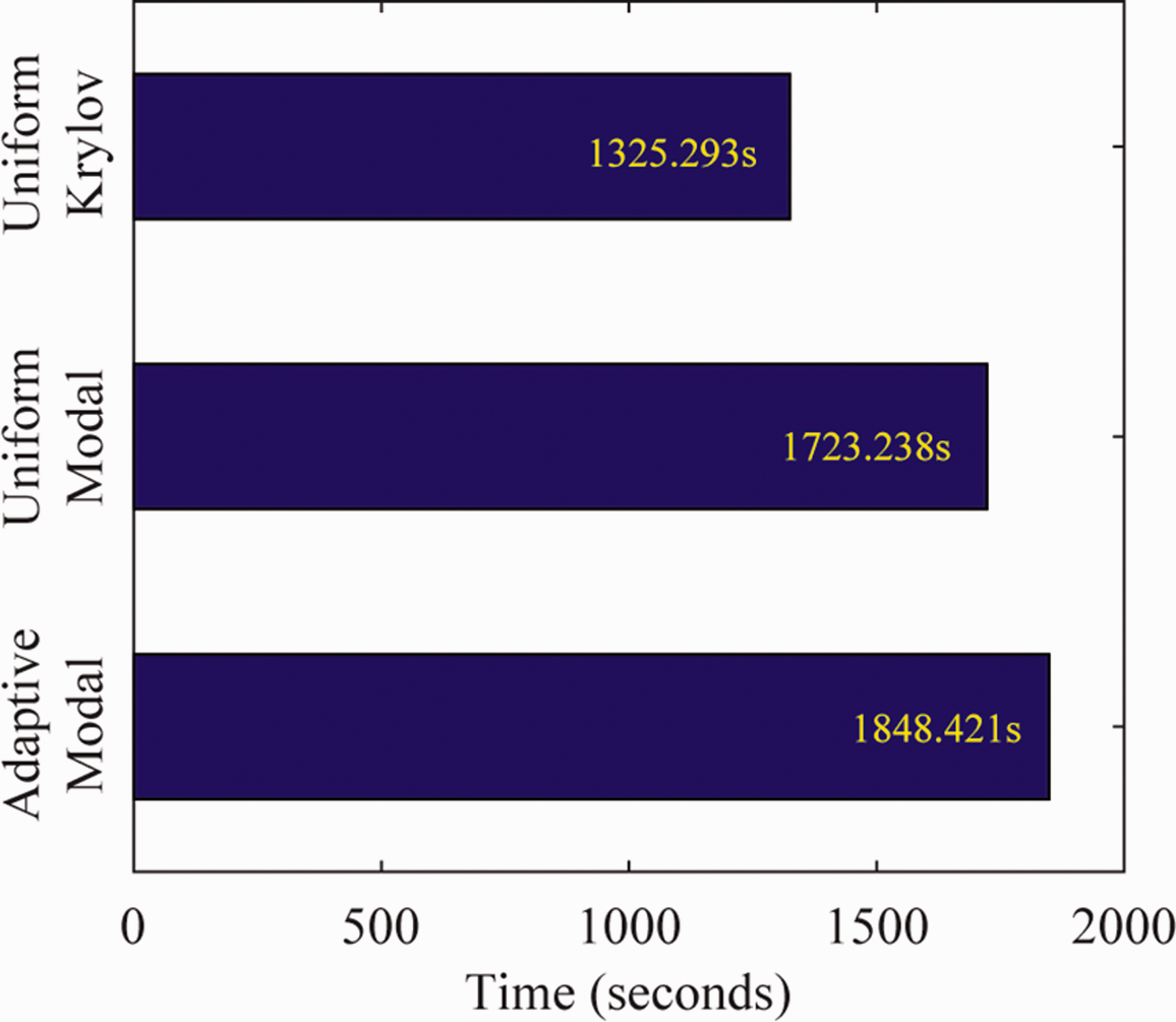

In order to comprehensively evaluate the performance of the proposed methods, we compare the calculation time of the three algorithms and present the results in Figure 5. As for the uniform sampling methods, the distribution of the parameter samples changes as the iteration increases. Therefore, the calculation time of the final iteration with 49 samples is considered. The total time includes FE model generation and reduction time, together with the extra exploration time for the adaptive procedure.

The calculation time of the three algorithms.

As shown in Figure 5, costs for the two modal basis-based approaches are comparable, which implies that the adaptive procedure carries no much extra computational cost compared with the uniform sampling method. While the calculation time of Krylov subspace-based method is nearly one-quarter smaller than its counterpart computed with modal basis. This is due to the fact that computing the eigenvectors requires more computational resources than calculating the Krylov basis. However, the modal basis could provide more accurate ROMs compared to the latter.

The convergence performance of the proposed approach is also considered. There is no guaranty that adding a parameter sample point will necessarily lead to a monotonic decrease of maximum errors. But in practice, the maximum error will decrease for most additional sample points due to the convergence property of Lagrange interpolation method exploited in our approach.

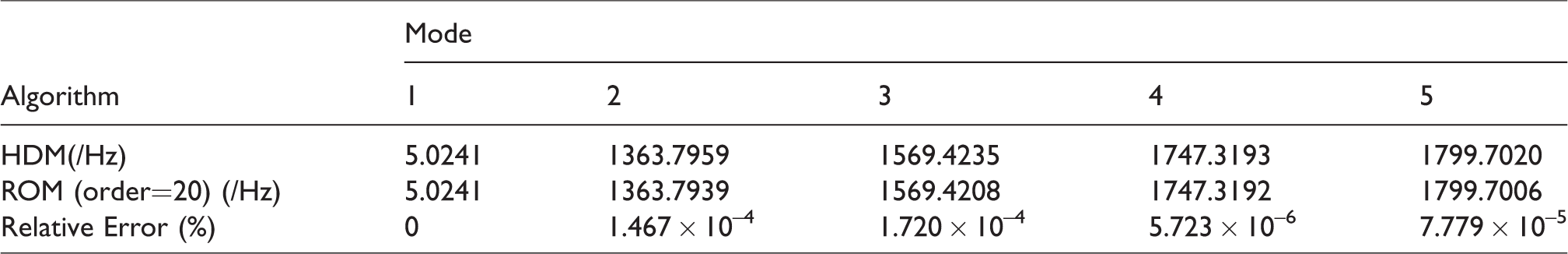

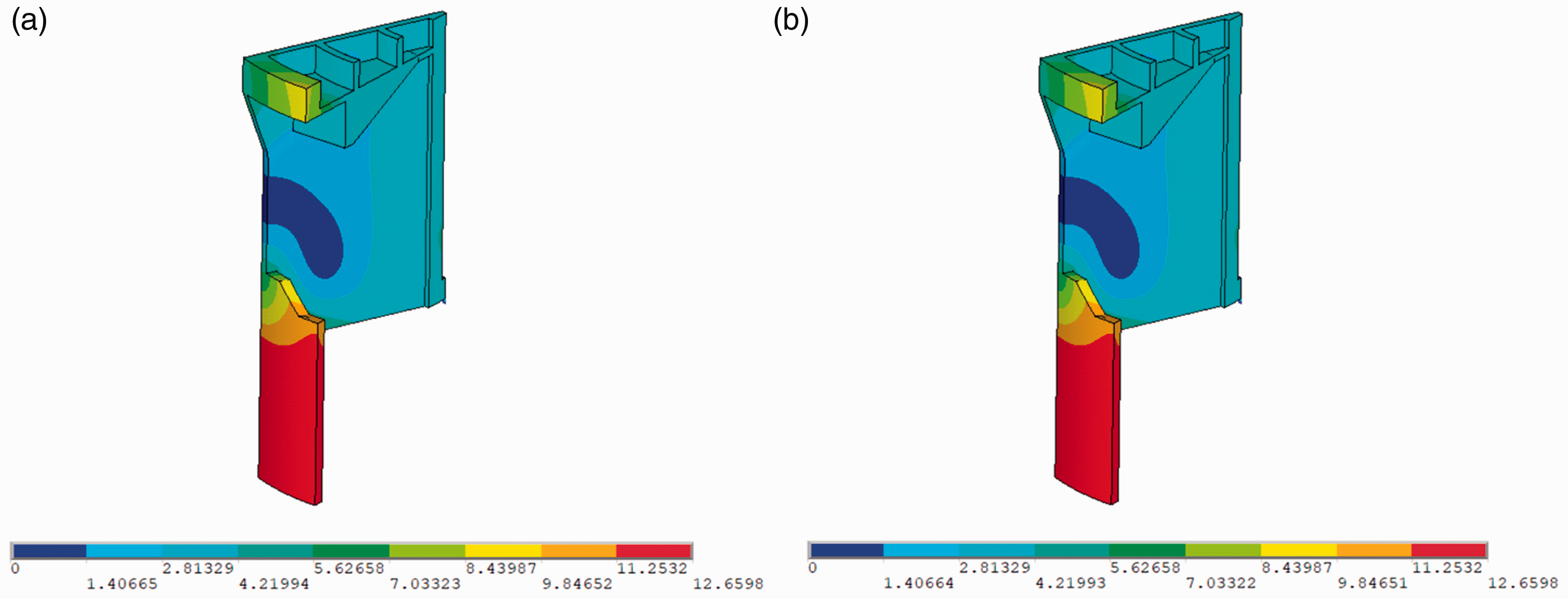

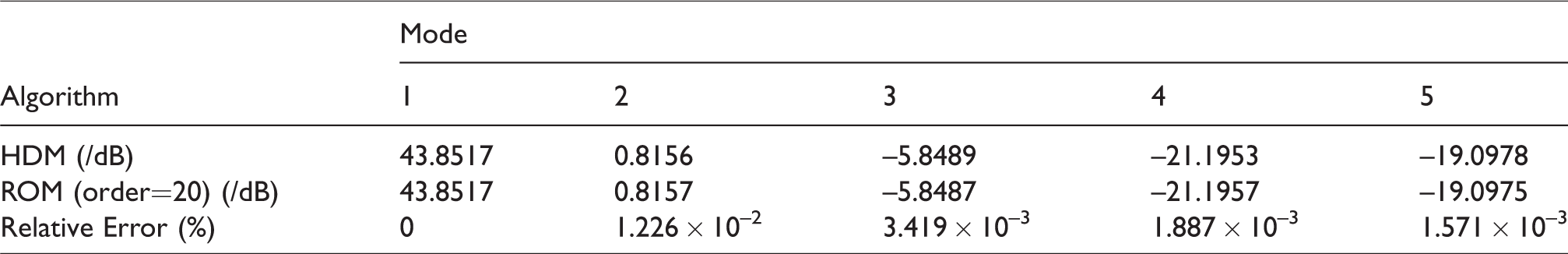

Furthermore, in order to validate the error indicator and display the intuitionistic comparison between the HDM and ROM constructed with adaptive PMOR approach, we compare the first five orders frequencies and the fourth-order modal shape after seven parameter points have been sampled. The query point is T = 24.93 mm and R = 472.44 mm at which the error indicator reaches the maximum.

It should be noted that these results are presented to validate the proposed PMOR method, not to illustrate the superiority. Therefore, the results of classical PMOR method are not included.

The results shown in Table 1 and Figure 6 reveal that the interpolated ROB after six sampling iterations is capable of reproducing very accurately the HDM solution with almost negligible errors.

Comparison of nature frequencies between HDM and ROM.

Comparison of the second order modal shape between HDM and ROM: (a) HDM and (b) ROM.

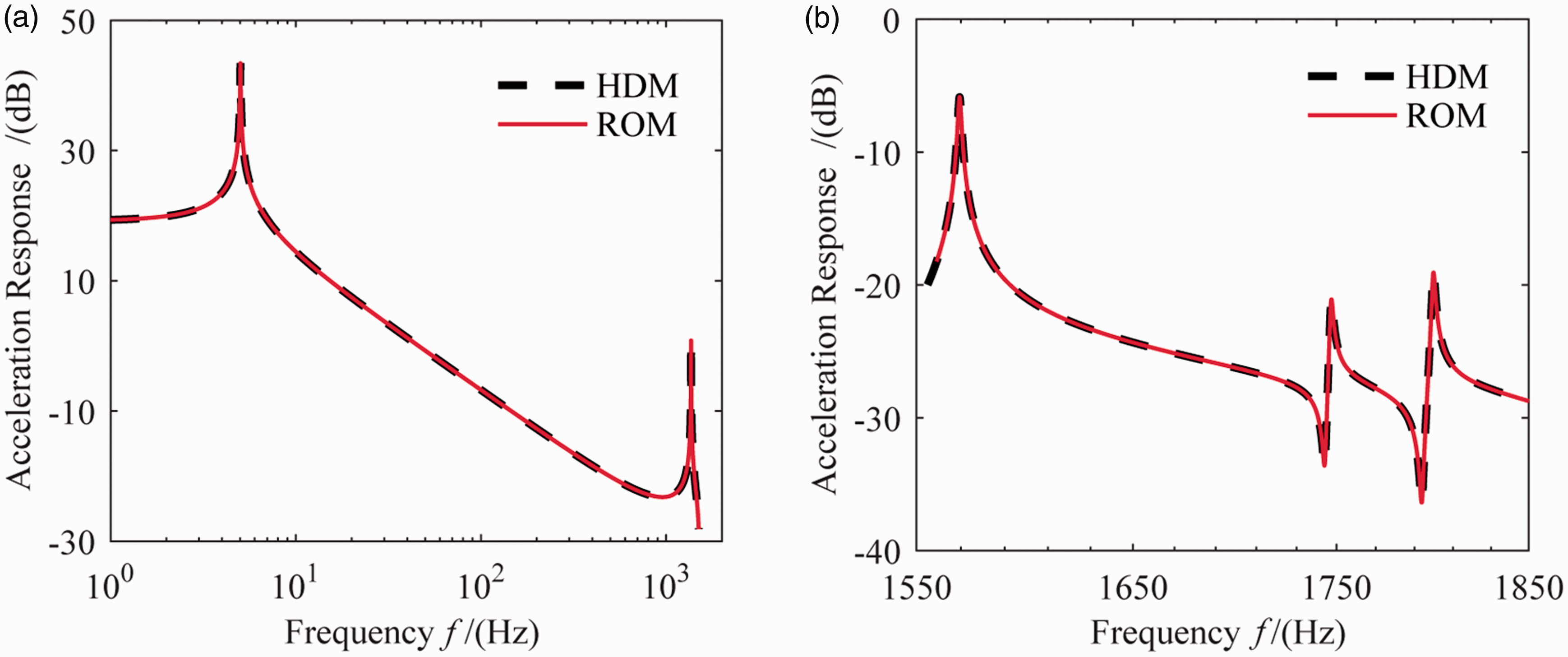

The harmonic response for the HDM and ROM is subsequently compared in the frequency range of 0–1850 Hz with the interval 0.1 Hz. The detecting point locates at the center of top surface as shown in Figure 2(b). Rayleigh damping coefficient is adopted in the harmonic response analysis (α= 2.5, β = 9 × 10−5). For clarity, the results between 0 and 1550 Hz are plotted using a base 10 logarithmic scale for the x-axis and a linear scale for the y-axis as shown in Figure 7(a). While for the range of 1550–1850 Hz, linear scales are used for both the x-axis and y-axis as shown in Figure 7(b).

Comparison of harmonic responses between HDM and ROM: (a) frequency range: 0–1550 Hz; (b) frequency range: 1550–1850 Hz.

As illustrated in Figure 7, the harmonic response predicted by the ROM is almost indistinguishable from its HDM counterpart. A perfect level of accuracy is observed in the full frequency range, validating the proposed framework.

To investigate exact errors and assess the accuracy of these PROMs, the first five orders resonance responses are compared in Table 2.

Comparison of resonance responses between HDM and ROM.

The results reported in Table 2 reveal that the relative errors are below 0.02%, and very good predictions are obtained with the ROM. It indicates that the proposed adaptive PMOR procedure can be exploited to generate high-precision surrogate ROMs for fast simulation of the complex FE models.

Conclusions

This paper presents an automatic adaptive sampling procedure for PMOR by matrix interpolation. The procedure is based on the definition of error indicator developed in this research as a measure of the need for a new sample point. The proposed approach combines the benefits of matrix interpolatory PMOR and those of adaptive sampling parameters procedure. The outlined scheme can be applied to the models with arbitrary parametric dependency and can adaptively determine the minimum parameter sample points to satisfy the predefined error. In addition, by utilizing the orthogonal eigenvectors as the ROB, this approach shows great superiority over the classical PMOR method. Numerical experiments illustrate that the ROM can provide perfect predictions of frequencies, modal shapes, and responses. This automatic adaptive method can be used for order reduction of realistic complex FE models.

Footnotes

Authors' contribution

All the authors have contributed equally to the conception and idea of the paper, implementing and analyzing the processing methods, evaluating and discussing the experimental results, and writing and revising this manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project is supported by National Natural Science Foundation of China (Grant No. 11427801).