Abstract

Multimodal artificial intelligence (MMAI) integrates and interprets diverse data types, such as images, text, video, and audio, and offers new opportunities for clinical decision support systems (CDSSs). Traditional CDSSs rely on unimodal data, which limits their predictive accuracy and coverage. By synthesizing heterogeneous data sources, MMAI holds promise for more accurate diagnosis, treatment optimization, and personalized patient care. This narrative review explores the growing role of MMAI in improving diagnostic sensitivity, personalizing treatment, and streamlining healthcare delivery. It examines the evolution of MMAI technologies, such as large language models, large vision models, vision-language models, and large multimodal models, and their practical applications in clinical settings. The review also addresses key ethical, technical, and infrastructure challenges, such as data quality, model interpretability, bias, and system interoperability. Finally, it provides strategic recommendations for clinicians, researchers, and policymakers to promote responsible adoption of MMAI in healthcare. While recent developments show significant promise, addressing current limitations is essential to fully realize the transformative potential of MMAI in modern medicine.

Keywords

Introduction

Clinical care, from diagnosis and treatment to follow-up, directly affects patient health. Carrying out this process correctly and effectively requires health professionals to possess a wide range of knowledge and skills.1,2 The healthcare sector also faces enormous financial demands, exceeding $1.7 trillion per year in the US alone.3 Additionally, healthcare systems worldwide face challenges arising from demographic shifts, the rising burden of chronic diseases, and limited resources.4 Together, these factors highlight the need for better clinical decision-making tools that support effective, high-quality patient care.

Clinical decision support systems (CDSSs) are software tools designed to assist healthcare professionals by providing real-time, evidence-based guidance derived from complex medical data. Traditionally, CDSSs have relied on single-modality data, such as images only or text only, with each data type processed in isolation. This limits their scope, because such approaches often fall short in integrating the diverse and complex data types generated in modern clinical environments.5,6

Digital technology, including artificial intelligence (AI), is advancing rapidly, and healthcare is one of its key domains.7 The integration of AI into healthcare has been transformative, shifting from rule-based systems to advanced machine learning models capable of processing large and complex datasets.8,9

Multimodal artificial intelligence (MMAI) refers to AI systems that can simultaneously process and integrate multiple types of data, such as images, text, audio, and video. This ability allows a more holistic approach to patient assessment, supporting deeper analysis of complex clinical scenarios and more comprehensive responses.10 Furthermore, MMAI can bring together different data sources and combine them to improve their quality and usability.11 Studies in many medical fields have shown that multimodal approaches can enhance the performance of AI and machine learning (AI/ML) systems compared with unimodal approaches for the same task.12

The global MMAI market was projected to reach $1.2 billion by 2023 and is expected to grow at a compound annual growth rate of over 30% between 2024 and 2032, reflecting the anticipated popularity of multimodality.13 Beyond market considerations, MMAI can improve early disease detection and support timely, tailored interventions by integrating diverse data such as imaging, clinical notes, and genomic data. This has the potential to significantly enhance diagnostic accuracy, personalize treatment strategies, and streamline healthcare delivery models, with applications extending to public health monitoring, predictive analytics, and patient tracking in remote or underserved areas.11,14

Despite this promise, important gaps in current technology remain. Applications must therefore be considered alongside critical implementation challenges such as interpretability, bias, and data interoperability.14

This narrative review aims to provide a comprehensive roadmap for clinicians, researchers, and policymakers to navigate this rapidly evolving field by synthesizing recent developments in MMAI in healthcare and discussing key challenges and future directions.

Methods

This study is a narrative review that aims to synthesize recent developments, applications, and challenges related to MMAI in CDSSs. A targeted literature search was conducted for articles published between 2015 and 2025 using databases such as PubMed, Scopus, and Google Scholar. Keywords included “multimodal AI,” “clinical decision support,” “healthcare AI,” “large language models,” and related terms.

Peer-reviewed journal articles, reviews, and conference proceedings that addressed technological foundations, practical applications, and emerging trends in MMAI for healthcare were prioritized. Studies relevant to MMAI applications in CDSSs and healthcare contexts were included, whereas non-English articles and articles unrelated to healthcare practice were excluded. The evaluation focused on identifying MMAI applications, benefits, limitations, and implementation challenges in CDSSs.

How multimodal artificial intelligence works

Background

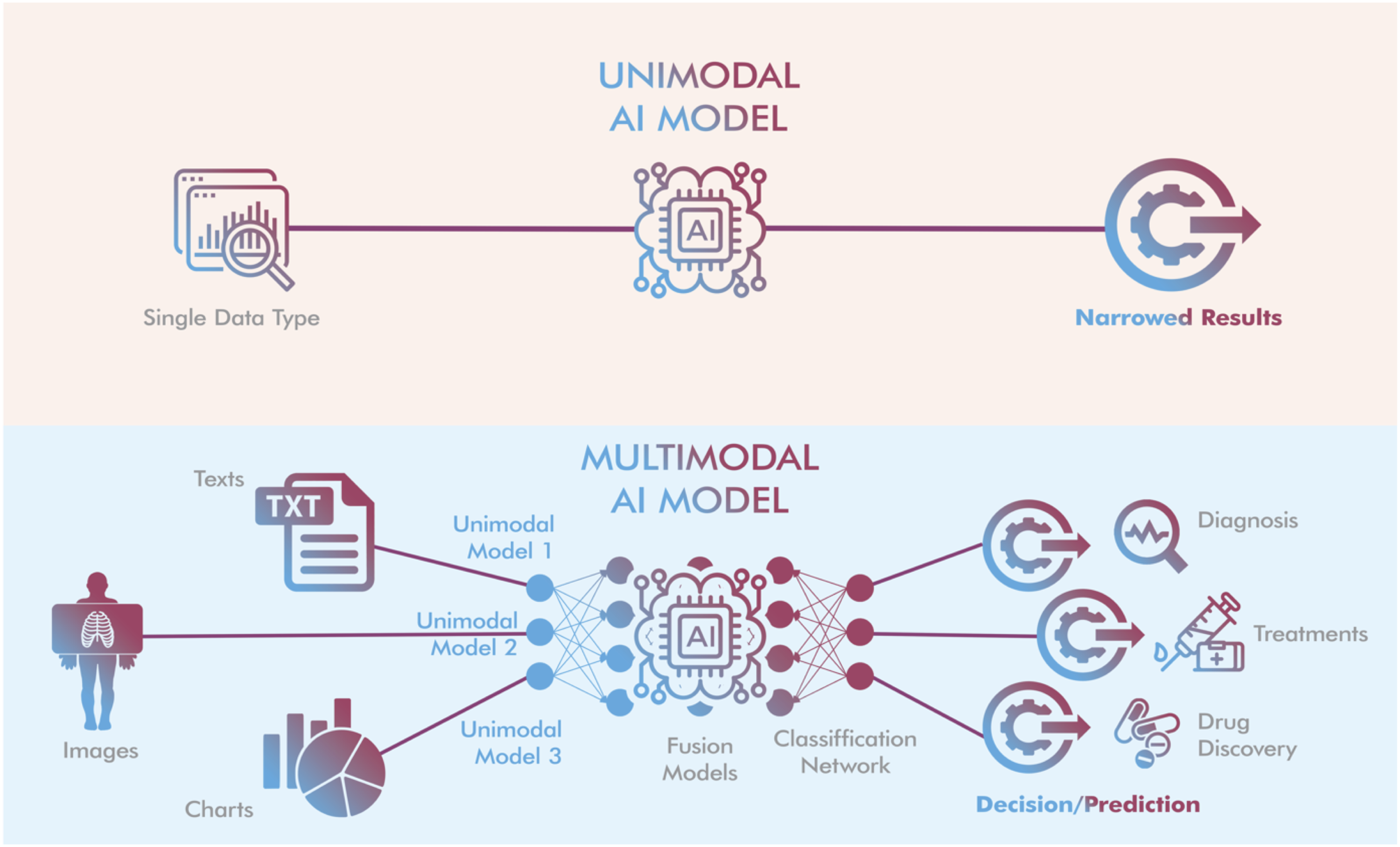

Although they serve similar functions, multimodal and unimodal AI models take different approaches to building AI systems. Unimodal models are trained for a specific task using a single data source, whereas multimodal models combine data from multiple sources (such as medical imaging, electronic health records, genomic data, laboratory results, and wearable sensor data) to analyze a specific problem more effectively.14 This capability enables MMAI to interpret a patient's health data as a whole, ultimately contributing to improved diagnosis, prognosis, and treatment decision-making (Figure 1).10

Figure 1. Schematic illustration of the key differences between unimodal and multimodal AI models. Modified from refs. 15 and 16; drawn with Adobe Creative Suite (Illustrator, version 28.7.1, and Photoshop, version 25.12; Adobe Systems Incorporated, San Jose, CA, USA).

Traditional CDSSs that rely on single-modality data have been successful in certain applications. However, their limited scope means they often fail to consider the full spectrum of information available in clinical settings. For instance, relying solely on laboratory results may overlook essential context from a patient's medical history or imaging, leading to suboptimal decisions. Rapid advances in deep learning, natural language processing, and computer vision have accelerated the application of MMAI in healthcare, opening a new era in which patient data are processed holistically rather than as isolated streams of information.15

MMAI models work by combining data from various modalities, such as text, images, video, and audio. Many can also translate between modalities, for example, generating images from text or transcribing audio into text. To do so, they first need to extract the important features from the input data.11

As shown in Figure 1, an MMAI system typically consists of three components:16 (1) Input module: this module includes several unimodal neural networks (encoders), each processing a different type of data. For example, a text encoder processes text, while an image encoder processes images. (2) Fusion module: once the input module has processed the data, the fusion module combines the features that the unimodal encoders extracted from the various modalities into a joint representation. (3) Output module: this final component, trained together with the other modules on the integrated data, maps the fused representation to a specific output category and provides the final output, along with predictions or recommendations, to help the user or a downstream system decide on the next step.
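The three modules can be illustrated with a minimal, dependency-free Python sketch. It is not an implementation from the cited works: the encoders are hypothetical stand-ins (toy feature functions rather than trained neural networks), fusion is plain concatenation, and the output module is a single logistic unit with made-up weights. In a real system all three parts would be learned from data.

```python
import math
from typing import Dict, List

# Hypothetical stand-in encoders; real systems use trained networks
# (e.g., a text transformer and an image CNN/ViT) that output embeddings.
def encode_text(note: str) -> List[float]:
    words = note.lower().split()
    # Toy features: scaled note length and count of one illustrative keyword.
    return [len(words) / 100.0, float(words.count("dyspnea"))]

def encode_image(pixels: List[List[float]]) -> List[float]:
    flat = [p for row in pixels for p in row]
    # Toy features: mean and maximum pixel intensity.
    return [sum(flat) / len(flat), max(flat)]

def fuse(features: Dict[str, List[float]]) -> List[float]:
    # Fusion module: concatenate modality features in a fixed (sorted) order.
    fused: List[float] = []
    for name in sorted(features):
        fused.extend(features[name])
    return fused

def output_module(fused: List[float], weights: List[float], bias: float) -> float:
    # Output module: logistic unit mapping the joint representation to a probability.
    z = sum(w * x for w, x in zip(weights, fused)) + bias
    return 1.0 / (1.0 + math.exp(-z))

features = {
    "image": encode_image([[0.1, 0.8], [0.4, 0.9]]),
    "text": encode_text("Patient reports dyspnea and chest pain"),
}
fused = fuse(features)
# Hypothetical weights and bias, chosen only for illustration.
risk = output_module(fused, weights=[0.5, 1.0, 0.2, 0.7], bias=-1.0)
print(f"fused={fused} risk={risk:.3f}")
```

Sorting the modality names keeps the concatenation order stable, so each weight always multiplies the same feature regardless of the order in which modalities arrive.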

MMAI models offer numerous advantages over unimodal AI models.16,17 By combining input from different modalities, they facilitate the analysis of more complex scenarios and support the generation of more comprehensive responses. For example, they can handle complex tasks and offer human-like responses to medical questions, assisting doctors in diagnosing and managing diseases more accurately. By analyzing a patient's language, gestures, and visual cues, they can also support more natural interactions with virtual assistants and, more broadly, between machines and humans. Additionally, MMAI can transfer knowledge across domains; for instance, a model trained on both text and images can apply what it has learned from one modality to tasks in the other. These models have played a transformative role in areas such as chatbots, online learning, healthcare, and customer support, and their ability to combine visual and auditory data gives them cross-industry applications.

Basic model categories in multimodal AI

MMAI is rapidly becoming a preferred tool because it can quickly adapt to the specific needs of industries, including the health sector. Although multimodal models share fundamentally similar operating principles, multimodality can take different forms, such as text-to-image, text-to-sound, sound-to-image, and combinations of these.10,13 These capabilities stem from training on multimodal data such as text and images.18 For example, text-to-image models generate images by starting from random Gaussian noise and progressively refining it, a process known as diffusion.19 Early diffusion models often struggled to produce sharply focused images because the generation process lacked guidance. However, MMAI, which can process and understand multiple types of data simultaneously, has made significant progress toward smarter, context-aware systems.17
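For readers who want the mechanics behind "diffusion", the widely used denoising diffusion probabilistic model (DDPM) formulation is summarized below. It is the general textbook form, not the specific setup of any model cited in this review. A forward process adds Gaussian noise to an image $x_0$ over $T$ steps with a variance schedule $\beta_t$; a network $\epsilon_\theta$ is trained to predict the added noise, so that generation can start from pure noise $x_T$ and denoise step by step. In text-to-image variants, the noise predictor is additionally conditioned on an embedding $c$ of the text prompt; this conditioning is what supplies the "guidance" that early unconditioned models lacked.

```latex
\begin{aligned}
q(x_t \mid x_{t-1}) &= \mathcal{N}\!\left(x_t;\ \sqrt{1-\beta_t}\,x_{t-1},\ \beta_t\mathbf{I}\right), \quad t = 1,\dots,T \\
\bar{\alpha}_t &= \prod_{s=1}^{t} (1-\beta_s) \\
x_t &= \sqrt{\bar{\alpha}_t}\,x_0 + \sqrt{1-\bar{\alpha}_t}\,\epsilon, \quad \epsilon \sim \mathcal{N}(\mathbf{0},\mathbf{I}) \\
\mathcal{L}(\theta) &= \mathbb{E}_{x_0,\,t,\,\epsilon}\!\left[\big\lVert \epsilon - \epsilon_\theta(x_t, t, c) \big\rVert^2\right]
\end{aligned}
```

The closed form for $x_t$ lets training sample any noise level directly, without simulating every intermediate step.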

Broadly speaking, there are four basic categories of models, each focused on a different type of data.18 Large language models (LLMs) are text-oriented models trained on trillions of words (tokens), which allows them to produce human-like text. Large vision models (LVMs) are trained on large image datasets to perform vision-specific tasks such as image recognition and rendering. Vision-language models (VLMs), trained on datasets of image-text pairs, link the two modalities and can, for example, generate unique images from textual prompts. Large multimodal models (LMMs), the most advanced category, are designed to process and produce content in multiple formats, including text, images, video, and music.

MMAI models have found a variety of applications. For example, models such as OpenAI's CLIP and Google's ALIGN learn a shared representation of images and text, which makes it possible to match images with the most appropriate textual descriptions and supports the production of human-like captions. Other models, such as OpenAI's DALL-E, generate images from textual descriptions. MMAI can also significantly improve video processing by combining visual data with audio and text, and improve speech recognition in noisy environments by combining video and audio input. In medicine, MMAI combines visual, textual, and sometimes audio data (e.g., heart sounds), aiding diagnosis and, ultimately, patient management.17
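The image-text matching behind CLIP-style models can be sketched as a nearest-neighbor search in a shared embedding space: each image and each candidate caption is mapped to a vector, and the caption with the highest cosine similarity is selected. The vectors below are hand-written placeholders standing in for real encoder outputs; no actual CLIP or ALIGN weights or APIs are used, and the captions are invented.

```python
import math
from typing import Dict, List, Tuple

def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def best_caption(image_emb: List[float],
                 caption_embs: Dict[str, List[float]]) -> Tuple[str, float]:
    """Score every candidate caption and return the most similar one."""
    scores = {text: cosine(image_emb, emb) for text, emb in caption_embs.items()}
    best = max(scores, key=lambda t: scores[t])
    return best, scores[best]

# Hypothetical placeholder embeddings; a real system would obtain these
# from jointly trained image and text encoders.
image_embedding = [0.9, 0.1, 0.3]
captions = {
    "chest X-ray with right lower lobe opacity": [0.8, 0.2, 0.4],
    "normal knee MRI": [0.1, 0.9, 0.2],
    "fundus photograph of the retina": [0.2, 0.3, 0.9],
}
label, score = best_caption(image_embedding, captions)
print(label, round(score, 3))
```

Because matching reduces to a similarity ranking, the same shared space supports both choosing a caption for an image and retrieving images for a text query.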

AI is used across the healthcare spectrum, including disease prevention, diagnosis, treatment, and patient monitoring, and can ultimately help doctors make better clinical decisions.20 MMAI contributes to healthcare more comprehensively by analyzing heterogeneous data sources such as medical imaging, electronic health records (EHRs), genetics, and laboratory results.4,10,14 Some MMAI models, such as DALL-E 2 (OpenAI), can generate realistic, anatomically proportional X-ray images from text prompts describing anatomical areas such as the skull, hands, chest, and ankle. However, they fail to reproduce the detailed structure of bones, and they cannot generate accurate images for more complex modalities such as CT and MRI.18 This indicates the need for models trained on larger sets of paired medical text and images. Even so, MMAI development is dynamic and constantly evolving, which suggests promising potential to overcome these problems.

LLM: large language model; LVM: large vision model; VLM: vision-language model; LMM: large multimodal model.

Multimodal AI for clinical decision support

Initially, AI models specialized in a single domain: language models understood text, convolutional neural networks (CNNs) processed images, and deep neural networks processed sound. Recent breakthroughs have led to the rise of MMAI models that can analyze and process multiple types of data (such as text, images, and audio) simultaneously, and a number of MMAI-based tools have been released by different developers.16,21,22 These tools can, for example, analyze an image and read an accompanying description to answer questions. Furthermore, they can learn and improve by receiving feedback, becoming better at solving various tasks over time.16 LLMs, for instance, have been widely investigated as a way to answer medical questions: BioBERT has shown robust capabilities in biomedical text mining, and fine-tuning PaLM on medical training data produced Med-PaLM, which performs better in medical use cases.23

CDSSs are essential tools in modern healthcare, helping physicians, nurses, and pharmacists make better-informed decisions about patient care. While some CDSSs operate automatically, others require manual input, such as clinical guidelines. EHRs capture comprehensive patient health information, providing a digital alternative to traditional paper records. The potential of CDSSs to reduce decision-making errors and improve patient outcomes is well documented, and their utility is particularly evident in scenarios that require rapid and accurate decisions. Especially in cases involving complex, diverse, and voluminous data, integrating MMAI into CDSSs can make the decisions supported by these systems more accurate and faster, ultimately increasing their effectiveness.7 By bringing together multiple types of healthcare data, MMAI models have tremendous potential to transform many aspects of healthcare, allowing those data to be processed and analyzed at unprecedented scale and speed. By providing holistic, context-aware insights for clinical decision-making, this ability not only improves the handling of complex medical conditions but also lays the foundation for more refined and personalized medical treatments.10,24

MMAI has several key abilities that contribute to advancing CDSSs.25 First, through data-driven insights and analytics, MMAI can detect patterns and anomalies in EHRs that are important for patient health and provide insights for adjusting treatment strategies.26 It can also identify patients at risk of disease, facilitating early intervention and potentially reducing disease incidence. The predictive power of AI applied to big data helps identify correlations between factors such as genetic markers and environmental influences on health, predict disease outbreaks, and guide epidemiological research. This, in turn, supports the development of new treatments and the analysis of complex conditions such as diabetes and heart disease.27

Beyond clinical applications, MMAI also streamlines administrative tasks in healthcare settings by automating data entry, extracting information from clinical notes, and assisting with billing, thereby improving efficiency and accuracy. Through this second capability, support for workflow and administrative processes, MMAI organizes and categorizes large volumes of information and helps healthcare providers access and use patient records while managing patient data.25

The diagnostic and predictive capabilities of MMAI are among the key justifications for integrating it into clinical decision-making. In addition to interpreting medical images such as X-rays, MRI scans, and pathology slides, MMAI can combine them with other forms of data, such as a patient's medical history, environmental factors, and genetic profile. MMAI algorithms help detect and diagnose diseases such as cancer and neurological disorders with higher precision, identifying signs of disease that the human eye can often miss.9,28,29 They can also speed up the diagnostic process, reduce errors, and support personalized medicine through more tailored treatment plans. By conducting risk assessment and predictive analysis based on individual patient information, MMAI also enables early intervention, ultimately improving quality of life and reducing complications.30,31

Contribution to treatment optimization is another key feature of MMAI in CDSSs. MMAI can analyze current research and clinical guidelines to provide evidence-based recommendations for optimal treatment pathways, including standardizing care across settings, tailoring drug therapies to patient-specific factors such as age and kidney function, and designing combination drug therapies based on factors derived from data.32 Additionally, MMAI supports pharmaceutical research by identifying promising drug candidates and expediting drug development.1,8

A fifth contribution of MMAI to CDSSs is its ability to manage and interpret diverse, large-scale information. This capability significantly aids in evaluating and selecting the best available evidence for clinical use.33,34 It also helps healthcare providers stay up to date with the latest research, clinical trials, and treatment protocols. Information management greatly enhances the efficiency and effectiveness of healthcare delivery by improving communication and coordination. For example, by serving as a central hub for patient information, MMAI can help ensure that all members of a healthcare team have access to up-to-date patient data, especially in complex cases involving multiple specialists.8,35

Finally, MMAI contributes to CDSSs through patient monitoring and telehealth. Remote patient monitoring has a transformative impact on patient care by providing real-time data to healthcare providers, particularly for chronic conditions and post-operative recovery. MMAI-driven virtual consultations overcome geographic barriers, especially in regions with limited access to healthcare. This integration allows for improved patient triage and assessment, reducing workload and cost.1,28,35

Challenges regarding multimodal AI for clinical decision support

The integration of MMAI into CDSSs promises to revolutionize healthcare by leveraging diverse data formats to provide more comprehensive and accurate decision-making support. However, this approach also presents unique challenges spanning technical, ethical, and operational domains.36,37

One important challenge is that MMAI systems require seamless integration of heterogeneous data sources, which are often stored in different formats across different systems. Inconsistent data sources and interoperability issues hinder effective data collection, analysis, and real-time synchronization. Collaborative interoperability standards such as Fast Healthcare Interoperability Resources (FHIR), together with advanced data fusion techniques that ensure consistent integration of diverse data types, will help minimize these issues.25,38
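To make the role of FHIR more concrete, the sketch below builds a minimal FHIR R4 Observation resource for a serum creatinine result as plain JSON, using only Python's standard library. The patient identifier and value are invented for illustration, and the helper name `creatinine_observation` is hypothetical. A real integration would validate resources against the FHIR specification and exchange them through a FHIR server's REST API rather than printing them.

```python
import json
from typing import Any, Dict

def creatinine_observation(patient_id: str, value_mg_dl: float) -> Dict[str, Any]:
    """Build a minimal FHIR R4 Observation for a serum creatinine result (hypothetical helper)."""
    return {
        "resourceType": "Observation",
        "status": "final",  # required element in FHIR R4
        "code": {           # required element: what was measured, as a LOINC code
            "coding": [{
                "system": "http://loinc.org",
                "code": "2160-0",
                "display": "Creatinine [Mass/volume] in Serum or Plasma",
            }]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "valueQuantity": {
            "value": value_mg_dl,
            "unit": "mg/dL",
            "system": "http://unitsofmeasure.org",  # UCUM unit system
            "code": "mg/dL",
        },
    }

# Hypothetical patient identifier and value, for illustration only.
obs = creatinine_observation("example-123", 1.4)
print(json.dumps(obs, indent=2))
```

Because the test is identified by a shared code system (LOINC) and the unit by UCUM rather than by local labels, a receiving system can, in principle, merge this value with imaging- or note-derived features without site-specific mapping.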

Secondly, high-quality, well-annotated datasets are crucial for integrating MMAI systems into CDSSs; missing or erroneous data in any modality can degrade overall system performance.39 To address this, comprehensive multimodal datasets should be created through collaborations between institutions such as hospitals and technology providers. Additionally, computational methods and algorithms that can effectively handle missing or noisy data should be developed.
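One simple way to handle missing modalities is masked mean fusion: embeddings are averaged over whichever modalities are actually present, so a patient without, say, genomic data still receives a fused representation instead of being dropped. This is shown only as an illustrative assumption, not as a method from the cited work, and it presumes every encoder projects into the same embedding dimension.

```python
from typing import Dict, List, Optional

def masked_mean_fusion(embeddings: Dict[str, Optional[List[float]]]) -> List[float]:
    """Average the embeddings of available modalities; missing ones are None and skipped."""
    present = [e for e in embeddings.values() if e is not None]
    if not present:
        raise ValueError("at least one modality must be available")
    dim = len(present[0])
    if any(len(e) != dim for e in present):
        raise ValueError("all modality embeddings must share one dimension")
    return [sum(e[i] for e in present) / len(present) for i in range(dim)]

# Hypothetical patient: imaging and notes available, genomics missing.
patient = {
    "imaging": [0.2, 0.6, 0.4],
    "notes": [0.4, 0.2, 0.8],
    "genomics": None,
}
print(masked_mean_fusion(patient))
```

Averaging is the simplest choice; alternatives include learned imputation of the missing embedding or training with randomly dropped modalities (modality dropout) so that the model learns to cope with incomplete inputs.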

MMAI models are often considered “black boxes”: their algorithmic complexity makes it difficult to understand how data from different modalities contribute to a decision. Explainable AI (XAI) is crucial for overcoming this problem because it addresses the need for transparency and interpretability in CDSSs. Integrating XAI into CDSSs helps ensure that the decisions made by these systems are not only accurate but also understandable and trustworthy to clinicians.7,39
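A simple, model-agnostic way to probe how much each modality contributes to a prediction is leave-one-modality-out ablation: re-run the model with one modality removed and measure the change in output. The sketch below applies this to a hypothetical logistic risk model over per-modality scores; the model, weights, and inputs are invented, removing a modality is modeled as dropping its term, and this is only one of many XAI techniques, with limitations of its own.

```python
import math
from typing import Dict

# Hypothetical per-modality weights for a toy logistic risk model.
WEIGHTS = {"imaging": 1.2, "labs": 0.8, "notes": 0.4}
BIAS = -1.0

def predict(scores: Dict[str, float]) -> float:
    """Logistic risk computed from whichever modality scores are present."""
    z = BIAS + sum(WEIGHTS[m] * s for m, s in scores.items())
    return 1.0 / (1.0 + math.exp(-z))

def modality_ablation(scores: Dict[str, float]) -> Dict[str, float]:
    """Drop in predicted risk when each modality is removed (leave-one-out)."""
    full = predict(scores)
    return {
        m: full - predict({k: v for k, v in scores.items() if k != m})
        for m in scores
    }

patient = {"imaging": 0.9, "labs": 0.5, "notes": 0.3}
for modality, delta in sorted(modality_ablation(patient).items(), key=lambda kv: -kv[1]):
    print(f"{modality}: {delta:+.3f}")
```

For this toy input, imaging has the largest effect on the output, which a clinician could then check against the imaging findings themselves.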

The fourth challenge concerns bias and the limited generalizability of MMAI models. Algorithms trained on data from specific institutions or patient populations may generalize poorly to others, in tasks ranging from diagnosis to treatment, raising concerns about fairness and equity.28,40 To reduce bias and increase generalizability, diverse datasets should be included in training, and developers should audit models regularly. To keep the models up to date, new data modalities and medical knowledge should be incorporated as they become available. So that this growing complexity does not make the systems harder to use, simplified interfaces should be provided and a user-centered design approach adopted. Training programs should also inform clinicians about the capabilities and limitations of MMAI.
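Regular model audits can start with something as simple as comparing sensitivity (true-positive rate) across subgroups, such as contributing institutions. The sketch below uses invented records, hypothetical site labels, and an arbitrary 0.1 gap threshold; a real audit would use validated subgroup definitions, several metrics, and confidence intervals.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Each record: (subgroup, true label, model prediction); all values are invented.
Record = Tuple[str, int, int]

def sensitivity_by_group(records: List[Record]) -> Dict[str, float]:
    """True-positive rate per subgroup, computed over truly positive cases only."""
    tp: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, label, pred in records:
        if label == 1:
            positives[group] += 1
            tp[group] += int(pred == 1)
    return {g: tp[g] / positives[g] for g in positives}

def flag_gap(rates: Dict[str, float], max_gap: float = 0.1) -> bool:
    """True if the best- and worst-served subgroups differ by more than max_gap."""
    return max(rates.values()) - min(rates.values()) > max_gap

records = [
    ("site_A", 1, 1), ("site_A", 1, 1), ("site_A", 1, 1), ("site_A", 1, 0), ("site_A", 0, 0),
    ("site_B", 1, 1), ("site_B", 1, 0), ("site_B", 1, 0), ("site_B", 1, 1), ("site_B", 0, 1),
]
rates = sensitivity_by_group(records)
print(rates, "gap flagged:", flag_gap(rates))
```

Here the model detects 75% of true cases at one site but only 50% at the other, the kind of disparity that would prompt retraining on more diverse data.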

Another major challenge in the use of MMAI in healthcare is data privacy and security. MMAI often involves analyzing large datasets containing sensitive personal information, which poses serious challenges for storing, transferring, and processing them securely.33,39 To overcome these challenges, privacy-preserving approaches such as federated learning, robust encryption protocols, and decentralized data management systems should be adopted.
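Federated learning keeps raw patient data inside each institution and shares only model updates. Its core aggregation step, federated averaging (FedAvg), is a sample-size-weighted mean of the participating sites' model parameters. The sketch below shows only that aggregation step, with made-up parameters from three hypothetical hospitals; real deployments add repeated local training rounds, secure aggregation or encryption, and often differential privacy.

```python
from typing import List, Tuple

def fed_avg(client_updates: List[Tuple[List[float], int]]) -> List[float]:
    """Weighted average of client parameter vectors, weighted by local sample count."""
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    global_params = [0.0] * dim
    for params, n in client_updates:
        for i, p in enumerate(params):
            global_params[i] += p * n / total
    return global_params

# Hypothetical parameters and sample counts from three hospitals; only these
# vectors, not the underlying patient records, leave each site.
updates = [
    ([0.10, 0.50], 100),
    ([0.30, 0.40], 300),
    ([0.20, 0.90], 100),
]
print(fed_avg(updates))
```

Weighting by sample count keeps a small site from pulling the shared model as strongly as a large one, although it also means larger institutions dominate, which connects back to the bias concerns discussed above.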

The last important challenge is the inequality faced by regions with limited access to healthcare owing to a lack of infrastructure and healthcare professionals or to cultural factors.1,28,35 To address it, we recommend establishing inter-institutional partnerships, addressing the lack of the hardware required for internet access, and using MMAI-focused telehealth systems, including offline or hybrid solutions.

Transformative potential of multimodal AI in CDSSs, associated challenges, and proposed solutions.

a FHIR: Fast Healthcare Interoperability Resources.

Discussion

MMAI systems are designed to process and synthesize multiple data types, such as text, images, audio, and video, by integrating information from diverse input formats.10 Although they have been developed for a variety of domains, their uses can broadly be divided into general applications and healthcare-specific applications. We believe it is important to distinguish between these to better grasp the flexibility and critical value of MMAI in clinical settings.

One common application area is content generation. For example, OpenAI's DALL-E generates images from text prompts, and Google's Imagen generates high-fidelity images from language. Another application area is cross-modal search, in which CLIP (Contrastive Language-Image Pretraining) enables smarter content tagging and recommendation systems by matching images with textual descriptions.17,18 A third general application area is virtual assistants, where MMAI integrates visual, speech, and language processing to support intelligent assistants such as Amazon Alexa and Google Assistant. A fourth area is surveillance and security, where multimodal systems combine audio, video, and sensor data to enhance threat detection in intelligent surveillance platforms.41

In healthcare-specific applications, MMAI models fulfill highly specialized roles. For example, vision-language models such as MedCLIP support clinical image interpretation by matching images with descriptions expressed in clinical language.42 Another area is multimodal diagnostics; an example is RETFound, a foundation model built on a ViT-L (large vision transformer) backbone and trained on retinal images, which has been applied to detecting ocular diseases and predicting systemic conditions such as cardiovascular disease.43 The third is electronic health record (EHR) analysis. An example is DeepMind's Streams, which combines structured and unstructured health data to detect acute kidney injury. Finally, in remote monitoring, wearable health devices combined with NLP and image analysis support real-time patient monitoring and alerts.24

Data from available studies suggest that MMAI-based CDSSs can outperform unimodal systems, expert rules, and traditional clinical practice across a variety of clinical tasks, and they encourage future research to expand these benchmarks and establish stronger generalizability across domains.

MMAI systems can discover new patterns within and across modalities that may help explain differences in patient outcomes.44 For example, by combining information from different sources, multimodal AI can accelerate clinical decision-making in areas such as oncology diagnosis, medical image interpretation, and treatment optimization, producing more accurate and faster results. However, the effect of MMAI may be heterogeneous across healthcare applications.11,14,45 Even within a specialty such as radiology or oncology, its utility may be greater for some tasks than for others,5 and it may provide only marginal improvements in routine decision support tasks such as drug dosing or standard triage evaluation.9 The disadvantages of MMAI, such as limited scalability and the time-consuming process of concatenating information from different modalities, must also be considered.11 Future research should therefore aim to identify where MMAI is most effective and where traditional approaches may be adequate.

While MMAI systems offer significant advantages, one of the biggest challenges facing AI in healthcare is its integration into daily clinical practice. In model development, the unique preprocessing requirements, resolution scales, and semantic representations of each modality pose significant technical hurdles.46,47 Beyond these, integrating heterogeneous data types in healthcare, including medical imaging (CT, MRI, ultrasound), clinical narratives, structured EHR data, genomic information, and temporal biosensor measurements, is another critical hurdle for successful implementation. The lack of systematic, modality-specific patterns and the wide variation in quality metrics across modalities add further complexity.11,14,31

Explainability in AI tools is often emphasized because it addresses the need for transparency and interpretability in CDSSs. Transparency is important both for healthcare providers' trust in the system and for ensuring that the system's recommendations can be effectively reviewed and validated.7 However, explainability itself has important limitations. Existing explainable AI (XAI) methods, such as importance maps, attention scores, or post hoc interpretability tools, generally lack consistency, reproducibility, and domain relevance, which is a significant hurdle to clinical usability.47,48 Some researchers therefore suggest prioritizing rigorous validation over explainability, arguing that true explainability can never be achieved for complex models without sacrificing performance.7,48–50 From this perspective, in high-risk scenarios, rigorously validated models with superior accuracy can offer actionable confidence.

Different institutions use a variety of formats, coding systems, and data structures, so standardized health data interoperability remains a persistent challenge. Regulatory frameworks for AI in healthcare also continue to evolve, and multimodal systems in particular face questions about how to validate performance across different combinations of available inputs. Current regulatory frameworks such as the Health Insurance Portability and Accountability Act (HIPAA) in the US and the General Data Protection Regulation (GDPR) in the European Union were primarily designed for unimodal systems, whose performance metrics are clearer.40,51,52 While MMAI technologies offer tremendous promise to transform CDSSs and improve healthcare delivery, they raise multifaceted challenges that require meticulous attention and strategic solutions to ensure their effectiveness, safety, and ethical use. Regulatory frameworks, developed in a multidisciplinary manner, should clearly identify the technical limitations that affect the adoption and scalability of AI-driven CDSSs, as well as the critical boundaries that ensure interpretability, transparency, and equitable, convenient access to AI models.

Innovative aspects and limitations of this review

This article reviews recent developments in MMAI in CDSSs (Figure 2). However, this review is not without limitations. One important limitation is that it is a narrative review and does not include detailed comparative study results. Another is that, while MMAI has the potential to bridge critical gaps in CDSSs, the technology faces several challenges that hinder its broader application.10,42,48,49

Figure 2. Representative conceptual diagram outlining the position of multimodal artificial intelligence in clinical decision support systems (CDSSs).

One key challenge is data heterogeneity, which makes it difficult to integrate structured and unstructured data across formats and clinical settings. Second, interpretability remains a persistent challenge, especially in large-scale multimodal models, despite advances in XAI. A third challenge is technical deployment barriers, including computational resource requirements and the lack of standardized protocols for model training, testing, and reporting. Model generalizability is also a challenge, as many high-performance MMAI systems lack comprehensive case studies and have been validated only retrospectively on single-institution datasets, limiting real-world application. Ethical and regulatory issues also require careful consideration: training MMAI systems on non-representative data can introduce bias, and existing regulatory frameworks often lag behind the pace of innovation, which can undermine assurance of the safety and effectiveness of MMAI models operating on heterogeneous inputs.

Future perspective

Although challenging obstacles remain, the future of MMAI in CDSSs is promising, with the potential to revolutionize healthcare through rapid technological advances. Integration frameworks such as FHIR and enhanced data fusion techniques that can harmonize heterogeneous data types, supported by robust algorithms enabling seamless communication across diverse healthcare systems, are likely to make healthcare more accessible and equitable.25,38

Future research should focus on developing explainable and interpretable MMAI models, as transparency and explainability are crucial for building trust in AI among healthcare providers and patients. As XAI algorithms improve, the impact of MMAI on clinical decision-making will increase.7,39 Stakeholder collaboration is also critical for delivering robust MMAI systems that reduce bias and generalize across diverse populations, and continuous auditing and updating will ensure their adaptability to new forms of medical knowledge and data.28,40 Moreover, because the knowledge and skills of healthcare providers remain essential, ongoing education and training of practitioners at all levels will enable safer, more adaptable practice and can significantly reduce costs and risks.

By combining genetic, environmental, and lifestyle data, MMAI systems will advance personalized medicine, providing individualized prevention and treatment plans.27,30 Predictive analytics will further enhance early disease detection, reduce healthcare costs, and improve outcomes. MMAI will also accelerate drug discovery by analyzing complex datasets to identify actionable drug candidates, optimize clinical trial designs, and predict therapeutic outcomes.1,8

The limitations discussed above are not specific to a single model or application but are observed across multiple MMAI studies. For example, although both BioViL and RETFound have shown impressive performance on their evaluation datasets, their clinical utility has not been tested in large prospective, multicenter studies.12,53,54 Vision-language models such as MedCLIP and RadFormer exhibit strong zero-shot generalization; however, their reliance on curated image-text pairs limits their generalizability.42,55 Coordinated efforts among clinicians, data scientists, and regulators are essential to address these gaps. Beyond technical performance, future studies should focus on bridging the translational gap between academic achievement and real-world healthcare impact, and on generalizability and clinical validation frameworks.

Conclusion

MMAI offers transformative potential for clinical decision support systems by integrating diverse data types to improve diagnostic accuracy, treatment personalization, and healthcare delivery. While the field is rapidly advancing, significant hurdles remain, including data integration, model interpretability, privacy, and ethical considerations. Future efforts should focus on building explainable, generalizable models and developing standardized frameworks for clinical validation to ensure safe and effective adoption. With coordinated collaboration between developers, clinicians, and policymakers, MMAI can become the cornerstone of next-generation, data-driven healthcare.

Footnotes

Author contributions

RD and NA conceptualized and drafted the manuscript. NA wrote the manuscript. RD and NA supervised and edited the manuscript. Both authors read and approved the final version of the manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: RD is president of the INVAMED Institute for Medical Innovation. NA is a volunteer consultant for Med-International UK Health Agency Ltd.

Data Availability Statement

All supporting data are included in the article.