Abstract

Keywords

Introduction

Background

The Convention on the Rights of Persons with Disabilities (CRPD) declares that disability is a dynamic concept. It arises from the interplay between individuals with impairments and social and environmental barriers that obstruct their full and equal involvement in society. 1 This is known as the “social model” of disability. According to the Ontario Human Rights Commission, disability “must be interpreted to include its subjective component, as discrimination may be based as much on perceptions, myths, and stereotypes, as on the existence of actual functional limitations.”

Meanwhile, artificial intelligence (AI) has become embedded in everyday life. 2 In particular, AI for healthcare has multiple benefits. It can be used for social good, such as predicting disease onset, tracing pandemics, 3 and advocating for people with disabilities. 4 More specifically, advances are being made in the use of AI in health informatics, which can help organize and use health data. Health informatics is an interdisciplinary field that uses computer science, decision-making, information processing, and management science to design and implement information systems in a user-centric manner. These information systems are designed to obtain, store, process, and provide information to users to assist them in achieving their objectives. 5

AI is a field of study that allows computers to mimic human intelligence. 6 Machine learning (ML) techniques are a subset of AI techniques that detect patterns in data to assist users in making decisions. 7 Natural language processing (NLP) is another part of AI that allows computers to decipher the meaning of information and process phrases written or spoken in natural language.8–12

However, AI can also perpetuate biases against certain groups, including people with disabilities, because AI software learns from pre-existing data and uses that data to make predictions. It is therefore essential to understand the interplay between AI and people with disabilities, yet there is no published review on that interplay. This scoping review aims to summarize the current knowledge on the subject, including the benefits and challenges of AI for people with disabilities.

We followed Arksey and O’Malley’s five steps: (1) identifying the research question; (2) identifying relevant studies (through searches of databases, search engines, etc.); (3) study selection (screening of abstracts for relevance); (4) charting the data (study features such as type of technology, disability, etc.); and (5) collating, summarizing, and reporting the results. 13

Review question

Our review aims to answer the following question: What are the potential benefits and challenges of artificial intelligence (AI) and machine learning (ML) (Concept) for individuals with disabilities (Population) in various contexts (Context) as reflected in scholarly work, and what recommendations can we formulate for AI development?

Methods

Search strategy

The Population, Concept, and Context (PCC) method was used to guide the search strategy for this scoping review. The following eight online databases were searched: ProQuest, IEEE Xplore, ACM Digital Library, Web of Science, Medline, PubMed, PsycINFO, and CINAHL.

The search terms were established with the guidance of a supervisor to ensure comprehensive coverage of relevant scholarly literature. Of the 354 citations extracted, 84 articles were identified as non-duplicates. The complete search strategies are detailed in Appendix A.
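Duplicate removal across databases is normally handled by reference-management or screening software. As a rough illustration only, the sketch below merges database exports and drops repeats by normalized title. The record fields, the `normalize_title` helper, the matching rule, and the example titles are all assumptions made for this example, not the procedure used in this review.

```python
import re
import string


def normalize_title(title: str) -> str:
    """Lowercase a title, drop ASCII punctuation, and collapse whitespace."""
    no_punct = title.lower().translate(str.maketrans("", "", string.punctuation))
    return re.sub(r"\s+", " ", no_punct).strip()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each normalized title."""
    seen: set[str] = set()
    unique = []
    for record in records:
        key = normalize_title(record["title"])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical exports from three databases; the titles are made up.
exports = [
    {"title": "Machine Learning and Disability: A Study", "source": "PubMed"},
    {"title": "machine learning and disability - a study", "source": "Medline"},
    {"title": "AI for Assistive Communication", "source": "IEEE Xplore"},
]
unique_records = deduplicate(exports)
print(len(exports) - len(unique_records), "duplicate(s) removed")
```

Title matching alone can miss duplicates with differing titles and can merge distinct papers that share a title, so a manual check of the flagged pairs would still be needed.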

Eligibility criteria

The inclusion criteria were predetermined: scholarly studies published in the last 5 years, limited to journal articles and conference papers dealing with AI or machine learning and people with disabilities. Studies were excluded if they were not written in English or were not scholarly publications. As this was a scoping review, the search terms were limited to the titles of the articles. The most recent search was done on February 17, 2023.
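The criteria above can be read as a simple filter over citation records. The following is a minimal sketch under stated assumptions: the `Citation` fields, the year-level reading of "the last 5 years", and the two title patterns are hypothetical and chosen for illustration; the actual search strings are those in Appendix A.

```python
import re
from dataclasses import dataclass

SEARCH_YEAR = 2023   # year of the most recent search, as stated in the text
WINDOW_YEARS = 5     # "published in the last 5 years", applied at year level (assumption)
SCHOLARLY_TYPES = {"journal article", "conference paper"}

# Hypothetical title patterns standing in for the real search terms.
CONCEPT = re.compile(r"\b(ai|artificial intelligence|machine learning)\b", re.IGNORECASE)
POPULATION = re.compile(r"\b(disabilit(y|ies)|disabled)\b", re.IGNORECASE)


@dataclass(frozen=True)
class Citation:
    title: str
    year: int
    language: str
    pub_type: str


def is_eligible(c: Citation) -> bool:
    """Apply the stated inclusion and exclusion criteria to one citation."""
    recent = SEARCH_YEAR - WINDOW_YEARS <= c.year <= SEARCH_YEAR
    english = c.language.lower() == "english"
    scholarly = c.pub_type.lower() in SCHOLARLY_TYPES
    on_topic = bool(CONCEPT.search(c.title)) and bool(POPULATION.search(c.title))
    return recent and english and scholarly and on_topic
```

A rule like this only pre-sorts records; in the review itself, relevance was judged by the authors during screening.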

Selection process

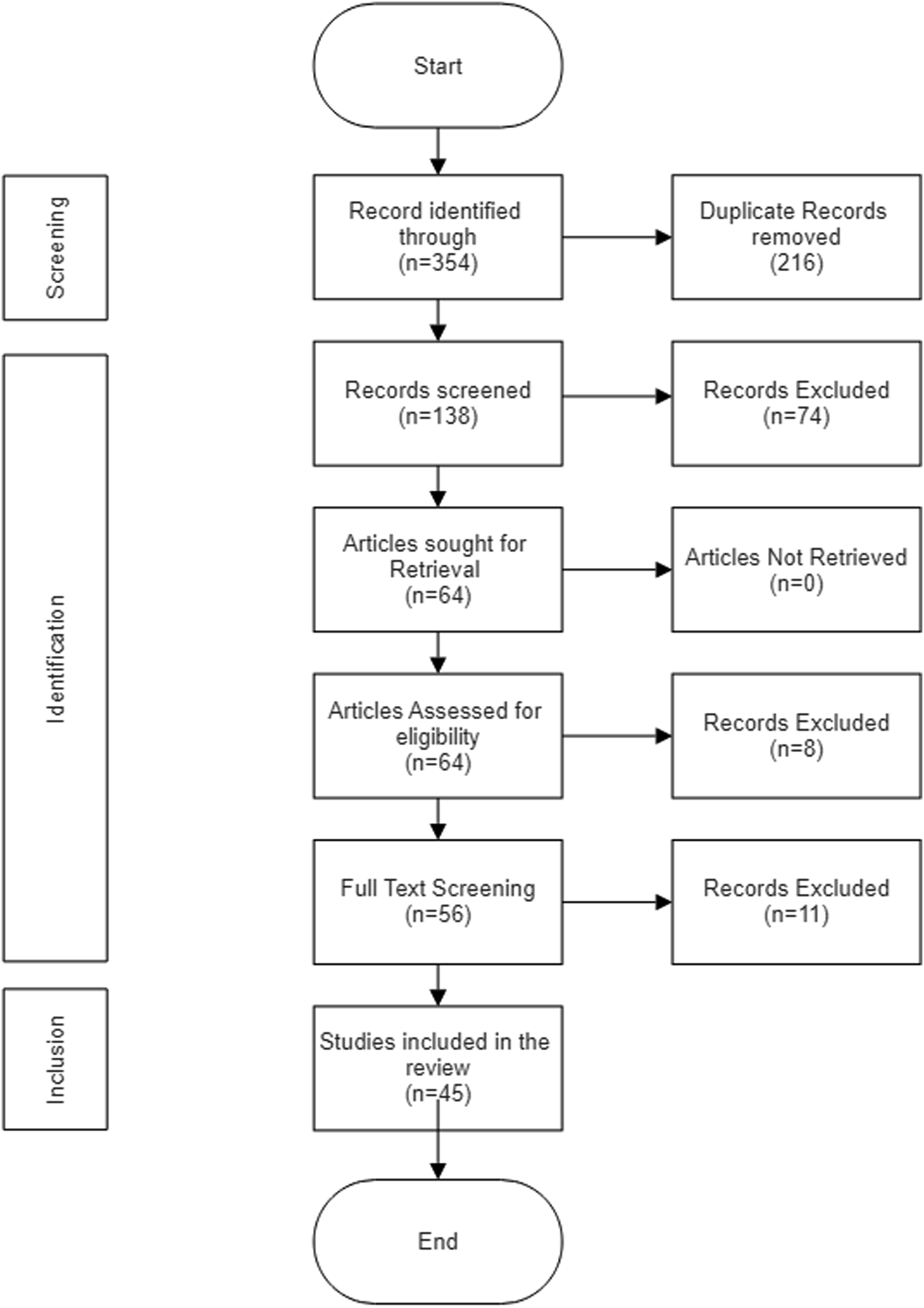

The inclusion and exclusion criteria were applied to 84 abstracts. Two authors (XX and YY) employed Rayyan software to screen 84 articles, and 64 were identified as potentially eligible. Both authors reviewed the full-text version of the 64 articles retrieved for review and synthesis (Figure 1). The literature search and article collection were conducted by a single author (XX), and the literature review and data collection from articles were conducted by both authors (XX and YY) and repeated by another author (ZZ). A discussion was held to reach a consensus in case of any ambiguities and disagreements. PRISMA Diagram.

The data charting strategy involved extracting relevant information from the selected articles and organizing it into a standardized format. This included the study’s focus (e.g., diagnosis, rehabilitation), the specific disability addressed, the type of AI technology used, and the study’s outcomes. This structured approach facilitated the comparison and synthesis of findings across different studies.
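One way to picture such a standardized charting format is as a fixed record per study plus simple tallies. The sketch below is illustrative only: the `ChartEntry` class and `tally` helper are hypothetical names, and the three entries are paraphrased from studies described in the Results rather than taken from the authors' charting sheet.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ChartEntry:
    study: str
    focus: str        # e.g., diagnosis, rehabilitation
    disability: str   # specific disability addressed
    ai_type: str      # type of AI technology used
    outcome: str      # what the study reported


def tally(entries: list[ChartEntry], field: str) -> Counter:
    """Count how many charted studies share each value of one field."""
    return Counter(getattr(entry, field) for entry in entries)


entries = [
    ChartEntry("Montolio et al.", "diagnosis", "multiple sclerosis",
               "machine learning", "diagnosis and disability prediction from OCT data"),
    ChartEntry("Song et al.", "diagnosis", "autism spectrum disorder",
               "machine learning", "diagnosis from socio-demographic and behavioural data"),
    ChartEntry("Islam et al.", "rehabilitation", "physical and motor disabilities",
               "machine learning", "prediction of self-care problems in children"),
]
print(tally(entries, "focus"))
```

Keeping every study in the same shape is what makes the later comparison across focus areas or disability types a matter of counting.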

Data synthesis was primarily qualitative, involving thematic analysis of the extracted data. The authors identified recurring themes and patterns related to the benefits and challenges of AI for people with disabilities. These themes were then categorized and summarized to provide an overview of the current state of knowledge in the field. This review was not registered in a prospective review registry.

Results

The search process identified 354 articles in total. After removing 216 duplicates, 138 articles underwent screening. Out of the 138, 74 were excluded for being unrelated to the scoping subject. Following this, the remaining 64 articles were assessed for eligibility, of which eight were excluded. After the full-text screening, 11 additional articles were excluded. This led to a final selection of 45 studies included in the analysis.
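The flow of records in the paragraph above can be checked with a few subtractions. The short sketch below does so; the function and key names are ours, and the counts are the ones reported in that paragraph.

```python
def prisma_flow(identified, duplicates, excluded_screening,
                excluded_eligibility, excluded_full_text):
    """Return the number of records remaining after each selection stage."""
    screened = identified - duplicates
    assessed = screened - excluded_screening
    included = assessed - excluded_eligibility - excluded_full_text
    return {"screened": screened, "assessed": assessed, "included": included}


flow = prisma_flow(
    identified=354,
    duplicates=216,
    excluded_screening=74,
    excluded_eligibility=8,
    excluded_full_text=11,
)
print(flow)  # {'screened': 138, 'assessed': 64, 'included': 45}
```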

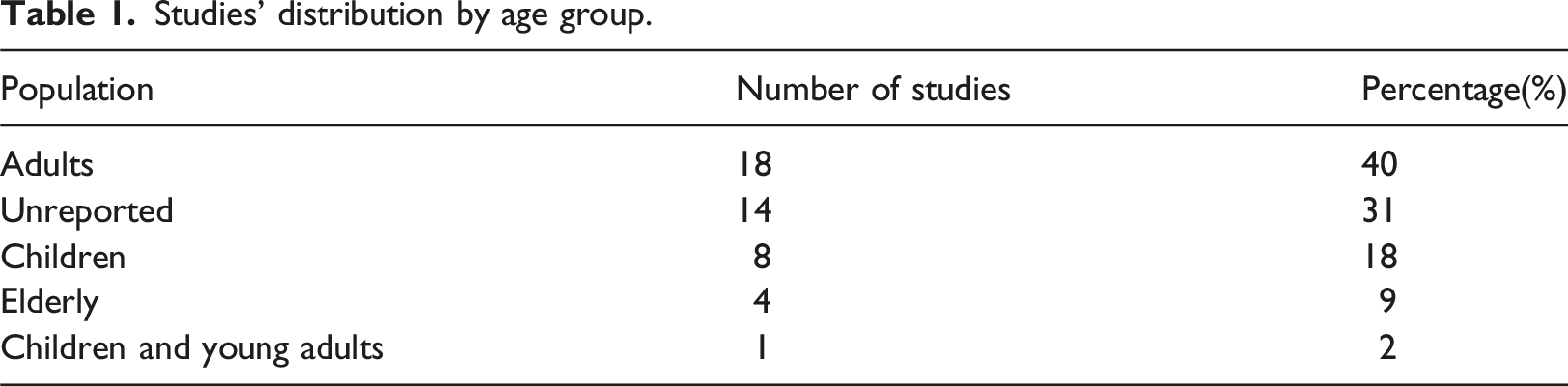

Studies’ distribution by age group.

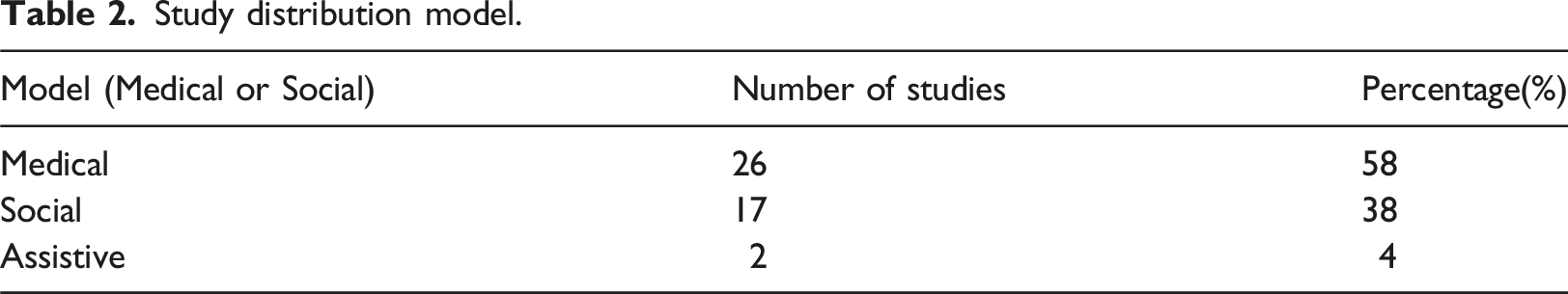

Studies’ distribution by model.

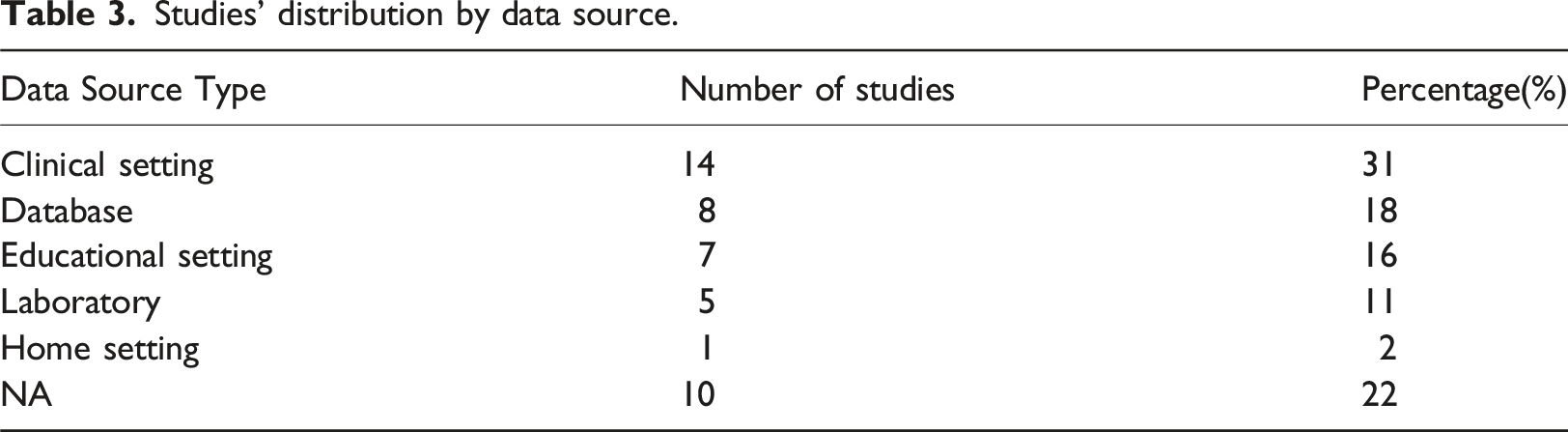

Studies’ distribution by data source.

None of the 34 studies that built AI models measured or addressed AI bias; the remaining 11 studies did not build AI models. Table 4 (see Appendix) provides a complete summary of the reviewed studies.

Advantages of AI for people with disabilities

Thirty-five articles uncovered three advantages toward which researchers are working: management of health (25 studies),14–37 assistive technologies (6 studies),38–43 and disability justice (4 studies).4,12,44,45

Management of health

Twenty-four studies were related to the management of health conditions: seven studies on diagnosis,14–20 10 studies on disability progression,21–30 two studies on rehabilitation,31,32 four studies on disability risk assessment,33–36 and one study on access to care. 37

Diagnosis

Our review found that AI was used to diagnose three disabling conditions: multiple sclerosis (MS), developmental disorders, and autism.

Three studies used AI to diagnose MS. Flauzino et al. aimed to identify significant factors contributing to increased disability in MS patients to help healthcare professionals develop personalized treatment plans. 14 Rehak Buckova et al. used ML to identify neuroimaging biomarkers related to motor disability in MS patients, which could improve understanding, diagnosis, and treatment for people with MS who have motor disabilities. 15 Montolio et al. applied ML to optical coherence tomography (OCT) data to diagnose and predict disability in MS. 16

Yang and Bai suggested using ML to identify causal genes and variants within patients to understand family diseases, especially X-linked conditions leading to developmental disorders. 17 Nikam et al. used AI and ML to establish specific thresholds of analytes for different levels of intellectual disabilities (ID) to enhance our understanding of disabilities and improve diagnosis, treatment, and management. 18

Two studies were concerned with diagnosing autism. Song et al. used ML with socio-demographic and behavioural observation data to diagnose children with autism spectrum disorder (ASD) and comorbid intellectual disabilities. 19 Yperman et al. developed an ML model to improve the accuracy of the Autism Diagnostic Observation Schedule (ADOS/2) in diagnosing ASD.

Disability progression

Ten studies addressed the prediction of MS disability progression. Eight of them based their predictions on the Expanded Disability Status Scale (EDSS), 21 clinical notes, 22 thalamic atrophy measurements, 23 optical coherence tomography, 25 genetic information, 26 motor evoked potentials, 28 neuroradiological features, 29 and clinical data. 30 A ninth study used ML to predict secondary progressive MS (SPMS) disability progression, allowing those at the highest risk of worsening symptoms to access experimental treatments. 24 The tenth used ML to predict the progression of primary progressive multiple sclerosis (PPMS). 27

Rehabilitation

Two studies considered AI and rehabilitation. Islam et al. implemented an expert system using ML to predict the self-care problems of children with physical and motor disabilities. 31 This system helped therapists detect self-care issues early, make better decisions for children, and ultimately save time and costs in the healthcare system. Hori et al. used ML to identify the incidence of and risk factors linked to hospitalization-associated disability (HAD) in patients after cardiac surgery. 32

Disability risk assessment

Four studies predicted the risk of disabilities. Xiang et al. developed an ML model to predict disability risk in geriatric patients. 33 Koc et al. focused on predicting the permanent disability status of construction workers due to occupational accidents. 34 Erbeli et al. reported an ML model that predicts the risk of reading disabilities (RD) among students. 35 Finally, Modac et al. used NLP and a learning management system (Moodle) to detect dyslexic students. 36

Access to care

ML techniques were used in a study by Youssef and Youssef to develop a social network linking children with learning disabilities to their families and the appropriate specialists. 37

Assistive technologies

Six studies were related to using AI in assistive technologies: three in assistive communication38–40 and three in mobility.41–43

Communication

Tanabe et al. proposed an AI-based emotion recognition system that recognizes the emotions of children with profound intellectual and multiple disabilities (PIMD) using physiological and motion signals such as eye gaze, heartbeat, and tongue motions. 38 Herbuela et al. used ML to classify the movements of children with PIMD to support communication, 39 and Tamilselvan et al. reported the design of an AI-based writing robot that helps individuals with physical disabilities write without relying on others. 40

Mobility

Three studies focused on using AI for mobility. Ghazal et al. proposed an AI-powered service robot that uses a robotic arm with a lifting mechanism to assist elderly and disabled people while shopping. 41 Encinas Cantaro and Montano Isabel used ML to help disabled individuals choose the best routes in urban areas. 43 Blanc et al. reported a low-cost prototype of an autonomous electric vehicle (EV) for visually impaired people. 42

Disability justice and AI

Only four articles centred AI around disability justice: one related to disability-centred AI development 44 and three on social justice.4,12,45

Disability-inspired ML models

One study suggested that AI entities like robots and intelligent agents could be implemented as disabled AI. 44 The researchers proposed that intentionally turning off certain aspects of an AI algorithm (i.e., disabling them) during the training process may also result in the algorithm developing compensational abilities, also known as coolabilities, thereby improving its performance after training. 44

Disability advocacy and social justice

Two studies related to social justice, by El Morr et al. and Gorman et al., described a virtual platform for disability advocacy groups that uses AI techniques such as machine learning, the Semantic Web, and NLP.4,12 The platform is based on an ontology representing the United Nations Convention on the Rights of Persons with Disabilities (CRPD). It provides a multilingual and accessible environment for uploading and searching disability-related documents. 4 AI techniques enabled semi-automatic content tagging and intelligent semantic searches, giving people with disabilities and disability advocacy organizations access to trusted sources of information. 4 Advocacy groups face challenges in monitoring human rights and uncovering systemic discrimination against people with disabilities because disability data are scarce, but ML approaches can help.

Sobnath et al. used an ML approach to identify features to enhance the possibility of engaging disabled school graduates in employment around 6 months post-graduation. 45 This research can provide valuable insights into creating more inclusive employment opportunities for disabled individuals.

Disadvantages and challenges of AI for people with disabilities

Eleven studies identified three weaknesses or challenges facing research on AI and disability: ethical and legal challenges (2 studies),46,47 the exclusive design of AI solutions (7 studies),46,48–53 and the lack of a disability justice approach (3 studies).54–56

Ethical and legal challenges

Despite the remarkable advancements in technology, every cutting-edge technology comes with challenges and potential drawbacks. Zdravkova et al. note that AI-based tools raise privacy concerns, while excluding people with disabilities from AI training can lead to biases, paternalistic designs, and neglect of privacy issues with “always-on” technologies. 46

Moreover, AI poses privacy risks because it relies on user data to learn and improve, raising concerns about how smart devices (e.g., assistive technology apps) collect, use, and protect this information. While some apps allow users to opt out of data collection, doing so may limit AI functionality. The risks associated with data collection include potential misuse by companies and data breaches that expose sensitive information. These concerns raise questions about the extent and nature of the data collected and the security measures in place to protect it. Such privacy and cyber-security concerns must be addressed through legal frameworks and by incorporating solutions into the design of assistive technologies. Another challenge of using AI for people with disabilities, raised by Huq et al., is the high cost of smart devices: assistive tools may be affordable only for some people, which further marginalizes people with disabilities who already live in poverty. 47

Exclusive design of AI solutions

AI classification of people relies on big data, and technology developers tend to design for the majority. As Domingo notes, this makes it difficult to account for the individual uniqueness of disabled people (e.g., unique visual or hearing impairments) and renders standard AI solutions inadequate for their needs. 48

Disability is usually regarded as a homogenous concept, and data collection is generally not inclusive. Classification of disabled people as outliers can impact their equal access to primary services, such as health insurance, due to the marginalization caused by AI bias. 48 It is crucial to include individuals with disabilities in the development of AI systems. This helps better understand the implications of AI and design inclusive solutions for all. By creating technology that caters to the needs of people with disabilities, we can also improve satisfaction for those without disabilities. For instance, when sound is unavailable, everyone can benefit from the captions provided for deaf individuals. 48

Zdravkova et al. emphasize that a lack of engagement of people with disabilities can lead to paternalistic designs that fail to meet the actual needs of students with disabilities. 46 To address this issue, it is crucial to include people with disabilities in developing AI-based tools and potentially oversample them to ensure their needs and concerns are reflected in the data. 46
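At the dataset level, oversampling can be pictured as resampling records from the under-represented group until they reach a chosen share of the data. The sketch below is a minimal illustration under stated assumptions: the `oversample` function, the 20% target, and the toy records are hypothetical, and duplicating existing records is only a stand-in for what the recommendation actually calls for, namely recruiting more participants with disabilities.

```python
import math
import random


def oversample(records, is_minority, target_share, seed=0):
    """Resample minority records with replacement until they reach target_share."""
    if not 0 < target_share < 1:
        raise ValueError("target_share must be strictly between 0 and 1")
    minority = [r for r in records if is_minority(r)]
    if not minority:
        raise ValueError("no minority records to resample")
    n, m = len(records), len(minority)
    # Smallest number of extra copies k such that (m + k) / (n + k) >= target_share.
    extra = max(0, math.ceil((target_share * n - m) / (1 - target_share)))
    rng = random.Random(seed)
    return list(records) + [rng.choice(minority) for _ in range(extra)]


# Toy data: 5 of 100 records come from people with disabilities (made-up numbers).
data = [{"disability": False}] * 95 + [{"disability": True}] * 5
balanced = oversample(data, lambda r: r["disability"], target_share=0.2)
share = sum(r["disability"] for r in balanced) / len(balanced)
print(len(balanced), round(share, 3))
```

Resampling repeats the same few individuals, so it cannot add the variety of needs and experiences that broader participation would bring.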

Newman-Griffis et al. note that this is especially true for older adults with disabilities, who often cannot benefit from intelligent eldercare products due to the complexity of installation and use, resulting in imperfect support facilities. 52

AI-assisted decision-making can be biased due to specific design decisions, including problem formulation and data selection. Interpretations of disability can lead to biased AI design. Moreover, a lack of transparency and disabled participation during AI design can lead to inappropriate disability definitions and potential harm. Teng and Ren propose a framework to critically examine AI technologies in decision-making contexts and guide the development of a design praxis for AI analytics related to disabilities. The framework includes critical questions for disability-led design and participatory action to create more equitable AI technologies in disability contexts. 49

An AI-based medical ethics advisor tool has been developed by Wald. 51 Since people with disabilities often experience health disparities and social challenges, it is crucial to ensure that such AI-based medical ethics tools prioritize equitable implementation and consider the unique needs and experiences of people with disabilities. 51 To create more disability-responsive AI tools, developers should incorporate scoring systems that consider clinicians’ knowledge of patients’ needs. Additionally, approaches to evaluating a patient’s quality of life may need to adequately address the subjective nature of this assessment, particularly for people with disabilities. Therefore, AI tools should be trained to identify and address disability bias in quality-of-life reviews, ensuring fair and equitable decision-making processes for all patients. 51

Protecting against algorithmic discrimination, whether intentional or unintentional, requires enacting

People with disabilities face disproportionately high unemployment rates compared to the general population, and AI further perpetuates discrimination against people with disabilities during recruitment. Packin proposes that AI developers must

Lack of a disability justice approach to AI

AI-powered recruitment tools promise efficiency and reduce human bias but often fail to consider disability-related preferences and can reinforce problematic assumptions about disabilities. 54

To address these concerns, a

To ensure

The current workflow for

Discussion

Addressing disability justice

Of 45 articles, 35 (76.09%) discussed AI for disability, and 11 addressed challenges. Of these 35, 25 (71.43%) were medically focused. In comparison, six articles addressed assistive technologies for people with disabilities (17.14%), and only four articles (11.43%) addressed disability justice issues and hence focused on the social context of people with disabilities. However, one of the four articles technically focused on AI development, 44 and two belonged to the same study addressing disability justice.4,12 Hence, we conclude that only two studies out of 35 (5.71%) addressed disability justice issues. This reflects a social context where the medical model of disability is prevalent in AI.

There is a lack of use of the social model of disability in AI research. The social model focuses on the societal and environmental obstacles that disable individuals rather than solely attributing disability to an impairment. 57 There is a need for interdisciplinary research between AI researchers and disability researchers to expand the understanding of disability beyond impairment to the social context and to empower people with disabilities in the engineering and computer science fields. It is crucial to uphold the “nothing about us without us” principle in these fields to ensure that the disability community’s voice is heard and represented in AI research. 58

This can be achieved through concerted interdisciplinary research involving critical disabilities, social work, and health informatics researchers and by increasing targeted funding to advance the social agenda related to people with disabilities in AI.59,60 Involving people with disabilities in collaborative efforts can contribute to developing more inclusive AI systems that prioritize the needs and rights of individuals with disabilities. Additionally, integrating the perspectives of people with disabilities into AI research can help address biases and ensure that AI technologies are leveraged to advance equity and justice. 61

Prevalence of the medical perspective

The high prevalence of the medical perspective in the use of AI for people with disabilities is reflected in this review. This perspective sees disability primarily as a medical issue that requires treatment or management. It focuses on diagnosing and predicting the onset or progression of diseases or disabilities. For example, AI has been used to predict the progression of multiple sclerosis (MS) and to diagnose autism spectrum disorders, often utilizing clinical data and neuroimaging biomarkers.19,62,63 Such applications underscore the dominance of the medical model, which prioritizes technological solutions for managing health conditions over addressing social and environmental factors that contribute to disability. 64 The emphasis on medicalization has been criticized for overlooking the broader social impacts and the rights of individuals with disabilities. 65 The failure to incorporate the social model of disability into AI research underscores the necessity of a comprehensive approach that takes into account the societal obstacles and prejudice experienced by people with disabilities. 57

Assistive perspective: Missing the broader implications

While studies on AI for people with disabilities have covered assistive technologies related to life experiences, communication, and support for older people, 66 these applications raise serious concerns about data security and individual privacy. This is especially pertinent when the individuals concerned are people with developmental or intellectual disabilities. AI-driven assistive technologies often collect and process sensitive personal data, which can be vulnerable to misuse and breaches.67,68 Despite these significant concerns, the interplay between intellectual disability and privacy has received little attention in AI research.

The challenges faced by people with developmental or intellectual disabilities significantly impact their ability to consent and make decisions, affecting their privacy and autonomy. 69 Unlike elderly individuals or those with physical disabilities, this population often lacks decision-making capacity and is hindered by communication or literacy issues. 70 By the time privacy breaches are recognized, people with developmental disabilities may have already lost some privacy rights. Therefore, it is crucial, from a policy perspective, to increase awareness, education, and knowledge tools for caregivers and service providers to help protect privacy rights for people with developmental or intellectual disabilities effectively. Future research should focus on developing robust privacy frameworks to protect individuals with intellectual disabilities from potential data exploitation and ensure that these technologies are ethically designed and implemented.71–73 It is essential to address these issues to build trust and guarantee the ethical use of AI in assistive technologies.

Disability risk: A narrow view

The use of AI to manage disability at work aligns with previous reviews that covered the use of AI to predict absenteeism and temporary disability. 74 However, out of 58 articles reviewed, none discussed the social implications of human rights approaches. There is a notable tendency to use AI from a technological perspective to achieve effectiveness and efficiency, often neglecting the broader impacts of technology use on social justice and human rights.50,53 This narrow perspective overlooks the socio-economic factors that influence disability and can lead to biased outcomes that disadvantage already marginalized groups. 75 Broadening AI research and implementation is crucial to incorporate considerations of equity, inclusion, and the human rights of individuals with disabilities.

From AI ableism to knowledge creators

Previous studies have shown that individuals with disabilities are often portrayed as users of AI and machine learning (ML) technologies with a techno-optimistic tone in various sources, including academic literature, Canadian newspapers, and Twitter. 76 However, these individuals are rarely depicted as knowledge producers or influencers in AI/ML discussions. This exclusion highlights the need for a more diverse representation of disabled people in AI/ML contexts to ensure they become meaningful contributors and beneficiaries in AI/ML discussions. 77 The current ableist approach in AI research overlooks the valuable perspectives and expertise that people with disabilities can bring to the development and governance of AI technologies. 49 To bridge this gap, it is crucial to promote inclusive approaches that acknowledge and empower people with disabilities as valuable contributors and ethical leaders in the AI/ML domain. 78

Bias is off the radar: The absence of debiasing strategies

The articles that discussed prediction did not consider bias or measure it across different subgroups, such as by gender or type of disability. This indicates a need for more awareness, research, and reporting on debiasing strategies in AI. Acknowledging and tackling bias in AI is essential to prevent it from perpetuating and worsening existing disparities and to ensure fair and just results for all users, particularly those in marginalized communities, such as individuals with disabilities.79,80 AI systems trained on biased data can perpetuate discriminatory practices and further entrench social biases, which is especially problematic in contexts such as healthcare, employment, and social services.81,82

It is crucial to incorporate strategies to mitigate bias into the creation and implementation of AI technologies. This includes using varied datasets, utilizing machine learning algorithms that prioritize fairness, and regularly monitoring AI systems for biased results.83,84 Furthermore, introducing ethical considerations and awareness of bias into the education of AI researchers can encourage a more responsible approach to AI development. 61 Emphasizing the potential risks of AI, such as bias, and the actions taken to mitigate them should be a key focus in research to guarantee that AI technologies benefit all members of society equitably.
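As one concrete form of monitoring for biased results, a prediction model's true positive rate can be compared across subgroups. The sketch below is a hedged illustration: this metric (sometimes called the equal opportunity gap) is our choice among many possible fairness measures, the function names are hypothetical, and the toy rows are made up rather than drawn from any reviewed study.

```python
from collections import defaultdict


def true_positive_rate_by_group(rows):
    """rows: iterable of (group, y_true, y_pred) with binary 0/1 labels."""
    hits = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in rows:
        if y_true == 1:
            positives[group] += 1
            hits[group] += int(y_pred == 1)
    # Groups with no positive cases are omitted because the rate is undefined.
    return {g: hits[g] / positives[g] for g in positives}


def tpr_gap(rows):
    """Largest difference in true positive rate between any two groups."""
    rates = true_positive_rate_by_group(rows)
    return max(rates.values()) - min(rates.values())


# Toy predictions for two hypothetical subgroups, A and B.
rows = (
    [("A", 1, 1)] * 3 + [("A", 1, 0)] * 1 + [("A", 0, 0)] * 4
    + [("B", 1, 1)] * 1 + [("B", 1, 0)] * 3 + [("B", 0, 0)] * 4
)
print(true_positive_rate_by_group(rows), tpr_gap(rows))
```

In this toy case the model detects three of four true cases in group A but only one of four in group B, the kind of disparity that goes unnoticed when only overall accuracy is reported.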

Study limitations

While the results of this study can inform future research, it has limitations. First, we have excluded grey literature and focused on scholarly evidence. This decision may have limited the scope of the review and excluded potentially valuable insights from non-academic sources, such as reports, policy documents, and the perspectives of people with disabilities. Second, this review primarily focused on summarizing the findings and identifying key themes. While this approach aligns with the exploratory nature of scoping reviews, it may limit the ability to assess the robustness and reliability of the evidence base. Also, this review’s inclusion criteria focused on studies published in the last 5 years, limited to journal articles and conference papers. This narrow timeframe and source selection excluded older but still relevant research, potentially restricting the comprehensiveness of the review.

Recommendations

Based on our findings, seven recommendations and implications can be drawn to enhance the development and implementation of AI for people with disabilities:

Conclusion

This systematic scoping review highlights the transformative potential of AI in enhancing healthcare and improving the lives of people with disabilities. However, it also reveals a concerning prevalence of the medical model of disability and ableist perspectives within AI research. To harness the full potential of AI for social good, a paradigm shift is necessary to emphasize a disability justice approach, prioritize inclusivity, and address biases in AI algorithms and datasets.

Researchers must actively engage with and prioritize the social model of disability, recognizing its multifaceted nature and the importance of addressing societal barriers. Interdisciplinary collaboration between AI experts, disability scholars, and people with disabilities is crucial to ensure that AI technologies are developed and implemented inclusively and equitably.

Policymakers and practitioners also play a pivotal role in shaping the future of AI by enacting policies that promote ethical and responsible use, safeguard the rights and interests of people with disabilities, and ensure the accessibility and affordability of AI technologies.

By adopting a disability justice approach, prioritizing inclusivity, and addressing biases, we can ensure that AI technologies are used to create a more equitable and empowering future for people with disabilities.

Footnotes

Acknowledgements

The authors express their sincere gratitude to those colleagues within the School of Health Policy and Management at York University who exemplify an equitable, inclusive, innovative, and supportive environment, which has been instrumental in fostering this research. The authors are also deeply grateful for the vibrant intellectual community within the Center for Feminist Research at York University; its commitment to inclusivity, innovation, and collaboration has inspired this research and enriched our experience as scholars.

Author contributions

C.E., R.G., and Y.E. designed the study and received the funds. C.E. supervised B.K., F.M., and S.T., who performed and reported the summaries. C.E. verified the analysis and prepared the first draft. All authors provided critical feedback and revised it; they approved the final version and agreed to be accountable for all aspects of the submitted paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is funded by the Social Sciences and Humanities Research Council (SSHRC) (Grant No. 872-2022-1025).