Abstract

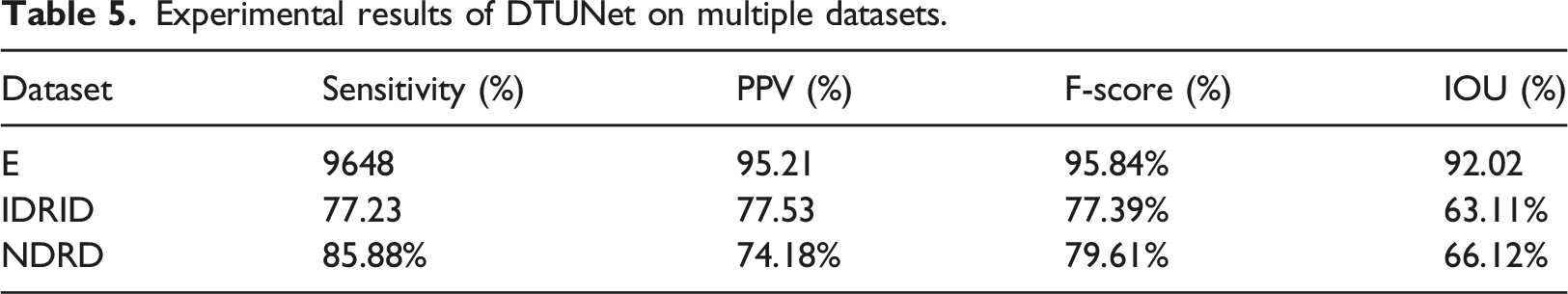

Objectives: In this article, we provide a database of nonproliferative diabetic retinopathy that focuses on early diabetic retinopathy with hard exudates, and we further explore its clinical application in disease recognition. Methods: We collected photographs of nonproliferative diabetic retinopathy taken with an Optos Panoramic 200 scanning laser ophthalmoscope, filtered out poor-quality images, and labeled the hard exudate lesions in the images under the guidance of medical professionals. To validate the effectiveness of the dataset, five deep learning models were trained and evaluated on it, and their performance was measured with standard evaluation metrics. Results: The lesions of nonproliferative diabetic retinopathy are smaller than those of proliferative retinopathy and more difficult to identify. Existing segmentation models segment these lesions poorly, whereas a model designed for small lesions reaches an intersection over union (IOU) of 66.12%, higher than general-purpose segmentation models, although there is still considerable room for improvement. Conclusion: Segmenting small hard exudate lesions is more challenging than segmenting large ones, and more targeted datasets are needed for model training. Compared with previous diabetic retinopathy datasets, the NDRD dataset pays more attention to small lesions.

Introduction

Diabetic retinopathy (DR) is a major cause of irreversible visual impairment and blindness worldwide. About one-third of patients with diabetes develop DR. 1 DR is one of the most common microvascular complications of diabetes: chronic, progressive diabetes causes leakage and blockage of the retinal microvessels, resulting in a series of fundus lesions such as microaneurysms, hard exudates, cotton wool spots, neovascularization, vitreous proliferation, macular edema and even retinal detachment. 2 DR can be divided into proliferative and nonproliferative diabetic retinopathy according to whether abnormal new vessels have grown from the retina. The severity of DR is related to the duration of diabetes and the degree of blood glucose control. Patients are generally asymptomatic in the early stages; as the disease progresses, symptoms such as blurred vision, floaters or shadows, and impaired vision begin to appear. DR should be treated as soon as it is detected: with early treatment, 90% of patients can retain their vision, whereas if it is detected late, the resulting visual impairment can seriously affect patients' quality of life and even lead to blindness. 3

Retinal hard exudates are of great significance. In patients with nonproliferative diabetic retinopathy, a large number of hard exudates are the main reason for the decline in vision, and are an important sign of blindness caused by diabetic macular edema (DME) and other eye diseases. As one of the manifestations of diabetic retinopathy,

4

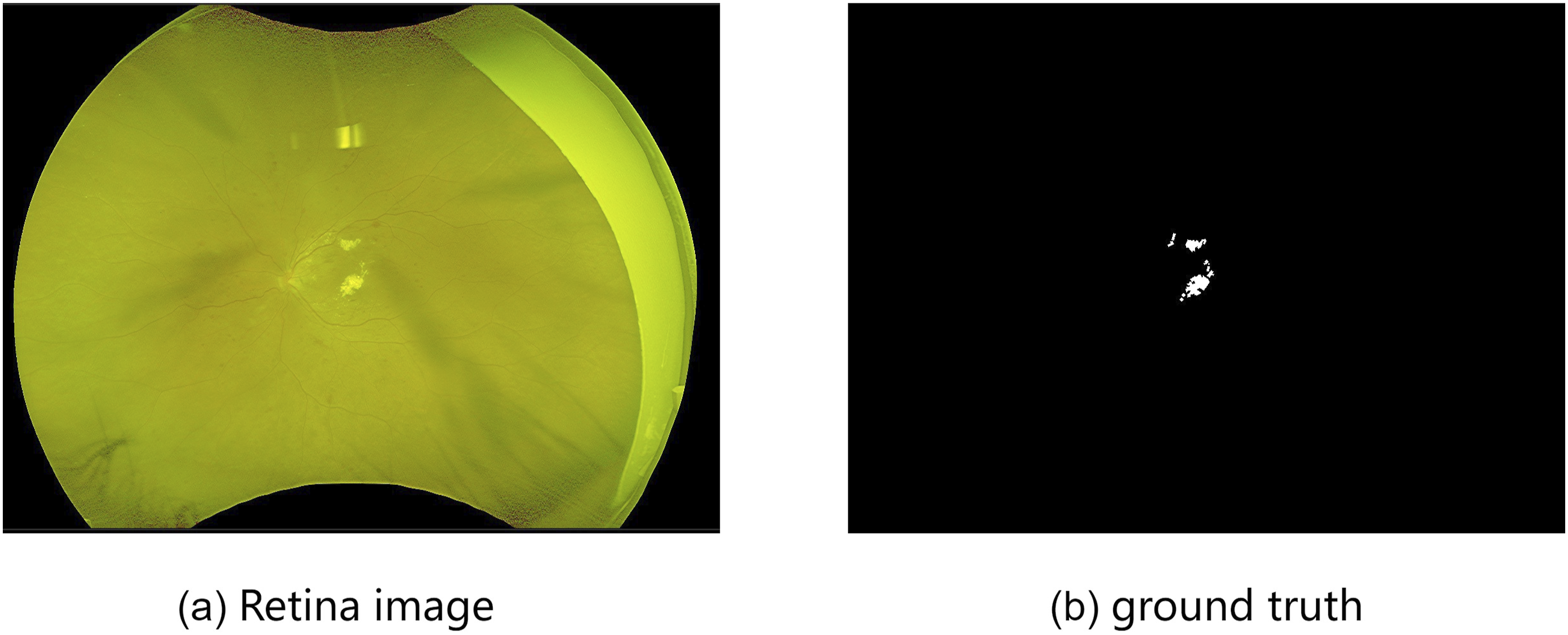

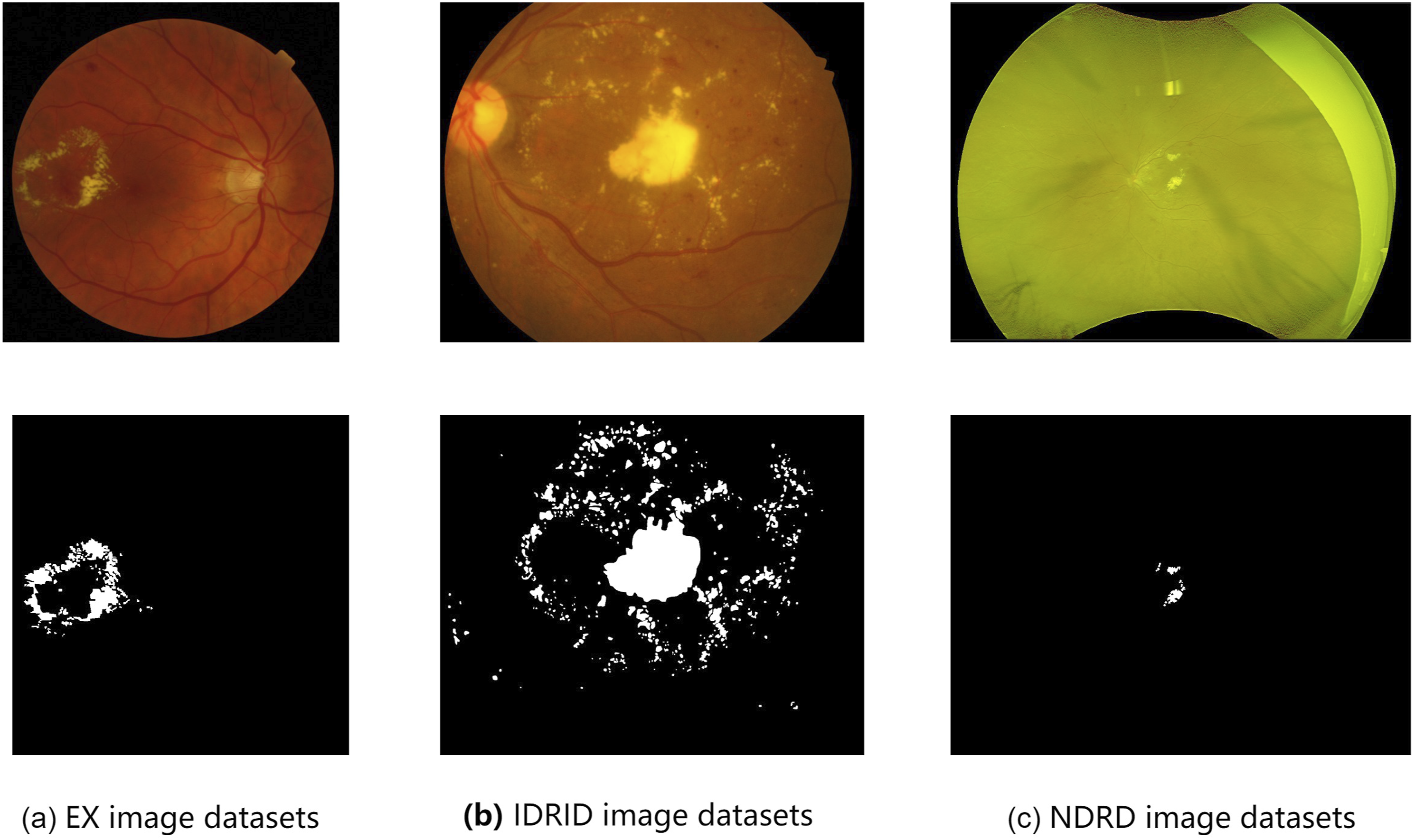

retinal hard exudates are the key to realize DR automatic detection. Figure 1 shows the pixel level labels of a fundus retinal image and the hard exudation. Examples of retinal images with hard exudation. (a) Retina image, (b) ground truth.

Early screening for diabetic retinopathy remains a difficult problem. Identifying fundus hard exudates requires years of experience, and doctors generally identify them by visual inspection, so careful screening inevitably takes a long time. Moreover, there are not enough ophthalmologists to carry out large-scale screening, which makes early hard exudates difficult to detect, especially in places with poor medical facilities. Yet if hard exudates can be diagnosed in the early stages, patients' visual impairment can be greatly reduced. Automating the screening of fundus hard exudates by computer could greatly shorten screening time and reduce doctors' diagnostic workload.

Traditional methods for detecting fundus hard exudates mainly include morphological methods,5,6 threshold-based methods,7,8 clustering-based detection9,10 and region-growing detection.11,12 Later, researchers proposed using machine learning to detect hard exudates automatically. Traditional machine learning methods, however, rely heavily on feature engineering, and deep learning now occupies a leading position in medical image segmentation. The basic idea of deep learning is to build a multi-layer network that represents the target at multiple levels, expressing the abstract semantic information of the data through high-level features and thereby obtaining more robust features. Deep learning has also been applied to fundus image analysis, where a series of innovations have improved accuracy.

However, limitations inherent in the public datasets mean that powerful methods developed in theoretical studies fall short of expectations in clinical practice, for two reasons. (1) Most hard exudates in the public datasets come from mid-to-late-stage images, in which the exudates are already largely concentrated and clustered. Consequently, many current methods are better at segmenting large hard exudates than at identifying small ones. (2) The current publicly available fundus image datasets were acquired with ordinary fundus cameras, which capture only a 45° to 50° field of view (FOV). However, with advances in medicine, ordinary fundus cameras are now used less and less in clinical practice.

Our work aims to alleviate these problems. First, to bring the experimental data closer to clinical data, segment DR lesions better at an early stage, effectively slow disease progression and reduce loss of vision, we used the Optos ultra-widefield fundus camera to capture color fundus images and selected images at an early stage of fundus hard exudation, so that the hard exudates in the dataset are small and scattered. This helps deep learning networks learn to handle small, scattered hard exudates. Second, we provide a DR dataset named NDRD for DR lesion segmentation, consisting of 77 images with pixel-level labels. Third, we evaluated five deep learning models on NDRD. The results show that the method proposed in 13 (DTUNet) performs best.

In summary, the contributions of this paper are as follows:
• A new dataset called NDRD is presented. To our knowledge, this is the first time that fundus images taken with an Optos camera have been used for segmentation of fundus hard exudates.
• Advanced semantic segmentation models and a network designed specifically for segmenting fundus hard exudates were evaluated on the NDRD dataset. The experimental results show that segmentation of small lesions must improve further before deep learning models can be applied in clinical practice.

Materials and methods

In semantic segmentation, and especially in medical image segmentation, high-quality image databases are crucial for reliable evaluation and analysis of segmentation networks. To evaluate a network, the medical images must be provided together with their ground truth and relevant information about them. This section describes the database, including the pathological images and their corresponding ground truth.

Dataset collection

The data were collected from patients at a hospital in Shanxi between January 2021 and December 2021. The male-to-female ratio is 11:9, and patients' ages range from 45 to 66 years. To ensure the quality of the dataset, the following points were observed during data collection: (1) patients with a high level of cooperation were chosen; (2) patients with clear refractive media were chosen; (3) patients' pupils were dilated before the examination. The images were first screened by professional doctors, and images in which more than one-quarter of the fundus area could not be identified, as well as data with missing medical records, were excluded. Single-field images were acquired, following these principles: first, the center of the fundus image should lie at the midpoint of the line between the optic disc and the fovea; second, the focus should be sharp, so that structures such as the optic disc, retinal blood vessels, retinal nerve fiber layer and macular fovea are clearly visible in the retina of healthy people, and, for patients with DR, the lesions are clear and readable. More than 77 images were originally collected, but because of image quality, only 77 fundus images of diabetic retinopathy could be used in the experiments after screening. Images were excluded because (1) long eyelashes occluded the fundus, or (2) the images were blurred and the lesion points were unclear.

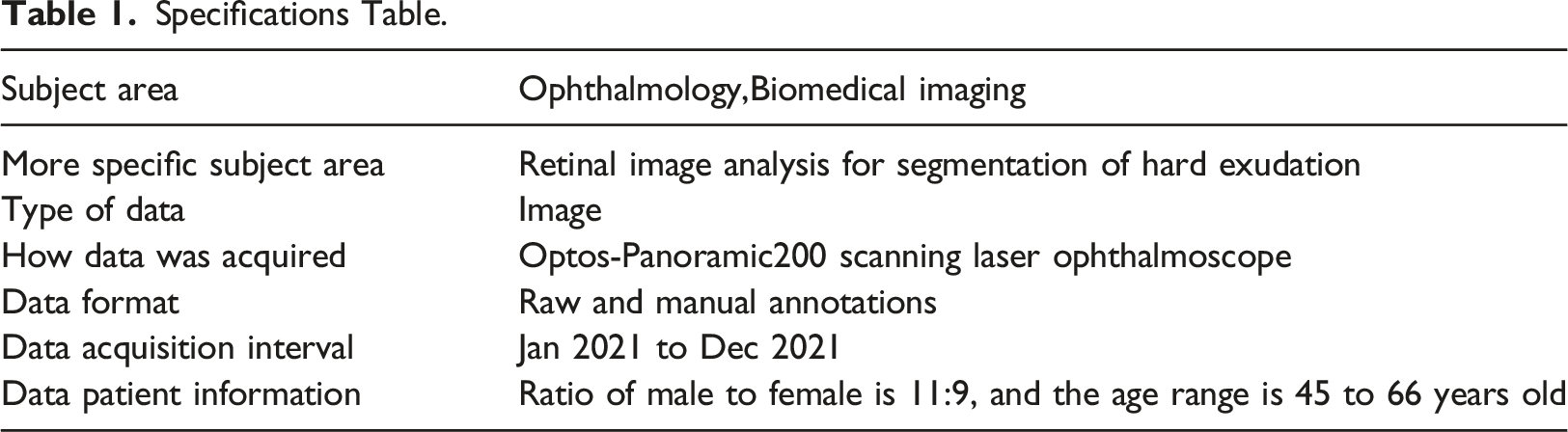

Specifications Table.

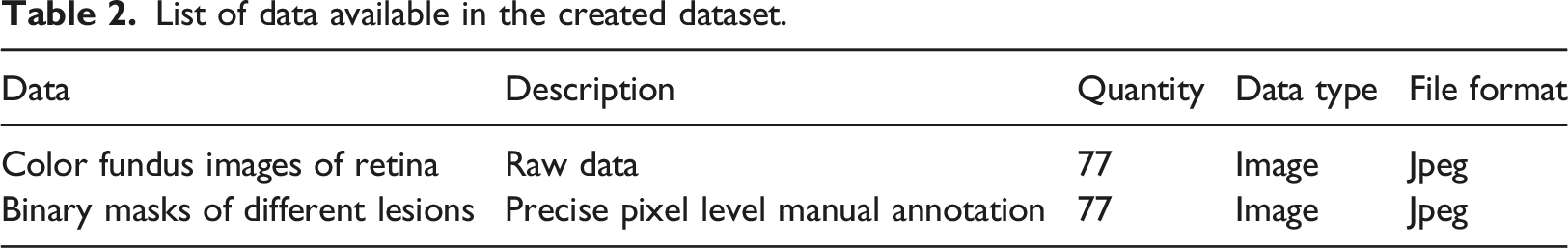

List of data available in the created dataset.

As a dataset for segmentation of nonproliferative fundus hard exudates, the present dataset meets the universal quantitative criteria.

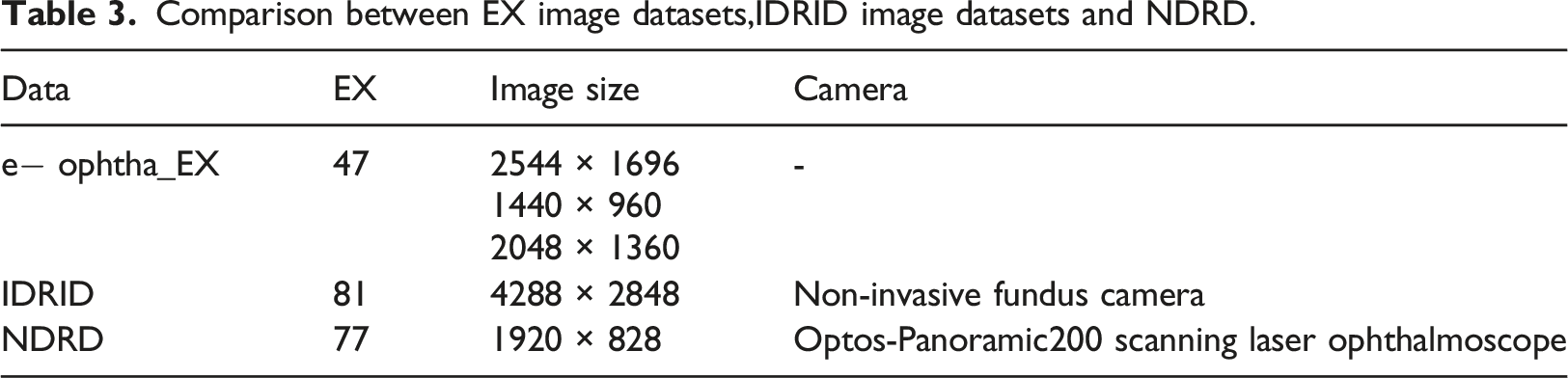

Comparison between the EX, IDRiD and NDRD image datasets. (a) EX image dataset, (b) IDRiD image dataset, (c) NDRD image dataset.

Dataset annotation

The ground truth for the color fundus images in our dataset was annotated at the pixel level under the guidance of medical experts using the annotation tool labelme, 14 an image annotation tool developed by the Computer Science and Artificial Intelligence Laboratory at the Massachusetts Institute of Technology that allows users to create customized annotation tasks or perform image annotation. The computer monitor used for annotation was not calibrated. After the initial annotation of hard exudate lesions in a color fundus image, an expert carefully reviewed it, correcting and adding annotations, especially for isolated individual spots, which are of greater value for the early identification of hard exudates; this made the annotations more accurate and complete. Each annotated image was saved as a JSON file with the same name, from which the color fundus image and the corresponding ground truth can be extracted.
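Because the ground truth is stored inside labelme's JSON files, a binary mask has to be rasterized from the annotated polygons before training. The sketch below is not the authors' conversion code; it assumes the usual labelme JSON layout (`imageHeight`, `imageWidth`, and a `shapes` list whose entries carry `label`, `points` as [x, y] pairs, and `shape_type`) and a hypothetical exudate label name `"EX"`. A pixel is marked when its centre lies inside a polygon (even-odd rule), which may differ at lesion borders from labelme's own conversion utilities.

```python
import json

import numpy as np


def point_in_polygon(x, y, polygon):
    """Even-odd (ray casting) test: is the point (x, y) inside the polygon?"""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def labelme_to_mask(annotation, label="EX"):
    """Rasterize the polygons carrying `label` into a binary H x W mask.

    A pixel (row r, column c) is set when its centre (c + 0.5, r + 0.5)
    lies inside a polygon; labelme stores points as [x, y].
    The default label "EX" is a hypothetical class name.
    """
    h, w = annotation["imageHeight"], annotation["imageWidth"]
    mask = np.zeros((h, w), dtype=np.uint8)
    for shape in annotation["shapes"]:
        if shape["label"] != label or shape.get("shape_type", "polygon") != "polygon":
            continue
        pts = [tuple(p) for p in shape["points"]]
        xs = [p[0] for p in pts]
        ys = [p[1] for p in pts]
        # Only test pixels inside the polygon's bounding box.
        r0, r1 = max(int(min(ys)), 0), min(int(max(ys)) + 1, h)
        c0, c1 = max(int(min(xs)), 0), min(int(max(xs)) + 1, w)
        for r in range(r0, r1):
            for c in range(c0, c1):
                if point_in_polygon(c + 0.5, r + 0.5, pts):
                    mask[r, c] = 1
    return mask


def load_mask(json_path, label="EX"):
    """Read a labelme JSON file and return its hard exudate mask."""
    with open(json_path, encoding="utf-8") as f:
        return labelme_to_mask(json.load(f), label)
```

Restricting the test to each polygon's bounding box keeps this pure-Python loop usable for small, scattered exudates; a vectorized rasterizer would be preferable for large polygons.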

Experiments and analysis of results

Preprocessing

The main objectives of image preprocessing are to eliminate irrelevant information from the image, recover useful and genuine information, enhance the detectability of the relevant information and simplify the data as far as possible, thereby improving the reliability of feature extraction, image segmentation, matching and recognition.

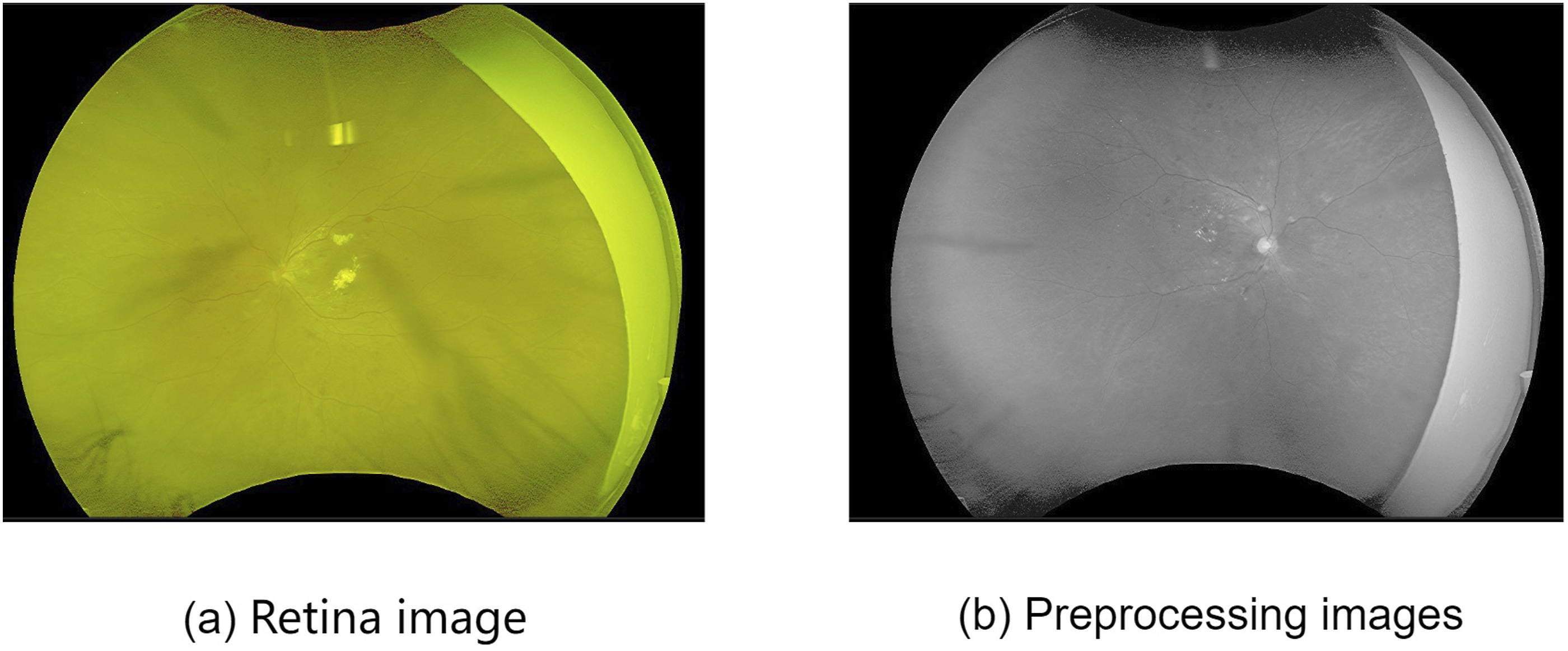

The images in the fundus hard exudate dataset are all in RGB format. The G (green) channel shows the best contrast in retinal images, 15 clearly distinguishing fundus structures from the background, so the G channel was chosen for subsequent image segmentation. Figure 3 shows an original image sample and the corresponding preprocessed image.

Before and after image preprocessing comparison. (a) Retina image, (b) preprocessed image.
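Selecting the G channel amounts to taking index 1 of the last axis of the image array. A minimal sketch, assuming images are already loaded as H × W × 3 NumPy arrays; the min-max rescaling to [0, 1] is our assumption and is not described in the text.

```python
import numpy as np


def green_channel(rgb):
    """Return the green (G) channel of an H x W x 3 image as float32 in [0, 1]."""
    if rgb.ndim != 3 or rgb.shape[2] != 3:
        raise ValueError("expected an H x W x 3 array")
    # Index 1 is green in both RGB and BGR channel orders.
    g = rgb[..., 1].astype(np.float32)
    # Min-max rescaling (an assumption, not a step stated in the paper).
    lo, hi = g.min(), g.max()
    return (g - lo) / (hi - lo) if hi > lo else np.zeros_like(g)
```

Because green sits in the middle position in both RGB and BGR order, the same indexing works whichever image library loaded the file.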

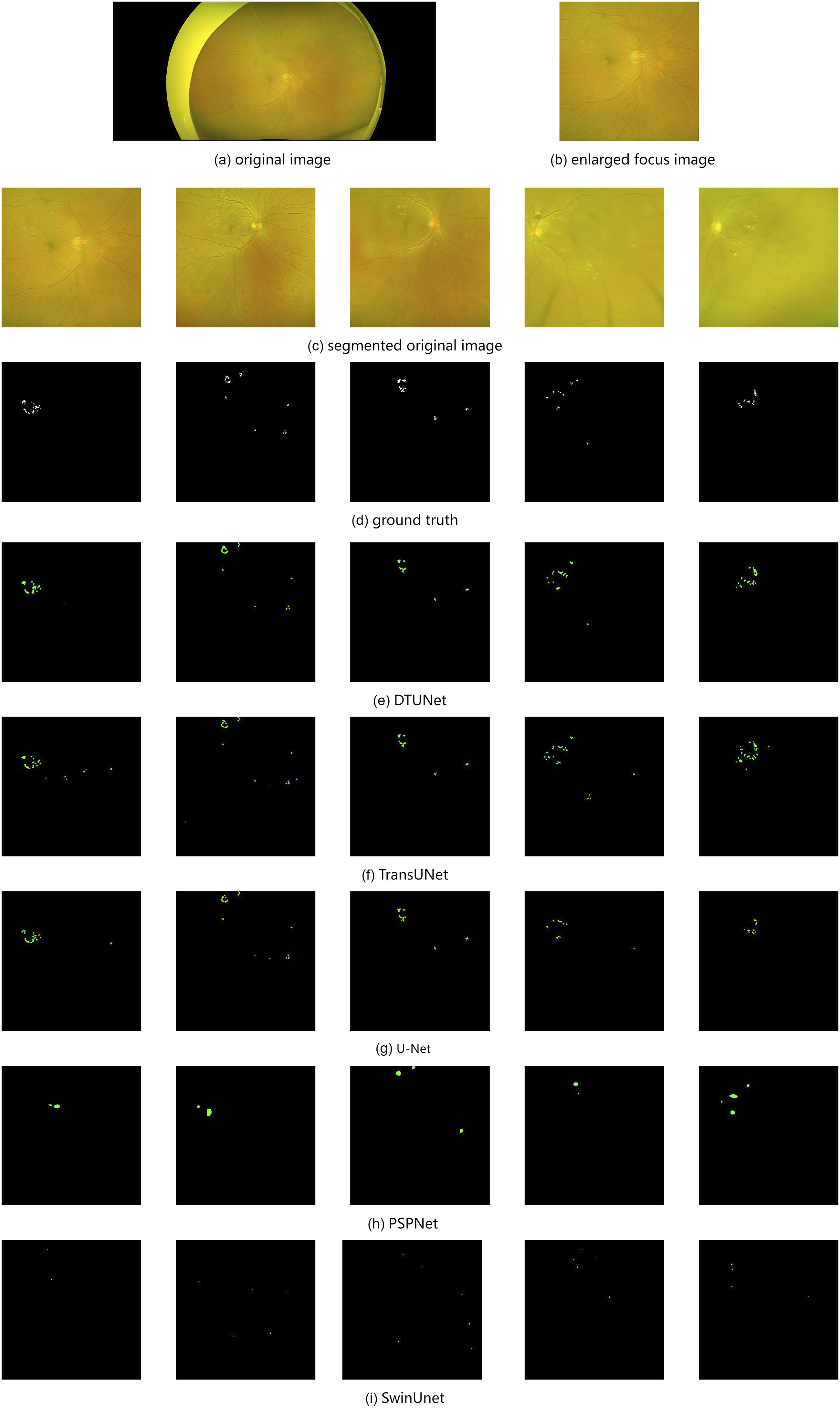

Because hard exudates occupy only a small proportion of a fundus image, and in this dataset the early hard exudate lesions are especially small and scattered, the class imbalance problem is aggravated. Therefore, each fundus image was cut into 512 × 512 pixel patches, and patches that contained no lesions were discarded, increasing the proportion of hard exudate pixels. After prediction, the patches were stitched back together for evaluation.
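The patch-based scheme can be sketched as follows. The non-overlapping tiling and the zero padding at the right and bottom borders are assumptions, since the text states only the 512 × 512 patch size, the removal of lesion-free patches and the stitching of predictions; the function names `tile` and `stitch` are hypothetical.

```python
import numpy as np


def tile(image, mask, size=512, drop_empty=True):
    """Split an image and its mask into non-overlapping size x size patches.

    Borders are zero-padded up to a multiple of `size` (an assumption).
    Returns a list of (row, col, image_patch, mask_patch).
    """
    h, w = mask.shape
    ph, pw = -h % size, -w % size
    image = np.pad(image, [(0, ph), (0, pw)] + [(0, 0)] * (image.ndim - 2))
    mask = np.pad(mask, [(0, ph), (0, pw)])
    patches = []
    for r in range(0, h + ph, size):
        for c in range(0, w + pw, size):
            m = mask[r:r + size, c:c + size]
            if drop_empty and not m.any():
                continue  # discard patches without any hard exudate pixel
            patches.append((r, c, image[r:r + size, c:c + size], m))
    return patches


def stitch(predictions, shape, size=512):
    """Reassemble (row, col, patch) predictions into a map of the original shape."""
    h, w = shape
    out = np.zeros((h + (-h % size), w + (-w % size)), dtype=predictions[0][2].dtype)
    for r, c, p in predictions:
        out[r:r + size, c:c + size] = p
    return out[:h, :w]  # crop away the padding
```

Lesion-free patches should only be dropped when building the training set; at inference the lesion mask is unknown, so every patch (`drop_empty=False`) has to be predicted before stitching.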

DR lesion segmentation and results

NDRD addresses a semantic segmentation task in computer vision. To evaluate the difficulty of DR lesion segmentation on NDRD, five semantic segmentation models were tested on this dataset: PSPNet, 16 U-Net, 17 TransUNet, 18 Swin-Unet 19 and DTUNet. 13 We implemented PSPNet in the PyTorch framework with a ResNet backbone used to initialize the parameters. U-Net was also implemented in PyTorch, with a VGG16 backbone used to initialize the parameters. TransUNet, Swin-Unet and DTUNet were likewise implemented in PyTorch, using backbone networks pre-trained on ImageNet. 20 The parameters were optimized with stochastic gradient descent (SGD) with a learning rate of 0.01, a momentum of 0.9 and a weight decay of 0.0005. All experiments were run on a single GPU in an Inspur NF5280M5 rack server.
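In PyTorch, the stated optimizer settings correspond to `torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.9, weight_decay=0.0005)`. To make explicit what the three hyperparameters do, the dependency-free sketch below applies one update of SGD with momentum and L2 weight decay to scalar parameters, following the update rule in the PyTorch `SGD` documentation with its defaults (no dampening, no Nesterov momentum). It is an illustration, not the training code used in the experiments; any learning-rate schedule is unspecified in the text and is not modelled.

```python
def sgd_momentum_step(params, grads, buffers, lr=0.01, momentum=0.9, weight_decay=0.0005):
    """One SGD step with momentum and L2 weight decay on scalar parameters.

        d = g + weight_decay * p      # weight decay added to the gradient
        b = momentum * b + d          # momentum buffer
        p = p - lr * b                # parameter update

    Buffers start at 0.0, which makes b = d on the first step.
    """
    new_params, new_buffers = [], []
    for p, g, b in zip(params, grads, buffers):
        d = g + weight_decay * p
        b = momentum * b + d
        new_params.append(p - lr * b)
        new_buffers.append(b)
    return new_params, new_buffers


# Two steps on a single parameter p = 1.0 with a constant gradient of 0.5.
params, buffers = sgd_momentum_step([1.0], [0.5], [0.0])
params, buffers = sgd_momentum_step(params, [0.5], buffers)
```

With momentum 0.9 the second step is almost twice as large as the first, because the buffer accumulates past gradients; the weight-decay term contributes only about 0.1% of the gradient here.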

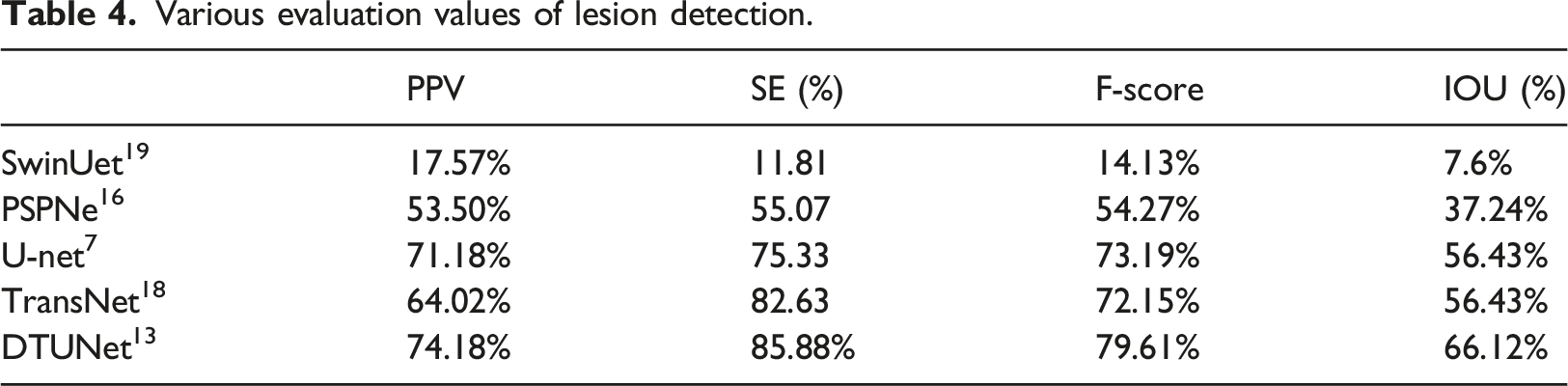

The segmentation results of each model are shown in Figure 4, and four evaluation metrics for each model are given in Table 4: sensitivity, positive predictive value, F-score and intersection over union. DTUNet performs best, but it still falls short of clinical needs, and the experimental results suggest that there is still room for improvement in segmenting small, early hard exudates in the fundus.

Segmentation results of the five segmentation networks. Because the hard exudate areas are small, the lesion region is enlarged. (a) Original image. (b) Enlarged lesion region. (c) Segmented original image. (d) Ground truth. (e) DTUNet. (f) TransUNet. (g) U-Net. (h) PSPNet. (i) SwinUnet.

Various evaluation values of lesion detection.

Experimental results of DTUNet on multiple datasets.

Evaluation metrics

The segmentation effects of different segmentation methods on the dataset were evaluated at the pixel level. The commonly used pixel level evaluation method generally calculates the number of correctly classified pixels in all classifications, but this method is not suitable for the segmentation evaluation of hard exudation. In the daily evaluation, when doctors detect hard exudates, they cannot make the exudates completely match the images.

21

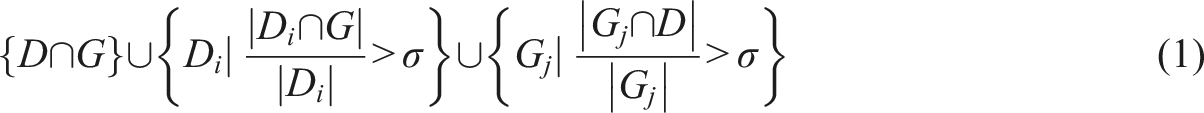

proposed a hybrid verification method, which requires the minimum overlap between the ground truth and candidate. We followed this article and redefined True Positives (TP), False Positives (FP), and False Negatives (FN). We used

A pixel is considered a true positive if, and only if, it belongs to any of the following sets:

Where

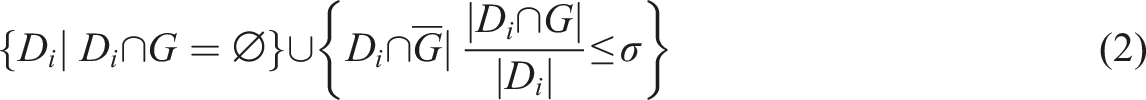

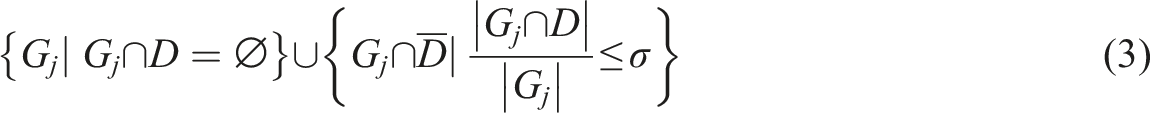

Here σ is set to 0.2. 22

A pixel is considered a false positive if, and only if, it belongs to any of the following sets:

A pixel is considered a false negative if, and only if, it belongs to any of the following sets:

All other pixels are considered as True Negatives (TN).
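The exact set definitions follow 21. The sketch below implements one plausible reading of such a component-overlap criterion and should be treated as an assumption rather than the paper's formulas: a predicted connected component whose overlap with the ground truth exceeds σ counts entirely as TP, otherwise its pixels outside the ground truth are FP; symmetrically, a ground-truth component covered by the prediction over more than σ of its area counts entirely as TP, otherwise its missed pixels are FN. The 4-connectivity and the function names are likewise our assumptions.

```python
from collections import deque

import numpy as np


def label_components(mask):
    """4-connected component labelling by breadth-first search."""
    h, w = mask.shape
    labels = np.zeros((h, w), dtype=np.int32)
    n = 0
    for r in range(h):
        for c in range(w):
            if mask[r, c] and labels[r, c] == 0:
                n += 1
                labels[r, c] = n
                queue = deque([(r, c)])
                while queue:
                    y, x = queue.popleft()
                    for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                        if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and labels[ny, nx] == 0:
                            labels[ny, nx] = n
                            queue.append((ny, nx))
    return labels, n


def hybrid_counts(pred, gt, sigma=0.2):
    """Return (TP, FP, FN) pixel counts under a component-overlap criterion."""
    pred, gt = pred.astype(bool), gt.astype(bool)
    tp = pred & gt                  # exact pixel agreement is always TP
    fp = np.zeros_like(pred)
    fn = np.zeros_like(gt)
    labels, n = label_components(pred)
    for i in range(1, n + 1):
        comp = labels == i
        if (comp & gt).sum() / comp.sum() > sigma:
            tp |= comp              # well-matched candidate: whole component TP
        else:
            fp |= comp & ~gt        # poorly matched candidate: unmatched part FP
    labels, n = label_components(gt)
    for j in range(1, n + 1):
        comp = labels == j
        if (comp & pred).sum() / comp.sum() > sigma:
            tp |= comp              # well-detected lesion: whole component TP
        else:
            fn |= comp & ~pred      # poorly detected lesion: missed part FN
    return int(tp.sum()), int(fp.sum()), int(fn.sum())
```

With σ = 0.2, a candidate that overlaps a lesion over more than 20% of its area is credited in full, which tolerates the boundary disagreements between annotators described above, while a lesion that is missed entirely still contributes all of its pixels to FN.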

In this paper, four metrics are used to evaluate the segmentation methods: sensitivity, SE = TP/(TP + FN); positive predictive value, PPV = TP/(TP + FP); the F-score, the harmonic mean of precision and recall, F-score = 2 × SE × PPV/(SE + PPV); and intersection over union, IOU = TP/(TP + FP + FN).
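The four metrics follow directly from the TP, FP and FN counts. A minimal sketch; reporting 0 when a denominator is zero is our convention, not something the text specifies.

```python
def segmentation_metrics(tp, fp, fn):
    """Return SE, PPV, F-score and IOU computed from TP/FP/FN pixel counts.

    A metric whose denominator is zero is reported as 0.0 (our convention).
    """
    se = tp / (tp + fn) if tp + fn else 0.0
    ppv = tp / (tp + fp) if tp + fp else 0.0
    f_score = 2 * se * ppv / (se + ppv) if se + ppv else 0.0
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    return {"SE": se, "PPV": ppv, "F-score": f_score, "IOU": iou}


# Hypothetical counts, for illustration only.
example = segmentation_metrics(tp=60, fp=20, fn=20)
```

Algebraically, F-score = 2·TP/(2·TP + FP + FN) = 2·IOU/(1 + IOU), so for the same pooled counts the F-score and IOU always rank models in the same order.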

Discussion and conclusion

Related datasets

In previous work, many public color fundus image databases have been used to study DR lesions.

The Kaggle DR dataset comes from a DR detection challenge held on Kaggle in 2015, 23 sponsored by the California Health Care Foundation. The images were taken with a variety of devices under a wide range of conditions at a number of primary care sites in California and elsewhere. For each subject, images of the left and right eyes were collected at the same resolution. In total, 88,702 color fundus images were collected, of which 35,126 were used for training and the remaining 53,576 for testing. A clinician graded the images on an Early Treatment Diabetic Retinopathy Study (ETDRS) scale, 24 ranging from 0 to 4. This is the largest publicly available DR classification dataset, but it has several drawbacks. First, because the dataset is intended for classification, it contains no manual annotation of lesion contours or locations. Second, it contains many poor-quality images that cannot be graded and are of little use for model training. Third, all images were graded by a single clinician, so the labels may be biased.

The DiaretDB1 22 database is a publicly available dataset of high-quality fundus images of DR. It consists of 89 color fundus images with a resolution of 1500 × 1152 pixels and a 50° FOV, but it is only coarsely annotated, not at the pixel level.

Messidor 25 was created by the TECHNO-VISION project, funded by the French Ministry of Defence Research in 2004, to evaluate lesion segmentation methods for color fundus images in the context of DR screening and diagnosis. It is also a large public fundus image database, with a total of 1200 fundus images (540 normal and 660 abnormal). The images were acquired by three ophthalmology departments using a color video 3CCD camera mounted on a Topcon TRC NW6 non-mydriatic retinograph with a 45° FOV, at resolutions of 1440 × 960, 2240 × 1488 and 2304 × 1536 pixels. Eight hundred images were acquired with pupil dilation and 400 without. Each image is accompanied by its DR grade and its risk of macular edema, and an expert manually annotated the optic disc. DR severity was graded into four levels based on the presence and number of microaneurysms, hemorrhages and neovascularization, and hard exudates were used to grade the risk of macular edema. This database also has drawbacks. First, it contains no manually annotated lesions. Second, deep neural networks trained on it are prone to overfitting. Third, its reference standard for DR grading is not consistent with international standards.

The e-ophtha 26 database is a collection of color fundus images designed especially for scientific research on DR. It was generated from the OPHDIAT telemedical network for DR screening within the ANR-TECSAN-TELEOPHT project, funded by the French Research Agency (ANR). The dataset consists of two subsets, e-ophtha MA and e-ophtha EX, and all images were labeled at the pixel level by experienced professionals. The e-ophtha MA subset contains 148 images with microaneurysms or small hemorrhages and 233 images without lesions. The e-ophtha EX subset contains 82 retinal images with resolutions ranging from 1440 × 960 to 2544 × 1696 pixels and a 45° FOV: 47 images with exudates and 35 images without lesions. All labels in e-ophtha are provided as losslessly compressed black-and-white images.

IDRiD 27 (Indian Diabetic Retinopathy Image Dataset) contains typical DR lesions and normal retinal structures annotated at the pixel level. A total of 81 images from IDRiD are divided into two groups: 54 images for the training set and 27 for the test set. The images have a resolution of 4288 × 2848 pixels and a 50° FOV. IDRiD is a high-quality dataset that provides not only DR and DME grades but also annotations of the major fundus structures. However, it contains too few images.

The DDR 28 dataset has been publicly available since 2019 for the classification, detection and segmentation of DR. Aiming for broad coverage, it collected 13,673 color fundus images from 147 hospitals covering 23 provinces in China. DDR is by far the largest dataset for fundus hard exudate segmentation. For lesion segmentation, 757 fundus images were selected for pixel-level annotation, with image sizes ranging from 1440 × 960 to 2544 × 1696 pixels and a 45° FOV. Of these 757 images, 383 were randomly selected for model training, 149 were used as the validation set and the remaining 225 were used as the test set to evaluate network performance.

Hard exudates are leakages of lipoproteins and other proteins from abnormal retinal blood vessels. They appear as small white or yellowish-white deposits with sharp edges, are often arranged in clumps or ring-like (circinate) patterns, and are located in the outer retinal layers. 29 In the early stage, hard exudates are small, dispersed points that are less likely to merge into sheets. Hard exudate segmentation in diabetic retinopathy faces two major problems: the large variation in image size, and class imbalance, since lesions account for only a small proportion of the whole image. In existing datasets, most images do not show lesions at the nonproliferative stage but contain large hard exudate lesions, which causes models to neglect small hard exudates during training.

Compared with these databases, the nonproliferative DR database proposed in this paper contains images showing early signs of diabetic retinopathy, which can support early detection of the disease and thus early treatment and prevention of deterioration. In addition, the Optos Panoramic 200 scanning laser ophthalmoscope captures a larger area of the fundus, giving better coverage of fundus lesions.

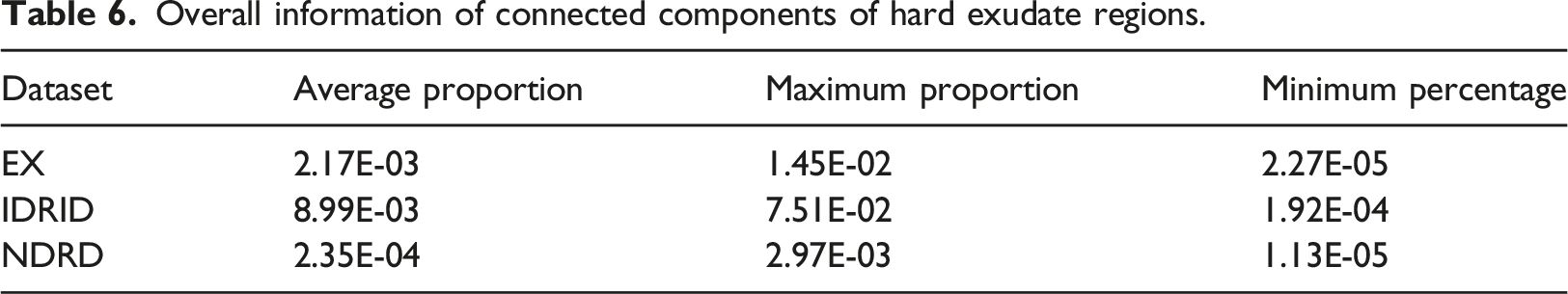

Overall information of connected components of hard exudate regions.

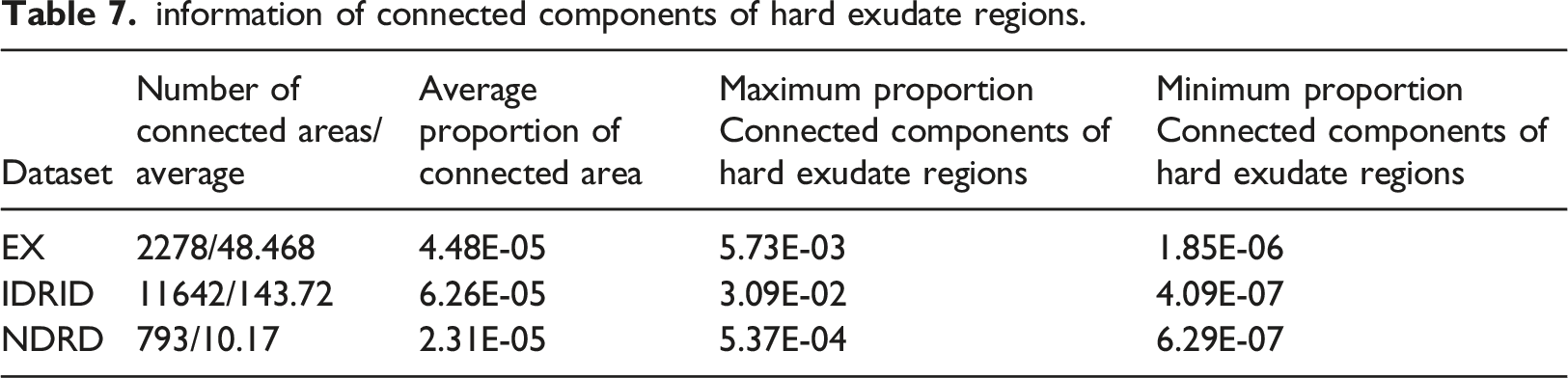

information of connected components of hard exudate regions.

Optos-Panoramic200 scanning laser ophthalmoscope

Conventional fundus cameras mainly include the Topcon D7000, Topcon TRC NW48, Nikon D5200 and Canon CR-2 cameras, as well as non-invasive fundus cameras. Fundus imaging technology has advanced rapidly, and the Optos Panoramic 200 scanning laser ophthalmoscope has gradually become a standard examination tool for fundus diseases. With its ultra-wide field, no need for mydriasis and fast imaging, it has transformed traditional color fundus photography and shortened the clinical diagnosis and treatment process. The Optos device has a wide imaging range: a traditional fundus camera covers only 30° or 45°, and it is difficult to use its montage function to create panoramic images of peripheral lesions. In contrast, Optos can capture up to 200°, covering about 80% of the entire fundus. The fundus images taken by Optos therefore cover a far larger area than the images in other databases. Wider fundus photographs can effectively prevent missed diagnoses and help improve the accuracy and convenience of diagnosis and treatment. Optos also offers powerful functions: combined with eye-position guidance in four directions, its imaging range can reach 220° to 240°. This overcomes the small field of traditional cameras, with which diagnoses are easily missed and pupil dilation is needed to examine the peripheral fundus. Its advantages include: 1. simple operation, with no need to dilate the pupils as long as the pupil diameter is greater than 2 mm; 2. fast imaging, with a single capture taking only 0.4 s; 3. clear images that intuitively display the size and extent of lesions; and 4. reduced patient waiting time, which helps optimize the ophthalmic diagnosis and treatment workflow. It therefore has great value for improving the quality of medical care.

Deep learning in hard exudate segmentation of the fundus

Good data is the basis for good work, and good tools can help us save time and improve the accuracy of work.

In recent years, deep learning has been widely used for hard exudate segmentation in the fundus, because its powerful learning ability enables it to learn latent features of the target directly from the original image. 30 proposed an improved Otsu segmentation method based on minimum within-class dispersion to roughly segment the green channel of fundus images and obtain candidate lesion regions; multiple features of the candidate regions were then selected by logistic regression, and finally an RBF neural network was built to segment the image using the optimized feature set of the candidate regions and the corresponding decision results. 31 used a convolutional neural network to segment color fundus images and detect exudates; to improve detection accuracy, the outputs of different anatomical landmark detection algorithms were combined with the output of the exudate detection program. 32 33 proposed an automatic method for detecting hard exudates in fundus retinal images based on generative response networks. 34 proposed a generative adversarial network based on the chained fusion of attention residuals to segment fundus hard exudates (HE). 34,35 added residual structures to the network for segmentation. 36 used incremental learning to avoid the retroactive interference caused by learning new features. 37 proposed using a support vector machine classifier and a Fast R-CNN to prefilter the input images, discarding images without hard exudate lesions; reducing the number of images improves speed, and discarding lesion-free images helps improve accuracy. Three basic color spaces (RGB, LUV and HSI) were used for contrast enhancement by principal component analysis. 38 A set of training samples was generated in each color space and fed into a CNN for training, and the results showed that the choice of color space also affects hard exudate detection.

A commonly used loss in semantic segmentation is the Dice loss, 39 a region-based loss that measures the overlap error between the prediction and the ground truth and is more effective when class imbalance is severe. However, because missing a small exudate region costs little in terms of Dice loss compared with missing a large hard exudate region, fully convolutional networks (FCNs) trained with Dice loss are biased toward large hard exudate regions at the expense of small ones. This bias is exacerbated by the large size differences between the connected components of hard exudates, so small hard exudate regions are easily misclassified as background. This makes it harder for the network to identify scattered lesions, and thus harder to identify hard exudates early. 40 proposed a dual-branch network to address both the extreme class imbalance between lesions and background and the size differences between lesions in fundus hard exudate data, so that as training epochs increase, the network's attention gradually shifts from large to small hard exudates. Since the Transformer was proposed, 41 it has been increasingly applied to medical image segmentation, and 13 proposed combining a Transformer with a dual-branch approach for segmenting hard fundus exudates.
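The bias of the Dice loss toward large regions can be seen on a toy example, which is illustrative only and not taken from the paper: with one 100-pixel and one 4-pixel exudate in the ground truth, missing the small lesion costs almost nothing, while missing the large one costs almost the whole loss. The sketch uses the common soft Dice formulation with a small smoothing constant `eps`, which is an assumption.

```python
import numpy as np


def soft_dice_loss(prob, target, eps=1e-6):
    """Soft Dice loss: 1 - (2 * sum(P * G) + eps) / (sum(P) + sum(G) + eps)."""
    prob = prob.astype(np.float64).ravel()
    target = target.astype(np.float64).ravel()
    inter = (prob * target).sum()
    return 1.0 - (2.0 * inter + eps) / (prob.sum() + target.sum() + eps)


# Toy ground truth: one large exudate (100 px) and one small exudate (4 px).
gt = np.zeros((32, 32))
gt[0:10, 0:10] = 1       # large lesion
gt[20:22, 20:22] = 1     # small lesion

only_large = gt.copy()
only_large[20:22, 20:22] = 0   # prediction that misses the small lesion
only_small = gt.copy()
only_small[0:10, 0:10] = 0     # prediction that misses the large lesion

loss_missing_small = soft_dice_loss(only_large, gt)  # about 0.02
loss_missing_large = soft_dice_loss(only_small, gt)  # about 0.93
```

Because the loss is pooled over the whole image, the small lesion's contribution shrinks in proportion to its area, which is exactly the imbalance that size-aware designs such as the dual-branch approach described above try to counter.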

In this paper, five segmentation models were evaluated on nonproliferative DR data. The results show that segmenting nonproliferative DR data is challenging and that better models are needed.

The ultimate purpose of scientific research is to assist clinical diagnosis. A good model can improve the speed and accuracy of disease identification, and a database close to clinical practice can point the model toward the right direction for improvement. The poor performance of state-of-the-art algorithms in disease segmentation and detection indicates a gap between research and clinical practice. Fundus images of patients with early-stage diabetic retinopathy do not contain large lesions like those in existing datasets, and deep learning models also suffer from generalization problems: a model that performs well on one dataset may not perform well on clinical images. Diagnosing nonproliferative DR is of great significance for preventing further deterioration. This paper therefore focuses on collecting images of early hard exudate lesions in the fundus, aiming to support the segmentation of hard exudates. In the future, we will continue to expand the database and improve the deep learning models to further promote the clinical application of this research.

Discussion

This paper proposes a new fundus hard exudate database, NDRD, which includes color fundus lesion images and the corresponding ground truth. To our knowledge, this is the first dataset to focus on early fundus hard exudates. NDRD provides data for segmenting nonproliferative diabetic retinopathy and for verifying the segmentation performance of models. Five deep learning models were trained on this dataset. The experimental results show that segmenting small hard exudate lesions is more challenging than segmenting large ones, and that current image segmentation models are limited in their ability to segment small lesions. In the future, we will further expand and enrich the dataset, including the number of images and the types of diabetic retinopathy, to make disease diagnosis more comprehensive, and develop better and more detailed models to help diagnose lesions early and effectively prevent disease progression.

Footnotes

Acknowledgements

The authors express their gratitude to the relevant contributors.

Author contributions

The author declares the contributions of each author in this article.

Declaration of Conflicting Interests

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was funded by the Shanxi Provincial Key Research and Development Project [Grant No. 202102020101007, 202102020101004], the National Natural Science Foundation of China [Grant No. 12271394] and the Natural Science Foundation of Shanxi Province [Grant No. 202203021212111].

Research ethics

The authors declare that this is a retrospective observational study that follows the principles of the Declaration of Helsinki.

Data availability statement

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.