Abstract

Chatbots can provide valuable support to patients in assessing and guiding the management of various health problems, particularly when human resources are scarce. Chatbots can be affordable and efficient on-demand virtual assistants for mental health conditions, including anxiety and depression. We review the features of chatbots available for anxiety or depression. Six bibliographic databases were searched, and backward and forward reference list checking was carried out. The initial search returned 1302 citations. After filtering, 42 studies remained, forming the final dataset for this scoping review. Most of the studies were from conference proceedings (62%, 26/42), followed by journal articles (26%, 11/42), reports (7%, 3/42), and book chapters (5%, 2/42). More than half of the reviewed chatbots had functionality targeting both anxiety and depression (60%, 25/42), whereas 38% (16/42) targeted only depression, 2% (1/42) targeted only anxiety, and some also addressed other mental health issues along with anxiety and depression. Avatars or fictional characters were rarely used in these studies (only 26%, 11/42) despite their increasing popularity. Mental health chatbots could benefit patients with anxiety and depression and provide valuable support to mental healthcare workers, particularly when resources are scarce. Real-time personal virtual assistance can help fill this gap. Their role in mental health care is expected to increase.

Introduction

Background

Depression and anxiety are among the most common mental disorders, and individuals can suffer from a combination of both. Over 264 million people of all ages suffer from depression alone.1–3 The figures for anxiety disorders are also a cause for concern: in 2017, 3.76% of the global population was reported to have suffered from an anxiety disorder, a proportion that has changed little since 1990. 4

Traditional individual therapy sessions, deemed the gold standard of treatment, are challenging to implement because of the shortage of mental health workers. Recent advances in technology, such as chatbots that assist with therapy, training and screening, have recently been reviewed in the context of mental health. 5 This is an important and welcome step, as reports outline that developed countries have only about 9 psychiatrists per 100,000 people, 6 whereas countries classed as low income have as few as 0.1 for every 1,000,000 people. 7 This situation has only been exacerbated by the COVID-19 outbreak, as isolation and lockdowns have increased reported stress, anxiety and depression amongst the population.8,9 An additional challenge is that those who require help may not seek it because of the stigma attached to being diagnosed with a mental health disorder, which can leave them feeling exposed when seeking help from professionals.

Smartphone-based mental health apps, which include tasks usually taken on by a therapist or psychiatrist, represent a unique opportunity to improve access to mental health services by overcoming the above-mentioned challenges. Mobile health (mHealth) apps often incorporate multiple techniques and features such as chatbots, and the number of such apps focused on mental health has rapidly increased; a 2015 World Health Organization (WHO) survey of 15,000 mHealth apps revealed that 29% focus on mental health diagnosis, treatment, or support. 10 Public health organisations are also promoting the use of such apps, 11 whilst technology companies are actively working to improve techniques. 12

Chatbots have been used in various fields, such as customer service, for some time and have seen increased use in the medical field, including mental health. Chatbots are computer programs that communicate automatically in text or spoken form and have been around since 1966. 13 In mental health, chatbots have been used to support training programs that improve depression management skills and prevention, as opposed to actual therapy. 14 For example, MYLO 14 is a chatbot designed explicitly on the basis of Perceptual Control Theory and has been used to provide relief from depression, anxiety, and stress. Amongst the popular apps,15–17 “WoeBot” 15 is a chatbot based on known Cognitive Behavior Therapy (CBT) techniques, specifically psychoeducation for stress coping, and was reported to reduce symptoms of depression effectively. Whereas we observed many studies of chatbots for general mental health,18–22 fewer focus on chatbots for anxiety and depression alone.

Research problem and aim

Given the vast amount of literature available about chatbots for depression and anxiety, checking their features in detail is a laborious task in such a rapidly evolving field. To the best of our knowledge, no previous review has explored and characterised the up-to-date chatbot technologies available for anxiety and depression. We predict that regular reviews of this nature will be necessary to keep users, healthcare providers, and developers informed of current evidence-based anxiety and depression chatbots. Therefore, this study aims to review the features of chatbots currently available for anxiety or depression.

Methods

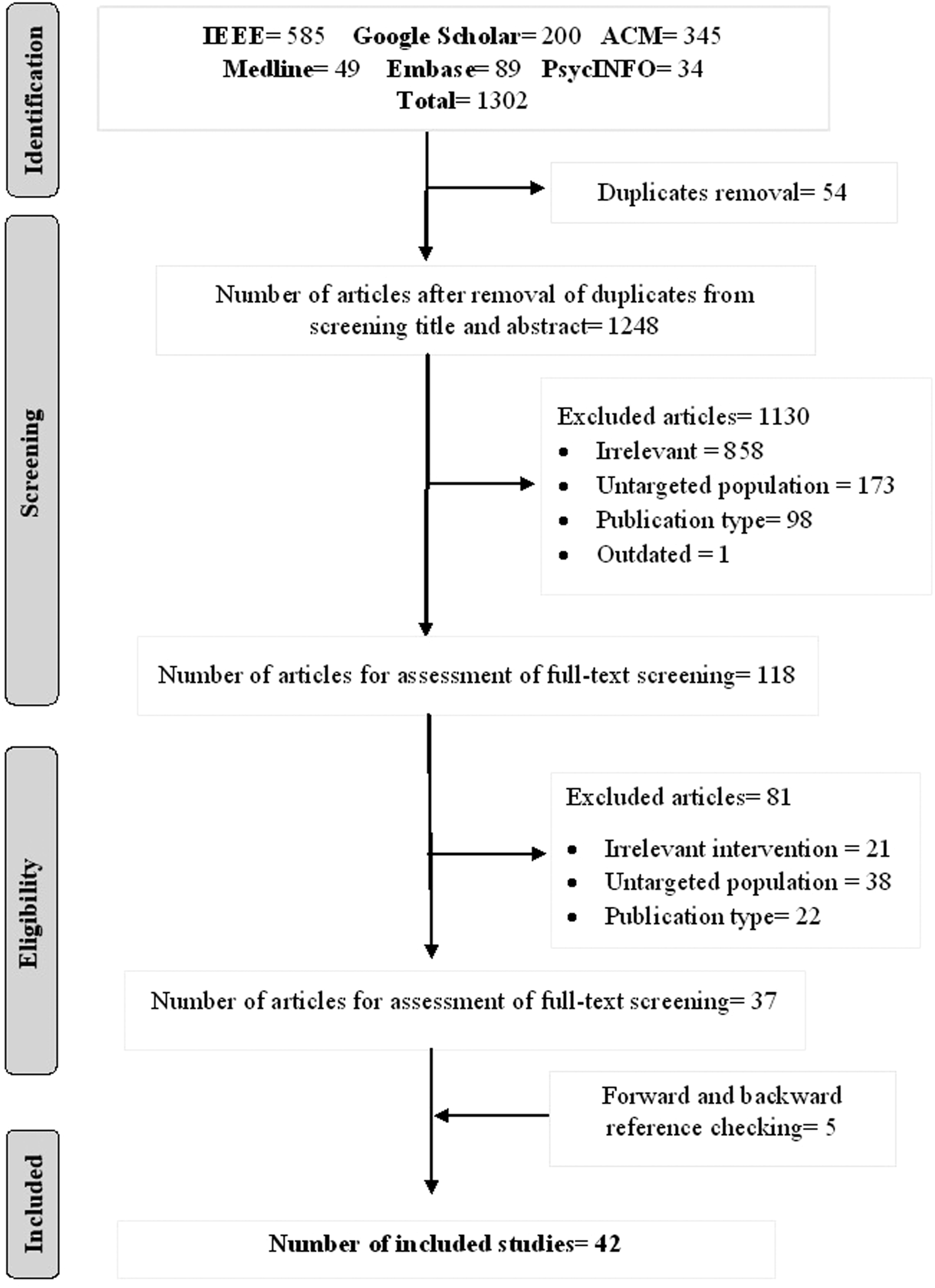

We investigated chatbots designated particularly for anxiety and depression. We followed Preferred Reporting Items for Systematic Review and Meta-Analysis (PRISMA, Figure 1) as a guideline for our scoping review. PRISMA chart.

Search strategy

Search sources

The research papers were obtained from six databases: ACM Digital Library, IEEE, Google Scholar, Embase, Medline, and PsycINFO. For Google Scholar, we retrieved only the first 10 pages, that is, we scanned the first 100 citations. Forward and backward reference list checking was also carried out to identify further relevant studies. We included studies published from 2015 to 2022. The initial search took place from 10 to 18 October 2020. An updated search was performed between 28 and 31 March 2022 to include studies up to March 2022.

Search terms

This review’s search strategy combined two main elements: “anxiety and depression-related terms” and “chatbot-related terms”. Given the population (anxiety and depression) and the intervention (chatbots), the following search string was used in ACM, Google Scholar, and IEEE: ((“Anxiety” OR “anxious” OR “depression” OR “depressed”) AND (“conversational agent*” OR “conversational bot*” OR “conversational system*” OR “conversational interface*” OR “chatbot*” OR “chat bot*” OR “chatterbot*” OR “chatter bot*” OR “smartbot*” OR “smart bot*” OR “smart-bot*”)). Similar search terms were used in MEDLINE, EMBASE, and PsycINFO; in these three databases, however, Medical Subject Headings (MeSH) were also incorporated.
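As an illustration only, the Boolean string above can be assembled from its two term lists. The sketch below is not the authors' tooling; the helper names `or_group` and `build_query` are hypothetical, and straight quotes are used in place of the typographic quotes shown in the text.

```python
# Term lists copied from the search string reported above.
CONDITION_TERMS = ["Anxiety", "anxious", "depression", "depressed"]
CHATBOT_TERMS = [
    "conversational agent*", "conversational bot*", "conversational system*",
    "conversational interface*", "chatbot*", "chat bot*", "chatterbot*",
    "chatter bot*", "smartbot*", "smart bot*", "smart-bot*",
]


def or_group(terms):
    """Quote each term and join the list with OR inside parentheses (hypothetical helper)."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(condition_terms, chatbot_terms):
    """Combine the two OR groups with AND, mirroring the population/intervention split."""
    return "(" + or_group(condition_terms) + " AND " + or_group(chatbot_terms) + ")"


query = build_query(CONDITION_TERMS, CHATBOT_TERMS)
print(query)
```

Keeping the population and intervention terms in separate lists makes it easier to adapt the string to databases with different syntax, although the MeSH variants mentioned above would still need to be written by hand for each database.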

Study eligibility criteria

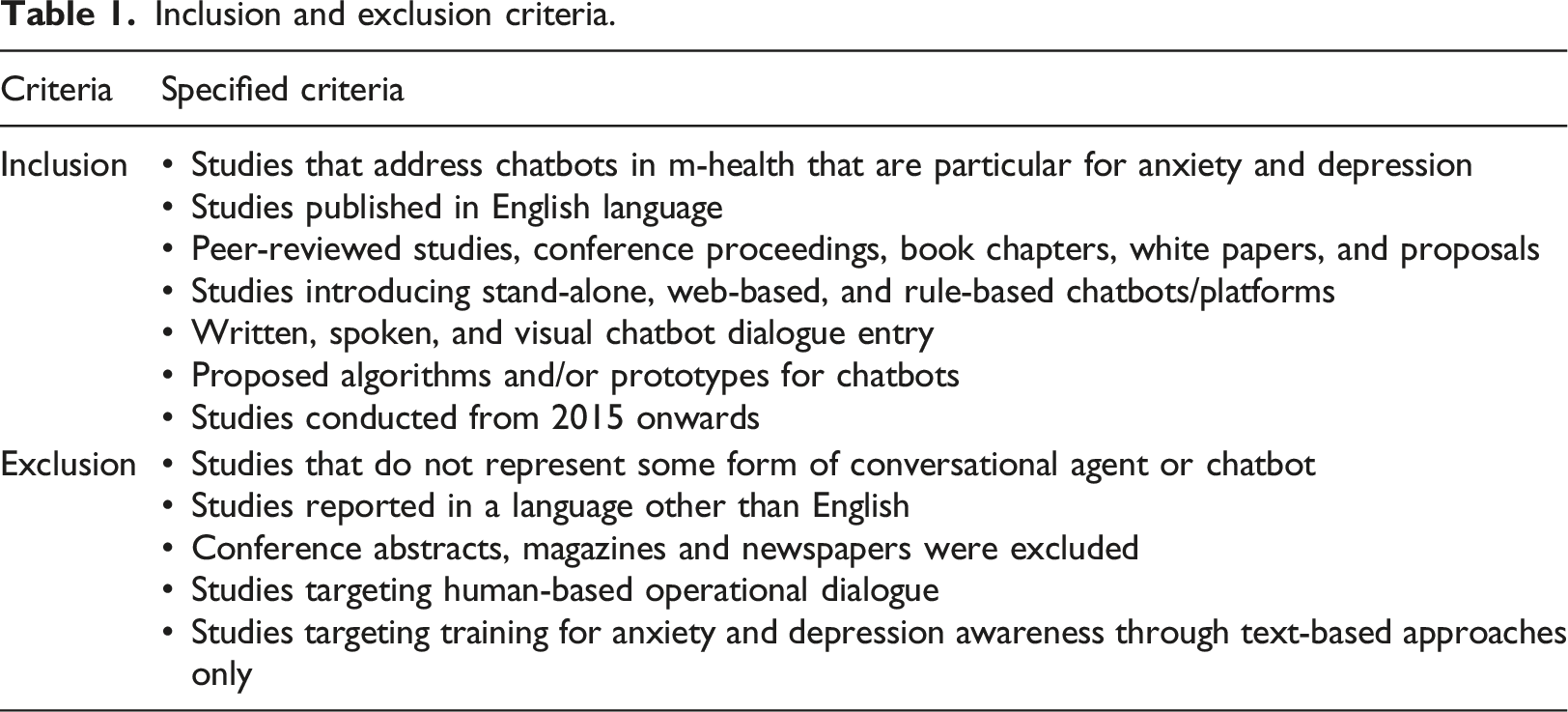

Inclusion and exclusion criteria.

Studies that targeted training programs for anxiety and depression awareness but did not contain chatbots were excluded from this review, since our objective was to look at chatbots only. We included all modes of input, including written, spoken and visual. We excluded articles in which the dialogue was generated by human operators, as these are not chatbots.

Study selection

The filtering of studies was performed in three consecutive phases: identification, screening, and eligibility. The included articles were assessed through their full texts and deemed relevant to the review. Two co-authors performed the screening and eligibility phases independently, and any disagreements were resolved through discussion between the reviewers. The study selection process was supported by Rayyan, a web-based systematic review tool that helps expedite the screening phase. 24 The studies were imported into Rayyan in RIS format, and abstracts were screened and then included or excluded according to our eligibility criteria.

Data extraction and data synthesis

Two independent reviewers carried out data extraction and recorded the results. Chatbot-related features were extracted, including the chatbot name, the aim of the chatbot, and the chatbot dialogue input and output. The type of dialogue was also recorded to indicate whether the dialogue was initiated by the system or by the user. We also included study features such as the authors' names, year of publication and country. The purpose of the chatbot was classified into four categories: education, therapy, diagnosis, and counseling. Once the extracted data had been recorded, data synthesis was performed using a narrative approach.

Results

Search results

Six bibliographic databases were searched initially using the predefined search protocol. Figure 1 illustrates the search process for this scoping review. The initial search returned a total of 1302 citations comprising a mixture of books, conference papers, journal articles and reports. From these results, 54 duplicates were removed, leaving 1248 articles for title and abstract screening. Of these, 858 titles or abstracts were irrelevant, 173 studies had a different population, 98 studies had a publication type that did not match this study’s eligibility criteria, and one study was outdated. By applying the exclusion criteria, a total of 1130 articles were therefore removed at the title and abstract screening stage. The remaining 118 articles went through full-text screening, from which a further 81 articles were removed: 21 studies that did not match the intervention requirements, 38 studies with different populations and 22 studies with a publication type that did not pass the eligibility criteria. Thirty-seven studies were selected after reading the full texts. These studies either used an existing chatbot or proposed a chatbot.

Furthermore, five articles were identified from forward and backward reference list checking. In the final step, 42 studies remained, which formed the final dataset included in this scoping review.
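The counts reported above can be checked with simple arithmetic. The following sketch recomputes each stage of the selection flow from the figures given in the text; the variable names are ours and are used only for illustration.

```python
# Counts as reported in the search results above.
identified = 1302
duplicates = 54
title_abstract_exclusions = {
    "irrelevant": 858,
    "different population": 173,
    "ineligible publication type": 98,
    "outdated": 1,
}
full_text_exclusions = {
    "intervention not matching": 21,
    "different population": 38,
    "ineligible publication type": 22,
}
from_reference_checking = 5

# Each stage subtracts its exclusions from the previous stage.
screened = identified - duplicates
full_text = screened - sum(title_abstract_exclusions.values())
included_from_databases = full_text - sum(full_text_exclusions.values())
final_included = included_from_databases + from_reference_checking

print(screened, full_text, included_from_databases, final_included)
# prints: 1248 118 37 42
```

The recomputed totals agree with the reported flow: 1130 exclusions at title and abstract screening, 81 at full-text screening, and 42 studies in the final dataset.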

General description of the studies

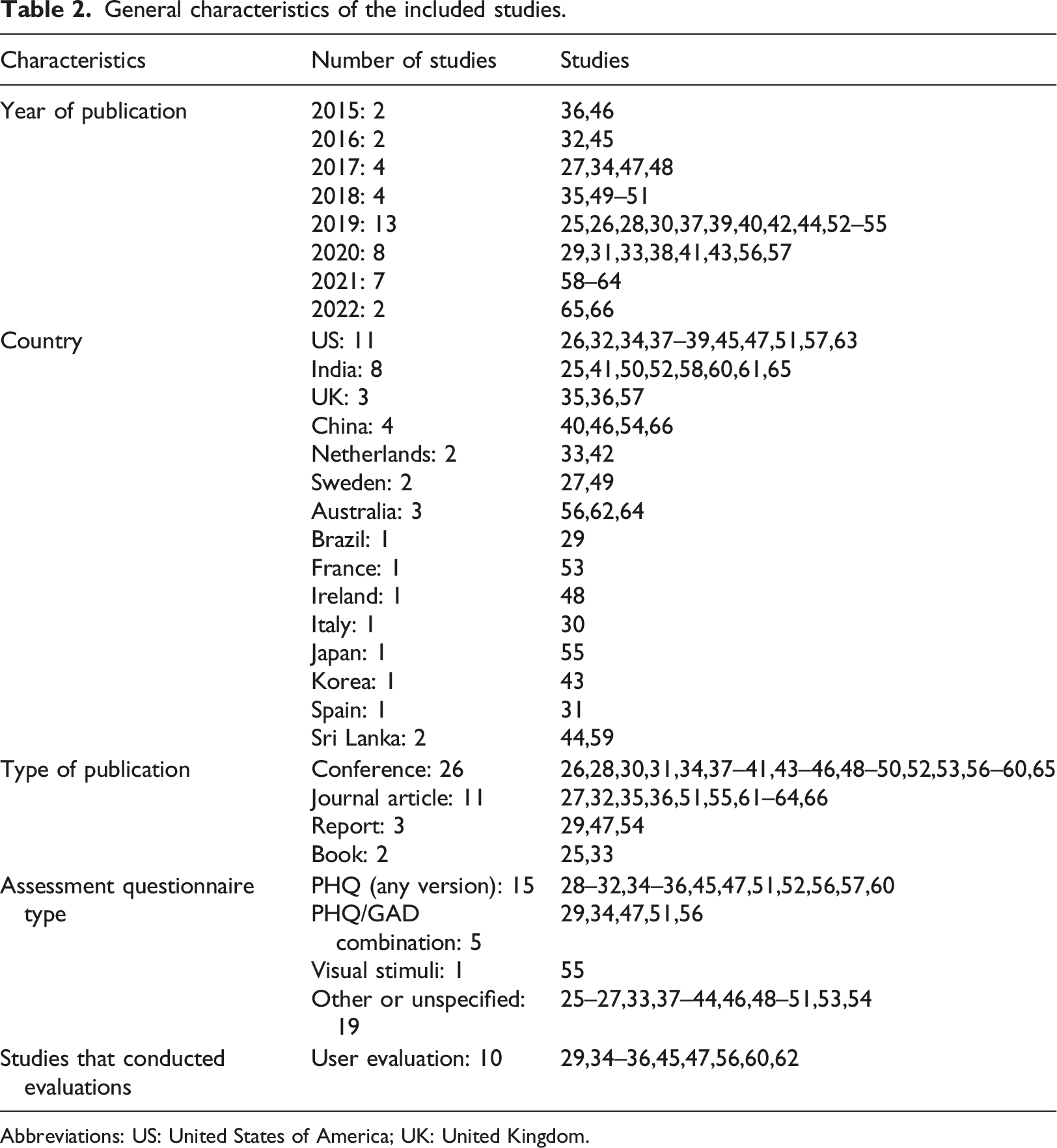

General characteristics of the included studies.

Abbreviations: US: United States of America; UK: United Kingdom.

While the number of included studies was stable between 2015 and 2018, it increased sharply in 2019, reaching 13 studies, as outlined in Table 2. About 26% (11/42) of the studies originated from the US, 26% (11/42) from India and 19% (8/42) from China, whereas 10% (4/42) were UK based. Sweden, the Netherlands and Sri Lanka had 5% (2/42) each, and the remainder (Australia, Brazil, France, Ireland, Italy, Japan, Korea, Spain) had one study each. Whilst most of the studies were conference proceedings (62%, 26/42), the remainder were journal articles (26%, 11/42), reports (7%, 3/42) or chapters in books (5%, 2/42).
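The percentages in this review are counts out of the 42 included studies, rounded to the nearest whole number. As a minimal sketch, assuming this rounding convention, the publication-type shares can be reproduced as follows; the `percent` helper is hypothetical.

```python
def percent(count, total):
    """Return count/total as a percentage rounded to the nearest whole number."""
    return round(100 * count / total)


TOTAL_STUDIES = 42

# Publication-type counts as reported above.
publication_types = {
    "conference proceedings": 26,
    "journal articles": 11,
    "reports": 3,
    "book chapters": 2,
}

shares = {name: percent(n, TOTAL_STUDIES) for name, n in publication_types.items()}
for name, n in publication_types.items():
    print(f"{name}: {shares[name]}% ({n}/{TOTAL_STUDIES})")
```

Because each share is rounded independently, percentages for other breakdowns in this review may not sum to exactly 100.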

Twenty-four percent (10/42) of the studies conducted preliminary evaluations of existing chatbots by analyzing their user experience, trust, engagement level, effectiveness, feasibility, efficacy and acceptability through clinical trials, randomized controlled trials (RCTs) or data analysis.

Chatbot descriptions

This section describes the characteristics of the chatbots from the 42 included studies. The data were extracted into an Excel file.

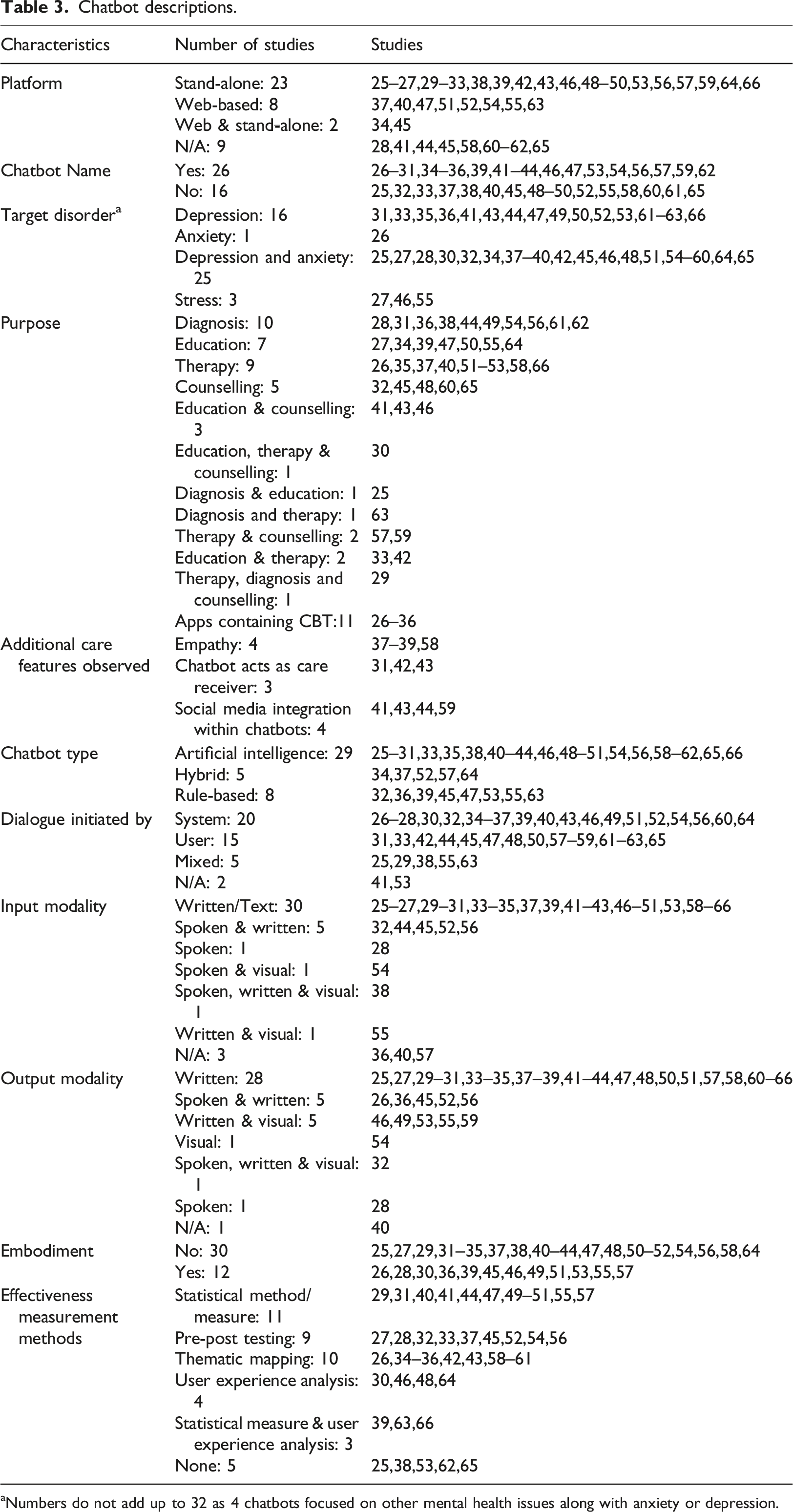

Platform and chatbot name

We identified web-based chatbots in 19% (8/42) of the studies and stand-alone chatbots in 55% (23/42); in 21% (9/42) of the studies the platform was not reported, as most of these were proposed chatbots. More than half of the included studies (26/42) gave a name to their chatbot.

Target disorder

The majority of the chatbots had functionality targeting both anxiety and depression (60%, 25/42), whereas 38% (16/42) targeted only depression and one targeted anxiety only. Seven percent (3/42) of the studies also targeted other mental health issues, such as stress, in addition to anxiety and/or depression. We categorized these other mental health disorders as additional disorders, i.e. not anxiety or depression.

Purpose

Chatbot descriptions.

aNumbers do not add up to 32 as 4 chatbots focused on other mental health issues along with anxiety or depression.

Chatbot type (approach)

In this review, 19% (8/42) of the publications described rule-based systems and 69% (29/42) described Artificial Intelligence-based systems. The remaining 12% (5/42) were a cross between the two (hybrid). A sequence-to-sequence model combined with a Bidirectional Long Short-Term Memory (Bi-LSTM) module was used in 10% (4/42) of the studies to process users' text conversations, either with the chatbot or on a social platform.

Assessment questionnaire

Patient Health Questionnaires (PHQ) were mentioned in some of the studies as a basis for assessment. A number of PHQ versions were used (36%, 15/42), with PHQ-9 the most prominent, followed by PHQ-2 and PHQ-8. Others opted for their own proposed questionnaires or for generic depression questionnaires such as self-report scales, and some used measures of positive and negative affect such as the Positive and Negative Affect Schedule (PANAS). The anxiety measurement scale Generalized Anxiety Disorder 7 (GAD-7) was observed in 12% (5/42) of the studies, where it was used in combination with PHQ-9. Other methods used in individual studies included visual stimuli and the Structure Association Technique (SAT) method; these observed gestures and expressions through images, identified patients' unrecognized feelings and emotions, and provided therapy accordingly.

Dialogue initiated by

The dialogue was initiated by the user in 36% (15/42) of the studies, by the system in 48% (20/42), and by either (mixed user and system) in 12% (5/42); 5% (2/42) did not specify this, either because the chatbot was proposed without an actual implementation or because it was not mentioned in the study. We included proposals because this is a fast-evolving field.

Input/output modality

About 71% (30/42) of the chatbots had written (keypad-driven) input, 19% (8/42) also offered speech as an additional mode of interaction, and 7% (3/42) reported some form of visual input, such as via a camera. The majority of chatbots in the included studies (67%, 28/42) produced written output for the user, and 17% (7/42) had speech as an output modality.

Additional care features observed

Among the additional features observed, 10% (4/42) of the studies reported their chatbot as being empathic towards users. Furthermore, 7% (3/42) of the studies contained an interesting feature in which the chatbot acted as the care-receiver as opposed to the care-giver. About 10% (4/42) of the studies integrated users' social media platforms into their chatbots and analyzed users' mental states through their social interactions.

Embodiment

Although chatbots have seen an increase in the use of embodiment techniques such as avatars, the majority of chatbots in this review (71%, 30/42) did not have such a feature, whilst the remainder (29%, 12/42) did.

Effectiveness measurement methods

Effectiveness was evaluated in 86% (36/42) of the studies, indicating that chatbots are being considered as an effective tool for depression or anxiety. The largest share of studies (26%, 11/42) reported statistical measures as the method of evaluating effectiveness; 21% (9/42) used pre-post testing, that is, measuring a mental health score before and after use of the app. Twenty-four percent (10/42) applied thematic mapping. Seven percent (3/42) of the studies did not report on effectiveness.

Discussion

Principal findings

In this scoping review, we aimed to report the major findings from the literature on chatbots that aid individuals with anxiety or depression. We identified 42 chatbots reported in the literature, with different characteristics and app features, aimed at functioning as alternatives to individual therapy sessions with medical professionals. Our findings indicate that 60% (25/42) of the studies targeted both anxiety and depression, whereas 38% (16/42) targeted depression and excluded anxiety; some also targeted additional disorders such as stress.

The most common purpose of the chatbots was anxiety and/or depression diagnosis or screening (24%, 10/42). The remainder were divided between education, counseling, therapy, or a combination of these. Of the 42 included studies, 69% (29/42) had some form of AI-driven system, in line with current trends and the increasing demand for AI-driven systems in all aspects of life. Messenger-style online systems have been around for a long time; historically these were human-to-human and web-based. Automation of such systems has been attempted previously. Still, it is only recently that there has been an explosion in tech companies producing high-quality, human-like chatbot apps that remove the need for a human operator by replacing them with an intelligent chatbot. The intelligence level varies, with most chatbots (especially customer-service style) not needing anything beyond a rule-based system. But as demand for more human-like and personalized systems increases, we see more AI-driven chatbots, with big players such as Google and Amazon producing some remarkable results. Such systems can be a real solution to the shortage of medical professionals in mental health care.

Cognitive Behavioral Therapy (CBT) is a therapeutic method that has been shown to be an effective treatment for depression and to change negative thought patterns into positive ones. 25 It is therefore embedded in many of the chatbots found in this review,26–36 where it is used for stress reduction and motivational maintenance, for public speaking anxiety (PSA), and to manage negative thoughts and depression, in 26% (11/42) of the studies.

Most of the studies deployed Artificial Intelligence (AI) techniques and Natural Language Processing (NLP) for chatbot development. A sequence-to-sequence model combined with a Bidirectional Long Short-Term Memory (Bi-LSTM) module was used in 10% (4/42) of the studies to process users' text conversations, either with the bot or on a social platform.37–40

The majority (88%, 37/42) of the studies conducted preliminary evaluations of their chatbots. This is crucial not only for developing these mental health apps but also for evaluating their effectiveness and improving them accordingly. Moreover, it is important to indicate the questionnaire or survey used to generate or collect relevant data from clients with anxiety and depression. In this review we observed that the most commonly used instrument was the Patient Health Questionnaire (PHQ), in various versions (36%, 15/42), with PHQ-9 the most prominent.

Chatbots normally follow a traditional model of therapeutic counselling, but this review identified some additional, unique features that chatbots offer to users experiencing symptoms related to depression or anxiety. For example, CARO 41 and EMMA 39 are two chatbots that use empathetic phrases during conversation with users. Similarly, another study 37 outlines a comparable design that handles major depressive or other mental health symptoms in a careful and emotionally sensitive manner.

Our study also revealed three unique chatbots, “Vincent”, 42 “Gloomy” 43 and “Perla”, 31 which acted as the care-receiver. These chatbots play the role of someone who has a mental illness and is looking for help. They foster a deeper level of understanding and belonging in clients as they disclose their vulnerable experiences. Having the chatbot act as the care-receiver allows the user to self-reflect. Thus, caring for a chatbot can help people gain greater self-compassion, enhance their problem-solving skills, and realize and accept that they may be going through the same experiences as the chatbot. Since social media has become a platform where people explicitly express their opinions and feelings, it can be used to support mental health care. The potential of social media websites to help analyze users' mental states is also portrayed in a few studies. Three studies used Facebook chat history and other data within their chatbots.41,43,44

Strengths and limitations

Strengths

This review was conducted and reported according to the PRISMA Extension for Scoping Reviews, which supports the quality of its reporting. To the best of our knowledge, this is the first review in the literature to focus on chatbots for anxiety and depression, which are among the most common mental disorders. Thus, it helps readers explore the current state and features of chatbots for anxiety and depression.

We searched the most commonly used databases in the healthcare and information technology fields to retrieve as many relevant studies as possible. The risk of publication bias is minimal in this review because we searched Google Scholar and conducted backward and forward reference list checking to identify grey literature. The risk of selection bias is minimal given that two reviewers independently screened the studies and extracted the data. Excluding studies conducted before 2015 made this review more up to date, although the evidence suggests that few earlier studies would have met our inclusion criteria in any case. Some similar reviews exist that discuss chatbots and mental health without a specific focus on anxiety and depression, such as a recent review by Abd-Alrazaq et al. 5 This review will help readers gain insight into the available literature on chatbots related to anxiety and depression.

Limitations

We did not report engagement measures or other detailed user metrics, as this would go beyond the purpose of an overview and would be better suited to a systematic review with detailed thematic analysis, which our group plans as a follow-up study. Such a study would include chatbots from the Google Play Store or Apple App Store and evaluate metrics such as user feedback and reviews; this would return hundreds of apps that are not described in peer-reviewed articles. Due to practical constraints, our search was restricted to English-language studies published between 2015 and 2022, and we were not able to search interdisciplinary databases (e.g., Web of Science and Scopus). Accordingly, we have likely missed some relevant studies.

Practical and research implications

Practical implications

Within this review we have highlighted studies that discuss chatbots available conceptually, as web-based tools, or as stand-alone smartphone applications. The summary information we have outlined about each could help healthcare professionals advise end users in identifying the most appropriate chatbot for anxiety and depression. Where previous reviews have concentrated on general mental health disorders, we filtered 1302 citations down to a final set of 42 studies that fulfilled our inclusion criteria. Most of the chatbots we reviewed were stand-alone (smartphone app based), indicating the increasing popularity of chatbots targeting the smartphone market. However, despite the availability of smartphones and the internet, we believe many people around the world who would benefit from such chatbots either do not have access to a smartphone or would be reached more easily through a web-based method. We would therefore encourage chatbot developers to target such audiences and not restrict chatbots to smartphone-based solutions.

Twenty-nine of the 42 chatbots we reviewed used some form of Artificial Intelligence (AI), which is a promising step in the right direction. AI-driven chatbots can create responses to complicated questions from humans and allow the user to lead the conversation, as opposed to being restricted to pre-defined responses. Furthermore, they provide a conversation that is less robotic and more human-like through the use of natural language processing (NLP) and an understanding of the context of the conversation. In general, mental health chatbots do not make use of such technologies according to previous studies. 5 We were encouraged by the use of AI within the anxiety and depression chatbots we reviewed, although not all the studies provided in-depth details of the algorithms used; of the AI-driven chatbots we reviewed, five claimed use of NLP.

We would encourage future developers of anxiety and depression chatbots to make fuller use of NLP technologies, especially as these are readily available; there is no reason why, in the very near future, all anxiety and depression chatbots should not incorporate the latest seamless NLP-driven conversations that other non-medical fields have already implemented. We would also encourage future developers of chatbots to support different languages, as all the studies we looked at were primarily in the English language; this would open usage to parts of the world currently not targeted. This may not be as easy as it sounds, as NLP is not always as advanced in languages other than English.

Research implications

Although chatbots are primarily conceived as a virtual assistant that provides therapy or as a “friend” character for the user, three bots, “Vincent”, 42 “Gloomy” 43 and “Perla”, 31 stood out for their concept and not only for the technology or AI they used. Rather than acting as the care-provider, these chatbots acted as the care-receiver. They play the role of someone who has a mental illness and is looking for help. They foster a deeper level of understanding and belonging in users as they disclose their vulnerable experiences in college or personal life, and they build online support by displaying depressive symptoms and uneasiness in life. The idea behind these apps was to develop self-compassion among individuals: by helping others (the chatbot) with the same issues as their own, which they initially did not recognize or neglected, users come to understand their situation better and see how they can come out of it. Thus, caring for a chatbot can help people gain greater self-compassion and enhance their problem-solving skills.

A study conducted in April 2019 42 demonstrated that caring for a chatbot can help people gain greater self-compassion than being cared for by a chatbot, while another study 45 showed that self-compassion increased in both conditions (receiving care from and giving care to a chatbot), but improved significantly only for those interacting with the care-receiving Vincent. 42 We suggest that this concept of the user being the caregiver be explored in future anxiety and depression chatbots.

Conclusion

This scoping review identified 42 different studies about chatbots for depression and anxiety. The most common purposes of the chatbots were the delivery of diagnosis, therapy, and education. Written text was the most commonly used form of both input and output.

Chatbots are an emerging trend in psychiatric research. Although preliminary research speaks in favour of patient outcomes and adoption of chatbots, there is a lack of consensus on reporting and evaluation standards for chatbots, as well as a need for increased transparency and replication. There is currently a shortage of higher-quality evidence involving chatbots for any form of diagnosis or management of anxiety and depression, and in mental health research in general. With the right strategy, study, and clinical implementation process, however, the field can take advantage of this technological transition and is expected to gain more from chatbots than any other area of medicine. By using existing fast-evolving technologies that incorporate Machine Learning, AI and NLP, combined with evidence-based collaborations between developers and mental health experts, we predict an increase in the development and usage of anxiety and depression chatbots. Their role in mental health care is expected to increase following the COVID-19 pandemic and its impact on the mental health and wellbeing of the world population, especially with regard to anxiety and depression. Such technologies have a promising future, as chatbots provide a beneficial and efficient means of delivering psychiatric conversational therapies, especially in areas of the world where gold-standard one-on-one psychiatrist-to-patient conversations are simply not possible or are unaffordable.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Qatar National Research Fund (NPRP12S-0303-190204).