Abstract

Designing a smart navigation system for visually impaired or blind people is a challenging task. Existing studies address either the indoor or the outdoor environment, and they fail to address optimum route selection, latency minimization, and the presence of multiple obstacles. To overcome these challenges and provide precise assistance to visually impaired people, this paper proposes a smart navigation system based on both the image and sensor outputs of a smart wearable. The proposed approach involves the following processes: (i) The input query of the visually impaired person (user) is improved by the query processor to achieve accurate assistance. (ii) The safest route from source to destination is provided by the Environment-aware Bald Eagle Search Optimization algorithm, in which multiple routes are identified and classified into three classes, from which the safest route is suggested to the user. (iii) Fog computing is leveraged, and the optimal fog node is selected to minimize latency. Fog node selection is performed by the Nearest Grey Absolute Decision Making Algorithm based on multiple parameters. (iv) Relevant information is retrieved by computing the Euclidean distance between the reference and database information. (v) Multi-obstacle detection is carried out by YOLOv3 Tiny, in which both static and dynamic obstacles are classified into small, medium, and large obstacles. (vi) The navigation decision is made by the Adaptive Asynchronous Advantage Actor-Critic (A3C) algorithm based on the fusion of image and sensor outputs. (vii) Heterogeneous data is managed by predicting and pruning faulty data in the sensor output with a minimum-distance-based extended Kalman filter for better accuracy, and by clustering similar information with the Spatial-Temporal OPTICS Clustering Algorithm to reduce complexity.
The proposed model is implemented in NS 3.26, and the results show that it outperforms existing works in terms of obstacle detection and task completion time.

Introduction

In recent years, the Internet of Things (IoT) has made everyday life much easier through the deployment of smart devices. In the same way, smart devices aim to improve the lives of visually impaired people.1–3 The main challenges every visually impaired person faces are navigation and obstacle detection. With the advancement of IoT, these tasks have become easier through sensors such as ultrasonic sensors and accelerometers, combined with artificial intelligence (AI) techniques.4,5 With this fusion, many real-time blind assistive systems have been developed to navigate people while avoiding obstacles in their route. For that, the following methodologies are generally used:6–8
• Sensor-based obstacle detection
• Computer-vision-based obstacle detection

Sensor-based approaches detect obstacles by fusing data from different sensors. The fused data is then compared with a threshold value to detect an obstacle. In computer-vision-based approaches, image processing techniques are applied to detect obstacles.9,10 These approaches frequently use deep learning or machine learning methods. In both approaches, the aim is to generate optimal feedback for the users. The system can be implemented as a stick-based, wearable-based, or cloud-based solution.11,12 Most works support navigation in indoor environments only. Generally, an indoor navigation system guides users within a bounded environment such as a home or a smart campus.13–15 Data analysis generally takes place on the smart device (for example, an electronic cane), on a local system (for example, a laptop or smartphone), or in cloud data storage.16 Each option has limitations: (i) device-based analysis falls short because smart devices have limited resources, and (ii) cloud-based data analysis increases latency and delay.17 In most systems, the input is taken as audio or text, and audio input is converted using natural language processing (NLP) techniques.18 The main issue in this context is that audio input is not always accurate and may contain missing values.19 Thus, it is necessary to improve the initial query issued by the user. The feedback is also important; it can be a buzzer, an alarm, or audio navigation.20

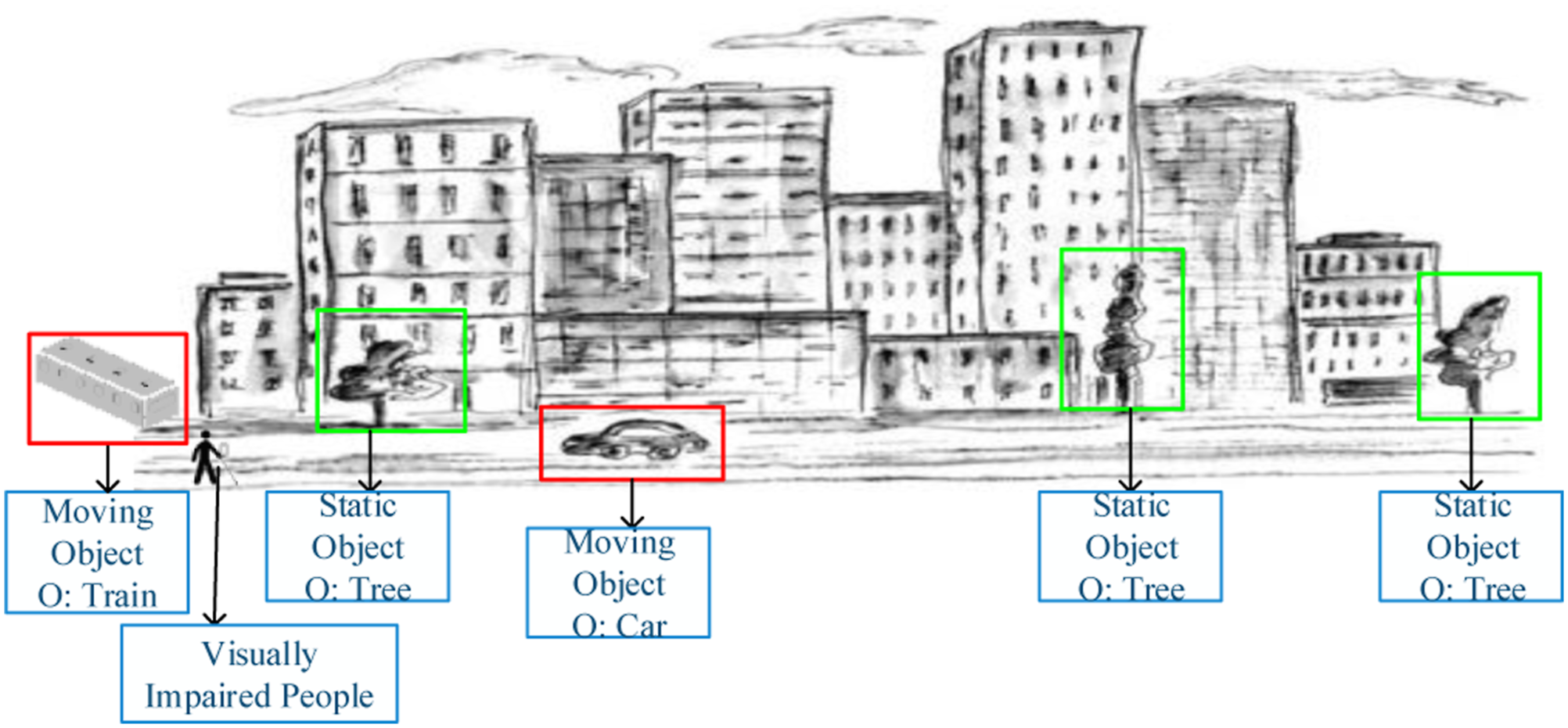

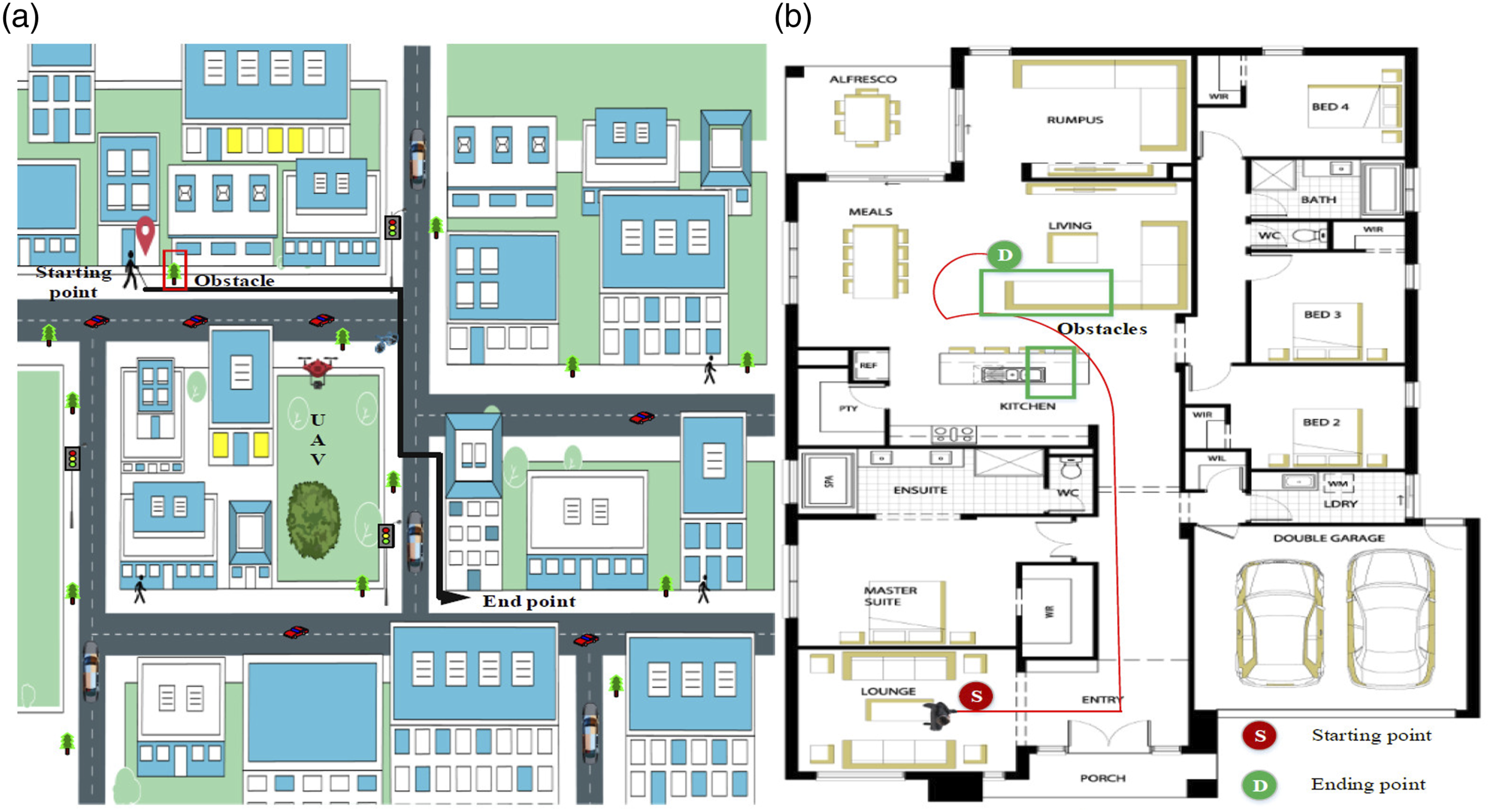

Figure 1 depicts the general scenario of visually impaired people in an outdoor environment, consisting of both static and dynamic obstacles.

Figure 1. General scenario of visually impaired people.

Motivation and objectives

The major aim of this research is to design a full-fledged assistance system for visually impaired people. The feedback given to the users is based on dynamic changes in the environment. For that, we utilize both sensor-based analysis and image-based analysis for obstacle detection and smart navigation. The navigation system provides end-to-end support, from safe route selection to continuous monitoring of visually impaired people. To avoid unnecessary delays, a fog computing layer is introduced between the user and the cloud layer. The major issues identified in navigation assistance systems for visually impaired people are as follows, • •

Therefore, the proposed work is formulated to address these issues. The major research objective of this work is to design a novel end-to-end smart navigation system for visually impaired people. The sub-objectives are as follows:
• To achieve timely navigation for blind people.
• To minimize latency in navigating people.
• To detect obstacles accurately and classify them in order to analyze their impact.
• To select a safe route based on multiple criteria that minimize the risk level.

Research contributions

We mainly focus on providing navigation assistance to visually impaired people in both indoor and outdoor environments. The dynamic nature of the environment is taken into account, and trajectory guidance is provided in real time to enable reliable navigation. Both sensor information and image details are acquired for this purpose. The major contributions of this research work are listed below:
• The user’s query about the destination is received, any missing words are corrected, and the query is improved in order to provide accurate assistance to the user.
• Multiple routes from the source (the user’s current location) to the destination are estimated and classified into three classes to guide visually impaired people along the safest route. The Environment-aware Bald Eagle Search Optimization is implemented for safest route selection based on various parameters.
• Fog nodes are deployed, and the gateway selects the optimal fog node using the Nearest Grey Absolute Decision Making Algorithm in order to provide fast, low-latency assistance to the users.
• The information needed for monitoring and assisting the users is retrieved by the fog node by calculating the Euclidean distance.
• Multi-obstacle detection is performed with the YOLOv3 Tiny algorithm, in which both static and dynamic obstacles are identified accurately with low complexity and low time consumption.
• Trajectory prediction for precise navigation assistance is performed with the Adaptive Asynchronous Advantage Actor-Critic (A3C) algorithm, and the decision is taken by the fog node.
• Heterogeneous data management is provided by detecting and pruning false data with a minimum-distance-based extended Kalman filter, and similar data are grouped by Spatial-Temporal OPTICS clustering to reduce the complexity of managing the data.

Paper organization

The remainder of this paper is organized as follows. Section “Related Work” reviews prior work on navigation systems for blind people. Section “Major Problem Statement” describes the major problem statements in order to analyze the research gap in developing a smart navigation system for the visually impaired using fog computing. Section “Proposed Work” explains the steps involved in the proposed system. Section “Experimental Results” presents the simulation setup and validates the proposed method by comparing its effectiveness with existing methodologies. Finally, Section “Conclusion and Future Work” concludes the study and discusses future work.

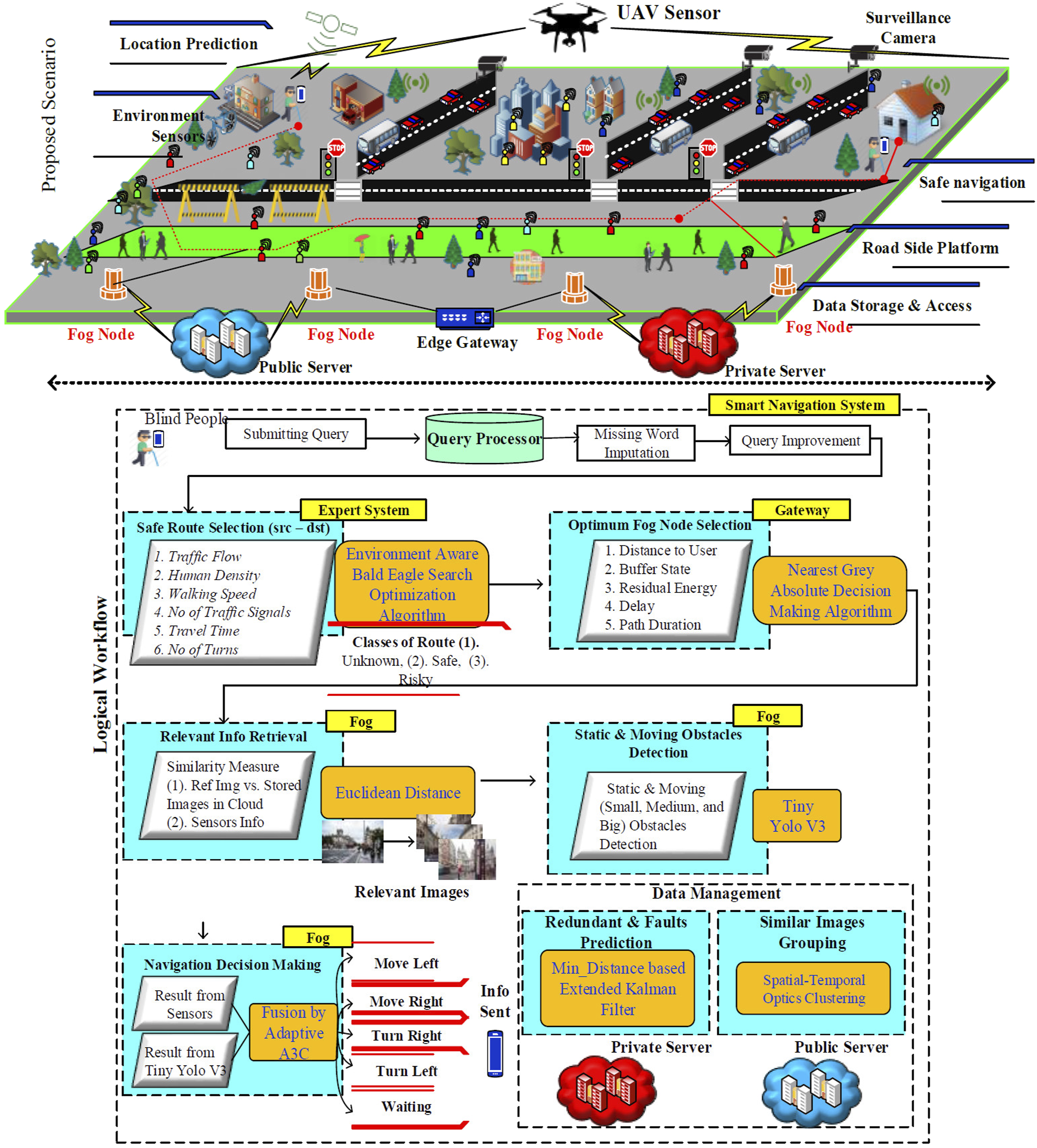

Related work

The authors in 21 proposed a visually impaired assistance system that analyzes multimodal images. The paper presented a localization method for wearable assistive navigation, using a deep descriptor network. Initially, 2D–3D geometric verification is performed and images are collected in different modalities such as RGB, infrared, and depth. The collected images are fed into a dual disc network to generate global descriptors. The result of this network is further improved by a sequence matching process. However, only initial localization is performed; continuous navigation and obstacle-free routing are not supported. In 22, the authors focused on an image-processing-based guide for visually impaired people. The images are collected from an RGB camera while the wearable device collects the necessary environmental data. For safe walking, the object detection procedure is applied regularly using a convolutional neural network (CNN). A video sequence is taken as input, and the environment depth and fine-tuned depth are predicted from the video. After floor and plane detection, distance estimation is performed using the Euclidean distance. If any obstacle or object is detected, sound feedback is given for navigation. Nevertheless, this work analyzes only the indoor environment, so it is not suitable for outdoor navigation. The CNN only determines whether obstacles are present; the impact of the obstacle is not considered.

In 23, the authors proposed an indoor navigation system based on a deep convolutional neural network (DCNN). Here, a pre-trained YOLOv3 model is used for object and obstacle detection in indoor environments. The neural network is a multi-class classifier that classifies objects into window, notice table, elevator, door, electricity box, sign, light, stairs, and so on. YOLOv3 suits indoor navigation and provides good accuracy: its convolutional layers learn multiple features, object detection is performed, and indoor navigation is carried out based on the detected objects. However, YOLOv3 is computationally intensive and requires a long time for training and testing, which makes it unsuitable for fast object recognition in delay-sensitive applications. This work considers only the image processing approach, which is insufficient because accurate navigation also requires environmental sensor data, and the application is limited to indoor navigation. The authors in 24 presented a user-centric approach for indoor navigation. An electronic travelling guide is designed to assist visually impaired people. The navigation system consists of an input module (which collects data from wearable devices), a computational module (which analyzes the collected data for navigation), and a feedback module (which generates feedback signals to the user). The three modules work together to navigate visually impaired people. The paper also reviews existing assistance systems for blind people. It highlights that the choice of sensors and analysis algorithm plays a pivotal role in monitoring and navigation systems for blind people, and that continuous navigation, rather than object detection alone, is important for visually impaired people.

In 25, a convolutional-neural-network-based automated walking guide is proposed. The CNN is executed on the embedded assistance device. Its main responsibility is to detect objects and obstacles accurately in order to navigate visually impaired people. In particular, potholes in roadways are detected by the CNN, and the overall system is designed so that the user can avoid potholes during navigation. Initially, the method measures the distance from the user and compares it with a distance threshold. If the distance is higher than the threshold value, the pothole detection result is considered. Only potholes are detected by this work; the many other static and moving obstacles are not detected. In 26, the authors presented a multi-object scene detection method for assisting visually impaired people. This work uses an ordered weighted averaging (OWA) approach that fuses two deep CNN models, VGG16 and SqueezeNet. Both models are pre-trained with the introduction of residual errors. The OWA technique is used for error estimation and confidence measurement. The two results are fused to make decisions on object and obstacle detection. Overall, two CNN models are trained to navigate visually impaired people in indoor environments. However, this work is suitable for indoor navigation only, which is impractical in real-world scenarios where the user also needs assistance outdoors.

Assisted navigation

The authors in 27 designed an indoor and outdoor navigation system for visually impaired people. This smartphone-based navigation system combines sensor-data-based detection with image-based navigation. The system provides both direction estimation and tracking. It is named path recognition for indoor assisted navigation with augmented perception (ARIANNA). The information is collected from an accelerometer, a gyroscope, and a camera. These data are then used in the activity recognition and computer vision modules. The final decision is made with the extended Kalman filter (EKF) algorithm. However, only limited features are considered, which is insufficient for optimal obstacle detection and tracking. An interactive search engine for visually impaired users is presented in 28. In this work, formal concept analysis (FCA) is used for data analysis. Combined with context-interactive navigation, an interactive search engine (InteractSE) is designed. This work implements an interface that learns the context through the FCA approach. After new concept and context discovery, the search results are returned to the visually impaired users. The analysis is carried out based on the usability characteristics required for the pupils. Nevertheless, this work applies only to limited applications and cannot ensure continuous assistance for visually impaired people, which limits its adoption in real-time scenarios. During the Covid-19 epidemic, visually impaired people found it difficult to maintain adequate social distance from others. In 44, simultaneous localization and mapping (SLAM) and deep learning algorithms are used by assistance systems to address this issue.

An intelligent system for assisting visually impaired people was implemented in 31. The approach is built on a cloud-based data management model. Visually impaired people wear smart glasses together with an intelligent walking stick. The objective of this work is to help visually impaired people avoid aerial obstacle collisions and accidents. The smart glasses detect obstacles using two-axis sensor nodes, while the intelligent stick prevents falls. Data management and alert generation are performed in the cloud environment. In this way, an intelligent assistance system is developed. Nevertheless, the image recognition used in this approach is inaccurate, and data management in the cloud layer increases processing delay and response delay.

Vision based navigation

The authors in 32 proposed V-Eye, a vision-based navigation system based on the global positioning system (GPS). A camera-based image processing technique is proposed for visually impaired people. In particular, image segmentation techniques are used for understanding the images. The images captured by the wearable camera are processed by the local system using the ORB-SLAM approach, which computes the relative pose estimate. Then, the localization information and semantic segmentation information are gathered from the corresponding servers based on key frames. In ORB-SLAM, the obstacle presence is predicted and the result is given as audio feedback. However, local system processing degrades detection accuracy because of resource constraints. The authors in 33 introduced a multi-layer perceptron (MLP) for obstacle detection and classification. The work aims to remain flexible even when the noise level is high. The proposed ENVISION method comprises four steps: speech recognition, path finding, obstacle detection, and a merging phase. In the obstacle detection phase, a deep learning approach is introduced. The deep learning model first learns features such as RGB color histogram, HOG, HSV, and LBP features. On the basis of these features, six classifiers are tested. The considered classifiers are MLP, CNN, recurrent neural network (RNN), self-organizing map (SOM), and stacked auto-encoder (SAE). It is concluded that MLP achieves better accuracy, but MLP only detects that an obstacle is present, and continuous navigation is not supported. In 34, a vision-based indoor assistive navigation system for visually impaired people is presented. The system detects dynamic obstacles and adjusts the path planning adaptively. An on-board RGB-D camera is used to acquire images from the path selected for navigation. Then, the time-stamped map Kalman filter (TSM-KF) algorithm is adopted for avoiding obstacles in the route. To improve ease of use, the paper introduces a multi-modal human-machine interface. The depth data is maintained in a point cloud and denoised by a filtering approach; however, this work is not suitable for more complex and cluttered environments. The authors in 35 jointly addressed obstacle detection and fall detection for real-time monitoring applications. The system comprises ultrasonic sensors, a PIR motion sensor, an accelerometer, and a smartphone-based application. The microcontroller module transmits the sensed data to the smartphone, which generates audible instructions for visually impaired people. If an obstacle is detected, the system checks whether its distance is lower than 200 cm. If so, it checks again whether the obstacle is located within 100 cm. If so, an alert is generated. However, the performance of the system is poor since obstacle detection relies on a fixed threshold value.

Major problem statement

This section explains in detail the specific problems in designing a smart navigation system for visually impaired people. In existing works, smart navigation system design for visually impaired people is a complex task. The fusion of computer-vision-based and sensor-based analysis is important because it affects obstacle detection accuracy.41 A full-fledged smart navigation system is still challenging because of sensing errors and faulty data in the sensor data.43 The major problems in this research are highlighted below. • • • •

The authors in 36 proposed a smart assistance system that uses smart objects (SOs). The SO2SEES system is designed to act as an interface between the user and neighboring smart objects. In SO2SEES, people interact with the smart objects through optimal query processing. The overall system is built on distributed Internet of Things (IoT) cloud platforms. The system uses sensory perception, spatial perception, and spatial cognition to make decisions on the motion of the users. The user’s query is processed to convert the formal high-level query into lower-level queries. For result retrieval, natural language processing (NLP) tasks are executed to produce the results. The main problems in this paper are listed below:
• The error occurrence probability is high since the collected sensor data contains faulty and repeated data. Processing this faulty data increases the false alarm rate, which makes the system inefficient in real-time scenarios.
• Result retrieval has high latency since the overall process is performed in the cloud platform. In the cases of continuous navigation and event detection, this latency is relatively high.
• The discovery phase for the user query depends solely on NLP processes, and accurate object detection and interoperable management remain major issues.

In 37, the authors proposed an astute assistive navigation system for visually impaired people. The proposed NavCane device supports navigation in both indoor and outdoor settings. A low-cost, low-power embedded device is used in NavCane to perform obstacle detection. NavCane is equipped with ultrasonic sensors, an RFID reader, and a GPS positioning system. The sensor model is composed of a wet floor sensor, a vibration sensor, and a gyroscope sensor. The obstacle detection algorithm collects data from the sensor nodes on the basis of priority values. Further, object detection is performed based on the RFID reader values obtained from the objects. However, this approach has several problems:
• Obstacle and object detection is performed with RFID values and sensor readings. In general, sensor and RFID readings are corrupted by channel and external noise, which degrades navigation accuracy.
• The lack of priority alert generation and safe route detection increases the risk level of navigated paths. In public transportation or outdoor environments, a safe path is necessary for visually impaired people.
• All data processing and detection are carried out on the NavCane system, which has limited energy and processing power. With such marginal processing capability, the processing performance is poor.

The authors in 38 stated that safe route recommendation is a significant process in navigation for visually impaired people. In general, visually impaired people struggle in the presence of heavy vehicle or human flow.42 The paper addresses this problem by developing an application named “Follow Me.” The application generates optimal safe routes for blind people by acquiring road images from a smartphone. The proposed machine learning (ML) approach, which collects data from a monocular camera, is robust against pedestrian movement. Here, the random forest (RF) ML technique is used for object detection through human flow analysis. On the basis of the random forest results, the routes are classified into unknown area, safe area, and dangerous area. The problems in this paper are listed below:
• Object detection accuracy is low, which affects the overall safe route recommendation. In particular, outdoor object detection is highly affected by luminance changes.
• The initial route is followed throughout the navigation. However, unwanted events may suddenly occur on the selected route, which demands continuous route monitoring and updated recommendations.
• The RF algorithm is computationally expensive and produces inaccurate results. Besides, slow prediction caused by the number of trees in the RF makes it unsuitable for real-time applications.

In 39, the authors focus on an image-based navigation system for visually impaired people. In this paper, a generative adversarial network (GAN) model is proposed to detect and display ground images for blind people, and a portable device is designed for their use. The GAN model classifies obstacles and objects by learning the environment. If an object or obstacle is detected, it is converted to a vibrotactile signal, which is then generated as an alert vibration for navigation. In this way, visually impaired people are assisted by the alert system. However, this approach has several problems:
• The GAN system treats all objects as obstacles and does not distinguish objects by size. Alert generation for relatively small objects is unnecessary and increases the false alarm rate.
• The assistive technology does not support continuous navigation and monitoring, which is more important for blind people.
• The overall process is performed on the portable device, which has limited power and storage capacity. In this case, training the GAN on the portable device becomes challenging.

The authors in 40 stated that fog computing is emerging as a solution for assistance applications for visually impaired people. A fog-based framework is presented with PEN (Phone + Embedded + Neural compute stick). The neural compute stick has the image processing capability to serve a motion-based navigation system. Data is collected from the robot, sensors, and actuators. The overall system involves a visual function, a natural language processing function, a decision function, and a motion function. The neural compute stick is executed in the fog layer to serve users on time. Here, object detection is performed using a single shot multi box detector (SSMD) based deep neural network (DNN) approach. The main problems in this research work are as follows:
• This work detects only static objects for navigation, but moving object detection is more important since moving objects pose a danger to people.
• Although fog computing minimizes latency, its performance always depends on the data load being processed in the particular fog node. The lack of optimal fog node selection degrades system performance.
• The DNN learns only limited features and does not extract all important features from the images, so object detection accuracy is low.

The problem statements of these works underline the need for a smart navigation system that addresses the existing problems in both indoor and outdoor environments. Common challenges in outdoor environments, such as dynamic traffic patterns and crowds, should be considered in order to design precise navigation assistance for visually impaired people. The proposed system is formulated to provide solutions to these existing problems in navigation systems for visually impaired people.

Proposed work

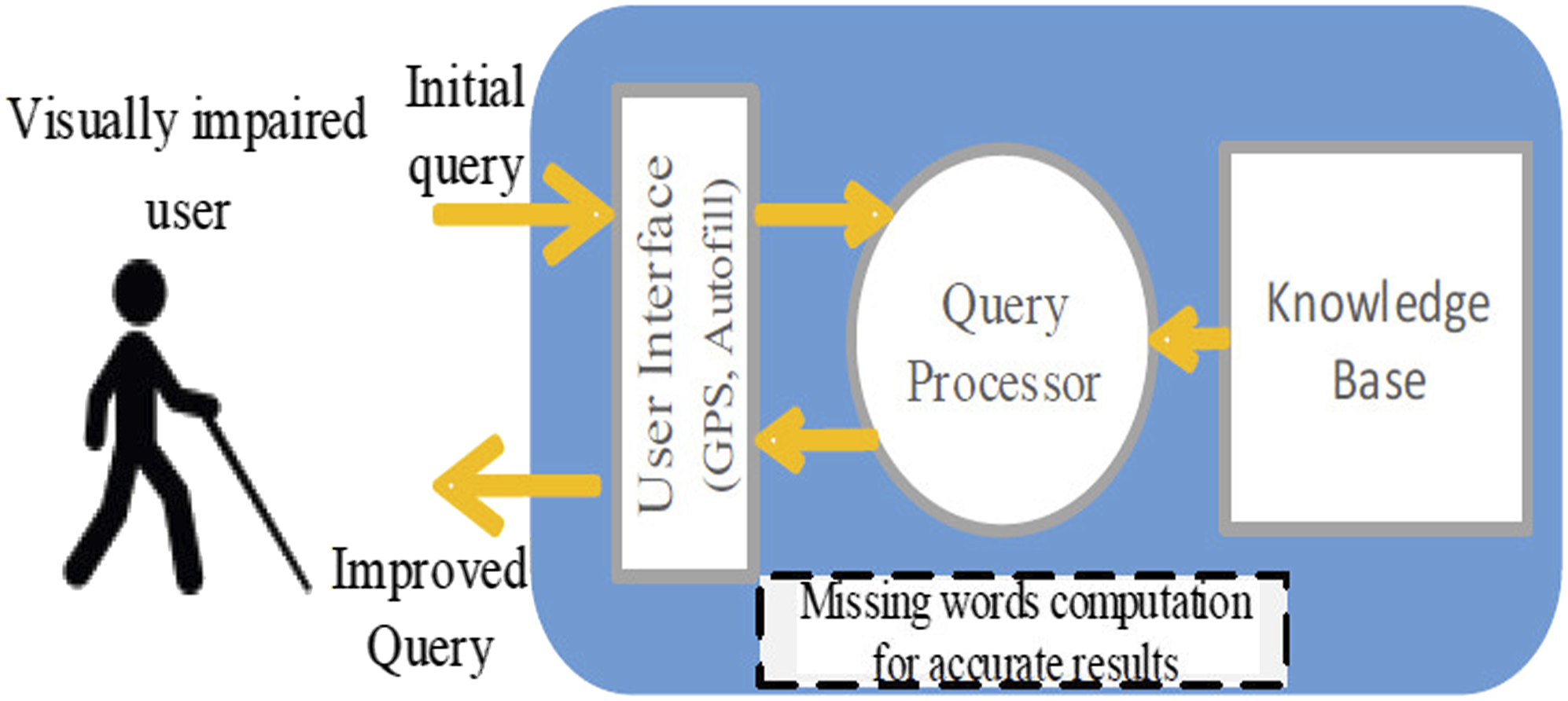

The proposed smart navigation system is designed for visually impaired people at any location. The system is built on a fog-connected IoT-cloud environment. In particular, a hybrid cloud environment is considered, in which a combination of public and private cloud servers is deployed. The proposed system architecture is shown in Figure 2. The detailed description of the proposed model is given below.

Figure 2. System architecture.

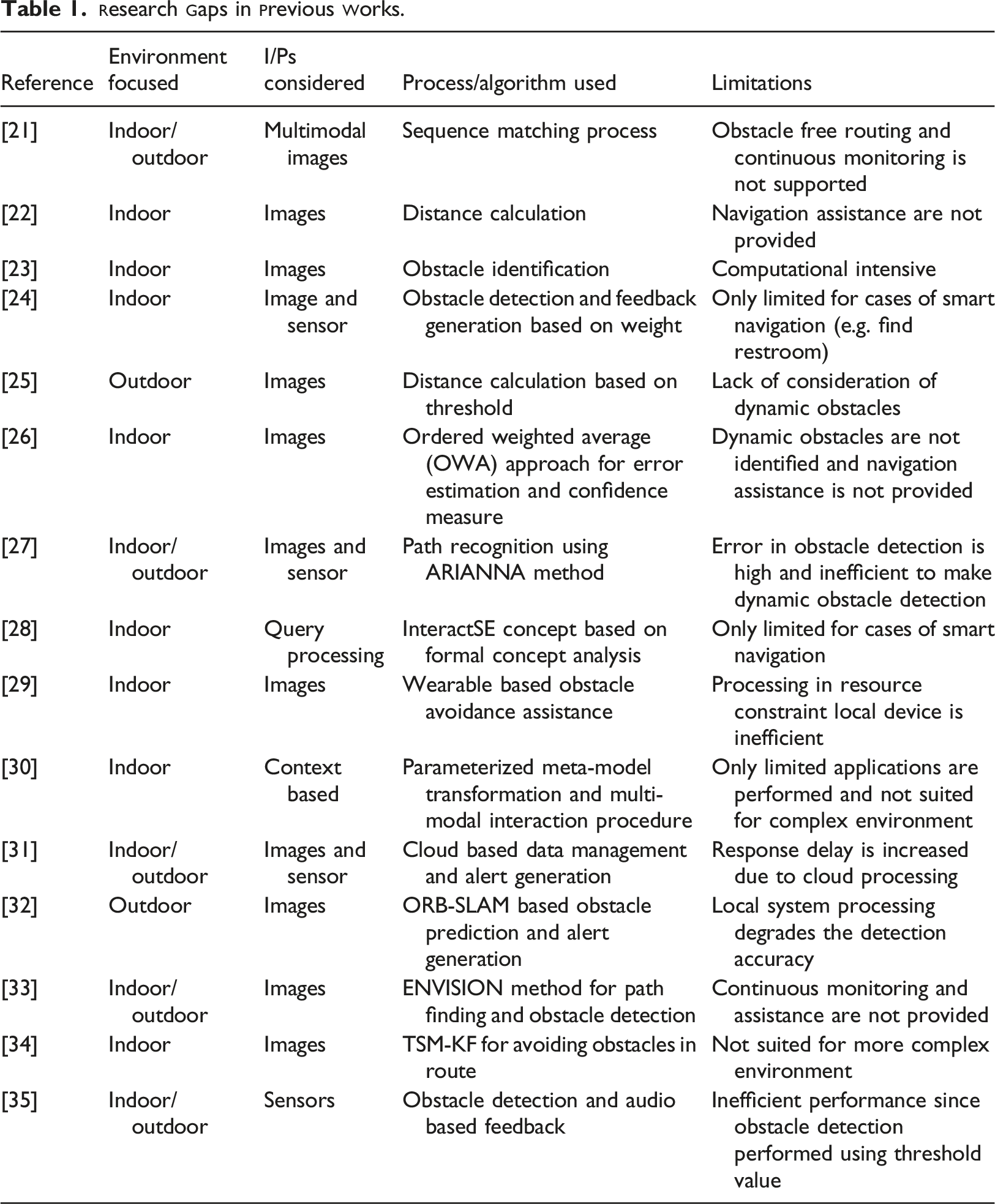

Query processing

Initially, the current location of the user (a blind person) is obtained from GPS, and the destination is obtained from the user as a query, which is processed in a query processor. In this step, missing words in the user’s query are filled in and a new query is built for retrieving accurate navigation results. Figure 3 depicts this process.

Figure 3. User query processing.
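The paper does not state how the query processor repairs a query, so the following is only a minimal sketch of one plausible approach: fuzzy matching of each recognized word against a vocabulary of known words. The vocabulary `KNOWN_WORDS`, the function `improve_query`, and the `cutoff` value are all hypothetical names and values introduced for illustration.

```python
import difflib

# Hypothetical vocabulary; a real system would load place names from a map database.
KNOWN_WORDS = ["take", "me", "to", "the", "central", "library", "station", "hospital"]


def improve_query(raw_query, vocabulary=KNOWN_WORDS, cutoff=0.7):
    """Replace each unrecognized token with its closest vocabulary word, if any.

    This is an assumed stand-in for the paper's query processor, not its method.
    """
    improved = []
    for token in raw_query.lower().split():
        if token in vocabulary:
            improved.append(token)
            continue
        # get_close_matches returns the best candidates above the similarity cutoff.
        matches = difflib.get_close_matches(token, vocabulary, n=1, cutoff=cutoff)
        improved.append(matches[0] if matches else token)
    return " ".join(improved)


print(improve_query("take me to the centrl libary"))
```

Tokens with no sufficiently similar vocabulary entry are left unchanged, so an unknown destination is passed on rather than silently replaced.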

Safe route selection

Safe route selection is performed by the expert system based on the user’s query. In this step, multiple routes are determined from the source to the destination of the user. The routes are selected based on multiple criteria that are vital in route selection: traffic flow, human density, walking speed, number of traffic signals, travel time, and number of turns. For instance, walking speed helps estimate the time needed to cross at a traffic signal, and the signal time must be greater than or equal to the crossing time implied by the user’s walking speed.

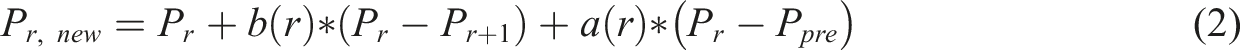

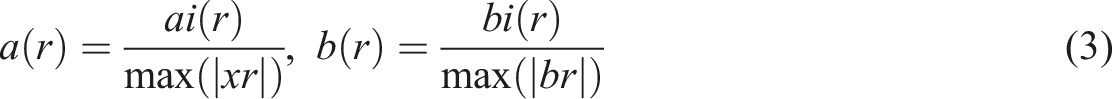

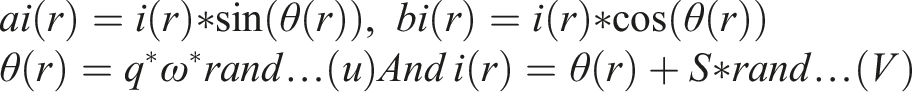

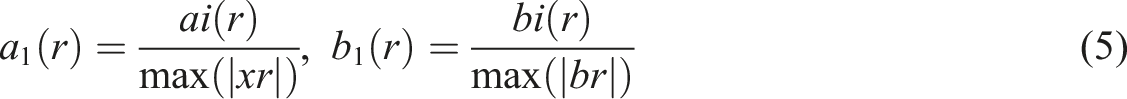

The Environment-aware Bald Eagle Search Optimization algorithm (E-BES) is used for route selection. Initially, the select phase is implemented, in which all the available routes to the destination are selected, which can be expressed as,

Here,

Where q is used to determine the edge and S is used to compute the number of cycles in the search phase. The bald eagle travels in a spiral pattern to determine the safest route based on the parameters computed in the search phase.

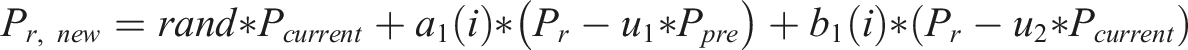

The parameters q and S are varied to avoid getting stuck in local minima. In the swoop phase, the bald eagle classifies the routes into three classes, namely safe, risky, and unknown, and the safest route is suggested to the user by the expert system. This can be represented as

In this way, the bald eagle selects the safest route from the source to the destination. The pseudocode for safest route selection is provided below; it comprises initialization and fitness calculation, followed by the select, search, and swoop phases, repeated until the safest route is determined.

Initialize the routes randomly from source to destination;
Fitness calculation for every route:
WHILE (safest route not determined)
  Select phase
  For (every route r)
    If
    Else if ft
    End Else If
    End If
  End For
  Search phase
  For (every route r)
    If
    Else if ft
    End Else If
    End If
  End For
  Swoop phase
  For (each route r)
    If
    Else if ft
    End Else If
    End If
  End For
  Set m=m+1;
END WHILE
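The outcome of the swoop phase, a three-class labeling of routes followed by a choice of the safest one, can be illustrated with a much simpler stand-in. The sketch below does not implement E-BES; it uses a plain weighted risk score over a few of the criteria named above. The `Route` fields, the weights in `WEIGHTS`, and `SAFE_THRESHOLD` are assumptions made for illustration, not values from the paper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Route:
    name: str
    # Each criterion is normalized to [0, 1]; None means no data is available.
    traffic_flow: Optional[float]
    human_density: Optional[float]
    turns: Optional[float]


# Hypothetical weights and threshold, not taken from the paper.
WEIGHTS = {"traffic_flow": 0.5, "human_density": 0.3, "turns": 0.2}
SAFE_THRESHOLD = 0.4


def risk_score(route):
    """Weighted sum of the criteria, or None when any criterion is missing."""
    values = {key: getattr(route, key) for key in WEIGHTS}
    if any(value is None for value in values.values()):
        return None
    return sum(WEIGHTS[key] * value for key, value in values.items())


def classify(route):
    """Label a route as 'safe', 'risky', or 'unknown'."""
    score = risk_score(route)
    if score is None:
        return "unknown"
    return "safe" if score <= SAFE_THRESHOLD else "risky"


def safest_route(routes):
    """Return the lowest-risk route among those labeled safe, or None."""
    safe = [route for route in routes if classify(route) == "safe"]
    return min(safe, key=risk_score) if safe else None


routes = [
    Route("A", 0.2, 0.1, 0.5),
    Route("B", 0.9, 0.8, 0.3),
    Route("C", None, 0.2, 0.1),
]
print([(r.name, classify(r)) for r in routes], safest_route(routes).name)
```

A metaheuristic such as bald eagle search would replace the exhaustive scoring here when the number of candidate routes is too large to enumerate.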

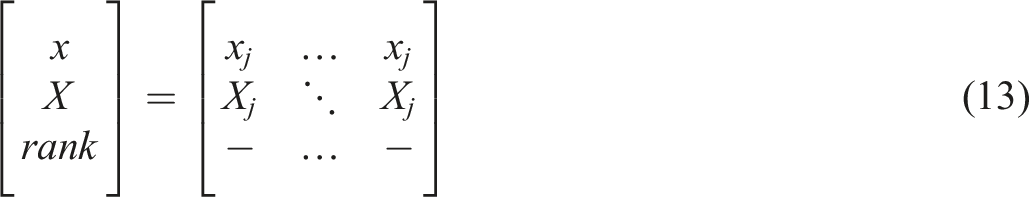

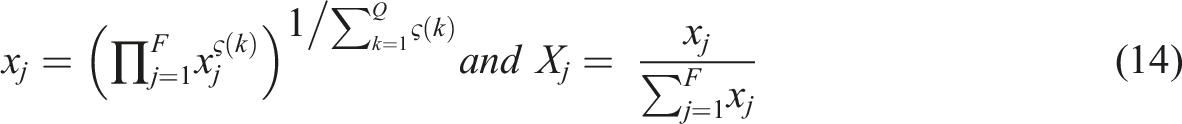

Optimal fog node selection

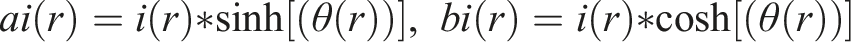

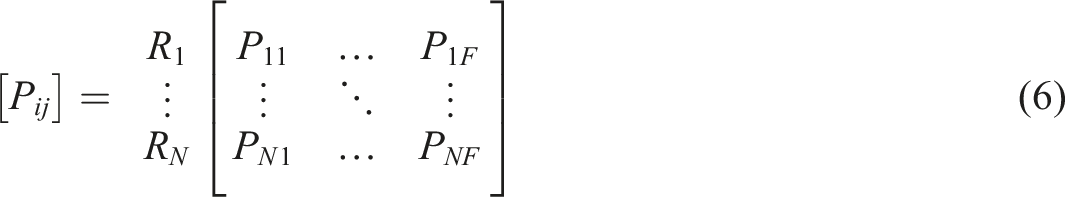

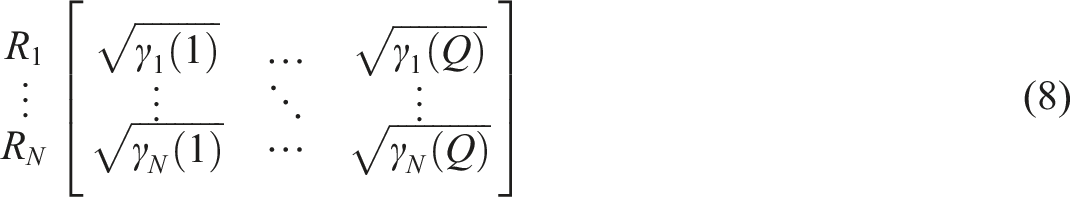

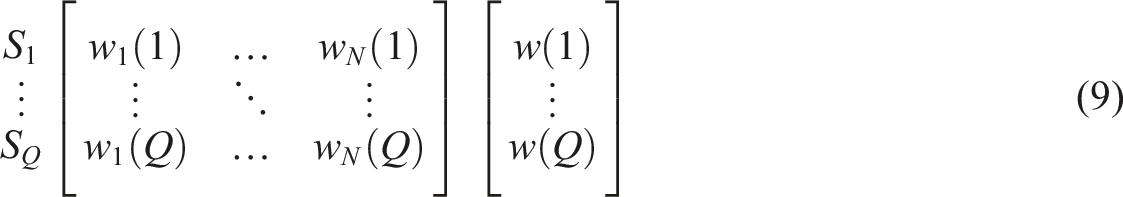

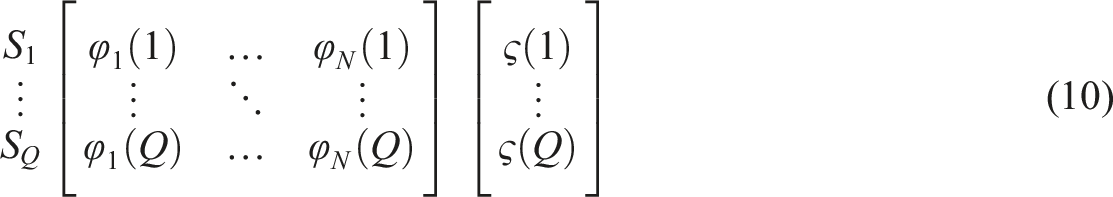

Fog nodes are deployed to reduce the latency incurred when processing directly in the cloud server. Introducing fog nodes into the environment reduces processing time and helps the user within the time constraint. To do so, the fog node must be selected optimally. We select the optimal fog node using the Nearest Grey Absolute Decision Making Algorithm (GADA), in which the decision is made based on multiple parameters: distance to the user, residual energy, buffer state, delay, and path duration (assistance duration time). These parameters are computed for all fog nodes, and based on them the nearest idle or underloaded fog node is selected. If F denotes the number of alternative fog nodes

The absolute grey relation grade

In which

The parametric weights (w) are expressed as,

In which w (k) is the geometric mean of all the weights of the parameters, and the simulated weights of the criteria are computed as,

Here,

The relative weight

Finally, the weight aggregation of each criterion is formulated and can be represented as,

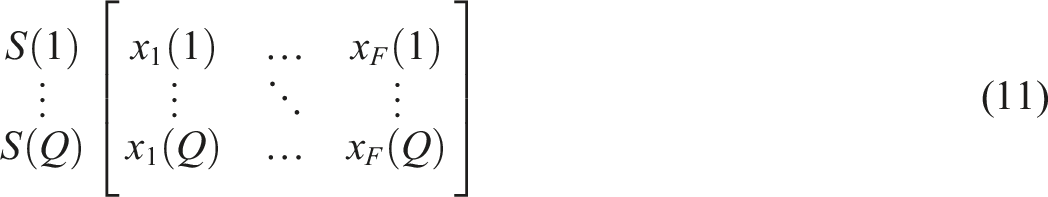
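As a rough illustration of grey-relation-based fog node selection, the sketch below uses the standard grey relational analysis formulation (normalization, grey relational coefficient against an ideal reference, weighted grade). This is an assumed stand-in for the paper's absolute grey relation grade, whose exact equations are not reproduced here. The example node values, the equal weights, and the distinguishing coefficient `rho = 0.5` are illustrative assumptions.

```python
def normalize(column, benefit):
    """Min-max normalize one criterion so that 1.0 is always the best value."""
    lo, hi = min(column), max(column)
    if hi == lo:
        return [1.0] * len(column)
    if benefit:  # larger is better (e.g. residual energy)
        return [(v - lo) / (hi - lo) for v in column]
    return [(hi - v) / (hi - lo) for v in column]  # smaller is better (e.g. delay)


def grey_relational_grades(nodes, benefit_flags, weights, rho=0.5):
    """Weighted grey relational grade of each node against the ideal node."""
    columns = list(zip(*nodes))
    norm_cols = [normalize(list(c), b) for c, b in zip(columns, benefit_flags)]
    rows = list(zip(*norm_cols))
    # Deviation of every normalized value from the ideal reference value 1.0.
    deltas = [[1.0 - v for v in row] for row in rows]
    flat = [d for row in deltas for d in row]
    d_min, d_max = min(flat), max(flat)
    if d_max == 0:  # all nodes are identical on every criterion
        return [sum(weights)] * len(nodes)
    grades = []
    for row in deltas:
        coeffs = [(d_min + rho * d_max) / (d + rho * d_max) for d in row]
        grades.append(sum(w * c for w, c in zip(weights, coeffs)))
    return grades


# Columns: distance (m), residual energy (J), buffer occupancy, delay (ms), path duration (s).
fog_nodes = [
    (50, 80, 0.3, 10, 120),
    (20, 90, 0.1, 5, 150),
    (70, 40, 0.8, 25, 60),
]
benefit = [False, True, False, False, True]
weights = [0.2, 0.2, 0.2, 0.2, 0.2]  # hypothetical equal weights

grades = grey_relational_grades(fog_nodes, benefit, weights)
best = grades.index(max(grades))
print(grades, best)
```

The node with the highest grade is the one closest to the ideal on the weighted criteria; in this example the second node is best on every criterion, so it is selected.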

Relevant info retrieval

For reference information such as images and environment or roadside sensor details, relevant information is retrieved from the cloud by the fog node. In the fog node, the reference image is compared with the database images by means of the Euclidean distance. Similarly, the reference sensor information is compared with the sensor information in the database. The reference images and sensor signals are obtained from the user’s wearable. For information retrieval, the distance between the feature vectors of the reference image or signal and the database image or signal is calculated, which can be represented as

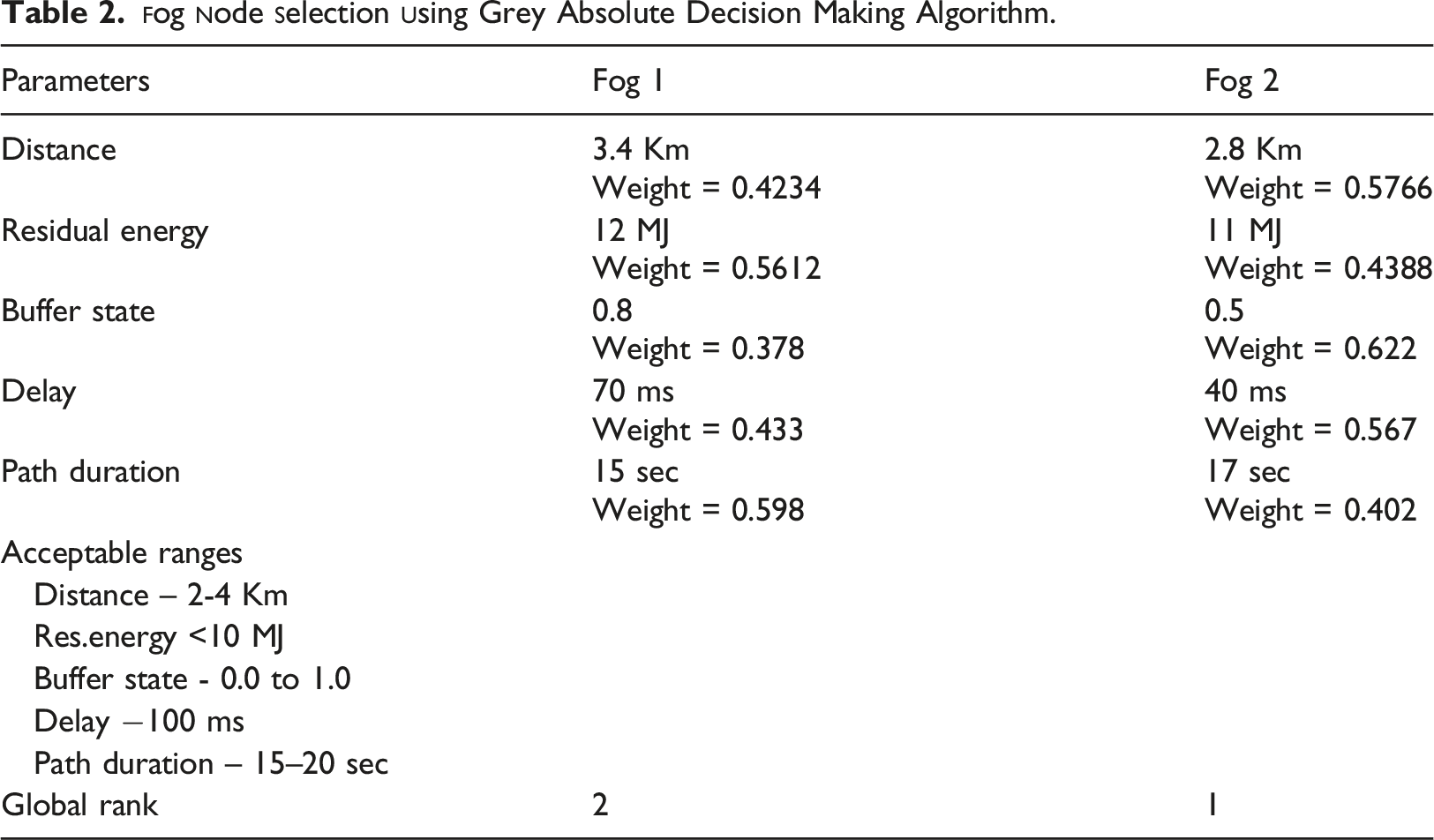
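The retrieval step can be sketched as a nearest-neighbor lookup: the Euclidean distance is the square root of the summed squared differences between two feature vectors, and the database entry with the smallest distance to the reference is returned. The feature vectors and the entry names below are made-up illustrative values; how features are extracted from images or sensor signals is not specified here.

```python
import math


def euclidean_distance(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def retrieve_nearest(reference, database):
    """Return the key of the database entry closest to the reference vector."""
    return min(database, key=lambda key: euclidean_distance(reference, database[key]))


# Hypothetical feature database keyed by scene label.
database = {
    "crosswalk": [0.9, 0.1, 0.3],
    "staircase": [0.2, 0.8, 0.5],
    "corridor": [0.4, 0.4, 0.9],
}
reference = [0.85, 0.15, 0.35]
print(retrieve_nearest(reference, database))
```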

Multi-obstacle detection

Obstacles in the environment can be classified into two classes, namely static and dynamic. To design a smart navigation system, both static and dynamic obstacles must be identified and the path of the visually impaired person adjusted accordingly. The YOLOv3 Tiny algorithm is implemented to detect obstacles accurately and to classify them into three classes, namely small, medium, and large obstacles, which further improves accuracy. YOLOv3 Tiny has lower complexity and time consumption. The proposed YOLOv3 Tiny learns multiple significant features that adaptively support complex scene shapes, and it works faster than previous YOLO versions. Initially, the image retrieved from the fog node is divided into a grid of cells; bounding box and class prediction are then carried out at three scales to detect the objects in a meaningful manner. The multi-obstacle detection is shown in Figure 4, in which small, medium, and large obstacles are identified accurately.

Figure 4. Multi-obstacle detection using YOLOv3.
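The paper does not give the rule that separates small, medium, and large obstacles. One simple possibility, sketched below, is to label each detected bounding box by the fraction of the image area it covers. The function name `size_class` and the thresholds `small_max` and `medium_max` are hypothetical; the detector itself is not shown, and the boxes are assumed to arrive as pixel corner coordinates.

```python
def size_class(box, image_width, image_height, small_max=0.02, medium_max=0.15):
    """Label a bounding box by the fraction of the image area it covers.

    box is (x_min, y_min, x_max, y_max) in pixels. The thresholds are
    illustrative assumptions, not values from the paper.
    """
    x_min, y_min, x_max, y_max = box
    area = max(0, x_max - x_min) * max(0, y_max - y_min)
    ratio = area / (image_width * image_height)
    if ratio <= small_max:
        return "small"
    if ratio <= medium_max:
        return "medium"
    return "large"


# Example detections on a 416 x 416 input image.
detections = [(0, 0, 40, 40), (0, 0, 150, 150), (0, 0, 300, 300)]
print([size_class(box, 416, 416) for box in detections])
```

A size label of this kind could then drive the alert policy, for example by suppressing alerts for small obstacles that do not block the path.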

Decision making by fusion

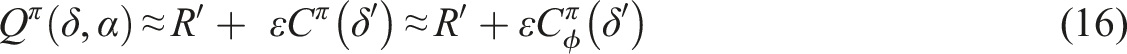

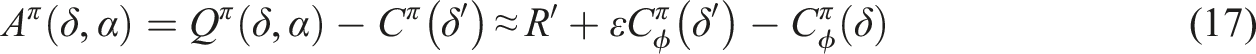

The moving decision for the visually impaired user is made by considering both the image-based (IR) and sensor-based (SR) detection results. This information is fused in order to provide precise decisions dynamically. To learn the environment and choose the decision adaptively, the Adaptive Asynchronous Advantage Actor-Critic (A3C) algorithm is used. The decisions that assist the visually impaired user include go forward (GF), stop, take right (TR) and take left (TL). The data analysis and navigation results are provided by the fog node selected in the previous process. This minimizes the latency involved in processing the decision and appears to be effective in a resource-constrained IoT environment. Alerts are generated based on the impact of the detected obstacle, and the safe routing decision is made based on emergency events. The actor-critic comprises two networks in order to represent the policy

In which

The training of the critic for the respective policy

The approximation is performed in order to update the target in each state which can be represented as

Through this method, shown in Figure 5, the proposed smart navigation system provides the dynamic moving decision for the visually impaired user. The pseudocode for the decision-making process is presented below; in each move it comprises advantage estimation, target computation, critic loss computation, critic gradient computation and actor gradient computation.

Figure 5. A3C for decision making.

Input: IR, SR
Output: D {GF, TL, TR, stop}
Initialization of weights
Use policy
For each move M:
Advantage estimation
Target computation
Critic loss computation
Critic gradient computation
Actor gradient computation
Compute
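To make the per-move steps concrete, the sketch below performs one update of a plain single-worker advantage actor-critic with a tabular softmax policy over the four decisions. It is not the adaptive, asynchronous variant proposed in the paper: the state encoding, reward, learning rates and all function names are assumptions chosen for illustration.

```python
import math

ACTIONS = ["GF", "TL", "TR", "stop"]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def actor_critic_step(logits, values, state, action, reward, next_state,
                      done, gamma=0.99, lr_actor=0.1, lr_critic=0.1):
    """One-step advantage actor-critic update (tabular, single worker)."""
    # Target computation: bootstrapped one-step return.
    v_next = 0.0 if done else values[next_state]
    target = reward + gamma * v_next
    # Advantage estimation: how much better the move was than expected.
    advantage = target - values[state]
    # Critic loss and gradient: loss = 0.5 * advantage^2, so
    # d(loss)/dV(state) = -advantage; take a gradient-descent step.
    critic_loss = 0.5 * advantage ** 2
    values[state] += lr_critic * advantage
    # Actor gradient: d log pi(a|s) / d logit_i = 1[i == a] - pi_i.
    probs = softmax(logits[state])
    for i in range(len(ACTIONS)):
        grad_log_pi = (1.0 if i == action else 0.0) - probs[i]
        logits[state][i] += lr_actor * advantage * grad_log_pi
    return advantage, critic_loss


# Hypothetical fused (IR, SR) observation encoded as state ids 0 and 1.
logits = {0: [0.0] * 4, 1: [0.0] * 4}
values = {0: 0.0, 1: 0.0}
adv, loss = actor_critic_step(logits, values, state=0, action=0,
                              reward=1.0, next_state=1, done=False)
```

In A3C proper, several workers run this kind of update in parallel on their own copies of the environment and apply their gradients asynchronously to shared network weights.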

Heterogeneous data management for smart navigation

The management of heterogeneous data is important for providing efficient assistance to the users. The massive heterogeneous data is handled by performing two significant tasks, namely,
• Redundant and fault data prediction and pruning.
• Similar information grouping.

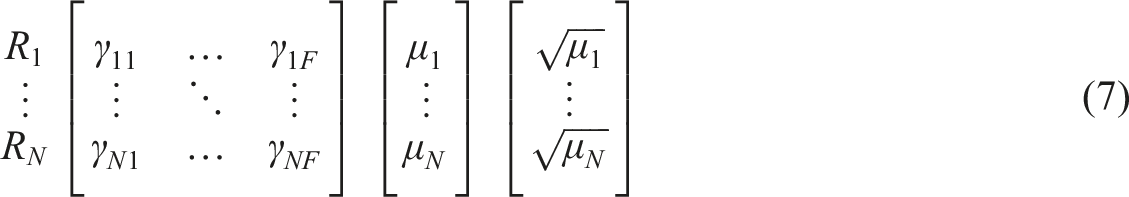

Redundant and fault data prediction and pruning

The signal readings from the user's wearables are obtained, and fault data is predicted in order to prune the errors associated with the readings, thereby further improving the accuracy of obstacle detection. The Extended Kalman Filter based on minimum distance is implemented in order to reduce the redundant data in the signal. The state of the signal is estimated, which can be expressed as

The error covariance for the respective state of the signal is obtained by

The error covariance is used to obtain the Kalman gain for the respective state, which can be formulated as,

The next state of the signal is estimated by means of the Kalman gain and can be represented as follows,

The updated error covariance is computed from the updated Kalman gain, which reduces the fault in the signal; this can be expressed as,
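As a rough illustration of the predict, gain and update cycle described in this subsection, the sketch below uses the textbook scalar Kalman recursion, which the extended Kalman filter reduces to when the state and measurement models are linear. It is not the authors' formulation. In particular, the distance gate used to prune faulty readings is only one possible interpretation of "minimum distance based", and the noise values `q` and `r`, the `gate` threshold and the function name are assumptions.

```python
def kalman_step(x, p, z, q=1e-3, r=0.25, gate=3.0):
    """One predict/update cycle for a scalar range reading.

    x, p : previous state estimate and its error covariance
    z    : new sensor reading
    q, r : process and measurement noise variances (assumed values)
    gate : readings whose innovation exceeds `gate` standard
           deviations are treated as faulty and pruned (assumption)
    Returns (x_new, p_new, accepted).
    """
    # Predict: identity state model, so only the covariance grows.
    x_pred = x
    p_pred = p + q
    # Innovation and its variance.
    innovation = z - x_pred
    s = p_pred + r
    # Prune the reading if it lies too far from the prediction.
    if innovation * innovation > gate * gate * s:
        return x_pred, p_pred, False
    # Kalman gain, state update and covariance update.
    k = p_pred / s
    x_new = x_pred + k * innovation
    p_new = (1.0 - k) * p_pred
    return x_new, p_new, True


# Hypothetical ultrasound ranges in metres; 50.0 is an injected fault.
x, p = 2.0, 1.0
x, p, ok_first = kalman_step(x, p, 2.1)
x_after, p_after, ok_fault = kalman_step(x, p, 50.0)
```

When a reading is rejected, the estimate is carried forward unchanged and only the covariance grows, so a single spurious echo does not pull the range estimate away from its previous value.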

Similar information grouping

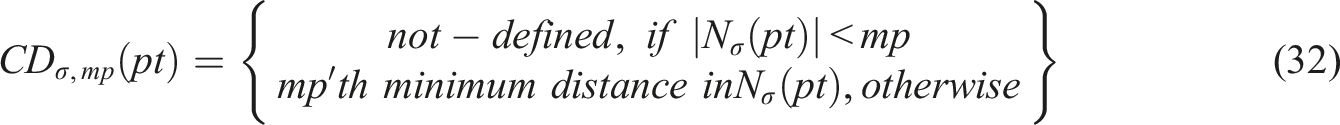

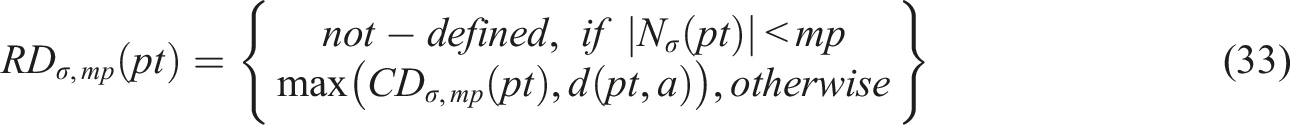

The massive heterogeneous data in the database increases the complexity of the retrieval process. To solve this problem, the data are clustered using spatio-temporal OPTICS clustering, in which similar images and signals are grouped together, further reducing processing latency. The maximum distance with respect to the core point of the cluster is presented as

In which CD refers to the core distance,

Each data point in OPTICS is processed once, in which the
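For orientation, the standard OPTICS quantities can be sketched as follows: the core distance of a point is the distance to its `min_pts`-th nearest neighbour within radius `eps`, and the reachability distance of another point from it is the maximum of that core distance and the actual distance between the two. The combined spatio-temporal metric, the `time_weight` parameter and the convention that a point does not count as its own neighbour are assumptions made for this sketch, not details taken from the paper.

```python
import math


def st_distance(a, b, time_weight=1.0):
    """Distance between two (x, y, t) records (assumed metric)."""
    (ax, ay, at), (bx, by, bt) = a, b
    return math.sqrt((ax - bx) ** 2 + (ay - by) ** 2
                     + (time_weight * (at - bt)) ** 2)


def core_distance(points, idx, eps, min_pts):
    """Distance to the min_pts-th neighbour within eps, or None.

    The point itself is not counted as a neighbour (convention
    chosen for this sketch).
    """
    within = sorted(
        d for j, q in enumerate(points)
        if j != idx and (d := st_distance(points[idx], q)) <= eps
    )
    if len(within) < min_pts:
        return None  # not a core point
    return within[min_pts - 1]


def reachability_distance(points, core_idx, other_idx, eps, min_pts):
    """max(core distance of core_idx, distance to other_idx), or None."""
    cd = core_distance(points, core_idx, eps, min_pts)
    if cd is None:
        return None
    return max(cd, st_distance(points[core_idx], points[other_idx]))


# Hypothetical records: three nearby readings and one far-away outlier.
pts = [(0, 0, 0), (1, 0, 0), (0, 2, 0), (10, 10, 10)]
cd0 = core_distance(pts, 0, eps=3.0, min_pts=2)
rd01 = reachability_distance(pts, 0, 1, eps=3.0, min_pts=2)
```

OPTICS visits each point once and orders the points by reachability distance; clusters then appear as valleys in that ordering.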

Limitations

The limitations of our system are as follows:
• Privacy and security of personal data are major concerns, particularly for technology-based navigation systems for visually impaired people.
• When building a navigation system for blind and visually impaired people, the management of private and personal data should be taken into account.

Experimental results

This section evaluates the proposed model through extensive simulations. It is divided into three sub-sections, namely simulation setup, comparative analysis and research highlights, to demonstrate the efficiency of the proposed model.

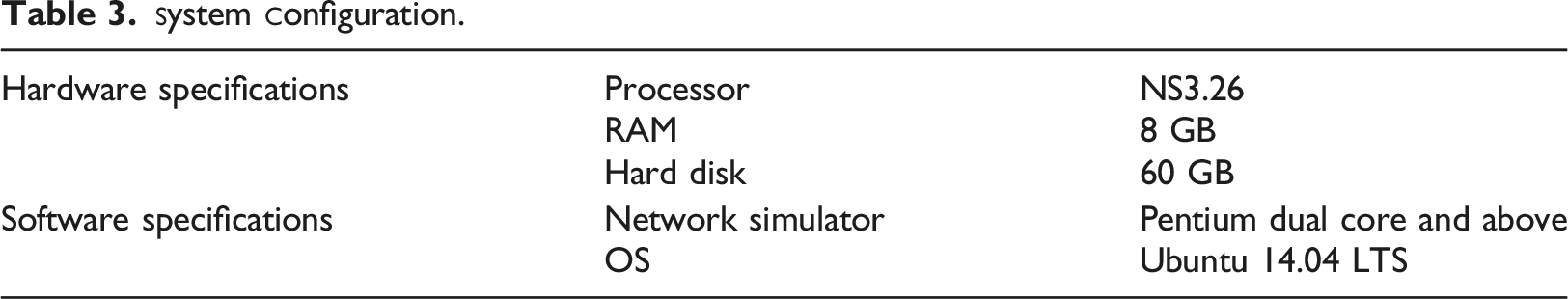

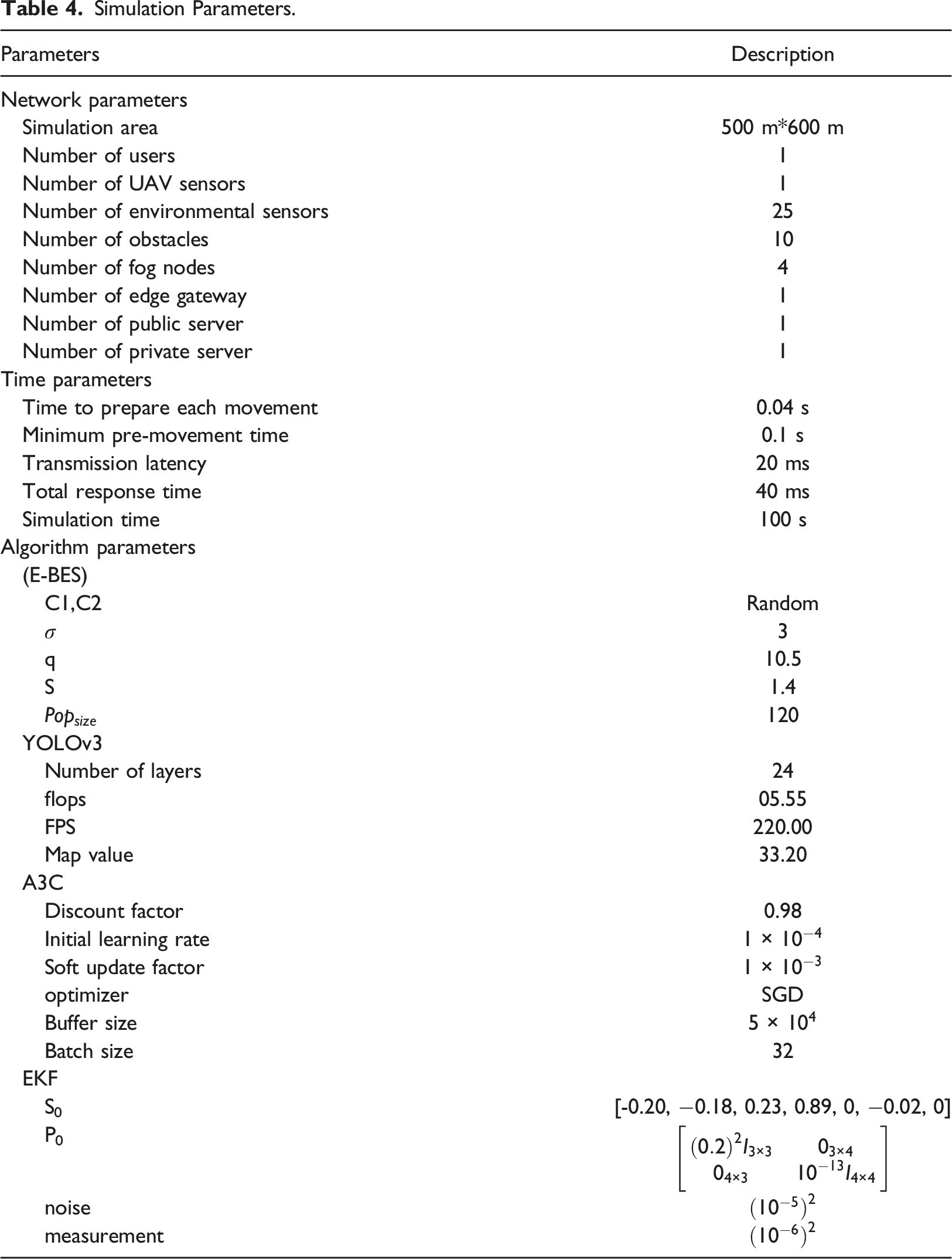

Simulation setup

Simulation Parameters.

The following devices are used to design the smart navigation system for visually impaired people in both indoor and outdoor environments:
• Ultrasound sensors – obstacles are detected by analyzing the time taken for the transmitted signal to be received.
• Smartphone – the input query of the visually impaired user is interpreted and processed in order to improve accuracy.
• Camera – reference images from the camera are analyzed for obstacle detection, and assistance is provided to the user on that basis.
• GPS – the coordinates (x, y and z) of the user's current position are obtained in order to navigate the user from source to destination.
• UAV – unmanned aerial vehicles are deployed to monitor the users within a particular coverage area.
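The ultrasound item above relies on time-of-flight ranging: the pulse travels to the obstacle and back, so the one-way distance is half the round-trip time multiplied by the speed of sound. A minimal sketch follows, assuming a speed of sound of about 343 m/s (dry air at roughly 20 °C); the function name and example timing are illustrative.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate value for dry air at about 20 degrees C


def echo_distance_m(round_trip_s, speed=SPEED_OF_SOUND_M_S):
    """Distance to an obstacle from an ultrasonic echo's round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse covers the distance twice (out and back), hence the / 2.
    return speed * round_trip_s / 2.0


# A hypothetical 10 ms round trip corresponds to roughly 1.7 m.
distance = echo_distance_m(0.010)
```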

Use case

The fog-aided smart navigation system for both indoor and outdoor environments is illustrated in Figure 6, in which the visually impaired user receives navigation assistance based on the obstacles detected in the environment. In the outdoor environment, the user's wearable camera and the UAV camera capture the user's surroundings for obstacle detection. Environmental sensors are deployed in the environment (trees), and ultrasound sensors are also deployed to improve the accuracy of obstacle detection. Initially, the user's query is acquired from the smartphone, where query processing takes place. The safest route is then selected based on the user's surroundings, such as obstacles, traffic intensity, number of crossovers and the user's walking speed. Finally, smart navigation is provided to the user with the help of fog computing.

In the indoor environment, the user is currently in the lounge and wants to move to the living area. The current location of the user is provided by the GPS, from which the destination is also located. The obstacles in the route are identified by multi-obstacle detection, and the decision about the next move is given to the user as audio feedback. In this way, smart navigation of the user is ensured in both indoor and outdoor scenarios.

Figure 6. Smart navigation system: (a) outdoor and (b) indoor environment.

Comparative analysis

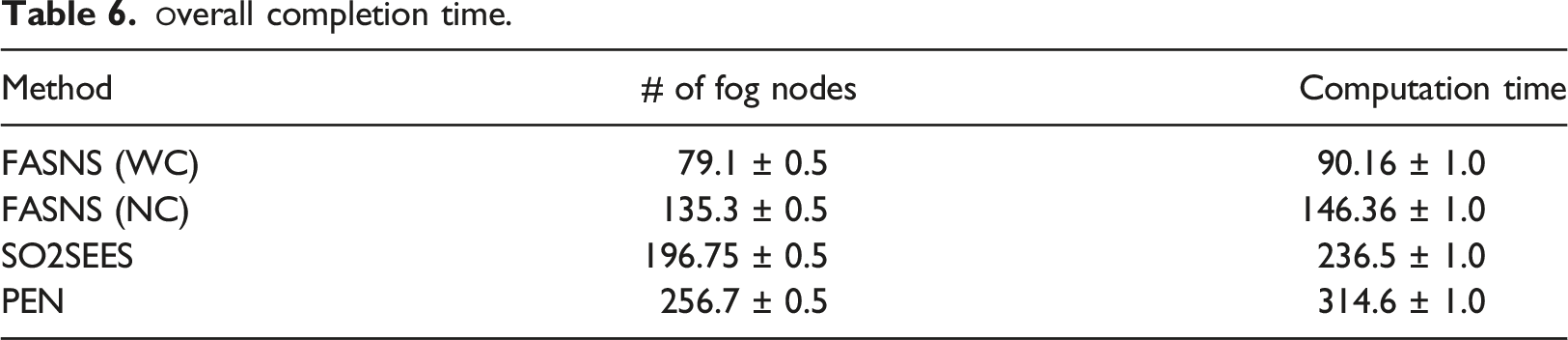

In this sub-section, the proposed fog-assisted smart navigation system (FASNS) for visually impaired people is validated using several performance metrics. Several existing research works are compared with the proposed work in order to demonstrate its efficiency. In particular, latency, task completion time, obstacle detection accuracy, task completion percentage and error rate are evaluated under several scenarios.

Impact of latency

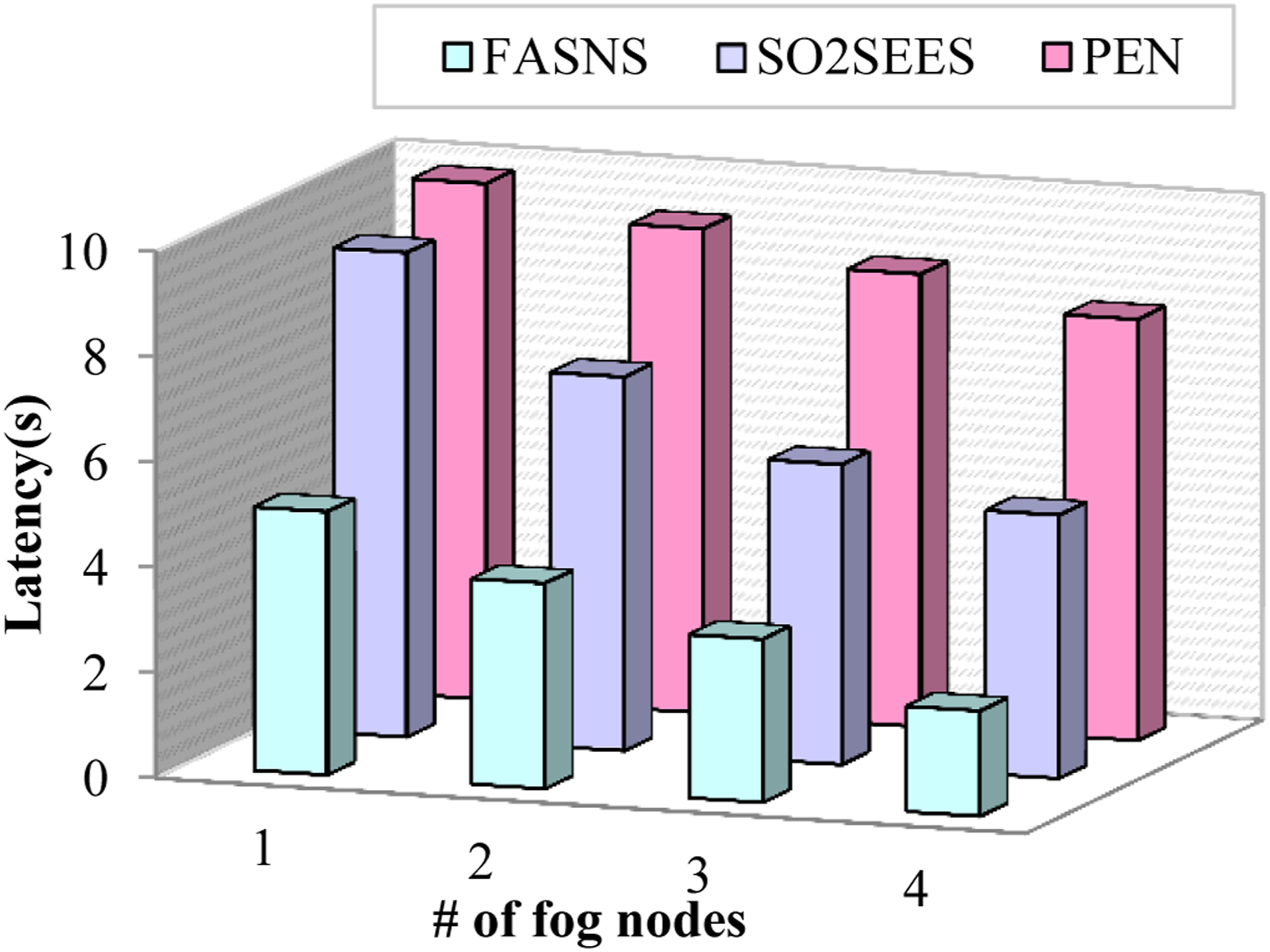

Latency is the total time between the transfer of a task from the user and the reception of the response from the fog node. In Figure 7, the proposed FASNS model is compared with several existing research works in terms of latency. The latency decreases as the number of fog nodes increases, because with more nodes the traffic from the users is managed properly. The FASNS model has lower latency than the existing works due to the selection of the optimal fog node by nearest grey absolute decision making. The existing works did not consider fog node selection, which affects their performance. Figure 8 compares the latency with respect to the cloud processing time for the proposed and existing works. In the absence of a fog node, the tasks are received by the remote cloud server, which increases the latency. The proposed FASNS model has lower latency than the existing works because the massive heterogeneous data is managed efficiently, which reduces complexity and latency significantly. The existing works lack proper data management, which increases their latency.

Figure 7. Number of nodes versus latency.

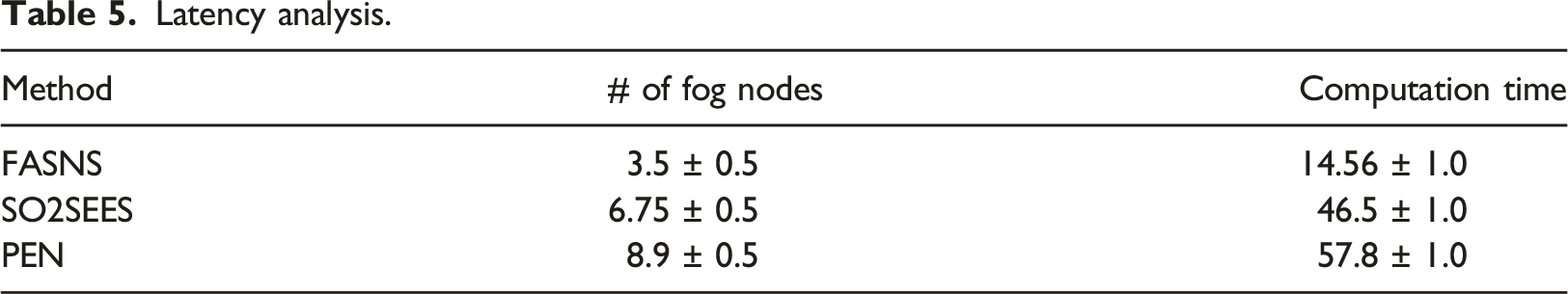

Latency analysis.

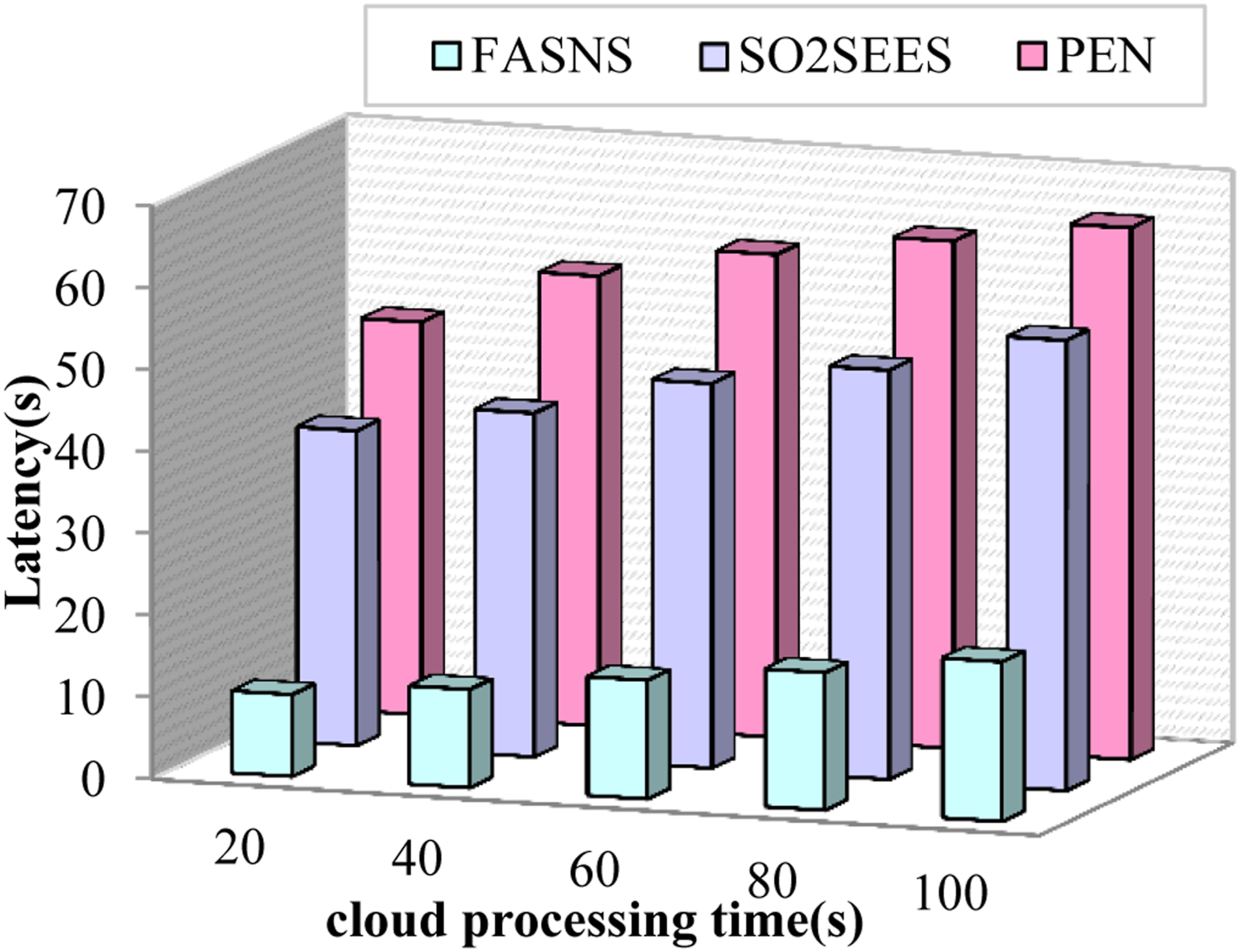

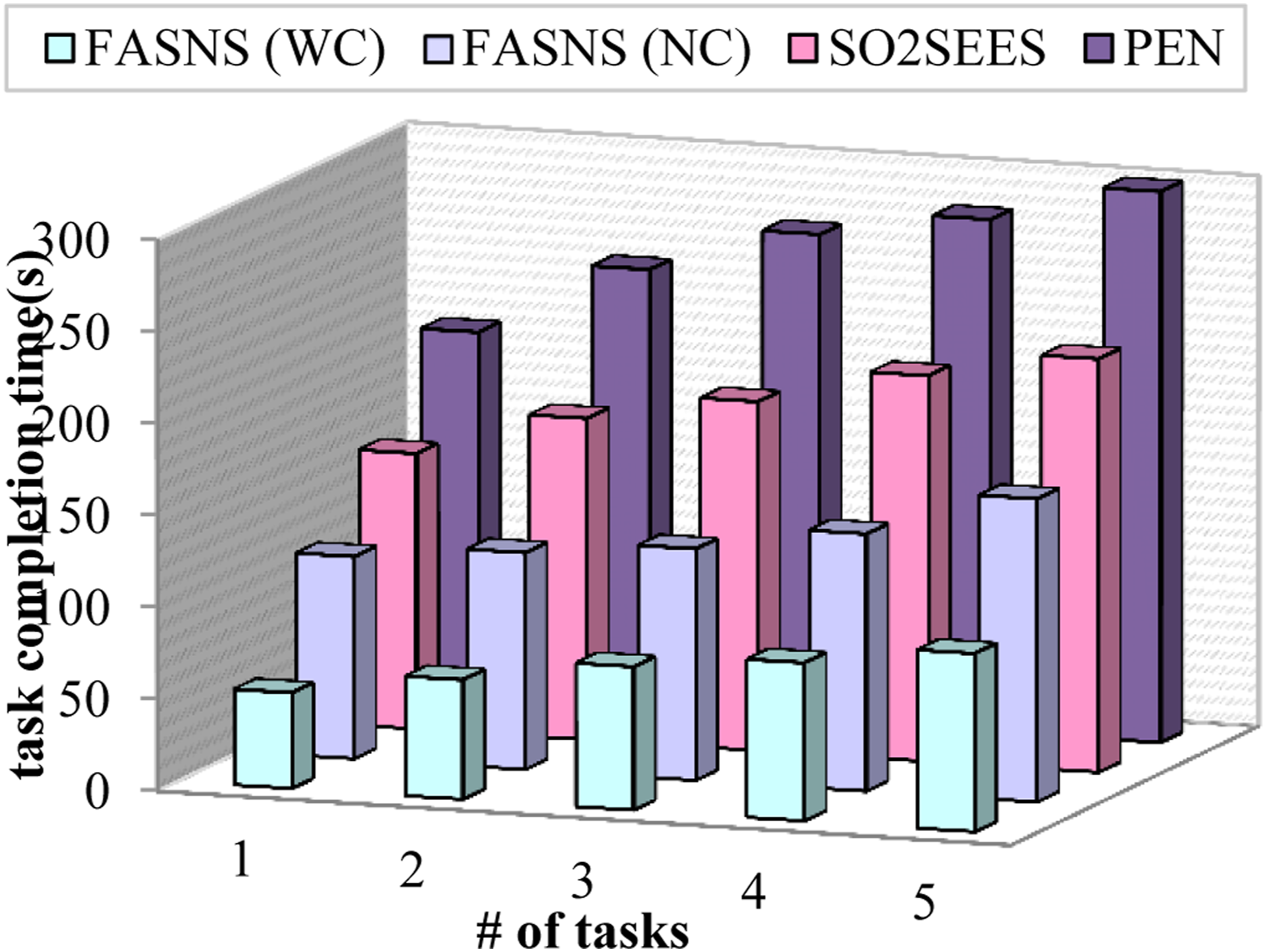

Impact of task completion time

The task completion time is the time taken by the fog node to compute the task given by the visually impaired user. In Figure 9, the task completion time of the proposed FASNS model is compared with that of existing works. Two scenarios of the proposed work are considered: one with clustering of the heterogeneous data and one without. The figure shows that the task completion time increases with the number of tasks. The task completion time of the proposed FASNS with clustering is low because clustering the heterogeneous data reduces the time needed to process a task. The task completion time of the proposed FASNS without clustering is still lower than that of the existing works, owing to the optimal selection of the fog node performed by the gateway. The existing works lack optimal fog node selection and proper management of the available heterogeneous data.

Figure 8. Cloud processing time versus latency.

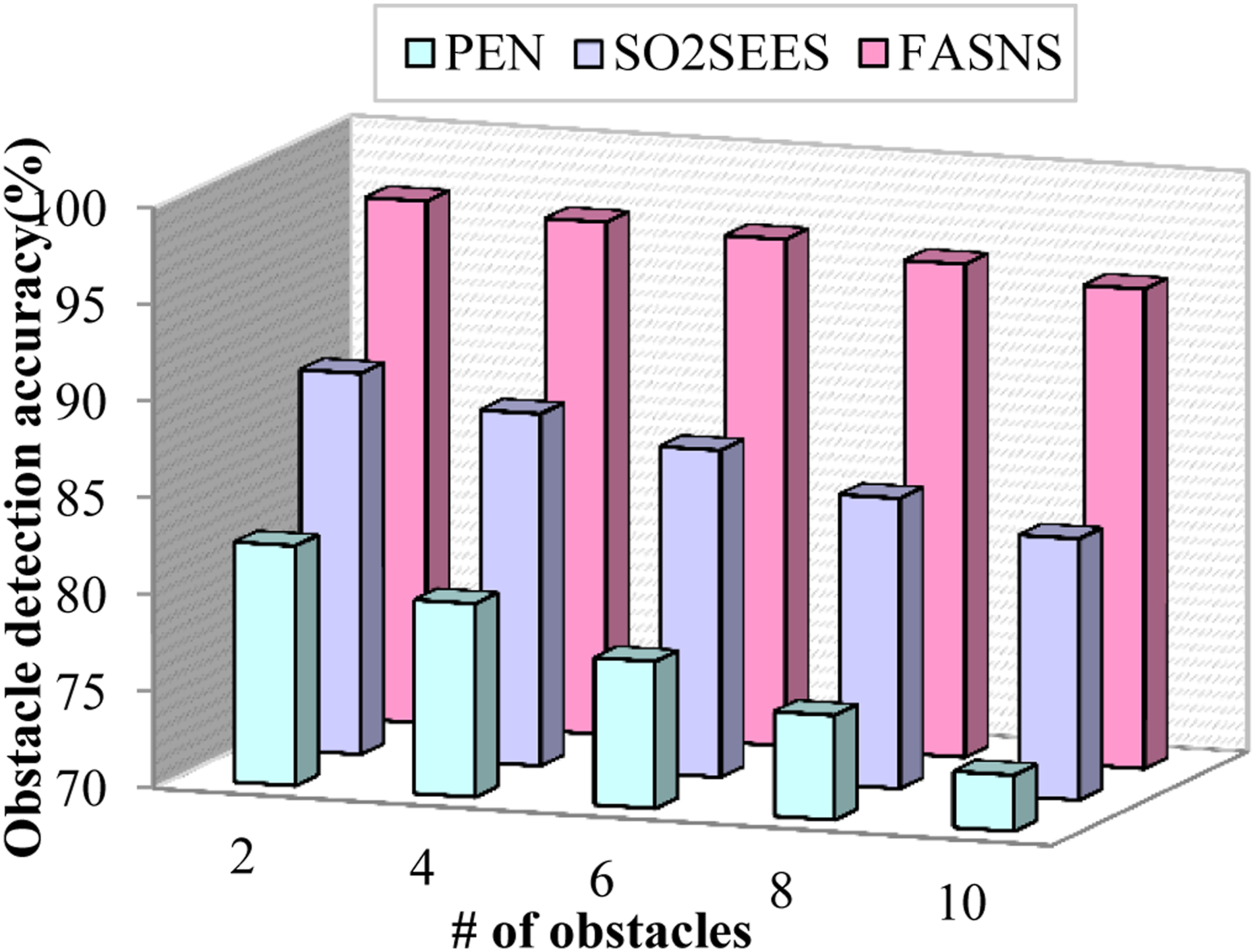

Impact of obstacle detection accuracy

The obstacle detection accuracy is the accuracy with which a method detects obstacles during the navigation of visually impaired people. In Figure 10, the obstacle detection accuracy of the proposed FASNS model is compared with various existing research works with respect to the number of obstacles in the route. The figure shows that the obstacle detection accuracy decreases as the number of obstacles increases. The obstacle detection accuracy of the proposed FASNS model is high due to multi-obstacle detection by YOLOv3 Tiny, which detects multiple obstacles in the given image, and due to the detection and pruning of faulty sensor readings by the extended Kalman filter. The navigation decision of the proposed FASNS model is based on the fusion of image and sensor readings, resulting in increased obstacle detection accuracy. The existing works consider either image details or sensor readings for obstacle detection, but not both.

Figure 10. Number of obstacles versus obstacle detection accuracy.

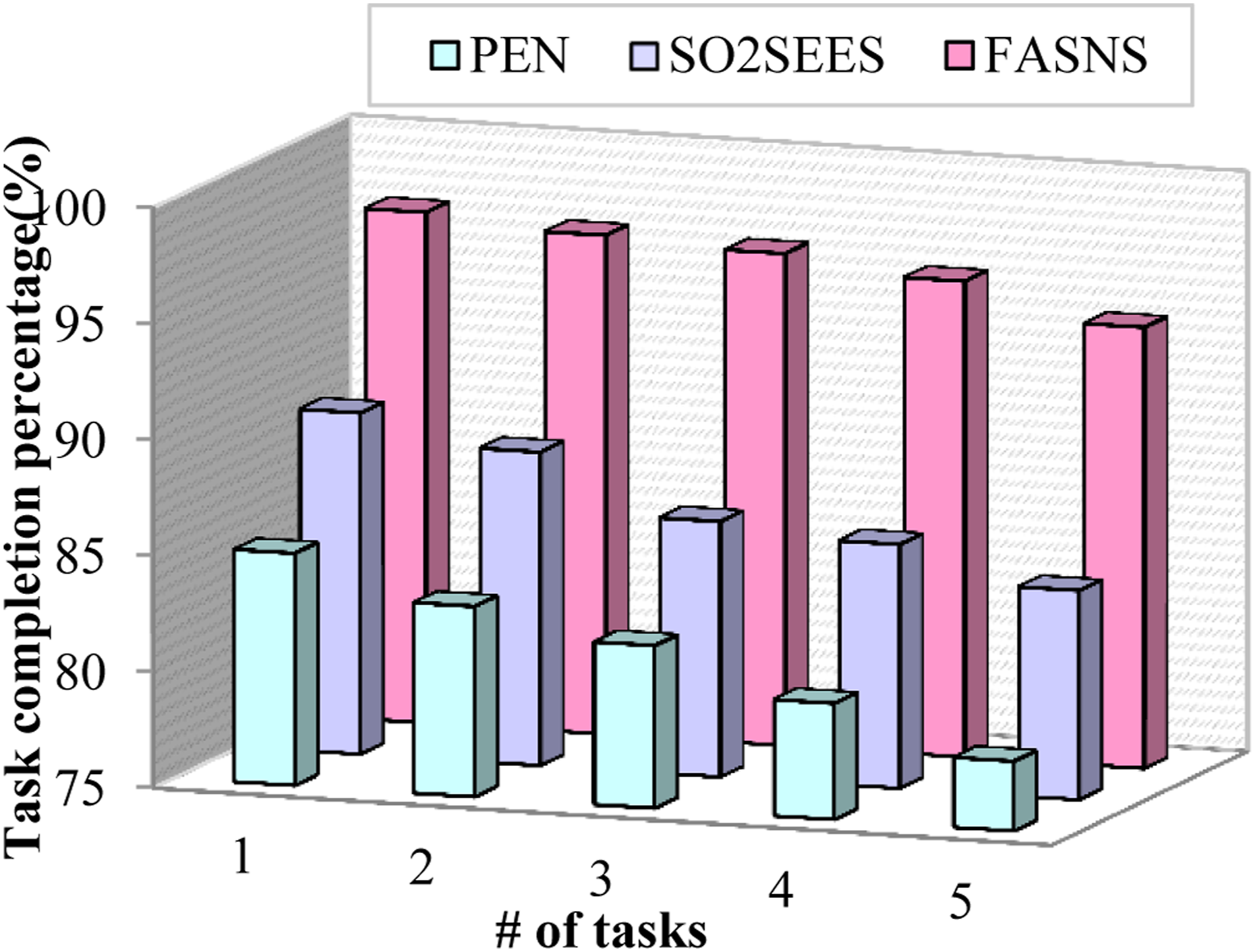

Impact of task completion percentage

The task completion percentage is the percentage of the total tasks completed within a certain amount of time. In Figure 11, the task completion percentage of the proposed FASNS model is compared with several existing works with respect to the number of tasks generated. The figure shows that the task completion percentage decreases as the number of tasks increases. The task completion percentage of the proposed FASNS model is high due to the dynamic decisions made by the fog node using the Adaptive Asynchronous Advantage Actor-Critic (A3C) algorithm, which makes decisions based on both image and sensor readings. The latency in processing the task is minimized by proper management of heterogeneous data, performed by spatio-temporal clustering. The existing research works have a lower task completion percentage due to inefficient obstacle detection and the lack of optimal fog node selection.

Figure 9. Number of tasks versus task completion time.

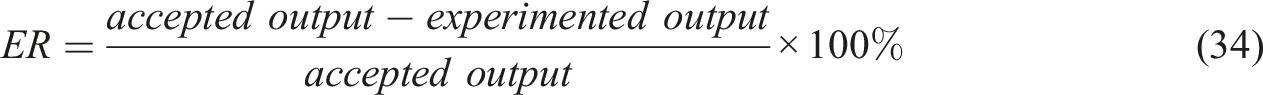

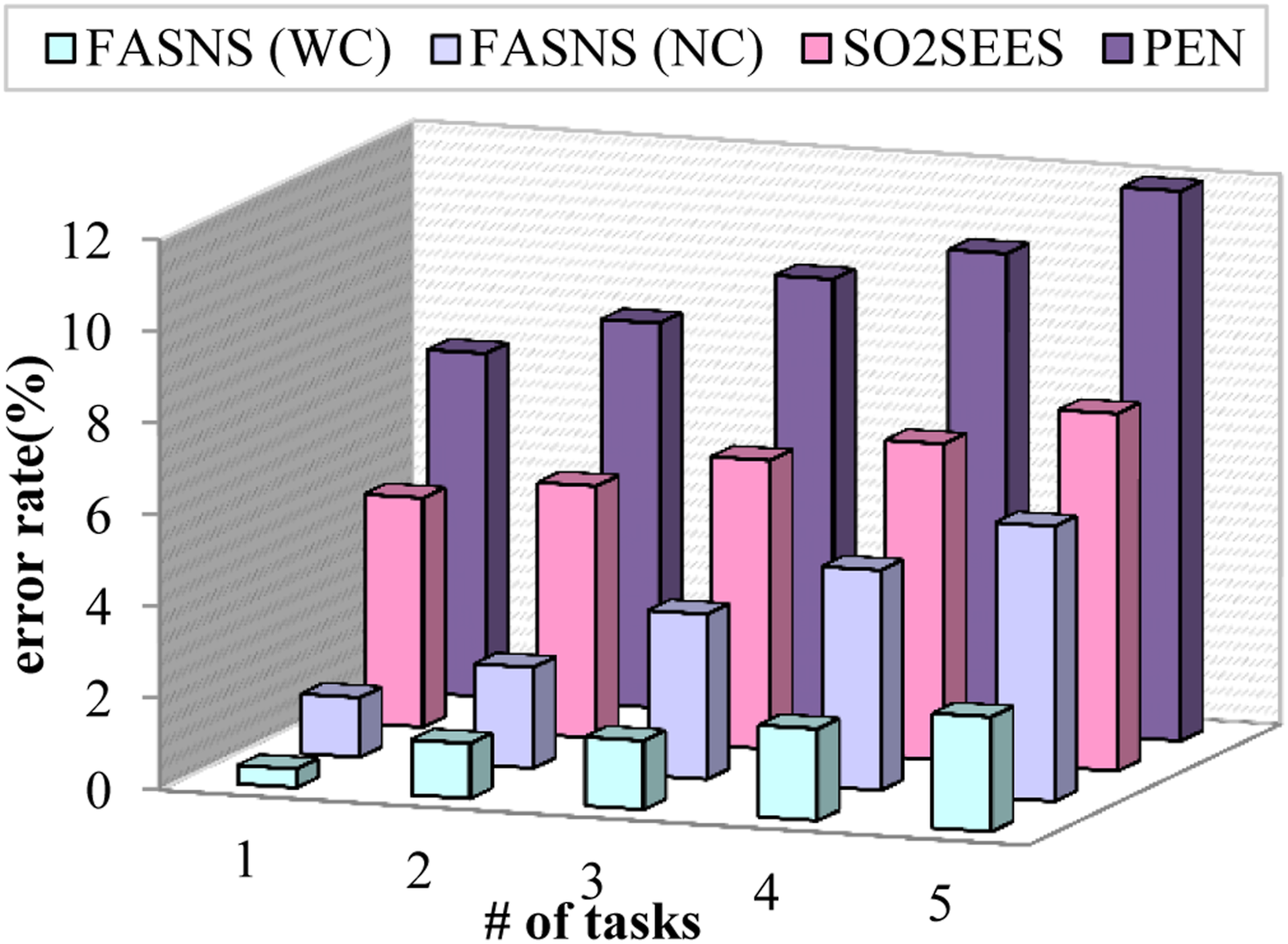

Impact of error rate

The error rate is a measure of the efficiency of the model in computing the task: the lower the error rate, the higher the efficiency of the model. The error rate can be expressed as,

Figure 12 compares the error rate with respect to the number of tasks. The figure shows that the error rate increases with the number of tasks. Two scenarios of the proposed FASNS are considered here: one with clustering and one without. The error rate of the proposed work is lower due to the detection of multiple obstacles in the route from the images by YOLOv3 Tiny and the fault detection of sensor readings by the extended Kalman filter. Further, making the decision by fusing this information increases the efficiency of the proposed FASNS model. The existing research works lack proper detection of static and dynamic obstacles in the route, which increases their error rate.

Figure 11. Number of tasks versus task completion percentage.

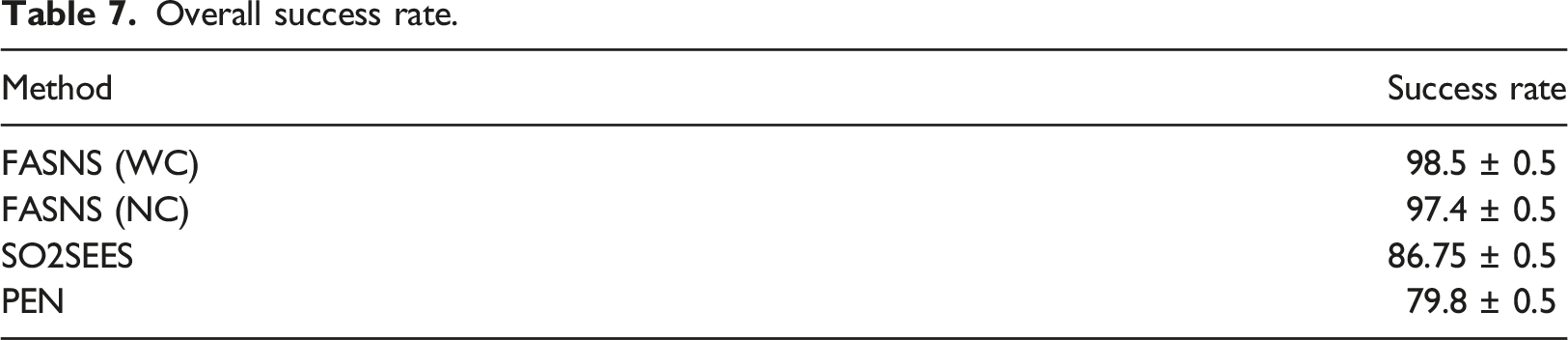

Overall success rate.

Figure 12. Number of tasks versus error rate.

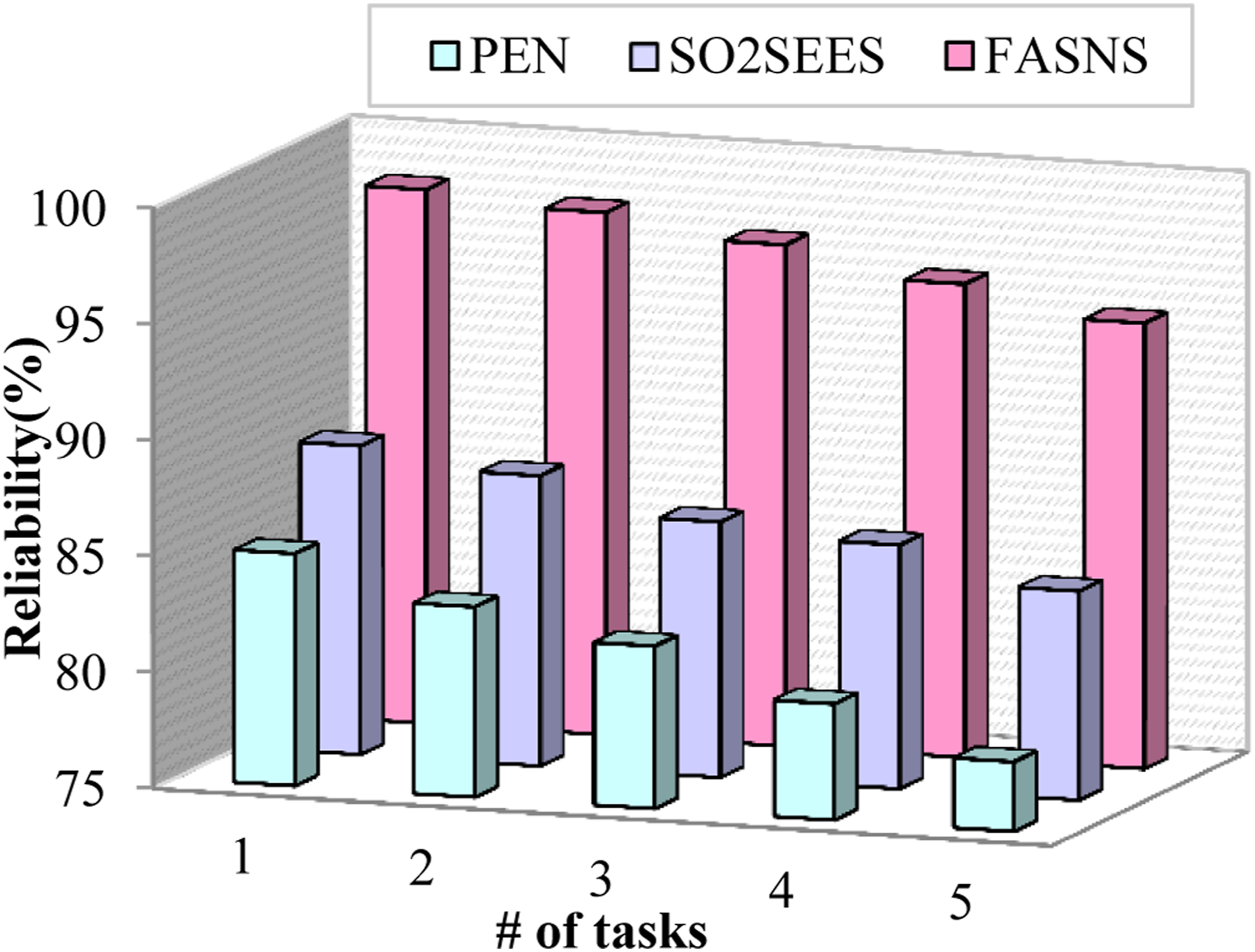

Impact of reliability

Reliability is a measure of consistency: the same success rate is achieved when the same tasks are computed a large number of times. In Figure 13, the reliability of the proposed FASNS approach is compared with several existing approaches with respect to the number of tasks. The figure shows that reliability decreases as the number of tasks increases. The reliability of the proposed work is high due to the management of heterogeneous data and the optimal decision made by the fog node. The existing approaches are less reliable because they lack proper data management.

Figure 13. Number of tasks versus reliability.

Research highlights

• The query from the visually impaired user is obtained by the smartphone, and query processing is performed to improve the input query in order to achieve greater accuracy.
• The safest route is selected by the Environment aware Bald Eagle Search Optimization, in which the location parameters from the GPS, along with other significant parameters, are used to classify the routes into unknown, safe and risky, and the safest route is suggested to the user.
• The optimal fog node is selected by the nearest grey absolute decision analysis based on multiple parameters, which increases the computational accuracy and reduces the latency of the proposed FASNS model.
• The fog node retrieves the information relevant to the tasks by calculating the Euclidean distance between the reference and database information.
• The images from the wearable camera and the UAV are processed by YOLOv3 Tiny in order to detect multiple obstacles in the route based on their size. This process contributes to increased obstacle detection accuracy.
• The navigation decision is computed in the fog node from the fusion of image and sensor feedback by the Adaptive Asynchronous Advantage Actor-Critic (A3C) algorithm. This process increases the task completion percentage of the proposed model.
• The complexity of providing navigation assistance to the users is reduced by proper management of heterogeneous data: the fault data from the ultrasound sensor is predicted and pruned before it is fed into the decision process, and similar information is clustered by spatio-temporal OPTICS clustering, which further reduces the latency.

Conclusion and future work

In this paper, a smart navigation system for visually impaired people in both indoor and outdoor environments is provided with the aid of fog computing. The query from the user is processed in order to fill in missing words, which improves the navigation accuracy. The safest route to the destination is selected by environment aware bald eagle search optimization. Tasks are computed efficiently by selecting the optimal fog node using nearest grey absolute decision analysis. The relevant information about the references is retrieved from the cloud server by calculating the Euclidean distance between the reference and database information. The multiple obstacles in the route are identified by processing the reference image with YOLOv3 Tiny, which categorizes them into small, medium and large in order to improve the obstacle detection accuracy, thereby providing precise assistance to the visually impaired users. The navigation decision is provided by fusing the image and sensor feedback, which is processed by the Adaptive Asynchronous Advantage Actor-Critic (A3C) algorithm, thereby providing accurate navigation assistance to the visually impaired users. The massive heterogeneous data is managed by predicting and pruning the fault data from the sensor and by performing spatio-temporal clustering of similar information in order to reduce the latency and complexity associated with the navigation system. The proposed FASNS is evaluated in the network simulator NS-3.26 and Java, and the topology is constructed in iFogSim. The performance of the model is validated in terms of latency, task completion time, obstacle detection accuracy, task completion percentage, error rate and reliability to show the efficiency of the proposed FASNS model.
In future work, the privacy of the visually impaired user while accessing the smart navigation system will be taken into account, and the security of the proposed fog-aided smart navigation system will be improved.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the Deanship of Scientific Research (DSR), King Abdulaziz University, Jeddah, KSA, under the grant no. G: 275-156-1440. The authors, therefore, acknowledge with thanks DSR technical and financial support.