Abstract

This study aims to capture the online experiences of young people when interacting with algorithm-mediated systems and the impact of these experiences on their well-being. We draw on qualitative (focus group) and quantitative (survey) data from a total of 260 young people to bring their opinions to the forefront while eliciting discussion. The results of the study revealed the young people’s positive as well as negative experiences of using online platforms. Benefits such as convenience, entertainment and personalised search results were identified. However, the data also reveals participants’ concerns about their privacy, safety and trust when online, which can have a significant impact on their well-being. We conclude by recommending that online platforms acknowledge and act on their responsibility to protect the privacy of their young users, recognising the significant developmental milestones that this group experiences during these early years and the impact that algorithm-mediated systems may have on them. We argue that governments need to introduce policies that require technologists and others to embed the safeguarding of users’ well-being at the core of the design of Internet products and services, to improve the user experience and psychological well-being of all users, but especially of children and young people.

Introduction

Young people have been categorised as a generation innately proficient in embracing and utilising the Internet and digital technologies. 1 They are often described as ‘Cyberkids’, 2 the ‘Internet Generation’, the ‘Net Generation’, ‘n-geners’ 3 or digital natives.4,5 In recent decades, technological, digital and online services have undergone a vast amount of change and innovation within most homes, schools, workplaces and institutions. It is estimated that a third of Internet users are minors, 6 and it is reported that 86% of 3–4-year-olds have access to a tablet, 83% of 12–15-year-olds own a smartphone and 64% of children aged 12–15 own three or more digital devices. 7 Moreover, children who use social media are estimated to post an average of 26 times per day, amounting to almost 70,000 posts by the time they reach 18 years of age. 8 The 2019 Ofcom ‘Media Nations’ report shows that, on average, 18–34-year-olds spend more time each day on YouTube and playing video games than watching live television. 9 Collectively, the mobile phones, tablets and other portable devices now used by adolescents have led to major changes in communication, information exchange and interaction amongst peers. 10 Research by Ofcom 11 suggests that 44% of parents of 12–15-year-olds find it hard to control their child’s screen time, a concern shared by an increasing proportion of young people in that age range. Moreover, the arrival of 5G mobile technology and the rise of immersive technologies (i.e. virtual reality, augmented reality and mixed reality) are expected to further increase consumer demand for online content and services.12,13 This situation is problematic because most users, especially children and young people, are not fully aware of the ubiquity of personal data collection, analysis and processing by online services. 14

The impact of algorithms on well-being

Algorithms are sequences of instructions or commands that a computer can work through to solve a task. News feeds, search engine results and product recommendations increasingly use personalisation algorithms to help users cut through the vast amount of available information. For example, when a user enters a query into a search engine, an algorithm ranks the matching results in order of predicted relevance to that user. Data, especially personal data, is the fuel that feeds these algorithms to provide a more personalised, appealing and engaging online experience. Algorithmic decision-making often lacks transparency: when using online services, users are generally given next to no information about the algorithms, the data used to feed them or the ranking strategies applied (e.g. sponsored adverts). Users are instead expected to trust the service provider blindly. This lack of transparency raises many questions. How can users know whether the information they receive really is the best match for their interests? How do users deal with the opacity of algorithmic decision-making? And, most importantly, what is the impact of algorithms on children and young people’s well-being?
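To make the ranking idea more concrete, the following is a minimal, hypothetical sketch of how a personalisation algorithm might reorder search results. It assumes that each result is tagged with topics, that a user profile is simply a count of how often the user engaged with each topic, and that a sponsored result receives a fixed hidden boost. The function name `rank_results`, its parameters and the scoring rule are illustrative assumptions, not the method of any real search engine; real services use far richer signals that are not disclosed, which is precisely the transparency problem described above.

```python
from collections import Counter


def rank_results(results, user_profile, sponsored=frozenset(), sponsor_boost=10):
    """Order search results by a toy personalised score (illustrative only).

    results: list of (title, topics) pairs matching the user's query.
    user_profile: mapping from topic to how often the user engaged with it.
    sponsored: titles that receive a hidden paid boost (hypothetical).
    """
    def score(result):
        title, topics = result
        # Personal relevance: past engagement with each of the result's topics.
        personal = sum(user_profile.get(topic, 0) for topic in topics)
        # Hypothetical paid boost, invisible to the user.
        boost = sponsor_boost if title in sponsored else 0
        return personal + boost

    # Highest score first; sorted() is stable, so ties keep their original order.
    ranked = sorted(results, key=score, reverse=True)
    return [title for title, _topics in ranked]


history = Counter({"football": 5, "music": 2})
results = [
    ("Chemistry revision guide", ["chemistry"]),
    ("Match highlights", ["football"]),
    ("New album review", ["music"]),
]

print(rank_results(results, history))
# ['Match highlights', 'New album review', 'Chemistry revision guide']
print(rank_results(results, history, sponsored={"Chemistry revision guide"}))
# ['Chemistry revision guide', 'Match highlights', 'New album review']
```

The second call shows how an undisclosed sponsorship weight can move a result to the top even though the user's history gives it no personal relevance; from the user's side, both orderings look equally "personalised".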

This paper focuses on understanding how concerns surrounding algorithms (e.g. issues around data privacy, transparency, lack of control and agency, and not understanding what is happening to personal data) can affect the well-being of children and young people. Research has demonstrated that fake news (especially when reported by high-status individuals such as politicians) can contribute to the development of cynicism, negative self-worth and mistrust. 15 A report by the Children’s Commissioner 16 identified several factors relating to the collection of children’s data by social media platforms that have the potential to affect children’s well-being. For example, the collection of such data can cause harm by revealing the user’s location, as in the case of Snapchat’s Snap Maps feature. Moreover, technology that enables parents, teachers and others to monitor children in a number of ways has led experts to be concerned that this ‘. . .normalises the act of surveillance’. 8 This has led to concerns that children will not realise how sensitive some of their data is and may become unaware of what personal data they give away, 17 which may lead to problems later in life that affect their well-being if they do not appreciate the importance of guarding their personal data. 8

Moreover, the very structure of the Internet has not been conducive to transparency for any of its users. For example, the opaqueness of online Terms and Conditions has not given children an acceptable level of awareness of how platforms function, what data is collected from users and what happens to this data after it is collected. 16 This lack of transparency may affect the well-being of children and young people by making them feel disempowered when online. The General Data Protection Regulation 18 is set to compel platforms to address this lack of transparency, so that users are better informed about how online services operate. Additionally, the Information Commissioner’s Office 19 released a consultation document that triggered an amendment to the UK Data Protection Bill introducing mandatory guidance for platforms in the form of an ‘Age Appropriate Design Code’, to ensure that online services are sufficiently transparent to children and minimise data collection for this age group.

Evaluating the impact

A large body of research has identified both positive and negative impacts of children and young people’s engagement in online interaction and digital technology.20,21 However, research addressing the impact of algorithm-mediated platforms (e.g. news feeds, search engines, personalised advertisements) on children and young people is scarce. The existing literature shows a predominant focus on the negative impacts of social media (e.g. excessive screen time and cyber-aggression) rather than on positive impacts, with no consideration of the effects of data sharing, algorithmic decision-making and related processes. This paper adds to this discussion by focussing particularly on online platforms that are mediated by algorithms, and on the effects that interacting with this technology might have on young people. This goes beyond the social considerations of interacting with other people online, to consider the mechanisms that dictate what the online world looks and feels like from young people’s perspective. It is important to place users at the centre of this inquiry to further understand how digital technologies and algorithms interconnect with the experiences of children and young people, exploring both the negative and positive impacts that may consequently affect mental health, well-being and the potential risk of psychopathology.

Positive impacts

As digital innovation and the online world advance rapidly, digital technology is no longer perceived as an optional extra for the children and young people of today. Digital technology and devices are widely used in education, as tools to study and learn, as well as for exploring interests, sharing opinions and consuming news and entertainment. Kidron and colleagues 7 argue that the rapid growth of the digital environment is both desirable and essential, but that it must equally meet the specific needs of children and young people, allowing them to access the online world creatively, knowledgeably and fearlessly.

Although, as noted above, young people spend an increasing amount of their time using digital technology and in the online world, there is evidence to suggest that this is not associated with less participation in leisure-time activities that promote positive well-being, and that communication amongst peers through this medium can increase young people’s social connectedness.22,23,24 This was also demonstrated by a longitudinal study of adolescents (n = 719), which reported a positive relationship between involvement in activities such as sports and clubs and spending a moderate amount of time online. 25 Given that there is currently little neuroscientific evidence that typical Internet use can harm young people’s brains, it has been suggested that, rather than digital technology itself influencing well-being negatively, it is the displacement of participation in ‘real-world’ activities that is the source of ill-being. 22

The value of being able to access important information through digital technology, explore individual creativity, carry out research, and develop and maintain relationships and social interaction online should not be underestimated. However, children and young people in the UK report that only 3% of their time in the online world is spent on creative activities, 26 and that less time is spent exploring informative resources, research and creative output than in previous years. 27 Online safety is a vital concern that needs to be understood and managed within the use of digital technology; the risks include cyberbullying, grooming, non-consensual sharing of sexually explicit images, harassment and abuse, fraud and traumatic material. Nevertheless, it needs to be recognised that digital technology and the online world have social, cognitive and personal benefits, which should be investigated and weighed against the risks.

Negative impacts

The UK government’s Online Harms White Paper 28 identifies the negative effects of online media, including both physical and mental harms to children and young people. It has been reported that approximately one in five 11–16-year-olds have been exposed to potentially harmful user-generated content online, including hate messages and sites displaying content about anorexia, bulimia, self-harm and drug experiences. 21 Moreover, research has shown that social media can have damaging effects on well-being and may be a risk factor for psychopathology in children, by exacerbating symptoms of anxiety, amplifying disturbances in body image and disrupting sleep. 29 An increase in time spent on social media has been associated with decreased life satisfaction and quality of life in children, which has led to disagreement about the role of social media in this cohort. 30 It has also been reported that one third of American children (aged 12–18) found it difficult to reduce device time, with half of this cohort expressing concern about their inability to disengage from digital technologies. 31 However, an estimated 95.6% of adolescents do not qualify as excessive Internet users, suggesting that the label of Internet addiction does not apply to the majority. 32 Recently, parents have been advised not to focus only on screen time, 33 but rather on the online content that children and young people consume, and to engage in offline conversations that reinforce family values and priorities. 34

Research addressing the greatest concerns of parents and educators regarding digital technology use explores the negative consequences of social media. 35 These consequences have been linked to psychopathological behaviours associated with depressive disorders, addictions (such as Internet addiction) and social isolation. 36 It has been found that bedtime use of digital technology suppresses the brain’s production of melatonin, which can double the risk of poor sleep, reducing both the quality and quantity of sleep and increasing excessive daytime sleepiness and fatigue in children and young people. 37 These findings have been challenged by a recent cohort study designed to determine the extent to which time spent with digital devices predicts meaningful variability in sleep. 38 It found only 3 to 8 fewer minutes of sleep for each hour of digital screen time, and identified social and contextual factors (e.g. affordances for outdoor activities) that could be more influential in predicting sleep hygiene. These results show the urgent need for more research designed to understand the impact of screen time, including its context and content, on children and young people’s mental health. 39

In educational settings, digital technology has been found to facilitate dangerous communication phenomena such as risky behaviour and cyberbullying among young people.40,41 Although there is thought to have been progress in mitigating the negative consequences of digital technologies through parental control features and age verification, evidence shows that children are still not adequately equipped with the skills needed to navigate the online world. 7 According to Ditch the Label’s 42 annual bullying survey, 69% of respondents admitted to having been abusive towards peers online, fuelled by the need to get noticed, and 35% reported sending screenshots of other young people’s social media posts to a group chat to amuse the group and gain validation from peers. Similarly, in a sample of 1,002 young people aged 11–18, almost 50% of girls and 40% of boys had experienced some form of online abuse or harassment. 43 Research also suggests that the negative impact of cyberstalking is comparable to that of offline stalking and can have detrimental effects on young people’s mental health and well-being. 44 Furthermore, it has been reported that students’ examination performance improves when they are banned from using digital technology, with smartphones found to exacerbate pre-existing academic inequalities. 45

Children and young people often face pressure to share opinions, personal information and photographs on an extreme scale, driven by persuasive loops of reward, duty, exchange and a need for validation. 46 It has been suggested that this susceptibility to peer pressure online puts children and adolescents’ well-being at risk. 47 So far, digital technologies have failed to adapt to children and young people’s needs and developmental milestones; for example, 10–12-year-olds are already aware of social pressures and expectations and may change aspects of themselves in order to fit in and be accepted by peers online. 18 The ‘fear of missing out’ (FoMO), often aroused by posts seen on social media, can also increase children and young people’s anxiety levels.48,49 Managing frequent public interactions places considerable pressure on children and young people, which increasingly manifests as anxiety, low self-esteem and mental health disorders.50,51 This is supported by research reporting a correlation between excessive digital technology use and elevated levels of depression.52,53 It has been suggested that this is due to the unrealistic expectations of the world promoted online, leading young people to conclude that life is not as good as it should be. 54

Regarding relationships, it has been found that the more digital technology is used, the lower the quality of social communication and interaction. 55 Within social media, given the large number of users and the need for numerical validation and popularity quantified statistically (e.g. numbers of likes and shares), the quality of communication has been found to be reduced.55,56 Furthermore, this has been found to correlate with social withdrawal, self-neglect, poor diet and family conflict.31,57

Coleman, Pothong, Pérez and Koene 58 postulate that, whilst considerable and important attention has gravitated towards introducing measures to safeguard and protect children on the Internet, ‘[. . .] this should not be allowed to overwhelm other questions about the kind of Internet that children and young people want’ (p. 9). 58 It is imperative that research also addresses the latter by consulting children and seeking their recommendations for improving their Internet experiences. The changes introduced by the GDPR 18 offer an opportunity for online service providers and others to take note of the recommendations of children and young people, and to involve them in the production of such agreements, with a view to improving the online experiences of this vulnerable group.

This study reflects on the online experiences of young people and their impact on well-being. We draw on qualitative and quantitative data from a total of 260 children and young people who took part in a ‘Youth Jury’, which is similar to a focus group in which the facilitator presents evidence or materials designed to spark discussion among participants. The aim of the juries is to promote digital literacy by eliciting discussion and by bringing young people’s opinions, concerns and recommendations to the forefront of the debate. This paper draws on quotes from a series of Youth Juries in which young people were asked to consider the benefits, advantages and positive functions of algorithms, but also to reflect on the newly established principle of ‘online harms’ by considering the potential psychosocial impact associated with the use of digital technologies and ubiquitous algorithms. We conclude with the needs and concerns articulated by young people themselves.

Method

The Youth Jury method is highly regarded as an effective way to involve young people in discussion that is youth-led and that centres on discussion and reflection. Youth Juries are similar to focus groups: participants are encouraged to share experiences, discuss and debate issues, form and change opinions, and ultimately put forward recommendations for how an issue may be solved. The method is designed to understand the thoughts, ideas and recommendations of young people about their experiences of the Internet, through facilitated group discussions around specific scenarios that are co-created with age-matched peers.58,59 Scenarios often include dilemmas and real examples presenting different perspectives or viewpoints, aiming to spark debate and reflection. The Youth Jury method and the presentation methods used to conduct juries have recently been evaluated,60,61 indicating that the methodology is appropriate for tackling challenges related to the online world by engaging young people with the problems they may encounter and encouraging them to reflect on their online behaviours.

The overall purpose of the Youth Juries was to bring the voices of young people to the forefront, by understanding how young people use the Internet and gathering a sense of their experiences when interacting with algorithm-mediated platforms. The juries elicited the views of young people by using a facilitator to stimulate discussion on different aspects of algorithmic decision-making. The content of the juries and the topics discussed were dynamic and changed in response to the knowledge and experience of the young people attending, although a common format ran throughout all sessions. Both waves of juries started with a multiple-choice questionnaire to establish basic information about jurors’ knowledge and opinions, and ended with a post-session questionnaire to establish changes in attitude, gauge learning and interest, and collect responses about the themes covered in the sessions. Focusing on youth-led discussion and debate, in the first wave the facilitator used slides to introduce the concept of algorithms and how they affect the online world, across each of three main topics. The three main topics were:

(1) personalisation through algorithms, including consideration of filter bubbles and echo chambers;

(2) autocomplete strategies, search results, and fake news, considering how search results are ranked, issues surrounding ‘gaming the system’, biases, and how users might identify fake or unreliable information; and

(3) algorithm transparency and regulation, in which jurors were asked to consider a hypothetical legal case and think about who can be held accountable when an algorithm causes a bad outcome.
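Topic (1) rests on a feedback loop: what a user engages with shapes what they are shown next, which in turn shapes what they engage with. The sketch below is a deliberately simplified simulation of that loop, assuming a hypothetical recommender that always shows the topic with the most recorded clicks and a simulated user who clicks whatever is shown. The function `simulate_feed` and its optional `explore_every` diversity rule are illustrative assumptions, not a description of any real platform or of the jury materials.

```python
from collections import Counter


def simulate_feed(initial_clicks, topics, rounds, explore_every=None):
    """Simulate a naive engagement-maximising recommender (illustrative only).

    Each round recommends one topic; the simulated user always clicks it,
    so every recommendation feeds back into the next one.
    """
    clicks = Counter(initial_clicks)
    shown = []
    for i in range(1, rounds + 1):
        if explore_every and i % explore_every == 0:
            # Hypothetical diversity rule: periodically surface the least-clicked topic.
            pick = min(topics, key=lambda t: clicks[t])
        else:
            # Exploit past engagement; max() returns the first topic on a tie.
            pick = max(topics, key=lambda t: clicks[t])
        shown.append(pick)
        clicks[pick] += 1  # the click reinforces the chosen topic
    return shown


topics = ["music", "football", "chemistry"]
print(simulate_feed(["chemistry"], topics, rounds=5))
# ['chemistry', 'chemistry', 'chemistry', 'chemistry', 'chemistry']
print(simulate_feed(["chemistry"], topics, rounds=5, explore_every=2))
# ['chemistry', 'music', 'chemistry', 'football', 'chemistry']
```

In this toy model a single initial click is enough to lock the feed into one topic, the kind of narrowing associated with filter bubbles; the periodic exploration step keeps other topics in view while still letting the dominant topic reappear.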

Each topic was introduced with a couple of examples, and the jurors were encouraged to share their opinions and experiences of the issues. They were then asked how they would like to see things change or how they would like specific issues to be tackled. The second wave of juries was much more interactive but covered the same themes as the first wave, using activities that were co-created with a group of young people who had taken part in the first wave. A detailed outline of the method, as well as open educational resources, can be found online.I The discussion topics were refined into the following areas: online personalisation, including filter bubbles and echo chambers; the regulation of algorithms, looking at what should be done, and by whom, when an issue arises or something goes wrong; and algorithm transparency, discussing what information about how algorithms work would be meaningful and useful to participants.

The study was approved by the Ethics Review Board for the Department of Computer Science at the University of xx. All of the Youth Juries were audio recorded with the permission of participants, transcribed by a University-approved, GDPR-compliant external company, and then thematically analysed. A single researcher used a qualitative approach (i.e. thematic analysis) to code all of the transcripts in NVivo and then grouped the codes into themes. Three other members of the research team independently coded a random selection of the transcripts. The results were then reviewed collectively by the team so that any discrepancies could be discussed and reconciled; the coding was found to be consistent across all coders. Written recommendations and notes were also added to the qualitative dataset and coded in the same way. Key themes were then identified from this analysis. Questionnaires were analysed using SPSS, focusing predominantly on descriptive comparisons and summary data.

Participants were recruited from secondary schools and youth groups in the geographical area of xx. Recruitment packages were sent to head teachers, IT teachers and youth group coordinators. The study was also advertised via xx University’s outreach group. Youth Juries took place on xx University premises if teachers were able to organise a visit to our campus, or on school premises as part of IT sessions. Young people recruited via youth organisations were offered the same two options. Travel expenses and lunch were provided for groups that came to campus. All participants were compensated for their contribution and time with a £10 gift voucher.

This paper draws on data from the xx project’s first and second waves of Youth Juries, which took place in February 2017 and February/March 2018. The main objective of the xx project was to promote digital literacy among children and young people with regard to the use of algorithms. To this end, the xx research team engaged young people in the co-development of educational materials and resources to support their understanding of online environments, while also providing evidence to UK Parliament inquiries62,63 and policy briefings.64,65

Results and discussion

A total of twenty-five two-hour juries were conducted, involving 260 young people aged between 12 and 23. The study was advertised to secondary schools and to several regional youth organisations representing ethnic minorities to ensure that our sample was representative and inclusive. The definition of ‘young person’ followed the traditional age bandings used in the United Nations General Assembly, 66 Unicef 67 and World Health Organisation definitions 68: adolescents (10–19 years), young people (10–24 years), young adults (20–24 years) and youth (15–24 years). The rationale for this selection strategy was to include all students from secondary schools while avoiding the exclusion of young adults recruited from youth organisations not linked to mainstream education.

Note that while the age range of jury participants recruited via schools was narrow (e.g. a Year 9 class included only 14- and 15-year-olds), the age range of participants recruited via youth organisations was wider, as these groups were not linked to specific school years. During feasibility testing, it was noted that familiarity (i.e. participants knowing each other) and facilitator expertise, rather than age, were the two key factors for engagement and participation. Wave 1 juries were facilitated by researcher EPV and co-facilitated by LD, who later facilitated wave 2 with HC as co-facilitator.

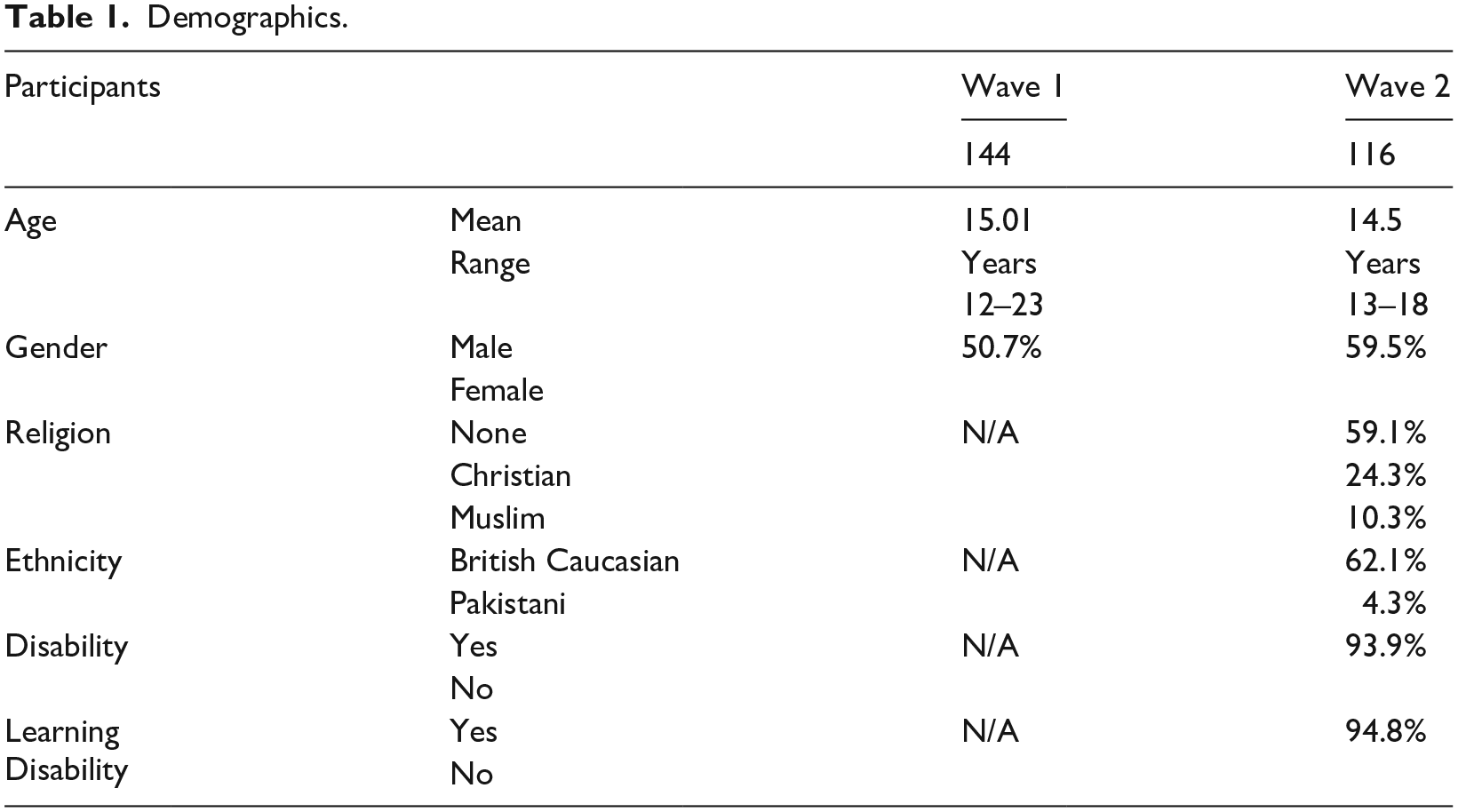

Wave 1 consisted of 14 juries with 144 young people aged 12–23 (only 4 were aged 19 or over), and wave 2 consisted of 11 juries with 116 young people aged 13–18. Overall, the mean age of participants was 15 years, and 56.2% were male. See Table 1 for demographic characteristics.

Table 1. Demographics.

Despite, as previously stated, many scholars claim that young people are innately proficient in utilising the Internet and digital technologies,

1

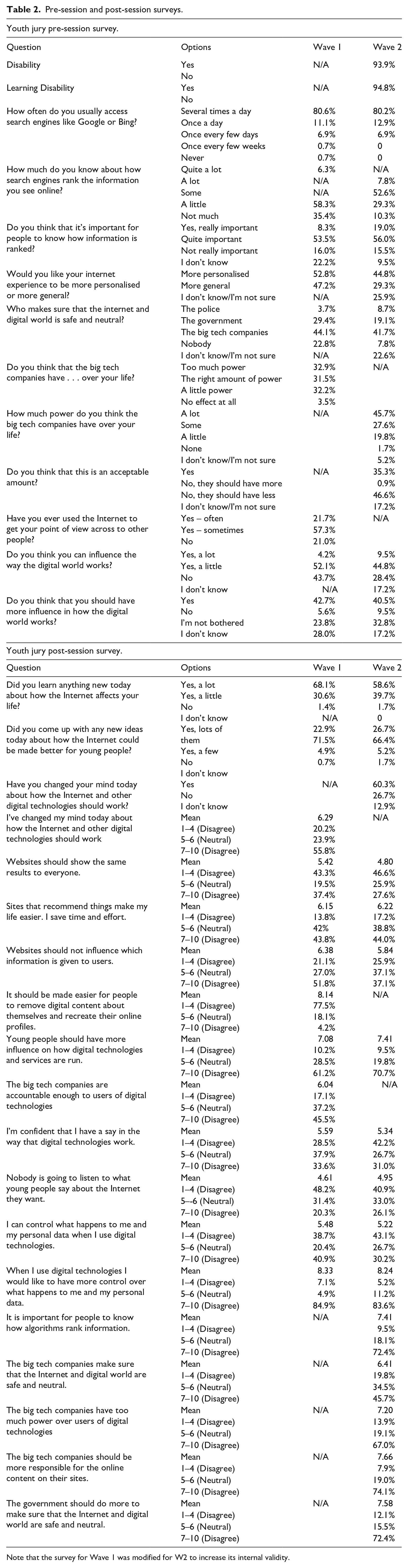

results of the pre-session questionnaire (see Table 2) across both waves of juries show that nearly half felt they only knew a little about how such services rank the information they see online (45.4%), and a quarter knew not much or nothing at all (24.2%). They did however feel that this knowledge was important (67.7%, including 13.1% who thought it was really important). In the post-session questionnaire in both waves, most indicated that they had learnt something about how the Internet affects their lives (95.8%, including 24.6% who learnt a lot), and came up with ideas about how the Internet could be made better for young people (93.8%, including 24.6% who came up with a lot of ideas). More than half also changed their minds about how the Internet and other digital technologies should work (57.9%). Importantly, 65.6% agreed with the statement that ‘young people should have more influence on how digital technologies and services are run’. This was also explored within the Youth Jury discussions, where they agreed that the Internet and digital technology use is increasingly more common amongst younger children: ‘People should listen more to younger generations as it is our generation that is more tech based, meaning we should have a say’(W2, YJ10)

Table 2. Pre-session and post-session surveys.

Note that the Wave 1 survey was modified for Wave 2 to increase its internal validity.

The following sections highlight jurors’ experiences of using digital technology, social media and the online world, exploring the impact on their privacy, trust and feelings of disempowerment, as well as the benefits they experienced.

Privacy, safety and distrust

As previously mentioned, children and young people growing up in the online world often feel peer pressure to use social media frequently, and fear social exclusion if they do not. 46 It is therefore important to recognise the implications for their privacy and how this affects their well-being. The following discussion highlights the various ways in which jurors tried to ensure that their need for privacy online was met, while also exploring how the online world governs young people’s privacy.

Many of the young people expressed serious concerns about their privacy in the online world. The following discussion captures these concerns:

Facilitator (F): And that was very well known and it was quite shocking. So when you think about all the positives of recommendations, but you get an extreme like that, how do you feel, how would you feel about it? What about you?

Young Person 1 (YP1): I think I’d feel like it was an invasion of privacy.

Yeah the same.

I was just thinking the same as well.

So everyone agrees on personalisation algorithms can get a bit into, too much into your private day, private life.

Yeah, I think it might be better if say she might have wanted those recommendations, but just not at that time. So I you had the ability to choose whether you can have these recommendations on different sites. So not like you’d say oh I want recommendations and it gives you then for every site. But say if you wanted it for Spotify but not on Facebook or not on like a supermarket, and what you want them for and stuff, that would really help.

That’s a good point, because it’s good and it’s very useful and I just gave another positive example of it as well. But I completely agree with that as well. Being able to have a more controlled web where you would like to have personalised algorithms, or in which other platforms you wouldn’t like to. Anyone else would like to add something?

I think personalised algorithms can get quite annoying as well. So if I watch a YouTube video on chemistry then for the next month’s it’ll always recommend me chemistry when it’s just like I watched that one video.

One juror stated: ‘I know this sound a bit extreme, but they’re like taking away your human rights’. (W1, YJ10). Another had gone as far as covering the cameras on their devices to prevent being monitored: ‘You know the facial recognition actually put me off buying any of those phones because, um, I’m the sort of person who keeps blue tac on my, um, camera and my laptop and all that sort of stuff. Because I really don’t like the idea of someone hacking into my camera and seeing where I am and looking at me while I’m doing things’. (W2, YJ5).

Stalking, both online and offline, can have detrimental effects on young people’s mental health and well-being. 44

Related to this, the jurors revealed serious concerns that, due to the algorithms and monitoring used on the Internet and social media ‘this is essentially stalking’ (W2, YJ7). This was further explored by jurors, in response to a screenshot showing the results of a computer scan for spyware: ‘It is kind of frightening, because then you think that it’s not, when you’re on the Internet you mostly think it’s just you looking at that page, it’s just you, broadband and then the internet, but the idea of this, this has detected 985 [tracking cookies] makes you think maybe people are maybe, well, basically stalking you or something, I don’t know’. (W1, YJ13).

Jurors described a number of strategies they used to control their privacy online and to manage the privacy settings offered by platforms: ‘I’m on, like, every social media where I have, I always have it put on private, so I have, if, if someone wants to follow me, I have to follow them’. (W2, YJ1) and ‘Also, on Snapchat, um, although you can’t put your account on private, my username is so obscure, no-one would ever type it in’. (W2, YJ1). One juror avoided platforms without privacy settings altogether, despite the risk of becoming socially excluded: ‘Anything that you can’t be private on, I just don’t have’. (W2, YJ1). This, however, led other jurors to express feelings of ‘missing out’. Not all children and young people exhibited concern or anxiety about their privacy; some stated that they were not overly bothered by it: ‘I wouldn’t be too scared if, like you came up to me and said oh yeah I saw you on Snapchat or something else. I’ll be like, okay’ (W2, YJ5), believing there was no cause for concern because they were not famous: ‘I haven’t really done anything, so nobody’s really going to, you know, go look out and want to know where I live’. (W2, YJ5). One juror said they felt safe online on the basis that, as long as one’s online behaviour is sensible and legal, one can remain safe: ‘I generally, like, search to be anonymous and not to be recorded but, at the end of the day, if you don’t do anything ridiculously awful on the Internet then, you’ve got nothing to worry about. If you search at normal things, asking random questions, being curious, having a little look on your Facebook and everything like that they will know who you are but, unless you’ve done something awful then, I don’t think you should care if they know who you are’. (W1, YJ2).

The young people showed a strong awareness of online safety issues, particularly where children’s use of social media is concerned: ‘But the problem of younger kids signing up to stuff as well, that becomes a problem because it’s easy to lie about your age, all you have to do is put a different year and suddenly you’re five years older, systems like that don’t work’. (W1, YJ6).

However, other jurors were of the opinion that children and young people have ‘got to be exposed to everything eventually’ (W2, YJ6) and that ‘they shouldn’t be given a safe space’ (W2, YJ6), but that adults should rather ‘throw them in the deep end’ (W2, YJ6). One juror put it this way: ‘If you are given a safe space throughout your whole childhood, then as soon as you go outside of that safe space, you’ll crumble’. (W2, YJ6).

Many of the young people recognised that their concerns about the privacy and safety of social media and the online world had left them deeply mistrustful of digital technology: ‘Computers shouldn’t be trusted as much’. (W1, YJ11). Jurors often said that they felt ‘deceived’ and that ‘people are lying’ (W2, YJ10). However, the perceived reliability of a website, or its verification status, strongly influenced how far they trusted the information posted on it: ‘people tend to trust it more because it’s more familiar’ (W1, YJ3) and ‘I’d trust a popular newspaper more than what someone had wrote online’. (W1, YJ11).

Many of the Youth Juries raised concerns about fake news, which can contribute to the development of cynicism, negative self-worth and mistrust. 15

Some jurors went as far as to describe this as an act of terrorism: ‘It’s basically like an act of terrorism, a small act of terrorism’. (W1, YJ8). Another juror, for example, said: ‘I think the media’s a really scary place, especially with people like Donald Trump running America. That’s ridiculous, because it clearly shows that Google don’t really care about morals, they have none. So if Donald Trump wants to, and he’s got the money to because he was a billionaire before he was the President, so now he’s even richer. And he’s got even more power, so it’s Google basically saying, all those other Safari etc, well if you’ve got enough money then we can publicise whatever you want us to. So now we can say actually no, we’re not banning Muslims from the country, this is not the case. Or no this didn’t happen, Donald Trump is the most amazing person in the world, he’s like the best President. I think it’s just wrong because there needs to be some moral code’. (W1, YJ5).

Such responses point to users’ concerns that platforms are not governed by an ethical framework that moderates content fairly. They also highlight users’ lack of confidence that platforms will prioritise the ethical moderation of content over the opinions of those in positions of power. There was much debate around privacy, safety and distrust, and in general the discussions revealed a sense of disempowerment and lack of agency.

Disempowerment

Our investigation suggests that children and young people’s perceptions of social exclusion and disempowerment, triggered by their experiences online, affect their mental health and well-being. Jurors expressed serious concerns about the impact of the algorithms used in social media and the online world: ‘It’s a bit disturbing. . . It kicks in that they know, they can know everything about you. It’s a bit worrying’ (W2, YJ1) and how ‘there is a fine line where it’s like creepy and not creepy’. (W2, YJ5). Some jurors were concerned about being watched or monitored: ‘Obviously, it’s not really disturbing, but it’s disturbing at the same time because in a way you’re being watched’ (W2, YJ2) and ‘I feel like it’s just basically like monitoring your life’. (W2, YJ4). Some jurors even raised the possibility that the government could invade their privacy: ‘Don’t you think this is how easy it would be for someone. . .for the government or someone to just find you’. (W2, YJ5).

The Youth Juries expressed fear and anxiety when talking about the online world and digital technology: ‘I feel in danger’, ‘Disturbing, in danger’. (W2, YJ2). This is consistent with the research cited earlier, which suggests that social media can be a risk factor for psychopathology in children by exacerbating symptoms of anxiety. 29 However, other jurors explored the positive side of social media and the online world, suggesting that the same algorithms could help you if you were in danger: ‘I think like it’s weird when you know about it but then if you didn’t know about it, if you were in danger or something and I don’t know, maybe you went missing or something, they could do something like that. Because usually at this place at this time’. (W2, YJ2).

In the pre-session questionnaire, 55.4% of jurors felt they could influence the way the digital world works at least a little, while 41.7% wanted to be able to exert more influence. This was echoed in the post-session questionnaire, where 40.7% disagreed that they could control what happens to them and their personal data when they use digital technologies, and 84.3% agreed that they would like to have more control. More positively, 44.8% disagreed that nobody would listen to young people about the Internet they want (a further 32.1% were neutral).

Some jurors reported that social media and digital technology affect their personal relationships through peer pressure: ‘People take things so seriously, like if you don’t like someone’s photo it’s like oh, you’re not my friends anymore’. (W2, YJ5). This supports research suggesting that the quality of social interaction declines the more digital technology is used, and that the need for validation measured in ‘likes’ and shares undermines quality communication.46,55,56 Another juror referred to the judgement that young people are now subjected to when posting online: ‘. . .And so a lot of teenagers have that problem nowadays that you can post anything and you think it’s a really nice picture. But people will find things to be mean about’. (W1, YJ10).

Convenience, entertainment and personalisation

In contrast to the concerns expressed in the Juries, the young people also reported several benefits. Previous research indicates that digital technology is desirable and essential, and that it should meet the needs of children and young people. 7

Most jurors said how much they valued the ‘freedom’ they had online: ‘Like the fact that you’ve got the freedom to be able to watch and do what you want’ (W1, YJ10). Jurors also cited accessibility and convenience as benefits, for example ‘it’s quicker’ (W1, YJ12) and ‘the Internet is more efficient’. (W2, YJ2, written recommendation). In the post-session questionnaire, 43.9% of jurors agreed that sites that recommend things make their lives easier, saving them time and effort (a further 40.7% responded neutrally). One juror mentioned the convenience of contacting long-distance friends: ‘Generally, it’s quite good because I’ve got a lot of long-distance friends and family, so it’s really nice to be able to talk to them really quickly so you don’t have to send a letter, because that would take forever’ (W1, YJ10).

However, not all jurors thought that this level of accessibility and convenience was beneficial; one juror said that it ‘makes people lazy’. (W2, YJ12).

Many jurors also described entertainment as one of the benefits of digital technology and social media: ‘I like it because it’s quite entertaining’ and ‘I like the entertainment, various things like that’. In particular, one juror described using a search engine that focuses on helping the environment, which appeared to have a positive effect on their well-being: ‘I use Ecosia, which is, I don’t know whether this is true or not but it makes you feel good. It’s obviously when you search it tracks how many, it says it plants trees for every time you search. Because they say that searching on Google has the emissions equivalent of making a cheeseburger at McDonald’s. So this is like giving something back. I mean I don’t know how true any of that is but like I said it makes me feel better. And you still find the same amount of stuff’. (W1, YJ10).

Furthermore, one of the benefits of the Internet most commonly reported by the jurors was the personalisation of the online world. Many jurors described the personalisation of social media, digital technology and the online world as ‘convenient’ (W1, YJ1 and YJ12). The Youth Juries particularly valued being able to use digital technology that is ‘tailored to our use’ (W2, YJ8) because ‘it shows my interests’ (W1, YJ13). In the pre-session questionnaire, nearly half of the jurors (49.2%) wanted their Internet experiences to be more personalised; in the post-session questionnaire, 44.7% disagreed that websites should show the same results to everyone (32.9% agreed). However, 45.1% also felt that websites should not influence what information is given to users (31.6% were neutral), suggesting that jurors wanted to control personalisation and the content they saw themselves, rather than leave that choice to the websites. One juror also suggested that personalisation may reduce exposure to traumatic experiences: ‘You hear stories about the “dark web” etc. Through personalisation you could argue that we are exposed less to this’ (W2, YJ12).

One juror went as far as to say that personalisation is the reason people stay committed to digital technology and the online world, and find it hard to disengage: ‘I like how it’s personalised and I think it’s worked obviously because we’re all still hooked on social media’ (W1, YJ7).

Conclusion

This paper puts forward the opinions and experiences of young people to explore and reveal their feelings of disempowerment, lack of privacy and mistrust. The study applies the Youth Juries methodology to capture the online experiences of young people when interacting with algorithm mediated systems and the impact of those systems on their well-being. Some young people expressed serious concern about their privacy in the online world, specifically because of the lack of transparency about how algorithms operate and the monitoring used on the Internet, which they likened to ‘stalking’. Young people also reported fake news as a negative aspect of their online experience, one that may contribute to the development of cynicism. Whilst adults may have similar experiences of privacy online, this paper argues that, given the dramatic extent of online data collection, it has never been more important to ensure that children’s privacy protections are robust and enforceable. We have also argued that young people should play an instrumental role in shaping the digital world. It is vital that solutions are put forward that promote and cultivate digital literacy, agency and autonomy amongst young people.

In the UK, regulators increasingly acknowledge that children require additional protection for their data, even when consent to process it has been given in general terms. As digital technology becomes ever more embedded in children’s lives, parents must be supported by a regulatory regime in which companies share responsibility for protecting the privacy of their child users. The UK’s Age Appropriate Design Code, set to become law in the next few months, is one example of this more principle-based approach to children’s data protection, and it is consistent with a consensus that is emerging around the world.
We also recommend that the UK’s Age Code extend its protections to all minors, not just under-13s, challenging the status quo in which millions of young people aged 13–17 years receive almost no specific data protection during some of the most vulnerable years of their lives.

It is important to involve children and young people in monitoring the impact that digital technology and the online world have on mental health and well-being. This paper has presented some of the jurors’ concerns and issues to highlight the negative and positive impacts they experienced. Both industry and government must recognise the significance of the thoughts and opinions expressed by children and young people, who, despite making up a large share of the online population, are often overlooked. Both must take responsibility for adapting digital technology to protect, empower and build trust among children and young people, in order to better support their well-being and mental health as they grow up in a predominantly digital world.

The results from the juries support policy recommendations including the need for government interventions to improve digital well-being, such as encouraging digital businesses to design their products and services to increase well-being and to empower users to manage their digital lives. This paper also highlights the need to prioritise the strictest enforcement of data protection law, in particular the UK Data Protection Act 69 ‘Age appropriate design’ provisions, for services that target or are accessed by children, including a requirement that such services apply child-protection settings by default until users take explicit action to opt out.

Key messages

Young people are concerned about their online privacy, safety and trust, which can have a significant impact on their well-being.

Algorithms are often designed to provide a more personal and engaging user experience; as a consequence, more of users’ personal data is captured for commercial purposes. The ubiquitous persuasive design embedded in technologies aims to maximise the capture of personal data. This is an unethical commercial practice that does not comply with data protection regulations.

Government, industry chiefs and technology designers should incorporate policies that minimise the capture of personal data (e.g. GDPR data minimisation) and maximise privacy-by-default features (e.g. the ICO Age Appropriate Design Code).

Practitioners should consider the differences between pathological Internet addiction and excessive digital screen use. While addiction is robustly associated with important physiological, cognitive and emotional clinical outcomes, excessive digital screen use is linked to users’ inability to disengage at will because of persuasive design features (e.g. notifications, autoplay by default, alerts).

Limitations

While the number of participants is large for a qualitative approach, the geographic representation may be limited. Future research should consider including young people living in Scotland and Wales, as well as in rural areas.

Our recruitment strategy targeted young people from BAME communities but we did not collect data on gender diversity or approach groups from the Lesbian, Gay, Bisexual, Transgender, Queer, Intersex, Asexual (LGBTQIA) communities. To ensure representativeness and inclusion, future research should monitor gender diversity and proactively engage with underrepresented groups.

Another limitation was the setting. While some youth juries took place at The University of XX, others took place in secondary schools during school hours as part of the students’ Information and Communication Technology (ICT) lessons. We acknowledge that these two settings are very different and that participants could behave and respond differently depending on the context. The results did not show any differences (e.g. in discussion topics or participation levels), but future research should be mindful of the differences that can emerge between contexts. We recommend facilitating a couple of ice-breaking exercises to ensure that participants feel safe and that any power dynamics are neutralised.

Footnotes

Acknowledgements

Dr Elvira Perez Vallejos also acknowledges the financial support of the NIHR Nottingham Biomedical Research Centre and UK Research and Innovation (UKRI). UKRI does not necessarily endorse the views expressed by the author.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by EPSRC grant ‘UnBias: Emancipating users against algorithmic biases for a trusted digital economy’ (EP/N02785X/1) and EPSRC grant ReEnTrust: Rebuilding and Enhancing Trust in Algorithms (EP/R033633/1).