Abstract

Background:

The integration of artificial intelligence (AI) into our digital healthcare system is seen as a significant strategy to contain Australia’s rising healthcare costs, support clinical decision making, manage chronic disease burden and support our ageing population. With the increasing roll-out of ‘digital hospitals’, electronic medical records, new data capture and analysis technologies, as well as a digitally enabled health consumer, the Australian healthcare workforce is required to become digitally literate to manage the significant changes in the healthcare landscape. To ensure that new innovations such as AI are inclusive of clinicians, an understanding of how the technology will impact the healthcare professions is imperative.

Method:

In order to explore the complex phenomenon of healthcare professionals’ understanding and experiences of AI use in the delivery of healthcare, an integrative review inclusive of quantitative and qualitative studies was undertaken in June 2018.

Results:

One study met all inclusion criteria. This study was an observational study that used a questionnaire to measure healthcare professionals’ intrinsic motivation in adoption behaviour when using an artificially intelligent medical diagnosis support system (AIMDSS).

Discussion:

The study found that healthcare professionals were less likely to use AI in the delivery of healthcare if they did not trust the technology or understand how it was used to improve patient outcomes or the delivery of care, a finding that appears specific to the healthcare setting. A perception that AI would replace them in the healthcare setting was not evident. This may be because AI is not yet at the forefront of technology use in the healthcare setting. More research is needed to examine the experiences and perceptions of healthcare professionals using AI in the delivery of healthcare.

Introduction

The integration of artificial intelligence (AI) into our healthcare system is seen as a significant strategy to contain Australia’s rising healthcare costs, support clinical decision making, manage chronic disease burden and support our ageing population.1,2 With the increasing roll-out of ‘digital hospitals’, electronic medical records, new data capture and analysis technologies, as well as a digitally enabled health consumer, the Australian healthcare workforce is required to become digitally literate to manage the significant changes in the healthcare landscape.3–6 Along with these innovations comes an increase in the amount of data that is available for clinical decision making. Machine learning (ML) and AI technologies are increasingly being deployed in healthcare settings to process, interpret, refine and act on the vast volume of data that is now available. AI promises benefits to the healthcare industry, but it also poses challenges in this new dimension of healthcare, and its impact on health professionals, organisations and governments is not yet known.1,2 Internationally, AI is being built into the foundations of society: at least 19 countries, including Australia, have published national strategies on AI and aim to become global leaders in its application and use. The strategies collectively focus on the development of infrastructure, research excellence, collaborative opportunities, education, recruitment and economic, ethical and legal policy development.7–24 A National Digital Health Strategy was created by the Australian government in 2016 in an effort to produce an efficient healthcare service and reduce healthcare expenditure. 25 The strategy has enabled the widespread implementation of digital technology to update clinical and data governance systems, medication management and communication within health districts.
It promises collaboration between clinicians, researchers and industry to develop and integrate new innovations such as AI by 2022, thereby building on Australia’s existing leadership in digital healthcare. 25 One of its priorities is to have the ‘healthcare workforce confidently using digital health technologies to deliver health and care’. 25 To achieve this, we must first establish healthcare professionals’ understanding and experience of AI and the impact this technology will have on their ability to deliver care. The scope and versatility of AI, when introduced to the healthcare setting, could redefine the role of healthcare professionals and their underlying beliefs, values and assumptions in the provision of healthcare. 26 Akin to the National Digital Health Strategy, the Commonwealth Scientific and Industrial Research Organisation (CSIRO), 4 the Australian Government’s research agency, is developing a range of innovations that target areas of need within the healthcare system, such as waiting times and access to services, and predicting at-risk patients to coordinate care. CSIRO’s Digital Impact Strategy for the workforce identifies a growing need for knowledge, education, training and information for the healthcare sector.4,27 It is not known to what degree healthcare professionals are currently engaged with AI, or what their experiences and perceptions of its use in the provision of healthcare are. To ensure that innovations such as AI are inclusive of clinicians, an understanding of how the technology will impact the healthcare professions is imperative.

Background

AI has been around since the 1950s, when Alan Turing first conceptualised ‘computer intelligence’ and the possibility that a machine could essentially ‘learn’ from its own experiences and alter its own instructions to solve problems.28,29 Turing predicted that by the turn of the century, it would be possible to programme computers that are indistinguishable from human beings.29,30 Countries are now racing to advance their position and become the leading force in the development of AI.31–33 Many are investing significant amounts of money in AI research and development, with over US$20 billion invested by the United States, China and the United Kingdom alone in 2016.23,24

AI is not a single piece of technology, but rather a term used to describe a large and growing range of functions, categories, terminologies and types of computer technology. 34 It can be general or narrow in definition depending on its performance and use of data,23,34 and because of this, a widely accepted definition of AI in health has not yet been established. Several forms of AI are well represented and, therefore, better known, such as deep learning (DL), ML and natural language processing (NLP). 34 Russell and Norvig 34 explain that AI has been associated with functions such as planning, perception, reasoning, learning, decision making, communicating, analysing, problem solving and autonomy. There are neurally inspired models that mimic the function of the brain, 35 as well as ML and other AI models that rely on statistics and mathematical algorithms. 36 AI applications are expanding and can now be found in robotics, predictive analytics, image recognition, planning and navigation, knowledge representation and NLP. 37 A conjecture commonly shared across AI research is that ‘every aspect of learning or any other feature of intelligence can, in principle, be so precisely described that a machine can be made to simulate it’. 38

In the 21st century, the potential of AI in healthcare lies in analysing unstructured data, detecting abnormalities, identifying correlations and automating and assisting human tasks. These are achieved through the use of NLP algorithms, DL and ML programmes.39–42 NLP algorithms search for key phrases and/or terms in raw electronic medical record data and offer support in decision making. 42 For example, recommendations for additional imaging have been made following computer analysis of a radiology report. 43 Fernandez-Luque and Imran 44 described AI programmes with the ability to identify major disease outbreaks, some triggered by natural disasters, suggesting their application to humanitarian crises. AI has been applied to intensive care unit (ICU) clinical data and found to be of exceptional value in identifying clinical cases of nosocomial infection in an automated manner. 45 DL is a modern form of neural network in which multiple layers of simple processing units, each applying a non-linear transformation, can discover complex non-linear patterns in clinical data to aid in the detection of subtle abnormalities and variations. 46 Garcia-Chimeno and Garcia-Zapirain 47 concluded that neural networks are capable of conducting accurate pathology classifications. Orjuela-Cañón et al. 48 demonstrated AI’s ability to detect tuberculosis; however, they concluded that more data were needed to obtain a consistent result and that further research in this area is required. In a study investigating emotional response to music in patients with traumatic brain injury (TBI), a data mining algorithm was found to be capable of clustering and classifying reported emotional responses to music as positive or negative, and the application of this treatment to unconscious TBI patients is conceivable in the future. 49 ML programmes are able to use analytical algorithms to extract features from data, make correlations and offer predictive modelling to the clinician or the patient.41,50–52 E-Health mobile applications that incorporate AI are being used in primary healthcare and in mental health; the technology is easy to implement and improves the quality of treatment decisions.53–55 According to Bennett and Hauser, 56 even in its early stages of development, AI technology can easily outperform the current treatment-as-usual case-rate/fee-for-service models of healthcare. Kavakiotis et al. 57 examined the applications of ML and data mining techniques and tools in diabetes research; their work demonstrated enhanced classification accuracy and supported the argument for further research in this field.
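To make the keyword-based approach concrete, the following is a minimal Python sketch, under stated assumptions, of how a simple NLP rule might flag free-text radiology reports that recommend further imaging. The trigger phrases, the negation check and the function name `flag_follow_up` are hypothetical and are not taken from the studies cited above; clinical systems typically rely on validated vocabularies and more robust negation handling.

```python
import re

# Hypothetical trigger phrases suggesting a recommendation for further imaging.
# A real system would use a curated, clinically validated vocabulary.
FOLLOW_UP_PATTERNS = [
    r"\bfollow[- ]up (?:ct|mri|ultrasound|imaging)\b",
    r"\brecommend(?:ed|s)? (?:further|additional) imaging\b",
    r"\bcorrelat(?:e|ion) with (?:ct|mri)\b",
]

# Assumed negation cue: 'no' or 'not' earlier in the same sentence.
NEGATION = re.compile(r"\b(?:no|not)\b")


def flag_follow_up(report: str) -> list[str]:
    """Return phrases in a free-text report that suggest additional imaging."""
    text = report.lower()
    hits = []
    for pattern in FOLLOW_UP_PATTERNS:
        for match in re.finditer(pattern, text):
            # Look back only to the start of the current sentence.
            sentence_start = text.rfind(".", 0, match.start()) + 1
            if NEGATION.search(text[sentence_start:match.start()]):
                continue  # skip negated mentions such as 'do not warrant follow-up MRI'
            hits.append(match.group(0))
    return hits
```

For example, `flag_follow_up("Recommend follow-up CT in 6 months.")` returns `['follow-up ct']`, whereas "Findings do not warrant follow-up MRI." returns an empty list because of the negation cue. Rule-based matching of this kind is transparent to clinicians but brittle; that trade-off is one reason ML-based NLP is increasingly used instead.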

The potential for AI to transform data into outcomes that improve quality of care and the clinical practice of health professionals is considerable. To date, research has explored acceptance of, or intention to use, AI based on the perceived usefulness of the technology, largely in order to improve its function.58–61 The focus of AI research has largely been directed towards the technology: where to connect it and how to leverage its use. However, the other central component is the human being. What must now be considered is the impact the technology has on the user, in this case the healthcare professional: how they will connect with AI and how it will affect their ability to deliver care. AI capability and use will differ between disciplines and healthcare professionals. Because there is no widely accepted definition of AI in health, healthcare professionals may not realise that they are using AI within their practice. To support a sustainable healthcare workforce equipped to meet this rapidly changing digital healthcare environment, there is a need to understand clinicians’ experiences and perceptions of AI in the delivery of healthcare, and the interface between technology and the clinician needs to be further explored. The purpose of this integrative review is to critically review the literature investigating healthcare professionals’ experiences and perceptions of AI technology in the delivery of healthcare.

Method

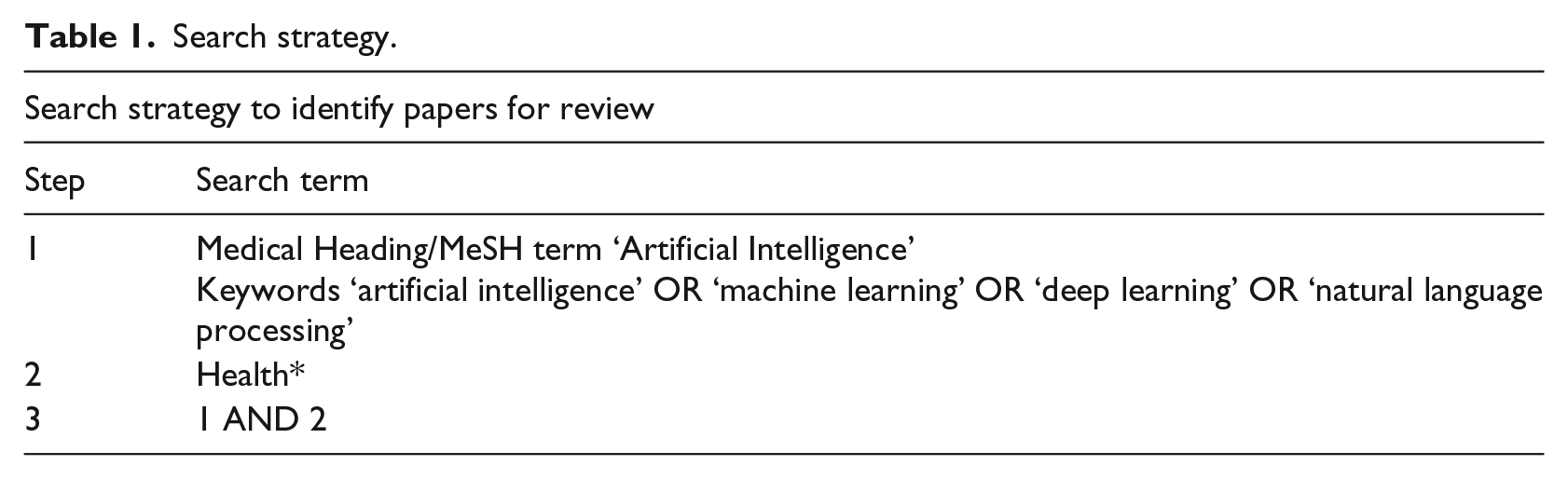

In order to explore the complex phenomenon of healthcare professionals’ understanding and experiences of AI use in the delivery of healthcare, an integrative review inclusive of quantitative and qualitative studies was undertaken in June 2018. A computerised search was conducted of the following health and technology databases: the Cumulative Index to Nursing and Allied Health Literature (CINAHL), Medline, Web of Science, Scopus, ProQuest and Computer & Applied Science EBSCO. The MeSH heading ‘Artificial Intelligence’ and the key terms ‘artificial intelligence’ OR ‘machine learning’ OR ‘deep learning’ OR ‘natural language processing’ AND ‘Health*’ were searched in three steps (Table 1). In the absence of an agreed definition of AI, these terms were identified as those most commonly used in relation to the concept. For each step, articles were limited to those published in English between 2008 and 2018, and duplicates were removed. Reference lists were also searched as a further check that all relevant articles were included. Peer-reviewed quantitative and qualitative studies were then systematically identified as described below.

Table 1. Search strategy.
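The combined key-term search can be written as a single Boolean string. The Python sketch below is illustrative only: it assumes the OR-group of AI synonyms is meant to be evaluated before the AND with the health truncation term, and it omits the MeSH heading and the three-step structure in Table 1, because field tags and operator precedence differ between databases. The names `AI_TERMS` and `build_query` are hypothetical.

```python
# Illustrative only: exact syntax (field tags, MeSH notation) differs between
# CINAHL, Medline, Web of Science, Scopus and the other databases searched.
AI_TERMS = [
    "artificial intelligence",
    "machine learning",
    "deep learning",
    "natural language processing",
]


def build_query(ai_terms: list[str], health_term: str = "Health*") -> str:
    """Combine AI synonyms with OR, then intersect the group with the health term."""
    # Quote each phrase so multi-word terms are matched as phrases.
    ai_block = " OR ".join(f'"{term}"' for term in ai_terms)
    # Explicit parentheses force the OR group to be evaluated before AND.
    return f"({ai_block}) AND {health_term}"
```

The explicit parentheses are the design choice that matters: in a database that gives AND higher precedence than OR, the unbracketed string would attach ‘Health*’ only to ‘natural language processing’.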

Inclusion and exclusion criteria

At each stage of the integrative review, two authors evaluated the articles independently before comparing and discussing their results. A third author was consulted when the first two could not agree on the inclusion or exclusion of an article. The inclusion criteria were primary studies that examined the experiences and perceptions of healthcare professionals using AI in the delivery of healthcare. All healthcare professionals were included in this review to explore possible differences between professions in the use of AI and in the experience of using it. Articles were excluded if they were not published in English, were not related to AI or health, were not primary studies or reported results that focused only on the technology. Only technology that incorporated AI was included. Finally, articles were excluded if they did not examine the experiences or perceptions of healthcare professionals using AI in the delivery of healthcare.

Search outcome

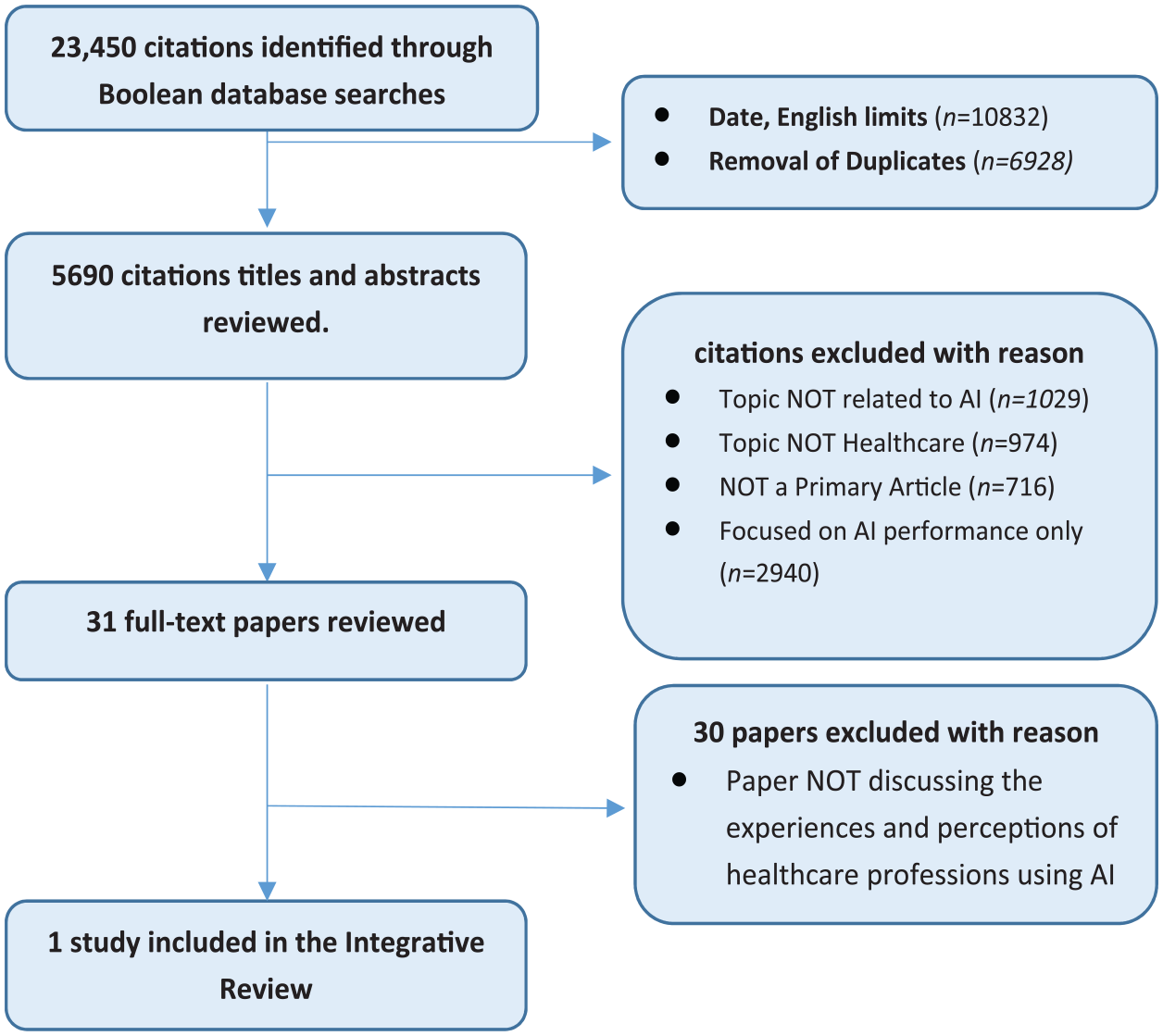

The search strategy yielded 23,450 studies, of which 17,760 did not meet English language and date limits (10,832) or were duplicates (6928), leaving 5690 titles and abstracts to be reviewed. Of these, 5659 were excluded with reason (see Figure 1) and the remaining 31 full-text articles were reviewed. As the purpose of this integrative review was to examine the experiences and perceptions of healthcare professionals using AI in healthcare delivery, articles that did not focus on the healthcare professional were omitted from the review. One study was finally included in the integrative review.

Figure 1. PRISMA flow diagram.
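The search outcome counts reported above can be checked against one another as a worked tally of the PRISMA stages. The final line (30 full-text exclusions) is derived from the stated figures rather than reported directly.

```latex
\begin{aligned}
\text{records removed} &= 10\,832\ (\text{language and date limits}) + 6\,928\ (\text{duplicates}) = 17\,760\\
\text{titles and abstracts screened} &= 23\,450 - 17\,760 = 5\,690\\
\text{full-text articles reviewed} &= 5\,690 - 5\,659 = 31\\
\text{full-text articles excluded} &= 31 - 1 = 30
\end{aligned}
```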

Quality appraisal, abstraction and synthesis

In order to provide a critical assessment of the final article, the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) 62 tool was used. This checklist is widely used for analytical epidemiological designs such as cohort, case-control and cross-sectional studies. The article was assessed for its design and methods, primary objective, sample population and size, outcome measures and main findings.

Results and quality appraisal

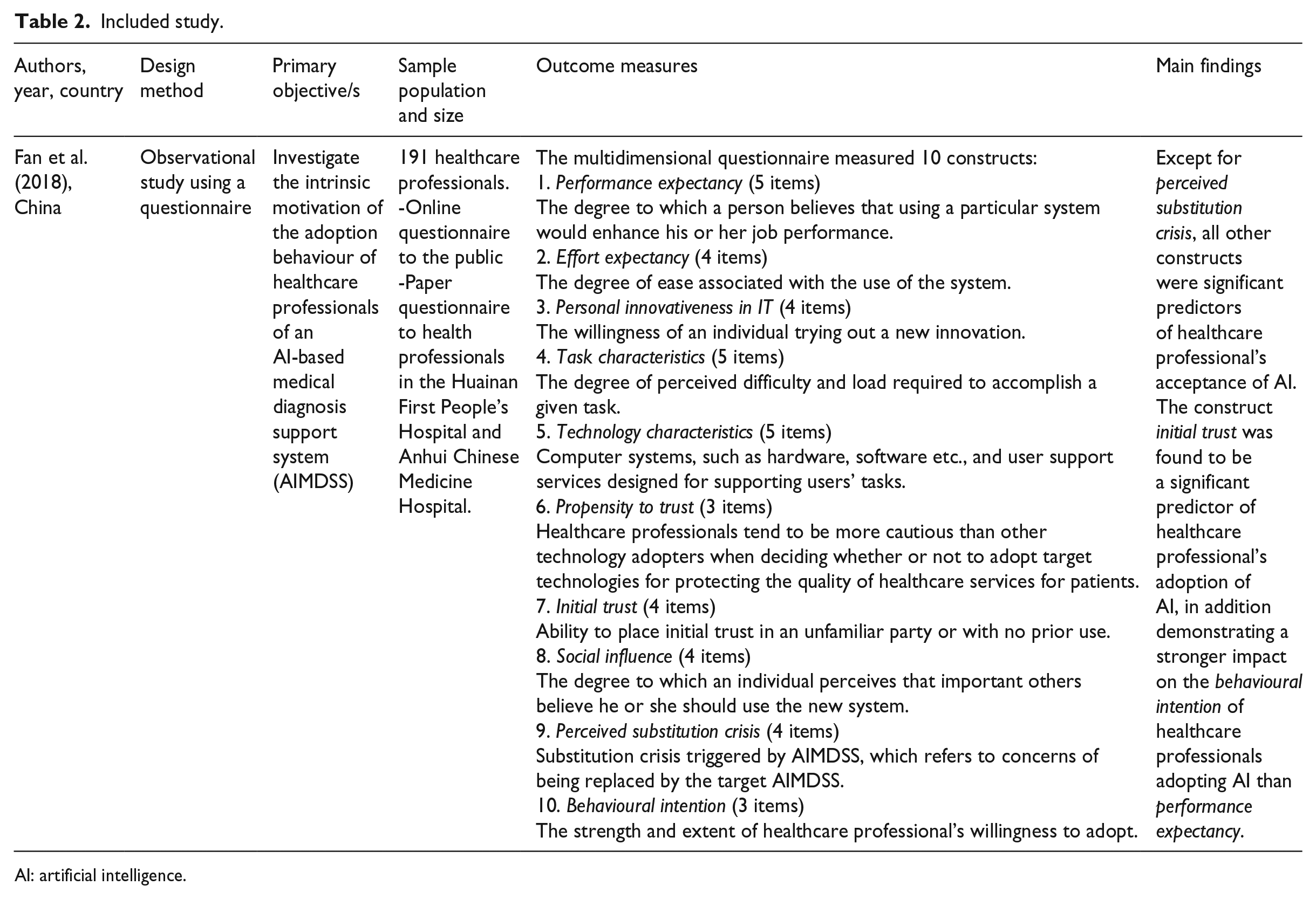

One study, published in 2018, met all inclusion criteria 63 (see Table 2). It was an observational study that used a questionnaire to measure healthcare professionals’ intrinsic motivation in adoption behaviour when using an artificially intelligent medical diagnosis support system (AIMDSS). The study recruited 191 participants through a public online questionnaire and a paper questionnaire given to healthcare professionals at the Huainan First People’s Hospital and Anhui Chinese Medicine Hospital. The questionnaire was adapted from four previous studies64–67 and included 43 items measured on a 5-point Likert-type scale, with answer choices ranging from ‘strongly disagree’ to ‘strongly agree’. The multidimensional questionnaire measured 10 constructs (see Table 2 for definitions): performance expectancy (five items), effort expectancy (four items), personal innovativeness in IT (four items), task characteristics (five items), technology characteristics (five items), propensity to trust (three items), initial trust (four items), social influence (four items), perceived substitution crisis (four items) and behavioural intention (three items). 63 Evaluation of the measurement model indicated that each construct was reliable and valid, with a high level of convergent validity. The authors proposed 12 hypotheses describing the relationships between the constructs and how they affected adoption of the technology.

Table 2. Included study.

AI: artificial intelligence.

The STROBE tool 62 was used to evaluate the components of the study. Fan et al. 63 clearly identified the aims and rationale of the study in the abstract and introduction. The methods were also clearly described; however, there were a number of design weaknesses associated with this small observational study. The questionnaire was distributed online and on paper, with 76% (n = 71) of the respondents under 40 years of age. The majority were female (62.8%; n = 120) and physicians (85.8%; n = 164); the discipline of the remaining 14.1% (n = 27) could not be determined. It was also unclear whether participants had used the technology previously. The study did not perform correlational analysis on demographic variables such as age, gender and discipline, which may be related to technology acceptance. While the Cronbach’s alpha scores for each construct ranged from 0.818 to 0.926, further psychometric testing is required to determine the validity and reliability of the questionnaire. The authors used a flow diagram to demonstrate the hypothesised relationships between the constructs; however, the findings are limited to one country, and a larger international study could confirm the results.
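For readers less familiar with the statistic, Cronbach’s alpha for a construct of k items is α = k/(k − 1) × (1 − Σσᵢ²/σₓ²), where σᵢ² is the variance of item i and σₓ² is the variance of respondents’ summed construct scores. The minimal Python sketch below computes it from raw Likert responses; the function name `cronbach_alpha` and the toy data are illustrative assumptions and are not drawn from Fan et al.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[int]]) -> float:
    """Cronbach's alpha for one construct.

    `responses` holds one row per respondent and one column per item
    (e.g. 5-point Likert scores, 1 = strongly disagree ... 5 = strongly agree).
    """
    k = len(responses[0])  # number of items in the construct
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of each respondent's summed construct score.
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Toy data: four respondents answering a three-item construct.
toy = [[4, 5, 4], [3, 3, 4], [5, 5, 5], [2, 3, 2]]
alpha = cronbach_alpha(toy)  # about 0.94
```

Because alpha reflects how strongly a construct’s items vary together, and tends to rise with the number of items, a high value speaks to internal consistency rather than validity, which is why further psychometric testing remains necessary.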

The study by Fan et al. 63 found that initial trust and performance expectancy were significant predictors of behavioural intention to use AI technology in the delivery of healthcare. Moreover, initial trust showed a stronger impact than performance expectancy, which is not consistent with previous studies 68 in which performance expectancy usually had the greatest impact. The authors suggested that this finding was unique to the healthcare setting: that is, healthcare professionals’ intention to adopt AI was based on trust that the technology would support patients’ health, rather than on the actual performance of the technology. All other constructs except perceived substitution crisis were significant predictors of healthcare professionals’ acceptance of AI; the lack of effect of perceived substitution crisis was unexpected. Fan et al. 63 proposed that AI has only recently been introduced in China and that, as yet, healthcare professionals are not under threat of being replaced by the technology.

Discussion

The aim of this review was to critically review the literature investigating healthcare professionals’ experiences and perceptions of AI technology in the delivery of healthcare. Only one study, by Fan et al., 63 which investigated healthcare professionals’ intrinsic motivation to adopt an AIMDSS, met the inclusion criteria. This small observational study found that healthcare professionals were less likely to use AI in the delivery of healthcare if they did not trust the technology or understand how it was used to improve patient outcomes or the delivery of care. Although this finding relates specifically to health, similar findings have been reported for digital technology in health that does not use AI. A recent study by Nakrem et al. 69 examined the experiences of patients and healthcare professionals using digital technology in medicine delivery. The results showed that if healthcare professionals did not understand the rationale for the digital technology or believe that it would improve the relationship or the quality of care delivered, they were less likely to adopt it. 69 Previous studies have identified that healthcare professionals’ knowledge of relevant digital systems is limited, 70 and that a greater understanding of their benefits and barriers, particularly in the healthcare setting, is needed. 71 Kolltveit et al. 72 explored telemedicine technology in caring for people with diabetic foot ulcers and concluded that insights from health professionals’ experiences will inform the context of technology use. Nilsen et al. 73 suggested that a much closer relationship between the innovators, developers and users of technology, including both patients and healthcare professionals, is needed to ensure technology is appropriate to the healthcare setting.

A perception by healthcare professionals that AI would replace them in the healthcare setting was not evident in the study by Fan et al. 63 This may be because AI is not yet at the forefront of technology use in this healthcare setting. In another setting, the Assisting Carers using Telematics Interventions to meet Older persons’ Needs (ACTION) study, 74 healthcare professionals were at times fearful that the technology would replace them or replace high-quality care delivery. However, the World Health Organization 75 addressed digital health at the Seventy-first World Health Assembly and recognised that ‘while technology and innovations can enhance health service capabilities, human interaction remains a key element to patients’ well-being’. Health disciplines such as occupational therapy are beginning to explore the role of technology in practice and ways in which it can be incorporated into their professional framework. 76 Hoffman and Svenaeus 77 suggested that medical technology will influence not only doctors’ perceptions of treatment and therapy options but also the way they understand the patient’s disease and interpret their complaints. Therefore, research is needed to examine the impact of AI on the role and function of the healthcare professional.

The main limitation of this review is that only one study was eligible for inclusion; therefore, its results should be interpreted with due caution. Key findings from the review are a clear gap in the literature and a lack of clarity around the definition of AI within the context of health, and, therefore, a clear need for future research to address these. In this review, search terms were limited to AI, DL, ML and NLP, which may have omitted articles with different understandings of the concept. Another potential limitation was restricting the search to primary studies only. Nevertheless, this review highlights the need for further research that investigates health professionals’ experiences and perceptions of AI technology in healthcare.

In summary, the limited current literature suggests that healthcare professionals’ trust in emerging AI is a key determinant of their intention to adopt the technology, reflecting their concern about its impact on patients, and that they do not foresee AI replacing their roles in the near future.

Significance

The increasing integration of AI into global healthcare systems is inevitable as healthcare costs rise with our ageing population. Consequently, further research that explores healthcare professionals’ experiences and perceptions of AI technology is needed. This will inform workforce training and education that supports the integration of AI into the delivery of care.

Footnotes

Authors’ note

The views expressed in the submitted article are those of the authors and not an official position of the institution.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.