Abstract

This study proposes a structural usability model to identify the relationship between the user interface design and the usability of an exergame system that includes a software system and a separate hardware device. The model consisted of two dimensions: the interface design, which was evaluated using Nielsen’s heuristic evaluation method, and the usability, as defined by ISO 9241-11. An empirical study used the iFit exergame system to test the physical fitness of 101 seniors in order to evaluate the model’s validity. The results showed a strong correlation between the interface design and the usability of the exergame system. An improved interface enabled users to interact with the system better, and the usability of the whole system was enhanced, including the device and the system itself. The results show that the proposed usability model can be used to evaluate other exergame systems.

Introduction

Since the introduction of the Nintendo Wii and DS consoles, research has been conducted on the usability of exergame systems. Exergames, unlike traditional videogames, provide a completely new interactive experience for and relationship with users. Exergames are defined as “any types of video games/multimedia interactions that require the game player to physically move in order to play.” 1 Nevertheless, exergaming is a generic term that refers to different styles of interaction. Users might interact with the system freely, with sensors detecting the user’s gestures and body movement (e.g. Xbox Kinect), or they might interact with a hardware device that communicates with the system’s software (e.g. Nintendo Wii, Wii Balance Board, Dance Dance Revolution). The two interaction styles have distinct usability characteristics because the addition of a device means that users must consider not only the user interface of the system but also the device itself. Therefore, when usability studies of exergames are conducted, the interaction style should be considered.

Few of the previous usability studies of exergame systems considered the user interface and device simultaneously. In the study of the Xbox 360 Kinect by Marinelli and Rogers, 2 the system’s user interface was investigated only using Nielsen’s heuristic evaluation method. Among studies of the Nintendo Wii system and other exergame systems that comprise a software system and a hardware device, Meldrum et al.’s study employed both quantitative and qualitative methods to assess the usability of a Nintendo Wii application for rehabilitation. The system usability scale was implemented to investigate user interface technologies, and an open-ended questionnaire was used to collect information on users’ subjective experiences. 3 Papaloukas et al. developed a heuristic evaluation form for experts to use to evaluate user interface designs based on Nielsen’s method. In addition, in an effort to define the relationship between games and usability, ISO 9241-11 was adopted as the standard of usability. 4 Billis et al. 5 used ISO 9241-11 to investigate the relationship between a senior’s emotional state and an exergame platform’s usability and acceptability. The only study that discussed the usability of both a device and a user interface employed a questionnaire to study seniors playing with a Nintendo Wii system. The results indicated that the sensitivity of the Wii’s remote control and its button design caused physical difficulties for the seniors, which was not an intentional function of the game. This study also mentioned video game–specific problems such as the elements, foreground, and background of the game’s user interface. 6

According to a review of exergame usability studies in the literature, researchers usually use Nielsen’s heuristic evaluation method to investigate the design of the system’s user interface. The definition of usability is derived from ISO 9241-11 and includes three basic parameters: effectiveness, efficiency, and satisfaction. However, few researchers have investigated a system’s user interface and hardware device simultaneously. Whitlock et al. merely used an open-ended questionnaire to identify the system’s underlying usability problems. The relationship between the user interface design and the usability of the system was not discussed. As a result, no structural usability model has been constructed for investigating the usability of exergames, especially those with separate software user interfaces and hardware devices, whereas this analysis has been undertaken for usability studies in other fields.7–10

In this study, usability is regarded as a latent variable, and the relationship with its interface factor is discussed. 9 All of the usability studies described above used the three parameters of efficiency, effectiveness, and satisfaction as latent variables and proposed relationships between them and other factors that influenced interface usability. All of these studies were concerned with usability; however, the factors affecting the usability of the user interface were different. In addition, in these studies, no clear definition of the three observed variables (efficiency, effectiveness, and satisfaction) was provided, and no actual measurements were performed. Although all of the studies proposed conceptual models of usability, they focused only on analyzing and comparing interface usability factors, and there was no further analysis or verification of the overall structural equation model.

Overall, this study proposes a structural usability model for identifying the relationship between user interface design and the usability of an exergame system that includes a software system and a separate hardware device. The proposed model includes two dimensions: user interface design, which is evaluated using Nielsen’s heuristic evaluation method, 11 and usability, which is defined according to ISO 9241-11. To evaluate the validity of this model, an empirical study of seniors using an exergame system 12 to test their physical fitness was conducted. The results demonstrated the feasibility of the proposed model.

The structural equation modeling–based approach

The model structure

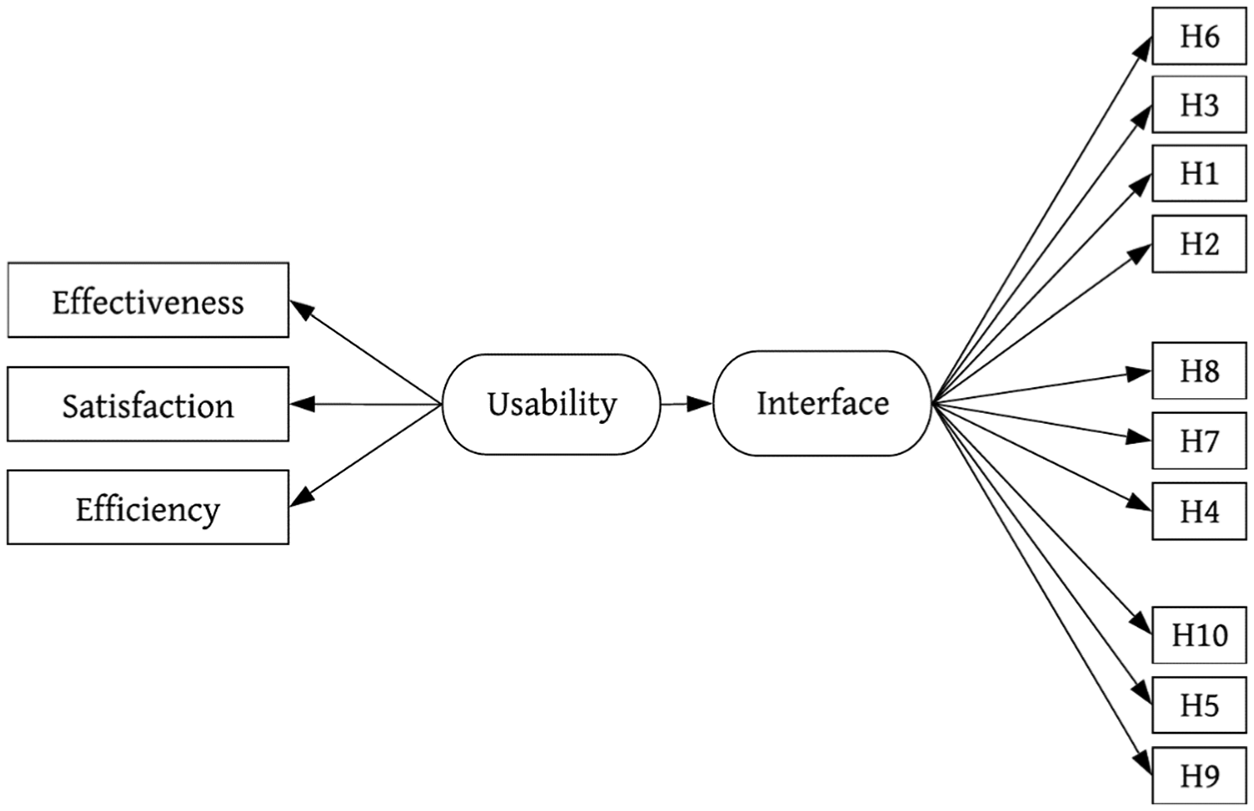

In this study, the usability structural equation model has two dimensions (latent variables). One of the dimensions is usability, and the other consists of heuristic factors. The two latent variables (dimensions) comprise 13 observed variables. Usability consists of the three observed variables defined in ISO 9241-11: efficiency, effectiveness, and satisfaction. The 10 heuristic factors, H1–H10, were proposed by Nielsen. 13 Of the two latent variables, the usability variables are exogenous, and the heuristic variables are endogenous. In accordance with the above relationship between the variables, the complete theoretical structural equation–based model used in this study is shown in Figure 1.

The structural equation–based model of usability used in this study.

The usability dimension

The variables observed in the usability dimension include effectiveness, efficiency, and satisfaction (as defined in ISO 9241-11), which are the primary indexes in this study. The primary purpose of a usability evaluation is to understand the usability of the overall system, including the user interface and the system hardware. Therefore, this study proposes the following parameters for the three indexes: efficiency—the time required by the user to complete a task; effectiveness—the number of incorrect operations while the user completes a task; and satisfaction—a five-item system satisfaction questionnaire completed after the user has completed a task. The following sections explain how to observe and record results for these three usability indexes in a task.

Efficiency

Efficiency indicates the time to complete a task. In this study, the time required by a user to complete a usability task is recorded, and the results are classified into five levels. Khajouei et al. 14 noted the limitation of using time as an indicator for assessing the efficiency of usability. Therefore, an evaluation scale was obtained for this study by dividing the pretest results into five equal groups. If a user completes a usability task in 9 min, that user has performed with maximum efficiency, and 5 points are granted. If a user completes a usability task in 11 min, that user has performed with high efficiency, and 4 points are granted. If a user completes a usability task in 13 min, that user has performed with intermediate efficiency, and 3 points are granted. If a user completes a usability task in 15 min, that user has performed with low efficiency, and 2 points are granted. If a user completes a usability task in 17 min, that user has performed with minimum efficiency, and 1 point is granted.
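The five-level efficiency scale above can be sketched as a small lookup. This is only an illustration: the function name `efficiency_score` is hypothetical, and it assumes that the quoted times (9, 11, 13, and 15 min) are inclusive upper bounds of the first four levels and that any slower time scores 1 point, which the text does not state explicitly.

```python
def efficiency_score(minutes: float) -> int:
    """Map a task completion time (in minutes) to a 1-5 efficiency score.

    Assumption: 9, 11, 13 and 15 min are inclusive upper bounds of the
    levels worth 5, 4, 3 and 2 points; anything slower scores 1 point.
    """
    if minutes < 0:
        raise ValueError("completion time cannot be negative")
    # (upper bound in minutes, points), ordered from fastest to slowest
    levels = [(9, 5), (11, 4), (13, 3), (15, 2)]
    for upper_bound, points in levels:
        if minutes <= upper_bound:
            return points
    return 1  # minimum efficiency (e.g. 17 min in the text)
```

Under these assumptions, a participant who needs 12 min would fall into the intermediate level.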

Effectiveness

Effectiveness is measured by counting the operation errors that a user makes while performing a task. Based on the iFit exergame system’s evaluation procedure and the operation instructions provided in the test, this study proposes four evaluation tasks for the iFit system: a grip strength test, a responsiveness test, a sense of balance test, and a flexibility test. Each task comprises five major steps. While the four tasks are being performed, the researchers complete an evaluation table for each step to observe and record the states of the participants performing the task. If a participant performing a task succeeds in completing an action, then the participant performs the next action; if the participant fails to complete an action, the researchers record the failure. The number of operation errors made by the user while executing the task is assigned to one of five levels. The evaluation scale for this study is obtained by dividing pretest measurements equally. Five points are granted if a user makes 0 operation errors in the task; 4 points are granted for two operation errors, 3 points for four operation errors, 2 points for six operation errors, and 1 point for eight operation errors.
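The error-count scale can be sketched in the same way. The function name `effectiveness_score` is hypothetical, and the handling of odd error counts is an assumption: the text lists only 0, 2, 4, 6, and 8 errors, so the sketch treats 2, 4, and 6 as inclusive upper bounds and gives 1 point for seven or more errors.

```python
def effectiveness_score(errors: int) -> int:
    """Map the number of operation errors in a task to a 1-5 score.

    Assumption: 0 errors -> 5 points; 1-2 -> 4; 3-4 -> 3; 5-6 -> 2;
    7 or more -> 1. Only the even counts are given in the text.
    """
    if errors < 0:
        raise ValueError("error count cannot be negative")
    # (largest error count for this level, points)
    levels = [(0, 5), (2, 4), (4, 3), (6, 2)]
    for max_errors, points in levels:
        if errors <= max_errors:
            return points
    return 1
```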

Satisfaction

A satisfaction questionnaire is used to measure satisfaction. In this study, the user satisfaction survey includes seven questions. After completing a usability task, the user must answer the seven questions on the satisfaction questionnaire. The results are divided into five levels: 5 points for very satisfied, 4 points for satisfied, 3 points for average, 2 points for dissatisfied, and 1 point for very dissatisfied.

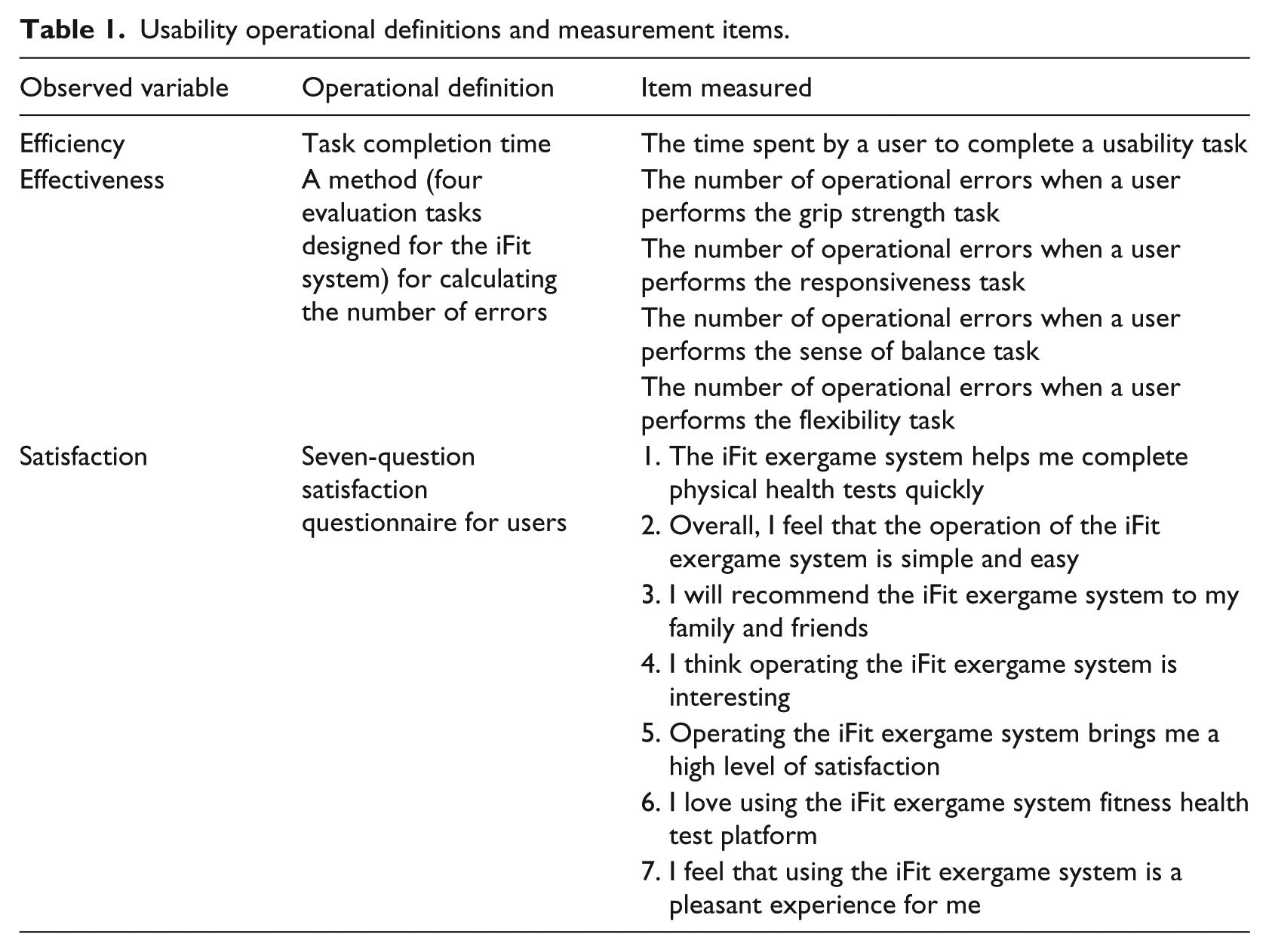

Based on this description, the usability dimension of this study has three essential elements: effectiveness, efficiency, and satisfaction. Operational definitions of these three observed variables are provided. A quantitative method is used, and indexes for measuring the usability dimension are shown in Table 1. The observed variables and the evaluation rating table are calculated using the five-point Likert-type scale method and shown in Table 2.

Usability operational definitions and measurement items.

Evaluation rating table for all items.

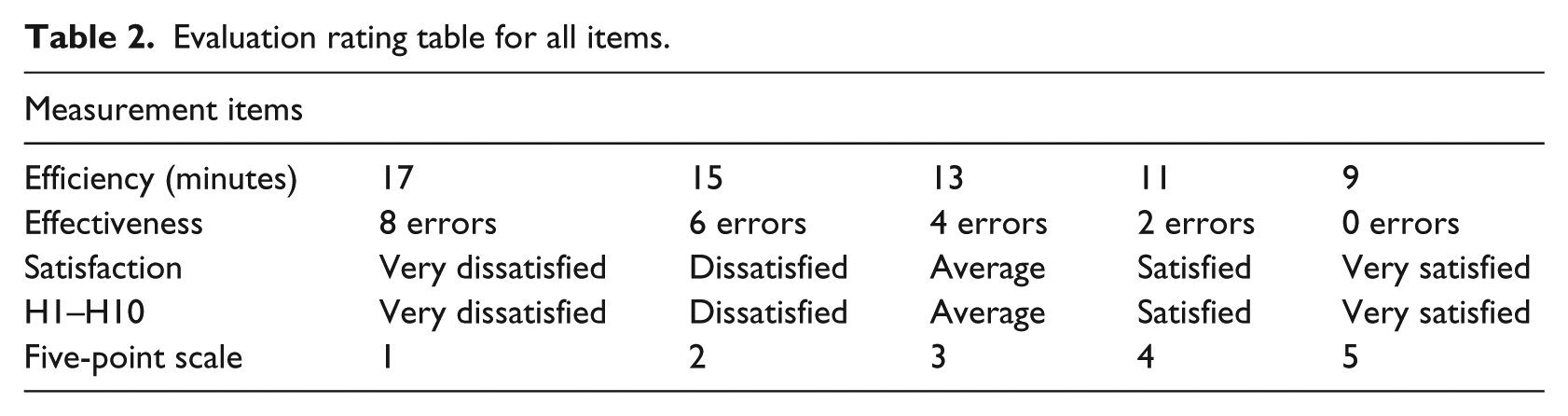

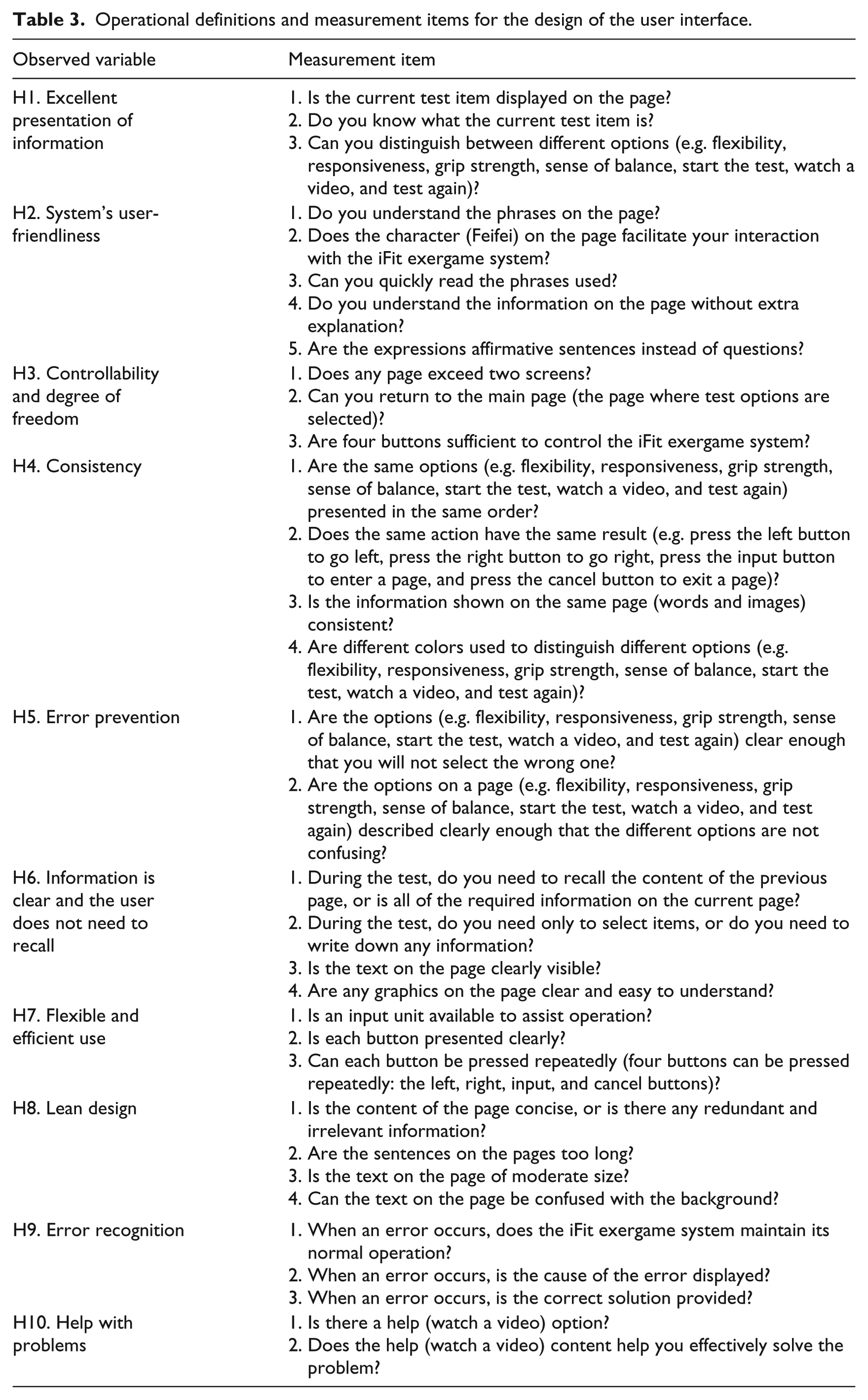

The heuristic evaluation dimension

The method of evaluating the heuristic items uses the 10 heuristic factors proposed by Nielsen for evaluating usability. Because this study tests the usability of an exergame system’s user interface, topics irrelevant to the characteristics of exergaming are removed from the list. In addition, the description and wording of each topic match the characteristics of exergaming so that elderly individuals can easily read and comprehend it. As proposed by Wong et al., the users are the evaluators. The results of a study by Wong et al. 15 showed that when the users were the evaluators, the results accurately reflected their satisfaction levels and opinions about the system’s usability. In addition, usability evaluation has been changed from using an expert-oriented 0–4 point scale to evaluate the level of severity of a usability problem to using a five-point scale from 1 point, very dissatisfied, to 5 points, very satisfied (shown in Table 2). Table 3 shows the operational definitions and measurement items for the design of the user interface.

Operational definitions and measurement items for the design of the user interface.

Empirical study

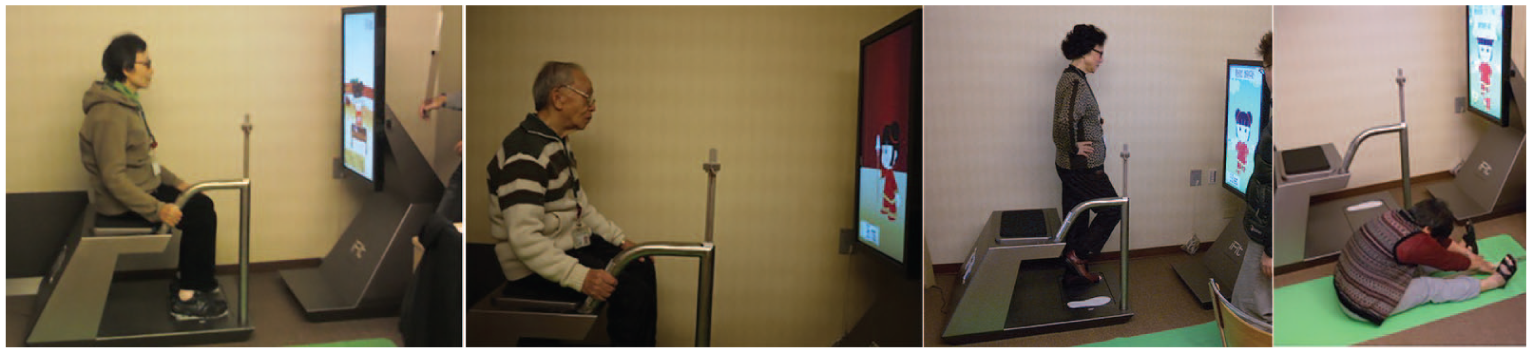

In this study, the test system is the iFit exergame system for seniors (see Figure 2). The following sections review its hardware, software, and operation.

The iFit test platform.

iFit exergame

The iFit exergame comprises modules, sensor communication protocols, positioning algorithms, software interfaces, and a database and can transmit the data collected by microsensors embedded in its cushion, measurement gauge, and handle wirelessly to the database for further processing, storage, and management. In addition, the database of physiological information and the display platform manage the physiological data collected by the game, and the use of an easily understood graphical user interface helps seniors understand their fitness and degeneration states. The database primarily uses each person’s number as an index, and it records the individual’s name and gender along with various physiological information.

The iFit exergame is an integrated fitness test system for which design and construction primarily follow the innovative product design and development theory. Political, economic, social, and technological analyses and marketing, engineering, design, and scenario analyses were introduced in the early stages of its design to quickly identify topics with high value and potential for development. The results show that, in the current sports and rehabilitation medical equipment market, the common practice is to dedicate each type of equipment to a specific action, such as a treadmill, and there is no integrated fitness equipment. In addition, the test method is basically to assign one medical professional to each test, which is a waste of labor, material, and space. Therefore, to accommodate the game design, display interface, and test items, the iFit system includes two parts: the first is the integrated package of host, receiver, and display and the second is the design of the test equipment package. In addition to basic weight and height measurements, grip strength and balance measurements are performed using embedded pressure sensors in the handle and pedal. In addition, the handle is used to measure responsiveness, and the cushion is used in the flexibility test. Moreover, the system’s edges and corners are all rounded to prevent accidental bumps and cuts.

System operation

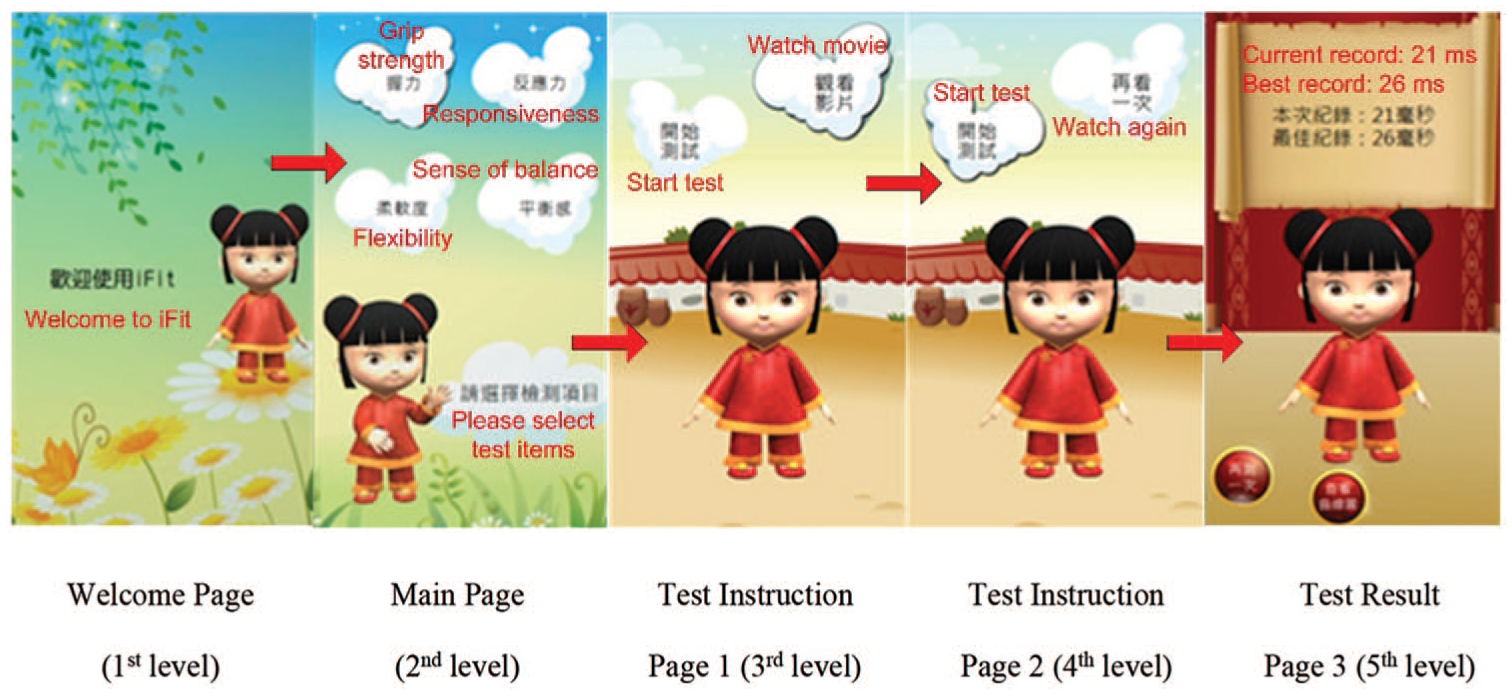

This fitness testing system for seniors offers four tasks: grip strength, responsiveness, sense of balance, and flexibility (shown in Figure 3). Each task contains five steps labeled (a)–(e), as follows: (a) select a test item, (b) watch an instructional video, (c) select “start the test,” (d) start the test, and (e) return to the main page (shown in Figure 4). Of these steps, only action (d) results in a different operation for each of the four tasks. The screen first displays a welcome page, and after 3 s it enters the main page. The main page contains four degeneration tests (grip strength, responsiveness, flexibility, and sense of balance) for the participant to choose from. After a test is selected, the options “start the test” and “watch a video” are presented. If “start the test” is selected, the test will be performed; after the test, “test again” or “return to the main page” can be selected to complete the task. If “watch a video” is selected, an instructional video is displayed; at the end of the video, the “start the test” and “watch again” options are displayed on the page. Both the grip strength test and the responsiveness test require placement of the participant’s hands on a handle with sensors. The sense of balance test requires the participant to stand on balance sensors, and the flexibility test requires the participant to sit on the flexibility test equipment. At the end of each test, the result is shown on the page.
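The screen flow described above can be summarized as a small transition table. This is a reading aid rather than the iFit implementation: every screen and event name below is a hypothetical label paraphrased from the description.

```python
# Hypothetical screen/event names; the real iFit software is not shown in the text.
TRANSITIONS = {
    ("welcome", "timeout_3s"): "main",        # welcome page closes after 3 s
    ("main", "select_test"): "test_menu",     # one of the four tests is chosen
    ("test_menu", "start_test"): "testing",
    ("test_menu", "watch_video"): "video",
    ("video", "video_end"): "video_menu",     # options shown after the video
    ("video_menu", "start_test"): "testing",
    ("video_menu", "watch_again"): "video",
    ("testing", "test_done"): "result",       # result is shown on the page
    ("result", "test_again"): "testing",
    ("result", "return_main"): "main",
}


def run(events, state="welcome"):
    """Follow a sequence of events and return the final screen."""
    for event in events:
        state = TRANSITIONS[(state, event)]  # KeyError means an invalid step
    return state
```

For example, a participant who watches the video once, takes the test, and returns to the main page follows `timeout_3s, select_test, watch_video, video_end, start_test, test_done, return_main`.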

Participants performing the four tasks.

The five levels of the system’s operation.

Participants

The users are the evaluation system’s end users. They serve both as experimental participants and as heuristic evaluators. In this study, the participation requirements are as follows: (a) over 65 years old; (b) able-bodied and able to move freely without aids such as wheelchairs or crutches; (c) mentally healthy, no dementia or delirious behavior; (d) able to hear normally and understand common expressions; and (e) provided with careful guidance and instruction about the heuristic evaluation (HE) method before the experiment. Study participants included 101 residents of a senior community who were between 65 and 93 years old. They included 26 males and 75 females, and their average age was 79.8 years. We obtained ethics approval for our study from the Institutional Review Board for research (No. 99-1389B). All relevant ethical safeguards were met in relation to patient and subject protection. After we meticulously explained the purposes of this study to the subjects, all subjects signed informed consent forms before participating. In addition, the subjects in the photographs in this manuscript provided written informed consent for the publication of their photographs.

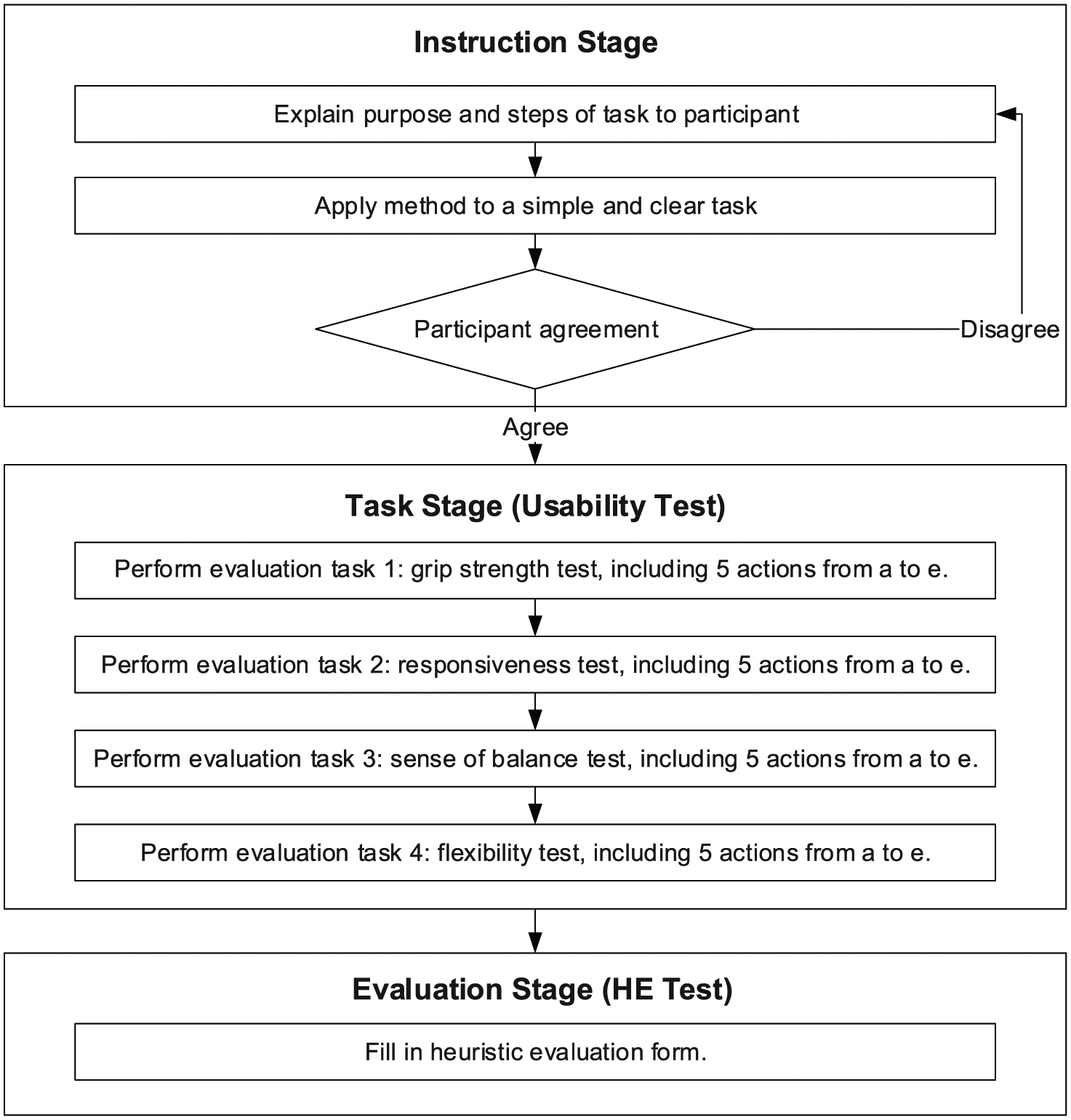

The evaluation procedure and methods

The evaluation procedure was based on two independent rounds of testing with three stages, as shown in Figure 5: the instruction stage, the task stage, and the evaluation stage. In the instruction stage, the purpose and steps of each task were first explained to the participant, and the participant was asked to sign the participant agreement and provide basic information. The researcher also asked for the participant’s permission to take photographs and record video during the test. To verify the participant’s learning ability, an initial learning test was conducted. The participant evaluated the health and fitness testing system for seniors. This stage was conducted using an iterative method (after each iteration, a modification was made based on the suggestion of an analyzer who was an expert at this method) until the participant’s command of the information was recognized as sufficient. The task stage comprised a total of four tasks: grip strength, responsiveness, sense of balance, and flexibility tests. During the evaluation, each participant first completed the four tasks in order. As each task was performed, researchers observed the user closely, filled out an evaluation form that identified whether the participant had successfully executed the five steps of the task, and recorded the participant’s state while performing the task. In the evaluation stage, after the tasks were completed, researchers helped the participant fill out the heuristic evaluation form and provide an evaluation based on the results of the tasks performed. Finally, the results were analyzed. The researchers combined the results of the heuristic evaluation and the evaluation form for the execution of each task’s steps in the usability evaluation stage, and they explained the user’s level of satisfaction with the system usability in addition to user-reported problems and their causes.

Evaluation test procedure.

The data analysis method used in this study has two parts. The first part is a reliability test for participants’ basic information and the observed variables, with SPSS version 20 as an analytical tool. The second part is based on a structural equation model and uses AMOS version 2.0 as an analytical tool.

Study results

Descriptive statistical analysis

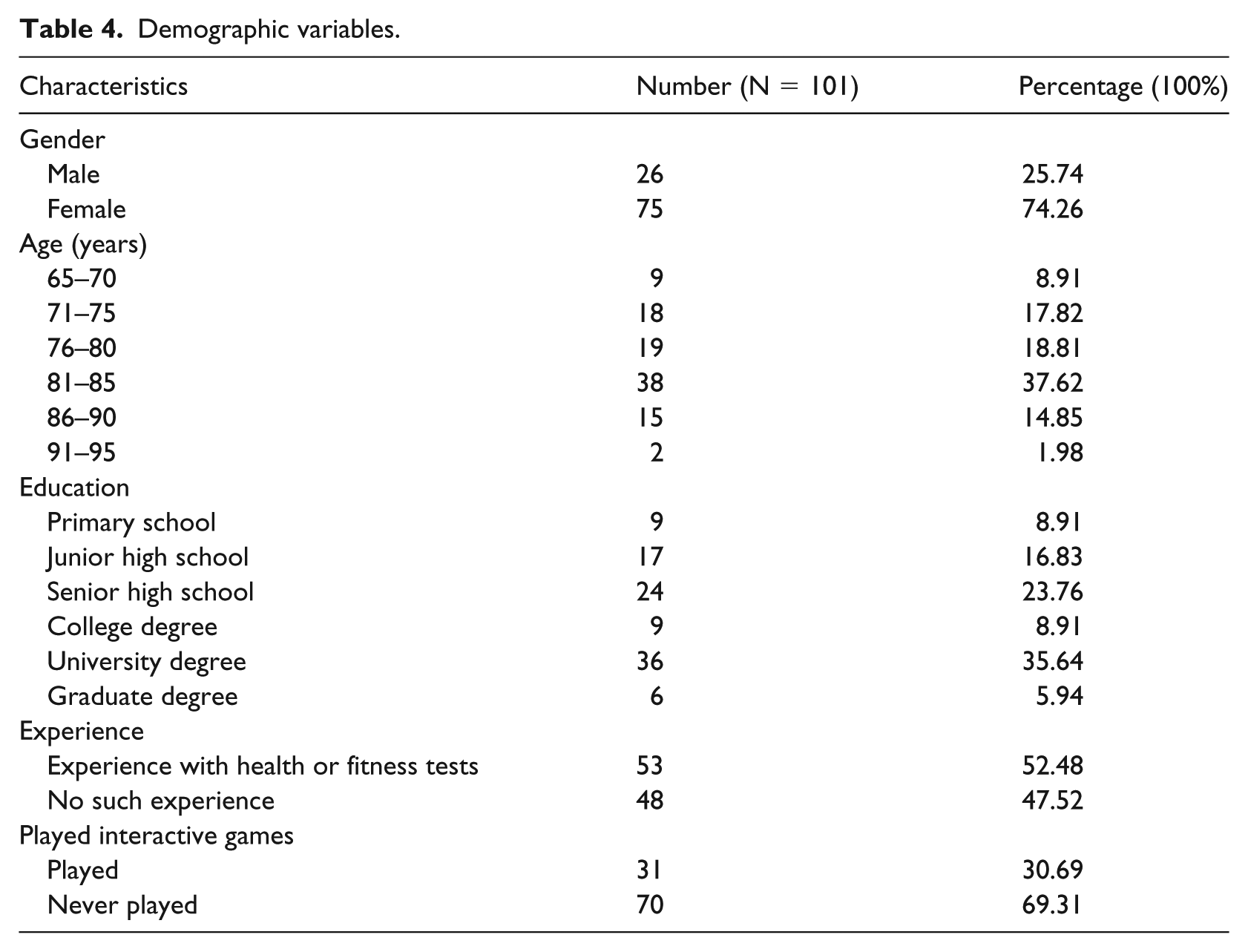

In this study, 101 seniors were recruited (see Table 4). The gender distribution was 26 males (25.74%) and 75 females (74.26%). Nine participants were 65–70 years old (8.91%), 18 were 71–75 years old (17.82%), 19 were 76–80 years old (18.81%), 38 were 81–85 years old (37.62%), 15 were 86–90 years old (14.85%), and 2 were 91–95 years old (1.98%). Nine participants had a primary school education (8.91%), 17 had a junior high school education (16.83%), 24 had a senior high school education (23.76%), 9 had college degrees (8.91%), 36 had university degrees (35.64%), and 6 had graduate degrees (5.94%). Of the participants, 53 had experience with health or fitness tests (52.48%) and 48 had no such experience (47.52%). In addition, 31 had played interactive games (30.69%) and 70 had never played interactive games (69.31%).

Demographic variables.

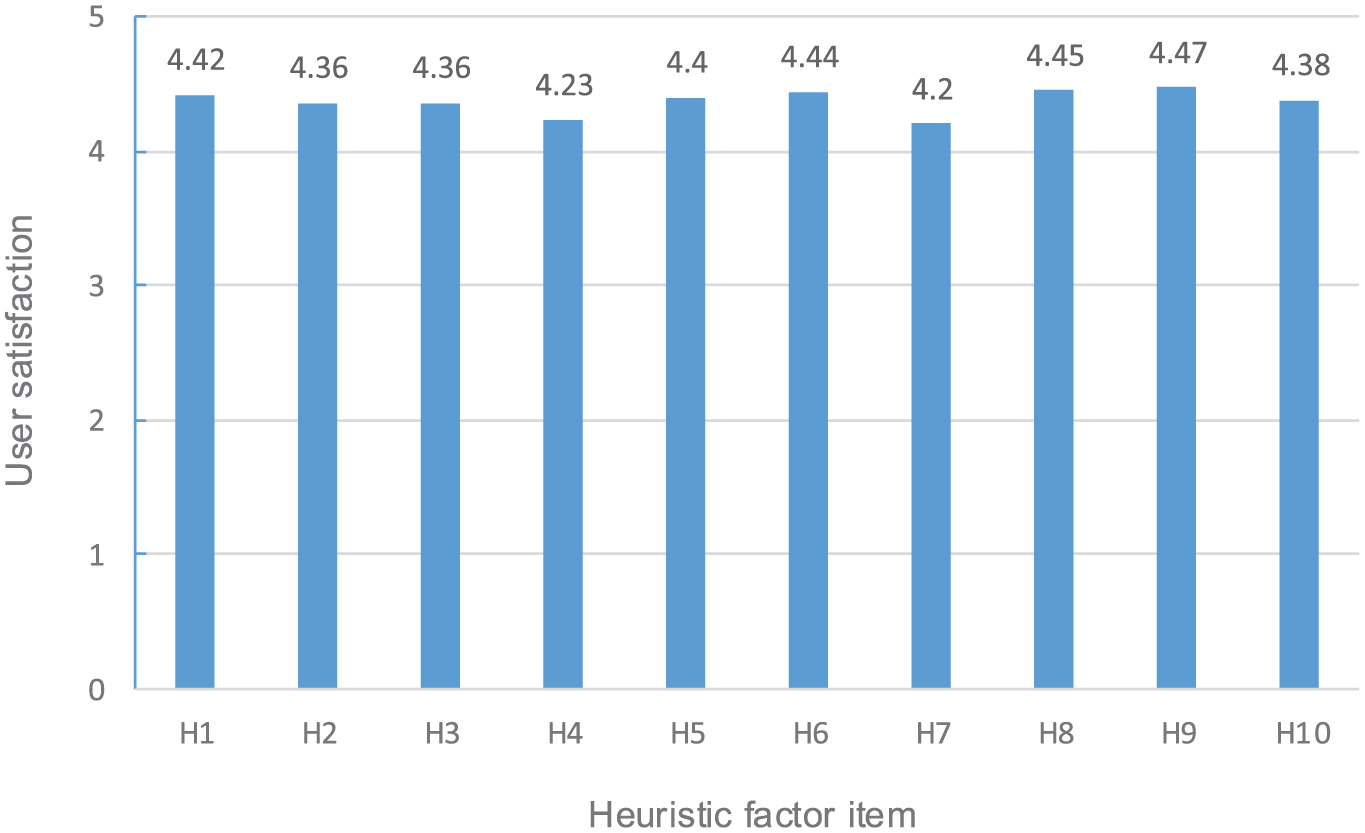

Heuristic factor evaluation

The heuristic factor evaluation recorded the users’ satisfaction with and opinions on the usability of the system’s user interface. The results of the study include the processed evaluations of user satisfaction with all of the heuristic items. Because some heuristic factors’ averages use different scales, this study uses the evaluation rating group interval to process the records. The results show that the averages of the users’ overall satisfaction with all of the heuristic items, from H1 to H10, are between 4.20 and 4.47 points, as shown in Figure 6; this range means that the users’ ratings of the heuristic usability factors are between “satisfied” and “very satisfied.” On a six-point Likert-type scale ranging from 0 = “not satisfied at all” to 5 = “very satisfied,” the respondents’ satisfaction level for heuristic factors H1–H10 is described as follows:

Heuristic factor H1 (4.42)—excellent presentation of information. Users think that the information is presented in a clear manner and that each page is clearly displayed.

Heuristic factor H2 (4.36)—system’s user-friendliness. Users unanimously believe that the lovely virtual character improves their interaction with the system; a small number of users suggest that the instructions for each button be added to the page, as otherwise, when they use the system for the first time, they do not know how to operate it.

Heuristic factor H3 (4.36)—user controllability and degree of freedom. Due to system instability, when users press a button, the system sometimes cannot return to the main page, and rebooting is required before the test can be continued.

Heuristic factor H4 (4.23)—consistency. Due to system crashes, pages freeze when some participants press the button, the system cannot respond, and rebooting is required before the test can be continued.

Heuristic factor H5 (4.40)—error prevention. The page design is simple and clear enough that users are unlikely to give incorrect responses.

Heuristic factor H6 (4.44)—clear information. The participants agree that the button operation is simple and clear.

Heuristic factor H7 (4.20)—flexible and efficient use. Some of the participants report that the operation buttons cannot be distinguished by color and are too small; the result is that they are difficult to recognize, which leads to problems during the operation.

Heuristic factor H8 (4.45)—lean design. All of the users agree that the page presentation is concise and clear and that the button presentation is simple.

Heuristic factor H9 (4.47)—error recognition. Sometimes, system errors occur, and there is no message about the error’s cause and solution; therefore, when an error occurs, the system must be rebooted to continue operating.

Heuristic factor H10 (4.38)—help with problems. The participants feel that the demonstration videos are lively and easy to understand and can help them understand the tasks.

Result for each heuristic item.

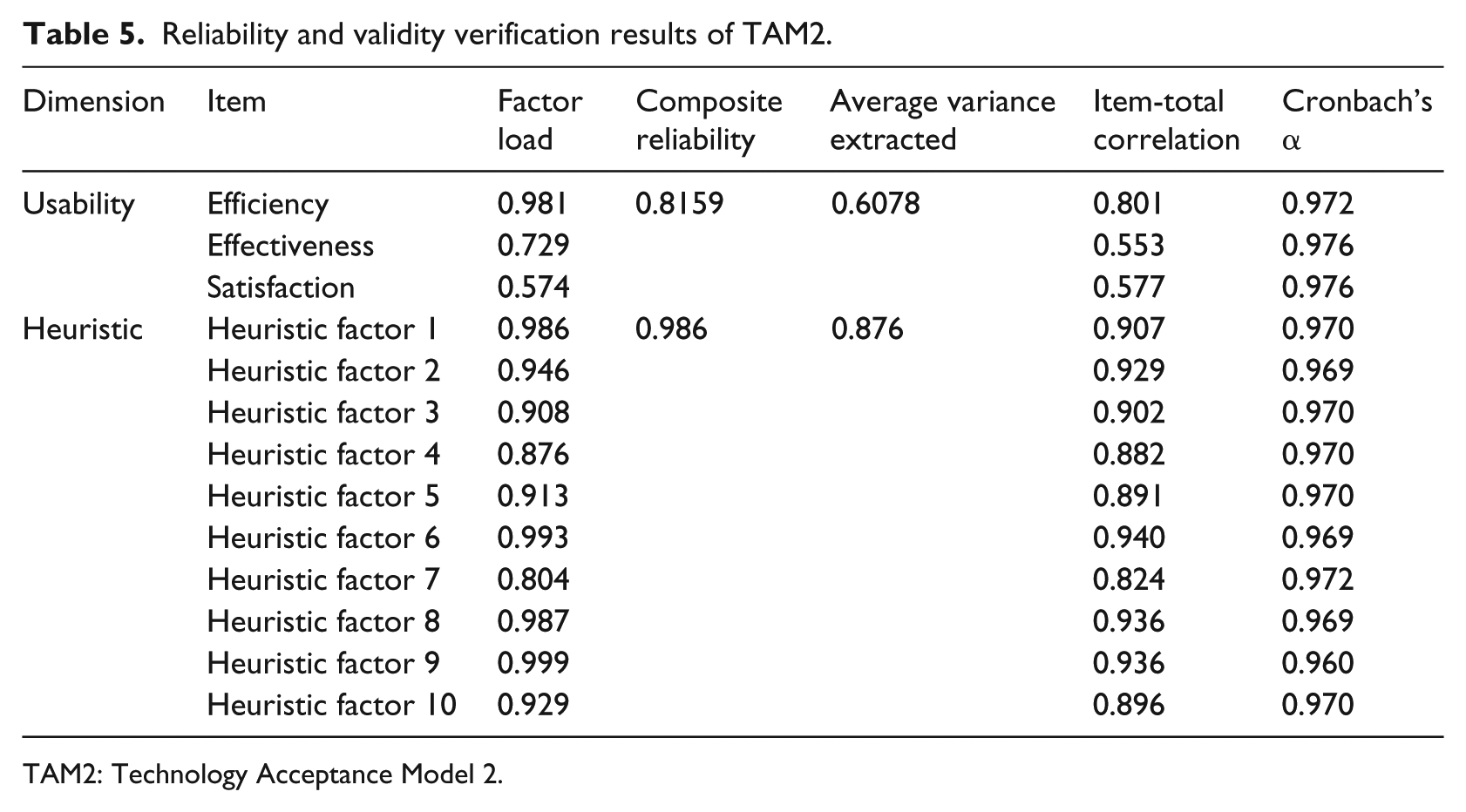

A reliability and validity analysis

The questionnaire’s reliability and validity are verified using Cronbach’s α coefficient to analyze each questionnaire item’s consistency and level of correlation. These results are used as criteria for the reliability determination. In this study, Cronbach’s α ≥ 0.7 and an item-total correlation value > 0.3 are used as the determination criteria. It can be seen from Table 5 that in this study Cronbach’s α for the two variables “usability” and “heuristic” satisfies the criterion for a high level of reliability, and the item-total correlation coefficients in each dimension are almost always above the determination criterion of 0.3, which means that the items on this questionnaire have a rather high level of reliability and that the results are rather stable.
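For readers who want to reproduce the reliability criterion outside SPSS, Cronbach’s α can be computed directly from the item scores with the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of the total score). The sketch below uses only the Python standard library; the function name and the sample ratings are made up for illustration and are not data from this study.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents.

    rows: one list of k item scores per respondent (k >= 2).
    Uses sample variances, as in the usual textbook formula.
    """
    k = len(rows[0])
    columns = list(zip(*rows))                       # scores grouped by item
    item_variance_sum = sum(variance(col) for col in columns)
    total_variance = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Illustrative five-point ratings from four hypothetical respondents
sample = [[4, 5, 4], [3, 4, 4], [5, 5, 5], [2, 3, 2]]
alpha = cronbach_alpha(sample)
reliable = alpha >= 0.7                              # criterion used in the text
```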

Convergence validity refers to the level of consistency between the measured variables of the same construct. If a latent variable’s average variance extracted (AVE) is at least 0.5 and its composite reliability (CR) is above 0.7, then this variable can be regarded as having sufficient convergence validity.

Table 5 shows that in this study the constructs’ AVEs are all above the threshold value of 0.5, and the latent variables’ CRs are all above 0.7, which means that the measurement tools and each construct used in this study have excellent convergence validity.
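As a sketch of how the two convergence criteria are commonly obtained, AVE and CR can be computed from the standardized factor loadings of a construct: AVE is the mean of the squared loadings, and CR is (Σλ)² / ((Σλ)² + Σ(1 − λ²)). The loadings below are invented for illustration; the study’s own values are those reported in Table 5, and the text does not say which formulas the authors applied.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings of one construct."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """CR: (sum of loadings)^2 over itself plus the summed error variances."""
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - l * l for l in loadings)
    return squared_sum / (squared_sum + error_variance)


# Hypothetical standardized loadings for a three-indicator construct
example_loadings = [0.8, 0.7, 0.9]
ave = average_variance_extracted(example_loadings)   # about 0.647
cr = composite_reliability(example_loadings)         # about 0.845
converges = ave >= 0.5 and cr > 0.7                  # thresholds from the text
```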

Reliability and validity verification results of TAM2.

TAM2: Technology Acceptance Model 2.

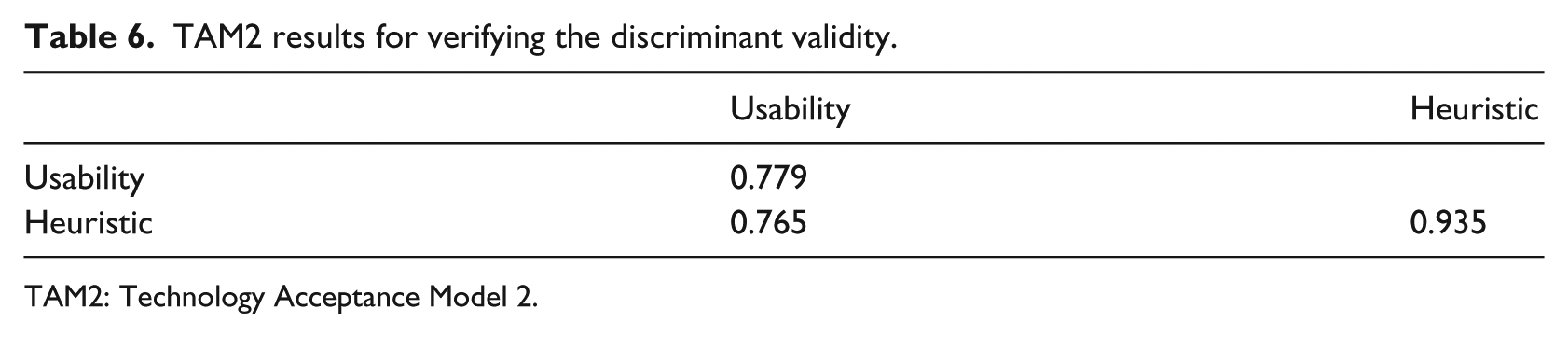

With regard to the discriminant validity, for a measurement-based model to have sufficient discriminant validity, the square root of each latent variable’s AVE should be greater than the correlation coefficient of the other dimension. Table 6 shows the correlation coefficient array for each dimension (the diagonal values are the square roots of the AVEs). It can be seen from Table 6 that in this study the square root of each latent variable’s AVE is indeed greater than the correlation coefficient of the other dimension, which means that each measuring tool and construct in this study have excellent discriminant validity.
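The comparison described above (often called the Fornell-Larcker criterion) can be written as a short check. The function name, construct labels, AVE values, and correlation below are hypothetical placeholders, not the figures from Table 6.

```python
from math import sqrt


def has_discriminant_validity(ave_by_construct, correlations):
    """Return True if sqrt(AVE) of every construct exceeds its correlations.

    ave_by_construct: dict mapping construct name -> AVE
    correlations: dict mapping (construct_a, construct_b) -> correlation
    """
    for (a, b), r in correlations.items():
        if sqrt(ave_by_construct[a]) <= abs(r):
            return False
        if sqrt(ave_by_construct[b]) <= abs(r):
            return False
    return True


# Hypothetical values for the two latent variables of the model
aves = {"usability": 0.64, "heuristic": 0.49}
ok = has_discriminant_validity(aves, {("usability", "heuristic"): 0.60})
```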

TAM2 results for verifying the discriminant validity.

TAM2: Technology Acceptance Model 2.

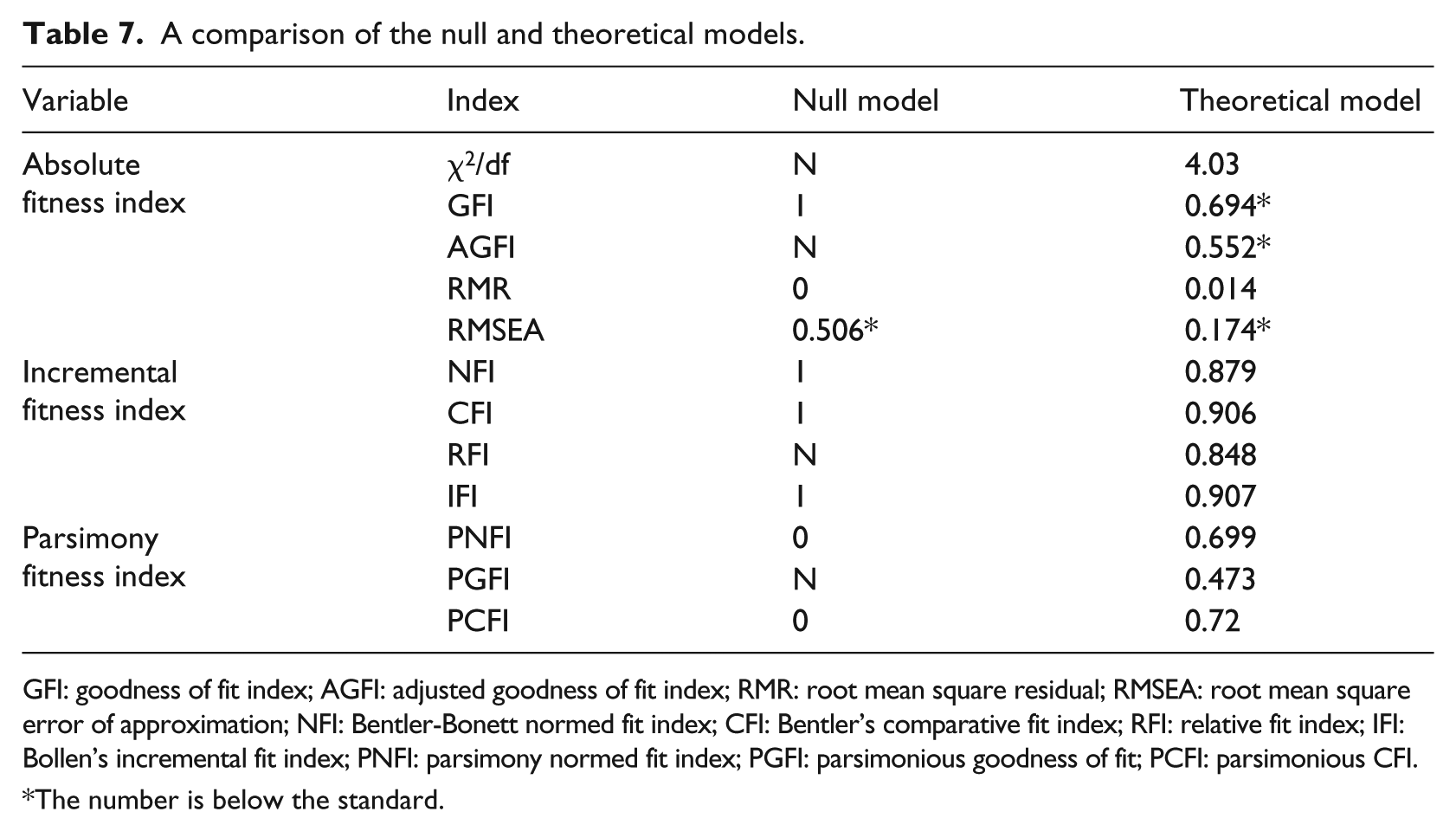

The model fitness

During the overall model fitness verification process, two models, a null model and a theoretical model, are used. In the null model, the measurement items have no specific factors. To make the model valid, a shared factor should be present; however, all of the measurement items and factors’ parameters are set to 0 to evaluate the model fitness. The theoretical model is based on references; in it, all of the possible paths between the variables are explored and analyzed to verify the validity of the theoretical architecture. Table 7 shows the comparison of the two models’ fitness tables, showing that the theoretical model is better than the null model; therefore, the theoretical model should be used.

A comparison of the null and theoretical models.

GFI: goodness of fit index; AGFI: adjusted goodness of fit index; RMR: root mean square residual; RMSEA: root mean square error of approximation; NFI: Bentler-Bonett normed fit index; CFI: Bentler’s comparative fit index; RFI: relative fit index; IFI: Bollen’s incremental fit index; PNFI: parsimony normed fit index; PGFI: parsimonious goodness of fit; PCFI: parsimonious CFI.

The number is below the standard.

The fitness verification of the theoretical model shows that, among the absolute fitness indexes, AGFI (0.552) and RMR (0.014) are below the standard; some of the incremental indexes are above 0.8, and some of them are above 0.9, which is close to the standard; and all of the parsimony indexes are above 0.4, which meets the standard.

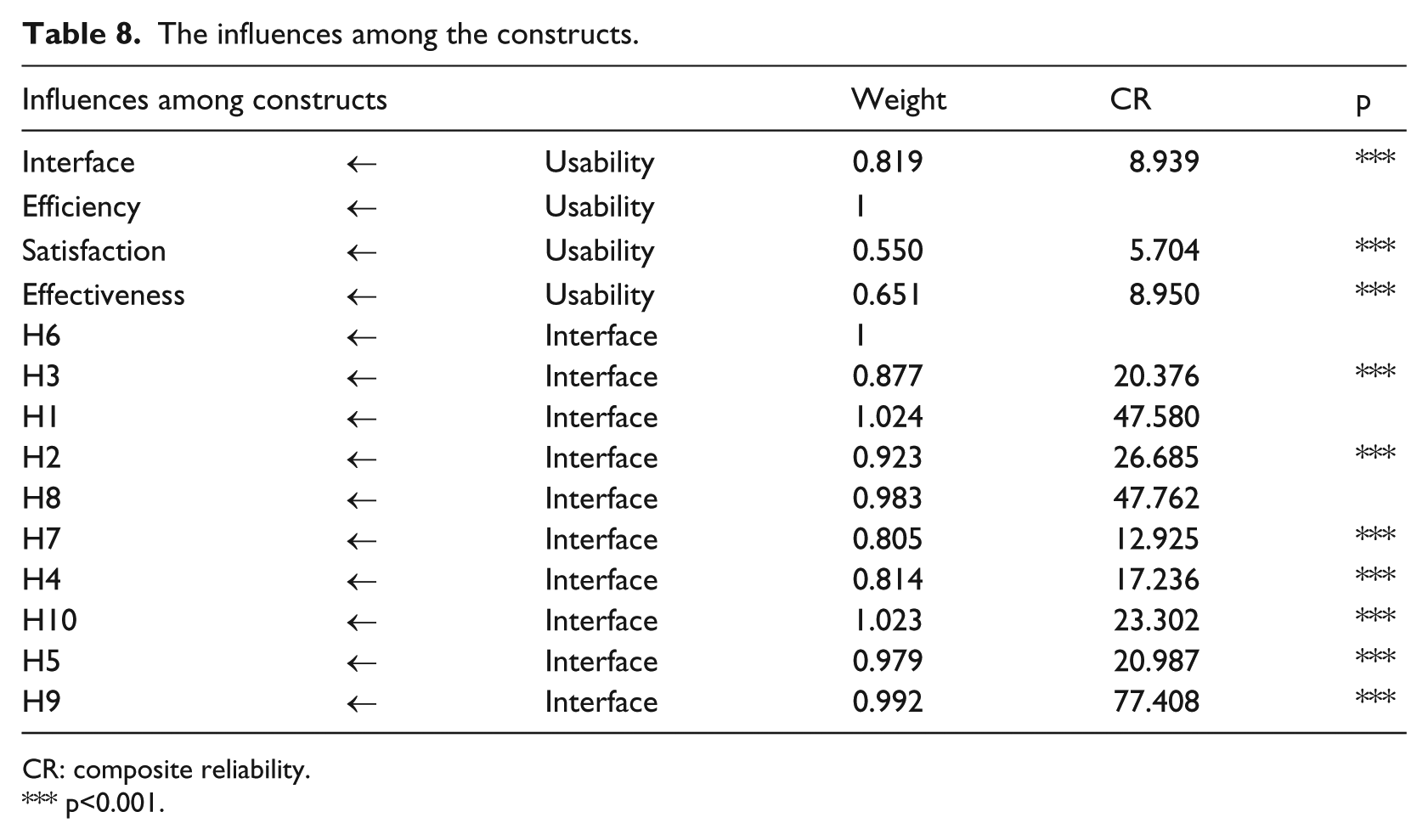

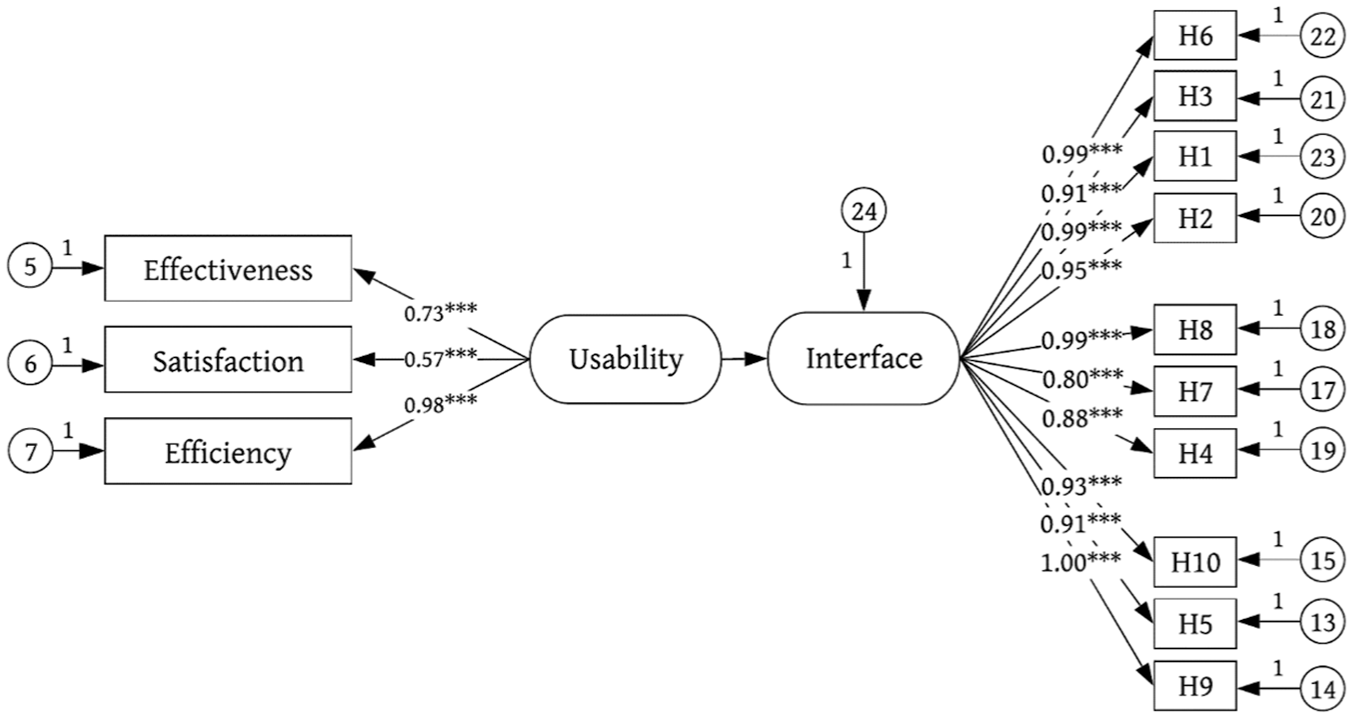

Verification of the assumptions

In addition, the degree of mutual influence among the model’s constructs is investigated, and the results are shown in Table 8 and Figure 7. The results show that in this study the influences identified by the two constructs are all statistically significant (p < 0.001), and all of the assumptions in this study are supported by data. Among the usability constructs, the influence of efficiency on usability is used as a benchmark (its path coefficient is 1), and effectiveness has the strongest influence on usability at 0.651. Among the heuristic constructs, the influence of H6 on the interface is used as a benchmark (its path coefficient is 1), and H9 has the strongest influence on the user interface at 0.992. The results of the path analysis of the structural equation model for usability proposed in this study are consistent with the assumptions in this study. All of the paths in the structural equation model have significant positive correlations. However, the model’s overall fitness level is below the standard.

The influences among the constructs.

CR: composite reliability.

p<0.001.

A path diagram of the complete theoretical model before standardization.

Discussion

The principal findings of this study are that the factors affecting the usability of the user interface were different, no clear definition of the observed variables was provided, and no actual measurements were performed. Similarly, a usability study was conducted by Oztekin et al., 9 but the relationship between quality and usability was not experimentally analyzed.

As highlighted in recent years, the development of new exergame systems for fitness has been increasing, but their use in real fitness programs is still limited. The lack of user compliance and the poor holistic consideration of the system’s user interface and device for senior users are the main reasons. 16 The two interaction styles have distinct usability characteristics because the addition of a device means that users must consider not only the system’s user interface but also the device itself. Therefore, when usability studies of exergames are conducted, the interaction style should be considered. However, although all of the previous studies proposed conceptual models of usability, they focused on only analyzing and comparing interface usability factors, and there was no further analysis or verification of the overall structural equation model.

From the above-mentioned discussions, we developed a structural usability model for identifying the relationship between user interface design and the usability of an exergame system that includes a software system and a separate hardware device. The model described in section “The structural equation modeling–based approach” allows a holistic consideration of the system’s user interface and device in terms of the needs of senior users during senior fitness exergame design.

As indicated in many exergame studies, no structural usability model has been constructed for investigating the usability of exergames, especially the relationship between their user interface design and their usability.17,18 In our empirical study, the main advantage of the proposed model was validated, and the correlation between the interface design and the usability of the exergame system was evaluated. The results showed that an improved interface enabled users to operate the system better and enhanced the usability of both the device and the system itself. Therefore, it can be claimed that the proposed model is a structural usability method because the results of the case study showed that this method successfully reveals significant usability problems based on the relationship between the user interface design and the usability of the exergame. This was achieved by merely focusing on some, not all, of the usability problems. The limited resources of exergame designers should primarily be allocated to critical improvements in usability.

This study has some limitations. First, the proposed model assumes that the usability analyst can conduct structural equation modeling (SEM)-based criticality metric analysis. However, the proposed model could also be utilized to conduct a structural analysis of an exergame by considering only the mean scores of the test participants, as in Table 5. Second, SEM is a model testing approach rather than a prediction method. It only determines whether a model is plausible under a statistical significance level with available data on hand. In this study, we tested the model shown in Figure 1 because it best described our intended hierarchical structure. However, potential users of the proposed model could observe and test other variables. This would require model testing through SEM. In addition, further analysis and validation must be performed to identify how the proposed model can be used by different stakeholders to determine the extent to which the usability of an exergame system can be attributed to the user interface design. Future studies are planned to explore these factors.

Conclusion

Exergaming, in which users physically interact with the system, offers users a new kind of usability experience, and the interaction style also has an effect. Some exergame systems require the user to interact only with the system’s interface (e.g. Xbox Kinect), while others require the user to use a device to interact with the system (e.g. Nintendo Wii, Wii Balance Board). The use of an additional device means that users must consider not only the system’s interface but also the device itself. Although there have been studies of the usability of exergame systems, few have considered both the system’s interface and its usability. Those that have done so merely used open-ended questionnaires to identify the system’s underlying usability problems, and the relationship between the interface design and the usability of the system has not been discussed. Therefore, based on our research, we propose a structural usability model to identify the relationship between user interface design and the usability of an exergame system that includes a software system and a separate hardware device. The model consists of two dimensions: the interface design, which is evaluated using Nielsen’s heuristic evaluation method, and the usability, as defined by ISO 9241-11. To test the model, 101 seniors from the community were invited to use the iFit exergame system for a physical examination. During the experiment, the seniors were asked to complete four physical examinations, and their performance and subjective satisfaction were used as usability indexes. After completing the tasks, the seniors were asked to evaluate the system’s interface. The results showed a strong correlation between the design of the interface and the usability of the exergame system. With an improved interface, users were able to interact with the system better, and the usability of the whole system, including both the device and the system itself, was enhanced. These results suggest that the proposed usability model could be used to evaluate other exergame systems.

There are limitations to this study. One purpose of exergames, like that of any other videogame, is to trigger users’ positive emotions and enhance their experiences. However, in our study, we focused primarily on the design of the system’s interface and the system’s overall usability. Because the relationship between the design of an interface and the usability of an exergame system has been confirmed, our next step could be to incorporate user experience to build a more comprehensive structural model. Other future studies may include modifying the current structural usability model and testing it with various devices, including virtual and augmented reality systems.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the Ministry of Science and Technology of Taiwan, ROC under Contracts MOST 107-2221-E-182-055-, MOST 106-2628-H-003-009-MY3 and MOST 108-2622-8-003-001-TM1, and by the Chang Gung Medical Foundation (grant nos. CMRPD3E0373, CMRPG5E0083, CMRPD2F0211, CMRPG5F0143, and CMRPD2F0213). The funders had no role in the study design, data collection and analysis, decision to publish, or preparation of the manuscript.