Abstract

Speech recognition software can increase the frequency of errors in radiology reports, which may affect patient care. We retrieved 213,977 speech recognition software–generated reports from 147 different radiologists and proofread them for errors. Errors were classified as “material” if they were believed to alter interpretation of the report. “Immaterial” errors were subclassified as intrusion/omission or spelling errors. The proportion of errors and error type were compared among individual radiologists, imaging subspecialty, and time periods. In all, 20,759 reports (9.7%) contained errors, of which 3992 (1.9%) were material errors. Among immaterial errors, spelling errors were more common than intrusion/omission errors (

Introduction

Although there are many ways for radiologists to provide the results of an imaging examination to referring clinicians, the signed written report remains the primary and often sole means of communication.1,2 In many radiology practices, transcribed dictation by a professional transcriptionist has been replaced by real-time speech recognition and self-editing of reports. In general, speech recognition software (SRS) greatly improves turnaround times for reports compared with remote transcription and allows for more immediate control over report editing than traditional paper markup or asynchronous transcription modification.3–6 However, this often leaves the radiologist as the sole author and editor of the final text that is placed in the radiology information system or electronic medical record.

Most currently available commercial SRS products rely on nearest match for each word transcribed and do not check for logical relevance or perform natural language processing for real-time recognition and transcription of dictation. Self-editing can be prone to typographic or other errors that may not be noticed by the radiologist. Consequently, persistent undetected report errors that could impede understanding or lead to erroneous conclusions are much more common than seen with expert transcription by a trained professional with excellent typing skills and an understanding of medical language and context. In addition to errors in radiologic diagnosis, simple errors in syntax and grammar that occur during report transcription can have dire consequences for which the signing radiologist is liable. 7 Communication errors are extremely common and one of the top reasons radiologists are sued for medical malpractice. 8 Self-editing errors by a radiologist represent a mitigable threat to appropriate patient care.

The primary reason for the emergence of errors in syntax and semantics in radiology reports is the relatively recent application of SRS and consequent assimilation of transcription duties by the radiologist.4,6,9 Such errors fall into several categories: (1) omission of appropriate words/phrases, which includes deletions and missing words; (2) intrusion of incorrect words/phrases, which includes interjections, incorrect words, wrong word substitutions, insertions, and right–left substitutions; and (3) spelling errors, which include word truncation and are most likely due to manual editing of text by a radiologist, through typing errors or inaccurate selection of text to be removed or edited. Additional errors that do not necessarily fit into the above categories include incorrect dates, image/series numbering errors, measurement scale errors (e.g., cm vs. mm), template errors, and punctuation errors. 10 Different combinations of these error types may lead to nonsense phrases with variable effects on interpretation and comprehension of the final report. Some omissions or intrusions (particularly the word “no” in various contexts) and word substitutions (such as “new” rather than “no”) can potentially affect patient care. 1

At our institution, a portion of all the signed radiology reports generated with SRS are regularly audited by transcriptionists and assessed for syntactic and semantic errors. Any potential errors comprising apparently illogical or inappropriate words/phrases, misspellings, or other errors discovered by the transcriptionist trigger a notification of the staff radiologist with an opportunity to correct the report and notify the referring service. We reviewed the data from these audits to investigate any possible patterns that could help us improve our report quality in the future. Specifically, we investigated four hypotheses, on the basis of our clinical experience: (1) error rate and type vary by radiologist; (2) error rate and type vary by type of imaging examination—we expect more complicated and longer reports, such as cross-sectional (CS) imaging or procedural reports, to contain more errors than shorter plain radiography (CR) reports; (3) the implementation of a quality control program with regular feedback should decrease errors over time; and (4) a Dictaphone hardware upgrade should decrease error rates.

Methods

Our institutional review board approved the study protocol. Informed consent was waived because of the retrospective nature of the study and deidentification of the patients and radiologists involved in the analysis. As part of our department’s radiology report quality assurance, an in-house transcriptionist reads every single report dictated using an SRS dictation system (PowerScribe; Nuance Communications, Inc) 2 days per month for every staff radiologist and evaluates these reports for potential errors. If the percentage of reports containing at least one error exceeds 3 percent on either day for a particular radiologist, then all of their reports are similarly scrutinized every subsequent day until their error rate drops below 3 percent. All radiologists with error rates below 3 percent continue to have all their reports audited, on average, 2 days per month. A total of 13 trained medical transcriptionists with experience ranging from 1 to 23 years participated.
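The escalation rule described above can be sketched in a few lines. This is an illustrative sketch only, not the department's actual tooling: the function names and the data layout are hypothetical, and the handling of a rate of exactly 3 percent is an assumption, because the text specifies only "exceeds" and "drops below."

```python
THRESHOLD_PERCENT = 3.0  # audit threshold stated in the Methods


def error_percentage(reports_with_error: int, reports_read: int) -> float:
    """Percentage of audited reports that contain at least one error."""
    if reports_read == 0:
        return 0.0
    return 100.0 * reports_with_error / reports_read


def needs_daily_audit(audited_days: list[tuple[int, int]]) -> bool:
    """Return True if any audited day exceeds the threshold.

    Each entry is (reports_with_error, reports_read) for one audited day.
    Assumption: a rate of exactly 3 percent does not trigger escalation.
    """
    return any(
        error_percentage(errors, total) > THRESHOLD_PERCENT
        for errors, total in audited_days
    )


# Hypothetical radiologists, each with two audited days in a month.
routine = needs_daily_audit([(1, 50), (0, 40)])    # 2.0% and 0.0%
escalated = needs_daily_audit([(2, 50), (0, 40)])  # 4.0% and 0.0%
```

Under these assumptions, the first radiologist stays on the routine schedule of 2 audited days per month, and the second is audited daily until the rate falls below the threshold.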

Errors are categorized as “material” or “immaterial.”

In addition to type of error and radiology staff member, the date of the report and imaging subspecialty of the examination (ISE) were captured. Imaging methods included computed tomography (CT), magnetic resonance imaging (MRI), plain radiography (CR), nuclear medicine (NM), neuroradiology (NR), and ultrasonography (US). Specific ISEs recorded were “CT Body” (computed tomography of the chest, abdomen, pelvis, or extremities), “CT Neuro” (computed tomography of the head, neck, or spine), “CR,” “MR Body” (magnetic resonance imaging of the chest, abdomen, pelvis, or extremities), “MR Neuro” (magnetic resonance imaging of the head, neck, or spine), “NM,” “NR” (NR procedures such as lumbar puncture and myelography), “US,” “V&I” (vascular or interventional procedures), and “OS” (reinterpretation of any radiology study from another/outside institution of any body part or modality). For analysis purposes, several groups of ISEs were created, including a cross-sectional (CS) imaging group (CT Body, CT Neuro, MR Body, MR Neuro, and US), a procedural group (V&I and NR), and a diagnostic group (CT Body, CT Neuro, CR, MR Body, MR Neuro, US, and NM).

We retrospectively retrieved all reports generated by SRS and signed by 147 different radiologists from 3 January 2011 through 16 April 2014. Mammography reports were excluded because only a small fraction of these examinations are interpreted and reported using SRS at our institution. Similarly, many of our CR examinations and procedures are transcribed without SRS, either by immediate direct transcription in the room, or asynchronously through a digital dictation and remote transcription system. The main reason for the use of direct transcription over SRS with CR examinations is that direct transcription generates a finalized report faster, and CR results are often emergently required. Therefore, the number of CR reports completed with SRS is far less than the total number of these types of examinations reported at our institution. Radiologists were grouped into four categories on the basis of their total error percentage quartiles over the entire time period: group 1 (<5.5% total errors), group 2 (5.5%–7.9% total errors), group 3 (8.0%–10.5% total errors), and group 4 (>10.5% total errors).
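The grouping by total error percentage can be expressed as a simple lookup. The sketch below is illustrative; the function name is hypothetical, and it assumes that each radiologist's percentage is rounded to one decimal place before grouping, which is how the published cut points (for example, 7.9% versus 8.0%) appear to be defined.

```python
def radiologist_group(total_error_percent: float) -> int:
    """Map a radiologist's total error percentage to group 1 through 4.

    Cut points are taken from the text; rounding to one decimal place
    before comparison is an assumption of this sketch.
    """
    p = round(total_error_percent, 1)
    if p < 5.5:
        return 1
    if p <= 7.9:
        return 2
    if p <= 10.5:
        return 3
    return 4


# The extremes and mean reported in the Results, used only as sample inputs.
groups = [radiologist_group(p) for p in (0.8, 5.5, 8.7, 35.1)]
```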

Reports were divided into four time periods of exactly 300 days to analyze trends over time: 3 January to 29 October 2011, 30 October 2011 to 24 August 2012, 25 August 2012 to 20 June 2013, and 21 June 2013 to 16 April 2014. A total of 36 radiologists were excluded from the time analysis only, because they had an insufficient number of reports (<100) reviewed by transcription as part of the quality control project in any of the time periods. This exclusion was intended to mitigate confounding of time-based trends by individual radiologists.

Our department updated the dictation microphones from the PowerMic I to PowerMic II Dictaphone (Nuance Communications, Inc) during August and September 2013. To test for differences in error rate as a result of this hardware modification, we also compared reports created in the 6 months immediately before (1 February through 31 July 2013) and immediately after (1 October 2013 through 31 March 2014) the upgrade.

Descriptive categorical data are presented using counts and percentages. Contingency (

Results

Errors by radiologist

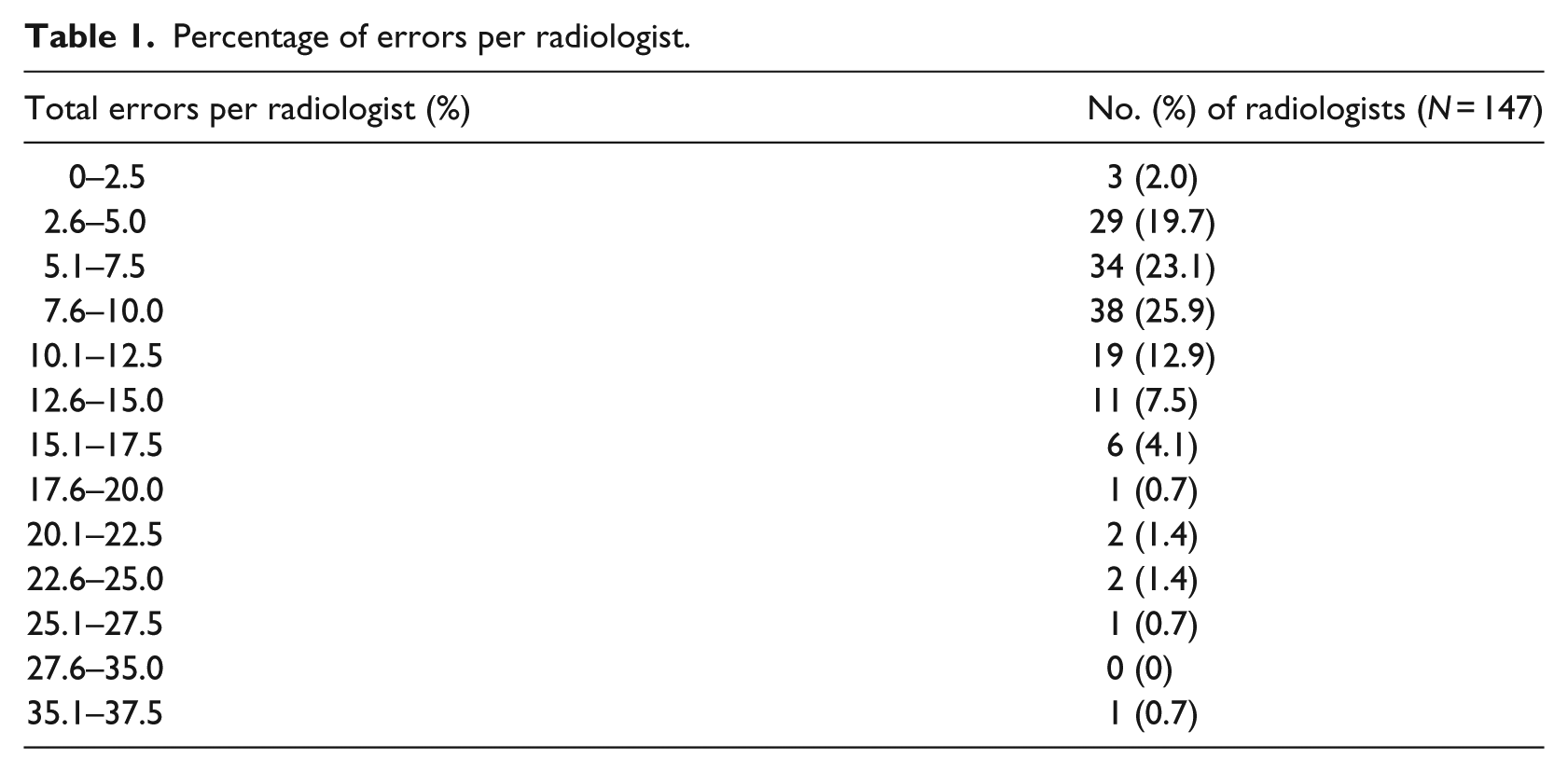

A total of 213,977 reports were retrieved. Among these, 20,759 (9.7%) had errors, including 3992 (1.9%) with material errors. The mean (standard deviation [SD]) total error percentage by radiologist was 8.7 percent (5.0%; range, 0.8%–35.1%), and the percentage differed significantly among radiologists (

Percentage of errors per radiologist.

Errors by exam type

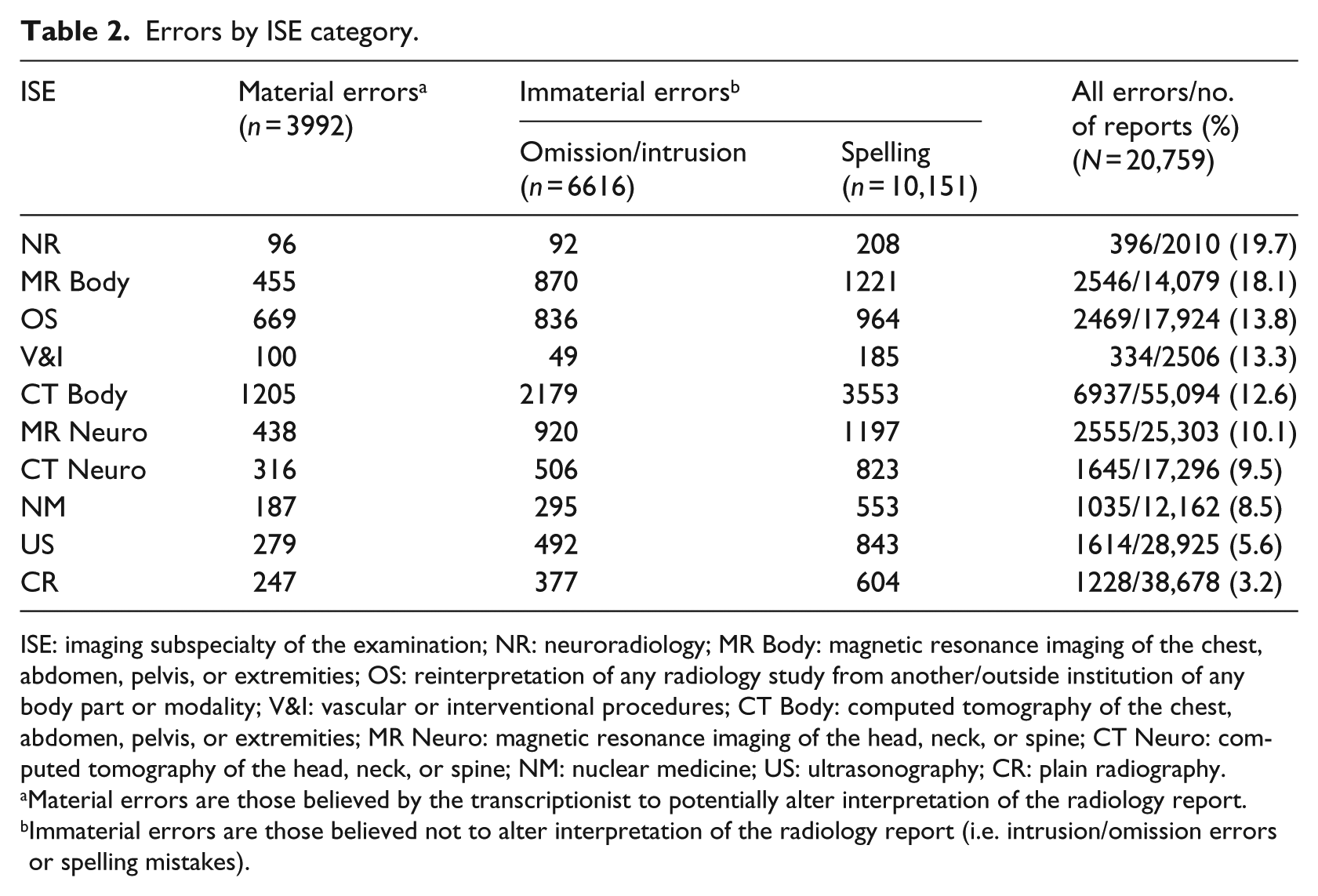

When the data were separated by ISE category, the mean (SD) overall error percentage per category was 11.4 percent (5.2%), material error percentage was 20.8 percent (4.6%) of total errors, and spelling error percentage was 63.1 percent (7.1%) of immaterial errors. These error rates also varied significantly by ISE (

Errors by ISE category.

ISE: imaging subspecialty of the examination; NR: neuroradiology; MR Body: magnetic resonance imaging of the chest, abdomen, pelvis, or extremities; OS: reinterpretation of any radiology study from another/outside institution of any body part or modality; V&I: vascular or interventional procedures; CT Body: computed tomography of the chest, abdomen, pelvis, or extremities; MR Neuro: magnetic resonance imaging of the head, neck, or spine; CT Neuro: computed tomography of the head, neck, or spine; NM: nuclear medicine; US: ultrasonography; CR: plain radiography.

Material errors are those believed by the transcriptionist to potentially alter interpretation of the radiology report.

Immaterial errors are those believed not to alter interpretation of the radiology report (i.e. intrusion/omission errors or spelling mistakes).

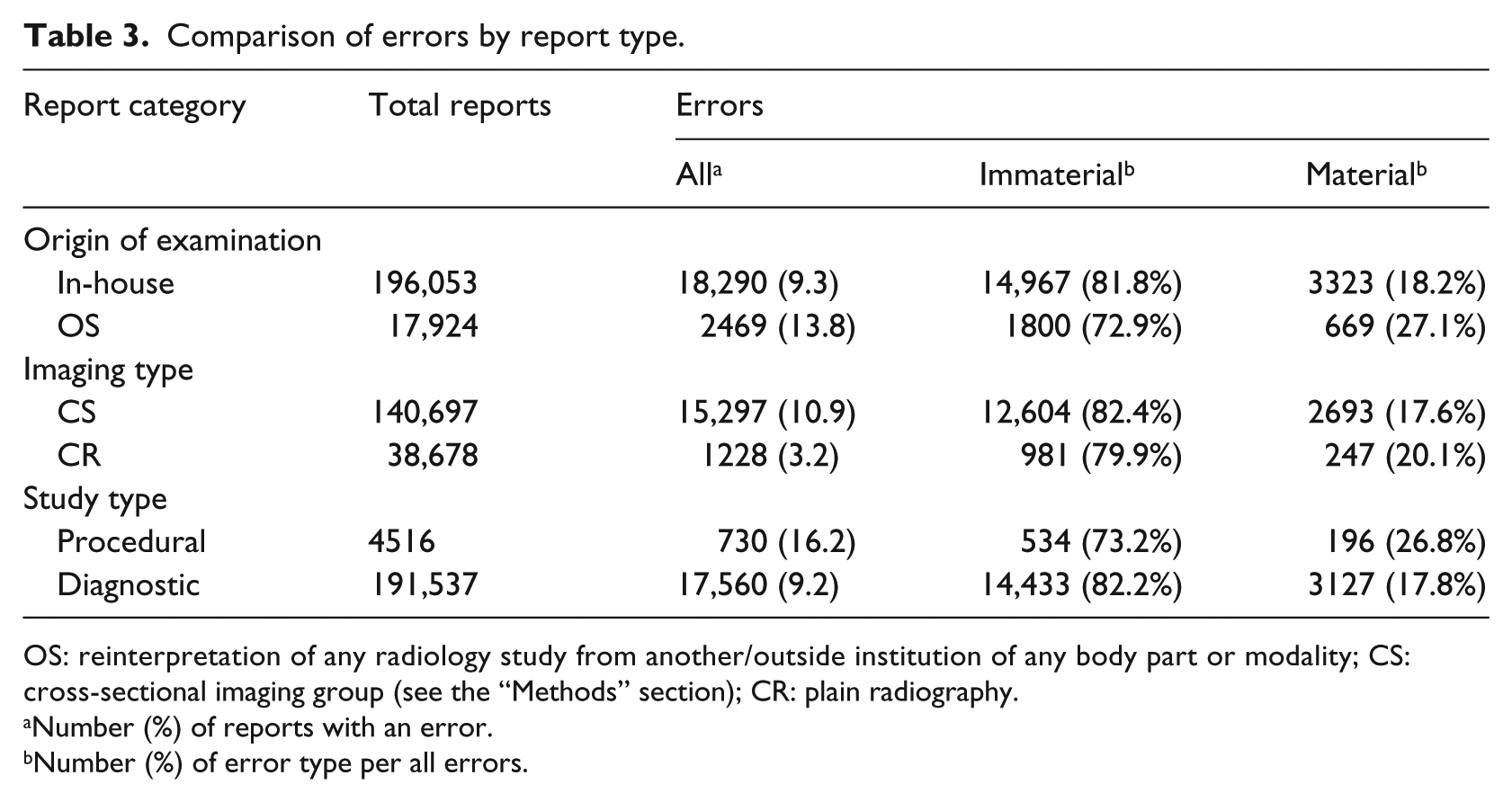

Percentages of errors for different types of reports are shown in Table 3. Compared with in-house dictations, reports dictated on outside examinations (OS category) were significantly more likely to result in an error (OR, 1.55; 95% CI, 1.48–1.62) or material error (OR, 1.67; 95% CI, 1.52–1.84;

Comparison of errors by report type.

OS: reinterpretation of any radiology study from another/outside institution of any body part or modality; CS: cross-sectional imaging group (see the “Methods” section); CR: plain radiography.

Number (%) of reports with an error.

Number (%) of error type per all errors.

Error type trends

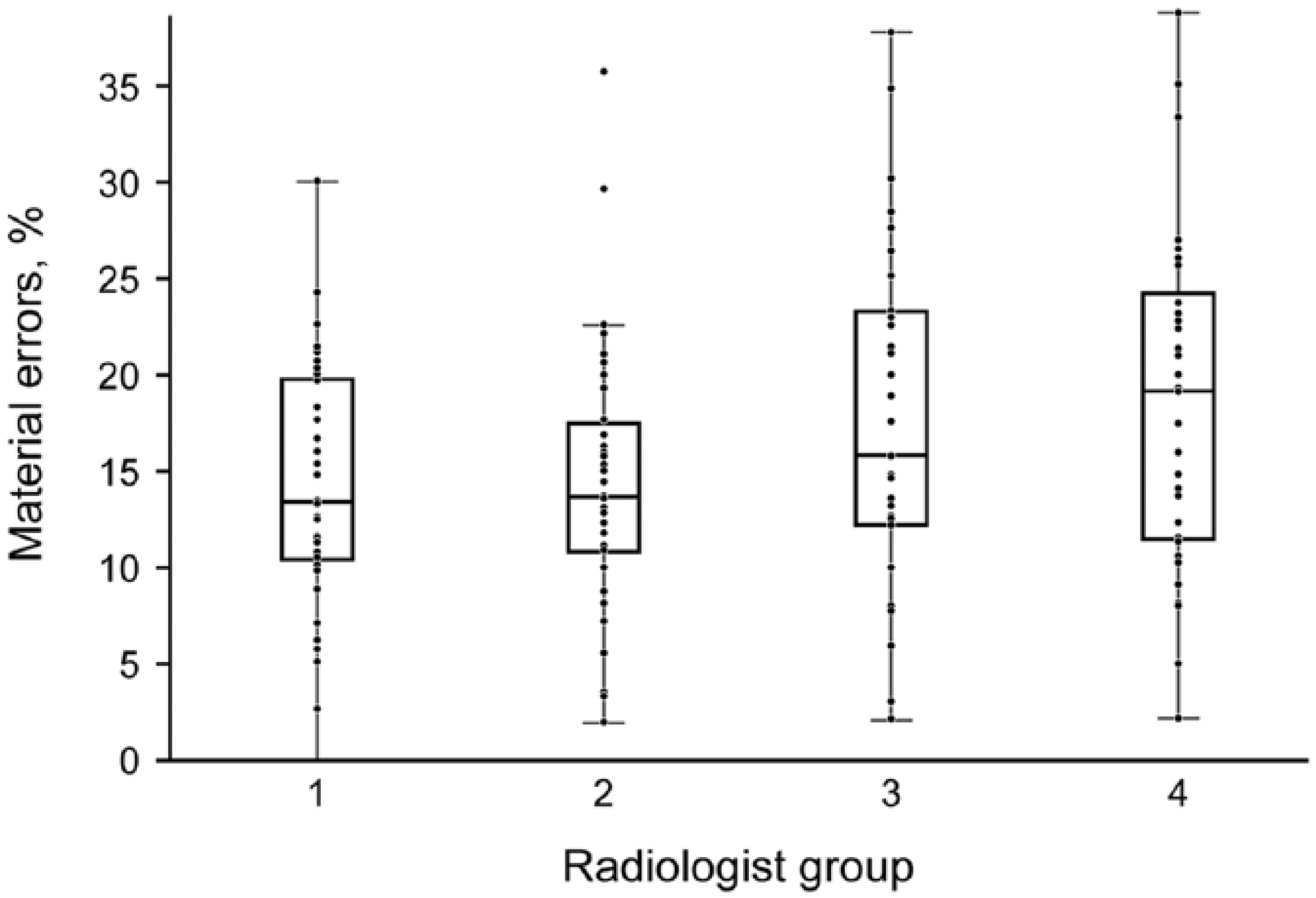

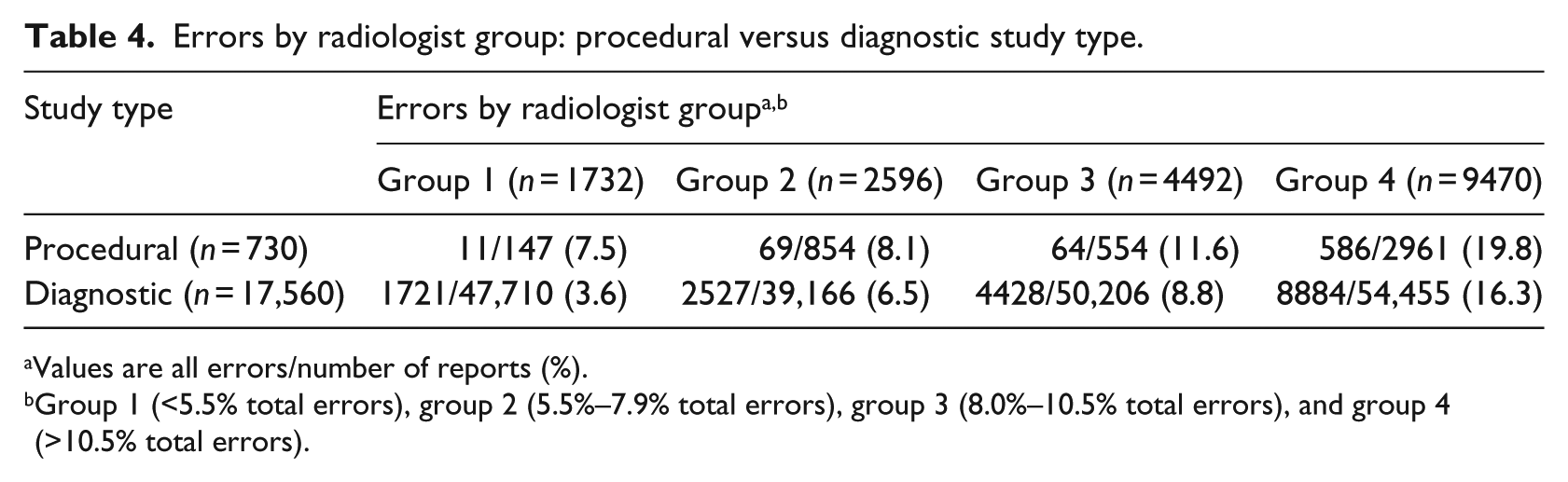

The four groups representing quartiles of radiologists comprised 38 radiologists in group 1 (<5.5% total errors), 36 in group 2 (5.5%–7.9% total errors), 37 in group 3 (8.0%–10.5% total errors), and 36 in group 4 (>10.5% total errors). With the exception of comparisons between adjacent groups 4 and 3 (

Percentage of material errors by radiologist error quartile (

Since all types of errors were more common in the procedural group of reports, and the distribution of radiologists is known to differ between this group and the diagnostic imaging group, we hypothesized that the different radiologist makeup of each group may explain this disparity. Testing for associations between radiologist group and ISE group (

Errors by radiologist group: procedural versus diagnostic study type.

Values are all errors/number of reports (%).

Group 1 (<5.5% total errors), group 2 (5.5%–7.9% total errors), group 3 (8.0%–10.5% total errors), and group 4 (>10.5% total errors).

Error rate over time

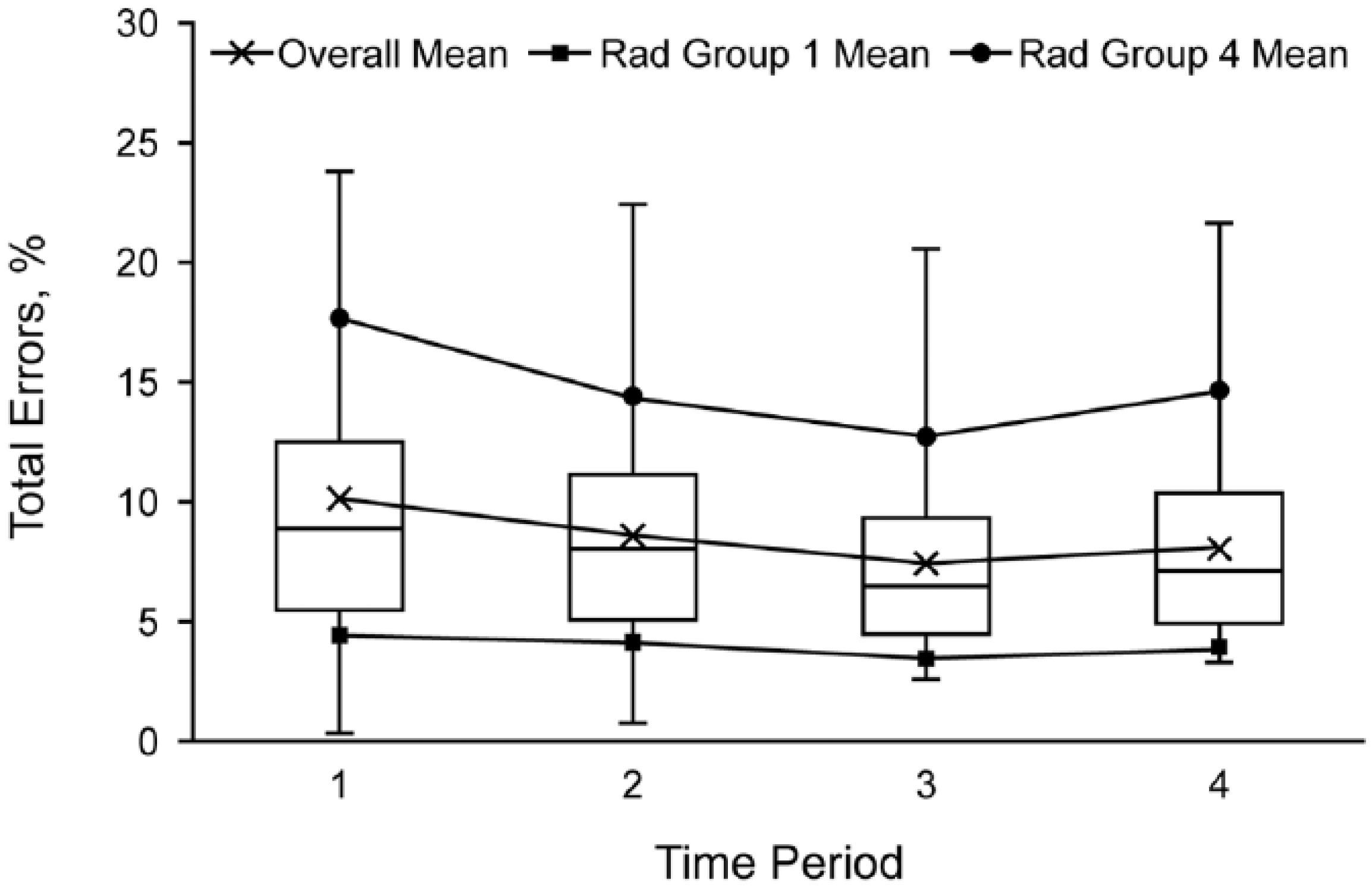

The overall error rate decreased significantly over time when comparing either of the first 2 time periods with any later time period (

Box plot of total errors by time period (

Discussion

SRS-related error rates reported in the literature vary between 4.8 and 38 percent among finalized radiology reports.1,4,10,11 Higher error rates, in general, have been reported in studies that examined only CS modalities. For example, Pezzullo et al. 4 reported a total error rate of 35 percent using SRS to interpret spine MRI, and Quint et al. 10 reported 22 percent total errors in CT of the head, neck, chest, abdomen, and pelvis. However, only a 6 percent error rate was reported by Chang et al. 1 among radiography (“CR group”) reports, compared with a 38 percent error rate in their “non-CR” group. A study by McGurk et al. 11 that excluded MRI reports found a 4.8 percent error rate. Our results examining nearly a quarter-million reports confirm this trend, with a lower error rate of 3.2 percent for CR, compared with 11.0 percent for CS (OR, 3.72). Chang et al. 1 calculated a relative risk of error in their “non-CR” group of 3.5 compared with CR. Our reported OR converts to a relative risk of 3.42 (95% CI, 3.23–3.62), showing excellent agreement with Chang et al. 1 despite large differences in error rates for each modality group between the two studies. This is good evidence for a real increase in the probability of SRS-related error in CS imaging reports versus CR reports (3.4- to 3.5-fold increased risk). This effect may persist even when macros are used. 5
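The conversion from OR to relative risk can be illustrated with a short calculation. The text does not state which conversion was used; the sketch below assumes the commonly used formula RR = OR / (1 − p0 + p0 × OR), where p0 is the risk in the reference group, and takes the 3.2 percent CR error rate as p0. The function and variable names are illustrative.

```python
def odds_ratio_to_relative_risk(odds_ratio: float, baseline_risk: float) -> float:
    """Convert an odds ratio to a relative risk.

    baseline_risk is the outcome probability in the reference group
    (here, the proportion of CR reports containing an error).
    """
    return odds_ratio / (1.0 - baseline_risk + baseline_risk * odds_ratio)


cr_error_rate = 0.032  # CR reports with an error, from the text
cs_error_rate = 0.110  # CS reports with an error, from the text
reported_or = 3.72     # OR for CS versus CR, from the text

converted_rr = odds_ratio_to_relative_risk(reported_or, cr_error_rate)

# For comparison: the ratio of the two rounded error rates.
direct_rr = cs_error_rate / cr_error_rate
```

Under these assumptions, the converted value rounds to the 3.42 reported above, and the direct ratio of the two rounded percentages lands in the same 3.4 to 3.5 range.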

There are many reasons for differences in error rate between various studies in the literature, not the least of which is heterogeneity of particular software vendors, versions, and equipment. 12 Our errors may be at the low end of the spectrum because of a strict quality control policy in place. There is evidence for this in the time-dependent decrease in error rate since the transcriptionist-auditing program began essentially at the beginning of our study period (Figure 2), with the notable exception that we do not have data predating the quality control program to compare. Of interest, the decrease did not continue throughout, suggesting that there may be a lower limit to the error rate achievable at a large institution. The significant variation in error rate among radiologists may play a role. The group 4 radiologists (with the highest and most variable error rates) actually had increased total error rates in the last time period (Figure 2), which suggests that routine feedback regarding errors does not affect every radiologist in the same way, at least over time. Another possibility may be degradation of speech recognition for some radiologist voice models over time, or perhaps aging hardware/microphones in some areas frequented by these radiologists. Radiologist-dependent variability in error rate has been documented by others, as well, 1 ranging from 0 to 100 percent. 10

Despite the increased probability of error in CS compared with CR studies, a greater proportion of material errors were present among the CR reports. Similarly, Rana et al. 5 found a greater incidence of “major errors” among their CR reports compared with CS, despite greater total errors in the CS cohort. Although CS reports may theoretically be more likely to contain an error because they contain more words and phrases than CR reports, 5 this does not explain the opposite discrepancy in material errors. In fact, Chang et al. 1 found the opposite, with “very significant” errors found in 8 percent of reports in the “non-CR” group and 0.5 percent in the “CR group.” It is possible that the lower ratio of interpretation time to report dictation/editing time in CR examinations, compared with CS examinations, results in lower awareness of significant intrusions, omissions, or other errors in CR reports. Because of the large number of reports in our data set, we did not quantify length of report, although we would expect that it might correlate with total error frequency. 5

Despite this notable exception in CR reports, we generally found a greater fraction of material errors to be associated with greater total error rates. This was specifically the case among OS reports, procedural reports, different radiologist groups, individual radiologists, and ISEs. We also found an increased error rate in procedural reports (e.g., vascular interventional, lumbar puncture) compared with diagnostic reports (OR, 1.91) and in OS compared with in-house examinations (OR, 1.55). We could not find other reports of similar findings in the literature. Although we did not specifically track the use of templates, reports in the CS ISE with the fewest errors, US, were frequently created using templates.

Surprisingly, spelling errors were the most common type of immaterial error and usually did not significantly differ between group comparisons. SRS systems do not make spelling mistakes. This suggests that many of our radiologists type their reports or make edits when proofreading their reports rather than using the SRS process. The spellcheck function in our software is not automatic and must be manually triggered by the user before signing the report, no doubt further contributing to spelling errors in a busy environment. This strongly contradicts earlier work demonstrating decreased spelling errors with SRS. 13 Ironically, then, a consequence of using a technique that should not result in spelling errors has been a rather large increase in spelling errors in an environment in which spellcheck is not mandatory or visible in real time as highlighted or otherwise marked text. The human interaction component to technology cannot be overlooked. Unfortunately, we do not know the proportion of spelling errors that contributed to material errors, and therefore the clinical consequences are unknown. Contributors to this phenomenon, as well as other errors, may include cursory report editing due to pressure for quick turnaround time or other failures in the proofreading process, as well as an underestimation of actual error frequency. 10 Busy inpatient working environments and nonnative English-speaking status have also been linked with increased error rates.11,14 We did not look for associations between error rate and radiologist experience level or presence of trainees, although others have found no such relationships.5,10,11

Our study has several limitations. Report errors were not automatically parsed by a computer but were elucidated via human proofreading, with its inherent fallibility and subjectivity. This may result in underestimation of the number of errors, as well as misclassification of types of errors. This limitation is likely to be small, however, given that experienced professional medical transcriptionists were used. Another limitation is the absence of subclassification of material errors. Whereas spelling errors are the most common immaterial error, it is unclear whether this is also true among the material errors, which are more likely to obfuscate report meaning to the extent of complicating or altering patient management. Although such judgments were made by transcriptionists, it was not feasible for a quarter-million reports to be re-reviewed by radiologists or other physicians. A related limitation is the difficulty in quantifying and comparing “very significant,” “major,” and “material” types of errors. Our use of “material” error, defined as any error that could potentially impede understanding of any part of the report, may then include errors that would not necessarily be categorized as “major” or “very significant” in other publications.

Other potential biases are related to the retrospective nature of the study and data collection. The most significant may be that reports from radiologists with error rates greater than 3 percent were reviewed more frequently than those with lower error rates, possibly skewing the data. However, since only 8 of 147 radiologists had an average total error rate less than 3 percent, this skew is likely mild. We also note that most CR reports at our institution are dictated directly to a transcriptionist, without the use of SRS, which may bias our results for studies that are performed off-hours or in areas where CR is not routinely reported.

SRS has clear advantages, including ease of integration with the radiology information system and picture archiving and communications systems,4 decreased report turnaround time3–5,9,13,15,16 as dictation and transcription processes are combined,6 and shorter reports.13 Claims of cost-effectiveness13,17 can be dubious depending on which costs are included in the analysis and how

Future research

Our results suggest several potential areas of focus for future research. Given the variability in error rates among different radiologists and different types of imaging examinations, departments with limited resources may wish to take a more targeted approach to the problem. Selective auditing of CS and procedural reports, for example, could potentially have a larger impact on overall error rates. Further study of differences in error frequency between reports generated using templates and macros, compared with traditional SRS, is certainly warranted. In addition to our US data results, other studies have found the regular use of macros or templates to be potentially helpful for reducing report errors.11,25 We are currently expanding our use of templates into other divisions, as are many other departments throughout the world, to determine whether error frequency can be further decreased.

Given the high incidence of spelling errors in our data, we have decided to permanently enable the spellcheck feature of our SRS, so that it is no longer optional. It will be interesting to note any future changes in error frequency and report turnaround time. As suggested earlier, further research regarding the “send-to-editor” functionality of some SRSs is greatly needed. Although, in theory, the “send-to-editor” function may combine the advantages of SRS and transcription, there are also potential disadvantages, including some potential loss of efficiency and increased turnaround time. 22 As SRS technology progresses, new implementations of hardware and software must be tested in real clinical environments before being widely adopted, particularly given the associated cost. For example, our Dictaphone hardware upgrade was expected to improve speech recognition, presumably based on vendor testing, but in our hands it had no effect on report error rates.

Conclusion

SRS-related errors are more common in CS reports (compared with CR), OS reports (compared with in-house examinations), and procedural studies (compared with diagnostic). When the total error rate increases, the fraction of material errors usually increases as well, except in the case of CR, in which material errors were more common than in CS reports. Error rates are highly variable among radiologists. Spelling errors are the most common type of immaterial error when automatic spelling correction is not mandatory, which suggests that editing radiologists often type rather than use SRS for report editing. A hardware upgrade from PowerMic I to PowerMic II Dictaphones had no effect on error rate. A quality control program with regular feedback can decrease errors over time, but there may be a limit.

For departments that use SRS, we recommend the following actions: (1) regularly audit reports, with feedback, for quality control; (2) focus efforts and resources on reports that are longer and more technical—in radiology departments, these include CS and procedural reports; (3) use automation whenever possible, including templates, macros, and automatic mandatory spellcheck; (4) perform trials of all costly hardware and software upgrades in your environment under your conditions; and (5) regularly retest the system for efficacy after any substantial changes.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.