Abstract

In our prior work, we conducted a field trial of the mobile application DocCHIRP (

Introduction

Given the pace of medical practice, opportunities for face-to-face collaboration between health care providers (HCPs) are sporadic, and neither text paging nor email lends itself to effective collaboration at the point of care. 1 Physicians typically have two questions for every three patients encountered, yet quickly finding relevant articles to address focused questions is difficult, and in practice providers use medical references fewer than nine times per month. 2

The “information overload” problem is not specific to the field of medicine, and other disciplines have started to adopt an evolving form of digital collaboration called crowdsourcing. 3 Fueled by expanded access to the Internet and the availability of web-enabled smart devices, crowdsourcing has been used to engage online communities to accomplish tasks of varying complexity. Large organizations crowdsource the collective wisdom of employees and the public at large to solve internal operational issues, conduct market research, and advance their branding presence. 4 Corporations are also beginning to consider the frequency and quality of employee engagement with internal crowdsourcing systems as part of the formal job review and promotion process. 5 To a lesser degree, the medical community has begun to use both social media and crowdsourcing in practice, with doctors using Facebook and Twitter to engage their patients and promote their reputations. 6 Examples of crowdsourcing have begun to emerge in the public domain, with companies proposing to connect patients with providers using programs like HealthTap’s “Talk-to-Docs” or to invite HCPs to solve diagnostic dilemmas through sites like crowdmed.com. It remains unclear whether physicians would use crowdsourcing for peer-to-peer collaboration.

To study this question, we conducted a field trial of the mobile crowdsourcing application DocCHIRP (

Methods

Program design

DocCHIRP is a mobile and web-based application that allows HCPs to post questions to trusted colleagues in real time. Details regarding program development and design have been previously reported. 7 The mobile application was designed to run on both Apple and Android devices; trial participants downloaded the program from the Apple App Store or obtained the Android-compatible version directly from the DocCHIRP server. Network access was restricted to users holding verified server accounts, and providers were able to select and manage members of a single crowd, set notification preferences (email, texting, or both), and publicly display their areas of expertise. When faced with a clinical question, the consulting provider (hereafter referred to as the index provider) could send consult questions and carry on one-to-many conversations with colleagues in real time. Responses, which were collated according to response time, were associated with the initial consult question and viewable by all participants in that provider’s group.
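The consult workflow described above can be pictured with a small data model. The following Python sketch is illustrative only and is not the DocCHIRP implementation: the class names (`Consult`, `Response`), field names, and methods are all hypothetical, chosen simply to show a question tied to one index provider, responses restricted to that provider's crowd, collation by response time, and visibility limited to the group.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List, Set


@dataclass
class Response:
    """A single reply to a consult (hypothetical structure)."""
    responder: str
    text: str
    posted_at: datetime


@dataclass
class Consult:
    """A consult question posted by an index provider to one crowd (hypothetical)."""
    index_provider: str
    question: str
    posted_at: datetime
    crowd: Set[str]
    responses: List[Response] = field(default_factory=list)

    def add_response(self, response: Response) -> None:
        # Assumption: only members of the index provider's crowd may reply.
        if response.responder not in self.crowd:
            raise PermissionError(f"{response.responder} is not in this crowd")
        self.responses.append(response)

    def collated(self) -> List[Response]:
        # Order replies by elapsed time since the consult was posted.
        return sorted(self.responses, key=lambda r: r.posted_at - self.posted_at)

    def visible_to(self, provider: str) -> bool:
        # The thread is viewable by the index provider and the crowd members.
        return provider == self.index_provider or provider in self.crowd


# Usage with made-up provider names and times
t0 = datetime(2020, 1, 1, 9, 0)
consult = Consult("dr_a", "Example consult question?", t0, {"dr_b", "dr_c"})
consult.add_response(Response("dr_c", "Second reply", t0 + timedelta(minutes=4)))
consult.add_response(Response("dr_b", "First reply", t0 + timedelta(minutes=1)))
for reply in consult.collated():
    print(reply.responder, reply.posted_at - t0)
```

Keeping responses attached to the consult object, rather than in a shared feed, mirrors the one-to-many conversation described in the text, where every reply remains associated with the initial question.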

Study population

Both the DocCHIRP field trial and post-trial survey were approved by the University of Rochester Research Subjects Review Board (RSRB) and designated as minimal risk. Trial participants (

Data analysis

The 10-min survey was anonymous and conducted online (https://www.surveymonkey.com). Closed-ended questions, in which the respondent picked an answer from a given number of mutually exclusive options (see supplemental materials), were grouped by category. Questions included items regarding provider demographics, current use of mobile technologies in clinical practice, frequency and modes of provider-to-provider communication, and impressions of medical crowdsourcing. To understand factors affecting technology adoption, survey respondents were asked to self-identify as users or non-users by recalling the frequency of program engagement (never, occasionally, regularly). Responses were verified against the server transcripts.

Statistical analyses

We performed all analyses using Statistical Product and Service Solutions (SPSS) version 15.0 software (SPSS Inc., Chicago, IL, USA). We used standard summary statistics to describe overall demographics and survey sub-domains. Data were organized in two-by-two tables, and Fisher’s exact tests were performed to look for differences between users and non-users. Two-sided exact p values of less than 0.05 were considered statistically significant.
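For readers unfamiliar with the test, the sketch below shows how a two-sided Fisher's exact p value can be computed for a two-by-two table. It is a minimal illustration in plain Python, not the SPSS procedure used in the study; the function name `fisher_exact_two_sided` and the example counts are hypothetical and are not taken from the survey data. It uses the common convention of summing the probabilities of all tables that are no more likely than the observed one, with the row and column totals held fixed.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact p value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    total = row1 + row2
    denom = comb(total, col1)

    def prob(x: int) -> float:
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_observed = prob(a)
    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    # Sum every table that is no more probable than the observed table.
    # The small relative tolerance guards against floating-point ties.
    p_value = sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= p_observed * (1 + 1e-7)
    )
    return min(1.0, p_value)


# Hypothetical counts, NOT data from the study:
# rows = users / non-users, columns = agreed / did not agree.
p = fisher_exact_two_sided(8, 2, 1, 5)
print(f"two-sided exact p = {p:.4f}; significant at 0.05: {p < 0.05}")
```

An exact test of this kind is a reasonable choice for small samples, where the large-sample approximation behind the chi-square test may be unreliable.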

Results

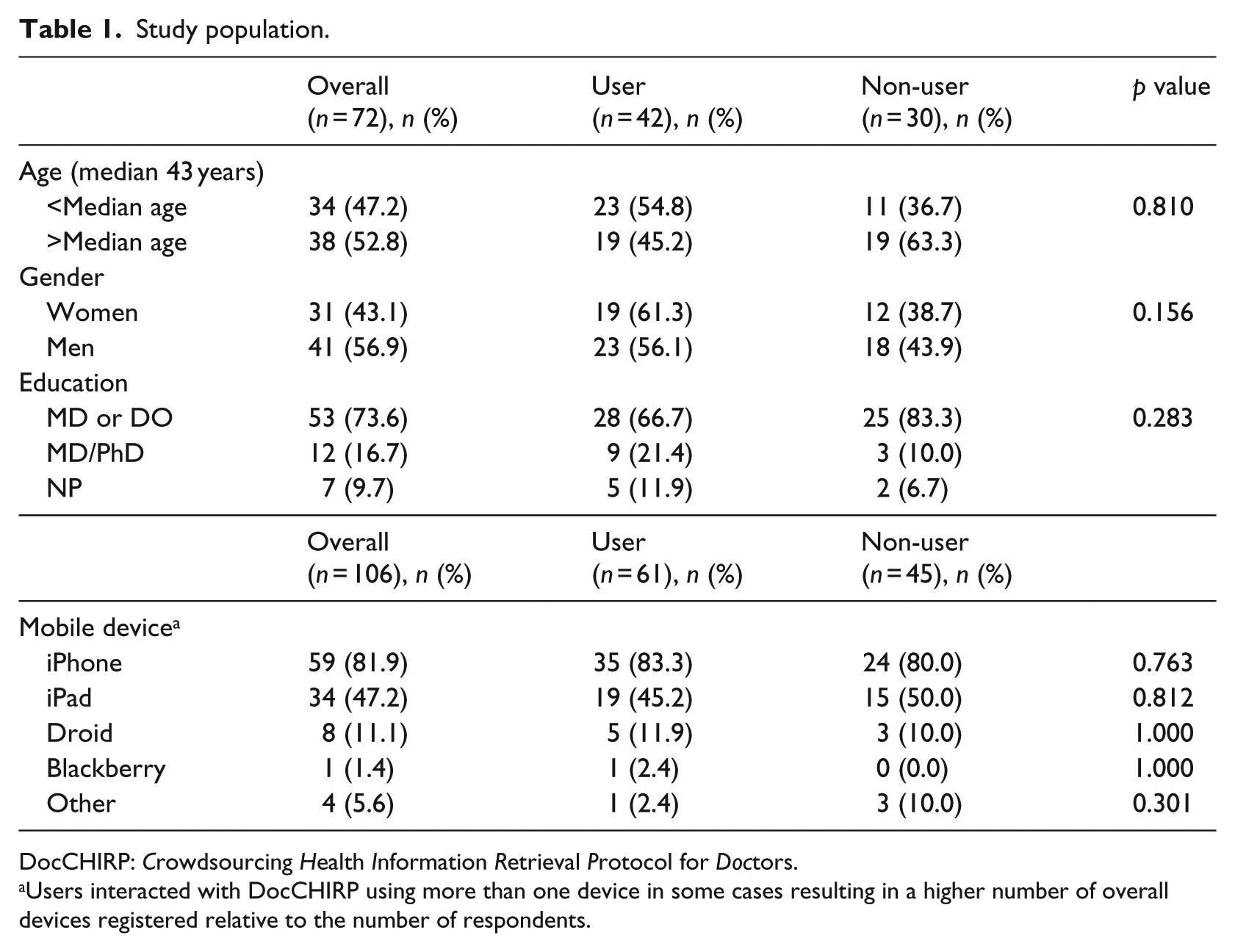

Participant demographics

Of the 85 providers who created DocCHIRP accounts, 72 responded to the survey, an 85 percent response rate. DocCHIRP registrants were divided into “user” and “non-user” groups by self-report of whether they used DocCHIRP

Table 1. Study population.

DocCHIRP:

In some cases, users interacted with DocCHIRP on more than one device, so the number of registered devices exceeds the number of respondents.

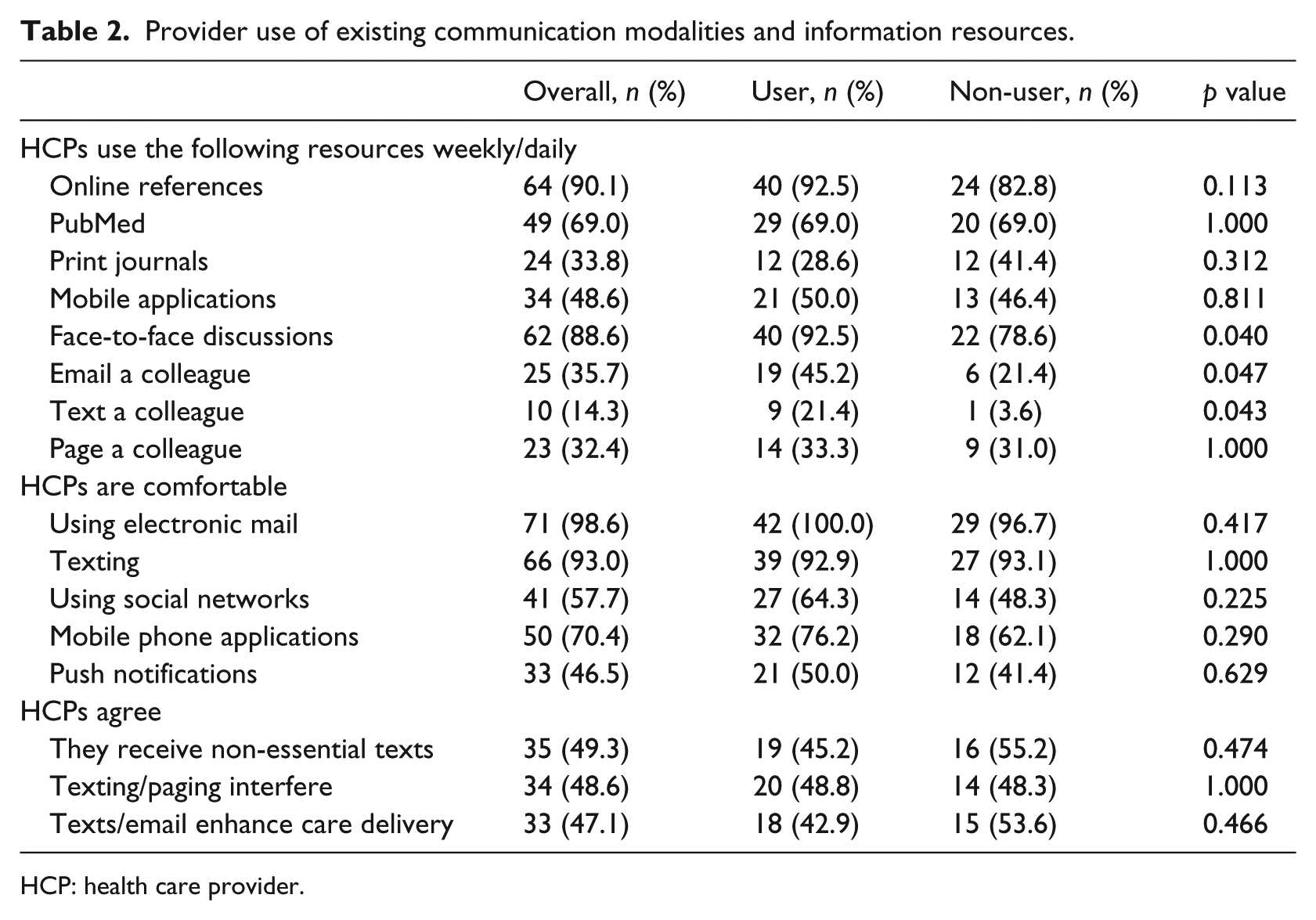

Existing information seeking and communication behaviors

When asked how difficult it is to find good evidence or actionable information to solve clinical issues when needed, most providers indicated it was either “very easy” (17%) or “easy” (62%), while 22% indicated having difficulty. To understand where clinicians turn when they need to close knowledge gaps and solve clinical problems, we assessed the range of reference materials providers reported using at the point of care on a weekly or daily basis (Table 2). Clinicians relied heavily on online resources like UpToDate or eMedicine (90.1%) and face-to-face advice from colleagues (88.6%). We found no differences between users and non-users in terms of their use of mobile applications (e.g. Epocrates, 5-Minute Consult), the published literature, or paging a colleague. However, compared to non-users, DocCHIRP users were more likely to engage their colleagues through face-to-face discussions (92.5% vs 78.6%;

Table 2. Provider use of existing communication modalities and information resources.

HCP: health care provider.

In terms of their current use of online and mobile communication tools, trial participants indicated being comfortable using both electronic mail (98.6%) and texting (93%), while fewer providers (57.7%) used social networking tools (Facebook, LinkedIn, Twitter). With respect to the impact of these tools on clinical care, 47.1% of respondents agreed that having access to texting and email enhanced quality. That said, nearly half felt that they received non-essential texts and pages that disrupted their daily workflow (49.3%) and that these interruptions interfered with quality care (48.6%). These sentiments were shared among users and non-users alike.

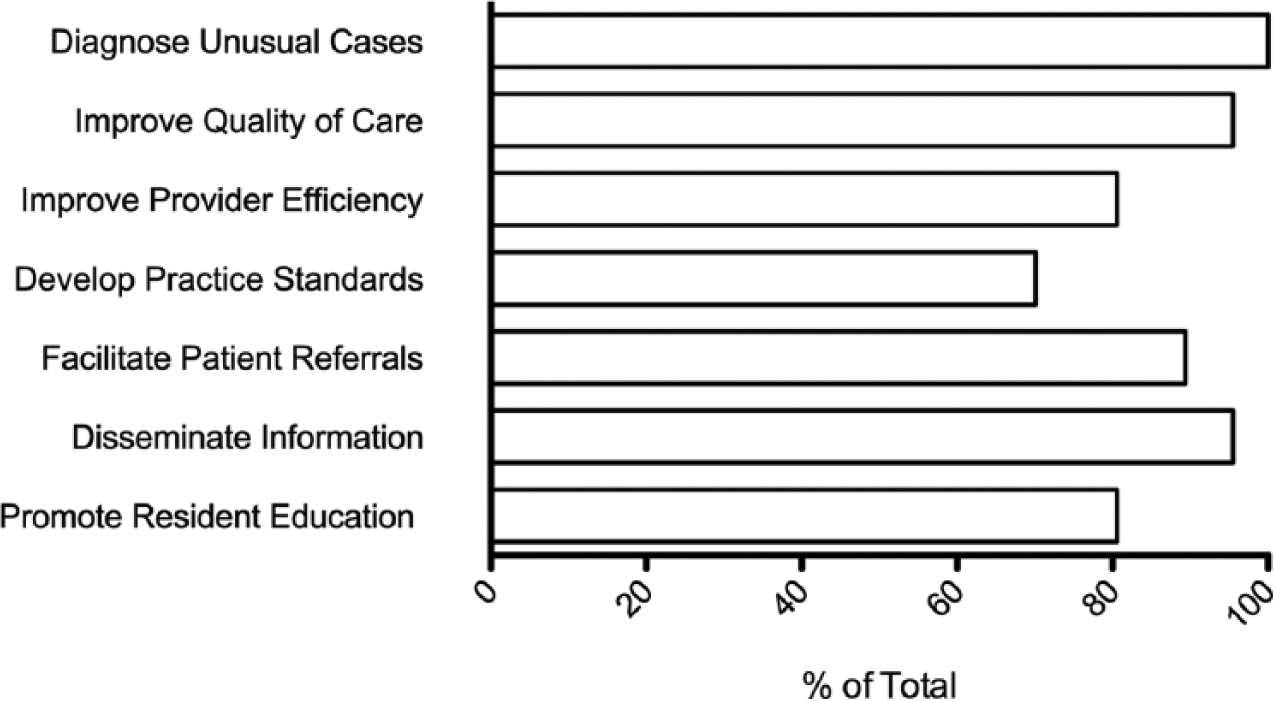

Crowdsourcing in medicine: perceptions and strategies

We next turned to the question of whether perceptions regarding the use of crowdsourcing in the medical setting differed among trial participants. Over 80 percent of respondents agreed that crowdsourcing could have a net positive impact on routine patient care, medical education, making appropriate patient referrals, and diagnosing unusual cases (Figure 1). We also found that more non-users than users were concerned that the use of near-real-time crowdsourcing could interfere with personal “off the clock” time (89% vs 65%;

Figure 1. Health care provider perceptions regarding the utility of crowdsourcing in medical practice. Providers were asked to select areas in which they felt mobile crowdsourcing could be used to enhance a range of activities in academic medicine.

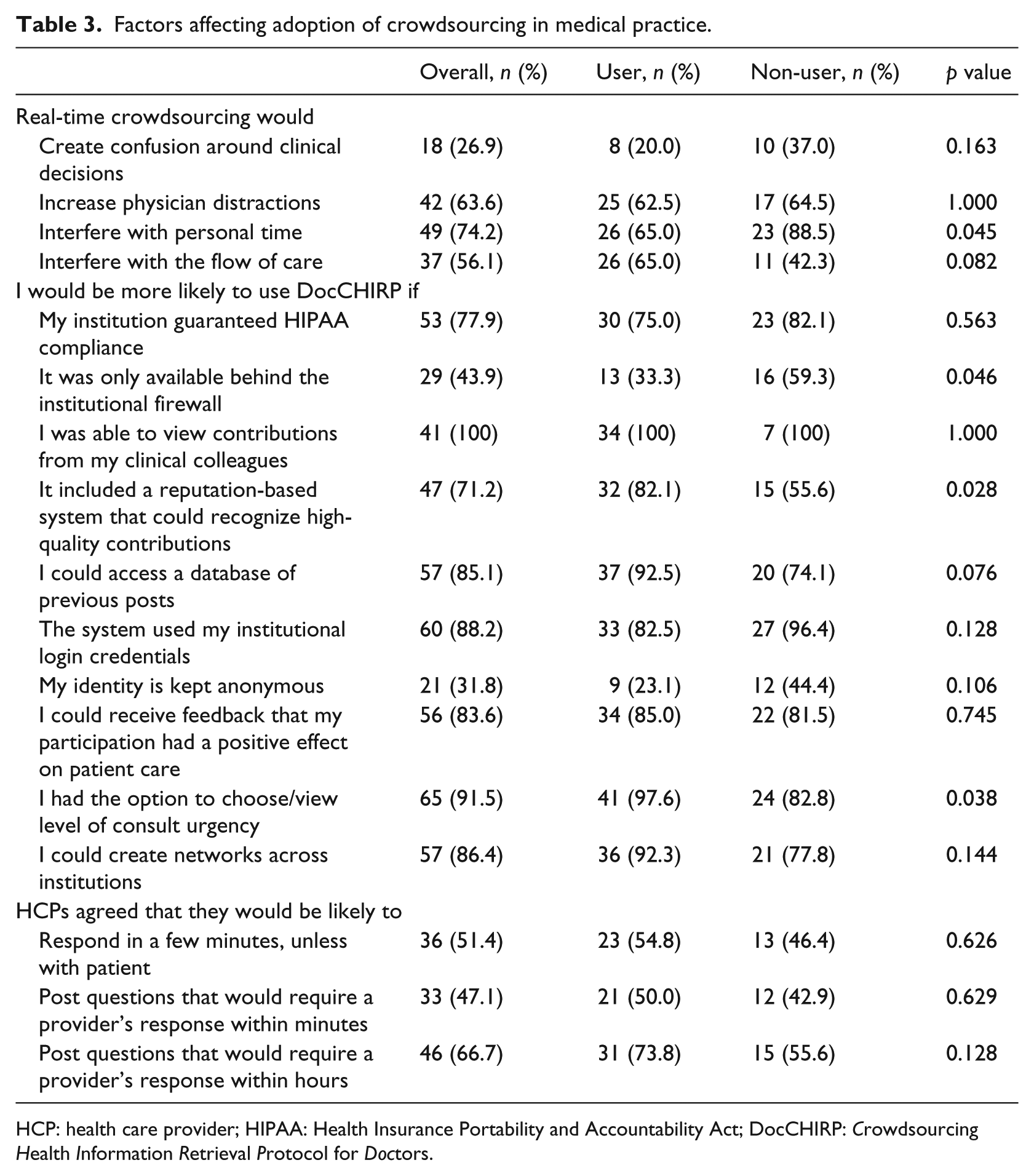

Table 3. Factors affecting adoption of crowdsourcing in medical practice.

HCP: health care provider; HIPAA: Health Insurance Portability and Accountability Act; DocCHIRP:

When asked about particular features that would enhance adoption of DocCHIRP, clinicians cited several factors including the following: (1) receiving feedback that their participation had a positive effect on patient care (84%), (2) having an institutional guarantee of Health Insurance Portability and Accountability Act (HIPAA) compliance (78%), and (3) being able to use institutional login credentials to access the system (88%). More users than non-users agreed that recognition of high-quality contributions by the system would be beneficial (82% vs 56%;

Consultation practices by clinicians

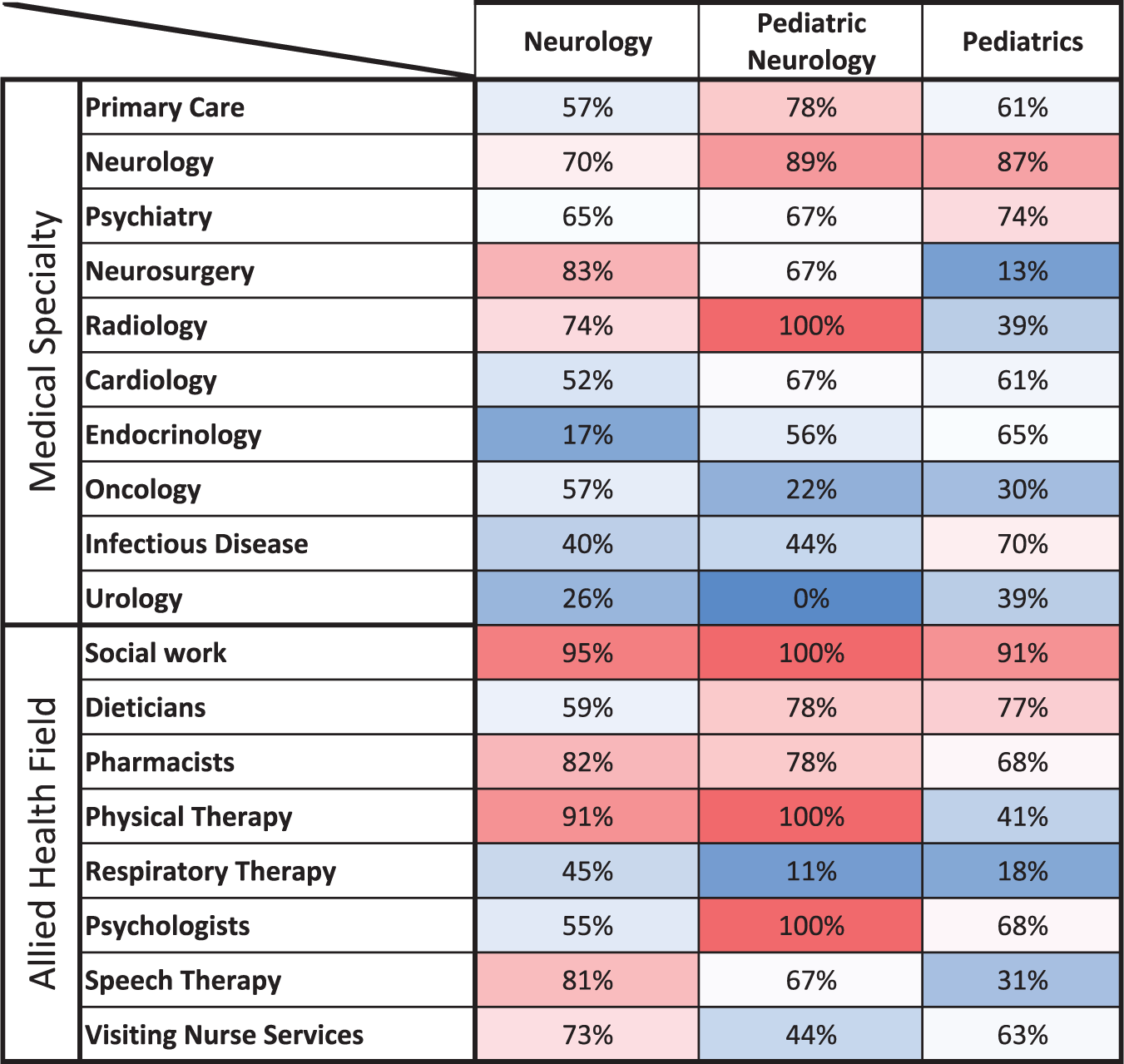

While the DocCHIRP prototype used in the field trial supported communication within a single group of colleagues selected by the user, it did not support ad hoc consultation across medical specialties. In other words, while pediatricians could consult through the application with other pediatricians, they were unable to consult with neurologists. Not surprisingly, most clinicians (93% of users vs 78% of non-users) indicated that access to this feature would enhance the utility of the program. To understand existing collaborative networks, we next asked providers in Neurology, Pediatrics, and Pediatric Neurology to indicate which of the various provider groups they had consulted over the past year (Figure 2). As expected, patterns varied between groups: for example, neurologists sought contact with colleagues in neurosurgery and radiology, whereas pediatricians were more likely to seek input from neurologists, psychiatrists, and infectious disease specialists. We also considered whether providers would seek advice from colleagues in the allied health professions. We found that while each discipline sought input from social work, each also showed its own preferences for other allied health providers.

Figure 2. Historical provider consultation practices. Survey participants were asked to identify the medical specialists and allied health professionals with whom they had consulted in the previous year. Data for the cohort of neurologists (

Discussion

Although crowdsourcing is widely used in the commercial and manufacturing sectors by companies including Coca-Cola, General Electric, and Disney, 8 the health care field is only beginning to show signs of innovation in this space. Most activity has occurred in the private sector rather than within academic institutions, with startups facilitating client–crowd relationships between patients and the lay public (https://www.crowdmed.com), patients and physicians (https://www.healthtap.com), and companies and physicians (www.sermo.com). However, with recent legislation promoting the creation of regionally based Accountable Care Organizations (ACOs), it is anticipated that mobile health solutions that foster communication between clinicians practicing within individual ACO structures will become more common.

Clinicians are constantly presented with questions regarding best practices in caring for their patients, 2 and in some settings spend less than 10 min on average with a given patient. 9 These forces create bottlenecks in HCP workflows that compromise their ability to provide high-quality care. In this study, we wanted to understand whether providers from the DocCHIRP field trial felt crowdsourcing could address this problem, and to identify particular features of the mobile application that would facilitate adoption. Providers judged program success on the basis of receiving high-quality responses in a timely manner. In the course of these studies, we have identified three key features essential to the success of near-real-time crowdsourcing among HCPs: (1) to satisfy the expectation of a timely response, there must be a crowd of sufficient size available; (2) the crowd must hold the appropriate expertise to opine on the question posed; and (3) HCPs must trust that the information provided will contribute to overall patient benefit, without bringing undue exposure to professional risk or harm to their reputation. Our results show that while there was wide agreement that crowdsourcing had the potential to enhance care delivery, this enthusiasm was tempered by concerns regarding information privacy and the potential ill effects on provider workflow.

Adapting near-real-time crowdsourcing to medical practice

While crowdsourcing has typically involved using the collective intelligence of the crowd without explicit time constraints, advances in “real-time crowdsourcing” have made it possible to reduce crowd response times to within a few seconds for certain tasks. 10 Similarly, “near-real-time crowdsourcing” approaches, including VizWiz, a system designed to help the visually impaired navigate in a sighted world, can deliver crowd responses within 5 min. 11 With this in mind, we considered whether real-time crowdsourcing could also benefit HCPs. We found that 96 percent of providers felt crowdsourcing could have an overall net positive effect on their practice. In particular, providers indicated that crowdsourcing could help solve unusual cases, promote medical education, and facilitate communication and the exchange of clinical information between existing collaborative groups. There was also substantial interest in using crowdsourcing to communicate with colleagues outside their own specialty as well as with allied health professionals.

Potential barriers to adopting crowdsourcing in practice were also identified. Research and anecdotal experience tell us that interruptions by pagers, mobile phones, and other devices can adversely affect the quality of care delivered. 12 Although providers in our study were willing to answer consults in under 5 min if not otherwise engaged in clinical tasks, more than half recognized the potential for DocCHIRP consult requests to interfere with their workflow. Potential solutions to this problem included having a tagging system, visual prompts, and notification preference settings to help triage consult urgency. HCPs were also concerned that participation would undermine their reputation through public disclosure of their knowledge gaps. We therefore asked participants whether they felt having the opportunity to post consults anonymously would mitigate this problem. Interestingly, most providers felt that while anonymity could potentially benefit the index provider, hiding the identity of the consultant would undermine user confidence in the action plan recommended by the crowd. Users also felt that public recognition of high-quality contributions would likely encourage program use.

Trust in network security was another dominant theme in the post-trial survey. Wary of the potential disclosure of protected health information, providers preferred to have their employer coordinate the deployment and oversee security of the crowdsourcing system. Despite this, respondents also saw value in being able to collaborate with colleagues across institutions. In contrast to non-users, DocCHIRP users were less concerned about this issue and disagreed with having the employer involved. It is not surprising that clinicians seek opportunities for open intellectual interactions with their colleagues in an environment where both their reputation and the confidentiality of their patients are preserved. Further study will be required to understand how best to engineer the system to accommodate these competing interests.

In the DocCHIRP trial, it was also suggested that incomplete information from the crowd could mislead the index provider, a criticism voiced elsewhere regarding the safety and utility of curbside consultation. 13 Prior work from the crowdsourcing literature indicates that large, diverse crowds outperform smaller groups of experts, 14 and at the societal level, such diversity is essential to the retention of culturally acquired skills and the avoidance of societal collapse. 15 While it remains to be seen whether this will translate into the clinical domain, it seems intuitive to expect that response quality may suffer where crowd size is small and where anonymity and casual participation undermine accountability.

While clinicians tend not to trust differential diagnosis programs based on algorithmic approaches, most providers surveyed were not concerned about the legality of crowdsourced responses. This sentiment is consistent with recent guidelines from the Food and Drug Administration that deem DocCHIRP and other medical references exempt from regulatory oversight. 16 Overall, it appears that licensed providers regard real-time crowdsourcing with the same skepticism they apply to other resources when making treatment decisions for their patients.

Consultation practices by clinicians

Successful design and integration of crowdsourcing in a complex system requires understanding both user motivations to collaborate and the existing social networks at play. While our users and non-users consulted reference materials with equal frequency, DocCHIRP users were more likely to collaborate with their peers by email or texting and to seek face-to-face discussions. Of note, this drive was independent of their taste for digital technologies. With regard to the specific groups that providers wished to collaborate with, some of the consultation patterns could be predicted based on specialty. However, we were intrigued to find that collaboration with social work and other allied health professionals was pervasive, and in some cases specialty specific. Although librarians were not included in our survey, published data indicate that physicians value the contributions of medical center librarians highly. 17 McGowan et al. 18 found that 80 percent of the answers provided by the librarians were rated as having a positive effect on provider decision-making. Taken together, we believe that inclusion of medical informaticists and other allied professionals within provider crowdsourcing networks will play a critical role as the volume and complexity of available data regarding best practices continue to expand.

Study limitations and future directions

As with other social media applications, DocCHIRP is subject to network effects, whereby a good or service becomes more valuable as the size of the user pool grows and must reach a critical mass to sustain productive use. Although the prototype did not easily allow clinicians to consult outside their group, we expect to observe a wide range of potential uses with the implementation of more robust crowd management systems. Our data indicate that clinicians agree that consultation with a crowd of known colleagues can produce high-yield answers. In this case, these individuals have a high likelihood of possessing the knowledge in question and are motivated to respond in a timely fashion. However, it remains to be seen how the quality and timeliness of responses will be affected when users reach out to larger groups, either within or across disciplines. We anticipate that this latter model, in which, for example, generalist practitioners seek information from a crowd of trusted yet unknown specialists, has the potential to yield the most useful information. Yet, while the literature supports the assumption that crowd size is directly proportional to the quality of the responses received, 19 the outstanding question remains whether clinicians will be motivated to connect by virtue of their affiliation within an institution or ACO, absent an established personal connection. In addition to serving as vehicles for information delivery, this and other medical crowdsourcing applications will provide a unique window on this novel mode of communication.

Despite having an 85 percent response rate, the total number of providers in our post-trial sample was relatively small (

Footnotes

Acknowledgements

The authors would like to thank the physicians and nurse practitioners at the University of Rochester who participated in the trial.

Declaration of conflicting interests

Collaborative Informatics, LLC provided integrated mobile and server software used in this study. Dr Marc W Halterman is co-owner of Collaborative Informatics, LLC, and oversaw the specifications and construction of the software used in this study. Dr Marc W Halterman has provided the necessary conflict of interest documentation in keeping with the requirements of the University of Rochester.

Ethical approval

The DocCHIRP study was reviewed by the Institutional Review Board at the University of Rochester and approved as posing minimal risk.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.