Abstract

This article draws on data collected during a 2-year project examining the deployment of a computerised decision support system designed to be used by non-clinical staff for dealing with calls to emergency (999) and urgent care (out-of-hours) services. One of the promises of computerised decision support technologies is that they can ‘hold’ vast amounts of sophisticated clinical knowledge and combine it with decision algorithms to enable standardised decision-making by non-clinical (clerical) staff. Drawing on our ethnographic study of this computerised decision support system in use, we question the ‘automated’ vision of decision-making in healthcare call-handling. We show that embodied and experiential (human) expertise remains central and highly salient in this work, and we propose that the deployment of the computerised decision support system creates something new: the conjunction of computer and human creates a cyborg practice.

Introduction

Science fiction and Hollywood movies have long promulgated versions of what our digitally enhanced future might look like, offering visions of artificial intelligence, digitised informatics, bioengineered bodies and robots. Common narratives offer dystopian futures in which we cede cerebral supremacy to digital masters and where humans will be increasingly occupied with attempts to wrest control back from ‘the machines’. 1 Such imagery contrasts with a more progressive view of both information and communication technologies (ICTs) and robotics in the context of healthcare. These technologies are seen as offering positive transformational possibilities – indeed they promise to deliver enhanced health, greater access to health services and sharing of health information, more evidence-based care, improved effectiveness and safety and the possibility of reducing healthcare costs.2–5 For all their apparent differences, both tropes – the science fiction fantasy and the transformational vision – speak to the nature of relationships between humans and machines. They posit new interactions and the potential of enhanced human capacity by combining with technology; borrowing from Haraway, 6 they offer up a cyborg, or from Hayles, 7 a post-human informational pattern within a biological substrate. We explore ideas about human – non-human interactions in relation to ideas about cyborgs in this article to show how this has helped us think differently about our empirical work in the field of healthcare informatics and technology. In so doing, we pick up Nyberg’s 8 challenge to think differently about work practices and the relationships between humans and machines.

Computerised decision support systems (CDSSs) are computer programmes designed to aid healthcare decision-making processes. They typically combine an expert information base with algorithmic or inference-based rules to guide decisions. CDSSs have diffused rapidly across a number of healthcare systems, and in the UK National Health Service (NHS), CDSSs are deployed to support general practitioner (GP) prescribing (Prescribing Rationally with decision support in General Practice (PRODIGY)), to deliver triage in urgent and emergency care (Advanced Medical Priority Dispatch System (AMPDS), NHS Pathways) and to support diagnosis and treatment in the NHS Direct telephone service and in NHS Walk-in Centres (NHS Clinical Assessment System (CAS) and triage clinical decision support system (TAS)). In many healthcare settings, CDSSs are used by clinicians (predominantly nurses, but also doctors), where they have been understood as complex interventions used as an adjunct to clinical judgement and experiential expertise. However, in the context of increasing downward pressure on healthcare budgets and attempts to use labour substitution and workforce reconfiguration to reduce salary costs, it is unsurprising that CDSSs are increasingly being used to support non-clinically qualified staff to deliver core aspects of health services. In this scenario, where the user of the computer application does not have clinical expertise, it might be argued that CDSSs exemplify an ‘intelligent machine’: the clinical expertise lacking in the human operator has been relocated in a computer programme. Yet we know from previous research that technologies seldom work in practice in the ways envisaged by developers; 9 instead, they acquire new forms through their interaction with users and environments. 10 In this article, we examine the relationship between human users and computer technology using our empirical study of a single CDSS used in different healthcare settings.

Methods

We undertook a cross-case comparative study of a single CDSS used in three different healthcare settings using qualitative observation, interviews and documentary methods. Our data include approximately 500 h of observation in urgent and emergency care call centres, deliberately structured to capture activity at different times and conducted over 35–45 days at each site. We conducted semi-structured interviews with 64 individuals: 34 call-handlers, 11 supervisors or service managers, 4 clinicians and 15 senior managers. We also examined a range of documents pertaining to the design, development, evaluation and policy surrounding the CDSS, as well as relevant organisational and training materials.

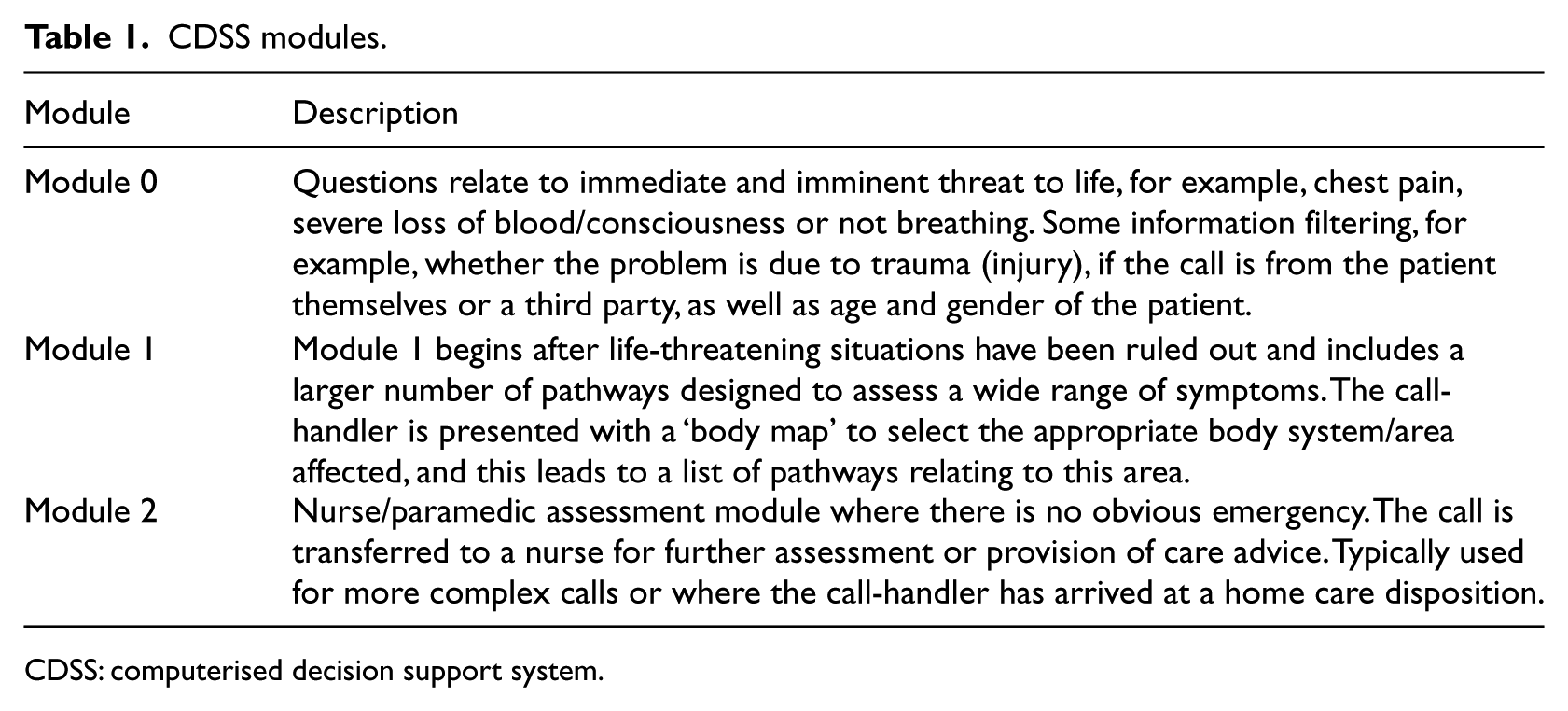

The CDSS studied was designed for the prioritisation and management of telephone calls to emergency (999) and urgent care (typically for medical advice outside general practice normal office hours). Although the software contains modules that can be used by clinically trained staff, it is mainly used, in the three settings we studied, by non-clinical (clerical) staff. It is an expert information system containing a series of logical algorithms that guide questions that the call-handler uses to determine the clinical service required and the timeframe in which this should be accessed. The wording of the questions has some flexibility, and there are suggested prompts to ‘probe’ for information, for example, getting callers to describe the nature of chest pain (as ‘crushing’, ‘shooting’, ‘aching’ and so on). The CDSS is used to arrive at a disposition, which can be a request for an ambulance, an appointment with a GP or advice regarding self-care or other health services such as community pharmacies. The CDSS comprises three modules, which are summarised in Table 1. The first is for the immediate identification of life-threatening problems – those that require an ambulance. Once these have been excluded, the second module runs through questions designed to assess symptoms. At the start of this module, the call-handler is presented with a ‘body map’ (which is age and gender specific) and can click the computer mouse to select affected areas and to access ‘menus’ of symptom pathways. A further module (module 2) is designed for use by a nurse or paramedic and contains algorithms to support further clinical advice and prioritisation.

Table 1. CDSS modules. (CDSS: computerised decision support system.)
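As a purely illustrative sketch (not the software studied, whose algorithms are not reproduced in this article), the kind of question pathway described above can be pictured as a small decision tree that ends in a disposition. The questions, routing and timeframes below are invented for illustration and have no clinical validity; the names `Question`, `Disposition` and `triage` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple, Union


@dataclass(frozen=True)
class Disposition:
    """End point of a pathway: which service, and how soon (invented values)."""
    service: str
    timeframe: str


@dataclass(frozen=True)
class Question:
    """A yes/no question that routes to another question or to a disposition."""
    prompt: str
    if_yes: Union["Question", Disposition]
    if_no: Union["Question", Disposition]


def triage(
    start: Question, answer: Callable[[str], bool]
) -> Tuple[Disposition, List[Tuple[str, bool]]]:
    """Walk the pathway, asking each question until a disposition is reached.

    `answer` stands in for the call-handler putting the question to the caller
    and recording a yes/no reply. Returns the disposition and the audit trail.
    """
    node: Union[Question, Disposition] = start
    asked: List[Tuple[str, bool]] = []
    while isinstance(node, Question):
        reply = answer(node.prompt)
        asked.append((node.prompt, reply))
        node = node.if_yes if reply else node.if_no
    return node, asked


# Hypothetical dispositions and pathway, for illustration only.
AMBULANCE = Disposition("ambulance", "immediate")
GP_APPOINTMENT = Disposition("GP appointment", "within several days")
SELF_CARE = Disposition("self-care advice", "no fixed timeframe")

PATHWAY = Question(
    "Is the patient unconscious or not breathing?",  # life-threatening check first
    AMBULANCE,
    Question(
        "Does the patient have chest pain?",  # symptom assessment follows
        Question("Is the pain described as 'crushing'?", AMBULANCE, GP_APPOINTMENT),
        SELF_CARE,
    ),
)
```

The sketch captures only the part of the work that is ‘in the machine’. The `answer` callback hides the probing, interpreting and negotiating that call-handlers do when they put each question to a caller.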

The three settings we studied were as follows: an Ambulance Trust that provides 999 emergency services to a population of over 2.5 million in a geographical area, which includes large cities and towns, and some more remote rural areas; a single-point-of-access (SPA) service for two Primary Care Trusts serving a population of around 600,000 and referring to five urgent care centres (UCCs); and a service that provides out-of-hours (OOH) cover for 150 GPs for a population of approximately 140,000 in a large town and the surrounding conurbation. We refer to these as 999, SPA and OOH, respectively.

Our data were thematically analysed. An initial sample of field notes and interview transcripts was read and coded independently by members of the research team, and these codes were discussed and refined and formed the basis for a coding scheme used within ATLAS.ti 6.1. We held regular research meetings to discuss emerging codes and themes and to develop our interpretations. As the study progressed, we structured our analysis by looking at data within each setting and then across all three settings, using our research questions and theoretical ideas from the wider literature to guide this work. We used matrix or charting techniques to facilitate comparison of the themes and had ongoing discussions with the wider research team and project advisors to check our interpretations. The study was approved by the Wiltshire Research Ethics Committee (08/H0104/56).
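The matrix or charting step mentioned above can be pictured, very roughly, as arranging coded segments in a theme-by-setting grid so that each theme can be compared within and across the three settings. The following is a minimal sketch under stated assumptions: the segment identifiers are hypothetical placeholders rather than study data, the function names are invented, and the analysis itself was carried out in ATLAS.ti and team discussion, not with this code.

```python
from typing import Dict, Iterable, List, Tuple

SETTINGS = ("999", "SPA", "OOH")
THEMES = ("flexibility", "clinicalisation", "negotiation")

# (segment id, setting, theme); ids are hypothetical placeholders, not study data.
EXAMPLE_SEGMENTS = [
    ("obs-001", "999", "flexibility"),
    ("int-002", "OOH", "flexibility"),
    ("int-003", "OOH", "clinicalisation"),
    ("obs-004", "999", "clinicalisation"),
    ("int-005", "SPA", "clinicalisation"),
    ("obs-006", "SPA", "negotiation"),
]


def build_matrix(
    coded_segments: Iterable[Tuple[str, str, str]]
) -> Dict[str, Dict[str, List[str]]]:
    """Chart coded segments into a theme (row) by setting (column) grid."""
    matrix = {theme: {setting: [] for setting in SETTINGS} for theme in THEMES}
    for segment_id, setting, theme in coded_segments:
        matrix[theme][setting].append(segment_id)
    return matrix


def themes_in_all_settings(matrix: Dict[str, Dict[str, List[str]]]) -> List[str]:
    """Return themes with at least one coded segment in every setting."""
    return [
        theme
        for theme, row in matrix.items()
        if all(row[setting] for setting in SETTINGS)
    ]


matrix = build_matrix(EXAMPLE_SEGMENTS)
```

Empty cells in such a grid are as informative as full ones, since they prompt the analyst to ask whether a theme is genuinely absent from a setting or simply not yet coded there.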

Results

A core claim made by call-handlers, CDSS developers, managers and policy makers was that the CDSS was based on highly formalised knowledge drawn from systematic reviews and research and that it was evidence-based. This made it ‘safe’ for non-clinicians to use:

You’ve got to trust the system. Sometimes I think that shouldn’t be the disposition, but then again, that system [CDSS] has been designed by doctors and nurses and like a team of specialists … Even though I sometimes think ‘that question is ridiculous’, there’s a reason for it being there so you’ve got to ask it. (Interview, 999 call-handler)

[Call-handler] explains that [the CDSS] has been designed by ‘a huge group of people … non-clinicians, clinicians – lots of input … it’s a very safe system to use, used correctly’. (Observation, 999)

This argument was also used to suggest that the expertise or knowledge required to undertake the task of call management and prioritisation was securely located in the machine. Documents on recruitment and training for each organisation emphasised the need for ‘people’ skills, such as good personal manners and the ability to communicate with the general public, and required a relatively modest secondary school level of educational qualifications, no previous experience of ICTs or call-handling and no prior health-related experience or knowledge, all confirming this view of expertise as ‘in the computer’. However, our analysis of call-handling work suggested that this might not be the case. We identified a range of skills developed and used by the call-handlers, which challenge this view, and we use these findings to suggest that what emerges from this technology in use is a human–machine configuration that resembles a cyborg.

Human expertise

Flexibility

Some call-handling and CDSSs have tightly prescribed scripts and algorithms, which do not allow user discretion or interpretation. We found that in the three settings studied, call-handlers exercised significant flexibility about the phrasing of questions and how they navigated the CDSS pathways, as in the following example:

[The call-handler] tells me how she is required to follow the system and the system makes the decision, but it’s her job to use probing questions to clarify if the information she has obtained is accurate. Whilst on the one hand the system goes on what the patient says to be true, she is required to probe – particularly where she thinks the caller may be misinterpreting the question – deliberately or accidentally, and where she suspects the patient is blatantly lying to ensure that they get an ambulance. A decision has to be made when to stop probing – this seems to be when a) the call-taker has satisfactorily got enough information to answer with ‘enough certainty’ yes or no or when b) when the patient insists, reiterates that they are e.g. gasping for breath, pains are in their chest (even when other things they have said in the call provides evidence to the contrary). (Observation, 999)

Even in ‘negative cases’ where call-handlers asserted that they simply followed pathways dictated by the CDSS, this sense that there is something more complicated going on, that call-handlers need to use their own expertise, was apparent:

the only responsibility you have as a call-taker, is to listen exactly to what you’re saying, and you make sure what you’re answering is right. You don’t have the kind of final decision on […] Because the questions are longer, you go more into depth, it does give you more time to think about the call, and make sure you’re getting it right. (Interview, OOH call-handler)

Clinicalisation

We have indicated that the call-handling workforce was predominantly non-clinical, yet they undertake healthcare work, albeit at a distance from the patient. Despite the claims that all the clinical knowledge was embedded in the CDSS, we found that there were strong clinical components to the work done by the call-handlers. This included giving clinical advice:

that was an advantage … for the first time our non-clinical operators could give directed clinical advice, or direct patients to be able to go and see their GP or healthcare professional within several days. (Interview, OOH GP)

In addition, we observed a great deal of knowledge sharing within teams, especially on clinical matters, which drew on call-handlers’ medical and life experience as well as what they had ‘learned’ from the CDSS:

‘It’s great working here because we’ve all got our bits of knowledge that no-one knows anything about’ for example, someone has a husband with diabetes and [name] knows about asthma. So they can ask each other for advice/learn from each other. She tells the story of her handling of a fitting baby – 6 months old. The old advice [pre CDSS] was to strip the baby down, open windows and sponge with cold water. Now they don’t advise this because the shock of the cold water can be bad for the baby. But when she was handling a call she still asked the parents what the baby was wearing – ‘A little cardy because she’s been poorly? Right well, undo the top buttons of the cardy and open a window or two’. The young call-taker next to her is laughing and she asks why at the end of the call. He says she sounds like ‘a mam’, says she’s great. The call handler is merging her advice with [the CDSS] to offer what she thinks is the best advice. Her peers know this is off-piste, but are not shocked or critical. (Observation, 999)

The training for call-handlers comprises 6 weeks of classroom-based activities that include organisational induction, ICT and telephone communication skills and worked examples of the CDSS pathways. Call-handlers are reminded that they do not need clinical knowledge to deliver the service; nonetheless, there are opportunities to learn about clinical matters – interactions with trainers who often have clinical backgrounds, questions about medical terminology and the CDSS pathways themselves reinforce the clinical nature of the work. Some call-handlers deliberately seek additional clinical knowledge, as in the following example:

I’m doing an anatomy and pathophysiology course which is in my own time. They’re offering us further learning in terms of medical practice, so hopefully we’re going to start picking up on it […] It’s through the university, and [the Trust] are paying for it. […] So if we pass these exams, we can go and do student paramedic, but I mean, I don’t necessarily want to be a paramedic, it’s just more further learning and it backs up things at work. (Interview, SPA call-handler)

Negotiation

A premise of the CDSS is that it enables a process that leads to a clear decision (in this case, the disposition). The logic of the algorithm is rational, producing an outcome or referral for advice. This is all well and good in theory, but in the messy reality of social interaction, this ‘decision-making’ can be highly problematic. Callers and patients challenge the decisions offered, forcing call-handlers to negotiate:

the final disposition was a 19 minute ambulance. The call-handler explained that an ambulance was going to be on its way but the patient did not want an ambulance: she refused it. So the call-handler called the clinical supervisor and clicked on early exit. He then arranged an appointment at one of the primary care centres. (Observation, SPA)

Callers sometimes resisted the disposition offered by the CDSS, and again, this meant that the call-handlers had to negotiate. Especially in urgent care settings (SPA and OOH), and occasionally in 999, some callers sought human–human (social) interactions, not computerised decisions:

[the caller] did not want to answer the questions but preferred to talk about her personal life. I observed [call-handler] trying to control the call pace. She was reading different options and clicking on them once the questions had been asked. She also clicked on some options below the sentences [including ‘change answer’, ‘restart triage’, ‘early exit’]. Sometimes she did not read and was anticipating the answer. It was evident that she could not wait to finish she seemed quite impatient. Every time the call-handler was asking a question the patient talked about a new symptom. It was very difficult to triage. (Observation, SPA)

These themes of flexibility, clinicalisation and negotiation all point to the central role of human expertise in delivering these services. The CDSS is a resource for these workers, supporting an intensely social process. Far from everything being ‘in the machine’, the work of triage and managing calls is a joint enterprise between technology and humans.

The challenge of cyborg practices

The use of CDSS by a non-clinical workforce for healthcare decision-making is relatively new and has not been well studied. However, our data suggest that this work requires something more than the simple deployment of a technical (computerised) solution. It is clear that the call-handlers’ knowledge and skill are augmented by the CDSS but equally that they bring their human expertise to bear in ways that enable this computer technology to work. We propose to consider this mutual shaping by drawing on ideas about the cyborg as the interdependency of human and machine.

As Hayles 7 has pointed out, much of the reaction against cyborgs (played out so effectively in science fiction and cinema) has stemmed from a fear that humans would be modified by machines and, in so doing, cede some essential part of their humanity. While ‘technical cyborgs’ have been partially realised (notably in medicine, for example, in cardiac pacemakers or bionic hands), the use of computers in cognitive construction – as in the CDSS studied here – is the more dominant form of relationship between digital technologies and humans. Hayles, along with writers such as Nyberg 8 and Barad, 11 has alerted us to the importance of understanding these types of social and material entanglements. Indeed, Nyberg 8 pushes this further and asks us to stop creating the binary of machine or human and subject or object and instead to use the metaphor of the cyborg to see unity and interdependence as an ‘assembly of actors, together, performed the practice in a state beyond the human and non-human mode of “inter-action”’ (p. 1193).

These ideas about cyborgs seem to offer a way of understanding the deployment of CDSS. We suggest that the call-handling practices we have described here present an emerging cyborg. The CDSS and the human call-handler are inextricably related. Expertise is ‘in’ the machine and ‘in’ the worker; it is co-created in their interaction. The cyborg metaphor gives us some purchase on this relationship between computer technologies and call-handlers.

It is worth noting at this juncture that ideas about cyborgs have become somewhat detached from the wider literature on cybernetics, which began with Wiener 12 and Ashby 13 as the study not of machines, but of behaviour, and developed into the study of complex organisational systems with the work of theorists such as Stafford Beer. 14 Yet, if we reconnect the metaphor of the cyborg with this organisational behaviour literature, we may be able to focus on the work rather than the embodiment of the cyborg; that is, we can begin to say that it is the practice of call-handling that creates and constitutes the cyborg.

This cyborg practice raises some tensions and challenges. First, there is an apparent contradiction between the rhetoric of clinical expertise in the machine and the call-handler experience of doing clinical work. While call-handlers are aware that they are not clinically qualified, they are doing a ‘kind of’ clinical work and using their expertise (for lower pay than clinicians):

You’ve got to drive it and you’ve got to have the knowledge and the background to work with that because you’ve got to understand the inference of what it is that you’re asking. If you don’t understand what you’re asking, you’re not going to be able to use it effectively. (Interview, OOH call-handler)

Some will use it like a robot … But I always say to them, we expect you to use some common sense and judgement and it’s not about deviating from the system; it’s about sometimes having that gut feeling that we all get when we think something’s not right. (Interview, 999 manager)

we’re not diagnosing, we’re not … if I’d wanted to be a doctor or a nurse I would have done that years ago. I don’t think that, for our purposes, we really need, … I mean, obviously, it’s interesting, but I don’t think we need it actually for the job, because [the CDSS] does it for you.[…] Our job is just to collect information, input it, and let the system, not diagnose, but come out with the best, appropriate course of action. (Interview, OOH call-handler)

CDSSs, at least in the context of healthcare work, are not a technical replacement for human labour. While they can be successfully deployed to deliver health services in urgent and emergency care, they are only successful as the conjunction of humans and computers – the cyborg practice augments what the call-handler can do but simultaneously requires and creates human expertise to do so.

Second, if we return to our observations about dystopian cyborgs, one of the core questions often raised centres on the moral and emotional challenges of human–machine hybrids. We were acutely aware that despite working at a distance from the patient or caller, the work of call-handling in urgent and emergency care poses similar challenges to frontline healthcare work. Even at the end of a telephone line, this work involves human frailty, fear and feeling. It requires emotional labour in the same way as nursing and medicine do. 15,16 The following reflexive observation illustrates just one small example of this emotional work:

Next case is a girl with a dislocated knee. A passer-by seems to be calling for her and we can hear her screaming in the background. The questions focus on whether the knee looks out of place or not in the normal position. The caller is able to get a name from the girl. He keeps saying it is dislocated but [call handler] establishes that there is no blood. As a result (and surprisingly to me) this gets a 1 hour ambulance [not life threatening]. [The call handler] explains that the ambulance will come but that it will not be ‘on a blue light or sirens’ because this is not necessary. She runs through the instructions about keeping the patient warm and not giving anything to eat or drink. … I wonder what it must be like at the scene of this incident with the girl screaming … It is as if the only things that matter are the answers to the questions and there isn’t one about screaming. (Observation, 999)

Managing social interactions in the context of healthcare need is never easy to accomplish. The CDSS equips the call-handlers with a narrative structure to support the interaction, but the call-handlers draw on their own interpersonal and communication skills to accomplish the healthcare task at hand. The call-handler and the CDSS combine to enact healthcare work (and in this context being designated as ‘Emergency Care Support Worker’ rather than ‘Call Centre Operative’ carried vital symbolic power).

Conclusion

Bringing technological interventions successfully into everyday use requires considerable human effort. They do not simply ‘happen’; indeed, the history of the implementation of technologies and ICTs is one of failures and abandoned projects rather than success.17–20 Our research has studied a computer technology that has, for the time being at least, been successfully brought into use in multiple settings. We know that digital technologies bring new forms of knowledge into play. 21 Our work here suggests that some technologies interact with human actors in ways that create new forms of practice. We propose that the healthcare call-handlers using this CDSS constitute something more than the technology or worker alone – something that resembles a cyborg. This argument runs counter to the traditional marketing pitch for digital technologies, which has them neatly substituting for human labour. It is at odds with a policy rhetoric, which proposes that ICTs will solve some of the human problems inherent in health services.22,23 If this understanding of CDSS is correct, it also raises new questions about the nature of human–computer relations, forcing us to look at the intersection of a number of different fields. To understand these new cyborg practices, we may need to draw on not only computer science and human–computer interaction, social science and science and technology studies, but perhaps also the insights of science fiction.

Acknowledgements

We thank all the anonymous participants for taking part in this research.

Declaration of conflicting interests

The views and opinions expressed herein are those of the authors and do not necessarily reflect those of the National Institute for Health Research Health Services and Delivery Research Programme, National Institute for Health Research, NHS or the Department of Health.

Funding

The project was funded by the National Institute for Health Research Health Services and Delivery Research Programme (project number 08/1819/217).