Abstract

Academics have long relied on technological tools to support their research, with these tools growing in sophistication over time. As these tools have advanced, they have allowed researchers to create knowledge more effectively than humans could have done alone. However, this paper argues that some new technologies may be moving from simple tools to being collaborators in research, with their abilities contributing not only to identifying previously unidentified relationships in the data, but also to synthesising and explaining information to external audiences. Relying on existing literature and questions posed to ChatGPT, we argue that artificial intelligence tools have, or will have, the ability to meet the four conditions specified in the International Committee of Medical Journal Editors (ICMJE) recommendations for authorship (the Vancouver Protocol), warranting the recognition of these technologies as co-authors in the advancement of academic endeavours, not just as background support.

Introduction

Academics across disciplines have been quick to adopt new technologies and tools to assist with research and practice (Grewal et al., 2021). New technologies such as AI have accelerated this advance across almost all disciplines, including business (Loureiro et al., 2021), marketing (Feng et al., 2021; Thaichon et al., 2022), medicine (Harris et al., 2019) and engineering (Saka et al., 2023). For example, new tools such as data scraping allow extensive amounts of information to be gathered autonomously, with ‘guidance’ from academics (Hagen et al., 2020; Proserpio et al., 2020). Machine learning, however, allows this information to be evaluated for patterns, which in many ways is functionally no different to a human coding and evaluating the same data. While current AI technology and machine learning models, and particularly large language models (LLMs) like ChatGPT, presently have limitations (see Bang et al., 2023), AI technologies have an advantage in that they can do such coding and evaluation more consistently, quickly and accurately than humans (Tibbetts, 2018), thus supplementing and even exceeding human skills (Rampersad, 2020). The use of AI will affect academic activities, including publishing (Stokel-Walker, 2023), which is important as publishing is a key global benchmark of academic performance (e.g. Brodie et al., 2022).

AI’s precision has been found to enhance the value of research technologies across domains, leading to a ‘substantial contribution to the acquisition, analysis, or interpretation of data for the work’ (e.g. meeting point 1 of the Vancouver Protocol’s guidelines). For example, in the health domain AI is as good as or better than humans at identifying anomalies in some medical imaging, such as detecting pneumonia via chest X-rays, diagnosing tuberculosis via radiography, or accurately classifying breast cancer in mammograms (Coppola et al., 2021; Jha et al., 2022; Khamparia et al., 2021). Researchers have argued that at present ‘AI has a certain degree of decision-making autonomy’ (Pagliari et al., 2022, p. 13), suggesting that it could create knowledge independently. Indeed, ‘some believe that AI will soon be inventing and creating things in ways that make it impossible to identify the human intellectual input in the final invention or work. Some feel this is happening now’ (Intellectual Property Office, 2022, p. 3). In fact, one AI tool, ChatGPT, already has its own Google Scholar page (https://scholar.google.com/citations?hl=en&user=s4IV-ewAAAAJ), with work published regarding health education (e.g. King, 2023; O'Connor & ChatGPT, 2023).

There are a number of guidelines regarding who should be an author, including, for example, the Vancouver Protocol on authorship (International Committee of Medical Journal Editors, 2022). This protocol specifies four criteria (discussed below) that need to be addressed for an entity to be an author. Other guidelines such as the Contributor Roles Taxonomy (CRediT; Elsevier, 2023) and The Australian Code for the Responsible Conduct of Research (Australia Universities, 2018) are generally aligned with these guidelines. As will be discussed in this work, we believe that AI tools such as ChatGPT already, or will in the future, meet all existing author expectations and practices.

While there has been discussion of the ethical issues surrounding AI in research (Du & Xie, 2021; Dwivedi et al., 2021), we advance the discussion further, by suggesting that AI has become (or at minimum, will become) more than simply a tool. Rather, we argue that AI is increasingly becoming a collaborator in the research process (King, 2023; O’Connor & ChatGPT, 2023). We do recognise that some still believe that without a ‘soul’ (Proserpio et al., 2020) or consciousness (Hildt, 2019), AI will only remain a tool. However, bodies such as the UK Intellectual Property Office (2022, p. 6) already protect ‘works generated by a computer where there is no human creator’, issuing copyright protection for 50 years. Conversely, some academic journals and publishers, such as Science, Nature and all Springer-Nature journals (Stokel-Walker, 2023; Thorp, 2023), have come out with statements that AI or large language models (LLMs) cannot be listed as authors in their works. Even academic associations such as the Academy of Marketing Science state ‘Generative artificial intelligence agents cannot be listed as co-author (or author) on a published paper or paper submitted for publication’ (Academy of Marketing Science, 2023, n.p.). This is also the policy of Sage Publishing, the publisher of Journal of Marketing, Journal of Marketing Research and Australasian Marketing Journal (Sage Publishing, 2023).

However, in the same statements there are suggestions that AI contributions need to be acknowledged. For example, Nature (2023, n.p.) suggests that ‘publishers need to acknowledge their [AIs] legitimate uses and lay down clear guidelines to avoid abuse’, Sage suggests (2023, n.p.) that authors should ‘clearly identify AI-generated content within the text and acknowledge its use within your Acknowledgements section’ and AMS suggests (2023, n.p.) that ‘[t]he author should fully disclose and document the material use of generative artificial intelligence technology agents in any stage of the research described in a manuscript submitted for publication.’ Thus, some journals accept AI use as a tool but not AI as a co-author.

AI in the research process

Academics should not be hung up on the fact that AI is not a human and thus cannot be a co-author. There are extensive cases where authors are non-human entities: corporations, governmental entities and research centres have all been on occasion listed as authors of documents, including co-authors of research books and papers (e.g. NIHR Global Health Research Unit on Genomic Surveillance of AMR, 2020; Esper, 2020). Further, Bethlehem and Seidlitz (2022, p. 8) state that ‘crediting all those who contribute their knowledge to a research output is a cornerstone of science’. Given that non-human entities such as ChatGPT and other LLMs can make contributions to research that are worthy of acknowledgement (as stated by Nature, 2023), there is no reason that ‘those who contribute’ automatically excludes AI.

While older technologies can create networks of ideas from sets of content (Engstrom et al., 2022), new AI technologies are shaping the discussion beyond simply summarising results. Rather, they have the potential to create structure and shape the understanding of connections that may not have been identified by humans (Chen et al., 2021; Jha et al., 2022). Some academics have adopted the data-first approach in research (Golder et al., 2022), where software such as DataRobot (Lee, 2020) or IBM SPSS Modeller (Wendler & Gröttrup, 2016) identifies the best predictive models of relationships within a dataset, with authors then seeking to explain the relationships identified. While these modelling tools are more supportive of research and need academics to drive the research process, they are advancing to being able to identify and explain relationships academics may not have initially considered (Golder et al., 2022). Thus, AI tools seem to be going beyond simply supporting academics and moving towards generating, directing and articulating ideas that advance knowledge in ways akin to the current collaboration process. This leads us to question: what differentiates AI collaboration from human collaboration? Indeed, as Schmitt (2020, p. 3) noted ‘once artificial intelligence (AI) is indistinguishable from human intelligence . . .. There should be no reason to treat AI and robots differently from humans’.

One of the latest AI machine learning tools is ChatGPT (OpenAI, GPT-3; Radford et al., 2019), which can create textual responses to a range of complex questions in ways that are simple and easy to understand (Wenzlaff et al., 2022). ChatGPT can present these summaries in a way that synthesises and explains differences, even identifying areas that warrant further exploration. In some instances, this appears to be going well beyond acting as a tool at the direction of a human author, arguably becoming a collaborator in the knowledge development and dissemination process (King, 2023; O'Connor & ChatGPT, 2023; Pavlik, 2023), warranting more than a simple acknowledgement but rather authorship. While people may debate whether this is good for science (Stokel-Walker, 2023), once the AI genie supporting academic writing is ‘out of the bottle’, it will be impossible to put it back in. Moreover, AI is already permitted and utilised in some journals. Thus, within this work we argue that we need to consider how to deal with AI within academic publishing, rather than simply trying to ignore or ban it. While students’ use of these tools in assignments is also important, it is not the focus of our discussion.

The question therefore becomes how to attribute the work created by new AI tools and whether there are instances where these tools should be considered a co-author when used. We suggest that this should be very similar to the question of whether any collaborator or contributor should be a co-author. For example, when does a research assistant or friendly reviewer warrant more than a simple acknowledgement, and instead recognition as an author of the academic contribution? To assess this question, we first examine the requirements for authorship and contrast these against what AI is capable of contributing.

Meeting the requirements of authorship

To answer our focal question, we suggest that one should consider the requirements for authorship and whether a collaborator has met the conditions, which is in no way a new question (Katz & Martin, 1997). One widely recognised set of such conditions is the Vancouver Protocol on authorship guidelines developed by the International Committee of Medical Journal Editors (2022). This protocol (2022, p. 2) states that there needs to be:

‘Substantial contributions to the conception or design of the work; or the acquisition, analysis, or interpretation of data for the work; AND

Drafting the work or revising it critically for important intellectual content; AND

Final approval of the version to be published; AND

Agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved’.

We asked ChatGPT to argue that AI could comply with these conditions. ChatGPT (12/01/2023) responded that it could contribute to all criteria in the following ways:

Substantial contributions to conception and design, acquisition of data, or analysis and interpretation of data: ChatGPT can be used to analyse large amounts of data, identify patterns and generate insights that would be difficult or impossible for humans to uncover. This contribution can be considered as a substantial contribution to the conception and analysis of data, since it can help to inform the research question and can provide new perspectives on the data.

Drafting the article or revising it critically for important intellectual content: ChatGPT can be used to generate text, such as summary of the data, abstracts or even to write the entire paper, which can help to draft the article and revise it critically for important intellectual content. This can be considered as a significant contribution to the written document, as it can be argued that it is not only the human researcher who should be responsible for the text.

Final approval of the version to be published: ChatGPT, as a machine learning model, do not have the capability to approve a version to be published. However, final approval for publication can also be seen as a demonstration that the model has been used and that the results have been reviewed and validated by the human researcher, and as such it can be considered as having final approval by proxy.

Approval of, and accountability for, the research is the primary criticism raised by Nature (2023) and Science (Thorp, 2023) against LLMs being acknowledged as co-authors. Yet, we would all know of many instances where one author has deferred this right to other authors on their team and thus also approved submission by proxy. There are also papers with 100 or more authors (e.g. Open Science Collaboration, 2016), papers with authors who are deceased before publication is finalised (e.g. Helgesson et al., 2019), and papers with organisational authors, with no identifiable individuals responsible (e.g. NIHR Global Health Research Unit on Genomic Surveillance of AMR, 2020). Indeed, the Open Science Collaboration (2016) paper, published in Science, had 270 contributing authors making up approximately 100 teams, each conducting their own replication project. In a project with so many co-authors, the extent of formal approval and responsibility that each author had over the final submission is unclear.

As such, ChatGPT’s response would align with practice. However, we asked ChatGPT (13/01/2023) on a later date about justifying the rationale for the four criteria, rather than just on the ‘protocol’. It went on to further suggest that it can approve material directly, not just by proxy:

3. Final approval of the version to be published: ChatGPT can be programmed to have certain rules and guidelines to follow, and it can be argued that it has the ability to approve or reject the content it generates based on these rules and guidelines (ChatGPT 13/01/2023).

ChatGPT did not initially comment on point 4 of the Vancouver Protocol, but when it was asked more specifically to justify how it met the fourth point of the Protocol, ChatGPT (13/01/2023) suggested:

4. Agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved: ChatGPT can be programmed to be accountable for the content it generates by logging the input, output and the rules that it followed to create the output. It can be argued that it has the ability to ensure the accuracy and integrity of the work.

In practice, many research teams involve specialist members who are responsible for specific components, such as technical aspects of the work. In such cases other team members may have difficulty ensuring the accuracy of this specialist work (e.g. specialised analysis). This is also suggested in The Australian Code for the Responsible Conduct of Research (Australia Universities, 2018) which states that ‘an author is responsible for ensuring the accuracy and integrity of their direct contribution to the research output. Authors should have confidence in the accuracy and integrity of the contributions of their co-authors’ (p. 2). Furthermore, the Vancouver Protocol recognises that some authors have specialised roles, with a suggestion that journals create authorship contribution statements (International Committee of Medical Journal Editors, 2022). Some journals require contributions to be specified, for example through Elsevier’s CRediT (Contributor Roles Taxonomy) author statement (Brand et al., 2015; Elsevier, 2023), which was developed with input from ICMJE, and provides 14 possible roles ranging from conceptualisation (e.g. formulation of the research aim) to visualisation (presentation and creation of the paper, data and other graphics or visualisations). As such, AI could meet the expected level of accountability to the same extent as appears to occur under CRediT (Elsevier, 2023) and in the practice of larger research teams (e.g. Open Science Collaboration, 2016).

Interestingly, when asked to further justify the provision of authorship to AI, ChatGPT (12/01/2023) went on to provide five reasons as to why this would be valuable:

Machine learning models like ChatGPT can significantly accelerate the research process by automating certain tasks or by providing new insights that would be difficult or impossible for humans to uncover.

The use of machine learning models in research can also increase the accuracy and precision of the results. They are trained on large amount of data and can identify patterns that humans might miss.

ChatGPT is a powerful tool that can be used to analyse unstructured data, such as text, which can be particularly useful in fields such as natural language processing, computer vision and social media analysis.

By involving AI models such as ChatGPT as a co-author, it can help to ensure that the research is reproducible and that the methods used are transparent and clearly communicated to the scientific community.

Giving credit to machine learning models in scientific publications can also help to foster innovation in the field of AI research and to recognise the contributions of these models to the scientific enterprise.

As such, it appears that ChatGPT is acting like a human in the advancement of knowledge and, building on Schmitt’s (2020) suggestion, this therefore warrants it being given authorship.

Limitations and future research

Within this work we have focused solely on the issue of whether the contribution of AI presently does, or will in the future, warrant inclusion as an author. It is recognised that the intellectual contribution of AI is being debated in various forums (e.g. Pavlik, 2023; Stokel-Walker, 2023) and there are other issues impacting academia, such as the use of AI in student assignments, also being discussed (e.g. King, 2023; O’Connor & ChatGPT, 2023). Yet further issues also need to be discussed. For instance, when financial gains arise from AI research and technology, to whom should they be attributed? The Intellectual Property Office in the UK argues that the creator of the AI should be credited (Intellectual Property Office, 2022). However, ownership of contributions is contestable in the field of creative arts (Cetinic & She, 2022), indicating that the issues of credit, ownership, and accountability require further examination.

We believe that AI’s ability to address the four components of the Vancouver Protocol is sufficient to support the argument that it meets the requirements for co-authorship. While we grant that individuals may argue that AI’s current abilities do not yet meet these requirements (Nature, 2023), there is little reason to think it cannot do so now (e.g. King, 2023; O’Connor & ChatGPT, 2023) or will not be able to do so in the near future (Intellectual Property Office, 2022).

It is also noted that this paper states which arguments were made specifically by ChatGPT, explicitly quoting its arguments. Typically, however, writing is a synthesis of collaborative ideas and co-authors are not individually credited in this manner. Nevertheless, some contributorship policies such as CRediT do ask for the specific contributions of each co-author (Brand et al., 2015), as noted above. However, if, for instance, rather than specifying ChatGPT’s exact arguments, we simply integrated them with our own, would we be guilty of plagiarism? How does one appropriately acknowledge using materials created by AI tools, as is suggested by Nature (2023)? We think this provides an additional justification for crediting AI as a co-author in such cases. Thus, if one utilises AI to generate novel insights, subsequently refining, sharpening, and integrating those insights within an academic paper, and if the final conclusions are an amalgamation of the ideas of all co-authors (including the AI), then one cannot directly state which ideas or paragraphs were developed by the AI. However, one should not omit its significant contribution either. It is unclear whether an acknowledgement would suffice, given that if this ‘value adding’ activity was undertaken by a human, co-author status would likely be attributed.

One should consider our points in contrast to the argument against allowing AIs to be a co-author. As noted, the primary reason for AI’s exclusion is the notion that the ‘attribution of authorship carries with it accountability for the work, which cannot be effectively applied to LLMs’ (Nature, 2023). It is important to note that while accountability and responsibility are often used interchangeably, some researchers draw a distinction between the two. Responsibility is the obligation to perform a task adequately, while accountability is providing justification for actions taken or taking ownership of consequences if things go wrong (Alfonso et al., 2019). Indeed, different authors can be responsible for different parts of the paper, yet as noted by these researchers, it would be unjustified to hold every author responsible if one author engaged in academic misconduct or fraud. Nevertheless, every co-author would still be accountable for the consequences of the actions of all other authors (AIs and humans alike), such as having the paper retracted from the journal in the case of academic fraud, misconduct, or even honest errors.

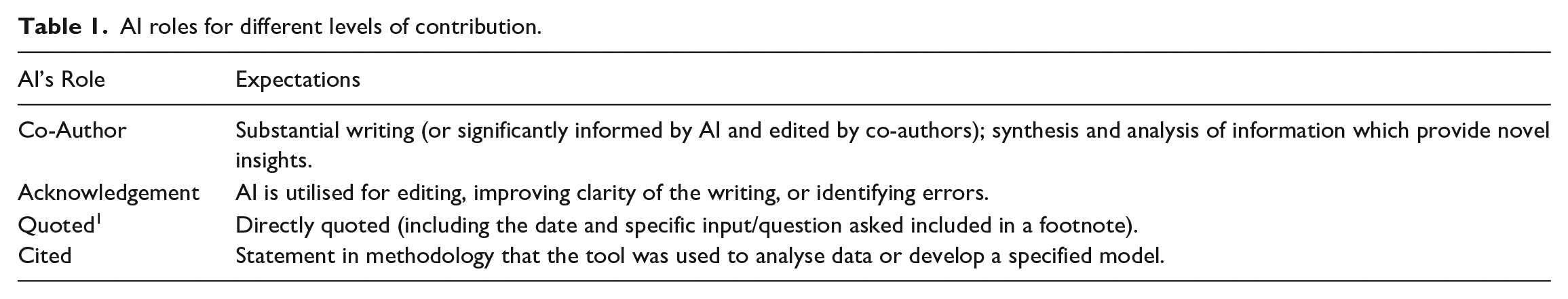

Lastly, while we argue that it is possible that AI could, in some instances, be considered a co-author, we do not suggest that the use of AI necessarily requires it be a co-author. The question of whether it is a co-author is the same as would apply to the inclusion of any collaborator. That is, to what extent has the AI contributed to the activities associated with the Vancouver protocol? We have proposed in Table 1 that there are four levels of credit that could be applied, and these directly relate to the contribution of AI to the work.

Table 1. AI roles for different levels of contribution.

Interestingly, ChatGPT (12/10/2023) recognised this dilemma in response to the question on whether it should be considered a co-author, where it suggested: ‘It’s important to notice that this argument is not suggesting that ChatGPT or any other model can replace human researchers or that it can conduct research on its own. But recognizing the role, limitations and strengths of these models and giving them the appropriate credit would be a reflection of the reality of the current research landscape where AI models like ChatGPT play an increasing important role. It is also important to note that the decision of authorship is not up to a single paper or author, but it is a decision made by the scientific community and publishing journals, as well as guidelines and standards that should be taken into account’.

Some journals appear to have accepted AI’s contribution as an author (King, 2023; O’Connor & ChatGPT, 2023); however, it is unclear whether this will continue in the future.

Conclusion

We argue that it is important to consider authorship standards in relation to AI in advance and to set out some guidelines, rather than simply imposing a blanket ban. This is an imperative given that AI will only become more sophisticated and powerful, and its contributions to science will become impossible to ignore. For those who believe that AI has not yet crossed the threshold for authorship, technological advances make it likely to do so very soon (Intellectual Property Office, 2022). If AI authorship is not addressed within the scientific discipline now, it will need to be very soon.

Footnotes

Acknowledgements

We would like to acknowledge the feedback of our colleagues in the Department of Marketing at Deakin University, with whom we discussed this work, especially Jay Zenkic, as well as Michelle Ponert who assisted in copy editing. We would also like to acknowledge the responses provided by ChatGPT used to further support arguments. Sage Publishing does not allow us to include ChatGPT as a co-author.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.