Abstract

Background

Emotion recognition ability is essential for social cognition, enabling humans to interpret and respond to emotion-related cues. However, so far, it is not known how underlying cognitive deficits, face processing and emotional intelligence are associated with emotion recognition, particularly in patients with mild cognitive impairment (MCI).

Objective

To examine emotion recognition performance in patients with MCI and the associations between emotion recognition, face processing, emotional interference, and emotional intelligence.

Methods

Sixty participants (30 patients with MCI and 30 healthy controls [HC]), aged 50–86 years (M = 66.8, SD = 8.66), completed the Emotion Composite Task (ECT), Facial Composite Task (FCT), and Emotion Stroop Task. Emotional intelligence (EI) was assessed using the Trait Emotional Intelligence Questionnaire (TEIQUE).

Results

Overall, patients with MCI performed worse on the ECT than healthy controls (β = −0.36,

Conclusions

Although overall emotion recognition performance did not significantly differ between groups, patients with MCI showed selective impairments in recognizing anger. Face processing and emotional intelligence were associated with better emotion recognition, suggesting that patients with MCI who have stronger perceptual and socio-emotional skills preserve their emotion recognition abilities more effectively.

Keywords

Introduction

Emotions determine how we respond to immediate challenges that affect our survival or matters that concern our overall well-being. 1 Despite there being various cultural and social factors that influence emotional experiences and their expression, several studies support the notion of six universally recognized emotions, namely: anger, fear, happiness, sadness, disgust, and surprise. 2 To understand the emotions that another person is expressing, we need to be able to process, perceive, and interpret the cues that are available to us. 3 Important, but not exclusive, emotional signals are conveyed through nonverbal facial expression cues (for example, raising eyebrows in anger). 4 Therefore, to interact successfully with our peers, emotion recognition, the ability to perceive and categorize emotions, and the ability to infer people's internal states are needed.

While emotion recognition impairments are well documented in dementia and particularly in Alzheimer's disease (AD),5,6 only a few studies have highlighted these deficits in mild cognitive impairment (MCI). According to Petersen et al.,7,8 MCI is a syndrome in which individuals report a cognitive complaint and show greater cognitive decline in domains such as attention, language, memory, executive functioning, and visuospatial construction than other individuals of the same age group and educational level. 9 It is, however, important to note that patients with MCI, in contrast to patients with dementia, are still capable of independently performing their daily activities. 7 Compared with healthy controls, patients with MCI have been shown to have impairments in emotion recognition, 10 especially in recognizing negative emotions such as anger, fear, and sadness. In addition, a reduced ability to recognize emotions is a significant problem for the patient's caregiver and can contribute to caregiver burden.11,12

A reasonable explanation is that patients with MCI tend to have deficits in cognitive domains that influence their performance in several tasks. These deficits overlap with the domains that play a major role in emotion recognition, such as memory, attention, language, executive functioning, and visuospatial construction, 13 leading to deficits in recognizing emotions accurately. In addition, emotional intelligence, which includes the ability to perceive, understand, and manage emotions, has been identified as an important factor in social interactions. Emotion recognition is considered a key component in this framework. Hildebrandt et al. (2015) 14 state that the ability to perceive and remember emotional expressions is a key aspect of emotional intelligence, suggesting that deficits in this ability may not only affect social functioning but also indicate impairments in broader cognitive abilities.

Based on the available literature, there is evidence of changes in patients with MCI, suggesting that they may have difficulty in processing different facial features, 15 which raises questions about their ability to integrate these features into a coherent whole. This process is known as configural face processing and is needed for a holistic representation of the face. 16 It is widely agreed that we process most objects based on their individual parts, 16 whereas face perception, and especially emotion recognition, relies heavily on understanding the spatial relationships between different facial features. 17 For example, when we describe someone as happy, we often focus on the smile of the individual, which involves the mouth as well as other features such as the eyes and the cheeks. Hence, deficits in configural face processing may contribute to emotion recognition difficulties in patients with MCI. However, it remains unclear whether structural changes are specifically causing deficits in emotion recognition or whether they reflect broader impairments in face processing. To understand the nature of emotion recognition deficits in MCI in association with overall face processing, it is essential to address this question at the behavioral level first.

Thus, this study aims to gain a deeper understanding of the emotion recognition abilities of patients with MCI compared to healthy controls (HC). Unlike previous research, we include both an emotion recognition task and a face processing task to bridge the gap between recognition of emotional and non-emotional faces, given that the two were shown to be highly associated in younger individuals. 14 This approach allows us to investigate whether impairments in MCI are specific to emotional expressions or reflect broader difficulties in face processing, thus addressing a critical gap in existing studies. 18 In addition to the facial stimuli (both emotion and non-emotion related), we used word stimuli (both emotion and non-emotion related) to assess how emotional interference is associated with the ability to focus on a primary task such as reading and comprehension, and thereby to gain insight into emotion-cognition interactions in language processing and their alterations in MCI.

The behavioral assessment battery included the Emotion Composite Task (ECT), Facial Composite Task (FCT), and Emotion Stroop Task to measure emotion recognition, face processing, and emotional interference (Emotional Stroop Effect, ESE), respectively. We expect that (1) patients with MCI will show reduced emotion recognition performance compared to HC, indicating that patients with MCI have a reduced ability to attend to emotional cues. Furthermore, we expect that (2) in patients with MCI, the association of face processing with emotion recognition will be stronger than in HCs. Finally, we expect that (3) higher emotional interference from emotional words is associated with lower emotion recognition in patients with MCI, but less so in HCs. The associations of additional predictors such as age, gender, emotional intelligence, and neurocognitive test scores in domains such as memory, attention, executive function, and language with emotion recognition were investigated across groups.

Methods

The current study investigates two main groups of individuals: HC and patients with MCI. The study was pre-registered (https://osf.io/yn3gp). Reporting follows the TREND Statement Checklist in Supplemental Table 1. 19

Participants

The study was carried out in the Department of Psychology at the University of Oldenburg, Germany, from October 2023 until December 2024. Patients were recruited at the Evangelisches Krankenhaus Oldenburg. A total of 60 participants belonging to the age range of 50 to 86 years (

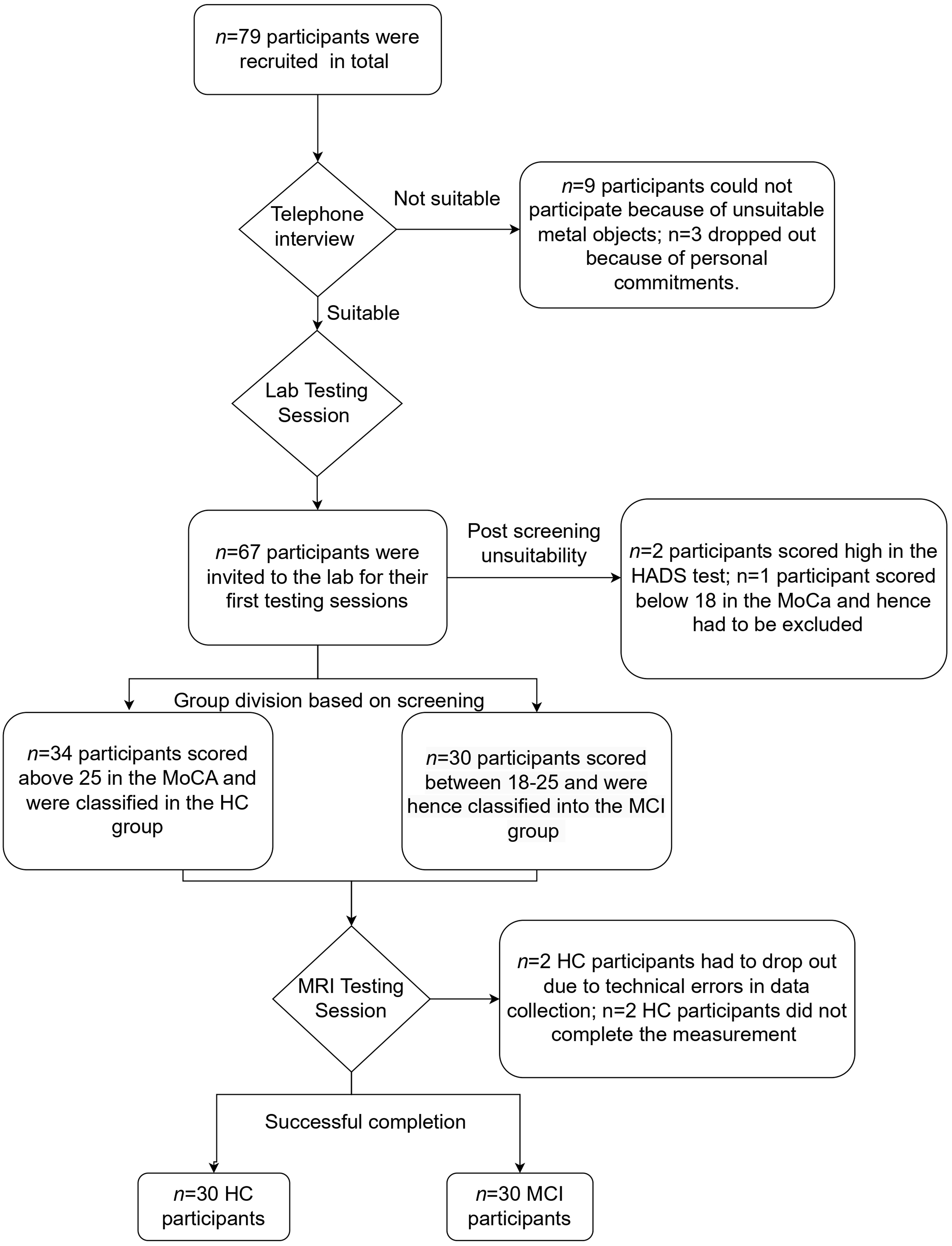

The inclusion criteria required that participants were 50 years of age or older, were fluent in German, had no known psychiatric or neurological disorders, and had no substance dependency in the last 6 months. Exclusion criteria were based on scores on the Instrumental Activities of Daily Living (IADL) 20 questionnaire (participants had to score 6 points or above to indicate that they could function independently) and on cut-off scores on the Hospital Anxiety and Depression Scale (HADS), 22 which were set at 8 points for HCs and at 11 points for patients with MCI. 23 These cut-offs were used to rule out existing anxiety and depression that could alter cognitive task performance. Further, participants were excluded if they had an existing dementia diagnosis or if they had implanted devices or metal objects that were not suitable for the magnetic resonance imaging machine. Although this study also collected MRI data, this paper focuses on the behavioral task results. The MRI results that explore the structural and functional brain characteristics of emotion recognition in MCI are reported elsewhere. A detailed summary of the flow of participation is shown in Figure 1.

Participant recruitment process from the screening stage through the testing stages.

Procedure

During the initial recruitment phase, we conducted telephone interviews with potential participants to check their eligibility. Participants who met the criteria were invited to the lab for the first testing session. In this session, a written informed consent form was presented, and the participants signed it once they were fully informed about the procedure. This session included neuropsychological assessments and an emotional intelligence questionnaire; the details of these tests are described below in the materials section. Next, the Facial Composite Task (FCT), the Emotion Composite Task (ECT), and the Emotion Stroop Task were conducted, all of which were administered via a computer. Finally, the participants were invited to the MRI session.

Materials

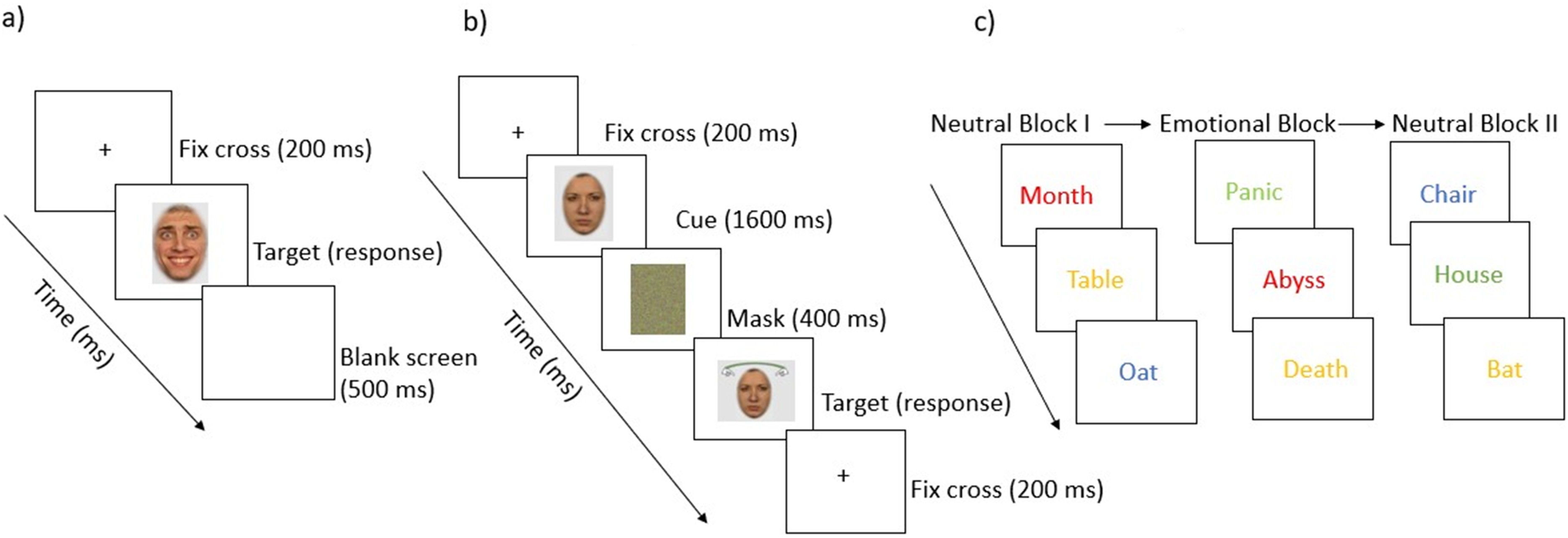

Overview of the experimental tasks: a) Emotion Composite Task, b) Facial Composite Task, and c) Emotional Stroop Task.

The task was specifically designed to ensure that participants attended to both halves of the face and were able to switch their attention to the half where the hands were displayed. Between trials, a black screen was displayed for 500 ms. Participants could respond using marked keys to indicate whether they thought that the displayed half of the face was the same or different from the half of the face that was shown first.

The task started with a training round to let participants familiarize themselves with the key mapping. The training block consisted of 20 neutral words and was followed by the main task. First, participants were presented with 20 neutral words in different ink colors; these words are known to induce no emotional arousal (e.g., tree, hat). Following the first neutral block, 20 emotional words were presented in different ink colors (e.g., abyss, crisis). Finally, a second block of 20 neutral words was presented. The words appeared on a neutral background, and participants had to respond via keys associated with the color of the words, namely, red, blue, green, or yellow. A break of 3000 ms was incorporated between blocks, and a break of 600 ms between the trials within a block. Once a stimulus was displayed, the reaction time was measured, i.e., the amount of time the participant took to identify the color and respond using the keys (see also Figure 2). Participants who were color blind (n = 2) did not take part in this task, and their values were marked as missing in the dataset. This task was intended to measure the Emotional Stroop Effect (ESE), which refers to “the phenomenon that the emotional information of stimuli will delay the reaction of participants when they are asked to respond to the non-emotional information in a task”.32,33 In simpler terms, the ESE reflects the longer reaction time needed to name the ink colors of emotional words than to name the ink colors of neutral words and is calculated by subtracting the reaction times of the emotional block from those of the first neutral block.
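The ESE computation described above can be illustrated with a minimal sketch. Several details here are assumptions rather than the authors' procedure: the reaction times are invented, each block is summarized by its mean, and the function name `emotional_stroop_effect` is hypothetical. The sketch also uses the sign convention of emotional minus neutral, so that slowing on emotional words yields a positive value; the subtraction order given in the text would produce the same magnitude with the opposite sign.

```python
from statistics import mean


def emotional_stroop_effect(neutral_rts_ms, emotional_rts_ms):
    """Return mean emotional-block RT minus mean first-neutral-block RT (ms).

    Assumption: blocks are summarized by their mean, and a positive value
    indicates slower color naming for emotional words.
    """
    if not neutral_rts_ms or not emotional_rts_ms:
        raise ValueError("both blocks need at least one reaction time")
    return mean(emotional_rts_ms) - mean(neutral_rts_ms)


# Hypothetical reaction times in milliseconds, invented for illustration only.
neutral_block_1 = [640.0, 655.0, 610.0, 695.0]
emotional_block = [700.0, 720.0, 690.0, 730.0]

ese_ms = emotional_stroop_effect(neutral_block_1, emotional_block)
print(f"ESE = {ese_ms:.1f} ms")
```

With these invented values, the emotional block is slower on average than the neutral block, so the interference score is positive.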

Statistical analysis

Statistical analysis was conducted using R Software for Statistical Computing (v4.1.2; R Core Team 2021). Descriptive summary statistics were calculated for continuous variables such as age, education, and test scores (CERAD, MoCA, EI, and TEIQUE scores), and independent

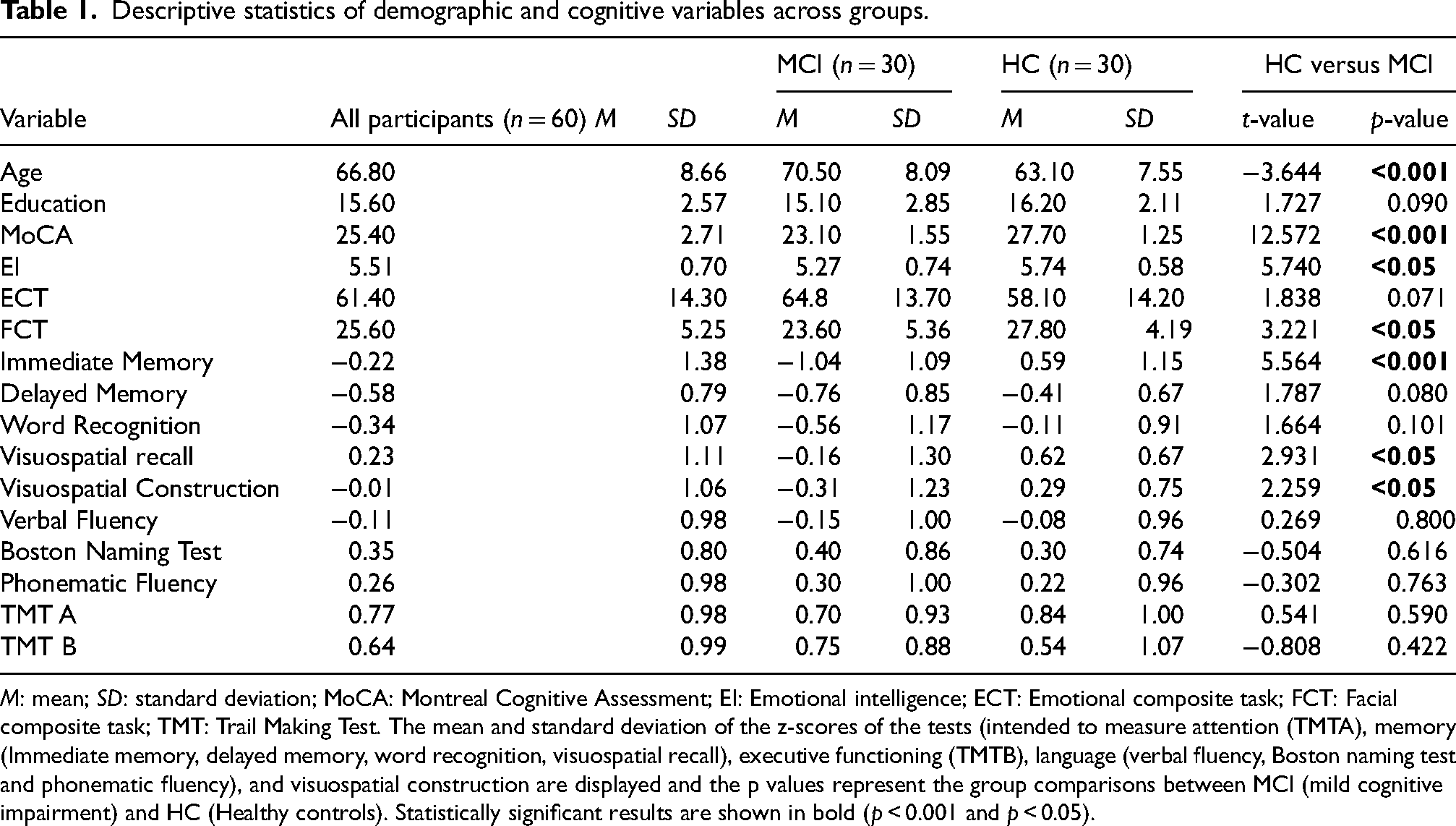

Descriptive statistics of demographic and cognitive variables across groups.

For hypothesis testing, we estimated several one-level general linear regression models and multilevel models in a stepwise approach. The one-level linear regression models were used to examine the overall group differences, while the multilevel models were used to account for within-subject differences, such as recognizing specific emotions. For hypothesis 1, we tested whether patients with MCI exhibit reduced emotion recognition performance compared to the HCs. To examine group differences (HC versus MCI), we estimated a categorical regression model with group as the main categorical predictor and ECT scores as a continuous outcome variable. To test group differences in recognizing different emotion categories, we estimated a multilevel model that included an interaction between emotion category and group membership. Dummy coding was applied with happiness as the reference (coded zero on all coding variables). For the binary grouping variable, the HC group was coded with 0 and thus served as the reference category, whereas the patients with MCI were coded with 1. For hypothesis 2, we postulated that the association of face processing with emotion recognition will be stronger in HCs than in patients with MCI. To test this hypothesis, we further estimated a multilevel model that included FCT scores as a predictor, along with the interaction between FCT and group, and between FCT and the emotion category coding variables. For hypothesis 3, we postulated that higher emotional interference to emotional words will be associated with lower emotion recognition in patients with MCI than in HCs. To test this, we estimated a multilevel model that included the ESE interference effects as a predictor, along with the interaction between ESE and group, and between ESE and the emotion category coding variables. All analyses included age as a covariate to control for group differences in age. To control for multiple comparisons, all

As an exploratory analysis, we further estimated a series of one-level general linear regression models to examine the associations between EI, cognitive test

Results

Descriptive statistics

The demographic information and cognitive scores of the entire sample, including distinctive group characteristics, are summarized in Table 1. Patients with MCI were significantly older and had a lower MoCA score than HCs. Additionally, the HCs outperformed the patients with MCI in a wide range of cognitive domains tested.

Analysis

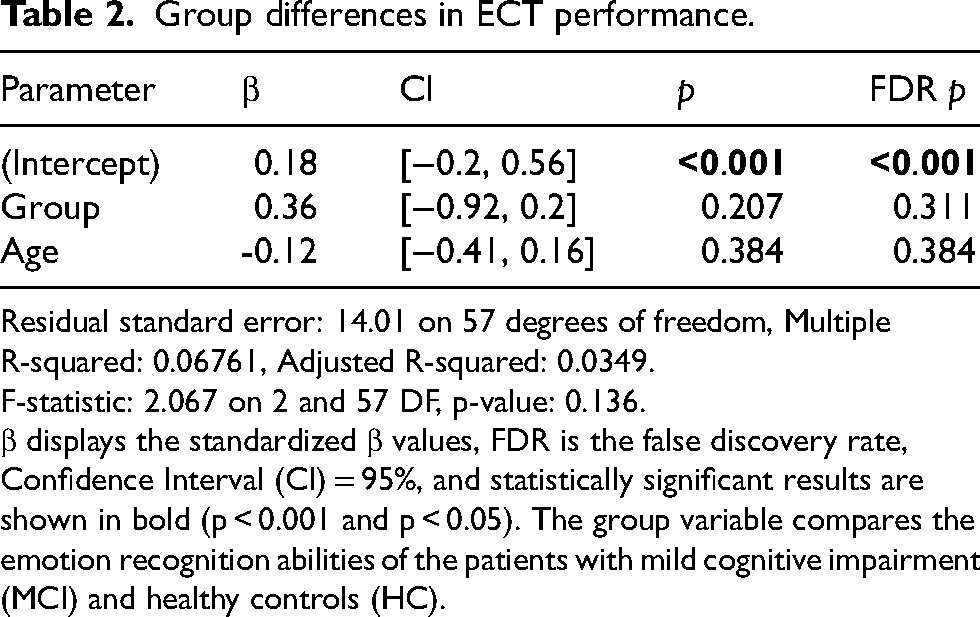

Group differences in ECT performance.

Residual standard error: 14.01 on 57 degrees of freedom, Multiple R-squared: 0.06761, Adjusted R-squared: 0.0349.

F-statistic: 2.067 on 2 and 57 DF, p-value: 0.136.
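As a consistency check on the model summary above, the adjusted R-squared and the F-statistic can be recomputed from the multiple R-squared and the degrees of freedom. The sketch below uses the standard formulas for ordinary least squares regression; the sample size of 60 and the two predictors are read off the reported "2 and 57 DF", and the function names are hypothetical.

```python
def adjusted_r2(r2, n_obs, n_predictors):
    """Adjusted R-squared for an OLS model with an intercept."""
    return 1.0 - (1.0 - r2) * (n_obs - 1) / (n_obs - n_predictors - 1)


def f_statistic(r2, n_obs, n_predictors):
    """Overall F-statistic for an OLS model with an intercept."""
    df_resid = n_obs - n_predictors - 1
    return (r2 / n_predictors) / ((1.0 - r2) / df_resid)


# Values taken from the reported summary: R-squared 0.06761, 2 and 57 DF.
r2, n_obs, n_predictors = 0.06761, 60, 2

adj = adjusted_r2(r2, n_obs, n_predictors)
f_value = f_statistic(r2, n_obs, n_predictors)
print(f"adjusted R-squared = {adj:.4f}, F = {f_value:.3f}")
```

Both recomputed values agree with the reported 0.0349 and 2.067 to the precision given, which suggests the summary line was transcribed consistently.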

β displays the standardized β values, FDR is the false discovery rate, Confidence Interval (CI) = 95%, and statistically significant results are shown in bold (p < 0.001 and p < 0.05). The group variable compares the emotion recognition abilities of the patients with mild cognitive impairment (MCI) and healthy controls (HC).

A multi-panel violin plot showing a) Emotion-specific performance on the emotion composite task (ECT) across groups (HC and MCI), b) Facial composite task (FCT) score distribution across groups (HC and MCI), c) Emotional intelligence (EI) global score distribution across groups (HC and MCI).

Emotion-specific performance across groups.

Random Effects: σ2 = 10.00, τ00 ID = 3.78, ICC = 0.27, N ID = 60, Observations = 360, Marginal R2 / Conditional R2 = 0.498 / 0.635.
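The random-effects summary above can be related to its components with a short sketch. It assumes that the reported ICC is the usual intraclass correlation of a random-intercept model, i.e., the between-participant variance divided by the total variance, and that the observation count reflects six emotion categories per participant; the function name is hypothetical.

```python
def intraclass_correlation(tau00, sigma2):
    """ICC for a random-intercept model: between-subject / total variance."""
    return tau00 / (tau00 + sigma2)


# Variance components as reported for the emotion-specific model.
icc = intraclass_correlation(tau00=3.78, sigma2=10.00)

# Six emotion categories per participant (happiness plus five dummy-coded ones).
n_participants, n_emotions = 60, 6
n_observations = n_participants * n_emotions

print(f"ICC = {icc:.2f}, observations = {n_observations}")
```

Under these assumptions, roughly a quarter of the variance in emotion-specific scores lies between participants, which matches the reported ICC of 0.27 and the 360 observations.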

β displays the standardized values, FDR is the false discovery rate, Confidence Interval (CI) = 95%, and statistically significant results are shown in bold (

FCT and group interaction.

σ2 = 10.25, τ00 ID = 2.89, ICC = 0.22, N ID = 58*, Observations = 348, Marginal R2 / Conditional R2 = 0.522 / 0.627.

β displays the standardized β values, FDR is the false discovery rate, Confidence Interval (CI) = 95%, and statistically significant results are shown in bold (p < 0.001 and p < 0.05). FCT represents the scores of the Facial composite task and Emotions (anger, sadness, fear, surprise and disgust) represent the individual scores on the Emotion composite task. The group variable compares the emotion recognition abilities of the patients with mild cognitive impairment (MCI) and healthy controls (HC). The interaction between each FCT and the groups was used to assess the contribution of FCT across groups in recognizing emotions. The interaction between each emotion and the groups was used to assess whether the recognition of individual emotions differed and the level to which FCT contributes to it. *n = 2 participants did not complete the FCT task due to technical errors and their missing values were accounted for in R.

ESE and ECT performance.

σ2 = 10.54, τ00 ID = 3.74, ICC = 0.26, N ID = 58*, Observations = 348, Marginal R2 / Conditional R2 = 0.492 / 0.625.

β displays the standardized β values, FDR is the false discovery rate, Confidence Interval (CI) = 95%, and statistically significant results are shown in bold (p < 0.001 and p < 0.05). ESE represents the scores of the Emotional Stroop Effect and Emotions (anger, sadness, fear, surprise, and disgust) represent the individual scores on the Emotion composite task. The group variable compares the emotion recognition abilities of the patients with mild cognitive impairment (MCI) and healthy controls (HC). The interaction between each ESE and the groups was used to assess the contribution of ESE across groups in recognizing emotions. The interaction between each emotion and the groups was used to assess whether the recognition of individual emotions differed and the level to which ESE contributes to it. *n = 2 participants did not complete the ESE task due to color blindness, and their missing values were accounted for in R.

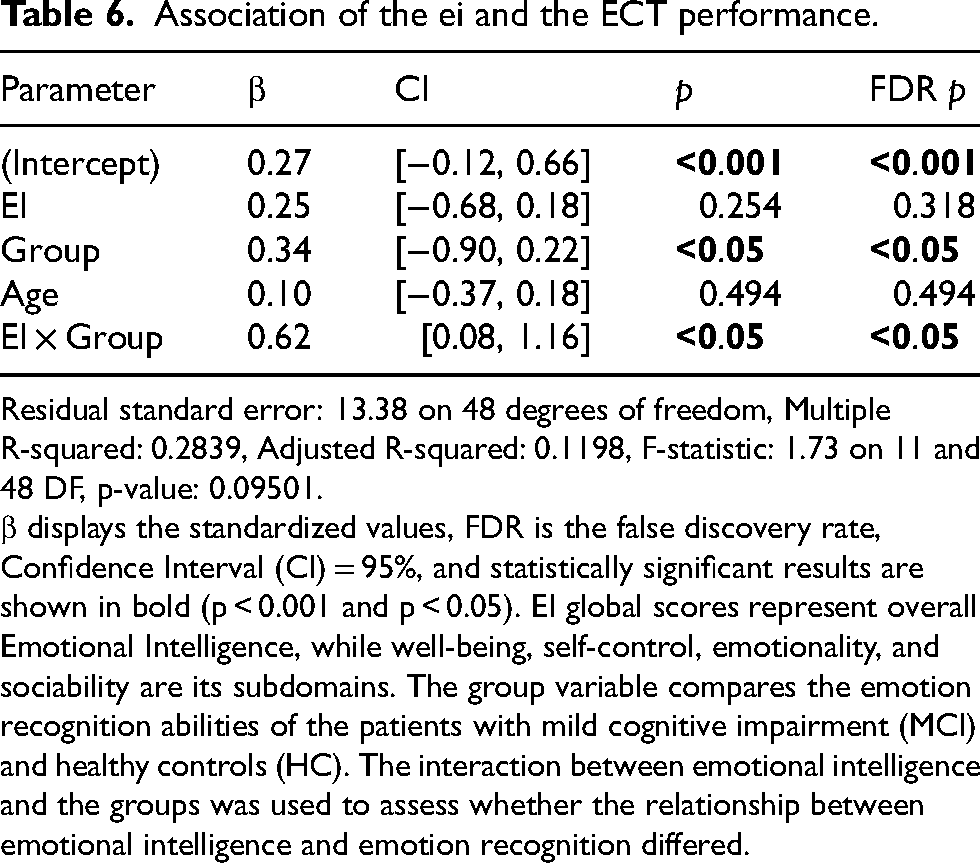

Association between EI and ECT performance.

Residual standard error: 13.38 on 48 degrees of freedom, Multiple R-squared: 0.2839, Adjusted R-squared: 0.1198, F-statistic: 1.73 on 11 and 48 DF, p-value: 0.09501.

β displays the standardized values, FDR is the false discovery rate, Confidence Interval (CI) = 95%, and statistically significant results are shown in bold (p < 0.001 and p < 0.05). EI global scores represent overall Emotional Intelligence, while well-being, self-control, emotionality, and sociability are its subdomains. The group variable compares the emotion recognition abilities of the patients with mild cognitive impairment (MCI) and healthy controls (HC). The interaction between emotional intelligence and the groups was used to assess whether the relationship between emotional intelligence and emotion recognition differed.

Discussion

The aim of this study was to examine deficits in emotion recognition abilities of patients with MCI compared to the HCs and to determine whether these deficits could be predicted by several factors, including face processing abilities, emotional intelligence, emotional interference, cognitive abilities, and demographic factors. This study adds new insights into emotion recognition in MCI and links it to underlying cognitive mechanisms such as face processing and emotional intelligence.

Emotion recognition deficits in MCI

Consistent with the literature, our results indicate that patients with MCI have lower emotion recognition than HCs. While the overall difference in performance did not reach statistical significance, emotion-specific analysis revealed that patients with MCI had significantly lower accuracy in recognizing anger and reduced accuracy in recognizing disgust. Previous studies have shown that emotion recognition deteriorates as subjective cognitive decline progresses to MCI and AD. 34 Interestingly, although most studies have reported that patients have difficulties with both positive and negative emotions, most of the impairments lean toward negative emotion recognition.10,34,35 One explanation for the greater impairment in negative than in positive emotion recognition could be the asymmetrical representation of negative and positive emotions in tests, where there are four negative emotions (sadness, fear, anger, and disgust) and only two positive emotions (happiness and surprise). 34 A second explanation could be that the recognition of negative emotions requires more fine-grained discrimination of visual cues, for example, furrowed brows for anger and widened eyes for fear, whereas positive emotions such as happiness are often easier to recognize. 34 A third explanation could be the structural and functional changes that are often associated with neurodegenerative disorders, for example, reduction in the volume of the amygdala and prefrontal cortex, which play a key role in emotional processing. 36 Research has shown that this impairment is greater for negative emotions, 37 which is consistent with the understanding that one of the primary functions of these regions involves threat detection, including the regulation of fight or flight responses to stimuli encountered in daily life.38–40 Given that the recognition of negative emotions is essential to help us identify threats and avoid misinterpretations, deficits in this area could lead to an increase in interpersonal conflict and potentially contribute to caregiver burden. 11

The association of face processing and emotion recognition

One of the findings of our study was that face processing was not significantly associated with overall emotion recognition. However, the interaction between face processing and emotion showed that better face processing skills were associated with better anger recognition in both groups. This suggests that difficulties in recognizing specific emotions may be related to configural face processing, in which attention to specific facial regions is integrated into a holistic representation of the face, regardless of cognitive status. For example, the mouth is more relevant for recognizing happiness, and the eyes are more relevant for recognizing sadness and anger. 41 The ECT, which was designed to examine the recognition of emotions from different halves of the face, further supports this notion. That is, the finding that patients with MCI showed greater difficulty in recognizing anger presented in the upper half of the face suggests that impairments in face processing, particularly the inability to attend to all facial features, may hinder their ability to detect subtle emotional cues, which could lead to challenges in their daily life.

The association of cognitive functions and emotion recognition

Another finding was that most of the neurocognitive domains (language, verbal memory, executive function, attention, and visuospatial construction) were not significantly associated with emotion recognition after FDR correction. These findings suggest that general cognitive abilities do not play a major role in explaining variability in emotion recognition, at least not within this sample.

The association between emotion recognition and verbal memory tests was not significant, and this was consistent with previous research suggesting that verbal memory does not necessarily impair emotion recognition. 42 Even though the association between language and emotion recognition was not significant in our study, prior research has shown that language can support labeling emotions; 41 however, interpreting facial emotions may be highly non-verbal, and hence, it may only be partially dependent on a participant's language proficiency. 43 Attention and executive functioning, as measured by the Trail Making Tests A and B, respectively, did not significantly predict emotion recognition, despite results from prior research which highlight an association between these cognitive domains and emotion recognition.44,45 One possible explanation is that, although these tasks involve executive control, they also rely on lower-level cognitive processes 46 such as visual scanning and set-shifting. 47 The absence of an association in our study may therefore reflect the multifactorial nature of this task rather than a lack of executive involvement in emotion recognition. Given that the performance of patients with MCI was comparable to that of the HCs across the cognitive domains in our study, we can rule out the influence of broad cognitive decline as a contributor to emotion recognition. Instead, emotion recognition difficulties in MCI may stem from more specific impairments in socio-emotional or perceptual processing rather than from generalized cognitive dysfunction.

The association of emotional interference and emotion recognition

The study also revealed a significant association between emotional interference as measured by ESE reaction times and emotion recognition. Interestingly, this effect occurred independent of the general cognitive factors, which were not significant predictors in our models, hence highlighting the role of the automatic emotion-related processes that can affect perceptual tasks despite the absence of traditional top-down attentional models. 48 The emotion-specific analysis suggested that disgust was less affected by the overall negative effect of ESE, which may be due to its evolutionary attachment to social norms and repulsion, which allows attention to the recognition of this emotion even in high-interference situations. 49 However, at the group level, the results suggest that the association of interference with emotion recognition was similar in both groups, suggesting that while heightened interference is associated with lower emotion recognition, the impairments in patients with MCI could be explained by facial processing and emotional intelligence deficits rather than by interference alone.

The association of emotional intelligence and emotion recognition

Finally, our study showed that emotional intelligence (EI) is significantly associated with emotion recognition in patients with MCI. One interpretation is that emotional awareness enhances sensitivity to facial expressions and attention to emotional cues, especially since emotional expressions are known to capture attention more effectively than neutral ones. 50 Previous research also shows that people who can connect their emotions to their thoughts are better at understanding the emotions of others. 51 Moreover, individuals with high EI may be particularly attuned to subtle differences in emotional expressions, enabling them to perceive emotions more accurately by integrating multiple facial features. 52 These findings suggest that deficits in EI may underlie difficulties in emotion recognition in MCI. Consequently, future studies and interventions should consider enhancing emotional awareness and emotion regulation in patients with MCI.

Strengths and limitations

The study contributes to the existing literature on the importance of emotion recognition and the influence of an individual's cognitive status on this ability, particularly in patients with MCI. The study has several strengths. First, it examines cognitive predictors and their contribution to emotion recognition while distinguishing between the performance of patients with MCI and healthy controls. Second, it highlights the importance of holistic face processing in emotion recognition, which is consistent with previous studies that point to common neural pathways between face identity recognition and emotion recognition. 53 These strengths could aid clinical efforts to develop targeted interventions that assist patients with MCI in their interactions with others.

While the study provides valuable insights into emotion recognition deficits in patients with MCI, its limitations should be acknowledged. First, in our initial analysis plan, an a priori power analysis suggested that 74 participants were needed. Due to recruitment constraints, specifically difficulties in recruiting patients with non-amnestic MCI (naMCI), we conducted a post hoc analysis and concluded data collection with 60 participants. While the sample size was sufficient for the preliminary analysis, future studies should include a more diverse sample (e.g., both amnestic MCI [aMCI] and naMCI) to strengthen the generalizability of the findings. Second, caregiver burden data were collected using the Zarit Burden Interview (ZBI) 12 to explore how the patient's emotion recognition deficits contributed to caregiver burden. However, this variable was not included in the final analysis because the data showed minimal variability, as no participant scored more than a few points on the scale. Future studies should consider using a broader approach to capture caregiver burden in different contexts.

Future directions

The results of this study provide several avenues for future research aimed at addressing emotion recognition deficits in patients with MCI. Our use of composite face images that presented emotions on different halves of the face provided a controlled approach for examining how patients with MCI process emotions from different facial regions. Future research could explore whether videos (dynamic facial changes) better reflect real-world social interactions, where emotions unfold over time. 34 Comparing results obtained from static and dynamic stimuli could provide more insight into the emotion recognition deficits of patients with MCI.

In terms of clinical intervention, one promising direction, for example, is the use of Ambulatory Assessment (AA), which could pave the way for assessing and incorporating emotion recognition training in the participant's natural environment. In addition, AA could be used to create mobile applications that prompt individuals to practice recognizing facial features, such as eyes and mouth, that are most salient for certain emotions. 54 Such real-time interventions could improve emotion recognition, particularly for patients with MCI who have difficulty recognizing complex negative emotions, which require detailed processing of different facial regions.

Conclusion

In summary, our study provides evidence that the emotion recognition deficits in MCI are particularly pronounced for negative emotions such as anger and are linked to impairments in face processing. Furthermore, our study demonstrated an association between EI and emotion recognition in patients with MCI, highlighting the need for further exploration of socio-emotional factors in aging, especially in populations with cognitive decline. Future research should assess the impact of impaired emotion recognition on social interaction in an everyday context and aim to develop interventions, for example, using AA, to support emotion recognition abilities in patients with MCI.

Supplemental Material

sj-docx-1-alz-10.1177_13872877251414969 - Supplemental material for Emotion recognition in patients with mild cognitive impairment: The role of face processing and emotional intelligence

Supplemental material, sj-docx-1-alz-10.1177_13872877251414969 for Emotion recognition in patients with mild cognitive impairment: The role of face processing and emotional intelligence by Rachana Mahadevan, Naomi Kristin Giesers, Thomas Liman, Karsten Witt, Andrea Hildebrandt and Mandy Roheger in Journal of Alzheimer's Disease

Footnotes

Acknowledgements

The authors would like to thank the staff at the Evangelisches Krankenhaus Oldenburg for their support in participant recruitment. We would also like to thank all our participants for taking part in our study.

Ethical considerations

Ethical approval for this study was obtained from the ethics committee of the University of Oldenburg “Kommission für Forschung Folgenabschätzung und Ethik” (AKZ 2023-062).

Consent to participate

Written informed consent was obtained from all participants prior to participation in the study.

Consent for publication

Not applicable.

Author contribution(s)

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data supporting the findings of this study are not publicly available due to patient confidentiality. Data may be available from the corresponding author upon reasonable request.

Supplemental material

Supplemental material for this article is available online.

References
