Abstract

It is well known that the media display an asymmetric reaction to real-world events, which results in the prioritisation of negative coverage. However, there is still much to discover regarding the qualitative distinctions between different media types and the dynamics of negativity. This article investigates how different media outlets framed Greece in evaluative terms during and after the Sovereign Bond Crisis relying on 12,376 articles published in the British press between 2009 and 2018. The study confirms earlier findings that the ‘negativity bias’ differs across media types in terms of the level of negative tone. In addition, the study’s significant contribution is to highlight the persistence of negativity. Fractional integration time series econometrics is employed to assess the extent to which tonality persists over time. As theorised, all the time series of tonality exhibit long-term memory. Moreover, some evidence is found of differentials in negativity persistence across media outlet types.

Keywords

Mass media play a critical role by bridging the informational gap between the electorate and the elected. From a normative standpoint, citizens need to know enough to effectively exercise their political rights (Eberl et al., 2017). Historically, the media have been citizens’ main source of information. Supplying citizens with balanced and objective information is a central responsibility of the media. As a vast and growing literature has shown, though, the media often fall short of these expectations (D’Alessio and Allen, 2000; Eberl et al., 2017). Against this backdrop, this article explores how generalist quality papers and specialist financial papers differ in terms of one specific kind of bias, that is, negativity (or tonality) bias. While the media’s tendency to report negative news (selection) and to report negatively about the news (tone) is a robust finding in the literature, much remains to be learnt about the qualitative differences between heterogeneous media types and the dynamics of tonality bias over time. This article focuses on negativity in the media during and after the Greek sovereign bond crisis. As a salient international issue over a long period of time, the ‘Greece topic’ lends itself well to the study of long-term dynamics in the media’s negativity. This is a particularly important topic at a time of ever-growing competition in increasingly globalised media markets, which might pressure media outlets to slant their coverage so that it appeals to, rather than challenges, their readers’ priors (Davis, 2006; Mullainathan and Shleifer, 2005).

The contributions of this article can be summarised as follows. First, previous findings about the level of negativity across outlet types (e.g. Boukes et al., 2022) are confirmed. Empirically, the analysis demonstrates that generalist papers reported on Greece using more negative language than specialised financial outlets. Second, an often-overlooked aspect of negativity – its persistence over time – is highlighted. Concepts and techniques from the econometrics literature are borrowed to suggest that the very concept of negativity bias implies the existence of a specific univariate time series property. In essence, since the media tend to emphasise negative events over positive ones, positive shocks fade away more quickly than negative ones, generating ‘long-term memory’ series, also known as fractionally integrated series. Through direct estimation of the fractional integration parameter, this article contributes to the literature by testing not only the level of negativity bias but also its persistence (memory) over time across different media types. The empirical results comport with the view that tonality in the media has long-term properties underappreciated in current scholarship. Moreover, the empirical tests suggest that there might be a difference in the persistence of negativity between financial papers and generalist papers, thus complementing previous findings on the differential levels of negativity across outlet types. Finally, this article contributes to the growing literature on the media–finance connection during the sovereign bond crisis by offering a more detailed depiction of how the media portrayed the Greek crisis (e.g. Nones, 2024).

Negativity bias in economic news

Much research in media studies focuses on the relationship between economic news coverage and economic conditions (Vliegenthart et al., 2021). One key concept in this literature is the so-called ‘negativity bias’, that is, the finding that the media are asymmetrically responsive to economic conditions. This is a particularly strong and consistent finding (Damstra et al., 2018; Soroka et al., 2015; Vliegenthart et al., 2021).

Several explanations have been proposed to account for this phenomenon. According to one perspective, negative coverage serves as a check on governments by exposing their policy failures to the public, while positive coverage does not fulfil this function (Damstra and Boukes, 2021). Another explanation is that references to negative events are generally perceived to make a news story more likely to be read in a cultural environment that views progress as the ‘normal and trivial thing that can pass unreported’ (Galtung and Ruge, 1965: 69–70). Consequently, negative news is more likely to be selected by journalists due to its inherent ‘surprisingness’ (Boukes and Vliegenthart, 2020). Finally, the psychological literature suggests that individuals tend to respond more strongly to negative stimuli than to positive stimuli (Rozin and Royzman, 2001).

Importantly, scholars have also explored the qualitative differences in negativity across media types (Boukes and Vliegenthart, 2020; Boukes et al., 2022; Lischka, 2014; Soroka et al., 2018). The most common explanation for differences in negativity deals with how the incentive structure of journalists differs across media types (Hamilton, 2004; Lischka, 2014). Two main cleavages have been found to be important: Popular/Tabloids vs Quality/Broadsheets; Generalist vs Specialist. This article focuses on the latter cleavage, which allows us to study the persistence of negativity over a longer period. 1

The decision whether to publish an article, as well as how to engage stylistically with the story, is a function of two main factors: first, the inherent characteristics of a story; second, expectations about its commercial value, that is, expectations about its target audience (Harcup and O’Neill, 2001). The inherent characteristics of a story remain consistent across different media types. However, outlets vary in how they assess a story’s commercial value and the strategies they use to enhance its marketability. In contrast to generalist papers, the target audience of specialist news outlets differs from the general population (Hallin and Mancini, 2004). In particular, the main goal of the consumers of financial media is to be informed about news upon which they will base their financial decisions (Davis, 2006). Indeed, financial newspapers have been found to write from a more international perspective (Allern, 2002) and to emphasise different news factors (Boukes and Vliegenthart, 2020). Clearly, financial outlets’ incentive is to engage with, rather than shy away from, complex topics that a more general audience would consider ‘dull’ (Manning, 2013: 179). For outlets targeting a relatively sophisticated and already interested audience, the same factors that would guarantee newsworthiness in mainstream media are perceived as unnecessary and redundant. Moreover, these outlets’ fortunes depend on being perceived as being as objective as possible, which results in a less overtly emotional and sensationalist style (Doyle, 2006). Hence, financial quality papers are likely to exhibit less negativity relative to their generalist quality counterparts. This leads to a straightforward hypothesis concerning the level of negativity across different outlet types:

Hypothesis 1 (H1). On average, at a given point in time, generalist papers’ coverage of Greece will display more negative language than financial quality papers’ coverage.

Negativity bias: Persistence

For fine ideas vanish fast/While all the gross and filthy last. 2

Since the seminal work of Galtung and Ruge (1965), scholars have emphasised the importance of negativity as a news value at a given point in time. This is what I refer to as negativity in levels. As suggested in Hypothesis 1, at any given moment in time we would expect generalist papers to display greater negativity than financial papers. As Galtung and Ruge noticed at the time, though, the value of a potential news item also depends on its history, which they label ‘continuity’ and I will refer to as ‘persistence’. 3 As Hollanders and Vliegenthart put it more recently, ‘news is news, [partly] because it was news yesterday’ (Hollanders and Vliegenthart, 2008: 48). Indeed, it is common for journalists to follow up on topics in a similar fashion as they did previously, also because it implicitly justifies the journalist’s prior decision (Harcup and O’Neill, 2001). Over time, while several scholars have proposed their own modifications to the original list, both negativity (in levels) and persistence have featured prominently in scholars’ accounts of news making (Dick, 2014; Harcup and O’Neill, 2001; Harcup and O’Neill, 2017).

While the level and the persistence of negativity are related, the two concepts are analytically distinct. Usually, the focus has been on the former with the latter relegated to a nuisance parameter that needs to be accounted for to make correct inferences. Empirically, the standard approach to model media tonality is to estimate single- or multiple-equation autoregressive models, usually Auto-Regressive Integrated Moving Average (ARIMA) or Vector Auto-Regressive (VAR) models (e.g. Van der Meer et al., 2022). These econometric models require weak stationarity, also known as second-order (or covariance) stationarity, which implies that the mean and covariance of the process remain constant over time, while allowing for short-term memory through autoregressive parameters and/or moving average error terms. 4 The standard practice in the literature is to test for stationarity, and first-difference the series if it contains a unit root. While methodologically sound, reliance on such models allows researchers to explore only short-term dynamics, thus obstructing a thorough investigation of longer-term dynamics.

To be sure, the scholarly literature in political communication has not disregarded temporal dynamics, as exemplified by the cross-lagged empirical approaches in the early agenda-setting research (McCombs and Shaw, 1972), the rolling cross-sectional analyses (Johnston et al., 1992), and the modelling of opinion dynamics using over-time shifts in news content (Fan and Tims, 1989). Nevertheless, ‘more often time has been set aside for the limitation section of cross-sectional studies’ (Wells et al., 2019: 3). Moreover, to the best of my knowledge, no paper to date has offered a theoretical justification for why we may expect media series to have long-memory properties. Indeed, the few papers that identify this possibility tend to treat it as a ‘nuisance’, that is, a statistical problem that needs to be fixed to preserve valid inference (Habel, 2012; Key, 2012; Lukito, 2020).

By contrast, the point raised here is not solely methodological but also substantive. As shown in a later section, we can leverage the univariate stochastic properties of the data to answer interesting questions in political communication. Indeed, as long as both the level and the persistence of negativity are of significance in news framing, a resulting series capturing news media’s tone is likely to derive from a distinct data-generating process that features interesting long-term memory properties. One such stochastic process is known in the econometric literature as fractional integration. 5 Fractionally integrated series possess two main characteristics (Box-Steffensmeier and Smith, 1996). First, they have less than complete persistence. Second, they result from the aggregation of underlying heterogeneous processes. I will discuss each characteristic in turn and in reference to the Greece topic.

The memory – or persistence – of a time series can be defined as the rate at which a process moves towards its stationary equilibrium after being perturbed by a shock (Box-Steffensmeier and Smith, 1998). Hence, a process can exhibit 1) short-term or no memory; 2) perfect memory 6 and 3) long-term memory. As mentioned, most studies in political communication (implicitly or explicitly) assume that a typical media time series can be characterised as having either zero/short memory or perfect memory. Only a few researchers have explored the possibility of long-term memory (Habel, 2012; Key, 2012; Lukito, 2020). On the one hand, if a time series is integrated of order 1, denoted I(1), it describes a non-stationary (or unit root) process. In this case, the persistence of the series is complete, that is, its memory is perfect. In our case, such a process seems unlikely as it would imply that the negative real-world shocks that characterised the Greek financial crisis led to a new (lower) plateau of negativity that would continue indefinitely (or at least until an equally sizable set of opposite shocks perturbs the series). Provided that the time series to be analysed is long enough to allow for a return to its long-term equilibrium, it seems unlikely that most time series typically used in the political communication literature would exhibit such behaviour. 7 On the other hand, if a time series is integrated of order 0, denoted I(0), it describes a stationary process. Any shock dissipates immediately (memoryless) or quickly (short-term memory) as the series returns to its mean equilibrium over time. A stationary time series with only static changes (no memory) as a function of real-world events may arise if journalists (and/or newspapers) interpreted new information about the topic in isolation from past coverage of the same topic. This also seems unlikely, given what we know about news persistence and journalistic practices.
Indeed, if previous coverage is an important factor in today’s news, we would expect any resulting series to exhibit some kind of temporal dependence. While the process might result in a stationary time series characterised by short-term memory, it should not be assumed to do so. Indeed, if the degree of persistence in news coverage tonality is high enough, short-term dynamics may not suffice in describing the stochastic properties of the series. In fact, less than complete dependence is also the first necessary (but not sufficient) condition for long-term memory processes, that is, fractional integration. A fractionally integrated series is still mean reverting (unlike in the non-stationary/unit root case), but shocks fade away more slowly than in the short-memory case (Lebo et al., 2000). If a series exhibits such long-term dependence, it is described as fractionally integrated, denoted I(d), where the order of integration d lies between the two extreme cases of perfect stationarity (d = 0) and perfect memory (d = 1).
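To make the three memory regimes concrete, the following minimal sketch computes how much of a unit shock survives after k periods under a pure I(d) process, using the standard recurrence for the weights of the fractional differencing filter, and contrasts it with a short-memory AR(1) process. The function names, the value d = 0.4 and the AR coefficient 0.5 are illustrative assumptions, not estimates from this article.

```python
def fi_impulse_response(d, horizon):
    """Share of a unit shock remaining after k = 0..horizon periods in an I(d) process.

    Uses the recurrence psi_0 = 1, psi_k = psi_{k-1} * (k - 1 + d) / k,
    i.e. the moving-average weights of (1 - L)^(-d).
    """
    weights = [1.0]
    for k in range(1, horizon + 1):
        weights.append(weights[-1] * (k - 1 + d) / k)
    return weights


def ar1_impulse_response(phi, horizon):
    """Share of a unit shock remaining after k periods in a short-memory AR(1)."""
    return [phi ** k for k in range(horizon + 1)]


# Illustrative comparison at a few lags (parameter values are assumptions).
for lag in (1, 10, 50):
    print(
        lag,
        round(fi_impulse_response(0.0, 50)[lag], 4),   # d = 0: no memory
        round(fi_impulse_response(0.4, 50)[lag], 4),   # 0 < d < 1: long memory
        round(fi_impulse_response(1.0, 50)[lag], 4),   # d = 1: perfect memory
        round(ar1_impulse_response(0.5, 50)[lag], 4),  # short memory
    )
```

With d = 0 the shock disappears after one period and with d = 1 it never fades. For intermediate d, the weights decline hyperbolically, so they eventually sit above the geometric decay of any stationary AR(1).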

While determining the degree of dependence is an empirical matter that can be tested, one also needs good theoretical reasons to expect a time series to exhibit long-term memory (Young and Lebo, 2009). This is where the second defining characteristic of fractionally integrated stochastic series comes in: such series typically derive from aggregating heterogeneous processes, a classic example of Granger’s Aggregation Theorem (Granger, 1980). 8 The best-known data generating process underlying fractional integration is the aggregation of different individual units with varying levels of stability in the characteristics under investigation (Box-Steffensmeier and Smith, 1998; Box-Steffensmeier and Tomlinson, 2000; Lebo et al., 2000). This point is particularly relevant in the case of economic news research. After all, the news topic ‘economy’ is the aggregation of a multitude of sub-topics (e.g. fiscal policy, unemployment, the bond market) which, while interconnected, may very well display different levels of serial dependence in the media for various reasons. For example, some newspapers might be more interested in reporting repeatedly on certain economic sub-topics because they speak to their core readers’ concerns.

A second 9 and less-known way in which long-term memory processes may arise, though, is by aggregating a series of shocks that persist for varying lengths of time. At any given period, the realised value of a series is the sum of those shocks that survive up to that point, and the distribution of the duration of each shock determines whether, and the degree to which, a series is fractionally integrated (Grant, 2015; Liu, 2000; Parke, 1999). Under this perspective, one may think of varying persistence after a shock in terms of a regime-switching data generating process. Depending on which of the two (or more) regimes is active, the persistence of the shock varies. In other words, the regimes affect the degree of persistence of the shocks in different ways (Liu, 2000). This is in contrast with ARIMA models, which assume (and constrain) all shocks to decay at a comparable rate. As Grant (2015) argues, while such reasoning may apply to aggregate data, it is not a requirement. In other words, the rationale described below for expecting differentials in persistence between positive and negative shocks may be a sufficient condition for fractional integration even for media series that are carefully constructed to avoid the aggregation of heterogeneous processes.
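The idea that the realised value of a series is the sum of the shocks still alive at that point can be sketched as follows. This is only a toy illustration of the verbal description above: the function name, the shock values and the durations are hypothetical, and the sketch does not reproduce the specific duration distributions under which the cited papers derive fractional integration.

```python
def aggregate_surviving_shocks(shocks, durations):
    """Build a series whose value at t is the sum of all shocks still alive at t.

    shocks[s] arrives in period s and survives for durations[s] periods,
    so it contributes to periods s, s + 1, ..., s + durations[s] - 1.
    """
    if len(shocks) != len(durations):
        raise ValueError("shocks and durations must have the same length")
    series = []
    for t in range(len(shocks)):
        total = 0.0
        for s in range(t + 1):
            if t < s + durations[s]:  # shock s has not yet expired
                total += shocks[s]
        series.append(total)
    return series


# Hypothetical example: the negative shock in period 1 outlives the positive ones.
shocks = [1.0, -2.0, 0.5, 0.0]
durations = [1, 3, 1, 1]
print(aggregate_surviving_shocks(shocks, durations))
```

In this toy example the long-lived negative shock keeps the series below zero well after the short-lived positive shocks have expired.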

Why may shocks to a typical media time series decay at varying rates, thus resulting in a fractionally integrated process? The previous discussion offers an intuitive explanation for why this might happen. Research on negativity suggests that the media report more, and more negatively, on negative real-world events relative to positive real-world events. In other words, negative shocks are stronger in absolute value than opposite and equivalent positive shocks. Hence, even under the conservative assumption that persistence applies equally to both positive and negative shocks, the relative prevalence of one over the other suggests that negative shocks will have longer duration than positive ones. This is because, even if the shocks decay at the same rate (i.e. persistence applies to both shocks equally), one type of shock is initially stronger than the other. Having moved further away from its mean because of a negative shock relative to an equivalent real-world positive shock, the series will take longer to revert to its long-term mean in the former case, even if the rate at which they move towards equilibrium is the same. Clearly, if persistence is stronger after a negative shock than it is after a positive shock (i.e. persistence does not apply to both shocks equally), the resulting process would be even more persistent. In the Greek Sovereign Bond crisis context, this view is consistent with previous studies that found that the media were quick to report negative judgements in their discussion of negative events, while they reported more slowly on the (positive) Greek reforms (Teschendorf and Otto, 2022).
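The conservative case in this argument (equal decay rates, unequal initial magnitudes) can be illustrated with a small calculation. The sketch below assumes simple geometric decay and counts the periods needed for a deviation to fall below a tolerance band around the mean. The decay rate, shock sizes and tolerance are arbitrary illustrative values.

```python
def periods_to_revert(initial_deviation, decay, tolerance=0.05):
    """Count periods until |deviation| drops below `tolerance` under geometric decay.

    Each period the deviation from the long-term mean is multiplied by `decay`.
    """
    if not 0.0 < decay < 1.0:
        raise ValueError("decay must lie strictly between 0 and 1")
    if tolerance <= 0.0:
        raise ValueError("tolerance must be positive")
    deviation = abs(initial_deviation)
    periods = 0
    while deviation >= tolerance:
        deviation *= decay
        periods += 1
    return periods


# Same decay rate, but the negative shock is assumed to be twice as large.
positive_duration = periods_to_revert(1.0, decay=0.8)
negative_duration = periods_to_revert(-2.0, decay=0.8)
print(positive_duration, negative_duration)
```

Even though both shocks decay at the same rate, the larger negative shock takes more periods to return to the neighbourhood of the mean.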

The above argument should apply across all media types, albeit possibly to different extents. As such, the second hypothesis is:

Hypothesis 2 (H2). On average, all series will exhibit neither short nor perfect memory, but long-term memory. In other words, they are fractionally integrated.

Nevertheless, and mirroring our previous discussion on the levels of negativity, different media types may also differ in terms of persistence. Indeed, Boukes and Vliegenthart (2020) suggest that what they refer to as continuity (see fn 3) is less important for financial newspapers relative to other types. Such specialised outlets do not have to demonstrate how the news of the day builds on yesterday’s news. While the authors apply this line of reasoning to the selection of topics, the underlying logic may hold for tonality as well. Indeed, such outlets speak to a more interested audience who consciously demand information for investment purposes. As such, financial journalists are more likely to select what to read based on the topic’s inherent value and to expect the story to convey precise, objective, and economically useful information (Eilders, 2006). In other words, economic news will be valuable to their audience anyway, and journalists’ need to ‘construct’ its perceived newsworthiness is diminished (Davis, 2006). These outlets’ audience is more likely to read the news in an instrumental fashion, that is, to gain information that would then specifically inform their investment decisions. An unnecessarily long negative spin, that is, excessive persistence notwithstanding changing economic factors, may be inefficient and even counterproductive as it might fail to inform its audience about positive developments that would have otherwise affected their financial decisions. The sophisticated and interested reader of financial news is more likely to be a ‘Bayesian reader’ who, driven by material self-interest, is looking for information to update their priors rather than to reinforce them (Mullainathan and Shleifer, 2002). As such, the third hypothesis concerns the persistence of negativity across different outlet types:

Hypothesis 3 (H3). On average, generalist papers’ coverage of Greece will display more persistent negative language than financial quality papers’ coverage.

Research design

The empirical focus is on the written media’s characterisation of the Greek economy since the onset of the Greek Sovereign Bond crisis (October 2009) until 2018, when Greece finally exited the third and last bailout programme. By focusing on a highly salient international issue that prominently figured in the press for many years, the effect of agenda-setting bias is minimised (Boukes et al., 2022). The articles are retrieved from the Factiva database. The decision to start in October 2009 is due to theoretical, practical, and econometric concerns. First, this is the time when the Greek government admitted that their predecessor had cheated and falsified economic data, thus catalysing financial investors’ concerns about the country’s finances. Second, and because of the above, too few articles on Greece were published prior to the beginning of the crisis, thus making it impossible to construct a meaningful weekly series. Third, it is well known that a series with structural breaks, especially those resulting in a shift in equilibrium, may be identified as a fractionally integrated process even if it is not (Granger and Hyung, 2004). Hence, all series will start in October 2009 to avoid the structural break.

To select the sample, an effort was made to balance the number of articles from the financial press and the quality press. The focus was on high-circulation daily newspapers, which are more likely to have reported extensively on Greece for a sufficiently long period. Long time series with no missing values are needed to test for fractional integration and estimate the degree of persistence (H2 and H3) (Keele et al., 2016). The Financial Times is selected as the premier daily financial British paper. Regarding the quality press, the Daily Telegraph, the Guardian, and the Times were selected. These are often referred to as ‘the big three’ British quality papers. They offer meaningful variation across the ideological spectrum, with the Guardian considered left-of-centre, the Daily Telegraph conservative, and the Times right-of-centre-leaning. Written news articles, both in print and online formats, are included. To prevent double-counting of identical articles published in both formats, the ‘exclude similar duplicates’ option in the Factiva database is applied.

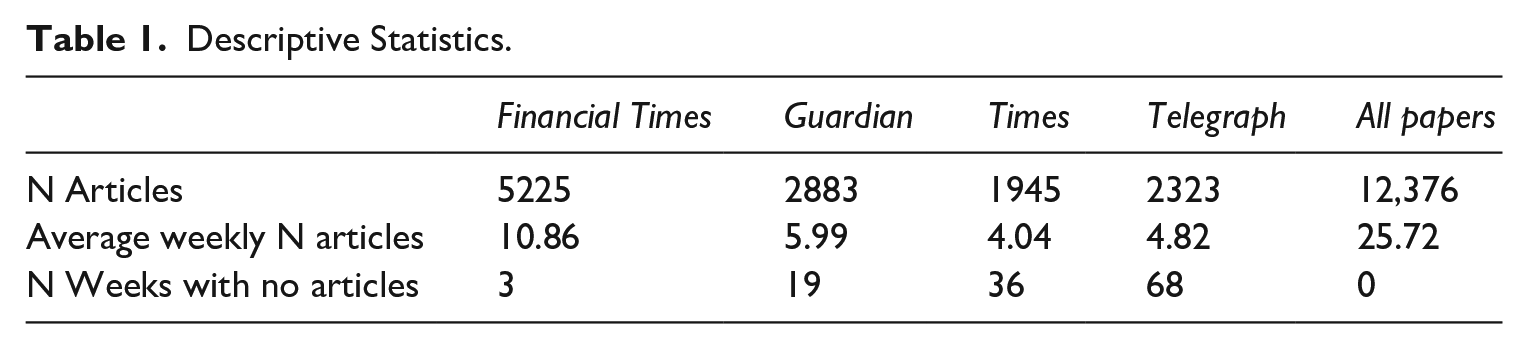

The data collection phase is informed by the need to identify enough relevant articles to construct a long time series at the weekly level. There is a trade-off between precision and coverage. The initial search criteria consisted of the following: 1) at least three mentions of the country or its population, or the country’s adjective (such as Greece, Greek, Greeks); 2) at least three mentions of economics or related words (such as econom*). Unfortunately, this search criterion resulted in too many missing values for the low-salience periods (e.g. late 2013 and 2014). As such, I relaxed the search criteria to include only one mention of Greece/Greek/Greeks (while still requesting three mentions of economics-related words). A manual examination of a random sample of articles from each newspaper confirmed that the selection criteria were sufficient. 10 Table 1 gives some descriptive information about the sample. As we can see, the number of articles is roughly balanced across media types, with the Financial Times accounting for 45% of all articles in the sample and the quality papers combined comprising the remaining portion. The Financial Times published roughly 11 articles a week, on average, followed by the Guardian (6), the Telegraph (5), and the Times (4).

Table 1. Descriptive statistics.

Tonality in written texts is captured using a standard dictionary-based approach. The use of a dictionary is a classic example of a ‘bag-of-words’ technique, where we simply tally the occurrences of words in a predetermined lexicon. Following best practice in the literature, tonality is quantified via a dictionary specifically designed by Loughran and McDonald (2011) to measure sentiment in financial documents and newspaper articles. Before the introduction of Loughran and McDonald’s (2011) word lists, scholars relied on sentiment dictionaries from fields like psychology and sociology to assess the tone of financial texts. However, these sentiment dictionaries proved to have notable limitations when applied to financial documents, given that numerous terms classified as positive or negative from a general or psychological perspective may not carry the same meaning or connotation when used in an economic context. While the original dictionary list was designed to analyse financial texts concerning the private sector (e.g. firms, industries), it is also considered the benchmark measure in the literature on sovereign bond markets (e.g. Consoli et al., 2021; Liu, 2014).

The main analysis presents results using the finance-specific Loughran-McDonald dictionary. 11 To ensure robustness, an additional dictionary – the general-purpose Hu-Liu (Hu and Liu, 2004) – is also employed. In the economic news literature, the results from the Loughran-McDonald (LM) and Hu-Liu (HL) dictionaries are often compared to verify the robustness of the findings (Kalamara et al., 2022; Shapiro et al., 2022). The results using the HL dictionary are consistent with those shown in the paper and can be found in the Online Supplemental Appendix. After estimating the positive and negative loadings of each article, the sentiment score is calculated as follows: 100 × (# positive words – # negative words)/total word count. 12 The resulting metric encompasses both the direction and magnitude of the article’s sentiment. Higher scores indicate more positive sentiment, and scores below zero indicate net negative sentiment.
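The scoring formula can be sketched in a few lines. The word lists below are tiny hypothetical stand-ins rather than the actual Loughran-McDonald or Hu-Liu lexicons, and the tokenisation rule and the treatment of empty texts (a score of zero) are assumptions made for illustration only.

```python
import re

# Hypothetical toy lexicons; the real dictionaries contain far more entries.
POSITIVE_WORDS = {"gain", "improve", "recovery"}
NEGATIVE_WORDS = {"crisis", "default", "loss"}


def sentiment_score(text, positive=POSITIVE_WORDS, negative=NEGATIVE_WORDS):
    """Return 100 * (# positive words - # negative words) / total word count."""
    tokens = re.findall(r"[a-z']+", text.lower())  # simple lower-case tokenisation
    if not tokens:
        return 0.0  # assumption: an empty text is scored as neutral
    n_positive = sum(token in positive for token in tokens)
    n_negative = sum(token in negative for token in tokens)
    return 100.0 * (n_positive - n_negative) / len(tokens)


example = "Greece faces default and crisis despite recovery hopes"
print(sentiment_score(example))
```

In the example sentence, one positive and two negative matches among eight tokens give a net score below zero.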

Once each article score is aggregated at the weekly level, the issue of missingness arises. As described in a later section, a reliable estimation of persistence requires long time series. For this reason, it was decided to aggregate the series weekly rather than monthly. At the same time, though, a series’ persistence cannot be estimated if any observation is missing. As Table 1 shows, all outlets failed to publish on Greece for at least some weeks (out of 481 weeks). Both the Times and the Daily Telegraph contain several missing weeks. For this reason, H2 and H3 are tested using only the FT, the Guardian, and a combined measure of the three quality papers’ tonality scores. Even by doing so, a few observations remain missing in the Financial Times (3 weeks), the Guardian (19 weeks), and the combined broadsheet series (not shown in the table, 4 weeks). Interpolating missing data with the common time series strategies would bias my results in undesirable ways. Indeed, interpolation strategies for time series data take advantage of the temporal dependence of the data to estimate the missing counterfactual. Hence, doing so would artificially inflate the temporal dependence in the data prior to estimating it empirically. By construction, it would become easier to reject the null hypothesis when the null is true (Type I error), thus making it easier to find confirmation of H2. Worse still, since generalist papers (the Guardian and the three combined) contain more missing weeks than the Financial Times, the artificially inflated temporal dependence would be larger in the former cases than in the latter. Thus, it would be easier to find confirmation of H3 as well. To avoid this, the conceptually opposite strategy is employed. To fill each missing week, a value is drawn at random from a normal distribution with the mean and variance set to those of each series’ empirical distribution. 13 In essence, this strategy entails adding white noise (stationary by definition) in a way that biases the results against my hypotheses. By artificially disrupting the local temporal dependence of the series around the missing weeks, it becomes harder to find evidence in favour of H2. By the same logic mentioned above, since there are more missing weeks in the case of generalist papers, more (stationary) noise is added to those cases relative to the financial papers, thus making it harder to find evidence in favour of H3.
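A minimal sketch of this fill-in strategy is given below, assuming that missing weeks are coded as None and that the sample mean and sample standard deviation of the observed weeks define the normal distribution. The function name, the seed and the toy series are hypothetical; this is not the article's own code.

```python
import random
import statistics


def fill_missing_with_noise(series, seed=0):
    """Replace missing weeks (None) with independent draws from N(mean, sd).

    The mean and standard deviation are taken from the observed weeks only,
    so the filled values carry no information about neighbouring weeks.
    """
    observed = [value for value in series if value is not None]
    mean = statistics.mean(observed)
    sd = statistics.stdev(observed)  # needs at least two observed weeks
    rng = random.Random(seed)        # fixed seed for reproducibility
    return [value if value is not None else rng.gauss(mean, sd) for value in series]


# Hypothetical weekly tonality scores with two missing weeks.
weekly_scores = [-1.2, None, -0.8, -1.5, None, -0.3]
print(fill_missing_with_noise(weekly_scores))
```

Because each draw is independent of its neighbours, the filled weeks behave like white noise, unlike interpolated values, which borrow from adjacent observations.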

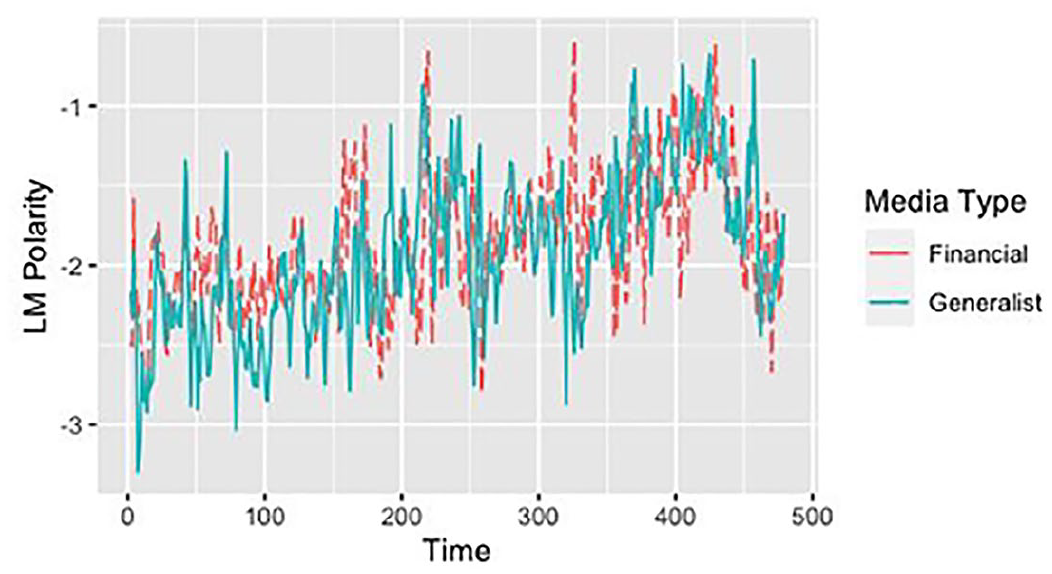

Figure 1 shows the resulting series for financial and generalist outlets (the average across the three generalist outlets). To facilitate interpretation, I smooth the two series with a 3-month rolling average. Supplemental Figure 3A in the Appendix shows the graph relying on the HL Dictionary.

Figure 1. Sentiment score across financial and generalist outlets (weekly average).

It is important to keep in mind that the absolute scores may lack a meaningful interpretation. This is primarily because it is not possible to form strong prior expectations regarding the ‘benchmark’ sentiment in written texts during ‘normal’ times (Nones, 2024). On the one hand, the negativity bias causes news media to exhibit a preference for negative news, leading to an increase in negative tone. On the other hand, the ‘negative’ and ‘positive’ dictionaries are not necessarily equal in the number of words in each list. Even if the two lists were entirely balanced, it is still possible that natural languages are inherently biased. In fact, the English language seems to be positively biased (Kloumann et al., 2012).

Analysis

Levels of negativity

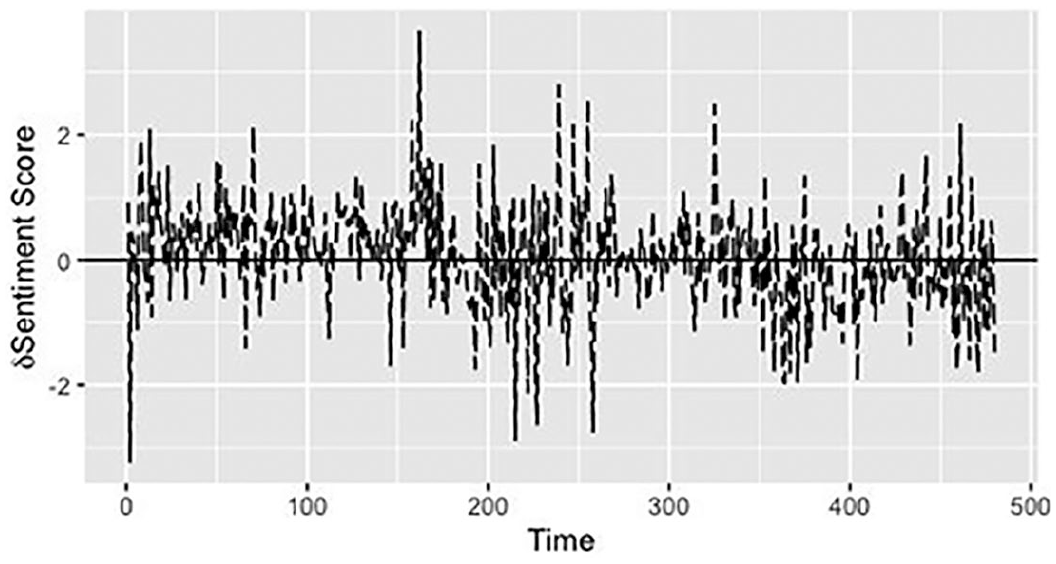

This section explores the relationship between the levels of negativity and media types in two ways. First, the difference in sentiment scores between financial papers and the average for quality papers is calculated for each week (Figure 2). The figures are overlaid with a horizontal line at zero, representing the neutrality point. Most observations (56%) are above the zero line, thus indicating that the Financial Times tends to display a less negative tone than generalist papers most of the time. The Online Supplemental Appendix contains the graphs comparing only the Guardian to the FT and using the HL dictionary (for both all generalist papers together and the Guardian separately). The FT displays a more positive tone 53%, 61% and 62% of the time, respectively (see Supplemental Figures 4A–6A).
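The weekly comparison can be sketched as follows, assuming two equally long lists of weekly average sentiment scores. The function name and the toy values are hypothetical and are not taken from the article's data.

```python
def share_weeks_ft_more_positive(ft_scores, generalist_scores):
    """Share of weeks in which the financial series scores above the generalist one."""
    if len(ft_scores) != len(generalist_scores):
        raise ValueError("both series must cover the same weeks")
    differences = [ft - gen for ft, gen in zip(ft_scores, generalist_scores)]
    return sum(diff > 0 for diff in differences) / len(differences)


# Hypothetical weekly averages for four weeks.
ft_weekly = [-1.0, -0.5, -2.0, -0.2]
generalist_weekly = [-1.5, -0.4, -2.5, -0.9]
print(share_weeks_ft_more_positive(ft_weekly, generalist_weekly))
```

A share above 0.5 corresponds to most observations lying above the zero line in the difference plot.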

Difference in sentiment score across financial and generalist outlets (weekly average).

While the above analysis suggests that the financial press is less frequently negative than the generalist press, it is silent regarding the extent to which each outlet’s articles differ in tonality. To do that, the hypothesis is tested more rigorously in a regression framework with the following model:

The sentiment score is regressed on the media type, controlling for year-month-week fixed effects (

OLS regression – difference in tone across media types.

*p < 0.10, **p < 0.05, ***p < 0.01.

By and large, hypothesis 1 seems confirmed. As the issue becomes salient in late 2009, generalist papers exhibit a more negative tone than financial outlets. This differential in negativity is visible both in terms of frequencies (the number of months when one media type is more negative than the others) and in a regression framework with each article as the unit of analysis. As shown in Table 5A in the Online Supplemental Appendix, the results are similar when negativity is measured with the alternative dictionary. 16
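For readers who want to see the mechanics of an article-level regression with time fixed effects, the following sketch uses the within transformation: sentiment and a financial-outlet dummy are demeaned within each week, and the slope is computed on the demeaned data. The variable names and the toy rows are hypothetical, and the sketch assumes sentiment is the outcome; it is not the article's specification or data.

```python
from collections import defaultdict


def within_estimator(rows):
    """OLS slope on a media-type dummy with week fixed effects.

    rows: iterable of (week_id, is_financial, sentiment).
    Demeaning within week is numerically equivalent to including one
    dummy per week in the regression.
    """
    groups = defaultdict(list)
    for week, fin, tone in rows:
        groups[week].append((float(fin), float(tone)))
    sxy = sxx = 0.0
    for obs in groups.values():
        mx = sum(x for x, _ in obs) / len(obs)
        my = sum(y for _, y in obs) / len(obs)
        for x, y in obs:
            sxy += (x - mx) * (y - my)
            sxx += (x - mx) ** 2
    return sxy / sxx


# Hypothetical articles: (week, 1 if financial outlet, sentiment score).
articles = [
    ("2010-w01", 1, -0.2), ("2010-w01", 0, -0.5), ("2010-w01", 0, -0.3),
    ("2010-w02", 1, -0.1), ("2010-w02", 0, -0.4),
]
beta = within_estimator(articles)
```

A positive coefficient in this toy setup would mean that, within the same week, articles in the financial outlet score higher (less negative) than articles in generalist outlets.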

Persistence of negativity

As discussed in the theory section, negative and positive shocks may be of varying duration, thus resulting in a fractionally integrated time series. Moreover, the system of incentives of different media types suggests that financial newspapers should function as more ‘neutral’ conveyors of information than generalist papers. In other words, they should update their tone more quickly and resist the temptation to stick with the previous negative narrative if economic conditions do not warrant such negativity anymore. To explore the univariate properties of the series (i.e. its persistence), several unit root, stationarity, and long-range dependence tests are estimated. Then, the degree of fractional integration is formally estimated. As mentioned before, this part of the analysis leverages only the three series with few missing values, that is, the FT, the Guardian, and the weekly series comprising the three quality papers combined.

To begin with, the univariate properties of the variables are analysed. Any statement about the degree of ‘memory’ in the series would be meaningless if the series were unequivocally stationary (memoryless) or non-stationary (perfect memory). Table 3 shows the results of multiple unit root, stationarity, and long-range dependence tests. 17 By investigating the patterns of rejection that result from using tests with different null hypotheses, we can obtain information about whether a series is likely to be fractionally integrated. Rejection of both null hypotheses – that of stationarity and that of a unit root – is consistent with the idea that the process under investigation is fractionally integrated (Baillie et al., 1996). The results in Table 3 (and Table 6A in the Online Supplemental Appendix) point in that direction. The null of a unit root is rejected in all tests, but so is the null of stationarity, in all specifications and under different assumptions. Importantly, the two tests explicitly designed to detect long-term memory – the classic and modified rescaled-range (R/S) tests – reject the null of no long-range dependence. An alternative way to gather evidence in favour of fractional integration is to inspect the autocorrelation function of the first-differenced series (Young and Lebo, 2009). The intuition is simple. Assume that the series is fractionally integrated such that d = 0.3. Upon first-differencing the variable, the resulting series becomes of order d = 0.3 − 1 = −0.7. Such a series will have an anti-persistent component that did not exist prior to taking the first difference. The autocorrelation function of the transformed series will then display a large negative autocorrelation at the first lag, whereas none existed in the original series. As Supplemental Figure 7A in the Appendix shows, this is indeed the case.
Overall, the combined evidence points strongly towards fractional integration and suggests that we should proceed to estimate the fractional parameter d directly in order to diagnose the level of integration, that is, the memory, of each series (Box-Steffensmeier and Tomlinson, 2000; Clarke and Lebo, 2003).
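The first-differencing intuition can be checked with a small simulation. The sketch below generates fractional noise with d = 0.3 from a truncated moving-average expansion of (1 − L)^(−d), takes first differences, and compares the lag-1 autocorrelations. For a pure fractional noise process the theoretical lag-1 autocorrelation is d / (1 − d), so roughly 0.43 for d = 0.3 and roughly −0.41 for d = −0.7. The truncation length, sample size, seed, and function names are choices made for this illustration only.

```python
import random


def ma_weights(d, n_weights):
    """Coefficients of (1 - L)^(-d): psi_0 = 1, psi_k = psi_{k-1} * (k - 1 + d) / k."""
    w = [1.0]
    for k in range(1, n_weights):
        w.append(w[-1] * (k - 1 + d) / k)
    return w


def simulate_fractional_noise(d, n, n_weights=500, seed=1):
    """Approximate ARFIMA(0, d, 0) draw via a truncated MA(infinity) filter."""
    rng = random.Random(seed)
    eps = [rng.gauss(0.0, 1.0) for _ in range(n + n_weights)]
    w = ma_weights(d, n_weights)
    return [
        sum(w[k] * eps[t - k] for k in range(n_weights))
        for t in range(n_weights, n + n_weights)
    ]


def lag1_autocorr(x):
    """Sample autocorrelation at lag 1."""
    m = sum(x) / len(x)
    num = sum((x[t] - m) * (x[t - 1] - m) for t in range(1, len(x)))
    den = sum((v - m) ** 2 for v in x)
    return num / den


def theoretical_lag1(d):
    """Lag-1 autocorrelation of fractional noise of order d (|d| < 0.5 or anti-persistent)."""
    return d / (1.0 - d)


x = simulate_fractional_noise(d=0.3, n=1500)
dx = [x[t] - x[t - 1] for t in range(1, len(x))]
print(round(lag1_autocorr(x), 2), round(lag1_autocorr(dx), 2))
```

The original series should show a positive first-lag autocorrelation and the differenced series a clearly negative one, which is the pattern the diagnostic looks for.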

Tests of univariate property.

Statistical significance threshold at 5% unless otherwise indicated.

VR tests for q = 2, 4, 8, 16 as suggested in Lo and MacKinlay (1988).

Reject at 10% level.

Same conclusions allowing for two structural breaks.

The degree of memory/persistence is the critical feature of fractionally integrated processes. Quite a few fractional integration estimators have been proposed in the literature. While an in-depth review of all the available estimators is beyond the scope of this article, it will suffice to say that not all estimators perform equally well under different circumstances. 18

I follow an extensive literature in political science (Box-Steffensmeier and Tomlinson, 2000; Byers et al., 2000; Clarke and Lebo, 2003; Dickinson and Lebo, 2007; Helgason, 2016) and use both parametric (Sowell, 1992) and semi-parametric estimators (Robinson, 1995; Shimotsu, 2010). Sowell’s Maximum Likelihood parametric estimator offers one significant advantage as it allows the inclusion of exogenous covariates. Moreover, it is worth noting that Sowell’s parametric estimator is commonly used in the political science literature, enhancing comparability across various studies. While it has been found to be more bias-prone in finite samples (Grant and Lebo, 2016; Helgason, 2016), the dataset under study has a large enough sample size, which should mitigate this problem. 19

On the other hand, a semi-parametric estimation of d may be appealing because it is agnostic about the dynamics of the process and more robust to misspecification (Box-Steffensmeier et al., 2009; Shimotsu, 2010). Within the semi-parametric class, two common statistical procedures are the log-periodogram regression and the local Whittle estimation.

The semi-parametric local Whittle estimator requires fewer underlying assumptions, is more efficient for low values of d, and is best suited for finite samples. Indeed, Robinson (1995) suggests that the Whittle estimator is unbiased for as few as 64 observations. As such, I rely on the most recent modification of the Local Whittle Estimator proposed by Shimotsu (2010). The author observes that the asymptotic properties of previous estimators do not account for the fact that a typical time series is often modelled with an unknown mean and a polynomial time trend. To redress that, the author proposes a Two-Stage Exact Local Whittle estimator that is consistent and asymptotically normal over a wide range of integration orders. This is particularly relevant in the case under study for two reasons. First, as is evident from Figure 1, the series starts after reaching a trough in the fall of 2009 and then trends upwards. Hence, the first observations in the sample cannot be representative of the long-run mean. Second, recall the previous discussion on how absolute scores lack a meaningful substantive interpretation because there is no way of knowing what the ‘benchmark’ sentiment is during normal times. These two facts, both individually and combined, suggest that the long-term mean should be modelled as unknown.
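To give a sense of how a local Whittle estimate is obtained, the sketch below implements the basic (non-exact, single-stage) local Whittle objective and minimises it over a grid of d values. It is deliberately not the Two-Stage Exact Local Whittle estimator used in the article: it simply removes the sample mean and does not handle trends. The bandwidth m, the grid bounds, the seed, and all function names are assumptions made for this illustration. The returned standard error uses the standard asymptotic result 1 / (2√m).

```python
import cmath
import math
import random


def periodogram(x, m):
    """Periodogram at the first m Fourier frequencies (sample mean removed)."""
    n = len(x)
    mean = sum(x) / n
    centred = [v - mean for v in x]
    freqs, values = [], []
    for j in range(1, m + 1):
        lam = 2.0 * math.pi * j / n
        dft = sum(v * cmath.exp(-1j * lam * t) for t, v in enumerate(centred))
        freqs.append(lam)
        values.append(abs(dft) ** 2 / (2.0 * math.pi * n))
    return freqs, values


def local_whittle(x, m, d_min=-0.49, d_max=0.99, step=0.001):
    """Grid-search minimiser of R(d) = log(mean(lam^(2d) * I)) - 2d * mean(log lam)."""
    freqs, values = periodogram(x, m)
    mean_log_freq = sum(math.log(lam) for lam in freqs) / m
    best_d, best_obj = d_min, float("inf")
    n_steps = int(round((d_max - d_min) / step))
    for i in range(n_steps + 1):
        d = d_min + i * step
        scale = sum(lam ** (2.0 * d) * val for lam, val in zip(freqs, values)) / m
        objective = math.log(scale) - 2.0 * d * mean_log_freq
        if objective < best_obj:
            best_d, best_obj = d, objective
    return best_d, 1.0 / (2.0 * math.sqrt(m))


# Sanity check on simulated white noise, whose true d is 0.
rng = random.Random(42)
white_noise = [rng.gauss(0.0, 1.0) for _ in range(512)]
d_hat, se = local_whittle(white_noise, m=64)
```

Only the m lowest frequencies enter the objective, which is what makes the estimator agnostic about short-run dynamics; the price is a standard error that shrinks with m rather than with the full sample size.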

Finally, the semi-parametric log-periodogram estimator proposed by Robinson (1995) allows for a more efficient simultaneous estimation of the fractional parameters across multiple time series. This feature is convenient in the context of hypothesis 3, which entails a comparison between the tonality persistence across outlet types. Hence, these results are confirmed relying on this estimator as well. 20
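The logic of a log-periodogram regression can be sketched in its simplest univariate form: regress the log periodogram at the m lowest Fourier frequencies on log(4 sin²(λ/2)) and take minus the slope as the estimate of d. This is the classic single-series version, shown only to convey the idea; it is not Robinson's (1995) multivariate estimator with its joint test across series. The bandwidth, seed, and names are assumptions, and the standard error uses the textbook asymptotic value π / √(24m).

```python
import cmath
import math
import random


def log_periodogram_d(x, m):
    """Univariate log-periodogram estimate of d from the first m frequencies."""
    n = len(x)
    mean = sum(x) / n
    centred = [v - mean for v in x]
    regressor, log_i = [], []
    for j in range(1, m + 1):
        lam = 2.0 * math.pi * j / n
        dft = sum(v * cmath.exp(-1j * lam * t) for t, v in enumerate(centred))
        regressor.append(math.log(4.0 * math.sin(lam / 2.0) ** 2))
        log_i.append(math.log(abs(dft) ** 2 / (2.0 * math.pi * n)))
    r_bar = sum(regressor) / m
    y_bar = sum(log_i) / m
    slope = (
        sum((r - r_bar) * (y - y_bar) for r, y in zip(regressor, log_i))
        / sum((r - r_bar) ** 2 for r in regressor)
    )
    # The spectral density behaves like lam^(-2d) near zero, so d = -slope.
    return -slope, math.pi / math.sqrt(24.0 * m)


# Sanity check on simulated white noise, whose true d is 0.
rng_lp = random.Random(7)
noise_lp = [rng_lp.gauss(0.0, 1.0) for _ in range(512)]
d_lp, se_lp = log_periodogram_d(noise_lp, m=64)
```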

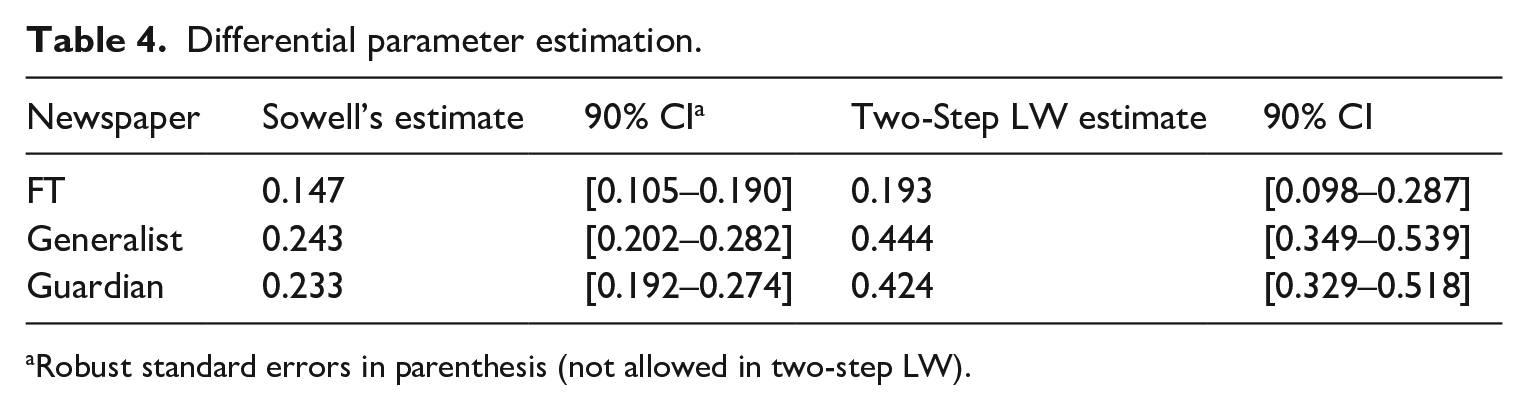

Table 4 displays the fractional parameter values derived from the two main estimators. Two observations seem in order. First, the estimates for the combined generalist papers (second row) and the Guardian (third row) are very similar. This result is comforting as it indicates that, even if we do not have long enough series to test H2 and H3 directly on the remaining generalist papers (Times and Daily Telegraph), the results are unlikely to be very different from those for the Guardian (if they were, the estimated parameters for the combined series and the Guardian would diverge). Second, the estimates differ across estimators. This is not particularly surprising since the estimators are derived under different distributional assumptions. In both cases, however, the evidence strongly supports hypothesis 2. The newspapers’ tonality is characterised by long-term memory, that is, the confidence intervals of the d estimates include neither 0 (which would indicate no or short-term memory) nor 1 (which would indicate perfect memory). Furthermore, Table 4 also offers some evidence in favour of hypothesis 3, albeit less strongly. Indeed, the tonality series for the Financial Times is systematically more persistent than that for the Guardian and the generalist papers combined, as indicated by the non-overlapping confidence intervals – albeit only at the 90% level. 21 For good measure, the degree of fractional integration is re-estimated for all three variables simultaneously using the log-periodogram estimator (Robinson, 1995). A test for equality of the three d coefficients yields an F = 3.47 and a p-value = 0.032 (the equivalent test for the HL dictionary yields an F = 3.38 and a p-value = 0.031). Table 13A in the Online Supplemental Appendix shows the full results.
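The two checks described here, whether a confidence interval for d excludes both 0 and 1 and whether two intervals overlap, reduce to simple arithmetic. The sketch below uses made-up point estimates and standard errors purely for illustration; they are not the values reported in Table 4, and the helper names are hypothetical.

```python
def conf_int(d_hat, se, z=1.645):
    """Two-sided normal confidence interval (z = 1.645 gives the 90% level)."""
    return (d_hat - z * se, d_hat + z * se)


def excludes(ci, value):
    """True if the interval does not contain the given value."""
    return not (ci[0] <= value <= ci[1])


def overlap(a, b):
    """True if two intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Invented estimates for two series, labelled A and B.
ci_a = conf_int(0.45, 0.04)
ci_b = conf_int(0.30, 0.04)

long_memory_a = excludes(ci_a, 0.0) and excludes(ci_a, 1.0)
long_memory_b = excludes(ci_b, 0.0) and excludes(ci_b, 1.0)
differ_at_90 = not overlap(ci_a, ci_b)
```

Non-overlap of two intervals is a conservative criterion, which is one reason a formal equality test on the d coefficients is a useful complement.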

Differential parameter estimation.

Robust standard errors in parentheses (not allowed in two-step LW).

As shown in the Online Supplemental Appendix (Table 7A), the results are similar when negativity is measured with the alternative dictionary. 22 Moreover, as briefly noted above, Sowell’s estimator allows for the inclusion of exogenous variables in the models. As such, it is possible to ensure that the results are robust after accounting for the possibility that the underlying economic conditions might also be long-memory processes. The models are re-estimated after including the Greek long-term (10-year) bond yields. The results remain robust to this estimation procedure (see Table 8A in the Online Supplemental Appendix). Moreover, the coefficients of the exogenous regressor are correctly signed and statistically significant across all models, as we would expect. As the Greek bond yields increase (thus indicating a worsening of creditworthiness), the tone in the media becomes more negative. Finally, it is important to ensure that the results are not driven by the way missing observations are handled (see footnote 11). Tables 9A, 10A, 11A and 12A in the Online Supplemental Appendix show the results after interpolating the missing weeks in different ways.

Overall, the findings are consistent with the main hypotheses and shed new light on the dynamics of tonality in the media. The mixture of positive and negative shocks from real-world events results in a data generating process characterised by distinctive long-term memory. This is relevant for both methodological and substantive reasons.

On the methodological front, fractional integration has some advantages. Misdiagnosing a series as stationary when it is not may lead to spurious inferences (Newbold and Granger, 1974; Tsay, 2005). By contrast, misdiagnosing a series as non-stationary when it is not may lead researchers to over-difference the series, which, in turn, artificially builds a moving average process into the data (Dickinson and Lebo, 2007). Failing to account appropriately for the temporal properties of the data in a regression framework may thus lead to misleading findings. For example, imagine a researcher interested in the relationship between some macro-financial variables (e.g. the Greek sovereign bonds) and tonality in economic news (as measured in this paper). Typically, researchers rely either on the DF/ADF tests or the KPSS tests, but not both (let alone the comprehensive list of tests used here). As Table 3 shows, in the former case, they would conclude that the series is stationary. In the latter case, they would conclude that the series is non-stationary. Depending on the other variables’ characteristics, this modelling choice may lead to different results. While inferential threats may not be as severe with d < 0.5 (as is the case for most, but not all, estimates in this article), the literature shows how issues might still arise in contexts that are typical in applied political communication research. As Tsay and Chung (2000: 155) find in the bivariate case, ‘as long as [the variables’] orders of integration sum up to a value greater than 1/2, the t-ratios become divergent and spurious effects occur’. Unsurprisingly, the fractional spurious regression problem becomes more severe in the multiple regression case (Ventosa-Santaulària et al., 2022). Hence, most d parameters estimated in this article are high enough to warrant attention, above all in those applications where the regressors may also be fractionally integrated. 23 By contrast, under some conditions (e.g. large N), fractional integration allows us to estimate the degree of persistence more precisely and to deal with it accordingly.
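The over-differencing problem can be illustrated with the simplest possible case, a worked example added here for intuition rather than taken from the cited sources. Suppose the series is already white noise and is nevertheless first-differenced:

```latex
\begin{align}
x_t &= \varepsilon_t, \qquad \varepsilon_t \sim \text{i.i.d.}(0, \sigma^2) \\
\Delta x_t &= \varepsilon_t - \varepsilon_{t-1} \\
\operatorname{Var}(\Delta x_t) &= 2\sigma^2, \qquad
\operatorname{Cov}(\Delta x_t, \Delta x_{t-1}) = -\sigma^2 \\
\rho_{\Delta x}(1) &= \frac{-\sigma^2}{2\sigma^2} = -\frac{1}{2}
\end{align}
```

The differenced series is an MA(1) process with a coefficient of −1, so a first-lag autocorrelation of −0.5 appears even though the original data had no temporal dependence at all. The same mechanism operates, in milder form, when a fractionally integrated series with d < 1 is fully differenced.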

In addition, fractional integration also serves a substantive purpose, as it makes it possible to test the hypotheses about the persistence of negativity directly and to compare persistence across different outlets. This is an important avenue for research because the media emphasis on negativity has significant societal implications. It can distort public perception, leading to increased fear, anxiety, and cynicism, and may contribute to a more polarised and divided society. Understanding negativity bias in the news is crucial because it underscores the need for media literacy and balanced reporting to foster a more informed and resilient public. The (sometimes implicit) mechanism linking media negativity to readers’ behavioural outcomes focuses on the level/strength of negativity. In addition, this study shows how negativity may have a stronger effect on individuals not just because it is stronger but because it tends to last longer in the media cycle.

Conclusion

In conclusion, this study has shed light on old and new claims on negativity across different news outlets. First, previous findings about the differences in the levels of negativity between generalist and financial papers are confirmed. Second, this study has drawn attention to an often-overlooked dimension of negativity, its persistence. There are good theoretical reasons to expect that the negativity bias in media reporting is likely to generate a fractionally integrated process. The empirical evidence strongly supports this conjecture. This is an important and novel finding that sheds new light on the dynamics of negativity in the media. These findings suggest that empirical scholars in political communication should consider and test whether their series are fractionally integrated, given the possible pitfalls of misdiagnosing the univariate properties of a time series (Dickinson and Lebo, 2007; Newbold and Granger, 1974). Third, there is some (but weaker) evidence in terms of differences in tonality persistence across media types. As suggested in the theory section, financial outlets update their reporting more quickly than their generalist counterparts as a function of real-world events (at least on economic matters). Indeed, the empirical evidence seems to support this possibility. Finally, these results add to a growing body of research on the relationship between media and finance in the context of the sovereign bond crisis by revealing a more nuanced picture of how the media discussed the Greek crisis (e.g. Nones, 2024; Teschendorf and Otto, 2022).

The results from this article also suggest new intriguing directions for future research. Most obviously, it would seem worth exploring the extent to which the duration of media negativity, rather than its level, has real-world effects on public opinion. Moreover, it would be interesting to investigate whether the persistence of negativity further varies across other media types, such as tabloids, and national media systems. Finally, future research might extend the application of seasonal/cyclical versions of long-memory estimators to media series (e.g. Gil-Alana, 2001; Skare et al., 2024). These models are particularly useful for studying recurring patterns or cycles in media coverage. For instance, topics like climate change and immigration often exhibit seasonal or cyclic dynamics in media reporting. Coverage of climate change tends to peak around international conferences (e.g. COP summits) or extreme weather events, reflecting periodic fluctuations in salience and tone (Luxon, 2019). Similarly, immigration debates often re-emerge cyclically in response to election cycles or policy changes, leading to temporally clustered shifts in media tone (Brouwer et al., 2017). Leveraging cyclical models would enable a more nuanced analysis of such patterns, distinguishing between persistent long-memory processes and seasonally driven fluctuations.

Supplemental Material

Supplemental material, sj-docx-1-bpi-10.1177_13691481251317892 for The dynamics of negativity in media outlets during the Greek sovereign bond crisis by Nicola Nones in The British Journal of Politics and International Relations

Footnotes

Funding

The author received no financial support for the research, authorship and/or publication of this article.

Data availability statement

The data that support the findings of this study will be available from the corresponding author’s website and/or journal website upon acceptance.

Supplemental material

Supplemental material for this article is available online.
