Abstract

International rankings push governments to adopt better policies by providing comparative information on states’ performance. How do citizens respond to this information? We answer this question through a preregistered survey experiment in Israel, testing the effect of rankings in the fields of human rights and the environment. We find that citizens respond to international rankings selectively. Informed about a high ranking given to their country, citizens tend to express a more positive assessment of the country’s performance. By contrast, they seem to dismiss poor rankings of their country. We further find that poor rankings on a polarising issue, such as human rights, might face a particularly strong resistance from citizens. Overall, our results engage with and support recent scholarship sceptical of the impact of international shaming on public opinion. Even gentle shaming – expressed through a low numerical grade – might not be well received by the public.

In recent years, states have come under increasing normative pressure to change their policies and bring them closer to international standards. Among these means of pressure are international rankings and similar indicators that offer regularised grading of the performance of states. 1 Published by various actors, international rankings typically possess several qualities that allow them to pressure states that deviate from an internationally expected conduct: they are public and easily available, appear regularly, and compare the performance of dozens of countries. Through these qualities, rankings may shape state reputation: states ranked high will seem successful or effective, whereas poorly ranked states might seem failing or illegitimate. Policymakers, concerned for the reputation of the state and for their own good name and careers, may be motivated to change policy to restore a damaged reputation (Davis et al., 2012; Kelley and Simmons, 2019; Merry, 2011).

But how does the general public respond to international rankings? Do citizens care about the information that rankings convey? Do they change their assessment of their country’s performance in accordance with international rankings? The response of the public is consequential. In a democracy, public opinion may shape the state’s response to external pressures and challenges (Baum and Potter, 2015; Tomz et al., 2020), and it may certainly affect governments’ response to international rankings. On the one hand, citizens can reinforce the impact of rankings by expressing dissatisfaction with the poorly graded policy and demanding reform. On the other hand, if the public dismisses the rankings, the government may feel free to reject or even denigrate them. Currently, however, we know little about the public’s response to international rankings.

This study assesses the impact of international rankings on domestic public opinion, following a similar development in the study of shaming more broadly. The literature had long overlooked shaming’s impact on domestic opinion, and several recent studies have sought to fill this gap with conflicting results. Some studies suggest that shaming may generate domestic pressure for compliance with international standards (Ausderan, 2014; Koliev et al., 2022; Tingley and Tomz, 2022). Other studies sound a more sceptical tone, arguing that shaming may leave citizens indifferent or even bring them to rally behind their government in a backlash effect (Greenhill and Reiter, 2022; Gruffydd-Jones, 2019; Kohno et al., 2023). These studies focus on ‘traditional’ shaming, that is, the verbal denunciation of states by external actors. Adding to this body of work, we examine the public response to international rankings – a distinct form of shaming that uses grades to express a state’s distance from international norms (cf. Doshi et al., 2019). Building on recent advances in the analysis of public opinion, we study how rankings influence both attitudes and behavioural intentions (Sheppard and von Stein, 2022), and how this influence varies across issue areas (Greenhill, 2020; Koliev et al., 2022).

This article presents the results of a preregistered survey experiment in Israel, which examined the impact of rankings in the areas of human rights and the environment among a sample of 4016 respondents. Consistent with our expectation, the results indicate that citizens use rankings to update their assessment of their country’s performance: respondents who read about Israel receiving a high ranking expressed a more favourable assessment of the country’s record compared to those informed about a low ranking.

Our evidence also suggests that while the high ranking indeed affected respondents’ views, the low ranking did not have a corresponding negative effect: respondents were generally disinclined to take in the low ranking and update their views accordingly. These conditional findings suggest that citizens treat international rankings selectively: embracing the good, dismissing the bad.

Furthermore, consistent with our expectation, we find that the impact of rankings varies between the area of the environment and the area of human rights. We argue that significant polarisation over human rights in Israel left respondents less receptive to the critical portrayal of the country’s record, as expressed in a low ranking, compared to the ranking on the environment: an issue perceived (in Israel) as more technical and less polarised. Indeed, the poor grade on human rights seemed to have triggered a backlash, leading respondents to adopt a more favourable assessment of Israel’s human rights record.

Finally, and contrary to our expectations, we find that the source of the rankings mattered little. While Israelis generally have greater faith in the United States than in most international organisations (IOs) or nongovernmental organisations (NGOs) (Fagan, 2023; Wike et al., 2022), the rankings created by these different actors had a similar effect on respondents.

Overall, we find that rankings do influence public opinion, but arguably not in the way intended by those publishing the rankings. Rankings do not aim primarily to congratulate the high-performing countries; rather, their key purpose is to shame and pressure the low performers (Kelley and Simmons, 2019). Our results, however, show that, at least in Israel, the public is willing to accept rankings as a source of adulation, but not criticism. This means that international rankings may not generate public demand for compliance with international standards; in some cases, they might even incite a counterproductive backlash. Consistent with studies sceptical of shaming (Efrat and Yair, 2023; Snyder, 2020a, 2020b; Terman, 2019), this article suggests caution regarding the ability of international criticism to sway domestic public opinion.

The impact of rankings on public opinion: Expectations

Studies of naming and shaming often proceed from a rationalist premise, in which external actors perform an informing role: In an environment of uncertainty, the public may not know how the government behaves and whether its behaviour violates international standards. By providing the missing information, external actors can bring citizens to recognise their government’s misconduct (Greenhill and Reiter, 2022: 401; Tingley and Tomz, 2022: 449).

Similarly, we argue that international rankings allow citizens to revise their assessment of national policies (Dobbin et al., 2007: 460; Elkins and Simmons, 2005). Through rankings, citizens can overcome informational limitations in the evaluation of the quality of policy, possibly leading to an adjustment of that evaluation in light of the new information. Since citizens typically lack sufficient information on policy performance (Clinton and Grissom, 2015), they might express satisfaction with their own country’s performance, unaware that their country is actually falling behind and that other countries have better policies in place. International rankings allow citizens to fill this knowledge gap by offering an ‘outsider’s view’, based on a large-scale cross-national comparison (Guardino and Hayes, 2018). Low rankings provide citizens with new information that indicates their state’s weak performance in comparison to others (Grek, 2009). Based on this information, citizens may realise that their country is doing more poorly than they thought, which, in turn, may increase their dissatisfaction with existing policy and create awareness of the need for reform. On the other hand, a high ranking means that the country is doing well relative to others. Citizens may respond by adopting a more favourable assessment of the national performance. We therefore hypothesise that citizens informed of a high ranking will be more likely to consider their country as performing better with respect to the relevant policy/issue area, compared to citizens informed of their country’s receiving a low ranking.

We also hypothesise that rankings may affect the policy preferences of citizens. Having learned, through a poor ranking, that their country is falling behind others, citizens are likely to favour a change of policy to come closer to the better-performing countries. On the other hand, a high ranking may diminish the support for policy improvement: if the country already performs well, as a favourable grade indicates, there is little need to demand further advancement.

We further hypothesise that international rankings may influence the willingness for political action. Citizens may feel emboldened by rankings that portray their country as lagging behind. The comparative information from the rankings provides proof that the country is indeed deviating from widely held standards, and it reinforces and legitimises the demand for a policy change and improved performance (cf. Simmons, 2009: 149–152). While ‘conventional,’ verbal shaming may not motivate citizens to take political action (Greenhill and Reiter, 2022), a poor ranking offers the motivation and legitimacy to actively demand a better policy. By contrast, a high ranking informs citizens of the country’s satisfactory performance, likely diminishing the willingness to demand policy improvement.

We summarise our hypotheses as follows 2 :

H1a. Citizens are more likely to evaluate the performance of their country as better when the country receives a high ranking, compared to when it receives a low ranking.

H1b. Citizens are less likely to support efforts to improve the performance of their country when the country receives a high ranking, compared to when it receives a low ranking.

H1c. Citizens are less willing to take political action in demand of improved policy performance when the country receives a high ranking, compared to when it receives a low ranking.

We further suggest that the effect of rankings on public opinion varies across issue areas. International rankings evaluate state performance in a variety of domains, including economics, development, governance, education, health, social matters, technology, and human rights. We argue that rankings on issues that show a high degree of polarisation will have a weaker effect on the views of citizens. In general, changing partisans’ attitudes on highly salient and polarised issues is much more difficult than changing their attitudes on low-salience, less polarised issues. On matters that exhibit strong, polarised views, the public is affected more by party cues than by substantive information (Druckman et al., 2013; Guisinger and Saunders, 2017; Levendusky, 2010; see also Mummolo et al., 2021). And, when an opinionated public is exposed to information, it tends to view that information through partisan lenses, in many cases resulting in no attitudinal change even if the information presents contrary evidence (e.g. Lord et al., 1979; Nicholson, 2012; Taber and Lodge, 2006; cf. Guess and Coppock, 2020). For this reason, the effect of the rankings will likely be weaker on issues that are characterised by strong, polarised views. Such views are more entrenched and resistant to change. Furthermore, an external evaluation of, and interference in, a domestic polarised matter may be seen as illegitimate (Tomz and Weeks, 2020). By contrast, where polarisation is low, the effect of rankings will likely be stronger: citizens will be more willing to take in new information and update their attitudes and behavioural intentions accordingly.

H2. Rankings more strongly affect attitudes and behavioural intentions of citizens on issues where polarisation is low, compared to where polarisation is high.

We also consider whether the impact of rankings on the public varies with the identity of the actor who issues them. Specifically, we hypothesise that rankings have a greater effect on citizens’ views when the actor producing the rankings is seen as fair and trustworthy. When the source enjoys trust, citizens will give the rankings a more serious consideration. They are also more likely to learn from the rankings, as those are viewed as a credible indication of the country’s performance (see Kelley and Simmons, 2019: 496–497).

We focus here on three types of actors which are among the primary producers of rankings: IOs, NGOs, and the U.S. government. There are indeed good reasons to trust each of these actors and the rankings they publish. IOs may be seen by the public as politically neutral, and their broad membership endows them with legitimacy: each organisation represents the collective will of a large group of states. IOs may also possess technical expertise that can make their assessment of states’ performance more reliable (Fang, 2008; Greenhill, 2020; Thompson, 2006). NGOs may possess legitimacy and authority by virtue of their independence and autonomy from major political actors. They are often perceived as being driven by values – not by national interests or domestic pressures (Zou and Wang, 2021). As the richest and most powerful country in the world, the United States enjoys authority and prestige. Its vast resources allow the collection of high-quality information which enhances the credibility of the rankings it produces (Kelley, 2017).

At the same time, the public may view all three actors – IOs, NGOs, and the U.S. government – as biased (Chapman, 2011; Murdie, 2014), or even as unfriendly or hostile towards their country. Citizens may dismiss a poor ranking of their country as ‘politically motivated’ when the source of that ranking is perceived as an adversary, and they will respond more favourably to a ranking issued by an actor who is a friend or an ally (Terman, 2019; Terman and Voeten, 2018).

Whether the source of the ranking commands trust and respect or whether it is seen as biased will vary with the national context. International institutions, such as the UN, enjoy popularity among some publics, but in other places, they are treated with suspicion (Fagan, 2022). NGOs increasingly face polarised attitudes: Western countries largely appreciate their role in promoting democracy and tackling a range of challenges, but they are seen in many non-Western countries as foreign agents promoting a harmful agenda (Chaudhry, 2022). Similarly, in some countries, the public views the United States more favourably than in others (Wike et al., 2022). We expect that rankings will more easily sway public opinion when the actor producing them enjoys trust and legitimacy.

H3. Rankings have a stronger effect on citizens when the rankings come from an actor whom citizens trust.

The site of analysis

Israel serves as our site of empirical investigation, offering a non-U.S. perspective on rankings and their public-opinion impact. In addition to testing the threefold effect of rankings (H1) in Israel, we test how this effect varies with the level of issue polarisation (H2). For that, we focus on human rights versus the environment: Israeli politics and public opinion are much more polarised on the former than on the latter. For example, right-wing politicians have long accused human rights organisations of weakening Israel by unfairly criticising it while advancing the Palestinian struggle against Israel. In recent years, those politicians have proposed multiple bills aiming to inhibit the activities of human rights organisations. Rhetoric against human rights groups has recently escalated, with a right-wing leader denouncing them as an ‘existential threat’ to Israel (Association for Civil Rights in Israel, 2019; Gordon, 2014; Shpigel, 2022). In contrast, there is no similar elite-level left-right polarisation on the environment.

More pertinent to our research, Israeli public opinion is more polarised on human rights than on the environment. For example, a recent study has found a substantial left-right polarisation on an item tapping attitudes towards human rights organisations, with 80% of right-leaning respondents believing that human rights organisations are harming the country, compared to only 15% of left-leaning respondents (Hermann et al., 2023). In contrast, as we show in Online Appendix Section F, the Israeli public is much less polarised on environmental policy.

Given this difference in the level of polarisation, we expect that rankings will more strongly affect the attitudes and behavioural intentions of Israelis on the environment, compared to human rights.

Adapting H3 to the Israeli context, we expect Israelis to be more likely to dismiss rankings issued by IOs and NGOs, as those actors are considered unfriendly or hostile towards Israel. Across 24 countries surveyed by Pew (Fagan, 2023), Israelis held the most negative view of the UN, with 62% expressing an unfavourable opinion about this institution. Israelis also resent NGOs’ efforts to bring pressure on their country (Amnesty International Israel, 2021; Efrat, 2012, 2016; Steinberg, 2006). With so little faith in IOs and NGOs, these actors’ assessment of Israel’s performance may carry little weight with Israelis. By contrast, Israelis have much greater trust in the United States. Indeed, Israelis care deeply about their country’s relations with the United States – more than the relations with any other foreign country. They tend to have a favourable opinion of the United States and to strongly support it. The Israeli public also believes in the U.S. commitment to Israel’s security and wishes to maintain that commitment (Israeli, 2020; Wike et al., 2022). All this makes Israelis particularly susceptible to social influence by the United States through rankings. If it is the U.S. government that grades Israel poorly, that evaluation is more likely to be treated as credible and to influence Israelis’ views: its impact is likely greater than that of a similar evaluation made by an IO or NGO.

Methodology

To test our hypotheses, we conducted an online survey in Israel between 14 and 23 August 2022. The survey contained an embedded, preregistered experiment.

Sample

Our sample consists of 4016 respondents, a relatively large number that gives us enough power to detect interaction effects (see Sommet et al., 2023). Respondents were recruited by Geocartography, a company that conducts online and telephone surveys in Israel. Our sample is not fully representative of the Israeli population, with several deviations from population benchmarks, such as a lower percentage of Arab citizens of Israel than the population at large. Still, our sample is diverse with regard to key socio-demographic and political variables: mean age is 40.6 (SD = 14.4), with women constituting 53.0% of the sample. Those who identified as ideologically ‘right’ (1–3 on a 1–7 ideological self-placement measure) comprised 60.0% of the sample, while ‘centrists’ (4 on that measure) comprised 22.8%, and ‘leftists’ (5–7 on that measure) comprised 17.2% of respondents. Such a distribution of political orientation is largely consistent with the overall Israeli population. Additional details about the sample are reported in Online Appendix Section A.

Experimental design

All respondents read a short vignette (between 61 and 74 words in Hebrew) describing a ranking issued by an international actor with respect to a specific issue area: either human rights or the environment. The vignette stated that the ranking is published every year covering 180 countries and laid out some of the specific indicators that are factored into the ranking. The vignette ended with a presentation of Israel’s ranking for the year 2021. At the end of the questionnaire, respondents were debriefed about the nature of the manipulation and informed that the information they received concerning a ranking of Israel was fictitious. 3

The experimental component involved a random assignment of respondents to three types of relevant information on (1) the actor that produced the ranking, (2) the issue area and (3) the ranking assigned to Israel. In the first experimental factor (‘ranking source’), respondents were randomly assigned to read about one of three international actors who published the ranking: the U.S. government, the Organisation for Economic Cooperation and Development (OECD) or a fictional international NGO. In the second experimental factor (‘issue area’), respondents were randomly assigned to receive information on a ranking in one of two areas: human rights or the environment. For each issue area, the vignette included information on the ranking criteria; for example, the fairness of elections and the status of minority rights for the human-rights ranking; air and water quality for the environmental ranking. Finally, the third experimental factor (‘ranking’) randomly informed respondents that in 2021, Israel was ranked either 14th in the world (high ranking) or 78th in the world (low ranking) in the assigned issue area (the full text of the vignettes appears in Online Appendix Section B).

Overall, the experiment involved 12 conditions in a 3 (ranking source: United States, OECD and NGO) × 2 (issue area: human rights and environment) × 2 (ranking: high and low) fully crossed design, which allows for a test of all research hypotheses. Online Appendix C reports balance tests and shows that respondents in the different conditions are indeed balanced on various socio-demographic characteristics and political measures.
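The fully crossed design described above can be sketched in a few lines. This is an illustration only: the condition labels, the function names, and the use of simple (unblocked) randomisation are assumptions, since the text does not describe the assignment procedure in detail.

```python
import itertools
import random

# Factor levels as described in the text; the label strings are illustrative.
SOURCES = ["US government", "OECD", "NGO"]
ISSUES = ["human rights", "environment"]
RANKINGS = ["high (14th)", "low (78th)"]


def all_conditions():
    """Return every cell of the fully crossed 3 x 2 x 2 design."""
    return list(itertools.product(SOURCES, ISSUES, RANKINGS))


def assign(n_respondents, seed=0):
    """Draw one condition per respondent, independently and uniformly.

    Assumption: simple randomisation; the study may have used another scheme.
    """
    rng = random.Random(seed)
    cells = all_conditions()
    return [rng.choice(cells) for _ in range(n_respondents)]


print(len(all_conditions()))  # 12
```

With 4016 respondents and 12 cells, uniform assignment of this kind would place roughly 335 respondents in each cell.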

Measures

After respondents read the vignette, we presented them with three questions. First, respondents were asked to indicate their level of agreement with the following statement: ‘The state of [human rights/the environment] in Israel is good’. This item sought to capture the effect of the treatments on respondents’ evaluations of Israel’s performance. Second, respondents were asked to indicate their level of agreement with the following statement: ‘The government of Israel should make more effort to improve [human rights/the environment]’. This item was intended to identify the respondents’ policy preferences following exposure to the ranking: their level of support for increased government efforts to enhance the country’s performance. Finally, the third, behavioural-intention item asked respondents to indicate their level of willingness ‘to sign a petition calling on the government of Israel to take action to improve [human rights/the environment]’. This item measured respondents’ readiness to take political action in demand of improved policy performance. Overall, then, this study includes three dependent variables: an assessment of Israel’s current performance, a preference for greater government efforts, and a willingness to act politically. Response options for all three items were shown on a 5-point scale in which 1 = Strongly agree; 2 = Agree; 3 = Neither agree nor disagree; 4 = Disagree; 5 = Strongly disagree. 4

We rescaled the three variables to vary between 0 and 1, with higher values denoting greater agreement with the relevant statement. Mean evaluation of Israel’s performance for human rights (M = 0.56; SD = 0.27) was higher than for the environment (M = 0.45; SD = 0.26) (t(3,880) = 13.21; p < 0.001). Mean support for improvement of the government’s policy on human rights (M = 0.70; SD = 0.26) was lower than for the environment (M = 0.78; SD = 0.23) (t(3,917) = 10.68; p < 0.001). And mean willingness to sign a petition was lower for human rights (M = 0.55; SD = 0.31) than for the environment (M = 0.67; SD = 0.28) (t(3,685) = 12.78; p < 0.001). These results suggest that, overall, respondents hold a more favourable assessment of Israel’s human rights performance compared to its environmental performance and are less interested in, or willing to act for, an improvement of human rights compared to the environment.
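Because the response options run from 1 (Strongly agree) to 5 (Strongly disagree), rescaling so that higher values denote greater agreement involves reversing the scale. The sketch below assumes a linear mapping; the text states only the 0–1 range and the direction, so the exact formula and the function name are assumptions.

```python
def rescale_agreement(response):
    """Map a 1-5 response (1 = Strongly agree) to 0-1, higher = more agreement.

    Assumption: a linear, reversed mapping, (5 - response) / 4.
    """
    if response not in (1, 2, 3, 4, 5):
        raise ValueError("response must be an integer from 1 to 5")
    return (5 - response) / 4


print([rescale_agreement(r) for r in range(1, 6)])  # [1.0, 0.75, 0.5, 0.25, 0.0]
```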

Pretreatment measures

We preregistered H1 as an expectation of the relative effect of a high ranking compared to that of a low ranking. However, testing which of the two rankings exerts an independent effect would have required a control condition without any ranking. Such a condition would have enabled us to ascertain whether the relative effect we observe is driven by the high ranking (which may positively influence the evaluation of the country’s performance), the low ranking (which may negatively influence the evaluation of the country’s performance), or both rankings pushing in opposite directions simultaneously. Nevertheless, we decided against having a control condition that includes no ranking. Because our vignette introduced respondents to specific numerical rankings, we considered it problematic to present respondents with a control condition that lacks any numerical ranking. Such a control condition would have been too different from the experimental conditions and would have served as a questionable baseline for identifying the treatment effect.

To partially mitigate this limitation, we presented all respondents, prior to the experimental manipulation, with several pretreatment items that tapped respondents’ satisfaction with Israel’s performance in five areas: security, education, health, and, most importantly for our purposes, human rights and the environment. 5 As we show below, the latter two items allow us to establish a de facto baseline, albeit not a perfect one, for respondents’ evaluation of the quality of human rights and the environment.

Results

We begin with a systematic test of our hypotheses, presenting three sets of OLS regressions intended to examine H1, H2, and H3, respectively. In each set of analyses, we employ our three dependent variables: the evaluation of Israel’s performance, support for policy improvement, and willingness to sign a petition. All regressions use the 5-point scale of the pertinent dependent variable, rescaled to vary between 0 and 1, with higher values denoting greater agreement with the relevant statement. Our primary independent variable is the ranking, measured with a binary indicator taking 1 when Israel receives a high ranking and 0 when it receives a low ranking. The models also include several individual-level socio-demographic and political measures: age groups, gender, Jewish/Arab respondents, college education, ideological self-identification (right, centre, and left), and religiosity. 6
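To make the role of the binary ranking indicator concrete: in an OLS regression with an intercept and a single 0/1 regressor, the slope equals the difference between the two group means. The sketch below illustrates this with hypothetical toy data, not the survey responses. It also omits the control variables that the actual models include, so it shows the logic of the comparison rather than the reported specification.

```python
def ols_slope(x, y):
    """Slope from a one-regressor OLS fit with an intercept: cov(x, y) / var(x)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    var_x = sum((xi - mean_x) ** 2 for xi in x)
    return cov_xy / var_x


# Hypothetical toy data (NOT the study's data): 1 = high ranking, 0 = low ranking.
high = [1, 1, 1, 0, 0, 0]
evaluation = [0.75, 0.50, 1.00, 0.50, 0.25, 0.75]

mean_high = sum(y for h, y in zip(high, evaluation) if h == 1) / high.count(1)
mean_low = sum(y for h, y in zip(high, evaluation) if h == 0) / high.count(0)

slope = ols_slope(high, evaluation)
print(slope, mean_high - mean_low)  # 0.25 0.25
```

Adding covariates, as the reported models do, changes the estimate only to the extent that the covariates are imbalanced across conditions, which random assignment makes unlikely.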

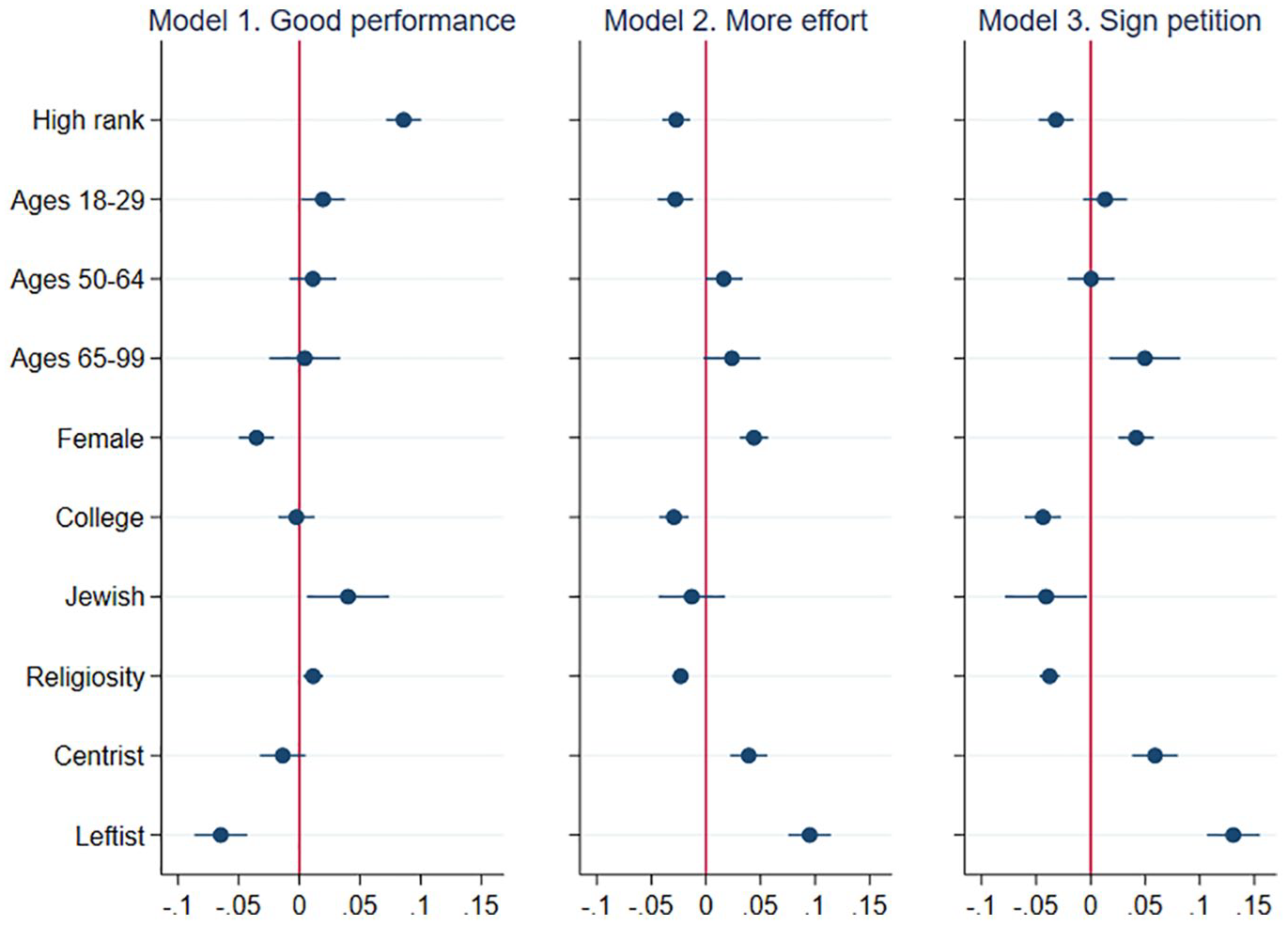

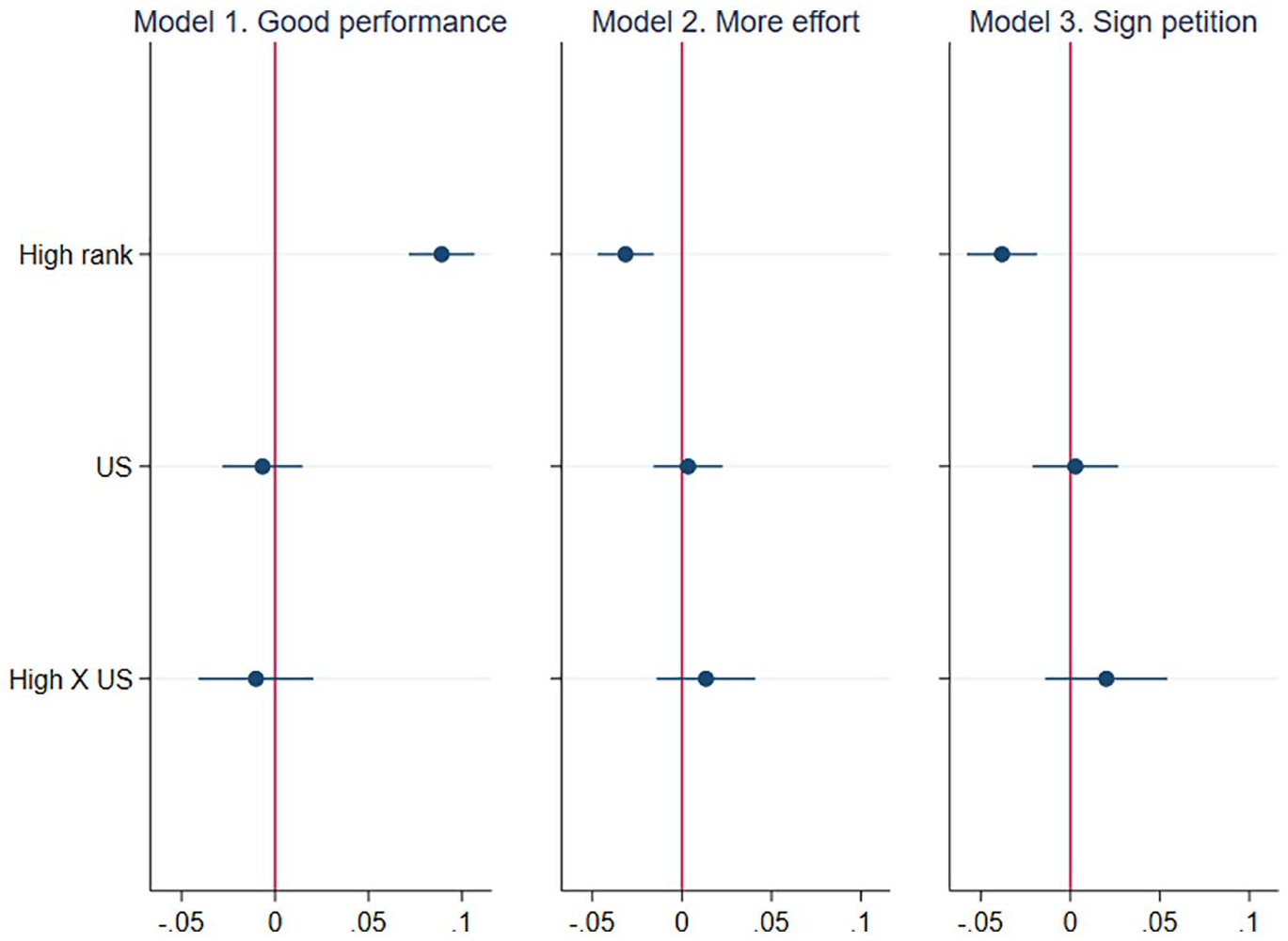

Figure 1 summarises the results of three OLS models that test H1. 7 In Model 1, the high-ranking treatment, compared to the low-ranking treatment, makes respondents significantly more likely to express satisfaction with Israel’s performance, supporting H1a (b = 0.09; p < 0.001; in keeping with the preregistration, we analyse the experimental results using one-tailed tests). This is a medium-sized effect, an increase of about 0.3 of a standard deviation. Model 2 shows that the high-ranking treatment also significantly decreases respondents’ support for government efforts to improve the country’s performance (b = −0.03; p < 0.001), consistent with H1b. This effect is rather small, representing a change of about 0.13 of a standard deviation. In Model 3, the high-ranking treatment significantly decreases respondents’ willingness to sign a petition demanding improved performance (b = −0.03; p < 0.001) – in line with H1c. This effect is again a small one, representing a change of about 0.12 of a standard deviation.
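The conversion of a coefficient into standard-deviation units is a simple division of the coefficient by the standard deviation of the outcome. The sketch below applies it to the Model 1 estimate. The text does not state which standard deviation underlies the reported figure, so the issue-specific SDs from the Measures section (0.27 and 0.26) are used here as stand-ins, and the function name is an assumption.

```python
def standardised_effect(coefficient, outcome_sd):
    """Express a regression coefficient in standard-deviation units of the outcome."""
    if outcome_sd <= 0:
        raise ValueError("outcome_sd must be positive")
    return coefficient / outcome_sd


# b = 0.09 is the reported Model 1 coefficient (rounded in the text).
# Assumption: 0.27 and 0.26 stand in for the unreported pooled SD.
for sd in (0.27, 0.26):
    print(round(standardised_effect(0.09, sd), 2))  # 0.33, then 0.35
```

Either stand-in gives a value near one third of a standard deviation, in line with the reported figure of about 0.3.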

Figure 1. Effect of a high ranking on performance evaluation, preferences, and action.

Overall, the results in Figure 1 provide consistent support for H1, showing that the high-ranking treatment is associated with all three dependent variables moving in the expected direction: compared to respondents receiving the low-ranking treatment, respondents receiving the high-ranking treatment express a less critical and more positive evaluation of their country’s performance, alongside a weaker support for policy improvement and a lesser willingness to sign a petition demanding such an improvement.

The control variables behave as commonly expected. Compared to Arab respondents, Jewish respondents hold a more favourable assessment of their country and are less willing to act politically in demand of change; the same is true with more religiously observant respondents. Left-wing identification is associated with a more critical perception of Israel’s performance and a greater willingness to demand better performance. Women (compared to men) more negatively evaluate the country’s performance and more strongly express their support for improvement and their willingness to sign a petition. College-educated respondents seem less hopeful about the prospects of improvement and less willing to push for one.

Returning to our key results, we have found that the high-ranking treatment affects all three dependent variables compared to the low-ranking treatment. Yet, we cannot tell with certainty what is driving these results. Is it the high ranking that brought respondents to look at Israel’s performance more favourably? Or is it the low ranking that led respondents to think more critically about the country’s performance? Or is it both? Answering these questions requires a baseline and, as explained above, we employ for this purpose two pretreatment items that asked respondents about their satisfaction with Israel’s state of human rights and the environment. As expected, these two items are strongly correlated with the first dependent variable (Israel’s performance) in both the human rights and the environmental conditions 8 ; that is, the pretreatment items and the first DV capture rather similar attitudes, even if they are not identical. Accordingly, we consider these pretreatment items as a de facto baseline to which we can compare the values of the first dependent variable in both the high-ranking and low-ranking conditions.

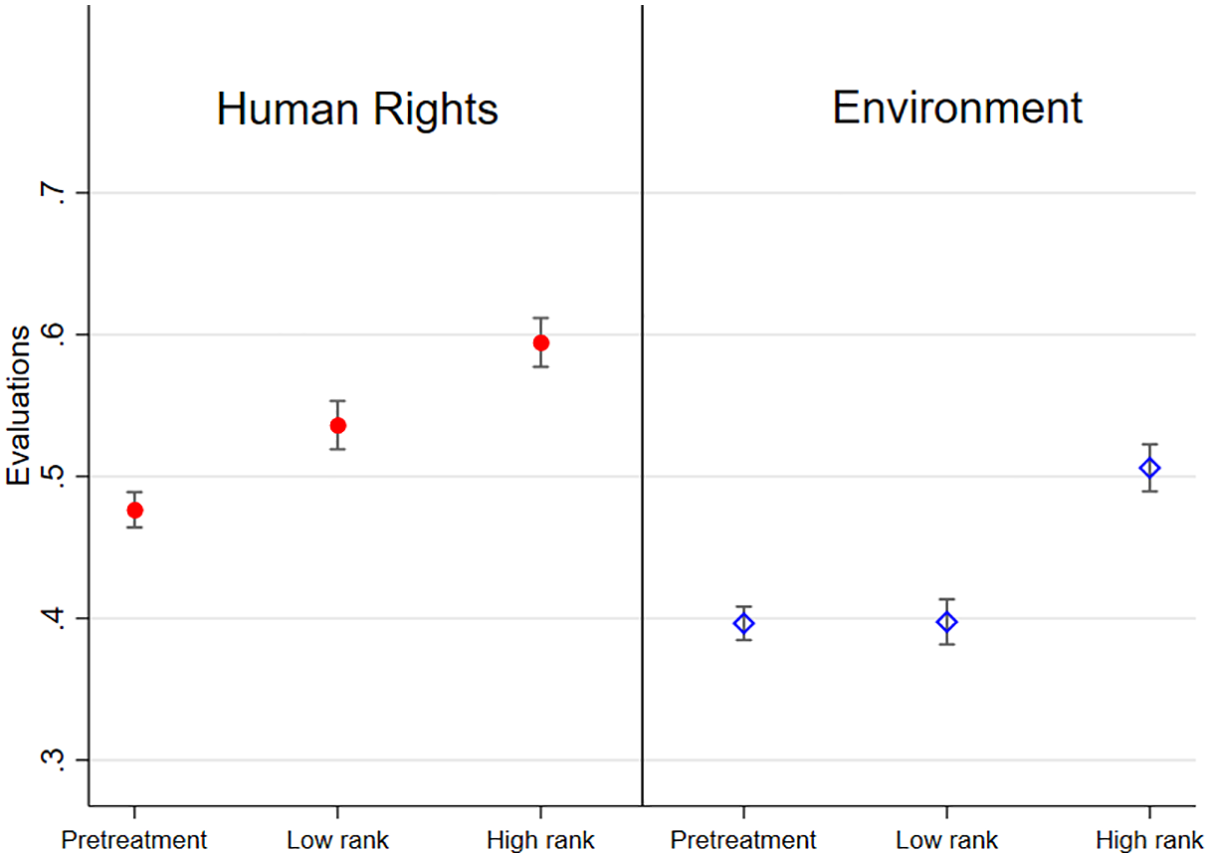

Figure 2 presents the mean value of the pretreatment items alongside the mean value of the dependent variable (evaluation of Israel’s performance) in the high-ranking and low-ranking conditions – in both the human rights condition (on the left) and the environment condition (on the right).

Figure 2. Pretreatment items compared to the DV (evaluation of Israel’s performance).

Starting with the human rights condition (Figure 2, left-hand side), we can see that for respondents in both the high-ranking and low-ranking conditions, the mean evaluations of Israel’s performance are significantly higher than the pretreatment, by 0.11 and 0.06 on a 0–1 scale, respectively. 9 These results suggest that respondents in both the high-ranking condition and, somewhat unexpectedly, the low-ranking condition, evaluated Israel’s performance on human rights as better than the pretreatment item. The counterintuitive response to the low ranking – a better assessment compared to the pretreatment – could be a backlash effect: in response to external criticism of their country’s human rights record, respondents reacted defiantly and expressed their approval of that record. Several studies have theorised or empirically demonstrated such a backlash effect (Deitelhoff, 2020; Efrat and Yair, 2023; Greenhill and Reiter, 2022; Gruffydd-Jones, 2019; Snyder, 2020a; Terman, 2019). In any event, it is quite clear that the low ranking did not lead respondents to adopt a more critical view of the state of human rights. 10 By contrast, the results strongly suggest that the high ranking led respondents to evaluate the country’s human rights record more favourably.

The right-hand side of Figure 2 demonstrates even more strongly that it is the high ranking, and not the low ranking, that affected respondents’ evaluation of the state of the environment. Respondents in the high-ranking condition had a mean evaluation of Israel’s performance that was significantly higher than that of the pretreatment, by 0.09; yet those in the low-ranking condition had a mean evaluation of Israel’s performance that did not differ significantly from that of the pretreatment (difference = 0.01). 11

Overall, Figure 2 strongly suggests that the high-ranking condition resulted in a more positive evaluation of Israel’s performance compared to a baseline. This figure provides no indication that, compared to a baseline, the low-ranking condition resulted in a more negative evaluation of Israel’s performance. In other words, it is most likely the high ranking, and not the low ranking, that is driving the key results.

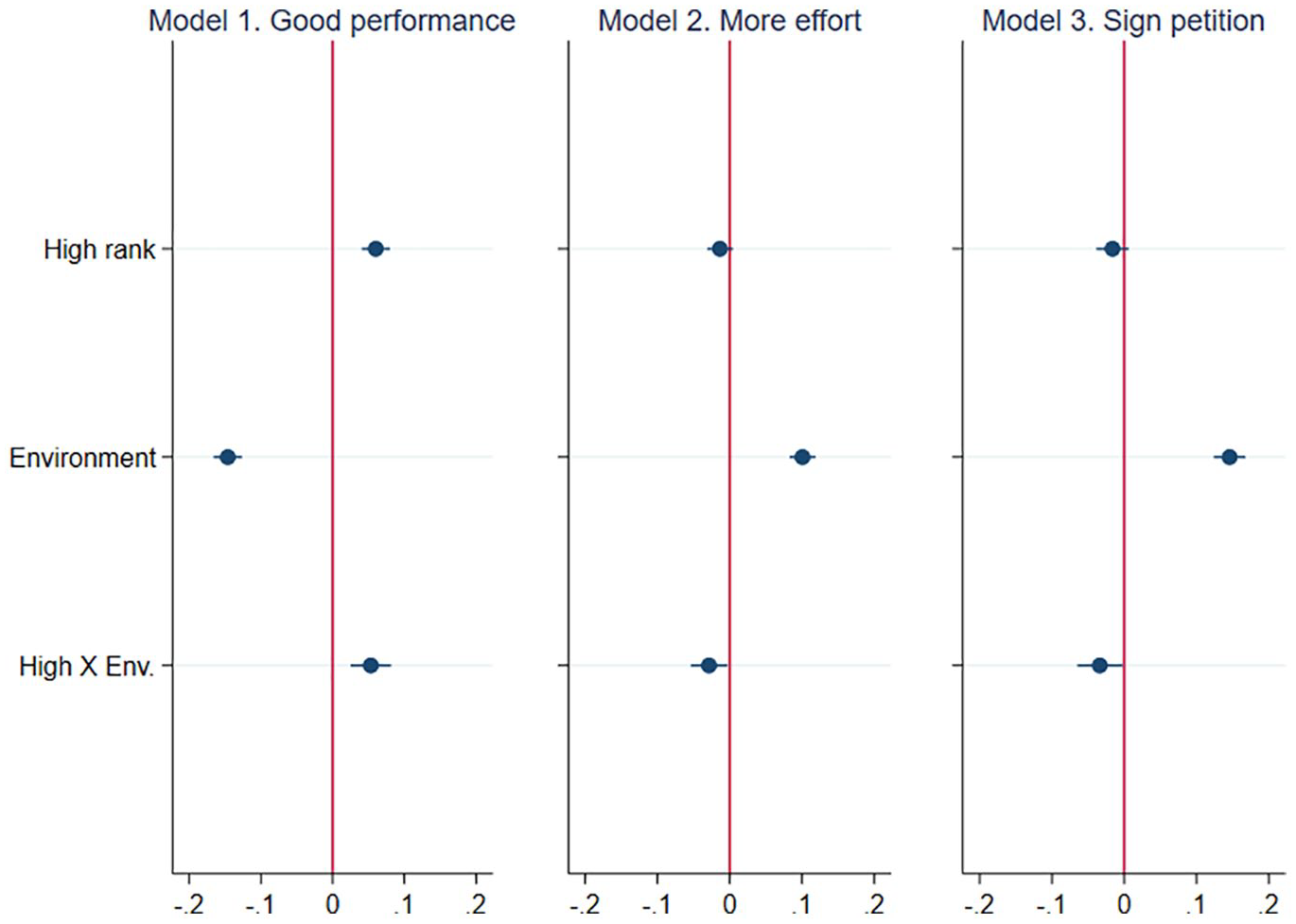

To test H2, we estimated three OLS models that capture the effect of the high-ranking treatment, conditional on the issue area (human rights vs the environment). These models include a binary indicator capturing the environment condition compared to the human rights condition, and a binary indicator capturing the interaction between high ranking and environment (1 if high ranking and environment, and 0 otherwise). We summarise the results of the conditional factors in Figure 3.

Testing for variation between issue areas.

All three models in Figure 3 provide support for H2. 12 In Model 1, the coefficient of the interaction between the high-ranking condition and environment condition is positive and significant (b = 0.05; p = 0.001), indicating that high ranking more strongly increases respondents’ evaluation of the country’s environmental performance (less polarising issue), compared to the human rights performance (more polarising issue). Specifically, the effect of high ranking in the environment condition is b = 0.11 (p < 0.001), compared to b = 0.06 (p < 0.001) for the human rights condition. 13

In Model 2, the coefficient of the same interaction is negative and significant (b = –0.03; p = 0.032). This means that the negative effect of high ranking on respondents’ support for policy improvement is stronger in the environmental area compared to human rights. In the environment condition, the effect of high ranking is b = –0.04 (p < 0.001), while the effect is insignificant for the human rights condition (b = –0.01; p = 0.106). 14

Examining the willingness for petition signing in Model 3, the coefficient of the interaction is again negative and significant (b = –0.03; p = 0.039): the high-ranking treatment more strongly reduces the willingness for action on the environment compared to human rights. While the effect of high ranking for the environment condition is significant at b = –0.05 (p < 0.001), it is insignificant for the human rights condition (b = –0.02; p = 0.117). 15
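The conditional effects reported for Models 1–3 follow from the usual reading of an interaction model: the high-ranking coefficient is the effect in the human rights condition (environment indicator = 0), and adding the interaction coefficient gives the effect in the environment condition. A minimal Python sketch of that arithmetic, using only the rounded coefficients reported above; the dictionary layout and function name are ours, for illustration only, and rounding to two decimals is an assumption that matches the reported precision.

```python
# Rounded coefficients as reported in the text for Models 1-3.
# "high" is the high-ranking effect in the human rights condition (environment = 0);
# "interaction" is the high ranking x environment coefficient.
REPORTED = {
    "Model 1 (evaluation of performance)": {"high": 0.06, "interaction": 0.05},
    "Model 2 (support for policy improvement)": {"high": -0.01, "interaction": -0.03},
    "Model 3 (willingness to sign a petition)": {"high": -0.02, "interaction": -0.03},
}


def conditional_effects(high, interaction):
    """Return (human rights effect, environment effect) of the high ranking."""
    # Assumption: two-decimal rounding, matching the precision used in the text.
    return round(high, 2), round(high + interaction, 2)


for name, coefs in REPORTED.items():
    hr, env = conditional_effects(**coefs)
    print(f"{name}: human rights = {hr:+.2f}, environment = {env:+.2f}")
```

The sums reproduce the environment-condition effects quoted in the text (0.11, –0.04 and –0.05), which is a quick consistency check on the reported coefficients rather than a re-estimation of the models.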

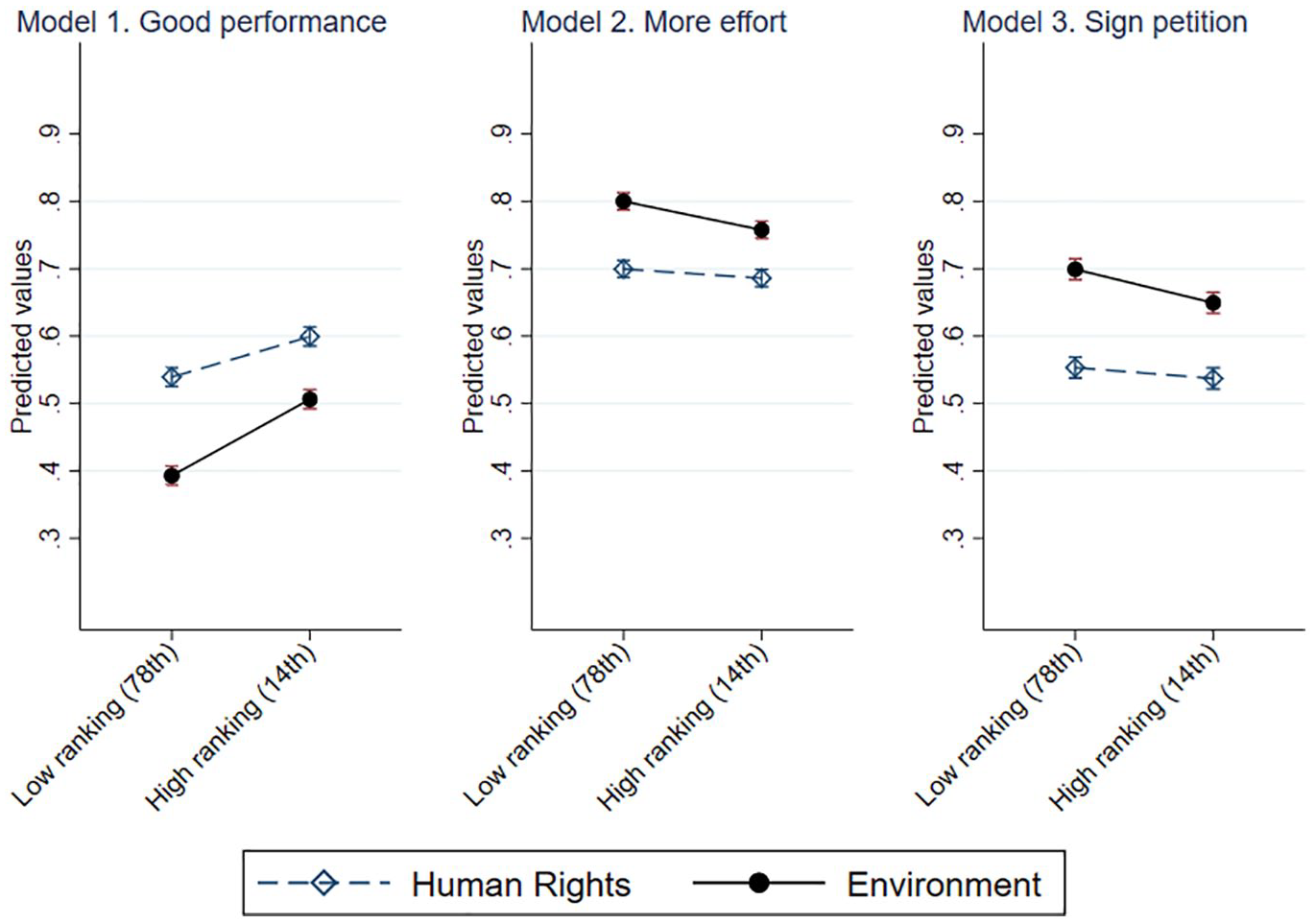

To facilitate interpretation of these findings, Figure 4 presents predicted values, based on Online Appendix Table D2. Across the three models presented, the high-ranking treatment exerts a stronger effect on the environment condition, compared to the human rights condition. For example, in Model 1, the predicted value of the first dependent variable (evaluation of performance) in the environment condition is 0.51 for those in the high-ranking condition and 0.39 for those in the low-ranking condition (difference of 0.12). In the human rights condition, the predicted value for those in the high-ranking condition is 0.60, while for those in the low-ranking condition, it is 0.54 (a smaller difference of 0.06).

Predicted values of the tests of H2.

Overall, Figures 3 and 4 offer strong support for H2: the high ranking more strongly affects the attitudes and behavioural intentions of Israelis on the environment. On this less polarised issue, they are more open to responding to new information and adjusting their views. It is harder, however, to change their views on the polarised subject of human rights.

Finally, we estimate three additional OLS models to test for the conditional effect of the ranking’s source (H3). The models, shown in Figure 5, include a binary indicator capturing the U.S. condition compared to the OECD and NGO conditions (combined, as Israelis harbour mistrust of both IOs and NGOs), as well as an interaction term between the high-ranking and U.S. indicators (1 if high ranking and the United States, and 0 otherwise). 16
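The coding of the H3 regressors can be sketched as follows. This is an illustrative Python sketch, not the authors’ code: the function name, the string labels for the conditions, and the dictionary keys are all hypothetical. It pools the OECD and NGO conditions as the reference category, as described above, and forms the interaction as the product of the high-ranking and U.S. indicators.

```python
def code_h3_indicators(ranking, source):
    """Code the H3 regressors for one respondent's assigned condition.

    ranking: "high" or "low" (hypothetical labels)
    source:  "US", "OECD", or "NGO" (hypothetical labels)
    """
    if ranking not in {"high", "low"}:
        raise ValueError(f"unknown ranking condition: {ranking!r}")
    if source not in {"US", "OECD", "NGO"}:
        raise ValueError(f"unknown source condition: {source!r}")

    high = 1 if ranking == "high" else 0
    us = 1 if source == "US" else 0  # OECD and NGO pooled as the reference group
    # The interaction is 1 only when both indicators are 1.
    return {"high": high, "us": us, "high_x_us": high * us}


print(code_h3_indicators("high", "US"))
print(code_h3_indicators("low", "US"))
print(code_h3_indicators("high", "NGO"))
```

With this coding, the coefficient on the interaction term captures how much the high-ranking effect differs when the ranking is issued by the United States rather than by the OECD or an NGO.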

Testing for variation across sources of rankings.

As Figure 5 shows, none of the three models provides support for our hypothesis. The coefficients of the interaction between the high-ranking condition and U.S. condition are small (bs ⩽ 0.02) and insignificant in all three models (ps > 0.16) while also taking the opposite sign to our prediction. Thus, we find no evidence of a differential effect of the rankings across the actors producing them. Sympathy for and trust in the United States do not render Israelis more susceptible to influence by the U.S.-issued ranking.

Robustness tests

We conducted several tests to strengthen the robustness of our results, reported in full in Online Appendix Section E: running all analyses without any control variables (Tables E1–E3); using ordered logistic (ordinal) regressions on the original variable (scaled 1–5) instead of OLS regressions (Tables E4–E6); treating those who answered ‘don’t know’ as the middle category instead of excluding them from the analysis (Tables E7–E9) 17 ; testing H3 with two interactions instead of one, to ascertain that the results do not merely reflect the combination of the NGO and OECD conditions (Table E10); testing for heterogeneous effects across respondents’ ideological leaning (right, centre, and left) (Tables E11–E13); and, finally, controlling for respondents’ prior attitude (i.e. the pretreatment item) to verify that any potential pretreatment differences did not affect the main results (Table E14). Overall, these tests further buttress our main results.
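The ‘don’t know’ robustness check amounts to a different recoding rule applied to the same responses. A sketch in Python, under stated assumptions: the 1–5 responses are rescaled linearly to 0–1 (the text reports effects on a 0–1 scale but does not spell out the transformation), ‘don’t know’ arrives as the string "DK", and the function name is hypothetical.

```python
def rescale_response(response, dk_as_middle=False):
    """Map a 1-5 response to the 0-1 scale.

    "DK" (don't know) is excluded by default, returned as None,
    or set to the scale midpoint when dk_as_middle is True.
    """
    if response == "DK":
        return 0.5 if dk_as_middle else None
    if response not in (1, 2, 3, 4, 5):
        raise ValueError(f"unexpected response: {response!r}")
    # Assumed linear rescaling: 1 -> 0.0, 3 -> 0.5, 5 -> 1.0
    return (response - 1) / 4


answers = [1, 3, "DK", 5]  # toy inputs for illustration, not survey data
print([rescale_response(a) for a in answers])
print([rescale_response(a, dk_as_middle=True) for a in answers])
```

Under the first rule the ‘don’t know’ respondent drops out of the analysis; under the second, that respondent is retained at the midpoint, which is the variant described for Tables E7–E9.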

Discussion and implications

Our study identified a clear impact of international rankings on domestic public opinion, consistent with our expectations: compared to a low-ranking scenario, citizens informed about their country’s high ranking expressed a more favourable assessment of the country’s performance, alongside weaker support for efforts to improve performance and a diminished willingness to sign a petition. Yet, these results only partially conform to the theoretical logic we outlined. We expected high rankings to lead citizens to evaluate their country’s performance more favourably and poor rankings to fuel the disapproval of existing policy. Instead, our results are driven solely by the high ranking, which indeed leads people to view the national performance more positively. By contrast, the low ranking does not diminish the public’s satisfaction with the country’s performance.

This finding requires an explanation. The simple and intuitive nature of rankings makes it easy to understand the message that they convey about government performance and its distance from international standards (Kelley and Simmons, 2015, 2019). Yet, we find that citizens reject that message when it is negative, indicating that their country is performing below many other countries; they only embrace a favourable message, which portrays their country as doing well compared to others. This selective response is consistent with self-enhancement theory, the psychological assertion that people seek to increase the positivity – or reduce the negativity – of their self-views (Leary, 2007; Sedikides and Gregg, 2008). The human preference for positive, self-enhancing evaluations from others explains why people are persuaded by high rankings, but not poor rankings, of their country. This, however, is bad news for rankings, whose primary goal is not to praise the successful countries; rather, rankings aim to pressure laggard countries to improve (Kelley and Simmons, 2019). Such pressure, we find, is unlikely to arise from domestic public opinion. A public reluctant to acknowledge the reality of underperformance, as reflected in a low ranking, will not demand that their country do better.

The bad news continues when one disaggregates rankings by issue area. As expected, a ranking of the environment – a less polarising matter in Israel – exhibited a stronger impact than a ranking of the more polarising human rights record. Figure 2 suggests the likely cause. Not only are people less open to rankings on a highly polarised matter, as we argued above, but they might react defiantly to poor rankings on a heavily polarised subject. Indeed, Figure 2 suggests a backlash triggered by the poor human rights ranking: respondents informed about the low ranking expressed a more favourable assessment of Israel’s human rights record. Several studies of verbal shaming have documented a similar backlash effect (Greenhill and Reiter, 2022; Gruffydd-Jones, 2019; Lupu and Wallace, 2019). Since individuals derive their self-esteem from the status of their group, shaming – which offends the group’s status – might leave them angry, frustrated, hostile, and prone to nationalist sentiments. This may translate into greater support for the government’s norm-violating conduct (Snyder, 2020a; Terman, 2019).

A counterproductive backlash, however, should have been less likely to result from poor numerical rankings. Words of condemnation such as ‘abusive’, ‘brutal’, or ‘dangerous’ might stir anger, hatred, and humiliation among citizens, triggering resistance, but a number is presumably more objective and gentle and less offensive or emotionally charged than strongly worded criticism (Snyder, 2020a: 112–113). Furthermore, unlike verbal shaming, low rankings do not pointedly criticise policy, nor do they explicitly identify legal or normative violations. Rather, they generate more subtle pressure through a comparison among countries. Also, a global ranking that evaluates a large number of countries seems fairer and more legitimate, and less biased, compared to verbal shaming that targets a specific country. All this should have made the public more tolerant of poor rankings.

Yet, we find that a poor ranking of a highly polarised issue might still feel like an impermissible, insulting foreign interference that could fuel a defiant response. More broadly, the difference between the effects of human rights and environmental rankings, as captured here, demonstrates the importance of looking across issues to gain a more complete understanding of international influences on public opinion (Greenhill, 2020; Koliev et al., 2022).

Citizens’ selective approach to rankings – embracing the good and rejecting the bad – also accounts for the similar effect of rankings across the different actors producing them, contrary to H3. The trust in the ranking’s source matters little, since citizens only heed the favourable rankings: they are willing to accept positive messages about their country even from sources that otherwise enjoy a low level of trust. Trust matters more for the acceptance of poor rankings that convey criticism about the national performance; but, as we have seen, citizens tend to dismiss such criticism (cf. Buda and Zhang, 2000).

Obviously, results obtained through a single-country design have their limitations, since the effect of rankings on public opinion may vary across countries. Furthermore, one may suggest that Israel constitutes a unique case: as their country constantly faces international criticism, Israelis may have developed an indifferent, dismissive attitude towards outsiders’ negative evaluations of their country. The failure of international rankings to produce their intended effect in our experiment might simply be an artefact of Israelis’ unique attitude. While we cannot rule out this interpretation, we do believe our findings have a broad applicability, beyond the case of Israel. The reason is that public-opinion studies in other countries, including the United States, have similarly shown that international criticism might be ineffective or even counterproductive, failing to change citizens’ attitudes in the desired direction (Greenhill and Reiter, 2022; Gruffydd-Jones, 2019; Kohno et al., 2023). The failure of negative rankings to change Israelis’ attitudes, as documented here, is consistent with these studies. Therefore, it may indicate a general, cross-country problem with the impact of rankings on public opinion. This impact might be diminished by people’s reluctance to hear outsiders disapproving of their country.

Our key message is that analysts of international rankings, and the actors producing them, should recognise the possibly limited – and even counterproductive – impact of this instrument on the public. In some cases, rankings may indeed achieve the desired impact of opening citizens’ eyes to the poor performance of their country. But in other cases, as we saw here, citizens might prefer to take in the positive rankings and dismiss the negative ones. For those wishing to amplify the pressure that rankings put on governments, public opinion might be of limited utility.

Supplemental Material

sj-pdf-1-bpi-10.1177_13691481241230859 – Supplemental material for Do international rankings affect public opinion?

Supplemental material, sj-pdf-1-bpi-10.1177_13691481241230859 for Do international rankings affect public opinion? by Amnon Cavari, Asif Efrat and Omer Yair in The British Journal of Politics and International Relations

Footnotes

Acknowledgements

We thank two anonymous reviewers for helpful comments.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study received funding from the Program on Democratic Resilience and Development (PDRD), which is supported by the Konrad Adenauer Foundation.

Supplemental material

Additional supplementary information may be found with the online version of this article.

Section A: Comparison of the sample to Israeli benchmarks
- Table A1. Comparison of the sample with a nationally representative sample

Section B: Text of the experimental vignettes

Section C: Balance tests for the experimental conditions
- Table C1. Balance tests for the High/Low Ranking experimental factor
- Table C2. Balance tests for the Issue Area experimental factor
- Table C3. Balance tests for the Ranking Source experimental factor

Section D: Full results of the multivariate analyses shown in the main text
- Table D1. Testing H1 (Figure 1 in the main text)
- Table D2. Testing H2 (Figure 3 in the main text)
- Table D3. Testing H3 (Figure 5 in the main text)

Section E: Robustness checks
- Table E1. Testing H1 – without any control variables
- Table E2. Testing H2 – without any control variables
- Table E3. Testing H3 – without any control variables
- Table E4. Testing H1 using an ordered logit (ordinal) regression
- Table E5. Testing H2 using an ordered logit (ordinal) regression
- Table E6. Testing H3 using an ordered logit (ordinal) regression
- Table E7. Testing H1 – with ‘don’t know’ responses included
- Table E8. Testing H2 – with ‘don’t know’ responses included
- Table E9. Testing H3 – with ‘don’t know’ responses included
- Table E10. Testing H3 – separating the three ranking sources
- Table E11. Testing H1 – testing for heterogeneous treatment effects by ideological self-identification
- Table E12. Testing H2 – testing for heterogeneous treatment effects by ideological self-identification
- Table E13. Testing H3 – testing for heterogeneous treatment effects by ideological self-identification
- Table E14. Testing H1–H3 – controlling for prior attitudes concerning the first dependent variable (evaluation of national performance)

Section F: Israeli public opinion on human rights and the environment

