Abstract

Prescription for Play (P4P) is a social impact program delivered during 18–36-month well-child checks to promote caregiver-directed play. To understand the impact of P4P on caregiver outcomes and whether it can be feasibly delivered in safety-net settings, this research evaluated P4P’s implementation across six federally qualified health centers (FQHCs). The association of P4P with caregiver outcomes was assessed using an interrupted time series design in which two separate caregiver samples completed surveys before (n = 180) and after (n = 262) P4P implementation. Implementation of P4P was further assessed using a mixed methods evaluation of 27 staff surveys, 25 staff interviews, and 44 clinic observations. Caregivers reported positive views towards play and a strong desire to play with their children before and after P4P implementation; independent samples t-tests showed that group differences were not statistically significant for any survey construct. Triangulation of quantitative and qualitative implementation data showed that P4P can be implemented as designed within varied FQHC settings and is acceptable among clinic staff. Although P4P does not appear to influence caregivers’ positive views of and strong investment in play, this study offers important evidence that P4P can be implemented to fidelity within FQHCs, supporting its feasibility for delivery in safety-net settings.

Introduction

Early childhood play improves life-course trajectories (Colliver et al., 2022; Normandin et al., 2023; Tessier et al., 2016; Walker et al., 2011) by fostering critical skills like language acquisition, physical capabilities, and socioemotional competencies (Dankiw et al., 2020; Lee et al., 2020; Pellegrini et al., 2007; Weisberg et al., 2016). Children from lower income families are less likely to have equal access to or opportunities for play due to factors like social and economic stressors (Milteer et al., 2012). These play disparities can impede the developmental course of under-resourced children by preventing them from fully benefiting from play (Milteer et al., 2012).

Numerous interventions have sought to increase play among under-resourced children by promoting play within homes (Worku et al., 2018), in classrooms and Head Start programs (Duch et al., 2019; Nicolopoulou et al., 2015; Scalise et al., 2017), and through built environment improvements (Audrey and Batista-Ferrer, 2015). Unfortunately, these previous play-based initiatives have been narrowly implemented (Audrey and Batista-Ferrer, 2015; Duch et al., 2019; Worku et al., 2018). Without significant social and structural changes to promote play universally, there remains a need to find creative solutions to deliver play-based interventions more widely (Souto-Manning, 2017). One underutilized strategy for increasing access to play-based interventions, particularly among under-resourced families, is to leverage well-child check (WCC) attendance (Yogman et al., 2018). The American Academy of Pediatrics (AAP) recommends that all pediatricians educate caregivers on play’s importance and encourage them to play with their children (Sulaski Wyckoff, 2018). However, limited efforts have attempted to develop a standard approach for integrating play discussions into routine WCCs to bolster caregiver-led play (Yogman et al., 2018).

One program aimed at addressing this gap is Prescription for Play (P4P; Emery Tavernier et al., 2024). P4P is designed for healthcare providers seeing 18–36-month-old patients for WCCs to increase play through caregiver education. P4P was developed in consultation with pediatric primary care providers in response to AAP’s recommendation that play education should be a standard component of WCCs (Sulaski Wyckoff, 2018; Yogman et al., 2018). P4P is guided by the Theory of Planned Behavior (Ajzen, 1991), which posits that one’s behavioral intentions are the strongest predictors of their actual behavior and that these intentions are influenced by attitudes toward the behavior, subjective norms of the behavior, and perceived control over the behavior (see Supplemental Figure 1). P4P primarily focuses on improving caregiver attitudes towards play through education, with potential opportunities to normalize and identify barriers to play.

Recent preliminary data from our group show that caregivers of children exposed to P4P report fewer developmental concerns on the Parents’ Evaluation of Developmental Status (PEDS) screening tool over time compared to those whose children never received P4P (Oo et al., 2024). The PEDS is a commonly used screening tool in pediatric primary care settings (Abdoola et al., 2023) designed to elicit caregiver concerns about early child development across language, motor, behavior, self-help, school, and social domains (Glascoe, 1999). Our research used electronic health record (EHR) data at a large federally qualified health center (FQHC) and found that the odds of caregivers reporting any concerns on the PEDS at subsequent WCCs were lower among patients who received P4P than among those who did not (OR = .64, 95% CI [.42, .98]; Oo et al., 2024). Nevertheless, P4P remains an evidence-informed program and is not yet evidence-based because no work has formally investigated the process and outcomes of its implementation.

Aim

This study aimed to (1) explore how P4P exposure influences caregiver attitudes and behavioral intentions towards play according to the Theory of Planned Behavior (Ajzen, 1991) and (2) assess P4P implementation among FQHC providers and clinic staff using an established implementation framework (Carroll et al., 2007; Hasson, 2010).

Materials and methods

Prescription for Play program

P4P is a partnership between the Weitzman Institute and LEGO® Group, supported by the LEGO Foundation. P4P is designed to help U.S. healthcare providers promote play in WCCs by offering a freely available virtual hub to healthcare teams that includes program resources and trainings, implementation toolkits, and technical assistance (https://www.rx4play.org/). Interested organizations enroll in P4P through the virtual hub by designating a site coordinator, who is responsible for supporting program implementation by ordering P4P kits and encouraging provider participation. P4P kits are free for participating organizations and contain age-appropriate LEGO® DUPLO® bricks and related educational materials, which are used as prompts during 18–36-month WCCs to engage caregivers on play’s benefits. Before distributing these kits, providers are required to complete a 15-minute online training video through the P4P virtual hub; the training is eligible for continuing medical education credits. The training video is taught by a pediatrician and includes a brief overview of play’s scientific benefits and a description of how to integrate P4P into 18–36-month WCCs. Providers are instructed to model play with their patients using the kit in real time while educating caregivers on play’s role in child development. In line with AAP recommendations (Yogman et al., 2018), providers are encouraged to give caregivers a “prescription” to play daily with their child and are advised to continue conversations on play and its importance at subsequent visits. Site coordinators and participating providers receive access to an electronic resource library, additional online trainings, and technical assistance. Support staff like nurses and medical assistants are often involved in the implementation workflow (e.g., preparing the kits for eligible patients) and are able to create their own account in the virtual hub but are not required to complete provider training.
P4P was pilot tested for feasibility and acceptability to inform its current resources, training, and implementation support (Panjwani et al., 2022).

P4P tracks information from participating organizations to document program implementation via a biannual impact report survey. Since December 2021, P4P has delivered almost three million kits to 1,686 organizations nationwide. Participating organizations consist of safety-net settings and lookalikes, private primary care and pediatric practices, private medical centers, hospital-affiliated practices, and health systems.

Procedures

Research site recruitment

P4P implementation was assessed at multiple FQHCs, which provide medical services at low or no cost to underserved communities (Jones et al., 2016; Wolf et al., 2018). Site recruitment occurred through broad outreach via an organizational listserv of over 72,000 individuals and targeted strategies via personal invitations to FQHCs that were likely to meet eligibility criteria. Recruitment material described study procedures and solicited applications from interested organizations. Eligibility criteria required participating organizations to (1) be an FQHC, (2) have at least 40% of patient households list Spanish as their preferred language to ensure language diversity, (3) demonstrate a dedicated team for data processing and transfers, and (4) have the ability to seek Institutional Review Board approval. Participating organizations were paid up to $50,000 to offset the staffing effort required to achieve research goals. Participating sites identified a site coordinator responsible for managing program implementation and research objectives. Site coordinators met monthly with the senior program manager to discuss research updates and troubleshoot challenges. Monthly meetings were conducted virtually throughout the study duration and lasted approximately 30 minutes. All sites otherwise received the same level of support offered to all participating P4P organizations via the virtual hub, with access to program implementation staff for technical assistance.

Interrupted time series design to assess caregiver outcomes

An interrupted time series design was used to understand whether P4P relates to caregiver outcomes. An interrupted time series design is a quasi-experimental approach used to evaluate intervention effects by comparing outcomes before and after implementation when randomization is not feasible (Hategeka et al., 2020). A baseline group of caregivers was recruited from each organization prior to P4P implementation. This baseline group of caregivers received a handout during their child’s 18–36-month WCC asking them to complete a pre-implementation phone survey on their views of and behavioral intentions towards play. During the pre-implementation phase, site coordinators ordered P4P kits and began establishing implementation procedures (e.g., locating storage space for kits and developing workflows) to facilitate implementation once they were approved to do so. The pre-implementation phase lasted up to 2.5 months or until 50 caregivers completed pre-implementation surveys, whichever came first.

Sites were approved to begin implementing P4P after completing the pre-implementation phase. Implementation involved having participating providers complete the required P4P training through the virtual hub. Completion of provider trainings was managed by site coordinators; training evaluations were not conducted. Trained providers then began implementing P4P during WCCs, often with support from other staff (e.g., nurses and medical assistants), who ensured workflows were followed. An additional sample of caregivers who received P4P during their child’s 18–36-month WCC was recruited to complete a phone survey identical to the pre-implementation survey. The post-implementation phase continued until the end of the study period or until 50 caregivers completed surveys, whichever came first.

All caregivers for the pre- and post-implementation surveys were required to (1) be ≥18 years old, (2) be the pediatric patient’s legal guardian, (3) have phone access, and (4) speak English, Spanish, or Haitian Creole (the primary languages spoken by patients at participating sites). Phone surveys were conducted by a third-party survey vendor (Crossroads Group, Inc.) within 2 weeks of the completed WCC. Caregivers received a $30 Amazon gift card as compensation.

Mixed methods assessment of program implementation

A mixed methods approach was undertaken to evaluate P4P implementation. This mixed methods approach was guided by Carroll’s modified framework for implementation fidelity to evaluate the process of implementing P4P (Carroll et al., 2007; Hasson, 2010). This framework suggests that implementation fidelity is measured by adherence and influenced by implementation moderators. Adherence is defined as the extent to which a program is delivered as designed and is measured by factors like program content (i.e., whether intervention components were implemented as planned) and coverage (i.e., whether the target population was reached) while implementation moderators are considered barriers and facilitators to implementation and include factors like quality of delivery and participant responsiveness. A convergent design was used in which qualitative and quantitative data were collected concurrently (Fetters et al., 2013).

A site visit was conducted with participating organizations in the first month post-implementation. Two trained, Master’s-level research assistants visited each site to complete observations of P4P implementation. Research assistants identified as female and did not have a relationship with any participating sites. Research assistants shadowed participating providers over the course of 2 days and sat in on a convenience sample of 18–36-month WCCs after receiving verbal permission from the caregivers of the pediatric patients to do so. Research assistants completed an observation protocol to document P4P delivery (James et al., 2017), which involved recording notable factors related to implementation adherence and moderators. Observation protocols were completed during or immediately following each WCC using a combination of checklists and qualitative memoing (Lofland et al., 2022). Observations were held for 18–36-month WCCs and were conducted in English, Spanish, or Haitian Creole. Research assistants who spoke Spanish attended visits conducted in Spanish, while all visits held in Haitian Creole had an interpreter present as part of standard medical care.

During site visits, research assistants also gathered surveys and qualitative interviews from a purposive sample of participating providers and clinic staff to better understand implementation adherence and moderators. Eligible providers and clinic staff were identified by site coordinators and included those actively involved in implementing P4P either by delivering P4P directly (i.e., providers) or supporting its implementation (e.g., medical assistants). Surveys conducted during site visits were completed on paper, while interviews were completed in person with the trained research assistant. Surveys took <10 min to complete, while interviews took <30 min. Eligible providers and clinic staff who were not available during site visits to complete surveys or interviews were given additional opportunities to do so remotely via an emailed survey link and an option to complete the interview online. In-person and online interviews were audio-recorded and transcribed verbatim.

Study procedures were approved by the Community Health Center, Inc. Institutional Review Board (Approval #1196) and complied with the ethical standards outlined in the Belmont Report and Declaration of Helsinki. All participants provided verbal consent prior to participating in study procedures. Data were collected between July 1, 2022 and December 31, 2023.

Measures

Caregiver outcome survey

To understand caregiver views on and behavioral intentions towards play, a survey was developed by the research team according to the Theory of Planned Behavior (Ajzen, 1991; see Supplemental Material). Survey development was informed by a comprehensive literature review focused on caregiver-child play engagement and the Theory of Planned Behavior framework as outlined by Montaño and Kasprzyk (2015). The survey was piloted with a single caregiver for feedback on content and understandability before the instrument was finalized. The final survey included four subscales that aligned with Theory of Planned Behavior constructs: (1) attitudes, (2) subjective norms, (3) perceived behavioral control, and (4) behavioral intentions. The attitudes subscale (4 items) assessed beliefs that play is enjoyable, pleasant, helpful, and valuable. The subjective norms subscale (3 items) evaluated support and approval from others regarding play. The perceived behavioral control subscale (3 items) assessed the extent to which play is within a caregiver’s control and not prevented by others. The behavioral intentions subscale (3 items) assessed intentions to play daily. Questions were rated using a 4-point Likert scale and the items within each subscale were averaged, with higher scores indicating more positive views of and higher behavioral intentions to play. Cronbach’s alphas ranged from .68 to .78 for the pre- and .63 to .79 for the post-implementation surveys. Only the behavioral intentions subscale had an alpha <.70, indicating largely adequate reliability. Caregivers also reported demographics (e.g., age and race/ethnicity).
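As an illustration of the scoring described above, the following Python sketch averages item ratings into a subscale score and computes Cronbach’s alpha from its standard formula. The response matrix is hypothetical and is not study data, the function names are illustrative only, and the published analyses were run in SPSS rather than with this code.

```python
from statistics import mean, variance

def subscale_scores(responses):
    """Average each respondent's item ratings (one row per respondent)."""
    return [mean(row) for row in responses]

def cronbach_alpha(responses):
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / variance of totals)."""
    k = len(responses[0])  # number of items in the subscale
    item_vars = [variance([row[j] for row in responses]) for j in range(k)]
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

# Hypothetical ratings on a 4-point scale for a 3-item subscale (NOT study data).
responses = [
    [4, 4, 3],
    [3, 3, 3],
    [4, 3, 4],
    [2, 2, 3],
    [4, 4, 4],
]

scores = subscale_scores(responses)
alpha = cronbach_alpha(responses)
print(scores, round(alpha, 3))
```

Sample variances (n - 1 denominator) are used throughout, which is the usual convention for this statistic.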

Implementation measures

Quantitative (surveys) and qualitative (interviews and observations) measures were designed by the research team according to Carroll’s modified framework for implementation fidelity (Carroll et al., 2007; Hasson, 2010) to evaluate specific components of program adherence (i.e., content and coverage) and potential implementation moderators (e.g., intervention complexity, quality of delivery, participant responsiveness, and facilitation strategies; see Supplemental Material).

Staff survey and interview

The staff survey asked respondents to report their current role in their organization and included 21 close-ended questions assessing alignment with program content (e.g., beliefs about P4P’s usefulness) and coverage (e.g., frequency with which play is discussed during WCCs) and implementation moderators (e.g., organizational features that support or impede implementation). Four open-ended questions allowed for additional opportunity to describe implementation moderators (e.g., challenges to implementation). A cognitive interview was conducted with one pediatric primary care provider during survey development to ensure clarity, relevance, and appropriateness.

Semi-structured staff interviews were conducted to provide deeper insight into implementation processes. Interviews consisted of 9 open-ended questions with additional probes that focused on assessing implementation moderators (e.g., participant responsiveness, quality of delivery, implementation barriers, and facilitators). Interviewees also reported their current role, gender, age, and race/ethnicity.

Clinic observation

The observation protocol was adapted from similar research on intervention fidelity (James et al., 2017) to align with Carroll’s modified framework for implementation fidelity (Carroll et al., 2007; Hasson, 2010). Adherence to P4P content was assessed using a checklist to determine whether or not providers (1) gave out the P4P kit, (2) discussed play’s importance on different aspects of child development (e.g., emotion regulation), and (3) delivered a “prescription for play” to caregivers. The length of time spent delivering P4P in minutes was recorded. Additional prompts to record implementation moderators (e.g., participant responsiveness, facilitation strategies) and contextual factors (e.g., clinic workflow) were provided through both checklists and prompts for qualitative memoing (Lofland et al., 2022).

Analysis

Analysis of caregiver surveys

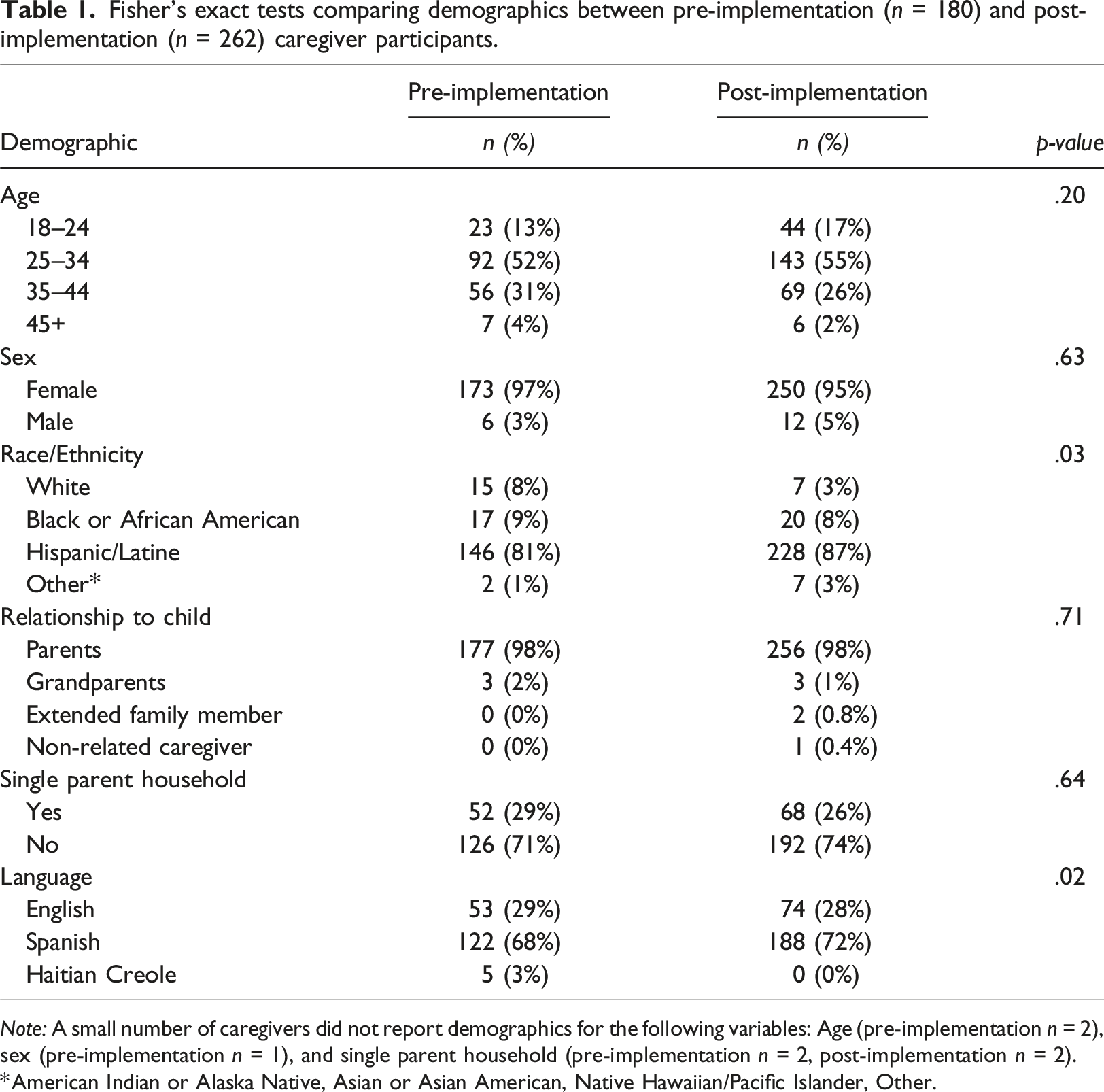

Statistical assumptions were tested prior to conducting analyses of caregiver data (Verma and Abdel-Salam, 2019). Given small cell sizes for several caregiver demographics, characteristics between pre- and post-implementation respondents were compared using Fisher’s exact tests, which are robust to small cell sizes (Winters et al., 2010). All survey subscales were normally distributed, with skewness and kurtosis values between ±2.0 (Verma and Abdel-Salam, 2019). No outliers were identified. Independent samples t-tests were thus used to compare survey responses between pre- and post-implementation groups. Results were interpreted according to whether equal variance assumptions were met. Analyses were completed using SPSS Statistics Version 27 (IBM Corporation, Armonk, NY, USA).
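To make the last step concrete, the sketch below computes the independent samples t statistic and its degrees of freedom under both the equal-variance (pooled) and unequal-variance (Welch) formulas, which correspond to the two result rows SPSS reports for this test. It is a minimal illustration using only the Python standard library: the two score lists are hypothetical, the function names are illustrative, and p-values are omitted because they require a t-distribution routine that the standard library does not provide.

```python
from math import sqrt
from statistics import mean, variance

def pooled_t(a, b):
    """Student's t and df, assuming equal variances in both groups."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    t = (mean(a) - mean(b)) / sqrt(sp2 * (1 / na + 1 / nb))
    return t, na + nb - 2

def welch_t(a, b):
    """Welch's t and Welch-Satterthwaite df, not assuming equal variances."""
    na, nb = len(a), len(b)
    va, vb = variance(a) / na, variance(b) / nb  # squared standard errors
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (na - 1) + vb ** 2 / (nb - 1))
    return t, df

# Hypothetical subscale scores for two independent groups (NOT study data).
pre = [3.50, 3.75, 4.00, 3.25, 3.75, 4.00]
post = [3.75, 3.50, 4.00, 3.75, 3.50, 4.00, 3.25]

print(pooled_t(pre, post))
print(welch_t(pre, post))
```

When the two groups have similar variances the two versions give nearly identical results; they diverge as the variances and group sizes become more unequal, which is why the choice of row depends on the equal-variance check.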

Mixed methods analysis of program implementation

Data from qualitative interviews were transcribed verbatim and observation texts compiled into separate Microsoft Excel documents. An inductive/deductive hybrid thematic analysis approach was used to separately evaluate interview and observation data (Proudfoot, 2023). Two independent coders (SAB, PR) reviewed each set of material for initial impressions and identified preliminary codes. Codes were deductively sorted into themes by team consensus according to the factors outlined by Carroll’s modified framework for implementation fidelity (Carroll et al., 2007; Hasson, 2010). Inductive themes were considered. Final codebooks for the qualitative interview and observation data were established by team consensus, and the two independent coders separately coded each set of material until 100% agreement was achieved.

Observation checklists and quantitative items from staff surveys were descriptively summarized and organized according to the factor they were designed to assess from Carroll’s modified framework for implementation fidelity (Carroll et al., 2007; Hasson, 2010). Because a limited number of open-ended comments from staff surveys were received, comments were sorted according to this same framework through team consensus rather than through qualitative analysis. Investigator and methodological triangulation of qualitative and quantitative data was used to document consistencies and discrepancies between the independent raters and data sources, which were reviewed until team consensus was reached (Morse, 1991).

Results

Research sites

Three research sites were recruited through targeted strategies while three additional sites were selected from the 243 organizations that responded to the broad outreach efforts. The six participating FQHCs were geographically diverse and located in California, Connecticut, Florida, Indiana, New York, and North Carolina.

Caregiver survey results

Caregiver characteristics

Fisher’s exact tests comparing demographics between pre-implementation (n = 180) and post-implementation (n = 262) caregiver participants.

Note: A small number of caregivers did not report demographics for the following variables: Age (pre-implementation n = 2), sex (pre-implementation n = 1), and single parent household (pre-implementation n = 2, post-implementation n = 2).

*American Indian or Alaska Native, Asian or Asian American, Native Hawaiian/Pacific Islander, Other.

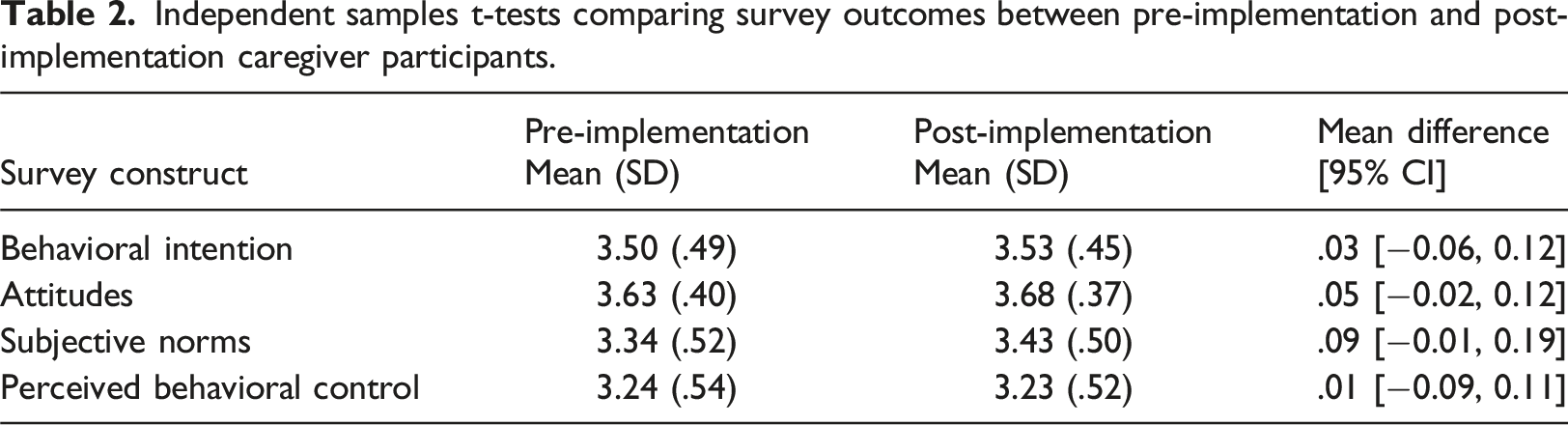

Caregiver outcomes

Independent samples t-tests comparing survey outcomes between pre-implementation and post-implementation caregiver participants.

Implementation results

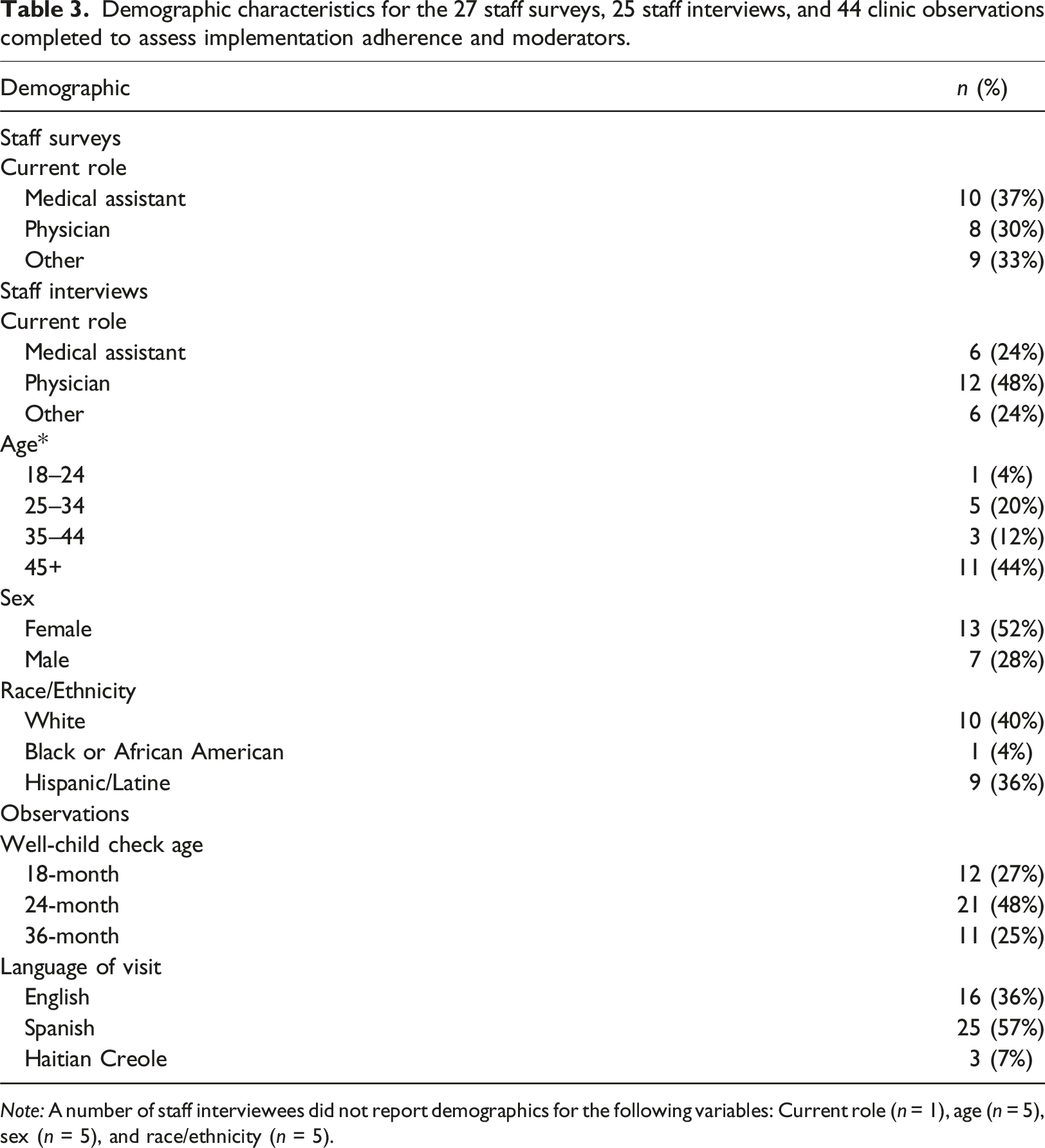

Care team and clinic characteristics

Demographic characteristics for the 27 staff surveys, 25 staff interviews, and 44 clinic observations completed to assess implementation adherence and moderators.

Note: A number of staff interviewees did not report demographics for the following variables: Current role (n = 1), age (n = 5), sex (n = 5), and race/ethnicity (n = 5).

Implementation evaluation

Mixed methods data were deductively organized according to Carroll’s modified framework for implementation fidelity, which included factors related to adherence and implementation moderators. No inductive themes emerged. Due to missing data across survey items, survey responses are reported according to the total number of respondents per item rather than the total number of overall survey respondents. Pseudonyms are used throughout.
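The per-item reporting convention can be sketched as follows: respondents who skipped an item are dropped from that item’s denominator before the count and percentage are computed. The Python below is a minimal illustration; the function name and the response list are hypothetical, with the list arranged only to mirror the “15 (68%) of 22” format used in the results that follow.

```python
def summarize_item(responses, target_values):
    """Return (count, respondents, percent) for one survey item.

    `None` marks a skipped item and is excluded from the denominator.
    """
    answered = [r for r in responses if r is not None]
    count = sum(1 for r in answered if r in target_values)
    percent = round(100 * count / len(answered))
    return count, len(answered), percent

# Hypothetical item-level data: 27 surveys, 5 of which skipped this item.
item = ["rarely"] * 15 + ["always"] * 7 + [None] * 5

count, n, pct = summarize_item(item, {"occasionally", "rarely", "never"})
print(f"{count} ({pct}%) of {n}")
```

Reporting the denominator alongside each percentage, as the results do, lets readers see how much item-level missingness sits behind each figure.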

Adherence

Two subthemes related to adherence were deductively identified: coverage (i.e., whether the target population was reached) and content (i.e., whether intervention components were implemented as planned).

Coverage

According to survey data, 15 (68%) of 22 respondents reported occasionally, rarely, or never incorporating discussions about the importance of play into WCCs before completing P4P training, with 14 (58%) of 24 respondents reporting that they began always doing so after completing P4P training. Interviewees noted that P4P training encouraged them to more consistently discuss play, with one pediatrician stating: “I find myself talking about play in other visits where I'm not giving the [play kit] a lot more, but I think having the [play kit] and being able to just model some of the behaviors is very helpful.” (James)

In addition, 7 (33%) of 21 survey respondents reported inviting other providers from their organization to complete P4P training to expand its reach.

Content

Among the 26 survey respondents who reported their views on P4P, 24 (92%) somewhat or strongly agreed they were excited about P4P, 25 (96%) found P4P valuable, and 25 (96%) believed play is important to development. Observations showed that most providers demonstrated alignment with program goals. In all 44 (100%) observations, providers successfully gave out the P4P kit, during which 26 (60%) modeled play. In 42 (95%) observations, providers discussed play’s importance. Meanwhile, providers delivered a specific “prescription for play” to caregivers in 27 (61%) observations. Observations were consistent with interviews, with most respondents describing success in implementing P4P as designed. One pediatrician stated: “[When I deliver P4P], I talk about the different kinds of areas that we're looking at for development, fine motor skill, gross motor skills, and problem solving and social skills and how they're learning a lot of those skills as they're playing with toys. And also as [caregivers are] playing with them with the toys. Usually I'm playing with the toys a little bit with the kids back and forth at the same time.” (Sarah)

However, staff surveys indicated more variability in adherence to program content, with only 10 (45%) of 22 respondents reporting that they model play using P4P kits at least most of the time.

Implementation moderators

Several implementation moderators that promoted program adherence were deductively identified across data sources: intervention complexity, quality of delivery, participant responsiveness, and facilitation strategies.

Intervention complexity

Staff indicated that P4P fit nicely into their existing visits, with 16 (89%) of 18 survey respondents agreeing that P4P’s content is relevant to their practice. In interviews, staff described P4P training as a low-effort activity that enhanced their knowledge, further noting that the intervention itself was not time-consuming and complemented their workflows. One medical director described P4P training by saying: “It was pretty straightforward and easy. It was fun. I guess it wasn't overwhelming.” (Steven)

Observations confirmed the simplicity of the intervention by demonstrating that P4P took an average of 2.9 ± 1.9 minutes to deliver, with core messaging largely built into standard anticipatory guidance. However, some interviewees noted that the additional time needed to deliver P4P was challenging at times, with one pediatrician saying: “The challenges are always the challenges [of] time, right? Some of these visits are a little bit more labor-intensive visits and working things in can sometimes be a challenge.” (William)

Quality of delivery

In interviews, providers described tailoring play education based on patient needs, with one medical director stating: “You have to know your audience. I think that as far as what goes on in the room that is what dictates what happens in the room discussions.” (Steven)

Of the 44 observations, 28 (64%) included encouragement to use simple toys and/or have screen-free time as well as discussion of play’s role in language acquisition and speech development. In addition, 24 (55%) observations included discussions on the relationship between play and brain development, 23 (52%) included discussions on play and socioemotional skills, and 14 (32%) included discussions on play and emotional regulation.

Participant responsiveness

A key facilitator to P4P implementation perceived by clinic staff was its positive reception by families. In interviews, staff reported the toy was appreciated as a free resource, with one medical assistant noting: “The kids get happy…and parents get a little bit more trustworthy [when you give them the P4P kit].” (Maria)

Observation memos showed that children were often eased or comforted by the playfulness P4P brought. Interviewees noted this allowed them to better evaluate their patients’ developmental status because children were more likely to display physical evidence of developmental milestones, like stacking blocks, speaking, or interacting socially, when comfortable, with one pediatrician noting: “I also love making comments to the parent about how the kid is playing [with the P4P kit] while we're doing the exam…I think it can promote discussion that way about developing and what we're looking for.” (Jennifer)

Meanwhile, some interviewees expressed challenges with engaging caregivers in play discussions, which may relate to different approaches in program delivery. One medical assistant stated: “I feel like some [parents] give you their attention and some don't…Thinking of ways to kind of make it exciting.” (Ana)

Caregivers were seen to engage more actively in P4P when providers modeled play during observations. In 11 (25%) observations, families did not play with the P4P kit, and of those 11 visits, 9 (82%) did not have providers model play, which may have impacted caregiver engagement.

Facilitation strategies

EHR tracking, workflows, and storage plans facilitated P4P implementation. Sites participating in Reach Out and Read (Zuckerman and Khandekar, 2010) were observed to incorporate P4P into the same workflow they used for distributing books, which appeared to simplify the process. One pediatrician expanded on this in an interview: “The [P4P kits] are right under our Reach Out and Read books… So it makes it very easy when we go to get the book, we go and grab the blocks and the handouts. And it makes it easy to remember to do it and it's not a lot of other running around trying to grab stuff.” (Henry)

Additional interviewees discussed challenges when implementation systems were not in place, with one medical assistant remarking: “I think it's just something that needs to get into the routine. But we just need to find a better process of how we need to do it…I think if [the responsibility of implementing P4P is] all going to the [medical assistant], that's probably going to be like a big barrier.” (Daniella)

The importance of these organizational systems was further reflected in open-ended comments in staff surveys, with respondents expressing several challenges they faced in delivering P4P kits when organizational systems were not in place, like “Making sure each patient who qualifies for a kit gets identified” and “Running out of P4P kits.”

Discussion

Contrary to expectations for our first aim, P4P did not alter caregiver views and behavioral intentions towards play. However, results provide preliminary support for our second aim that P4P can be implemented as designed within safety-net settings.

Caregivers reported positive views of and a high investment in playing with their children regardless of P4P exposure, which likely resulted in a ceiling effect that made detecting group differences difficult (Šimkovic and Träuble, 2019). These findings are consistent with prior qualitative research among low-income families demonstrating that parents view play favorably and report a strong desire to encourage their children’s developmental skills through play (Shah et al., 2019). Although behavioral intentions are one of the strongest predictors of actual behavior (Fishbein and Ajzen, 1977; Michie et al., 2011), we did not assess whether the high behavioral intentions reported by caregivers translate to play habits. Given that P4P aims to increase caregiver-directed play, future work is needed to clarify whether P4P promotes measurable changes in the frequency and amount of play that caregivers engage in with their children to better evaluate its effectiveness.

Despite the null caregiver findings, this research provides support for implementation adherence when delivering P4P in FQHCs. Perhaps most importantly for program implementation and sustainability (De Wit et al., 2018; Green et al., 2012; Lau et al., 2016; Vendetti et al., 2017), we found high provider and staff buy-in, with over 90% of providers reporting P4P to be exciting and valuable. Nearly 60% of providers reported always discussing play after completing P4P training, and a majority demonstrated fidelity to P4P implementation during observed visits. However, adherence to P4P content was lower in anonymous surveys, suggesting that observer effects may have contributed to the high fidelity found during clinic observations (Oswald et al., 2014).

In line with previous research examining determinants of intervention uptake in primary care settings (Lau et al., 2016), several additional implementation moderators influenced P4P delivery. Given competing demands and time constraints during routine WCCs (Liljenquist et al., 2023; Okobi et al., 2023), P4P’s simplicity was viewed as an important strength, with intervention delivery taking less than 3 minutes on average. Although some providers expressed challenges with incorporating P4P during busy WCCs, many were observed to integrate P4P messaging into their usual anticipatory guidance, which supported the quality of P4P delivery without notably impacting visit length. This approach of integrating intervention content into standard developmental conversations during WCCs mirrors that used in similar pediatric primary care programs that have been scaled nationally (Klass et al., 2009). Clinic staff further reported high caregiver responsiveness to the P4P program, with greater patient engagement observed when providers followed recommendations to model play with their patients. These findings suggest that P4P is likely to be acceptable among its target population, which is a necessary component of intervention success (Sekhon et al., 2017), though future work is needed to confirm this. Finally, adherence to P4P was enhanced when organizational systems that supported P4P implementation were in place, such as EHR tracking, established workflows, and storage plans. The need for clear and established implementation support systems is consistent with previous work identifying clinic infrastructure as a key contributor to intervention success in primary care settings (Lau et al., 2016).

Study limitations

This research had several limitations. First, response rates to the caregiver survey were low, and responses may be subject to social desirability or selection bias. Second, providers and families may have experienced an observer effect during clinic observations and altered their behaviors to appear more favorable to program adherence. Third, the measures used were researcher-designed and did not include previously validated assessments. Finally, this study was conducted in FQHCs with a caregiver sample composed largely of Hispanic/Latine patients who identified as women and reported Spanish as their preferred language, which limits generalizability.

Implications for practice

P4P demonstrates feasibility within FQHCs. Its integration into routine well-child care was widely supported by providers, who reported high levels of enthusiasm and perceived value. The brevity and simplicity of the intervention facilitated implementation even within time-constrained clinical settings. These findings suggest that, with appropriate training and workflow integration, P4P can support pediatric primary care providers in following AAP recommendations to include play education as a standard component of well-child care (Sulaski Wyckoff, 2018; Yogman et al., 2018). However, these findings should be interpreted as preliminary. Future hybrid trials are needed to more comprehensively document P4P’s effectiveness alongside its implementation to inform organizational decisions to implement the program.

Conclusion

In summary, this study evaluated the early feasibility and implementation outcomes of P4P in safety-net pediatric settings. While caregiver outcomes did not differ by exposure to P4P, the program was well-received by clinic staff, who successfully integrated it into WCCs. These findings offer important preliminary insights into the implementation of a play-focused intervention in routine pediatric care.

Supplemental Material

Supplemental Material for Prescription for play: Implementing a pediatric play promotion intervention in federally qualified health centers by Rebecca L. Emery Tavernier, May Oo, Shelby Anderson-Badbade, Lynsey G. Huppe, Peyton Rogers, Ho-Choong Chang, Randall W. Grout, Sal Anzalone, Kelechi Ngwangwa, Joan East, Jan Lee Santos, and Mandy Lamb in Journal of Child Health Care

Footnotes

Author’s note

Funding from the LEGO Foundation provided salary support for RLET, MO, SAB, LGH, PR, HCC, and ML. To support data collection, this funding further provided organizational payments to the participating health centers affiliated with RWG, SA, KN, JE, and JLS. RWG, SA, KN, JE, and JLS did not directly receive any financial incentives for their involvement in this research. Although Prescription for Play is conducted in partnership with the LEGO Group, none of the authors are affiliated with the LEGO Group, and this research was conducted without input from the LEGO Group. The LEGO Group was not involved in any of the research activities described in this manuscript. The LEGO Group had no influence in the data analysis, reporting, or writing of this manuscript. The results reported herein do not necessarily reflect the views held by the LEGO Group.

Acknowledgments

The authors would like to acknowledge the contributions of the participating organizations, clinic staff, and caregivers, without whom this research would not be possible.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the LEGO Foundation.

Ethical consideration

All study procedures were approved by the Community Health Center, Inc. Institutional Review Board (#1196).

Consent to participate

All participants provided verbal consent prior to participating in the study procedures.

Data Availability Statement

The datasets supporting the conclusions of this article will be made available upon reasonable request to the corresponding author.

Supplemental Material

Supplemental material for this article is available online.

References
