Abstract

Aims and Objectives:

Sentence repetition (SRep) tasks are a popular, valid, and cost-effective method of measuring language development. However, their heterogeneity and flexibility of use and adaptation may impact the obtained results. This may be particularly significant when using SRep tasks to identify language problems in children.

Methodology:

To bring attention to this issue, we carried out a systematic review of English-language studies using SRep tasks with samples of bilingual children with and without language problems. We report the results according to the 2020 PRISMA guidelines.

Data and Analysis:

A total of 774 records were screened, resulting in 141 studies subjected to a narrative analysis to characterize the versions of the reported SRep tasks used.

Findings:

Aside from summarizing broad publication trends regarding SRep tasks in studies on bilingual children, our systematic review found variability in the specific formal characteristics of the SRep tasks as reported in the publications. In particular, task standardization, length, and procedure as well as the use of analog versus digital versions of the SRep task emerged as potentially significant areas of differences.

Originality:

We offer an overview of how SRep tasks are structured and reported in the literature on bilingual children and language problems, pointing to areas of difference which, unless further examined, may impair conclusions and generalizations. We also offer suggestions on how to improve the transparency and clarity of reporting the methodological details of the SRep tasks.

Implications:

The systematic review lays out directions for further studies to refine the SRep methodology as applied to bilingual children and to the identification of language problems. Our findings have the potential to stimulate empirical research into how various characteristics of the SRep task may introduce unwanted variability into the measurement.

Introduction

Sentence repetition (SRep) tasks are a language assessment in which participants are asked to repeat sentences verbatim as they are presented one at a time. They are commonly used to assess children’s morphosyntactic development and to identify language difficulties. SRep tasks have been traditionally used in studies of language processing and in clinical neuropsychological diagnosis (e.g., Fraser et al., 1963; Jarvella, 1971; Meyers et al., 2000; Vinther, 2002). They are also a valuable diagnostic tool for language disorders (Klem et al., 2015), particularly specific language impairment (SLI), developmental language disorder (DLD), and/or language impairment (LI) in bilingual children (Bishop, 2017). We use these terms as defined and/or used in the publications we refer to. SLI refers to children with language impairments of unidentifiable causes and with typical development and cognitive skills. DLD addresses SLI’s reliance on defining by exclusion, referring to children with language acquisition difficulties and making no reference to cognitive functioning as a criterion. Finally, as a broader term, LI encompasses various language issues without reference to the specific criteria of SLI, focusing on the impact of language difficulties on functioning, regardless of cause, cognitive level, or development in other areas (see Reilly et al., 2014; Volkers, 2018).

In this context, SRep tasks have shown high sensitivity to SLI, both in monolingual and bilingual children (Conti-Ramsden et al., 2001; Marinis & Armon-Lotem, 2015; Riches, 2012). Combined with their relative simplicity of design and use, this has made SRep tasks popular (Rujas et al., 2021). They are included in test batteries or as stand-alone tasks (e.g., Marshall et al., 2015; Stokes et al., 2006) and are versatile in terms of their application and purpose. For example, Kueser and Leonard (2020) employed an original SRep task involving “a series of four-word sequences” created to vary in terms of their frequency in spoken language as well as the level of predictability of the fourth word based on the preceding three (e.g., “All through the town,” “All through the hay,” “Go for a ride,” “Go for a bath” as high frequency/high predictability, low frequency/high predictability, high frequency/low predictability, and low frequency/low predictability, respectively, p. 1171). Importantly, predictability was based on corpus data. In this way, the task allowed for measuring word frequency and predictability effects on the performance of children with DLD compared with typically developing children. Notably from the point of view of our systematic review, Kueser and Leonard (2020) also report the modality of the stimuli (audio recordings, female speaker), the mode of administration (presented in-person by the experimenter), and the presence of training/trial items and feedback provision.

In contrast, regarding standardized SRep tasks, one widely used tool (although not the only one available, see, for example, Ward et al., 2024) is the LITMUS-SRep, developed as part of the COST Action IS0804 project, which has been adapted to various languages for cross-linguistic comparison (Marinis & Armon-Lotem, 2015). Developing parallel SRep tasks across languages required balancing cross-linguistic comparability with language-specific sensitivity. To achieve this, LITMUS-SRep tasks include two types of structures: those known to be challenging for children with SLI across languages, such as object relative clauses and object wh-questions, and those that are particularly difficult in a given language, based on prior research. For instance, one of the structures used across languages is the object relative clause, which involves both embedding and syntactic movement. Examples include:

English: “The swan that the deer chased knocked over the plant”

Russian: “jeto devochka, kotoruju narisovala mama” (“This is the girl that the mother drew”)

Hebrew: “ra’iti et ha-kelev še ha-sus daxaf” (“I saw the dog that the horse pushed”)

These structures were included because they have consistently been found to pose difficulties for children with SLI across typologically different languages, making them suitable for cross-linguistic assessment of morphosyntactic development.

There are several crucial features of SRep tasks to consider. Above all, they must be constructed separately for each language in order to account for language-specific features and developmental difficulties (Antonijevic & Meir, 2024; Fleckstein et al., 2016; Marinis & Armon-Lotem, 2015). For instance, passives are generally difficult across languages, but the nature of this difficulty varies across populations. English-speaking children with SLI have difficulty with passives, but typically developing bilingual children do not. In other languages, including Hebrew and Russian, passives pose a general difficulty due to their infrequency or the complexity of the case system, essential for understanding passive structures, making them particularly challenging for bilinguals. In Polish, complex sentences with relative clauses and prepositional phrases, such as “Ona idzie po trawie, na której leżą deski” [She is walking on the grass, on which boards are lying] are difficult due to the complex inflectional system. The noun “trawa” (grass) is inflected to “trawie” to indicate the locative case, triggered by the preposition “po” that requires the noun it governs to reflect location. Similarly, the relative pronoun “której” agrees with “trawie” in gender, number, and case (singular, feminine, locative) to maintain grammatical coherence within the relative clause “na której leżą deski.” The verb “leżą” is inflected for third person plural, aligning with its plural subject “deski,” while the prepositional phrase “na której” is locative. This alignment of case, number, and gender across different parts of the sentence through inflection exemplifies the complexity.

Although the task may appear to be memory-based, research has demonstrated that performing well on syntactically complex items requires morphosyntactic processing. Specifically, when sentence structures are sufficiently complex, accurate repetition requires speakers to access and apply grammatical knowledge rather than rely on simple recall (Erlam, 2006; Frizelle et al., 2017). As Devescovi and Caselli (2007) have noted, reproducing such structures involves the speaker reconstructing them internally based on their linguistic competence.

SRep tasks are also employed for a wide range of study goals, and their characteristics may be adjusted accordingly. Thus, for example, the authors may need to construct a new SRep task to measure proficiency in a given, typically minority, language. Alternatively, they may analyze the SRep data in nonstandard ways, for example, focusing on instances of codeswitching (Soesman et al., 2022).

The way SRep tasks are administered also varies considerably. Sentences may be read by the person administering the task or may be presented on a tablet/computer with prerecorded sentences. Short visual elements and animations may also be added to make the task more engaging for children. Moreover, SRep tasks may differ in length, may be employed stand-alone or within a longer measurement session, and their administration may involve intermittent rewards or encouragement. Finally, with bilingual children, the instructions and administration of the SRep task need not necessarily be in the same language as the SRep stimulus sentences themselves (e.g., Aguilar-Mediavilla et al., 2019; Grech, 2022). On another level, the reporting of these features in publications is also important to maintain transparency. Similar to reporting other methodological choices and procedures, omitting this information or assuming it is intuitive runs the risk of occluding its potential impact on the obtained results (see Zogmaister et al., 2024).

Given the variability observable in the specific elements and administration of SRep tasks, which, similar to other areas of psychological testing, may impact the obtained results and their reliability and/or validity in clinical practice, we conducted a systematic review to describe and systematize these differences. In the research context, an unacknowledged variability between different versions of the tasks—and the assumption that they are equivalent without verification—may hinder the ability to compare and generalize results between samples and studies (Gilmore & Campbell, 2019; Nosek et al., 2022; Wolfe-Christensen & Callahan, 2008). Thus, our systematic review examined this variability in SRep task design and reporting to highlight potential concerns, stimulate further research, and suggest best practices.

Sentence repetition tasks in bilingual children

Notably, despite the increasing volume of research on bilingualism, most language development assessment tools, including SRep tasks, are primarily designed with monolingual speakers in mind. The tasks frequently rely on normative data derived solely from monolingual populations, which may not adequately capture the linguistic abilities of bilingual speakers. This may further exacerbate existing issues with SRep task standardization and the interpretation of results. Differences in how bilingual children perform on SRep tasks compared to monolingual peers can be attributed to their different language development trajectories, particularly because bilinguals often develop language skills in a nonlinear manner. Although bilingual children reach early language milestones (specifically babbling, first word, 10th word, 50th word, and first multi-word utterance) at the same age as their monolingual peers (Muszyńska et al., 2025), their grammatical development may follow a different trajectory than that of monolingual children. Their language development might progress rapidly in some areas and slowly in others, influenced by varying levels of exposure to each language, differing contexts in which the languages are used, and the cognitive demands of managing two linguistic systems simultaneously (see Marinis & Armon-Lotem, 2015). Given that bilingual children may follow different language developmental trajectories, there is a need for tasks standardized for multilingual speakers. Otherwise, if they are tested with tasks standardized for monolinguals, there is a significant risk of inaccurately assessing their language abilities and, in applied settings, of making suboptimal decisions based on these data, which may impact the children’s education and functioning (Cao & Yan, 2024; de Jong, 2024; Hunt et al., 2022). Therefore, it is important to study how tasks assessing language development work with bilingual children specifically.

However, few studies thus far have examined the potential impact of differences in SRep task characteristics on the obtained results. For example, Banasik-Jemielniak et al. (2023) administered online and in-person versions of the SRep-PL task (Banasik et al., 2012) to a sample of 60 Polish-English and Polish-German bilingual children aged 4 to 7 years. Despite a positive correlation between the online and offline versions, the authors found statistically significant differences in the children’s scores between the two task modalities, and also noted that the pattern of performance differed for multilingual and monolingual children. In contrast, Pratt et al. (2022) compared the scores of 10 children (5 monolingual English and 5 Spanish-English bilinguals) aged between 4 and 8 years on online and offline versions of a range of language assessment tools, which included an SRep task, and found that the scores obtained in both task modalities were highly correlated, which the authors took to imply no significant changes between the modalities. Therefore, available evidence suggests potential variability in bilingual children’s SRep scores depending on administration modality, a point which deserves further attention and study.

COST Action IS0804 and the LITMUS-SRep

A notable initiative in the context of standardizing the SRep tasks for use with bilingual children is the 2009 COST Action IS0804, entitled “Language Impairment in a Multilingual Society: Linguistic Patterns and the Road to Assessment.” It was aimed at enhancing the understanding of language impairment in multilingual settings, addressing the complexities of diagnosing and assessing language impairments in individuals who speak multiple languages. The core of the project was to determine linguistic patterns and developmental trajectories that are typical and atypical among multilingual children, a population that is growing in many parts of Europe due to increased migration and mobility.

One of the significant outcomes of COST Action IS0804 was the development and widespread adoption of the LITMUS (Language Impairment Testing in Multilingual Settings) tools, which include a variety of tasks designed to assess language abilities in children speaking different languages. One of the tools is the LITMUS-SRep task. It is designed to be adaptable across various languages to disentangle bilingualism from SLI. The primary goal was to create a standardized method to assess sentence repetition performance across a wide range of languages while taking into account linguistic and cultural differences. Two main principles guided the LITMUS-SRep task framework: inclusion of syntactically complex and simple structures and adaptation to language-specific features. That is, tasks included syntactically complex structures known to be difficult for children with SLI or language impairments across languages, such as object relative clauses, conditionals, and sentences involving syntactic movement. Also, control structures, such as mono-clausal sentences, were included to provide baseline comparisons. Each language version of the LITMUS-SRep task incorporated structures uniquely challenging for children with SLI or language impairments, but manageable for bilingual children with typical language development (Marinis & Armon-Lotem, 2015).

The current study

Although the COST Action IS0804 represents a crucial step toward improving the standards of SRep task use, the methodologies of SRep task use and their various elements remain heterogeneous across studies. This represents a potential, though untested, source of confounds in results, which may have consequences in both research as well as applied settings. Therefore, to further stimulate attention and research in this area, our purpose was to examine the patterns of variability in the formal characteristics of SRep tasks as reported in English-language studies on language development in bilingual children with and without SLI. Importantly, we highlighted both the SRep features themselves as well as the ways they were reported. To this end, we carried out a systematic review of published empirical studies. We sought to describe the most common patterns of features, contexts of use, and reporting practices of SRep tasks in these studies, outline potential future directions of research and areas requiring more attention, stimulate awareness of current methodological issues concerning the reporting of the SRep tasks in studies, and suggest tentative best practices, both in terms of SRep administration as well as the reporting thereof.

We are aware of several other systematic reviews and/or meta-analyses concerning this topic. First, Pawłowska (2014) carried out a meta-analysis of studies using verb tense grammaticality judgment tasks, nonword repetition tasks (NWR), and SRep tasks to establish their accuracy in diagnosing language impairment in monolingual English-speaking children (aged 3–12, with one study on a sample of 21-year-olds). Her systematic review included four studies using two types of SRep tasks: Redmond’s (2005) Sentence Repetition and the Recalling Sentences subtest from the CELF-4 (Semel et al., 1994). In contrast, our systematic review focused only on SRep tasks. In addition, we did not examine their diagnostic accuracy per se. Rather, we were interested in examining the differences in their contexts of use and formal characteristics, and in drawing attention to the need to systematize them. Second, Rujas et al. (2021) carried out a scoping review in which they established the patterns of SRep task use for diagnosing language difficulties in the years 2010–2021, focusing, for example, on the task languages, the studied populations, or the constructs assessed using SRep tasks (e.g., language abilities vs cognitive abilities). Our systematic review aimed to answer a more specific research question regarding the features of the SRep tasks in the context of bilingual children. While SRep tasks have been extensively utilized in linguistic and clinical research, the diversity in their implementation raises questions about the comparability of findings across studies, especially in the context of bilingual children’s language assessment. This heterogeneity not only reflects the adaptability and versatility of SRep tasks to meet specific research needs but also points to the potential methodological challenges in ensuring consistent and reliable research and diagnostic outcomes. Third, Ward et al. (2024) showed that SRep tasks are widely used in research on monolingual children’s language ability. Their systematic review and meta-analysis also classified different SRep tasks across studies (standardized vs nonstandardized). However, their specific focus was on examining the ability of SRep tasks to discriminate between typically developing children and children with DLD. Moreover, their review focused only on monolingual children. In contrast, we (a) focused in greater detail on the structural characteristics of SRep tasks beyond standardization itself (b) in studies on bilingual children.

Therefore, our review aimed to contribute by providing an overview of how SRep tasks have been used, adapted, and reported in studies involving bilingual children. By examining the formal characteristics of these tasks—ranging from the mode of sentence delivery to the inclusion of interactive elements—we hoped to shed light on the nuances that may influence task performance. This exploration may have implications for the development of best practices in the administration and reporting of SRep tasks. In summary, our systematic review aimed to answer the following research questions:

How do SRep tasks in studies on bilingual children with and without SLI/DLD/LI vary in their formal/structural features (e.g., languages tested, task formats, and scoring methods)?

How are these features reported in publications (i.e., are there pervasive omissions) and how could their reporting standards be improved?

Method

We report the systematic review in accordance with the 2020 PRISMA guidelines (Page et al., 2021) as applied to the current topic. The PRISMA flowchart of the literature search is shown in Figure 1. The current systematic review was not pre-registered.

Figure 1. PRISMA flowchart of the current systematic review.

The systematic review included empirical studies published in English in academic journals. The inclusion criteria for the studies were: (a) using the SRep tasks in any form or medium, (b) for their general intended purpose, that is, to measure morphosyntactic development or some of its aspects, (c) on both clinical (i.e., with SLI or other language disorders/difficulties) and typically developing samples of bilingual children, and (d) speaking any two languages. That is, although the systematic review was limited only to English-language publications, studies concerning any language were included. Accordingly, the exclusion criteria were: (a) studies using the SRep tasks for purposes other than measuring morphosyntactic development in whole or in part (e.g., to measure hearing or stuttering), and (b) studies conducted solely on samples of monolingual children or adults. The search covered Scopus, EBSCO (all databases), and PubMed. In addition, the list of publications on the official LITMUS-SRep task website (https://www.litmus-srep.info/) was screened, since the LITMUS-SRep task, resulting from the COST Action IS0804, represents a crucial development in SRep tasks in the context of bilingual language assessment. This also served to improve the reliability of our search. No criteria for the date of publication of the studies entered into the systematic review were set.

The same search string was used in searches of all three databases, save for syntax or input modifications necessitated by the user interface differences between the databases. Due to the heterogeneity with which the SRep tasks are sometimes referred to in the literature (e.g., sentence repetition vs sentence elicitation), the search string was manipulated (i.e., expanded or reduced, for every line separately) via trial-and-error in order to maximize the number of results. All three authors designed the search string together. Thus, the search string was “sentence repetition” OR “sentence imitation” OR “sentence elicitation” OR srep AND bilingualism OR bilingual OR multilingual OR “second language” AND children OR child OR development OR acquisition.
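As an illustration only, the three-part structure of the search string can be assembled programmatically, which makes it easier to expand or reduce each part separately. The sketch below is not the authors' procedure: the function name `build_query` and the use of parentheses to group each OR block are assumptions (the string as reported contains no parentheses), and each database may require its own field syntax.

```python
def build_query(blocks):
    """Join terms within a block with OR and join the blocks with AND.

    Multi-word terms are wrapped in double quotes so they are searched as
    phrases. Parenthesized grouping is an assumption of this sketch.
    """
    def fmt(term):
        return f'"{term}"' if " " in term else term

    return " AND ".join(
        "(" + " OR ".join(fmt(term) for term in block) + ")" for block in blocks
    )


# The three blocks of terms as they appear in the reported search string.
BLOCKS = [
    ["sentence repetition", "sentence imitation", "sentence elicitation", "srep"],
    ["bilingualism", "bilingual", "multilingual", "second language"],
    ["children", "child", "development", "acquisition"],
]

query = build_query(BLOCKS)
print(query)
```

Keeping the terms in separate lists means a single block can be edited and the query regenerated without retyping the whole string.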

The database search was carried out on 26 June 2022 by the first author. The search results from every database and from the LITMUS website were exported into a spreadsheet and collated. A total of 1,202 publications were retrieved. Duplicates were then removed in two stages: first by using the Zotero software, and second, by the first author manually removing any remaining duplicates. A total of 537 duplicates were removed this way. On 27 March 2023, the database search was run again by the first author to account for potential new publications. A total of 59 eligible publications were retrieved, out of which 50 were duplicates. Finally, on 26 January 2024 a second update of the screening was carried out in the same way. A total of 102 eligible publications were retrieved, out of which 3 were duplicates. Thus, a total of 774 publications were subjected to title and abstract screening.

The title and abstract screening was carried out in two stages. First, the publication dataset was divided into three equal parts. The database was screened by three authors (Author A, Author B, and Author C), working in rotating pairs. Each pair (A–B, B–C, and A–C) reviewed one-third of the dataset. The results of the screening were collated into a single spreadsheet. Second, all three authors reviewed the spreadsheet simultaneously. In case of conflicting decisions about the inclusion of a given article within the screening pair, all three authors revisited the title and abstract of the article together to arrive at a unanimous decision. When necessary, the full text of the publication was examined to ascertain the aim and methodology of a given study. This step was necessitated by the fact that the titles and abstracts of the publications in the dataset frequently used various terminology to refer to the SRep tasks employed. In addition, at times, names of larger test batteries which contain SRep tasks were given in the abstracts, or references were made not to the SRep tasks, but to the constructs they were used to measure in a given study (e.g., “the language and reading achievement of 44 children adopted from Eastern European orphanages were clinically assessed with standardized tests and natural-language samples to determine the extent and types of problems present in the areas of language [i.e., overall spoken language, receptive language, morphology, semantics, syntax, pragmatics] and reading,” Hough & Kaczmarek, 2011). Finally, the publications included in the scoping review by Rujas et al. (2021) were examined and those which met the inclusion criteria of the current systematic review were included. Only one publication was included that way. Overall, the results of the title and abstract screening yielded 163 eligible reports, out of which one was unavailable for retrieval, resulting in 162 reports included in the full-text screening.
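The rotating-pair scheme described above can be sketched as follows. This is a hypothetical illustration, not the authors' actual tooling: the function name `assign_to_pairs`, the numbering of records from 1 to 774, and the interleaved split are all assumptions made for the example.

```python
from itertools import combinations


def assign_to_pairs(record_ids, reviewers):
    """Split records into near-equal parts, one part per pair of reviewers."""
    pairs = list(combinations(reviewers, 2))  # every two-reviewer pairing
    # Interleaved slicing keeps the parts within one record of each other in size.
    return {pair: record_ids[i::len(pairs)] for i, pair in enumerate(pairs)}


# Hypothetical record numbers standing in for the 774 screened publications.
records = list(range(1, 775))
assignment = assign_to_pairs(records, ["A", "B", "C"])

for pair, part in assignment.items():
    print(pair, len(part))
```

With three reviewers there are exactly three pairs, so each record is screened by two people and each reviewer sees two-thirds of the dataset.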

Full-text screening and data extraction were carried out by one author who extracted information about the stated study aims, sample characteristics, SRep task characteristics explicitly provided in the text, other tasks used in the study, and raw SRep scores (if reported). In addition, for each publication, the same author prepared a general narrative description of the results pertaining to the SRep task. These categories were designed before full-text screening began. This part of the analysis was carried out by one author because it did not involve any qualitative coding. No measure of study quality or publication bias was used in the full-text screening. To cross-verify the results of the full-text screening, each of the other authors screened 13 publications (10% of the dataset) chosen at random from the dataset. The assignment of texts to authors for the cross-verification process was done using a method of algorithmically controlled randomization. Specifically, a language model (ChatGPT) was used to generate 3 different sets of 13 non-repeating numbers. This approach ensured a randomized yet methodologically consistent assignment of texts to authors, taking advantage of the algorithm’s ability to facilitate unbiased distribution. The results of the cross-verification were then discussed between the authors and ambiguities were resolved. For this reason, no quantitative measure of reliability/agreement was calculated.
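For readers who wish to reproduce a comparable draw, sets of non-repeating record numbers can also be generated with a seeded pseudorandom number generator. The sketch below is an alternative illustration and not the procedure used in this review: the function name `draw_verification_sets`, the seed value, the assumption that records are numbered 1 to 135, and the choice to make the three sets disjoint from one another are all hypothetical.

```python
import random


def draw_verification_sets(n_records, n_sets, set_size, seed):
    """Draw n_sets disjoint sets of 1-based record numbers without repetition."""
    if n_sets * set_size > n_records:
        raise ValueError("not enough records for disjoint sets")
    rng = random.Random(seed)  # a fixed seed makes the draw reproducible
    drawn = rng.sample(range(1, n_records + 1), n_sets * set_size)
    # Cut the single sample into consecutive chunks, one chunk per set.
    return [
        sorted(drawn[i * set_size:(i + 1) * set_size]) for i in range(n_sets)
    ]


# Assumed values: 135 records, 3 sets of 13, arbitrary seed.
sets = draw_verification_sets(n_records=135, n_sets=3, set_size=13, seed=2024)
for number, record_set in enumerate(sets, start=1):
    print(f"Set {number}: {record_set}")
```

Reporting the seed alongside the record numbering would allow the same sets to be regenerated by others.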

In cases where information about a given aspect of the study was not explicitly stated in the text (e.g., whether the children were simultaneous or sequential bilinguals), no inferences were made and an “N/A” code was given. Full-text screening resulted in rejecting 27 publications included in the title and abstract screening: 19 publications did not include studies where an SRep task was used, 5 studies did not involve a bilingual child sample, 2 publications contained duplicates of studies reported in publications already included in the systematic review, and 1 publication was a theoretical review. Thus, the final number of publications in the systematic review was 135. The number of reported studies was 141.
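The full-text screening counts reported above can be checked with simple arithmetic. The short sketch below only restates the figures given in the text; the variable names are ours.

```python
# Counts as reported in the text for the full-text screening stage.
title_abstract_eligible = 163
not_retrieved = 1
full_text_screened = title_abstract_eligible - not_retrieved

# Reasons for rejection at full-text screening.
exclusions = {
    "no SRep task used": 19,
    "no bilingual child sample": 5,
    "duplicate of an included study": 2,
    "theoretical review": 1,
}
excluded = sum(exclusions.values())
included_publications = full_text_screened - excluded

print(full_text_screened, excluded, included_publications)
```

The totals agree with the 162 reports screened in full, the 27 rejected, and the 135 publications included.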

Results

Due to their volume, the full results of the systematic review are presented in the online Supplementary Materials: https://osf.io/tcpnz/. First, to provide context, we present a general overview of the dataset before proceeding to a detailed examination of the features of the SRep tasks and their reporting. Table 1 presents a shortened summary of the sample characteristics and the standardized/nonstandardized character of the SRep tasks in each of the studies included in the database. In turn, Table 2 presents the frequencies of reporting of each formal characteristic of the SRep task that we focused on in our systematic review.

Table 1. Summary of sample characteristics and SRep task standardization in the studies included in the systematic review.

Note. For ease of presentation and brevity, the age means and/or ranges for individual subsamples in each study have been collated into ranges where applicable. Similarly, the number/proportion of girls in each subsample has been summed into a single value where applicable. Full and detailed sample characteristics for each study are available in the Supplementary Materials. Studies included in the systematic review are marked with an asterisk in the end reference list.

Table 2. Frequencies of reporting of SRep task characteristics in the studies included in the systematic review.

Publication years

Figure 2 presents the distribution of publication years of the publications identified in our systematic review. The earliest publication identified was Clay (1971), where the SRep task was used as a measure of language acquisition in the school context. Only 11 publications were identified in the 1971–2009 period. The publication by Marinis and Armon-Lotem (2015) on the SRep tasks created within the COST Action IS0804 can be considered an influential landmark which potentially facilitated subsequent studies; it has been cited 210 times as per Google Scholar at the time of writing. However, determining the specific impact of the COST Action IS0804 and Marinis and Armon-Lotem (2015) on the design and reporting of SRep tasks in studies with bilingual children was beyond the scope of the current systematic review. After 2015, the number of publications increased overall, though not consistently year-over-year. However, these numbers represent a relatively narrow area of application of the SRep tasks. For example, the study by Conti-Ramsden et al. (2001), which is also considered a foundational publication on the SRep task (Marinis & Armon-Lotem, 2015; Rujas et al., 2021), concerned only monolingual children, a strand of research which was excluded from our systematic review. SRep studies with bilingual children involve additional methodological considerations, the most crucial of which is possibly the frequent need to have SRep tasks in at least two languages. We turn to these considerations now.

Figure 2. Distribution of publication years in the systematic review.

Study aims and tasks used in conjunction with SRep

Study aims

Although the inclusion criteria of our systematic review limited it strictly to reports of studies using SRep tasks to investigate language development in bilingual children with or without SLI/DLD/LI, we also examined the study aims of the included reports. Qualitatively categorizing the studies based on their stated goals, the measures employed alongside SRep tasks, and the types of reported results (e.g., total SRep scores only or SRep scores divided by morphosyntactic categories), the following five categories were derived from the dataset. The same report could be classified into multiple categories if the report’s stated study aims, methodology, and reported results supported it. For example, Ziethe et al. (2013) compared bilingual children with and without SLI on their SRep and digit span performance and tested how these scores predicted their language abilities. Thus, the report fit several of the categories below:

(a) General language development (68 reports, 50.37% of the dataset), or studies which examined broad language-related variables such as language acquisition, reading outcomes, or narrative skills as well as various facets of language background. These studies also reported SRep scores in general, without analyzing specific morphosyntactic categories. For example, Graham et al. (2017) examined the impact of an oral versus literacy-based teaching approach on children’s linguistic outcomes, operationalizing these via the SRep and photo description task total, vocabulary, and grammar scores. Hough and Kaczmarek (2011) examined language skills in adopted children, including the SRep task from a standardized language assessment battery in a composite score of syntactic proficiency. Similarly, Wagley et al. (2022) analyzed bilingual reading comprehension utilizing SRep scores within a composite score of morphosyntactic knowledge. This category was the most frequent in our dataset, although it can also be considered to be the broadest conceptually.

(b) Specific language development (48 reports, 35.55% of the dataset), or studies which focused on the development, acquisition, or performance in an explicitly defined element of language, reporting SRep scores related to this element rather than only generally. For example, Friesen et al. (2021) measured “differences in English syntactic knowledge” (p. 2951) and reported SRep scores broken down into short and long sentences, active and passive sentences, and noun, verb, and prepositional phrases. Sopata and Długosz (2022) studied word order specifically, dividing SRep scores into categories of negation, inversion, complex verb structures, and subordinate clauses. Finally, Janssen and Meir (2019) focused specifically on the accusative case in Russian, analyzing SRep scores based on four syntactic categories (SVO, SVOO, SOV, and OVS).

(c) Cognitive factors (32 reports, 23.70% of the dataset), or studies which focused on variables related to cognitive processes beyond assessing them during participant recruiting, sample matching, or screening for language difficulties. These chiefly included short-term memory or working memory, and intelligence. These were also studies which used SRep as a measure of cognitive processes related to language. For example, Talli and Stavrakaki (2020) measured verbal short-term and working memory in children with and without DLD and analyzed their influence on syntactic production measured by an SRep task. Aguilar-Mediavilla et al. (2019) measured visual and auditory attention, phonological awareness, verbal short-term memory, and access to verbal information as part of a longitudinal study of children with DLD where the SRep total score was used as one of the measures of verbal short-term memory. Meir (2017) also used an SRep task to measure verbal short-term memory differences in monolingual and bilingual children with and without SLI.

(d) Language difficulties (58 reports, 42.96% of the dataset), meaning studies which concerned language difficulties such as DLD, SLI, or dyslexia in any capacity, whether in terms of using SRep as a diagnostic tool or to measure language-related variables in children experiencing language difficulties. For comparison, Rujas et al. (2021) reported that 76 studies in their dataset of 203 (37.44%) included children with DLD, SLI, LI, or language delay. Regarding our dataset, for example, Abed Ibrahim and Fekete (2019) examined the relative and combined diagnostic accuracy of the LITMUS SRep (both identical repetition and target structure repetition scores) and nonword repetition tasks. Similarly, Tuller et al. (2018) “focused on whether and how well [. . .] LITMUS-NWR and LITMUS-SR, can identify SLI in 5–8-year-old bilingual children having one of the three home languages (Arabic, Portuguese or Turkish), but growing up in different sociocultural and L2 settings, in France and in Germany” (p. 890), utilizing the identical repetition score. In contrast, Bedore et al. (2020) studied a sample of Spanish-English children with DLD, using the SRep total score as an index of their grammatical proficiency before and after a targeted teaching intervention, Language and Literacy Together.

(e) SRep task development (7 reports, 5.18% of the dataset), or studies which strictly reported on the process of creating or refining an SRep task, rather than using an SRep task (standardized as well as made by the authors for the purpose of the study) as part of a larger study. For example, Grech (2022) reported creating a Maltese-language SRep, reporting evidence for its reliability and validity. In contrast, Fitton et al. (2019) examined the psychometric properties of the SRep within the BESA test battery, namely, its factor structure and validity, concluding that from an applied point of view, “the sentence repetition tasks can be treated as essentially unidimensional” (p. 26). Finally, the landmark chapter by Marinis and Armon-Lotem (2015) introduces the COST Action IS0804 LITMUS SRep tasks together with a discussion of preliminary data concerning its diagnostic accuracy for SLI in monolingual and bilingual children. However, regarding this category, it bears mentioning that the small number of studies it contains may have been a consequence of our search strategy, as we explicitly focused on studies on bilingual children. It is possible that additional data on SRep tasks specifically exists in other literature.
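Because a report could be assigned to more than one category, the category percentages above add up to more than 100%. The arithmetic can be illustrated with a minimal sketch. The category counts are taken from the list above; the total of 135 reports is an assumption inferred from the reported figures (68 reports corresponding to 50.37%) and is not stated directly in this section, and the function and variable names are purely illustrative.

```python
# Category counts as reported in the list above.
category_counts = {
    "general language development": 68,
    "specific language development": 48,
    "cognitive factors": 32,
    "language difficulties": 58,
    "SRep task development": 7,
}

# ASSUMPTION: total number of reports, inferred from 68 reports = 50.37%.
# This denominator is not stated explicitly in the text.
N_REPORTS = 135


def category_shares(counts, n_reports):
    """Return each category's share of all reports, in percent."""
    return {name: 100 * n / n_reports for name, n in counts.items()}


shares = category_shares(category_counts, N_REPORTS)

# With multi-label classification, assignments can outnumber reports.
total_assignments = sum(category_counts.values())
mean_categories_per_report = total_assignments / N_REPORTS

for name, share in shares.items():
    print(f"{name}: {share:.2f}%")
print(f"category assignments: {total_assignments} across {N_REPORTS} reports")
```

Under this assumption, the five categories account for 213 category assignments across the reports, so the shares describe overlapping rather than mutually exclusive groups.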

Tasks used in conjunction with SRep tasks

For further insight into the contexts of using SRep tasks in studies on bilingual children, we also examined the tasks which were used alongside them. In 15 studies (10.64%), the SRep task was the only measure used, while the remaining studies reported using one or more other tasks. Three studies in one report reported only the SRep results, but stated that “the children participated in a battery of standardized and non-standardized assessments and experimental tasks,” without specifying them further (Chiat et al., 2013, p. 78). Considering that our systematic review focused specifically on studies on bilingual children with or without SLI/DLD/LI, the studies in our dataset reported using SRep tasks in conjunction with other language-related measures. These included various language background/history questionnaires, language proficiency and vocabulary tests, and more specific measures, for example, of narrative abilities (Whiteside & Norbury, 2017) or codeswitching (Quirk, 2020). In addition, studies also frequently employed cognitive measures—intelligence testing batteries or tasks measuring short-term/working memory. Due to the significant heterogeneity of these measures in terms of their target and language, we did not subject them to further classification.

Notably, aside from SRep tasks, NWR tasks are another popular measure of language difficulties and/or working memory (Conti-Ramsden et al., 2001; Pawłowska, 2014; Pham & Ebert, 2020). In these tasks, children are asked to repeat phonologically well-formed sequences that conform to the phonotactic rules of the target language but lack semantic content. Because these nonwords are not part of the mental lexicon, the task requires the listener to rely on phonological encoding, short-term storage, and articulation, without drawing on existing lexical knowledge (Coady & Evans, 2008; Gathercole et al., 1994). Originally conceptualized within the framework of working memory theory, NWR has been interpreted as a measure of phonological short-term memory, particularly engaging the phonological loop: a component responsible for temporary retention and rehearsal of unfamiliar verbal material (Gathercole & Baddeley, 1990). By excluding familiar lexical items, NWR isolates the capacity to process and retain novel phonological forms, which is critical during early stages of language acquisition (Gathercole et al., 1992; Archibald & Gathercole, 2006). In our dataset, 33 (23.4%) studies were reported to use NWR tasks together with an SRep task in one or more languages. Research has shown that performance on NWR tasks correlates with broader language abilities and can indicate developmental language disorder (Polišenská, 2011). NWR has been developed into language-independent tools, such as the cross-linguistic nonword repetition (CL-NWR; Polišenská et al., 2020).

Languages measured using SRep tasks

Of the 141 studies in the systematic review, 60 (42.55%) reported using SRep tasks for two or more languages within a single study. The remaining studies used only one SRep task. Regarding the studied languages, the most popular one was English (n = 62), followed by Spanish (n = 27), likely reflecting the dominance of the United States context (i.e., Spanish-English bilingual children) in terms of publications. Popularly studied languages also included German (n = 20), Hebrew (n = 16), French (n = 16), Russian (n = 13), Greek (n = 8), and Turkish (n = 6). This broadly reflects the findings from the scoping review of SRep tasks in the literature between 2010 and 2021 by Rujas et al. (2021), especially the dominance of the English language. The remaining studied languages were Afrikaans, Albanian, Arabic, Cantonese, Catalan, Dutch, Farsi, French Sign Language, Icelandic, Irish, Italian, Kam, Malay, Malaysian English, Maltese, Mandarin, Norwegian, Polish, Quebecois French, Sepedi, Somali, Swedish, Syrian-Arabic, Tamil, Urdu, Vietnamese, and Welsh (n < 4).

Samples

Next, we classified the studies in our dataset based on whether the sample consisted of typically developing bilingual children, bilingual children with any type of language disorder or disability, or both within a mixed sample. Importantly, the methods or procedures of language development assessment themselves were outside of the scope of our systematic review.

Seventy-three (51.77%) studies reported having an entirely typically developing sample, the most popular sample type in our dataset. On the other hand, seven (4.96%) studies focused specifically on children with any type of language disorder, while 47 (33.33%) studies reported having a mixed sample. We assigned a study to the language disorder/disability or mixed category whenever it reported any sign, presence, or frequency of language difficulties in the sample. This included, for example, risk of dyslexia in Taha et al. (2022), children receiving speech pathologist services in Castilla-Earls et al. (2023), or children identified as at risk for language learning difficulties on the basis of the study results themselves in Erdos et al. (2014). Importantly, 14 (9.92%) studies did not clearly specify whether the sample was typically developing or not. We counted them as a separate category due to our focus on reporting standards, although, in the absence of any information to the contrary, it can likely be inferred that these samples were typically developing.

Two of the studies in our systematic review (Andreou, Tsimpli, et al., 2020; Meir & Novogrodsky, 2020) concerned samples of bilingual children with autism spectrum disorder (ASD), with Meir and Novogrodsky (2020) including subsamples of neurotypical and ASD children both with and without DLD. Nevertheless, since our systematic review focused on the use of SRep tasks for purposes of language assessment in bilingual children, both typically and atypically developing, we did not exclude those two studies as they met these criteria.

SRep task features and reporting

SRep task standardization

The main aim of our systematic review was to describe the variation in the SRep tasks as reported in the empirical literature on bilingual children’s typical and atypical language development. To this end, we first examined the proportion of SRep tasks reported in the publications as standardized, that is, being part of a published battery of language tests (e.g., the Bilingual English-Spanish Assessment, BESA, Peña et al., 2014, or the Clinical Evaluation of Language Fundamentals Preschool-2, CELF Preschool-2, Wiig et al., 2004) or developed within the COST Action IS0804. If the same task was used in multiple studies within a single report, we only counted it once.

We identified 110 (78.01%) reported mentions of a standardized SRep task and 50 (35.46%) mentions of the creation of a new SRep task by the authors specifically for the purpose of the study (if the same task was used in multiple studies within a single report, we only counted it once). For example, Torregrossa, Eisenbeiß, and Bongartz (2023) reported creating their own version of an Italian SRep task “based [in terms of the examined syntactic structures] on existing SRTs” (p. 691). Monsrud et al. (2022) back-translated the standardized Språk 6–16 SRep task in Norwegian (Ottem & Frost, 2005), and Simon-Cereijido and Méndez (2020) reported using “researcher-developed sentence repetition tasks” in English and in Spanish. We also identified 14 (9.92%) mentions of the use of a task “adapted” from another task or a standardized version. For example, Antonijevic et al. (2017) created an Irish SRep task “following the principles used for other LITMUS-SRep tasks outlined by Marinis and Armon-Lotem (2015) and commenced from an adapted ‘School-Age Sentence Imitation Test-E32’” (p. 364). Similarly, Yang et al. (2023) used “a sentence repetition task (SRep) adapted into Kam (Marinis & Armon-Lotem, 2015)” (p. 5). Gangware (2016) reported using a Cantonese and English SRep task which was “adapted from the sentence repetition task by Devescovi and Caselli (2007)” (p. 15). It is not always clear what this adaptation process entailed, though this classification serves to highlight the diversity with which the authors utilize the SRep methodology more broadly, and the COST Action IS0804 guidelines more narrowly. Thus, the solutions ranged from using published tasks, through using SRep subtests from larger language batteries and translating existing tasks into different languages, to designing SRep tasks from the ground up, with or without using elements of existing SRep tasks, while taking into account the studied language and/or syntactic structures. For comparison, in their scoping review, Rujas et al. (2021) reported that 41% of the studies in their dataset used versions of SRep tasks that had been published previously, 33% used SRep tasks included in assessment batteries, and 25% used SRep tasks created for the purpose of the specific study. Our results also show that using a standardized SRep task was the most frequent choice, although it took various forms across the studies. This heterogeneity of the SRep tasks results in heterogeneity of their particular characteristics, which, in addition, are not always comprehensively reported. We turn to this point in greater detail now.

SRep task length and presence of practice items

Task length

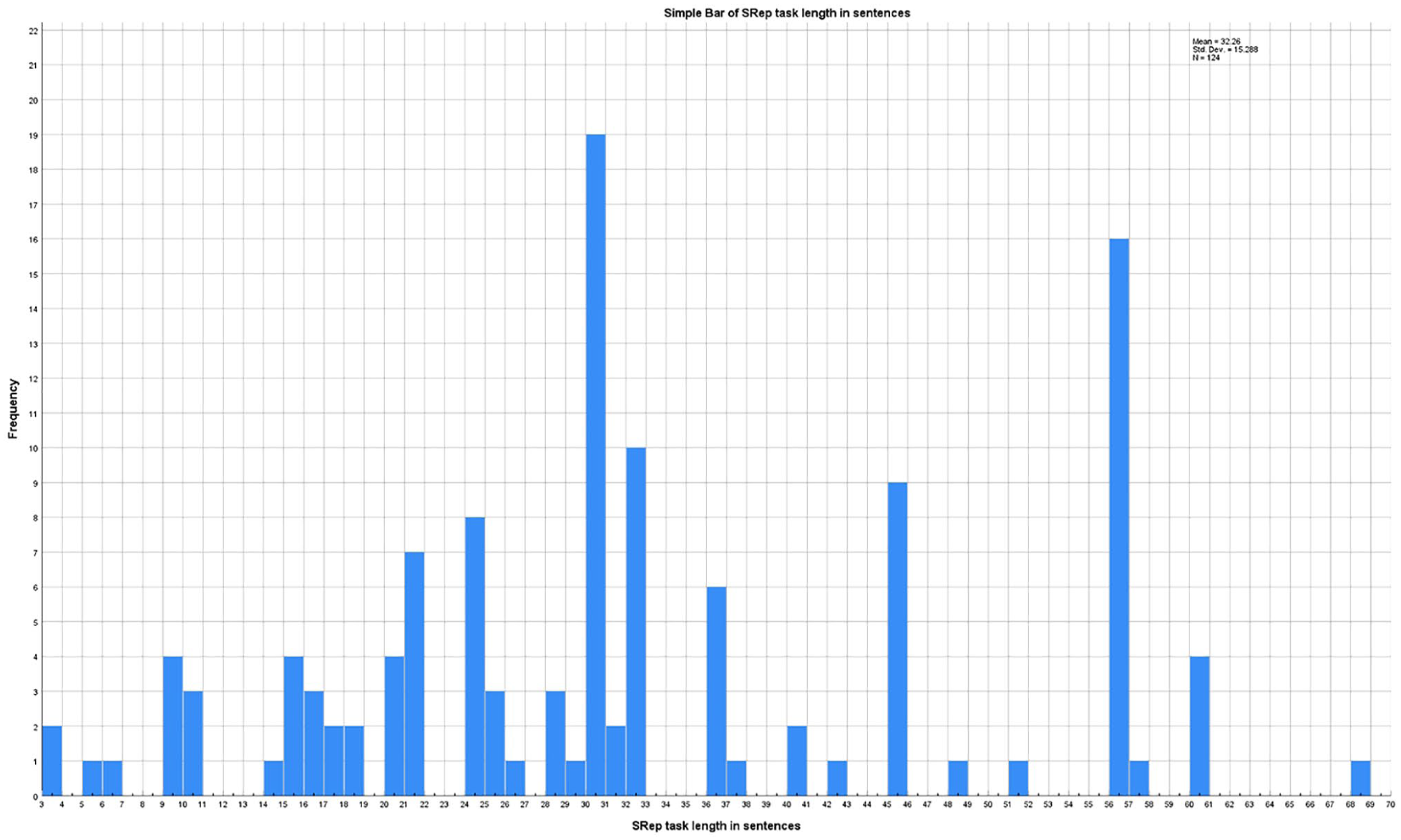

The number of items included in the SRep tasks was specified in 104 studies (73.75%; if the same task was used in multiple studies within a single report, we only counted it once). Korytkowski Longo (2011) was an exception, since in her study on the effects of a language teaching intervention on bilingual children with language/reading impairment, the children were tested with “5 to 7 sentence probes during each week of intervention over an eight-week period” (p. 11). Otherwise, the shortest reported SRep task consisted of three items: Limacher (2018) used three “sentences developed by Hack and colleagues (2012) for their sound inventory” (p. 19), and the children’s recordings were later rated for accentedness and comprehensibility. The longest reported SRep task contained 68 sentences: Haman et al. (2017) used the Polish-language LITMUS-SRep, based on the English SASIT task (Marinis et al., 2010) to compare Polish proficiency between early bilingual migrant children and nonmigrant monolingual children. The mean length of the reported SRep tasks was 32.26 (SD = 15.29), with the mode in our dataset being 30.

In 34 reports (24.11%), the number of items was not specifically reported. However, this does not necessarily mean that the length of the task is unknown. In the majority of these cases, the authors relied on a standardized SRep task which was properly cited, but the details of which were subsequently omitted. For example, Chondrogianni and John (2018) reported using the Sentence Structure subtest from the CELF-2, without specifying any further details. The distribution of SRep task lengths is shown in Figure 3.

Figure 3. SRep task length across studies.

Practice items

The presence of practice items was specified in 45 studies (31.91%; if the same task was used in multiple studies within a single report, we only counted it once). The remaining 93 (65.95%) studies either did not use practice SRep items, or did so, but did not report it (e.g., due to using a standardized version of the task, following the manual, and omitting the reporting for reasons of brevity or space).

Computerization of SRep tasks

A potentially significant aspect of SRep task usage which might impact the obtained results is whether the SRep task was used in a computerized version or an analog version. Traditionally, SRep tasks involved the examiner reading the sentences out loud and the child/examinee having to repeat them verbatim (see, e.g., Conti-Ramsden et al., 2001; Snow & Hoefnagel-Höhle, 1978). However, later studies began using a computerized version. The first report of such a task identified in our dataset comes from Trofimovich and Baker (2007), in which the children were presented with prerecorded SRep stimuli “in a quiet location using a personal computer and stimulus presentation software” (p. 256). Both versions of the SRep task are reported in the literature. In our dataset, 64 studies (45.39%; if the same task was used in multiple studies within a single report, we only counted it once), the largest proportion, did not specify whether a computer was used to administer the SRep task. However, it should be noted that we arbitrarily assumed that the five studies in our dataset published prior to 1994 (ranging from 1971 to 1986) would not have used computers and have thus classified them as analog rather than as having omitted this information from the report. Twenty-two studies (15.60%) reported information specifying that the SRep task was administered in person. For example, Chiat et al. (2013) used the SRep task from the Goralnik Screening Test for Hebrew (Goralnik, 1995), and stated that “the sentences of the sentence repetition subtest from the Goralnik test were presented to the children by a native speaker of Hebrew” (p. 68). Finally, 52 studies (36.87%) clearly specified using a computerized version of the SRep task. For example, Abed Ibrahim and Hamann (2017) used the German version of the LITMUS-SRT task (Hamann et al., 2013), reporting that it was “administered using a pseudo-randomized computerized version” (p. 8).

For a more detailed analysis, we also considered the individual aspects of task computerization: prerecording of sentences and the use of visual stimuli within the task.

Prerecorded SRep sentences

Forty-eight (34.04%; if the same task was used in multiple studies within a single report, we only counted it once) studies did not clearly report whether the sentences in the SRep task were prerecorded and played back or whether they were read out loud. These were often studies which only reported using an SRep task from a standardized battery of tests, and which presumably followed the administration instructions appropriately. Thus, for example, Blom et al. (2021) only reported using the Syrian Arabic LITMUS-SRT task. Although the report does not clearly state whether the stimulus sentences were prerecorded, readers may infer that the LITMUS-SRT task is computerized. In contrast, Altman et al. (2014) reported designing their own SRep task specifically for the age group included in their study (Russian-Hebrew bilingual children aged between 4;6 and 6;9), but gave no details about its format or mode of administration. Thirty studies (21.27%) reported using SRep items which were read out loud, while 60 studies (42.55%) reported using prerecorded items, making it the most popular choice in our dataset. An additional concern involves the lack of reporting of the gender of the item speaker. We identified 15 studies (10.63%) in which the gender of the prerecorded item speaker was noted (1 male, 2 “male and female,” and 12 female). Interestingly, only one study in our dataset noted the gender of the examiner who read the items out loud (female, in Jordaan & Ngwanduli, 2020).

Visual stimuli in SRep tasks

Another feature of SRep task computerization that we focused on was the presence of visual stimuli accompanying the items. SRep tasks are intended to tap into language skills and, since they are frequently indicated as effective diagnostic tools for SLI, they also require standardization. However, when they are used with children, it can be difficult to make the children focus, stay on task, and remain engaged. Enriching the task with visual stimuli emerges as a potential solution. Accordingly, in our dataset, 27 studies (19.14%; if the same task was used in multiple studies within a single report, we only counted it once) reported using some form of visual stimuli in the SRep task. The nature of these stimuli varied. For example, Sopata and Długosz (2022) incorporated a “picture of a speaking child” as a “signal for the child to repeat the sentence” (p. 9) in their German SRep task. Limacher (2018) presented children with English SRep items “with picture support using a tablet” (p. 19). In the French SRep task used by Courtney et al. (2017), “as the learners heard the sentence, they were also shown an image of the objects referred to in the sentence to encourage them to focus on meaning” (p. 831).

Thirty-four studies (24.11%) specified administering the SRep task via a PowerPoint presentation. This number is higher than the number of studies reporting any visual stimuli use, as these PowerPoint presentations were not always described in detail and in some instances may have simply been a technical means to easily present the prerecorded sentences. Nevertheless, we mention this figure because the most typical visual enrichment of the SRep task was represented by the LITMUS-SRT tasks, which are typically presented in the form of a “child-friendly” PowerPoint presentation. It presents a short pictorial story which advances as the child repeats the sentences (see Marinis & Armon-Lotem, 2015), sometimes also referred to as the “treasure hunt.” In our dataset, 13 studies clearly reported using this child-friendly solution. Otherwise, 14 studies (9.92%) clearly reported not using any visual stimuli, while 97 studies (68.79%), a decisive majority, did not report explicitly whether visual stimuli were present or not. Importantly, this category may include studies which actually did use visual stimuli, but did not state it. For example, the Goralnik Screening Test for Hebrew utilizes pictorial stimuli alongside the target sentences in its SRep subtest. It was reported as the SRep task in three studies in our dataset (Altman et al., 2018; Chiat et al., 2013, Study 1; Rose et al., 2022), although the information about the pictorial stimuli was reported only by Chiat et al. (2013). Similarly, it is likely that other studies used SRep tasks from standardized test batteries which included pictorial stimuli, but did not report this explicitly.

Feedback given to children

Another method of maintaining children’s focus on the SRep task involves providing feedback, either in the form of verbal encouragement or small gifts contingent on performance. It may be argued that this element is of crucial importance for assessing the task’s validity (especially when using SRep tasks to identify SLI), as the administrator’s behavior has the potential to significantly impact the child’s performance. Nevertheless, this information was very rarely disclosed in the reports in our dataset. Only 18 studies (12.76%) provided information about the feedback given to the children. For example, Torregrossa, Eisenbeiß, and Bongartz (2023) reported in the Supplementary Materials that during their gamified, story-based Italian SRep task administration, the children “each received positive feedback (i.e., “well done”), irrespective of the accuracy of the repetition” (p. 9). Woon et al. (2014) reported that during their Mandarin Chinese and English SRep task administration, “the researcher would also praise the children for any attempt at repetition” (p. 145). On the other hand, Janssen and Meir (2019) only reported that “positive feedback” (p. 14) was an element of the entire procedure.

SRep task scoring schemes

Marinis and Armon-Lotem (2015) describe six default scoring schemes for SRep tasks: (a) binary scoring of identical repetitions, (b) 3-point scoring for repetition accuracy, (c) counting content and function words, (d) binary scoring of overall repetition grammaticality, (e) binary scoring of target structure repetition, and (f) counting the number of changes between the item and the repetition. This variety is due to different traditions and procedures inspired by test batteries predating the COST Action IS0804 LITMUS tasks, as well as to the different aims for which the SRep tasks can be used.
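To make the difference between scoring schemes concrete, the following minimal sketch illustrates how schemes (a) and (f) might be operationalized for a single item. It is an illustration under stated assumptions, not the scoring procedure of Marinis and Armon-Lotem (2015) or of any published battery: the function names are hypothetical, responses are compared word by word after lowercasing and removing punctuation, and the "number of changes" is approximated by a word-level edit distance (omissions, additions, and substitutions each count as one change).

```python
import re


def tokenize(sentence: str) -> list[str]:
    """Lowercase a sentence and split it into word tokens, dropping punctuation.

    ASSUMPTION: case and punctuation are irrelevant for scoring.
    """
    return re.findall(r"\w+(?:'\w+)?", sentence.lower())


def identical_repetition_score(target: str, response: str) -> int:
    """Scheme (a): 1 if the response repeats the target word for word, else 0."""
    return int(tokenize(target) == tokenize(response))


def count_changes(target: str, response: str) -> int:
    """Scheme (f), approximated here as word-level Levenshtein distance.

    ASSUMPTION: each omitted, added, or substituted word counts as one change.
    """
    t, r = tokenize(target), tokenize(response)
    previous = list(range(len(r) + 1))
    for i, t_word in enumerate(t, start=1):
        current = [i]
        for j, r_word in enumerate(r, start=1):
            cost = 0 if t_word == r_word else 1
            current.append(min(
                previous[j] + 1,         # target word omitted in the response
                current[j - 1] + 1,      # extra word added in the response
                previous[j - 1] + cost,  # word substituted (or matched)
            ))
        previous = current
    return previous[-1]


# Hypothetical item and response, for illustration only.
target = "The boy was pushed by the girl."
response = "The boy pushed the girl."

print(identical_repetition_score(target, response))  # 0: not a verbatim repetition
print(count_changes(target, response))               # 2: "was" and "by" omitted
```

In this example, the same response receives a score of 0 under binary identical-repetition scoring but only two changes under change counting, which shows how the choice of scheme can alter the granularity of the resulting data.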

Accordingly, the studies in our dataset also differed in terms of the scoring schemes applied, with these differences also stemming from the task itself (i.e., using an SRep task from a specific test battery) or from the study aims. Thus, out of the studies reviewed, 36 studies (25.53%; if the same task was used in multiple studies within a single report, we only counted it once) reported using more than one scoring scheme. Twenty-three studies (16.31%) had no clearly identified scoring scheme, although the SRep task results were reported, typically as means or percentages of correctness. Six studies (4.25%) reported applying the scoring scheme from the test battery from which the SRep task was taken, but without stating this scheme in the report.

As regards the schemes, binary scoring based on identical repetitions was the most popular one, reported in 44 studies (31.20%). Binary scoring based on target structure repetition was the second most popular, reported in 31 studies (21.98%).

Alternative scoring schemes were employed in 19 studies (13.47%). In several instances, they involved qualitative analyses alongside employing more traditional scoring schemes. For example, Meir (2018) analyzed error profiles in addition to comparing quantitative scores based on target structure repetition. Similarly, Hamim and Hamid (2021) applied the binary scoring schemes for accuracy, grammaticality, and sentence type alongside a qualitative analysis of the error types. However, other studies used scoring schemes that were specifically matched to their aims. For example, Soesman et al. (2022) extracted instances of codeswitching from the recorded SRep trials and divided them into categories based on language and position of the switch within the sentence before subjecting these categories to further quantitative analysis. Bogliotti et al. (2020) used a French Sign Language SRep task and used qualitative ratings by annotators to identify regionalisms and substitutions more broadly, and phonological variables within them (e.g., location or orientation of the hands) more specifically. Finally, Trofimovich and Baker (2007) had native speakers judge the accentedness of English SRep sentences spoken by Korean-English bilingual children and adults.

Multipoint scoring schemes were reported in 20 (14.84%) instances, percentage calculations were reported in 16 instances (11.51%), and counting of specified instances was reported 20 (14.18%) times. As regards the targets of scoring, aside from identical repetition and target structure repetition, they included correctness of repetition, grammaticality, fluency, accentedness, word order and position, lexical or morphological targets, and so forth. Altogether, they were used for very specific study aims and are likely not intended for broader applications.

Discussion

Our systematic review aimed at describing the characteristics of SRep tasks used in published English-language empirical studies on language development in typically developing bilingual children and children with SLI. We hoped to draw attention to the variability in the SRep tasks and in the typical ways they are reported, since both may impact the obtained results and/or their utility. In this way, we hoped to call for greater care and transparency in this regard.

Accordingly, our systematic review identified a large set of published studies (N = 141) in which SRep tasks were used to examine a broad range of topics, from typical and atypical language development, through cognitive functioning, to SLI diagnosis. We identified SRep-based investigations of 35 languages total, including both majority languages like English, Spanish, or French as well as relatively less common ones like Irish, Sepedi, or Quebecois French. Many studies examined two or more languages using SRep tasks, and the majority used SRep tasks in conjunction with other well-established measures of language ability and cognitive processes.

Based on our classification, the most prototypical use of the SRep task to study language development in bilingual children, that is, the use following the most popular trends identified in our dataset, would involve a typically developing sample and two or more SRep tasks, with one assessing English. The tasks would have about 30 sentences each and would be used together with a range of other language and/or cognitive tasks. The tasks would likely be standardized, contain prerecorded sentences, and be scored according to a binary scheme, potentially in conjunction with one or more different schemes. However, a report of such a task would likely omit mentions of computerization/gamification, the use of practice items, and whether the language of the task administration differed from the language of the task.

Overall, SRep is a useful measure in studies of language development in all domains and can be flexibly deployed in a variety of settings and populations, as well as in conjunction with a wide range of other tasks. SRep tasks are also largely available for major western European languages. However, additional studies are needed to appropriately respond to the call for increased availability of valid and reliable language screening tools for children speaking a variety of minority languages (Fleckstein et al., 2016; Rose et al., 2022). Furthermore, the classification of study aims testifies to the successful use of SRep in studies of bilingual child development (with studies on typical bilingual language development and bilingual language difficulties enjoying roughly similar popularity) as well as of the characteristics, developmental dynamics, and detection of language difficulties (e.g., SLI, DLD, or dyslexia). This is particularly noteworthy, as bilingual language development remains a crucial area of research to which results from monolingual populations should not be uncritically carried over. This is because bilinguals may follow different pathways of development (Muszyńska et al., 2025), which not only require separate studies, but also require appropriately designed, standardized, and normed tests of language skills, SRep being one of them. In this context, the studies differed significantly both in terms of the features of the SRep tasks they used and in the quality of their description. Therefore, our systematic review highlights this area as important for facilitating further studies on bilingual language development. We consider recognizing the heterogeneity of SRep tasks ostensibly used toward the same research or applied purpose, as well as of the standards of reporting them, a critical step toward improving both. In turn, greater clarity, transparency, and standardization of SRep tasks in published research will hopefully lead to intensified efforts aimed at designing SRep tasks in more languages, creating norms, and propagating standardization in SRep task use in applied contexts as well.

Probably the most significant area of difference involves computerized versus analog versions of the SRep task. The use of a PowerPoint presentation which frames the SRep task in the context of a picture book-like story was popularized due to its implementation in the COST Action IS0804 SRep tasks (see Marinis & Armon-Lotem, 2015). Although the intention of making the SRep task more engaging for children this way is persuasive, this solution may potentially introduce unwanted variability. On the one hand, the presence of gamification elements in the computerized version of tasks may yield higher scores overall than analog tasks due to being more engaging, motivating, or gratifying. On the other hand, they may be too distracting or may represent an additional cognitive load for the children which could disrupt their task performance. Although some evidence on both of these points is available (Bernecker & Ninaus, 2021; but see Jagušt et al., 2018; Zhan et al., 2022), it comes from studies on tasks and contexts other than the SRep task. Therefore, more specific studies are needed to test these assumptions, especially since, as was mentioned above, available evidence comparing these task versions is equivocal (Banasik-Jemielniak et al., 2023; Pratt et al., 2022). Moreover, SRep task computerization may also vary between studies. For example, a recent study by Torregrossa, Caloi et al. (2023) used a different visual frame than the “treasure hunt” characteristic of the COST Action IS0804 SRep tasks. Nevertheless, despite these potential risks, using computerized versions of SRep tasks may help control for a variety of other confounding factors related to the testing situation (e.g., better or poorer rapport of a particular examiner with a particular child).

A related area is the use of prerecorded versus live SRep stimuli. With prerecorded stimuli, all children listen to exactly the same voice with the same pace, tone, and intonation. These may vary in live reading, introducing additional variables that are not accounted for. This may be especially important when using SRep tasks to identify SLI, as, presumably, eliminating various paralinguistic aspects and systematizing the stimuli may help tap more precisely into the examined child’s language processing capabilities. Moreover, prerecorded stimuli may be much more cost-effective and easier to use. On the other hand, the impersonal nature of prerecorded material may not be as motivating or engaging as a live interaction, which might facilitate a different dynamic. For example, Rice and Redcay (2016) showed that the mere belief that the conversation being listened to is carried out live rather than prerecorded engaged mentalization to a greater degree, implying a difference in cognitive processing. As above, the impact of this feature on SRep tasks with bilingual children requires dedicated empirical testing. Nevertheless, it may be tentatively assumed that unlike the standardized, uniform delivery of prerecorded sentences, live reading allows for natural variability in speech, which can make the task feel more personalized and less mechanical. This can be particularly beneficial for maintaining children’s attention and motivation, as they may find the more interactive nature of live reading more appealing (e.g., “We decided to use live voice rather than prerecorded sentences as this helped engage these young children more readily in the task,” Frizelle et al., 2017, p. 1443). Another feature of live sentences is the ability for participants to use visual cues, such as lip-reading, to enhance their comprehension in the presence of auditory noise (Ma et al., 2009). The presence of a live speaker can also provide real-time feedback, which might be both an advantage (due to higher ecological validity) and a disadvantage (due to uncontrolled variance and the influence of potential examiner biases) for the quality of data collection. The live interaction also better facilitates the adaptation of the task administration to the child’s needs. We suggest that these costs and benefits should be considered depending on the specific aim of the study, although the specific impact of either of these SRep design features remains unknown and unexamined, despite the presence of some evidence from other pertinent areas of psychological assessment.

The above highlights another important issue: SRep task standardization. Using standardized SRep tasks, for example, those which constitute a part of a test battery or the COST Action IS0804 tasks, may contribute to the generalizability of the results and their valid interpretation. In applied contexts, it may also help build up a large amount of data which could potentially contribute to norm creation. As one of the aims of standardization is to minimize the error resulting from different assessment conditions, potential divergences between those conditions and task forms raise a question regarding the validity of interpretation. Specifically, would the study results be different if the assessment procedure were altered (see, for example, McCarthy & Elson, 2018), and if so, how? This question requires specific empirical testing in the context of SRep tasks used with bilingual children.

However, standardized SRep tasks are not available for many languages. Those that are available may also not always fit the specific aims of a given study, for example, when it focuses on the acquisition of a particular morphosyntactic feature. While recognizing the limitations in availability, we strongly recommend using SRep tasks which are standardized or which have been used in studies previously whenever possible. This is all the more so because, as we have sought to show in our systematic review, there are numerous other features of the SRep task beyond its language and the morphosyntactic structures it tests which should also be considered and which may potentially impact the obtained scores or the possibility of interpreting them. Indeed, our systematic review showed that SRep task length, the presence or absence of practice items, potential feedback given to participating children, as well as scoring schemes and, sometimes, the reporting of raw SRep scores differed between the studies. In many cases, information about these aspects of the SRep task was not reported directly, which complicates the interpretation of the methodology and results of these studies.

SRep tasks are frequently deployed together with numerous other, often complex tasks (e.g., intelligence tests, language ability tests, working memory tasks). There is some evidence showing that lengthy study procedures lead to higher subjectively reported cognitive fatigue, but not lower objective performance, in adult participants (Ackerman et al., 2010). Although it may be assumed that this effect would occur with children participating in SRep tasks as well, which may impair their participation, and that this effect may be compounded by SRep task length, this needs specific empirical testing. In this context, based on the results of our systematic review, it appears that reporting the use of practice items within the SRep task is currently not customary in the literature. As above, this does not necessarily mean that practice items are not used, as they may nevertheless be part of the procedure for a given standardized task that the authors of a given study have used and cited properly. Nevertheless, the use of practice items in SRep tasks would benefit from additional examination in terms of its impact on the children’s performance or motivation, and a uniform practice of reporting their presence or absence would increase the clarity of the reports.

In addition, establishing reasonable (i.e., motivating without biasing the results) rapport with the children and providing feedback should also be considered. Nevertheless, these solutions were used or reported to have been used relatively infrequently. Moreover, SRep task length was not directly reported in 34 studies (24.11%) of our dataset. This also applies to the SRep scoring schemes. By our classification, only 33.33% of the reports in our dataset reported SRep scores in specific morphosyntactic categories, with the rest reporting total scores only. Breaking down the SRep scores into more specific categories is not necessary in each study. Nevertheless, considering the variability of the SRep tasks, greater transparency in reporting the methodology and results appears warranted.

Finally, regarding the reporting of SRep task design specifically, for every formal SRep feature (with the exception of task language) that we considered, we were able to identify a subset of studies where appropriately clear information on it was not reported. This involves both features which may be considered relatively less important (although we argue otherwise), like the presence of visual stimuli in the task or the provision of feedback or reinforcement to the children throughout the task, as well as vital, core information like the task length in sentences. Even when acknowledging the fact that some studies either relied on an in-text citation to lead readers to further details or used a standardized version of the task (i.e., from a popular language testing battery) with which informed readers are likely to be familiar, in our view, this still represents an important oversight which should be corrected. On the one hand, lack of transparency regarding the specific features of the SRep task used in a given study inhibits appropriate judgments of its methodological quality, and thus the reliability and validity of the obtained results. On the other hand, it makes it more difficult for readers less experienced with this specific research context (e.g., students, early career researchers or researchers beginning their studies using the SRep methodology, or clinicians attempting to update their knowledge) to obtain accurate and detailed information, and thus proficiency. It also propagates a suboptimal standard in the literature, potentially disincentivizing the field from paying attention to and further studying the effects of SRep task heterogeneity. For similar cases in other areas of psychology, consider the over-reliance on Cronbach’s alpha as an index of reliability or the neglect of effect sizes and confidence intervals in favor of relying on statistical significance only (Fidler et al., 2004; Peng et al., 2013; Sijtsma, 2009).

Therefore, we would like to suggest that studies using SRep tasks directly report the following elements at minimum:

Language of the SRep task,

Origin of the SRep task: test battery, published task, author-made task according to the COST Action IS0804, author-made task according to other guidelines,

Length of the SRep task, presence and number of practice items,

Gamified computerization (including its characteristics, for example, visual elements, intermittent rewards) or in-person administration,

Use of prerecorded versus live sentences,

Feedback given to the children, if any,

Scoring scheme,

Raw SRep scores (means or percentages).

We consider this minimum to be relatively parsimonious, such that reporting it should not be drawn out or redundant even if the authors use an SRep task from a popular test battery. Indeed, there are additional SRep elements which, if reported, could further improve methodological transparency, for example, the gender of the SRep item speaker, the length of SRep items in syllables, the presence or absence of parents or caregivers with the child during SRep task administration, or the language of task instruction (reported separately from the language of the task). However, we did not focus on these in our systematic review, as the initial stages of the full-text screening revealed that they were reported very infrequently. In addition, their influence on the SRep scores is difficult to infer without empirical testing, whereas the elements we did highlight appear to be more meaningful for the SRep methodology and some preliminary results concerning them are already available (Banasik-Jemielniak et al., 2023; Pratt et al., 2021).
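
As an illustration only, the minimum reporting elements listed above could be kept in a structured record during data extraction, so that any unreported element becomes immediately visible. The following Python sketch is hypothetical: the class name, field names, and example values are assumptions made for the sake of illustration and are not part of the review's coding scheme.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class SRepTaskReport:
    """Hypothetical record of the suggested minimum reporting elements.

    A value of None means the element was not reported in the publication.
    """
    language: Optional[str] = None             # language of the SRep task
    origin: Optional[str] = None               # e.g., "test battery", "published task"
    n_sentences: Optional[int] = None          # length of the task in sentences
    n_practice_items: Optional[int] = None     # 0 if none were used
    administration: Optional[str] = None       # "gamified computerized" or "in-person"
    stimuli: Optional[str] = None              # "prerecorded" or "live"
    feedback: Optional[str] = None             # "none" if no feedback was given
    scoring_scheme: Optional[str] = None       # e.g., "binary"
    raw_scores_reported: Optional[bool] = None # means or percentages given?


def missing_elements(report: SRepTaskReport) -> List[str]:
    """Return the names of the elements that were left unreported (None)."""
    return [f.name for f in fields(report) if getattr(report, f.name) is None]


# Example values are invented for illustration.
example = SRepTaskReport(
    language="English",
    origin="test battery",
    n_sentences=30,
    stimuli="prerecorded",
    scoring_scheme="binary",
)
print(missing_elements(example))
```

A record of this kind distinguishes an element that was reported as absent (e.g., zero practice items) from an element that was not reported at all, which is the distinction the recommendations above are intended to make explicit.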

Limitations and future directions

The chief limitation of our systematic review is its scope. We focused only on English-language publications which included studies on bilingual children’s language development. However, SRep tasks may be used in a range of different contexts as well, both basic and applied. Therefore, our results may not necessarily describe the state of the literature in its entirety, and further reviews are likely needed. Nevertheless, we believe that greater transparency and systematization in terms of methodology reporting can only be beneficial.

Furthermore, we have limited our systematic review to only a general overview of SRep task characteristics. Although we suggest that differences in these characteristics may contribute to distorting the obtained results in uncontrolled and undesirable ways, further studies are needed to test this suggestion. These studies should include both direct comparisons and meta-analyses of extracted, appropriately described SRep data.

Finally, within our dataset, we focused only on SRep task characteristics. There are other methodological trends in the field of child bilingualism studies which would be valuable to analyze in greater detail, for example, the languages studied, the socioeconomic characteristics of the samples, the proportion of simultaneous or sequential bilingual children in the samples, or the methods of screening for SLI or gathering language background data. As with our systematic review, we believe that systematization in reporting these aspects of studies also has the potential to raise their scientific standard.

From another perspective, it may be valuable to examine in depth the impact of the COST Action IS0804 and the resulting SRep task framework on the changes in methodology and reporting standards in the literature (e.g., can specific changes in SRep task design or the way of reporting it be tracked or compared between the pre- and post-COST Action IS0804 periods?). This way, valuable insights could be gained, potentially serving to formulate effective standards, guidelines, or reporting practices.