Abstract

Webcam sex platforms simultaneously host thousands of live performances. To allow users to explore these, platforms categorize. On porn sites, this has led to extremely detailed, hypercategorized classification systems. Developing the concept of “categorization regime,” which refers to all categorization options on a platform, we examine hypercategorization on 50 webcam sex platforms. In the heavily stigmatized, yet immensely popular, webcam sex industry hypercategorization shapes working environments and conceptions of desirability. Through critical content analysis, we find that the examined platforms, employing over 1700 unique categories, categorize in detailed, messy, and elaborate ways. Analyzing examples from categorization systems for gender, ethnicity and body type, the article demonstrates that these categories both celebrate diversity and offer earning opportunities, yet also reinforce regressive discriminatory and fetishizing narratives about marginalized groups of webcam performers.

Introduction

Categories shape how people navigate and make sense of everyday life (Bowker and Star 2000; Hacking 1986). They come into being through multifaceted social processes, in which power dynamics, group interests and cultural norms, among many other factors, play a role. In an era of digitization, platforms have become central actors in categorization processes. But how categorization unfolds on (sexual) platforms, and how this shapes the experiences and opportunities of workers, has hardly been studied systematically.

This paper examines categorization on webcam sex platforms, sex being an area of social life where categorization has historically been particularly fraught with taboos, tensions and moral connotations. In doing so, we propose a novel conceptual framework of categorization regime, which we define as the collection of all categorization systems on a platform. Categorization systems are single collections of categories that platform users can deploy with regard to one specific characteristic of a person or an object. These systems are in turn built up from categories, which refer to the individual options that can be selected within a categorization system. For example, on webcam platforms “redhead” is a category within the categorization system “hair color,” which in turn, alongside other categorization systems such as language and age, constitutes a key component of the categorization regime on most webcam sex platforms.
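The nesting of regime, system and category can be made concrete with a small data-structure sketch. This is an illustration only, not the format of our dataset: the class names, the platform name and all category values other than “redhead” are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CategorizationSystem:
    """One characteristic (e.g. hair color) and the categories offered for it."""
    name: str
    categories: List[str] = field(default_factory=list)


@dataclass
class CategorizationRegime:
    """The collection of all categorization systems on one platform."""
    platform: str
    systems: List[CategorizationSystem] = field(default_factory=list)

    def all_categories(self) -> List[str]:
        # Flatten the categories of every system in this regime.
        return [c for s in self.systems for c in s.categories]


# Hypothetical platform and values, used purely for illustration.
regime = CategorizationRegime(
    platform="example-platform",
    systems=[
        CategorizationSystem("hair color", ["redhead", "blonde", "brunette"]),
        CategorizationSystem("language", ["English", "Spanish"]),
        CategorizationSystem("age", ["18-22", "23-29"]),
    ],
)
```

In this sketch, “redhead” is reachable only through the “hair color” system, which in turn exists only as part of the platform's regime, mirroring the three levels defined above.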

We focus on webcam platforms for three reasons. First, they have rapidly become one of the leading segments of the online sex industry in terms of revenue and numbers of customers (Bleakley, 2014; 892; Van Doorn and Velthuis, 2018; 178). Second, as in the porn industry, categorization regimes on webcam platforms stipulate which desires and bodies are taken as the norm; along with, for example, ranking algorithms and banning practices, they determine who is and is not seen (Jones, 2015; Van Doorn and Velthuis, 2018; Stegeman, 2021). In that capacity, they also directly impact individual sex workers’ earning opportunities. Third, while categorization of sexual content has received a fair amount of attention in academic studies of pornography, it has remained mostly undocumented for webcam platforms. Camming and online pornography are similar in the sense that both concern the display of sexual acts on the internet (Bleakley, 2014; 893). However, unlike porn, camming presents the possibility for real-time communication between audience members and performers (Henry and Farvid, 2017; 119; Jones, 2020b; 1; Sanders et al., 2018; 15).

In the next two sections, we provide the conceptual grounding for our inquiry by discussing previous studies which have highlighted the connection between categorization, social meaning and power, as well as literature on categorization within the platform, sex and pornography industries. Subsequently, we explain the methodology of our critical content analysis of categories on 50 webcam platforms. Next, we demonstrate on the basis of our empirical analysis that webcam platforms give rise to hypercategorization (Saunders 2020; 58): the proliferation of categorization regimes consisting of many different categorization systems and even more categories. Through these regimes webcam sex platforms reinforce traditional discriminatory distinctions in terms of gender, ethnicity, and bodily characteristics. At the same time, however, we show how they enable the recognition and celebration of those identities and characteristics that have been historically rendered undesirable or invisible. In short, taking into consideration possibilities for subversion while recognizing the structuring force of platform systems we argue that these extensive categorization regimes are at once potentially harmful and emancipatory.

Categorization and social power

Categories can be theorized as tools and expressions of power. Nominalist theorists argue that new categories create new ways of being, rather than just describing an observable reality (Hacking, 1986; 164). Following from this is also the understanding that the hierarchization of categories is both powerful and historically socially constructed. In the realm of sexuality, certain categories of individual attributes are ranked in “tiers of desirability” (Green, 2008; 32). Further attending to categorization regimes through an intersectional lens (Crenshaw, 1990), as is done in this paper, reveals how overlapping categories compound and complicate the oppressive and liberatory potential of categorization.

The potential consequences of categorization as social power mechanisms are abundantly evident in the history of sex work, which is fraught with conflicts and tensions (Block and Adriaens, 2013; 277; Walkowitz, 2017; 22). The categorization of sex workers is just one example of the many historical and ongoing forms of oppressive categorization. The past and present categorization of Black people as hypersexual, of Black and brown women as impure and unrapeable, the categorization of homosexuality as a disease, to name just a few examples, harm people the world over (Miller-Young, 2015; 211; Srinivasan, 2021; 12). Categorizations do not just carry symbolic power, they shape experiences of life in very real, and potentially destructive ways.

Still, the results of categorization can pan out in both oppressive and subversive ways. Categorization simultaneously enables and limits possibilities, moral judgments, and social visibility, as categories give rise to and restrict ways of being (Bowker and Star 2000; 4). Socially dominant categories are often the result of external processes: top-down power enters into the creation, assignment and reproduction of categories (Jenkins, 2000; 3). But, as illustrated with homosexuality, oppressive categorization can also be co-opted by a community itself as a way to organize and claim legitimacy (Foucault 1976; 101). While dominant categorization practices may not be designed by the groups they refer to, they can still be co-opted as instruments for organization, representation or, as illustrated in this paper, monetization.

Categorizing sexual content online

Digitization and platformization have rendered aforementioned categorization dynamics all the more urgent. Users of digital platforms inevitably face large amounts of content. To navigate this, platforms provide categorization tools along with other affordances such as filters or the algorithmic ranking of content (Webster, 2014; 238). Particularly on music and television streaming services, categorization systems have proliferated (Madrigal, 2014; Stevens and O’Donnell, 2020). Initially online instances of extensive categorization were celebrated as opportunities for democratization; the flexibility, granularity, and participatory character of online categorization was contrasted with the often limited, restrictive, top-down categorization systems employed in the offline world (Bruns, 2008; Shirky, 2005). More recent studies question whether these democratic expectations have been realized, as most online categorization systems on major commercial platforms are controlled and generated by the platforms themselves (Gillespie, 2018; Poell et al., 2021). Other studies have pointed at the oppressive role of categorization on platforms: they can solidify stereotypes, especially those related to gender and race, thereby reproducing inequalities (Cheney-Lippold, 2011; Noble, 2018). This happens for instance when categories get factored into discriminatory platform algorithms.

Sexual content platforms are no exception in this respect: they feature what has been called hypercategorization (Saunders, 2020). Where a clothing website might let you filter by color, size, brand and price, many porn websites already have more categories for you to decide where you would like to see sperm end up: “Facial, Cumshot, Creampie, Bukkake, Cum on Ass, Cum on Pussy, Cum on Tits, Cum Swap, Swallow” (Saunders, 2020; 58). Academic studies of these categories are, however, few. Studies refer to categorization only in passing, or as a sampling technique, and tend to focus on porn rather than camming (Kendrick, 1997; McNair, 2013; Paasonen, 2016; Shor and Golriz, 2019). Additionally, there is a focus on studying the categorization of the genre of porn itself, not the categories that the industry uses. Attwood, for instance, has argued that the classification systems on porn websites reflect wider sets of opposition between “high” versus “low” culture, “reason” versus “passion” or “order” versus “disorder” (Attwood, 2002; 96). Other studies have likewise demonstrated how “deviance” drives the categorization of porn (McNair, 2013; 20; Miller-Young, 2015; 6).

Some exceptions exist. Matt Fesnak’s research suggests that considering the diversity and “excess” of pornography, the systems used for its classification are surprisingly rigid (2016, 4; Saunders, 2020; 61). This inflexibility seems to signal a rigid conception of these porn sites’ audience members and their needs. For starters, content on most porn sites is by default presented in ways which assume heterosexual men as the audience, while content for women or gay viewers is relegated to distinct categories such as “popular with Women” and “Gay Only” (Bonik and Schaale, 2007; 83; Fesnak, 2016; 4; Mann, 2014). Similarly, the presence of white women in porn (marketed to men) is not specifically categorized on these sites, whereas, for example, “Asian” and “Ebony” are distinguished into separate categories (Saunders, 2020; 72). Pornhub, as an industry giant, through its rigid categories creates “a ‘baseline’ of desire organized by a standardized pornographic classification and genrefication system” (Watson, 2021; 4). This baseline consists of white, heteronormative and patriarchal conceptions of sexuality (Keilty, 2012; 429; Saunders, 2020; 72).

That corporate porn sites reproduce oppressive structures remains an important, if hardly surprising observation (Paasonen, 2010; 69). However, existing studies suggest that the categorization of online pornography can also be seen to diversify the sexual landscape. For instance, popular “deviant” or fetish categories such as “Bondage,” “Old/Young” and “Public,” can serve an emancipatory function (Chun, 2008; 84).

On webcam platforms, Jones has argued that fat performers can set themselves apart and display a diversity of bodies often lacking in traditional pornography (Jones, 2019; 284). Trans performers have likewise made use of these sites’ many gender categories to experiment with and monetize their gender expression (Mia, 2020; 238; Theunissen and Favero, 2021; 790). Through classification practices some performers can also capitalize on the fetishization of specific genders and sexualities (Jones, 2020b; 158). In this sense these categorization systems facilitate the display and marketing of diverse bodies on webcam sex platforms.

In the absence of a designated conceptual framework, studies on sexual categorization focus on a single or a limited number of platforms, on one categorization system (usually gender, race or ethnicity) or zoom in even further on a limited number of specific categories. These studies do not, however, engage with the categorization on online sex platforms in both systematic and critical ways. Our aim, then, is to study the presence, influence, and meaning of categories. To provide the necessary systematic-critical lens, we employ the framework of categorization regimes.

Methodology

To examine categorization in a systematic way, we started by identifying English language webcam sex platforms using the search terms “webcam sex” on “DuckDuckGo”. Rather than the dominant search engine Google Search, this privacy-oriented, non-personalized search engine allows for a more straightforward automatic collection of top search results. We collected region-specific hits for the query in October 2020 for the United States, United Kingdom, Ireland, Canada, New Zealand and Australia. This selection of countries was informed by the dominance of English in the global field of digital sexual services. Further research into other non-English webcam platforms would nevertheless be invaluable.

Using this method, we identified 228 platforms. We then selected only those platforms whose dominant interface focused on camming, rather than, for instance, porn videos. Of the remaining ones, we analyzed the 50 platforms with the most traffic. Of these, 22 turned out to be so-called white-label platforms: they have the same offering of webcam performances as other platforms in our database. In other words, these white labels, while appearing to be different platforms, host the same performers. The outer “layers” of such white labels often differ from one another, but they actually rely on the same infrastructure and data (Swords et al., 2021; 5). Because many (although not all) of these white-label platforms have unique platform designs with their own categorization regimes, and because some of them are highly popular and attract more visitors than “original” platforms, we have included them in our database.

Subsequently, between December 2020 and January 2021, five researchers manually collected all platform-generated categorization information from every platform’s landing page. In March 2021, one of the authors checked all collected regimes for consistency and missing data.

Many of the examined platforms let users choose a categorization regime based on whether they are interested in seeing performances by women, men, couples or transgender performers. The regime for women is the default on most platforms, showing women and categorization systems for, for example, breast size. When selecting a different regime not just the displayed content changes, but also the categorization systems which are available (e.g., categorization systems for “endowment” and “facial hair” within the regime for men). To avoid inflating the data through these regimes, our analysis only includes unique categorization systems and categories on one platform. So, while a platform’s regimes for men and women usually include identical systems, for example, ethnicity, region or age, these systems are only counted once for a single platform, while categorization systems which differ across a platform’s regimes (e.g., for body hair) are counted separately.
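The counting rule described above can be sketched as follows. This is a minimal illustration under an assumption of ours: two systems are treated as identical when they share both a name and the same set of categories. The function name and the toy data are hypothetical and do not reproduce the coding procedure used for the dataset.

```python
def count_unique_systems(regimes):
    """Count the distinct categorization systems on one platform.

    `regimes` maps a regime label (e.g. "women") to a dict that maps
    a system name to its list of categories. Assumption: systems with
    the same name and the same categories are identical and count once.
    """
    unique = set()
    for systems in regimes.values():
        for name, categories in systems.items():
            unique.add((name, frozenset(categories)))
    return len(unique)


# Hypothetical platform with two regimes that share two systems.
platform = {
    "women": {
        "ethnicity": ["Asian", "White"],
        "age": ["18-22", "23-29"],
        "breast size": ["small", "large"],
    },
    "men": {
        "ethnicity": ["Asian", "White"],
        "age": ["18-22", "23-29"],
        "facial hair": ["beard", "none"],
    },
}

n_systems = count_unique_systems(platform)  # ethnicity, age, breast size, facial hair
```

Here the shared “ethnicity” and “age” systems are counted once, while “breast size” and “facial hair” each add to the total, giving four systems for this toy platform.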

Our dataset shows what the categorization options are on webcam platforms, not how these options are actually created and assigned by platforms or used by performers or platform visitors. Further qualitative work could investigate how performers and users make use of these categorizations and how platform power is enacted and subverted here. However, to critically examine such usage, it is imperative to also gain in-depth, systematic knowledge of what the categorization regimes designed by platforms are actually like.

To analyze these regimes we predominantly employ critical quantitative content analysis. This means that categories are first quantified, often exploring frequencies, in our analysis and then interpreted qualitatively (Macnamara, 2005; 14). The interpretations focus on the categories’ meanings concerning representation and the power structures which the categorization regimes make manifest, reproduce or, to the contrary, undermine. Specifically, in line with critical and intersectional analysis, language around gender, bodies and race is examined closely (Johnson et al., 2016; 5). Quantitatively, the analysis thus lays bare patterns within the universe of webcam platforms, while qualitatively, it scrutinizes the language of specific categorization systems.

Detailed and messy categorization regimes

First, what stands out when analyzing categorization on these 50 platforms is the non-standardized, imprecise, and unsystematic character of almost all their categorization regimes. For instance, in some regimes categories like “normal” or “average” or even “about average” are frequently used without specifying what is meant by them; body sizes are haphazardly categorized as “a few extra pounds.” Categories presented within one system sometimes overlap, or they combine different criteria within one system (e.g., hair length and hair color), preventing users from filtering on both criteria simultaneously (e.g., seeking out performers with short, dark hair).

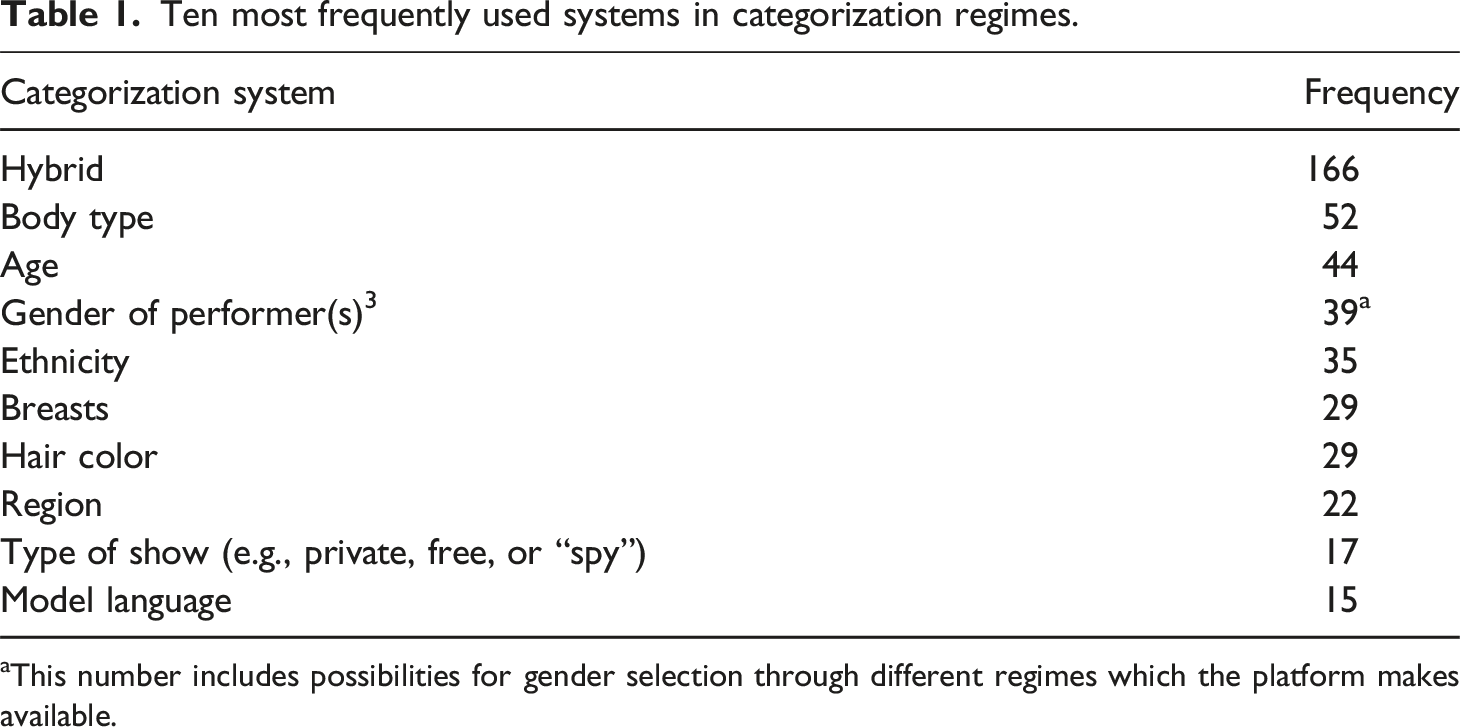

Next to their messiness, what stands out is the highly detailed nature of categorization regimes. For instance, the 50 platforms in our database feature 511 categorization systems in total. On average platforms offer 6.7 categorization systems (SD = 3.5), while most platforms offer nine systems. One platform (Xlovfecam) deploys 17 systems: it enables viewers to categorize performers on the basis of, among others, their height, weight, breast size, eye color, hair color, hair length and the absence or presence of pubic hair. The database also includes a platform (Plexstorm) which offers no categorization system whatsoever.

Ten most frequently used systems in categorization regimes.

This number includes possibilities for gender selection through different regimes which the platform makes available.

The plethora of categorization systems is the first but surely not the only evidence we find for hypercategorization in the webcam industry: the 511 categorization systems themselves are constructed out of 7321 categories, of which 1759 are unique (in other words, each category appears on average on 4.2 platforms). Stripchat (as well as its several “white label” versions), Camlust and Cam4 top the list: on each of these platforms, webcam performers can be filtered and selected using more than 500 different categories. On average, the platforms in our database offer users 102 categories to filter with, but variation across platforms is high (SD = 140.5). On Stripchat, many of these categories are constructed by recombining categories from two different systems, such as “curvy ebony” (combining the body type system with the racial system) or “granny anal” (combining the age system with a categorization system for sexual acts). As such the categorization regimes allow for a variety of intersecting identities such as being Black and fat or transgender and old, which are simultaneously fetishized and made desirable, and restrict other overlapping identities. In the next sections we will look in more detail at categories by zooming in on three salient systems: ethnicity, gender and body type.
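The average number of platforms per category follows from dividing the total number of category listings by the number of unique categories. The sketch below shows that arithmetic together with one possible way of tallying category frequencies across platforms; the helper function and the toy data are hypothetical, and only the figures 7321 and 1759 come from our dataset.

```python
from collections import Counter


def category_frequencies(platform_categories):
    """Count on how many platforms each category label appears."""
    counts = Counter()
    for categories in platform_categories.values():
        # A label is counted at most once per platform.
        counts.update(set(categories))
    return counts


# Hypothetical toy data, not taken from the dataset.
toy = {
    "platform_a": ["redhead", "blonde", "brunette"],
    "platform_b": ["blonde", "brunette"],
    "platform_c": ["blonde"],
}
freq = category_frequencies(toy)
toy_mean = sum(freq.values()) / len(freq)  # listings per unique category

# Figures reported in the text: 7321 listings, 1759 unique categories.
reported_mean = round(7321 / 1759, 1)
print(reported_mean)  # 4.2
```

The same tally, sorted by frequency, is what a table of the most frequently used categories in a system would be built from.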

Ethnicity

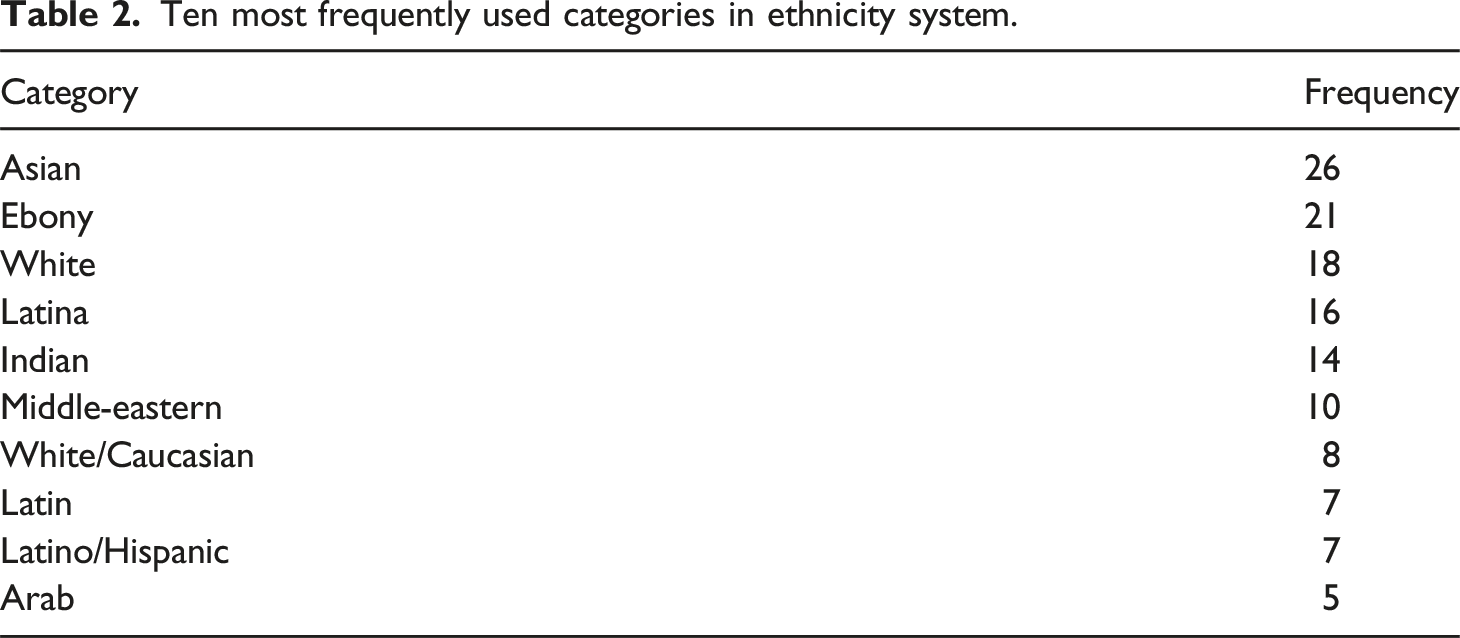

Ten most frequently used categories in ethnicity system.

The high frequency of categories for Asian, Black or Latin-American performers may suggest racial equality in the camming industry. Reality is different. For instance, as previous studies (Jones, 2015, 2020b) show, Black models are algorithmically ranked lower than white models. Webcam platforms’ ranking systems are thus not “colorblind”; they are one of the means through which racism is technologically embedded in the webcam industry (Noble, 2018; 9).

Without the option to select performers of different ethnicities, racist webcam platform algorithms would likely hide all but white performers from the audiences’ direct view. In this respect categorization systems offer strategic opportunities to minoritized groups. For instance, by categorizing themselves as “ebony” Black performers can monetize the fetishization of Black women (Jones, 2020b; 169). Through “illicit eroticism,” or “deviance as resistance” (Cohen, 2004; Miller-Young, 2010; 224) Black sex workers can “symbolically and strategically” profit from their hypersexualization. While historically Black work has been undervalued, certain performers can capitalize on a specific type of sexual capital by playing into “exoticizing” categories (Green, 2008; 44). The categorization of ethnicity on webcam platforms thus potentially allows Black webcam performers to monetize and organize their labor.

But doing so comes at a cost. For racism is not only technologically embedded in categorization systems but also discursively, through the highly stereotypical language choices of the categories themselves. Especially noticeable is the difference in terminology describing white and Black performers. The only two terms for white performers are “white” and “Caucasian.” For Black performers the dominant term is “ebony”, although the combination “Black/ebony” or “Black” are also used occasionally. The explicitly orientalizing category “exotic” also surfaces on some platforms. While you see the terms for white performers on the census or in official data, “ebony” does not occur in this context (Nash, 2014; 447). Moreover, outside pornography and camming, Black is not synonymous with ebony, which describes either a type of wood, or connotes the sexualization of Black people.

More specifically, intersectional analysis suggests that the term ebony primarily sexualizes Black women. This becomes evident when toggling the categorization regime of a platform like Megacams. When selecting women, the options for “ethnicity” are: “Arabic, Asian, Ebony, Indian, Latina, Russian, White”. For men, however, the list is: “Arabic, Asian, Black, Indian, Latino, White”. This resonates with how porn, and Western society at large, have hypersexualized Black women (Miller-Young, 2010; 221). While more neutral terms tend to be used for white women and Black men on webcamming platforms, Black women are objectified more intensely through sexually-charged terminologies.

Gender

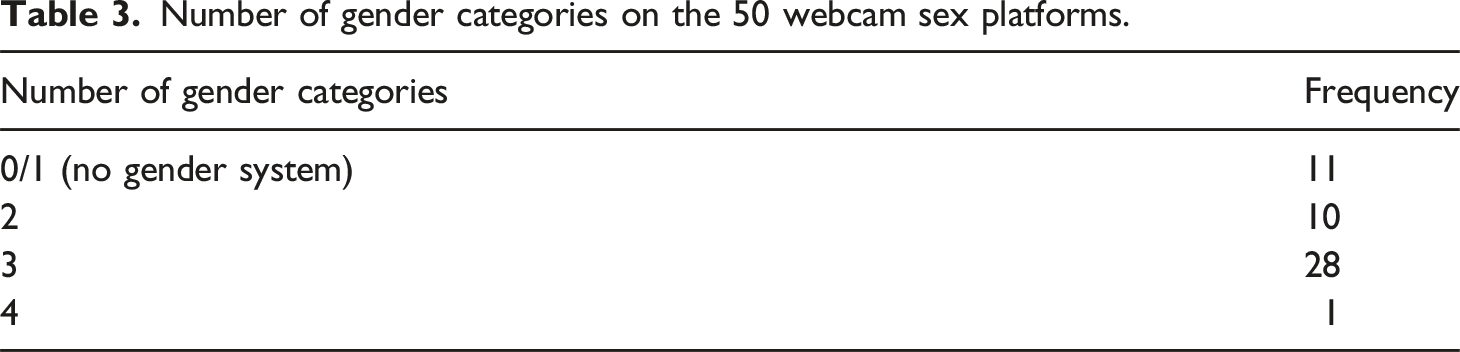

Out of the 50 platforms in our dataset, 39 allow for the specification of gender: 11 via a regular categorization system, 28 via categorization regimes for different genders. These gender systems make implicit ideas about gender in the online sex industry manifest.

First, it is noteworthy that 11 platforms in our data set do not contain a system for gender and that the majority of these (7) exclusively display women. Besides, the majority of the platforms that do have gender categorization systems have women as the default when visitors access the platform’s landing page. This is for instance the case on the three most popular camming platforms BongaCams, LiveJasmin and Chaturbate (Alexa, 2021). Plenty of men also work as webcam performers, almost always for an audience of men as well, in a lucrative gay industry (Jones, 2020b; 76). Still, the default gender regime on popular platforms is exemplary of an industry seen as primarily populated by women catering to straight men as their audience (Jones, 2020b; 181; Bonik and Schaale, 2007; Saunders, 2020). Obviously, this is in line with wider societal patterns of objectification and sexualization of women (Seale et al., 2006; 26).

Number of gender categories on the 50 webcam sex platforms.

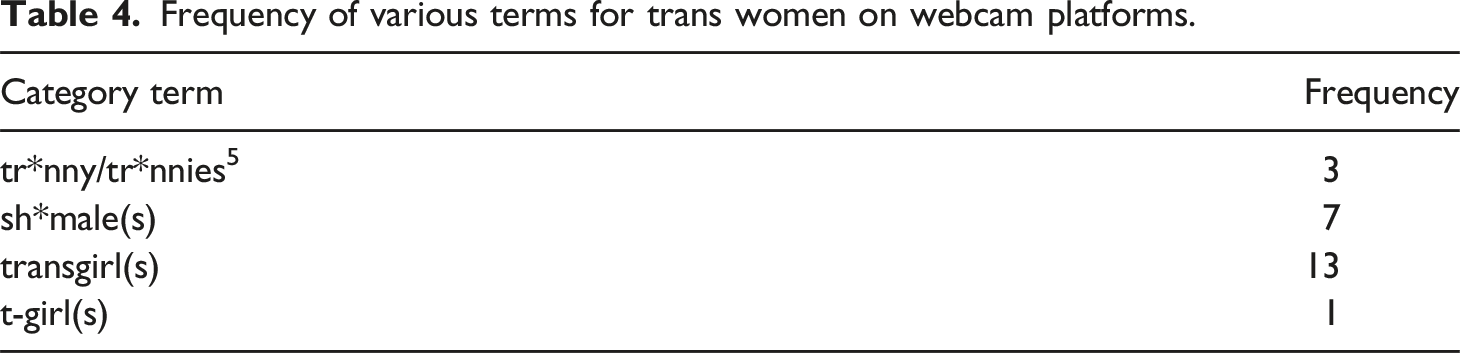

Frequency of various terms for trans women on webcam platforms.

The terms are not all offensive; of the 21 platforms which have a separate regime for transgender performers, five denote it using the neutral term transgender, 10 use trans, either by itself or as adjective in “trans cams,” while 6 use the medicalizing and outdated term transsexual. In lower-level categorization systems more problematic, explicitly transphobic and fetishizing terms surface (Williams, 2019; 22). Specifically, the top-term in Table 4 is often seen as a slur for trans people and linked directly to the sexualization and objectification of transwomen (Roehlkepartain, 2018; 14). The second category occurs quite often on webcam platforms. This term, also primarily associated with pornography, is regarded as a transphobic slur as well (O’Halloran, 2017; 222). This category is preoccupied with the presence of trans performers’ penis, misrepresenting sexual realities for transgender women (Steinbock, 2017; 31). In the porn industry this fixation on genitals is exemplified by the devaluation of trans performers after gender confirmation surgery, and performers limiting hormone blockers before shoots to be able to maintain erections (Del Rio and Pezzutto, 2020; 264). This sexualization of genitals and ejaculation is especially geared towards a fantasy held by audiences made up of straight men (Pezzutto and Comella, 2020; 161).

The setting apart of transgender performers in a gender ternary exemplifies the complex heterogeneous potentialities of hypercategorization: on the one hand, it means that especially transwomen are categorized as “other” and separate from the “women” category and therefore once again presented as non-women (Williams, 2019; 22) on camming platforms. On the other hand, this does constitute a clear move away from the previously imposed gender binary. The categories create new space and recognition for previously ignored performers. This is where transwomen in particular can explore, monetize and celebrate their gender (Mia, 2020; 238). As has been described for other types of sex work it might offer community and financial autonomy (Adams, 2020). Sex work can create gender affirming experiences for trans workers, precisely through being desired by clients (Capous-Desyllas and Loy, 2020; 356).

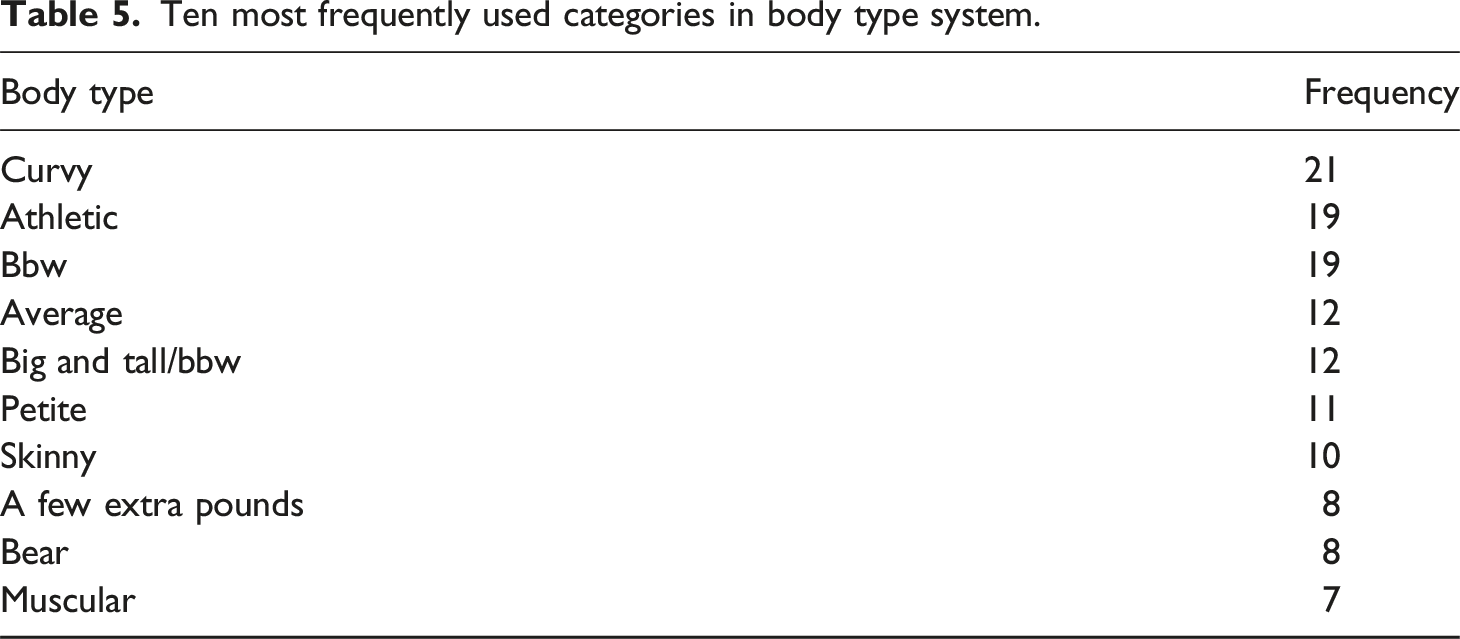

Body type

Where gender and ethnicity usually just have one system on each webcamming platform, the categorization of bodies is more segmented and can take up eight different systems on a single site. These systems frequently measure the size of entire bodies or parts of them through institutionalized ordinal scales (e.g., s/m/l/xl bodies, breasts, penises) or by using sexual slang for specific body parts such as “ass,” “tits,” and “cock.” If anything signals the commodification of performers’ bodies, it is this splitting up of the body through multiple categorization systems (Seale et al., 2006; 25). Given that platforms hold the power to decide what body parts matter, and how they should be classified and set apart, this form of hypercategorization can be seen as contributing to the performers’ platformized dehumanization.

Ten most frequently used categories in body type system.

In short, our data suggest that while hypercategorization of body parts bears the risk of dehumanizing forms of platform commodification, it also gives visibility to a wide variety of body types, allowing for the celebration of diversity as well as the admiration and monetization of performers’ body parts.

Discussion

This paper has sought to make three interrelated contributions. First, we have developed a nuanced understanding of the ways in which platforms shape the work and in particular the visibility of webcam performers. While current studies tend to focus on the role of algorithms in who is made visible (e.g., Van Doorn and Velthuis, 2018; Jones, 2020b), we have demonstrated the ways in which performers can get categorized on the basis of, among others, their gender, age, ethnicity, and body parts. Our analysis suggests that these categorization regimes subject performers to wider societal power structures: capitalist structures, given that platforms develop these regimes to extract value from platform workers, but also heteronormative, white-centric, patriarchal structures, in which cis-gender, white, slender women are objects of desire, to be commodified through classification regimes. And, while performers falling outside of hegemonic norms do find a place in these regimes, they are frequently categorized in pejorative and oppressive ways.

At the same time, however, we have demonstrated that categorization regimes of online platforms, exactly because of their highly elaborate nature, provide opportunities for diverse performers to gain visibility, be desired, and monetize their diversity. In doing so, categorization regimes may provide an antidote to the algorithmic organization of platforms. Moreover, while the categories themselves may be superimposed, we know anecdotally that performers have some freedom in assigning categories to themselves in beneficial ways, for example, by categorizing themselves as single, or as younger than they actually are (Jones, 2020b; 160). However, future research should study the everyday use of categorization regimes by both performers and viewers in a more detailed and systematic fashion: are the categories which potentially celebrate diversity on platforms actually being used as such by both performers and their audiences? How do performers themselves use categories to maximize their visibility, earnings and power? Interviews with performers, or surveys with clients, could provide much-needed insight into who gets to achieve visibility online and how, visibility being the dominant currency in this industry.

Another antidote, both to algorithmic organization and to the superimposed categorization regimes analyzed here, is offered by the hashtags which performers can create themselves on many platforms to auto-categorize. These tags, which have also been found to have democratizing and diversifying potential on porn platforms (Mazières et al., 2014; 83), provide performers with limitless opportunities to create visibility for the specific niche they like to occupy. But whether these bottom-up tagging practices do indeed lead to different ways of categorizing than the top-down regimes which we have mapped remains to be seen. The conceptual framework of categorization regime which we have developed for this paper could be used to study this in empirical detail.

The second contribution of this paper is the conceptual framework of categorization regimes which we think can be used for other platforms both within and outside of the sex industry. For instance, discussions about how users of social media platforms are categorized, both on the platform and to potential advertisers (see e.g. Bivens and Haimson 2016), can be cast in terms of regime. The concept of categorization regimes enables more systematic investigations into which systems (such as the one for gender, Bivens, 2017) are included on platforms and which are excluded. If hypercategorization is a key feature of platforms like Spotify or Netflix, our framework allows for a detailed analysis of how this hypercategorization came about, and if this hypercategorization is mostly taking place on the systemic level (so providing users with many more different systems to organize content) or on the categorical level (so expanding the number of categories within each system). Another advantage of the proposed framework is that it enables a granular approach, linking the analysis of structural forces and power dynamics on the macro level to the categories which people face in everyday life on a micro level. The framework is generative for inviting questions related to the level of coherence of the regime or the power dynamics and agencies involved in shaping and changing the regime itself.

Additionally, our conceptual framework lends itself well to intersectional approaches to modes of labeling people. Where intersectional theory describes how specific overlapping identities impact experience in unique ways (Crenshaw, 1990; 1242), through categorization regimes we can explore how this is enacted in a range of categorization practices. Since the regime element of this framework invites the examination of all categories and systems in a space, it brings to the fore specific and overlapping categories. If a platform hosts multiple categorization systems, how does this allow people to express their multiple and overlapping identities? And what options does such a regime prevent from overlapping? Similarly, do single categories in a system draw together multiple identity aspects and thus reinforce their linkage? And especially relevant for the study of interactive platforms, as also demonstrated on webcam platforms here, does the selection of one identity alter the other options available?

Finally, with our critical quantitative approach we aim to make a methodological contribution to studies of classification. Critical studies of this topic often rely on an in-depth analysis of limited data and in doing so lack breadth, while quantitative approaches run the risk of remaining overly descriptive. The case presented here shows how a critical quantitative approach allows for the examination of large data sets and trends, while simultaneously scrutinizing the meanings of the individual categories. Had we only critiqued some categories qualitatively and in depth, readers would be left without an understanding of the broader categorization regimes on webcam platforms, including their key features such as hypercategorization and incoherence. Conversely, had we focused only on relaying the quantitative breadth of categorization on these platforms, the power structures at stake in these regimes would remain under-explored.

Conclusion

This paper has developed a new conceptual framework of categorization regimes to document the intensification and multiplication of the ways in which sex workers and their performances are categorized on webcam platforms. Our paper suggests that these hypercategorization practices, which we documented in a new dataset containing regimes on 50 platforms, comprising 511 categorization systems and 1759 unique categories, do not lend themselves to any easy interpretation. On the one hand, we observed that, whether intended or not, platforms reduce performers to oversimplified, harmful and at times offensively worded bodily characteristics, which would be seen as legitimate in few other industries. Many popular categories have a discriminatory, sexist, ageist and sometimes blatantly racist or transphobic character. Through hypercategorization regimes, webcam platforms, at least partially, perpetuate the fetishization of historically and presently marginalized groups. Precisely because of the plethora of categories on these platforms, social distinctions can be magnified and intensified.

On the other hand, however, our data indicate that platforms render visible and desirable individuals who have long been placed outside societal norms of acceptability. This concerns in particular body types and forms of sexuality which go far beyond the heterosexual, white, cisgender (and frequently blond and heavy-busted) women who, at least until recently, epitomized sexuality in Western mainstream media and the culture industries. While the existence of such non-hegemonic categories does not mean they are also popular, it at the very least signals that performers within these categories exist and can be desired.

Moreover, hypercategorization facilitates performers' capitalization of fetishes (e.g., Jones, 2020b: 169; Miller-Young, 2015: 176). Unlike many “traditional” categorization systems, webcam platforms also predominantly and explicitly include transgender performers in their classification of gender. This is in line with (some) transgender performers’ positive experiences of webcam platforms as celebratory and exploratory spaces (e.g., Mia, 2020; Theunissen and Favero, 2021). Webcam platforms’ hypercategorization practices, in this sense, provide recognition and constitute digital spaces where diversity can be celebrated. If we were to ignore the potential for reclaiming, and organizing through, some of the categories employed by webcam platforms, we would sell short the subversive abilities of the communities being categorized here.

Acknowledgements

The authors thank the participants, and especially the discussant Dr Alex Gekker, in the Global Digital Cultures work-in-progress seminar (University of Amsterdam) for their helpful comments and suggestions on a draft of this article. We are also grateful to the anonymous reviewers and editors for their thoughtful feedback.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Nederlandse Organisatie voor Wetenschappelijk Onderzoek (406.DI.19.035).