Abstract

Non-autistic observers often interpret autistic emotional expressions more negatively, though it is unclear whether this reflects observer bias or genuine differences in autistic people’s emotional experience and expression. To examine this, 20 autistic and 20 non-autistic adults reported the intensity of their felt emotion while re-experiencing video-recorded events eliciting mild and strong happiness, sadness, and anger. A total of 379 non-autistic observers, half blind to diagnostic status, viewed the recordings and identified the emotion and its intensity. iMotions emotion recognition software also classified the emotional valence of the expressions. Overall, autistic and non-autistic participants reported comparable levels of felt emotion, although differences emerged in how their expressions were perceived. Observers more accurately identified happiness in non-autistic participants and sadness and anger in autistic participants. They also judged autistic participants as expressing sadness and anger more intensely. Informing observers of the diagnostic status of participants largely did not modulate effects. iMotions more often classified mild autistic expressions as neutral and mild non-autistic expressions as positive. Because observer and iMotions findings emerged despite autistic and non-autistic participants not differing in felt emotion, they suggest that non-autistic observers and emotion recognition algorithms differentially interpret authentic autistic and non-autistic emotional expressions, which may contribute to misinterpretations of autistic people.

Lay Abstract

Autistic people may express emotions in ways that differ from non-autistic people, and non-autistic people sometimes misinterpret them as flat, overly intense, or hard to read. This misunderstanding can affect how autistic people are judged in everyday life, including in job interviews, friendships, and other important situations. In this study, we wanted to know how well non-autistic people—and emotion recognition software—can identify emotions on the faces of autistic and non-autistic people when they are actually feeling emotion. To do this, autistic and non-autistic adults were videotaped while recounting personal experiences that made them feel mild and strong happiness, sadness, and anger. They rated how strongly they felt each emotion during the videotaping. Later, short video clips of their facial expressions were shown (without sound) to a large group of non-autistic viewers, who identified the emotion and rated its intensity. Some viewers were told whether the person in the video was autistic or not. We found that autistic and non-autistic people reported feeling emotions at comparable levels, but non-autistic viewers were better at recognizing happy expressions in non-autistic people compared to autistic people, and better at recognizing sad and angry expressions in autistic people compared to non-autistic people. Viewers tended to rate autistic expressions, especially sadness and anger, as more intense than those of non-autistic people, even though the computer software rated autistic expressions as more neutral compared to non-autistic participants. These results suggest that autistic people feel emotions just as deeply as non-autistic people, but differences in expressive style and non-autistic biases may lead to misinterpretation. These findings highlight the need for greater awareness of communication differences in autism and for reducing misinterpretations in how autistic people are perceived.

Introduction

Autism is clinically characterized by difficulties in social interaction (American Psychiatric Association [APA], 2013). Historically, autism research and practice have largely attributed these difficulties to the social, cognitive, and behavioral characteristics of autism (Chevallier et al., 2012; Pelphrey et al., 2002), including differences in nonverbal communicative behaviors such as eye contact, use of gestures, and facial expressivity (APA, 2013; Baron-Cohen et al., 1985; Rutherford et al., 2002; Senju & Johnson, 2009). These deviations from typicality are often interpreted as deficits (Kapp, 2019), with the assumption that their remediation to normality will improve real-world social outcomes (Bottema-Beutel et al., 2018). However, interventions targeting social cognition and social skill often yield minimal and non-generalizable improvements in outcomes (Bishop-Fitzpatrick et al., 2013; Gates et al., 2017; Sandbank et al., 2020). For instance, social cognition and normative social skills only weakly predict real-world social experiences for autistic individuals (Morrison, DeBrabander, Jones, Ackerman, & Sasson, 2020), underscoring the need to view social competence as relational, context-dependent, and co-constructed rather than as a fixed personal trait (Bottema-Beutel, 2017).

More recently, an alternative framework born out of autistic experience and scholarship highlighting bi-directionality and social context has challenged the deficit view of social interaction in autism. This framework, known as the double empathy problem (DEP), emphasizes reciprocal misunderstandings between autistic and non-autistic people (Milton, 2012). It acknowledges that some autistic people may struggle to interpret certain non-autistic social cues, preferences, and expectations (Sasson et al., 2012) but extends beyond it by recognizing that non-autistic people also misinterpret autistic social cues and mental states (Alkhaldi et al., 2019; Edey et al., 2018; Sheppard et al., 2016). Indeed, contrary to a deficit view of autistic sociality, autistic people often experience better social outcomes (Chen et al., 2021; Morrison, DeBrabander, Jones, Faso, et al., 2020) and enhanced understanding (Crompton et al., 2020) when interacting with other autistic people relative to non-autistic ones.

In this way, the social experiences of autistic people are influenced not only by their individual characteristics but also by biases and misunderstandings from non-autistic people (Botha & Frost, 2020). For instance, autistic differences in social behaviors—such as facial expressivity, vocal prosody, gaze use, timing of gestures, and personal space preferences (de Marchena & Eigsti, 2010; Faso et al., 2015; Grossman et al., 2010; Kennedy & Adolphs, 2014)—are often misinterpreted and evaluated negatively by non-autistic people (Foster et al., 2024; Hubbard et al., 2017; Sasson et al., 2017; Sheppard et al., 2016). These misinterpretations can have real-world consequences. Autistic people are frequently misperceived by non-autistic observers as less credible and more deceptive in criminal justice settings (Lim et al., 2023), as misbehaving or underperforming in work settings (Szechy, 2023), and less competent in job interviews despite comparable qualifications (Whelpley & May, 2023). These misunderstandings may reduce personal and professional achievements for autistic people and contribute to heightened levels of anxiety, depression, and social isolation (Cage et al., 2018).

One potentially understudied contributor to cross-neurotype misunderstandings may be the mismatch between how autistic and non-autistic people produce and perceive emotional expressivity. Facial emotion among autistic people is often judged by non-autistic peers as unnatural (Faso et al., 2015), flat (Stagg et al., 2014), or exaggerated (Faso et al., 2015), even though autistic people experience emotions similarly to non-autistic people (Ben-Itzchak et al., 2016; Berkovits et al., 2017). The seemingly contradictory descriptions of “flat” and “exaggerated” facial emotion in autism can be reconciled by acknowledging the high heterogeneity in autism (Hajdúk et al., 2022) and by recognizing that outward displays of emotion for autistic people are strongly influenced by context, cognitive effort, and sensory sensitivities (Samson et al., 2012). For example, autistic people report using stimming behaviors to manage sensory overload and regulate emotional responses in overstimulating environments (Kapp et al., 2019), which may be misinterpreted as inappropriate by non-autistic observers. Conversely, autistic people may downregulate their emotional expressions in unfamiliar or socially challenging situations, leading to perceptions of flat or blunt affect (Stagg et al., 2014). Autistic people may also employ different strategies to regulate their affect, including masking or camouflaging, which can alter how their emotions are expressed and interpreted (Hull et al., 2017; Livingston et al., 2019). These strategies, often developed in response to social pressures to conform to neurotypical norms, can contribute to the misalignment between experienced and expressed emotion (Mandy, 2019).

Non-autistic people may misinterpret such expressions as a lack of emotional authenticity or sincerity (Cage & Troxell-Whitman, 2019) or a lack of emotional connection (Heasman & Gillespie, 2018). They tend to prefer displays of positive affect in personal and professional settings, associating such expressions with traits like trustworthiness and tolerance (Reis et al., 1990), and associating perceptions of negative affect with lower attractiveness (Lim et al., 2023; Scharlemann et al., 2001), social desirability (Reis et al., 1990), competence (Ueda et al., 2016), and cooperativeness (Scharlemann et al., 2001). Perhaps not coincidentally, autistic people are consistently rated as less attractive, less likable, and more socially awkward than non-autistic adults (DeBrabander et al., 2019; Morrison, DeBrabander et al., 2019; Morrison, DeBrabander, Jones, Ackerman, & Sasson, 2020; Oh et al., 2019; Sasson et al., 2017; Sasson & Morrison, 2019), though these unfavorable impressions are mitigated somewhat when non-autistic observers are informed that they are evaluating an autistic person (Sasson & Morrison, 2019).

Recently, Foster et al. (2024) found that non-autistic observers and emotion recognition software detected less positivity and attributed more negativity to autistic faces during a job interview context. Although this may relate to the poorer evaluations autistic people often receive in professional settings, it remains unclear whether these differences were driven more by autistic participants expressing emotion differently from non-autistic participants or by them experiencing it differently. In this way, Foster et al. (2024) could not determine if non-autistic observers and emotion recognition software were accurately or inaccurately categorizing facial emotion expressed by autistic people as more negative than those expressed by non-autistic controls. There also remains a need to examine perception accuracy using naturally captured evoked expressions at different levels of emotional intensity. For example, certain emotions or intensities may be more frequently misinterpreted in autistic people compared to their non-autistic counterparts. Such insights could provide a more nuanced understanding of how autistic people express emotion and help identify the specific emotional experiences that contribute most to misinterpretations.

The present study

The present study investigates whether non-autistic adults and automated emotion recognition software are less accurate at interpreting the emotion and intensity of autistic relative to non-autistic adults based on their facial expressions while experiencing happiness, sadness, and anger. We also explore whether non-autistic adults’ interpretation of autistic emotion is influenced by diagnostic disclosure.

We hypothesized that autistic and non-autistic participants would not differ in the emotional intensity they reported experiencing, yet non-autistic observers would misidentify the emotional state of autistic participants more frequently than that of non-autistic participants and underestimate the intensity of emotions felt by autistic relative to non-autistic participants. We anticipated that these patterns would occur to a greater degree for subtle, more mildly felt emotions relative to strongly felt ones. Furthermore, given evidence that diagnostic disclosure improves non-autistic observers’ impressions of autistic people (Sasson & Morrison, 2019), we predicted that non-autistic observers would be more accurate in their emotion identification and intensity estimates when informed of the diagnostic status of participants. Finally, we predicted that, consistent with Foster et al. (2024), iMotions emotion recognition software would classify non-autistic emotional expressions as more positive, and less negative, than autistic emotional expressions.

Methods

Participants and recruitment

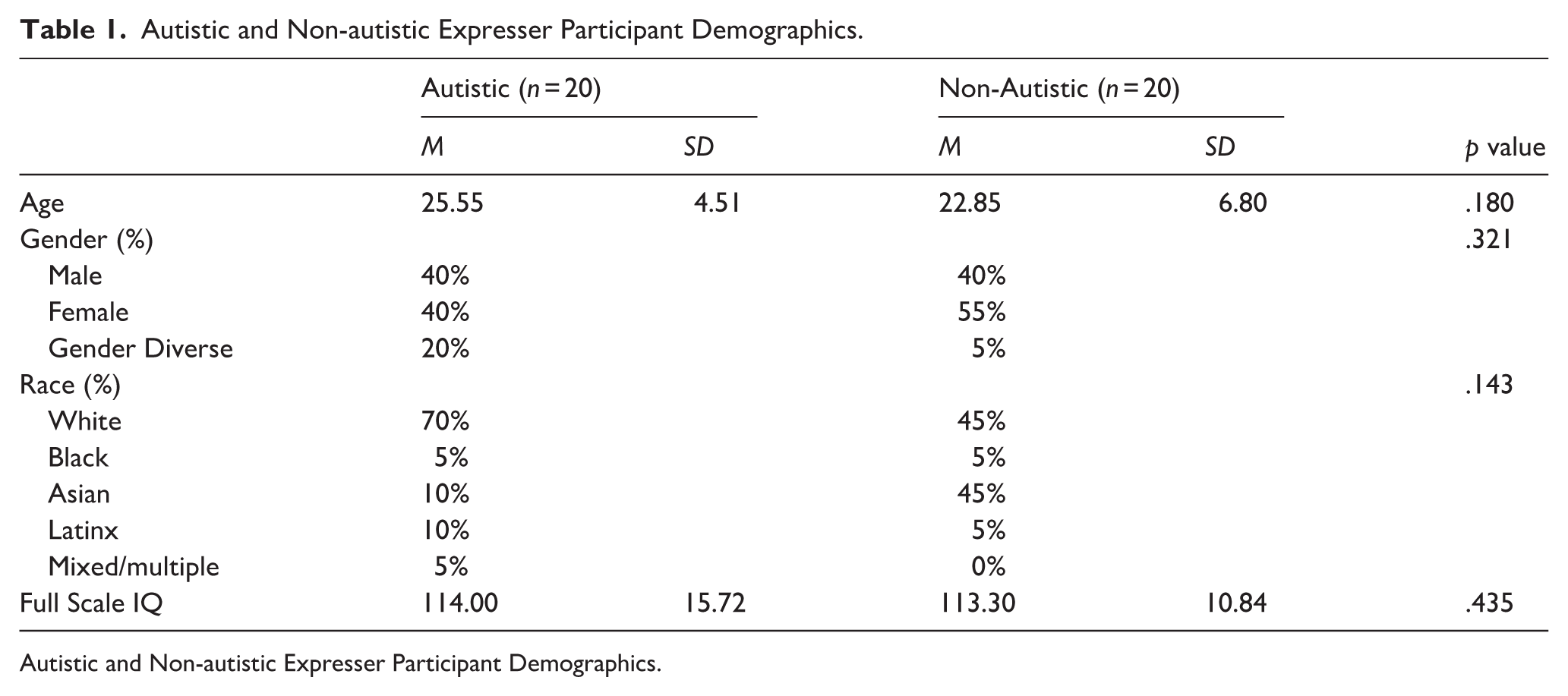

The study included two sets of participants. First, 20 autistic and 20 non-autistic adults served as expressers. These participants were recruited from the University of Texas at Dallas, the community, and a registry consisting of over 200 autistic adults who have consented to participate in research. Demographics of the autistic and non-autistic expressers can be viewed in Table 1. Autistic participants included individuals with a formal diagnosis (n = 17) as well as those who self-identified as autistic (n = 3). Self-identified participants without a clinical diagnosis were included only if they scored above 72 on the Ritvo Autism and Asperger’s Diagnostic Scale-Revised (RAADS-R), indicating clinically relevant levels of autistic traits (Ritvo et al., 2011). Self-identifying autistic participants did not significantly differ on autistic traits on the RAADS-14 compared to those with a formal diagnosis (formal diagnosis M = 29.0, SD = 5.22, self-identified M = 30.3, SD = 6.66, p = .712). Excluding the self-identifying autistic expressers from analyses largely did not affect results. Two new significant effects emerged, and one disappeared. These are noted in the results section.

Table 1. Autistic and Non-autistic Expresser Participant Demographics.

Second, a separate sample of non-autistic adults, recruited from the University of Texas at Dallas’ student research pool, served as observers. The observers consisted of 379 non-autistic adults (mean age = 19.95, SD = 2.73), with 67.7% identifying as female, 28.9% identifying as male, 0.7% as non-binary/gender fluid, and 0.2% undisclosed. The observer sample was ethnically diverse, with 49.9% identifying as Asian or Pacific Islander, 20.4% White, 14.3% Hispanic/Latine, 7% Black, 4.1% multiple ethnicities, and 3.4% other. Observers completed the 14-item Ritvo Autism and Asperger’s Diagnostic Scale screener (Andersen et al., 2011) and were excluded if they scored above 14, indicating high autistic traits. The Ritvo scores of the included sample averaged 5.91 (SD = 4.09), indicating low autistic traits. The final sample size of 379 non-autistic observers was established after excluding 93 for high autistic traits, 60 for reporting a first-degree relative with an autism diagnosis, 36 for incomplete survey responses, and one for self-identifying as autistic.

Using G*Power version 3.1.9.7 (Faul et al., 2007), we found that we have 80% power to detect large mean-level differences (Cohen’s d = |0.9|) between variables from the non-autistic (n = 20) and autistic (n = 20) groups at a significance level of α = 0.05 using two-tailed tests. A Cohen’s d of .9 aligns with prior differences between autistic and non-autistic groups, as well as between clinical and non-clinical groups in affect production and recognition (e.g. Foster et al., 2024; Kohler et al., 2008).
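As a rough check on this figure, the power of a two-tailed comparison of two independent group means can be approximated with the normal distribution. The sketch below is not the G*Power computation itself: it swaps the exact noncentral t calculation for a normal approximation, which slightly overstates power at small sample sizes, and the function name is illustrative.

```python
from math import sqrt
from statistics import NormalDist


def approx_power_two_sample(d, n_per_group, alpha=0.05):
    """Approximate power of a two-tailed, two-sample comparison of means.

    Assumption: the test statistic is treated as normal rather than
    noncentral t, so the result is an approximation only.
    """
    norm = NormalDist()
    z_crit = norm.inv_cdf(1 - alpha / 2)            # two-tailed critical value
    noncentrality = abs(d) * sqrt(n_per_group / 2)  # expected test statistic
    # Probability of landing beyond the critical value in either tail
    return norm.cdf(noncentrality - z_crit) + norm.cdf(-noncentrality - z_crit)


# Values taken from the text: d = 0.9, 20 expressers per group, alpha = .05
power = approx_power_two_sample(d=0.9, n_per_group=20)
print(f"Approximate power: {power:.2f}")
```

Under these assumptions the approximation lands close to the reported 80%, which suggests the design is sensitive mainly to large between-group differences.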

We used the power analysis application developed by Judd et al. (2017) to determine the minimum effect sizes we are adequately powered (i.e. power = .80) to detect with our linear mixed-model design containing random intercepts for expressers (n = 40) and observers (n = 379) using variance estimates from our analyses. For a between-subjects factor, wherein expressers are in only one level of the independent variable (e.g. participant diagnosis), the minimum effect size that we can detect is a small-to-medium Cohen’s d of 0.35. In contrast, we are well-powered to detect a very small Cohen’s d = 0.03 for any within-subjects factors in which expressers participate in all levels of the factor.

The study was approved by the University of Texas at Dallas’ Institutional Review Board (IRB), and all participants provided informed consent prior to participating. Expressers were financially compensated for their time, and observers participated for course credit.

Phase 1: autobiographical emotional narratives

The study consisted of three phases. In the first phase, 20 autistic and 20 non-autistic expressers provided autobiographical emotional narratives describing separate past experiences of mild and strong anger, happiness, and sadness (see Faso et al., 2015; Kohler et al., 2008 for methodological details). These narratives were structured into a standardized format and reviewed for clarity. Before video recording, participants removed accessories, pinned back their hair, and wore a gray t-shirt to minimize visual distractions. An autistic researcher read each emotional narrative aloud in a neutral tone while instructing participants to relive and naturally express their emotions: “Please try to relive the emotion as it happened during the event and express it as you did at the time.” The neutral delivery ensured that participants’ expressions reflected their own emotional recall rather than cues from the reader’s tone. This approach aligns with prior affect research, which used standardized, non-emotive prompts to elicit authentic expressions (Healey et al., 2010; Kohler et al., 2008). Each emotional expression was recorded separately, with 45-second to one-minute breaks between recordings. Neutral stories were recounted before and after each emotional story to help participants return to baseline. Happy and angry narratives were presented in random order, while sad narratives were recorded last to reduce carryover effects, as sadness can elicit lingering mood and physiological changes that may dampen subsequent emotional expressions (Gross & Levenson, 1995). Although participants were informed that they could take breaks or withdraw if they felt any distress, this did not occur for any participants. Mental health resources were also provided after the session.

After each recording, participants rated the intensity of their emotions using the Emotion Experience Rating Scale (EERS), an 11-point scale from 0 to 10, with 0 representing no emotion and 10 representing extreme intensity, previously used in similar procedures (Healey et al., 2010; Kohler et al., 2008). Participants also identified the moment the emotion they felt peaked—whether at the beginning, middle, or end of their narrative. These peak moments guided the selection of video clips for Phase 2.

Phase 2: observer assessments

The video recordings were muted to eliminate narrative information and ensure observer ratings were based solely on their perceptions of emotional expressivity. Videos were trimmed into 10- to 15-second clips centered on the peak emotional intensity identified by expressers. Observers viewed videos in a random order delivered over the Internet using REDCap software (Harris et al., 2019). For each video, they answered the question, “What do you think this person is feeling?” by selecting one of the following options: anger, sadness, happiness, fear, or no emotion. Fear and no emotion were included as distractor options to increase task difficulty and sensitivity, and to minimize response bias. Observers also rated the intensity of the perceived emotion using the EERS. Half of the observers viewed videos with an accurate diagnostic label, either “this person is autistic” or “this person is not autistic,” while the other half viewed unlabeled clips.

Phase 3: quantifying affect

Facial expressions in the video recordings were analyzed using iMotions software. The analyzed videos were the same 10- to 15-second clips shown to observers, including one mild and one strong happy, angry, and sad clip for each participant. iMotions generated classifications of valence (i.e. positive, negative, or neutral) separately for each emotion-intensity category (e.g. mild happy, strong happy, and mild sad).

iMotions classifies emotional expressions frame by frame using facial action units (iMotions Lab, 2022). The software identifies key facial landmarks, analyzes pixel variations, and classifies expressions based on facial muscle movements, producing summary valence scores (positive, neutral, or negative emotions). We chose to have iMotions generate summary valence classifications rather than individual discrete emotions because the reliability of iMotions is substantially lower at the level of discriminating between discrete emotions, particularly for those within the same valence category (e.g. sadness vs anger). Automated facial-expression algorithms tend to show stronger validity for broad valence dimensions than for specific emotion labels (e.g. Dupré et al., 2020). Therefore, valence-based grouping emphasized the overall emotional tone and improved reliability, consistent with dimensional models of emotion that identify valence as a core organizing feature of emotional experience (Barrett & Russell, 1999; Russell, 1980). For instance, a higher classification of neutral affect may indicate that participants were not expressing positive or negative emotions in ways readily identified by the software.

Positionality

The lead author is an autistic adult. This experiential expertise informed the development, design, research questions, and finding interpretations. The project team and co-authors consisted of autistic and non-autistic researchers who all contributed to the development, design, interpretation, and writing of this study.

Analytic strategy

All analyses were conducted using SPSS (v29) and RStudio (2024.04.2). Group differences in felt emotion between autistic and non-autistic expressers were evaluated using the two one-sided tests procedure (TOST; Lakens, 2017) to assess statistical equivalence. The TOST approach allows researchers to formally test whether observed group differences are small enough to be considered practically negligible, providing evidence for similarity rather than merely failing to reject the null hypothesis of a difference. This offers information beyond traditional frequentist tests, which can only indicate whether groups differ significantly but not whether they are statistically equivalent within a defined effect-size range (Lakens, 2017; Lakens et al., 2018). In the TOST framework, equivalence is supported when the 90% confidence interval for the mean difference falls entirely within the pre-specified equivalence bounds. Equivalence was concluded when both one-sided tests were significant at α = 0.05. Conventional t-tests were reported for descriptive purposes only.

The equivalence bounds (±0.70 units on the 0–10 scale; ≈±0.30 d) were selected because this magnitude reflects the smallest difference considered practically meaningful for the Emotion Experience Rating Scale. Although prior studies employing this scale (e.g. Healey et al., 2010; Kohler et al., 2008) do not specify a minimal meaningful difference, a shift of approximately 0.7 units is a reasonable threshold given the scale’s 0–10 metric, where changes of less than one point typically indicate only very slight perceptual differences in emotional intensity. This rationale aligns with recommendations by Lakens (2017), who emphasizes that equivalence bounds should be based on the smallest effect size of interest rather than arbitrary defaults.
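The logic of the TOST procedure with ±0.70-unit bounds can be sketched in a few lines. This is a minimal illustration, not the analysis code used in the study: the ratings are made-up values on the 0–10 scale, the function name is hypothetical, and p-values come from a normal approximation to the t distribution, which is reasonable only when degrees of freedom are large.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def tost_two_groups(x, y, low, high, alpha=0.05):
    """Two one-sided tests for equivalence of two independent group means.

    Assumption: p-values use a normal approximation to the t distribution,
    so this sketch is only suitable for large samples.
    """
    nx, ny = len(x), len(y)
    diff = mean(x) - mean(y)
    pooled_var = ((nx - 1) * variance(x) + (ny - 1) * variance(y)) / (nx + ny - 2)
    se = sqrt(pooled_var * (1 / nx + 1 / ny))
    norm = NormalDist()
    # Test 1: reject "difference <= lower bound" when diff is well above it
    p_lower = 1 - norm.cdf((diff - low) / se)
    # Test 2: reject "difference >= upper bound" when diff is well below it
    p_upper = norm.cdf((diff - high) / se)
    return {
        "diff": diff,
        "p_lower": p_lower,
        "p_upper": p_upper,
        # Equivalence requires BOTH one-sided tests to be significant
        "equivalent": max(p_lower, p_upper) < alpha,
    }


# Hypothetical ratings on the 0-10 scale (not study data)
group_a = [5, 6, 7] * 40
group_b = [5, 6, 7, 6] * 30
result_similar = tost_two_groups(group_a, group_b, low=-0.70, high=0.70)

# Shifting one group by a full point moves the difference outside the bounds
group_b_shifted = [v + 1 for v in group_b]
result_shifted = tost_two_groups(group_a, group_b_shifted, low=-0.70, high=0.70)

print(result_similar["equivalent"], result_shifted["equivalent"])
```

The key design point is that equivalence is declared only when the larger of the two one-sided p-values falls below α, which is the same condition as the 90% confidence interval lying entirely inside the bounds.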

Analyses of variance (ANOVAs) and t-tests were used for analyses involving non-hierarchically structured data, as the design did not include nested sources of random variation (e.g. multiple raters or trials per participant) that would require multilevel modeling. Multilevel modeling was used when nesting did occur (e.g. multiple raters nested within participants or repeated trials requiring random effects).

A repeated measures ANOVA was conducted to examine whether autistic and non-autistic expressers differed in the intensity of felt emotion during their autobiographical emotional narratives, and whether these ratings varied by emotion type (happy, sad, angry) and intensity condition (mild vs strong). Independent t-tests were also conducted to compare group differences for each emotion type within the mild and strong intensity conditions.

Next, we used multilevel models to examine whether observer accuracy in identifying the emotion experienced by expressers differed by diagnostic group, intensity, diagnostic disclosure, and emotion type. We then conducted chi-square tests of independence to investigate whether patterns of observer misperceptions of emotional states differed for the two groups. These follow-up analyses were intended to determine whether observers demonstrated biases for incorrectly perceiving certain emotional states in autistic participants. Post hoc z-tests comparing column proportions were conducted without multiple-comparison correction, consistent with the descriptive, exploratory nature of these analyses. Such proportion comparisons are often used in emotion-perception research to illustrate response patterns rather than to draw strong inferences (e.g. Jack et al., 2012; Tottenham et al., 2009).
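A post hoc comparison of two column proportions can be illustrated with a pooled two-proportion z-test, which is one common form of this comparison. The sketch below is an assumption about the general technique rather than a reproduction of the SPSS routine, and the counts are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(count_a, n_a, count_b, n_b):
    """Pooled z-test for the difference between two independent proportions."""
    p_a, p_b = count_a / n_a, count_b / n_b
    pooled = (count_a + count_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-tailed
    return z, p_value


# Hypothetical counts: how often one response option was chosen for
# expressers in group A versus group B (not study data)
z, p = two_proportion_z_test(count_a=120, n_a=400, count_b=80, n_b=400)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Because no multiple-comparison correction is applied in the analyses described above, each such test is best read as a description of a response pattern rather than as a confirmatory result.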

Following analyses of observer accuracy, we conducted multilevel models to examine whether observer ratings of expressers’ emotional intensity differed by diagnosis (autistic vs non-autistic), emotion (happy, sad, anger), intensity (mild vs strong), and disclosure (disclosed vs non-disclosed). We followed up any significant interactions with diagnosis with simple slope analyses. Finally, to examine whether iMotions differentially classified emotional expressions in autistic and non-autistic participants, we conducted a mixed-model ANOVA with diagnosis as the between-subjects variable and classified affect (positive, negative, neutral), experienced emotion (happy, sad, anger), and intensity (mild vs strong) as the within-subject variables. Because the proportions of affect (positive, negative, and neutral) classified by iMotions sum to 100% for each expresser, a main effect of diagnosis is not possible. Rather, we are testing for interactions between diagnosis and the within-subject factors: affect, intensity, and emotion. Significant interactions were followed up with simple main effects analyses and subsequent post hoc comparisons that adjusted for family-wise error.
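The reason a main effect of diagnosis cannot arise follows from the sum-to-100% constraint, and a small sketch makes this concrete. The frame-level labels below are hypothetical and do not reflect the actual iMotions export format; the point is only that, whatever the mix of classifications, the average across the three affect categories is always one third.

```python
from collections import Counter
from math import isclose

VALENCE_LABELS = ("positive", "negative", "neutral")


def valence_proportions(frame_labels):
    """Share of frames assigned to each valence category for one clip."""
    counts = Counter(frame_labels)
    total = len(frame_labels)
    return {label: counts[label] / total for label in VALENCE_LABELS}


# Two hypothetical clips with very different classification profiles
clip_mostly_neutral = ["neutral"] * 6 + ["positive"] * 3 + ["negative"] * 1
clip_mostly_positive = ["positive"] * 7 + ["neutral"] * 2 + ["negative"] * 1

props_neutral = valence_proportions(clip_mostly_neutral)
props_positive = valence_proportions(clip_mostly_positive)

for props in (props_neutral, props_positive):
    average = sum(props.values()) / len(props)
    # The proportions sum to 1, so their average is 1/3 for every clip
    print(props, isclose(average, 1 / 3))
```

Since every expresser has the same average across affect categories, the groups can differ only in how that total is distributed, which is what the diagnosis-by-affect interactions test.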

All continuous predictors were grand-mean centered prior to analysis to facilitate interpretation of fixed effects and reduce multicollinearity. Categorical predictors were dummy coded. For each categorical variable, one group was designated as the reference category: the non-autistic group for diagnosis, the “anger” condition for emotion type, the “strong” level for emotion intensity, and the “non-disclosed” condition for disclosure status. The affect ratings derived from iMotions (positive, negative, neutral) were entered as repeated measures and were not dummy coded, as they represent proportions summing to 100% for each observation.
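The coding scheme described above can be sketched as follows. The reference categories are those named in the text; the function names, variable keys, and example values are illustrative assumptions, not the study's analysis code.

```python
from statistics import mean

# Reference categories as stated in the text
REFERENCE_LEVELS = {
    "diagnosis": "non-autistic",
    "emotion": "anger",
    "intensity": "strong",
    "disclosure": "non-disclosed",
}


def dummy_code(variable, value, levels):
    """Return 0/1 indicators for every non-reference level of a variable."""
    reference = REFERENCE_LEVELS[variable]
    return {
        f"{variable}_{level}": int(value == level)
        for level in levels
        if level != reference
    }


def grand_mean_center(values):
    """Subtract the overall mean so that 0 represents the average case."""
    grand_mean = mean(values)
    return [v - grand_mean for v in values]


print(dummy_code("emotion", "sad", ["anger", "happy", "sad"]))
# → {'emotion_happy': 0, 'emotion_sad': 1}
print(grand_mean_center([2.0, 4.0, 6.0]))
# → [-2.0, 0.0, 2.0]
```

With this coding, a row in the reference category has all indicators set to 0, so each fixed-effect coefficient is read as a difference from the reference condition.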

Results

Intensity ratings of felt emotion by autistic and non-autistic expressers

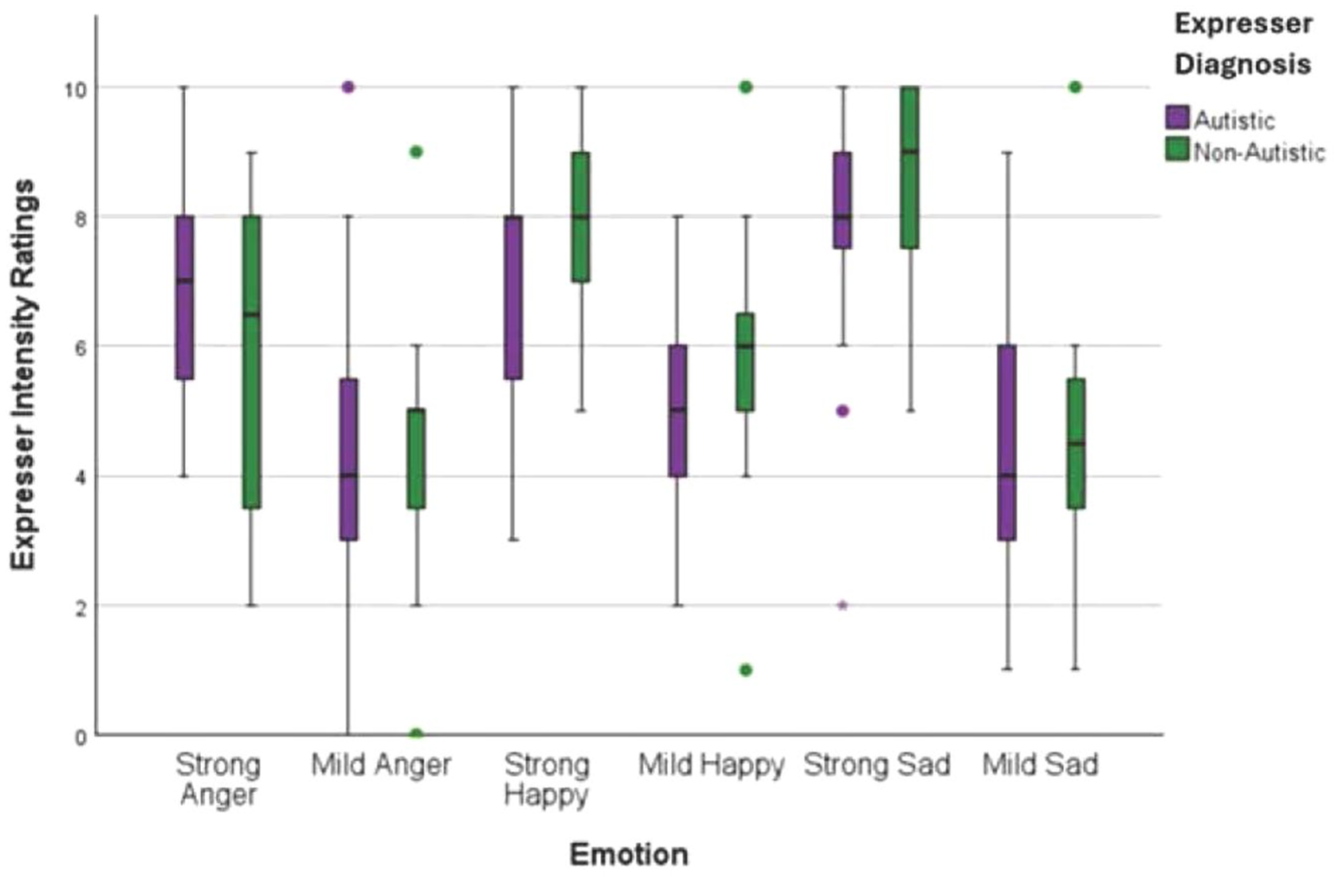

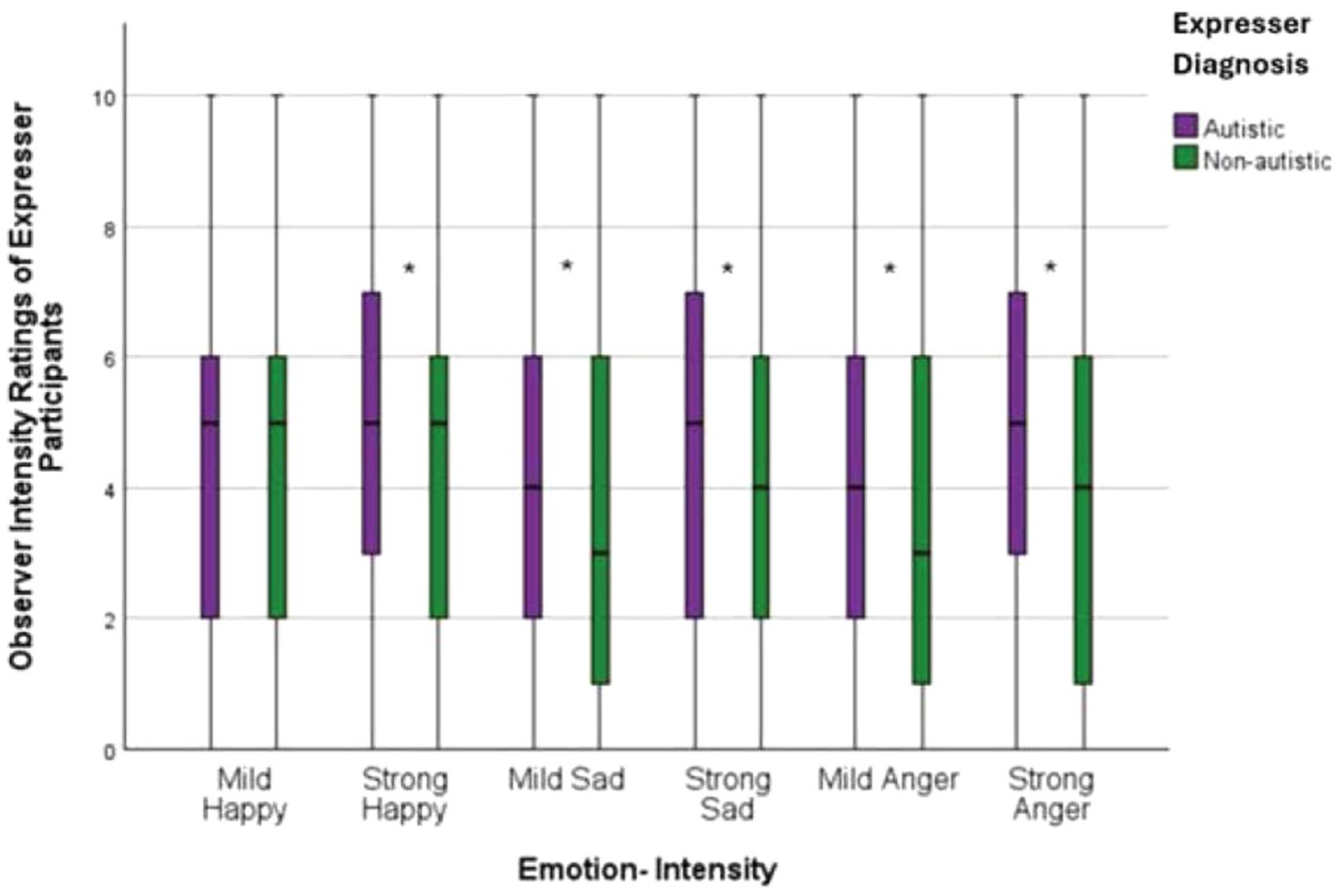

A repeated measures ANOVA, with emotion and intensity as within-subject variables and expresser diagnosis as the between-subject variable, determined that autistic and non-autistic expressers did not significantly differ in their ratings of felt emotion during the emotional narratives task (autistic: M = 6.01, SE = .30; non-autistic: M = 6.17, SE = .30, p = .699, d = 0.12; see Figure 1). When gender was included as a covariate, the group difference in felt emotion was still non-significant (p = .787).

Figure 1. Autistic and non-autistic expresser participant felt emotion intensity ratings. Plot generated using IBM SPSS Statistics (Version 28).

A two one-sided tests (TOST) procedure was conducted to examine whether the mean difference in intensity ratings between the two diagnostic groups was statistically equivalent within the pre-specified equivalence bounds of ± 0.70 units. The TOST indicated that the observed difference was statistically smaller than the upper equivalence bound, t(238) = –2.76, p = .0029, and statistically greater than the lower equivalence bound, t(238) = 1.70, p = .045, demonstrating that the mean difference fell within the equivalence interval. Because both one-sided tests were statistically significant (p < .05), and the effect size was negligible (d = –0.07), we conclude that the group means are equivalent within the ± 0.70-unit bounds. Thus, the results align with recommendations of Lakens (2017) and support statistical equivalence of intensity scores between the two groups.

The repeated measures ANOVA revealed a significant main effect of intensity (p < .001). As expected, participants reported feeling significantly more emotion when re-experiencing strong emotional narratives (M = 7.34, SE = .24) relative to mild ones (M = 4.84, SE = .27, d = 1.43, p < .001). A significant main effect of emotion also emerged (η² = .41, p < .001). Participants reported significantly greater felt emotion for happy narratives (M = 6.56, SE = .23) than angry narratives (M = 5.35, SE = .28, d = -.74, p < .001), and for sad narratives (M = 6.36, SE = .25) compared to angry narratives (d = -.72, p < .001). Finally, the analysis revealed a significant interaction between diagnosis and emotion (η² = .17, p = .034). However, follow-up simple main effects analyses did not yield significant group differences. Differences in felt happiness between groups were marginal (d = 0.31, p = .051). No significant interaction was observed between intensity and diagnosis (η² = .0001, p = .953), or among emotion, intensity, and diagnosis (η² = .11, p = .125). Independent t-tests conducted for each emotion type within each intensity condition showed no significant group differences: mild happiness (d = 0.59, p = .140), strong happiness (d = 0.59, p = .071), mild anger (d = 0.05, p = .444), strong anger (d = 0.45, p = .162), mild sadness (d = 0.10, p = .765), and strong sadness (d = 0.24, p = .449).

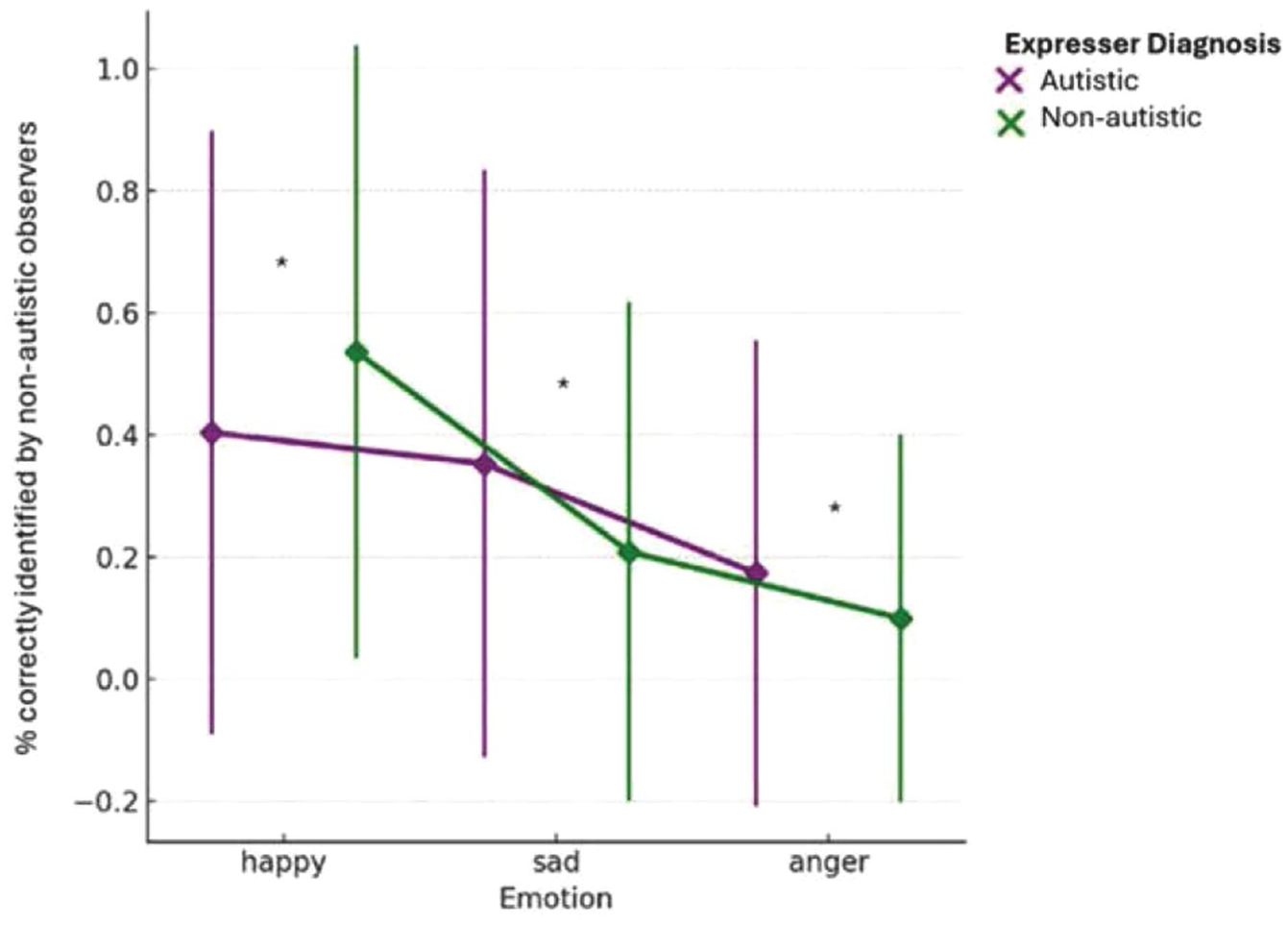

Observer accuracy at emotion identification of autistic and non-autistic participants

Multilevel models were used to examine whether observer emotion identification accuracy differed by diagnostic group, emotion type, intensity, and disclosure condition. There was a significant main effect of diagnosis (η² = .002, p < .001), with observers demonstrating higher accuracy at identifying emotions in autistic (MA = .323, SE = .01) relative to non-autistic participants (MNA = .283, SE = .01, d = 0.43). There was also a significant main effect of emotion (η² = 0.09, p < .001), with observers demonstrating greater accuracy for identifying happiness (M = .475, SE = .01) compared to sadness (M = .302, SE = .01, d = 0.74) and anger (M = .131, SE = .01, d = 2.38), and greater accuracy for sadness than anger (d = 0.61) (all ps < .001). In addition, there was a significant main effect of intensity (η² = .001, p < .001), with greater accuracy for strong emotions (M = .318, SE = .01) than mild ones (M = .288, SE = .01, d = 0.13). The main effect of diagnostic disclosure was not significant (η² = .0014, p = .483). Finally, there was a significant interaction between diagnostic group and emotion type (η² = .022, p < .001; see Figure 2). Although observers were more accurate at recognizing happiness in non-autistic (M = .538, SE = .01) compared to autistic (M = .412, SE = .01, d = -1.27) participants (p < .001), they were more accurate at recognizing sadness (MA = .395, SE = .01; MNA = .209, SE = .01, p < .001) and anger (MA = .163, SE = .01; MNA = .100, SE = .01, p < .001, d = 0.32) in autistic compared to non-autistic participants. Excluding the self-identifying autistic expressers (n = 3) from analyses resulted in a significant new three-way interaction between disclosure, diagnosis, and intensity (η² = .001, p = .017). Observers were more accurate at identifying intense emotions in diagnosed autistic participants when diagnostic status was not disclosed (M = .351, SE = .01) relative to when diagnostic status was disclosed (M = .303, SE = .01), p = .002, d = 0.07.

Figure 2. Marginal means of non-autistic observers’ emotion identification accuracy for sad, angry, and happy expressions, by diagnosis. Plot generated using IBM SPSS Statistics (Version 28).

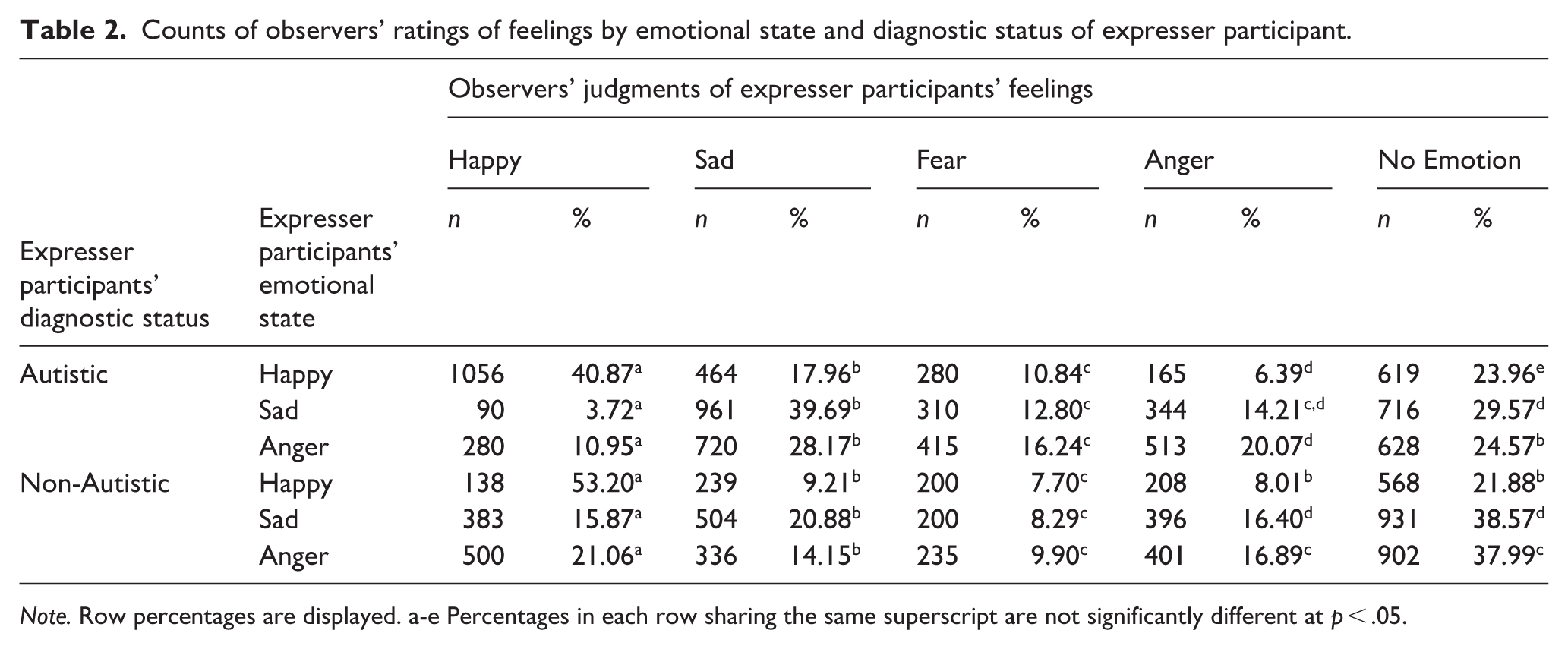

Chi-square tests of independence were then used to better understand observer misperceptions of emotional states for the two groups. We used z-scores to compare column-wise proportions in each row of Table 2. Observers were more likely to misperceive non-autistic expressers as displaying no emotion compared to autistic expressers. They also were more likely to misperceive anger experienced by autistic participants as sadness, while anger for non-autistic participants was more likely to be misperceived as happy or no emotion.
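The column-wise comparison described above can be illustrated with a pooled two-proportion z-test. This is a minimal sketch only: the function name `two_proportion_z` and the counts passed to it are hypothetical and are not taken from Table 2, and the sketch applies no multiplicity adjustment, which the analysis reported here may or may not have used.

```python
import math


def two_proportion_z(count_a, n_a, count_b, n_b):
    """Pooled two-sided z-test for the difference between two proportions."""
    p_a = count_a / n_a
    p_b = count_b / n_b
    # Pooled proportion under the null hypothesis of equal proportions.
    pooled = (count_a + count_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts (NOT from Table 2): how often one response label was
# chosen for autistic versus non-autistic expressers within a single row.
z, p = two_proportion_z(45, 100, 30, 100)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Running the same comparison for each response label within a row indicates which misperceptions differ between the two groups.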

Table 2. Counts of observers’ ratings of feelings by emotional state and diagnostic status of expresser participant.

Note. Row percentages are displayed. a-e Percentages in each row sharing the same superscript are not significantly different at p < .05.

Observer perceptions of emotional intensity felt by autistic and non-autistic participants

Multilevel models were used to examine differences in observers’ intensity ratings and whether these ratings varied by emotion (happy, sad, angry), intensity condition (mild vs strong), diagnosis (autistic vs non-autistic), and disclosure (disclosed vs non-disclosed), incorporating random intercepts for both expressers and observers. Significant interactions were followed up with planned post hoc comparisons of simple main effects using Bonferroni-adjusted pairwise contrasts to control for multiple comparisons. This analysis revealed a significant main effect of diagnosis (η² = .011, p < .001) on observer ratings of emotional intensity: observers perceived greater emotional intensity in autistic (M = 4.43, SE = 0.07) compared to non-autistic participants (M = 3.93, SE = 0.07), MD (mean difference) = 0.50, d = 0.25. There were also significant main effects of intensity (η² = .004, p < .001), with strong emotional experiences rated as more intense (M = 4.33, SE = 0.07; MD = 0.30, d = 0.15) than mild ones (M = 4.03, SE = 0.07), and of emotion (η² = .005, p < .001), with happy being perceived as more intense (M = 4.43, SE = .07) than both sad (M = 4.06, SE = .07; MD = .365, d = 0.18) and angry (M = 4.06, SE = .07; MD = .372, d = 0.03) (all ps < .001). The main effect of disclosure (p = .121, d = 0.11) was not significant.
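As an illustration of the structure described above, a model with crossed random intercepts for expressers and observers can be written as follows. This is a sketch under assumptions: the notation is introduced here for illustration, and the exact fixed-effect specification (for example, which interaction terms were entered) is not given in the text.

$$
y_{ijk} = \beta_0 + \mathbf{x}_{ijk}^{\top}\boldsymbol{\beta} + u_i + v_j + \varepsilon_{ijk}, \qquad u_i \sim \mathcal{N}(0, \sigma_u^2), \quad v_j \sim \mathcal{N}(0, \sigma_v^2), \quad \varepsilon_{ijk} \sim \mathcal{N}(0, \sigma^2)
$$

Here $y_{ijk}$ is the intensity rating given by observer $j$ to recording $k$ of expresser $i$, $\mathbf{x}_{ijk}$ codes emotion, intensity condition, diagnosis, disclosure, and their interactions, and $u_i$ and $v_j$ are the expresser and observer random intercepts.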

There was a significant two-way interaction between diagnosis and emotion (η² = .006, p < .001). Post hoc Bonferroni tests showed that observers rated emotional intensity as higher in autistic relative to non-autistic participants for sadness (M = 4.30 vs 3.81; MD = 0.49, p < .001, d = 0.24) and anger (M = 4.53 vs 3.58; MD = 0.95, p < .001, d = 0.03) but not happiness (M = 4.46 vs 4.40; MD = .061, p = .367, d = 0.03). There was also a significant interaction between diagnosis and intensity (η² = .0014, p < .001). Although autistic emotions were rated as more intense than non-autistic ones in both the mild (MA = 4.19, SE = .07; MNA = 3.87, SE = .07, d = 0.16) and strong (MA = 4.67, SE = .07; MNA = 3.99, SE = .07, d = 0.34) conditions, this discrepancy was larger in the strong condition (MD = .68) relative to the mild one (MD = .32).
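The diagnosis by intensity interaction can be read as a difference of differences. The short sketch below recomputes the two group gaps from the marginal means reported above; the variable and function names are illustrative, and the calculation is descriptive arithmetic only, not a re-analysis of the data.

```python
# Marginal means of observer intensity ratings as reported in the text.
means = {
    ("autistic", "mild"): 4.19,
    ("non_autistic", "mild"): 3.87,
    ("autistic", "strong"): 4.67,
    ("non_autistic", "strong"): 3.99,
}


def group_gap(condition):
    """Autistic minus non-autistic mean rating within one intensity condition."""
    gap = means[("autistic", condition)] - means[("non_autistic", condition)]
    return round(gap, 2)


mild_gap = group_gap("mild")
strong_gap = group_gap("strong")
# How much larger the group gap is in the strong than in the mild condition.
interaction_contrast = round(strong_gap - mild_gap, 2)

print(mild_gap, strong_gap, interaction_contrast)
```

The two gaps match the mean differences reported for the mild and strong conditions, and their difference is the quantity that the interaction term tests.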

In addition, there was a significant three-way interaction between emotion, disclosure, and diagnosis (η² = .0011, p < .001). To unpack this interaction, the two-way interaction between emotion and disclosure was examined separately for each diagnostic group. Among autistic participants, a significant interaction emerged (p < .001). Post hoc comparisons showed that sad expressions were rated as more intense in the non-disclosed condition (M = 4.56, SE = .11) compared to the disclosed condition (M = 4.05, SE = .12, p = .001, d = 0.25). In contrast, the two-way interaction was not significant for non-autistic participants (p = .062).

Finally, a significant three-way interaction was found between diagnosis, emotion, and intensity (η² = .008, p < .001; see Figure 3). To unpack this interaction, the two-way interaction between emotion and intensity was examined separately for each diagnostic group. It was significant for both autistic (p = .019) and non-autistic (p = .008) participants. However, although observer intensity ratings were higher in the strong relative to the mild condition for autistic participants on all three emotion types (all ps < .001), they were higher in the strong condition for non-autistic participants only on sadness (p < .001). For non-autistic participants, there was no significant difference in intensity ratings between the strong and mild conditions for happy (p = .225, d = 0.05) and angry (p = .331, d = 0.07).

Figure 3. Marginal means for non-autistic observer intensity ratings of strong and mild angry, sad, and happy emotional expressions of autistic and non-autistic participants. Plot generated using IBM SPSS Statistics (Version 28).

Excluding the self-identifying autistic expressers yielded a significant Emotion × Intensity interaction (η² = .0011, p < .001). In the mild condition, happy expressions were rated as more intense than both sad (M = 4.33 vs 3.75; MD = 0.58, p < .001, d = 0.29) and angry expressions (M = 4.33 vs 3.68; MD = 0.65, p < .001, d = 0.32). In the intense condition, happy intensity ratings remained higher than both sad (M = 4.39 vs 4.01; MD = 0.33, p < .001, d = 0.17) and angry ratings (M = 4.39 vs 4.14; MD = 0.26, p < .001, d = 0.13).

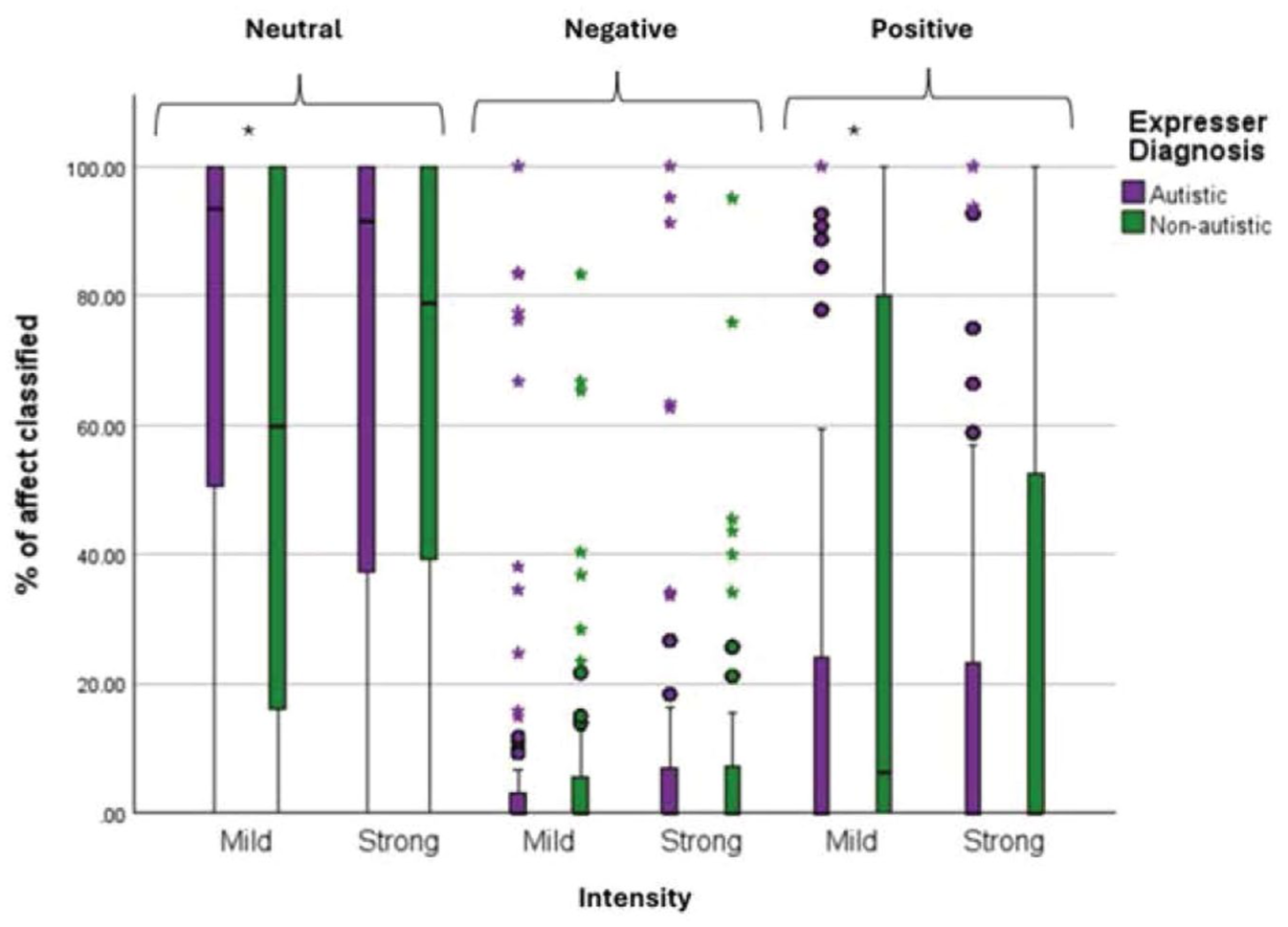

Classifications of positive, negative, and neutral affect by iMotions

A mixed-model ANOVA was conducted to examine whether iMotions classifications of affect differed between diagnostic groups across intensity conditions and emotion types. While the predicted Diagnosis × Affect interaction did not reach significance (η² = .09, p = .123), there was a significant three-way interaction between diagnosis, affect, and intensity (η² = .02, p = .048) (see Figure 4). Although the two-way interaction between intensity and affect was not significant for either autistic (p = .214) or non-autistic participants (p = .250), iMotions more frequently classified affect as neutral in the mild intensity condition for autistic participants (M = 77.08, SE = 5.34) compared to non-autistic participants (M = 57.75, SE = 5.82), MD = 19.33, p = .020, d = 0.97. In contrast, iMotions more frequently classified affect in the mild intensity condition as positive for non-autistic participants (M = 39.17, SE = 5.91) than for autistic participants (M = 17.66, SE = 5.45, p = .011, d = 1.08). However, no significant differences were found in the strong intensity condition (ps > .212), and all other interactions involving diagnosis were non-significant (ps > .174). When the self-identifying autistic expressers were excluded, the three-way interaction between intensity, affect, and diagnosis was no longer significant (η² = .002, p = .077), likely due to reduced power.

Figure 4. Distributions of the percentage of video frames from autistic and non-autistic expressers, in the mild and strong expressive conditions, that were classified by iMotions as neutral, negative, and positive affect. Plot generated using IBM SPSS Statistics (Version 28).

Discussion

This study investigated how autistic and non-autistic individuals experience and express emotion during a naturalistic emotion-elicitation task across two intensity levels—mild and strong—and how accurately and intensely their emotions are perceived by non-autistic observers and automated facial analysis software. Observers were more accurate at identifying positive emotion (happiness) in non-autistic faces but more accurate at identifying negative emotion (sadness and anger) in autistic faces. Because these findings occurred despite autistic and non-autistic participants being similar overall in their felt emotion during the elicitation procedure, they suggest that differences in observer accuracy were largely attributable to emotional expressivity differences in autistic and non-autistic participants rather than differences in experienced emotion. Notably, both non-autistic raters and the iMotions software showed distinct classification patterns for the expressions of autistic and non-autistic participants in the mild condition. This pattern suggests that autistic and non-autistic individuals may express subtly felt emotions in distinct ways that challenge conventional affect-recognition frameworks. These classification differences also highlight potential perceptual biases—both human and algorithmic—shaped by neurotypical norms of expressivity.

An equivalency test confirmed that autistic and non-autistic participants were similar in the intensity of felt emotion they reported experiencing during the elicitation procedure. Autistic and non-autistic participants also did not differ in the intensity of specific emotions reported nor did they differ in the intensity of specific emotions at the mild and strong level. These results counter prevailing stereotypes that suggest that autistic individuals have diminished emotional capacities (critiqued in Nicolaidis et al., 2019). Both groups reported feeling more intense emotions in response to highly emotional narratives compared to mildly emotional ones, validating the effectiveness of the emotion induction procedure. In addition, both groups reported stronger emotional responses to happy narratives than to sad or angry ones, with sad narratives eliciting more intense responses than anger. This pattern suggests that the elicitation task used here may have been particularly effective at recreating positive emotional experiences and highlights commonalities in emotional responsivity for autistic and non-autistic adults in this context. Furthermore, evidence suggests that autistic people may feel more comfortable and disclose more when interacting with other autistic individuals (Crompton et al., 2020; Morrison, Pinkham et al., 2019), which may have supported more authentic emotional expression among autistic participants, given that the researcher conducting the procedure was autistic.

Previous studies examining perceptions of expressivity in autistic and non-autistic participants did not control for potential differences in felt emotion between the groups (e.g. Foster et al., 2024). Here, despite the groups not differing overall in felt emotion, non-autistic observers demonstrated higher accuracy in identifying emotions expressed by autistic compared to non-autistic participants, and demonstrated more differentiation in their intensity ratings of strong and mild emotions for autistic participants. Although these findings are not consistent with reports of flat affect in autism (Stagg et al., 2014), they do align with several other studies reporting enhanced recognition of facial affect in autistic participants with lower support needs (Faso et al., 2015; Hubbard et al., 2017). Such discrepancies between studies may stem from differences in methodology and sample characteristics, but collectively they suggest that flat affect is not a universal characteristic of autism.

Here, observers were more accurate in identifying happiness in participants than sadness or anger, suggesting a general positivity bias. This tendency aligns with broader societal norms that privilege the recognition and reinforcement of positive affect (Leppänen & Hietanen, 2004), particularly within neurotypical communication frameworks. Notably, however, this positivity bias was not applied equally to autistic and non-autistic participants, as observers were more accurate at identifying happiness in non-autistic relative to autistic participants. For non-autistic individuals, whose social presentations typically align more closely with normative expectations, observers may default to assumptions of positivity, assumptions that are less readily extended to autistic individuals, whose expressions may be perceived as socially divergent or less predictable. These findings align with the double empathy problem, which emphasizes reciprocal challenges in cross-neurotype understanding: the disproportionate under-attribution of positivity to autistic expressivity relative to non-autistic participants suggests a reduced ability among non-autistic observers to gauge the intensity of internal positive states of autistic targets.

Indeed, although observers were more accurate at identifying happiness in non-autistic participants, they demonstrated the opposite pattern for negative emotions; they were more accurate at identifying both anger and sadness in autistic participants. Although this pattern could reflect a greater expression of negative emotion by autistic participants and/or a greater suppression of negative expressivity in non-autistic participants, iMotions did not classify autistic expressions as more negative than non-autistic expressions. It is possible that autistic and non-autistic individuals differed subtly in how they expressed emotion, or that iMotions has limited sensitivity to the intensity or dynamics of affective displays, but it is also possible that the greater accuracy observers demonstrated in the identification of negative emotion in autistic participants could reflect a perceptual bias for perceiving more negativity in autistic individuals. This interpretation is supported by the finding that observers rated autistic expressions of negative emotion as more intense despite the two groups reporting comparable levels of felt emotion during the task and by iMotions failing to classify autistic expressions as more negative. Thus, although on the surface non-autistic observers demonstrating greater accuracy at identifying negative emotions in autistic participants is inconsistent with a double empathy interpretation, it could represent an artifact of over-attributing negativity to autistic participants. In retrospect, inclusion of a “neutral” expressivity condition may have been able to provide additional support for this interpretation. A non-autistic bias for perceiving negative affect in autistic expressions should be apparent even in a neutral condition when no emotion is being expressed.

Taken together, the evidence points to observer bias as contributing to the heightened perceived negativity of autistic expressions. A non-autistic bias toward perceiving negativity in autistic expressions may be shaped by implicit associations, limited cross-neurotype familiarity, and stereotypes surrounding autistic emotional expression (Heasman & Gillespie, 2018; Sasson et al., 2019). The assumption that autistic individuals express emotion less clearly or less genuinely may lead observers to over-attribute intensity or misclassify negative affect (Brewer et al., 2016; Grossman et al., 2013). This dynamic was further supported by findings showing that non-autistic observers rated autistic expressions, especially those of sadness and anger, as more emotionally intense than those of non-autistic participants. In contrast, happiness was rated similarly across groups.

The finding that observers perceived more negativity in autistic participants despite iMotions not classifying autistic expressions as more negative raises the possibility that autistic individuals may express emotion through subtle or non-facial channels—such as body language, eye gaze, or timing—that are not easily captured by facial recognition software but may still be registered by human observers. Alternatively, in the absence of clear emotional markers, observers may default to inferring negativity when confronted with ambiguous expressions that deviate from expected norms. Taken together, these results suggest that automated systems, like human observers, may be attuned to neurotypical expressive patterns and less responsive to the affective cues characteristic of autistic expression. This has important implications for the application of affective computing tools in clinical, educational, and social contexts involving autistic individuals.

Informing observers of the diagnostic status of autistic and non-autistic participants did not affect either their overall emotion recognition accuracy or their emotion intensity ratings. These findings contrast with the influence of diagnostic disclosure on impression formation (Sasson et al., 2017; Sasson & Morrison, 2019) and suggest that while disclosure may foster more favorable impressions, it does not enhance observers’ ability to recognize or interpret autistic emotional expressions. In other words, disclosure may help observers contextualize perceived differences in social expression but does not necessarily enhance their capacity to accurately infer an autistic person’s internal state. Although the disclosure condition may have provided observers the opportunity to downplay or suppress biases present in the blind condition, this possibility cannot be confirmed from the current data. Future research is needed to disentangle these mechanisms more fully. There was one exception to the lack of an effect of disclosure, however. Sad expressions by autistic participants were rated as more intense when their diagnosis was withheld, suggesting that awareness of an autism diagnosis may dampen perceptions of autistic sadness—perhaps due to stereotypes about reduced emotionality in autism.

Supporting these observer-based findings, facial expression analysis using iMotions software also differentiated between autistic and non-autistic expressions. In the mild-intensity condition, iMotions more frequently classified autistic participants’ expressions as neutral and those of non-autistic participants as positive. However, these results should be interpreted with caution, as the follow-up two-way interaction between intensity and affect was not statistically significant in either group. Furthermore, because iMotions is normed on neurotypical expressions and may be less sensitive to naturally occurring autistic expressivity, it serves as a comparative reference rather than an absolute standard of objectivity. iMotions also showed minimal differentiation between intensity levels, despite both expressers and observers rating the intensity of the strong condition higher than the mild condition. This iMotions result, shown in Figure 4, may therefore reflect limitations of the iMotions algorithm rather than an absence of expressive variation in participants’ affective displays.

Moreover, this pattern is consistent with findings from Foster et al. (2024), where non-autistic expressions were more often labeled as positive compared to autistic expressions, further supporting the presence of a positivity bias favoring non-autistic expressions. However, unlike Foster et al. (2024), iMotions in the present study also classified autistic expressions as more neutral than non-autistic expressions. One possible explanation for the discrepancy in iMotions classifications is that the context of expression production differed substantially between the two studies: Foster et al. (2024) examined expressions during a mock job interview and a discussion of a personal interest, both of which are more interactive and performative contexts. Non-autistic people may have been more inclined to perform expressive positivity in the job interview context to enhance how they were evaluated by their interactive partner. In the current study, however, participants were video-recorded individually when explicitly feeling emotion under both mild and intense conditions. Thus, iMotions classifying more neutral emotion in autistic expressions in the mild intensity condition suggests that subtle, naturally evoked emotions in autistic individuals may be underrecognized by automated emotion recognition.

While these findings offer valuable insights, several methodological and contextual limitations must be acknowledged. First, the expresser sample lacked diversity and did not reflect the full heterogeneity of the autism spectrum, limiting the generalizability of results. None of the autistic participants had intellectual disabilities or were non-speaking. Second, cultural, gender, and face structure differences influence how emotional expressions are produced, experienced, and interpreted (Deng et al., 2016; Jack et al., 2012; Stolier et al., 2018) but were not assessed here. The relatively small number of expressers may have reduced statistical power, precluding the ability to examine these potential moderating effects within or across the expresser and observer groups. In addition, the observer sample was predominantly Asian and female college students, further limiting generalizability. Gender is known to influence emotion perception: women tend to recognize emotional expressions, especially negative ones, with greater accuracy (Hall & Matsumoto, 2004; Sasson et al., 2010; Thompson & Voyer, 2014), and East Asian cultures often emphasize emotional restraint and contextual interpretation, which can shape responses to facial expressions differently than Western norms (Ekman et al., 1987; Matsumoto, 1990). Finally, a minority of autistic participants self-identified as autistic and did not have formal diagnoses. None of the primary findings changed when self-identifying participants were excluded from the data set, suggesting that their data were generally consistent with those produced by the formally diagnosed participants. However, because one new interaction involving diagnostic disclosure did emerge, future research that includes self-identifying autistic participants is encouraged to consider whether and how their inclusion may alter results.

Several methodological choices may also have affected results and their interpretation. First, the absence of a neutral-expression condition limited our ability to establish a definitive ground truth for interpreting group differences in perceived negativity, and including a separate comparison group of autistic observers could have helped inform the presence or absence of non-autistic bias. Second, the emotion-elicitation task relied on instructed re-experiencing rather than spontaneous expression, which may have reduced ecological validity. Although we collected self-reported ratings of the intensity of felt emotion during the task, future research should also incorporate measures of spontaneous expression as well as assessments of how effectively participants re-experienced the emotional events. Given that observers struggled to accurately identify negatively valenced emotional states of non-autistic participants, these participants may have expressed felt sadness and anger too subtly in the face for observers to notice or relied more on alternative expressive cues (e.g. body language) not assessed here.

Third, this study did not measure the possible presence of alexithymia or the broader social and emotional consequences of expressive differences, both of which warrant further investigation. Expressive differences may shape emotion regulation strategies in both autistic and non-autistic adults (Cai et al., 2018; Mazefsky et al., 2013) and alexithymia, defined by difficulties in identifying and describing emotions (Luminet et al., 2021), is significantly more prevalent among autistic people (Lin et al., 2025). However, all autistic and non-autistic participants were able to identify and recount autobiographical experiences of the three emotional states examined here, and the two groups did not differ in how much emotion they reported feeling during the elicitation procedure, although the difference in felt happiness between the groups approached significance. Nevertheless, future studies of emotion production and perception in autism should account for the potential presence of alexithymia. Fourth, masking (conscious or unconscious efforts to suppress or modify emotional expression) adds further complexity by shaping both the expression and interpretation of emotion (Cage & Troxell-Whitman, 2019; Hull et al., 2017) and was not assessed in the current study. It is unclear, therefore, whether autistic and non-autistic participants may have masked some of their emotional expressivity in ways that affected observer perceptions. Future studies comparing how emotional expressivity in autistic people is perceived when they are and are not consciously masking are warranted.

Together, these findings highlight that emotional perception is shaped not only by individual expressivity, but also by the interpretive lenses of observers. Non-autistic observers perceived emotional expressions from autistic and non-autistic individuals differently under naturalistic conditions. They tended to attribute more negativity to autistic expressions and more positivity to non-autistic ones, despite both groups reporting similar emotional experiences. This bias for perceiving greater negativity in autistic faces could hinder non-autistic individuals’ ability to accurately interpret autistic emotionality, and in turn, prevent autistic individuals from receiving appropriate emotional support. Developing more inclusive approaches to emotion recognition—ones that validate and embrace neurodivergent expressive styles—may help reduce miscommunication, improve emotional attunement, and foster more meaningful connection and inclusion across neurotypes.

Footnotes

Ethical considerations

The study was approved by the University of Texas at Dallas Institutional Review Board (IRB).

Author contributions

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the UCLA Autism Intervention Research Network on Physical Health (AIR-P), although no funds from this grant were used in the execution of the project.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.