Abstract

Access to generative artificial intelligence (AI) and the ability to use it are becoming crucial for learning, work, and leisure. In this context, AI literacy is recognized as critically important for adult language learners, including those from migrant and refugee backgrounds. While there have been calls to include AI literacy in language learning programs, its inclusion requires knowledge about learners’ current practices with generative AI and associated capabilities. This article reports selected findings from a large mixed-method study which explored uses of generative AI within one Australian government-funded adult education program. The study included a survey and focus groups, representing participants across Australia. Integrating sociomaterial and sociocultural perspectives which, together, conceptualize literacy and literacy learning as materially and socially embedded practices, we explore the AI literacy practices and capabilities of adult English as an additional language (EAL) learners from migrant and refugee backgrounds within their everyday contexts. The study found that the majority of learners from migrant and refugee backgrounds either had not heard about generative AI, or had never used it. This is a noteworthy and troubling finding, especially given the commonly held understanding that AI literacy will be increasingly important in coming years. Nevertheless, the focus group participants who had used generative AI reported positive attitudes and emerging repertoires of AI literacy practices associated with learning, everyday tasks, leisure pursuits, and professional activities. While these participants showed emerging, though fragmented, AI literacy capabilities, there was a significant proportion of language learners without any apparent AI literacy capabilities. These findings suggest that these learners are missing out on many opportunities afforded by AI, which further reinforces the disadvantage of this group. 
The article concludes with implications for policy, curriculum, and practice, with the aim of bolstering the inclusion of AI literacy in language learning programs.

Keywords

I Introduction

Over the last several years, there has been an unprecedented rise in the adoption of artificial intelligence (AI), especially generative AI, in different domains of life. Generative AI, which is defined as ‘an Artificial Intelligence (AI) technology that automatically generates content in response to prompts written in natural language conversational interfaces’ (United Nations Educational, Scientific and Cultural Organization, 2023, p. 8), has drawn significant attention since ChatGPT became publicly available in November 2022. Generative AI chatbots such as ChatGPT, Claude, Copilot, and many others can rapidly generate coherent and contextually relevant texts based on a given prompt for almost any topic and writing style. They can also create sophisticated multimodal content, maintain a ‘memory’ that carries over multiple interactions, engage in interactions in different languages, and incorporate search functionality which allows them to access and retrieve real-time information from the web (Fui-Hoon Nah et al., 2023; O’Dea et al., 2024).

AI technologies are reshaping daily life, learning, and work, bringing both benefits and challenges (Ng et al., 2021a, 2021b; Pasquale, 2015). The rise of generative AI has led many scholars to consider the benefits and practical applications for education settings (Fui-Hoon Nah et al., 2023; Zhu et al., 2023). Others remain deeply concerned about its negative impacts, which include over-dependence, ethical issues, and possible diminution of creativity and critical thinking (Williamson et al., 2024). Despite differing perspectives on its potential and limitations, the proliferation and accessibility of generative AI platforms have illuminated a growing need for AI literacy (Fui-Hoon Nah et al., 2023; O’Dea et al., 2024; Tour et al., 2025a, 2025b). Equipping people with relevant knowledge, skills, and understandings – or what we refer to as AI literacy below – is now seen as a key responsibility of educational institutions and learning initiatives (Ahmad et al., 2023).

AI literacy is especially significant for adult English as an additional language (EAL) learners in Australia. Australia’s population of around 25 million people represents over 300 ancestries, speaks approximately 490 languages, and practises a diverse array of cultural and religious traditions (Zhang et al., 2023). Furthermore, just over 7 million people in Australia, representing 27.6% of the population (ABS, 2022), were born overseas and came to Australia through a range of migration, asylum, and humanitarian programs. They often have to navigate AI-enhanced everyday bureaucratic systems and processes (Damar et al., 2024; Weerts, 2025). In addition, generative AI holds particular potential for this group of language learners in terms of overcoming language barriers, simplifying access to information, and bridging gaps in communication in areas such as online services, education, employment, and participation in social life (Jahani et al., 2024).

However, as indicated above, navigating AI-enhanced processes, accessing AI benefits, and overcoming its limitations requires AI literacy. Consequently, there is a growing call to include AI literacy in language learning programs (Creely et al., 2025; Pegrum et al., 2022; Warschauer & Xu, 2024). In Australia, there are several government-funded programs that assist eligible migrants and humanitarian entrants to develop their English language, navigate settlement processes, improve employability, and enhance readiness for further education. As technology reshapes our educational horizons, integrating AI literacy into these programs is becoming increasingly important. At the same time, there is a lack of understanding of how educators can support the development of AI literacy.

Such understanding requires knowledge about learners’ current AI literacy practices and capabilities. Literacy capabilities are taken to mean the knowledge, skills, understandings, and dispositions that enable reading, writing, and language use, while literacy practices are the observable, socially and culturally embedded behaviours and ways people actually use language in everyday contexts (Barton & Hamilton, 2012; Street, 1984). Practices are contingent upon capabilities, as what people do with literacy depends largely upon the underlying capabilities they possess. These insights need to be drawn from adult learners’ funds of knowledge, which enable them to participate as partners in co-creating relevant and meaningful learning experiences (Moll, 2019).

This article reports on findings from a large, mixed-method study which examined the use of generative AI in one government-funded educational program for adult EAL learners in Australia. This article addresses the following research questions:

What are adult EAL learners’ everyday AI literacy practices?

What do their everyday practices with generative AI reveal about their AI literacy capabilities?

The findings provide insight into where educational interventions may be needed, suggesting how AI literacy may be best incorporated into language learning programs.

II Literature review

Since the public release of ChatGPT and the subsequent rise of different generative AI tools, research interest in generative AI and its use in education has surged significantly. There is a growing body of conceptual research which hypothesizes about generative AI and theorizes its potential and limitations in teaching, learning, and administrative practices (e.g. Creely & Carabott, 2025; Koraishi, 2023; Law, 2024). More recently, as we explore below, empirical research has started to document users’ actual experiences with generative AI. However, in critically considering this literature, we noted that much of the research centres predominantly on higher education (HE) contexts, perhaps reflecting pressing challenges that universities face as AI rapidly permeates institutional practices (Baidoo-Anu et al., 2024; Chan & Hu, 2023; Johnston et al., 2024). Research considering the uses of generative AI outside HE institutions and in everyday contexts is very scarce. There is a small body of empirical research focusing on English language learners but again in HE settings rather than wider language learning settings (Alzubi, 2024; Huang & Mizumoto, 2024; Vo & Nguyen, 2024). Nevertheless, a review of this research provides a useful backdrop to the study reported in this article.

1 Practices with generative AI

Exploring experiences in HE settings, research has consistently found that students are generally aware of generative AI and actively use it for academic purposes. Baidoo-Anu et al. (2024) found that more than 60% of participants used generative AI in the context of Ghanaian HE, reflecting the findings of a UK study by Johnston et al. (2024) who found that 51% of respondents used generative AI for academic purposes. A slightly lower percentage of use (45%) was reported by Chan and Hu (2023) for HE students in Hong Kong. At the same time, Baidoo-Anu et al. (2024) point out that not everyone is an active user of generative AI in contexts which are characterized by socio-economic disparities, digital divides, and widespread reliance on mobile phones for internet access. In their research in Ghana, 23% (

Our review of the literature also identified a range of practices with generative AI. Reported examples of generative AI use for academic purposes include completing individual and group class work (Baidoo-Anu et al., 2024), supporting written assignments (Baidoo-Anu et al., 2024; Chan & Hu, 2023; Huang & Mizumoto, 2024; Johnston et al., 2024), developing better understandings of difficult concepts and topics (Baidoo-Anu et al., 2024; Chan & Hu, 2023), creating artwork (Chan & Hu, 2023), completing repetitive administrative tasks (Chan & Hu, 2023), and coding (Johnston et al., 2024). Research on English language learners has documented practices with generative AI related to language learning: translating (Alzubi, 2024), exploring new vocabulary (Alzubi, 2024), checking grammar (Huang & Mizumoto, 2024), and generating authentic materials or guidelines for language study (Vo & Nguyen, 2024).

Similar practices with generative AI for academic purposes were reported amongst high school students in one Californian (US) public school (Higgs & Stornaiuolo, 2024). These included accomplishing routine organizational and information tasks, catalysing thinking and writing processes, creating email templates, and checking grammar and spelling. Overall, students in all these studies had positive attitudes towards generative AI, perceived it as useful learning technology, and showed willingness to integrate it into learning (Baidoo-Anu et al., 2024; Chan & Hu, 2023; Huang & Mizumoto, 2024; Vo & Nguyen, 2024).

Research on the use of generative AI in everyday settings is more fragmented. Some studies report on HE students’ use of generative AI for personal and professional reasons (Baidoo-Anu et al., 2024; Johnston et al., 2024) but they do not provide details on what exactly HE students have done with generative AI within these domains. In contrast, Higgs and Stornaiuolo (2024) offer some insights into everyday practices with AI amongst high school students, indicating that 91% of their participants reported ‘experimenting’ (p. 641) or ‘messing around’ (p. 641) with generative AI for fun or to entertain themselves. Another study of adult (18–70 years) early adopters of generative AI from the UK, US and Ireland (

2 AI capabilities

While previous research exploring people’s generative AI capabilities was not necessarily conducted from a literacy perspective, it nevertheless offers useful insights into some of the skills, knowledge, and understandings of AI users. Studies of generative AI in HE settings generally report that students using AI can easily navigate and operate this technology. For example, O’Dea et al. (2024) found that students in Hong Kong and the UK had developed some basic understandings of the main functions and were able to utilize common generative AI tools but were less confident in understanding foundational principles and mechanisms behind AI. English language learners in studies by Vo and Nguyen (2024) and Alzubi (2024) reported that generative AI is easy to use, indicating that they found its interfaces and functions intuitive and accessible. Similarly, in Baidoo-Anu et al.’s (2024) study, students reported that it does not take too long to learn how to use ChatGPT and that this technology does not require extensive technical knowledge.

In addition to technical capabilities, previous research also touches upon critical capabilities, reflecting well-documented concerns about AI limitations. Vo and Nguyen (2024) reported that English language learners doubted the quality of ChatGPT outputs, while Chan and Hu (2023) found that more than half of their participants had concerns about accuracy, transparency, privacy, and ethical issues. Similarly, Higgs and Stornaiuolo (2024) observed that high school students in their research approached generative AI ‘with a critical lens’ (p. 643) and demonstrated awareness of bias coded into algorithms, ethical concerns, and content ownership dilemmas. This suggests that, despite their age, these participants displayed a profound ‘depth of philosophical inquiry’ (p. 646) whilst working with generative AI.

At the same time, some findings suggest that users’ critical capabilities may be fragmented and uneven. For example, Fiialka et al. (2023) and Chan and Hu (2023) found that generative AI is often used as a search tool. While it was not clear what platforms were used by the participants for this purpose, such use can be deeply problematic. One of the main limitations of generative AI models is ‘hallucinations’, which are instances when generated content sounds plausible but is incorrect or entirely fabricated (Fui-Hoon Nah et al., 2023). Furthermore, O’Dea et al. (2024) reported that their participants were not very confident in evaluating AI tools. This is perhaps not surprising, given the complexity of generative AI practices and the limited (if any) training and education reported in previous research (Baidoo-Anu et al., 2024; O’Dea et al., 2024).

Research on AI literacy of migrants and refugees is scarce. Sūna and Hoffmann (2024) explored AI technologies in the lives of migrants in Germany and found that while the participants had basic awareness of AI, their knowledge of functionalities and consequences of AI varied greatly from case to case. Importantly, they found that most of the participants did not exercise agency in the context of AI use. Even if some participants found AI patronizing and manipulative, not many took action to address this.

3 Conceptual framework

AI literacy is widely viewed as an increasingly salient component, or perhaps an extension, of existing literacies (Luckin, 2023; Ng et al., 2021b), and digital literacies in particular (Munoz et al., 2023; Ng et al., 2021b; Sabzalieva & Valentini, 2023). As noted by Pegrum et al. (2022), digital literacies are typically described as involving some combination of individual skills or competencies; situated social practices; and dispositions, attitudes, or ‘habits of mind’ (EDUCAUSE Learning Initiative, 2019). As with other digital literacies, AI literacy definitions typically emphasize competencies and particular practices, but we argue for greater focus on social practices and dispositions. The disruptive nature of generative AI demands a more integrated, relational approach.

As such, a thorough investigation of AI literacy practices and capabilities of adult EAL learners requires conceptual understandings that encompass both the sociocultural context in which AI is used and the nature of generative AI as a disruptive and agential technology. While sociomaterial and sociocultural perspectives have typically been viewed as separate theoretical traditions, we argue that generative AI necessitates their integration. The sociocultural model of AI literacy shown below positions AI as a mediational technology within human activity, while acknowledging that generative AI possesses forms of agency previously unrecognized in sociocultural theory. In our conceptualization, AI literacy practices emerge through a relational imbrication where human agency and AI agency are mutually constitutive, with neither fully autonomous nor completely subordinate. This integration allows us to maintain focus on human learning while recognizing the agential capacities of AI technologies that actively shape social practices and meaning-making processes within cultural contexts.

4 Sociomaterialism

Sociomaterialism is a posthuman paradigm that positions the personal, social, and material worlds as interwoven and intersecting, and where material entities possess agency, impacting and shaping human actions within sociocultural contexts (Orlikowski, 2007). This framework, then, suggests that agency does not belong solely to humans but arises through entanglements deriving from the interconnectedness of human and non-human actors (Barad, 2003), with ‘the interweaving of human and material agencies’ characterized as ‘imbrication’ (Leonardi, 2011, p. 150). A sociomaterial approach to the shared agency of humans and AI, sometimes also referred to as a sociotechnical or relational approach (Bearman & Ajjawi, 2023) or new materialism (Matthews, 2024; Tang & Cooper, 2024), is now being widely adopted in the research literature on generative AI in education, including in the context of AI for language learning (Godwin-Jones, 2024). This perspective informs the sociocultural model employed in this study.

5 AI literacy for language learners: a sociocultural perspective

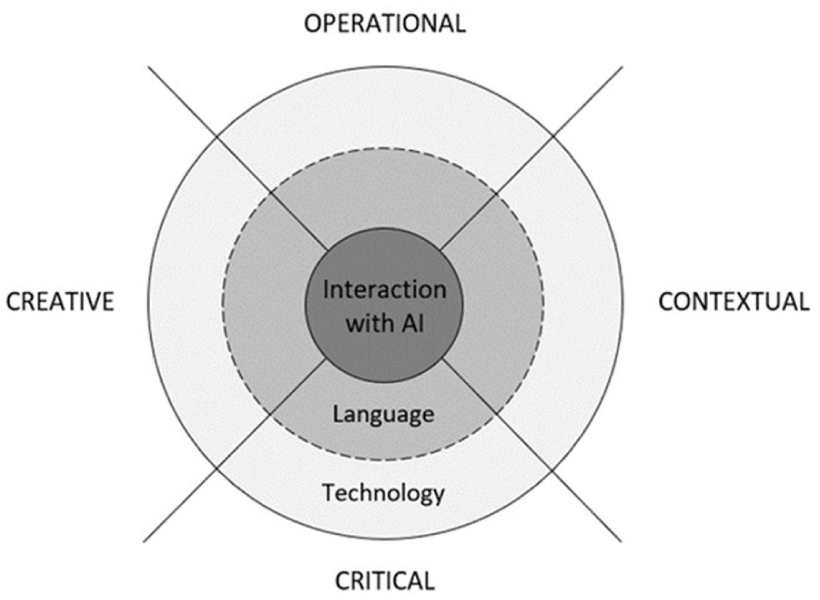

In this research, we employ a sociocultural model of AI literacy for language learners (Tour et al., 2025a, 2025b) which centres on human–AI interaction (Figure 1), and set it within the larger paradigm of sociomaterialism which brings attention to the shared agency of humans and AI in a posthuman world.

Figure 1. A sociocultural model of AI literacy.

Drawing on a sociocultural perspective on literacy (Durrant & Green, 2000; Jones & Hafner, 2021; Street, 2009), the model conceptualizes AI literacy as a repertoire of practices with AI. Such practices are grounded in interaction between people and AI where language and technology are tightly interconnected and where humans and AI share agency (i.e. humans and AI collaborate to produce a final product) (Creely & Blannin, 2025; Godwin-Jones, 2024). AI literacy practices are socially situated; that is, they are shaped by contexts, social purposes, identities and audiences, and influenced by power relationships. Examples of AI literacy practices might include asking ChatGPT to explain a grammar rule, draft an email, or plan a sightseeing itinerary.

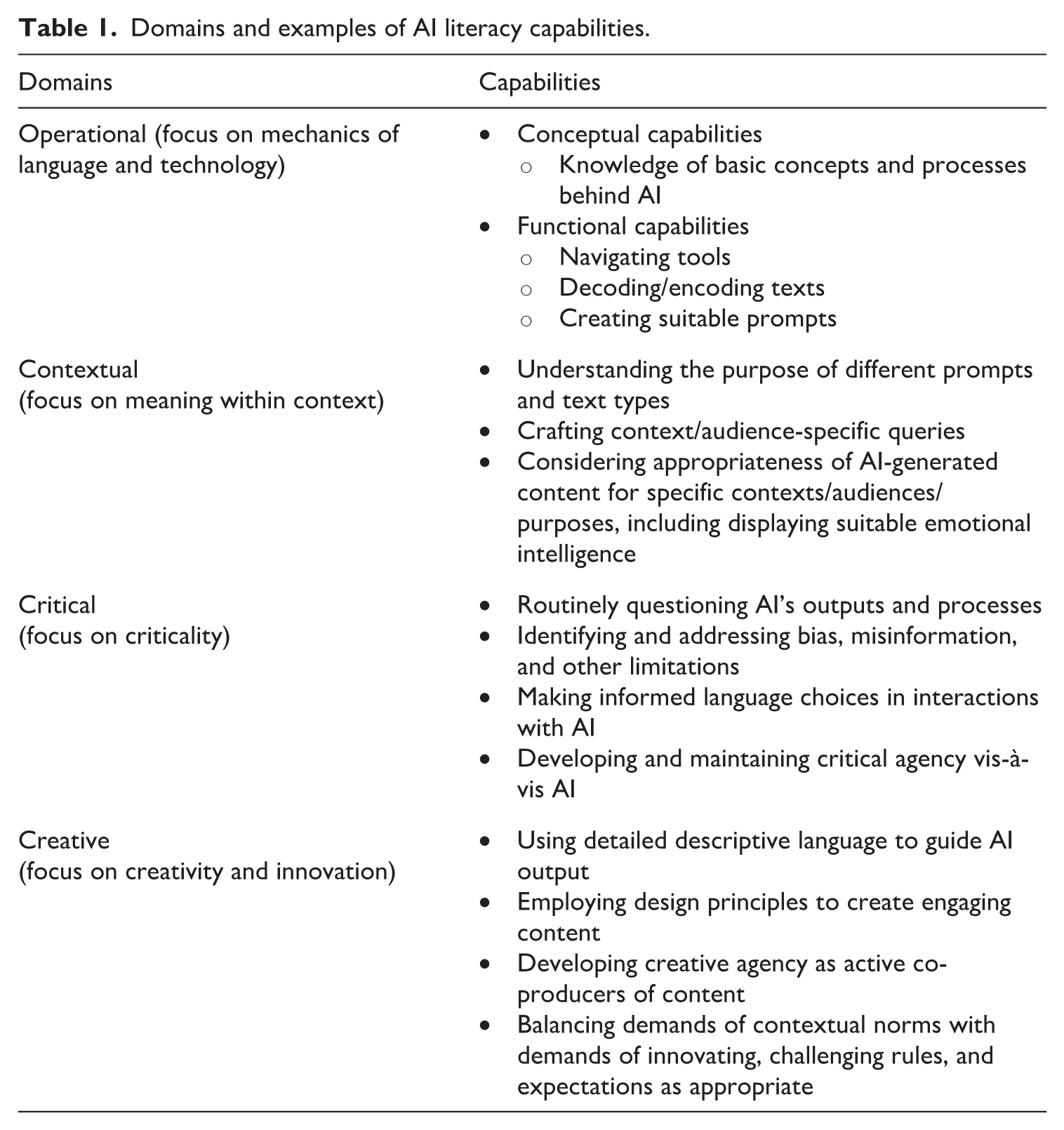

Meaningful engagement in AI literacy practices requires a range of capabilities (which encompass knowledge, skills, understandings, and dispositions) across all four domains (i.e. the operational, contextual, critical, and creative domains). The notion of AI literacy capabilities is informed by a sociocultural perspective, which recognizes literacy as socially situated, culturally mediated, and agentive practices rather than a fixed set of technical skills (Barton & Hamilton, 2012; Street, 1984). It emphasizes not just what people know, but what they can do with literacy in their social worlds, drawing on their capabilities. As such, every AI literacy practice requires a unique combination of capabilities, depending on the AI platform used, purpose, context of practice, and nature of the AI-generated text. Some examples (rather than a comprehensive list) of AI literacy capabilities for each domain are summarized in Table 1 from Tour et al. (2025a, 2025b).

Table 1. Domains and examples of AI literacy capabilities.

In brief, in order to explore people’s uses of generative AI in everyday life, learning, and work, it is useful to bring together a model of AI literacy – where practices grounded in human–AI interactions derive from capabilities across major domains – and a broad posthumanist, sociomaterial paradigm which accounts for the entanglement of human and AI agency.

III Research design and methodology

In this article, we draw on data from a larger mixed-methods project. The project examined the use of generative AI amongst three stakeholder groups in one government-funded educational program for adult learners in Australia (i.e. leaders/managers, teachers, and learners) between November 2023 and March 2024. For the purpose of this article, our reporting is based on analysis of the data from a subset of the learner group; namely, the participants who identified themselves as EAL learners. Approval from Monash University Human Ethics Committee (ID 40630) and informed consent for participation were obtained prior to data gathering.

The first phase of the project involved a survey of the participants. A sequential mixed-methods approach was adopted by first using descriptive statistical analysis of the survey data to identify patterns and then utilizing these patterns to guide deeper exploration through semi-structured focus group interviews. The use of these two methods aligns with what Richardson (2000), drawing on postmodernist thinking, refers to as ‘crystallization’ (p. 13). In this sense, each method functioned as a facet of a crystal, allowing us to view the research phenomenon from multiple angles to develop a nuanced understanding.

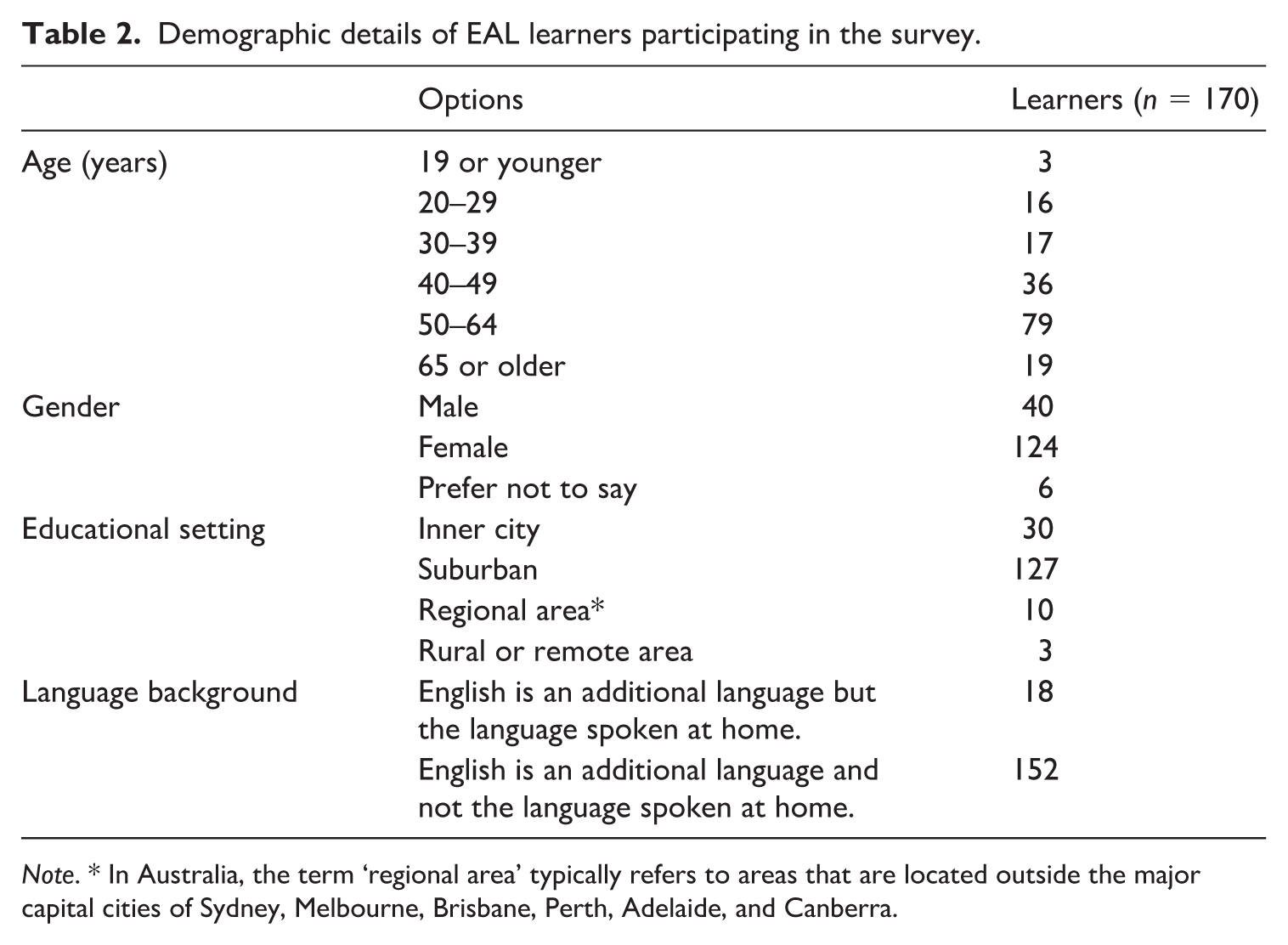

The online survey instrument was designed to address the key interests of the larger study: participants’ AI capabilities, current (or previous) uses of generative AI, attitudes towards generative AI, and future aspirations for its use. In total, 170 enrolled adult EAL learners completed the survey. As part of our commitment to respect participants’ choices about what they shared with us, the survey did not use forced responses on the items. Therefore, some of the items reported on in this article may have differing response rates, which are indicated as appropriate. Demographic details of the survey participants are provided in Table 2.

Table 2. Demographic details of EAL learners participating in the survey.

Three 40-minute focus groups were conducted with individuals who had completed the survey and responded positively to an invitation for further discussion, allowing for deeper exploration, clarification, and open-ended conversation. The focus group discussions revealed a broader spectrum of practices and capabilities spanning multiple domains than the survey. This suggests that a collaborative dialogue may help EAL speakers articulate a wider range of experiences than they typically report in individual responses.

There were two focus groups with EAL learners (

Qualitative data were analysed using Brooks’ (2015) approach to coding and identifying themes. We employed

IV Findings: AI literacy practices and capabilities

The study found that the majority of the EAL learners from migrant and refugee backgrounds either had not heard about generative AI, or had never used it. Nevertheless, those who used generative AI reported positive attitudes and emerging repertoires of AI literacy practices associated with learning, everyday tasks, leisure pursuits, and professional activities. While these participants showed emerging, though fragmented, AI literacy capabilities, there was a significant proportion of language learners without any apparent AI literacy capabilities.

The findings of this study are presented in two sections. The first section presents the data captured from the survey of adult EAL learners, identifying broad trends in the respondents’ experiences with generative AI. The second section reports the focus group data and delves more deeply into the experiences of a smaller subgroup of participants. In particular, we present and analyse some examples of the participants’ practices, which allows us to explore what AI literacy capabilities they possessed and what capabilities required further development.

1 Survey findings

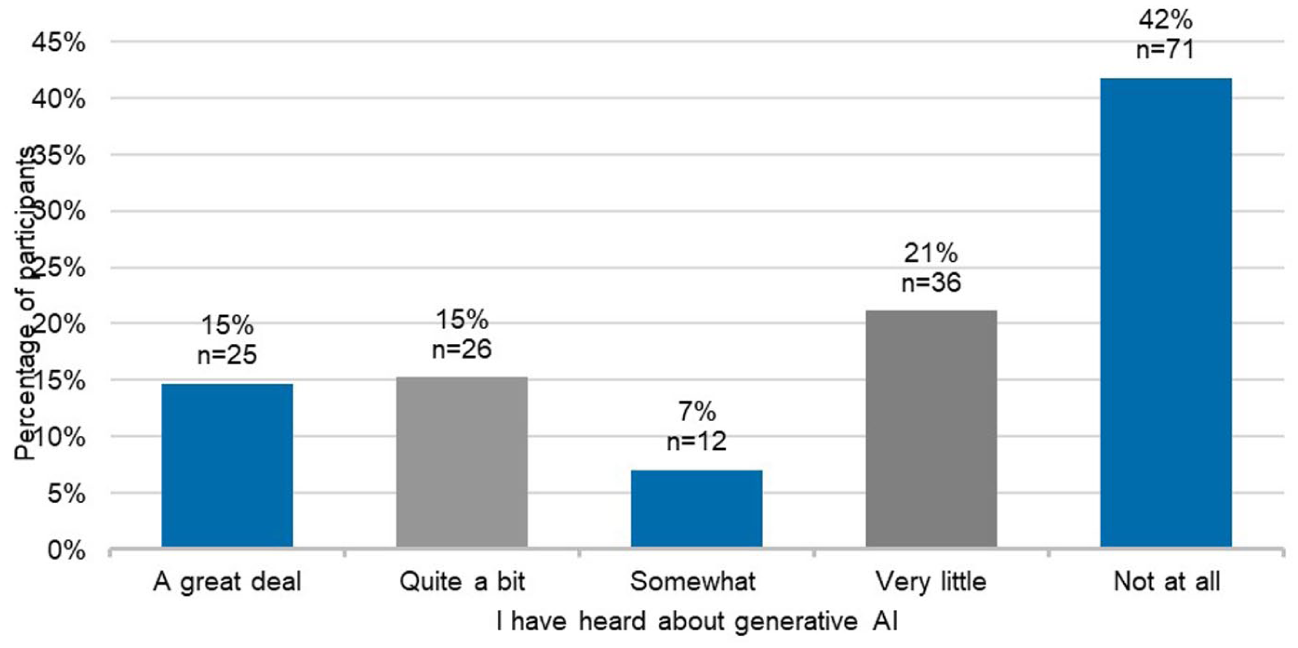

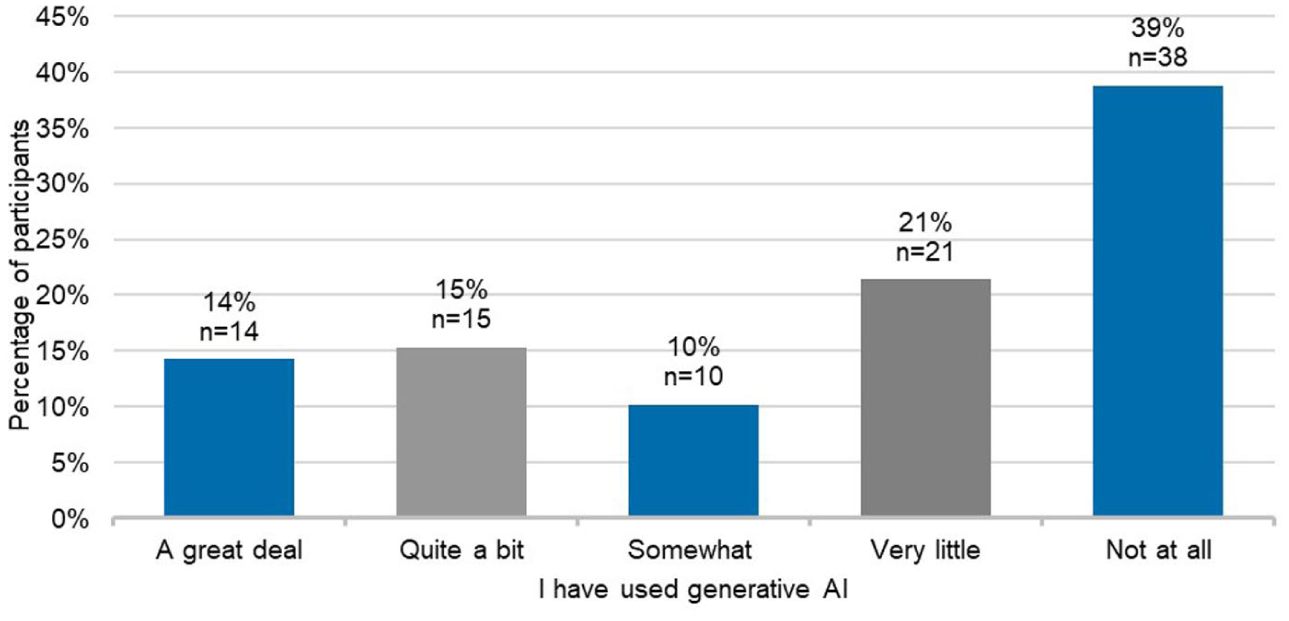

Given that this data was collected just one year after the introduction of ChatGPT, EAL learners (

Learners’ awareness of generative AI (

This data shows that at the time of data collection there was a considerable lack of awareness about generative AI among the EAL learner-participants, with a majority (

The subset of participants who had heard of AI (58%;

Learners’ use of generative AI (

Together, Figures 2 and 3 indicate that, overall, 64% (

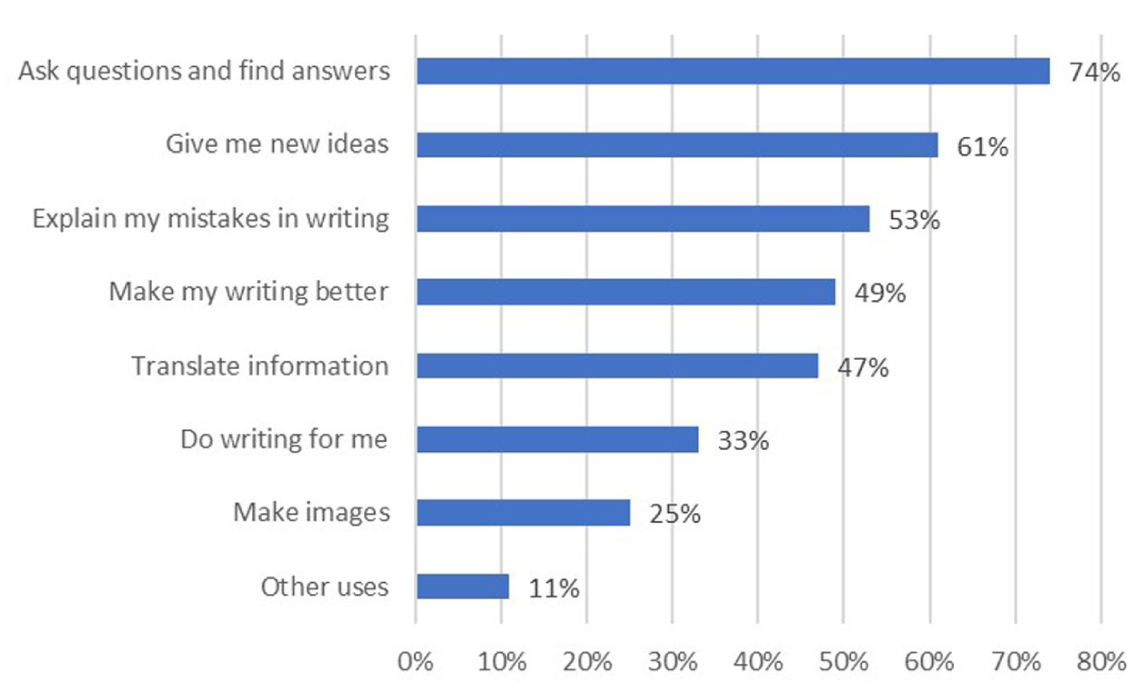

The survey respondents identified two specific platforms as their preferred choices: OpenAI’s ChatGPT and Microsoft’s Copilot. In addition, the participants who had used generative AI (

Figure 4. Most common activities with generative AI.

The list of activities suggests that many of the participants were developing useful repertoires of AI literacy practices, extending beyond digital literacy practices associated with well-known technologies and moving to engage with the relationality inherent in generative AI. For example, in Figure 4 the top two responses may reflect some collaborative exchanges between humans and generative AI. Furthermore, the data show that the learners were requesting explanations of mistakes in their writing and creating images using generative AI, which other technologies were unable to support. This implies an awareness of the affordances of generative AI, a capacity to engage in interactive practices, and the exercise of agency when initiating dialogic exchanges to explain their errors and generate visual content.

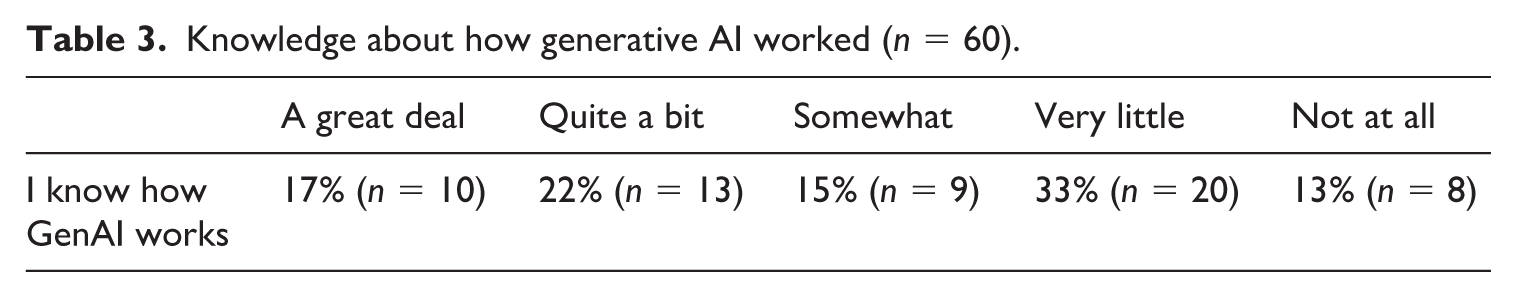

Furthermore, the survey included several questions in relation to AI literacy capabilities. In particular, the participants were asked if they knew how AI worked (Table 3). Among the subset of participants who reported using generative AI, 46% (

Knowledge about how generative AI worked (

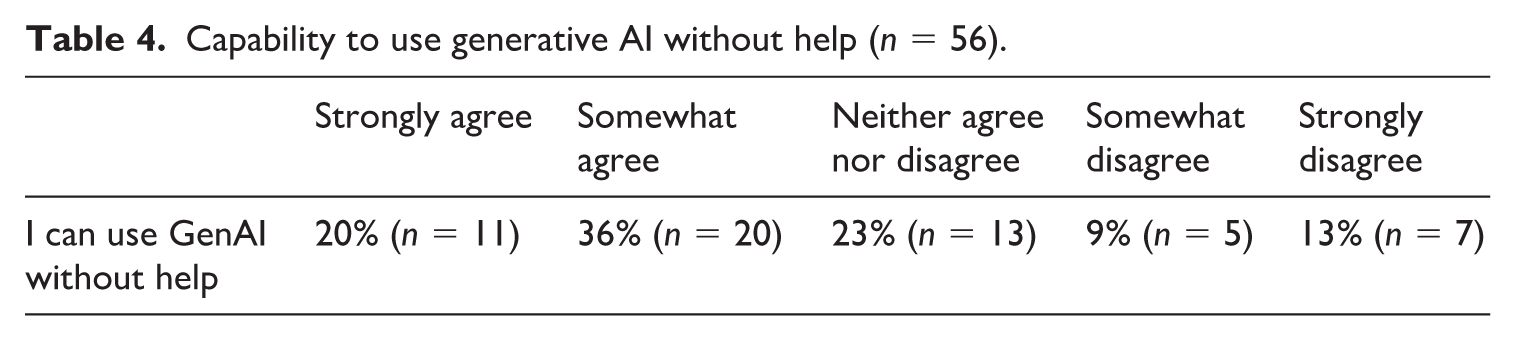

Those who used generative AI were further asked if they felt they could use generative AI without help (Table 4). The quantitative data reveals that only 20% of respondents (

Capability to use generative AI without help (

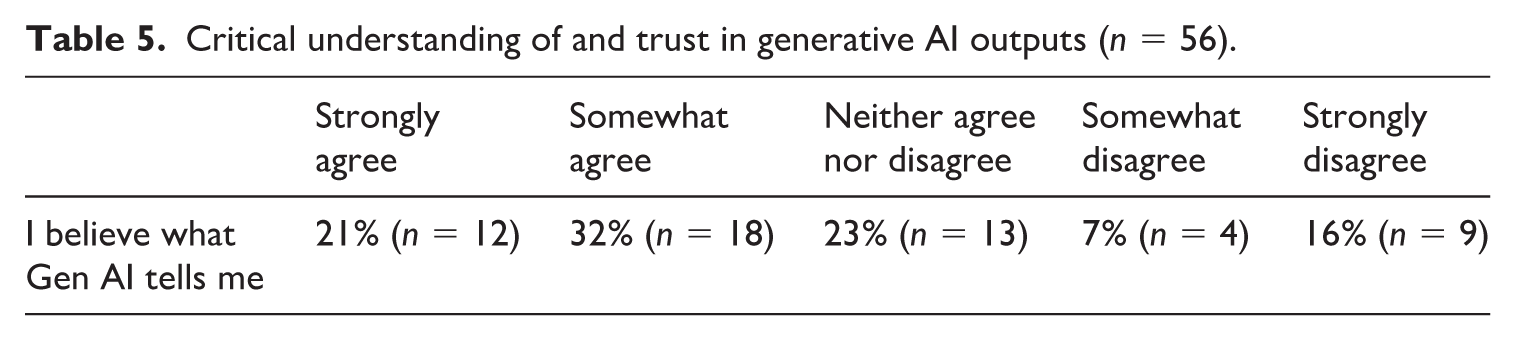

Turning to capabilities within the critical domain, survey participants were asked if they believed what generative AI told them (Table 5). Over half the survey respondents who had used generative AI (

Critical understanding of and trust in generative AI outputs (

In sum, the survey respondents demonstrated emerging but still developing AI literacy practices. Their capabilities were primarily concentrated in operational areas, including both conceptual understandings and functional skills, along with some critical thinking abilities. However, these skill sets remained somewhat narrow in scope.

2 Focus group findings

While the survey data revealed that the majority (

a Learning

Aligned with the findings from the survey (see Figure 4), many of the most popular AI literacy practices were associated with learning, and especially language learning, some related to participants’ educational programs and some self-initiated:

Sometimes I use it [generative AI] for translation . . . [and] I do use ChatGPT to generate some of the suggestions for doing homework . . . It is very useful. The information it provides, much wider and in more detail, in two different languages, three languages. So, sometimes I want to search information in Chinese, and then in the Malay language, I can do that as well. (Participant 1)

I mainly use it [generative AI] for information . . . The topics and all that – that I’m interested in from time to time. (Participant 1)

[teacher translated for the student] She uses it for grammar, to check when she is writing messages, if they are correct grammar. (Participant 2)

[teacher translated for the student] She is looking for books and texts and things, to help her to learn English. She’s found that there is an English tutor on ChatGPT. (Participant 2)

General[ly], really, it’s learning . . . for the correct my grammars when I writing some letter – some emails, and study. Say study. For writing. (Participant 3)

All the participants saw generative AI as opening up many new learning opportunities that were not readily available previously. This highlights how learners were not only recognizing the potential of AI technologies but were also actively integrating them into their everyday practices. Statements like ‘I do use ChatGPT to generate some of the suggestions for doing homework’ reveal an ability to navigate AI interfaces and apply them to language learning. This reflects functional capabilities linked to the operational domain. The first quote also indicates a positive attitude to generative AI rooted in several practical benefits for this adult language learner. It suggests conceptual capabilities within the operational domain, such as an understanding of AI’s multilingual functionality and its capacity to provide detailed and comprehensive information. Furthermore, meaningfully engaging with AI to realize specific linguistic and communicative goals, or using it to enhance writing and personal expression, reveals capabilities related to the contextual domain. These include understanding the purpose of different prompts and text types, crafting context-specific queries, and considering the appropriateness of AI-generated content for specific purposes.

b Everyday tasks

Another important group of AI literacy practices was related to different everyday tasks, which were not fully captured in the survey data. One participant often used ChatGPT when assisting her children with homework, while two others used it for generating recipes:

[teacher translating] She stated that she wants to be able to help her children with their homework, so she can put in little questions on ChatGPT for the questions that her children have with their homework, so she can see what they’re asking and how to answer it, to help it. (Participant 2)

Sometimes like yesterday I just, classic brownies, I just want to make. So, I just write down how to . . . very quickly. They save time, and very quickly come out the things. (Participant 4)

At home ChatGPT might help us to find a recipe, let’s say, for cooking. It’s one of the perks I guess like even for our daily basics. (Participant 5)

These examples illustrate how generative AI had become part of participants’ everyday literacy practices. An emphasis on speed and convenience suggests a positive attitude grounded in valuing its time-saving efficiency. The phrase ‘It’s one of the perks’ very much highlights the enhancement of everyday life activities. Generative AI seemed to be especially useful for one EAL parent who was able to use it to support her children’s schoolwork in the language she was still learning herself. Participants again demonstrated capabilities associated strongly with the operational (especially functional) and contextual domains, with the latter seen in their ability to interact with AI platforms to achieve specific goals within their own unique contexts. AI and human participants seemed to exercise complementary agency: humans strategically deployed AI for specific purposes (homework support, recipe generation), while AI responded contextually, enabling users to transcend limitations.

c Leisure pursuits

There was evidence of generative AI being used for creative literacy practices associated with leisure purposes, providing further insights into the purposes of generative AI use. One focus group participant was a YouTube vlogger who used generative AI to support her video production:

I’m daily vlogger, family vlogging about topics – how to manage your time, how to manage your work stress with your kids, your family. I just share my content especially for mums, for school, growing kids, and home chores. So, I’m sharing the travelling experiences, and even my recipes . . . So, they [generative AI tools] help me to be script writing. They give me the ideas, and even the languages you know, how to engage audience, how to be perfect, like scripting. When I start vlogging, I need good content – I’m just time saving. (Participant 4)

The vlogger utilized the creative capacity of generative AI to help her develop engaging scripts, strategically employing the affordances of AI to improve her content delivery. While her AI literacy practices reveal capabilities relating to multiple domains, including the operational and contextual, of particular interest is the creative domain. Her scripted videos are an example of exercising creative agency in content co-production with generative AI.

Another participant reported using generative AI for writing song lyrics together with her husband:

[Teacher translating] Her husband is a singer, and he does post videos online . . . So, she says that her husband uses it to assist him with ideas for his songs and singing. And she helps him to use it. The meaning of the words of the songs. So, they work together to get the ideas for new songs, and they work together to find the meaning of the new words to make songs. (Participant 2)

This couple integrated innovative practices into the process of musical composition, employing AI to support development of themes and crafting of lyrics, once again providing a clear example of shared agency in a creative pursuit.

d Professional activities

Two focus group participants shared examples of AI literacy practices associated with work. While the survey revealed that many respondents used generative AI to create images, the focus group data provided an extension to such creative literacy practices. One participant used AI to generate visual content which supported her self-employed work, while another mentioned the use of generative AI for writing a résumé:

I have a small business, like packaging back in my home country. So usually we create some image, like special promotion on stuff, we kind of like use generative AI to help us make the image in terms of that promotion and stuff. (Participant 5)

I actually got it [generative AI] to help me do my résumé the other day. (Participant 6)

Once again, these practices show capabilities related to the creative domain, underpinned by operational, contextual and, perhaps, implied critical capabilities in the recognition of the affordances of AI for promotional and communication activities. The practices and their underlying capabilities emerged from the clear agency exercised by both human participants and AI, as evidenced by the use of ‘help’ as a linguistic marker of shared agency. They were shaped by individual personal and professional needs, which were realized through this collaborative interaction.

e Fragmented capabilities

While the previous sections explored a range of AI literacy capabilities evident in the practices of the focus group participants, the data also revealed a number of gaps in capabilities across various domains of the AI literacy model that the survey could not capture. Participants openly acknowledged some of these gaps and identified them as areas for improvement, but they seemed to be unaware of others, perhaps due to the novelty and complexity of AI technologies:

I would like to learn . . . Even I use for year and a half, but very general – ask questions, get all the information. But I think AI have more function, but probably I don’t know what other function can offer. (Participant 3)

That’s how I would like to learn, something like how to use them [generative AI] to make a picture or drawing. I don’t really know this function, so I would like to [learn]. (Participant 3)

I know AI – there’s no person, or maybe they do scan and check. But mainly there is computer-generated information. That’s what I know. (Participant 4)

While participants could navigate AI platforms of their choice as discussed above, some of their responses suggest rather incomplete conceptual knowledge and functional skills required for the use of AI technologies. In the first quote, the participant acknowledged that she probably only knew the basics and expressed her interest in learning a wider range of operational skills associated with AI literacy. The second quote revealed her limited engagement in multimodal practices with AI and, thus, fragmented creative capabilities. As evident in the last quote, the participant was aware that the content is generated by software but it was unclear if she understood the nuances of how generative AI works (e.g. large language models recognize patterns in data and then use these patterns to generate new but similar outputs). The comment ‘maybe they do scan and check’ suggests that the participant did not fully grasp the extent to which humans can be involved in overseeing and verifying AI-generated outputs.

The fragmented nature of participants’ capabilities was especially noticeable in respect of the critical domain. Some were aware that generative AI can produce inaccurate and biased information and has privacy issues:

[Generative AI can have] glitches and may be biased. (Participant 6)

[Generative AI is] kind of a smart algorithm, they might tap into our data. (Participant 5)

It was not clear whether and how they mitigated these limitations through the use of language in prompts or other critical literacy strategies (e.g. questioning AI or cross-referencing information). However, a lack of critical capabilities was apparent in other comments:

So, yesterday, I just search which content is trending on Google. So ChatGPT helps me – ‘This topic is trending. You must go with this.’ . . . search is very good. (Participant 4)

I do watch world current affairs on different channels, different TV stations, or YouTube. And then sometimes when there is some interesting technology or topics, or political topics or something happens somewhere around the world, and I need [generative AI] to search more information. (Participant 1)

Both quotes point to significant misunderstandings of how generative AI works. The YouTube vlogger asked ChatGPT to identify ‘trending’ topics on Google to inform her vlog, instead of searching directly through Google Trends. At the time of data collection, ChatGPT did not have the capacity for real-time internet search. Her comment that ‘search is very good’ suggests there was no attempt to cross-check the information produced by generative AI. Another participant used generative AI to follow up on ‘current affairs’ he came across through other media channels. This suggests a lack of recognition that AI may ‘hallucinate’ and make up information, and that the data sets it was trained on were not, at the time, current.

V Discussion

The findings of this study reveal complex intersections between AI literacy, adult language learning, and digital equity that have significant implications for both theory and practice. A key finding is that the majority of the EAL learners from migrant and refugee backgrounds either had not heard about generative AI, or had never used it. Some EAL learners in this study demonstrated emerging repertoires of AI literacy practices and capabilities. However, there is a clear contrast between our participants’ limited engagement with generative AI and the widespread adoption documented among higher education students and early adopters (Baidoo-Anu et al., 2024; Chan & Hu, 2023). This is perhaps not surprising, given that many migrants and refugees face systemic barriers to engaging with AI technologies, rather than lacking the interest or capacity to do so.

The findings also point to an emerging ‘AI divide’ (Gonzales, 2024) which impacts adult EAL learners from migrant and refugee backgrounds, amongst others. It extends beyond mere technological access to AI platforms into the complex domains of AI literacy capabilities. This divide is particularly troubling given the accelerating integration of AI technologies across professional, educational, and personal domains internationally (Ng et al., 2021a, 2021b). There is a risk that it will exacerbate existing social and educational inequities. The gap in AI awareness and utilization among adult EAL learners suggests not just a technological divide, but a potential barrier to social and economic participation in an increasingly AI-mediated society.

However, our findings also reveal a more nuanced picture of AI literacy development among adult EAL learners which can help to address the call for the inclusion of AI literacy in language learning programs, as noted by some researchers (Warschauer & Xu, 2024). The development of positive attitudes toward AI technology, coupled with burgeoning AI literacy practices among some participants, suggests potential ways of addressing this divide. As in previous research (Higgs & Stornaiuolo, 2024; Skjuve et al., 2024), early adopters in our participant group demonstrated how AI technologies could be meaningfully integrated into personal learning, daily activities, and professional contexts. By emphasizing these strengths and ‘funds of knowledge’ (Moll, 2019), our study moves beyond a deficit discourse and shows that when access and awareness barriers are overcome, adult EAL learners can develop AI literacy practices and associated capabilities across operational, contextual, critical, and creative domains, exercising agency in the process.

The study also reveals an important distinction between operational skills and more complex AI literacy capabilities. Reflecting previous research (Alzubi, 2024; Baidoo-Anu et al., 2024; Sūna & Hoffmann, 2024; Vo & Nguyen, 2024), our study suggests that basic operational and even some contextual capabilities are comparatively easy to acquire through ‘experimenting’ (Higgs & Stornaiuolo, 2024, p. 641) with generative AI. However, as is evident in our study, some EAL learners may need additional support to learn about a wider range of AI platforms and functions as well as to develop a more nuanced understanding of how AI works.

This is particularly important given the widespread misperception of current generative AI as a reliable search engine (Chan & Hu, 2023; Fiialka et al., 2023) and the lack of understanding of foundational mechanisms behind AI (O’Dea et al., 2024). In particular, our findings reveal limited evidence of sophisticated AI literacy practices involving complex capabilities at the intersection of language and technology within the critical and creative domains. These appear to have been more difficult for EAL learners to develop independently, suggesting the need for dedicated AI literacy programs focusing on these domains. Learners’ existing AI literacy capabilities and even curiosity about generative AI can be used as a foundation for such learning.

Another significant contribution of this study lies in its demonstration of how the development of AI literacy practices, and their supporting capabilities, is deeply embedded in the social contexts, work aspirations, and life experiences of EAL learners. This is often implied but not explored in detail in previous research (e.g. Baidoo-Anu et al., 2024; Johnston et al., 2024). Participants who engaged in AI literacy practices in their everyday lives demonstrated the capacity to make informed choices about when and how to use AI to fulfil personal goals and situational requirements. They employed generative AI to support their specific language learning needs, daily activities, leisure pursuits, or professional aspirations, indicating that learner agency plays a crucial role in meaningful AI literacy development. This finding suggests that effective AI literacy development among adult EAL learners may be best supported through approaches that connect technology use to learners’ lived experiences and personal objectives. By leveraging these everyday practices, educators can empower EAL learners to develop more sophisticated AI literacy capabilities.

The relational nature of generative AI emerges as a crucial theoretical consideration. From a sociomaterial perspective, all literacy practices involve some entanglement of human and material agency. Such entanglement is particularly apparent in AI literacy practices, given the more obvious nature of the agency associated with a generative AI interlocutor that can somewhat independently converse with and react to humans via natural language. This has major implications for language learners, who must use natural language to prompt AI in order to have it generate resources or support for their language learning and other activities. A sociomaterial theoretical framing helps emphasize that successful AI literacy development requires not just technology-related capabilities but also an understanding of how human agency and AI affordances are co-constituted through practice. Echoing previous research (Sūna & Hoffmann, 2024), this study suggests that there is a need to develop critical agency to mitigate the inbuilt biases and limitations of AI, and creative agency to draw out its transformative potential without the loss of human agency. Many learners, including the EAL learners at the centre of this study, are likely to require support in developing these forms of agency, linked in particular to the critical and creative domains. This can help ensure a balancing of human and AI agency in the meaningful co-generation of texts and artefacts.

The importance of investigating AI literacy among adult EAL learners from migrant and refugee backgrounds extends beyond literacy capabilities and practices to also encompass questions of equity and participation in digital society. As AI increasingly governs access to essential services, work, and civic life, limited AI literacy compounds existing disadvantages, particularly where language barriers intersect with restricted digital access. Understanding how AI systems classify, interpret, and sometimes misread human communication is vital for informed decision-making and self-advocacy. By developing this awareness, learners can recognize and respond to potential biases that may marginalize non-standard or accented language use. This is an important area of research because it highlights how AI literacy functions as a form of social capital, enabling migrants and refugees to act with greater confidence and autonomy in AI-mediated environments. Educators therefore have a crucial role in designing culturally and linguistically responsive AI literacy programs that empower adult EAL learners to navigate digital systems in healthcare, employment, education, and banking. Strengthening AI literacy not only supports individual empowerment but also contributes to broader goals of inclusion, equity, and participation in an increasingly digitized world.

VI Conclusions

This study has some limitations. Our findings derive from self-reported AI literacy practices among the minority of participants who used generative AI, limiting both scope and depth. We could not engage extensively with the majority who reported no AI knowledge or use, and participants’ varying English proficiency may have constrained their contributions within this single national program context. Despite these constraints, our findings reveal a troubling reality: an emerging ‘AI divide’ that threatens to deepen existing inequalities for adult EAL learners from migrant and refugee backgrounds. The majority of participants either had not heard of generative AI or had never used it, in clear contrast with its widespread adoption among other populations. This gap extends far beyond technological access. Indeed, it represents a potential barrier to social and economic participation in an increasingly AI-mediated world.

At the same time, this study also uncovers reasons for optimism. When access and awareness barriers were overcome, participants demonstrated meaningful AI literacy development across operational, contextual, critical, and creative domains. They integrated AI technologies into personal learning, daily activities, and professional contexts, exercising genuine agency in the process. This challenges deficit narratives and highlights the untapped potential within this community.

Our findings demand urgent action on multiple fronts. Educational initiatives must prioritize targeted interventions that increase AI awareness and access through wide-reaching campaigns, practical demonstrations, and hands-on experiences in both educational and community settings. AI literacy has become essential for employment and social participation, so leaving these learners behind is not an option.

Program design must recognize that AI literacy for EAL learners exists at the complex intersection of language and technology. The sociocultural model of AI literacy, informed by a sociomaterial perspective, can guide the development of learning programs that help learners develop and exercise agency in their AI interactions. This approach must balance operational skills with more sophisticated capabilities in critical and creative domains, where participants showed the greatest need for support. From a policy perspective, digital inclusion frameworks require fundamental reconceptualization. Existing digital literacy programs must be redesigned to explicitly address AI-specific components while maintaining sensitivity to the unique challenges facing adult EAL learners. The stakes are too high for incremental change.

In sum, this research addresses a critical gap in our understanding of AI literacy among adult EAL learners from migrant and refugee backgrounds. While further collaborative research with both learners and teachers remains essential, the implications are already clear: without deliberate intervention, we risk creating a new form of digital exclusion that compounds existing disadvantages. However, our findings also demonstrate that with appropriate support, these learners can acquire sophisticated AI literacy practices that enhance their agency and participation in society. The choice between perpetuating inequality and fostering empowerment lies in our collective response to these findings.

Footnotes

Data availability statement

The data that has been used is confidential.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Ethical approval

Monash University Human Ethics Committee approval (ID 40630).

Informed consent statements

Informed consent for participation was obtained from all participants prior to data gathering.