Abstract

This study investigates the relationship between students’ English language proficiency, their reported levels of academic English literacy, prior content knowledge and their attainment of content knowledge in English medium instruction (EMI). The study also examines students’ perceptions of difficulties with academic English literacy at different levels of English language proficiency. Pre-course and post-course content tests were administered to 27 EMI students in an introductory Chemistry course at a university in Tokyo, Japan. The test results were triangulated with data from a quantitative measure of reported academic literacy and follow-up interviews to explore perceptions of ease and difficulties for academic language skills (i.e. reading, listening, speaking and writing). The quantitative findings indicated that students’ proficiency statistically significantly predicted post-test scores. Interviews with students corroborated this finding, illustrating the specific difficulties in academic language literacy faced by students with lower proficiency. However, proficiency alone did not determine success as other factors, such as previous exposure to EMI and prior content knowledge, played significant roles. The study found a non-linear relationship between reported difficulties with academic English literacy and test outcomes, indicating that students who reported fewer academic difficulties were not necessarily more successful in gaining content knowledge than those facing significant challenges in academic language tasks. The findings emphasise that academic support in EMI programs should not solely focus on test outcomes but also address the broader challenges students face with academic English literacy. Implications are discussed regarding language support, EMI curriculum planning and future research directions.

I Introduction

In line with global trends, higher education in Japan has recently witnessed rapid growth in English medium instruction (EMI) programs. In 2014, the Ministry of Education, Culture, Sports, Science and Technology (MEXT) launched the ‘Top Global University Project’ (TGUP), a large-scale, multimillion-dollar initiative that funds 37 universities to internationalise Japanese higher education (Rose & McKinley, 2018). The number of EMI courses offered by participating universities is expected to grow from 19,533 to 55,928 (2.86 times) by the end of the ten-year funding period in 2023 (MEXT, 2018), demonstrating the rapid expansion of EMI. A growing body of EMI research has shown that university students experience challenges with academic English literacy when learning through EMI, which in turn adversely affects their content acquisition and mastery (e.g. Evans & Morrison, 2011; Kamaşak et al., 2021; Toh, 2020). However, much of this research relies on students’ self-reported experiences and perceptions (Macaro et al., 2018). Consequently, what remains unknown is whether and to what extent students’ self-assessed ability to perform academic tasks in English (i.e. academic English literacy skills) aligns with their actual academic performance in EMI programs (i.e. content knowledge attainment). This gap highlights the need for more empirical research that utilises direct measures of academic performance (i.e. content test scores). Therefore, this study examines the relationship between students’ English language proficiency, self-reported academic English literacy, prior content knowledge and success in acquiring content knowledge through EMI. The study also qualitatively explores students’ perceptions of factors that contribute to the severity of their challenges with academic English literacy. By triangulating data from students’ perceptions and direct measurements of academic performance, this study investigates the relationship between academic literacy and academic success in EMI.

II Background to the research

1 EMI in Japan

Ongoing government policies in Japan, such as the Top Global University Project (TGUP), aim to globalise the workforce and internationalise higher education by attracting international students to local universities (Galloway et al., 2020). These policies have stimulated research into the implementation of EMI at the macro, meso and micro levels, highlighting various policy and institutional factors (Bradford, 2016; Toh, 2020). Nevertheless, the high school English curriculum in Japan, which emphasises discrete language knowledge for entrance exams, often falls short in preparing students for EMI. Consequently, Japanese students require substantial support through preparatory or concurrent academic language courses in the form of English for academic purposes (EAP), English for specific purposes (ESP) and content and language integrated learning (CLIL) (Galloway & Ruegg, 2021; Ruegg, 2021). This highlights the importance of students developing their discipline-specific literacies through academic language courses.

2 Academic English literacy and content knowledge in EMI

The literature on EMI highlights various language needs that arise (Pun et al., 2024). One significant issue is the difficulties associated with academic literacy, which impact students’ content learning outcomes compared to first-language instructed programs (e.g. Aizawa et al., 2024; Bälter et al., 2024). Academic literacy is a multifaceted construct which includes reading, writing and oral discourse for learning, can be discipline-specific, and requires knowledge of academic genres (Short & Fitzsimmons, 2007). Recent discussions on academic literacy emphasise its broader scope beyond language proficiency, highlighting the importance of disciplinary literacies, which refer to the skills required to comprehend, evaluate, interact with and produce texts in different academic disciplines (Wingate, 2018). A similar discussion has been applied to developing EMI students’ understanding of ‘ownership’ of academic English and the expectations of students to build academic literacy in English alongside cultural and critical literacies. The idea is that in the context of EMI, academic literacy involves the ability to understand and engage with complex academic content through critical thinking and effective communication. It equips learners to critically analyse and navigate their disciplinary studies, enabling them to engage with the content and develop the skills necessary for academic success in English medium environments (see McKinley, 2019).

This perspective forms the basis of our understanding of academic literacy, which includes both general academic skills and discipline-specific competencies. Therefore, our focus in this study is on broader academic competencies beyond the difficulties students face. We frame academic literacy as what students can accomplish within specific academic genres and tasks, rather than what they find challenging. This has prompted increased demand for research on students’ language needs, how they relate to student behaviour and how institutions can better support students in EMI programs (on students’ views of disciplinary literacies in EMI contexts, see, for example, Dafouz et al., 2023).

One of the earliest studies on academic literacy in EMI is Evans and Morrison’s (2011) investigation of academic English literacy-related challenges faced by first-year undergraduate students entering an EMI degree program in Hong Kong from Chinese and English medium school backgrounds. This study built on Leki’s (2007) landmark study of academic literacy development and extended the findings to an investigation of academic literacy within English medium education in Hong Kong universities. The study identified difficulty in understanding technical vocabulary and the comprehension of lectures, alongside problems with academic style and institutional requirements as key challenges. In another perception-based study, Kim et al. (2017) adopted a questionnaire to explore the challenges facing second language (L2) English engineering students in South Korea (n = 524). The study reported that less than 2% were satisfied with their learning quality through EMI and nearly 60% with Korean-medium instruction (KMI), indicating that most students favoured KMI over EMI. Their subsequent interviews revealed that many participants faced more difficulty understanding key engineering concepts and solving problem worksheets in EMI than KMI.

Academic literacy for L2 learners studying science includes discipline-specific aspects (Parkinson, 2000). Studies within the field of science education (e.g. Song & Carheden, 2014, in the US, and Chan, 2015, in Hong Kong) have pointed to areas of needed literacy development, including specific vocabulary and conjunctions such as ‘therefore’. Students also face subject-specific disciplinary literacy needs, such as interpreting chemical equations (e.g. [NO2], 2NO2) and understanding technical terms (e.g. oxidation, reduction). Research has shown that L2 science learners may need support to understand and create science-specific genres related to both oral and written texts (e.g. Kelly-Laubscher et al., 2017; Parkinson, 2000). Academic literacy for science purposes therefore requires mastering both the scientific content and the specialised language used to describe it. These disciplinary needs highlight the various demands placed on science students. Learners must not only master the content knowledge, but also general and specific aspects of academic literacy, alongside specialised terminology, symbols and formulas used to communicate and apply this knowledge in STEM subjects.

Despite the absence of direct success measures (e.g. content test scores), these investigations into language needs within EMI suggest a strong relationship between academic literacy and overall academic achievements in EMI. The most comprehensive prediction study to date was conducted by Kamaşak et al. (2021), who drew on Evans and Morrison’s (2011) measure of academic literacy and explored the relationship between students’ academic English literacy and self-reported success while accounting for key learner factors. Predictive variables of academic English literacy included the year of study, prior EMI experience, first language (L1) and proficiency. The study revealed that learners who reported higher levels of academic English literacy perceived themselves to be more successful in EMI than those who reported lower levels of academic English literacy.

Overall, although these studies appear to indicate that academic English literacy is associated with success, little research has investigated the relationship between perceptions of academic English literacy (i.e. self-reported measure of difficulty) and test scores (i.e. direct measures of success). To fill this gap, the current study triangulated content test scores, interviews and an academic literacy scale to capture this complicated interplay between academic literacy and success while also qualitatively exploring students’ perceptions of factors that contribute to their success.

3 English proficiency and academic success in EMI

‘Academic success’ in EMI can be defined in various ways, with previous studies focusing on course level success using measures such as midterm and final test scores (e.g. Curle et al., 2020; Rose et al., 2020), and more general measures such as Grade Point Average (GPA) (e.g. Soruç et al., 2021). Given that the Japanese Ministry of Education (MEXT, 2022) considers EMI to have the purpose of content attainment, we conceptualise success in the current study as the acquisition of content knowledge.

A substantial body of EMI research suggests a relationship between students’ proficiency and academic success (e.g. Lin & Lei, 2021; Trenkic & Warmington, 2019; Zhou, Fung, & Thomas, 2023). In contexts where EMI is still evolving as a form of education, limited L2 proficiency often leads to undesirable educational outcomes, such as reduced content coverage and inadequate content comprehension (e.g. Ismailov et al., 2021). In particular, some research shows apparent differences in students’ learning experiences according to proficiency; higher proficiency students face challenges associated with learning new academic subjects, while lower proficiency students experience considerably more onerous challenges (e.g. Altay et al., 2022), such as limited engagement with course material, difficulties in participating in discussions and challenges in understanding and producing academic writing.

Some studies have found a statistically significant positive relationship. For example, Sánchez-Pérez (2021) in Spain explored the relationship between disciplinary-literacy variables (e.g. text structure, cohesion, vocabulary and grammar) and students’ academic grades based on EMI engineering laboratory report writing performance (n = 136). Texts with higher frequency rates of these linguistic variables were considered more proficient by lecturers than those with a smaller number of features. The regression model containing these linguistic variables explained 48% of the variance in students’ academic grades, revealing the importance of academic writing skills in engineering written texts. Similarly, Rose et al. (2020) found that L2 English proficiency and grades from a preparatory English for Specific Purposes course explained 25.7% and 36.5% of the variance in content score attainment of university students majoring in International Business (n = 146), respectively. When including these linguistic variables alongside a measure of motivation (Ideal L2 self) in a multiple regression model, the overall model explained a total of 44.5% of content score achievement, with motivation not identified as a significant predictor, indicating the significance of proficiency in academic success in EMI. Based on correlation analysis, Cho and Bridgeman (2012) revealed that TOEFL scores of university students (n = 2,594) in the US explained 4% of the variance in GPA (r = .20), which was still a significant result, although the magnitude of its correlation was relatively small.

Conversely, other studies exploring the predictive effects of proficiency have found that it does not predict academic success (e.g. Curle et al., 2020). Yan and Cheng (2015) investigated L1 Chinese undergraduate students (n = 138) studying through EMI at a South Korean university. Using multiple regression analyses, they found that self-assessed English proficiency had a significant yet small correlation with undergraduate GPA (r = .21) but was not a significant predictor of GPA after controlling for self-assessed Korean proficiency. This suggests that English, as a third language after learning Korean, had a limited impact on Chinese undergraduate GPAs in South Korean universities.

Overall, although most studies indicate that proficiency plays a role in EMI success (e.g. Rose et al., 2020; Thompson et al., 2022; Zhou et al., 2023), the findings are mixed and inconsistent. Research shows that challenges with academic literacy can adversely affect content acquisition (e.g. Evans & Morrison, 2011). However, much of this research relies on self-reported data. Few studies have examined how self-assessed academic literacy skills relate to actual academic performance. Our study, therefore, addresses this gap by adopting direct measures of academic performance to examine the relationship between English proficiency, self-reported academic literacy, prior content knowledge and content attainment.

4 Summary of the rationale for the study

In summary, there are few prediction studies that have measured the impact of proficiency and academic English literacy on EMI academic outcomes while considering other factors, such as ‘the level of prior knowledge of the academic concept’ (Macaro, 2020, p. 267). This is primarily because previous research has measured success using perception-based methods, resulting in the reliance on qualitative approaches (mainly interviews) rather than quantitative or mixed-method designs that utilise test scores (Macaro et al., 2018). Existing quantitative findings have primarily drawn from correlational designs exploring the relationship between proficiency, academic literacy and academic performance rather than controlling for possible effects of other variables (Yan & Cheng, 2015). Although proficiency and academic literacy appear to be paramount to higher levels of content attainment for EMI learners, mixed findings thus far have not clarified whether there is a relationship between academic literacy and academic success in EMI studies. Thus, the current study explores this relationship by examining whether and to what extent proficiency predicts academic success, alongside other factors that have received less attention in the literature to date, such as existing content knowledge and perceptions of literacy.

III The study

The study addresses the following two research questions:

• Research question 1: To what extent are students’ English proficiency, reported academic English literacy and prior content knowledge associated with content knowledge attainment in EMI?

• Research question 2: How do EMI students perceive English proficiency to affect their perceptions of academic English literacy towards EMI study?

1 Research context and participants

The study was conducted at a university in Tokyo, Japan. The university has received national funding from the TGUP to facilitate globalization since 2014. Consequently, it has implemented a consistent approach to EMI provision, with plans to increase the proportion of EMI modules from 16.5% to 40.0% and to offer EMI courses across a range of academic disciplines. The institution admits local and international students, resulting in a diverse range of English proficiency levels represented in the study, including students with IELTS scores of 6.5, 7.0, 7.5 and 8.0 and those with a first language of English.

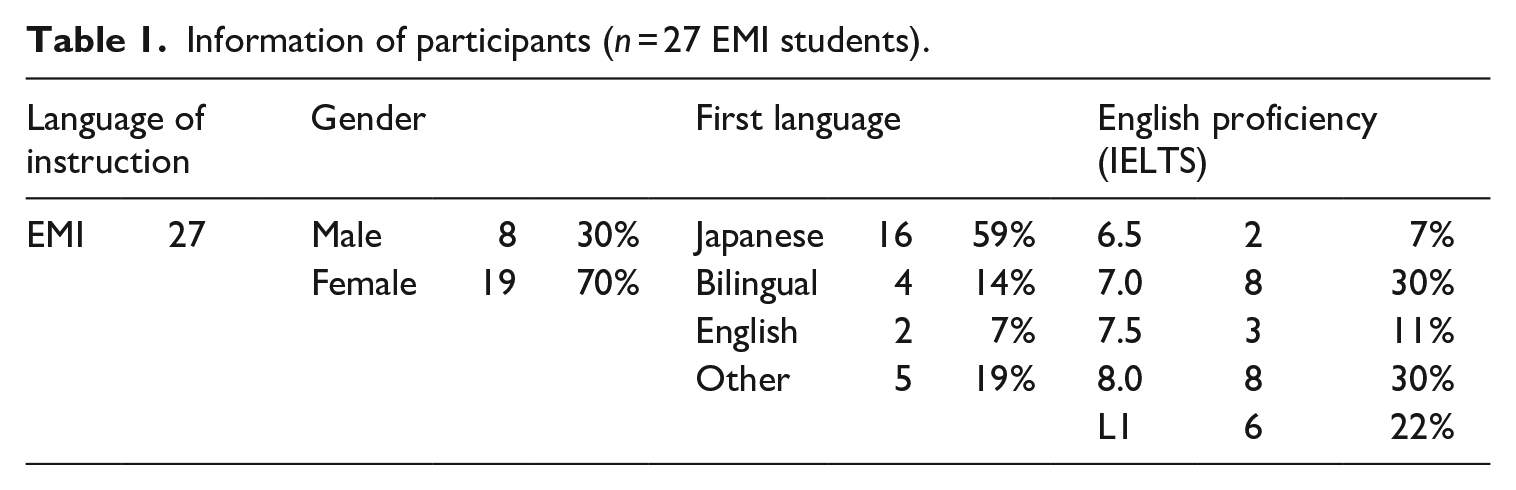

This study was conducted in the ‘Introduction to Chemistry’ module at the School of Natural Sciences. This module offers 70 minutes of lectures twice weekly for 12 weeks, comprising 21 classes. All students enrolled in the module (n = 27) participated in the study. Table 1 summarises students’ gender, L1 and IELTS scores based on the medium of instruction.

Table 1. Information of participants (n = 27 EMI students).

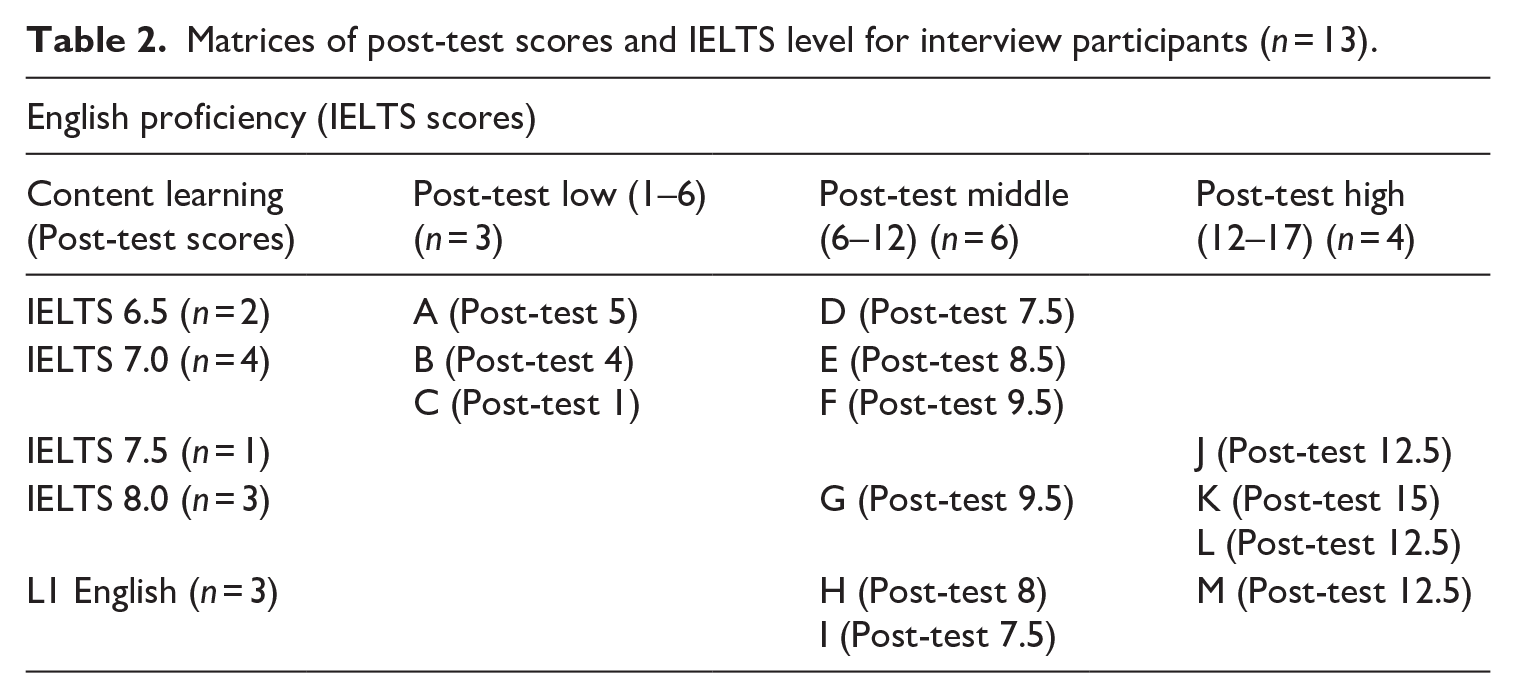

Adopting a maximal variation sampling strategy, we purposively selected volunteer students as individual cases to examine various variables influencing success in EMI. Table 2 shows the selected cases of 13 students representing a range of post-test scores and IELTS levels.

Table 2. Matrices of post-test scores and IELTS level for interview participants (n = 13).

The classification system using Pass (0%–40%), Merit (40%–70%) and Distinction (70%–100%) corresponds to the university’s Assessment Criteria, where ‘pass’ signifies a basic level of achievement, ‘merit’ represents a mid-level performance and ‘distinction’ indicates a high level of achievement.

2 Data collection instruments and procedures

The present research adopted the following research instruments and measures.

a Pre-test–post-test scores on a content knowledge test of Chemistry completed by students at the start and end of the semester

To assess students’ content mastery in Chemistry, their scores on a Chemistry test were used as a measure of success (see Appendix A). To ensure a high level of representation, 13 open-ended questions were developed by the content teacher, designed to represent those included in the final course examination. The pre-test and post-test were administered under the supervision of the content teacher during the first and last lectures of the semester. Each participant was given 25 minutes to complete the pre-test and post-test.

b English language proficiency

Student IELTS band scores were used as a proficiency measure and treated as numerical values on a scale from a minimum score of 1.0 to a maximum score of 9.0 in increments of 0.5.

c Academic literacy scale

The scale consisted of 45 questions first compiled in Stephen Evans’s research into academic literacy in Hong Kong, which was reported in several publications, including Evans and Green (2007) and Evans and Morrison (2011). An adaptation of this scale has been validated by Aizawa et al. (2023) in Japan and Kamaşak et al. (2021) in Turkey. The scale measured students’ perceptions of the degree of difficulty and ease in completing various academic tasks regarding writing, speaking, reading and listening skills. Students rated their responses on a five-point Likert scale, where one indicates ‘very difficult’ and five indicates ‘very easy’. Although these subsequent studies framed this instrument as a measure of challenges, in our study, we return to conceptualizing it as a measure of academic literacy, as originally intended and theorised by its creators.

d Semi-structured interviews with 13 EMI students (see Table 2)

Student interviews were conducted to explore perceived learning experiences while identifying underlying factors affecting their content learning. The average length of interviews was 20.4 minutes (SD 4.4; Min 11; Max 31). See Appendix B.

3 Data analysis

To address research question 1, three separate linear regression analyses and one multiple regression analysis were conducted to explore the extent to which predictor variables (proficiency, academic literacy and pre-test scores) accounted for the variance in the post-test scores. Prior to performing statistical tests, assumptions for correlation, simple and multiple linear regression were assessed through linearity, independence of residuals, homoscedasticity and normality of residuals. Scatterplots confirmed linear relationships between predictor variables and post-test scores. Durbin-Watson statistics (1.853 for proficiency, 2.092 for academic literacy, 2.204 for pre-test score) ensured independent residuals. Normal probability plots verified homoscedasticity and approximately normal residual distribution. No outlier with a z-score greater than ±3.29 standard deviations of the sample mean was detected (Tabachnick & Fidell, 2007). The threshold for sample size in our regression analysis was also inspected, with at least 10 participants per predictor variable to achieve sufficient statistical power and reliable estimates (VanVoorhis & Morgan, 2007), confirming that the data met all regression assumptions. Data were also assessed for normality of distribution. A z-score for skewness and kurtosis was calculated, revealing approximately normal distributions for proficiency, academic literacy and post-test scores. In contrast, pre-test scores revealed positive skewness. As such, Spearman’s rank-order correlation analyses were used to explore the variance in post-test scores based on proficiency and academic literacy.
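Two of the screening steps described above, the Durbin-Watson statistic for independence of residuals and the ±3.29 z-score outlier criterion, can be illustrated with a minimal sketch. The function names and the example values are hypothetical and are not drawn from the study's data or analysis software; the sketch only shows how such checks are commonly computed.

```python
from statistics import mean, stdev


def durbin_watson(residuals):
    """Durbin-Watson statistic: values near 2 suggest uncorrelated residuals."""
    numerator = sum(
        (residuals[i] - residuals[i - 1]) ** 2 for i in range(1, len(residuals))
    )
    denominator = sum(e ** 2 for e in residuals)
    return numerator / denominator


def z_score_outliers(values, threshold=3.29):
    """Return the values whose absolute z-score exceeds the threshold."""
    m = mean(values)
    s = stdev(values)  # sample standard deviation
    return [v for v in values if abs((v - m) / s) > threshold]


# Hypothetical residuals and scores, for illustration only.
example_residuals = [0.8, -1.1, 0.4, -0.2, 1.3, -0.9, 0.1]
example_scores = [12.0, 9.5, 10.0, 13.0, 7.5, 11.0, 12.5]

print(round(durbin_watson(example_residuals), 3))
print(z_score_outliers(example_scores))
```

A Durbin-Watson value close to 2, such as those reported for the three predictors, is conventionally read as indicating no serious autocorrelation among residuals.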

To extend upon the above analyses, 13 semi-structured student interviews were analysed to elicit a nuanced understanding of how English language proficiency may interact with students’ perceptions of academic English literacy in EMI. All interviews were transcribed and analysed using NVivo. Based on an abductive approach, the main themes were developed deductively from EMI literature on academic literacy and success and inductively from raw data (McKinley, 2020). Text passages were coded under the same main themes (text retrieval) to construct sub-themes, all compiled in NVivo. This process involved examining phrases and, to a lesser extent, entire paragraphs, highlighting regularities, patterns and significant meanings within the data. A final list of main themes was then generated, representing an array of key themes contributing to or alleviating students’ difficulties associated with academic literacy in EMI.

IV Results

1 Proficiency, academic literacy, prior content knowledge and content attainment in EMI

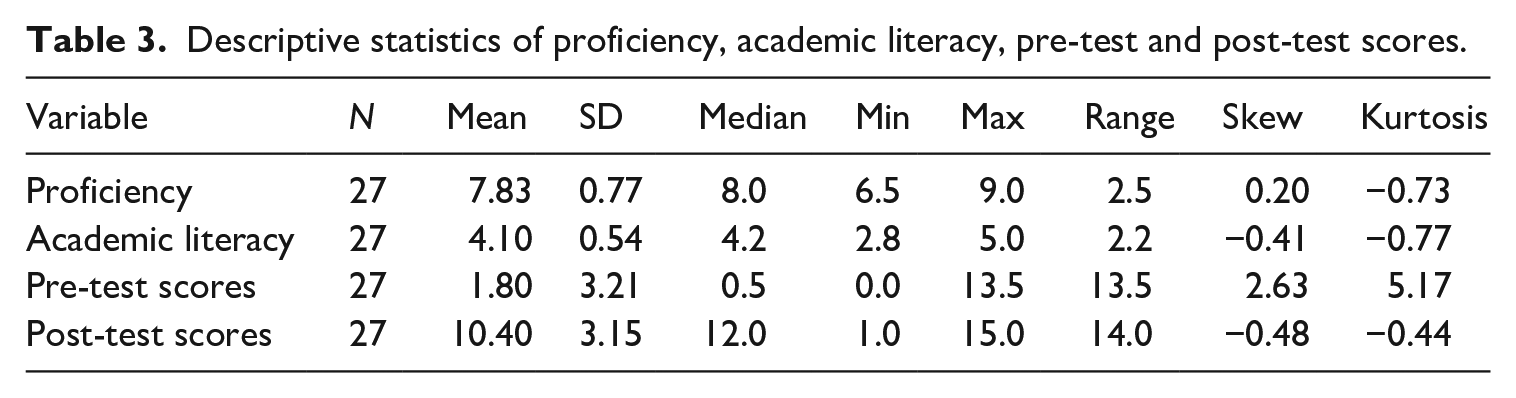

Descriptive statistics (n = 27) are shown in Table 3 for the proficiency, academic literacy, pre-test and post-test scores. The mean post-test score was 10.40 (SD = 3.15), with a median score of 12.0. The skewness was −0.48 (falling within −0.5 to +0.5) and kurtosis was −0.44 (falling within −3 to +3), an acceptable range (Briggs Baffoe-Djan & Smith, 2020) with no outliers. The mean academic literacy score was 4.10 (SD = 0.54), with scores ranging from 2.8 to 5.0 (range = 2.2). Skewness (−0.41) and kurtosis (−0.77) fell within the acceptable range of −1 to +1. IELTS band score (Mean = 7.83, SD = 0.77) had the highest value of 9.0 and the lowest value of 6.5, with a range of 2.5 and a median of 8.0 (skewness = 0.2, kurtosis = −0.73) with no outliers. These variables were neither significantly skewed nor kurtotic. However, the distribution of pre-test scores (Mean = 1.80, SD = 3.21) was positively skewed (skewness = 2.63, kurtosis = 5.17), with a large proportion of students being clustered around the lower end of the distribution. That is, most of the students had little to no prior content knowledge.

Table 3. Descriptive statistics of proficiency, academic literacy, pre-test and post-test scores.

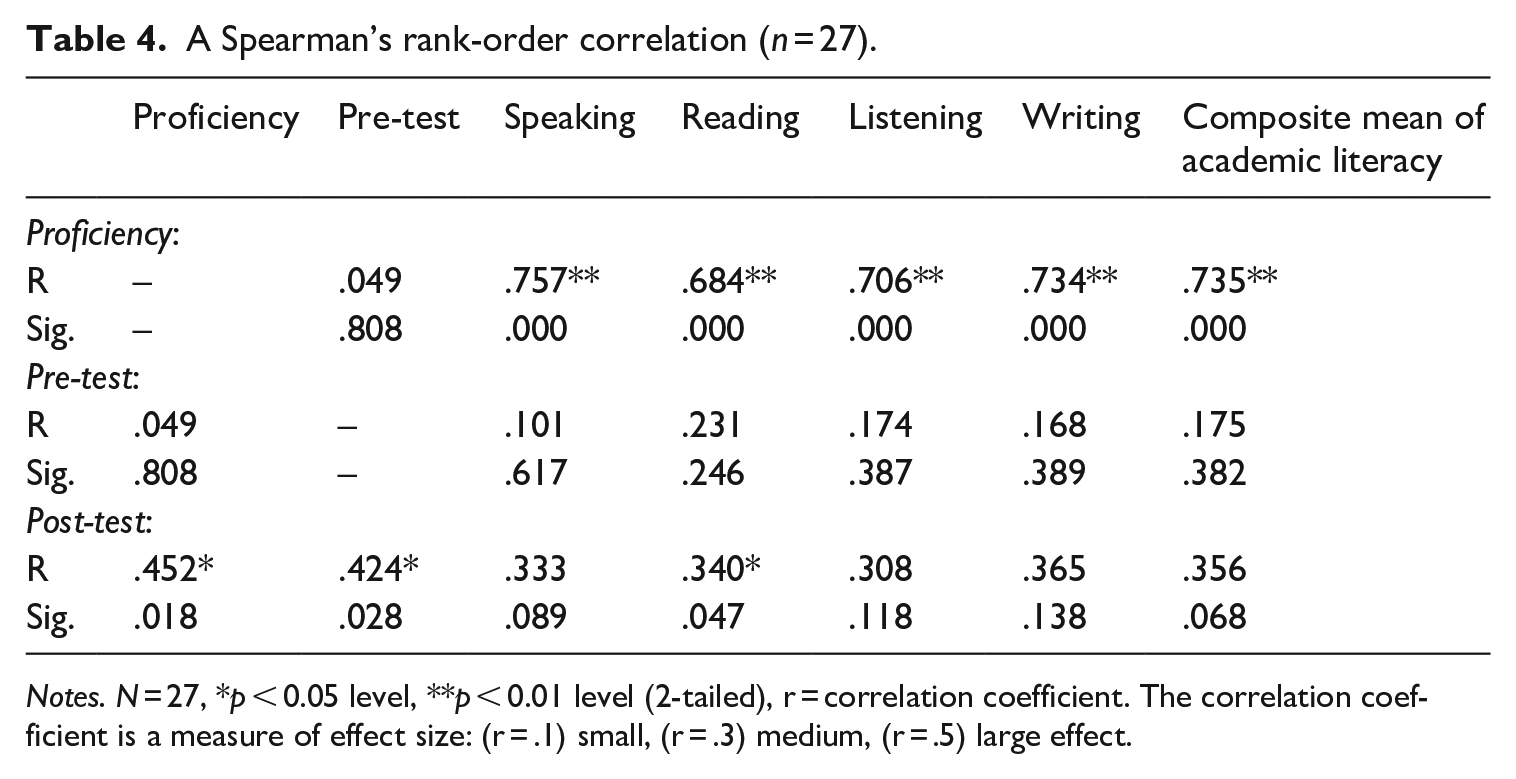

A Spearman’s rank-order correlation (Table 4) revealed a moderate to strong correlation between proficiency and post-test scores (r = .452, p < .05). There were strong correlations between proficiency and an overall composite mean for academic literacy (r = .735, p < .001) as well as each of the skill areas, namely speaking (r = .757, p < .001), reading (r = .684, p < .001), writing (r = .734, p < .001) and listening (r = .706, p < .001). This indicates that students with higher proficiency levels self-reported a more advanced level of academic English literacy across all skill areas and achieved higher post-test scores than their counterparts with lower proficiency. Conversely, there was no correlation between IELTS scores and pre-test scores (r = .049, p = .808). Possessing advanced language knowledge was not necessarily indicative of the level of prior content knowledge. Pre-test scores showed a moderate to strong positive correlation with post-test scores (r = .424, p < .05), but were not correlated with academic literacy in any of the skill areas. This suggests that possessing substantial prior knowledge was facilitative of increased content test performance but was not directly related to academic English literacy. Finally, a weak to moderate correlation was found between post-test scores and academic literacy in reading (r = .340, p < .05). However, post-test scores were not correlated with self-reported academic English literacy in other skill areas, nor with the composite mean score (r = .356, p = .068). This implies that, although students reporting higher academic literacy in reading could potentially achieve better content test performance, overall, no statistically significant correlations were found between academic English literacy and post-test scores.
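For readers less familiar with the statistic, Spearman's rank-order correlation can be computed by converting each variable to ranks (averaging ranks for ties, which are common with IELTS half-bands) and then calculating a Pearson correlation on the ranks. The sketch below is illustrative only: the function names are our own and the scores are invented, not the study's data.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, assigning tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Invented IELTS bands and post-test scores, for illustration only.
ielts = [6.5, 7.0, 7.0, 7.5, 8.0, 8.0, 9.0]
post_test = [5.0, 9.0, 8.5, 10.0, 12.5, 11.0, 13.0]
print(round(spearman(ielts, post_test), 3))
```

Because the statistic depends only on rank order, it is less sensitive than Pearson's r to the strong positive skew observed in the pre-test scores.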

Table 4. Spearman’s rank-order correlations (n = 27).

Notes. N = 27, *p < 0.05 level, **p < 0.01 level (2-tailed), r = correlation coefficient. The correlation coefficient is a measure of effect size: (r = .1) small, (r = .3) medium, (r = .5) large effect.

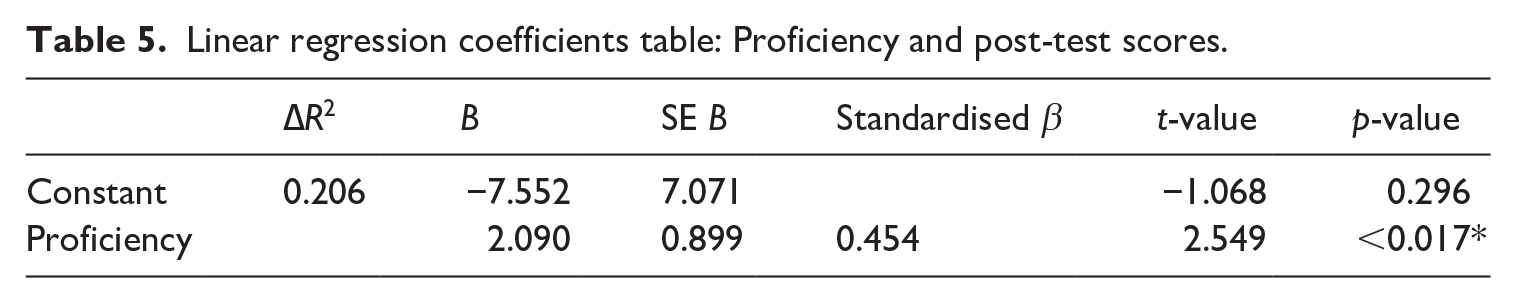

First, linear regression revealed a statistically significant positive linear relationship between IELTS scores and post-test scores (B = 2.090, F(1, 25) = 6.497, p < 0.05), indicating that the magnitude of this relationship was a moderate to strong effect, which was confirmed with a Pearson correlation coefficient of 0.45 (p < 0.05). The slope coefficient for IELTS scores was 2.090, 95% CI [0.440, 4.141], which suggests that post-test score would increase by 2.090 for every 0.5 point increase in IELTS score. The value of R² was 0.206, which means that 20.6% of the variance in post-test score was explained by this model containing proficiency score, with adjusted R² = 17.5%. Table 5 shows that proficiency score achieved a standardised beta coefficient (β) with a value of 0.454. This indicates that with every increase of one standard deviation in IELTS level (SD = 0.77), post-test score rose by 0.454 standard deviations. To summarise, IELTS score statistically significantly predicted post-test score, and the higher the proficiency, the higher the content test score.
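As a consistency check on the figures reported for this model, recall that in a regression with a single predictor the standardised coefficient equals the Pearson correlation, and its square equals the proportion of variance explained. The notation below is ours and is offered only as a worked illustration:

```latex
R^{2} = r^{2} = \beta_{\text{std}}^{2}
\qquad\Longrightarrow\qquad
0.454^{2} \approx 0.206
```

The reported standardised coefficient (0.454) and the reported proportion of explained variance (20.6%) therefore agree with each other.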

Table 5. Linear regression coefficients table: Proficiency and post-test scores.

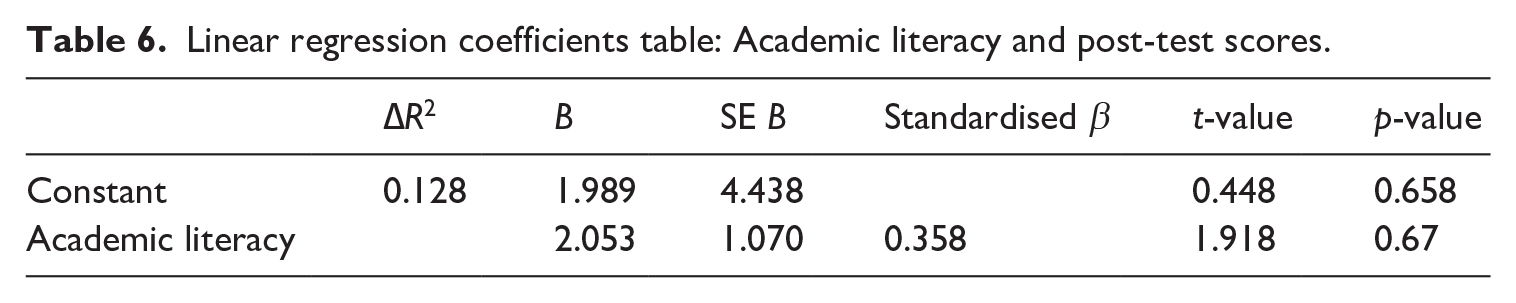

Second, linear regression of the relationship between academic literacy and post-test scores revealed that the R² value was 0.128, indicating that reported academic literacy could account for 12.8% of the variation in post-test score, with adjusted R² = 0.093 (9.3% of the variability), a smaller proportion of variance explained than that of IELTS score. However, when the statistical significance of the overall regression model was assessed, it became clear that academic literacy did not achieve a significant relationship with the post-test score (B = 2.053, F(1, 25) = 3.678, p = 0.067), which was also confirmed with a Pearson correlation coefficient of 0.356 (p > 0.05). Consistently, the slope coefficient was not statistically significant (p > 0.05), so changes in academic literacy scores did not reliably predict changes in post-test scores. Table 6 underpins these non-statistically significant findings as illustrated by the standardised Beta value of 0.358; post-test scores only increased by 0.358 standard deviations for every one standard deviation increase in academic literacy scores (SD = 0.54). In summary, academic literacy was not a statistically significant predictor of the post-test scores.
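The non-significant result for this model can be verified from the reported R² alone. With one predictor and n = 27, the F statistic follows directly from R² (the notation is ours, given here as a worked check rather than as part of the original analysis):

```latex
F(1,\, n-2) = \frac{R^{2}}{1 - R^{2}}\,(n - 2)
            = \frac{0.128}{0.872} \times 25
            \approx 3.67
```

This is close to the reported value of 3.678, with the small difference attributable to rounding of R², and it falls below the critical value of approximately 4.24 for F(1, 25) at the .05 level.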

Table 6. Linear regression coefficients table: Academic literacy and post-test scores.

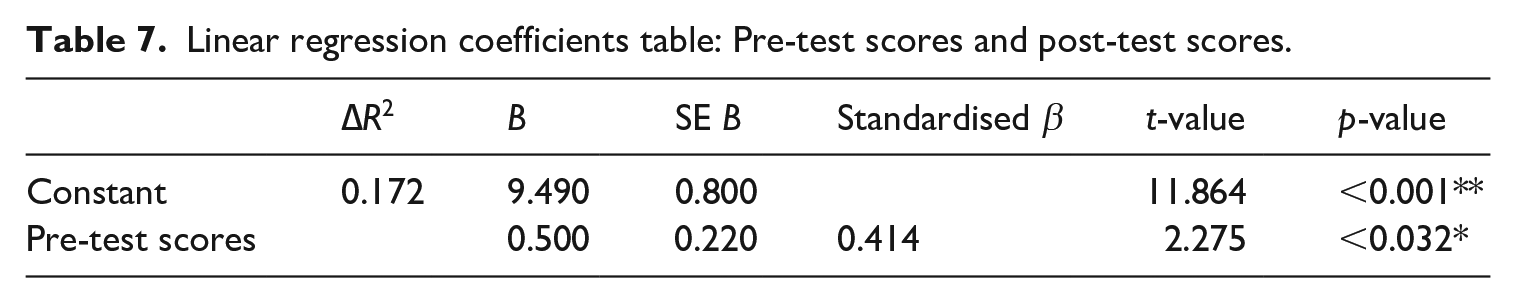

Third, a statistically significant linear relationship was found between the pre-test scores and post-test scores (B = 0.500, F(1, 25) = 5.176, p < 0.05), which was also confirmed with a correlation coefficient (r = 0.42, p < 0.05), as illustrated in Table 7. The slope coefficient for the pre-test score was 0.500, 95% CI [0.047, 0.953], suggesting that the post-test score increased by 0.5 for every point increase in the pre-test score. When exploring the proportion of variation explained by the model, the R² value was 0.172, which indicated that 17.2% of the variation in post-test scores was accounted for by the model containing pre-test scores, with adjusted R² = 13.8%. Table 7 also presents the standardised Beta value (β = 0.414), which reports that for each standard deviation rise in pre-test score (SD = 3.21), post-test scores rose by 0.414 standard deviations. In summary, pre-test scores statistically significantly predicted post-test scores.

Table 7. Linear regression coefficients table: Pre-test scores and post-test scores.

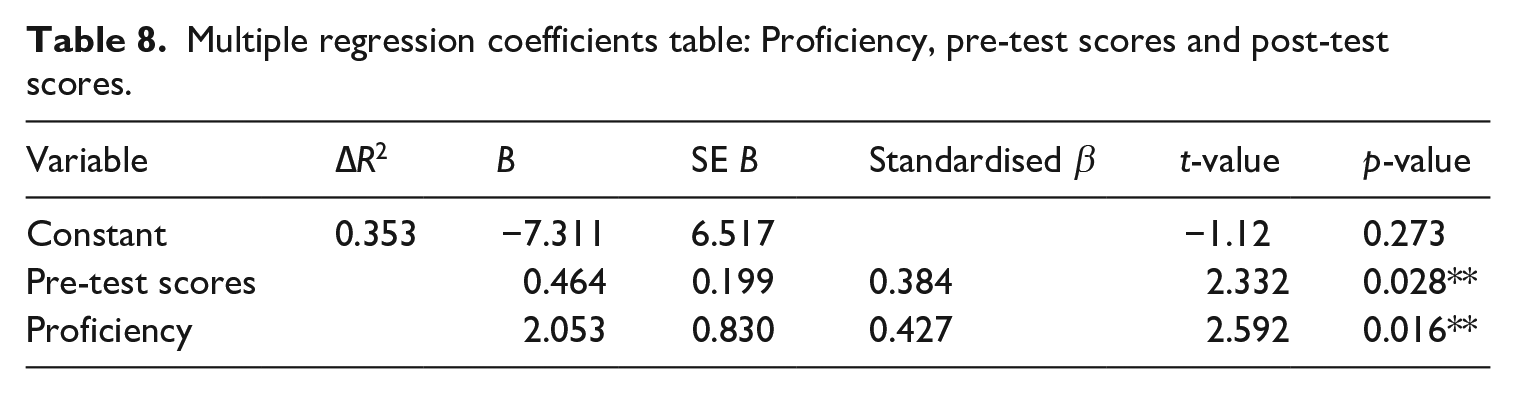

Finally, to explore the extent to which proficiency and pre-test scores explained the variance in the post-test scores, both variables were put into one multiple linear regression model as predictor variables. A significant linear regression equation was found (F(2, 24) = 6.545, p < 0.005), with an R² of 0.353 (see Table 8). These two predictors, therefore, explained 35.3% of the variance in post-test scores, with adjusted R² = 30.0%. The strength of this linear association between the variables was also confirmed with the multiple correlation coefficient (R = 0.59, p < 0.005). This model explained a larger proportion of variance in post-test scores than any of the single-predictor models reported above. Table 8 illustrates that both variables were significant predictors of post-test scores. The slope coefficient for the IELTS score was 2.053, 95% CI [0.440, 3.866], suggesting that the post-test score increased by 2.053 for every point increase in IELTS level when pre-test scores were held constant. Similarly, an increase in pre-test scores of one point was associated with an increase in post-test scores of 0.464, 95% CI [0.053, 0.874], when proficiency was held constant. To conclude, proficiency and pre-test scores were both significant predictors of post-test scores.
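The adjusted R² and the F statistic for the two-predictor model can both be recovered from the reported R², the sample size (n = 27) and the number of predictors (k = 2). The following sketch uses the standard textbook formulas; the function names are our own and the code is not the software used in the study.

```python
def adjusted_r_squared(r2, n, k):
    """Adjusted R-squared for a model with k predictors and n observations."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


def f_statistic(r2, n, k):
    """Overall F statistic, with (k, n - k - 1) degrees of freedom."""
    return (r2 / k) / ((1 - r2) / (n - k - 1))


# Reported values for the multiple regression model: R² = 0.353, n = 27, k = 2.
adj = adjusted_r_squared(0.353, 27, 2)
f_value = f_statistic(0.353, 27, 2)
print(round(adj, 3), round(f_value, 2))
```

The computed values (approximately 0.299 and 6.55) are in line with the reported adjusted R² of 30.0% and F(2, 24) = 6.545, with minor differences arising from rounding of R².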

Multiple regression coefficients table: Proficiency, pre-test scores and post-test scores.
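The adjusted R² and F values reported for the regression models follow from R², the sample size and the number of predictors. The sketch below recomputes them from the reported R² values using the standard formulas; the function names are hypothetical, and n = 27 is taken from the number of students tested. Because the inputs are rounded, the results match the reported figures only approximately.

```python
def adjusted_r2(r2, n, k):
    """Adjusted R-squared for a model with k predictors and n observations."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


def f_statistic(r2, n, k):
    """Overall F statistic with (k, n - k - 1) degrees of freedom."""
    return (r2 / k) / ((1 - r2) / (n - k - 1))


N = 27  # students who sat the pre- and post-course tests

# Reported R-squared and number of predictors for each model
models = {
    "pre-test only": (0.172, 1),
    "proficiency + pre-test": (0.353, 2),
}

results = {
    name: (adjusted_r2(r2, N, k), f_statistic(r2, N, k))
    for name, (r2, k) in models.items()
}

for name, (adj, f) in results.items():
    print(f"{name}: adjusted R2 = {adj:.3f}, F = {f:.2f}")
```

The adjustment penalises the two-predictor model more heavily than the single-predictor one, yet its adjusted R² remains roughly double, which is in line with the claim that the combined model explains more variance.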

2 Perceived content attainment and academic literacy

The qualitative analysis offered detailed insight into how students perceived their academic English literacy in nuanced terms.

Interview data revealed two key findings:

• The extent of reported difficulties in academic English literacy varied according to proficiency level. Students with lower proficiency levels reported greater difficulties than their higher proficiency counterparts, with some difficulties unique to those with lower proficiency.

• Test outcomes were not indicative of the level of reported academic English literacy-related difficulties. Students who achieved successful test scores did not consistently report fewer difficulties in academic English literacy, while those with less successful test scores did not necessarily report greater difficulties.

a Difficulties with academic English literacy varied based on proficiency level

The most significant differences between students with higher and lower proficiency lay in the type of vocabulary they struggled with, their frequency of dictionary use and their reliance on translation. That is, those with lower proficiency tended to report more challenges related to vocabulary. For example, Student A (IELTS 6.5, post-test 5) often looked up high-frequency vocabulary, noting that ‘I always look up words in a dictionary. When learning Chemical Bonding, I did not know words, like “organic”, “bond” and “law” ’. In his view, these would have been common words for most L1 English speakers. In contrast, Student L (IELTS 8.0, post-test 12.5) only had difficulty with technical terms such as ‘oxidation’, ‘unit cell’ and ‘molecular orbital theory’. High-frequency academic vocabulary includes words commonly used across various academic disciplines (e.g. ‘analyse’, ‘concept’, ‘theory’), while technical vocabulary consists of discipline-specific terms crucial for subject-specific content (e.g. ‘oxidation’ in Chemistry). Thus, while lower proficiency learners struggled with high-frequency academic words, students at all proficiency levels found technical terms challenging due to their association with complex academic concepts.

Students across all proficiency levels identified their academic reading speed in English as a significant challenge, affecting how they allocated their independent study time. Higher and lower proficiency learners approached their study schedules differently due to the varying speeds at which they could process English academic texts. For instance, compared to Student M (L1 English), Student A found that he needed to allocate more time for studying in English than in Japanese, indicating the additional time required to comprehend English academic materials.

Student A: I spend most of my study time revising for Chemistry because it’s in English. I’m also taking four other courses. I chose to study them in Japanese, but I still need to prepare a lot. I’m worried I might fail my courses.

Student M: I registered for five courses this semester. I usually spend most of my time reviewing my biology course because I have a lot of assignments and tests. The content is more demanding than the Chemistry course because I haven’t studied Biology before.

Similarly, difficulties in sustaining attention during academic listening in EMI lectures were encountered by participants across all proficiency levels. However, students with lower proficiency reported this challenge more frequently than those with advanced levels of English. Student E (IELTS 7.0, post-test 8.5) identified her proficiency as the primary reason for her difficulty in focusing on lectures. Lower and higher proficiency students also differed in their comprehension of humour and jokes used by their professors. Student E struggled to follow the rapid and spontaneous delivery of lectures, whereas Student K (IELTS 8.0, post-test 15) drew on her previous experience of learning Chemistry through EMI in school to facilitate her comprehension of the lectures.

Student E: I always get tired when listening to lectures in English. My attention starts to wander. I find it exhausting to understand every single word in English.

Student K: I remember many of my high school teachers were from America or England and often told us jokes to make us laugh in class. It would be difficult to understand jokes if I hadn’t attended English-mediated classes in high school.

Although all participants faced common challenges irrespective of proficiency, lower proficiency learners encountered unique challenges. For example, while some advanced users of English identified reading speed as an area of difficulty, students with lower proficiency connected this issue to broader language-related concerns. Student C (IELTS 7.0, post-test 1), for instance, faced challenges with grammatical constructions and had a slow reading speed. She noted, ‘I stop and read the same sentences many times. It takes me a long time to understand English sentences. I think about grammar, like SVO, SVC.’ Student A, meanwhile, could not comprehend exam questions and instructions accurately. He reported misunderstanding the instructions on one of his exams and failing to realise that there was an answer bank at the end of the exam paper.

Student A (EMI): I had to spend a long time reading instructions for each exam question and ran out of time. I also did not notice that I was given an answer bank for one of the questions. I could have saved much time.

b Test outcomes did not necessarily reflect difficulties with academic English literacy

Consistent with the quantitative results, which showed no relationship between test scores and academic literacy, learners with similar test scores did not necessarily face the same difficulties. This indicates that pathways to success may vary depending on other factors. For instance, while Student J (IELTS 7.5, post-test 12.5) and Student M (L1 English, post-test 12.5) achieved the same score on the post-test, they perceived their experiences differently, reflecting how their differences in proficiency led to distinct paths towards the same outcome.

Student J: When I read the textbook, I have to look up not only technical words but also so many other basic English words. I wish I knew more English words.

Student M: I usually understand the textbook without any language problem, like not understanding English words. My problem is more about not understanding technical terminologies in the textbook.

Prior content knowledge also played a crucial role in determining the severity of difficulties. Student I (L1 English, post-test 7.5) and Student D (IELTS 6.5, post-test 7.5) achieved the same test score, yet Student I struggled with slow reading speed due to her unfamiliarity with technical terms, whereas Student D maintained a fast reading speed because she had previously studied Chemistry at school. Although Student I was linguistically better placed for EMI, Student D’s prior experience of learning Chemistry allowed for faster reading.

Student I: I need to learn too many basic Chemistry terms and concepts because I didn’t study Chemistry before university. I constantly need to look up keywords online, and reading the textbook takes a long time.

Student D: When I read the textbook, I have to use online dictionaries a lot to search for unknown English words, but having learnt Chemistry in high school has helped me process information faster.

Levels of difficulty also depended on other factors, such as prior EMI experience. Students with little to no previous exposure to EMI education, such as Student F (IELTS 7.0, post-test 9.5) from a Japanese-medium school, had to relearn chemical elements in English. Conversely, Student G (IELTS 8.0, post-test 9.5) had already studied chemistry in English, giving her a significant advantage. Thus, regardless of the post-test outcomes, the challenges were more prominent among students with little to no previous exposure to EMI education.

Student F: I learnt Chemistry in Japanese in high school, so I have to learn basic words used in lectures and the Chemical elements in the periodic table again in English.

Student G: I still need to learn new technical words introduced in lectures. But there are many words that I already learned in high school.

In conclusion, our qualitative findings show that learners with similar test outcomes faced vastly different difficulties. While higher proficiency learners generally reported fewer challenges than lower proficiency students, other factors, such as previous exposure to EMI education and prior content knowledge, also contributed to their perception of the severity of challenges. The statistically non-significant result for the academic literacy score, along with interview evidence, suggests that students were able to achieve successful content test scores regardless of their perceived level of challenges. In this study, students who reported greater challenges were still able to navigate their EMI studies successfully and achieve higher scores on the content test. Thus, test scores provided one measure of the students’ experience, but they did not necessarily reflect the broader context of the challenges that the learners may have encountered when learning through EMI.

V Discussion and implications

1 The role of English proficiency and learner factors in EMI

Consistent with a growing body of EMI studies (e.g. Hu & Wu, 2020; Martirosyan et al., 2015), the results of our study have identified clear differences in students’ learning experience according to their level of English proficiency, emphasising the importance of proficiency in undertaking content learning effectively. The regression analysis in the current study shows that proficiency accounts for 20.6% of the variance in Chemistry content test scores. Such findings appear to support previous studies; for example, Rose et al. (2020) showed a similar R² value of 0.25 for a Social Science subject (International Business), and Sánchez-Pérez (2021) showed a higher R² value of 0.48 for a STEM subject (Mechanical Engineering). However, other studies have shown a much smaller R² value or even a non-significant finding; for example, Cho and Bridgeman (2012) revealed a smaller R² value of 0.04 in a large-scale study involving students from ten universities (n = 2,594), and Yan and Cheng (2015) found that proficiency was not a significant predictor of undergraduate GPAs (n = 138).

To address these conflicting results, our study examined the relationships between proficiency, content test scores and other learner factors, including prior content knowledge and the perceptions of academic English literacy. The results of our multiple regression analysis show that the model incorporating proficiency and pre-test scores accounted for a larger proportion of variance in the Chemistry content test scores, at 35.3%. This finding is largely consistent with prior EMI prediction studies (e.g. Curle et al., 2020; Lin & Lei, 2021) that also controlled for other learner variables, such as motivation, self-efficacy and previous EMI learning experience, to explain a larger proportion of variance in their test scores. Our interview findings also revealed that the interactions between proficiency and other learner factors, such as prior EMI education experience and previous content knowledge, impinged on academic performance. In other words, to achieve greater ease in EMI studies, students may need to compensate for their low language skills with resources beyond those assessed by standard proficiency tests, such as prior content knowledge, time management skills and previous EMI learning experience. Recognising the constellation of these factors, this study highlights the need for academic language courses to improve academic English and other broader academic skills prior to EMI degree studies. Consequently, this study concurs with earlier research (e.g. Kamaşak et al., 2021; Rakhshandehroo & Ivanova, 2020) that reinforces the importance of academic language support (see the implications below for further discussion on academic language support).

2 Academic literacy and learning outcomes in EMI

The quantitative results of our study did not reveal a correlation between academic literacy and post-test scores. Our interview findings corroborated this result, suggesting that successful students with higher content test scores did not necessarily experience greater ease with academic tasks in EMI studies. Such findings seem to stand in contrast with previous studies (e.g. Kamaşak et al., 2021), which indicate that higher severity of challenges leads to lower levels of success in EMI. These contradictory findings, therefore, suggest that general academic literacy may not be as predictive of success in the hard sciences, where success may depend more on specific content understanding and previous subject knowledge than on general academic English literacy skills. This echoes the findings of An and Macaro (2022), highlighting the critical role of discipline-specific language competence in content learning in Chemistry. This study showed that while test scores served as a proxy for learning outcomes, the reported level of difficulty in academic English literacy was influenced by a combination of factors, including English proficiency, prior content knowledge and previous EMI learning experience. These findings support previous studies that identified key factors that may lead to success in EMI, for example, learning strategies, motivation and peer support (Evans & Morrison, 2011) in Hong Kong and L1 medium resources, interactive teaching and class size (Aizawa, 2023) in Japan. Furthermore, the concept of ‘double-crossing’ in STEM classrooms (Melo-Pfeifer, 2023) provides an additional layer of understanding regarding academic literacy in EMI. This concept refers to the interaction between L1 and L2 content knowledge, which can impact learning outcomes. Our findings suggest that students’ academic literacy challenges may be mediated by their ability to leverage L1 knowledge to support L2 content learning, which points to the pedagogical benefits of incorporating students’ L1 in instructional practices.

This article, thus, revisits the concept of success in EMI, questioning why learners deemed successful might face greater challenges compared to those considered unsuccessful. This may suggest that challenges, which are often perceived as markers of a lack of success, may actually reflect learners’ deeper engagement with academic content and a higher level of academic English literacy. The more advanced or successful a learner, the deeper their engagement with academic content, and potentially, the more varied (and occasionally more arduous) the challenges they might identify. We suggest that this contrasts with a simplistic view where challenges are seen only as obstacles to success, as has been implied in some previous research (e.g. Kamaşak et al., 2021). By situating this study within a conceptualisation of academic literacy, we help to return the discussion to its original roots in academic English literacy in EMI (i.e. Evans & Green, 2007; Evans & Morrison, 2011). Consistent with Hultgren et al. (2023), this study exemplifies a transdisciplinary perspective on EMI, which allowed us to explore how language proficiency, L1 content knowledge and academic literacy in Chemistry intersect to influence student success in an EMI program.

3 Implications for future research and curriculum planning in EMI

This research has several implications for future research and for EMI pedagogy and curriculum planning. First, one limitation of the current study was the number of participants. Although the study included all participants enrolled in the Chemistry course and selectively interviewed around 50% of the students in the class, the small sample size may have limited its ability to detect smaller effect sizes. Future studies could explore the same relationships in contexts with greater student numbers to examine whether the non-significant relationship between academic literacy and success holds with a larger number of participants. Future research with larger sample sizes should thus employ multivariate analyses combined with post hoc tests to investigate these relationships further.

Our findings also suggest the need for additional research to determine whether differences in challenges connect to success via relationships with other variables. In other words, while our findings showed no direct relationship between challenges and actual success, the relationship between these variables may be mediated by other variables such as self-efficacy or self-concept (both of which are theorised to involve an assessment of an individual’s skills; see Thompson, 2020).

Additionally, future studies should examine academic literacy and content learning outcomes across various academic subjects. Our research focused on an introductory Chemistry course, where the test items were primarily made up of single-word questions, multiple-choice questions and problem sets asking students to draw chemical structures and solve chemical equations. These questions were linguistically less demanding than essay-based exams. Recognising the discipline-specific nature of academic language (Alhassan, 2021), future studies should examine academic literacy and academic outcomes in other academic subjects where more diverse sets of assessments, such as essays, presentations and reports, are present in the course curriculum. This would capture the full extent of how the medium of instruction could affect students’ academic outcomes in EMI.

We also found that students, irrespective of their English proficiency, encountered a range of academic challenges when learning Chemistry at university. Prior studies (e.g. Chang, 2023; Galloway et al., 2020) have proposed that preparatory language courses can help learners develop general academic skills necessary for discipline-specific activities at university, including critical thinking, academic writing, communication, presentation and bibliographic and referencing skills. Therefore, even students with higher levels of English proficiency might benefit from participating in such preparatory language courses to enhance their broader academic skills. Finally, this study suggests that preparatory language courses should be offered in the form of ESP or ESAP (English for specific academic purposes) courses, as opposed to EAP courses, to provide students with discipline-specific language support. For instance, a student enrolled in an EMI Chemistry program would benefit more from an ESAP course designed specifically for Chemistry, focusing on the specialised vocabulary, writing conventions and presentation skills pertinent to chemistry research articles, lab reports and academic discussions. Such suggestions concur both with the current finding that general academic literacy skills did not predict content test scores and with previous EMI research on academic skills support (e.g. Kamaşak et al., 2021). Our study found that learners encountered various discipline-specific challenges associated with learning Chemistry (e.g. understanding technical terms). Consequently, students may benefit more from receiving subject-specific language support tailored to their particular academic needs rather than generalised academic English courses.

VI Conclusions

In summary, our findings reveal a non-linear relationship between academic literacy and content knowledge attainment in EMI contexts. Interestingly, students who reported fewer academic difficulties were not necessarily more successful in gaining content knowledge than those facing significant challenges in academic language tasks. This suggests that while academic literacy skills are essential, they do not directly contribute to content knowledge success once a range of other learner factors, such as English language proficiency and prior subject knowledge, is taken into account. It indicates that challenges encountered in academic tasks may not directly impede, but rather reflect, students’ deeper engagement with academic content and a higher level of academic English literacy. This challenges the conventional view that interprets academic difficulties solely as obstacles to success, proposing instead that they mark significant academic involvement and advanced literacy in EMI programs.