Abstract

Digital tools have enhanced efficiency, scalability, and real-time insights in evaluation practices. Yet, these advancements introduce serious ethical risks—especially in South Africa—such as data privacy violations, coercive consent mechanisms, algorithmic bias, and diminished evaluator autonomy. This study aims to critically examine these ethical risks and identify gaps in existing policy and practice frameworks. Using a qualitative research design, it draws on semi-structured interviews with evaluators across government, non-governmental organizations, Voluntary Organizations for Professional Evaluation, academia, and private consultancies. The findings reveal that ethical risks are systemic and worsened by the absence of clear, context-specific digital evaluation guidelines. Although frameworks like the Protection of Personal Information Act and the National Evaluation Policy Framework offer a legal baseline, they fall short in addressing digital complexity. The study proposes a dedicated ethical governance model tailored to digital evaluation, supported by local artificial intelligence standards and enhanced evaluator training. It contributes actionable insights to support ethical, inclusive, and accountable digital evaluation practices in South Africa.

Introduction and background

The expansion of digital technologies has transformed governance, research methodologies, and data systems globally, enhancing efficiency, automation, and scalability across sectors. Governments, international organizations, and private institutions increasingly use digital tools to inform evaluation, decision-making, and policy development (Pisa et al., 2020; UNICEF, 2021; United Nations, 2015). The widespread integration of artificial intelligence (AI), big data, machine learning, and cloud-based infrastructure has enabled real-time monitoring, predictive modeling, and rapid feedback loops (African Union, 2019; Internet Society, 2020). While these innovations offer significant advantages, such as improved reach, speed, and adaptability, they also introduce new ethical complexities not fully addressed by existing regulatory systems (Panai, 2023). The legal landscape remains uneven, with inconsistencies in data protection, digital accountability, and institutional enforcement (Daigle, 2021; Feldstein, 2021). Regulatory instruments such as the European Union’s General Data Protection Regulation (GDPR) and South Africa’s Protection of Personal Information Act (POPIA) provide foundational data governance frameworks, but these are often general in scope and not tailored to the unique ethical demands of evaluation practice (DLA Piper, 2022; Pisa et al., 2020; UNDP, 2017).

In the South African context, digital evaluation tools—ranging from mobile data collection apps to AI-assisted analysis platforms—are increasingly used in both public and donor-funded programs. However, national oversight systems have yet to adequately adapt to these shifts. Regional governance instruments, such as the African Union’s Convention on Cyber Security and ECOWAS’s Supplementary Act on Personal Data Protection, set the groundwork for digital regulation (African Union, 2014; Daigle, 2021; ECOWAS, 2010). Likewise, countries such as Kenya and Tanzania have established research ethics bodies, while South Africa’s National Research Foundation (NRF) oversees ethical compliance in research (African Union, 2019; Internet Society, 2020). Yet these bodies rarely provide evaluator-specific guidance on digital technologies, leaving a critical policy vacuum (Srivastava, 2022). The African Union’s Digital Transformation Strategy (2020–2030) promotes sovereignty and governance of digital systems, but it does not directly address ethical risks in evaluation technologies such as algorithmic bias, data repurposing, or coerced consent (African Union, 2019; Pisa et al., 2020).

International organizations have acknowledged these challenges but largely focus on general AI or data ethics rather than sector-specific applications like evaluation. The OECD, UN, and World Bank have advocated for policy harmonization around algorithmic governance and data ethics (OECD, 2021; Pisa et al., 2020; United Nations, 2015). While influential frameworks like the Belmont Report (1979), the OECD AI Principles (2019), and the EU’s Ethics Guidelines for Trustworthy AI outline broad principles such as transparency, accountability, and fairness, they lack context-specific provisions for evaluation processes (Daigle, 2021; Office of the Secretary, 1979; Pisa et al., 2020). Moreover, frameworks developed by the Internet Society and the African Commission on Human and Peoples’ Rights emphasize digital rights and expression but do not tackle the methodological and power-related ethical risks that emerge when digital tools reshape evaluation processes (African Commission on Human and Peoples’ Rights, 2019; Internet Society, 2020). As such, evaluators are left navigating fragmented and often outdated ethical standards.

This study aims to address these gaps by critically examining the ethical risks posed by digital evaluation tools in South Africa and assessing the adequacy of existing policy frameworks to regulate them. It investigates how issues such as algorithmic bias, weak consent protocols, and evaluator disempowerment emerge in practice, drawing on empirical data from semi-structured interviews across sectors. Unlike traditional paper-based methods, digital tools raise unique risks related to data commodification, automation of analysis, and black-box decision-making, which require context-sensitive ethical frameworks. Although legislation like POPIA and policy guidance like the National Evaluation Policy Framework (NEPF) offer legal reference points, they fall short in regulating the ethical implications of AI-assisted evaluation or cloud-based data ecosystems. This study contributes by offering grounded recommendations to enhance digital ethics capacity, promote methodological autonomy, and develop a coherent, context-driven governance framework. The remainder of the paper reviews existing literature, presents the methodological design, and discusses findings that inform these recommendations (African Union, 2014; MERL Tech & CLEAR Anglophone Africa, 2020; Pisa et al., 2020).

Theoretical framework: Ethics of Technology

This study is underpinned by the Ethics of Technology, a framework that critically examines the moral and societal implications of technological systems by emphasizing their interaction with human values, institutional contexts, and socio-political structures. Rooted in the foundational works of Hans Jonas (1984) and Feenberg (1999), this perspective challenges the assumption that technological progress is inherently neutral or beneficial. Instead, it views technologies as socially constructed artifacts shaped by the ideologies and priorities of their creators and users (Feenberg, 1999; Winner, 1986). This is especially relevant in the South African evaluation context, where digital tools—such as mobile data platforms, AI-driven analytics, and algorithmic decision systems—are increasingly embedded in state and donor-funded evaluation practices. The framework draws attention to ethical concerns like algorithmic bias, data privacy, evaluator disempowerment, and coercive consent mechanisms, which often emerge when efficiency and scalability are prioritized over social justice and inclusion (Bijker et al., 2012; Stahl, 2021; Zuboff, 2019). By applying this framework, the study interrogates how digital evaluation tools reflect and amplify existing inequalities, particularly when evaluators lack autonomy to question tool design or institutional pressures distort ethical judgment.

Central to the Ethics of Technology are four key principles: responsible innovation, human agency, fairness, and participatory governance. Responsible innovation stresses that digital tools must be designed and implemented with ethical foresight, transparency, and accountability (Floridi, 2020; Von Schomberg, 2013). In evaluation, this includes ensuring that systems do not reproduce historical biases or obscure methodological assumptions. Human agency reminds us that evaluators are not passive users of technology—they interpret, contextualize, and challenge how digital systems are used, particularly under political or contractual constraints (Feenberg, 1991; MacKenzie and Wajcman, 1999). Fairness relates to how digital tools may marginalize rural or historically disadvantaged communities, often by relying on biased training datasets or inaccessible digital platforms (Brey, 2012). Participatory governance, finally, emphasizes involving stakeholders—especially evaluation participants—in decisions about how data is collected, analyzed, and reported (Bijker et al., 2012; Floridi, 2013). These principles guide the analysis in this study, which explores how ethical risks manifest through digital evaluation practices in South Africa and what governance reforms are needed to promote equity, legitimacy, and evaluator autonomy. By situating digital tools within this ethical framework, the study proposes that evaluation systems should not merely comply with legal regulations like POPIA but actively promote justice and public interest through inclusive and context-sensitive design.

Methodological framework

This study adopts a pragmatist research approach that emphasizes problem-solving and the generation of contextually relevant, actionable insights. Pragmatism is particularly well suited for examining complex, real-world challenges, such as ethical dilemmas in digitally mediated evaluations, because it integrates both objective and subjective perspectives to understand phenomena in situ (Creswell and Plano Clark, 2017). This paradigm supports methodological flexibility, enabling researchers to select tools and techniques best suited to the research problem (Kaushik and Walsh, 2019). In the South African evaluation context—marked by regulatory complexity and socio-political variability—this approach allows for a holistic analysis of ethical risks, institutional constraints, and practitioner agency. A qualitative design was selected to facilitate an in-depth, context-sensitive understanding of how evaluators experience and navigate ethical tensions associated with digital tools. This aligns with pragmatism’s emphasis on practical relevance and ensures that findings are both theoretically grounded and applicable to evolving professional and policy contexts (Patton, 2015). The integration of stakeholder experiences, institutional analysis, and applied ethics allows the study to connect macro-level governance concerns with micro-level evaluator practices, offering a robust methodological foundation for producing actionable policy and ethical recommendations.

Sampling and recruitment

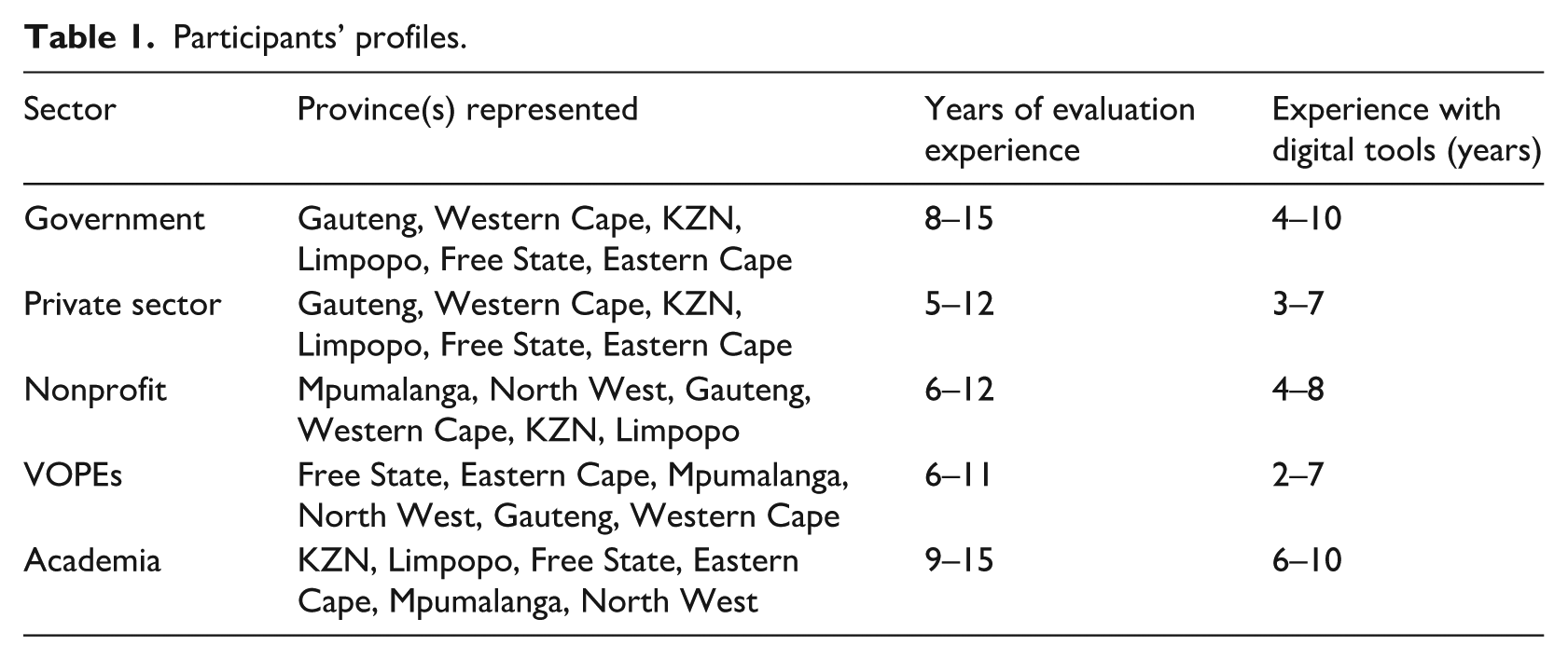

This study used stratified purposive sampling to deliberately select 30 participants (six from each of five sectors: government, private consultancies, NGOs, VOPEs, and academia) based on their expertise, qualifications, and direct involvement in technology-assisted evaluations (Bryman, 2016). Recruitment was conducted through SAMEA networks and academic referrals, with all participants providing informed consent in line with ethical research standards (Braun and Clarke, 2022). This approach ensured broad representation across institutional roles, digital proficiency levels, and ethical awareness, spanning all nine provinces of South Africa; a summary of participant profiles is presented in Table 1.

Table 1. Participants’ profiles.

Data collection

Semi-structured interviews were used as the primary method of data collection, offering both structure and flexibility in exploring ethical concerns related to digital evaluation. This approach allowed the researcher to probe deeply into participants’ insights while adapting questions based on emerging themes and sector-specific experiences (Bryman, 2016). At the start of each interview, participants were provided with working definitions to ensure a shared understanding of key terms. “Digital tools” were defined as technology-enabled platforms used in evaluation, including mobile data collection apps, dashboards, and cloud-based management systems. “AI” referred specifically to algorithmic or machine-learning systems that automate analysis or decision-making in evaluation contexts. “Digital evaluation” was framed as the use of such technologies to assess programs and policies. These definitions were shared to ensure conceptual consistency and to clarify scope during the interviews.

The interview guide was structured around three thematic areas: (1) participants’ perspectives on ethical evaluation frameworks, particularly their alignment with South African and international regulations; (2) challenges in integrating digital tools, including concerns around data privacy, algorithmic bias, and informed consent; and (3) strategies for strengthening ethical governance in technology-assisted evaluations. Each interview lasted approximately 45–60 minutes and was conducted via video conferencing platforms, enabling participation from all nine provinces without geographic barriers (Creswell and Plano Clark, 2017; Guest et al., 2017). All interviews were audio-recorded with prior consent and transcribed verbatim. Field notes were taken during and after each session to capture contextual observations and non-verbal cues, enriching the analytical depth of the dataset (Braun and Clarke, 2022). The semi-structured format ensured consistency across interviews while leaving room for unanticipated insights based on participants’ diverse institutional backgrounds. This methodological approach contributed to the depth and richness of the data, aligning with the study’s objective to generate context-sensitive, actionable findings.

Data analysis

The study applied reflexive thematic analysis following Braun and Clarke’s (2006, 2022) six-phase framework, which offered flexibility in examining the ethical risks associated with digital evaluation. This method allowed both inductive (data-driven) and deductive (theory-informed) coding, providing a nuanced understanding of how participants experienced digital tools across institutional sectors (Guest et al., 2017). Guided by the Ethics of Technology framework (Feenberg, 1999; Stahl, 2021), the analysis began with an in-depth review of transcripts and field notes, with key terms like “digital tools” and “AI” used consistently for conceptual alignment. NVivo software supported the coding process, while reliability was strengthened through independent coding by a second researcher and consensus reconciliation (Guest et al., 2017). Trustworthiness was further ensured using Lincoln and Guba’s (1985) criteria of credibility, transferability, dependability, and confirmability, alongside reflexive journaling and an audit trail (Flick, 2018).

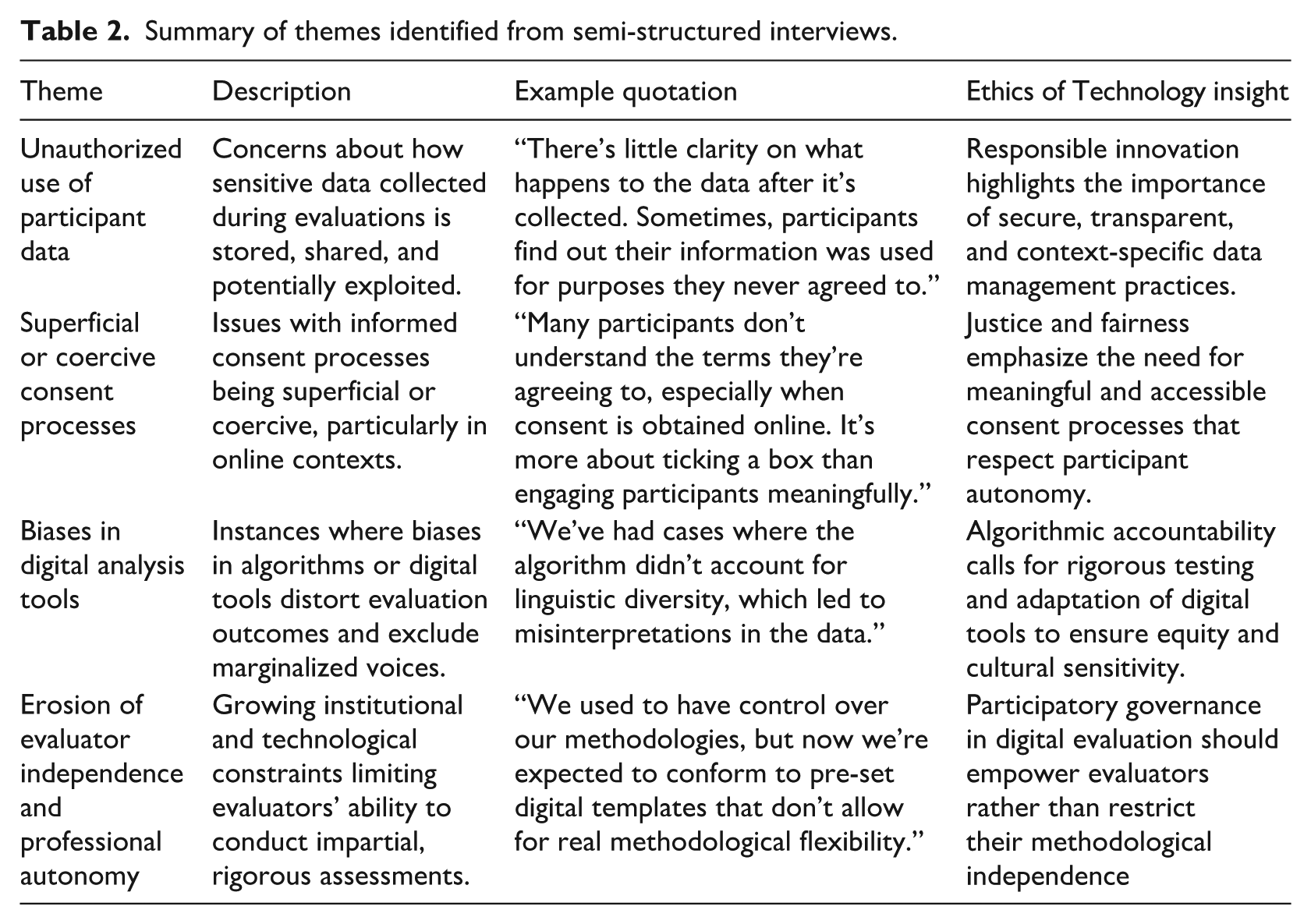

The analysis produced four major ethical themes reflecting systemic risks in digital evaluation: unauthorized use of participant data, superficial or coercive consent processes, biases in digital analysis tools, and erosion of evaluator independence. These themes highlighted governance gaps, regulatory weaknesses, and the need for stronger ethical safeguards. A summary of these findings, supported by participant quotations and analytical insights, is presented in Table 2, which underscores how digital evaluation practices often privilege efficiency and technological convenience at the expense of ethical principles such as fairness, accountability, and professional autonomy (Creswell and Plano Clark, 2017; Patton, 2015).

Table 2. Summary of themes identified from semi-structured interviews.

Findings and discussions

Biases in digital analysis tools

This study revealed substantial concerns about the ethical and structural consequences of digital bias in evaluation, particularly in how digital tools marginalize rural and underrepresented communities. Evaluators across sectors—including government, NGOs, academia, and the private sector—consistently reported that algorithmic evaluation tools do not operate neutrally but instead encode and perpetuate pre-existing inequalities. One government evaluator reflected on this tension: “We conducted an impact assessment using a digital data analytics platform, and it consistently overrepresented urban participants while underrepresenting rural voices. The algorithm was designed for efficiency, not equity” (PGOV4). Structural conditions—such as poor Internet infrastructure, limited digital literacy, and reduced access to smartphones and cloud-based platforms—compound this bias, often excluding rural respondents from being “legible” to digital systems. As highlighted by Budziszewska and Potluka et al. (2025) and Atari et al. (2023), such exclusion is not incidental but systemic: algorithms privilege users with frequent, clean, and digitally traceable data, effectively sidelining communities without these affordances. This reinforces the critique from Feenberg (1999) and Stahl (2021) that digital systems reflect the priorities of their creators and institutional sponsors rather than the diversity of end-users. The result is an epistemic skew: evaluations that appear rigorous on the surface but exclude the very populations most in need of equitable policy attention.

Another major theme was the inability of digital tools—especially transcription software and natural language processing (NLP) algorithms—to adequately process multilingual, culturally nuanced qualitative data. Many evaluators noted that these tools struggle with South African languages and accents, often mistranscribing or deleting sections of interviews with rural or indigenous participants. One nonprofit evaluator shared, “We used an AI-based transcription tool to analyze interview data, but it consistently failed to recognize local languages and accents. Entire sections were either missing or incorrectly interpreted” (PNFP5). This issue was echoed by academic participants, such as one who said, “We had to manually recheck every transcript because the AI tool failed to pick up isiXhosa and Sepedi responses properly. If we hadn’t intervened, key insights would have been lost” (PACD3). Such exclusions are not only technical failures but violations of ethical principles of inclusivity and representation. According to Bender et al. (2021) and Blodgett et al. (2020), most NLP tools are trained on English-dominant, Western corpora and are ill-equipped for linguistically rich environments like South Africa. In an evaluation setting, these errors distort the dataset, undermining both the credibility of findings and the ethical duty to represent all voices equitably. Birhane (2021) warns that when evaluation software is applied uncritically, it can erase the very people evaluations aim to empower—an outcome that the Ethics of Technology framework sees as a failure of both design and governance.

Digital evaluation tools were also found to oversimplify complex human expressions through flawed analytic assumptions. Evaluators criticized sentiment analysis tools that misclassified participant feedback, particularly critiques or frustrations, as negative sentiment rather than recognizing them as signs of meaningful engagement. As one private-sector evaluator reported, “We used a commercially available text analysis tool for a health program evaluation, and it flagged critical participant feedback as ‘negative sentiment’ instead of recognizing it as constructive criticism” (PPVT2). In contexts where evaluation feedback is meant to shape future programming, these misinterpretations carry real risks: silencing dissatisfaction, flattening nuance, and presenting a falsely positive picture of program success. As Mohammad (2021) argues, AI-based sentiment analysis lacks contextual awareness and often fails to distinguish between critique as resistance and critique as care. These analytic gaps, when institutionalized into funder reports or dashboards, generate evaluation results that are not only methodologically flawed but ethically compromised. Atari et al. (2023) highlight that such tools risk amplifying the voices of already-privileged users while filtering out dissenting or culturally unfamiliar perspectives. The Ethics of Technology framework calls for accountability and responsiveness in tool design—but evaluators repeatedly noted that their attempts to intervene were blocked by technical inflexibility or institutional pressure to maintain status quo workflows.

Finally, evaluators expressed frustration over the growing power of digital tools to dictate methodological choices, especially in donor-funded and government-sponsored evaluations. Many described environments where AI-based tools were adopted for their speed and “innovation appeal,” even when those tools were poorly suited to local contexts. One nonprofit evaluator recounted, “We were expected to integrate AI-driven pattern recognition into our impact assessments because it ‘sounded innovative,’ but in practice, it generated misleading conclusions. The algorithm couldn’t account for cultural nuances or historical inequalities, but the client treated it as infallible” (PNFP3). Similarly, evaluators struggled to contest embedded biases once digital systems became entrenched. A government evaluator explained, “We discovered that the tool systematically undervalued informal learning interventions, but we were told the model couldn’t be changed” (PGOV6). These accounts echo Noble (2018) and O’Neil (2016), who caution that algorithms are often treated as objective arbiters, when in fact they reflect particular histories, datasets, and institutional logics. A related concern was the power asymmetry between evaluators and digital vendors or funders. One academic evaluator lamented, “We raised concerns about the statistical model being used, but we were told that since the model had been ‘validated’ internationally, it was not open for debate” (PACD4). This illustrates what Feenberg (1999) terms technological hegemony, in which technology becomes a means of controlling methodological discourse rather than a tool of democratic knowledge production. The study thus highlights an urgent need for participatory governance, localized tool design, and stronger ethical oversight mechanisms that center equity, transparency, and accountability in digital evaluation.

Erosion of evaluator independence and professional autonomy in digital evaluation

The findings of this study indicate that one of the most significant yet often overlooked ethical challenges in digital evaluation is the erosion of evaluator independence and professional autonomy. Evaluators across government, nonprofit, academic, and private sectors reported that while digital tools offer efficiency and scalability, they also introduce subtle but pervasive forms of external control. These tools have increasingly enabled funders, donors, and institutional actors to shape evaluation design, analysis, and reporting—frequently at odds with the evaluators’ methodological expertise. One nonprofit evaluator observed: “We used to have the final say on methodological decisions, but now we are often expected to align with pre-set digital systems that dictate how data should be collected, analyzed, and reported. There’s little room for professional judgment anymore” (PNFP6). This aligns with concerns raised by Patton (2015) and Bamberger et al. (2016) about the evaluator’s shifting role from an independent knowledge producer to a compliant technician. Within the Ethics of Technology framework, this reflects how technological systems can become instruments of managerial oversight, reducing evaluator discretion under the guise of procedural standardization (Feenberg, 1999; Stahl, 2021).

Evaluators frequently described being required to use digital data collection systems and tools that were externally designed and imposed by funding bodies or international agencies. While these systems were often presented as ensuring “consistency” and comparability, they rarely allowed for methodological flexibility or adaptation to local contexts. One evaluator from a government agency recounted: “We were required to use a pre-designed mobile data collection app, but it didn’t allow for open-ended responses, making it impossible to capture nuanced feedback from participants” (PGOV3). Such rigidity mirrors critiques from the Ethics of Technology framework, which highlight how technological artifacts are embedded with social and political assumptions that frequently reflect the priorities of funders rather than the communities being evaluated (Feenberg, 1999; Winner, 1986). As digital infrastructures become the default, methodological pluralism, a core ethical principle in evaluation, is undermined by systematized procedures that prioritize speed and administrative oversight. These findings echo broader critiques of “technocratic evaluation,” where efficiency and uniformity are privileged over contextually grounded, ethically reflective inquiry (Morozov, 2013; Zuboff, 2019).

Beyond methodological constraints, evaluators described the growing role of digital tools in shaping how results are interpreted and reported—often through algorithmic outputs or data dashboards that favor quantitative summaries over qualitative insight. Several reported that AI-driven pattern recognition or automated reporting tools were treated as inherently objective, making it difficult to challenge outputs that did not align with field observations. One evaluator in a private firm noted: “The software identified ‘emerging trends’ in the data that didn’t actually align with what we observed in the field. But because it was AI-generated, the funders were reluctant to question it. They trusted the algorithm more than the evaluators” (PPVT4). This reflects concerns raised by Noble (2018) and O’Neil (2016), who caution that AI-based tools often obscure embedded biases by presenting their outputs as neutral. For evaluators, this creates a dynamic where interpretive authority is ceded to opaque systems, marginalizing human judgment and context-sensitive reasoning. Within the Ethics of Technology lens, such over-reliance on algorithmic outputs poses ethical risks by displacing professional expertise and reducing accountability for how findings are constructed and disseminated (Stahl, 2021).

Another key concern raised was the rise of real-time digital monitoring and cloud-based evaluation management systems, which evaluators described as a form of institutional surveillance. These platforms allow funders to track evaluation activities remotely, sometimes at a granular level, thus discouraging critical interpretation or divergence from expected outcomes. One evaluator noted, “We now have to submit all our raw data into an online system where funders can track progress in real-time. This might seem transparent, but in practice, it means our preliminary analyses are constantly scrutinized, and we feel pressured to align with funder expectations” (PNFP2). In many cases, evaluators also reported having to use digital reporting templates that simplified or restructured findings to fit predefined frameworks. An academic evaluator recalled: “We raised concerns about the digital template oversimplifying our findings, but were told it couldn’t be changed because it was standardized across all projects” (PACD4). These examples illustrate how digital tools can institutionalize epistemic control, narrowing the range of what counts as legitimate knowledge. The result is a system where professional autonomy is curtailed not just through direct intervention but through the infrastructures that govern what evaluators can see, say, and report. The Ethics of Technology framework calls for participatory governance in such systems, emphasizing that evaluators must have a voice in how digital tools are designed, implemented, and evaluated—not just used (Feenberg, 1999; Winner, 1986).

Superficial or coercive consent processes

Evaluation practitioners across all sectors described the growing superficiality of consent processes in digital evaluation environments. Participants repeatedly emphasized that digital platforms—whether basic survey tools or more complex AI-driven interfaces—often reduced informed consent to a perfunctory click or digital checkbox. What should be an interactive, meaningful ethical practice has, in many cases, become a passive, one-sided formality. An evaluator from a nonprofit organization shared, “When we moved to an online data collection system, we started getting consent through a simple ‘agree’ button. But let’s be honest; no one reads the full document. It’s a standard template, written in legal jargon, and participants feel like they have no choice but to accept” (PNFP3). These insights echo broader concerns in digital ethics literature, where scholars argue that consent in digital environments is often symbolic rather than substantive, undermining principles of autonomy and transparency (Floridi, 2013; Zuboff, 2019). The Ethics of Technology framework (Feenberg, 1999) further clarifies how the infrastructure of digital systems—interfaces, defaults, and automation—shapes the nature of human interaction, privileging efficiency over relational ethics.

This reduction of consent into a mere procedural form is especially evident in evaluations conducted through donor-driven, large-scale digital tools. Evaluators noted that in such settings, technological efficiency is prioritized over participant engagement, and the consent process is often automated to ensure scalability. This creates problems in low-literacy, low-connectivity contexts, particularly rural areas where digital fluency is limited. One evaluator working in a private consultancy observed, “We often collect data from participants in rural areas using mobile survey apps. There is often no direct human interaction, just an automated consent form on a screen. We assume they understand, but realistically, some participants click through without knowing what they’re agreeing to” (PPVT4). These findings reflect critiques by Feenberg (1999) and Winner (1986) that technologies are never neutral—they embed assumptions, shape user behavior, and often reinforce institutional priorities over user needs. In this case, digital tools reduce ethical engagement to rapid transactions, sidelining the interpersonal interactions that typically allow for clarification, negotiation, and trust-building. While tools like mobile apps and online forms are presented as neutral enablers, they in fact restructure power relations, often to the detriment of participants.

The problem is further complicated when consent processes, though technically present, function in coercive or misleading ways. Several evaluators shared examples where participation in a digital evaluation was framed as voluntary but was implicitly linked to program eligibility or service delivery. A government sector evaluator explained, “We were evaluating a social welfare program, and participants were required to complete digital surveys. They were told that participation was voluntary, but many believed that it might affect their eligibility for benefits if they refused. Even though we assured them otherwise, the power dynamics made true voluntariness impossible” (PGOV2). Such findings underscore the distinction between formal consent and ethical consent. Even when participants technically agree, that agreement may be shaped by fear, pressure, or misunderstanding—conditions that violate the principle of free and informed participation. These situations are particularly dangerous in digital contexts where interactions are mediated through impersonal systems, making it difficult for evaluators to detect concerns, explain terms, or correct misconceptions in real time. As the Ethics of Technology framework asserts, the structure of digital systems can reinforce existing social inequalities if not designed with critical attention to context and power (Feenberg, 1999; Stahl, 2021).

Finally, evaluators voiced serious concerns about the default settings embedded in many digital consent systems, which often favor data-sharing and require manual intervention to opt out. These defaults can result in participants unknowingly agreeing to extensive data use, repurposing, or third-party sharing. One evaluator from the private sector reported, “We discovered that the survey platform had a default setting that allowed them to keep a copy of all responses and use them for market research. It was buried in the terms and conditions, and unless we manually disabled it, every evaluation we conducted resulted in participant data being stored outside our control” (PPVT6). Another evaluator noted the lack of industry-wide guidance, stating, “We know that online consent is flawed, but there’s no industry standard for fixing it. Everyone just follows what the software allows, rather than designing consent processes that truly respect participants” (PNFP5). These findings resonate with critiques of digital surveillance and algorithmic manipulation (Winner, 1986; Zuboff, 2019), and they highlight a fundamental challenge: evaluators are increasingly required to use platforms whose ethical assumptions are embedded in technical architecture. Without updated standards from ethics review boards or VOPEs, evaluators remain trapped between institutional mandates and ethical ambiguity. As digital evaluations become more widespread, these tensions will only intensify, making it essential that consent is redefined not as a passive agreement, but as a deliberate, informed, and human-centered process adapted for the digital age.

Unauthorized use of participant data

The integration of digital tools—including mobile survey apps, cloud-based databases, analytics platforms, and AI-assisted dashboards—into evaluation practices has undoubtedly improved operational efficiency and data accessibility. However, as this study reveals, such advancements have also introduced significant ethical risks, particularly surrounding the unauthorized use of participant data. Many evaluators reported that once data is entered into digital systems, control over its use often shifts away from those who collected it, leaving them—and participants—uninformed about how that data may later be used, stored, or shared. One evaluator from the private sector explained, “We gather data on behalf of clients, and once it’s submitted, we have no control over how it’s used. I’ve had instances where I found our evaluation data being referenced in reports that had nothing to do with the original purpose. Participants have no idea this is happening, and there is no system in place to track it.” This breakdown in transparency and control illustrates how digital evaluation—defined in this study as the use of digital platforms, infrastructures, and analytical technologies in the design, implementation, and reporting of evaluation—can facilitate data misuse when ethical oversight is lacking. The Ethics of Technology framework (Feenberg, 1999; Jonas, 1984) highlights that technologies are not neutral; they embody the intentions, incentives, and asymmetries of those who build and deploy them. In this case, the architecture of digital systems often privileges institutional efficiency over ethical responsibility, sidelining the rights of participants in the process.

Several evaluators expressed concern that data collected under the promise of confidentiality and consent was later repurposed without the knowledge or approval of the people who had provided it. This was especially common in donor-funded or multi-stakeholder evaluations where data passed through multiple hands and digital repositories. A nonprofit evaluator reported, “Our organization switched to a cloud-based data collection system, and we were told it was secure, but we later realized that the software had clauses allowing for data to be shared for ‘research purposes’ without participant consent. It was buried in the fine print, and we had already used the system for multiple evaluations by the time we found out.” These experiences underscore the ethical fragility of AI-driven and algorithm-supported evaluations, where the automation and speed of data processing often outpace ethical review procedures. Such risks align with existing scholarship pointing to “consent collapse” in digital environments, where users, particularly vulnerable populations, are unable to meaningfully understand or negotiate the terms of digital participation (Pisa et al., 2020; Zuboff, 2019). As several evaluators noted, the shift toward digital platforms often reduces informed consent to a procedural checkbox rather than a substantive dialogue with participants. A government evaluator observed, “It’s very transactional: tick the consent box, collect the data, upload it. But do participants really understand what’s going to happen to their information? Probably not.” These practices risk undermining ethical evaluation altogether, substituting speed and scale for care, dignity, and participatory engagement.

The lack of enforceable guidelines was another major issue. While POPIA offers a legal framework for safeguarding personal data in South Africa, respondents pointed out its inconsistent application across sectors and the absence of mechanisms specific to the evaluation profession. Evaluators working in government reported having clear data protection protocols when conducting internal assessments, but noted that these standards often did not carry over when evaluations were outsourced to consultancies or NGOs. One evaluator explained, “When we conduct evaluations internally, we have specific data protection protocols to follow, but once evaluations are outsourced, we lose oversight. We don’t know if private consultancies follow the same standards, and there’s no mechanism for enforcement.” This regulatory gap creates a fragmented ethical landscape, where digital evaluations are subject to varying levels of scrutiny depending on institutional context. Scholars have argued that without embedding ethical governance within daily practice (through policies, institutional accountability, and evaluator capacity building), even robust legislation like POPIA cannot guarantee ethical outcomes (Daigle, 2021; Pisa et al., 2020; Stahl, 2021). The Ethics of Technology approach reinforces this concern, asserting that laws alone cannot address the ethical implications of technology unless supported by systems that reflect justice, accountability, and participatory values (Feenberg, 1999; Von Schomberg, 2013).

Perhaps most troubling were reports of data commodification and the increasing treatment of evaluation data as proprietary capital rather than a community resource. This was most visible in the private sector, where consultancies often retained ownership over data, even restricting access to clients or communities unless additional fees were paid. One evaluator recalled, “We completed an evaluation for a client, but they were later told that if they wanted access to the raw data, they needed to pay extra. The firm argued that it belonged to them because they had processed and digitized the data. It had nothing to do with ethical data governance; it was purely a business decision.” Others described data being reused in advocacy reports, media campaigns, or strategic proposals without renewed consent from the participants who had provided it. An academic evaluator shared, “I’ve seen cases where evaluation findings were repackaged for advocacy reports without informing the communities that contributed the data. The justification is always that it’s for a good cause, but ethically, we should not make these decisions on participants’ behalf.” These findings speak to the broader issue of data extractivism (the process of harvesting data from populations without reciprocal accountability or benefit) and echo critiques of the corporatization of digital infrastructure, where a few powerful actors control access to insights derived from many (Feenberg, 1999; Stahl, 2021; Zuboff, 2019). The digitalization of evaluation, without proper checks and ethical safeguards, risks reinforcing the very power asymmetries evaluations are often meant to challenge. Evaluators must therefore play an active role not just as data collectors, but as ethical stewards committed to protecting participant rights in an increasingly digitized and commodified landscape (Havrda and Klocek, 2023).

Sectoral differences in ethical concerns and experiences

While core ethical concerns—such as algorithmic bias, consent issues, and evaluator disempowerment—were shared across all respondent groups, notable sectoral differences emerged in how these challenges were experienced and navigated. Government-sector evaluators often cited rigid procurement and compliance systems as reinforcing top-down decisions about which digital tools to adopt, limiting their ability to contest problematic analytics. In contrast, nonprofit-sector respondents were more concerned with the donor-driven imposition of AI-based tools that did not align with local community contexts, especially in multilingual settings. Private-sector evaluators, typically operating under contractual pressure for quick delivery, reported facing less ethical scrutiny from clients and greater institutional pressure to present AI outputs as authoritative, even when flawed. Meanwhile, academic evaluators highlighted the challenge of aligning ethical research standards with institutional requirements when participating in policy evaluations reliant on digital platforms. These sectoral contrasts reveal that while ethical risks in digital evaluation are systemic, they are also shaped by institutional mandates, funding relationships, and organizational cultures—factors that influence both the degree of autonomy evaluators hold and the capacity they have to resist unethical digital practices.

Strategies for addressing ethical challenges in digital evaluation

The ethical risks posed by digital tools in South African evaluations are not theoretical: respondents described direct threats to data integrity, inclusivity, consent, and professional autonomy. Yet these risks are not immutable. This study highlights several grounded, context-specific strategies to promote responsible, ethical digital evaluation practices within South Africa’s evolving M&E ecosystem. Central to these proposals is a call for embedded ethical governance, defined not as compliance after the fact, but as a proactive, participatory approach to shaping how digital infrastructures are designed and used. One critical strategy is the development of evaluation-specific data governance frameworks that not only comply with South Africa’s POPIA but go further by enshrining the rights of participants and evaluators. Respondents stressed the need for data localization policies, especially in donor-funded projects using foreign platforms. One proposal that emerged is the formation of a National Data Ethics Council for Evaluation, hosted under the Department of Planning, Monitoring and Evaluation (DPME) and supported by SAMEA, to regulate the ownership, storage, and ethical use of evaluation data. This body would provide enforceable standards across sectors, ensuring that data remains under national jurisdiction and is used in the public interest, not for private gain. This strategy directly aligns with Feenberg’s (1999) call for participatory governance and Stahl’s (2021) emphasis on embedding ethics at the design stage of technology deployment.

Equity and inclusivity in digital evaluation cannot be achieved without addressing algorithmic bias, particularly the exclusion of rural and linguistically diverse communities. Evaluators recounted cases where NLP tools systematically failed to capture isiZulu, Sepedi, or isiXhosa responses, effectively silencing key populations. To address this, the study proposes the co-development of locally trained AI models with South African evaluators, linguists, and computer scientists. This approach shifts the locus of AI design from foreign vendors to local stakeholders, reducing epistemic dependence and increasing cultural specificity. It also aligns with the National Digital and Future Skills Strategy (DPME, 2020), which encourages domestic capacity for ethical AI. While resource-intensive, this strategy need not require building tools from scratch; instead, it involves fine-tuning existing open-source models and pipelines (e.g. BERT, spaCy) on South African linguistic datasets. Potluka et al. (2025) argue that failure to invest in inclusive AI perpetuates structural bias and undermines evaluation validity. Locally informed tools would not only improve representation but also give evaluators greater interpretive control over findings, countering the “black box” effect and fulfilling the Ethics of Technology principle of responsible innovation.

A third strategy involves protecting evaluator autonomy in the face of rising institutional and algorithmic control. Respondents described how pre-configured dashboards and rigid digital templates left little room for methodological flexibility, often forcing evaluators to prioritize funder interests over participant realities. To counter this, the study proposes an Ethical Evaluation Charter, developed collaboratively by DPME, SAMEA, and evaluation practitioners, which would establish methodological rights for evaluators working in state and donor-funded evaluations. These rights would include the ability to override digital tools that compromise ethical standards, to propose alternative methodologies, and to be consulted in tool selection. The erosion of evaluator agency has profound implications for evaluation’s role in governance, and this Charter is a direct response to that concern. Importantly, this proposal also addresses affordability and feasibility: it does not reject digital tools outright but ensures evaluators are empowered to shape their use. As Stahl (2021) and Zuboff (2019) caution, ethical digital transformation must decentralize control, not just digitize status-quo power relations.

Finally, strategies to address superficial or coercive digital consent practices must go beyond improving UX design or translation. In South Africa, where digital literacy is highly uneven and many evaluations involve vulnerable populations (e.g. grant recipients or informal workers), checkbox consent is ethically inadequate. Respondents called for a hybrid consent model, combining digital documentation with in-person or telephonic conversations, especially in evaluations with high ethical sensitivity. This is not only feasible but already practiced in some health and education evaluations. It upholds the Batho Pele principles of transparency and citizen dignity, while recognizing the need for procedural innovation. Furthermore, to ensure sustainability, these ethical practices must be supported by capacity building: respondents emphasized the need for digital ethics, data literacy, and AI accountability training for evaluators. Currently, the expertise needed to critique algorithmic systems is concentrated among funders or developers, not evaluators. Embedding digital ethics in postgraduate curricula and SAMEA certification processes would build long-term resilience. This final strategy—ethics through professional capacity—ensures that evaluators are not passive recipients of technological change, but informed stewards of evaluation’s evolving ethical landscape.

Conclusions and future research

This study demonstrates that while digital tools and AI-driven systems enhance evaluation efficiency through faster data collection, streamlined analysis, and broader reach, they also introduce systemic ethical risks that South Africa’s current evaluation infrastructure fails to address. Findings show that digital evaluation is shaped less by methodological rigor than by funder interests, proprietary software constraints, and assumptions embedded in global technology platforms, privileging urban and digitally connected populations while marginalizing rural, multilingual, and socio-economically disadvantaged communities. Ethical concerns thus extend beyond technological use to questions of control, decision-making, and representation, with digital evaluation at risk of becoming a depoliticized compliance tool rather than a democratic mechanism for accountability. Future research should therefore investigate how power relations shape ethical decision-making, exploring tensions between evaluator autonomy, institutional mandates, and technological constraints. It should also examine how control over infrastructures such as consent systems, dashboards, and AI tools determines whose voices are heard, whose data is prioritized, and how findings are presented. Such work would reveal ethical breaches not as isolated failures but as systemic outcomes of digital and institutional governance.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.