Abstract

This study investigates the evolution of evaluation approaches within the charitable sector, emphasising the proliferation of options throughout waves of evaluation diffusion. We draw on imprinting theory to describe the persistence of specific practices despite the emergence of alternatives. The research aims to examine the imprinted values and practices of earlier evaluation waves on the current practice of evaluation by documenting the current methodological practice in a large sample of evaluation reports. The study illuminates the enduring impact of theory-driven evaluation, introduced in the 1980s, on current practices. However, it also reveals how this approach has evolved over subsequent evaluation waves, acquiring divergent characteristics. This imprinting is not only due to effectiveness but also due to normative pressures, which prioritise conformity with norms over practical utility. The study highlights the potential consequences of this imprinting, including management-focused evaluations and mission drift. We conclude by offering practical options to address tensions within the field and call for further research to better understand the nuances of decision-making in evaluation approaches.

Introduction

The refinement and improvement of evaluation approaches is a fundamental feature of the evaluation theory literature and has resulted in a vast array of options. As an illustration, within the articles published by this journal in the preceding year, approaches to evaluation have included theory-driven (Doherty et al., 2022; Hickey et al., 2023), mixed-methods (Tomei, 2023), randomised controlled trial (DiLiberto et al., 2023), process evaluation (DiLiberto et al., 2023), realist evaluation (Manzano, 2022; Van Belle et al., 2023) and participatory narrative inquiry (Zucchini et al., 2022). Despite the utility of different approaches, this ongoing proliferation presents as a growing problem (Ahearn and Mai, 2023; Arena et al., 2015; Bach-Mortensen and Montgomery, 2018; Corvo et al., 2021; Henry and Mark, 2003). Practitioners in the field are challenged when it comes to selecting and applying a context-appropriate approach. This is especially true in the charitable sector where evaluation studies of social programmes are required despite limited resourcing and capacity to invest in staff expertise (Bach-Mortensen and Montgomery, 2018). Furthermore, the charitable sector is especially rich in evaluation approaches; one review identified 47 distinct approaches actively promoted in this space (Ahearn and Mai, 2023).

Continuous innovation in evaluation design has been encouraged by the evolving socio-political environment in which evaluation practice exists (Vedung, 2010). However, as the environment shifts and new waves of evaluation roll in, preceding wisdom is not washed away entirely; instead, elements of previous evaluation approaches remain embedded in the evolving practice (Vedung, 2010). To elucidate the methodological practice of evaluations in the charitable sector, we analysed a collection of published reports. By methodological practice, we refer not only to practical data collection and analysis methods but also to the conceptual frames and epistemological values ascribed by evaluation approaches, which steer the subsequent research direction. Our analysis revealed an unexpected lack of variation in the approaches being adopted and a predominant preference for theory-driven evaluation (Chen and Rossi, 1983) and its associated variants. To explain this phenomenon, we use the theory of imprinting (Marquis and Tilcsik, 2013) as a scaffold for understanding the entrenched nature of evaluation approaches. Our study provides evidence that theory-driven evaluation has substantially influenced the methodological practice of subsequent evaluation waves, but has not emerged unaffected itself. Consequently, we explore the specific values, patterns and norms embedded in evaluation practice. We do this not only to assess the fidelity of promoted evaluation approaches but also to consider the ramifications of imprinting on the evidence produced by evaluation studies.

Waves of evaluation

Even for those deeply familiar with evaluation theory, understanding the advantages of the numerous approaches is challenging (Alkin, 2004; Ebrahim, 2019). One influential conceptualisation has been the four waves of evaluation diffusion presented by Vedung (2010). This model rests on the thesis that the evolution of evaluation has been progressively influenced by the socio-political and governance doctrines of the time. Therefore, like global political systems, the practice of evaluation has been influenced by ‘strong currents from both the left and the right of the political spectrum’ (Vedung, 2010: 264). This oscillating influence in turn led to four waves of evaluation: science-driven, dialogue-oriented, neo-liberal and evidence.

The radical rationalisation movement which dominated the mid-20th century gave rise to the first wave, and indeed the birth of professionalised evaluation itself (Vedung, 2010). This science-driven wave called for evaluation to rigorously measure programme elements as variables and provide robust evidence as to their individual effectiveness (Cook, 2004). This evidence could then be used to instrumental effect to direct policy and practice decisions (Alkin and Taut, 2002). As we now know, the evaluation to policy pathway is far less linear than this suggests (Newman et al., 2017).

In reaction to the failure of evaluation to lead to better policy, and with the increased popularity of interpretivist and constructionist research in academia, the second wave was initiated in the 1970s (Vedung, 2010). The dialogue-oriented wave invoked a greater focus on the external validity of evaluation. Participatory and democratic evaluation approaches surged during this time (Cousins and Whitmore, 1998; Greene, 1988; MacDonald, 1974). So did Chen and Rossi’s (1983) theory-driven approach, which adapted previous engineering models of evaluation to include a greater focus on context, extraneous variables and mechanisms of action.

This wave came at a particularly sensitive time in evaluation history. Evaluation publications from the 1980s as well as historical reflections of evaluation practice demonstrate a considerable conflict within the field (Alkin, 2004; Cronbach, 1980; Scriven, 1986; Vedung, 2010). Chen and Rossi’s (1983) paper Evaluating With Sense: The Theory-Driven Approach opens with a discussion of the two contrasting sides: on the one side of the divide, the experimental and positivist paradigm promoting the use of randomised controlled trials to demonstrate causality between interventions and outcomes; and on the other side, the emerging constructionists of the second wave who argued that controlled trials forced artificial and standardised effects onto incompatible human services programmes (Alkin, 2004; Chen and Rossi, 1983). Chen and Rossi (1983) did not oppose either paradigm, but rather argued that both sides had neglected theory, thereby limiting ‘our understanding of social programs and the efficient employment of evaluation designs in impact assessment’ (p. 284). Therefore, the introduction of theory-driven evaluation marked a groundbreaking reconciliation of the two dominant paradigms of the time. This approach, heavily influenced by the public health domain’s emphasis on understanding mechanisms of action, harmonised scientific legitimacy with the need for practical applicability in human services research (Chen, 2004; Lincoln and Guba, 2004).

The dialogue-oriented wave was somewhat short-lived thanks to the socio-political swing to the right typified by the introduction of New Public Management (Farazmand, 2017; Lapuente and Van de Walle, 2020; Vedung, 2010). The neo-liberal wave therefore saw evaluation and top-down scientific management of public services replaced by confidence in market mechanisms and competition (Vedung, 2010). Evaluation became an emergency mechanism for retrospectively assessing failures and was lumped under the banner of performance measurement (Vedung, 2010). Therefore, approaches developed during this wave, such as results-based accountability (RBA) (Schorr et al., 1995), carry a strong influence of private sector management principles.

The final wave identified by Vedung arrived around the turn of the 21st century. A resurgence in evidence-based governance and privileging of scientific experimentation over professional judgements typified the evidence wave (Alkin, 2004; Pawson and Tilley, 1997). Research synthesis bodies including the Campbell and Cochrane Collaborations were initiated to provide timely access to robust evidence (Hansen and Rieper, 2009). A resurgence in ‘randomized experiments and quasi-experiments’ was observed as well as a decline in qualitative studies indicating that the evidence movement represented ‘the return of science-based evaluation, but in a new disguise’ (Vedung, 2010: 247).

Shortly after the publication of Vedung’s four waves, Picciotto (2015) identified a fifth wave of evaluation coming into view. The social impact evaluation wave reflects the continuation of market reforms and outsourcing of public services to an increasingly hybridised sector of public–private partnerships, social enterprises and impact investing models (Ahearn and Mai, 2023; Picciotto, 2015). Consequently, the evaluation field needs to ‘adapt its methods and processes to the dynamic pace of decision making favoured by the new actors’ (Picciotto, 2015: 5).

An essential aspect of the evaluation waves, deliberately emphasised through the metaphor, is that each wave is not a discrete epoch receding entirely to leave a blank slate prior to the arrival of the following wave. Instead, as the waves recede and merge into each other, they deposit layers of sediment so that ‘in due time, the evaluation landscape has come to consist of layers upon layers of sediment’ (Vedung, 2010: 265).

Among the most striking layers of sediment is that of theory-driven evaluation. Subsequent permutations of theory-driven evaluation encompass various devices which aim to present a theory of how a programme or intervention will meet its ultimate outcomes. These include theory of change, programme logic models, logical frameworks, outcomes maps and causal chains (Ruff, 2021). While sometimes presented in a narrative form (Reinertsen, 2022), the devices are predominantly depicted as visual models with consistent spatial arrangements programmed in (Ruff, 2021). Elements such as a typical left-to-right sequencing of interventions to outcomes, culminating in a definitive end point, convey a rational and causal logic for the objectives of a social intervention (Ruff, 2021). While demonstrably hailing from Chen and Rossi’s rationalist paradigm, subsequent iterations of these visual models were influenced by the management and accountability expectations of the third wave (Reinertsen, 2022). Indeed, programme logic models are a required component of many RBA arrangements (Fortuin and Van Marissing, 2009; Friedman, 1997; Ruff, 2021). Furthermore, reviews of the fifth-wave social return on investment (SROI) analysis identify that theory of change models have become a frequent element of the analysis approach (Ali et al., 2019; Banke-Thomas et al., 2015).

Therefore, as these various devices evolved and iterated across successive evaluation waves, they have played a significant role in shaping the landscape of evaluation. These layers of sediment now combine to form the complex landscape of evaluation methodological practice we see today, among which theory-driven evaluation stands out as a prominent layer. Coincidentally, this imagery of layers of sediment is not unique to Vedung’s paper. It is a common metaphor employed within the theory of imprinting.

Imprinting

Imprinting theory describes the pervasiveness of specific organisational elements or practices despite the emergence of better options, or changes in the external environment (Marquis and Tilcsik, 2013). Imprinting is defined as a three-part process that occurs during a period of sensitivity ‘in which the focal entity exhibits high susceptibility to external influences’ (Marquis and Tilcsik, 2013: 195). This is followed by the embedding of elements reflective of the environment at the time and then a continued inertia of those elements ‘despite subsequent environmental changes’ (Marquis and Tilcsik, 2013: 195). Imprinting is often linked with institutional theory as the inertia of elements can be accounted for by the legitimacy granted by adopting existing institutional norms into practice (DiMaggio and Powell, 1983). To elaborate, norms may be perpetuated through coercive, mimetic or normative pressures: they may be formally or informally legislated, copied from other legitimised actors in the environment or embedded within education and training of emerging professionals (DiMaggio and Powell, 1983).

Imprinting theory has previously been applied in the management and administration literature to explain how the socio-political context in which an organisation is formed has a persistent influence on its structure and individual staff capabilities (Marquis and Tilcsik, 2013). Imprinting is a promising theory in public and charitable sector management literature. It has been used to explain how social enterprise alignment to for-profit or for-purpose logic is influenced by the professional background of the enterprises’ founding leadership team (Lee and Battilana, 2013). This imprinting of professional background also influences the selection of performance measurement and evaluation approaches within the social enterprise sector (Lall, 2017). Beyond the organisational level, imprinting theory has previously been applied to understand practices and values at the disciplinary level (Little et al., 2018).

As outlined in the preceding discussion, evaluation practice has continuously evolved in response to external socio-political pressures. As expectations and conventions within the environment shift, evaluators move their practice in new directions and develop approaches to meet these demands. However, through imprinting theory, we consider that practice elements embedded in evaluation during earlier waves may persist in applied evaluation studies regardless of innovations in the evaluation literature. In other words, successive innovations in evaluation practice which logically build upon previous advancements do not inherently represent imprinting. Imprinting is the irrational persistence of elements despite changes in the environment.

This persistence is likely to affect the approach fidelity, but more importantly could also have constitutive impacts on the evaluation studies themselves (Dahler-Larsen, 2011; Ruff, 2021). The practice, findings and consequences of evaluations are not neutral, nor do they exist in a vacuum (Dahler-Larsen, 2011; Raimondo, 2018; Ruff, 2021). Rather, evaluation approaches dictate how the evaluand is defined and measured, subsequently shaping the findings and consequences of the evaluation. As demonstrated empirically by Ruff (2021), discourse and visual elements entangled within evaluation approaches influence the representation of data and therefore the findings of the evaluation. Specifically, the selection of approach influences the findings of the evaluation, leading to ‘very large, and even opposing’ findings from the same programme data (Ruff, 2021: 346). Theory of change models specifically prioritise top-down perspectives on programme outcomes and construct the programme theory away from the perspectives of service users (Ruff, 2021). Such constructions, when used to inform programme design or refinement, may shift the programme away from its ultimate mission, also known as mission drift (Ebrahim, 2019).

Ruff’s study further demonstrated that approach fidelity depends on the expertise of the team. Teams consisting of experts who have authority in the design and implementation of approaches implemented the approach faithfully. Conversely, teams consisting of practitioners adapted approaches by drawing in practices from other, often contradictory, approaches (Molecke and Pinkse, 2017; Ruff, 2021).

Connecting these findings and the theory of imprinting uncovers two possibilities. First, if imprinting has occurred on the evaluation field, evaluations, especially those conducted by practitioners, are likely to demonstrate practices from previous waves. Second, the adoption of these practices can exert substantial influence, not only on the evaluation process but also on the findings, consequently shaping our understanding and knowledge of the social programme (Dahler-Larsen, 2011; Ebrahim, 2019).

Method

Our research aims to investigate if there is evidence of imprinting in evaluation, and if so, what specific elements have become imprinted. Through this we also seek to understand how patterns, values and norms imprinted by previous evaluation waves may continue to influence methodological practice and the evidence produced by evaluations.

We are particularly interested in evaluation studies conducted by practitioners in the charitable sector, rather than academics or evaluation authorities. These evaluations typically investigate a specific social programme offered by the organisation for the dual purposes of accountability and improvement (Reinertsen et al., 2022). The evaluation report is the standard output of evaluation studies (Reinertsen et al., 2022). We identify three common components of evaluation reports which speak to the methodological practice of the evaluation study: the stated evaluation approach, the methods described and the self-identified limitations. These markers of methodological practice are the focus of our analysis.

A large, systematised and reproducible sample of evaluation reports was required for this analysis. To be eligible for the review, publications needed to (1) describe an evaluation of (2) an Australian charity’s (3) specific social programme, or other practice element within their organisation. To exclude informational or marketing material, the publications needed to include (4) reference to a research methodology. We further restricted our criteria to only include studies (5) conducted between 2015 and 2020.
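The five eligibility criteria above can be read as a single predicate applied to each candidate record. The sketch below is illustrative only: the screening in this study was a manual reading exercise, the `Record` fields are hypothetical names, and the year range is assumed to be inclusive at both ends.

```python
from dataclasses import dataclass


@dataclass
class Record:
    """Hypothetical screening record; field names are illustrative."""
    describes_evaluation: bool            # criterion 1
    australian_charity: bool              # criterion 2
    specific_programme_or_practice: bool  # criterion 3
    references_methodology: bool          # criterion 4
    year_conducted: int                   # criterion 5


def is_eligible(r: Record) -> bool:
    """Return True only if all five criteria are met.

    Assumption: 'between 2015 and 2020' is treated as inclusive.
    """
    return (
        r.describes_evaluation
        and r.australian_charity
        and r.specific_programme_or_practice
        and r.references_methodology
        and 2015 <= r.year_conducted <= 2020
    )


# Two made-up records: an evaluation report and a piece of marketing material.
example = Record(True, True, True, True, 2018)
marketing = Record(False, True, True, False, 2019)
print(is_eligible(example), is_eligible(marketing))
```

Expressing the criteria conjunctively makes explicit that a record failing any one criterion, such as marketing material with no stated methodology, is excluded.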

Search strategy

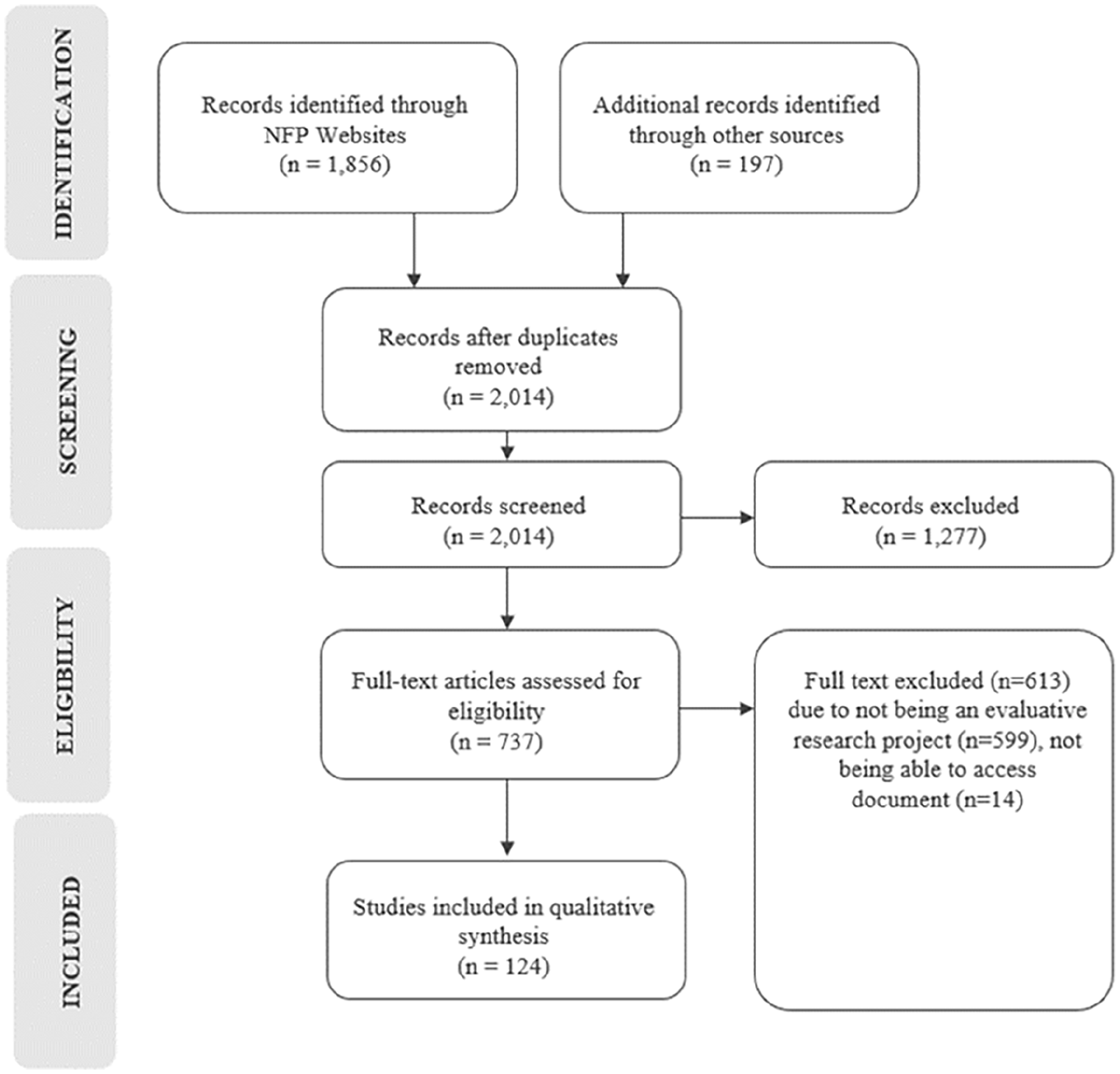

Existing scholarship on synthesising grey literature was used to guide our search (Adams et al., 2017; Godin et al., 2015; Mahood et al., 2014). Each charity’s website was searched extensively to identify published reports (Figure 1).

Figure 1. PRISMA diagram.

The website search was followed by a search of Google Scholar and the Australian Policy Observatory (APO). The APO is an open access collection of grey literature pertaining to Australian public policy issues, including charity evaluation reports. The search string entered was the charity name and ‘evaluation OR research’. An additional 197 records were identified through this search. After 39 duplicate records were removed, a total of 2014 unique reports were identified through our searches.

Selection process

Title and abstract screening was applied to the records where possible; however, the majority of records did not contain a formal abstract. Executive summaries, overviews and content pages often contained the information required to assess eligibility. This first round of screening led to the exclusion of 1277 records. These were excluded because they were not research or evaluation reports; rather, they were internal policies and procedures, blog posts, fundraising advertisements or policy submissions.

Full-text assessment of the remaining 737 records was completed. The objective at this stage was to ensure included reports were relevant to the research question. A further 613 reports were excluded at this stage. The majority of these (599) were identified as pure or strategic basic research which did not evaluate elements of a specific social programme, or other practice element within the charities.

The final review sample comprised 124 evaluation reports.
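The screening flow reported above can be checked with simple arithmetic. The following is a minimal sketch that uses only the counts stated in the text; the variable names are illustrative.

```python
# Counts as reported in the search strategy and selection process sections.
unique_records = 2014         # unique reports after duplicates were removed
excluded_at_screening = 1277  # not research or evaluation reports
excluded_at_full_text = 613   # excluded after full-text assessment

# Records carried forward at each stage.
full_text_assessed = unique_records - excluded_at_screening
final_sample = full_text_assessed - excluded_at_full_text

print(full_text_assessed)  # 737
print(final_sample)        # 124
```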

Data collection

Each report was read once in its entirety and then again for the purpose of data extraction. Each of the 124 reports was assigned a unique identifier to ensure data continuity. The organisation name, publication year, title, programme or evaluand name, author or evaluator details, evaluand focus and funding model were recorded (see Supplemental Material Appendix A for included reports). To examine the evaluation approach and practice documented in the reports, three components were extracted verbatim: the stated evaluation approach, all data collection and analysis methods, and the stated limitations section. Throughout the analysis of the reports, researcher notes were recorded on common themes or patterns emerging between the texts.

Approaches, methods and limitations were often described at multiple instances throughout the reports, for example, in the executive summary, methods and findings sections. An additive approach was taken to extracting and coding this information so that all description of methods was included in the final analysis.

Content analysis

Conventional content analysis was employed to process and analyse the text describing the approach, methods and limitations of the evaluation reports (Hsieh and Shannon, 2005). Content analysis is a systematic coding and categorisation approach for unobtrusively finding meaning from large amounts of text (Hsieh and Shannon, 2005; Vaismoradi et al., 2013).

As with other forms of qualitative analysis, immersion within the text is the first stage of content analysis (Erlingsson and Brysiewicz, 2017; Hsieh and Shannon, 2005; Vaismoradi et al., 2013). The data collection process described above constituted the first stage of immersion. After this first stage, our analysis of data was situated within each component, rather than within the evaluation reports. The text within each component was read as a narrative for further immersion. For example, all text extracted pertaining to evaluation approaches from all 124 reports was read from beginning to end.

Within each component, the text was then organised through developing condensed summaries (Erlingsson and Brysiewicz, 2017; Vaismoradi et al., 2013). Differences in content across the three components led to different synthesis techniques from this point.

Evaluation approaches as the first component typically contained only one sentence of text with a high degree of homogeneity. For example, ‘a mixed methods design framed by a realist approach’ (C37R004) was condensed as ‘mixed-methods’ and ‘realist’. Codes were then generated from the condensed summaries where substantial overlap or replication existed. This non-exclusive or multi-coding approach was adopted across the analysis, whereby if multiple approaches or methods were identified in the report, it would be assigned to all relevant codes. To investigate the adoption of multiple approaches within one report, a co-occurrence matrix and subsequent network diagram were constructed. These depict the frequency of approaches being adopted in combination.
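The multi-coding and co-occurrence steps described above can be sketched as follows. This is an illustration under stated assumptions rather than the procedure used in the study: the report identifiers and code assignments are hypothetical, and the software used to build the matrix and network diagram is not specified in the text.

```python
from collections import Counter
from itertools import combinations

# Hypothetical multi-coded reports: report ID -> set of approach codes.
# A report is assigned to every code that applies to it (non-exclusive coding).
coded_reports = {
    "R1": {"mixed-methods", "realist"},
    "R2": {"mixed-methods", "programme logic model"},
    "R3": {"process", "programme logic model", "mixed-methods"},
    "R4": {"qualitative"},
}


def co_occurrence(reports):
    """Count how often each unordered pair of codes appears in the same report."""
    pairs = Counter()
    for codes in reports.values():
        # Sorting gives each pair a single canonical (a, b) ordering.
        for a, b in combinations(sorted(codes), 2):
            pairs[(a, b)] += 1
    return pairs


pairs = co_occurrence(coded_reports)
for (a, b), n in pairs.most_common():
    print(f"{a} + {b}: {n}")
```

The pair counts are the off-diagonal cells of a symmetric co-occurrence matrix and can serve as edge weights in a network diagram. A report with a single code, such as R4 here, contributes no pairs.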

Condensed summaries were generated for research methods, although there was somewhat less homogeneity in this component. For example, ‘four focus group sessions were held with 30 [programme] disability and mental health coordinators and support workers’ (C01R002) and ‘a group interview with staff in each region’ (C15R011) were both condensed as ‘focus group interview with staff’. Codes were then generated from the condensed summaries where substantial overlap or replication existed. The same process was followed for data analysis, and the variables or themes being studied. Visual comparisons of the approach and methods codes including co-occurrence networks and hierarchy charts were conducted to assess relationships or patterns between evaluation approaches and the methods adopted.

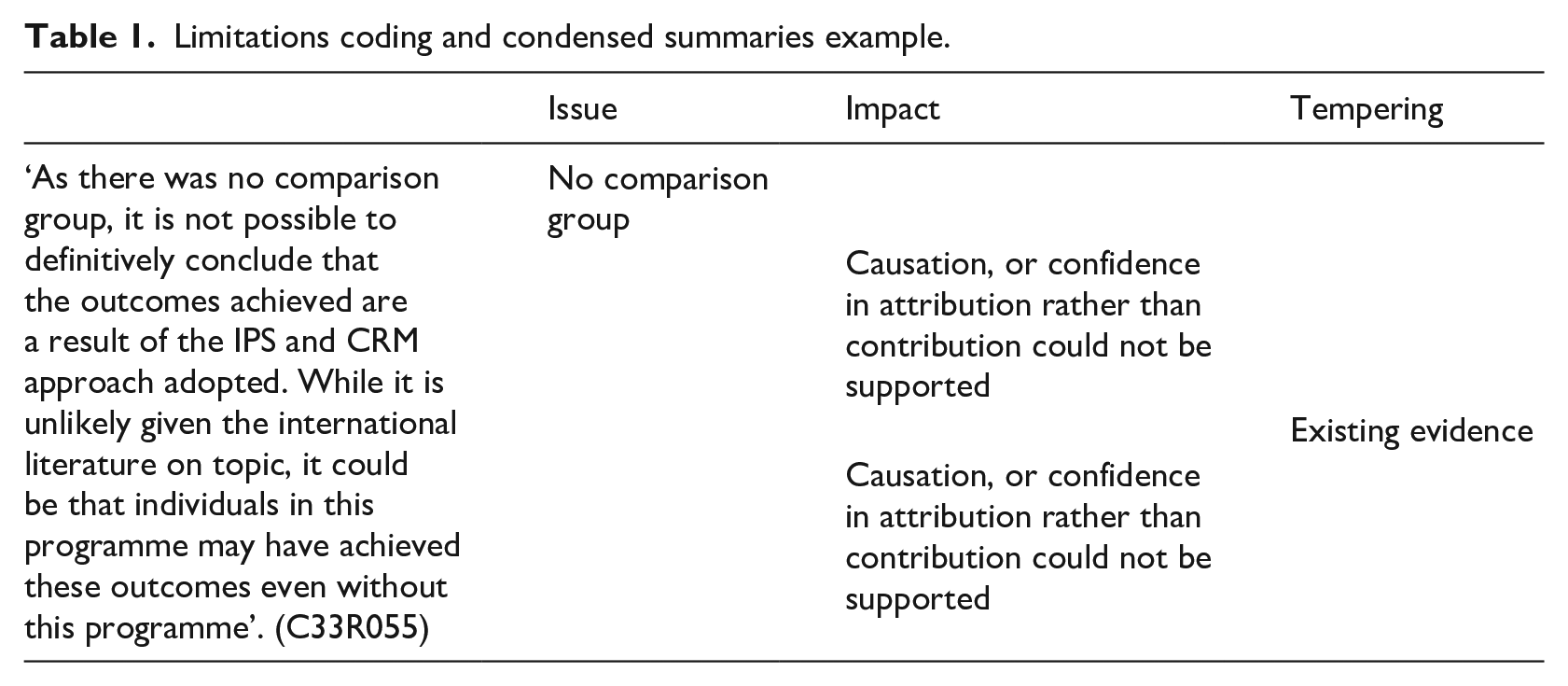

The limitation statements had the least homogeneity and required a more iterative analysis technique. We identified three separate sub-components discussed within the limitations component: (1) a description of the methodological or data issues, (2) the impact this has on the quality or veracity of the evaluation findings and (3) a tempering statement which qualifies the severity of the limitation and asserts the overall value of the research. These three elements align with the guidance of Ross and Bibler Zaidi (2019) who recommend such elements be included in the statement of limitations. The limitations text was coded against each of these three sub-components and condensed summaries were then generated within each. An example is provided in Table 1.

Table 1. Limitations coding and condensed summaries example.

Findings

Review sample characteristics

Of the 124 evaluations, almost half (57 studies) were conducted by internal researchers within the organisation. The next most common evaluator affiliation was with universities (48 studies), followed by consultants (11 studies). Evaluation reports were delivered in partnership in 19 cases in which internal staff worked with researchers from universities, consultancies, government or other non-profit organisations.

The evaluand was most often a specific social programme offered by the organisation. These programmes focused on a range of social issues including employment and training, mental and physical health, support for children and families, housing and accommodation, aged care and disability support services. Outsourced state provision of services was the most common operating and funding model within the sample with 67 programmes operating under state and territory or federal government funding. Philanthropic funding underpinned 13 programmes while internal funding was utilised for 14 programmes whereby non-profits combined funding revenue to deliver bespoke programmes. Hybrid models were also observed within the review sample with four evaluations of a social impact bond, four of social enterprise models and one of a public–private partnership. An evaluand funder could not be established from the content of 20 evaluation reports.

Evaluation approaches

Of the 124 evaluation reports, 116 directly identified an evaluation approach. However, reports often adopted a combination of terms when describing their evaluation approach:

The evaluation was multi-faceted; containing process, outcome and economic components. (C15R011)

A mixed-methods evaluation approach was the most frequently adopted within this sample (48 reports). A purely qualitative approach was adopted by 20 evaluations, while purely quantitative was adopted by 10. Process evaluation was adopted by 20 reports and outcomes evaluation by 4 reports. A range of named approaches was also observed in low quantities across the sample such as realist evaluation (Pawson and Tilley, 1997), participatory action research (Baum et al., 2006), most significant change (Dart and Davies, 2005), RBA (Schorr et al., 1995) and SROI analysis (Lingane and Olsen, 2004):

This technique, involving the collection of significant change stories, is drawn from ‘Most Significant Change’ evaluation methodologies. (C01R009)

The method used to develop and reflect on performance measures was informed by a Results Based Accountability™ approach. (C34R066)

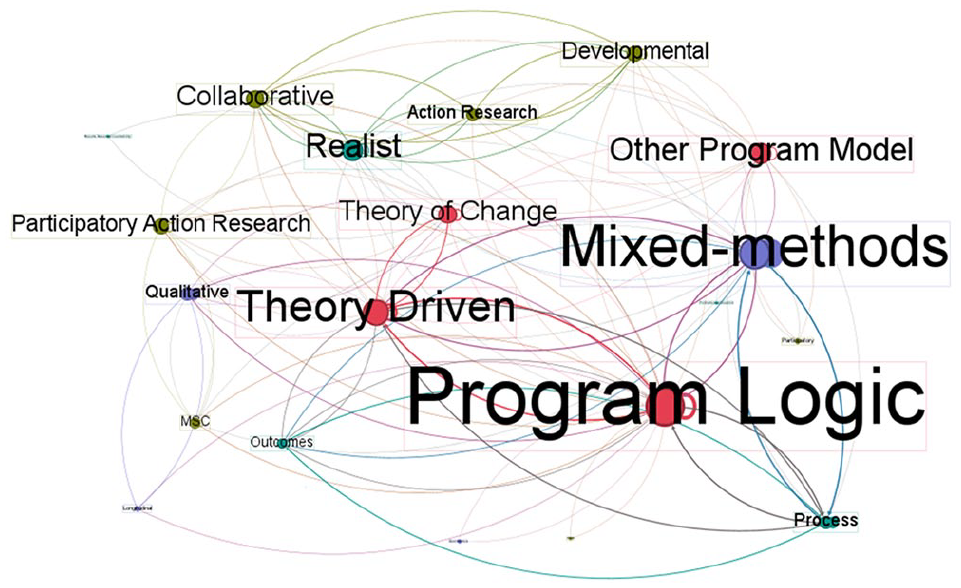

While 13 reports identified their approach as being ‘theory-driven’ or ‘theory-informed’, a further 18 evaluation reports included a programme logic model, theory of change or other theory-informed model. These reports combined the theory-driven derivatives with a range of the approaches listed above, including pure qualitative or quantitative, process or outcome focused, most significant change, participatory action research and SROI. The co-occurrence network in Figure 2 depicts the combination of these approaches. In this figure, the size of the text and node represents the ranking of the co-occurrences. Programme logic model had the greatest co-occurrence with other approaches, followed by mixed-methods, theory-driven evaluation, other visual models, theory of change and realist evaluation.

Figure 2. Co-occurrence network of evaluation approaches.

Evaluation methods

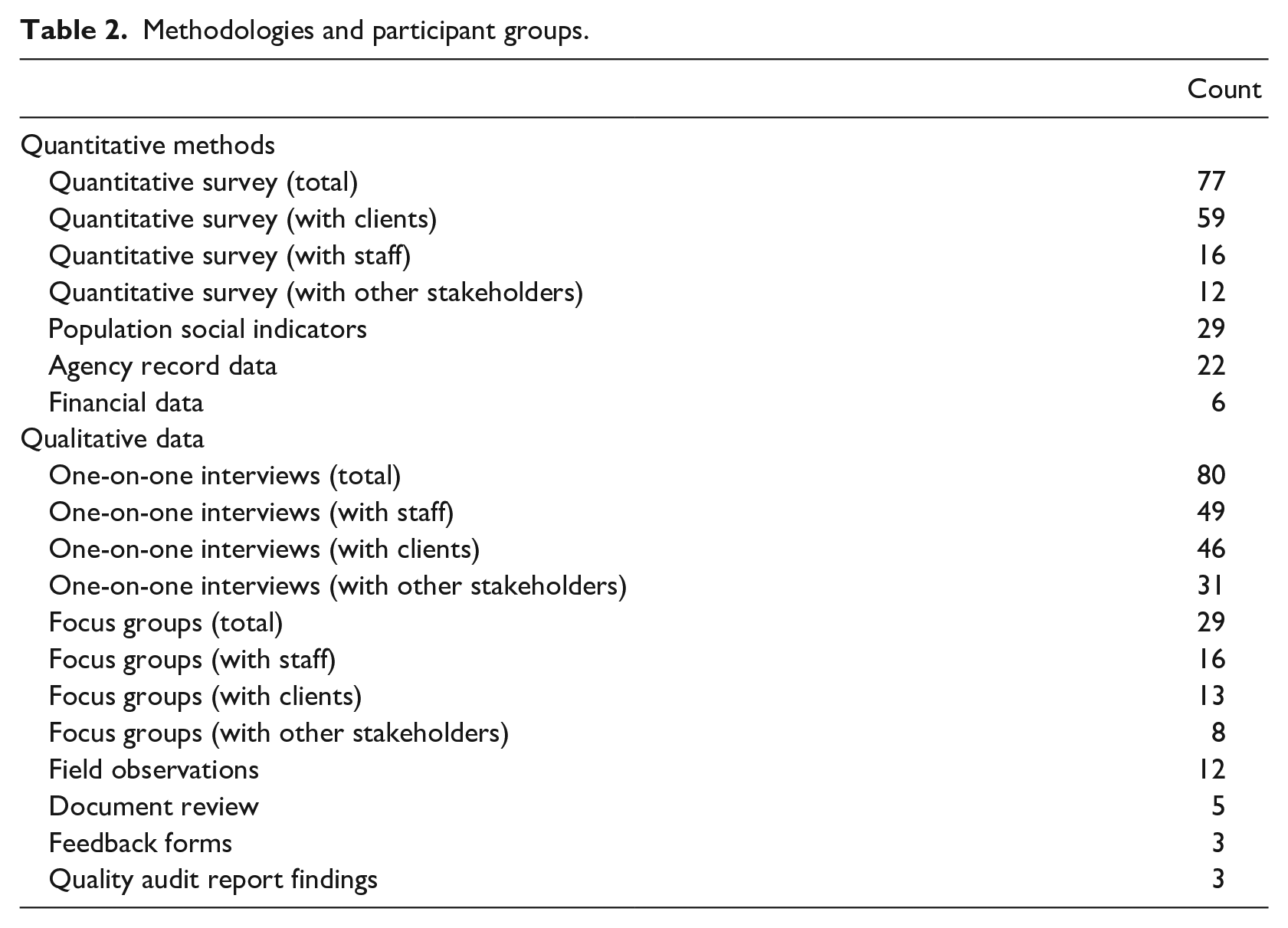

One-on-one structured or semi-structured interviews were the most common method accounting for 115 of the total data collection methods. This was followed by 62 instances of quantitative surveys and 41 studies utilising focus groups. While clients were the most frequently included stakeholder in quantitative survey collection, staff were slightly more frequent in both one-on-one interviews and focus groups. A complete breakdown of the data collection methods and participants can be found in Table 2.

Table 2. Methodologies and participant groups.

Visual comparisons of the approach and methods codes, including co-occurrence networks and hierarchy charts, were conducted to assess relationships or patterns between evaluation approaches and the methods adopted. Approaches described as pure qualitative did have greater representation of qualitative methods, and approaches described as pure quantitative did have greater representation of quantitative methods. The SROI analyses had a much greater representation of economic data than any other approach. Aside from these expected patterns, no other patterns could be discerned between the evaluation approach and the methods subsequently employed. In other words, the mixture of evaluation methods in Table 2 was observed across all evaluation approaches adopted. However, reports which included a theory-driven approach or model measured more variables than those without.

Identified research limitations

A statement of study limitations was presented in 63 of our sampled evaluation reports.

Methodological and data issues

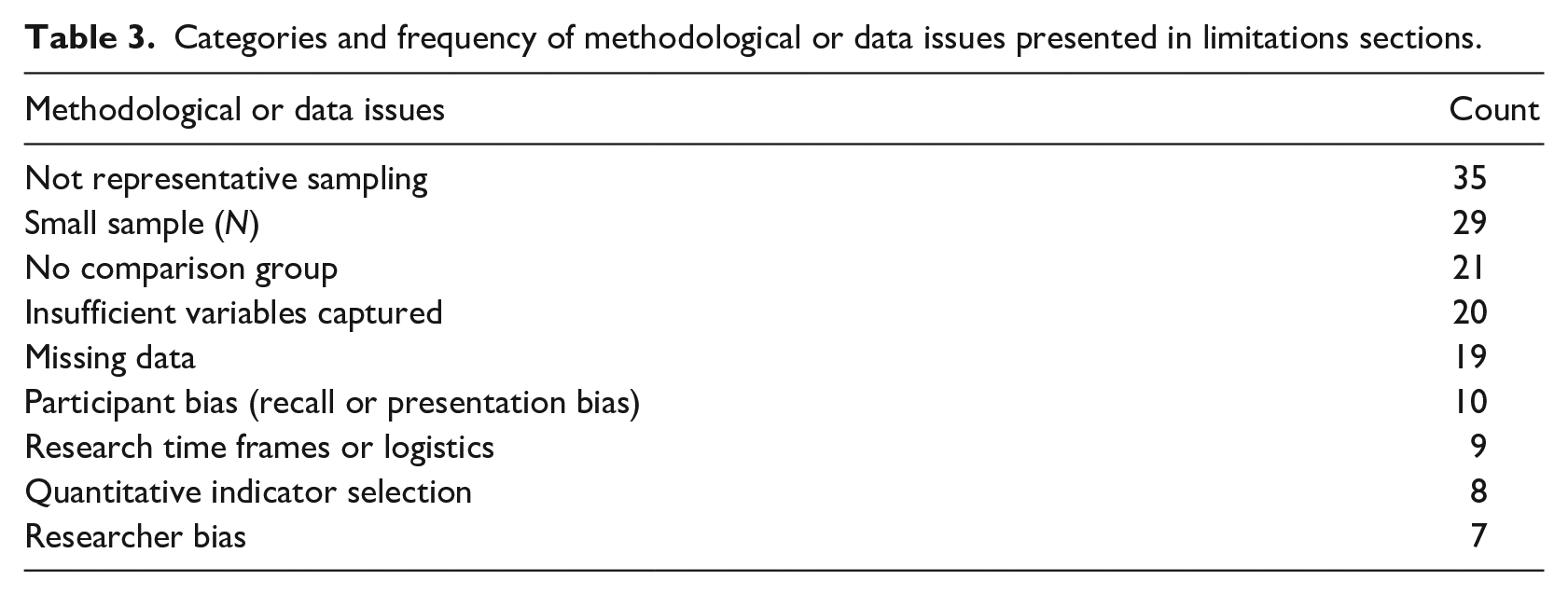

Within the 63 reports, 158 methodological and data issues were identified. Through content analysis of the 158 reported limitations, 9 core categories were identified (see Table 3).

Table 3. Categories and frequency of methodological or data issues presented in limitations sections.

Impact on the findings

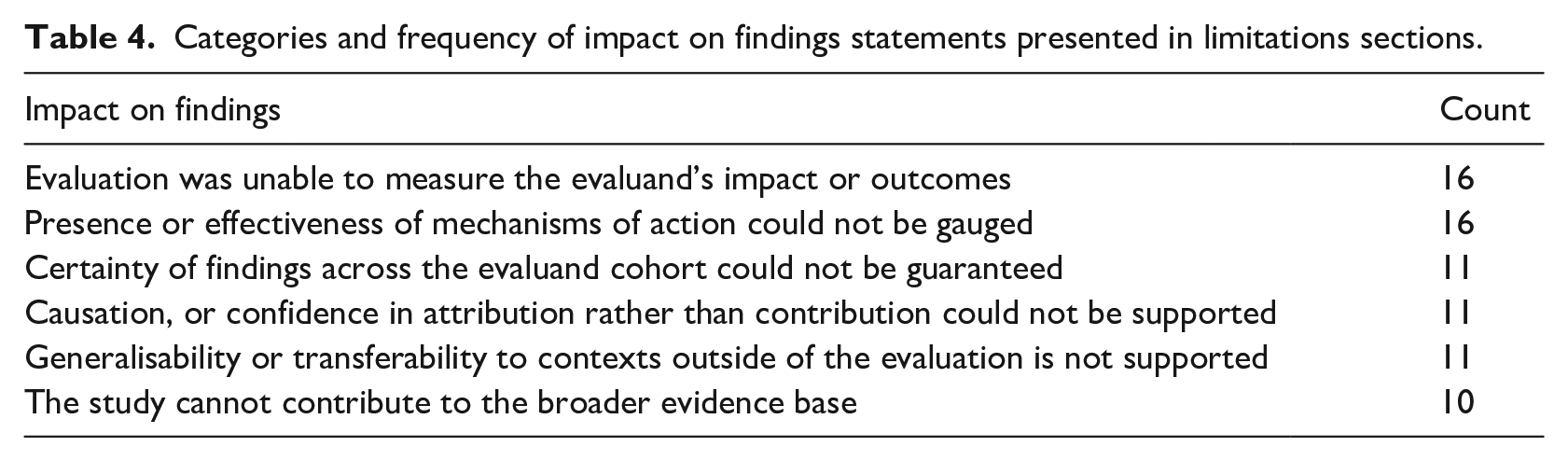

Sixty-one reports presented a statement on the impact of the methodological and data limitations on the findings. These impact statements related to six categories (see Table 4). Trends were observed between the issues identified and the impacts on findings, whereby particular impacts were commonly attributed to specific issues.

Table 4. Categories and frequency of impact on findings statements presented in limitations sections.

The methodological and data issue codes were compared with the impact on findings codes to assess for relationships or patterns between them. The inability to measure or attribute the evaluand’s impact was often attributed to, or positioned alongside, the issue of insufficient data collection and variables. The related problem of missing data through loss to follow-up, which often resulted in the elimination of a comparison group, also hampered the ability to measure outcomes. For example,

The retrospective evaluation design, short timeframe to conduct the evaluation, limited quantitative outcomes and program performance data and the small number of clients who participated in the evaluation were the main design limitations. These limitations meant it was not possible to draw overall conclusions on the effectiveness of the program. (C15R070)

Assessment of specific programme elements, activities or mechanisms of action was often linked to the inadequacy of measures or variables captured in the study. For example,

Current outcome measurements (e.g. training and employment) do not illuminate the process of change – for example, the practitioner approaches that influence participant trajectories, or the nature of external conditions/influences on young people’s engagement. (C01P332)

The generalisability or transferability of the evaluation findings to contexts outside of the evaluation was identified as a limitation in 11 evaluation reports, often linked to limitations in sample size, comparison group or sampling. For example,

the findings of this research are not able to be generalised more broadly across the early childhood sector in Australia, because of the limited number of interviews conducted, as well that these participants were representatives from medium and large [early childhood care] organisations. However, this research does add to existing body of research about wellbeing of educators in early childhood services through its identification of four important themes that emerged from the perspectives from senior managers. (C04R018)

Tempering statements

Finally, the tempering statements included in the limitations presented a contrasting perspective to the previous two elements. Tempering statements were less common than the other two elements, having only been included in 36 of the reports. While the preceding two elements typically addressed the inability of the study to provide a strong rationalist empirical enquiry, the tempering statements often reframed the benefit of the study as a more exploratory or constructionist value. For example,

This evaluation does not provide any quantitative measures of program effectiveness or program outcomes . . . Despite these limitations, the current evaluation still provides for insight into the process used in the development and implementation . . . as well as some of the facilitators and barriers that were encountered by different stakeholders. (C34R063)

Discussion

This study provides a descriptive account of evaluation methodological practice, with the aim of investigating the patterns, values and norms imprinted on the field through iterative evaluation waves. Our novel review approach enables us to view a previously hidden landscape of evaluation studies conducted by practitioners within the charitable sector. This viewpoint reveals tangible evidence that the imprinting of theory-driven evaluation has led to isomorphism in the evaluation field. In turn, this has constitutive impacts on the utility of evaluation studies, such as management-focused evaluations and the risk of mission drift.

Imprinting of theory-driven evaluation

Sedimentation from all five waves of evaluation could be observed in current practice. This is demonstrated by the presence of controlled trials of wave 1 (Campbell et al., 1966), participatory approaches of wave 2 (Greene, 1988), RBA of wave 3 (Schorr et al., 1995), realist evaluation of wave 4 (Pawson and Tilley, 1997) and SROI analysis of wave 5. However, co-occurring and enmeshed alongside these approaches was a pervading influence of theory-driven evaluation (Chen and Rossi, 1983), albeit compromised from its original form.

Rather, the essence of theory-driven evaluation and its permutations observed in our sample of reports is imbued with the values and norms of the second and third waves of evaluation. Today, theory-driven evaluations strive to uphold both empirical and positivist principles while also embracing neo-liberal managerial values. We argue that this configuration stems from the original values of theory-driven evaluation which combine empirical principles with a pragmatic consideration of extraneous influences and practical utility. This emphasis on practical utility enabled the theory-driven approach to align with the third wave of neo-liberalism, thereby embedding it with managerial values during this period. Consequently, ideologies such as competition, disaggregation and incentivisation typical of neo-liberal reforms (Lapuente and Van de Walle, 2020) are embodied within the methodological practice of theory-driven evaluations. Our subsequent discussion will further explore how these ideologies collectively manifest in our sample of evaluations.

Theory-driven evaluation, as an explicit approach, was among the most popular adopted within our sample. The permutations of theory-driven evaluation that were adopted not only typify its popularity but also clearly demonstrate its imprinting on evaluation practice. These permutations include process and outcome evaluation, which exemplify the specification of treatment models ascribed by the theory-driven approach (Chen and Rossi, 1983). Realistic evaluation, identified explicitly as an offshoot of theory-driven evaluation (Pawson and Tilley, 1997), also reflects this influence. However, the most notable permutations observed were the programme logic, theory of change and other theory-based models. These are highly evident in Figure 2 where, visualised as nodes, they emerge as central sources of co-occurrence and are used in combination with the majority of other approaches, including the pure quantitative and qualitative, most significant change and participatory action research approaches. However, the positivist values embedded within the visual depiction of these theory-driven models (Ruff, 2021) contradict interpretivist and constructionist values. The incorporation of diverse and sometimes conflicting approach elements into evaluations is referred to by Molecke and Pinkse (2017) as bricolage. This terminology contends that actors utilise different elements in their practice as a way of asserting agency over prevailing institutional norms so as to maintain perceived legitimacy while also rendering useful outputs (Molecke and Pinkse, 2017).

Isomorphism of evaluation approaches

Although programme logic and theory of change models are sometimes presented in a narrative format (Reinertsen, 2022), in our review corpus they were depicted visually, illustrating the intended impact on the evaluand. Consistent with Ruff (2021), these models encompassed visual elements such as left-to-right sequencing of interventions to outcomes, culminating in a definitive end point.

Programme logic models were more popular than theory of change models in our sample. Our documentation of these models drew directly from the descriptions provided in the report text. Without these textual descriptions, it would have been difficult to discern any marked differences between the theory of change and programme logic models. Indeed, the models might often have been mistakenly assigned to the opposite category, underscoring their substantial similarity.

Reinertsen (2022) observes that, in the aid context, the introduction of successive new versions of theory-based evaluation models, despite not differing in appearance or values, serves primarily as a symbolic gesture of change and innovation rather than a departure from existing norms. This observation aligns with DiMaggio and Powell’s (1983) description of isomorphism in organisations, which posits that, despite narratives of innovation, organisations are becoming increasingly similar. This phenomenon of isomorphism arises because actors, aiming to construct a rational and legitimised environment, inadvertently limit their capacity for genuine change in the future. True change would challenge the very norms they have helped establish (Phillips et al., 2004).

Consequently, instruments like visual depictions of programme theory gain symbolic value that transcends their technical utility. In the case of theory-based models, they are no longer just tools for supporting evaluations but have also become instruments of management and communication (Carman, 2010; Reinertsen, 2022; Ruff, 2021). This multifaceted value has likely helped theory-driven evaluation and its permutations to endure successive waves of evaluation. For instance, our examination supports the view that the charitable sector has evolved into a hybridised and marketised space. Consequently, there is an emergence of fifth-wave evaluation approaches which provide investors and disaggregated funders with evidence to support grantee accountability. Given the aim of theory-driven evaluation to enable direct utility of findings and to assess evaluand causality, it symbolically integrates into this new environment. However, our findings, and previous scholarship, raise questions as to the ability of theory-driven evaluation to meet these dual purposes simultaneously.

Constitutive effects of imprinting on evaluation utility

Our examination of data collection and analysis methods suggests that these dual objectives are not being fulfilled. Instead, unintended patterns are perpetuated through the visual models. Although chosen evaluation approaches seemed to have less influence on method selection than anticipated, a discernible pattern within the sample was that evaluations employing a theory-driven approach tended to measure a greater number of variables or themes compared to the remainder of the sample. This was the case in both quantitative and qualitative methods, although with different implications. In quantitative methods, it frequently resulted in the use of extensive surveys, especially with clients, to measure against the list of outcomes detailed in the theory model. In qualitative methods, it influenced the framing of data collection, analysis and presentation, based on the assumption that programme outcomes can be divided into consistent, measurable and distinct components (Ruff, 2021). We believe this trend is driven by the infusion of competition and incentivisation, leading to the requirement to demonstrate accountability across numerous claimed outcomes. Essentially, the outcomes listed in the visual model serve as soft key performance indicators against which the evaluation must demonstrate progress. This could also account for the prevalence of quantitative surveys with clients, and qualitative interviews with staff, as evaluators allocate resources towards verifying or gathering evidence related to these outcomes.

However, this accumulation of evidence often contradicted the empirical research norms of theory-driven evaluation from both positivist and constructionist paradigms. In evaluations using surveys, independent variables crucial for understanding causal, mediating or moderating relationships were frequently omitted in pursuit of assessing against extensive lists of outcomes. Similarly, in qualitative studies, genuine co-construction of reality by participants was hindered, as evaluations were already predetermined to establish evidence against specific effects. Consequently, market values have largely undermined the inherent value offered by both research paradigms that dominated the second and fourth waves.

Management-focused evaluation and mission drift

The inertia of values was further observed within the limitation sections of the reports. The methodological and data issues identified by report authors most often pointed to research norms ascribed by the theory-driven approach, for example, measuring all programme outcomes and mechanisms of action, ensuring internal validity and demonstrating causation or attribution. These limitations were often raised regarding studies in which the evaluation aim, and selected approach, would not warrant these norms, such as participatory or action research studies. This demonstrates how the imprint of theory-driven evaluation shapes the values and norms of the evaluation field, in particular, the need to demonstrate causality and therefore change.

This also highlights how competition and incentivisation influence theory-driven evaluation. Under neo-liberal ideology, public sector funders prioritise changing conditions over other logics typically upheld by social sector organisations (Parsell et al., 2022). Ruff’s (2021) analysis of the visual elements in theory models reveals a consistent undervaluing of stability and care in favour of attributable change. This constrained framing of the value of charitable sector programmes can lead to mission drift as organisations focus on upward accountability to funders rather than the needs and preferences of beneficiaries. In our sample, theory-driven approaches were applied to several programmes where causal change models may not have been centrally valued by participants. One such case was an Indigenous programme aimed at providing community and cultural connection for young people. Therefore, to meet upward accountability demands, social programmes are compelled to either articulate their intent through causal illustrations or genuinely shift their objectives to produce causal change. As a result, programmes that aim to provide care without directly altering participants, and that have not conformed to these norms, have likely been gradually de-funded and phased out of the sector. Considering the adage ‘what gets measured gets managed’, we must examine the impact of theory-driven evaluation as an infusion of empirical and neo-liberal values on evaluation findings and programme directions, as well as reflect on the impacts that may have already transpired.

Study limitations and future directions

Despite considerable previous efforts dedicated to offering guidance and examples of evaluation practice, there has been a limited amount of scholarship documenting applied methodological practice (Arena et al., 2015; Corvo et al., 2021; Kareithi and Lund, 2012). To overcome this, we employed a naturalistic review which focused on a specific set of published reports. This approach has proven valuable in uncovering insights into the otherwise hidden space of applied programme evaluations. However, with this foundational view now established, future research should continue to build upon it. We can foresee two promising avenues for further exploration.

First, there is a need to examine the decision-making processes of evaluators and evidence consumers during the commissioning and execution of evaluation. This would involve gaining a deeper understanding of the selection of evaluation approaches, and its subsequent influence on methodological design. Embedded methods such as ethnography hold promise for shedding light on these intricate processes.

Second, there is considerable scope for expanding this study by harnessing emerging methods such as natural language processing (NLP). By leveraging machine learning and data mining techniques, NLP can facilitate the analysis of a substantially larger set of evaluation reports. Moreover, employing more powerful topic modelling methods may reveal nuanced patterns that may not have been evident in this sample. However, while technology can enhance the scale and efficiency of analysis, human interpretation and classification of meaning remain indispensable. Therefore, the content of this article may assist in formulating exploratory models and hypotheses.

The theories applied in the interpretation of our naturalistic sample offer several practical implications for addressing tensions within the field. Isomorphism of evaluation practice emerging from pressures for conformity has led to the imprinting of values, norms and patterns in ways that are often incongruent with, and counterproductive to, the aims of evaluation. Such pressures can typically be classified as coercive, mimetic or normative (DiMaggio and Powell, 1983).

Coercive isomorphism may manifest in requirements imposed by funders, such as mandating the inclusion of programme logic models in tendering documentation (Reinertsen, 2022). Therefore, funders should reassess the appropriateness of such requirements among different charitable sector models.

Normative isomorphism, on the other hand, may arise from professional training standards and formal education that emphasise elements of theory-driven evaluation to emerging evaluators. Similarly, mimetic isomorphism occurs when actors imitate practices of other legitimised actors in their environment. To address these issues, increased awareness of the pressures favouring theory-driven evaluation is encouraged. Moreover, a better understanding among professional bodies and evaluators in the field of the counterproductive impacts that can result from these pressures may help mitigate the homogenisation of evaluation practices.

If we may offer a final note, although the fifth wave of evaluation approaches was evident within our review, and the hybridity of evaluand funding models would warrant its presence, we observed a potential sixth evaluation wave. Specifically, a digital era of evaluation may be emerging as indicated by several evaluations employing automated digital platforms to collect, analyse and report both quantitative and qualitative data. The exploration of digital measurement and its associated risks in the charitable sector has gained recent attention in the field of social accounting (see Cordery et al., 2023). However, building on the findings presented here, we can reasonably anticipate that the values, norms and patterns currently imprinted within evaluation will not be washed away by a digital wave. Rather, digital platforms will likely absorb and perpetuate norms through automated and accelerated processes. Hence, it is crucial to be mindful of the values being reinforced through current evaluation practices, particularly those that support a top-down perspective on the value offered by charitable sector programmes.

Conclusion

This study has shed light on the landscape of applied evaluation practices within the charitable sector, positioning them within the current fifth wave of evaluation diffusion, influenced by the increasing involvement of private sector and civil society players. Our analysis reveals that the imprint of theory-driven evaluation, introduced in the 1980s, has persisted and continues to shape contemporary evaluation practices, albeit with adaptations to accommodate neo-liberal governance values. Overall, this study provides insights into the enduring impact of theory-driven evaluation on the field, raising important questions about the normative pressures and their potential consequences in the ever-evolving landscape of evaluation.

Supplemental Material

Supplemental material, sj-docx-1-evi-10.1177_13563890241267729 for Imprinting and the evolution of evaluation: A descriptive account of social impact evaluation methodological practice by Elizabeth-Rose Ahearn and Cameron Parsell in Evaluation

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research was funded by the Australian Research Council’s Centre of Excellence for Children and Families over the Life Course (Project ID CE200100025).

Data availability statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Supplemental material

Supplemental material for this article is available online.
