Abstract

Theory-based evaluation has undergone changes over time, adapting to challenges and aligning with trends that emphasise increased participation and complexity. However, integrating both high participation and complexity in programme theory does not consistently lead to productive outcomes in intricate systems. This article introduces contingency-oriented questions to assess the appropriate balance of complexity and participation in theory-based evaluation. Using a case study focused on the Accelerating Achievement for Adolescents Hub, a Global Challenges Research Fund initiative, we demonstrate the practical application of these questions. Our analysis highlights why efforts to elaborate a more complex programme theory were unsuccessful and explains the rationale behind concluding the project with a theory characterised by more moderate complexity and participation.

Introduction

Over more than 50 years, theory-based evaluation (TBE) has established itself as a prominent school of thought in evaluation. Although it comes in many forms under several umbrella terms (logic models, programme theory, theories of change, realist evaluation, contribution analysis, and more), a common core can be identified: a belief that some explication of the logical links between inputs, processes, outputs and outcomes is critical for evaluation, and that such an explicit theory or model is also useful for programme design, monitoring, management decisions and learning.

TBE has thrived over the years by adapting to challenges. Two trends are particularly significant. The first is the increasing involvement of stakeholders in the development of programme theories. The underlying assumption is that stakeholders can contribute locally relevant knowledge, enhancing the ownership and relevance of evaluation findings and, in turn, their use.

Another important development is a trend towards more complex programme theories. One underlying argument is that mono-causal ‘pipeline’ programme theories are too simplistic, and that today’s global problems cannot be solved without attention to complexity, interaction, feedback loops and uncertainty. Advocates argue that tackling contemporary issues effectively requires programme theories that embrace systemic change and dynamic responses, and that only through such an approach can TBE be made relevant to the most urgent problems of today.

While we acknowledge and sympathise with this agenda, our support is tempered by our experiences as advisors and researchers in the field of evaluation. Stakeholders often find complexity overwhelming. Contrary to expectations, we have also observed that although developing complex programme theories required more time, the resulting theories were not necessarily used more effectively or extensively than simpler ones. This experience underscores the value of coherence in programme theories, and the merit of the recommendation that programme theories should be ‘as simple as possible’ (Funnell and Rogers, 2011: 70). It also challenges the assumption that greater complexity equates to enhanced effectiveness or understanding.

A comprehensive examination of the appropriateness and practicality of complex programme theory has become imperative because of three significant knowledge gaps within TBE. First, there is little empirical evidence that evaluations employing complex theories actually succeed. Practical experience indicates that programme theory is often applied inconsistently throughout the evaluation process (Funnell and Rogers, 2011: 50). Furthermore, research shows that the programme theory presented in the early stages of an evaluation is frequently not rigorously employed as the theoretical foundation when interpreting findings and formulating recommendations (Coryn et al., 2011). In light of these challenges, the benefit of introducing greater complexity into programme theory remains uncertain.

Second, the interest in complex theory has grown concurrently with a focus on participatory approaches to theory development. While participation may contribute to complexity, it is less certain whether complexity, in turn, enhances participation. The nature of the relationship between the two warrants scrutiny and should not be assumed.

Finally, insufficient knowledge exists about the contingencies that determine the success or failure of complex theory. Here, we refer to the classical differentiation between focusing devices and contingency devices (Shadish et al., 1991). While a focusing device is believed to be generally applicable across circumstances, a contingency device explains how specific situational conditions necessitate a particular activity or approach to evaluation. We propose that a contingency-oriented approach facilitates an unbiased exploration of potentially relevant factors that may or may not support programme theories of varying degrees of complexity.

This article is organised as follows. First, we situate our discussion within broader trends in TBE, examining both promising developments, such as participation and complexity, and the limitations and unresolved issues in TBE reflected in the existing literature.

Second, we outline contingency-oriented questions that we posit are valuable for determining the level of complexity appropriate to a given situation in TBE.

Third, we delve into a case study: the Global Challenges Research Fund (GCRF) Hubs, specifically focusing on the Accelerating Achievement for Adolescents Hub. This case was considered a ‘most likely case’ for complexity due to its global ambitions, multiple interacting work packages (WPs) and complex operational environments. However, despite these factors, a programme theory of limited complexity was chosen as the optimal approach after several attempts at theory development.

Finally, we provide a summary and engage in a discussion of our findings.

Trends in TBE

In this section, we discuss two trends in TBE, one towards more participation and one towards more complexity. We argue that the relation between the two is complicated, non-trivial and not fully understood.

At their most fundamental, definitions of programme theory have emphasised simplicity, plausibility and logical coherence. Bickman (1987) defined programme theory simply as ‘[T]he construction of a plausible and sensible model of how a programme is supposed to work’ (p. 6), whereas Chen and Rossi (1992) defined it in terms of ‘What must be done to achieve the desirable goals, what other important impacts may be anticipated, and how these goals and impact would be generated’ (p. 43).

An important characteristic of a good programme theory is the extent to which it is perceived as relevant and useful (Funnell and Rogers, 2011). Involving diverse stakeholder perspectives can make the theory richer (Dahler-Larsen, 2018) and connect TBE with broader democratic perspectives (Hansen and Vedung, 2010). Collaboration around theories of social change can also pave the way for new strategic partnerships that make social interventions more effective. Stakeholder consensus building is thus seen as central to theory generation, with Chen (2005) defining programme theory as ‘a set of stakeholders implicit and explicit assumptions on what actions are required to solve a problem and why the problem will respond to the actions’ (p. 15). Donaldson (2007) similarly emphasises how in the TBE process, ‘evaluators typically work with stakeholders to develop a common understanding of how a programme is presumed to solve a problem’ (p. 39).

Another clear trend in TBE is towards complexity. Concepts such as interconnectivity, feedback and emergent properties have been mobilised in attempts to let evaluation theory and evaluation approaches benefit from systems theory (Patton, 2011). This has frequently meant a departure from simple linear models in favour of more complex ones. The challenge for TBE is then to decide whether the programme theory models we use should also reflect this complexity (Rogers, 2008: 29).

We define complexity in terms of the number of factors in a programme theory and the number of variations in the nature of, and links between, these factors. The literature typically distinguishes between complicated programmes, characterised by many interconnected elements operating either concurrently or sequentially, and complex programmes, which face the additional challenge of accommodating emergence, non-linearity and surprise within their programme theories (Funnell and Rogers, 2011).
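As a rough illustration of why complexity, defined in this way, can grow quickly, suppose (purely as a simplifying assumption of ours, not a claim from the literature cited) that any factor in a programme theory could in principle be linked to any other factor in either direction. For a theory with n factors, the number L(n) of potential directed links would then be:

```latex
L(n) = n(n-1), \qquad L(5) = 20, \quad L(10) = 90, \quad L(20) = 380
```

On this assumption, doubling the number of factors roughly quadruples the number of potential links that stakeholders might need to discuss, before any variation in the nature of those links is taken into account.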

Although we accept the theoretical distinction between complex and complicated programme theories, in practice both can present formidable challenges. Whether the evaluator perceives the situation as complex or complicated, they must still decide whether the programme theory should reflect that complexity (Rogers, 2008: 29). Moreover, in practice, both complex and complicated contexts often lead to protracted, labour-intensive revisions of the programme theory as stakeholders attempt to capture the programmatic landscape accurately (Chapman et al., 2023). Such efforts may be of questionable value, given that genuinely complex systems typically achieve a semblance of coherence only in hindsight (Patton, 2015: 422).

What is the relationship between these two trends, participation and complexity? Rossi et al. (2004: 162) describe a somewhat idealised process in which the two come together, with successive engagements culminating inevitably in consensus building: ‘the evaluator must interact with the programme stakeholders to draw out their implicit programme theory, that is, the theory represented in their actions and assumptions’, and ‘this process continues until the stakeholders find little to criticize in the description’. This is in keeping with Birckmayer and Weiss (2000), who describe an evaluator as

[Asking] programme planners and programme managers, reviewing existing theories in the field, reviewing previous research and evaluations, hypothesizing a theory on the basis of this information, and then negotiating her formulation with programme managers and staff to come to an agreement that accords with their thinking (pp. 426–427).

None of these process descriptions mentions divergences among stakeholders, or the frequent need for the evaluator to keep differing stakeholder views apart. The evaluator is assumed to emerge from the process with a single, unified programme theory. This is often not the case when diverse stakeholder participation is meaningfully incorporated into theory building. Moreover, experienced evaluators find that stakeholders may spend most of the time set aside for planning an evaluation discussing a limited corner of a programme theory (Funnell and Rogers, 2011: 143). Reworking a programme theory can strain the collective cognitive capacity of stakeholders, and the time it takes ‘can easily expand to a level that threatens the practicality of doing so and engenders frustration and disengagement of stakeholders’ (Funnell and Rogers, 2011: 144).

Broad participation may widen the base of practical knowledge on which programme theory draws. It may lead to the discovery of interesting interconnections and feedback loops. Participation may breed complexity, and complexity may in turn increase the need for coordination and participation. However, participatory research shows that when more diverse stakeholders are deeply involved, greater conflict and unpredictability may result (Cousins, 2003). Broad participation may also narrow the prospects for consensus on programme theory components that are acceptable to all. With many views and interests on board, it may be harder to agree on goals and values, as well as on assumptions about causal pathways.

At times, political authorities start from a predetermined programme theory for a given policy. The same may be true of a private funder. Some stakeholders may be so deeply engaged in practical commitments and tasks that they find it difficult to allocate substantial time to theory-making. This diversity of perspectives, constraints and preconceptions across stakeholder groups underscores how difficult it is to synthesise a programme theory that accommodates the backgrounds and commitments of everyone involved in policy development and implementation.

While constructing some form of programme theory is often an obligatory passage point towards funding, the factors mentioned above may help explain why the apparent consensus behind an official programme theory can be shallow (Dahler-Larsen, 2018: 12). As long as these fundamental problems remain in TBE, more complex programme theory may in itself be of limited help. What is needed is perhaps ‘not a “correct” conceptualisation, as one that could be understood by all participants and that would facilitate action rather than paralyze it’ (Simon, 2019 [1985]: 166).

Situated action, situated programme theory

Before turning to contingency-oriented factors that may better guide evaluators on when and why a more or less complex programme theory fits the situation at hand, it is pertinent to unpack the situated nature of action and, as a corollary, the situated nature of theory-making in evaluation. Contrary to a prevailing notion, we argue that the nature of a problem does not inherently determine the complexity of its corresponding programme theory.

The degree of complexity of a given theory about a problem is not determined by reality alone. It is also contingent on interpretative relevance structures, the situatedness of the observer and human sensemaking processes (Weick et al., 2005). Hence, the classification of challenges as either ‘simple’ or ‘complex’ is a human construction. Moreover, full comprehension of a situation is not always a prerequisite for effective action. We act in ‘situated’ ways but are unable to give a full account of all the factors that together constitute ‘the situation’ (Dewey, 1938: 98). Knowledge about causal regularities often guides our practical action, but it does so in the form of heuristics of broad orientation and rules of thumb (Cartwright and Hardie, 2012: 158). Such regularities rarely exhaust everything one needs to know in order to act intelligently in a given local situation (Cartwright, 2013). The concepts we use in situated social action are also more loosely defined and socially negotiated than those of the natural sciences (Cartwright, 2022: 167). Furthermore, in practical life, human attention is a scarce resource. A model or programme theory that explains the most important things in an easily comprehensible way may thus function better than one that attempts to provide full information (Simon, 2019 [1985]: 167). We therefore see the construction and use of causal models in evaluation as a tentative, pragmatic and situated endeavour.

Next, it is crucial to draw a distinction between a problem theory, which outlines the (hypothesised) causes leading to a specific social issue, and a programme theory, which delineates the intended effects of an intervention on the alleviation of that social problem (Vedung, 2017). The two are not the same. Although a well-developed problem theory can undoubtedly inform the crafting of a robust programme theory, it is not necessary for the latter to encompass the entirety of the former. Effective interventions can be designed based on a clear understanding of the intended outcomes, even in the absence of exhaustive knowledge regarding the underlying causes of a social issue. The effectiveness of a programme theory, therefore, lies in its ability to target and address the major contributors to a given social problem, rather than its complexity per se.

Finally, we wish to discuss the relation between complex theory and prompt action. While it is often assumed that complexity necessitates swift action, and conversely, swift action implies a complex programme theory, this is not always the case. Complexity does not inherently demand immediate action, nor does prompt action presuppose a complex programme theory. In fact, Funnell and Rogers (2011: 79) advocate for the adoption of agile heuristics over increasingly complicated logic models in situations of complexity. It is essential to recognise that genuine barriers to prompt action often stem from bureaucratic processes, conflicting interests, and institutional rigidity, rather than the sophistication or simplicity of programme theories. Thus, the challenges lie more in navigating the structural and political barriers inherent in bureaucratic systems and decision-making processes, rather than in the intricacies of programme theory construction.

Contingencies and complexity

Consistent with our view of theory-making as pragmatic and situated, we now turn to specific contextual factors that evaluators may wish to consider when aiming for a high or low degree of complexity in their programme theories. Before we do so, it is useful to recall the distinction between a contingency device and a focusing device (Shadish et al., 1991). Examples of focusing devices include logic models, theories of change and evaluation frameworks, whereas contingency devices involve systematically considering the contextual factors and contingencies that may influence evaluation processes and outcomes. Both have strengths and limitations, and integrating them effectively can contribute to more rigorous and comprehensive evaluation practice. To integrate contingency-oriented approaches into evaluation practice, evaluators must ask questions that reflect the particular characteristics and dynamics of each evaluative context. Hence, we outline below a series of contingency questions that allow the evaluator to examine carefully the conditions under which heightened complexity in a programme theory (i.e. the focusing device) may be both feasible and genuinely valuable.

Our contingency-oriented questions are as follows:

1. In what way does ownership of the programme theory set parameters for theory-making?

2. How many cognitive and organisational resources are available for theory-making?

3. Is there a need for the programme theory to reflect a broad set of values, and perhaps value trade-offs?

4. Is it important to portray potential interactions between programme elements and contextual factors?

5. Is there a need to use programme theory to communicate the central idea of a programme to internal and external stakeholders?

6. Is there a need to use the programme theory to identify clear evaluation questions as a precondition for clear evaluative conclusions?

Regarding 1

A key theoretical distinction centres on the question of who fundamentally owns the programme theory. In a strongly participatory version, or a self-evaluation approach, stakeholders collectively own the programme theory. The evaluator assumes the role of one among them, or of a critical friend working in their service. The primary objective is to use the programme theory as a tool for reflection among the stakeholders themselves, in the hope of enhancing their collective action. Consequently, it becomes critically important for the stakeholders to recognise and acknowledge the programme theory as their own.

In other scenarios, a specific organisation, such as a foundation, may define the programme theory. This occurs, for instance, when a foundation funds various activities among stakeholders and aims to ensure that these activities align with a particular philosophy. In such cases, the foundation may articulate a specific programme theory with operational characteristics, serving as an action-oriented benchmark against which the compliance of project activities is assessed. The programme theory also communicates the broader philosophy underlying the foundation’s grants. Under these circumstances, there may be little need to develop a broader and more complex theory during the evaluation phase, unless the foundation is willing to facilitate meaningful discussion of it.

Similarly, a fairly simple programme theory may underpin a policy determined by an authoritative decision-maker in a political chain of command within a legitimate representative democracy. Here, the bottleneck may reside in how political decisions are made within that political system. The challenge lies not necessarily in the simplicity of the programme theory in isolation, but rather in the social foundations of theory-making rooted in political authority and financial considerations, which cannot be overlooked.

In another version, TBE serves knowledge interests distinct from those mentioned above. The evaluator may, for example, also be a researcher. In that version, the evaluator may benefit from the input of stakeholders, who serve as interviewees to ‘confirm or falsify and, above all, to refine’ the ‘researcher’s theory’ (Pawson and Tilley, 1997: 159). There is thus a hierarchy of expertise, with the researcher ‘at the top of that hierarchy’ (Pawson and Tilley, 1997: 164), and the evaluation researcher has ultimate responsibility for the shape and form of the programme theory.

These different ideal-typical situations, all belonging to the family of TBE, may create starkly different conditions for complexity in programme theory. A key issue is who owns the theory, and next, whose resources are spent on theory construction.

Regarding 2

Understanding whose resources are invested in theory-making is critical. Evaluation timelines are often unreasonably short (Patton, 2008: 406). While an evaluator might prioritise the evaluation during this period, the same may not hold true for the stakeholders involved in constructing a programme theory. If a funder or a political authority asks various stakeholders to participate in an evaluative process, these stakeholders may feel that they are working ‘for free’ if they engage in theory-making beyond the time financed by the funder or authority. As professionals, managers or decision-makers, they may be able to commit only limited resources. Their time is constrained, and attention may be their scarcest resource (Simon, 2019 [1985]). Moreover, learning the specialised vocabulary of systems theory needed to participate in complex theory-making can be time-consuming.

It is reasonable to hypothesise that the time needed to construct a programme theory is influenced both by the number of elements and by the diverse connections between them; discussions around different types of connections can themselves become a substantial part of the process. In essence, complexity imposes time costs. Conversely, simplifying a programme theory with numerous elements into a more straightforward version with only a few key elements also takes time. An evaluator may have distinct interests in complex theory-making as a knowledge construct, beyond the instrumental role that theory plays in the immediate evaluation, and may be willing to contribute additional resources to it. This, however, is contingent on the commissioner providing these resources, or on the evaluator being situated in an institutional setting (such as a research institution) where complex theory-making is feasible and deemed necessary. In the broader picture, it is fair to assume that a more complex theory demands more resources from the stakeholders participating in its construction, and there are, in general, diminishing returns to refining logic models (Funnell and Rogers, 2011: 40). Given limited resources, and given that theory-making should not consume a disproportionate share of the resources allocated to evaluation, there is a compelling argument for a moderately complex programme theory, particularly when the participatory element of theory-making is a priority.

Regarding 3

In situations involving multiple stakeholders, a variety of values may come into play. Stakeholders might be more inclined to identify with a theory that not only reflects their individual values but also encompasses interactions and trade-offs in the relationships between activities supporting different values. An evaluator, particularly one who is also a researcher, may choose to portray multiple values in a programme theory. This approach serves to emphasise how various pathways can be selected to achieve goals, providing a nuanced understanding of the complex interplay between different values and objectives.

Pipeline models typically depict a single activity leading to one outcome (Funnell and Rogers, 2011). While moderately simple models can incorporate various outcomes representing different values, a programme theory is often most helpful when it represents a relatively limited number of values. In cases where values and goals are numerous, conflicting and contested, an evaluability assessment (Smith, 1989) may suggest reconsidering TBE. Instead, it may be advisable to clarify or prioritise values and goals through a deliberative, participatory and democratic process beforehand.

The primary strength of TBE, in our view, lies not in conflict resolution but in exploring and testing causal pathways from activities to impact, which inherently involve values. Therefore, while acknowledging the centrality of values in all evaluations, we argue that TBE is most effective when values are represented in programme theories of low to moderate complexity. This allows a more focused and practical exploration of causal relationships and pathways.

Regarding 4

Portraying such interactions, as in the context–mechanism–outcome configurations of realist evaluation, is valuable, but it is essential to avoid exaggeration. For instance, one might go on to consider the broader contexts that in turn shape context–mechanism–outcome configurations, adding further layers of complexity to the evaluation. This recursive nature of contextual influences highlights the need for a balanced approach to understanding the interplay between programme components and their surrounding contexts in TBE.

Realist evaluation appears most promising and feasible when a limited number of relevant context–mechanism–outcome configurations are deemed critical for desired outcomes. In essence, the ambition is to portray interactions within a programme theory of some complexity, but with the specific goal of capturing a few critical context–mechanism–outcome configurations, rather than attempting to encompass all configurations in their interdependent complexity. Even when illustrating interactions between components internal to a programme, relatively simple models can convey the necessary insights effectively (Dahler-Larsen, 2018).

Regarding 5

A programme theory can serve as a valuable communication device, presenting the main idea of a programme or project in a visually appealing manner. This function is particularly crucial in applications for funding, where an engaging programme theory may play a decisive role. Funding decisions often occur under time constraints, leaving limited time to thoroughly review each proposal. An attractive programme theory is one that effectively communicates a key idea. However, in very complex programme theories where almost every element is interconnected, the key idea may be obscured, affecting the communicative function. This suggests that, unless the audience is already well-versed in complexity theory, the communicative function may favour a lower degree of complexity.

Irrespective of its causal validity, a programme theory can also function as an organisational recipe for prescribed action. In this context, a relatively simple programme theory might convey the necessary guidance more effectively than a complex one.

Regarding 6

One of the primary functions of a robust programme theory is to aid in identifying evaluation questions, which underscores the importance of articulating the programme theory first (Donaldson, 2007: 40). These questions play a crucial role in defining the parameters for clear evaluation results. Importantly, a programme theory should always be used evaluatively, guiding the purpose and direction of the evaluation and the conclusions it draws (Davidson, 2007).

A programme theory of limited complexity may be more effective in providing this guidance. With a more focused set of evaluation questions, the path to selecting appropriate methods and data sources becomes more direct, which in turn supports the argument for efficient resource use made earlier.

While theorising (Pawson and Tilley, 1997) and critical thinking (Buckley et al., 2015) are integral parts of evaluative activity, the ability of evaluation to provide clear answers to empirical questions is a crucial strength that should not be easily relinquished. Falsification of a programme theory, that is, the detection of a ‘theory failure’, is significant in both epistemological and practical terms. As Popper (1963) noted, falsification may be the most decisive process in the advancement of knowledge, and it is a realm where empirical work can make its strongest and clearest contribution. Falsification may not occur as often as hoped: according to the Duhem-Quine thesis, a hypothesis is never tested in isolation but only together with auxiliary assumptions, so practical conditions and contextual factors can stand in the way of outright falsification in a given case (Gillies, 1993). Even so, the ability to falsify a programme theory remains a valuable option for evaluators.

However, it is important to note that falsification is most effective with a simple, testable theory. The application of falsification becomes less clear when dealing with very complex theories. At the very least, the desire to gain insights into complexity should be balanced against the risk of obtaining empirical findings about many different aspects without a clear and conclusive outcome. The challenge lies in navigating this balance effectively in the pursuit of both understanding complexity and delivering meaningful evaluation results.

The vocabulary used by proponents of complex systems-oriented thinking can make it difficult to adopt an evaluative perspective focused on empirical testing. Labelling certain outcomes as ‘uncertain’ is not inherently problematic, since evaluation can help ascertain whether these outcomes occur. The situation is different when outcomes are deemed ‘unknowable’. If the situation suggests a fundamental ontological and epistemological unknowability in the stated outcomes, it is hard to see how evaluation can contribute meaningfully. While critical thinking and the construction of models, even complex ones, can foster learning, caution is warranted with questions of fundamental unknowability. It is therefore advisable for evaluation efforts to allocate resources to questions whose answers can be known (or ‘better known’) over time, since such questions allow empirical testing and the accumulation of knowledge, and so keep the evaluation grounded and practical.

All things considered, the aim of generating clear evaluation findings favours programme theories of limited complexity. Taken together, questions Q1 through Q6 point towards low to moderate complexity in programme theories. In the next section, we present a case study to reflect on how these contingency questions might be applied.

The GCRF hubs – and the case of the Accelerating Achievement for Adolescents Hub

The 12 United Kingdom Research and Innovation (UKRI) Global Challenges Research Fund (GCRF) Hubs are interdisciplinary research initiatives representing a collective planned investment of £200M by the UK government to address development challenges in developing countries, closely aligned with the United Nations (UN) Sustainable Development Goals (SDGs). Our case focuses on our experience of working on the programme theory of one of these 12 Hubs, the Accelerating Achievement for Adolescents Hub (hereafter the Accelerate Hub).

While our primary focus is on the Accelerate Hub, we contextualise our case with data from formal interviews conducted with the evaluation leads of nine of the other 11 GCRF Hubs. These interviews help situate our own experience and allow broader insights to be drawn across Hubs.

Ethics approval for this research was obtained through the University of Cape Town, and all individuals cited as informants provided their informed consent for the sharing of their narratives.

The Accelerate Hub, like other GCRF Hubs, consisted of several interdisciplinary work packages (WPs). Collectively, these WPs aimed to accelerate the progress of 20 million children and adolescents in Africa towards achieving the SDGs. The WPs were led by senior/early-career mentor pairs from various disciplines, spanning three UK and nine African institutions across South, West, East and Central Africa. Although the composition and mandates of the WPs changed considerably over time, the overall programme logic remained as follows:

WP 1: Deliver co-creation, policy impact and capacity-building.

WP 2: Utilise existing open-source datasets to test impacts of combination services across SDGs.

WP 3: Use interdisciplinarity to identify new ways of synergising services, incorporating a number of pilot randomised controlled trials (RCTs).

WP 4: Test potential accelerators co-selected with policymakers and adolescents in RCTs.

WP 5: Test the cost-effectiveness, acceptability and scalability of accelerator synergies.

WP 6: Examine ways to deliver interventions that provide equitable governance, financial and risk management.

The core contextual issue of programme theory ownership set the parameters for theory-making in the Accelerate Hub from the outset. Throughout the extended proposal development and approval stage, the principal investigator and programme manager struggled to balance the need to develop a programme theory against the fact that the project was not yet funded. Partners could not reasonably be expected to commit resources to programme theory development for an unfunded project. A further contextual factor that should have been taken into account was how to lead a programme theory process when the principal investigator was a researcher without formal training in evaluation.

These constraints led to an initial programme theory that primarily reflected the vision of the principal investigator and the programme manager (who had some prior experience in evaluation work), with input from a graphic designer.

The first author of this article joined the evaluation team after the funding proposal had been accepted, at a point when there was an immediate need for additional evaluation capacity. Initial discussions about an evaluation partnership with the African Academy of Sciences had not materialised, so someone urgently needed to step in and deliver a more detailed programme theory and evaluation plan to the funders before their deadline. Ownership remained a central issue at this stage: WP leads were still being brought on board, and time was of the essence. Given this urgency, stakeholder buy-in was difficult to secure, and the necessary revisions to the programme theory were carried out largely in isolation.

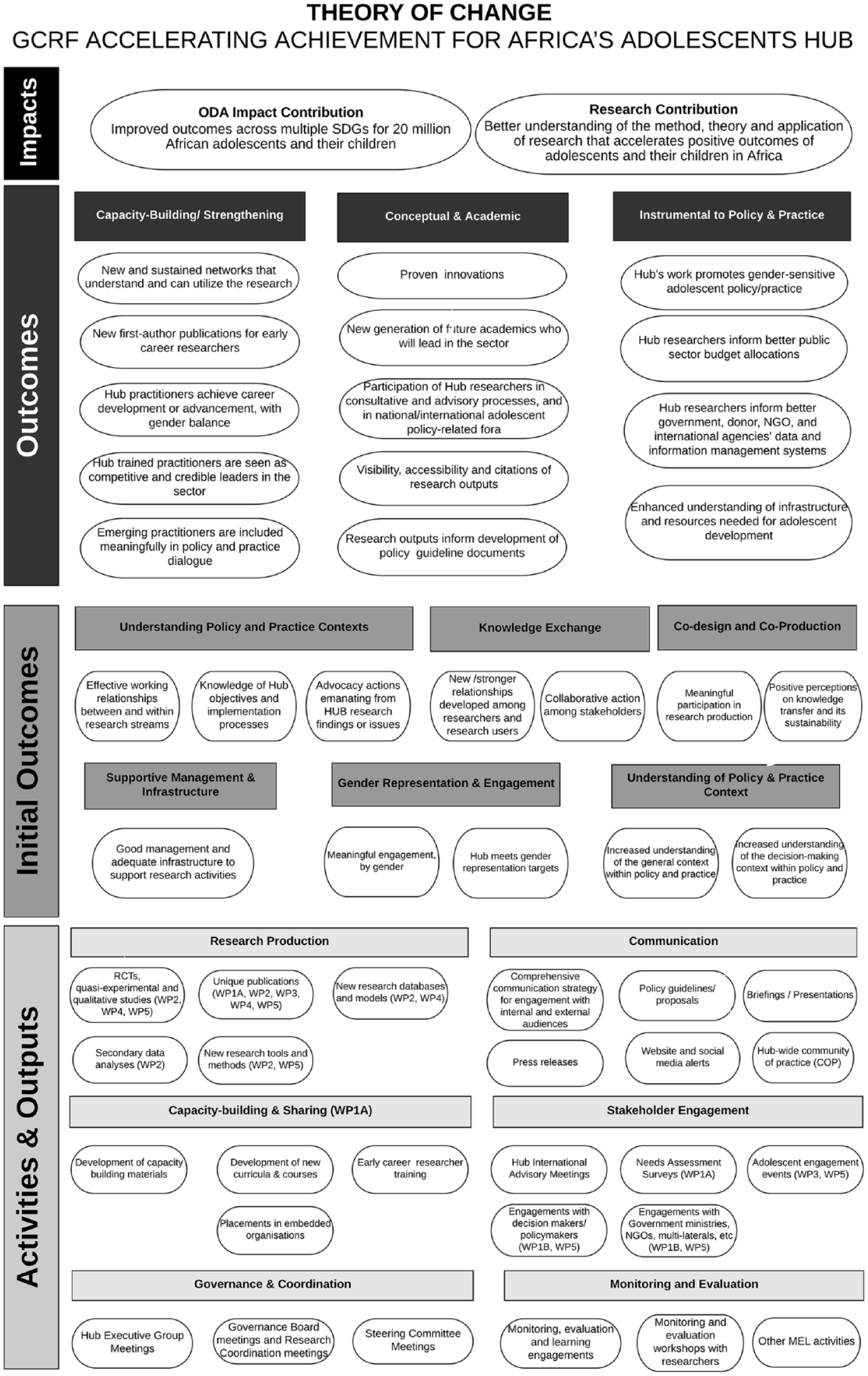

The primary objective was to align the programme theory with what were perceived to be the funders’ expectations, and the role assumed was akin to that of a consultant. The resulting programme theory was shaped by a document review of the WP workplans and the research-for-development evaluation literature, and was heavily influenced by the funder’s requirement to align the programme theory with a logical framework (see Figure 1).

Figure 1. Programme theory for the Accelerate Hub.

During our interviews with other Hub evaluation leads, it became evident that our experience was echoed across Hubs. Evaluation leads in one Hub described how, like the Accelerate Hub, they had an initial ‘head start’, drawing on their prior experience in development evaluation and on programme theories developed for past complex research-for-development programmes, ‘because at that point in the application process we had to put a sort of a straw man of a theory of change’. At a later stage, the Hub did work collaboratively on refining the programme theory, but ‘there was a lot of divergences . . . which was torture to us . . . there was a lot of resistance’. Efforts were also frustrated by the funder, who ‘for some reason seem to forget that they’d already asked us [to elaborate the programme theory] and asked us to do it again’. At this point, ‘I think we had hired a consultant . . . we just didn’t have the time’.

With the funder satisfied with the programme theory as ‘revised’ from the version initially submitted in the grant proposal (Figure 1), the Accelerate Hub experienced a collective sense of relief and resolved internally that it was now time for ‘real’ evaluation work. At this juncture, it would have been useful to take a contingency-oriented approach to deciding how heavily to invest in additional complexity. Two considerations pointed towards a moderately complex programme theory: the limited resources described above, and the principle that theory-making should not consume a disproportionate share of the resources allocated for evaluation. Instead, a decision was made to involve WP leads in developing WP-specific programme theories. The objective was to use this exercise to guide evaluative thinking within each WP, in the belief that it would lead to better planning and more purposeful evaluation and learning for each WP. With hindsight, this belief was misguided.

Simultaneously, the GCRF supported this broader vision of WP-specific programme theories by conducting a series of capacity development workshops for WP leads across Hubs.

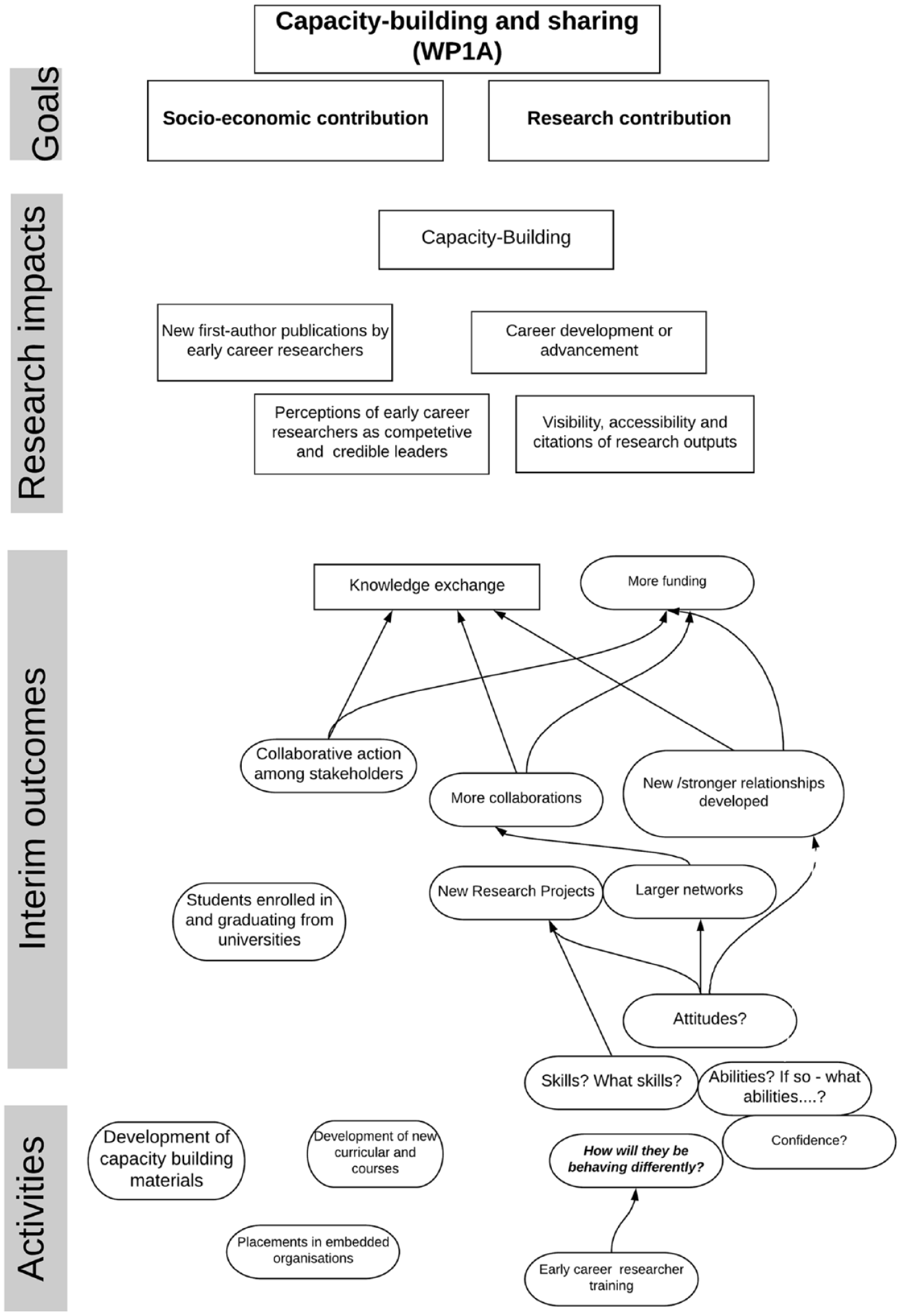

Upon resuming our role in the project, we collaborated with WP leads to expand on and elaborate these initial WP-level programme theories. As evaluation partners, we encouraged WPs to articulate mechanisms and outcome chains aligned with our revised Hub-level programme theory (Figure 1). Despite our concerted efforts, it must be acknowledged that the WP-specific programme theories were not consistently well elaborated, and many remained incomplete. Figure 2 provides an example of a partially elaborated (work-in-progress) WP-specific programme theory, illustrating intricate impact pathways and causal mechanisms that still needed further development. To the best of our knowledge, the WP leads eventually abandoned this initiative as a working tool.

Figure 2. Incomplete attempt to elaborate the WP-specific programme theory for WP 1: capacity strengthening.

Approximately halfway through the project, after several rounds of attempts, we drew on complexity and systems theory to help reconfigure the programme theory. This led to a relatively loosely articulated ‘spheres of influence’ model, in which the Hub’s direct causal impact was confined to the immediate boundaries of its influence.

The resulting theory encompassed a considerable number of activities, results, intended system changes and two broadly defined forms of impact, but it did not specify causal links or feedback loops. This expansive and relatively loosely structured conceptualisation of impact proved liberating and significantly shaped the development of the Accelerate Hub’s programme theory. Although informed by complexity thinking and a broad systems-based approach, this configuration did not attempt the extensive detailing of contextual conditions, diverse causal links, feedback loops and emergent properties typically associated with complex system models. As a result, our final programme theory was characterised by low to moderate complexity.

Discussion of case study in the light of contingency factors

In this section, we reflect on the value of our programme theory building process and on the extent to which introducing more complexity into the programme theory aided the evaluation. We structure this reflection around the contingency-oriented questions introduced earlier.

In which way does the ownership of the programme theory set parameters for theory-making?

A crucial contingency factor that could have been considered earlier was whether the lack of ownership in the initial stages of programme theory development would persist and hinder the development of WP-specific programme theories. Once the revised programme theory had been developed and approved by the funder, the potential advantages of prolonging the process needed to be weighed carefully. Burdening WP leads with an intricate process, which might not prove useful unless endorsed by the funder, called for deliberate judgement.

How many cognitive and organisational resources are available for theory-making?

The uptake of this WP-specific elaboration of programme theory was messy and emergent, driven by factors that are hard to predict and control, such as personality and experience. Informants from some Hubs commented that ‘the younger academics embraced it a lot more’ and expressed some exasperation that prior experience was an unpredictable ally: ‘One of our partners said that they’ve done all this before, but when I looked at the Theory of Change it made no sense to people’. In another instance, the individual, idiosyncratic personalities of stakeholders drove the desire (or lack of desire) for complexity: ‘[some] academics, will all over-complicate! Circles here and circles there–it’s a dog’s dinner, it’s not comprehendible’.

Is there a need for the programme theory to reflect a broad set of values, and perhaps value trade-offs?

Addressing whether WP-specific programme theories needed to reflect a broad set of values, and whose values should be represented, could have been highly beneficial for the Accelerate Hub. In the Hub-level programme theory, reflecting the values of the GCRF funders and adhering to their strategic priorities was clearly a non-negotiable requirement for Hub funding. Within these parameters, developing the initial two programme theories was relatively straightforward. At the WP level, however, the negotiable element was the pathways through which impact could be achieved.

Working backwards from a broad, overall vision of impact to project-level, WP-specific programme theories limited the potential for the emergence and complexity found in the most progressive forms of systems theory. Interviews with other Hubs made it clear that the challenges of elaborating programme theory at the WP level were not unique to the Accelerate Hub.

Is it important to portray potential interaction between programme elements and contextual factors?

Explicitly considering interactions between programme elements and contextual factors may require an expanded programme theory that accommodates the various configurations of mechanisms and outcomes in specific contexts. In the Accelerate Hub’s WP 1, for instance, which aimed at co-creation, policy impact and capacity-building, the complexities of such a programme theory called for caution. Our case illustrates the importance of making these decisions deliberately, to avoid investing significant resources in exercises that may not effectively address complexity (Figure 2). Other options exist. One is to present a very high-level programme theory that is broad enough to encourage collaboration, leaving room for stakeholders’ different and emerging theories of change. Another is to present no causal model at all, but . . . ‘articulate the common principles or rules that will be used to guide emergent and responsive strategy and action’ (Rogers, 2008: 42–43). In practice, these principles might take the form of a series of assumptions or declarative statements about how change will be brought about in the complex system. Such assumptions set some parameters while still allowing for emergence and innovation. Interviews with other Hubs yielded similar reflections: some Hubs were deliberate about portraying interactions, others considered it a lost cause given the perceived complexity, and a third group (the majority) vacillated between detail and simplicity, leaving their programme theory without clear purpose.

Is there a need to use programme theory to communicate the central idea in a programme to internal and external stakeholders?

Another lesson from our experience in the Accelerate Hub was the importance of being explicit about the need to use programme theory to communicate the central idea of a programme to stakeholders. In all the Hubs, funders mandated the programme theory as a communication tool. Some Hubs made a conscious decision to use the programme theory to communicate with external and internal stakeholders, and this proved powerful and useful. For example, in one Hub, the programme manager told us about using the programme theory to establish ‘clarity of mission’ and ‘the bigger picture’. Here, the complexity and emergence of the programme were not an obstacle to simplicity. In fact, the more complex the programme, the greater the need for simplicity. A very simple programme theory helped programme managers in many Hubs navigate complexity and emergence. This was only possible when a conscious decision was made to keep the outcomes of interventions fixed and non-emergent. Programme managers who allowed activities and intermediate mechanisms to vary and become emergent, yet reined in activities when they digressed too far from a fixed target, found this overlay of simplicity upon complexity profoundly useful. In the words of one programme manager, ‘I’ve used it quite a lot and it to bring people sort of back. To make sure that we’re in the same sphere’.

Is there a need to use the programme theory to identify clear evaluation questions and produce clear evaluative conclusions?

Hindsight suggests that a more effective line of contingency thinking could have been to pause and question whether there was a genuine need to use programme theory to identify clear evaluation questions at the WP level. Furthermore, instead of expecting every WP to develop a programme theory, it might have been more beneficial to acknowledge that the utility of this process could vary at the WP level, with some WPs finding it more useful than others. This approach would have allowed for more flexibility and responsiveness to the specific needs and contexts of each WP within the broader programme.

A final contingency to consider is the importance of being explicit about the extent to which reaching a clear evaluative conclusion is necessary. The Accelerate Hub, like many others, grappled with this issue. The GCRF evaluation guidelines required all Hubs to adopt an evaluation approach that allowed them to speak to impact, and impact indicators were required for reporting. However, a realistic sense of how clear the evaluative conclusions could be emerged only in the mid-term review cycle, when the GCRF initiative’s evaluation framework and programme theory were published (Barr et al., 2019). This highlighted the need to make expectations about the evaluative conclusions to be drawn from the programme theory explicit from the outset of the project.

Conclusion

The contemporary world presents highly complex challenges, and a TBE process can offer plausible evidence on critical pathways, with room for surprises. However, we argue against the notion that a programme theory must offer a one-to-one representation of reality. Instead, we advocate evaluating programme theories based on their pragmatic benefits in a specific evaluative context, aiming to be ‘good enough’ for the present.

While intellectual ambitions may call for more complex programme theories to address today’s problems comprehensively, the feasibility and appropriateness of a more complex programme theory hinge on stakeholders’ capacity and willingness to engage in theory-making and the coordinated, collective action needed to realise its full potential. Increased complexity may also lead to controversy and demand exponential resource growth for empirical work.

In situations lacking sufficient resources, commitment or an ideal participatory process, opting for a programme theory of low to moderate complexity may be wiser, serving as a ‘good enough’ solution. A ‘good enough’ response takes into account limited time, limited resources and the needs of sponsors and stakeholders to use programme theory for multiple purposes, such as expressing funders’ values, clarifying common purposes and communicating the key ideas of a given project.

We also advocate a contingency-oriented approach to making sense of our varied observations about the limited success of complex theory-making. In our experience, complex programme theory has not consistently proven productive. This article has sought to identify and discuss situational factors that may support or, more sceptically, count against the adoption of complex programme theory. Our approach combines a theoretical argument outlining potential contingency factors for or against complex programme theory with insights from a case study, contextualised by interviews with evaluation leads of other Hubs.

In conclusion, we view complexity in programme theory not as an inherent goal but as one aspect of evaluation intertwined with other dimensions, posing dilemmas and trade-offs. A contingency-oriented approach enables knowledge transfer across contexts while respecting the diversity of situations in which evaluation takes place. We offer our contribution in the hope that other evaluators and evaluation researchers will find it helpful in their own situational analyses as they seek the level of complexity in programme theory that works best under their specific circumstances.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.