Abstract

Based on interviews and survey data, this article examines the role of civil servants in external evaluation processes. Traditionally, the political–administrative system and the research sector have been seen as worlds apart, with their differences hindering the use of evidence in policy and creating a need for knowledge brokering. Other perspectives take the opposite position, recognizing the mutual influence of policy and research in the coproduction of knowledge. This study suggests that both perspectives are relevant and that, taken together, they could provide a better point of departure for understanding the production and use of evidence in policy.

Research on the use of academic evidence in policy has been more or less explicitly based on the perception of the political–administrative system and the academic world as two communities (Caplan, 1979; Coleman, 1972; Freeman and Sturdy, 2014; Olejniczak, 2017). From this perspective, differences in cultural imperatives, timeframes, and language between the two communities hinder the transfer of evidence into policy. The same perspective is evident in the recent literature on knowledge brokering, which argues that brokers are needed to transfer or translate academic evidence from knowledge producers (evaluators and researchers) to users (for instance, the political–administrative system) (Olejniczak, 2017). However, other theoretical perspectives do not draw clear distinctions between knowledge users and producers, but rather recognize the mutual influence of policy and research in the coproduction (Jasanoff, 2004) of knowledge. This opens up alternative ways of understanding the relationship between users and producers.

The way we understand the relationship between knowledge users and producers is central to how research on the use of evidence in policymaking is framed. It shapes both the questions asked and the explanations given in this field. If we believe that cultural differences between users and producers reduce the use of research and evaluations, we will seek solutions to overcome this division, as is the practice in the knowledge-brokering tradition and in research on the factors that promote or impede the use of evidence in policy. However, if this assumption is wrong or only partly right, we risk losing important insights by narrowing the scope of research.

The article critically engages with dominant assumptions about the relationship between knowledge users and producers, a relationship believed to be central to research on the use of academic evidence in policy. The aim is to add new insights and thereby improve the point of departure for investigating the use of evidence in public policy. The data consist of survey responses from, and interviews with, civil servants in six Norwegian agencies in the field of social policy.

The global trend toward evaluation and evidence-based policymaking (EBPM) takes place within national knowledge regimes characterized by their respective institutional and cultural features (Campbell and Pedersen, 2015). Nevertheless, national cases such as the Norwegian one are relevant for an international audience, because the ideas described could be transferred to other settings, allowing for analytical generalization across borders (Flyvbjerg, 2006; Yin, 2013).

The context

When discussing evaluation in an international context, it is important to bear in mind that this form of knowledge production varies greatly. According to Duffy (2017), UK policy evaluation has been close to economic traditions such as auditing and monitoring, and evaluation in the United Kingdom “. . . is not a manifestation of evidence-driven political decision-making but a reflection of emergent discourses of NPM and ICT”¹ (p. 66). In contrast, the Norwegian evaluation tradition is characterized by the theoretical and methodological influence of social science ideals (Dahler-Larsen, 2013). Researchers from universities or the research sector have traditionally performed most public evaluations, and this remains true in social policy (Høydal, 2019). Terms such as “research,” “applied research,” “reports,” and “evaluations” seem to overlap in the public sector (Kvitastein, 2002). Hence, in a Norwegian context, it is difficult to draw a clear line between applied research and evaluations.

All parts of the Norwegian central administration are required to provide information on their efficiency, achievements, and results by conducting evaluations. These evaluations are to be integrated into plans and strategies along with other forms of knowledge production (Norwegian Government Agency for Financial Management [DFØ], 2016; Tornes, 2013). However, the number of evaluations performed varies significantly across sectors, and external evaluations are especially common in the field of social policy (Askim et al., 2013; Tornes, 2012). Social policy is the core of the Norwegian welfare state, and evaluations in this area could therefore have a great public impact. The agencies are of interest in this context because they play a central role in producing a knowledge base for Norwegian policy (Botheim and Solumsmoen, 2009; Christensen et al., 2014). Norwegian agencies are relatively autonomous professional organizations, structurally separated from the government offices but still under a parent ministry. The relationship between agencies and ministries balances the professional autonomy of the agencies against the political agenda of the ministries and the political regime. The agencies are central in the creation and administration of governmental programs, and they provide expertise and professional advice to the ministries, the public sector in general, the media, and the public (Botheim and Solumsmoen, 2009).

Theoretical starting point and previous research

The idea of knowledge users and producers as representatives of two different cultures entails seeing the differences between the academic and the political worlds as a challenge for the use of evidence in policymaking. For instance, problems are believed to arise because politicians move at the pace of the political world and demand immediate evidence, while the production of evidence follows the slower, long-term logic of academia. What Coleman (1978) refers to as incompatibilities are also found in the contrast between the precision of academic language and the need for policy research, such as evaluations, to be easily accessible to a broader nonacademic audience. The cultural differences are also evident in the very rationale behind knowledge production: academic knowledge production is concerned with theory building and critical thinking, whereas the political–administrative system is interested in relevant, practical knowledge (Coleman, 1972). According to Albæk (1995), the dichotomy between science and policy is closely linked to ideas of legitimate and illegitimate use of evidence in policymaking. Drawing on the work of Carol Weiss, Albæk claims that scientific control is prioritized over democratic adaptation: the instrumental use of research results in policy is seen as legitimate, while other forms of research utilization are judged illegitimate and are neither properly investigated nor recognized.

The idea of two incompatible cultures has resulted in a large body of research seeking factors that could overcome these divisions, the so-called factor-affecting literature (Nutley et al., 2007). Major reviews of such studies in evaluation research conclude that the relationship between evaluators and their clients seems to be the single most important factor in whether evaluation results are used (Cousins and Leithwood, 1986; Johnson et al., 2009). Oliver et al. (2014) found the same tendency when reviewing research on policymakers’ use of evidence-based knowledge in a broader sense. Another factor believed to be important is the potential practical usefulness of the results (Contandriopoulos and Brousselle, 2012; Innvær et al., 2002; Nutley et al., 2007). The methodological quality, language, and timing of the report (Bogenschneider and Corbett, 2011; Innvær et al., 2002; Oliver et al., 2014) and the alignment of the evaluation results with current policy have also been found to increase the use of evaluations and applied research (Innvær et al., 2002; Nutley et al., 2007; Smith, 2013; Weiss, 1998). Although broader contextual or organizational factors are also believed to influence the use of evaluation results, such factors remain less explored in the literature (Alkin and King, 2017; Højlund, 2014; Vo and Christie, 2015).

As previously mentioned, the presumed differences between knowledge producers and users form the basis of the recent knowledge-brokering literature. This literature argues that evidence needs to be “brokered” to overcome the divisions between the academic world and the political–administrative system (Nutley et al., 2007; Olejniczak, 2017). According to Olejniczak (2017), knowledge brokers are organizations or individuals that serve as intermediaries between the worlds of research and policymaking, and analytical units within government could perform such roles, for instance, by helping decision-makers acquire knowledge and translate it into practice.

Although knowledge users and producers may represent different interests and cultures, knowledge production in the form of evaluation or applied research involves dialogue, cooperation, and mutual influence between these groups. By dividing the evaluation process into phases, or rather moments, since these do not necessarily occur in chronological order and are not easily separated, Dahler-Larsen (2006) illustrates how the relationship and the power balance between knowledge users and producers vary over the course of an evaluation. He suggests eight such moments: initiating, framing, organizing, designing, data gathering, analyzing, validating, and decision-making based on the evaluation results.² For instance, as initiators, knowledge users make choices regarding the aim or methodological approach that influence subsequent phases of the evaluation and, therefore, its results. Although external knowledge producers gather the data, analyze the findings, and write the report, knowledge users may also participate in these stages through feedback loops.

Some theoretical perspectives recognize this mutual influence of policy and research in the coproduction of knowledge (Jasanoff, 2004). They acknowledge that the two systems produce knowledge together and that policy is part of knowledge production just as knowledge production is part of policy. The idea of coproduction thus stands in opposition to “. . . the realist ideology that persistently separates the domains of nature, facts, objectivity, reason and policy from those of culture, value, subjectivity, emotion and politics” (Jasanoff, 2004: 3). The evaluation community could be described as representative of this “realist ideology,” eager to uphold the distance between knowledge producers and users and seeing this distance as a guarantee of objectivity and, therefore, quality (Pawson and Tilley, 1997; Tornes, 2013). Within such a framing, knowledge users such as politicians or civil servants discuss values and set goals, while knowledge producers find answers to society’s challenges in the form of objective facts. In this way, science’s influence on political–administrative processes violates neither the basic democratic principles associated with the idea of government (as opposed to the expertization of the public sector) nor science’s own ideals of independent and value-free research (Albæk, 1988). This perspective may explain why the idea of two communities has dominated evaluation research and research on the use of evidence in policy more generally.

In this study, I investigate the relevance of the dominant two-community perspective and the coproduction perspective for explaining the relationship between knowledge users and producers. While the two-community perspective has been chosen because of its dominant position, the coproduction perspective is applied as a contrasting way of understanding this relationship. In addition, I bring in the concept of knowledge brokering, which is seen as a consequence of the two-community perspective. The following analyses are theoretically informed by Dahler-Larsen’s (2006) model of the eight moments of an evaluation process. While previous work has tended to see the relationship between knowledge users and producers as static, characterized by their cultural differences, separating the evaluation process into different moments offers a more dynamic picture. By acknowledging that the relationship between knowledge users and producers varies, the model reveals the dynamics between the two parties at different stages of the evaluation process. This provides a more realistic basis for studying the relationship than static perspectives do. Learning more about this relationship is fundamental for understanding the use of evidence in policy, because it is believed to have a major impact on the use of evidence (Cousins and Leithwood, 1986; Johnson et al., 2009; Oliver et al., 2014).

Methods and data

Methods

The data in this study consist of the results of a cross-sectional online survey of civil servants from six Norwegian social policy agencies and semistructured interviews with 12 civil servants. Both the survey and the interviews concerned evaluations performed by external evaluators. The agencies taking part in the survey were the Norwegian Directorate for Education and Training, the Norwegian Directorate for Children, Youth and Family Affairs, the Directorate of Integration and Diversity, the Norwegian State Housing Bank, the Norwegian Directorate of Health, and the Norwegian Directorate of Immigration.

Survey: Recruitment and response rate

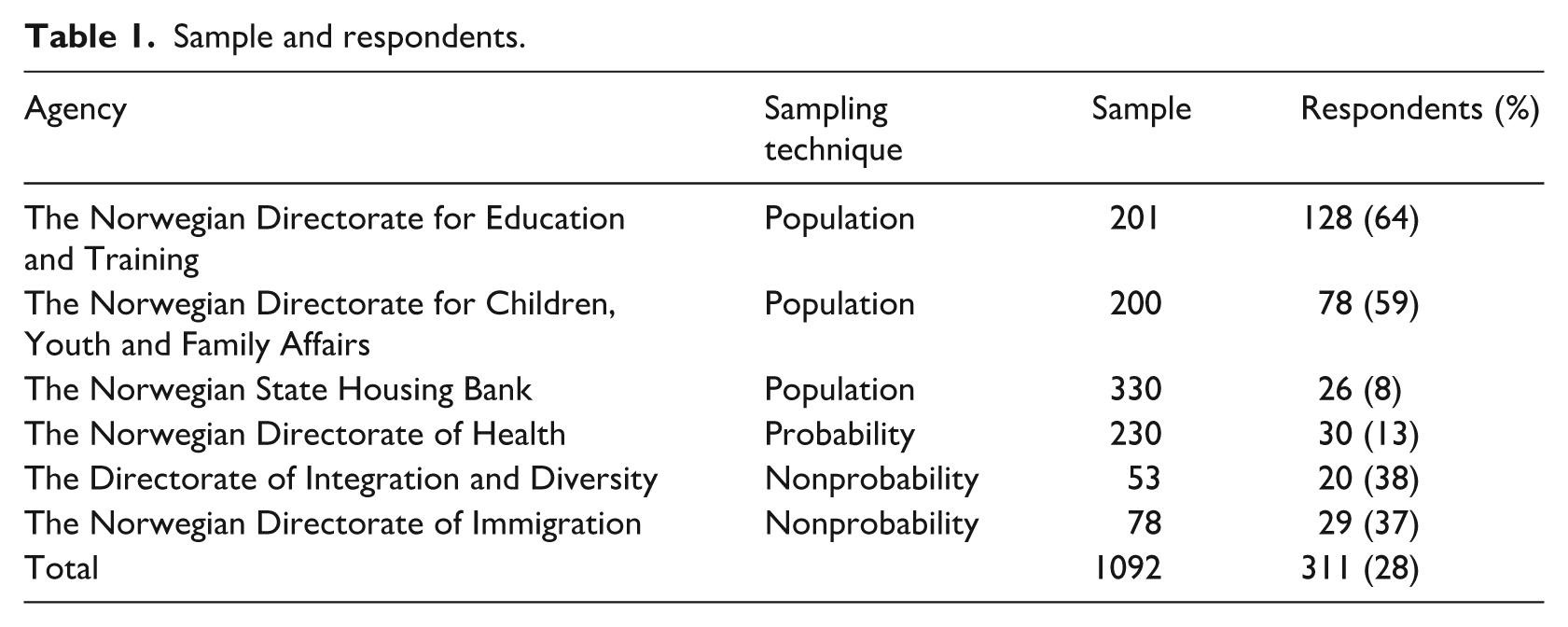

The initial ambition was for all employees of the six agencies to receive the survey invitation, to reduce the risk of missing potential insights from omitted staff members. However, after the initial contact, the sampling procedures were customized for each agency to facilitate participation. In practice, population sampling was applied in three of the agencies (the survey was sent to all civil servants), nonprobability sampling in two (the survey was sent to civil servants considered relevant and available), and probability sampling in one (Table 1).

Table 1. Sample and respondents.

In total, 1092 civil servants were invited to participate in the survey. Potential participants received an email invitation from the Questback online survey software (Questback, Oslo, Norway). The respective populations were later sent three reminders, each of which increased the response rate. Of the 1092 invited, 311 responded, yielding a response rate of 28%. The Norwegian Directorate for Education and Training had the highest response rate (64%). This was also the only agency to discuss its participation at the highest organizational level and to provide formal internal information about the survey. The relatively high response rate of the Norwegian Directorate for Children, Youth and Family Affairs could be due to an internal back-and-forth process before the agency decided to participate. During this process, the survey became known to the directors, who may have informed their employees. In the agencies that selected their own samples (the Directorate of Integration and Diversity and the Norwegian Directorate of Immigration), the invited employees received information from their leaders. The two agencies with the lowest response rates distributed the email invitation without further information. To filter out participants without relevant evaluation experience, the survey began with a question on exposure to evaluations. Consequently, about one-third of the respondents were directed straight to the final section, on personal information.
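The overall response rate above is simple arithmetic on the two totals the text reports (311 responses out of 1092 invitations). The short sketch below reproduces the figure so readers can check the rounding; the function name `response_rate` is illustrative only, and per-agency counts are not given in the text, so only the total is computed.

```python
def response_rate(responded: int, invited: int) -> float:
    """Return the share of invited participants who responded, as a percentage."""
    if invited <= 0:
        raise ValueError("invited must be a positive number")
    return 100 * responded / invited


# Totals stated in the text: 1092 invited, 311 responded.
rate = response_rate(311, 1092)
print(f"Overall response rate: {rate:.1f}%")  # unrounded value, about 28.5%
print(f"Rounded as reported: {rate:.0f}%")
```

The unrounded value is roughly 28.5%, which the text reports as 28%.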

The nonprobability sampling in two of the agencies and the low and uneven response rates leave the results vulnerable to systematic bias. To reduce this risk, I created two datasets. One includes all six agencies, while the other consists of data from the Norwegian Directorate for Education and Training and the Norwegian Directorate for Children, Youth and Family Affairs. These two agencies have adequate response rates (64% and 59%, respectively), and their participants were selected by random sampling. In the text, the results for these two agencies are presented in parentheses after those for the total sample. SPSS Statistics software (IBM SPSS, Armonk, NY, USA) was used to analyze the results.

Interviews

Twelve civil servants were interviewed. Group A (seven informants) was interviewed while the survey was being developed. The aims were to (1) obtain a richer impression of the evaluation activities in the agencies than the survey could presumably provide, (2) use the information from the interviews to improve the survey, and (3) recruit some of the informants to test the survey. Given the timing of these first interviews, the interviewees were recruited through referrals from my own network and from informants already interviewed. Group B (five civil servants) was recruited through the survey. Of the 311 respondents, 18 agreed to a follow-up interview. In the end, however, only five responded to an interview invitation without further nudging.

In total, the two groups of interviewees represented five of the six directorates involved in the survey. The participating civil servants had various degrees of evaluation experience, but the majority had been particularly involved in evaluation at some point and probably had more than average knowledge of this field. Despite their differences, the two groups mainly reflected the same attitudes and experiences regarding evaluation. They were also surprisingly outspoken about the role of evaluations in their own agencies, and their overall positive attitude toward evaluative activity was accompanied by clear criticism.

Survey design

The survey consisted of three parts. The first contained questions on the participants’ most recent evaluation experience, the second concerned their general evaluation experience, and the third covered personal information such as educational background, position, and years of work experience. This approach was used to gain an impression of the civil servants’ perceptions of evaluation activity in general as well as to gather in-depth information about an evaluation the participants knew well. The survey included filter questions to ensure that participants only received questions they were qualified to answer. As a result, the number of participants answering each question varied.

The validity of survey answers depends on the quality of the questionnaire, and testing is important to ensure that respondents understand the questions correctly (Haraldsen, 1999). The questionnaire was tested by five civil servants, two of whom were recruited through the initial interviews, while the rest were professional contacts. The testers gave valuable feedback on the language and structure of the questionnaire, and they contributed ideas regarding alternative responses and additional questions.

Analyzing data

Definitions and operationalization

Before analyzing the findings, the concepts of knowledge producers and users must be defined. While the knowledge producers in this case are the external researchers or consultants conducting the evaluation, the users are a more complex group. They could be civil servants in the agencies, civil servants in the ministries, or politicians. Because I consider them all potential users of the evaluation results, I draw no sharp distinctions between these groups unless they appear to take different positions. My focus, however, is on civil servants in the agencies, who have varying degrees of evaluation experience. At one end of the scale are those frequently and directly involved in commissioning evaluations or in leading and administering evaluation processes. At the other end are those who are only indirectly affected, through the evidence base and professional discourse in the agencies. As previously mentioned, those indirectly affected did not answer the main survey questions but were directed straight to the final section. In the following, I do not distinguish between the remaining groups of civil servants, for two reasons. First, people take on different roles or degrees of involvement from project to project, which makes it difficult to draw clear lines between user groups. Second, civil servants not directly involved in procurement processes or leadership also represent a valuable source of information on evaluation processes.

The evaluation processes are investigated from the perspectives of coproduction and the two communities. Coproduction is understood as situations in which knowledge users are involved in, and affect, knowledge production. Situations in which knowledge users and producers represent different ideals, values, or interests are seen as expressions of the two-community perspective. Where the two groups reflect different cultures, I investigate whether the civil servants in the agencies take the role of knowledge brokers, bridging the gap between knowledge producers and users.

Inspired by Dahler-Larsen’s eight moments of an evaluation process, I have constructed four moments for my analytical purposes. I leave out the last two moments, which involve internal organizational validation and decision-making based on the evaluation results. Although important, these are less central to the purpose of this article. I have also merged the three original moments of framing, organizing, and designing into a single category called planning, because these tasks are difficult to separate in practice and reducing the number of moments makes the presentation more streamlined. The new model consists of what I consider the four main elements of an evaluation process: (1) initiation, when the knowledge users plan what, why, and when to evaluate; (2) planning, when the knowledge users frame the evaluation according to their objectives, preferred perspectives, and methodological approaches and plan the overall project; (3) data collection; and (4) reporting, when the knowledge producers analyze the data and write the report, and knowledge users give feedback until a final version is accepted. It is important to emphasize, however, that these moments overlap and will most likely not follow a tidy timeline.
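The reduction from eight to four moments described above amounts to a many-to-one mapping in which two moments are dropped. The sketch below encodes that mapping as an illustration only; it is not part of the study's method, and the names `EIGHT_MOMENTS`, `TO_FOUR_MOMENTS`, and `analytic_moments` are hypothetical. The assignment of analyzing to reporting follows the definition of reporting given in the text.

```python
# Dahler-Larsen's (2006) eight moments, in the order listed in the text.
EIGHT_MOMENTS = [
    "initiating", "framing", "organizing", "designing",
    "data gathering", "analyzing", "validating", "decision-making",
]

# Hypothetical encoding of the study's four analytic moments.
# None marks the two moments left out of the analysis.
TO_FOUR_MOMENTS = {
    "initiating": "initiation",
    "framing": "planning",
    "organizing": "planning",
    "designing": "planning",
    "data gathering": "data collection",
    "analyzing": "reporting",
    "validating": None,
    "decision-making": None,
}


def analytic_moments(moments):
    """Collapse the original moments into analytic moments, keeping first-seen order."""
    result = []
    for moment in moments:
        target = TO_FOUR_MOMENTS[moment]
        # Skip excluded moments and avoid listing a merged category twice.
        if target is not None and target not in result:
            result.append(target)
    return result


print(analytic_moments(EIGHT_MOMENTS))
```

Because the text stresses that the moments overlap and rarely follow a tidy timeline, the ordered list should be read as a conceptual sequence rather than a chronology.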

The initiation

According to Olejniczak (2017), civil servants take on the role of knowledge brokers simply by helping decision-makers acquire knowledge. Because the agencies are relatively autonomous professional organizations that are central in producing a knowledge base for Norwegian policy (Christensen et al., 2014), their very existence between political interests and external expertise could be understood as a broker position, which should be borne in mind in the following analyses and discussion: We are subject to the Ministry and thus it is in principle the Ministry that decides what is worth investigating further. Our existence as an agency is a positioning exercise. We are a tool for the Ministry and the government, while at the same time by appointing an agency the politicians recognize the importance of academic debates and professional independence. However, when it comes down to it . . . if we’re called in on the carpet. . . (Agency 2, Informant 1)

The involvement of the ministries illustrates the difficulty of separating knowledge production from politics. In the role of initiator, knowledge users have the power to decide what, when, and how to evaluate. Simply by choosing a program for evaluation, the authorities signal the program’s importance or relevance (Dahler-Larsen, 2006). While one agency seems to be directly steered by its ministry to initiate evaluations, the majority reported a more independent role, and the internal processes for initiating evaluations vary across the organizations. However, even though these processes include brainstorming and open meetings, the informants believe that what gets evaluated is often determined by the prestige, debate, investment, or size of the programs in question. According to the survey, 67% of the total sample believe that their agency initiates new programs with a plan to evaluate them (76% in the Norwegian Directorate for Education and Training and the Norwegian Directorate for Children, Youth and Family Affairs). This suggests that evaluation is a result of routine and culture rather than of ad hoc political interests and decisions.

As Kirkhart (2000) points out, an evaluation is most likely the result of a combination of reasons, depending on whom you ask, and the same evaluation could be conducted for different reasons, for instance instrumental, symbolic, or enlightening ones. When asked why their agency conducted evaluations, the most common answer from the informants was “. . . how can we make a decision or a recommendation if we don’t have any knowledge about the case in question?” A senior officer gave the following description when asked about the role of evaluations: In all the work I do, . . . when we make professional recommendations, everything is always based on solid documentation. I cannot write anything based on my own ideas; it has to be based on evidence. All the information available for stakeholders on our website is also based on knowledge from, for example, evaluations. I use both our own evaluations and reports from other parts of the public sector in my own work. (Agency 1, Informant 2)

Another civil servant emphasized the culture of the agency and the various useful aspects of the evidence from evaluations: We are very concerned that our work is evidence based. There is a culture for this . . . and a strong interest among people working here; some love to read new reports. I am not one of those, but if I need to . . . if I am going into a new field, I read reports and discuss them with colleagues. Often the reports confirm stuff we already know, and that is useful. However, I also learn new things, and sometimes the results surprise me. We might have a hypothesis that has not been confirmed, and that’s exciting! We are always looking for new knowledge and new perspectives. (Agency 2, Informant 2)

The survey confirms the impression of evaluation as a central activity in the bureaucracy. The most recent evaluation was read in whole or in part by 95% of all participants (96% of those in the education and family affairs agencies); 82% of all participants had been informed about the results in internal meetings (87% of those in the education and family affairs agencies). In addition, the quantitative data indicate a general pro-evaluation culture: 80% of all participants believed that their most recent evaluation experience led to useful information or knowledge (76% of those in the education and family affairs agencies).

The planning

The planning involves questions concerning the framing and organization of the project. These discussions most likely begin during initiation, but they become more concrete and definitive as the process evolves. The planning also involves commissioning the evaluation, which, according to the informants, is important for the final quality of the report. In Norway, external evaluations costing more than 1,300,000 Norwegian kroner (NOK) (110,337 British pounds) are regulated by the law and regulations on public procurement (https://www.anskaffelser.no/avtaler-og-regelverk/terskelverdier-offentlige-anskaffelser). According to DFØ (2016), most public evaluations fall into this category. The aim of these rules is to create a fair and transparent system in which all service providers have equal opportunities to compete for public contracts. The system strictly regulates contact between knowledge users and producers during the procurement process. The parties are also required to adhere to the project description in the initial announcement and the evaluation assignment. According to the informants, this lack of flexibility can create challenges for the process and makes the wording of the announcement very important.

The agencies use their role as initiators to formulate the evaluation announcement in ways that steer the process in certain directions: The announcement will include a list of aspects to be included in the evaluation. Sometimes we will even include a list of aspects that should not be part of the evaluation, to prevent the evaluators from spending time on planning projects that are irrelevant to current policy. (Agency 4, Informant 1)

In addition, the agencies influence the process through methodological preferences, which are normally part of the announcement of the evaluation assignment. By specifying which questions the evaluators should or should not investigate and how these aspects should be studied, the knowledge users influence the evaluation process. The bureaucracy may therefore be considered a coproducer of the evaluation results. However, in this part of the evaluation process, I also found support for the two-community thesis and for the civil servants in the agencies taking the position of knowledge brokers: Politicians look for positive effects during their own term of government. If they could choose, they would like a very clear answer, with a methodological approach from the top of the evidence hierarchy within a few months. . . . in reality it is give and take. We ask for more time, they want an answer at once. In practice, it often takes four or five months to get an evaluation project started and the evaluators need another year to do the job. (Agency 2, Informant 1)

This senior civil servant indicates that the political–administrative world and the academic world follow different logics. The politicians have their own timeline driven by elections and public debates, whereas researchers and evaluators have to take the time needed to do their work according to academic standards. Between political interests and academic considerations, the civil servants in the agencies take the role of knowledge brokers and seek pragmatic solutions for all parties.

The informants referred to different strategies for responding to political pressure that could harm the quality of the evaluations. The first step would normally be to discuss the evaluation in question with the ministry and try to avoid methodological approaches believed to be inappropriate. Yet, according to the survey, 29% of the total participants (33% of those in the education and family affairs agencies) believed their own agency had initiated effect evaluations in cases where they considered this approach questionable. When the agencies nevertheless end up organizing evaluations with a methodological focus seen as unsuitable, the civil servants try to take precautions: . . . if the effect is believed to be impossible to measure, we could for instance ask the evaluators to find a probable effect. I know it is not the best solution, but. . . We will always be careful when communicating such results from the final report. (Agency 3, Informant 1)

There are several likely reasons for the civil servants in the agencies to take the role of knowledge brokers in these situations: (1) as employees in the agencies, their mandate is to ensure a high-quality knowledge base for Norwegian policy; (2) they normally have better methodological or evaluation competence than the politicians and are qualified to assess these matters from a professional point of view; (3) they are under less political pressure than their colleagues in the ministries and are hence in a better position to discuss these issues; (4) the agency organizing the evaluation must manage the practical implications of inadequate choices; and (5) the agency is also responsible for the final quality of the evaluation and will be the unit blamed if the evaluation is of insufficient quality to be useful.

Although different groups of knowledge users have different preferences regarding the general framing of evaluations, the informants were eager to communicate their interest in critical or alternative perspectives from evaluators: Often we will ask the evaluators to present their own ideas in their offer. If they manage to present a good concept, this illustrates their knowledge about the field and a certain degree of commitment. It might also contribute to new and useful perspectives in the evaluation process. (Agency 4, Informant 1)

Several informants also mentioned that they were not interested in reports “too eager to please.” The findings seem to reveal a level of ambiguity regarding the critical role of evaluations, to which I return later.

The data collection

According to Dahler-Larsen (2006), knowledge producers are likely to be the most influential party in the data collection; however, knowledge users may also play a more active role in the data collection than traditionally assumed. One informant explained how the deliberate selection of cases steered the outcome of an evaluation process in the desired direction. The results were later used as the basis for increased allocations and expansion of the initiative. However, according to my interviewees, this kind of blatant strategizing was not common. Nevertheless, there are more subtle methods of interference: We are seldom involved in the process where the evaluators are doing their job. However, in some larger projects we might arrange seminars where preliminary results are presented and discussed to which we might invite external stakeholders. The evaluators might get useful ideas during these sessions; still, the framing of the projects is bound by contracts. The communication between the agency and evaluators is primarily via written comments on drafts. We do not take part in workshops or listen to the evaluators doing interviews; however, I know that others do. . . (Agency 2, Informant 1)

These examples illustrate that knowledge users can influence, through various forms of interference, even the process of data collection, which has traditionally been seen as the domain of the knowledge producers. This points to a shortcoming in the traditional distinction between knowledge users and producers, and hence between knowledge and policy.

Decisions regarding the aim of the evaluation and the methodological approach taken in the planning stage of the process will clearly influence data collection. A number of my informants expressed concern over their own organizations’ excessive demands for data collection, claiming that the agencies “expected research while paying for evaluation.” These excessive demands could include many case studies or data from a combination of resource-intensive methodological approaches. The challenges could also result from the abovementioned mismatch between politicians eager to show the effect of a certain program and the methodological premises for evaluations. Reflecting on the burden on knowledge producers, the civil servants seemed to take the role of knowledge brokers, communicating insights into the world of the knowledge producers as well as their own.

The reporting

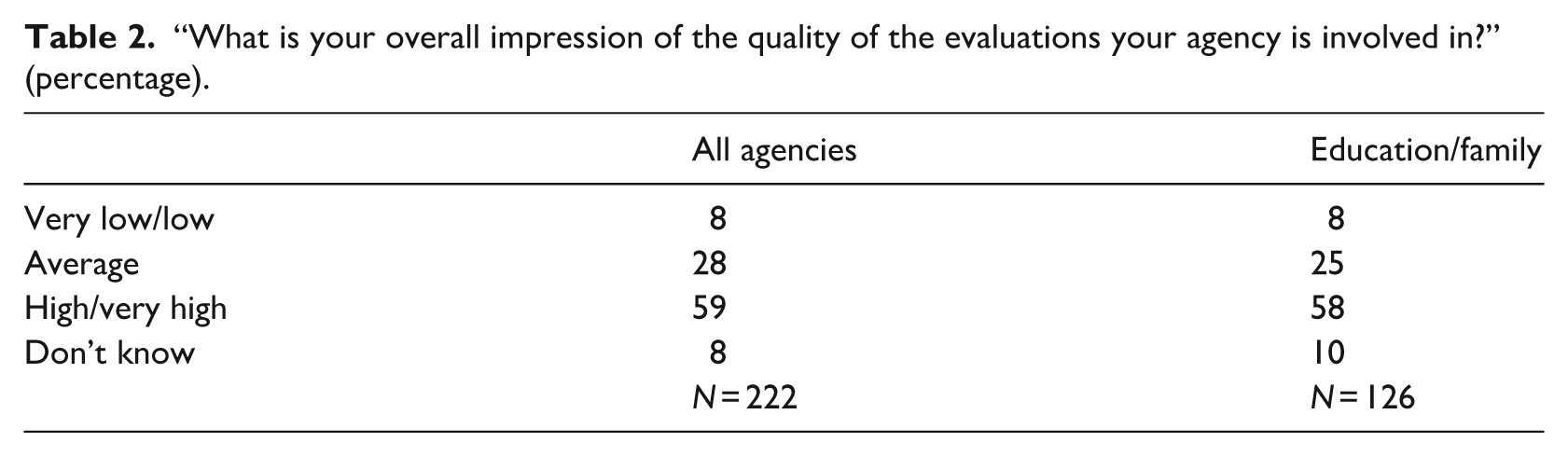

In the reporting phase, the knowledge producers systematize the data, analyze their findings, write a draft, and send it to the knowledge users. This is the knowledge users’ chance to influence the final evaluation report: “We get the picture when we see the draft. At the moment, I’m involved in a project where the first draft was not good enough, so I’m quite tense about the next version” (Agency 3, Informant 1). This quote reflects the level of influence the knowledge users have in this phase of the process. Although most survey respondents consider the evaluations their agencies are involved in to be of high or very high quality (Table 2), all drafts receive feedback. They are critiqued on every aspect, from language and presentation to the number of interviews presented, and for containing “shallow analyses” or “. . . lacking a deep understanding of the subject and the context.”

Table 2. “What is your overall impression of the quality of the evaluations your agency is involved in?” (percentage).

While discussions regarding aspects such as the number of interviews or deadlines may be easily resolved, managing conflicts over more subjective aspects, such as the framing of the analyses or the conclusion, was seen as complicated. On some occasions, the mismatch between the final report and the agencies’ expectations led to intense negotiations or conflict. One of the informants had experienced evaluators who did not accept the framing of a project and criticized the policy in a specific field in general: “If evaluators write the report as if they believe the Norwegian policy in this field to be altogether wrong, then their reflections will be of no use to us. Their political opinions or mine do not matter” (Agency 3, Informant 1). This comment reflects an interesting paradox. On one hand, it supports the idea that policy and evidence are separate fields and that evidence production should not be influenced by political opinions. On the other hand, it illustrates that evaluation is exactly the opposite: knowledge produced within a certain political framing, where critique of the system is not welcome. In this way, the civil servant illustrates why the two-community and coproduction perspectives are both relevant in discussions of the role of evidence in policy.

Although disagreements between knowledge users and producers sometimes arise, they seldom lead to termination of the evaluation process: The internal threshold for claiming breach of contract is very high. In legal terms it is also very complicated. We are very careful regarding this. We do not want to be seen as people who try to control a research process. In fact, maybe we are too careful. After all, we administer the money of the society. (Agency 2, Informant 1)

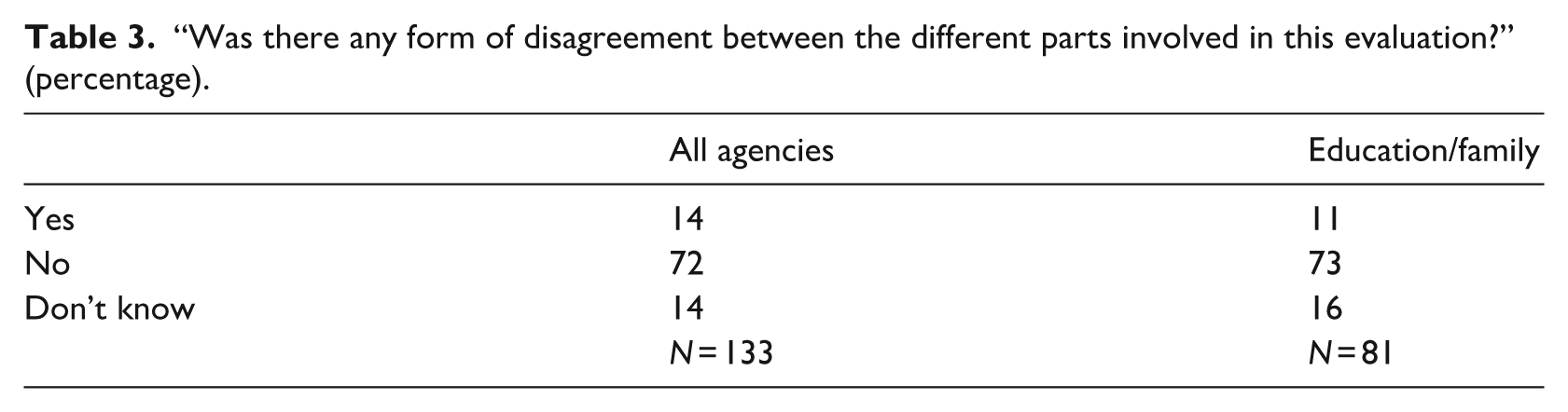

According to the civil servants taking part in the survey, conflicts are not a problem in evaluation processes (Table 3). However, the literature suggests that evaluators may practice a degree of self-censorship, which could explain why conflicts seldom arise (Furre and Horrigmo, 2013).

Table 3. “Was there any form of disagreement between the different parts involved in this evaluation?” (percentage).

Sometimes, the agencies involve external consultants to ensure the scientific quality of their reports, and one agency had a permanent scientific panel: We have a permanent panel of scientists reading drafts. They make sure the evaluations have been carried out properly and check whether the analyses are reasonable, etc. It is a form of feedback similar to the peer review system. We, for our part, make sure they deliver the report on time and we handle the financial aspect. We also have lawyers who give feedback if, for example, the researchers use the wrong legal terms and so on. We give a sort of technical feedback. . . (Agency 2, Informant 1)

The quotes indicate a fear of being seen as controlling the process of knowledge production and reflect the ideal of knowledge production and policy as separate domains. By involving a professional third party, the agency avoids the uncomfortable role of criticizing the academic level of the evaluation draft. Instead, the agency can concentrate on formal aspects, such as budgets and deadlines. It is probably difficult for the knowledge producers to reject criticism from the scientific panel, which in this case acts as an objective third party. However, it is reasonable to assume that the members of the scientific panel are not the most eager academic critics of current policy.

Regarding the form and language of the drafts, the informants’ remarks clearly reflected the two-community hypothesis. One informant wondered “. . . why after 15 years in the job evaluators still do not know how to write a summary?” These reflections were elaborated by a civil servant from another agency: The quality of the executive summary is important. Politicians will just read the summary. However, we get a lot of . . . for instance, sometimes the quality of the report is great. Inside the report, there is plenty of great stuff, but this is hardly mentioned in the summary. . . . We have to make sure that the summaries are good, but should we rewrite the summaries when we pay others to do the job? We spend enough time controlling the report itself. (Agency 1, Informant 2)

If the summaries or the language of the report were considered inadequate, some civil servants would “find their pen and do the job,” while colleagues in the same agency would return the draft and ask for a revision. Such final adjustments seem to be the rule rather than the exception.

Overall, these findings suggest three key insights. First, the results indicate that evaluation is a process of coproduction in which knowledge users and producers together shape the final report. The influence of the knowledge users takes different forms in the various phases of the process. In the initiation and planning phases, the agencies influence the process through the general framing and their methodological preferences for the project. These guidelines have an impact on the rest of the process, such as the data collection, the analyses and, therefore, probably the conclusion. The knowledge users can also be involved in the data collection, for instance, through case selection, participation in interviews, or the arrangement of seminars where preliminary results receive feedback. Finally, during reporting, the agencies comment on the draft report. In some cases, this leads to comprehensive rewriting. By being active partners in the evaluation process, the knowledge users seem to steer the evaluation in directions consistent with current policy. By producing knowledge within the frames of the political regime and existing budgets, evaluations increase their chances of being used and of having an instrumental impact (Weiss, 1998).

Second, some findings support the idea that the differences between the world of the knowledge users and that of the producers create challenges in the evaluation process that may hinder the later use of evaluation results. For instance, the political appetite for quick answers and for evidence of positive effects of current policy could lead to low-quality evaluations that are difficult to use as intended. Problems could also occur if the knowledge producers take the role of critical academics and challenge current policy to a greater degree than the bureaucracy can accommodate. Finally, in the reporting phase, there are clear issues related to presentation. From the civil servants’ perspective, the summaries and conclusions are the most important parts of the evaluation reports, and they wonder why the evaluators never seem to master the art of writing good summaries. However, while inadequate presentation may create extra work for the civil servants, it does not seem to prevent the final evaluation reports from being used.

This brings us to the third insight. When cultural clashes between the academic world and the political–administrative system occur, the civil servants in the agencies seem to take the role of knowledge brokers. From their position between the ministries’ strategic politicians eager for results and the evaluators bound by academic ideals, the civil servants in the agencies negotiate and facilitate the process of evaluation. As professionals, they argue against political demands for inadequate methodological approaches and attempt to persuade the ministries to choose other options. If the summaries of the reports are long and unappealing, they return them to the evaluators or rewrite the summaries themselves. As professionals with academic degrees, they acknowledge that the process of evaluation takes time and resources, and they admit that they sometimes ask for research when paying for less. This reveals a general understanding of the evaluators’ situation, which may lead to a better relationship between knowledge users and producers and a less challenging process overall. Overall, the role of civil servants as knowledge brokers most likely improves the relationship between knowledge users and producers as well as the quality of the evaluations. Both are central factors in the use of evaluation results (Cousins and Leithwood, 1986; Johnson et al., 2009).

Study limitations

Definitions and operationalization

There are a number of limitations in the material presented here. The present study is based on the participants’ perceptions of evaluation use. There is a danger that participants in an evaluation process rationalize their experience after the process has ended and present a more positive image than is justified (Albæk, 1988; Dahler-Larsen, 2000). The civil servants may also be affected by a system that seems to embody an overall pro-evaluation culture. If I had interviewed knowledge producers, they might have presented another perspective on the process of evaluation. From their point of view, the involvement of the political–administrative system may be inappropriate interference and more clearly represent a culture with values and ideals other than academic ones. In addition, they might feel they have to practice a degree of self-censorship in their dialogue with the bureaucracy, because they want to make a good impression in order to win new projects. Ideally, I should have interviewed both groups; however, by interviewing the knowledge users, I learned about their involvement in knowledge production. This information challenges the traditional picture of users and producers as two groups with little mutual interference; therefore, it supplements the research on the relationship between knowledge users and producers. According to Oliver et al. (2014), most studies in this field focus on the perceptions of researchers, and the present study, therefore, contributes a further perspective.

Furthermore, as in all interview studies based on self-recruitment and voluntary participation, there is a risk that the informants represent a group of civil servants who are particularly engaged in and enthusiastic about the phenomenon in question. The survey data reflect the same main tendencies found in the interviews, which strengthens the overall data quality. Nevertheless, the survey participants are not a random sample of civil servants and, combined with a low response rate, this is a clear limitation of the available material. Even so, the survey covers a large group of civil servants in a particular policy area and provides valuable descriptive information about the evaluative activity of the bureaucracy. When the respondents were divided into two groups according to sampling method and response rate, the trends in both groups still pointed in the same direction.

Discussion and conclusion

This article is an investigation of the role of civil servants in the process of initiating and following up public evaluations performed by external researchers or consultants. The political–administrative system and the research sector have traditionally been seen as worlds apart, and their differences are believed to hinder the use of research or evaluation results. Knowledge brokering, in which qualified professionals bridge the gap between the two systems, has been proposed as a solution to this problem (Nutley et al., 2007). The findings presented in this article support these perspectives. However, the data also indicate that despite cultural differences and conflicts of interest, knowledge producers and users are, in fact, both actively involved in the evaluation process and influence the final report, reflecting the perspective of coproduction.

While evaluation research has traditionally seen the division between knowledge users and producers as a guarantee of objective, high-quality evaluations, which are believed to positively affect the use of the evaluation reports, this study suggests a different perspective. In the process of coproduction, knowledge users ensure that the evaluations investigate questions believed to be relevant to current policy. This framing seems to result in evaluations that are considered useful and thus, most likely, to strengthen the evaluation culture of the central administration (Høydal, 2019).

It is important to note that these findings do not imply that the process of evaluation is dictated by the bureaucracy or leads to evaluation reports of dubious character. On the contrary, civil servants use their brokerage skills to ensure the final quality of the reports, negotiating between academic ideals and unrealistic political expectations. However, as civil servants must balance political and professional considerations, the present data indicate that critical evaluations are welcome as long as the critique stays within the bounds of current policy. Thus, even when contributing new and relevant knowledge, evaluations could be accused of being “. . . the extended arm of politics, which examines the world in the language of power” (Meyer and Myklebust, 2002: 14), preserving the status quo rather than fostering innovation and radical change. This illustrates the difficulty of separating knowledge from policy.

By acknowledging the involvement of the political–administrative system in the evaluation process, this article suggests that the concept of coproduction allows organizational factors and contexts to be understood as important explanations of the use of evidence. In this study, central contextual factors include the organizational role and position of Norwegian agencies, the general interest in evidence-informed policy, and the pro-evaluation culture in the central administration. Although these contextual factors appear specific to the Norwegian case, the results are relevant to an international audience, because the perspectives used to analyze the relationship between knowledge users and producers and the model of the ‘moments’ of an evaluation process could both be applied and investigated in similar institutional and cultural contexts, such as the European Union (EU).

From this study, we learn that the relationship between knowledge users and producers is more multifaceted than is often recognized. The study challenges the dominant perspective on the relationship between knowledge users and producers as worlds apart. By questioning this idea, this article addresses the assumption that policy and knowledge are two distinctly different phenomena, a perspective that has dominated evaluation research for decades. Acknowledging the influence of policy on knowledge production should lead to research interest in the process of evidence production and in the nature of the evidence produced for the public sector. In sum, the results indicate that it is time for broader perspectives on central questions concerning the relationship between knowledge and policy. I therefore conclude this article by calling for further research in this area based on perspectives other than the familiar realist and other post-positivist approaches.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by departmental resources.