Abstract

Knowledge brokering is a promising practice for addressing the challenge of using research evidence, including evaluation findings, in policy implementation. For public policy practitioners, it means playing the role of an intermediary who steers the flow of knowledge between producers (researchers) and users (decision makers). It requires a set of specific skills that can be learnt most effectively by experience. The article explains how to develop knowledge brokering skills through experiential learning in a risk-free environment. It reports on the application of an innovative learning method, a game-based workshop. The article introduces the conceptual framework for designing game-based learning. Then, it demonstrates how this framework was applied in the practice of teaching knowledge brokering. Finally, the article discusses the first lessons of game application in training public policy practitioners. It concludes that a game-based workshop is a promising method for learning about knowledge brokering strategies that increase evaluation use.

Keywords

Recent literature on evidence use in public policies argues that bringing credible and rigorous evidence to decision makers is not sufficient; the evidence needs to be “brokered” (Nutley, Walter, & Davies, 2007; Olejniczak, Raimondo, & Kupiec, 2016; Pielke, 2007). Knowledge brokers are individuals or organizations that serve as intermediaries between the worlds of research and policy-making practice (Cooper, 2013; Heiskanen, Mont, & Power, 2014). They steer evidence flows between knowledge producers (researchers, experts) and knowledge users (decision makers, project managers; Armstrong, Waters, Crockett, & Keleher, 2007; Turnhout, Stuiver, Klostermann, Harms, & Leeuwis, 2013). Within government, evaluation or analytical units often perform the role of brokers. They help decision makers in acquiring, translating into practice, and using existing knowledge for better planning and implementation of public interventions. They also contract out or execute new analyses and evaluation studies by themselves.

Brokers are needed because decision makers and researchers are driven by different imperatives and time frames and use different languages and practices (Caplan, 1979; Clark & Holmes, 2010; Wehrens, Bekker, & Bal, 2011). Literature on evidence-informed policies points to “knowledge brokering” as a promising strategy for bridging this know-do gap between research and the decision-making process (Dobbins et al., 2009; Frost et al., 2012; Lomas, 2007; Meyer, 2010; Olejniczak et al., 2016).

Teaching knowledge brokering to public policy practitioners could address the problem of evaluation utilization. However, brokering is a complex issue. It entails a set of specific skills that can be learnt most effectively by experience. Experiential learning is difficult in the public sector. It requires experimentation and learning from actions and, often, from mistakes. This method seems contrary to the interests of public institutions because they are naturally concerned with the costs of errors and risk-taking. It also poses a challenge for educators who train public policy practitioners. We are, thus, motivated to ask, “How can public sector professionals learn how to be knowledge brokers, and learn about the challenges related to this role, without bearing the costs of mistakes that are an inevitable part of the learning process?”

Serious games are games with the primary purpose of teaching or training in a low-risk environment. Their focus on learning and behavioral change is usually contrasted with tabletop or computer games that were developed primarily for entertainment and recreation (Connolly, Boyle, MacArthur, Hainey, & Boyle, 2012; Ma et al., 2011). The term often references video games or games that use some degree of digital or visual techniques. Some authors further distinguish between simulations and serious games, making the former very context-specific, while the latter tends to have an element of fantasy (Charsky, 2010). However, for the purpose of this article, both terms will be used interchangeably.

Serious games appear to offer key features of effective learning: active, experiential, situated, social, problem-based, and accompanied by differentiated and immediate feedback (Connolly et al., 2012; Iten & Petko, 2016). When combined with more conventional training, they can provide a powerful means of knowledge transfer (Ma et al., 2011). Recent overviews of empirical research confirm their effectiveness in teaching (Freitas & Liarokapis, 2011; Kapp, 2012, pp. 75–104).

Over the last 10 years, serious gaming has grown in popularity in the experiential learning of adult professionals. Examples come from almost every application domain: business and management (Faria, 1998; Palmunen, Pelto, Paalumaki, & Lainema, 2013), the military (Oswalt, 1993; Smith, 2010), medicine and health care (Graafland, Schraagen, & Schijven, 2012; Kaczmarczyk, Davidson, Bryden, Haselden, & Vivekananda-Schmidt, 2016; Lane, Slavin, & Ziv, 2001), crisis response (Quanjel, Willems, & Talen, 1998), transportation (Backlund, Engström, Johannesson, & Lebram, 2010; Meijer & Raghothama, 2014), and other public policy and government activities (Harteveld, 2011, pp. 39–54; Michael & Chen, 2005).

In the area of evaluation training, despite calls for incorporating new educational experiences (Dillman, 2013, pp. 280–281), the use of games has been very rare and reported only incidentally (Febey & Coyne, 2007; Patton, 2008). This article addresses this knowledge gap.

This article addresses the question by discussing the application of serious gaming as a promising method of teaching knowledge brokering to professionals.

We endeavor to achieve these aims by (1) providing a conceptual framework for designing a game-based learning experience, (2) demonstrating how this conceptual framework was applied in the practice of teaching evaluation, and (3) summarizing the initial lessons coming from 10 game sessions with 194 public policy professionals and students, conducted over a period of more than 1 year. The article closes with general implications for teaching evaluation with games.

On the whole, this article aims to enrich the domain of evaluation training with insight from experiential learning and serious gaming, a dynamically developing field of adult education. Our hope is that educators and trainers will benefit from the conceptual framework described here and the practical illustration of how to apply a game-based method to teaching a complex public management issue.

Games as Learning Tools

The recent developments in literature on cognition, neuroscience, and andragogy provide us with fascinating insight into human processes of sensemaking and learning. This section brings together those findings and combines them with game terminology to provide a conceptual framework for designing new learning experiences.

Learners, Mental Models, and Concept Maps

The literature on cognition and learning indicates that people—both individuals and teams—learn and organize their understanding of the world through the development of mental models, also called cognitive maps or theories in use (Argyris & Schön, 1995; Johnson-Laird, 2009; Held, Knauff, & Vosgerau, 2006). At the most basic level, a mental model is a representation of how a person or group of people thinks about a certain phenomenon or situation, its operation, and its relationships with the world (Paton & Gregg, 2013).

The practices of education and industrial design introduce an important distinction. Mental models reside in the human mind, thus they are intangible. However, they can be visualized in graphic form. This simplified visualization is called a concept map or conceptual model (Ambrose, Bridges, DiPietro, Lovett, & Norman, 2010, pp. 228–229; Laukkanen & Wang, 2015, p. 13; Weinschenk, 2011, pp. 72–75).

The information and assumptions stored in mental models have three components: descriptive, explanatory, and predictive-prescriptive (Zaccaro & Wood, 2004). Thus, mental models help us to make sense of our surroundings, construct an explanation of how things work, predict future events, and come up with prescriptive algorithms of how things could be influenced.

Mental models can evolve as people acquire new information actively (by experience) or passively (by communication with others). New content can be interpreted to fit with an existing mental model (so-called assimilation) or it can be rejected. However, as Paton and Gregg (2013) point out, this propensity to assimilate information makes mental models quite rigid and difficult to change by communication only.

For educators who are novices at designing learning experiences, this discussion translates into three practical activities: defining the desired learning goals, identifying the group of learners that will be targeted with the training, and being explicit about the mental models that they want to build or alter in the learners’ minds during the teaching process.

Sequence of Experiential Learning

One of the best ways of developing the mental models of adult learners is to expose them to hands-on experience during which their mental models can be altered. This approach, called experiential learning, has been a major learning strategy for adults. It is deeply rooted in basic human “trial-and-error learning,” and it underpins such pedagogical approaches as problem-based learning, inquiry-based learning, and service learning (Glyn, 2014, p. 314). Initially described by Dewey (1938), it was further expanded by other researchers (Boud, Keogh, & Walker, 1985; Schön, 1983) and popularized by Kolb (1984) in the form of the experiential learning model (ELM).

The ELM is driven by the dialectics of action–reflection and experience–abstraction. An adult learner goes through a recursive cycle of experiencing, reflecting, thinking, and acting. A concrete experience triggers reflective observation. This generates an abstract conceptualization assimilated in the form of a mental model. This, in turn, brings implications for actions (the prescriptive part of a mental model) that can be actively tested and guide new experiences (Kolb, 2013). So the ELM is, in fact, a process of constant relearning based on testing and adapting mental models.

Although the ELM has proved to be a highly effective approach for teaching adult learners, it brings a substantial challenge for educators and has certain constraints. When designing a new learning experience, educators need to plan carefully how elements of action–reflection and experience–abstraction could be embedded in the overall learning process. Especially important is how learners can face the consequences of their decisions and verify their mental models based on that experience. Due to the discussed rigidity of mental models, the process of learning does not always follow a smooth cycle. Learners can be exposed to conflict and frustration when their old models are altered, ambiguity is introduced, and they face the prospect of shouldering blame. Furthermore, in some situations, the danger and costs of mistakes are extremely high. In such cases, experiential learning cannot be applied. Finally, learners need to experience the full course of the process being studied, while some real-life processes are extended in time. This can make hands-on experience too time-consuming. These challenges require creative methods, such as games, that make the learning experience safe and show the consequences of learners’ decisions that in real life would come with a long delay (Keeton, 2004).

Principles of Serious Games Design

The literature on gaming and simulations indicates four conditions that have to be met to make games an effective learning experience. These relate to (1) what system the players are immersed in, (2) what the players do, (3) what the players see, and (4) what the players receive.

Games, even serious simulations, are abstractions of reality. Therefore, the first step is to simplify the real-world system that is the subject of the game and translate it into a game design. This means identifying the key actors, resources, and core cause-and-effect relationships we want to portray while removing the extraneous elements and reducing complexity (Brathwaite & Schreiber, 2008, p. 251; Duke & Geurts, 2004, pp. 202–209; Fullerton, 2008, pp. 111–147).

Proper game mechanics and activities must then be in place. This covers the structure of motivators and the rules of interactions between players (e.g., pressure for competition or cooperation) as well as the technical arrangement of the task sequence in rounds. These elements introduce real-life dynamics but also an element of fun (Kapp, 2012, p. 88). Although the community of game designers has not established a unified typology of game mechanics, some useful overviews can be found in the literature (Brathwaite & Schreiber, 2008; Fullerton, 2008) and in online communities (BoardGameGeek, 2017).

The third condition is the aesthetics of the game, that is, what players see. This includes the color and shape of the game board and its elements, visuals on game results, and so on. The physical design of the game should allow players to immerse themselves in the game experience and enjoy its aesthetics (Dickey, 2015, pp. 1–11; Kapp, 2012, pp. 46–48).

The final condition of a successful game is well-structured feedback and proper debriefing. This is what players receive both within and outside of the game. Feedback provides immediate information on players’ performances. It is a direct impulse for reflection and learning during the game. In contrast, debriefing serves the purpose of turning the experiences of players into knowledge and learning beyond the game. This means guided reflection on the mechanisms experienced by players and translation of those observations into reality. A number of authors point out that debriefing is what turns a game into a full educational experience (Crookall, 2010; Kato, 2010; Kriz, 2010).

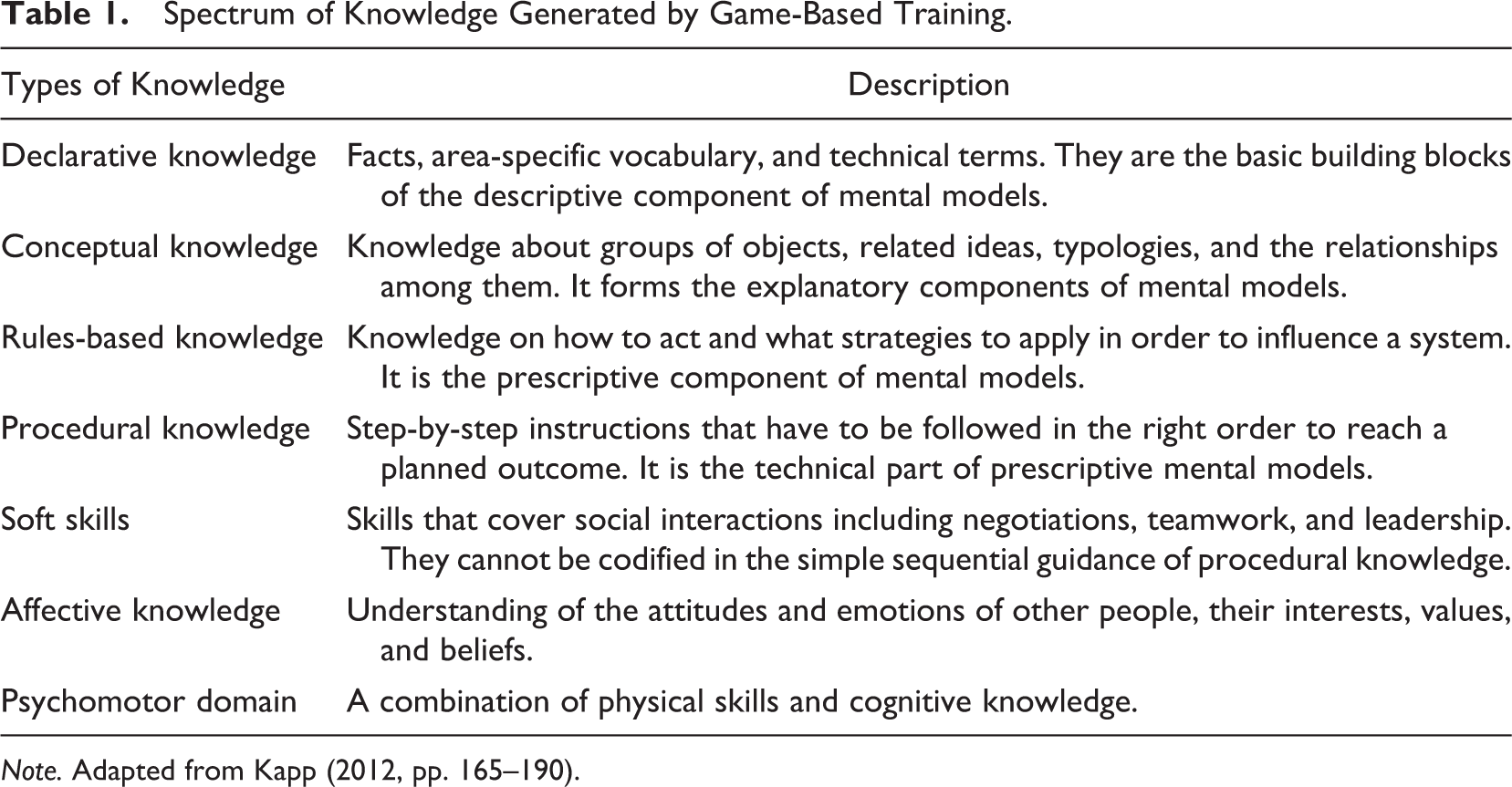

Although not greatly emphasized in the gaming literature, learning objectives are also important in the design of serious games when they are used as teaching tools. Depending on the scope of the game and learning objectives, game-based training can generate a whole spectrum of knowledge types. They have been synthesized in Table 1.

Table 1. Spectrum of Knowledge Generated by Game-Based Training.

Note. Adapted from Kapp (2012, pp. 165–190).

Explicit consideration for the knowledge types that can result from game play has the following three implications for educators and trainers. First, they should define key teaching goals for a specific group of learners and articulate mental models they want to alter or build during the educational process, specifying their descriptive or prescriptive function, and preferably visualizing models in the form of concept maps.

Second, educators should carefully consider how to expose students to actual experience and how to structure their sequence of learning. The crucial aspect to consider is how the game could be nested in the cycle of experiencing, reflecting, thinking, and acting.

Third, when designing the game, educators should address what system the players explore, the game activities and mechanics, the game aesthetics, feedback and final scoring, and the types of knowledge they anticipate.

Practical Application: Knowledge Brokers Workshop

This section presents the case study of the game-based workshop, designed to teach knowledge brokering to evaluation practitioners (www.knowledgebrokers.edu.pl). The demand for the innovative method was driven by the real needs of evaluation practitioners in Poland. The Polish system of evaluation, despite its dynamic development, has been experiencing a problem with the utilization of the studies it produces (Kupiec, 2015; Olejniczak, 2013). The architects of the system from the Ministry of Economic Development of Poland hoped that knowledge brokering could help address the issue of limited use of evidence in public policies. The challenge was to bring this new insight to the busy professionals of the evaluation system and allow them to relate it to their jobs.

A group of experts, inspired by an overview of organizational learning practices in the public sector (Olejniczak, 2015), decided to try a serious game as a method of teaching. The game-based workshop called “knowledge brokers” was designed over a 2-year period by the team from two Polish companies: Evaluation for Government Organizations and Pracownia Gier Szkoleniowych.

The development of the game-based workshop closely followed the three elements of the conceptual framework presented in the previous section: (1) definition of teaching goals, learners, and the concept maps the workshop should promote; (2) structure of the learning experience; and (3) game design. They are discussed in detail in the following sections.

Workshop Goals, Learners, and Concept Maps

The key teaching goal of the knowledge brokers game-based workshop was defined as helping evaluation professionals to build concept maps that address three areas: (1) understanding the system of relationships between research evidence and the policy cycle and the key factors that drive that system; (2) learning six activities of knowledge brokering that can increase the use of evidence in public policy (understanding the knowledge needs of users, acquiring knowledge, feeding knowledge, accumulating knowledge, building networks, and managing resources); and (3) recognizing the limitations of a knowledge broker’s influence in public policy decision-making.

The learners were defined as two target groups. The first group was evaluation professionals, namely, the staff of evaluation units. The workshop could be used to develop and test their strategies for effective knowledge brokering. The second group was potential future staff of evaluation units, students of academic courses on public policy and program evaluation. When used toward the end of their courses, the workshops could provide an opportunity to apply knowledge in practice.

The literature offers an extensive list of elements and relationships between research evidence (including evaluation findings) and public policy decision-making (Alkin & Taut, 2003; Cartwright, 2013; Johnson et al., 2009; Leviton & Hughes, 1981; Nutley et al., 2007; Patton, 2008; Prewitt, Schwandt, & Straf, 2012; Shulha & Cousins, 1997). However, for the material to be understandable to learners, educators need to focus on the key system relationships.

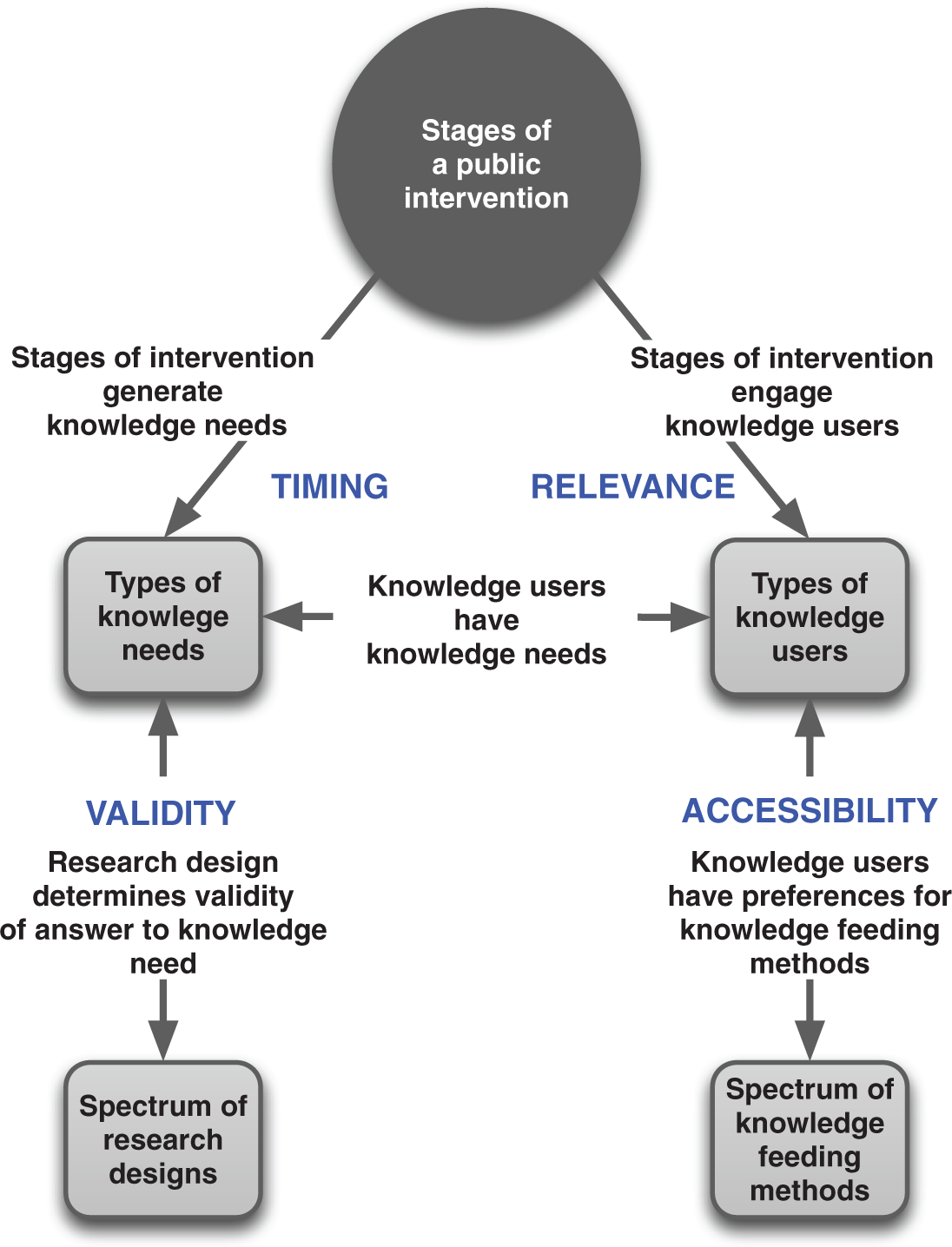

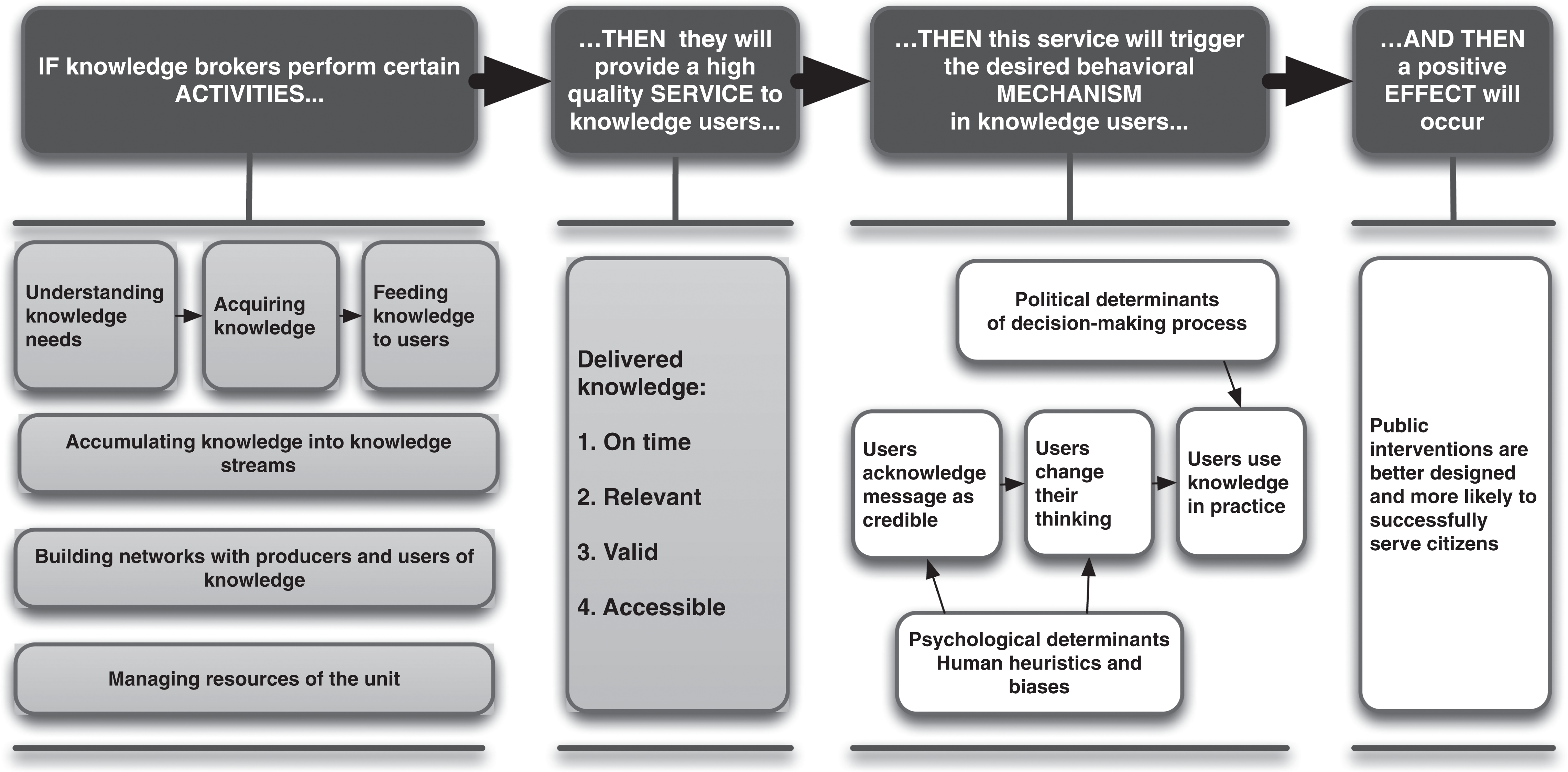

Therefore, the designers of the knowledge brokers workshop translated the complex topic of research use in public policy into two concept maps. Map A (see Figure 1) explains the system in which knowledge brokers will operate, while Map B (see Figure 2) provides a prescriptive list of what knowledge brokers can do to succeed in this system. Map A is descriptive and explanatory. It names the elements of the system and explains the key interdependencies crucial to knowledge use. It provides declarative knowledge (specific vocabulary and technical terms such as research designs, types of knowledge, types of users, and feeding methods). It also gives conceptual knowledge (the relationships between those terms). Map B is prescriptive. It provides a spectrum of actions to be performed by a broker. Map B focuses on rules-based knowledge (i.e., how to act and what to do to influence the system) and procedural knowledge (i.e., a sequence of step-by-step movements that have to be completed to reach outcomes). Map B also makes players aware of the broker’s limited role in the policy process.

Figure 1. Concept Map A: System of knowledge use in public policy.

Figure 2. Concept Map B: Knowledge broker activities for evidence use.

The content of those two maps has been crucial in defining the scope of learning, structuring the workshop, designing the game, and framing knowledge gains. We discuss them in more detail below.

On Map A (Figure 1), the focal points are public interventions that aim to address socioeconomic issues. Policies, programs, and projects proceed in stages (Howlett, Ramesh, & Perl, 2009; Jones, 1984). This workshop focuses on planning, implementation, and assessment of projects’ outcomes.

In order to run interventions successfully, different types of knowledge are required at different stages. They span from diagnostic knowledge (know about the policy issue), through questions about possible effects (know what would work) or actual effects of implemented interventions (know what worked), questions about mechanisms explaining success or failure (know-why), to technical know-how (Ekblom, 2002; Nutley, Walter, & Davies, 2003).

Running the interventions is the business of policy actors. Numerous types of actors engage at certain policy stages (e.g., politicians, public and media, lobbyists, and bureaucrats). The workshop focuses on three types of actors: politicians, high-level civil servants, and public managers. That is because earlier empirical research showed that primary clients of evaluation units are within government structures. Those actors have different information preferences ranging from strategic issues to technical matters. They are potential knowledge users because, once involved in a particular stage of an intervention, they face certain knowledge needs.

Knowledge needs can be addressed by different sources (Davies, Nutley, & Walter, 2010, pp. 201–202). The workshop focuses on evidence coming from research studies. Their validity is determined by the quality of methodological rigor. Rigor consists of a match between research design and the research question (Petticrew & Roberts, 2003; Stern et al., 2012, pp. 15–16). This is the so-called platinum standard that has emerged in recent public policy literature as a pragmatic response to the war of paradigms (Donaldson, Christie, & Mark, 2008; Sanjeev & Craig, 2010).

Policy actors have certain preferences for forms and channels of communication. Some of them favor a detailed form and formal contacts, while others favor a concise message and face-to-face discussion (Torres, Preskill, & Piontek, 2005). The range of these preferences was labeled in the game as “feeding methods,” embracing both forms of presentation (e.g., policy brief, infographics) and channels of communication (e.g., discussion meeting, conference).

The logic of knowledge brokering activities can be formulated as a theory of change. Map B (Figure 2) presents a prescriptive concept map of the broker activities undertaken to effectively increase knowledge use.

A few things should be pointed out in relation to this theory of change. The knowledge broker directly controls the first two blocks (activities and services). The broker can only indirectly influence the third and fourth blocks (the mechanism and effect).

Three of the activities controlled by the knowledge broker are sequential (understanding, acquiring, feeding), relating to the concrete intervention and its knowledge need. The other three activities are horizontal actions. The accumulation of knowledge over time allows the combining of single reports into streams of knowledge, networking allows monitoring demand and supply of knowledge and reacting quickly, while resource management allows the everyday running of the evaluation unit.

The key success factor of knowledge brokers is the quality of their service. It directly corresponds with the relationships in the system of knowledge use from Map A. The four aspects of quality are (1) delivering knowledge when users need it (timing), (2) being relevant to their information needs (relevance), (3) keeping methodological rigor in the particular study (validity), and (4) using the right form of presentation and channel of delivery (accessibility).

The mechanism of users’ knowledge absorption and decision-making is complex. It is influenced both by human constraints and by political dynamics. Research shows that credibility in the eye of the beholder is determined by a number of factors: quality of the study (both in terms of scientific validity and in terms of basic factual accuracy), applicability of findings (also called action orientation), trust in a source (reputation of information producer, surface credibility of text), and conformity with existing evidence (studies that challenge existing mental models and organizational status quo are often ignored; Miller, 2015; Weiss & Bucuvalas, 1980). Quality service from the knowledge broker substantially increases the chances of knowledge use, but it is rarely decisive. At the end of the day, research findings are just one of the factors in the complex, nonlinear dynamics of policy-making (Davies et al., 2010; Tyler, 2013; Weiss, 1980). Understanding that evidence is only a part of decision-making opens space for further explorations on user behaviors, organizational behaviors, and policy process dynamics.

Workshop Structure

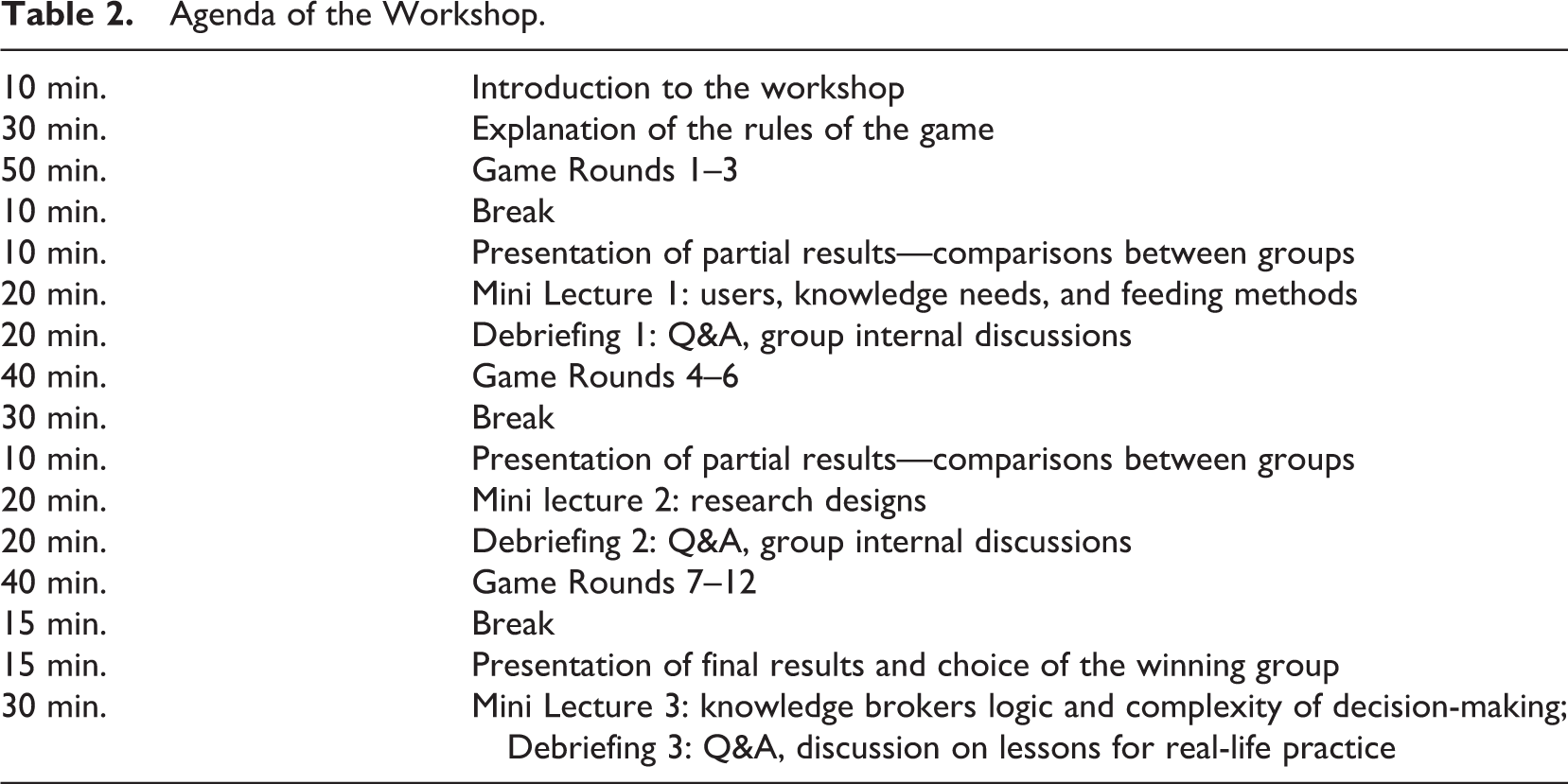

The structure of experiential learning has been designed as a 1-day educational experience for a group of up to 30 adult learners, with a serious game as the core activity.

In order to embrace key drivers of the ELM, specifically action–reflection and experience–abstraction, the workshop encompassed three elements: game, mini lectures, and debriefings.

The game rounds aimed at allowing participants to experience the dynamics, the pressure, and often even chaos of the real work of a knowledge broker and to test their own brokering skills in a safe and engaging environment.

Mini lectures were assigned to provide participants with more theoretical knowledge, revealing gradually the two concept maps that underpin the game dynamics. Lectures explained relationships between stages of the policy cycle, research questions and designs, policy actors, and knowledge feeding methods.

Debriefings were designed in the form of carefully facilitated discussions, supported by real-time feedback from game results. Their function was to allow players to reflect on their strategies within the game. On that basis, they could adapt strategies for new game rounds and ultimately transfer key learnings into the practice of their organizations. For example, during the first debriefing, facilitators discuss the issue of accessibility. Players discover that feeding methods consist of two complementary groups: forms of presentation and channels of communication. The broker has to combine both of them in order to influence the user.

Throughout the workshop, these three elements (game, mini lectures, and debriefings) were intertwined with each other (see workshop agenda in Table 2). As a result, learners were able to go through the whole ELM sequence three times: from experience, through discovery of the theory behind the system, to reflection and actions based on their own modified strategies.

Table 2. Agenda of the Workshop.

Game Design

What players explore and accomplish

In the game, players explore life as knowledge brokers working in an evaluation unit. Their mission is to provide expertise to decision makers that would help in the implementation of four different types of socioeconomic projects. They do it by responding to the knowledge needs, contracting out studies with an appropriate research design, targeting the key users of the study, and choosing methods for feeding knowledge to users. The winning team is the one with the highest number of utilized reports.

On the practical level, 30 participants of the workshop are divided into six groups. Each group of players manages an evaluation unit in a region for 12 rounds (one round represents 1 month in real life and takes, on average, 12 min in the game session). The four public projects they support with knowledge are combating single mothers’ unemployment, developing a health-care network, revitalizing a downtown area, and developing a public transportation system for a metropolitan area (see Appendix A). With each turn, knowledge needs appear for each project. Each need takes the form of a magnetic plate with a description of the contextual situation in a project, the problem question, and the deadline for the answer to be delivered. Over the course of the game, players have to manage 19 different knowledge needs.

Upon receiving the knowledge need, the team has to make a number of decisions. First, they decide if they want to work on that need. If they do, they assign one pawn from their resources to supervise study progress. Second, they choose a research design that matches the research question. Designs have different execution times, so players have to choose not only the one that is most appropriate from a merit point of view but also feasible to be implemented before the deadline. Third, players choose which knowledge user would be interested in the results of that study. Finally, they decide what feeding method would be most appropriate for that type of user.
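The design choice described above combines two criteria: fit to the research question and feasibility before the deadline. A minimal sketch of that logic follows. The design names come from the game, but the execution times, the match scores, and the function names are hypothetical values introduced only for illustration; the article does not report the actual game parameters.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ResearchDesign:
    name: str
    execution_rounds: int  # hypothetical: rounds (months) needed to finish
    match_score: float     # hypothetical: fit to the research question, 0..1


def feasible_designs(designs: List[ResearchDesign],
                     current_round: int,
                     deadline_round: int) -> List[ResearchDesign]:
    """Keep only the designs that can be completed by the deadline."""
    rounds_left = deadline_round - current_round
    return [d for d in designs if d.execution_rounds <= rounds_left]


def choose_design(designs: List[ResearchDesign],
                  current_round: int,
                  deadline_round: int) -> Optional[ResearchDesign]:
    """Pick the best-matching feasible design, or None if nothing fits."""
    candidates = feasible_designs(designs, current_round, deadline_round)
    if not candidates:
        return None  # the team may decide not to work on this need
    return max(candidates, key=lambda d: d.match_score)


# Illustrative values only; not taken from the actual game.
designs = [
    ResearchDesign("experiments and quasi-experiments", 6, 0.9),
    ResearchDesign("statistical study", 3, 0.7),
    ResearchDesign("descriptive study", 1, 0.4),
]

best = choose_design(designs, current_round=2, deadline_round=6)
print(best.name)  # statistical study
```

In this example, the best-matching design is too slow for the deadline, so the team settles for the strongest design that can still be delivered on time. This is the kind of trade-off the game asks players to make under time pressure.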

Players can perform three additional activities during each round if they have spare pawns (human resources) that have not been assigned to knowledge needs. The team can invest an additional human resource into feeding activities to add two more feeding methods to the study of their choice. That increases the chances of successfully transferring the report to the decision-making world. The team can also use its staff to explore an archive and retrieve existing reports on a particular topic. A report withdrawn from the archive can be added to their own study to strengthen their body of evidence. Furthermore, the team can be proactive and send a networker who will check, for a chosen project, whether new needs are brewing for the next month. If that is the case, the networker brings the knowledge need earlier, giving the team a time advantage.
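The resource constraint behind these optional activities can be sketched in a few lines. The three activities are taken from the description above; the number of pawns per team and the rule that each extra activity costs exactly one spare pawn are assumptions made for this illustration, and the function names are hypothetical.

```python
from enum import Enum
from typing import List


class ExtraActivity(Enum):
    EXTRA_FEEDING = "add two more feeding methods to a chosen study"
    ARCHIVE_SEARCH = "retrieve an existing report from the archive"
    NETWORKING = "check a project for needs emerging next month"


def spare_pawns(total_pawns: int, supervised_needs: int) -> int:
    """Pawns left after one pawn is assigned to each accepted need."""
    if supervised_needs > total_pawns:
        raise ValueError("each accepted knowledge need requires one pawn")
    return total_pawns - supervised_needs


def plan_extras(total_pawns: int,
                supervised_needs: int,
                wanted: List[ExtraActivity]) -> List[ExtraActivity]:
    """Return the extras the team can afford, in its order of preference.

    Assumption: every extra activity uses exactly one spare pawn.
    """
    spare = spare_pawns(total_pawns, supervised_needs)
    return wanted[:spare]


# Illustrative round: 5 pawns (hypothetical), 3 of them supervising studies.
wishes = [ExtraActivity.NETWORKING,
          ExtraActivity.ARCHIVE_SEARCH,
          ExtraActivity.EXTRA_FEEDING]
affordable = plan_extras(total_pawns=5, supervised_needs=3, wanted=wishes)
print([a.name for a in affordable])  # ['NETWORKING', 'ARCHIVE_SEARCH']
```

The sketch makes the trade-off explicit: every study a team takes on reduces its capacity for networking, archive work, and extra feeding in that round.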

The cycle of decisions described above is repeated every turn. When a particular report is ready, the team delivers it to the jury for feedback. An example of the game process is presented in Appendix B.

What drives the activities of players are game mechanics. The knowledge brokers workshop uses a combination of six game mechanics to accelerate players’ decision-making process and engage them on an emotional level (see Table 3).

Table 3. Game Mechanics Used in Knowledge Brokers.

Game elements and the sequence of activities are built on and tightly aligned with the two concept maps discussed in Workshop Goals, Learners, and Concept Maps. Concept Map A (see Figure 1) provides all elements of the game (stages of public interventions, types of knowledge needs, research designs, knowledge users, and feeding methods). The blocks of Concept Map B (see Figure 2) provide the game with a sequence that has to be completed by players in order to reach outcomes (block called “activities”), scoring criteria (block called “quality service”), and the mechanism and effects that players contribute to but cannot control (two blocks on the right side of the figure).

The incorporation of the concept maps into the game design allows players to learn their content as they progress in the game. So, throughout the game, as they are looking at and interacting with the physical pieces of the game, players gradually learn Map A. While undertaking actions in the game and receiving scores, they learn the content of Map B, that is, the optimal combination of knowledge broker activities that could increase evidence use.

What players see—Game aesthetics

Each group plays on a large board (4.5 ft. × 3 ft.) that provides them with everything they need to run an evaluation unit effectively (see Appendix A, Figure A1). The size of the board allows for group cooperation and interaction. The game aesthetic is simple and clear, reflecting the serious aesthetics of public bureaucracy. Colors are used only to distinguish groups of elements and types of interventions.

The central element of the board is the calendar and time line of the four projects implemented in the region. It is the axis of players’ activity that demands their attention and frames their experience. Projects are at different stages of implementation. The size of the board’s element visually indicates its importance and signals that players are knowledge service providers for the ongoing projects.

At the top of the game board is a place for players’ resources (see Appendix A): human resources: staff of their unit in the form of pawns; research designs: magnets representing eight research designs (review and synthesis, experiments and quasi-experiments, statistical study, simulation game, theory-based evaluation, case-based design, participatory approach, and descriptive study); knowledge users: three types of primary users of knowledge produced by the unit (politician, head of a department, and project manager); feeding methods: magnets with 10 types of forms and channels of dissemination (policy brief, table of recommendations, logic model, video presentation or infographics, argument map, dashboard, small discussion meeting, big meeting or conference, contact through advisors, and personal contact with user); and archives: cards of reports prepared by other analytical units in the past.

Players build their reports by combining their resources on the given magnetic plates. This offers a feel of “report weight” and activates the motor memory of “building” a good study with key building blocks (see Appendix B).

The delivery of the report is designed as a physical activity: an actual walk to the judges’ desk with the package of reports. Feedback is provided in the form of plates as well. Players collect them and can analyze them visually (see Appendix B). The overall results, the ranking of groups, and the passage of time are displayed on the main screen.

What players receive—Feedback and scoring

After each turn, the groups of players that have completed their reports receive scores. The basic condition is timing: only reports that arrived within the given deadline are provided with detailed feedback in the form of a card with infographics. The feedback card includes the choices made by players; percentage points showing how well the team matched (1) the knowledge need with users’ interests (relevance), (2) research designs with knowledge needs (validity), and (3) feeding methods with the user profile (accessibility); and (4) information on the final effect, that is, whether a policy actor made a decision based on the delivered knowledge or on other premises (e.g., political rationale).

The structure of the scoring system closely follows concept Map A and the definition of high-quality service on Map B. Workshop facilitators use an automated algorithm to quickly assess the results. The game algorithm includes a spectrum of good enough and optimal choices. It was developed on the basis of a research literature review, tested on expert panels with Polish professional evaluators, and further calibrated during four game sessions. An example of the assessment is presented in Appendix B.

In principle, the better the matches, the higher the chances that decision makers will use the knowledge. By making all perfect matches, the team can win 99 points per report. The proper use of an archive can further increase the chances of success by 10 points (or reduce them by 5 if used incorrectly). The use of a networker also adds 10 points because, as research shows, networking usually prepares the ground for results. However, the final effect always has an element of randomness. This mirrors the complexity of decision-making in public policy and the fact that research evidence is only one of the factors that contribute to decisions. The scores for single reports are added up during the game. The maximum possible score for the 19 reports is 1,881 points plus an extra 10 points for each use of a networker (up to 170 extra points). The final results of the teams are compared to that benchmark and to each other.
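The benchmark arithmetic described above can be illustrated with a short sketch. It is not the game's actual algorithm, which is not published in this article. The point values (99 per report, 19 reports, 10 per networker use, 170 maximum networker bonus) come from the text; the function names and, in particular, the equal weighting of relevance, validity, and accessibility are assumptions made only for illustration. The archive bonus and the random final effect are left out because the text does not specify how they enter the total.

```python
# Illustrative sketch only; not the facilitators' scoring algorithm.
MAX_POINTS_PER_REPORT = 99   # from the text
REPORTS_PER_GAME = 19        # from the text
NETWORKER_BONUS = 10         # from the text
MAX_NETWORKER_BONUS = 170    # from the text


def benchmark(include_networker: bool = True) -> int:
    """Maximum possible score for a full game."""
    base = MAX_POINTS_PER_REPORT * REPORTS_PER_GAME
    return base + (MAX_NETWORKER_BONUS if include_networker else 0)


def report_points(relevance: float, validity: float,
                  accessibility: float, networker: bool = False) -> float:
    """Points for one report.

    ASSUMPTION: each match level is a share between 0 and 1 and the
    three dimensions are weighted equally (hypothetical weighting).
    """
    matches = (relevance, validity, accessibility)
    if any(not 0.0 <= m <= 1.0 for m in matches):
        raise ValueError("match levels must lie between 0 and 1")
    points = MAX_POINTS_PER_REPORT * sum(matches) / 3
    if networker:
        points += NETWORKER_BONUS
    return points


def share_of_benchmark(report_scores: list[float]) -> float:
    """Team total expressed as a share of the maximum possible score."""
    return sum(report_scores) / benchmark()
```

Under these assumptions, `benchmark(False)` reproduces the 1,881 points quoted above (19 × 99), and the 170-point cap corresponds to 17 uses of a networker.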

Conclusions

Modern theories of learning clearly indicate that learning is most effective when it is active, experiential, situated, problem-based, and accompanied by immediate feedback (Connolly et al., 2012; Kapp, 2012). This calls for applying new practice-oriented educational experiences. It is especially important for evaluation training, a field of applied research that serves practitioners of public policy.

Serious gaming is an emerging experiential learning method that has all of the features of effective learning. It engages participants using a gamification approach (the so-called fun factor). It combines hands-on experience with immediate feedback and more reflective discussion. Because of this, participants can go through a whole cycle of experiential learning, from experience through reflection and theory building to action. They gain a holistic understanding of mechanisms that drive systems. This is a unique opportunity, especially for staff of bureaucratic organizations, who often have a fragmented view of the policy-making process. The game-based method also provides safe experimentation, enabling players to control the learning process and “freeze” the action to clarify matters. It allows the learning experience to be intensified, as in the case of the knowledge brokers game, in which players complete 1 year of an evaluation unit’s activities in 1 day. This helps to show the consequences of players’ decisions that in real life, especially in the case of complex public policies, would come with a long delay. Finally, the simulation allows participants to comprehend a spectrum of knowledge types, from declarative and conceptual knowledge through rule-based and procedural knowledge to soft skills.

The example of the knowledge brokers workshop discussed in this article indicates that serious gaming indeed seems promising for teaching evaluation skills to professionals through practice, but without bearing the costs of mistakes, which are an inevitable part of the learning process.

Ten game sessions have been conducted with 194 public policy professionals and students. So far, the assessment of the game has been based solely on the results of posttraining surveys with the participants and on facilitators’ observations. Current data suggest a number of positive effects. Eighty-three percent of workshop participants stated that the workshop improved their understanding of the topic. Also, the Polish evaluation units that took part in the training began to use knowledge brokering concepts in their work, namely, to plan and describe the scope of their work activities.

The assessment of the effects of training still requires robust studies. One could think of two types of research. The first type is a well-designed pretest-posttest experimental study that would compare the effects of a game-based workshop with those of a traditional lecture in terms of learners’ knowledge absorption and retention. The second type is a follow-up analysis of how participants applied the gained knowledge and skills in their workplaces, in their particular organizational environments.

Despite this limitation, the current observations from the sessions provide four lessons on workshop implementation. They relate to the conceptual framework for the game-based learning method.

Lesson 1: Fit of the Goals and Participants

Understanding the factors and mechanisms that could enhance evaluation utilization has been vital to evaluation teaching, evaluation capacity-building initiatives, and, more broadly, reflexive social learning in public policies (Alkin, 2004; Bourgeois & Cousins, 2013; Läubli & Mayne, 2014; Patton, 2008; Preskill & Torres, 1999; Sanderson, 2002; Weiss, 1998). The topic of the training clearly met the interest of participants coming from the world of policy practice. In the posttraining survey, 79% declared that the knowledge and skills they learnt would be useful in their jobs.

The workshop was designed for evaluation professionals and students of public policy and program evaluation. However, the initial response from policy practitioners indicates that the group of learners could be extended. In five cases, public authorities contracted a game-based workshop for a mixed group of professionals: staff of evaluation units and staff of units involved in policy planning and implementation. Their aim was to build a network within their organization and an understanding of the utility of evaluation as a knowledge service. This way of thinking about the game application has been echoed in the postworkshop survey. One hundred eighty of 194 participants indicated that they would recommend this workshop to others, and 32% of that group pointed to nonevaluation professionals such as senior decision makers and staff involved in strategic planning and delivery of policies and programs. They suggested that the workshop could help such professionals become more aware of the utility of research evidence and more mindful as users of knowledge.

Lesson 2: The Importance of Concept Maps

The literature on adult learning states that learning is about organizing information in the form of mental models that help us understand certain phenomena or relationships (Held et al., 2006; Johnson-Laird, 2009). Making these models explicit and visualizing them in the form of concept maps enhance learning (Adesope & Nesbit, 2013; van den Bossche, Gijselaers, Segers, Woltjer, & Kirschner, 2011).

Experiences from the workshop indicate the utility of concept maps in guiding the whole educational experience and organizing learners’ thinking. Fifty-one percent of the participants pointed out that the most valuable part of the workshop was the holistic picture of the relationships among key elements of the system (Map A) and their role as brokers in it (Map B). Concept maps allowed them to see the organizing principles while at the same time “unpacking” elements of the maps without losing the “bigger picture.”

Based on facilitators’ observations, we can speculate that two aspects were helpful in making concept maps work. The first is the visualization of concept maps. After the first two workshops, the maps were turned into an animated Prezi presentation (to see animated concept maps, visit https://prezi.com/av0mg4c3eikh/game_knowledge-brokers/). This made it possible to show learners entire concept maps (as in Figures 1 and 2) and then zoom in on their elements to explore details. For example, during the first mini lecture, learners zoomed in on the box of feeding methods on concept Map A. They were able to see descriptions and examples of particular methods but also discover the internal grouping between forms of presentation and ways of communication. The second possibly beneficial aspect was the gradual presentation of maps. Map A was shown during the first mini lecture, while its elements were discussed gradually throughout the workshop to help players see and construct system relationships. Map B was revealed at the last mini lecture, as a summary of all game activities, allowing players to reflect on their strategies. We have to note, however, that the validity of these initial observations should be verified with systematic research.

Lesson 3: Workshop Structure as Learning Cycle

The ELM requires a recursive cycle of experiencing, reflecting, thinking, and acting (Kolb, 1984). In the discussed example, this cycle has been translated into game rounds, mini lectures, and debriefing. Almost all of the participants declared that they enjoyed the workshop (96%) and that the training method used was appropriate for the audience (95%). Of the 102 participants who suggested improvements, 32% called for more time for discussion and reflection on feedback in order to learn about the theory behind the concrete cases. Not all groups covered all 12 months of the game. Some groups proceeded quickly, focusing on game progress and discussion within groups, while others wanted to spend more time debating their choices and sharing their experiences in the main forum.

Thus, the workshop facilitators should observe group dynamics and be flexible in dividing time between the game (experience) and discussion (reflection). In general, the game should be treated as just a means to the ultimate goal of participants’ reflection. More time should be devoted to Q&A (Questions & Answers) and to discussion of players’ choices set against insights from real research cases.

Lesson 4: Application of the Game Design Principles

The creators of the workshop found the four principles of game design helpful in guiding the process of constructing the educational game experience. The first principle is creating the world in which players will operate. The knowledge brokers game showed how to build, with the use of empirical research, simplified concept maps of reality and then align the game narrative with them. Practitioners taking part in the game sessions commented during and after the game that it is close to their work reality.

In terms of game activities and mechanics (drivers that motivate players), it seemed useful, as in the discussed example, to align the activities of the players directly with the learning content (in this case, brokers’ actions). The case also illustrated the benefits of combining different mechanics.

The game aesthetics should correspond with the nature of the explored topic, allowing players to immerse themselves in the experience. For example, knowledge brokers used magnet plates to create a sense of building the studies from pieces. It also used simple aesthetics to reflect the nature of public bureaucracy.

The final game design issue is well-structured feedback with proper debriefing (Crookall, 2010; Kriz, 2010). As indicated by the application of knowledge brokers, providing players with the real-time results of their decisions allowed for substantial group reflection grounded in data. Also, time for debriefing is indispensable for learners to build their understanding of the explored problem.

Closing Remarks

Looking back at the general picture, linking the fields of evaluation and serious gaming creates opportunities not only for teaching but also for cross-field research. There is a need for systemic evaluation studies on the effects of serious games on individuals, groups, and organizations, in comparison to more traditional teaching methods. The evaluation community can contribute substantially to the sound testing of games as a learning method. From the discussed example of the knowledge brokers workshop, we learn that creating synergies between the fields of evaluation and gaming brings the promise of exciting developments for evaluation students, teachers, and researchers.

Appendix A

Appendix B

Acknowledgments

The author would like to express his gratitude to colleagues involved in the design and testing of the game: Łukasz Kozak, Igor Widawski, Jakub Wiśniewski, Joanna Średnicka, Bartosz Ledzion, and Jagoda Gandziarowska-Ziołecka. The author would also like to thank Estelle Raimondo and Tomasz Kupiec, who coauthored the empirical research on knowledge brokering, and a number of Polish civil servants who participated in the testing of the game. Last, but not least, the author would like to thank Anne T. Vo, the editor of the section, and the two anonymous reviewers for their most valuable comments that substantially improved the content of this article.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The Knowledge Brokers game-based workshop discussed in the article was developed by a team of experts from two Polish companies: Pracownia Gier Szkoleniowych (PGS) and Evaluation for Government Organizations (EGO s.c.), and it was used by those companies for training and educational purposes. The author of this article is the co-founder of EGO s.c. He was a leader of the team that designed the workshop and has been using the workshop in his academic teaching and professional trainings.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The University of Warsaw paid for the open access of this article.